“Claude COBOL” is not the name of a vulnerability and it’s not a new programming language. In practice, the phrase is shorthand for a specific moment in enterprise software: Anthropic’s Claude, especially Claude Code-style workflows, being framed as a way to accelerate COBOL modernization—work that has historically required large teams, long timelines, and deep institutional knowledge. (Claude)

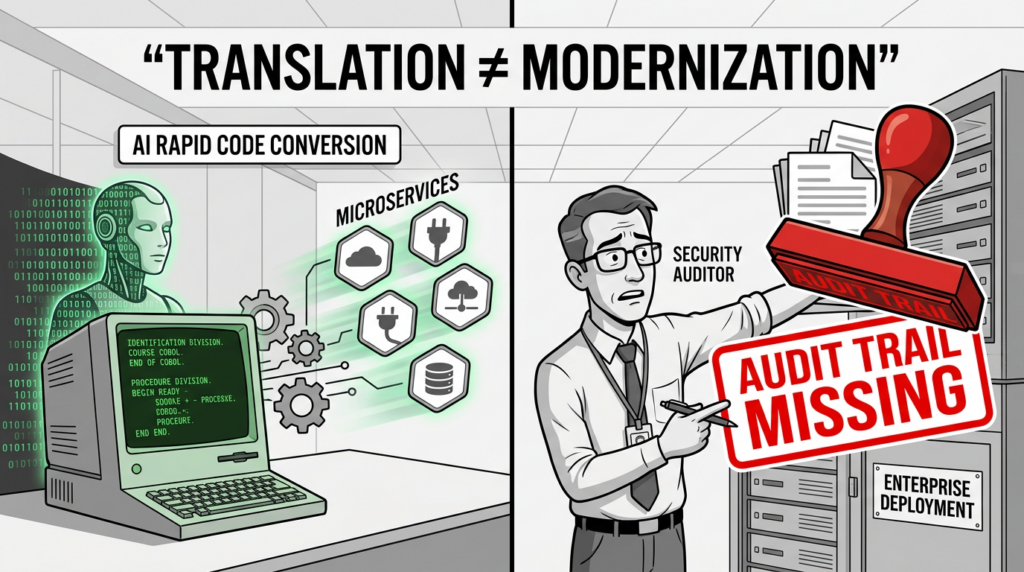

That framing went from “interesting” to “urgent” when it hit mainstream business coverage. Reuters reported that IBM shares fell 13.2% on February 23, 2026, their steepest single-day decline since 2000, after Anthropic argued its tooling could modernize COBOL “in quarters instead of years.” (Reuters) Barron’s and others followed with analysis of why investors reacted so strongly and why the reality is more nuanced than a simple “translation replaces modernization” story. (Barron’s)

Security engineers do not search “claude cobol” because they want stock commentary. They search it because they recognize the pattern:

AI can lower the cost of understanding and changing the most mission-critical legacy systems in the world—and that changes the attack surface faster than most organizations can adapt their controls.

In other words, “Claude COBOL” is a proxy for a new security boundary.

This article answers the questions an AI security engineer or penetration tester is actually asking:

- What does “Claude COBOL” really mean in day-to-day engineering workflows

- Why this keyword became high-intent in security circles

- Which vulnerability classes and high-impact CVEs matter most in modernization pipelines

- How to build controls that produce evidence—auditable, testable proof—not vague assurances

- Where offensive validation fits naturally when modernization creates new endpoints and new trust boundaries

What “Claude COBOL” actually refers to, beyond the headline

Anthropic published a post describing how AI can break the cost barrier to COBOL modernization, arguing that the exploration and analysis phases consume most of the effort and that tools like Claude Code can automate large parts of that work—mapping workflows, dependencies, and data flows that current staff may not fully remember. (Claude)

In real organizations, “Claude COBOL” typically means one or more of these patterns:

Large-scale comprehension and mapping

- Identifying program entry points and transaction flows

- Tracing COPYBOOK usage and data layouts

- Extracting batch job sequences and dependencies

- Building call graphs and data lineage views

Documentation and knowledge extraction

- Converting tribal knowledge into searchable documents

- Summarizing modules, interfaces, and error paths

- Building modernization “playbooks” for staff who don’t speak COBOL

Incremental modernization and refactoring

- Creating service wrappers around COBOL functions

- Translating selected modules into modern languages

- Running old and new systems in parallel with validation

This matters because each step tends to move critical logic into new places: new repositories, new ticket systems, new CI pipelines, new staging environments, new log stores, and often new cloud services.

IBM’s response—summarized in its “Lost in Translation” post—is that “translation” misses the real complexity: the modernization challenge is not just COBOL syntax, but the integrated stack and operational environment around it. (IBM Newsroom) Security teams should read that as a warning: even if AI helps generate code, the highest-risk work often happens in the surrounding ecosystem.

Why security teams are searching “claude cobol” now

If you look at the coverage cluster around the phrase, you’ll notice the same “click magnet” topics repeated: COBOL modernization, mainframes, AI refactoring, Claude Code, IBM disruption, quarters instead of years. (Reuters)

Security intent is different. Here are the real drivers.

Modernization is the biggest change window many organizations will face for years

Legacy systems tend to be stable, heavily governed, and operationally conservative. Modernization changes that by introducing:

- New APIs and integration points

- New identity paths and role mappings

- New data replication and transformation pipelines

- New CI/CD workflows and build infrastructure

- Temporary “bridge layers” that become permanent

Attackers love change windows because controls are weakest when architecture is in motion.

AI reduces the cost of understanding legacy logic for both defenders and attackers

Scarcity used to protect COBOL-heavy environments: fewer people could read the code, fewer people could reason about edge cases, fewer people could quickly map business logic.

AI can compress that understanding phase. That helps defenders modernize—but it also helps attackers target systems more intelligently, because exploitation begins with comprehension.

Business logic becomes data, expanding leakage and compliance exposure

COBOL systems frequently encode:

- Eligibility rules

- Payment and settlement logic

- Fraud and risk decision paths

- Exception behavior that defines real security outcomes

If modernization workflows paste that into third-party systems or leak it into logs, you may expose proprietary controls and sensitive operational rules.
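One way to reduce that exposure in logs is to redact before anything is written. The sketch below is illustrative only: the `REDACTION_RULES` patterns and the `redact` helper are hypothetical names, and real rules would come from your own data classification policy rather than these two regexes.

```python
import re

# Hypothetical redaction rules; replace with patterns from your own classification policy.
REDACTION_RULES = [
    # Long digit runs that look like account or card numbers.
    (re.compile(r"\b\d{12,19}\b"), "[REDACTED-NUMBER]"),
    # key=value or key: value pairs for obviously sensitive keys.
    (re.compile(r"(?i)\b(password|token|secret)\s*[=:]\s*\S+"), r"\1=[REDACTED]"),
]

def redact(text: str) -> str:
    """Apply every redaction rule in order and return the scrubbed text."""
    for pattern, replacement in REDACTION_RULES:
        text = pattern.sub(replacement, text)
    return text
```

Pattern-based redaction only catches what it has a rule for. It does not protect business rules written in plain prose, so it complements access controls rather than replacing them.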

AI-assisted workflows can become a supply chain problem

Modernization inevitably involves tooling: build scripts, dependency updates, wrappers, logging stacks, service frameworks. Adding AI into this pipeline creates a new class of risk: privileged automation that can be influenced by untrusted input.

The modern supply chain lesson is simple: compromise does not need to happen in “your” code to land in your production artifacts.

The security boundary shift, a practical threat model for AI-assisted modernization

A useful threat model for “Claude COBOL” is not “the model might be wrong.” It is:

- What data does it see

- What can it change

- What can it execute

- What credentials does it touch

- How do we prove integrity and equivalence after changes

You can structure this into three planes.

The knowledge plane, what the modernization effort must ingest

Typical inputs include:

- COBOL sources, COPYBOOKs, JCL and job control definitions

- Schema definitions, VSAM layouts, DB2 access patterns

- Operational runbooks and incident postmortems

- Ticket histories and integration documentation

Key risks:

- Proprietary logic leakage

- Prompt injection via contaminated documents

- Poisoned knowledge artifacts that steer refactors toward insecure outcomes

Controls that actually help:

- Treat every artifact as a supply chain input with provenance

- Separate “read context” from “write actions”

- Build immutable audit logs of what the model saw and why it acted
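As a minimal sketch of the provenance and audit-log controls above, the following records a content digest and source for each artifact the model is shown, and chains log entries by hash so later edits are detectable. `AuditLog` and `record_artifact` are hypothetical names, and an in-memory list is tamper-evident at best; real immutability would need write-once storage.

```python
import hashlib
import json
from dataclasses import dataclass, field

def _entry_hash(event: dict, prev_hash: str) -> str:
    body = {"event": event, "prev_hash": prev_hash}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

@dataclass
class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""
    entries: list = field(default_factory=list)

    def append(self, event: dict) -> dict:
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else "0" * 64
        entry = {
            "event": event,
            "prev_hash": prev_hash,
            "entry_hash": _entry_hash(event, prev_hash),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["entry_hash"] != _entry_hash(entry["event"], prev_hash):
                return False
            prev_hash = entry["entry_hash"]
        return True

def record_artifact(log: AuditLog, name: str, content: bytes, source: str, trusted: bool) -> dict:
    """Log what the model was shown: a digest plus provenance, not the raw text."""
    return log.append({
        "type": "artifact_read",
        "name": name,
        "sha256": hashlib.sha256(content).hexdigest(),
        "source": source,
        "trusted": trusted,
    })
```

Storing digests instead of raw content keeps the log itself from becoming another copy of proprietary logic.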

The change plane, where new code and configs are created

This includes:

- Generated patches, wrappers, migrations, refactors

- New service configs, IaC, deployment scripts

- Dependency upgrades and logging changes

Key risks:

- Subtle backdoors or insecure defaults in generated code

- Authorization drift when old roles map to new services

- “Temporary” debug endpoints that survive into production

Controls that actually help:

- Semantic review gates and “two-person” integrity for risky changes

- Cryptographic signing of artifacts and protected branches

- Reproducible builds and dependency pinning
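Dependency pinning is easy to check mechanically. The sketch below assumes pip-style requirement lines purely for illustration (your modernization stack may use Maven, Gradle, or npm manifests instead), and `unpinned_requirements` is a hypothetical helper name.

```python
import re

# A requirement counts as pinned only if it uses an exact '==' version with no wildcard.
PINNED_RE = re.compile(r"^[A-Za-z0-9_.\-\[\]]+==[A-Za-z0-9_.!+\-]+(?=\s|;|$)")

def unpinned_requirements(lines):
    """Return requirement lines that do not pin an exact version."""
    offenders = []
    for raw in lines:
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):
            continue  # skip blanks, comment-only lines, and pip options such as -r
        if not PINNED_RE.match(line):
            offenders.append(line)
    return offenders
```

A check like this could run as a merge gate, failing any change (AI-assisted or not) that introduces a floating version.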

The execution plane, what runs and where it runs

This includes:

- CI runners, build servers, staging environments

- Parallel-run “shadow stacks” and integration brokers

- API gateways, logging/metrics agents, job schedulers

Key risks:

- Credential theft in CI

- Privilege escalation from test to prod

- Lateral movement via integration or observability stacks

Controls that actually help:

- Short-lived credentials and scoped workload identity

- Network segmentation and egress control

- Runtime policies for service-to-service access
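To make "short-lived and scoped" concrete, here is a toy sketch using only the standard library. It is not a production credential scheme: real pipelines would more likely use their platform's workload identity or OIDC federation. The function names, claim fields, and scope strings are all hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time

def issue_token(secret: bytes, subject: str, scopes: list, ttl_seconds: int, now: float = None) -> str:
    """Issue an HMAC-signed token that expires after ttl_seconds."""
    now = time.time() if now is None else now
    claims = {"sub": subject, "scopes": sorted(scopes), "exp": int(now) + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode("utf-8")).decode("ascii")
    signature = hmac.new(secret, payload.encode("ascii"), hashlib.sha256).hexdigest()
    return f"{payload}.{signature}"

def check_token(secret: bytes, token: str, required_scope: str, now: float = None) -> bool:
    """Accept only tokens that are authentic, unexpired, and carry the required scope."""
    now = time.time() if now is None else now
    try:
        payload, signature = token.rsplit(".", 1)
    except ValueError:
        return False
    expected = hmac.new(secret, payload.encode("ascii"), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload.encode("ascii")))
    return now < claims["exp"] and required_scope in claims["scopes"]
```

The property that matters is that a token stolen from a CI runner is useful only briefly and only for the scopes that runner needed.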

The CVEs that matter most to “Claude COBOL” risk

A common mistake is to hunt for “COBOL CVEs.” The larger risk typically lives in the modernization scaffolding: supply chain dependencies, logging stacks, CI/CD, gateways, and the new services you create while wrapping legacy behavior.

Below are high-impact CVEs and why they map cleanly to modernization programs.

CVE-2024-3094, XZ Utils supply chain compromise, why your build pipeline is the crown jewel

CISA issued an alert on the reported supply chain compromise affecting XZ Utils, tracked as CVE-2024-3094. (Futurum)

Why this belongs in a Claude COBOL conversation:

- Modernization expands build complexity and dependency surfaces

- Builds run with privilege and produce trusted artifacts

- A compromised component can change what ships even if your “source diffs look clean”

If your modernization program cannot answer “what exactly shipped, and why should I trust it,” you do not have an upgrade path—you have a future incident waiting for the right trigger.
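A first step toward answering "what exactly shipped" is a digest manifest of build outputs that can be re-verified later. The sketch below is a minimal illustration with hypothetical function names. It is not a substitute for signed build attestations, since an attacker who controls the build can also regenerate the manifest.

```python
import hashlib
import tempfile
from pathlib import Path

def sha256_file(path: Path) -> str:
    """Hash a file in chunks so large artifacts do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()

def build_manifest(artifact_dir: str) -> dict:
    """Map each artifact's relative path to its SHA-256 digest."""
    root = Path(artifact_dir)
    return {
        p.relative_to(root).as_posix(): sha256_file(p)
        for p in sorted(root.rglob("*")) if p.is_file()
    }

def verify_manifest(artifact_dir: str, manifest: dict) -> list:
    """Return a list of discrepancies between the directory and the recorded manifest."""
    current = build_manifest(artifact_dir)
    problems = []
    for name in sorted(set(manifest) | set(current)):
        if name not in current:
            problems.append(f"missing: {name}")
        elif name not in manifest:
            problems.append(f"unexpected: {name}")
        elif current[name] != manifest[name]:
            problems.append(f"modified: {name}")
    return problems

if __name__ == "__main__":
    # Demo with a throwaway directory; the file name is illustrative.
    with tempfile.TemporaryDirectory() as demo_dir:
        (Path(demo_dir) / "service.jar").write_bytes(b"v1")
        recorded = build_manifest(demo_dir)
        print(verify_manifest(demo_dir, recorded))
```

Recording the manifest at build time and verifying it at deploy time catches artifacts that changed between the two stages, even when the source diffs look clean.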

CVE-2021-44228, Log4Shell, why wrappers and integration layers often reintroduce catastrophic risk

CISA’s Log4j guidance documents the Log4Shell remote code execution vulnerability in Log4j (CVE-2021-44228). (The Economic Times)

Why it matters here:

- Modernization often wraps COBOL logic into Java or JVM-based services

- Logging becomes an always-on ingestion path for attacker-controlled inputs

- Vulnerabilities in “supporting infrastructure” can become system-wide failures

Log4Shell remains a canonical example of why “non-core” components can dominate your risk profile.

Prioritize what’s exploited, not what’s discussed

CISA’s Known Exploited Vulnerabilities catalog exists for a reason: it reflects vulnerabilities with real-world exploitation, not theoretical severity. Use it as an input to modernization risk gates, especially when new services and dependencies are introduced quickly. (Futurum)
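A KEV-based gate can be as simple as intersecting the CVEs your scanner reports with the catalog's CVE identifiers. The sketch below assumes the catalog has been downloaded as JSON with a top-level `vulnerabilities` list whose entries carry a `cveID` field (check this against the current published schema). The `kev_hits` helper, the component names, and the hand-made catalog excerpt are illustrative, not real scan output.

```python
def kev_hits(dependency_cves: dict, kev_catalog: dict) -> dict:
    """Return components whose reported CVEs appear in the KEV catalog."""
    kev_ids = {v["cveID"] for v in kev_catalog.get("vulnerabilities", [])}
    hits = {}
    for component, cves in dependency_cves.items():
        matched = sorted(set(cves) & kev_ids)
        if matched:
            hits[component] = matched
    return hits

if __name__ == "__main__":
    # Hand-made excerpt for illustration only; load the real catalog file in practice.
    sample_catalog = {"vulnerabilities": [{"cveID": "CVE-2021-44228"}]}
    # Hypothetical scanner output: component -> CVE IDs reported against it.
    sample_scan = {
        "log4j-core 2.14.1": ["CVE-2021-44228"],
        "internal-wrapper 0.1.0": [],
    }
    print(kev_hits(sample_scan, sample_catalog))
```

Treating any non-empty result as a hard failure gives the risk gate a clear, auditable rule instead of a severity debate.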

What the best coverage gets right, and where security teams should be skeptical

The coverage that drove the keyword spike tends to emphasize speed: “quarters instead of years.” Reuters reported that framing directly and tied it to investor fear around IBM’s modernization revenue. (Reuters)

The strongest counterpoint—relevant for security—is IBM’s argument that “translation isn’t modernization,” because the real complexity is the integrated stack and the operational environment. (IBM Newsroom)

Security translation of that debate:

- If leadership treats modernization like language conversion, you will underfund controls

- If engineers treat modernization like code generation, you will miss identity, network, and operational risk

- If governance treats AI like a documentation assistant, you may accidentally grant it privileged execution paths

Your security program has to defend the workflow shape, not the marketing claim.

Practical controls that produce evidence, not confidence

A good “Claude COBOL” security program can demonstrate:

- what the AI saw

- what it changed

- what got reviewed

- what shipped

- what was validated under representative conditions

Below is an evidence-driven control stack you can implement without waiting for perfect tooling.

Control stack, from governance to runtime proof

| Layer | What can go wrong | Control objective | Evidence you should be able to produce |
|---|---|---|---|
| Data governance | Proprietary logic leaks via prompts, logs, tickets | Prevent and detect exfiltration | Classification rules, access logs, retention settings, redaction policies |
| Model usage | Prompt injection through untrusted artifacts | Keep data from becoming instructions | Provenance tags, isolation zones, tool-call allowlists and approvals |
| Code change | Logic drift, insecure defaults, hidden backdoors | Preserve semantic integrity | Semantic diffs, review records, signed commits, test coverage deltas |
| Build and CI | Supply chain compromise, stolen CI tokens | Constrain and attest builds | Locked dependencies, SBOM, build attestations, short-lived credentials |
| Deployment | Debug endpoints or misconfigs in prod | Enforce least exposure | Network policies, inventory, egress rules, runtime alerts |
| Validation | “It compiles” but breaks invariants | Prove equivalence | Golden datasets, parallel-run reconciliation reports, invariant checks |

Code example, extract COBOL CALL dependencies to scope review and testing

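Before you can secure change, you need to know what a module calls. This small script builds a dependency view from common CALL patterns, which is often enough to prioritize review and testing in early modernization phases. It is a text-level heuristic, not a parser: a dynamic call through a data item (for example `CALL WS-PROGRAM-NAME`) is reported under the data item's name rather than the program it resolves to, and CALL statements inside comments are not filtered out.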

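```python
import re
from pathlib import Path

# Matches CALL 'PROG', CALL "PROG", and CALL IDENTIFIER (dynamic call via a data item).
CALL_RE = re.compile(r"\bCALL\s+['\"]?([A-Z0-9_-]+)['\"]?", re.IGNORECASE)

COBOL_SUFFIXES = {".cob", ".cbl", ".cobol"}


def extract_calls(cobol_text: str):
    """Return the sorted, de-duplicated CALL targets found in one source text."""
    return sorted(set(m.group(1) for m in CALL_RE.finditer(cobol_text)))


def scan_repo(root: str):
    """Map each COBOL source file under root to the list of targets it calls."""
    root_path = Path(root)
    results = {}
    for p in root_path.rglob("*"):
        if p.is_file() and p.suffix.lower() in COBOL_SUFFIXES:
            # Legacy sources are often not valid UTF-8; ignore undecodable bytes.
            text = p.read_text(errors="ignore")
            calls = extract_calls(text)
            if calls:
                results[str(p)] = calls
    return results


if __name__ == "__main__":
    repo = "./cobol_repo"
    depmap = scan_repo(repo)
    for file, calls in depmap.items():
        print(f"\n{file}")
        for c in calls:
            print(f" - {c}")
```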

Security payoff:

- You can identify high-centrality modules that deserve stricter gates

- You can detect unexpected external calls early

- You can tie review requirements to dependency boundaries
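To act on the first point, a rough centrality measure can be derived from the `depmap` dictionary that `scan_repo` returns: count how many source files call each target. This is a sketch with a hypothetical helper name, and in-degree is only one crude proxy for how critical a module is.

```python
from collections import Counter

def call_in_degree(depmap: dict) -> list:
    """Rank call targets by how many distinct source files call them."""
    counts = Counter()
    for callees in depmap.values():
        # Normalize case and count each target once per calling file.
        counts.update({name.upper() for name in callees})
    # Most-called first; ties broken alphabetically for stable output.
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
```

Modules at the top of this ranking are reasonable candidates for stricter review gates, because a change to them reaches the most callers.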

CI guardrail example, separate AI-assisted changes from production deploy rights

The fastest way to get burned is to let “helpful automation” inherit production credentials. A simple baseline:

- AI-assisted PRs can run tests

- AI-assisted PRs cannot deploy to production

- Deployments require protected branches and human approval

```yaml
name: ai-assisted-modernization-ci

on:
  pull_request:

jobs:
  test:
    runs-on: ubuntu-latest
    # Read-only token: PR builds can fetch code but cannot push or release.
    permissions:
      contents: read
    steps:
      - uses: actions/checkout@v4
      - name: Run unit tests
        run: ./scripts/test.sh

  deploy:
    # Hard-disabled in this pull-request workflow.
    if: false
    runs-on: ubuntu-latest
    environment:
      name: production
    permissions:
      id-token: write
      contents: read
    steps:
      - name: Deploy
        run: ./scripts/deploy_prod.sh
```

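This is intentionally blunt. The `deploy` job is hard-disabled with `if: false`, so nothing triggered by a pull request can reach production from this workflow. Real deployments would live in a separate workflow tied to protected branches and human approval. The point is to enforce a boundary: generation and exploration are not deployment authority.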

The quiet failure mode, prompt injection in modernization artifacts

Modernization programs do not feed only code into AI. They feed:

- tickets

- wiki pages

- incident summaries

- PDF specs

- vendor docs

- copied logs

If any of those inputs can be influenced by untrusted parties—or simply contain attacker-crafted text copied from external sources—an LLM can be steered toward actions that violate your intent.

A practical defensive posture:

- Assume all external or user-contributed artifacts are untrusted

- Use separate “read-only analysis” workflows and “write access” workflows

- Require explicit approvals for tool calls that touch secrets, infra, or deploy steps

- Keep replayable logs so you can audit why changes occurred
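The posture above can be expressed as a small authorization gate in front of every tool call. The sketch below is one possible shape, not a reference design: the tool names, the `ToolCall` fields, and the decision to refuse write-capable tools whenever untrusted material is in context are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical tool names; substitute the tools your agent framework actually exposes.
READ_ONLY_TOOLS = {"read_file", "search_code", "list_dependencies"}
APPROVAL_REQUIRED_TOOLS = {"write_file", "run_shell", "read_secret", "deploy"}

@dataclass(frozen=True)
class ToolCall:
    tool: str
    workflow: str          # "analysis" (read-only) or "change" (write access)
    context_trusted: bool  # False if any untrusted artifact is in the model's context
    approved_by: str = ""  # human approver id; empty when nobody has approved

def authorize(call: ToolCall) -> tuple:
    """Return (allowed, reason) for a requested tool call."""
    if call.tool in READ_ONLY_TOOLS:
        return True, "read-only tool"
    if call.tool not in APPROVAL_REQUIRED_TOOLS:
        return False, "tool not on allowlist"
    if call.workflow != "change":
        return False, "write-capable tool requested from analysis workflow"
    if not call.context_trusted:
        return False, "untrusted artifact in context"
    if not call.approved_by:
        return False, "missing human approval"
    return True, "approved"
```

Returning a reason with every decision means the same gate doubles as a source for the replayable audit trail.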

Validation is the real hard part, how to avoid semantic drift

Even proponents of AI-assisted modernization implicitly acknowledge that the hardest part is not generating code—it’s ensuring the system remains correct under real workloads. The investor debate around “translation vs modernization” is a public signal that equivalence is not a given. (IBM Newsroom)

For security and reliability, equivalence must include invariants, not just outputs:

- totals reconcile across batch cycles

- idempotency holds for retries

- authorization decisions remain consistent

- exception paths are preserved or intentionally redesigned with sign-off

A robust pattern is parallel run with reconciliation:

- Mirror real input data into a controlled validation environment

- Run legacy and modernized components in parallel

- Compare outputs plus invariants and side effects

- Produce reconciliation reports as merge gates

If you cannot generate reconciliation evidence, you cannot credibly claim modernization is safe—especially when new APIs and service layers are introduced.
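A reconciliation report does not need heavy tooling to start. The sketch below compares per-record outputs from a legacy run and a modernized run and checks one invariant, that totals reconcile. The record keys, the `reconcile` name, and the report fields are hypothetical, and a real program would add invariants for idempotency, authorization decisions, and side effects.

```python
from decimal import Decimal

def reconcile(legacy: dict, modern: dict) -> dict:
    """Compare two runs that map record key -> Decimal amount and report differences."""
    shared = set(legacy) & set(modern)
    missing = sorted(set(legacy) - set(modern))   # produced by legacy only
    extra = sorted(set(modern) - set(legacy))     # produced by modernized only
    mismatched = sorted(k for k in shared if legacy[k] != modern[k])
    legacy_total = sum(legacy.values(), Decimal("0"))
    modern_total = sum(modern.values(), Decimal("0"))
    totals_reconcile = legacy_total == modern_total
    return {
        "missing_in_modern": missing,
        "extra_in_modern": extra,
        "mismatched": mismatched,
        "legacy_total": legacy_total,
        "modern_total": modern_total,
        "totals_reconcile": totals_reconcile,
        "passed": not (missing or extra or mismatched) and totals_reconcile,
    }
```

Using `Decimal` rather than floats avoids rounding noise being reported as drift. The `passed` flag is what a merge gate would consume, with the rest of the report archived as evidence.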

AI-assisted COBOL modernization almost always introduces new surfaces: web services, APIs, gateways, admin panels, integration endpoints, and orchestration tooling. Those surfaces are where exploitation usually happens, not inside COBOL syntax.

That’s where an evidence-first validation workflow fits naturally. Penligent focuses on turning “we think it’s secure” into “we verified the reachable paths and have reproducible evidence and reporting.” For modernization programs, the most valuable output is not a dashboard—it’s defensible proof you can hand to engineering leadership, auditors, or incident responders.

Two Penligent resources that align with this boundary-focused view:

- The 2026 Ultimate Guide to AI Penetration Testing, The Era of Agentic Red Teaming https://www.penligent.ai/hackinglabs/the-2026-ultimate-guide-to-ai-penetration-testing-the-era-of-agentic-red-teaming/

- AI Agents Hacking in 2026, Defending the New Execution Boundary https://www.penligent.ai/hackinglabs/ai-agents-hacking-in-2026-defending-the-new-execution-boundary/

References

- Anthropic, How AI helps break the cost barrier to COBOL modernization (Claude)

- Reuters, IBM posts steepest daily drop since 2000 after Anthropic says AI can modernize COBOL (Reuters)

- Barron’s, IBM stock had its worst day in 25 years over AI disruption fears (Barron’s)

- IBM Newsroom, Lost in Translation, what the AI code debate keeps getting wrong (IBM Newsroom)

- Fast Company, IBM stock falls after Anthropic says AI can modernize COBOL (Fast Company)

- TechRadar Pro, coverage on AI changing COBOL modernization mapping workflows and data flows (TechRadar)

- CISA alert on XZ Utils supply chain compromise, CVE-2024-3094 (Futurum)

- CISA guidance on Apache Log4j vulnerability, CVE-2021-44228 (The Economic Times)

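- Penligent, The 2026 Ultimate Guide to AI Penetration Testing, The Era of Agentic Red Teaming https://www.penligent.ai/hackinglabs/the-2026-ultimate-guide-to-ai-penetration-testing-the-era-of-agentic-red-teaming/

- Penligent, AI Agents Hacking in 2026, Defending the New Execution Boundary https://www.penligent.ai/hackinglabs/ai-agents-hacking-in-2026-defending-the-new-execution-boundary/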