Git worktrees solved one problem and exposed another. They let you keep multiple branches checked out at once in separate directories while still sharing one repository. That is exactly the shape modern coding agents want. OpenAI’s Codex uses worktrees for independent tasks, including dedicated background worktrees for automations, and Claude Code documents a --worktree mode for parallel tasks. Git itself is explicit about the boundary: linked worktrees share the repository and differ mainly in per-worktree files such as HEAD and index. That is enough to stop one task from trampling another task’s files. It is not enough to stop one task’s runtime from trampling another task’s ports, databases, caches, secrets, or test state. (Git)

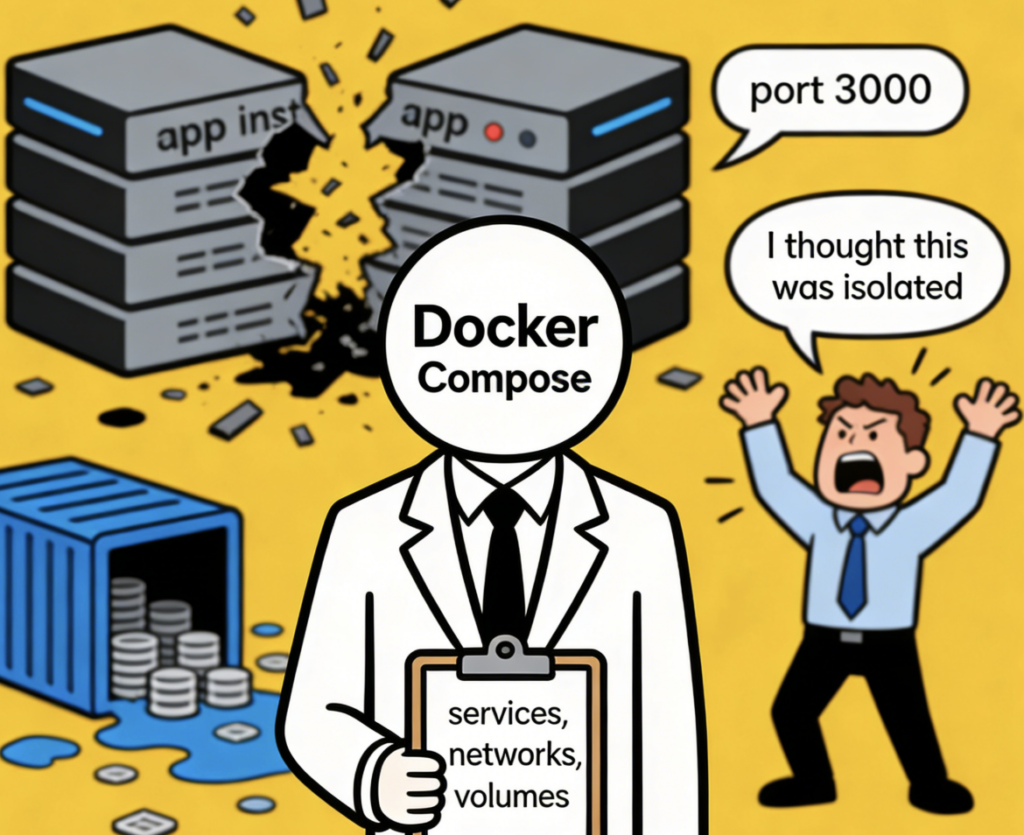

That gap used to be annoying. In 2026 it is becoming structural. The moment one machine is running multiple agent-assisted branches side by side, “just use worktrees” stops being a complete answer. Two branches can each have their own code checkout and still both expect localhost:3000. Two branches can each have their own file tree and still both try to write migrations into the same shared database. Two branches can each have clean Git history and still poison each other through bind-mounted host paths, reused browser state, or an over-helpful .env file copied into the wrong process. None of that is a Git problem. It is a runtime problem. The official Coasts docs frame the same issue directly: worktrees are strong at code isolation, but they do not solve runtime isolation by themselves. (Coasts)

That distinction matters even more once AI agents enter the loop. A coding agent does not stop at file edits. It reads code, runs commands, executes tests, watches logs, and often keeps iterating until it gets a runtime signal back. If the runtime underneath it is not isolated by worktree, the agent’s view of cause and effect becomes unreliable. A test can fail because the branch is wrong, because the port was already taken, because a stale database volume leaked in, or because another agent mutated the same host-mounted path five seconds earlier. At that point you are not debugging your branch. You are debugging interference. The cost is not just wasted time. It is false confidence. (OpenAI Developers)

Git worktrees became an agent primitive

Git worktrees used to be a power-user feature. They are now becoming an execution primitive for AI coding systems. Git’s own documentation describes git worktree add as creating a new working tree associated with the same repository, sharing everything except per-worktree files. Claude Code tells users to use worktrees for parallel tasks so separate Claude instances do not interfere with one another. OpenAI’s Codex app goes further and says worktrees let Codex run multiple independent tasks in the same project without conflicting with ongoing work. That is not an edge case anymore. It is the workflow surface. (Git)

This is a subtle but important shift. In the pre-agent era, a developer might occasionally spin up a second worktree to hotfix production while keeping unfinished feature work intact. The runtime implications were easy to ignore because parallel execution was sporadic. In the agent era, parallel execution is the point. One task reviews auth code, another task experiments with a migration, another task tries to reproduce a failing test, and a fourth task opens a speculative refactor branch. The Git layer is now naturally parallelized. The runtime layer often is not. (OpenAI Developers)

That mismatch is why teams feel friction the first time they seriously try parallel AI agent development on one laptop. Everything looks correct at the repository layer. Every task has its own branch, its own directory, and its own diff. Then the first process binds to the familiar host port and all the others start failing in ways that look random. Or a migration from one task updates shared state and a second task reads it as if it were part of its own branch. Or a task succeeds because another task already warmed the cache and hid a missing dependency. Parallel code became easy before parallel runtime did. (Coasts)

The important point is not that worktrees are insufficient. They are indispensable. The important point is that they solve only one of the two parallelism problems. They isolate code state. They do not isolate execution state. Once you accept that, the rest of the design space becomes much clearer. You stop asking “how do I make worktrees do everything” and start asking “what runtime control layer belongs beside worktrees.” That question is where tools like Coasts become relevant, but the question comes first. (Git)

Code isolation is not runtime isolation

A linked worktree gives you a separate checked-out branch and separate per-worktree Git metadata. It does not create a separate network namespace on your host. It does not create a separate Postgres data directory. It does not rewire your browser profile, shell history, Docker daemon, or long-running development services. If a process outside Git can affect your result, worktrees do not automatically isolate it. (Git)

That sounds obvious once stated plainly, but most runtime bugs in parallel development come from forgetting exactly this boundary. Developers see two directories and intuitively assume they have two environments. They do not. They have two file trees pointing at one larger machine state. If both trees start the same multi-service stack, the host still has only one port 3000, one port 5432, one Docker daemon unless you isolate it, one set of default shell environment variables, and one host filesystem that bind mounts can modify. Some resources are naturally separated by path. Others are shared unless you intervene. (Docker Documentation)

The fastest way to make this concrete is to separate the problem into domains.

| Domain | What worktrees isolate | What worktrees do not isolate | Why it matters |
|---|---|---|---|
| Git state | Branch checkout, per-worktree HEAD, index, working directory | Shared repository object database | Safe parallel edits, shared repo history |
| Host ports | Nothing | Any process that binds the same host port | Two branches cannot both own localhost:3000 |
| Container network | Nothing by themselves | Whatever Docker or another runtime decides | Service discovery may be isolated or shared depending on runtime setup |
| Persistent data | Nothing by themselves | Volumes, bind mounts, databases, caches | Test results and migrations can bleed across branches |
| Secrets and env | Nothing by themselves | Host env vars, copied files, mounted credentials | Wrong branch can see wrong credentials |
| Browser and auth state | Nothing | Same browser profile or cookies | One task can silently inherit another task’s session |
| Logs and observability | Nothing | Shared stdout, shared Docker daemon, shared background processes | Root-cause analysis becomes ambiguous |

The nuance matters. Some runtime state can be separated with naming conventions or good Compose hygiene. Some cannot. Some is easy to isolate and expensive to share. Some is easy to share and expensive to isolate. That is why a serious runtime-isolation design never treats the whole environment as one blob. It needs explicit policy for ports, service lifecycle, persistent data, and secrets. Coasts’ docs make exactly this kind of distinction when they describe ports, assign behavior, shared services, volume topology, and secret injection as separate concerns rather than one giant switch. (Coasts)

There is also a human factor here. Once teams begin to rely on AI agents, ambiguity becomes more expensive because agents are fast enough to turn a small misunderstanding into a pile of artifacts. A human notices “oh, I think I accidentally hit the wrong database” and pauses. An agent keeps going until an error or a guardrail stops it. That means runtime isolation is not just about convenience. It is about preserving the integrity of feedback loops. A system that can edit code in parallel needs runtime signals that are attributable to the right branch and the right execution context. (OpenAI Developers)

Where Docker Compose still collides

Docker Compose solves a different problem. It defines and manages multi-container applications through a single configuration file covering services, networks, and volumes. By default, Compose creates a network for an app and makes services discoverable on that network by service name. The network name is derived from the project name, and the project name usually comes from the directory name unless it is overridden. That means Compose already gives you some namespacing out of the box. If you launch the same stack from two different worktree directories, you often get two different project names, two different default networks, and two separate sets of default-named resources. That part is real and important. (Docker Documentation)
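To make that default namespacing concrete, here is a rough Python approximation of how a worktree directory name becomes a Compose project name and then resource names. The normalization rule (lowercase, keep only letters, digits, `-` and `_`, strip leading `-`/`_`) is an approximation of Compose v2 behavior, not a copy of its code; the `<project>_default` network name and `<project>_<volume>` volume prefix follow Compose's documented defaults.

```python
import re
from pathlib import PurePosixPath


def compose_project_name(worktree_dir: str) -> str:
    """Approximate Compose v2's default project name for a directory.

    Assumption: lowercase the base name, drop characters outside
    [a-z0-9_-], then strip any leading '-' or '_'.
    """
    base = PurePosixPath(worktree_dir).name.lower()
    kept = "".join(re.findall(r"[a-z0-9_-]", base))
    return kept.lstrip("-_")


def default_resource_names(worktree_dir: str, volumes: list[str]) -> dict[str, str]:
    """Derive the default network and named-volume names for one project."""
    project = compose_project_name(worktree_dir)
    names = {"network": f"{project}_default"}
    for vol in volumes:
        names[f"volume:{vol}"] = f"{project}_{vol}"  # project-prefixed, so per-worktree
    return names


for path in ["../shop-auth", "../shop-search"]:
    print(default_resource_names(path, ["pgdata"]))
```

Two worktrees with different directory names therefore get different networks and volumes for free. Nothing in this derivation touches published host ports, which is where the next collision comes from.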

But that still leaves several classes of collision untouched. The obvious one is host port publishing. If two copies of the stack both declare 3000:3000, the first one wins and the second one fails, regardless of how neatly Compose named its internal network. The same is true for any other published host port such as 5432, 6379, or 8080. Compose can help you describe the stack. It cannot make one host port belong to two active instances at the same time. (Docker Documentation)
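The "first one wins" rule is enforced by the operating system, not by Compose. A minimal Python sketch shows it with plain sockets: one listener takes a port, and a second bind to the same address and port fails. It asks the OS for a free port (port 0) instead of hard-coding 3000 so it can run anywhere.

```python
import errno
import socket


def try_second_bind() -> tuple[int, int]:
    """Bind a listener, then attempt a second bind on the same host port.

    Returns (port, errno of the second bind), or (port, 0) if it unexpectedly succeeded.
    """
    first = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    second = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        first.bind(("127.0.0.1", 0))  # port 0: let the OS choose a free port
        first.listen()
        port = first.getsockname()[1]
        try:
            second.bind(("127.0.0.1", port))  # same address, same port
            return port, 0
        except OSError as exc:
            return port, exc.errno
    finally:
        second.close()
        first.close()


port, err = try_second_bind()
print(port, errno.errorcode.get(err, err))
```

A published `3000:3000` in two running stacks is the same situation: Docker tries to bind host port 3000 twice, and the second stack cannot start.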

A second class of collision comes from how data is mounted or shared. Docker’s documentation is explicit that bind mounts map host directories into containers and that bind mounts have write access to host files by default. That is great for hot reload and terrible for pretending the host no longer matters. Named volumes behave differently: they are managed by Docker and are generally the preferred persistence mechanism for container data. But even there, the isolation story depends on naming, topology, and whether you deliberately use external volumes. Compose gives you mechanisms, not a worktree-aware policy. (Docker Documentation)
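A small audit makes the distinction reviewable. The Python sketch below classifies short-syntax `volumes:` entries (`SOURCE:TARGET[:MODE]`) as bind mounts, named volumes, or anonymous volumes, and flags the ones that can carry state across branches. It assumes the Compose convention that a source starting with `.`, `/` or `~` is a host path; the `external` set is passed in by hand rather than read from a real Compose file, and Windows drive-letter paths are not handled.

```python
from dataclasses import dataclass


@dataclass
class MountInfo:
    spec: str
    kind: str                # "bind", "named" or "anonymous"
    writable: bool           # False only when the mode contains "ro"
    cross_branch_risk: bool  # can this mount leak state between branches?


def classify_mount(spec: str, external: set[str]) -> MountInfo:
    parts = spec.split(":")
    if len(parts) == 1:
        # Only a container path: an anonymous volume, fresh per container.
        return MountInfo(spec, "anonymous", True, False)
    source = parts[0]
    mode = parts[2] if len(parts) > 2 else "rw"
    writable = "ro" not in mode.split(",")
    if source.startswith((".", "/", "~")):
        # Bind mount: the container can write into the host path unless ":ro" is set.
        return MountInfo(spec, "bind", writable, cross_branch_risk=writable)
    # Named volume: project-scoped by default, shared if declared external.
    return MountInfo(spec, "named", writable, cross_branch_risk=source in external)


for spec in ["./:/app", "pgdata:/var/lib/postgresql/data", "./config:/etc/app:ro", "cache:/cache"]:
    print(classify_mount(spec, external={"cache"}))
```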

A third class of collision is logical rather than technical. Even when Compose names resources separately, teams still have to decide which resources should remain isolated and which should be shared. Maybe each branch needs its own application services but can reuse a common Redis instance. Maybe each branch needs a fresh Postgres but can share a local package proxy. Maybe integration tests should hit branch-local backends but a shared mock third-party service. Compose does not know any of that. It will happily start whatever you define. The policy lives elsewhere. Coasts exposes that policy surface explicitly through ports, shared services, volumes, and assign strategies. That is the difference between describing containers and orchestrating branch-aware runtimes. (Coasts)

A minimal Compose file shows the shape of the problem:

```yaml
services:
  web:
    build: .
    ports:
      - "3000:3000"
    depends_on:
      - db
    volumes:
      - ./:/app
  db:
    image: postgres:16
    environment:
      POSTGRES_PASSWORD: dev
    ports:
      - "5432:5432"
    volumes:
      - pgdata:/var/lib/postgresql/data

volumes:
  pgdata:
```

This stack is completely normal for local development. It is also exactly the kind of stack that starts hurting the moment you try to run multiple branch-specific instances side by side. The host ports are rigid, the source tree is bind-mounted from the host, and the data strategy is implicit rather than worktree-aware. Compose did its job. The rest of the problem is still yours. (Docker Documentation)
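A common hand-rolled workaround is to parameterize the published ports with Compose variable interpolation, for example `"${WEB_PORT:-3000}:3000"`, and give each worktree its own `.env` file, which Compose reads from the project directory. The Python sketch below derives a stable per-worktree port slot from the directory name. The variable names `WEB_PORT` and `DB_PORT`, the hashing scheme, the 100-port stride and the slot count are illustrative assumptions.

```python
import hashlib
from pathlib import PurePosixPath

BASE_PORTS = {"WEB_PORT": 3000, "DB_PORT": 5432}  # the defaults in the Compose file
STRIDE = 100  # hypothetical gap between worktree slots
SLOTS = 50    # hypothetical number of slots; slot 0 is left for the usual ports


def port_slot(worktree_dir: str) -> int:
    """Map a worktree directory name to a deterministic slot in 1..SLOTS."""
    name = PurePosixPath(worktree_dir).name.encode()
    digest = hashlib.sha256(name).digest()
    return int.from_bytes(digest[:4], "big") % SLOTS + 1


def env_file_for(worktree_dir: str) -> str:
    """Render the .env contents that shift every published port by the slot offset."""
    offset = port_slot(worktree_dir) * STRIDE
    lines = [f"{var}={base + offset}" for var, base in BASE_PORTS.items()]
    return "\n".join(lines) + "\n"


print(env_file_for("../shop-auth"), end="")
```

This works up to a point, but it illustrates the problem rather than solving it: two names can still hash to the same slot, and nothing records which instance is the "normal" one on 3000.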

The following matrix is a more honest way to think about it.

| Resource | Compose default behavior | When it still collides | Typical result |
|---|---|---|---|
| Service-to-service networking | Namespaced by Compose project | If you need predictable host-facing access across multiple instances | Confusion over which instance is “the one on localhost” |
| Host ports | Explicitly published to host | Any duplicate published port across running stacks | Immediate startup failure or accidental reuse |
| Named volumes | Often project-scoped by naming | If marked external, reused intentionally, or project names are forced to match | Shared or polluted persistent state |
| Bind mounts | Direct host path mapping | Whenever two instances point at the same host path or write to sensitive host locations | Cross-branch file mutation and host coupling |
| Environment variables | Inherited from shell or env files | When different branches need different credentials or feature flags | Wrong runtime behavior with no code diff causing it |
| Build context | Taken from the selected path | When malicious or unexpected build instructions enter the workflow | Unsafe host interaction during build, especially if trust is assumed |

What most teams need is not “more Compose.” They need a control plane above Compose that understands worktrees, preserves parallel access, and decides what is isolated, what is shared, and what counts as the currently active canonical instance. That is the gap this article is really about. (Docker Documentation)

What runtime isolation must actually control

A serious runtime-isolation layer for worktrees should do at least five things well.

First, it must separate parallel access from canonical access. In plain language, every running instance needs an address you can always reach, but one instance also needs to be able to pretend it is the “normal localhost” environment. Coasts formalizes this through dynamic ports and canonical ports. Every declared port gets a dynamic host port so every instance stays reachable. The checked-out instance also gets the canonical port forwarded to the host. That model is more important than it may look. It is what lets multiple instances exist in parallel without giving up the habit of testing one “main” instance on the familiar local ports. (Coasts)

Second, it must control service behavior when code changes or branch assignment changes. Not every service reacts to code changes the same way. A frontend with file watchers can usually hot-reload. A backend that reads code at startup may need a restart. A service whose code is baked into the image may require a rebuild. A database often needs none of the above and should be left alone. Coasts exposes this directly through assign strategies such as none, hot, restart, and rebuild. Even if you never use Coasts itself, that distinction is the right mental model. Runtime isolation is not only about starting duplicate stacks. It is about updating the right subset of a stack when the branch changes. (Coasts)
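The assign idea reduces to a per-service lookup with a default. In this hypothetical Python sketch the strategy names mirror the four Coasts exposes (none, hot, restart, rebuild), but the `reassign_plan` function and its return shape are illustrative assumptions, not Coasts' behavior.

```python
from enum import Enum


class Assign(Enum):
    NONE = "none"        # leave the service alone (databases, caches)
    HOT = "hot"          # rely on the service's own file watcher
    RESTART = "restart"  # code is read at startup, so restart the process
    REBUILD = "rebuild"  # code is baked into the image, so rebuild it


def reassign_plan(policy: dict[str, str], services: list[str],
                  default: str = "none") -> dict[str, list[str]]:
    """Group services by the action a branch reassignment should trigger."""
    plan: dict[str, list[str]] = {a.value: [] for a in Assign}
    for svc in services:
        strategy = Assign(policy.get(svc, default))  # ValueError on unknown names
        plan[strategy.value].append(svc)
    return plan


plan = reassign_plan({"web": "hot", "api": "restart"}, ["web", "api", "postgres", "redis"])
print(plan)
```

Making the default `none` is a deliberate choice in this sketch: a service nobody classified is left running rather than restarted, so forgetting an entry costs staleness, not lost data.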

Third, it must expose a deliberate data topology. Some persistent state should be isolated per instance. Some should be shared because duplication is wasteful or harmful. Docker’s own documentation distinguishes bind mounts, which share host paths directly, from volumes, which Docker manages. A worktree-aware runtime layer needs to sit above those primitives and turn them into policy: isolate this database, share that cache, keep this secret ephemeral, persist that daemon state. Coasts’ docs treat volume topology and shared services as first-class concepts, which is exactly what you want in this category. (Docker Documentation)
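One way to turn that into policy is a per-resource table that says whether state is isolated per instance or shared, with volume names derived from it. The Python sketch below is hypothetical: the `isolated`/`shared` labels, the naming scheme and the resource names are illustrative, not Coasts' volume topology format.

```python
from typing import Literal

Scope = Literal["isolated", "shared"]


def volume_names(project: str, instance: str, topology: dict[str, Scope]) -> dict[str, str]:
    """Map each persistent resource to the Docker volume name an instance should mount."""
    names = {}
    for resource, scope in topology.items():
        if scope == "isolated":
            names[resource] = f"{project}-{instance}-{resource}"  # private to this instance
        elif scope == "shared":
            names[resource] = f"{project}-shared-{resource}"  # deliberately reused
        else:
            raise ValueError(f"unknown scope {scope!r} for {resource}")
    return names


topology: dict[str, Scope] = {"pgdata": "isolated", "pkg-cache": "shared"}
for inst in ("auth-1", "search-1"):
    print(inst, volume_names("shop-api", inst, topology))
```

The point is not the naming scheme but that "shared" becomes an explicit, reviewable decision instead of an accident of two stacks using the same volume name.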

Fourth, it must handle secrets as part of the environment, not as an afterthought. Coasts’ secret model is useful here because it is explicit: secrets are extracted from the host, encrypted at rest in a local keystore, and injected into instances as environment variables or files. That is not the only possible design, but it captures the right principle. In branch-parallel local development, the question is not merely “can the app start.” The question is “which credentials exist in which runtime, in which form, with which lifetime.” The moment that is vague, branches start inheriting trust they did not mean to have. (Coasts)
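The extract-then-inject step can be modeled as a small Python function. This sketch covers only the mapping, not encryption at rest: it reads declared values from a supplied host environment (in real use, `dict(os.environ)`) and produces environment variables or files for one instance. The `env:NAME` form matches the inject strings in the Coastfile example in this article; the `file:/path` form and the `SecretSpec` type are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SecretSpec:
    var: str     # host environment variable to extract
    inject: str  # "env:NAME", or "file:/path" (hypothetical form)


def materialize(specs: list[SecretSpec],
                host_env: dict[str, str]) -> tuple[dict[str, str], dict[str, str]]:
    """Return (env vars, files) for one instance; fail loudly on a missing secret."""
    env: dict[str, str] = {}
    files: dict[str, str] = {}
    for spec in specs:
        if spec.var not in host_env:
            raise KeyError(f"host secret {spec.var} is not set")
        kind, _, target = spec.inject.partition(":")
        if kind == "env":
            env[target] = host_env[spec.var]
        elif kind == "file":
            files[target] = host_env[spec.var]
        else:
            raise ValueError(f"unsupported inject target {spec.inject!r}")
    return env, files


specs = [SecretSpec("APP_ENV", "env:APP_ENV"),
         SecretSpec("DB_PASSWORD", "env:DATABASE_PASSWORD")]
host = {"APP_ENV": "dev", "DB_PASSWORD": "s3cret", "AWS_SECRET_ACCESS_KEY": "x"}
env, files = materialize(specs, host)
print(sorted(env))
```

Note that `AWS_SECRET_ACCESS_KEY` never reaches the instance: only declared secrets cross the boundary, which is the property that keeps a branch from inheriting trust it did not ask for.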

Fifth, it must give humans an observability surface. Parallel worktree runtimes are easy to create and hard to reason about when something breaks. A CLI alone can work, but a system becomes much easier to operate when you can see projects, instances, statuses, port mappings, logs, volumes, and secrets metadata in one place. Coasts’ local UI, Coastguard, is built around exactly that problem. It runs locally, is launched from the CLI, and presents instance state, port mappings, logs, and metadata. Again, the specific implementation is less important than the design lesson: once you parallelize runtime, visibility stops being a nicety. It becomes part of correctness. (Coasts)

Coasts as a concrete model

Coasts is useful to analyze because it is unusually direct about the problem it is solving. The project describes itself as localhost service isolation and orchestration for Git worktrees. Its GitHub README says it is a CLI tool with a local observability UI for running multiple isolated instances of a full development environment on one machine. The docs emphasize that it is built for local development, not as a hosted cloud service, and that it works with any agentic coding workflow that uses worktrees without requiring special harness-side configuration. (GitHub)

That framing already sets it apart from several adjacent categories. It is not just “Git worktree helpers.” It is not just “Docker Compose but again.” And the docs explicitly say it is not dev containers either. Their distinction is important: dev containers generally mount an IDE into a single containerized workspace, while Coasts are headless and optimized for multiple isolated, worktree-aware runtime environments running in parallel. That is a narrow claim, but a valuable one. It is close to how many AI coding systems actually work. (Coasts)

Coasts puts a Coastfile at the root of the repository as the configuration layer. The docs describe this as a TOML file that tells Coast how to build and run isolated development environments, including what services to run, what ports to forward, how data is handled, and how secrets are managed. The minimum Coastfile can be almost empty, but most real projects either point it at an existing Compose file or define bare services directly. That “works with your current setup” design is reflected in the README and the docs: compose = "./docker-compose.yml" is a first-class path, and if you omit compose, you can still use bare services or interact with the container directly via coast exec. (Coasts)

A representative configuration looks like this:

```toml
[coast]
name = "shop-api"
compose = "./docker-compose.yml"
primary_port = "web"

[ports]
web = 3000
api = 8080
postgres = 5432
redis = 6379

[assign]
default = "none"

[assign.services]
web = "hot"
api = "restart"

[secrets.app_env]
extractor = "env"
var = "APP_ENV"
inject = "env:APP_ENV"

[secrets.db_password]
extractor = "env"
var = "DB_PASSWORD"
inject = "env:DATABASE_PASSWORD"
```

This example is not interesting because the syntax is exotic. It is interesting because the configuration surface mirrors the actual problem. The project name identifies the runtime family. The Compose file imports the existing stack. The ports block says which host-facing ports matter. The assign policy admits that services react differently to branch changes. The secrets block makes environment injection explicit rather than magical. If you compare that to the usual pile of shell aliases and half-documented local conventions, the value is not abstraction for its own sake. The value is that the runtime policy becomes reviewable. (Coasts)

The ports model is one of the strongest ideas in the system. The docs say every instance gets a dynamic port in a high range for each declared port, and the checked-out instance also receives the canonical port forwarded to the host. That gives you two separate invariants: every instance stays reachable, and one instance can still behave like the “default local environment.” In practice that means you can inspect branch A and branch B in parallel without giving up the muscle memory of visiting the active branch on the port your app always used. The docs also expose a primary_port concept for quick links, which reinforces that this is about operating real parallel instances, not merely naming them. (Coasts)

The branch-switch behavior is similarly pragmatic. Coasts’ assign configuration lets you decide, service by service, what happens when you reassign a running instance to another worktree: do nothing, hot-reload, restart, or rebuild. That matters because in real stacks the right answer is almost never uniform. Databases and caches often should not reset on every branch reassignment. Frontend and backend services often should. Some services only need restart; others need a full image rebuild because the code is baked into the image. The value here is not merely automation. It is operational specificity. (Coasts)

Coasts also leans into a mixed human-and-agent workflow. The overview says you can containerize an agent, but in many cases you do not actually need to. Because Coasts shares the filesystem with the host through a shared volume mount, the docs recommend running the coding agent on the host and using coast exec for runtime-heavy tasks such as integration tests. The separate agent-shell docs make the same point more strongly: in most workflows, you do not need to containerize the coding agent. That design choice lines up with how many teams actually want to work. They want the agent close to their normal tools, but they want the runtime state behind the agent to be branch-aware and reproducible. (Coasts)

There is a real observability story too. Coastguard, the local web UI, runs on port 31415 and shows projects, running instances, checkout state, port mappings, logs, runtime stats, image artifacts, volumes, and secret metadata. That is not a toy add-on. Once you have three or four runtimes alive at once, operational visibility is part of whether the system remains usable. If you cannot answer “which branch is on the canonical port right now,” “which instance owns this log stream,” or “which volume belongs to which instance,” the whole setup loses trust fast. (Coasts)

It is also worth noting how seriously the docs take multi-harness workflows. The overview says Coasts is harness-agnostic, and the docs include surfaces for Codex, Claude Code, Conductor, Cursor, T3 Code, and Shep. The multiple-harness guidance even recommends a shared /coasts workflow plus harness-specific always-on rules. That is a small but revealing detail. It shows the project is not pretending the agent layer will standardize anytime soon. Instead, it assumes worktrees are the common denominator and builds the runtime layer around that. (Coasts)

A working example with Git worktrees, Docker Compose, and Coasts

A typical parallel setup starts with Git, not with the runtime. That part is still simple.

```bash
git worktree add ../shop-auth -b feature/auth-rewrite
git worktree add ../shop-search -b fix/search-timeout
git worktree add ../shop-sec -b test/security-regression
```
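To check from a script which worktrees exist and which branch each holds, `git worktree list --porcelain` prints one blank-line-separated stanza per worktree. A minimal Python parser is sketched below; it runs against a captured sample rather than calling git (in practice you would pass it the `stdout` of `subprocess.run(["git", "worktree", "list", "--porcelain"], capture_output=True, text=True, check=True)`), and the paths and hashes in the sample are made up.

```python
def parse_worktrees(porcelain: str) -> list[dict[str, str]]:
    """Parse `git worktree list --porcelain` output into one dict per worktree."""
    worktrees: list[dict[str, str]] = []
    current: dict[str, str] = {}
    for line in porcelain.splitlines():
        if not line:  # a blank line ends a stanza
            if current:
                worktrees.append(current)
                current = {}
            continue
        key, _, value = line.partition(" ")
        current[key] = value  # flag lines such as "detached" or "bare" map to ""
    if current:
        worktrees.append(current)
    return worktrees


SAMPLE = """worktree /home/dev/shop
HEAD 1111111111111111111111111111111111111111
branch refs/heads/main

worktree /home/dev/shop-auth
HEAD 2222222222222222222222222222222222222222
branch refs/heads/feature/auth-rewrite

worktree /home/dev/shop-search
HEAD 3333333333333333333333333333333333333333
branch refs/heads/fix/search-timeout
"""

for wt in parse_worktrees(SAMPLE):
    print(wt["worktree"], wt.get("branch", "(detached)").removeprefix("refs/heads/"))
```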

Git now gives you three linked worktrees, each with its own branch-specific working directory and per-worktree metadata. That is the code layer handled correctly. (Git)

The next step is to build the runtime layer once and then create worktree-aware instances. Coasts’ docs describe coast run <name> as creating a new instance from the latest build, and their harness guidance shows that coast run <name> -w <worktree> can create and assign a worktree in one step for certain harnesses. A simple workflow looks like this:

```bash
coast build
coast run auth-1 -w ../shop-auth
coast run search-1 -w ../shop-search
coast run sec-1 -w ../shop-sec
coast ls
coast ui
```

At that point you want every instance reachable without stepping on the others. That is where the dynamic-port model matters. Each instance gets dynamic ports for the declared services, so all three can remain live together. The instance you want to treat as the “main local environment” can then be checked out or assigned onto the canonical ports. The exact mental model matters more than the exact command sequence: parallel access stays stable, canonical access is movable. (Coasts)

Suppose auth-1 is the branch you want on your familiar browser bookmarks and local API clients. You make that the checked-out instance and leave the others on their dynamic ports. Then you use coast exec for branch-specific runtime work:

```bash
coast exec auth-1 -- npm test
coast exec search-1 -- npm run test:integration
coast exec sec-1 -- pytest tests/security -q
```

This is a clean separation of concerns. The agent or operator can keep using normal host-side tools, while runtime-heavy work is pushed into the correct isolated instance. That is exactly the host-agent, isolated-runtime model the Coasts docs recommend. (Coasts)

Now the interesting part: switching behavior. Let’s say the auth rewrite branch changes only frontend and backend code, while the database schema stays stable. You do not want a full rebuild of Postgres every time you flip between adjacent worktrees for the auth and search tasks. The assign model exists for this reason. A reasonable policy often looks like this:

| Service | Good default assign action | Why |
|---|---|---|
| Postgres | none | Persistent data should stay stable unless schema policy says otherwise |
| Redis | none | Cache warm-up is expensive and often branch-agnostic |
| Frontend dev server | hot | File watching usually picks up branch-local code changes |
| API service | restart or rebuild | Depends on whether code is live-mounted or baked into image |
| Test runner image | rebuild | Often depends on branch-specific code copied into image |

This is the sort of table most teams never write down, and then they wonder why branch switching feels random. The value of a runtime-isolation layer is not only in the commands. It is in forcing these decisions to become explicit.

There is also a performance dimension. Coasts’ runtime docs say images are pre-cached on the host and loaded into the inner daemon, and the inner daemon’s state persists in a named volume so subsequent runs can skip image loading. That design is not glamorous, but it addresses one of the biggest practical objections to isolated runtimes: startup cost. A branch-parallel runtime system only survives daily use if spinning up another instance is cheap enough that engineers and agents will actually do it. (Coasts)

This is also the point where runtime isolation becomes useful for review, not just execution. If a regression appears only in search-1, you can inspect that instance’s logs, ports, and service status without disturbing auth-1. If a security test changes only one worktree’s environment, you can keep that evidence attributable to the correct branch. If an agent’s theory is wrong, you discard the instance instead of manually unwinding your entire local machine state. The engineering payoff is not abstract. It is fewer invisible dependencies between branches. (Coasts)

Security boundaries that still matter

The word “isolation” is dangerous because developers often hear it as “safe enough.” That is not what runtime isolation means here. A worktree-aware runtime layer is primarily an operational boundary. It reduces collisions, clarifies ownership, and makes feedback reproducible. It does not mean arbitrary repositories, arbitrary Dockerfiles, or arbitrary agent instructions are suddenly safe to execute. The boundary you built is only as strong as the trust model under it. (Coasts)

Coasts is admirably clear about one major part of that trust model. Today, the only production-tested runtime is Docker-in-Docker. The docs say the outer container is created from the docker:dind image with --privileged mode enabled, and the inner daemon runs the project’s Compose services. The same page notes that Sysbox would offer a better security posture in principle, but it is not wired into the daemon and does not work on macOS today; Podman support is likewise not yet connected end to end. That is not a criticism. It is a useful boundary marker. The current system is engineered for local development effectiveness first, not as a hardened sandbox for untrusted code. (Coasts)

Docker’s own storage documentation reinforces the same caution from another angle. Bind mounts expose host directories directly inside containers, and Docker states plainly that bind mounts have write access to the host filesystem by default. They are also strongly tied to host structure. That is powerful for hot reload and local iteration. It is exactly why you should not confuse “convenient development isolation” with “strong security containment.” If the host is the trust anchor and the container can write into host-mounted paths, then the security story is about controlled trust, not magic separation. (Docker Documentation)

The container ecosystem has already produced real examples of why that distinction matters. NVD describes CVE-2024-21626 in runc as a file-descriptor leak issue that could let a newly spawned container process end up with a working directory in the host filesystem namespace, enabling host filesystem access and a container escape in affected versions. Docker’s security advisory from the same disclosure cycle groups that issue with multiple BuildKit flaws. The lesson is not that local container workflows are futile. The lesson is that the runtime boundary depends on components that have had real escape-class bugs and therefore deserve the same patching discipline as any other security-critical layer. (NVD)

Build pipelines are part of the trust surface too. Docker’s official security announcements describe CVE-2024-23651 as a BuildKit race condition in which malicious build steps sharing cache mounts with subpaths could expose files from the host system to the build container. Docker’s workaround advice specifically says to avoid building malicious projects or untrusted Dockerfiles with the affected behavior until fixed versions are in use. This is highly relevant in the age of agentic coding because one common failure mode is to treat “local development branch” and “trusted build input” as synonyms. They are not synonyms. If an agent is pulling in untrusted projects, untrusted dependencies, or unreviewed Dockerfiles, the build surface itself becomes part of the threat model. (Docker Documentation)
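Patching discipline is easier to keep when it is a check rather than a memory. The Python sketch below compares reported versions against the first upstream releases that fixed the two CVEs above: runc 1.1.12 for CVE-2024-21626 and BuildKit 0.12.5 for CVE-2024-23651. Confirm those minimums against the current advisories before relying on them. Collecting the installed versions (for example from `runc --version` or `docker version`) is left out, and the inputs are hard-coded examples.

```python
MIN_PATCHED = {
    "runc": (1, 1, 12),      # CVE-2024-21626
    "buildkit": (0, 12, 5),  # CVE-2024-23651
}


def parse_version(text: str) -> tuple[int, ...]:
    """Turn '1.1.12' or 'v0.12.5' into a comparable tuple.

    Pre-release and build suffixes are dropped, so '1.1.12-rc1' counts as
    1.1.12; that is optimistic and worth tightening for real use.
    """
    core = text.strip().lstrip("v").split("-")[0].split("+")[0]
    return tuple(int(part) for part in core.split("."))


def unpatched(installed: dict[str, str]) -> list[str]:
    """Return components whose reported version is below the patched minimum."""
    return [name for name, minimum in MIN_PATCHED.items()
            if name in installed and parse_version(installed[name]) < minimum]


print(unpatched({"runc": "1.1.11", "buildkit": "v0.12.5"}))
```

Upstream version numbers are only a proxy: distribution packages sometimes backport fixes into older version strings, so a flagged component may need a second look rather than an automatic alarm.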

The AI layer adds another class of risk that runtime isolation alone cannot solve: prompt injection and instruction hijacking through content the agent reads. OpenAI’s official guidance argues that the goal should not be perfect classification of malicious inputs. The goal should be to constrain the impact even when manipulation succeeds. That framing matters because many agent workflows naturally ingest untrusted material: docs, issue threads, test outputs, HTML, generated files, even code comments. A worktree-aware runtime helps keep environments separate, but it does not make the content flowing through those environments trustworthy. (OpenAI)

Anthropic’s own public materials make the same problem concrete from a practitioner angle. The official claude-code-security-review repository warns that the action is not hardened against prompt injection attacks and should only be used to review trusted pull requests. That is an unusually direct statement, and teams should take it seriously. If an agent is allowed to read and reason over untrusted repository content with meaningful permissions, you must assume that hostile instructions can be embedded in that content. Runtime isolation can reduce blast radius between branches. It cannot replace trust gating. (GitHub)

A practical security model for local parallel runtimes therefore needs layered controls.

| Risk | Why it matters here | Practical mitigation |
|---|---|---|
| Host port collisions | Wrong service receives traffic or tests fail nondeterministically | Separate dynamic and canonical ports, make checkout state explicit |
| Persistent-state bleed | Branch A mutates data read by Branch B | Use per-instance data where required, document which services are intentionally shared |
| Bind-mount host exposure | Container can write into host paths by default | Prefer read-only mounts when possible, minimize trusted mount surface |
| Untrusted Docker build input | Build system may expose host data or run hostile logic | Patch BuildKit and runtime components, do not build untrusted projects casually |
| Container-runtime escape | The isolation layer depends on runtimes that have had escape-class flaws | Keep runtime stack updated, avoid overstating security guarantees |
| Prompt injection into agents | Agent may follow hostile embedded instructions | Constrain tool permissions, gate untrusted content, require review for sensitive actions |
| Secret oversharing | Wrong branch or wrong process gets credentials | Make secret injection explicit and per-runtime, audit what is injected where |
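The first mitigation, separating dynamic and canonical ports, is easier to reason about as explicit state. A minimal sketch follows, assuming a hypothetical `PortRegistry` in which every worktree gets its own dynamic host port per service and exactly one worktree at a time owns the canonical port. The 40000 base is an arbitrary assumption, and the registry does not check whether a port is actually free on the host.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class PortRegistry:
    """Hypothetical registry: per-worktree dynamic ports plus one explicit canonical owner."""
    canonical: Dict[str, int]            # service -> canonical port, e.g. {"web": 3000}
    base: int = 40000                    # start of the dynamic range (an assumption)
    assigned: Dict[Tuple[str, str], int] = field(default_factory=dict)
    checked_out: Optional[str] = None

    def dynamic_port(self, worktree: str, service: str) -> int:
        # Allocate sequentially from `base`; a (worktree, service) pair keeps its port.
        key = (worktree, service)
        if key not in self.assigned:
            self.assigned[key] = self.base + len(self.assigned)
        return self.assigned[key]

    def checkout(self, worktree: str) -> None:
        # Checkout state lives in one field instead of "whoever bound 3000 first".
        self.checked_out = worktree

    def route(self, service: str) -> Optional[Tuple[int, int]]:
        # (canonical port, dynamic port to forward to), or None if nothing is checked out.
        if self.checked_out is None:
            return None
        return self.canonical[service], self.dynamic_port(self.checked_out, service)
```

A real tool would pair this with a forwarder listening on the canonical port. The registry only models which worktree that forwarder should point at.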

There is an architectural lesson in all of this. Good local runtime isolation should make operational interference rare and visible. Good agent security should make adversarial manipulation expensive and bounded. Those are related goals, but they are not the same goal. Mixing them up produces exactly the kind of false comfort that leads teams to run too much trust through a local branch environment. (Docker Documentation)

Why this matters to security engineers and pentesters

This topic is not only for application developers. Security engineers hit the same problems in sharper form because their work depends on reproducibility. A code reviewer can tolerate a little local mess and still open a pull request. A pentester or security validator cannot tolerate ambiguity as easily because the output needs to stand up as evidence. If one branch reproduces an auth bypass only because it inherited the wrong cookie jar, wrong feature flag, or wrong database state from another local run, the result is not just noisy. It is misleading. (OpenAI)

Worktree-aware runtime isolation is useful whenever a team needs to compare patched and unpatched states, test dependency-version differences, reproduce multi-step findings, or keep separate auth contexts alive in parallel. A common security workflow looks like this: one branch adds logging or instrumentation, one branch holds the suspected vulnerable code path, one branch contains the patch candidate, and a fourth branch runs regression checks. Git worktrees make the file state manageable. Runtime isolation makes the evidence attributable. Without both, the validation loop gets muddy fast. (Git)
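Attributing evidence starts with knowing which worktrees exist. `git worktree list --porcelain` prints one stanza per worktree (a `worktree <path>` line followed by attributes such as `HEAD`, `branch`, or `detached`), with stanzas separated by blank lines. The minimal sketch below parses that output and derives a stable runtime id per worktree. The `wt-` naming scheme is a hypothetical convention.

```python
import hashlib
from typing import Dict, List


def parse_worktrees(porcelain: str) -> List[Dict[str, str]]:
    """Parse `git worktree list --porcelain` output into one dict per worktree."""
    worktrees: List[Dict[str, str]] = []
    current: Dict[str, str] = {}
    for line in porcelain.splitlines():
        if not line.strip():
            # A blank line ends the current stanza.
            if current:
                worktrees.append(current)
                current = {}
            continue
        key, _, value = line.partition(" ")
        current[key] = value  # attribute-only lines such as "bare" or "detached" map to ""
    if current:
        worktrees.append(current)
    return worktrees


def runtime_id(worktree_path: str) -> str:
    """Hypothetical naming scheme: a short, stable id derived from the worktree path."""
    return "wt-" + hashlib.sha256(worktree_path.encode()).hexdigest()[:8]
```

In practice the input would come from `subprocess.run(["git", "worktree", "list", "--porcelain"], capture_output=True, text=True, check=True).stdout`, and the id can then name that worktree’s containers, databases, and logs.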

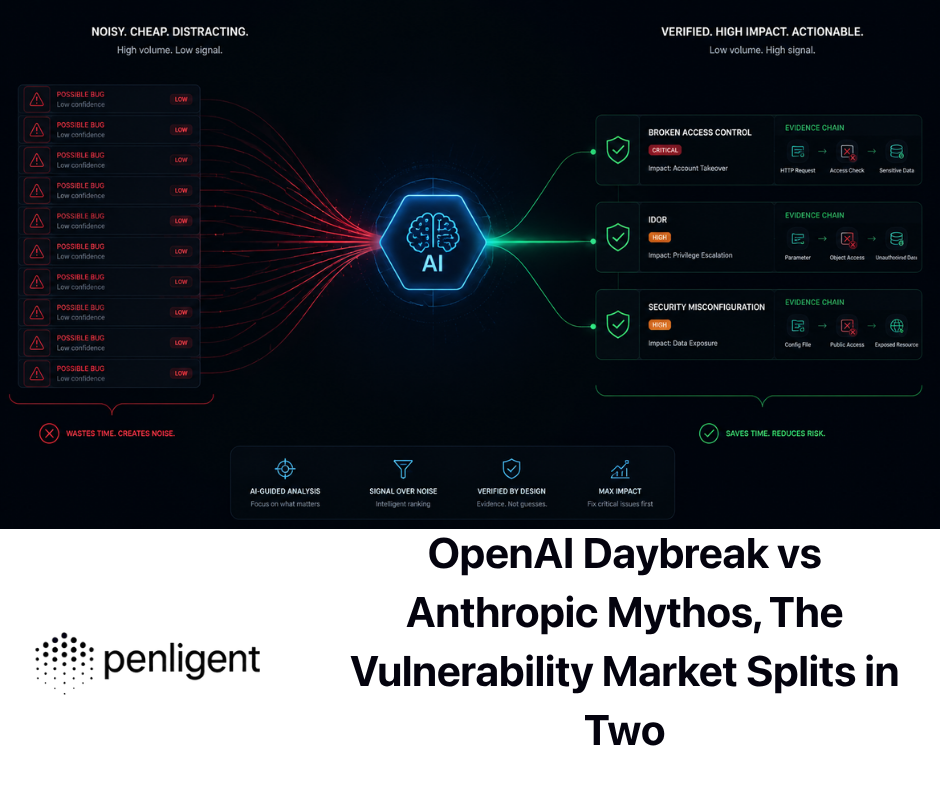

This is also where the connection to Penligent becomes practical rather than promotional. Penligent’s public homepage describes an AI pentesting engine designed for security engineers, pentesters, and red teams, with support for more than 200 tools, and its Kali quick-start docs position the workflow as a real operator environment rather than a chat-only toy. Its recent article on AI pentest copilots also emphasizes an evidence-first model in which the system should preserve context, gather proof, and distinguish plausible hypotheses from verified reality. That same evidence discipline is exactly why local runtime isolation matters in offensive testing. An agent that can orchestrate tools still benefits from a branch-clean, state-clean place to reproduce and retest what it thinks it found. (Penligent)

In practical terms, that means a team might use an agentic offensive workflow to map likely attack paths, draft retest logic, or coordinate tools, while using isolated local runtimes to hold separate app versions, dependency trees, or auth states stable enough for repeatable validation. The runtime layer is not the finding engine. It is part of the proof environment. For teams that care about traceable output rather than clever demos, that distinction is healthy. (Penligent)
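To make “part of the proof environment” concrete, a finding can carry a record of exactly which runtime produced it. The field names below are assumptions chosen for illustration. The idea is simply that two reproductions with different fingerprints were not run under the same conditions.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EvidenceContext:
    """Hypothetical record of the runtime a finding was reproduced in."""
    worktree: str
    commit: str
    runtime_id: str
    web_port: int
    database: str
    auth_profile: str  # a label for the cookie jar or token set used, never the secret itself

    def fingerprint(self) -> str:
        # Canonical JSON (sorted keys) so the same context always hashes the same way.
        blob = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(blob).hexdigest()[:16]
```

Attaching the fingerprint to each finding lets a reviewer see at a glance whether a retest really ran against the same branch, data, and auth state.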

When raw worktrees or plain Compose are enough

Not every repository needs this extra layer. If your project is a single lightweight service with no persistent local state and no need to run multiple live instances at once, raw worktrees may be enough. If your workflow is almost entirely remote and every branch already gets an ephemeral preview environment in CI or a cloud development platform, local worktree-aware runtime orchestration may be unnecessary overhead. If your agent mostly edits code and rarely executes tests or services, the runtime problem may simply not be large enough to justify another tool. Those are legitimate conclusions, not failures of imagination. (Git)

Plain Compose also remains valuable when your need is only to define and manage one multi-service stack at a time. Docker’s documentation is right to present Compose as the main way to control services, networks, and volumes from one YAML file. The mistake is not using Compose. The mistake is expecting Compose alone to become a branch-aware runtime orchestrator once your workflow shifts to parallel worktrees and agents. Many teams will be perfectly happy with Compose plus disciplined human habits. The problems start when parallelism becomes normal instead of occasional. (Docker Documentation)
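Compose does offer one building block for this: the project name (`-p` or `COMPOSE_PROJECT_NAME`) prefixes containers, networks, and named volumes, so two projects started from the same file do not share them. A minimal sketch, assuming one branch per worktree and a file named `compose.yaml`, derives a valid project name from each branch. It also shows the limit: published host ports are not namespaced by the project name and still collide.

```python
import re
from typing import List


def compose_project_name(branch: str) -> str:
    """Turn a branch name into a valid Compose project name: lowercase letters,
    digits, dashes, and underscores, starting with a letter or digit."""
    name = re.sub(r"[^a-z0-9_-]+", "-", branch.lower()).strip("-_")
    return name or "default"


def compose_up_command(branch: str, compose_file: str = "compose.yaml") -> List[str]:
    # -p namespaces containers, networks, and named volumes per branch.
    # It does NOT namespace published host ports; those still need a port policy.
    return ["docker", "compose", "-p", compose_project_name(branch),
            "-f", compose_file, "up", "-d"]
```

This is the piece Compose handles well. Deciding which branch gets the canonical ports, and which services are shared on purpose, is the policy layer it leaves to you.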

Dev containers can also be the right answer for a different shape of problem. Coasts’ docs are careful here, and they are right to be. Dev containers are usually about creating one containerized development workspace, often IDE-attached, with dependencies and tools preinstalled. That is a strong fit for standardizing one developer environment or one branch environment. It is not the same thing as running many headless, worktree-aware runtimes side by side and switching canonical ports between them. Once you see those as different jobs, the comparison becomes much less emotional. (Coasts)

So the right question is not “should everyone adopt runtime isolation?” The right question is “at what point does runtime interference cost more than runtime orchestration?” If the answer is “not yet,” keep things simpler. If the answer is “every day,” then refusing to add a control layer is just another form of complexity, only this time the complexity is hidden. That hidden complexity is usually worse.

Choosing between worktrees, Compose, dev containers, and runtime isolation

A clear comparison helps.

| Approach | What it solves well | What it does poorly | Best fit |
|---|---|---|---|
| Raw Git worktrees | Parallel code changes, branch separation, cheap local branching | Ports, data, secrets, runtime state remain unmanaged | Developers or agents doing mostly code-level work |
| Docker Compose alone | One multi-service app definition and lifecycle | No native worktree-aware canonical switching or service reassignment policy | Teams running one active local stack at a time |
| Dev containers | One standardized containerized workspace, often IDE-centered | Awkward for many branch-parallel live runtimes on one machine | Standardized single-workspace development |
| Worktree-aware runtime isolation layer | Parallel live instances, branch-aware runtime control, explicit port and state policy | Extra moving parts, more up-front configuration, trust model still matters | Agent-heavy or multi-branch local workflows with real runtime dependence |

This table is intentionally blunt. None of these approaches is “the winner.” They stack. In fact, the most mature teams often use several of them at once. Git worktrees remain the code primitive. Compose remains the service-definition primitive. A runtime-isolation layer becomes the parallel-execution primitive. Dev containers may still be used for standardizing a single baseline environment. The failure mode is not choosing one over all others. The failure mode is pretending one layer has already solved a problem owned by another. (Git)

There is a second decision axis too: human versus agent workflows. Human developers can often work around a certain amount of local ambiguity. They notice that a port is already taken, or that a browser session seems stale, or that the wrong logs are streaming. Agents are weaker at that kind of tacit correction. They are faster, more literal, and more dependent on clean feedback loops. That is why the same repository can feel manageable for one human and chaotic for three parallel agents. When you choose infrastructure for the next few years of work, it makes sense to optimize for the workflow that is getting denser, not the workflow that is fading. (OpenAI Developers)

A rollout checklist for teams

A useful rollout starts with inventory, not tooling. Before adopting anything, identify the resources your branches actually fight over today. Is it host ports? Is it one shared local database? Is it environment variables from a single .env file? Is it browser sessions? Is it a long-running Docker daemon with too much sticky state? Most teams already know the answer in anecdotal form. Write it down explicitly. That alone often clarifies whether the problem is worth solving with structure.
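For the port question specifically, the inventory can start with a quick check of which commonly contested ports are already bound. This is a heuristic sketch: a successful bind on 127.0.0.1 does not prove nothing else will claim the port later, and the port list you pass in is an assumption to replace with your own.

```python
import socket
from typing import Dict, List


def port_in_use(port: int, host: str = "127.0.0.1") -> bool:
    """Heuristic: True if binding the TCP port on this host address fails."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        try:
            s.bind((host, port))
        except OSError:
            return True
        return False


def inventory(ports: List[int]) -> Dict[int, bool]:
    # Snapshot of which contested dev ports are already taken right now.
    return {p: port_in_use(p) for p in ports}
```

Running something like `inventory([3000, 5432, 6379, 8080])` while two branches are up turns “ports sometimes clash” into a concrete list.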

Next, separate your services into three buckets: must isolate, may share, and should stay on the host. For many full-stack applications, application services land in must isolate, databases and caches may vary by test policy, and certain tooling or package caches may share. The right answer is project-specific, but the categories are stable. If you skip this step, your runtime layer will become a machine that duplicates everything by default or shares everything by accident. Both are bad.
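The three buckets can be written down as data so the decision is explicit rather than accidental. In the sketch below, the service names and their classification are hypothetical (this example happens to isolate its database), and `unclassified` exists to force a decision for any service nobody has bucketed yet.

```python
from enum import Enum
from typing import Dict, List


class Bucket(Enum):
    ISOLATE = "must_isolate"
    SHARE = "may_share"
    HOST = "stay_on_host"


# Hypothetical classification for one full-stack app; yours will differ.
POLICY: Dict[str, Bucket] = {
    "web": Bucket.ISOLATE,
    "api": Bucket.ISOLATE,
    "postgres": Bucket.ISOLATE,     # this example's test policy isolates the database
    "redis": Bucket.SHARE,
    "package-cache": Bucket.SHARE,
    "agent-cli": Bucket.HOST,
}


def plan(worktree_id: str, policy: Dict[str, Bucket]) -> Dict[str, str]:
    """Resolve each service to the instance name a given worktree should use."""
    out: Dict[str, str] = {}
    for service, bucket in policy.items():
        if bucket is Bucket.ISOLATE:
            out[service] = f"{service}-{worktree_id}"   # one instance per worktree
        elif bucket is Bucket.SHARE:
            out[service] = f"{service}-shared"          # one instance, shared on purpose
        else:
            out[service] = "host"                       # runs on the host, not per runtime
    return out


def unclassified(services: List[str], policy: Dict[str, Bucket]) -> List[str]:
    # Services with no bucket would otherwise be duplicated or shared by accident.
    return sorted(s for s in services if s not in policy)
```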

Then reduce needless rigidity. Hard-coded host port publishing is the first thing to tame. Unreviewed bind mounts are the second. Branch-specific secrets should be explicit rather than inherited implicitly from whichever shell launched the process. If you are going to let agents run commands, decide whether they run on the host and delegate to isolated runtimes, or whether they run inside containers for stronger environmental separation. Coasts recommends host-side agents for most workflows, which is often the most practical answer, but it should still be a conscious decision. (Coasts)
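Explicit secret injection can be as simple as never handing a child process the whole parent environment. A minimal sketch, assuming a hypothetical allowlist of non-secret variables: the child receives only those plus the secrets injected for its runtime, and only the secret names are logged for later audit.

```python
import os
import subprocess
import sys
from typing import Dict, List, Sequence

# Non-secret variables a child may inherit from the launching shell (an assumption).
BASE_ALLOWLIST = ("PATH", "HOME", "LANG")


def runtime_env(secrets: Dict[str, str], allowlist: Sequence[str] = BASE_ALLOWLIST) -> Dict[str, str]:
    """Build a child environment from an allowlist plus explicitly injected secrets,
    instead of inheriting everything from os.environ."""
    env = {k: os.environ[k] for k in allowlist if k in os.environ}
    env.update(secrets)
    return env


def run_in_runtime(cmd: List[str], secrets: Dict[str, str]) -> subprocess.CompletedProcess:
    # Audit trail: log which secret *names* went to which command, never the values.
    print(f"injecting {sorted(secrets)} into {cmd[0]}", file=sys.stderr)
    return subprocess.run(cmd, env=runtime_env(secrets), check=True,
                          capture_output=True, text=True)
```

Anything exported in the launching shell, including a stray token from another branch, simply never reaches the child unless it is named.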

Finally, treat the runtime layer as operational infrastructure that deserves security hygiene. Patch runc, BuildKit, Docker Engine, and related components on the same cadence you would patch anything else touching trust boundaries. Do not casually run untrusted repositories or untrusted Dockerfiles inside privileged local workflows. Do not assume prompt injection is somebody else’s problem just because the repository is “only local.” Constrain tool permissions, keep review gates around high-impact actions, and remember OpenAI’s core point: good systems are designed so the impact of manipulation stays bounded even when the manipulation gets through. (OpenAI)
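Patching cadence is easier to enforce with a small gate in local tooling. The sketch below checks reported versions against the minimum fixed versions published for the two CVEs named in this article (runc 1.1.12 for CVE-2024-21626, BuildKit 0.12.5 for CVE-2024-23651). Treat those thresholds as values to re-verify against the advisories, not as a complete policy. It ignores later CVEs and cannot see distribution backports that patch an older version number.

```python
import re
from typing import Optional, Tuple

# Minimum fixed versions per the advisories; re-check before relying on them.
MINIMUMS = {
    "runc": ((1, 1, 12), "CVE-2024-21626"),
    "buildkit": ((0, 12, 5), "CVE-2024-23651"),
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Extract the first dotted numeric version from text like 'runc version 1.1.12'."""
    m = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if not m:
        raise ValueError(f"no version found in {text!r}")
    return tuple(int(g) for g in m.groups())


def check(component: str, version_text: str) -> Optional[str]:
    """Return a warning if the component predates its minimum fixed version, else None."""
    minimum, cve = MINIMUMS[component]
    if parse_version(version_text) < minimum:
        return f"{component} {version_text.strip()} predates the fix for {cve}"
    return None
```

The input would be whatever your tooling reports, for example the output of `runc --version`.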

The real boundary

Git worktrees are one of the best things to happen to local branching. They remove a whole class of context-switching pain. Modern coding agents are making them even more central by turning them into natural containers for parallel tasks. But that only solves half of parallel execution. If worktrees are now the unit of parallel code change, runtimes need to become the unit of parallel execution. (Git)

Coasts is not interesting because it is fashionable. It is interesting because it names the missing layer clearly: localhost service isolation and orchestration for Git worktrees. Whether you end up using Coasts itself or build a simpler in-house version of the same idea, the underlying lesson is durable. Code isolation without runtime isolation is enough for light branching but too weak for serious parallel agent workflows. The more your tools think in worktrees, the more your runtime has to think in worktrees too. (GitHub)

The healthiest way to adopt that lesson is not to romanticize isolation. Runtime isolation is not a silver bullet and not a substitute for security boundaries, patching discipline, trust review, or explicit permission models. It is a way to make parallel execution honest. Once you have that honesty, agent output becomes easier to verify, security testing becomes easier to reproduce, and your local machine stops acting like one giant shared mystery box. That is a worthwhile upgrade all by itself. (OpenAI)

Further reading

Git worktree documentation, the official definition of linked worktrees and their boundaries. (Git)

Docker Compose documentation, official references for services, networks, and volumes. (Docker Documentation)

Docker bind mounts and volumes documentation, useful for understanding host-path exposure and persistent-state behavior. (Docker Documentation)

OpenAI Codex worktrees documentation, showing worktrees as a first-class parallel-task surface. (OpenAI Developers)

Claude Code documentation for parallel worktree usage and general security posture. (Claude)

OpenAI guidance on prompt injection and impact-constrained agent design. (OpenAI)

NVD and Docker security advisories for CVE-2024-21626 and CVE-2024-23651. (NVD)

Coasts official docs and repository, for the project’s actual architecture, configuration model, and supported workflows. (GitHub)

AI Security Operating Models Break at the Agent Boundary, useful if you want a security-governance view of agent boundaries and controlled execution. (Penligent)

AI Pentest Copilot, From Smart Suggestions to Verified Findings, relevant to the evidence-first offensive workflow discussed above. (Penligent)

Penligent documentation, useful for understanding the project’s operator-facing setup and Kali-based workflow. (Penligent)

Penligent homepage, the most direct product page if you want to compare agentic offensive workflow claims against your own validation needs. (Penligent)