The phrase “anthropic cybersecurity tool” sounds generic, but the people searching it usually have a very specific question in mind.

They are not asking whether Anthropic “cares about security.” They are asking whether Anthropic has shipped something that can change how real engineering teams review code, find vulnerabilities, and get fixes merged without drowning in noise.

That question became much more concrete after Anthropic announced Claude Code Security on February 20, 2026, as a limited research preview built into Claude Code on the web. Anthropic says it scans codebases for vulnerabilities, suggests targeted patches, and uses multi-stage verification before surfacing findings for human review. Anthropic also emphasizes severity and confidence ratings and makes it clear that fixes still require human approval. (Anthropic)

Anthropic’s support documentation adds the part security teams care about even more: how this fits into actual workflows. The docs describe automated security reviews in Claude Code, including an on-demand /security-review command and a GitHub Actions path for pull request review. Anthropic explicitly notes that these reviews should complement, not replace, existing security practices and manual code review. (Claude Help Center)

That combination is why this keyword matters. The market is trying to figure out whether Anthropic has entered cybersecurity as a serious workflow player, not just as a model provider.

And the short answer is yes—but in a very specific lane.

Claude Code Security is best understood as a code-centric security review layer. It is not a SIEM. It is not a runtime defense platform. It is not a replacement for external validation or pentesting. It is a tool for helping engineers and security teams reason about vulnerabilities in code faster and with more context than rule-only scanners usually provide. Anthropic’s own announcement and docs support exactly that framing. (Anthropic)

What people are actually trying to learn when they search this

Publicly, you cannot get a universal “highest CTR keyword” across the entire web unless you own the analytics. But you can still see what the market is clicking by looking at which phrases repeat across official pages, support docs, GitHub repos, and security media coverage.

Right now, the strongest recurring sub-intents are clearly tied to:

- Claude Code Security

- automated security reviews

- /security-review

- GitHub Action security review

- AI-powered code security review

You can see that language line up across Anthropic’s announcement, the Claude help center page, and the GitHub repository description for Anthropic’s security review action. The repository itself describes an “AI-powered security review GitHub Action” that analyzes code changes for vulnerabilities with context-aware analysis. (Anthropic)

That matters because it tells you what kind of article actually serves search intent. Most readers are not looking for another abstract essay on “AI in cybersecurity.” They want to know what this thing does, where it fits, and whether it is safe to trust.

The most important clarification: this is not “all of cybersecurity”

A lot of confusion comes from the phrase “cybersecurity tool” being too broad.

In practice, “AI cybersecurity tooling” now spans at least three very different categories:

One category is about securing AI systems themselves—prompt injection, model abuse, data leakage, and inference-time controls.

Another is about using AI in security operations—triage, alerts, incident analysis, or threat research.

The category Anthropic is addressing here is different again: using AI to review software code for security issues and propose fixes.

That distinction is not just semantic. It determines what the tool can and cannot tell you.

If you hand a code-review system a repo and ask whether your internet-facing production environment is safe, you are asking the wrong question. Code review can surface real bugs. It cannot, by itself, prove exploitability in the deployed system, validate network exposure, or account for every runtime control. Anthropic’s own materials stay code-focused and workflow-focused, and the support docs explicitly position the feature as a complement to existing practices rather than a replacement. (Anthropic)

That is a good sign. The product is being introduced with a realistic boundary.

Why this release matters anyway

It would be easy to dismiss this as “just another AI scanner,” but that misses what is actually changing.

What is different here is not simply that Anthropic can classify vulnerabilities. The more important shift is that Anthropic is trying to make security review feel native to developer workflows, not something bolted on afterward.

The signal is in the product shape:

The /security-review command means a developer can run a security pass in the same environment where they write code. The GitHub Action means PRs can be reviewed with security context in-line, where developers already make decisions. Anthropic’s announcement also claims multi-stage verification and attempts to falsify its own findings, which suggests Anthropic knows adoption will live or die on trust and noise levels. (Anthropic)

That last point is critical. Security teams are not suffering from a lack of scanners. They are suffering from review fatigue, backlog, and uneven signal quality. If a tool improves the ratio of “actionable findings” to “reviewer time,” that is operationally meaningful even if it doesn’t replace any existing category outright.

CyberScoop’s coverage captures the rollout in that context too: a security feature embedded in Claude Code that scans codebases and suggests patches, following internal stress-testing and research work. (CyberScoop)

The best way to think about Claude Code Security

The cleanest mental model is this:

Claude Code Security looks like a high-context, white-box security review assistant.

That framing is stronger than “AI scanner” and safer than “autonomous AppSec engineer.”

It matters because it sets realistic expectations. In a mature security program, you want different layers doing different jobs well. A tool like this can accelerate code-level discovery and patch drafting. It does not remove the need for runtime validation, external testing, or evidence that a fix actually closed the path an attacker would use.

Anthropic’s support docs themselves reinforce that the feature should complement manual reviews and existing security controls. (Claude Help Center)

That is exactly the posture serious teams should take.

What it can likely improve in real teams

There are a few places where this category is genuinely promising, and Anthropic’s workflow choices suggest they understand them.

The first is PR review speed. Security feedback often arrives too late because AppSec teams cannot manually review every change, and developers are not going to wait days for a secure coding opinion on a small feature PR. A diff-aware, context-aware AI review in the PR flow could reduce that lag substantially. Anthropic’s GitHub Action repo is designed for this exact path. (GitHub)

The second is patch suggestion quality. Finding issues is only half the battle. Security teams lose time writing repetitive remediation guidance, and developers lose time translating abstract advice into framework-specific changes. Anthropic’s announcement explicitly highlights patch suggestions for human review, which is where a lot of practical value sits. (Anthropic)

The third is developer trust if false positives are managed. Anthropic emphasizes multi-stage verification and confidence ratings, which reads like an attempt to address the exact complaint that has dogged many traditional tools: “too much noise, too little context.” Whether every team sees that in practice is an empirical question, but it is the right problem to be aiming at. (Anthropic)

What it absolutely does not prove

This is where a lot of articles become marketing copy, so let’s stay grounded.

A code-level finding—even a very good one—does not automatically tell you:

- whether the vulnerable endpoint is exposed externally,

- whether auth or gateway controls make exploitation impractical,

- whether the vulnerable code path is reachable in the deployed build,

- whether the patch was rolled out everywhere,

- whether the old exploit path still works in production-like conditions.

That gap is not theoretical. It is the difference between “we fixed the code” and “the risk is gone.”

And if you have worked a real incident, you already know those can be two very different states.
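
One way to keep those two states apart in tooling is to record them as separate fields on the same finding. The sketch below is illustrative only: the `Finding` class, its field names, and the `risk_closed` rule are hypothetical and are not part of any Anthropic product or API. It assumes a team is willing to treat each runtime question as "unknown" until someone verifies it.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class Finding:
    """A code-level finding plus the runtime facts code review cannot supply.

    Runtime fields stay None until someone verifies them. All names are
    hypothetical and exist only for illustration.
    """
    title: str
    code_fixed: bool = False
    externally_exposed: Optional[bool] = None
    blocked_by_gateway_controls: Optional[bool] = None
    reachable_in_deployed_build: Optional[bool] = None
    patch_rolled_out_everywhere: Optional[bool] = None
    old_exploit_still_works: Optional[bool] = None

    def unverified(self) -> List[str]:
        """Names of the runtime questions nobody has answered yet."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]

    def risk_closed(self) -> bool:
        """'Fixed in code' is not enough: require rollout and a failed retest."""
        return (
            self.code_fixed
            and self.patch_rolled_out_everywhere is True
            and self.old_exploit_still_works is False
        )
```

A finding with `code_fixed=True` and nothing else filled in reports `risk_closed()` as false and lists every open runtime question in `unverified()`, which is the distinction the list above is drawing.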

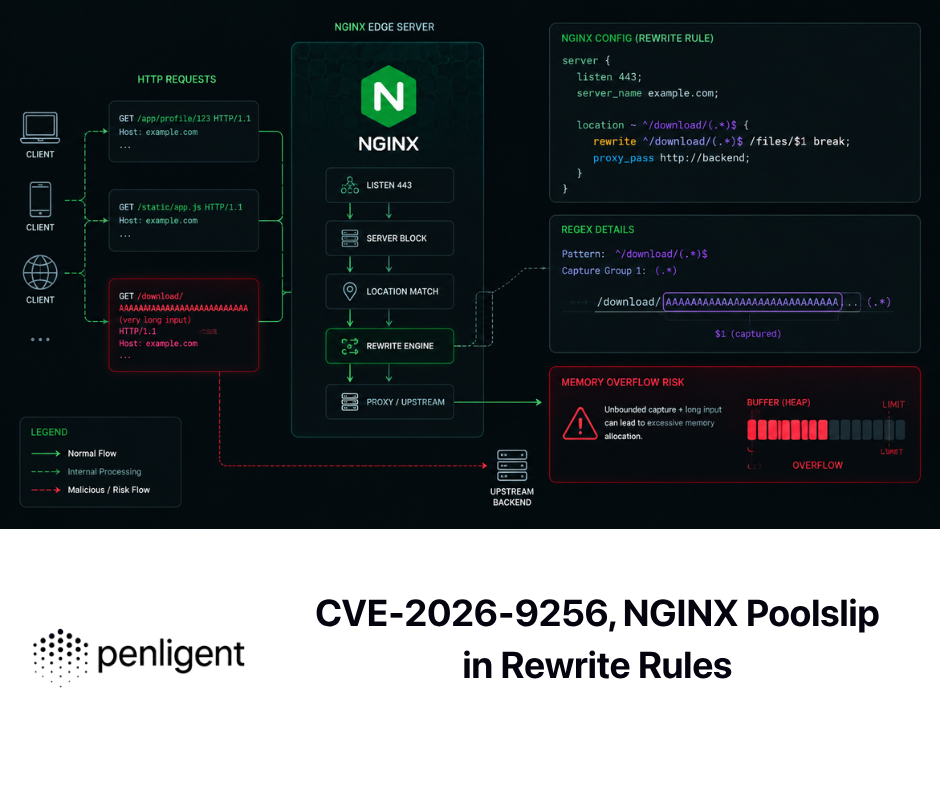

Why CVEs still matter when evaluating AI code-security tools

The easiest way to avoid over-claiming is to test your mental model against high-impact CVEs.

Not because every CVE maps to this product category, but because the mismatch is the point. CVEs show where code review shines and where entirely different controls are required.

Take Log4Shell (CVE-2021-44228). NVD describes the issue as a Log4j2 JNDI-related vulnerability that can allow remote code execution under affected conditions, with version and behavior context documented in the record. (NVD)

Log4Shell is a perfect example of why code-level analysis matters—and why it is still not enough. Teams needed to identify vulnerable code and unsafe patterns, yes. But they also had to deal with transitive dependencies, hidden packaged components, asset inventory, patch propagation, and runtime validation. A code-review assistant can help with part of that story. It cannot solve the whole incident lifecycle.
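
To make the "hidden packaged components" point concrete, here is a minimal sketch of an inventory check that looks inside nested archives for log4j-core's `JndiLookup` class. It is not something Anthropic's tooling is documented to do; the function name `find_jndi_lookup` and the depth limit are assumptions made for illustration. A hit only means the class is packaged at that location. The log4j version still has to be checked, because patched releases can ship the same class.

```python
import io
import zipfile
from typing import List

# Class file that implements the JNDI lookup in log4j-core.
JNDI_LOOKUP_CLASS = "org/apache/logging/log4j/core/lookup/JndiLookup.class"
ARCHIVE_SUFFIXES = (".jar", ".war", ".ear", ".zip")


def find_jndi_lookup(data: bytes, label: str, max_depth: int = 5) -> List[str]:
    """Return archive paths (outer!/inner) that contain JndiLookup.class.

    `data` is the raw bytes of an archive and `label` is the name to report
    for it. Nested archives are opened in memory up to `max_depth` levels.
    """
    hits: List[str] = []
    try:
        archive = zipfile.ZipFile(io.BytesIO(data))
    except zipfile.BadZipFile:
        # Not a readable archive: nothing to report.
        return hits
    with archive:
        for name in archive.namelist():
            if name == JNDI_LOOKUP_CLASS:
                hits.append(label)
            elif name.lower().endswith(ARCHIVE_SUFFIXES) and max_depth > 0:
                nested = archive.read(name)
                hits.extend(
                    find_jndi_lookup(nested, f"{label}!/{name}", max_depth - 1)
                )
    return hits
```

Called on the bytes of a deployable such as a WAR file, it returns paths like `app.war!/lib/log4j-core.jar`. A review of the application's own source would not see that dependency at all.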

Now look at CVE-2024-3400 in PAN-OS GlobalProtect. NVD describes it as a command injection issue resulting from arbitrary file creation in specific PAN-OS versions/configurations, potentially allowing unauthenticated remote code execution with root privileges. NVD also notes that certain products like Cloud NGFW, Panorama, and Prisma Access were not impacted. (NVD)

This is a completely different shape of problem. Many defenders cannot inspect or patch that code directly. Their workflow is exposure identification, mitigation, vendor advisory tracking, patch rollout, and validation. A repo-centric AI security review tool is not the primary control here, and it should not be sold as one.

Then there is CVE-2023-34362 in MOVEit Transfer, where NVD describes an unauthenticated SQL injection vulnerability in affected MOVEit Transfer versions that could allow database access. (NVD) CISA and FBI also released a joint advisory tied to CL0P exploitation of this vulnerability, underscoring the operational reality of mass exploitation against internet-facing systems. (CISA)

MOVEit is a useful reminder that once a vulnerability is being exploited in the wild, defenders need more than patch guidance. They need response speed, forensic validation, and proof that “patched” actually means “not still compromised.”

The same pattern holds for CVE-2024-6387 (regreSSHion), which NVD describes as a regression in OpenSSH’s sshd involving unsafe signal handling and a race condition that an unauthenticated attacker may be able to trigger under timing conditions. (NVD) Regression bugs are exactly the sort of thing semantic code review may help catch, especially in mature codebases with long histories. But preventing regression fallout still depends on testing, release discipline, and patch adoption in the field.

And then there is CVE-2024-3094 (xz backdoor), where NVD records malicious code in upstream xz tarballs and obfuscated build behavior that altered the build process and resulting library behavior. (NVD) This is a supply-chain integrity problem as much as a code problem. It lives in provenance, artifact trust, release process, and build chain boundaries. A code-review assistant inside your application repo is useful, but it is not your primary defense for an xz-class event.

The pattern across all of these is straightforward:

AI-assisted code review can be a strong control. It is just not a complete security program.

What a good rollout looks like in the real world

The teams that get value from this category will not start by asking the tool to “secure everything.”

They will start by putting it where the feedback loop is shortest and the accountability is clearest: active repositories with regular pull requests and owners who can actually act on findings.

In practice, the best pilots tend to begin in a few repos where CI already works, tests exist, and PR review is healthy enough that security comments will be read rather than ignored. You want a pilot where you can compare outcomes—what the AI found, what humans agreed with, what got fixed, and what turned out not to matter in runtime conditions.

The wrong way to do this is to dump a new AI review process into a giant monorepo with unclear ownership and no patch discipline, then declare the tool “good” or “bad” based on the chaos that follows.

Anthropic’s own human-review framing makes this easier to implement responsibly, because it already assumes humans stay in the loop. (Anthropic)
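
The comparison described above (what the AI found, what humans agreed with, what got fixed, and what mattered at runtime) can be tracked with very little machinery. The following is a minimal sketch, assuming the team records one row per finding during the pilot. `PilotFinding`, its fields, and `summarize` are hypothetical names, and the three rates are one reasonable choice of metrics rather than an established standard.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class PilotFinding:
    finding_id: str
    human_agreed: bool         # a reviewer confirmed the issue is real
    fixed: bool                # a fix was merged
    mattered_at_runtime: bool  # runtime validation showed it was reachable


def summarize(findings: Iterable[PilotFinding]) -> Dict[str, float]:
    """Summarize a pilot: agreement over all findings, the rest over agreed ones."""
    items = list(findings)
    agreed = [f for f in items if f.human_agreed]
    total = len(items)
    n_agreed = len(agreed)
    return {
        "total": float(total),
        "agreement_rate": n_agreed / total if total else 0.0,
        "fix_rate": sum(f.fixed for f in agreed) / n_agreed if n_agreed else 0.0,
        "runtime_relevant_rate": (
            sum(f.mattered_at_runtime for f in agreed) / n_agreed if n_agreed else 0.0
        ),
    }
```

Computing the fix and runtime rates only over findings a human agreed with keeps noisy output from hiding how the confirmed findings were actually handled.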

A simple policy that prevents most adoption mistakes

The biggest risk in AI security review is not false positives.

It is false confidence.

A noisy finding wastes time. A trusted-but-wrong conclusion can ship risk.

The safest policy is not complicated: use AI findings to accelerate review and remediation, but require explicit human sign-off for high-severity issues and require test evidence for security fixes. Anthropic’s emphasis on severity, confidence, and human approval aligns well with that model. (Anthropic)

A lot of teams skip that step because they think governance will slow adoption. In reality, basic governance is what lets the tool survive first contact with incidents and audits.
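
That policy is small enough to express as a merge gate. The sketch below is one possible encoding, not a feature of Claude Code Security: `SecurityFix`, `merge_blockers`, and the severity labels are hypothetical, and it assumes the team records the approver and the regression test IDs somewhere a script can read them.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Assumed severity labels that require explicit human sign-off.
HIGH_SEVERITIES = {"high", "critical"}


@dataclass
class SecurityFix:
    finding_id: str
    severity: str
    human_approver: Optional[str] = None
    regression_test_ids: Tuple[str, ...] = ()


def merge_blockers(fix: SecurityFix) -> List[str]:
    """Return the reasons this fix may not merge yet (empty list means OK)."""
    blockers: List[str] = []
    if fix.severity.lower() in HIGH_SEVERITIES and not fix.human_approver:
        blockers.append("high-severity finding needs explicit human sign-off")
    if not fix.regression_test_ids:
        blockers.append("security fix needs test evidence")
    return blockers
```

Returning the list of reasons, rather than a bare true or false, gives the person blocked by the gate something to act on and leaves a record for audits.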

Practical code examples that show the difference between “looks fixed” and “is fixed”

Here is a simple example of a bug pattern an AI security review system may flag correctly: using a client-controlled header as an authorization signal.

    from flask import Flask, request, jsonify

    app = Flask(__name__)


    @app.route("/api/admin/export")
    def export_users():
        # Bad pattern: trusts a client-controlled header as an authorization signal.
        if request.headers.get("X-Internal-Job") == "true":
            return "csv,data,here"
        return jsonify({"error": "forbidden"}), 403

A context-aware reviewer can reasonably identify this as suspicious because the authorization decision depends on a header the client can send.

But whether it is exploitable depends on deployment reality. Maybe a trusted internal proxy strips that header from external traffic and injects it only for a scheduled job. Maybe it doesn’t. The code-level finding is useful. The security conclusion still requires verification.

That is the core theme of this entire category.
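
If the finding is accepted, one possible remediation is to stop treating the header's presence as proof of origin and require a secret the caller must actually hold. The sketch below is an assumption for illustration, not the fix from the original example or a patch produced by any tool: `is_internal_job`, the `X-Internal-Job-Token` header, and the idea of provisioning a shared token to the scheduled job are all hypothetical.

```python
import hmac
from typing import Mapping, Optional


def is_internal_job(headers: Mapping[str, str], expected_token: Optional[str]) -> bool:
    """Return True only if the caller presents the job's shared secret.

    `expected_token` would come from server-side configuration, never from
    the request itself.
    """
    if not expected_token:
        # Fail closed when no secret is configured.
        return False
    presented = headers.get("X-Internal-Job-Token", "")
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(presented.encode(), expected_token.encode())
```

In the Flask handler, the check would become something like `is_internal_job(request.headers, os.environ.get("INTERNAL_JOB_TOKEN"))`. Even then, the earlier point stands: whether the route is reachable from outside, and whether the token is handled safely in deployment, still has to be verified.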

Now look at the fix side. Here is a small regression test pattern that proves a dangerous SQL sort parameter is handled safely by allowlisting:

    import pytest

    ALLOWED_SORTS = {"created_at", "email", "id"}


    def build_query(sort_value: str) -> str:
        # Only allowlisted column names ever reach the SQL string.
        sort = sort_value if sort_value in ALLOWED_SORTS else "created_at"
        return f"SELECT id, email FROM users ORDER BY {sort} LIMIT 100"


    @pytest.mark.parametrize("bad", [
        "created_at; DROP TABLE users;--",
        "1 desc, (select pg_sleep(5))",
        "email NULLS LAST",
    ])
    def test_bad_sort_falls_back_to_safe_default(bad):
        q = build_query(bad)
        assert "DROP TABLE" not in q
        assert "pg_sleep" not in q
        assert q.endswith("ORDER BY created_at LIMIT 100")

A lot of teams stop one step too early. They accept a patch suggestion because it looks correct in review. The better habit is to encode the exploit attempt as a regression test and make the fix prove itself every build.

That is where AI security review becomes part of engineering quality instead of a one-off code comment generator.

The cleanest, most honest way to describe the relationship is this: Anthropic’s Claude Code Security sits primarily on the white-box side of the security workflow. It helps teams inspect code changes, reason about vulnerabilities, and draft fixes earlier in the SDLC. Anthropic’s announcement, support docs, and GitHub action all point in that direction. (Anthropic)

Penligent fits on the verification side when teams need to answer the next question: did the fix actually remove the exploitable path in a real target or environment? Penligent’s own Hacking Labs content already frames this as a “white-box findings to black-box proof” workflow, which is exactly the right conceptual bridge for readers trying to operationalize AI-assisted code review without over-trusting it. (Penligent)

That positioning avoids fake competition and reflects how mature teams actually work. Code review and attack-surface verification are not interchangeable. They are sequentially valuable.

What security leaders should watch next

This category is moving fast, so “anthropic cybersecurity tool” will likely split into more specific searches over the next few months: setup questions, enterprise controls, false-positive behavior, pricing, comparison articles, and integration details.

What will matter most is not how many headlines mention AI, but whether teams can answer boring, practical questions with confidence:

Did review time drop?

Did patch quality improve?

Did security regressions decrease?

Did developers trust the output enough to act on it?

Did runtime validation confirm the fixes?

Did incidents get easier to explain because evidence was preserved?

If the answer to those starts becoming “yes,” then this is not just another launch. It is a meaningful shift in how secure code review gets done.

Anthropic has shipped something real here. Their own materials are careful enough to make that clear without pretending the tool replaces the rest of security engineering. That restraint is a strength, not a weakness. (Anthropic)

Closing thought

The best way to use an Anthropic cybersecurity tool is not to ask it for certainty.

Use it to get to the right questions faster, surface likely problems earlier, and draft better fixes while the code is still fresh in the developer’s head.

Then do what good security teams have always done: test the fix, verify the deployment, and keep evidence.

That is how AI becomes part of a stronger security program instead of just a faster source of opinions.

Links

- Anthropic announcement: Claude Code Security limited research preview (Anthropic)

- Claude support docs: Automated Security Reviews in Claude Code (Claude Help Center)

- Anthropic GitHub Action repository for security review (GitHub)

- CyberScoop coverage of the rollout (CyberScoop)

- NVD CVE records for 2021-44228, 2024-3400, 2023-34362, 2024-6387, 2024-3094 (NVD)

- CISA/FBI MOVEit advisory (CL0P exploitation) (CISA)

- Claude Code Security and Penligent: From White-Box Findings to Black-Box Proof (Penligent Hacking Labs)

- Related Penligent Hacking Labs posts on exploit verification, CVE validation, and evidence-driven testing