A lot of confusion around AI pentesting starts with one bad shortcut. People see a coding agent read files, run commands, call tools, and produce plausible security reasoning, then jump straight to calling it an AI pentester. That shortcut breaks down the moment the work has to survive outside the demo. Anthropic’s own documentation describes Claude Code as an agentic coding tool that reads codebases, edits files, runs commands, and integrates with development tools across terminal, IDE, desktop, and browser surfaces. Anthropic’s skills model adds specialized instructions through SKILL.md, while subagents add task-specific contexts, tools, and permissions. Penligent’s public product materials, by contrast, emphasize a different center of gravity: 200+ supported tools, latest-CVE scanning, one-click PoC generation, signal-to-proof execution, traceable artifacts, scope locking, and editable reports aligned with SOC 2 and ISO 27001. Those are not the same product category, even if both use agents and both can participate in offensive workflows. (Claude)

That distinction matters because security work is not judged by how impressive the reasoning looks in the middle. It is judged by whether the result is valid, reproducible, within scope, and useful to someone else. A coding agent can be brilliant at repo-local reasoning and still leave you with a weak proof chain. A vertical pentest workflow can be less flexible than a coding agent and still be much more useful to a real team if it consistently turns signal into verified impact and verified impact into something engineering and compliance can act on. The right question is not whether Claude Code can be used for pentesting. It can. The right question is where the coding-agent model ends, where the pentest-workflow model begins, and why that boundary changes the way good teams should build, buy, and evaluate their stack. (Claude)

Research on LLM-driven pentest agents has started to put numbers behind that intuition. One recent 2026 study analyzing 28 LLM-based penetration-testing systems found that many failures do not disappear just because you add more tools or a better model. The authors split failures into tractable engineering gaps and deeper planning and state-management problems, showing that agents still overcommit to low-value branches, mismanage long-horizon tasks, and exhaust context before finishing the chain. A separate live enterprise evaluation found that AI agents can be strong at systematic enumeration, parallel exploitation, and cost efficiency, yet still show higher false-positive rates and weaker performance on GUI-heavy tasks than strong human practitioners. Those findings point to the same conclusion: the hard part is no longer only “can the model generate the next step.” The hard part is whether the system around the model preserves direction, evidence, and control. (arXiv)

Claude Code for pentesting starts with the right mental model

Claude Code is easiest to misunderstand when people treat it as a chatbot with shell access. Anthropic’s own product description is more specific than that. It is an agentic coding environment that can read a codebase, edit files, run commands, and integrate with tools. In practice, that makes it less like a security scanner and more like a programmable operator workspace for technical users. It is designed to inhabit the same surface where engineers already work: the repo, the shell, the editor, the browser-connected workflow, and the toolchain around them. That is exactly why it can be compelling for security researchers. Many offensive and defensive tasks begin with local understanding: tracing auth logic, mapping trust boundaries, reviewing changes, replaying test harnesses, checking build scripts, or instrumenting small validation loops inside a real project. (Claude)

The official Claude Code documentation makes this model even clearer when you look at how execution is governed. By default, Claude Code uses strict read-only permissions. When it needs to do more than read, such as editing files, running tests, or executing commands, it asks for explicit approval. Anthropic also documents sandboxing as a separate, defense-in-depth layer: permissions decide which tools and resources Claude can attempt to use, while sandboxing provides OS-level enforcement for the Bash tool’s filesystem and network boundaries. In other words, Anthropic is not presenting Claude Code as a free-roaming autonomous hacker. It is presenting it as an agentic environment that can be granted more or less power depending on how the operator and organization configure it. That distinction matters because “Claude Code for pentesting” is meaningful only if you understand the permission model it actually runs under. (Claude)

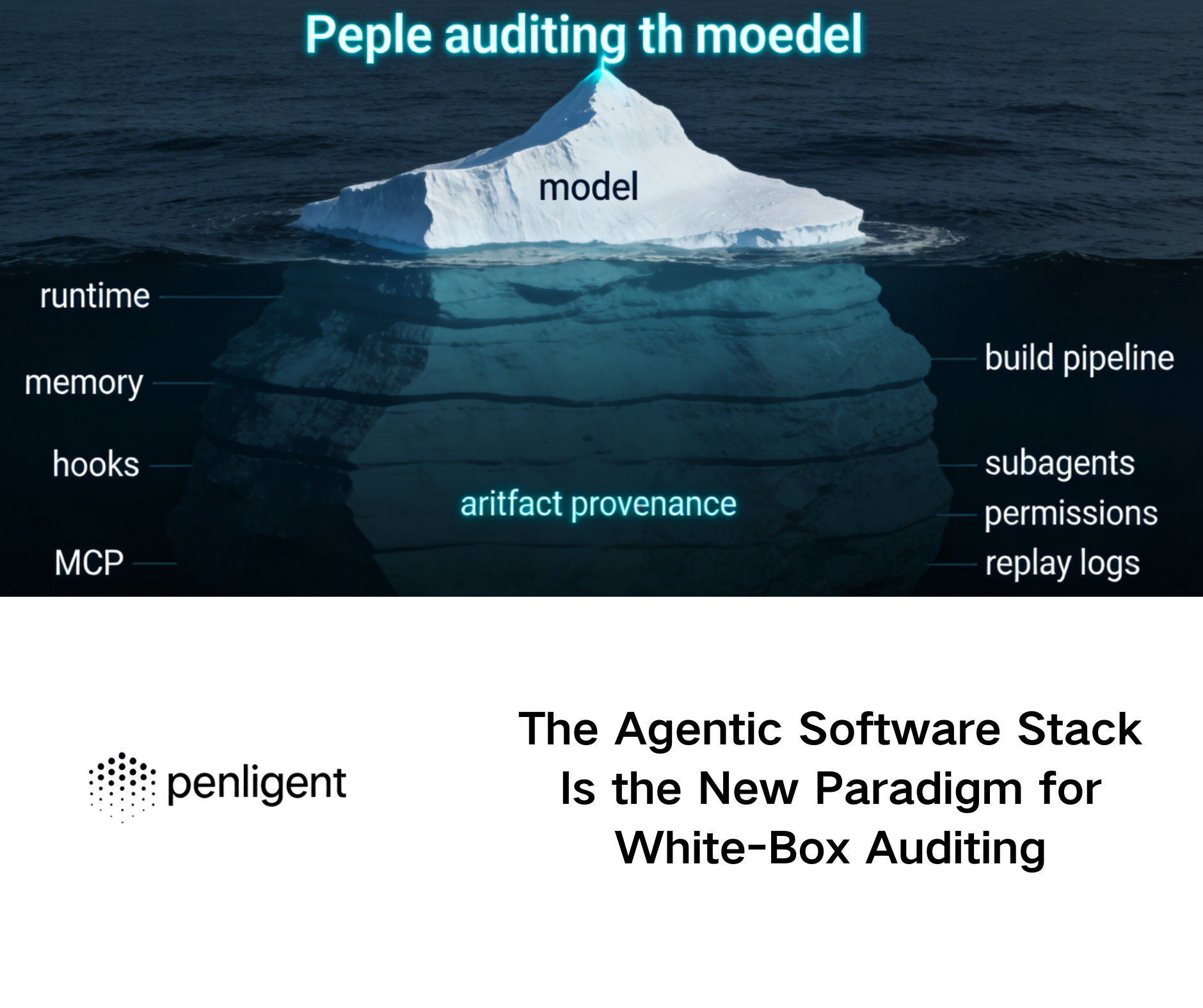

It also matters that Claude Code has state and specialization primitives beyond a single conversation. Anthropic documents two memory channels, CLAUDE.md and auto memory, both loaded at the start of each conversation, with the explicit warning that Claude treats them as context rather than hard enforcement. Anthropic also documents subagents as separate assistants that run in their own context windows with their own prompts, tool access, and permissions. Hooks can run user-defined shell commands, HTTP endpoints, or LLM prompts at specific lifecycle points. Skills extend behavior through Markdown instruction packages. MCP, in Anthropic’s own framing, is an open standard for connecting AI applications to external systems and tools. Once you stack those pieces together, you get something powerful: not a fixed pentest product, but a deeply extensible workbench for building one-off or semi-reusable security workflows around code, tools, and context. (Claude API Docs)

That is exactly why Claude Code feels strong in white-box and repo-aware security work. If you already have source access, existing scripts, CI artifacts, or known tooling, Claude Code can shorten the loop between “I think something is wrong here” and “I have a hypothesis, a patch candidate, and a targeted validation step.” It is good at collapsing tedious context-building work: where is auth enforced, where are user-controlled fields normalized, which codepath governs file writes, what changed between two commits, what does this Semgrep output actually mean, what configuration drift appeared in this branch, why does the test harness fail only in staging. That is not theoretical. It is exactly the kind of multi-file, multi-tool, multi-output work Anthropic’s own docs position Claude Code to do. (Claude)

The shape of that strength becomes easier to see in a practical comparison.

| Security task shape | Why Claude Code is naturally strong | Where the boundary shows up |

|---|---|---|

| Large codebase review | Repo-wide reading, file edits, command execution, memory, subagents | A convincing explanation is not proof of exploitability |

| Static analysis triage | Easy to connect findings with local code and tests | False positives still need independent validation |

| Patch-direction work | Strong at proposing fixes and regression tests | Fix quality depends on local context and operator review |

| Tool-assisted research | Skills, hooks, MCP, and SDK make custom workflows feasible | Every extension adds trust and attack-surface questions |

| Ad hoc automation | Useful for one-off scripts and research loops | Reproducible evidence and reporting still need a workflow |

The table looks obvious once you say it out loud, but it is exactly where many comparisons go wrong. Claude Code is strongest where the truth already lives close to the repo, close to the terminal, or close to the operator’s local toolchain. It can be very good at deciding what to inspect next, what experiment to run, and what patch to try. That is a real advantage, not a consolation prize. But the same table also shows the limit: the output of a coding agent is not automatically a pentest-grade artifact. There is still a difference between “smart next step” and “valid finding you can ship.” (بنليجنت)

What Claude Code pentest skills actually add

The word “skills” causes more confusion than it should because people often project much more onto it than Anthropic does. Anthropic’s documentation is straightforward: skills extend what Claude can do by adding instructions through SKILL.md, and Claude can load them automatically when relevant or invoke them through a slash command. That is powerful, but it is not magic. A skill can encode methodology, structure, guardrails, domain knowledge, preferred tool use, or output formatting. What it cannot do by itself is change the trustworthiness of the underlying environment, create OS-level enforcement, or convert speculative reasoning into verified impact. A pentest skill can make Claude behave more like a disciplined security analyst. It does not transform Claude Code into a validated pentest operating model on its own. (Claude API Docs)

Public security-focused skill ecosystems already reflect that reality. Trail of Bits’ public Skills Marketplace for Claude Code includes plugins for static analysis, Burp Suite project parsing, supply-chain risk auditing, Semgrep rule creation, YARA authoring, false-positive verification, and other security workflows. That is strong evidence that the ecosystem sees skills as reusable workflow and analysis components, not as self-authenticating exploit engines. The Trail of Bits repository itself describes the marketplace as a collection of skills for AI-assisted security analysis, testing, and development workflows. That phrasing matters. “AI-assisted security analysis” is a much more precise promise than “autonomous pentest platform,” and it aligns with how strong teams should think about skills. (جيثب)

A good pentest skill therefore looks less like an exploit pack and more like a disciplined method wrapper. The most valuable skills do not just tell the agent what tools exist. They force it to ask better questions before it acts, and they force it to record better evidence before it concludes.

---

name: pentest-evidence-check

description: Require evidence before calling a finding exploitable.

---

When analyzing a security issue, do not label it verified unless all of the following exist:

1. A control request or baseline behavior

2. A test request or modified input

3. An observable effect tied to the claim

4. Preconditions such as role, session, feature flag, or configuration

5. Retest notes explaining how the claim should be re-validated after a fix

If any item is missing, describe the result as a hypothesis, not a confirmed finding.

That kind of skill is useful because it changes the quality bar of the conversation. It narrows the gap between “the model thinks this is likely” and “a reviewer can tell whether the claim deserves trust.” It also makes explicit something security teams often forget in AI conversations: the most important value of a skill is frequently not more autonomy but better constraint. Anthropic’s docs support that interpretation indirectly through the rest of the Claude Code model. Skills are only one layer. Permissions, sandboxing, and operator review still sit underneath them. If you attach a powerful pentest skill to a sloppy permission policy, you do not get a safer pentest system. You get a more capable agent inside a weakly governed environment. (Claude API Docs)

The same principle applies when skills are paired with hooks, MCP servers, or local security tools. A pentest skill may say “use Burp data first,” “triage Semgrep results before running tests,” or “reject claims without independent evidence,” but the real-world behavior still depends on which tools Claude can call, which domains it can reach, which files it can write, and which lifecycle actions your hooks trigger. Anthropic’s hooks model is powerful precisely because it can automate shell commands, HTTP endpoints, and even LLM prompts at different lifecycle events. That opens a lot of room for good security engineering. It also means a skill-only comparison is too shallow. When people say “Claude Code plus pentest skills,” the serious version of that phrase is actually “Claude Code plus skills plus permissions plus sandboxing plus hooks plus toolchain hygiene plus validation discipline.” (Claude API Docs)

Why Claude Code is genuinely strong in white-box security work

Once the mental model is right, Claude Code’s strongest security use cases become much easier to describe. It excels when the target problem is deeply entangled with the codebase itself. Think of a repo where the hard problem is not just to find a suspicious pattern but to understand what role checks are supposed to do, how a deserializer is used three layers down, whether this logging sink ever receives attacker-controlled content, or how a pipeline step could expose secrets when run under a mis-scoped token. Those are not brute-force detection problems. They are interpretation problems across code, config, history, and execution context. Claude Code is very well matched to that kind of work because its native surface is the codebase and its nearby artifacts. (Claude)

Subagents make that especially interesting for security review. Anthropic documents that each subagent gets its own context window, its own prompt, and its own tool access and permissions. That means you can isolate noisy exploratory work from decisive implementation work. A code-reviewing subagent can stay read-only. A reproduction-oriented subagent can be allowed to run bounded commands. A research subagent can summarize an unfamiliar auth flow without flooding the main session with every intermediate dead end. That sounds like a productivity feature, but in security work it is also a reliability feature. One of the most common failure modes in AI-heavy reviews is context contamination: an agent carries too much stale detail, too many partial guesses, or too many irrelevant tool outputs into later steps. Subagents are not a cure-all, but they are a meaningful control over that problem. (Claude API Docs)

Memory matters for the same reason. Anthropic’s documentation on CLAUDE.md and auto memory makes clear that Claude Code can carry project instructions and discovered patterns across sessions, even though those memories remain contextual rather than hard policy. For security work, that can be more useful than it sounds. A mature codebase often has recurring facts that matter to threat modeling: where privileged middleware lives, which paths bypass the public router, which build step signs artifacts, which directories contain generated code, or which scripts are safe to run in a staging clone but never on production-adjacent data. If those facts stay visible across sessions, the agent wastes less effort relearning the same environment. That does not make the analysis automatically correct, but it does make the workflow more realistic for sustained review. (Claude API Docs)

Hooks deepen the story. Anthropic documents hooks as lifecycle-triggered shell commands, HTTP endpoints, or LLM prompts. In a security context, that means you can automate preflight and postflight checks that a normal coding agent would otherwise skip or inconsistently remember. You can automatically tag sessions with scope metadata. You can force artifact capture after particular classes of tool use. You can warn or halt if a command would reach a prohibited domain. You can run a read-only parsing step after Burp export ingestion, or insist on a local diff review before accepting a patch touching auth logic. Those are not glamorous features, but they are exactly how serious engineering teams turn a smart agent into a more trustworthy workflow component. (Claude API Docs)

Anthropic’s commercial data policy also matters to buyers evaluating repo-centric security workflows. Anthropic states that under commercial terms, it does not train generative models using code or prompts sent to Claude Code unless the customer has explicitly opted in to provide data for model improvement. That does not answer every procurement question, and it does not remove the need to review deployment, retention, or provider choices. But it is still a concrete difference between “we have to infer the vendor’s position from marketing language” and “the vendor has published a specific training-use statement.” For security engineering teams dealing with source code, internal findings, and remediation discussions, that is not a side issue. It is part of whether a tool can be used in the first place. (Claude API Docs)

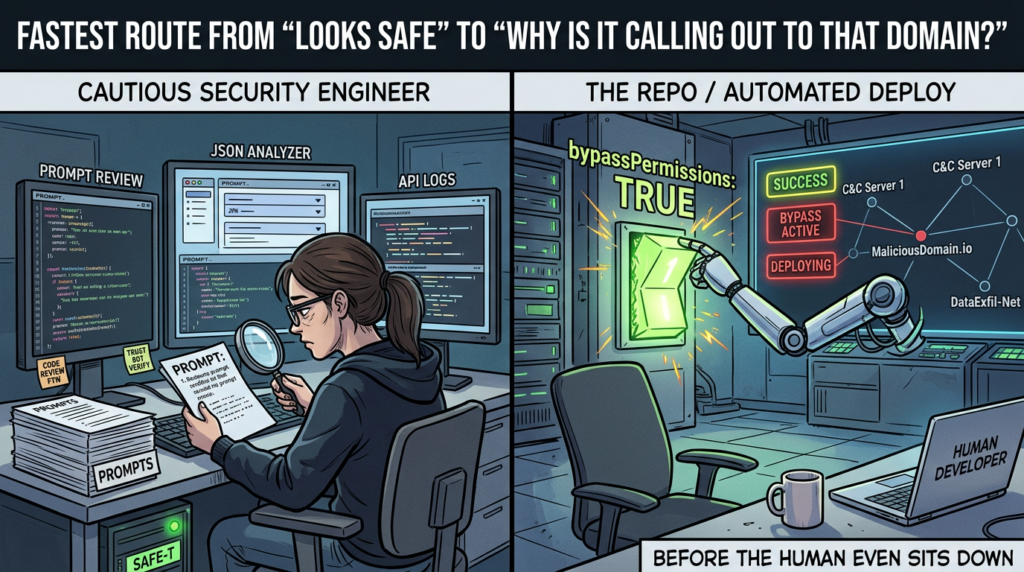

The flip side is that white-box strength is not the same as black-box proof. Claude Code can be excellent at deriving a likely exploit path from source or configuration. It can draft a test. It can connect several local clues into a strong hypothesis. But the final question in a pentest is usually not “does the code look risky.” It is “what happened on the target, under what conditions, and can another person reproduce it.” That is why a good Claude-centered security workflow almost always needs an explicit verifier-backed layer. One of Penligent’s own public Claude-focused articles makes this point well: a useful pentest copilot is instrumented, constrained, and verifier-backed. That framing is useful precisely because it does not oversell the model. It treats the model as the reasoning layer, not as the final judge of reality. (بنليجنت)

Why Penligent optimizes for a different part of the job

Penligent’s public materials point to a different center of optimization. The homepage emphasizes 200+ supported tools, latest-CVE scanning, one-click PoC exploit scripts, signal-to-proof execution, artifacts with traceable proof, prompt editing, scope locking, action customization, and editable reports aligned with SOC 2 and ISO 27001. Even if you ignore all branding language and take only the concrete pieces, the design intent is obvious. This is not primarily a repo-native coding assistant. It is a workflow product trying to shrink the distance between finding, validation, proof, and report. That is a different problem from “help me reason inside a codebase,” and it leads to different product choices. (بنليجنت)

That difference becomes more explicit in Penligent’s own technical writing. Its public article on moving from a fast PoC to reproducible proof makes the point that security teams do not fix PoCs in the abstract. They fix reproducible evidence attached to a real system, version, trust boundary, and observed behavior. Another Penligent article about natural-language orchestration describes the goal as taking natural language in and producing a multi-tool attack chain out, with artifacts usable by engineering, compliance, and leadership. Those are good descriptions of a workflow-native offensive product because they center the artifact chain, not the dialogue. Even if a reader never uses Penligent, that design emphasis is still the right lens for evaluating any serious AI pentest system. (بنليجنت)

This is also why a vertical workflow product can feel less “general” than Claude Code while still being closer to what many security teams actually need. The hard middle of pentesting is messy. Findings are partial. Auth contexts drift. Business logic requires replay. Headers lie. Sessions expire. A request that looked conclusive in a scratchpad turns out to depend on a hidden role or stale cache. A sharp coding agent helps with reasoning inside that mess. A workflow system helps by forcing the mess into repeatable state: artifact capture, sequence retention, report structure, and re-testability. Those are different kinds of value. A serious buyer should not pretend they are interchangeable. (بنليجنت)

You can see the divergence even more clearly if you compare what each product publicly treats as the primary output. Anthropic’s public Claude Code surface is centered on reading, editing, commands, prompts, memory, and development integrations. Penligent’s public surface is centered on verified findings, proof artifacts, controlled agentic workflows, and report packaging. That does not make one better in the abstract. It means the products are solving adjacent but non-identical problems. If your bottleneck is “I need faster, better reasoning against code and local tools,” Claude Code can be a strong fit. If your bottleneck is “I need validated target-facing output I can rerun and ship,” a workflow-native product is solving a different and often more operationally complete problem. (Claude)

Claude Code vs Penligent at five system layers

The cleanest way to compare these systems is not to compare model names, UI polish, or screenshots. It is to compare the layers where offensive workflows actually succeed or fail.

| الطبقة | Claude Code with pentest skills | Penligent, based on public materials |

|---|---|---|

| Execution center of gravity | Repo, shell, editor, dev tooling | Target behavior, multi-tool execution, proof workflow |

| State model | Conversation context, CLAUDE.md, auto memory, local tooling | Workflow artifacts, steps, proof chain, reportable evidence |

| Validation model | Strong at hypothesis generation, local reproduction, patch reasoning | Strongly positioned around verified impact and reproducible proof |

| Governance model | Permissions, sandboxing, allowlists, hooks, scoped tools | Scope locking, prompt editing, action customization, report controls |

| Output model | Commands, patches, scripts, explanations, research notes | Findings, artifacts, traceable proof, editable reports |

The first layer is execution center of gravity. Claude Code is built to live where engineers and researchers already work. That gives it tremendous leverage for white-box tasks, but it also means the “truth source” of the session is often whatever the repo, shell, or connected tools reveal locally. Penligent’s public materials point in the opposite direction. Its execution story is about chaining tools toward target-facing validation and keeping the resulting proof material coherent. That is why comparisons based only on “who can call more tools” are so weak. Tool count matters far less than where the system thinks the task truly lives. (Claude)

The second layer is state. Claude Code has serious state primitives. Anthropic documents CLAUDE.md, auto memory, subagents, and session-oriented context management. That is enough to build surprisingly sophisticated security workflows. But the state is still fundamentally conversational and environment-adjacent. Penligent’s public materials instead emphasize artifacts, steps, traceable proof, and report packaging. That is a different way of storing progress. One is optimized for continuing the work. The other is optimized for preserving the work so other people can audit, reproduce, and act on it. In pentesting, both matter, but they are not the same. (Claude API Docs)

The third layer is validation. This is where many AI product comparisons become misleading. Claude Code can be extremely strong at building a compelling exploit hypothesis, drafting the right test harness, or tracing a vulnerability candidate back to code and configuration. But it is not, by default, a workflow that treats every claim as untrusted until independently checked against a target or environment. The best Claude-centered offensive workflows account for that by adding evidence gates, bounded tools, and external validation. Penligent’s public positioning is much more direct here. It repeatedly centers verified impact, reproducible proof, and evidence-first results. The difference is not that one side “uses AI” and the other does not. The difference is what each side considers finished work. (بنليجنت)

The fourth layer is governance. Anthropic’s public security model for Claude Code is detailed and engineering-heavy: explicit approvals, write restrictions, sandboxing, deny rules, allowlists, and lifecycle hooks. That is a real strength for organizations that want to build their own governed workflows. Penligent’s public product language is simpler and more workflow-specific: edit prompts, lock scope, customize actions, generate reports. Those are different governance languages because the systems are governed at different abstraction layers. Claude Code’s governance asks, “what may the agent do inside this environment.” Penligent’s governance, at least in public materials, asks more often, “how do we keep this offensive workflow within the target, proof, and reporting boundaries we actually need.” (Claude)

The fifth layer is output. Claude Code naturally emits working memory: notes, diffs, shell histories, code edits, proposed tests, summarized findings, and sometimes high-quality local automation. Penligent naturally markets finished evidence: artifacts, traceable proof, reproducible steps, and clean reports. This is the layer that most directly affects whether a team should buy one, build around one, or combine both. If your final consumer is a senior engineer who wants the fastest path to patch direction, Claude Code may be the better first surface. If your final consumer is a security lead, customer stakeholder, or remediation owner who needs a reproducible proof package, the workflow-native design is much closer to the destination. (Claude)

The CVEs that explain the real security boundary

The fastest way to understand the difference between an extensible coding agent and a pentest workflow system is not to read product copy. It is to look at where real vulnerabilities show up when agents, tools, protocols, and state layers are stitched together. Those incidents reveal the true execution boundary.

A good starting point is the GitHub MCP prompt-injection case documented by Invariant and summarized by Red Hat. The attack chain did not depend on some mystical “AI bug.” It relied on the fact that an agent using a GitHub MCP integration could consume attacker-controlled issue content and be steered into unintended behavior that exposed private repository data. Red Hat’s summary is especially useful because it avoids protocol hysteria and focuses on the actual mechanism: a crafted malicious issue in a public repo becomes untrusted content inside a tool-using agent workflow. That is the kind of failure that matters for Claude Code plus skills plus MCP, because the value of the stack comes from connecting the agent to real systems. The moment you do that, untrusted tool context stops being an abstract safety topic and becomes an operational security problem. (invariantlabs.ai)

The next layer down is the tooling around MCP itself. GitHub’s advisory for CVE-2025-49596 says versions of MCP Inspector below 0.14.1 were vulnerable to remote code execution because the Inspector client and proxy lacked authentication, allowing unauthenticated requests to launch MCP commands over stdio. That advisory matters here for a simple reason: many teams experimenting with agent extensions assume their debugging and inspection tools are “safe because they are developer tools.” This CVE shows the opposite. In agent ecosystems, debugging infrastructure is part of the attack surface. If you build a generalized pentest workbench out of local tools, skills, and protocol components, then the workbench’s diagnostic tools have to be threat-modeled too. Upgrading to 0.14.1 or later is the immediate remediation, but the broader lesson is more important: a flexible agent stack inherits risk from every layer that can originate or relay tool calls. (جيثب)

CVE-2025-6515, the oatpp-mcp prompt-hijacking case, pushes the lesson further. JFrog and NVD describe the problem as a session-ID design weakness in the MCP SSE endpoint, where an instance pointer was reused as a non-cryptographically secure session identifier. An attacker with network access to the relevant HTTP server could guess future session IDs and hijack legitimate client MCP sessions, returning malicious responses from the MCP server. The exploit condition is important: this is not a generic indictment of all agent tooling, and it is not proof that every Claude Code deployment is exposed. It is a reminder that once agent extensions leave the purely local boundary and rely on network transports, session design becomes part of the threat model. For teams turning a general-purpose coding agent into a network-connected offensive workbench, transport security and session integrity cannot be an afterthought. (research.jfrog.com)

CVE-2025-68143 in mcp-server-git is especially relevant because it affected an official MCP server implementation rather than a fringe experiment. GitHub’s reviewed advisory says versions prior to 2025.9.25 accepted arbitrary filesystem paths in the git_init tool and created repositories without validating the target location, effectively making those directories eligible for subsequent Git operations. The fix was decisive: the vulnerable tool was removed entirely because the server was meant to operate on existing repositories only. The relevance to this article is straightforward. When people say “Claude Code plus pentest skills,” they often imagine that the interesting risk lies only in the skill instructions or the model output. This advisory shows that the server-side tool boundary can be just as important. If the tool wrapper expands the accessible filesystem in an unintended way, the agent’s practical capability expands with it. That is not a theoretical concern. It is a reviewed advisory with a concrete patch path. (جيثب)

CVE-2026-0621 in the MCP TypeScript SDK illustrates a different class of exposure. GitHub’s advisory describes a ReDoS flaw in the UriTemplate class that could allow crafted resources/read requests to drive 100 percent CPU usage, crash the server, and deny service to all clients. The exploit condition is narrower than the previous examples: it affects MCP servers that register resource templates with exploded array patterns and accept untrusted client requests. But that is exactly why it is useful for this comparison. Agent ecosystems are not only about confidentiality and code execution. Availability matters too. A generalized agentic workbench with many protocol components may fail in ways that have little to do with “hacking” and everything to do with runtime resilience. That, again, is one reason workflow-native platforms can be attractive: they may reduce the number of moving pieces a buyer has to secure directly, even though the platform itself still needs its own scrutiny. (جيثب)

The LangChain and LangGraph CVEs from late 2025 make the same point at the framework layer rather than the protocol layer. NVD describes CVE-2025-68664 as a serialization injection flaw in LangChain’s dumps() و dumpd() functions, where dictionaries using the internal ل ج key structure could be treated as legitimate serialized objects during deserialization. NVD describes CVE-2025-67644 as an SQL injection flaw in LangGraph SQLite checkpoint implementations, where untrusted metadata filter keys could manipulate SQL queries in checkpoint search operations. These vulnerabilities are not about Claude Code specifically, and that is precisely why they matter. They show that modern agent systems fail not only at the visible edges like prompts and plugins, but also in their hidden state and persistence layers: serialization, checkpoints, metadata filters, and framework assumptions. Any team building its own pentest product around a general coding agent needs to own that risk surface. (nvd.nist.gov)

All of those examples are easier to digest in one view.

| CVE or case | Why it matters here | Exploit conditions | Mitigation path |

|---|---|---|---|

| GitHub MCP prompt injection case | Untrusted tool content can steer an agent into data exposure | Agent processes attacker-controlled issue content through MCP | Treat tool content as untrusted, scope credentials, sanitize context, constrain tool access |

| CVE-2025-49596 | Debugging infrastructure became an RCE surface | MCP Inspector below 0.14.1, unauthenticated requests reach proxy | Upgrade to 0.14.1 or later |

| CVE-2025-6515 | Networked MCP session integrity can be hijacked | oatpp-mcp over HTTP SSE plus attacker network access | Fix session handling, reduce network exposure |

| CVE-2025-68143 | Tool boundary expanded into unintended filesystem scope | mcp-server-git before 2025.9.25 | Upgrade to 2025.9.25 or later |

| CVE-2026-0621 | SDK-layer bugs can break agent availability | Untrusted clients plus affected MCP SDK usage | Upgrade to 1.25.2, avoid risky patterns, add timeouts and validation |

| CVE-2025-68664 and CVE-2025-67644 | Framework serialization and checkpoint state are real attack surfaces | Untrusted data reaches vulnerable serialization or checkpoint paths | Upgrade affected LangChain and LangGraph components |

The fair conclusion from this section is not that general-purpose agent stacks are doomed. The fair conclusion is that extensibility creates security responsibility. Anthropic’s own security model for Claude Code recognizes that through explicit permissions, write restrictions, sandboxing, prompt-injection guidance, and defense-in-depth. The protocol ecosystem around MCP also recognizes it, which is why so many advisories now cluster around tool servers, inspectors, SDKs, and connected components. In practice, the more you turn Claude Code into a custom pentest operating environment, the more you need to think like a platform engineer, not just a prompt engineer. (Claude)

What research says about AI pentest agents, and what still breaks

The most helpful research result in this area is not any single benchmark score. It is the repeated finding that performance depends as much on system design as on model quality. The 2026 paper “What Makes a Good LLM Agent for Real-world Penetration Testing?” is useful because it does not stop at cheering on higher scores. It explicitly separates Type A failures, which come from missing tools or weak prompts, from Type B failures, which persist because of planning and state-management problems. The paper argues that agents misallocate effort, get trapped in low-value branches, and exhaust context before finishing attack chains. That diagnosis matters more than the headline score because it tells buyers what to look for. A demo that feels brilliant on three-step tasks may still collapse under long-horizon work if the system has no disciplined way to manage difficulty, confidence, and context. (arXiv)

The live-enterprise comparison paper from early 2026 sharpens that point. In a real university environment of roughly 8,000 hosts across 12 subnets, the ARTEMIS scaffold placed second overall, found nine valid vulnerabilities, and outperformed nine of ten human participants. That is not a toy result. But the same paper also says AI agents had higher false-positive rates and struggled with GUI-based tasks. That combination is exactly what a sober technical buyer should expect. AI agents can be fast, systematic, and cheap at enumeration and parallel work. They can also still misread reality when the environment depends on awkward interaction patterns, ambiguous state, or evidence that resists simplification. The right takeaway is not “agents already beat humans” or “agents are overhyped.” It is that agent performance is highly task-shaped. Any comparison between Claude Code and Penligent that ignores task shape is already too shallow. (openreview.net)

The MAPTA results on the XBOW benchmark are also revealing for the same reason. The paper reports 76.9 percent overall success across 104 web challenges, with strong results on SSRF, misconfiguration, server-side template injection, SQL injection, and broken authorization, but weaker performance on XSS and zero success on blind SQL injection. The XBOW benchmark repository itself describes those 104 benchmarks as intentionally curated to evaluate the proficiency of web-based offensive tools and notes that the set had been kept confidential until release to preserve novelty. Together, those sources make a strong point: modern multi-agent offensive systems can absolutely do meaningful work, but their strengths and weaknesses are sharply patterned. The system may look highly capable on visible, tool-grounded, feedback-rich vulnerability classes and still struggle when the signal is delayed, indirect, or interaction-heavy. (arXiv)

That pattern should change how people read claims about “Claude Code pentest skills.” Skills can absolutely improve the first category of failures. They can encode method, route to better tools, insist on evidence, and standardize workflow. They are much less likely to solve the second category of failures if the underlying issue is horizon management, weak state control, or ambiguous external truth. A skill can tell an agent to be careful with blind SQL injection. It cannot manufacture a clean proof signal when the system around it has poor state tracking, insufficient instrumentation, or weak target-facing validation. That is why the strongest product argument for a workflow-native system is not “better prompts.” It is “better system design around evidence and state.” (arXiv)

This is also where Penligent’s public positioning becomes technically interesting rather than merely commercial. The homepage and related posts keep returning to the same themes: signal to proof, artifacts, traceable evidence, report editing, scope control, and reproducible PoC material. Those emphases line up with what the research literature keeps identifying as the real bottlenecks. That does not prove Penligent wins every benchmark. Public materials are not independent evaluation. But it does show that the product is aiming at the right bottlenecks. In a field where the model is no longer the only hard part, that matters. (بنليجنت)

How to evaluate an AI pentest copilot without getting fooled by a demo

A serious evaluation starts by refusing to compare systems on a single undifferentiated notion of “security testing.” White-box repo review is different from black-box target validation. Authenticated business-logic testing is different from CVE replay. Patch-direction work is different from stakeholder-ready reporting. A coding agent may win one class and lose another. A workflow-native system may look narrower in a coding demo and far stronger in remediation handoff. If you want a fair test, split the scenarios first. Then evaluate them with the metrics that actually reflect operational value. The research literature already gives the right hints: false positives, context failure, GUI friction, exploit class sensitivity, and operator burden all matter. (arXiv)

The most useful primary metric is not “number of possible issues surfaced.” It is time to first validated finding. The second is validated finding rate, not raw finding count. Then come false-positive burden, number of operator interventions, evidence completeness, retestability, and report readiness. Those metrics make it much harder for a product to win by producing plausible-looking noise. They also make it much easier to tell what category you are really buying. A coding agent with pentest skills might score extremely well on patch direction, local reproduction, and operator-augmented code understanding. A workflow-native system might dominate on evidence completeness, retestability, and report readiness. That is not a flaw in either one. It is the point of the comparison. (openreview.net)

A real evidence bundle should also be concrete enough that another person can rerun it without guessing what happened.

{

"asset": "staging.example.internal",

"finding_id": "idor-authz-01",

"preconditions": [

"authenticated as basic_user",

"feature_flag=beta_exports enabled"

],

"control_request": "GET /api/exports/12345 returns 403 for unrelated resource",

"test_request": "GET /api/exports/67890 returns 200 for another tenant",

"observable_effect": "cross-tenant export metadata disclosed",

"supporting_artifacts": [

"request.txt",

"response.txt",

"session-notes.md",

"screenshot.png"

],

"retest_after_fix": "repeat both control and test requests after authorization patch"

}

Nothing in that structure is glamorous, but that is exactly why it matters. It forces the system to preserve the minimum information an engineer, triager, or retester needs. It also exposes where a coding-agent workflow and a pentest-workflow product often diverge. A coding agent can help you build this artifact. A workflow-native system may make artifact production the default path rather than an extra discipline you must remember to impose. Penligent’s public materials and technical posts are very clearly aimed at that second pattern. Claude Code’s official materials are much more clearly aimed at making the workbench itself powerful and governable. (بنليجنت)

A practical evaluation matrix should look more like this.

| Evaluation scenario | Best primary metric | What often fools buyers |

|---|---|---|

| White-box code audit | Patch-quality and hypothesis precision | Mistaking fluent explanation for exploitability |

| Black-box web validation | Time to first validated finding | Counting scanner-style noise as progress |

| Authenticated logic testing | Operator interventions and evidence completeness | Assuming auth context is stable when it is not |

| Fix verification | Retestability and delta clarity | Accepting “probably fixed” instead of proof |

| Reporting workflow | Report readiness and artifact quality | Treating screenshots or prose alone as sufficient evidence |

The point of this table is simple. Buying or building an AI pentest stack is no longer mostly about model selection. It is about deciding which failure modes you can tolerate and which ones your workflow has to eliminate by design. If your organization already has elite operators who can impose discipline, Claude Code plus carefully designed skills, permissions, sandboxing, and external validators may be exactly right. If your organization needs the system to institutionalize that discipline and produce consistent artifacts for other consumers, a dedicated workflow system becomes much more attractive. (Claude)

A workflow that uses Claude Code and Penligent together

The most realistic answer for mature teams is often “both,” but only if the division of labor is explicit. Claude Code is excellent for white-box discovery, patch direction, repo-local reasoning, and building bounded local experiments. Penligent’s public materials suggest it is aimed at target-facing validation, traceable proof, and report packaging. Those are complementary centers of gravity. A team that tries to force Claude Code to become an entire pentest platform may end up rebuilding a lot of workflow infrastructure around evidence, retesting, and artifact capture. A team that ignores Claude Code’s repo-native strengths may miss the fastest route from code suspicion to precise hypothesis. The mature move is not to flatten those differences. It is to operationalize them. (Claude)

A sensible loop starts with Claude Code near the code and the environment clone. Use it to map risky flows, inspect auth and trust boundaries, derive hypotheses from config and history, propose minimal tests, and draft remediation options. Keep the agent bounded with permissions, sandboxing, and method-enforcing skills so that it remains a disciplined research assistant rather than an overtrusted judge. Then shift the work into a target-facing validation layer where the goal is not further speculation but proof: exact conditions, exact artifacts, exact observable effect, exact retest path. Penligent’s own public write-up on going from white-box findings to black-box proof describes almost this exact split, emphasizing validation against real targets, reproducible evidence, and repeat verification after fixes. That is a strong practical model even if you swap in different products. (بنليجنت)

That combined model also has a useful governance property. It keeps the highest-flexibility tool where flexibility is most valuable, which is early reasoning against local context. It keeps the highest-discipline artifact path where discipline matters most, which is when claims must survive review, remediation, and retest. In other words, it aligns tool freedom with research and aligns workflow structure with proof. That is a much healthier design than asking one product to be simultaneously the free-form investigator, the validator, the historian, the policy engine, and the report writer unless it was intentionally built to do all of that. (بنليجنت)

The final responsibility, though, never moves. Penligent’s own PoC article puts it bluntly: the human remains accountable. That is the right ending for any discussion of agentic offense. Scope, authorization, stop conditions, legal boundaries, confidence labels, and escalation decisions still belong to people. Good systems make that responsibility easier to exercise. Bad systems hide it behind smooth demos. (بنليجنت)

Claude Code vs Penligent, the real answer

Claude Code with pentest skills is not a gimmick. It can be one of the most powerful security workbenches available to a technical operator because it combines repo awareness, command execution, memory, specialization, and extensibility inside the same surface where real engineering work happens. Anthropic’s public documentation backs that up in detail. But the same documentation also makes clear that Claude Code is a governed coding environment, not a pre-solved pentest workflow. Skills extend behavior. They do not erase the need for permissions, sandboxing, validation discipline, or evidence engineering. (Claude)

Penligent, at least in its public materials, is solving a more specific and more operational problem. It emphasizes target-facing execution, proof artifacts, scope control, and report packaging. That does not make it “more AI” or “less AI.” It makes it more workflow-native for the part of pentesting where many agent demos still break: finishing the chain from signal to proof. Research on LLM pentest systems keeps pointing toward the same bottlenecks in planning, context, state, and validation. That is why the right comparison is not model versus model. It is workbench versus workflow system. (بنليجنت)

The cleanest conclusion is also the least dramatic one. Use Claude Code when your leverage comes from code access, customization, local tooling, and flexible research. Use a workflow-native system when your leverage comes from validated target behavior, repeatable proof, and reportable artifacts. Use both when your team is mature enough to separate hypothesis generation from proof generation. The model is not the pentest workflow. In real offensive security, the hard part is not producing the next command. It is preserving the chain from signal to proof. (بنليجنت)

Further reading and references

For primary documentation on Claude Code itself, the most useful starting points are Anthropic’s overview of Claude Code, the official docs on skills, subagents, permissions, sandboxing, hooks, memory, data usage, and the MCP introduction. Those documents define the actual behavior boundary of the system far better than any social post or product commentary. (Claude)

For the security boundary around agent ecosystems, the most useful sources here are Red Hat’s MCP security analysis, Invariant’s GitHub MCP case study, the GitHub reviewed advisories for CVE-2025-49596, CVE-2025-68143, and CVE-2026-0621, JFrog’s and NVD’s records for CVE-2025-6515, and NVD’s records for the LangChain and LangGraph issues. Those sources are much more valuable than generic hot takes because they show where real failures occurred and what exact assumptions broke. (redhat.com)

For research on what AI pentest agents can and cannot do today, the strongest references used here are the 2026 paper on real-world penetration-testing agents, the live enterprise comparison study on OpenReview, the MAPTA paper, and the XBOW benchmark repository. Together they are a much better foundation for system evaluation than isolated vendor benchmarks. (arXiv)

For Penligent pages that are directly relevant to this topic, the most natural internal follow-ups are the Penligent homepage, the article on building an evidence-first workflow with Claude Code, the piece on moving from white-box findings to black-box proof, and the article on building reproducible proof from a fast PoC. Those links are directly related to the comparison in this article and do not need to be forced into a separate sales narrative to be useful. (بنليجنت)