An AI pentest report is only useful if it can survive retest.

That sounds obvious, but it is where most AI-generated security reporting breaks. A language model can turn messy notes into polished prose in seconds. It can summarize scanner output, draft remediation text, and rephrase technical issues for executives. None of that proves the finding is real. A credible pentest report still needs scope, test boundaries, reproducible evidence, exploit conditions, impact, remediation, and a clean handoff to the people who will fix the issue. OWASP’s current reporting guidance says the report has to work for both executive management and technical staff, and it explicitly calls for scope, limitations, an executive summary, findings, reproducible artifacts, and secure handling of the report itself. NIST SP 800-115 still frames testing and reporting as part of the same process of planning, executing, analyzing findings, and developing mitigation strategies. PTES remains useful as a shared model because it treats reporting as a formal phase of the engagement rather than an afterthought. (owasp.org)

That is the first correction to make if you are trying to get an AI pentest report: the problem is not “How do I make AI write a PDF?” The problem is “How do I turn security evidence into a report that another human can verify, prioritize, and act on?” Bug bounty platforms say the same thing in a more operational way. HackerOne defines a quality report as one with a clear title, detailed reproduction steps, and enough supporting material for the security team to understand and replay the issue. Bugcrowd’s documentation is equally direct: you need to explain where the bug was found, who it affects, how to reproduce it, the parameters involved, and include proof-of-concept support such as files or logs. (docs.hackerone.com)

That is also why a chat transcript is not a pentest report. Penligent’s own recent writing on AI pentest workflows makes that point clearly from the offensive side: a valid deliverable requires scope authorization, raw logs, captures or screenshots of successful exploitation, and reproducible verification steps, not just a smart-looking conversation. Its public product material and blog content consistently frame reporting as the end of an evidence chain that runs through discovery, validation, and proof, not as a standalone writing feature. (penligent.ai)

What an AI pentest report actually is

A real AI pentest report sits somewhere between a traditional consultant deliverable and a modern evidence pipeline. It is not a scanner export. It is not a loose notebook. It is not a raw Burp history, and it is not a one-page “high, medium, low” summary. It is a structured output assembled from technical evidence and then adapted for multiple audiences. OWASP’s reporting structure makes that split explicit by separating the executive summary from the findings section, and the findings section from appendices and artifacts. NIST likewise notes that because a report may have multiple audiences, multiple report formats may be required. (owasp.org)

In practice, that means a useful AI pentest report usually contains at least four layers of content.

The first layer is engagement truth: who authorized the work, what was in scope, what was out of scope, what kind of access was provided, and what limitations shaped the test. OWASP explicitly recommends documenting scope, limitations, timeline, version control, and the point-in-time nature of the assessment. That matters because security findings are extremely sensitive to context. A stored XSS found with an authenticated low-privilege user in a staging system is not the same thing as unauthenticated code execution in production. (owasp.org)

The second layer is leadership communication: what matters most, what the likely business impact is, how urgent the fixes are, and what strategic remediation work should happen next. OWASP’s executive summary guidance focuses on business need, business impact, and strategic recommendations, not tool output. OWASP’s risk methodology says the business risk is what justifies investment in fixing security problems. (owasp.org)

The third layer is technical findings: reference IDs, titles, exploitability, impact, risk, detailed descriptions, reproduction steps, evidence, remediation, and supporting references. OWASP recommends that the findings section be sufficient for engineers to understand, replicate, and resolve the issue, with enough technical detail to take action. HackerOne and Bugcrowd say essentially the same thing through the lens of triage. (owasp.org)

The fourth layer is artifact preservation: logs, screenshots, HTTP traces, sanitized requests and responses, proof-of-concept scripts when appropriate, and retest notes after fixes. OWASP’s latest reporting guidance now explicitly mentions reproducible test artifacts such as curl commands, HAR files, CSRF forms, and simple automation scripts to help developers validate and retest vulnerabilities. (owasp.org)

Once you think of the report that way, the role of AI becomes much clearer. AI is strong at transforming, summarizing, normalizing, deduplicating, and drafting. It is weak at ground truth. It does not know whether the token in your screenshot was already expired. It does not know whether the vulnerable path belonged to production or preprod. It does not know whether the exploit required a feature flag, a locale-specific configuration, or an admin role unless you tell it. So the right workflow is not “AI discovers truth.” The right workflow is “AI helps package verified truth.”

Pentest report standards that still matter in an AI workflow

A lot of security teams treat standards as paperwork. That is a mistake when you are building an AI reporting workflow. Standards help you decide what the model is allowed to do and what the model must never invent.

OWASP’s current Web Security Testing Guide remains the best practical starting point for web-focused reporting. Its guidance says a report should be easy to understand, highlight risks, appeal to both executives and technical staff, and be encrypted in transit or at rest so only the receiving party can use it. It recommends a report structure that includes scope, limitations, timeline, an executive summary, a findings summary, detailed findings, reproducible artifacts, and appendices for methodology and risk-rating explanations. It also warns that the report is a point-in-time assessment, which is a crucial sentence to preserve in AI-generated output because models often write with more certainty than the evidence supports. (owasp.org)

PTES is older, but still useful because it gives the engagement a coherent shape. PTES describes penetration testing as seven major sections and treats reporting as a distinct part of the overall process. Its reporting page says the report is broken into two major sections to communicate objectives, methods, and results to different audiences. That maps naturally to how AI systems should be used: one output for executive readers, one output for technical readers, and the same evidence base underneath both. (pentest-standard.org)

NIST SP 800-115 is even older, but it remains a foundational federal reference because it explicitly ties reporting to testing and mitigation. Its purpose statement says the document is intended to help organizations plan and conduct technical tests and examinations, analyze findings, and develop mitigation strategies. It also notes that multiple report formats may be required because reports may have multiple audiences. That is exactly the condition where AI drafting can help, as long as the findings are already validated. (NIST Computer Security Resource Center)

Bug bounty guidance adds another valuable reality check. Consultant-style pentest reports can be more expansive than bounty submissions, but the core requirements are the same. HackerOne wants a clear title, detailed steps to reproduce, impact, and supporting artifacts. Bugcrowd wants where the bug was found, who it affects, how to reproduce it, which parameters matter, and proof-of-concept support. If your AI workflow cannot consistently produce those fields, it is not ready to produce a pentest report either. (docs.hackerone.com)

Here is a compact way to think about the overlap:

| Source | What it emphasizes | Why it matters for AI pentest reporting |
|---|---|---|
| OWASP WSTG | Scope, limitations, executive summary, findings, reproducible artifacts, appendices, report security | Gives you the report skeleton and keeps the model from inventing missing context (owasp.org) |
| PTES | Reporting as a distinct engagement phase, different audiences, common language for pentests | Reminds you that polished text is not the same as a completed engagement (pentest-standard.org) |
| NIST SP 800-115 | Testing, analysis, mitigation, multiple audiences | Helps justify separate technical and executive outputs from the same evidence store (NIST Computer Security Resource Center) |
| HackerOne | Clear title, impact, reproduction, supporting artifacts | Good minimum bar for any individual finding drafted by AI (docs.hackerone.com) |
| Bugcrowd | Location, affected target, parameters, reproduction, PoC support | Forces the AI output to stay concrete and triageable (Bugcrowd Docs) |

If your workflow aligns with those five sources, you are close to something production-worthy. If it does not, the model quality matters less than you think.

The mistake most teams make with AI pentest reports

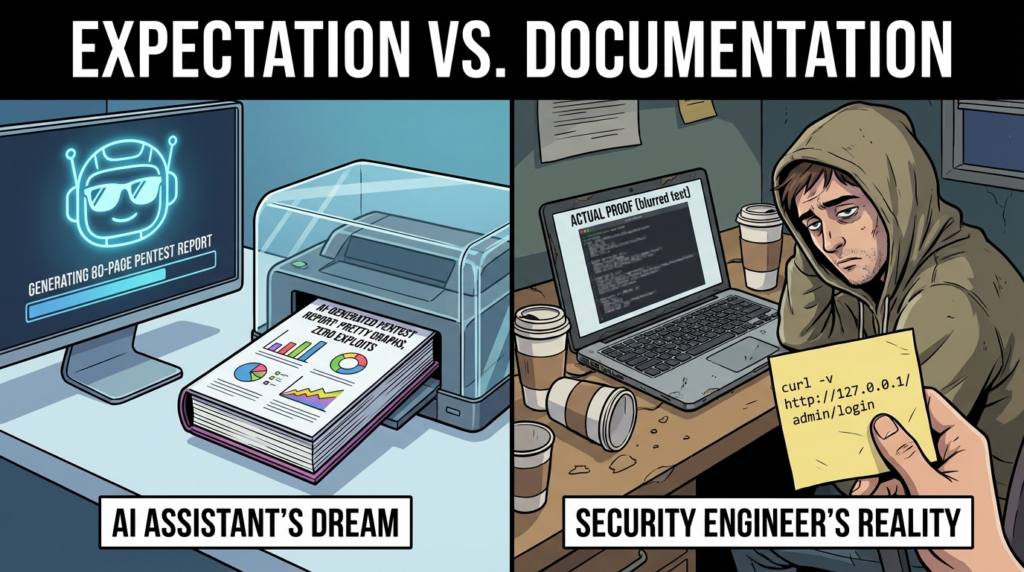

The most common failure in AI security reporting is not bad grammar. It is misplaced confidence.

Models are extremely good at smoothing jagged input into readable output. That skill becomes dangerous when the underlying evidence is incomplete. A scanner reports “possible SQL injection.” A model rewrites it as “confirmed SQL injection vulnerability permits data exfiltration.” A human glances at the narrative, sees the right buzzwords, and approves it. Then the engineering team cannot reproduce the issue, trust drops, and every future high-severity report gets treated with more skepticism.

OWASP’s reporting guidance pushes in the opposite direction. It says findings should include exploitability, impact, risk, detailed descriptions, detailed remediation, and enough technical detail for an engineer to act. That standard is hard to meet if the raw inputs are ambiguous. The issue is not that AI is bad at writing. The issue is that AI is very good at hiding ambiguity unless the workflow forces uncertainty into the output. (owasp.org)

This is where “proof-first” thinking matters. Penligent’s public material on AI pentest copilots argues that adaptive testing, evidence gathering, and verified findings matter more than chatbot fluency. That is the right frame. A pentest report is not an essay. It is a record of what was observed, what was tested, what conditions were required, what succeeded, what failed, and what remains uncertain. When a model is allowed to skip any of those categories, it starts acting like a ghostwriter for claims that have not been established. (penligent.ai)

There are several specific ways this failure shows up in practice.

The model may merge evidence from different hosts. That happens when you feed it multiple scanner outputs and screenshots without a stable asset identifier. It may conflate “vulnerable version present” with “reachable attack path confirmed.” It may strip away exploit prerequisites because those make the prose longer and less dramatic. It may overstate business impact because internet training data has taught it that “critical” findings are usually paired with catastrophic language. It may copy a remediation pattern from a similar CWE but miss the actual control boundary in your environment.

None of these are hypothetical risks. They are natural outputs from a system whose job is to predict plausible language. The fix is not just a stronger model. The fix is better constraints.
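One way to make such a constraint concrete is a small check that compares the wording of a drafted finding with the confidence recorded for it. The sketch below is illustrative only: the word list, the function name `overclaims`, and the confidence labels are assumptions chosen for this example, not part of any cited standard.

```python
import re

# Hypothetical list of words that assert more than an unverified finding supports.
STRONG_CLAIMS = re.compile(
    r"\b(confirmed|permits|allows|exploited|demonstrated)\b", re.IGNORECASE
)


def overclaims(draft_text: str, confidence: str) -> list[str]:
    """Return strong-claim words used in a draft whose finding is not confirmed."""
    if confidence == "confirmed":
        return []
    return sorted({m.group(0).lower() for m in STRONG_CLAIMS.finditer(draft_text)})


draft = "Confirmed SQL injection vulnerability permits data exfiltration."
print(overclaims(draft, "possible"))  # ['confirmed', 'permits']
```

A check like this does not decide whether the finding is real. It only flags drafts where the language has moved ahead of the evidence, so a reviewer looks at them first.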

AI data handling for pentest reporting

Before you worry about prompting, worry about where your evidence goes.

Pentest reports routinely include secrets, access tokens, customer identifiers, internal hostnames, cloud account IDs, screenshots of production consoles, and sometimes live data from systems that should never leave a tightly controlled environment. If you upload that material to the wrong AI service, you can create a second security problem while trying to document the first one.

Official vendor documentation is very clear that product choice matters here. OpenAI’s API documentation states that data sent to the API is not used to train or improve OpenAI models unless you explicitly opt in. Anthropic’s commercial privacy documentation says that by default it does not use inputs or outputs from commercial products such as Claude for Work and the Anthropic API to train its models. Anthropic’s Claude Code data usage documentation adds that commercial users typically have a 30-day retention period, and that zero data retention is available for Claude Code on Claude for Enterprise. Google’s Gemini for Google Cloud documentation says prompts are handled under Google Cloud terms and the Cloud Data Processing Addendum. But Google’s Gemini API Additional Terms for unpaid services also say human reviewers may read and annotate API inputs and outputs for quality improvement, and explicitly warn users not to submit sensitive, confidential, or personal information to unpaid services. (OpenAI Developers)

That leads to a practical rule set.

If you are processing sensitive pentest evidence, default to an enterprise or commercial API path with well-documented data handling controls. Strip or mask secrets before sending anything to the model. Treat screenshots as data-rich artifacts, not harmless images. Remove session cookies, bearer tokens, personal data, and raw dumps unless there is a specific and controlled reason to preserve them. If you are using an unpaid or consumer-tier service, assume it may be the wrong place for confidential pentest artifacts unless the vendor explicitly says otherwise for that exact service.
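As a rough illustration of masking before upload, the sketch below applies a handful of regular expressions to a captured request. The patterns and placeholder names are assumptions for this example and will miss formats specific to your environment, so treat it as a first pass and still review manually.

```python
import re

# Hypothetical patterns; extend them for the token and key formats you actually see.
REDACTION_PATTERNS = [
    (re.compile(r"(?i)(authorization:\s*bearer\s+)\S+"), r"\1REDACTED_TOKEN"),
    (re.compile(r"(?i)((?:set-)?cookie:\s*).+"), r"\1REDACTED_COOKIE"),
    (re.compile(r"\beyJ[\w-]+\.[\w-]+\.[\w-]+"), "REDACTED_JWT"),
    (re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "REDACTED_AWS_KEY_ID"),
]


def redact(text: str) -> str:
    """Apply each pattern in order and return the masked text."""
    for pattern, replacement in REDACTION_PATTERNS:
        text = pattern.sub(replacement, text)
    return text


captured = (
    "GET /v1/profile/12345 HTTP/1.1\n"
    "Authorization: Bearer abc123\n"
    "Cookie: sid=42; theme=dark\n"
)
print(redact(captured))
```

Pattern-based masking only covers text. Screenshots and binary captures need their own handling before they leave a controlled environment.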

A useful decision table looks like this:

| AI path | Officially documented data posture | Good fit for pentest reporting | Use with caution |

|---|---|---|---|

| OpenAI API | API data is not used for training unless you opt in (OpenAI Developers) | Drafting sanitized findings, rewriting executive summaries, structured report generation | Still mask secrets, customer data, and tokens |

| Anthropic commercial products and API | Commercial inputs and outputs are not used for training by default (privacy.anthropic.com) | Drafting from controlled evidence, structured triage, internal report generation | Standard retention and local caching details still matter (Claude API Docs) |

| Claude Code Enterprise with ZDR | Zero data retention available for Enterprise on a per-organization basis (Claude API Docs) | Higher-sensitivity internal workflows | Requires enterprise setup and verification |

| Gemini for Google Cloud | Governed under Google Cloud terms and DPA (Google Cloud Documentation) | Enterprise environments already centered on Google Cloud governance | Confirm exact service boundary and logging path |

| Gemini API unpaid services | Human reviewers may read and annotate inputs and outputs, and Google warns not to submit sensitive information (Google AI for Developers) | Low-sensitivity experiments with synthetic data | Not a good default for confidential pentest artifacts |

A team that gets this right does not ask, “Which model is best?” first. It asks, “Which evidence can legally and operationally be sent to which model, under which retention rules, with which redactions?”

A practical workflow to generate an AI pentest report

The cleanest way to get an AI pentest report is to separate evidence collection from language generation.

Start with authorization and scope. Every finding must inherit a stable record of the engagement: who approved testing, which assets were in scope, what dates the testing covered, what identities were used, and what limitations applied. OWASP recommends explicitly documenting scope, limitations, timeline, and the point-in-time nature of the assessment. If you skip that, the report immediately becomes harder to trust because every later statement floats without boundaries. (owasp.org)

Then collect raw evidence in a disciplined way. Do not think in terms of “all output.” Think in terms of evidence classes. A typical web or application engagement will produce at least these:

- Asset and service evidence, such as hostnames, IPs, open ports, software fingerprints, and discovered paths.

- Request and response evidence, such as sanitized HTTP traces, GraphQL queries, REST calls, cookies, response bodies, and timing behavior.

- Identity and role evidence, such as differences between unauthenticated, low-privilege, and admin behavior.

- Exploit evidence, such as screenshots, captured responses, proof-of-concept code, command output, or state changes that demonstrate impact.

- Environment evidence, such as version strings, configuration flags, locale settings, code pages, operating system details, feature toggles, or reverse proxy behavior.

- Mitigation and retest evidence, such as patched versions, negative test results after a fix, and new screenshots proving the issue is closed.

That evidence should be stored before any model sees it. Give every artifact a stable identifier, a hash, a timestamp, and an asset association. If you do not do that, AI will happily summarize across records that should never have been combined.
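A minimal sketch of such a record follows, assuming artifacts are registered at collection time. The class name `ArtifactRecord`, its fields, and the helper `mixed_assets` are hypothetical and only illustrate the four properties named above.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ArtifactRecord:
    artifact_id: str   # stable identifier, for example "WEB-004/request.har"
    asset: str         # the host or application the artifact belongs to
    sha256: str        # content hash taken at collection time
    recorded_at: str   # ISO 8601 timestamp in UTC


def record_artifact(artifact_id: str, asset: str, content: bytes) -> ArtifactRecord:
    """Build an immutable record for one piece of evidence."""
    return ArtifactRecord(
        artifact_id=artifact_id,
        asset=asset,
        sha256=hashlib.sha256(content).hexdigest(),
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def mixed_assets(records: list[ArtifactRecord]) -> set[str]:
    """Return the assets involved if the records span more than one, else an empty set."""
    assets = {r.asset for r in records}
    return assets if len(assets) > 1 else set()
```

Calling `mixed_assets` on the artifacts attached to a single finding gives a cheap guard against the cross-host merging problem: a non-empty result means evidence from different assets is about to be summarized together.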

Next, normalize the evidence into finding records. This is the key transition point. The model should not be asked to infer a complete finding from a tarball of screenshots and XML. It should be given a record that says, in effect: “Here is the asset, the endpoint, the prerequisite, the observed behavior, the supporting artifacts, the likely classification, the remaining uncertainty, and the reviewer status.” Only then should AI be asked to draft title, description, executive phrasing, remediation wording, or a retest checklist.

After drafting, add risk and prioritization. OWASP notes that the technical impact needs to be translated into business impact for executive readers, and that on some engagements CVSS is required. In 2026, CVSS v4.0 is worth using when your customers or internal risk process expect formal scoring because it has more granular support for threat and environmental context than older habits built around a bare CVSS 3.x base score. NVD officially added support for CVSS v4.0 in June 2024, and FIRST defines CVSS v4.0 across Base, Threat, Environmental, and Supplemental metric groups. (owasp.org)

Then do human review. This is the point where someone with offensive judgment confirms exploitability, checks whether the business context is accurate, verifies that the remediation is specific enough to be useful, and removes language that overclaims. The reviewer should have a checklist: Are the steps reproducible? Are the artifacts attached? Are sensitive details masked? Are out-of-scope paths excluded? Are uncertainty and assumptions stated honestly? Has a real person confirmed that the issue is not just scanner noise?

Finally, produce two outputs from the same source. One should be a leadership-facing document: concise, risk-oriented, and action-focused. The other should be a technical report or appendix: detailed, reproducible, and artifact-backed. NIST’s point about multiple report formats for multiple audiences is still exactly right. (NIST Publications)

A compact division of labor looks like this:

| Task | AI can help | Human sign-off still needed |
|---|---|---|
| Deduplicate noisy scanner output | Yes | Yes, before anything becomes a finding |
| Draft vulnerability titles | Yes | Yes, to avoid overclaiming |
| Summarize raw evidence | Yes | Yes, for accuracy and scope |
| Write executive summaries | Yes | Yes, because business impact is contextual |
| Map to CWE, OWASP Top 10, CVSS fields | Yes | Yes, especially when exploit conditions are nuanced |
| Write remediation text | Yes | Yes, because generic fixes waste engineering time |
| Decide exploitability | No, not alone | Yes |
| Approve severity and business priority | No, not alone | Yes |
| Mark a fix as verified | No, not alone | Yes |

OWASP’s Top 10 page now lists OWASP Top 10 2025 as the current release, which makes it a current taxonomy choice for classification in application reports, but it should be used as a categorization aid, not a substitute for proof and environment-specific risk. (owasp.org)

A structured finding schema for AI pentest reports

The best thing you can do for any model is stop feeding it shapeless input.

A structured finding schema gives the model a narrow lane to work in. It also makes human review faster because every finding looks the same. You want a record that is complete enough for drafting, but rigid enough that missing evidence cannot be silently “imagined” into existence.

A practical schema usually includes these fields:

| Field | Why it matters |
|---|---|
| finding_id | Stable cross-reference across tickets, report revisions, and retest cycles |
| title | Human-readable label that must not overstate confidence |
| affected_asset | Keeps the issue tied to the right host, service, or application |
| endpoint_or_location | Critical for reproducibility |
| preconditions | Role, network location, feature toggle, locale, or configuration requirements |
| evidence_artifacts | Screenshots, HAR files, logs, request captures, PoC files |
| observed_behavior | What actually happened |
| reproduction_steps | What another engineer can do to see it again |
| exploitability_notes | Factors that make exploitation easier or harder |
| technical_impact | Confidentiality, integrity, availability, auth bypass, privilege escalation, and so on |
| business_impact | What the organization actually risks |
| classification | CWE, OWASP Top 10 2025, internal taxonomy, or compliance mapping where relevant (owasp.org) |
| risk_score | CVSS v4 or internal risk rating, depending on engagement needs (FIRST) |
| remediation | Specific engineering actions |
| retest_plan | What has to be rechecked after the fix |
| reviewer | Human owner who approved the text |
| confidence | Confirmed, likely, possible, or needs more evidence |

That same idea is visible in Penligent’s public discussions of verified findings. Their recent writing emphasizes vulnerable request sequence, affected objects, reproduction steps, raw evidence, and retest instructions instead of generic report polish. Even if you never use that product, the operating principle is sound: findings should be structured around evidence and replayability, not around how good the PDF looks. (penligent.ai)

Here is a minimal JSON example that works well as an intermediate object before report generation:

```json
{
  "finding_id": "WEB-004",
  "title": "Stored cross-site scripting in support ticket comment field",
  "affected_asset": "support.example.com",
  "location": "/tickets/18492/comments",
  "preconditions": [
    "Authenticated low-privilege user",
    "Victim must view the affected ticket in a browser session"
  ],
  "evidence_artifacts": [
    "artifacts/WEB-004/request.har",
    "artifacts/WEB-004/rendered-comment.png",
    "artifacts/WEB-004/session-redacted.txt"
  ],
  "observed_behavior": "User-supplied HTML payload executed in the victim's browser when the ticket thread was opened.",
  "reproduction_steps": [
    "Log in as a standard support user",
    "Create or open a ticket",
    "Submit the payload in the comment field",
    "Open the ticket as another user with access to the thread",
    "Observe JavaScript execution in the browser"
  ],
  "exploitability_notes": "No CSP blocked the payload. HTML sanitization was applied inconsistently. Attack requires ticket visibility to another user.",
  "technical_impact": "Session compromise and action execution in the victim context",
  "business_impact": "Potential takeover of internal support sessions and access to ticket data",
  "classification": {
    "cwe": "CWE-79",
    "owasp_top_10_2025": "Injection"
  },
  "risk_model": {
    "cvss_version": "4.0",
    "vector": "CVSS:4.0/AV:N/AC:L/AT:N/PR:L/UI:A/VC:L/VI:L/VA:N/SC:L/SI:L/SA:N"
  },
  "remediation": [
    "Apply context-appropriate output encoding for rendered comments",
    "Use a strict allowlist sanitizer for rich text input",
    "Deploy a Content Security Policy that blocks inline script execution",
    "Add regression tests for stored XSS payload handling"
  ],
  "retest_plan": [
    "Repeat the exact payload submission after the fix",
    "Verify stored content is encoded or stripped on render",
    "Verify CSP blocks execution if sanitization fails"
  ],
  "confidence": "confirmed",
  "review_status": "approved_by_human_reviewer"
}
```

The value of a schema like this is not aesthetic. It is that the model can only draft from what exists. If preconditions is blank, the omission becomes visible. If evidence_artifacts is empty, the issue cannot quietly become a confirmed finding without someone noticing.
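That visibility can also be enforced mechanically before any drafting happens. The sketch below assumes the field names from the JSON example above. The rule set and the function name `validation_errors` are hypothetical, and a real workflow would likely check more than this.

```python
# Fields that must be non-empty before a finding may be labeled "confirmed".
REQUIRED_FOR_CONFIRMED = ("preconditions", "evidence_artifacts", "reproduction_steps")


def validation_errors(finding: dict) -> list[str]:
    """Return reasons a finding should not be treated as confirmed; empty if none."""
    errors = []
    if finding.get("confidence") != "confirmed":
        return errors  # weaker confidence labels are allowed to be incomplete
    for field in REQUIRED_FOR_CONFIRMED:
        if not finding.get(field):
            errors.append(f"{field} is empty but confidence is 'confirmed'")
    if finding.get("review_status") != "approved_by_human_reviewer":
        errors.append("confirmed finding has no human approval")
    return errors


draft = {"finding_id": "WEB-004", "confidence": "confirmed", "evidence_artifacts": []}
for problem in validation_errors(draft):
    print(problem)
```

Running a gate like this before the model is asked to write anything keeps incomplete records out of the drafting step instead of relying on a reviewer to catch them afterward.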

Code that turns security evidence into a draft pentest report

Once you have structured finding data, report generation becomes much safer.

A common pattern is to keep the source of truth in JSON or YAML, and then render Markdown for a final report, a client portal, or a PDF conversion pipeline. The rendering layer should be deterministic. The model can help fill the fields, but the template should decide where each field goes.

Here is a simple Python example that renders a technical finding section from a structured object:

```python
def render_list(items):
    """Render a list of strings as Markdown bullets, keeping empty lists visible."""
    if not items:
        return "- None provided"
    return "\n".join(f"- {item}" for item in items)


def render_finding(finding: dict) -> str:
    """Render one structured finding as a Markdown section with a fixed field order."""
    classification = finding.get("classification", {})
    risk_model = finding.get("risk_model", {})

    lines = []
    # finding_id and title are mandatory: a missing key raises KeyError.
    lines.append(f"### {finding['finding_id']}: {finding['title']}")
    lines.append("")
    lines.append(f"**Affected asset:** {finding.get('affected_asset', 'Unknown')}")
    lines.append(f"**Location:** {finding.get('location', 'Unknown')}")
    lines.append(f"**Confidence:** {finding.get('confidence', 'unspecified')}")
    lines.append("")
    lines.append("**Preconditions**")
    lines.append(render_list(finding.get("preconditions", [])))
    lines.append("")
    lines.append("**Observed behavior**")
    lines.append(finding.get("observed_behavior", "No observation recorded."))
    lines.append("")
    lines.append("**Reproduction steps**")
    lines.append(render_list(finding.get("reproduction_steps", [])))
    lines.append("")
    lines.append("**Exploitability notes**")
    lines.append(finding.get("exploitability_notes", "No exploitability notes provided."))
    lines.append("")
    lines.append("**Technical impact**")
    lines.append(finding.get("technical_impact", "No technical impact provided."))
    lines.append("")
    lines.append("**Business impact**")
    lines.append(finding.get("business_impact", "No business impact provided."))
    lines.append("")
    lines.append("**Classification**")
    lines.append(
        f"- CWE: {classification.get('cwe', 'N/A')}\n"
        f"- OWASP: {classification.get('owasp_top_10_2025', 'N/A')}"
    )
    lines.append("")
    lines.append("**Risk model**")
    lines.append(
        f"- CVSS version: {risk_model.get('cvss_version', 'N/A')}\n"
        f"- Vector: {risk_model.get('vector', 'N/A')}"
    )
    lines.append("")
    lines.append("**Evidence artifacts**")
    lines.append(render_list(finding.get("evidence_artifacts", [])))
    lines.append("")
    lines.append("**Remediation**")
    lines.append(render_list(finding.get("remediation", [])))
    lines.append("")
    lines.append("**Retest plan**")
    lines.append(render_list(finding.get("retest_plan", [])))
    lines.append("")

    return "\n".join(lines)
```

This sort of renderer solves a real problem: it prevents stylistic drift from removing fields that matter. The report cannot “forget” reproduction steps because the renderer always emits that section, and an empty field shows up as “None provided” instead of silently disappearing.

The second useful piece is evidence manifesting. If you want your report to survive internal review or later retesting, hash the evidence and store a manifest. That is especially important when the artifacts travel through ticketing systems, shared drives, or client handoffs.

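```bash
#!/usr/bin/env bash
set -euo pipefail

REPORT_ID="engagement-2026-04-webapp"
ARTIFACT_DIR="./artifacts"
MANIFEST="./${REPORT_ID}-evidence-manifest.txt"

# Start from an empty manifest so reruns do not append duplicate entries.
: > "$MANIFEST"

# Hash every artifact in sorted order so manifests from different runs can be diffed.
# IFS= keeps leading and trailing whitespace in file names intact.
find "$ARTIFACT_DIR" -type f | sort | while IFS= read -r file; do
  sha256sum "$file" >> "$MANIFEST"
done

echo "Created manifest: $MANIFEST"
```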

That script does not make the evidence trustworthy by itself, but it makes later comparison and audit much easier. It is a small control with outsized value.
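For the comparison step, a small verifier can re-hash each file and report anything that changed or went missing. This is a sketch under two assumptions: the manifest uses the default `sha256sum` line format of a hex digest, two spaces, and a path, and the function name `verify_manifest` is made up for this example. File names containing newlines or backslashes are not handled.

```python
import hashlib
from pathlib import Path


def verify_manifest(manifest_path: str) -> list[str]:
    """Return paths that are missing or whose SHA-256 no longer matches the manifest."""
    problems = []
    for line in Path(manifest_path).read_text().splitlines():
        if not line.strip():
            continue
        # Default sha256sum output: "<hex digest>  <path>"
        expected, _, name = line.partition("  ")
        path = Path(name)
        if not path.is_file():
            problems.append(name)
            continue
        actual = hashlib.sha256(path.read_bytes()).hexdigest()
        if actual != expected:
            problems.append(name)
    return problems
```

Because the manifest stores relative paths, the verifier has to run from the same directory as the script that created it. On systems with GNU coreutils, `sha256sum --check` against the manifest does the same job without any Python.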

The third piece is sanitized reproducibility. OWASP now explicitly encourages reproducible test artifacts such as curl commands and HAR files for validation and retest. A report that gives developers a clean, redacted replay path is materially better than one that simply says “issue reproduced.” (owasp.org)

A stripped-down example might look like this:

```bash
curl 'https://api.example.com/v1/profile/12345' \
  -H 'Authorization: Bearer REDACTED_LOW_PRIV_TOKEN' \
  -H 'Accept: application/json' \
  -H 'X-Tenant-ID: REDACTED_TENANT' \
  --path-as-is
```

You would pair that with the observation that the endpoint returned another user’s object and explain which identifier changed, what status code came back, and why the object should not have been accessible. The point is not that curl is magical. The point is that a clear replay artifact cuts down triage friction dramatically.

Writing an executive summary that leadership can use

The executive summary is where AI-generated security writing often looks the most polished and says the least.

OWASP’s reporting guidance is clear that the executive summary should explain the objective of the test, key findings in business context, and strategic recommendations in non-technical language. OWASP’s risk methodology likewise says the business risk is what justifies investment in fixes. So the executive summary cannot just restate severity counts. A list of “2 critical, 4 high, 7 medium” tells a security leader almost nothing by itself. (owasp.org)

A good executive summary answers five concrete questions.

What was tested?

Why did the organization ask for the test?

What were the most important weaknesses actually confirmed?

What would exploitation let an attacker do in this environment?

What should be fixed first, and why?

Those are not language questions. They are translation questions. AI can help with that translation, but only if the technical findings already capture business-relevant details. If a finding says “Stored XSS in ticket comments,” the AI needs to know whether the affected system is a low-impact internal tool or a privileged support platform used by staff with access to account resets, payment data, or admin actions. Without that context, the model will default to generic rhetoric.

A bad AI summary sounds like this:

The assessment identified multiple critical vulnerabilities that could significantly impact the security posture of the organization. Immediate remediation is recommended.

That sentence is grammatically fine and operationally useless.

A better summary sounds like this:

The assessment confirmed two issues that could let a remote attacker reach privileged workflows without normal authorization checks. The most urgent issue affected the customer support application, where malicious content submitted by a standard user executed in the browser context of support staff. In this environment, that could expose ticket data and enable actions normally reserved for internal operators. A separate authorization flaw allowed cross-tenant object access in the billing API when object identifiers were changed. Both issues should be remediated before the next scheduled release because they affect workflows tied to customer trust and support operations.

That version gives leadership something to decide on. It shows affected functions, business consequences, and fix ordering.

Here is a practical contrast:

| Weak executive summary language | Better executive summary language |
|---|---|
| “Critical vulnerabilities were discovered.” | “Two confirmed issues affected authentication and cross-tenant data boundaries.” |
| “Attackers could compromise the system.” | “A remote attacker could act in a privileged user’s browser session or access another tenant’s records, depending on the path used.” |
| “Immediate remediation is required.” | “The support portal and billing API should be prioritized because both issues touch high-trust workflows used by staff and customers.” |
| “Security posture needs improvement.” | “Input handling, authorization checks, and retest controls were inconsistent across internet-facing services.” |

AI can generate the better version, but only if you force it to answer business questions explicitly instead of asking for a “leadership summary.”
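One way to force those answers is to assemble the prompt from explicit fields instead of a free-form request. The sketch below is illustrative only: `build_summary_prompt` and the finding keys (`id`, `title`, `asset`, `business_context`, `approved`) are hypothetical names, not part of any specific tool or of the finding format used elsewhere in this article.

```python
# The five questions a good executive summary should answer.
FIVE_QUESTIONS = [
    "What was tested?",
    "Why did the organization ask for the test?",
    "What were the most important weaknesses actually confirmed?",
    "What would exploitation let an attacker do in this environment?",
    "What should be fixed first, and why?",
]


def build_summary_prompt(scope: str, objective: str, findings: list[dict]) -> str:
    """Build an executive-summary prompt from reviewer-approved findings only."""
    approved = [f for f in findings if f.get("approved")]
    if not approved:
        raise ValueError("no approved findings; refusing to draft a summary")

    lines = [
        "Draft an executive summary in non-technical language.",
        "Use only the facts below. Do not add findings or impact claims.",
        f"Scope: {scope}",
        f"Objective: {objective}",
        "Approved findings:",
    ]
    for f in approved:
        lines.append(
            f"- {f['id']}: {f['title']} | asset: {f['asset']} "
            f"| business context: {f['business_context']}"
        )
    lines.append("Answer each question explicitly, in order:")
    lines.extend(f"{i}. {q}" for i, q in enumerate(FIVE_QUESTIONS, start=1))
    return "\n".join(lines)
```

The point of the design is that unapproved findings never reach the model, and the business context comes from a reviewer-supplied field rather than from the model's guess.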

Using CVSS v4 and business impact in an AI pentest report

Security teams still argue about scoring because many reports use numbers without context.

OWASP’s reporting guidance notes that some engagements require CVSS, while others may be better served by simpler risk bands if formal scoring only adds complexity. That is a fair caution. Still, if you are reporting across customers, environments, or compliance programs, a formal system helps. In 2026, CVSS v4.0 is the right modern reference point when a standardized score is needed. FIRST’s specification defines CVSS v4.0 as four metric groups: Base, Threat, Environmental, and Supplemental. NVD says CVSS v4.0 was released on November 1, 2023 and that it provides more granularity, a new Supplemental metric group, and a different severity methodology than earlier versions. (owasp.org)

That matters for AI pentest reporting because a bare base score is often not enough. FIRST explicitly says Threat metrics adjust severity based on things like proof-of-concept code or active exploitation, while Environmental metrics refine the score for a specific organization’s deployment and controls. That is much closer to how real pentest prioritization already works. A login bypass in a dormant internal prototype is not the same priority as a login bypass in the production admin panel of a revenue-critical platform. (FIRST)

CISA’s Known Exploited Vulnerabilities Catalog adds another practical layer. CISA describes the KEV catalog as a way to help organizations manage vulnerabilities and keep pace with threat activity, and explicitly says organizations should use the catalog as an input to vulnerability management and prioritization. That means an AI pentest report should not stop at “CVSS 9.8.” If the finding maps to a vulnerability already known to be exploited in the wild, that should influence the remediation narrative and sequencing. (cisa.gov)

A better prioritization section in an AI pentest report therefore combines at least four inputs:

- The intrinsic severity of the issue.

- Whether exploitation is active, easy, or publicly well understood.

- The business criticality of the affected system.

- The exploit prerequisites and available compensating controls.

That can be rendered as a simple decision table:

| Factor | Example question | Why it matters |
|---|---|---|
| CVSS v4 Base | How severe is the issue in a generic sense? | Good for vendor comparison and baseline consistency (FIRST) |
| Threat context | Is there public PoC code or active exploitation? | Raises practical urgency even when technical description is unchanged (FIRST) |
| Environmental context | Is the asset internet-facing, privileged, or customer-impacting? | Changes the real remediation order inside your organization (FIRST) |
| Business impact | What breaks if the issue is exploited? | Converts technical findings into leadership action (owasp.org) |
| Prerequisites | What role, configuration, or feature must exist? | Prevents false urgency and overbroad claims (owasp.org) |

The right use of AI here is not “score this for me.” It is “show me where my scoring rationale is thin, where I have not documented prerequisites, and where the business impact language does not match the evidence.”
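The same idea can be expressed as a small helper that orders findings and flags thin rationale instead of producing a score. This is a sketch under stated assumptions: the `PriorityInput` fields and the ordering rule (threat context first, then business criticality, then compensating controls, then Base score) are hypothetical choices for illustration. They are not part of the CVSS v4.0 specification and do not replace a real CVSS calculation.

```python
from dataclasses import dataclass, field


@dataclass
class PriorityInput:
    finding_id: str
    cvss_base: float           # CVSS v4.0 Base score, computed elsewhere
    known_exploited: bool      # e.g. listed in the CISA KEV catalog
    public_poc: bool           # public proof-of-concept code exists
    business_criticality: int  # 1 (low) to 3 (high), set by a human reviewer
    prerequisites: list[str] = field(default_factory=list)
    compensating_controls: list[str] = field(default_factory=list)


def rationale_gaps(p: PriorityInput) -> list[str]:
    """List places where the scoring rationale is thin."""
    gaps = []
    if not p.prerequisites:
        gaps.append("exploit prerequisites not documented")
    if not 0.0 <= p.cvss_base <= 10.0:
        gaps.append("CVSS base score outside 0.0-10.0")
    if p.business_criticality not in (1, 2, 3):
        gaps.append("business criticality not set by reviewer")
    return gaps


def sort_key(p: PriorityInput) -> tuple:
    # Assumed ordering rule, not a standard: adjust to your own program.
    threat = 2 if p.known_exploited else 1 if p.public_poc else 0
    mitigated = 1 if p.compensating_controls else 0
    return (-threat, -p.business_criticality, mitigated, -p.cvss_base)


def prioritize(items: list[PriorityInput]) -> list[PriorityInput]:
    """Return findings in suggested remediation order."""
    return sorted(items, key=sort_key)
```

Under this rule, a known-exploited issue on a high-criticality asset sorts ahead of a higher Base score on a low-criticality asset, which mirrors the dormant-prototype versus production-admin-panel contrast above.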

Real CVEs that show what good AI reporting looks like

The fastest way to tell whether an AI pentest report is any good is to see how it handles real vulnerabilities with non-trivial conditions.

CVE-2024-3400, PAN-OS GlobalProtect

NVD describes CVE-2024-3400 as a command injection issue arising from an arbitrary file creation vulnerability in the GlobalProtect feature of Palo Alto Networks PAN-OS, under specific versions and distinct feature configurations, and says it may allow unauthenticated remote code execution with root privileges on the firewall. NVD also notes that Cloud NGFW, Panorama appliances, and Prisma Access are not impacted. CISA’s alert on April 12, 2024 said Palo Alto Networks had released workaround guidance and identified affected version families 10.2, 11.0, and 11.1. CISA later referenced threat activity that included reconnaissance and probing for devices vulnerable to CVE-2024-3400, and the vulnerability is listed in the KEV catalog. (NVD)

A bad AI pentest report would write this finding as “Palo Alto firewall RCE.” That is too broad. It loses the GlobalProtect dependency, the configuration preconditions, and the list of products not affected. A good report would say something closer to this:

- The issue is relevant only if the affected PAN-OS versions and the necessary GlobalProtect conditions are present.

- The exposure is especially urgent because CISA added the flaw to the KEV catalog, which signals exploitation in the wild rather than theoretical risk.

- The report should explicitly say whether the tester confirmed reachability, version family, and the relevant feature/configuration state, or whether the finding is based only on fingerprinting and should therefore remain “probable” until deeper validation is complete.

That difference is not academic. It is the difference between “patch all Palo Alto things immediately” and “patch or mitigate the specific exposed devices with the relevant attack preconditions first.”

CVE-2024-4577, PHP CGI on Windows

NVD describes CVE-2024-4577 very precisely: it affects PHP 8.1 before 8.1.29, 8.2 before 8.2.20, and 8.3 before 8.3.8 when Apache and PHP-CGI are used on Windows under certain code pages, where Windows Best-Fit behavior can cause PHP-CGI to misinterpret characters as PHP options and enable source disclosure or arbitrary PHP code execution. CVE-2024-4577 is also listed in CISA’s KEV catalog. (NVD)

This is a perfect test for AI report quality because the exploit conditions matter. A sloppy report says, “Outdated PHP on Windows enables RCE.” A strong report says:

- The issue is tied to Apache plus PHP-CGI on Windows, not simply “any PHP server.”

- Code page behavior is part of the exploit conditions and must be stated.

- The remediation is not vague. It is to move to fixed versions or remove the vulnerable deployment pattern.

- If the environment uses PHP-FPM or another non-CGI model, that should be stated because it changes applicability.

The report should also say how the team established applicability. Was it version disclosure plus server behavior? Direct configuration access? A lab reproduction? A PoC against a non-production replica? Those distinctions should survive into the final report, because engineering teams use them to decide whether emergency change procedures are justified.
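The applicability conditions above can be encoded as a pre-check that runs before the finding is drafted. The sketch below uses only the version ranges and deployment pattern quoted from NVD in this section. The function name, the status strings, and the decision to send unlisted branches to manual review are assumptions for illustration. It deliberately never returns "confirmed", because code page behavior and safe validation still need a human.

```python
# Fixed versions per branch, as quoted from the NVD description above.
FIXED_VERSIONS = {(8, 1): (8, 1, 29), (8, 2): (8, 2, 20), (8, 3): (8, 3, 8)}


def parse_version(version: str) -> tuple[int, int, int]:
    """Parse a plain 'major.minor.patch' string; suffixes are not handled."""
    major, minor, patch = (int(part) for part in version.split(".")[:3])
    return major, minor, patch


def assess_cve_2024_4577(php_version: str, os_family: str,
                         web_server: str, sapi: str) -> str:
    """Return a hedged applicability status for a single observed deployment."""
    version = parse_version(php_version)
    fixed = FIXED_VERSIONS.get(version[:2])
    if fixed is None:
        # Branch is outside the ranges quoted here; do not guess.
        return "manual-review"
    if version >= fixed:
        return "not-applicable: fixed version"
    matches_pattern = (
        os_family.lower() == "windows"
        and web_server.lower() == "apache"
        and sapi.lower() == "cgi"
    )
    if not matches_pattern:
        return "not-applicable: deployment pattern differs"
    return "probable: confirm code page and validate safely"
```

A status string like this belongs in the structured finding record, so the final report can say how applicability was established instead of implying a reproduction that never happened.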

CVE-2024-6387, OpenSSH regreSSHion

NVD describes CVE-2024-6387 as a security regression related to CVE-2006-5051 in OpenSSH’s server, where a race condition may allow sshd to handle signals unsafely, and says an unauthenticated remote attacker may be able to trigger it by failing to authenticate within a set time period. The CVE record echoes that framing. (NVD)

This is another good example because real exploitability depends heavily on environment and timing. A weak AI report will read the headline and collapse all nuance into “critical unauthenticated SSH RCE.” A better report will preserve the uncertainty:

- The issue is a race condition, not a deterministic bug with a one-request exploit path.

- The report should state whether the target version range was confirmed, whether the system is internet reachable, whether compensating controls narrow the exposure, and whether the engagement reproduced exploitability or only established susceptibility.

- The remediation section should distinguish between patching, service hardening, and temporary exposure reduction.

AI tends to erase uncertainty because certainty sounds cleaner. Good pentest reporting does the opposite. It makes the uncertainty visible where it matters.

CVE-2024-3094, xz backdoor

NVD says CVE-2024-3094 involved malicious code in xz upstream tarballs starting with version 5.6.0, and explains that extra .m4 files in the tarballs, which were not present in the repository, were used through a complicated build process to modify code while building liblzma. Red Hat’s post on its incident response adds useful environment detail: the compromise targeted a narrow set of standard Linux server conditions, especially x86_64 systems using systemd and sshd, and Red Hat’s response focused on determining affectedness rather than assuming all xz users were equally exposed. (NVD)

This is a classic case where an AI report can become dangerously misleading if it is not tightly grounded.

A bad report says, “The xz library version is present, system compromised.”

A good report says:

- The relevant distinction is between affected upstream tarball releases and what was actually built, packaged, and deployed in the specific environment.

- Repository state and tarball state were not identical.

- Exposure depended on build and runtime characteristics, not just the package name.

- The response plan needed both version analysis and supply-chain provenance review.

That is exactly why AI pentest reporting should be evidence-first. The language layer should never be allowed to flatten a supply-chain event into a naive “version equals breach” story.

Here is the broader lesson from all four cases:

| CVE | What the AI must not skip | What a good report should state |
|---|---|---|
| CVE-2024-3400 | Feature and configuration prerequisites | Affected PAN-OS context, exposed path, validation method, mitigation priority (NVD) |
| CVE-2024-4577 | Windows, Apache, PHP-CGI, code page conditions | Exact deployment pattern, fixed versions, proof of applicability (NVD) |
| CVE-2024-6387 | Race-condition uncertainty | Confirmed version, exposure surface, exploitability caveats, patch plan (NVD) |
| CVE-2024-3094 | Tarball versus repo, build path, narrow target conditions | Provenance, package source, deployment reality, containment steps (NVD) |

An AI pentest report earns trust when it preserves those distinctions instead of smoothing them away.
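A lightweight way to enforce this is to check each CVE-based finding for the context the table says must not be skipped before any prose is drafted. The field names below are hypothetical labels derived from the table above, not an established schema.

```python
# Context each finding must state before drafting; keys are illustrative
# labels derived from the table above, not an official schema.
REQUIRED_CONTEXT = {
    "CVE-2024-3400": {"panos_version", "globalprotect_config", "validation_method"},
    "CVE-2024-4577": {"os", "web_server", "php_sapi", "code_page", "validation_method"},
    "CVE-2024-6387": {"openssh_version", "exposure_surface", "exploitability_caveats"},
    "CVE-2024-3094": {"package_source", "build_provenance", "deployment_state"},
}


def missing_context(cve_id: str, finding: dict) -> list[str]:
    """Return required context fields that are absent or empty in the finding."""
    required = REQUIRED_CONTEXT.get(cve_id, set())
    return sorted(key for key in required if not finding.get(key))
```

If `missing_context` returns anything, the finding goes back to the tester rather than forward to the model.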

Common mistakes when using AI to write pentest reports

Most failed AI reporting programs make the same mistakes because the pressure points are predictable.

The first mistake is promoting noisy scanner output into confirmed findings. OWASP’s reporting guidance expects technical findings to include exploitability, impact, and detailed descriptions. That assumes the issue has crossed a validation threshold. If your workflow promotes “possible SSRF” or “potential SQL injection” straight from a tool into a final report, the problem is not the model. The problem is the lack of a validation gate. (owasp.org)
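A validation gate can be as simple as a filter that sits between tool output and the report draft. In this sketch the `status`, `evidence`, and `validated_by` keys are hypothetical field names. The rule itself (only confirmed findings with evidence and a named validator reach the report) is one reasonable policy, not a standard.

```python
ALLOWED_FOR_REPORT = {"confirmed"}


def gate_findings(candidates: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split candidates into report-ready findings and a validation backlog."""
    report, backlog = [], []
    for candidate in candidates:
        ready = (
            candidate.get("status") in ALLOWED_FOR_REPORT
            and bool(candidate.get("evidence"))      # at least one artifact
            and bool(candidate.get("validated_by"))  # a named human validator
        )
        (report if ready else backlog).append(candidate)
    return report, backlog
```

Scanner output labeled "possible" or "potential" lands in the backlog by construction, so the model never sees it as report material.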

The second mistake is losing scope boundaries. Reports that omit who authorized the work, which identities were used, and what was out of bounds are far more likely to misstate impact. OWASP explicitly recommends including scope and limitations. (owasp.org)

The third mistake is letting the model generalize from one environment to another. This happens constantly in multi-tenant SaaS and multi-stage deployment pipelines. A staging-only issue becomes a production issue in the narrative because the AI optimizes for readability, not deployment discipline.

The fourth mistake is generic remediation. Developers hate this because it wastes time. “Apply proper input validation” is not a fix. It is a slogan. A useful remediation section ties the specific control gap to the affected code path or architecture decision. That is why the AI should draft remediation from a structured finding record that includes exploit path and observed failure mode, not from a vague category label.

The fifth mistake is weak retest bookkeeping. OWASP’s latest guidance specifically mentions reproducible artifacts that help validate fixes. A mature AI reporting workflow keeps the original reproduction steps, records what changed, and creates a retest state. If your report pipeline ends at PDF export, it is incomplete. (owasp.org)

The sixth mistake is mixing technical and executive language badly. Leadership sections become melodramatic, while technical sections become vague. The reason is simple: the same prompt is being used for both. NIST’s multiple-audience observation is a reminder that different outputs should be intentionally different. (NIST Publications)

The seventh mistake is forgetting report security. OWASP recommends securing and encrypting the report so only the receiving party can use it. That guidance matters even more in the AI era because artifacts often move through more systems before final delivery. (owasp.org)

This is the part of the workflow where evidence-driven platforms make more sense than chat-only approaches. Penligent’s public material repeatedly emphasizes verified findings, editable reporting, reproducible PoCs, and one-click reporting tied to earlier testing stages. Whether a team uses that specific platform or builds an internal pipeline, the principle is right: reporting should remain connected to the evidence graph. The report should be the visible tip of a tracked process, not a detached block of AI-generated prose. (penligent.ai)

Picking the right AI setup for pentest reporting

The right setup depends on what you are trying to optimize.

If you are a solo researcher or small internal team, the simplest usable setup is often a structured folder, a finding schema, a scriptable renderer, and a commercial API with explicit data controls. That gets you most of the value: draft generation, consistent formatting, title cleanup, executive-summary assistance, and remediation phrasing.

If you are an internal AppSec or red team function, you will usually need more. At that point the report is not just a document. It is part of a lifecycle. You need asset identities, engagement records, reviewer sign-offs, attachment storage, and retest history. That is where an evidence-oriented workflow matters more than whichever model is hottest that week.

If you are dealing with compliance-sensitive environments, the right question becomes “What can this system prove later?” Penligent’s public site claims one-click reports aligned with SOC 2 and ISO 27001, and its product overview describes a pipeline from asset discovery through exploit execution to final report generation. That sort of end-to-end framing is useful because compliance and client assurance rarely care only about the PDF. They care whether the report maps to a repeatable workflow with evidence reuse and human approval. (penligent.ai)

That does not mean every team needs an all-in-one platform. It means the architecture you choose should support at least these capabilities:

- A stable source of truth for structured findings

- Artifact retention with hashes or equivalent controls

- Human review before severity and business impact are finalized

- Template-driven report rendering

- A retest path that reuses the original evidence and steps

- Data handling controls that match the sensitivity of the engagement

If one of those is missing, your AI pentest report process is weaker than it looks.

Building an evidence pipeline that survives retest

The strongest AI pentest report workflows are built backward from the retest.

Imagine the report six weeks later, after engineering says the issue is fixed. Can a second tester take the original steps, replay them safely, compare the new behavior to the old behavior, and mark the issue closed with confidence? If the answer is no, the original report was incomplete.

OWASP’s current guidance helps here because it specifically recommends reproducible artifacts and appendices for methodology and risk-rating explanations. That gives you a natural way to structure the pipeline. The original report contains summary and findings. The appendix or attachment set contains evidence and replay artifacts. The retest report references the same finding IDs and notes whether the exploit path still works, partially works, or has changed. (owasp.org)

A practical retest-ready workflow has these stages:

- Capture raw artifacts during testing.

- Normalize them into structured finding records.

- Draft narrative sections with AI.

- Review with a human.

- Render into report formats.

- Track remediation owner and state.

- Replay the original evidence after the fix.

- Record retest outcome with the same finding ID.

The operational discipline matters more than any single model feature.
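That discipline can be made explicit by refusing to skip stages. The sketch below models the eight stages above as an ordered enum. The `Stage` names and the strict one-step-at-a-time rule are assumptions for illustration; a real pipeline would likely allow controlled rework loops.

```python
from enum import IntEnum


class Stage(IntEnum):
    CAPTURED = 1             # raw artifacts captured during testing
    NORMALIZED = 2           # structured finding record created
    DRAFTED = 3              # narrative drafted with AI
    REVIEWED = 4             # human review complete
    RENDERED = 5             # report formats produced
    REMEDIATION_TRACKED = 6  # owner and state recorded
    REPLAYED = 7             # original evidence replayed after the fix
    RETEST_RECORDED = 8      # outcome stored under the same finding ID


def advance(current: Stage, target: Stage) -> Stage:
    """Move a finding to the next stage, rejecting skipped or backward steps."""
    if target != current + 1:
        raise ValueError(f"cannot move from {current.name} to {target.name}")
    return target
```

With this rule, a finding cannot be rendered into a report without passing through human review first.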

A minimal evidence manifest can include:

- Finding ID

- Artifact file path

- Artifact hash

- Asset ID

- Collection date

- Tool or collection method

- Reviewer

- Sanitization state

- Retest relevance

That may feel heavy for a single issue, but it pays for itself quickly on larger engagements and repeated customer environments.
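A manifest entry with those fields can be sketched with the standard library alone. The `ManifestEntry` field names are hypothetical, and SHA-256 is an assumed choice of hash. The useful property is that `verify` lets a retester confirm that an artifact has not changed since collection.

```python
import hashlib
from dataclasses import dataclass
from pathlib import Path


@dataclass
class ManifestEntry:
    finding_id: str
    artifact_path: str
    sha256: str
    asset_id: str
    collected_on: str      # ISO 8601 date string
    method: str            # tool or collection method
    reviewer: str
    sanitized: bool
    retest_relevant: bool


def sha256_file(path: Path) -> str:
    """Hash a file in chunks so large captures do not load into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def make_entry(path: Path, **fields) -> ManifestEntry:
    """Create a manifest entry, computing the artifact hash at collection time."""
    return ManifestEntry(artifact_path=str(path), sha256=sha256_file(path), **fields)


def verify(entry: ManifestEntry) -> bool:
    """Return True if the artifact on disk still matches the recorded hash."""
    return sha256_file(Path(entry.artifact_path)) == entry.sha256
```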

A minimum viable way to get an AI pentest report this week

If you want something practical rather than theoretical, this is a reasonable first implementation path.

On day one, create a fixed report template with these sections:

- Engagement scope and limitations

- Executive summary

- Findings summary table

- Detailed findings

- Appendix with methodology and artifacts

Then define a structured finding format like the JSON object shown earlier. Use that object as the only thing the model is allowed to draft from. Do not feed raw screenshots and logs directly into the report prompt.

Next, sanitize and package evidence. Redact tokens, customer identifiers, and secrets. Hash the artifact set. Keep a manifest.
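Redaction is worth automating as a first pass, with a human check afterward. The patterns below are illustrative assumptions that cover only two common shapes (bearer tokens in headers and key-like query parameters). They will miss other secret formats, so treat this as a starting point rather than a complete sanitizer.

```python
import re

# Illustrative patterns only; extend for the secret formats in your environment.
PATTERNS = [
    (re.compile(r"(?i)(authorization:\s*bearer\s+)\S+"), r"\1[REDACTED]"),
    (re.compile(r"(?i)((?:api[_-]?key|token|password)=)[^&\s]+"), r"\1[REDACTED]"),
]


def redact(text: str) -> tuple[str, int]:
    """Return the redacted text and the number of substitutions made."""
    total = 0
    for pattern, replacement in PATTERNS:
        text, count = pattern.subn(replacement, text)
        total += count
    return text, total
```

Run redaction before hashing, so the manifest hashes describe the sanitized artifacts that actually ship with the report.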

Then use AI for four concrete jobs only:

- Rewrite rough notes into consistent finding titles

- Draft the technical description from verified fields

- Draft the business impact paragraph from reviewer-supplied context

- Draft the executive summary from approved findings only

After that, do a human review pass with a checklist:

- Can I reproduce this from the report?

- Are the exploit conditions accurate?

- Is the affected asset correct?

- Is the business impact specific and honest?

- Are the remediation steps actionable?

- Are the artifacts attached and sanitized?

- Would I sign my name to this finding?

If every answer is yes, render the report and deliver it in encrypted form or through an access-controlled channel, consistent with OWASP’s advice to secure the report. (owasp.org)

If you have a week instead of a day, add three more things:

- A simple ticket sync so every finding ID maps to a remediation owner

- A retest status field

- A scoring helper that combines CVSS v4 with your environmental and business modifiers

That is enough to move from “AI helped me write something” to “AI is part of a reporting workflow I would actually trust.”

The real answer to how to get an AI pentest report

You get an AI pentest report the same way you get a good non-AI pentest report: by collecting evidence carefully, preserving context, validating findings honestly, and writing for the people who have to make decisions from the result.

AI changes the speed and the ergonomics of that process. It does not change the burden of proof.

Used well, AI can save a huge amount of time on clustering findings, drafting titles, translating technical impact into business language, maintaining formatting consistency, and preparing retest notes. Used badly, it can flood your organization with elegant fiction.

So the standard to hold is simple. Every important sentence in the report should be traceable to scope, evidence, or a clearly stated reviewer judgment. Every finding should have replayable steps. Every severity statement should reflect both technical and environmental reality. Every executive statement should map to a business consequence. And every delivered report should make the next retest easier, not harder.

That is how you get an AI pentest report worth sending to engineering, leadership, clients, or auditors.

References and further reading

- OWASP Web Security Testing Guide, Reporting Structure (owasp.org)

- OWASP Risk Rating Methodology (owasp.org)

- OWASP Top 10 2025 (owasp.org)

- PTES main page and reporting guidance (pentest-standard.org)

- NIST SP 800-115, Technical Guide to Information Security Testing and Assessment (NIST Computer Security Resource Center)

- HackerOne, What Makes a Quality Report (docs.hackerone.com)

- Bugcrowd, Reporting a Bug (Bugcrowd Docs)

- FIRST CVSS v4.0 specification and user guidance (FIRST)

- NVD support notice for CVSS v4.0 (NVD)

- CISA Known Exploited Vulnerabilities Catalog overview (cisa.gov)

- NVD records for CVE-2024-3400, CVE-2024-4577, CVE-2024-6387, and CVE-2024-3094 (NVD)

- CISA and related official advisories relevant to CVE-2024-3400 and KEV context (cisa.gov)

- Vendor and ecosystem material relevant to xz incident handling (redhat.com)

- OpenAI API data controls (OpenAI Developers)

- Anthropic commercial privacy and Claude Code data usage (privacy.anthropic.com)

- Google Cloud Gemini data governance and Gemini API unpaid-services terms (Google Cloud Documentation)

- Penligent homepage (penligent.ai)

- AI Pentest Copilot, From Smart Suggestions to Verified Findings (penligent.ai)

- Overview of Penligent.ai’s Automated Penetration Testing Tool (penligent.ai)

- PentestGPT vs. Penligent AI in Real Engagements, From LLM Writes Commands to Verified Findings (penligent.ai)

- How to Use AI for SOC 2 and ISO 27001 Compliance While Reducing Costs (penligent.ai)