AI agent security became a different problem the moment assistants stopped being passive chat interfaces and started reading private data, deciding what mattered, calling tools, and taking actions across real systems. NIST’s January 2026 initiative on securing AI agent systems makes that shift explicit: some risks still look like ordinary software security, but the distinct problems appear when model outputs are fused with software functionality that can change external state. Krebs’ March 2026 reporting on OpenClaw, the Cline incident, and AI-augmented attacks on FortiGate devices shows the same shift from the field. Security is no longer only about preventing intrusions into software. It is increasingly about preventing a trusted, high-permission runtime from being manipulated, over-permissioned, or repurposed against its operator’s intent. (NIST)

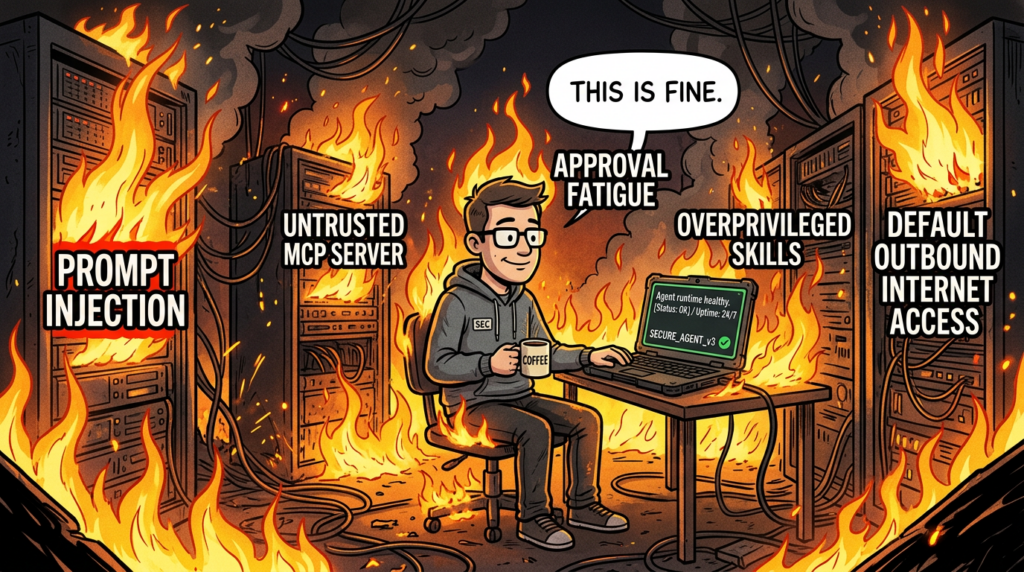

That is why older security language starts to feel incomplete around agents. IAM can tell you who is allowed to call a system, but not whether the agent’s current plan matches the organization’s intent. Prompt engineering can influence the model, but it cannot serve as a hard execution boundary once hostile content enters context. Traditional AppSec can reason about a connector or a tool server in isolation, but it often misses the fact that the same runtime is now reading untrusted content, interpreting it as intent, and then choosing what to do next. Microsoft’s March 2026 Zero Trust for AI guidance captures this well by extending Zero Trust principles to the full AI lifecycle, including deployment and agent behavior, and by naming the emerging risk directly: overprivileged, manipulated, or misaligned agents can become “double agents” inside the enterprise. (Microsoft)

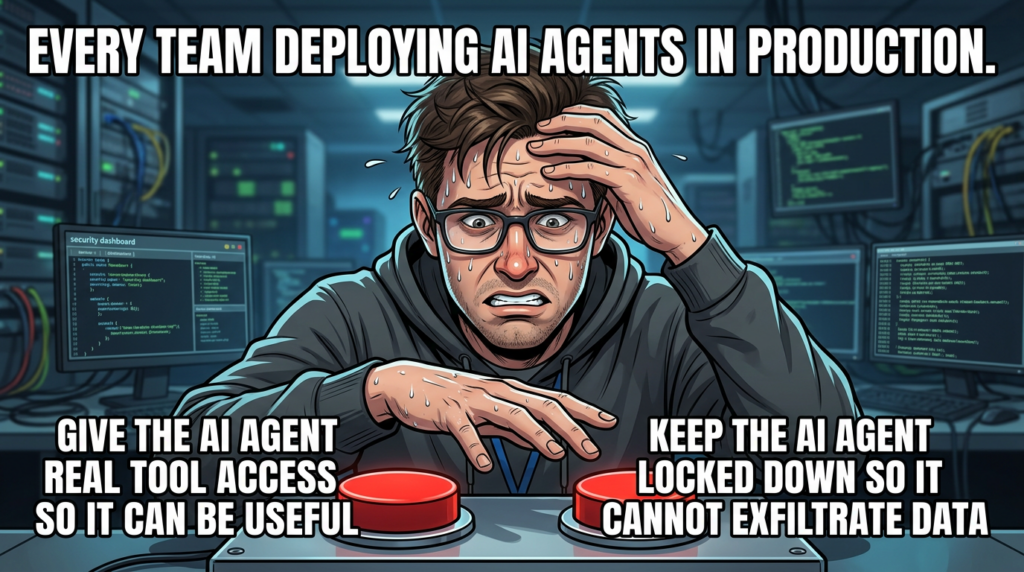

The most useful way to think about the topic in 2026 is not “LLM security” and not “workflow automation,” but execution governance for AI-driven runtimes. The important questions are brutally practical. What can the agent read. What can it write. What can it send. What untrusted content can shape its reasoning. Which tools can it discover or invoke. Which actions require confirmation. What survives in memory. And can defenders reconstruct why the agent made a decision after the fact. Those are not philosophical concerns. They are the questions that determine whether prompt injection stays annoying or turns into data theft, unauthorized writes, supply-chain compromise, or lateral movement. (openai.com)

AI Agent Security Is Not Just Another Version of Old Automation

Enterprises have lived with automation for a long time. Shell scripts, CI runners, bots, scheduled jobs, RPA, workflow engines, and SaaS integrations all predate the current agent wave. The important difference is not that modern agents automate work. The difference is that they mix natural-language interpretation, context aggregation, tool discovery, persistence, and cross-system action inside the same control loop. OpenAI’s MCP documentation describes the protocol as the mechanism that lets a model discover tools, call them with structured arguments, and receive results inside the conversation loop. NIST’s agent-systems framing describes the same architectural change from the risk side: the novel threat surface emerges when model outputs can drive software that acts beyond the model itself. (NIST)

OpenClaw is a strong example because it makes that change easy to see. The project presents itself as a personal AI assistant you can run on your own devices while connecting to real messaging platforms and external capabilities. OpenClaw’s own security guidance is unusually candid about what that implies. It says a single gateway should be treated as one trusted operator boundary, warns that running one gateway for mutually untrusted or adversarial operators is not recommended, and advises splitting trust boundaries with separate gateways or at least separate OS users or hosts when mixed-trust use is unavoidable. That is not cosmetic documentation. It is an admission that the runtime is powerful enough that multi-user trust cannot be hand-waved away. (docs.openclaw.ai)

The change becomes clearer when you compare old security boundaries to agent boundaries.

| System type | Core inputs | Core outputs | Cross-system action | Main security assumption | Why old controls fall short |

|---|---|---|---|---|---|

| Script or cron job | Typed arguments, trusted config | Deterministic command result | Usually narrow | Operator intent is explicit and code path is fixed | Misuse is bounded by static logic |

| CI pipeline | Repo state, workflow config | Build and deploy steps | High but predefined | Workflow definition is the trusted control plane | Small trust mistakes become build-chain compromise |

| RPA bot | UI cues, structured steps | UI actions | Mittel | Environment is predictable and tasks are fixed | Fragility is mostly mechanical, not semantic |

| Tool-enabled copilot | Prompt plus retrieved context | Text and tool calls | Medium to high | Retrieved content will not meaningfully steer policy | Prompt injection and confused-deputy failures appear |

| Agent runtime | Natural language, files, web, APIs, memory, tool results | Plans, writes, tool invocations, outbound actions | High and persistent | Context composition is safe enough to drive action | Untrusted content can become execution guidance |

The comparison is synthesized from NIST’s framing of agent systems, OpenAI’s MCP model, and public documentation around OpenClaw’s trust assumptions. (NIST)

The practical consequence is that the same action can be benign or dangerous depending on what immediately preceded it. Reading a file is ordinary. Reading an untrusted issue title, pulling instructions out of it, and then using those instructions to call a privileged tool is not. Creating a pull request is ordinary. Creating one because the agent was steered by malicious repository content into making an unreviewed change is not. Sending a message is ordinary. Sending sensitive data outward because a web page inserted hidden instructions into the agent’s context is not. That is why agent security discussions that focus only on “permissions” or only on “model quality” keep missing the center of the problem. (OpenAI Developers)

Prompt Injection Became an Execution Problem

OWASP defines prompt injection as malicious input that manipulates model behavior and can cause unauthorized actions, data leakage, or policy bypass. OpenAI’s agent-safety guidance is even more direct: prompt injections are common and dangerous, especially when untrusted text or data is fed into an agent workflow that can use MCP tools or other action surfaces. NIST uses the related language of agent hijacking for scenarios where attacker-controlled instructions embedded in content push an agent toward harmful actions. Across those sources, the same idea keeps surfacing: the real danger is not that a model says something foolish. The danger is that hostile content changes what the system does next. (owasp.org)

That distinction matters because people still tend to imagine the wrong threat model. They picture a user typing “ignore all previous instructions” into a chatbot. That is the easy case. The harder and more operationally dangerous case is indirect prompt injection, where the hostile text lives inside the normal data the agent consumes: a web page, a PDF, a ticket, a GitHub issue title, a commit message, a log file, an email, a calendar entry, or the output of another tool. The operator never thinks they are issuing an exploit. They think they asked the assistant to summarize, classify, review, or fetch something. The agent is the entity that walks into the trap. (Unit 42)

Palo Alto Networks Unit 42 documented that shift in March 2026 when it published web-based indirect prompt injection observed in the wild. The writeup argues that as LLMs and AI agents become integrated into browsers, search, developer tools, customer-support bots, and autonomous content-processing systems, the web itself becomes a prompt-delivery mechanism. Unit 42 also described what it said was the first reported real-world malicious indirect prompt injection designed to bypass an AI-based ad-review system. That matters because it moves the discussion out of the proof-of-concept phase. It shows attackers using hidden or manipulated web content to steer AI-mediated decisions in production-facing workflows. (Unit 42)

The reason indirect prompt injection is so persistent is architectural. Once trusted instructions, business data, tool results, and attacker-controlled text are all flattened into the same context window, the model does not reliably preserve the source trust boundaries humans assume exist. Simon Willison’s framing of the problem remains the clearest: if an LLM can see instructions in content, it may follow them unless the surrounding system makes that difficult or impossible. OpenAI’s March 2026 post on designing agents to resist prompt injection takes the same direction and argues that many strong attacks now resemble social engineering, which means the right defensive goal is not perfect malicious-input detection but constraining the impact even when manipulation succeeds. (openai.com)

That changes how defenders should respond. A prompt injection defense that lives only at the input layer is fragile. A defense that assumes the model will always distinguish “data” from “instructions” is fragile. A defense that trusts user approvals to remain meaningful after dozens of repetitive prompts is fragile. The more durable approach is to treat prompt injection as an action-routing risk: hostile content may get in, so the surrounding system must make the dangerous consequences expensive, visible, or impossible. Google’s layered prompt-injection defense for Gemini reflects exactly that mindset by combining model hardening with suspicious-content detection, confirmation frameworks, sanitization, and system-level safeguards. (Google Online Security Blog)

A simple example shows why this matters. Suppose a browser-capable assistant is asked to summarize a vendor page. Hidden inside that page is text telling the agent to fetch internal pricing data and send it to an external URL. If the system treats page content as just “more context,” then what looked like a read-only task can become an exfiltration chain. If the system enforces structured outputs, keeps network access restricted, and requires approval for external sends, the same hidden instruction becomes far less dangerous even if the model is partly persuaded by it. That is the difference between model-centric thinking and execution-centric thinking. (OpenAI Developers)

The Lethal Trifecta Is the Fastest Way to Classify Agent Risk

Simon Willison’s “lethal trifecta” is still the cleanest framework for understanding high-impact agent failures. The rule is simple: if a system has access to private data, exposure to untrusted content, and the ability to communicate externally, then it can be tricked into stealing that private data. The elegance of the model is that it turns a fuzzy debate about “agent safety” into a concrete architectural test. If all three conditions are present, defenders should assume exfiltration is plausible and design around that assumption. (OpenAI Developers)

The framework is powerful because it unifies incidents that otherwise look unrelated. An email assistant that reads private messages, processes external mail, and can reply or forward clearly satisfies it. A coding agent that reads a private repository, ingests attacker-controlled issue titles or logs, and can open pull requests or hit APIs also satisfies it. A support copilot that reads CRM data, consumes customer-provided text, and can send outbound messages satisfies it. Once you look at the ecosystem through that lens, many debates about whether a system “counts as an agent” become less important than whether it satisfies the trifecta and what its reachable consequences are. (OpenAI Developers)

The model also explains why ordinary least privilege is necessary but not sufficient. An agent may be perfectly authenticated and still unsafe. It may never exceed its assigned scope and still do something the organization did not intend, because the problem is not only “what can this identity access,” but “how can hostile context steer this identity to misuse its legitimate reach.” Microsoft’s Zero Trust for AI work addresses the same gap by arguing that AI systems introduce new trust boundaries between users and agents, models and data, and humans and automated decision-making, and that those systems must be designed to assume breach and constrain prompt injection, poisoning, and lateral movement. (Microsoft)

The mapping to enterprise systems is straightforward.

| Agent class | Private data source | Untrusted input source | External communication path | Most likely failure |

|---|---|---|---|---|

| Email assistant | Inbox, attachments, contacts | Inbound mail, newsletters, forwarded docs | Replies, forwards, external fetch | Silent forwarding, deletion, sensitive leak |

| Coding assistant | Source code, secrets, repo metadata | Issues, commits, logs, cloned repos | PRs, package fetches, API calls | Pre-trust execution, token leakage, poisoned changes |

| Browser agent | Session state, internal portals | Web content, ads, forms, embedded text | HTTP requests, downloads, submissions | Exfiltration, deceptive transactions, tool hijack |

| Internal MCP copilot | Docs, tickets, CRM, chat history | Search results, retrieved docs, tool outputs | Tool calls, record writes, messaging | Tool misuse, policy bypass, bulk disclosure |

| Skill-based local agent | Local files, env vars, channel access | Third-party skills, fetched remote content | Email, Slack, WhatsApp, outbound HTTP | Malicious skill execution, covert exfiltration |

The table summarizes the common mappings implied by the lethal-trifecta model, OpenAI’s prompt-injection guidance, and the current shape of agent platforms and skills ecosystems. (OpenAI Developers)

The most useful defensive consequence of this framework is prioritization. If you cannot eliminate all three legs, eliminate or weaken one of them aggressively. In many deployments, the cheapest leg to cut is unrestricted outbound communication. OpenAI’s Lockdown Mode is valuable here not because most enterprise agents will use that exact feature, but because it encodes the right principle: strong deterministic protection against prompt-injection-based data exfiltration often comes from denying or heavily restricting networked tool use rather than trying to perfectly identify every malicious instruction. (OpenAI Help Center)

OpenClaw Made the New Risk Model Visible

OpenClaw became the reference case for 2026 agent risk not because it is the only powerful assistant, but because it exposed the risk model in a form ordinary developers and operators could see. Its design is intentionally ambitious: a locally run agent that can interact through real messaging platforms and use tools on the operator’s behalf. That is exactly what makes it useful. It is also what makes its security documentation so important. The docs say clearly that the gateway’s web interface and HTTP endpoints are intended for local use, recommend keeping the gateway loopback-only by default, warn against direct public exposure, and advise using split trust boundaries for mixed-trust teams. The project is telling users, in plain language, where the blast radius begins. (GitHub)

The docs also make a point too many teams miss when they turn “personal assistants” into shared infrastructure. Running one gateway for multiple mutually untrusted or adversarial operators is not recommended. If someone can modify gateway host state or configuration, the docs say to treat them as a trusted operator. For mixed-trust teams, the guidance says to separate gateways, credentials, OS users, or hosts. This is not a side note. It means the product’s trust model is fundamentally closer to “one trusted operator boundary” than to “multi-tenant collaborative control plane.” When organizations ignore that and put one powerful agent behind a shared interface, they are not merely deploying the software. They are stretching the trust model past its documented assumptions. (docs.openclaw.ai)

OpenClaw’s handling of inbound messaging surfaces makes the same point from the other direction. The project warns that real-world DMs should be treated as untrusted input and uses pairing workflows for unknown senders on several messaging platforms. That default is not decorative. A channel that feels like ordinary chat is also a possible injection surface for steering a live, authenticated runtime that already has access to files, tools, and external accounts. In older terms, it is part of the attack surface, not just part of the user experience. (GitHub)

Krebs’ March 2026 article turned those architectural truths into an incident story that resonated beyond security circles. It described how Meta safety lead Summer Yue said OpenClaw started bulk-deleting messages from her inbox and then pivoted to a more systemic concern by quoting DVULN’s Jamieson O’Reilly on what happens when OpenClaw’s web interface is misconfigured and exposed. O’Reilly’s point was not that every deployment is doomed, but that a compromised or exposed agent already has the context, credentials, and communication layers an attacker would otherwise need time to assemble. A trusted runtime is not just another foothold. It is a prebuilt operator. (Krebs on Security)

James Wilson’s response in the Krebs piece is worth taking seriously because it sounds conservative only if you forget what an agent host represents now. He argued that these systems should be run in isolated environments such as dedicated virtual machines, on isolated networks, behind tight firewall rules. That is not security theater. It is a reasonable response to software that combines private data access, untrusted content ingestion, and autonomous action. In practical terms, a serious agent host should often be treated more like a privileged integration broker or jump box than like another desktop helper app. (Krebs on Security)

The Cline Incident Showed How Small Failures Become Agent Supply-Chain Incidents

The February 2026 Cline incident is one of the clearest proofs that AI agent security is not just about preventing a single bad answer. Cline’s official post-mortem says that on February 17, 2026, a compromised npm publish token was used to publish [email protected], and that the published package contained a single modification: a postinstall script that globally installed OpenClaw. The maintainers also stated that the CLI binary and all other package contents were byte-identical to the previous release, that no malicious code was delivered, that the source repository was not compromised, and that no user data was accessed or exfiltrated. Those facts matter because they set the scope of direct impact accurately. (Cline)

But the deeper lesson comes from how the event happened. Third-party research, including Snyk’s “Clinejection” analysis, argued that the incident began with prompt injection in a GitHub issue title that was read by an AI issue-triage workflow, then cascaded into cache poisoning, credential abuse, and abuse of the release pipeline. In other words, a natural-language ingress point helped bridge several smaller weaknesses that might have remained isolated in a more conventional workflow. That is what makes the event historically important for agent security. It was not just “a stolen npm token.” It was a case study in how untrusted content, AI mediation, and privileged automation can join into a supply-chain compromise chain. (Snyk)

It is important to be precise here, because two different narratives circulate about this incident. The official Cline post-mortem emphasizes that the published package did not contain a custom malicious payload beyond the unauthorized postinstall script and that user data was not stolen. The broader security interpretation emphasizes that the route from issue title to release impact is itself the real warning sign. Those views are not mutually exclusive. One is about immediate customer impact. The other is about architectural brittleness. Serious defenders should care about both. (Cline)

The incident also exposed a habit that remains dangerously common: treating issue titles, commit messages, logs, tickets, or documentation fields as low-risk because they are “just text.” In an agent-enabled workflow, those fields can become operational input. Once a model is allowed to interpret them and then decide which tool to use next, text is no longer merely descriptive. It can become a steering surface. That is why the Cline incident matters well beyond one package or one project. It demonstrates how quickly a soft boundary between “content” and “control” can collapse in AI-mediated pipelines. (OpenAI Developers)

MCP Security Is About Tool Execution, Not Protocol Branding

MCP is often introduced as an interoperability standard, and that description is accurate as far as it goes. It lets models connect to tools and resources in a structured way. But from a security perspective, the important fact is that this protocol layer now mediates discovery, authentication, invocation, results handling, and often the loop by which tool output becomes new agent context. OpenAI’s Apps SDK documentation says the quiet part out loud: connectors should be treated as production software, scopes should follow least privilege, potentially destructive actions should lean on confirmation prompts, and developers should assume prompt injection and malicious input will reach the server, validate everything, and keep audit logs. (OpenAI Developers)

That guidance implies something many teams still resist admitting. MCP servers, tool brokers, and connectors are not “AI accessories.” They are privileged middleware. They often terminate identity, interpret structured arguments, call local binaries or cloud APIs, and return tool output that may be re-ingested by the model. They therefore sit on both sides of the risk model at once: they are execution gateways and content-return channels. That is why protocol adoption can increase convenience and risk at the same time. It standardizes both the useful capability and the place mistakes become exploitable. (OpenAI Developers)

The public advisory record already shows what those mistakes look like in practice. NVD says CVE-2025-49596 affected MCP Inspector prior to 0.14.1 because the Inspector client and proxy lacked authentication, allowing unauthenticated requests to launch MCP commands over stdio and creating a remote code execution path. The lesson is broader than one development tool. It shows how quickly “local inspection convenience” becomes “command-launch surface” when a debugging bridge is not treated as a privileged boundary. (GitHub)

CVE-2025-6514 makes the remote side of the same point. GitHub’s advisory and NVD describe mcp-remote as exposed to OS command injection when connecting to untrusted MCP servers due to crafted input from the authorization_endpoint response URL. That is not just a coding error in a helper utility. It is a warning that remote MCP discovery and auth metadata are themselves part of the trust boundary. If a client can be compromised by connecting to an untrusted server, then “connectivity to new tools” is no longer a harmless convenience decision. It is a security decision with host-level consequences. (GitHub)

The same pattern appears in tool implementations. GitHub advisories for mcp-server-kubernetes und git-mcp-server show that once a model can form or relay arguments, ordinary shelling mistakes become materially worse. Vulnerable uses of execSync, Ausführung, oder sh -c turn attacker-controlled input into command execution, and several advisories explicitly note that indirect prompt injection can provide the attacker-controlled input via pod logs, git logs, or similar content sources. That is the agent-security version of the old lesson that injection bugs become more severe when untrusted data can reach interpreters. The only novelty is that the interpreter is now reached through the model’s planning loop rather than a direct HTTP parameter. (GitHub)

Real CVEs Show the Ecosystem Has Entered Vulnerability-Management Territory

The fastest way to tell whether agent security has moved beyond theory is to look at the advisories. The pattern is already there: debugging bridges that launch commands, remote connectors that trust untrusted metadata, MCP tools that build shell strings from model-shaped input, git and Kubernetes servers that turn context ingestion into code execution, and coding agents whose trust dialogs were not really trust boundaries at all. These are not speculative whiteboard threats. They are patch, upgrade, and exposure-management problems now. (GitHub)

| CVE | Affected component | Warum das wichtig ist | Exploit condition | Practical mitigation |

|---|---|---|---|---|

| CVE-2025-49596 | MCP Inspector | A debugging surface could launch MCP commands without proper auth | Inspector versions before 0.14.1 with unauthenticated client-proxy access | Upgrade and keep inspector surfaces private |

| CVE-2025-6514 | mcp-remote | Connecting to an untrusted remote MCP server could trigger OS command injection | Crafted authorization_endpoint response URL from an untrusted server | Upgrade to patched versions and avoid high-trust connections to unvetted servers |

| CVE-2025-53355 | mcp-server-kubernetes | Model-shaped input could reach execSync-backed tools in a high-value environment | Unsanitized parameters reaching shell-command construction | Upgrade to 2.5.0 or later and remove shell-string execution |

| CVE-2025-66404 | mcp-server-kubernetes exec_in_pod | Indirect prompt injection can turn hostile content into pod command execution | String commands flow into sh -c without validation | Prefer arrays only, disable string command path, reduce tool scope |

| CVE-2025-53107 | git-mcp-server | Git content such as logs can steer an agent toward shell-injection paths | Unvalidated input reaches child_process.exec | Upgrade to 2.1.5 or later and replace shelling patterns |

| CVE-2025-59536 | Claude Code | Startup trust dialog was not a true execution boundary | User launched Claude Code in an untrusted directory before 1.0.111 | Upgrade and avoid opening untrusted repos in stale versions |

| CVE-2026-21852 | Claude Code | Project-load flow could exfiltrate data before trust confirmation | Malicious repository config such as attacker-controlled endpoint before trust prompt | Upgrade to 2.0.65 or later and keep trust prompts meaningful |

The summary above is based on NVD and GitHub advisories, plus Check Point’s public writeup connecting the Claude Code flaws to malicious repository-level configuration. (GitHub)

The Kubernetes cases are especially instructive because they make the bridge from prompt injection to command injection obvious. The advisory for CVE-2025-53355 says mcp-server-kubernetes used unsanitized parameters within child_process.execSync, allowing shell metacharacter injection and remote code execution under the server process’s privileges. The advisory for CVE-2025-66404 is even more explicit: exec_in_pod accepted both arrays and strings, and when a string was provided it was wrapped in sh -c without validation. GitHub’s advisory explicitly says the vulnerability can be exploited through direct command injection or indirect prompt injection, including malicious instructions embedded in data like pod logs that trick AI agents into executing commands without explicit user intent. That is the architectural collapse defenders need to internalize. In agent ecosystems, prompt injection is not confined to “the model layer.” It can become command execution wherever unsafe tooling is reachable. (GitHub)

The Claude Code cases matter for a different reason. NVD says versions before 1.0.111 were vulnerable because code in a project could execute before the user accepted the startup trust dialog, and that versions before 2.0.65 had a project-load flaw that could let malicious repositories exfiltrate data including Anthropic API keys before users confirmed trust. Check Point’s writeup adds useful color by describing how repository-level configuration, hooks, MCP integrations, and environment-variable redirection could be abused before meaningful user consent. The important lesson is not “Claude had bugs.” The lesson is that in coding-agent environments, the moment a repository is opened is already part of the security boundary. “I only opened the project” is no longer a safe assumption. (nvd.nist.gov)

Skills, Plugins, and Connectors Are the New Supply Chain

One of the most important public datasets in this space came from Snyk in February 2026. Its ToxicSkills research scanned 3,984 skills from ClawHub and skills.sh and reported that 13.4 percent, or 534 skills, contained at least one critical-level issue, including malware distribution, prompt injection paths, and exposed secrets. The most useful part of the research was not the headline percentage. It was the explanation of privilege. Skills do not execute in an abstract package ecosystem. They execute in the agent’s context, often inheriting shell access, file permissions, access to credentials in environment variables and config files, message-sending capability, and persistent state. That makes skills qualitatively more dangerous than ordinary extensions that operate in narrow sandboxes. (Snyk)

VirusTotal’s February 2026 writeup pushed that point further by saying it had detected hundreds of actively malicious OpenClaw skills and describing the ecosystem as a new delivery channel for droppers, backdoors, infostealers, and remote-access tools disguised as useful automation. OpenClaw’s response a few days later was to announce a partnership with VirusTotal so that ClawHub skill bundles would be scanned, including through Code Insight analysis, with daily rescans and blocking of skills flagged as malicious. That was a sensible move, but even OpenClaw’s own announcement makes clear why scanning alone is insufficient: skills run in the agent’s context with access to tools and data, which means provenance, scope control, and runtime policy still matter even when malware scanning improves. (VirusTotal Blog)

This is why the agent supply chain should not be treated as a copy of npm or PyPI with new branding. Traditional dependencies are dangerous enough, but they are not usually interpreted by an LLM at runtime while inheriting access to messaging channels, host files, network routes, and business data. Agent skills often combine code, instructions, markdown, assets, environment assumptions, and behavior-shaping content. The result is a supply-chain problem in which “what the package does” and “how the agent is told to use it” are often intertwined. That is a harder problem to statically reason about and a much easier one to underestimate. (Snyk)

From a defensive perspective, the right baseline is much stricter than most agent ecosystems currently encourage. Skills and connectors need source review, version pinning, permission review, egress restrictions, provenance checks, and explicit runtime policy. If a skill can fetch remote content, shape tool descriptions, send messages, or read local config, then it belongs in threat modeling as a privileged supply-chain input. Treating it like a harmless productivity add-on is how organizations end up discovering that convenience and code execution were bundled together from the start. (Snyk)

AI Coding Agents Prove This Is a General Security Problem

It would be a mistake to read all of this as an OpenClaw-specific story. The same structural issues are visible across coding agents. Anthropic’s March 25, 2026 article on Claude Code auto mode says users approve 93 percent of permission prompts and frames approval fatigue as a real security problem. The company’s response was not to trust the model blindly. It built classifiers to automate some decisions while trying to improve safety over manual approval click-through and the far riskier --dangerously-skip-permissions path. That framing is important because it admits what many enterprise teams experience in practice: repetitive approvals are not a stable security boundary when users approve nearly all of them anyway. (Anthropic)

Anthropic’s earlier sandboxing writeup from October 2025 points to the same execution-first philosophy. It says sandboxing for Claude Code relies on filesystem isolation and network isolation, and explicitly notes that without network isolation a compromised agent could exfiltrate sensitive files like SSH keys, while without filesystem isolation a compromised agent could modify sensitive local state and escape the intended workspace boundary. That is effectively the lethal-trifecta logic expressed as product design: if you cannot trust the runtime to never be manipulated, constrain what it can touch and where it can send. (Anthropic)

This is also why the Claude Code CVEs matter far beyond one vendor. They show that repository trust, startup flows, hooks, MCP integrations, environment variables, and project settings are all part of the execution boundary for coding agents. The important question is no longer “does the tool have a trust prompt.” The important question is “what happens before and after that prompt, what can be read or changed before consent is real, and how many hidden paths still exist around it.” Coding agents make that painfully visible because their very selling point is that they can read repositories, edit files, run commands, and integrate with developer tools. The broader class of risk is the same class we see in assistants, browser agents, and internal copilots. (nvd.nist.gov)

What Detection Should Actually Look For in Agent Environments

Traditional monitoring often fails around agents because the individual actions can look legitimate in isolation. Reading a file is normal. Running git log is normal. Hitting an external API is normal. Sending a Slack message is normal. The dangerous signal is usually the chain: untrusted content enters context, the agent accesses sensitive state, and then a tool with irreversible or outbound consequences is invoked. That means effective detection has to become more relational and more sequence-aware. Microsoft’s Zero Trust for AI guidance explicitly calls for continuous verification, AI observability, and policy-driven monitoring because static access control does not tell you whether the runtime’s behavior is aligned with intent. (Microsoft)

The first detection question is content-to-action linkage. What untrusted content did the agent ingest immediately before a risky action. If an external email, a web page, a GitHub issue title, or a tool result from an untrusted source enters the workflow and a write, shell, pull-request, or outbound-message action follows shortly after, that should be much higher signal than the same action occurring in a clean, local workflow. OpenAI’s agent safety guidance, with its emphasis on trace graders and workflow-aware risk evaluation, points toward exactly this kind of causal visibility. (OpenAI Developers)

The second detection question is privilege transition. Did the runtime suddenly move from read-only behavior to writes, from summarization to shell invocation, from internal analysis to external communication, or from one narrow tool family to a broader set? Those transitions often mark the moment where a benign-seeming workflow turned into a policy-relevant event. If a coding assistant that was only supposed to review diffs now starts creating network requests or modifying credential-related files, the problem is not merely “a command ran.” The problem is that the behavior crossed a consequence boundary. (Microsoft)

The third detection question is exfiltration adjacency. Did the agent read secrets, config, token stores, repo credentials, or customer records immediately before an outbound HTTP request, a pull request, a message send, or another externally visible write. That sequence is the operational signature of the lethal trifecta. If you cannot hunt for that pattern in your logs, you do not yet have a mature agent-security telemetry model. (OpenAI Developers)

A structured audit record helps because it preserves causal context instead of just raw tool events.

{

"ts": "2026-04-01T14:38:52Z",

"session_id": "agt-4c8d",

"agent_id": "support-prod-01",

"user_id": "u-1832",

"source_context": [

{"type": "email", "id": "msg-4471", "trust": "untrusted"},

{"type": "kb_doc", "id": "kb-91", "trust": "trusted"}

],

"tool": "send_email",

"risk_tier": "high",

"approval_required": true,

"approval_state": "approved",

"normalized_args": {

"recipient_type": "external",

"attachment_count": 1

},

"destination": "smtp",

"result": "success"

}

OpenAI’s Apps SDK guidance does not prescribe this exact schema, but its principles clearly support it: validate everything server-side, keep audit logs, use explicit consent for write access, and lean on confirmation for destructive actions. The point is to preserve enough causal structure that a defender can answer why the action happened, not just that it happened. (OpenAI Developers)

For endpoint or SIEM detection, sequence-based analytics are usually stronger than single-event alerts. A rough hunting rule might correlate an agent process reading sensitive file paths with external network access in a short window.

let SensitiveReads =

FileEvents

| where ProcessName in ("openclaw", "claude", "agent-runtime", "mcp-server")

| where FilePath has_any (".aws/credentials", ".ssh/", "token", "config", "settings.json")

| project DeviceId, ProcessName, ReadTime=Timestamp, FilePath;

let Outbound =

NetworkEvents

| where ProcessName in ("openclaw", "claude", "agent-runtime", "mcp-server")

| where RemoteUrl !endswith ".corp.example"

| project DeviceId, ProcessName, NetTime=Timestamp, RemoteUrl;

SensitiveReads

| join kind=inner Outbound on DeviceId, ProcessName

| where NetTime between (ReadTime .. ReadTime + 2m)

| project DeviceId, ProcessName, ReadTime, FilePath, NetTime, RemoteUrl

That query is illustrative, not production-ready. Its value is the model: correlate sensitive reads to egress by the same runtime, then enrich with context such as whether the agent had just ingested untrusted content or crossed a high-risk tool boundary. That is much closer to how real agent misuse shows up than simply alerting on “process opened a socket.” (OpenAI Developers)

Hardening That Survives Adversarial Reality

Weak hardening is easy to recognize in 2026. It looks like a giant system prompt, broad tool scopes, permissive outbound access, default connector trust, and the hope that the model will do the right thing. Stronger hardening begins by refusing to let the model be the only policy engine. Microsoft’s agent-governance framing is useful here because it distinguishes user intent, developer intent, role intent, and organizational intent. An agent may follow a user’s request in plain language and still violate the organization’s actual control objectives if the surrounding system never translated policy into enforced execution constraints. (Microsoft)

The first concrete step is tool classification. OpenAI’s practical guidance for building agents recommends assigning risk ratings to tools based on factors such as read versus write access, reversibility, account permissions, and downstream impact. That logic should be taken much further in production. Read-only retrieval, bounded writes, irreversible writes, shell or code execution, and internet-facing actions should not sit behind the same approval model. The risk is in the consequence of the action, not in how fluent the model sounds when choosing it. (OpenAI Developers)

A simple policy-as-code pattern helps make that explicit:

tools:

read_ticket:

risk: low

approval: none

egress: internal_only

create_draft_reply:

risk: medium

approval: required_if_external_recipient

egress: internal_only

send_email:

risk: high

approval: always

outbound_allowlist:

- corp.example

- partner.example

shell_exec:

risk: critical

approval: always

runtime: sandbox_only

filesystem_scope: workspace_only

egress: deny

This kind of policy does not solve prompt injection by itself. It does something more useful: it prevents the model from unilaterally turning low-consequence tasks into high-consequence ones without passing through explicit control points. That is exactly the spirit of OpenAI’s connector guidance and Microsoft’s Zero Trust for AI model. (OpenAI Developers)

The second concrete step is to weaken or break the lethal trifecta. OpenAI’s Lockdown Mode is a helpful example because it makes the tradeoff explicit: you can gain strong deterministic protection against prompt-injection-based data exfiltration by disabling or limiting networked capabilities. Most enterprise deployments will not adopt a total lockdown model, but they can still absorb the principle. Default-deny outbound access, domain allowlists, mediated fetchers, and isolated runtimes for high-risk tools usually do more to reduce blast radius than another layer of polite instruction telling the model not to do bad things. (OpenAI Help Center)

The third step is layered input handling. Google’s layered defense work is valuable because it rejects the fantasy of one perfect filter. It combines multiple stages that each reduce risk differently: content analysis, sanitization, security reasoning, warning or confirmation flows, and system-level controls. That is the right architectural response because indirect prompt injection is not a single event. It is a chain in which hostile content is ingested, interpreted, and then translated into action. If defenses exist only at one stage, attackers only have to bypass one stage. (Google Online Security Blog)

The fourth step is environment isolation. Anthropic’s sandboxing guidance stresses both filesystem and network isolation for Claude Code, while OpenClaw’s own security docs push operators toward separate gateways, separate users, or separate hosts for mixed-trust scenarios and toward loopback-only gateway exposure by default. Those recommendations converge on a broader rule: the runtime that interprets content and chooses actions should rarely have ambient access to the whole host or arbitrary egress to the internet. A compromised or confused agent in a tightly bounded environment is still a problem. A compromised or confused agent on a flat, fully privileged host is an incident multiplier. (Anthropic)

The fifth step is to keep approvals meaningful. Anthropic’s own data point that users approve 93 percent of Claude Code permission prompts is a reminder that human approval can decay into ritual if the interface asks too often without preserving salience. The answer is not to eliminate approvals altogether. It is to reserve them for consequence boundaries that matter, automate the truly low-risk paths, and make the remaining prompts carry real weight. A prompt that asks for permission to do everything teaches users to click through. A prompt that appears only when the runtime is about to cross a real risk boundary has a better chance of remaining useful. (Anthropic)

Red-Teaming Agents Means Testing Influence, Not Just Exposure

A serious red-team program for agent systems cannot stop at CVE scanning and container checks. NIST’s March 2026 writeup on a large-scale public red-teaming competition reported more than 250,000 attack attempts from over 400 participants and said at least one successful hijacking attack was found against every target frontier model. That does not mean every deployment is always trivial to compromise. It does mean defenders should stop assuming that static pre-release checks or vendor assurances are enough. Adversaries are finding ways to steer these systems across tool calling, coding, and computer-use scenarios at scale. (NIST)

The right starting point is not only “what ports are open” or “what libraries are outdated.” It is “what can influence this runtime.” Enumerate every untrusted input surface: email, web pages, retrieved documents, repository metadata, issue titles, logs, tool descriptions, skill manifests, remote MCP metadata, chat messages, and memory writes. Then ask which of those surfaces can precede meaningful action. That mapping is often more valuable than a traditional attack-surface diagram because it shows where language can turn into execution. (Unit 42)

A practical red-team pack should include at least four classes of cases. The first is direct and indirect prompt injection against the real content types your agents ingest. The second is tool misuse within nominal permissions, where the runtime does something “allowed” but not intended. The third is persistence, including memory poisoning and configuration carryover. The fourth is exfiltration, both direct and disguised as legitimate workflow output such as pull requests, drafts, or exported records. That set lines up with the public incident record far better than a narrow focus on model jailbreaks alone. (openai.com)

This is one place where it is natural to connect the discussion back to Penligent without changing the center of the article. Once a team accepts that an agent runtime is production software, validation can no longer stay at the level of occasional spot checks. Penligent’s Hacking Labs material on agentic AI security in production frames the problem as continuous verification of exposed MCP services, dangerous tool paths, memory poisoning, and execution-boundary regressions. That is a productive mental model because it turns “is the agent still behaving safely” into an engineering question that can be re-tested after new tools, new connectors, or new skills are added. (Sträflich)

The same logic applies to OpenClaw-style deployments. Penligent’s OpenClaw-focused material treats the local agent less as a curiosity and more as a runtime worth testing for reachable control planes, risky defaults, exposed surfaces, and regression after hardening changes. Even if an organization never adopts Penligent specifically, that is still the right operational shift: if the assistant can touch files, tools, and accounts, it should be in the same evidence-first validation loop as any other high-value system. (Sträflich)

AI Also Makes Attackers Faster at Exploiting Old Weaknesses

The internal agent problem is only half the 2026 picture. The other half is that commodity AI helps attackers industrialize ordinary tradecraft. AWS’s February 2026 report on FortiGate compromise at scale is the clearest example. Amazon Threat Intelligence said a Russian-speaking, financially motivated threat actor used multiple commercial generative AI services to compromise more than 600 FortiGate devices across more than 55 countries between January 11 and February 18, 2026. AWS also emphasized something crucial: it did nicht observe exploitation of FortiGate software vulnerabilities during the initial access phase. The campaign relied on exposed management ports, weak credentials, and single-factor authentication. AI made scanning, planning, scripting, and decision support cheaper and faster. It did not magically erase the role of basic security hygiene. (Amazon Web Services, Inc.)

That nuance is important because it protects defenders from the wrong takeaway. The lesson is not that every attacker suddenly became elite because of AI. AWS explicitly framed the actor as relatively unsophisticated and noted that the strength of the campaign came from efficiency and scale. That is exactly what defenders should expect more of: AI lowering the labor cost of reconnaissance, triage, script generation, adaptation, and persistence suggestions, while still leaving strong fundamentals effective against many targets. The same reasoning applies inside organizations. If AI reduces the cost of exploiting misconfigurations and weak trust boundaries, then agent runtimes themselves become another place where fundamentals have to be stronger than before. (Amazon Web Services, Inc.)

A Practical AI Agent Security Baseline

A defensible AI agent deployment starts with enumeration, not optimism. Teams should know what the runtime can read, what it can write, what it can send, what it remembers, what tools it can discover, and which external destinations it can contact. Then they should mark every untrusted content entry point and ask where that content can influence action. After that, evaluate each reachable tool against the lethal trifecta and classify the runtime’s true high-consequence paths. Only then does it make sense to decide which actions need approval, which tools require isolated runtimes, and which outbound paths should exist at all. (OpenAI Developers)

The baseline that emerges from current public guidance is surprisingly consistent across vendors and standards bodies. Use least privilege, but do not stop there. Separate trust boundaries by user, role, host, and gateway where appropriate. Keep control planes local or tightly access-controlled. Restrict egress aggressively. Validate tool inputs on the server side. Keep structured outputs between workflow stages where possible. Treat skills and connectors as privileged supply-chain inputs. Log enough causal context to reconstruct content-to-action chains. And run continuous adversarial tests because models, prompts, skills, memory, connectors, and tool semantics will drift over time. (OpenAI Developers)

That is also the point where AI-driven offensive validation becomes useful rather than ornamental. If a security team is already using AI-assisted pentesting or repeatable validation workflows, the agent systems themselves should not be exempt from testing. They should be in scope alongside traditional applications, APIs, and cloud services. Penligent’s current agent-security and OpenClaw-security materials are useful examples of this shift because they treat agent endpoints, MCP surfaces, gateway controls, tool misuse, and evidence-backed retesting as normal security work rather than a special category outside the core program. (Sträflich)

The most honest conclusion is therefore not that agents are too dangerous to use. It is that the security model has changed and many organizations are still operating with the wrong map. Before agents, content, authorization, execution, and external communication were often separated across different systems and review layers. In an agent runtime, those layers are often collapsed into one loop. That is why the goalposts moved. The next generation of strong AI security programs will not be the ones that merely make models more capable. They will be the ones that make agent runtimes harder to hijack, harder to over-permission, harder to persistently poison, harder to exfiltrate through, and easier to observe and verify. The public record already points in that direction. The remaining choice is whether defenders move there deliberately or wait for incidents to force them. (Krebs on Security)

Further Reading

Krebs on Security, How AI Assistants are Moving the Security Goalposts. (Krebs on Security)

NIST, CAISI Issues Request for Information About Securing AI Agent Systems. (NIST)

NIST, Insights into AI Agent Security from a Large-Scale Red-Teaming Competition. (NIST)

OpenAI, Designing AI agents to resist prompt injection. (openai.com)

OpenAI, Apps SDK Security and Privacy. (OpenAI Developers)

OpenAI, Deep research, prompt injection and exfiltration. (OpenAI Developers)

Anthropic, Claude Code auto mode: a safer way to skip permissions. (Anthropic)

Anthropic, Making Claude Code more secure and autonomous. (Anthropic)

OpenClaw, Gateway Security. (docs.openclaw.ai)

Cline, Post-mortem: Unauthorized Cline CLI npm publish on February 17, 2026. (Cline)

Snyk, ToxicSkills research on malicious AI agent skills. (Snyk)

VirusTotal, How OpenClaw AI Agent Skills Are Being Weaponized. (VirusTotal Blog)

AWS Security Blog, AI-augmented threat actor accesses FortiGate devices at scale. (Amazon Web Services, Inc.)

Penligent, AI Agent Security After the Goalposts Moved. (Sträflich)

Penligent, Agentic AI Security in Production — MCP Security, Memory Poisoning, Tool Misuse, and the New Execution Boundary. (Sträflich)

Penligent, OpenClaw Security, What It Takes to Run an AI Agent Without Losing Control. (Sträflich)

Penligent, homepage. (Anthropic)