On March 30, 2026, Railway disclosed an incident in which a CDN configuration update accidentally enabled caching for a small subset of domains that had not enabled Railway’s CDN features. Railway’s postmortem says the impact window ran from 10:42 UTC to 11:34 UTC, about 52 minutes, and that cached responses may have been served to users other than the original requester. Railway estimated the affected set at roughly 0.05 percent of domains on Railway with CDN disabled. Most importantly, Railway stated that Set-Cookie headers were not cached, but that most GET responses without explicit cache headers were cached by default during that window. That combination is the whole story. This was not a simple cookie-sharing accident. It was a shared-cache boundary failure that could cause authenticated response bodies to be reused across users. (Railway Blog)

That distinction matters because a lot of teams still treat cache safety as a side effect of session design. If the cookie is session-bound, if the app is dynamic, if the route is behind login, the response must be safe from reuse. That assumption is wrong often enough to be dangerous. RFC 9111 makes clear that caching is governed by cache semantics, not by developer intuition, and it also warns that implementation and deployment flaws can lead caches to store sensitive information and expose it to unauthorized parties. In the same security considerations section, the RFC explicitly notes that the presence of Set-Cookie does not by itself inhibit caching. (RFC-Editor)

The Railway incident is worth studying for a second reason. It is easy to read it as an isolated platform outage and move on. It is better understood as a concentrated example of a broader failure mode that has shown up repeatedly in web infrastructure and modern frameworks: a response that developers believe is single-user or non-cacheable becomes eligible for shared caching because of a platform rule, a framework bug, a missing directive, or a cache-key mismatch. Railway’s case was platform-side accidental enablement. Other cases come from application code, framework internals, edge rules, path normalization differences, or response metadata that quietly turns a dynamic page into a cache object. The result is the same class of damage: content intended for one request context shows up in another. (Railway Blog)

Railway CDN caching incident, the official timeline and impact

Railway’s official postmortem is straightforward about the timeline. At 10:42 UTC, an engineer deployed a CDN provider configuration update. That change accidentally enabled caching for some domains that had CDN disabled. At 11:14 UTC, Railway identified the issue through internal signals and user reports. At 11:34 UTC, Railway rolled the change back and globally purged the cache. Railway later said affected users would be notified by email and that it would add more testing and shard CDN rollouts over hours instead of minutes. (Railway Blog)

The blast radius description is precise enough to be useful and narrow enough to avoid sensationalism. Railway did not say the entire platform was serving cross-user content. It said the affected population was approximately 0.05 percent of Railway domains with CDN disabled. That wording matters because it narrows the incident to a subset of domains that had not opted into CDN behavior. The bug was not “CDNs are unsafe” in the abstract. The bug was that the platform accidentally moved a non-CDN set of domains into CDN caching behavior. In other words, the incident broke an operational promise about which traffic classes were supposed to be cache-managed in the first place. (Railway Blog)

Railway also drew an important boundary around what happened at the header level. Its notice says that origin Cache-Control directives were respected where they were present, and that Set-Cookie response headers were not cached. But it also says most GET responses without explicit cache headers were cached by default during the incident window. That means the risk profile depended heavily on which authenticated GET routes in affected applications returned personalized HTML or JSON without sending clear directives like private or no-store. A public page with no cache directive is a performance choice. A personalized dashboard with no cache directive is an incident waiting for the wrong edge rule. (Railway Blog)

Railway did not publish a route-by-route breakdown, specific leaked data classes, or evidence of follow-on abuse. That is the right place to stop. Beyond the official facts, anything more specific about what any particular customer exposed would be speculation. A serious analysis can still explain the mechanism and the likely risk envelope without inventing details Railway did not provide. That is what defenders need anyway: not gossip, but a model they can apply to their own stack. (Railway Blog)

Railway’s own public CDN documentation also adds useful context. Its CDN guide uses Amazon CloudFront and includes a dynamic route example that applies an explicit cache-control parameter and describes CloudFront revalidating after 60 seconds. That does not prove anything about the incident root cause beyond what Railway already disclosed, but it does reinforce the bigger lesson: explicit cache directives are part of the product’s normal operating model, not an obscure edge case. When those directives are missing, platform behavior matters more than most teams assume. (Railway Docs)

Shared cache semantics, not browser quirks, are the center of this incident

The easiest way to misunderstand this incident is to mentally file it under browser caching. Browser caching is real, and OWASP’s testing guide has long recommended verifying that pages containing sensitive information instruct the browser not to retain them, especially after logout. But browser cache weakness and shared-cache leakage are different problems. Browser cache weakness is usually a same-user problem, such as sensitive pages remaining available in history or back-button navigation. Railway’s incident was a shared-cache problem, which means content generated in one user context could be reused across users at the edge. (OWASP Foundation)

RFC 9111 defines a shared cache as a cache used by more than one user, typically an intermediary such as a proxy or CDN. That definition is exactly why this class of issue matters so much more than many teams expect. If a browser caches a page that should not have been cached, the user who loaded it may see stale or sensitive content later. If a shared cache stores a page that should not have been stored, the platform may serve one user’s representation to a different user. Those are not the same severity tier. They are not even the same trust boundary. (RFC-Editor)

The RFC also draws a sharp line around privacy-sensitive caching. private tells shared caches that a response is intended for a single user and must not be stored by a shared cache. no-store tells caches not to store the response at all. public explicitly allows shared caching. s-maxage sets freshness rules for shared caches and can also permit reuse in situations that would otherwise be excluded. no-cache is frequently misunderstood; it does not mean “do not store.” It means the cache may store the response but must revalidate it before reuse. That distinction is the reason “we set no-cache somewhere in the stack” is not the same as “we made personalized content safe.” (RFC-Editor)

The semantics below are the ones that matter most when you are reasoning about an incident like Railway’s, or trying to prevent one in your own environment. They come directly from RFC 9111 and MDN’s shared-cache guidance. (RFC-Editor)

| Directive or header | Meaning for shared caches | Safe default for personalized authenticated content | Common mistake |
|---|---|---|---|
| private | Tells shared caches not to store the response | Usually yes, if the content is user-specific and can still live in a browser cache | Assuming private and public are performance hints instead of security boundaries |
| no-store | Tells caches not to store the response | Strongest default for highly sensitive authenticated HTML and JSON | Replacing it with no-cache and assuming the result is equivalent |
| no-cache | Allows storage, but requires revalidation before reuse | Not strong enough by itself for many session-bound responses | Reading it as “do not cache” |
| public | Explicitly allows shared-cache storage | Usually no for authenticated personalized content | Emitting it on dynamic routes because a framework or proxy default added it |
| s-maxage | Controls freshness for shared caches and can permit shared reuse in otherwise restricted cases | Usually no for session-bound pages unless the representation is genuinely shared | Forgetting that a single header can turn a dynamic response into CDN material |
| Vary | Adds request dimensions to the cache key | Useful when the representation legitimately varies by request headers, but not a substitute for private or no-store | Treating Vary as a privacy guarantee rather than a cache-key instruction |

The most important consequence is simple. Authentication does not automatically turn a response into a non-cacheable object. Cache directives do that. Request headers can do that. Provider rules can do that. But “this route is behind login” is not itself a recognized cache directive. That gap between application intent and cache semantics is where incidents like this live. (MDN Web Docs)

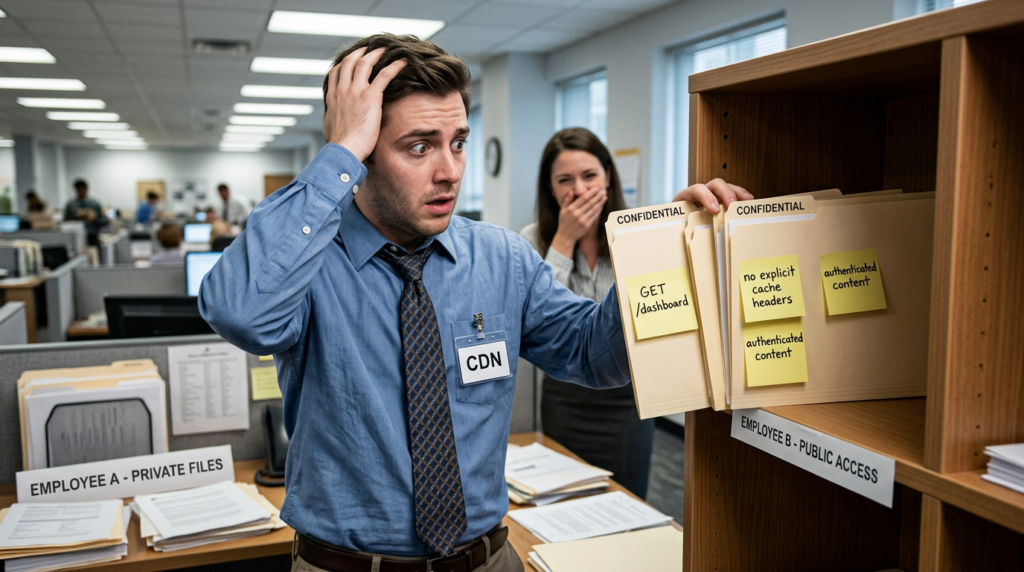

Why Set-Cookie not being cached did not make the response safe

Railway’s statement that Set-Cookie headers were not cached is worth reading carefully. It is true. It is also not enough. RFC 9111 explicitly says the presence of Set-Cookie does not inhibit caching. The spec warns that a cacheable response carrying Set-Cookie can still be stored and later used to satisfy subsequent requests. That point alone should permanently retire the industry habit of equating cookie behavior with whole-response safety. The header can be handled one way while the body is handled another. (RFC-Editor)

MDN makes the same defensive point in more practical language. It tells developers to use private for responses that contain user-personalized content, especially after login or when the session is cookie-managed. It also warns that if you forget private, personalized content can be stored in a shared cache and reused for multiple users, which can leak personal information. Railway’s incident is exactly the kind of case that turns that sentence from guidance into a postmortem. (MDN Web Docs)

It helps to think about a typical authenticated page load. The response may contain several things at once: a session refresh header, HTML that includes the user’s name, recent projects, billing status, workspace identifiers, a CSRF token embedded in a form, feature flags, or a JSON blob bootstrapping the front end. The cache layer might preserve or discard some headers while still storing the response body. In a correctly configured system, personalized content should never become a shared-cache artifact. In a misconfigured system, or during a platform incident like Railway’s, the body is what hurts you. You can avoid sharing the cookie and still share the page. (RFC-Editor)

Vendor defaults also vary, which is why teams should stop relying on folk wisdom like “CDNs do not cache HTML” or “anything with cookies is safe by default.” Cloudflare’s documentation says HTML and JSON are not cached by default. Fastly says responses containing Set-Cookie are usually not cacheable and are not cached by default. Those are useful safety rails, but they are provider-specific behaviors, not universal laws of web infrastructure. Railway’s incident demonstrates how a platform-side rule change can temporarily shift those expected safety rails for some traffic classes. (Cloudflare Docs)

Cloudflare’s cache-control documentation also highlights a subtle but important point: when origin cache control is respected, directives like private and no-store are honored by the shared cache, and responses with Set-Cookie are not cached under default cache levels. But that documentation also shows how provider behavior changes with configuration and origin cache control settings. There is no security model where “a response has cookies somewhere in it” is a substitute for explicit, audited cache policy. (Cloudflare Docs)

That is why the Railway incident is a better teaching case than many classic web bugs. It forces the reader to separate three different ideas that developers routinely collapse into one: who authenticated the request, what cookie or header carried the session, and whether the resulting representation is eligible for reuse by a shared cache. Those are related, but they are not interchangeable. When they drift apart, the edge starts lying about who a page belongs to. (RFC-Editor)

Cookie session auth, Authorization headers, and the blind spot many teams miss

RFC 9111 includes an explicit safeguard around requests that carry an Authorization header. A shared cache must not use a stored response to satisfy such a request unless the response contains cache-control directives that explicitly allow it, and the response conforms to those requirements. That is good protection for token-authenticated APIs, at least when the infrastructure fully follows the standard and the response metadata is sane. (RFC-Editor)

But a lot of real web applications do not keep browser sessions in Authorization headers. They use cookies. The user logs in, gets a session cookie, and then requests personalized HTML and JSON through ordinary GET routes. From the cache’s point of view, unless the cache key or cache policy accounts for that cookie-bound personalization, the request is just another request for /dashboard or /api/me. That is why cookie-session apps are so often mis-modeled. Developers assume the session context automatically tags the response as private. It does not. The response has to say so, or the platform has to enforce it. (MDN Web Docs)

This is also where Vary gets misunderstood. Vary tells a cache which parts of the request influenced the representation and therefore which request headers must become part of the cache key. That is important for content negotiation and sometimes for edge behavior. But it is not a magic privacy switch. If a page is truly user-personalized, the safest shared-cache policy is usually not “cache it with a more complicated key.” It is “do not store it in a shared cache.” Vary is a precision tool. private and no-store are boundary tools. Using one where the other is required is how systems drift into trouble. (MDN Web Docs)
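One way to see why Vary is a cache-key instruction rather than a privacy control is to model the key directly. The sketch below is a toy and does not reproduce any CDN's real key format; the `cache_key` function and its `Header=Value` argument convention are assumptions made for illustration.

```bash
#!/usr/bin/env bash
# Toy model of a shared-cache key. Hypothetical helper, not a real CDN format.
#
# cache_key METHOD URL VARY [Header=Value ...]
#   VARY is a comma-separated list of request header names, as it would
#   appear in a response's Vary header. Only the listed headers join the key.
cache_key() {
  local method=$1 url=$2 vary=$3
  shift 3
  local key="${method} ${url}"
  local name pair hname
  local -a wanted
  # Normalise the Vary list: lowercase, strip spaces, split on commas.
  IFS=',' read -r -a wanted <<< "$(printf '%s' "$vary" | tr 'A-Z' 'a-z' | tr -d ' ')"
  for name in "${wanted[@]}"; do
    [ -n "$name" ] || continue
    for pair in "$@"; do
      # Header names are case-insensitive; compare in lowercase.
      hname=$(printf '%s' "${pair%%=*}" | tr 'A-Z' 'a-z')
      if [ "$hname" = "$name" ]; then
        key+=" | ${name}=${pair#*=}"
      fi
    done
  done
  printf '%s\n' "$key"
}
```

In this model, `cache_key GET /dashboard "Accept-Encoding" "Cookie=session=userA" "Accept-Encoding=gzip"` and the same call with `session=userB` produce the identical key, because the cookie never enters it. Passing `"Cookie"` as the Vary list separates the two keys, but the response is still stored in the shared cache, which is why private or no-store remains the boundary control for personalized content.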

The Railway incident makes this blind spot very concrete. Railway says most GET responses without explicit cache headers were cached by default during the incident window. That should immediately make defenders ask a route-design question, not just a platform question: which of our authenticated GET responses rely on login state alone to imply privacy? Those are the routes that become dangerous when a cache boundary shifts under them. It is not enough to ask whether POST requests are protected or whether the app sets cookies correctly. Any GET that renders personalized state is part of the cache threat model. (Railway Blog)

There is another practical reason to think in these terms. Plenty of modern front ends bootstrap session state through GET responses that look harmless in isolation. A page loads with an embedded JSON payload. A settings panel hydrates from /api/me. A project page includes an account-scoped token or CSRF value in HTML. A billing view shows tenant metadata that is not secret in the cryptographic sense but is still private and should not bleed across accounts. None of those require a cache to share the cookie to become a data exposure. They only require the cache to reuse the response body. (RFC-Editor)

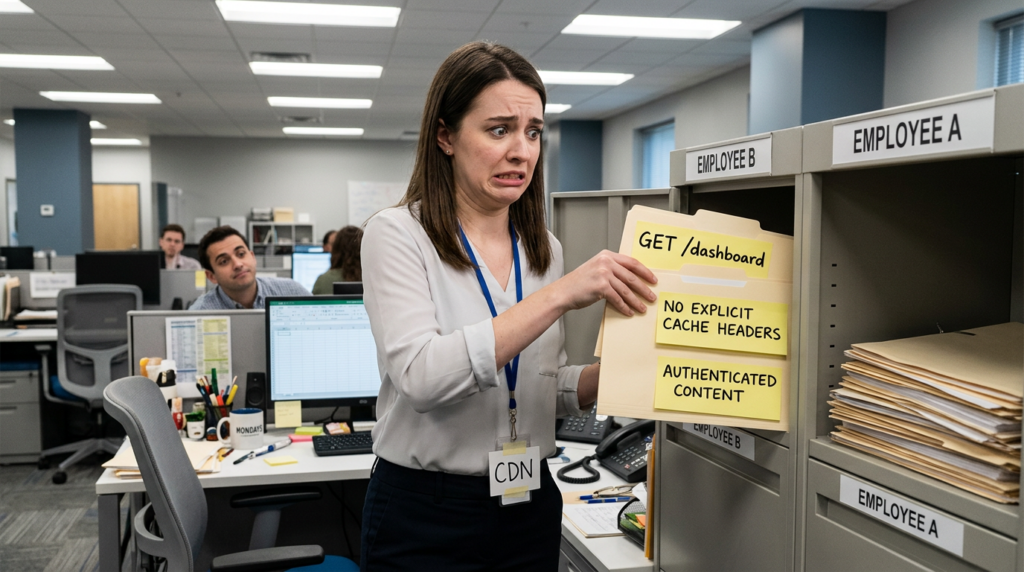

How an authenticated GET turns into cross-user content exposure

A clear way to model this bug class is to walk the request path. Suppose user A requests /dashboard after authenticating with a cookie-backed session. The application returns an HTML response with account-specific content. Under normal operation, either the route is never sent through shared caching or it is marked so a shared cache will not store it. In Railway’s incident window, for the affected domains, some GET responses without explicit cache directives became shared-cache material. That means the edge could store the representation for /dashboard even though the application did not intend the page to be globally reusable. (Railway Blog)

Now suppose user B requests the same path shortly after. If the cache key does not separate the two requests by the session-bearing dimension, and the response is still fresh enough under the edge rule that stored it, the edge can serve the stored body to user B. User B does not need user A’s cookie for that to happen. The cache is not replaying A’s session. It is replaying A’s representation. That is the critical mental model. The thing being confused is not the authentication token itself. The thing being confused is which request context the representation belongs to. (RFC-Editor)
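The user A and user B walk-through can be reproduced with a deliberately naive cache model. This is a sketch under stated assumptions: the `origin` and `edge` functions, the `ORIGIN_CC` variable, and the path-only cache key are all hypothetical, and the model ignores freshness and revalidation entirely. It only contrasts "no directive" with "private or no-store".

```bash
#!/usr/bin/env bash
# Toy shared cache keyed by path only. All names are hypothetical.
CACHE_DIR=$(mktemp -d)

# origin USER PATH: prints the Cache-Control value on line 1 (possibly empty),
# then a body personalised for USER. ORIGIN_CC simulates what the app sends.
origin() {
  printf '%s\n' "${ORIGIN_CC:-}"
  printf 'Hello %s, here is your view of %s\n' "$1" "$2"
}

# edge USER PATH: prints the body the user receives through the cache.
edge() {
  local user=$1 path=$2 slot response cc body
  # The slot depends on the path alone; the session never enters the key.
  slot="$CACHE_DIR/$(printf '%s' "$path" | cksum | cut -d' ' -f1)"
  if [ -f "$slot" ]; then
    cat "$slot"   # cache hit: the requesting user is never consulted
    return
  fi
  response=$(origin "$user" "$path")
  cc=$(printf '%s\n' "$response" | sed -n '1p')
  body=$(printf '%s\n' "$response" | sed -n '2,$p')
  case "$cc" in
    *private*|*no-store*) ;;               # honoured: not stored
    *) printf '%s\n' "$body" > "$slot" ;;  # no directive: stored by default
  esac
  printf '%s\n' "$body"
}
```

With `ORIGIN_CC=""`, calling `edge alice /dashboard` and then `edge bob /dashboard` returns Alice's greeting both times: Bob presented no credential of Alice's, yet received her representation. With `ORIGIN_CC="private, no-store"`, each user gets their own body because nothing is stored.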

That is also why the likely exposure surface is broader than many incident summaries suggest, but narrower than some social media threads imply. Railway did not say every secret in memory was exposed. It said cached responses may have been served to users other than the original requester. In practice, the severity of such an incident depends on what your authenticated GET responses contain. For one application, that may mean a username and internal navigation state. For another, it may mean project names, logs, billing data, temporary download links, embedded anti-forgery tokens, or tenant-scoped API bootstrapping data. The incident mechanism is the same. The consequence is application-specific. (Railway Blog)

This class of issue also explains why defenders should pay attention to “harmless” side effects like stale account views or intermittent token mismatches. If a page containing a session-bound CSRF token or one-time state is cached and reused, the first user might see nothing unusual while the next user gets a token or page snapshot that no longer belongs to their session. Penligent’s public CSRF debugging article explicitly calls out stale cached HTML and proxy or CDN interference as causes of token mismatch behavior. That is not the same as the Railway incident, but it is a useful reminder that cache-boundary failures often surface first as broken session behavior rather than as a clean, obvious disclosure report. (Penligent)

One more subtle point matters here. MDN notes that if you have requirements around caching, you should state them explicitly because caches may apply heuristic behavior otherwise. Railway’s postmortem said most GET responses without explicit cache headers were cached during the window. That means the absence of a directive was not a neutral state. It became an implicit opt-in to the wrong edge behavior. A surprising number of web stacks still treat “no explicit caching header on authenticated pages” as acceptable because the platform default has always done the right thing. That assumption is exactly what this incident punishes. (MDN Web Docs)

What Railway confirmed, what it did not, and what defenders should infer

Railway confirmed the duration, the approximate scope, the trigger, the rollback, the purge, and the fact that authenticated user data may have been cached and served to others. It also confirmed that Set-Cookie was not cached and that origin Cache-Control was respected where present. Those are the facts that belong in a serious analysis. (Railway Blog)

Railway did not publicly enumerate per-customer data classes, route categories, or any evidence that an attacker deliberately weaponized the incident beyond opportunistic exposure. That omission is not suspicious; it is normal and often responsible during incident handling. But it means defenders should resist the urge to fill the gap with invented specifics. The right inference is structural: if your authenticated GET routes return personalized content without explicit shared-cache controls, you own the same class of risk even if your stack and provider differ from Railway’s. (Railway Blog)

Defenders should also avoid the opposite mistake of dismissing the incident because the percentage was small. The 0.05 percent figure is a blast-radius estimate, not a severity reducer. Shared-cache cross-user exposure is one of those bugs where a narrow affected set can still translate into serious privacy, trust, and regulatory consequences for the tenants in scope. The industry should read that number as evidence of containment, not as proof that the underlying failure mode is unimportant. (Railway Blog)

Railway and web cache deception, same family, different root cause

PortSwigger describes web cache deception as a technique where an attacker tricks a cache into storing sensitive dynamic content because the cache and the origin interpret the request differently. OWASP’s testing guide on path confusion gives a familiar example: a user dashboard might be reachable at a path that a cache incorrectly treats as static, such as a dashboard path with an appended extension-like suffix. In that class of bug, the attacker creates the cacheability mistake by exploiting mismatched parsing rules. (PortSwigger)

Railway’s incident was not that. Railway did not describe an attacker-crafted path confusion or an origin-versus-cache disagreement over URL parsing. It described a provider-side configuration update that accidentally enabled caching for traffic that should not have been in the caching path at all. That makes the root cause different, but the security lesson closely related. In both cases, content that the application owner treats as user-specific becomes shared-cache material. In both cases, the exposure is driven by a boundary mismatch between application intent and edge behavior. (Railway Blog)

That is why the broader cache-deception literature is relevant here. PortSwigger’s write-up on the top web hacking techniques of 2024 included the ChatGPT account takeover case built on wildcard web cache deception, highlighting how inconsistent decoding and cache-rule scope can turn a cache mistake into a serious account-level risk. You do not need Railway’s incident to have involved that exact technique to learn from the parallel. Once a shared cache is allowed to store the wrong representation, the difference between “stale weirdness” and “high-impact security incident” is often just what happened to be in the body. (PortSwigger)

The reason this family of bugs keeps reappearing is that it lives at the seam between teams and systems. Application developers think in routes, templates, JSON payloads, and sessions. Platform engineers think in caching layers, rollout scopes, and edge rules. Security engineers think in request identity, authorization boundaries, and replay risk. The incident happens when those models stop lining up. Railway’s postmortem is valuable because it shows how little time is needed for that misalignment to matter once it reaches a shared edge. (Railway Blog)

Related CVEs, the same failure mode through framework and application paths

Recent framework CVEs make the same lesson impossible to ignore. They also help separate three related but distinct problems: accidental shared caching of personalized responses, cache-key confusion between request contexts, and representation mix-ups where a framework emits the wrong object type into a cacheable response path. The product and root cause differ, but the security boundary being crossed is the same. (NVD)

The table below summarizes the most relevant cases for understanding Railway’s incident as part of a broader class rather than as an isolated outage. The CVE facts come from NVD and related official advisories. (NVD)

| CVE | Affected component | Failure mode | Why it matters for the Railway incident | Fix or mitigation emphasis |
|---|---|---|---|---|
| CVE-2024-46982 | Next.js Pages Router | A crafted request can make a non-dynamic SSR route emit cacheable s-maxage=1, stale-while-revalidate headers, enabling upstream CDN caching | Shows how a route meant to stay dynamic can quietly become shared-cache material | Upgrade, and scrutinize unexpected cache headers on SSR responses |
| CVE-2025-32421 | Next.js Pages Router | Race condition under certain misconfigurations can make endpoints return pageProps instead of HTML | Demonstrates that framework bugs become cross-user issues only when platform caching policy allows it | Upgrade, strip x-now-route-matches, use explicit no-store on at-risk responses |
| CVE-2025-49005 | Next.js App Router and Vercel CLI | HTML requests can receive RSC payloads under certain conditions | Useful contrast between browser-cache impact on Vercel and bigger risk on self-hosted plus CDN setups | Upgrade, ensure cache keys distinguish representation type, test self-hosted CDN paths |
| CVE-2025-57752 | Next.js Image Optimization API | Cache-key confusion when responses vary on Cookie or Authorization | Very close to the Railway lesson that request context must be reflected in shared-cache decisions | Upgrade and avoid shared caching of header-dependent personalized content |

CVE-2024-46982 is especially relevant because of how mechanically similar it is to the Railway outcome. NVD says a crafted HTTP request could poison the cache of a non-dynamic SSR route in Next.js Pages Router and cause it to send Cache-Control: s-maxage=1, stale-while-revalidate, which upstream CDNs may cache. That is not the same bug as Railway’s, but it is the same kind of failure: a response that developers assume belongs to a dynamic request path gets transformed into a shared-cache artifact by unexpected metadata. Railway got there through platform config. This CVE got there through framework behavior. The edge does not care where the mistake came from. It only cares whether the response became cacheable. (NVD)

CVE-2025-32421 is arguably even more interesting from a platform-design perspective. NVD describes a race condition in certain Next.js versions and notes that the issue affected Pages Router under certain misconfigurations. The same NVD entry also notes that Vercel was not affected because the platform does not cache responses based solely on 200 OK without explicit cache-control headers. That is a rare case where the mitigation lesson is written right into the vulnerability record: platform cache policy can determine whether a framework bug remains a weird rendering problem or becomes a cross-user exposure problem. Railway’s incident is the mirror image of that lesson. A platform-side cache boundary shift made otherwise ordinary GET responses riskier than the application owners expected. (NVD)

CVE-2025-49005 adds a useful contrast. NVD describes a case where HTML requests could return React Server Component payloads under certain conditions. On Vercel, the impact was described as browser cache only, not CDN cache poisoning. But NVD also notes that self-hosted environments fronted by external CDNs could turn the same confusion into cache poisoning if the CDN does not distinguish RSC and HTML in its cache key. That is an important architectural lesson. The same logical bug can live at three different layers: application rendering, browser cache, or shared edge cache. Railway’s incident sits squarely in the third category. (NVD)

CVE-2025-57752 is the closest conceptual match to the cross-user dimension of the Railway incident. NVD says Next.js Image Optimization API routes were vulnerable to cache-key confusion and that if images from API routes vary based on request headers like Cookie or Authorization, the responses could be incorrectly cached and served to unauthorized users. That is the same security boundary in one sentence: request context changed the representation, but the shared cache did not reflect that context in its storage or reuse decision. Railway’s postmortem should make every team read that sentence more carefully. Personalized image endpoints, signed export endpoints, user-specific CSV downloads, and dynamic HTML all live in the same cache-trust family. (NVD)

Taken together, these cases show why it is a mistake to frame Railway’s incident as “just an ops bug.” It was an ops bug. It was also a canonical example of a vulnerability class that appears through multiple supply paths: a provider rollout, a framework edge case, a cache-key design error, or a path-deception exploit. Mature teams do not defend against these one source at a time. They defend the boundary itself. (Railway Blog)

How to test your own apps for Railway-style CDN caching leaks

The safest way to test for this class of bug is to treat it as a cache regression problem on systems you own or are explicitly authorized to assess. Do not start with exploit theater. Start with route inventory, response semantics, and edge observation. The question is not “can I get a spectacular leak demo in one curl command.” The question is “which authenticated representations in this system could ever become shared-cache artifacts, and how would I prove or disprove that after every routing or CDN change.” (MDN Web Docs)

Start by inventorying authenticated GET routes. Teams often focus on POST endpoints because those feel stateful, but the routes most at risk here are GET routes that render or return personalized content. That includes dashboards, settings pages, /api/me, project overviews, account switchers, profile endpoints, tenant-scoped search pages, download-preparation endpoints, and any HTML form page that embeds user-bound tokens or state. If a GET response would be embarrassing or dangerous to show to another logged-in or anonymous user, it belongs in the inventory. Railway’s postmortem specifically points to GET responses without explicit cache headers as the risky population in its incident window. (Railway Blog)

Then inspect headers, not just bodies. For each route in scope, capture the full response headers and ask a few blunt questions. Is Cache-Control present? Does it say private or no-store for personalized content? Is there a Vary header, and if so, does it reflect real representation differences? Are there signs of intermediary caching such as Age, Via, X-Cache, or CF-Cache-Status? Does the provider add its own cache headers on supposedly non-cacheable paths? If a route is authenticated and returns personalized content, silence is not good enough. Missing directives are the first thing Railway’s incident turns into risk. (MDN Web Docs)

A simple header check can catch a surprising amount:

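    # Fetch the route with an authenticated cookie jar; dump headers and body separately.
    curl -s -D headers.txt \
      -o body.html \
      -b cookiejar.txt \
      https://app.example.com/dashboard

    # Show only the headers that reveal caching policy or intermediary behavior.
    grep -Ei '^(cache-control|vary|age|via|x-cache|cf-cache-status|set-cookie):' headers.txt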

If /dashboard is authenticated and personalized, a result like Cache-Control: public, s-maxage=60 is a problem. A response with no Cache-Control header at all is also a problem in many stacks because it leaves behavior up to intermediaries and platform defaults. A result like Cache-Control: no-cache deserves more scrutiny, not less, because it still permits storage and depends on revalidation behavior. The safer baseline for sensitive authenticated HTML or JSON is usually private, no-store. (MDN Web Docs)
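Those four outcomes can be turned into a mechanical check over a header dump such as the `headers.txt` written by `curl -D`. The following is a minimal sketch, assuming the route is already known to be authenticated and personalized; the `cache_verdict` name and its verdict strings are hypothetical, and the matching is deliberately simple (for example, it does not handle the qualified `private="field"` form).

```bash
#!/usr/bin/env bash
# cache_verdict FILE: classify the Cache-Control policy found in a header
# dump for a route that is known to be authenticated and personalised.
# Hypothetical helper; verdict wording is illustrative only.
cache_verdict() {
  local cc
  # Header names are case-insensitive and curl dumps use CRLF line endings.
  # Multiple Cache-Control lines are joined into one comma-separated list.
  cc=$(grep -i '^cache-control:' "$1" | tr -d '\r' | cut -d: -f2- \
        | tr 'A-Z' 'a-z' | tr -d ' ' | paste -sd, -)
  if [ -z "$cc" ]; then
    echo "FAIL: no Cache-Control, behavior depends on intermediaries and platform defaults"
    return
  fi
  # Wrap in commas so each directive can be matched as a whole token.
  case ",$cc," in
    *,no-store,*)            echo "OK: no-store" ;;
    *,private,*)             echo "OK: private" ;;
    *,public,*|*,s-maxage=*) echo "FAIL: shared caching explicitly allowed" ;;
    *,no-cache,*)            echo "REVIEW: no-cache still permits storage" ;;
    *)                       echo "REVIEW: no shared-cache restriction found" ;;
  esac
}
```

Running `cache_verdict headers.txt` after each capture gives a one-line answer per route, which makes it easy to fail a CI job on any line that starts with `FAIL`.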

After the header pass, run a two-account test. Set up two benign test users or two test tenants with clearly distinguishable markers in the UI, such as different project names or dummy profile values. Load the route as user A, then request the same route as user B through the same edge path. Compare the returned bodies. If the system is working correctly, user B’s response should reflect B’s state or force a fresh origin path. If the shared cache is misbehaving, you may see A’s marker in B’s response or observe repeated identical body hashes where they should differ. Use staging and dummy data so that a failing test does not create a real exposure. (Railway Blog)

A lightweight body-hash workflow can help you catch this fast:

```bash
curl -s -b userA.cookies https://app.example.com/api/me > a.json
curl -s -b userB.cookies https://app.example.com/api/me > b.json

shasum -a 256 a.json b.json
diff -u a.json b.json
```

Identical hashes do not automatically mean a bug if the endpoint intentionally returns shared public data. But for user-specific endpoints, identical bodies across distinct accounts are a red flag, especially when combined with cache headers or edge hit indicators that say the response came from an intermediary. (MDN Web Docs)
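The reasoning in the previous paragraph, where identical bodies matter more when an intermediary hit indicator is also present, can be written down as a small decision function. This is a sketch under stated assumptions: the name `assessCrossUserReuse`, the finding labels, and the severity levels are hypothetical, and treating the mere presence of an Age header as a hit signal is a heuristic rather than a rule.

```javascript
// Return a copy of a headers object with lowercase keys.
function lowerCaseKeys(headers) {
  return Object.fromEntries(
    Object.entries(headers || {}).map(([k, v]) => [k.toLowerCase(), String(v)])
  );
}

// Heuristic: an Age header, or "hit" inside X-Cache / CF-Cache-Status,
// suggests the response was served by an intermediary cache.
function servedFromIntermediary(headers) {
  const h = lowerCaseKeys(headers);
  if ("age" in h) return true;
  return /hit/i.test(h["x-cache"] || "") || /hit/i.test(h["cf-cache-status"] || "");
}

// Compare what user A and user B received from a user-specific endpoint.
function assessCrossUserReuse({ bodyA, bodyB, headersB, markerA }) {
  const findings = [];
  if (markerA && bodyB.includes(markerA)) findings.push("marker-leak");
  if (bodyA === bodyB) findings.push("identical-bodies");
  if (servedFromIntermediary(headersB)) findings.push("intermediary-hit");

  let severity = "none";
  if (findings.includes("marker-leak") || findings.length >= 2) severity = "high";
  else if (findings.length === 1) severity = "review";
  return { severity, findings };
}
```

A single signal is reported as `review` because an endpoint may legitimately return shared data; user A's marker inside user B's body is treated as `high` on its own.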

When a CDN or reverse proxy uses path-based rules, add a cache-deception pass. PortSwigger’s Academy and OWASP’s path-confusion guidance both show why extension-like path suffixes and mismatched parsing can change how the cache and origin classify a route. On systems you own, test whether a personalized route can be requested under a path form that looks cacheable to the edge but still resolves to the same origin content. This is especially relevant if your CDN attaches caching rules to file extensions, static prefixes, or simplified route matchers. Railway’s incident was not caused by this technique, but many organizations defending against the Railway failure mode are also one bad path rule away from web cache deception. (PortSwigger)
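To make that pass systematic, path variants can be generated from each personalized route instead of typed by hand. The sketch below is illustrative and far from exhaustive: `cacheDeceptionVariants` and the `probe` file name are made up for this example, and the four variant shapes are only common starting points for path-confusion testing on systems you own.

```javascript
// Build path forms that may look static to an edge rule while the origin
// still resolves them to the same personalized route.
function cacheDeceptionVariants(route, extensions = ["js", "css", "png"]) {
  const base = route.replace(/\/+$/, ""); // drop trailing slashes
  const variants = [];
  for (const ext of extensions) {
    variants.push(`${base}/probe.${ext}`);   // extra segment that looks like a static file
    variants.push(`${base}.${ext}`);         // extension appended to the route itself
    variants.push(`${base};probe.${ext}`);   // delimiter some origins truncate at
    variants.push(`${base}%2Fprobe.${ext}`); // encoded slash that cache and origin may decode differently
  }
  return variants;
}

console.log(cacheDeceptionVariants("/dashboard", ["js"]));
```

Each generated path is requested with both test accounts. A variant is interesting only if the origin still returns personalized content and the edge treats the response as cacheable.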

A basic regression script can turn that into something repeatable:

```bash
#!/usr/bin/env bash
set -euo pipefail

BASE="https://staging.example.com"
A="userA.cookies"   # cookie jar for test user A
B="userB.cookies"   # cookie jar for test user B

# Personalized routes plus one cache-deception style path variant.
declare -a ROUTES=(
  "/dashboard"
  "/api/me"
  "/settings/profile"
  "/dashboard/non.js"
)

for r in "${ROUTES[@]}"; do
  echo "== Testing $r =="

  # Request as user A first, then as user B through the same edge path.
  curl -s -D "A.headers" -o "A.body" -b "$A" "$BASE$r"
  curl -s -D "B.headers" -o "B.body" -b "$B" "$BASE$r"

  echo "-- Cache headers --"
  # "|| true" keeps set -e from aborting when no header matches.
  grep -Ei '^(cache-control|vary|age|via|x-cache|cf-cache-status):' A.headers || true
  grep -Ei '^(cache-control|vary|age|via|x-cache|cf-cache-status):' B.headers || true

  echo "-- Body hashes --"
  shasum -a 256 A.body B.body

  echo "-- Cross-user marker check --"
  # User A's canary string must never appear in user B's response.
  if grep -q "Tenant A Canary" B.body; then
    echo "Potential cross-user exposure on $r"
  fi
done
```

Do not stop after a purge. A purge removes what the cache has already stored. It does not tell you whether the policy is now correct. After every fix, rerun the same inventory, the same header checks, the same two-account test, and the same path-variance cases. A lot of teams fix cache bugs by purging aggressively and then declaring victory while the route remains silently cacheable under the next rollout. Railway’s own remediation plan included more testing and slower, more heavily sharded rollout of CDN changes. That is exactly the kind of process correction defenders should copy. (Railway Blog)

For teams already using agent-assisted validation workflows, this class of bug fits unusually well into an evidence-first retest loop. Penligent’s public material on moving from white-box findings to black-box proof describes a workflow centered on black-box auditing, proof of exploitability, and regression re-verification against real targets. That matches cache-boundary testing better than many other bug classes because the problem is rarely settled by a single screenshot or one suspicious header. You need repeatable auth setup, controlled route selection, post-fix reruns, and saved artifacts after every purge or edge-rule change. (Penligent)

Hardening authenticated content against shared-cache exposure

The best defense against this class of incident is not a clever CDN trick. It is a boring, explicit, layered policy that makes personalized content impossible to mistake for shared content. The first rule is simple: if a route returns authenticated user-specific HTML or JSON, send an explicit cache policy. Do not rely on the provider default. Do not assume “dynamic” implies private. Do not assume cookies imply non-cacheability. For most authenticated pages and APIs, the right starting point is Cache-Control: private, no-store. If the content is extremely sensitive or can embed one-time state, keep it there unless you have a strong, measured reason not to. (MDN Web Docs)

That also means teams need to stop overusing no-cache as a comfort blanket. MDN is very clear that no-cache permits storage and requires revalidation before reuse. That may be good enough in carefully designed cases, but it is not the “never share this” directive many developers think it is. If your route should never live in a shared intermediary cache, say private or no-store, and usually both. Railway’s incident is the kind of event that makes the semantic gap between those directives operationally expensive. (MDN Web Docs)

The recommendations below are operational guidance derived from the standards and incident patterns discussed above. They are intentionally conservative for session-bound content. (RFC-Editor)

| Route type | Typical example | Recommended Cache-Control | Shared cache allowed | Extra notes |
|---|---|---|---|---|
| Public marketing HTML | /, /pricing, blog landing pages | public, max-age=300, stale-while-revalidate=60 or similar measured values | Yes | Keep truly public and version-stable |
| Fingerprinted static assets | /assets/app.abc123.js, hashed CSS, images | public, max-age=31536000, immutable | Yes | Safe because the URL changes with the content |
| Authenticated dashboard HTML | /dashboard, /projects | private, no-store, max-age=0 | No | Best default for user-specific pages |
| Profile and session APIs | /api/me, /api/account | private, no-store, max-age=0 | No | Do not assume JSON is safer than HTML |
| Token-bearing forms | /settings/profile, /billing/update-card | private, no-store, max-age=0 | No | Prevent stale CSRF or one-time values from being reused |
| User-generated downloads with auth | signed exports, reports, CSV endpoints | Usually private, no-store unless a different signed-delivery design is intentional | Usually no | Review object-store and CDN behavior together |
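A table like this is easier to enforce when it also exists as data that tests and middleware can query. The following is a rough sketch of that idea: the rule names, the `expectedPolicyFor` helper, and the fingerprint regular expression are assumptions made for illustration, and the prefixes simply mirror the example routes in the table. Unclassified paths fall back to the conservative policy, which is a design choice rather than a requirement.

```javascript
const SENSITIVE = "private, no-store, max-age=0";
const SESSION_PREFIXES = ["/dashboard", "/projects", "/api/me", "/api/account", "/settings", "/billing"];

// Ordered rules: the first matching rule wins.
const POLICIES = [
  {
    name: "fingerprinted-asset",
    // Hypothetical fingerprint shape: /assets/<name>.<6+ hex chars>.(js|css)
    test: (p) => /^\/assets\/.+\.[0-9a-f]{6,}\.(js|css)$/.test(p),
    cacheControl: "public, max-age=31536000, immutable",
    shared: true,
  },
  {
    name: "session-bound",
    test: (p) => SESSION_PREFIXES.some((prefix) => p === prefix || p.startsWith(prefix + "/")),
    cacheControl: SENSITIVE,
    shared: false,
  },
  {
    name: "public-marketing",
    test: (p) => p === "/" || p === "/pricing",
    cacheControl: "public, max-age=300, stale-while-revalidate=60",
    shared: true,
  },
];

// Look up the expected policy; anything unclassified is treated as sensitive.
function expectedPolicyFor(path) {
  const match = POLICIES.find((rule) => rule.test(path));
  if (match) return { name: match.name, cacheControl: match.cacheControl, shared: match.shared };
  return { name: "unclassified", cacheControl: SENSITIVE, shared: false };
}

console.log(expectedPolicyFor("/dashboard/non.js"));
```

Note that `/dashboard/non.js` resolves to the session-bound rule, not to an asset rule, because matching is by route prefix rather than by file extension.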

At the application layer, centralize the rule instead of depending on every route author to remember it. A simple middleware pattern is often enough to remove entire classes of mistakes:

```javascript
// Default every authenticated or sensitive-prefix request to a non-shareable policy.
app.use((req, res, next) => {
  const isAuthenticated = Boolean(req.user);
  const sensitivePrefixes = ["/dashboard", "/settings", "/api/me", "/billing"];

  if (isAuthenticated || sensitivePrefixes.some(p => req.path.startsWith(p))) {
    res.set("Cache-Control", "private, no-store, max-age=0");
    res.set("Pragma", "no-cache"); // legacy hint for HTTP/1.0 caches
  }

  next();
});
```

That is not a substitute for route-level review, but it gives you a secure default. If a team later decides a route is intentionally shareable, it can make that case explicitly rather than inheriting ambiguous behavior from the platform. The same philosophy should carry into framework configuration: force dynamic rendering for authenticated pages, audit unexpected s-maxage output, and review any helpers or middleware that auto-generate cache headers. Recent Next.js cache-related CVEs are a reminder that framework defaults and edge behavior can combine in surprising ways. (NVD)

At the reverse-proxy and CDN layer, draw a hard line between static asset policy and session-bound traffic. The most common operational mistake is not “the CDN existed.” It is “the CDN rule designed for public assets also touched authenticated pages.” Do not attach static cache policies to route prefixes that include dashboards, account APIs, settings flows, export endpoints, or mixed-use prefixes that can resolve to user-specific content. Railway’s remediation emphasis on more tests and more gradual rollout is a useful operational model here. The more powerful the edge rule, the more narrowly it should be targeted and the more slowly it should be deployed. (Railway Blog)

A simple Nginx example shows the intent:

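```nginx
location /dashboard {
    proxy_pass http://app_backend;
    proxy_no_cache 1;                 # never store the response
    proxy_cache_bypass 1;             # never answer from the cache
    proxy_hide_header Cache-Control;  # drop any upstream value so only one header is sent
    add_header Cache-Control "private, no-store, max-age=0" always;
}

location /api/me {
    proxy_pass http://app_backend;
    proxy_no_cache 1;
    proxy_cache_bypass 1;
    proxy_hide_header Cache-Control;
    add_header Cache-Control "private, no-store, max-age=0" always;
}

location /assets/ {
    proxy_pass http://app_backend;
    proxy_hide_header Cache-Control;
    add_header Cache-Control "public, max-age=31536000, immutable" always;
}
```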

Vary deserves a final word. Use it when the representation legitimately varies by request headers and the route is still intended to be cacheable. Do not use it as a bandage for personalized content that should not be in a shared cache at all. MDN’s explanation is clear: Vary describes what parts of the request influenced the representation so the cache can key correctly. It is not a privacy directive. If the content belongs to one user or one session, the more reliable move is to prevent shared-cache storage in the first place. (MDN Web Docs)
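A toy model of cache keying shows why Vary does not protect personalized content. The sketch below is a deliberate simplification of how a shared cache might combine the request target with the header values named in Vary; the `cacheKey` function and the key format are hypothetical, and real caches differ in normalization and in how they treat values such as `Vary: *`.

```javascript
// Build a simplified cache key from method, URL, and the request headers
// named in the response's Vary header.
function cacheKey(request, varyHeader) {
  const headers = Object.fromEntries(
    Object.entries(request.headers || {}).map(([k, v]) => [k.toLowerCase(), String(v)])
  );
  const names = (varyHeader || "")
    .split(",")
    .map((n) => n.trim().toLowerCase())
    .filter(Boolean)
    .sort();
  const parts = [request.method, request.url];
  for (const name of names) parts.push(`${name}=${headers[name] ?? ""}`);
  return parts.join("|");
}

// Two different users requesting the same personalized route.
const userA = { method: "GET", url: "/dashboard", headers: { Cookie: "sid=A", "Accept-Encoding": "gzip" } };
const userB = { method: "GET", url: "/dashboard", headers: { Cookie: "sid=B", "Accept-Encoding": "gzip" } };

// With Vary: Accept-Encoding the session cookie is not part of the key,
// so both users map to the same cache entry.
console.log(cacheKey(userA, "Accept-Encoding") === cacheKey(userB, "Accept-Encoding"));
```

In this model the two keys only diverge if Vary names a header that actually differs per user. Even then the cache is still storing per-user content, which is why preventing shared storage remains the more reliable control.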

One operational smell worth calling out is any system where the application team cannot answer a simple question: which headers and edge rules prevent /dashboard from being cached by a shared intermediary. If that answer lives only in tribal memory or only in a CDN dashboard that nobody reviews during deploys, the system is too fragile. Railway’s incident was a reminder that the edge is part of the application’s security boundary, whether or not the app team likes thinking about it that way. (Railway Blog)

Turning cache validation into a repeatable engineering workflow

Many teams still approach cache issues like a one-time penetration-test finding. Someone proves the bug once, opens a ticket, and everyone hopes the fix sticks. That is the wrong operational model. Cache correctness is closer to authorization correctness than to a transient bug. It changes when routes change, when proxies change, when frameworks change, and when providers change. Railway’s incident itself came from a provider configuration update. Any organization that treats cache privacy as a one-and-done exercise will eventually relearn that lesson the hard way. (Railway Blog)

The better model is a recurring workflow with four stable steps. First, maintain a living inventory of routes whose representations are user-specific. Second, define explicit cache expectations for each route class. Third, verify those expectations through automated header and body checks in staging and after edge changes. Fourth, keep artifacts from failures and post-fix reruns so that regressions are easy to compare. This is where offensive validation and defensive engineering meet in a productive way. (MDN Web Docs)
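The third and fourth steps can be sketched as two small functions: one that turns a single header observation into a saved record, and one that compares two runs. The record shape, the `runId` values, and the function names here are hypothetical, and comparing normalized directive sets is just one reasonable way to decide whether an observed header matches the expectation.

```javascript
// Normalize a Cache-Control value so directive order and case do not matter.
function normalizeCacheControl(value) {
  return (value || "")
    .split(",")
    .map((d) => d.trim().toLowerCase())
    .filter(Boolean)
    .sort()
    .join(",");
}

// Produce one artifact record for a route in a given run.
function recordCheck(runId, route, expectedCacheControl, observedHeaders) {
  const entry = Object.entries(observedHeaders || {}).find(([k]) => k.toLowerCase() === "cache-control");
  const observed = entry ? String(entry[1]) : null;
  const ok = normalizeCacheControl(observed) === normalizeCacheControl(expectedCacheControl);
  return { runId, route, expected: expectedCacheControl, observed, ok };
}

// Routes that passed in the previous run and fail in the current one.
function findRegressions(previousRun, currentRun) {
  const before = new Map(previousRun.map((r) => [r.route, r]));
  return currentRun.filter((r) => !r.ok && before.get(r.route)?.ok === true);
}

const expected = "private, no-store, max-age=0";
const monday = [recordCheck("run-1", "/dashboard", expected, { "Cache-Control": "no-store, private, max-age=0" })];
const tuesday = [recordCheck("run-2", "/dashboard", expected, { "Cache-Control": "public, s-maxage=60" })];
console.log(findRegressions(monday, tuesday));
```

Storing the records from every run, including the passing ones, is what makes a later comparison possible after a CDN rollout or framework upgrade.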

Penligent’s public material on black-box proof is relevant here because it frames validation as a repeatable loop of execution, evidence capture, and re-verification rather than as one impressive test run. That same posture is valuable for cache-boundary work. A single “gotcha” request proves the issue exists. What defenders need after that is durable confidence that the issue stays fixed through future CDN rollouts, proxy migrations, framework upgrades, and rendering changes. Evidence-first retesting is a better fit for that job than ad hoc manual spot checks. (Penligent)

The same logic applies to related session symptoms. Penligent’s published CSRF debugging guide treats stale cached HTML and proxy or CDN interference as meaningful diagnostic causes of token mismatch behavior. That is a practical reminder to expand your monitoring beyond clean disclosure indicators. If a deployment suddenly increases CSRF mismatch rates, stale-page complaints, or intermittent account-context anomalies, do not stop at the session layer. Check whether a route became cacheable or whether an edge rule started reusing content that should have stayed per-user. (Penligent)

Responding to an accidental CDN caching incident in your own environment

If you suspect a Railway-style incident in your own stack, the first priority is not perfect root-cause analysis. It is to stop shared reuse of the wrong representations. Disable or bypass the relevant cache policy for sensitive paths immediately, and purge the edge globally. Railway’s own response included a rollback and global cache purge before it moved into longer-term remediation. That is the right order. You can debate architecture after you stop the bleeding. (Railway Blog)

Next, define the potential data classes conservatively based on real route behavior, not on generic panic. Review which authenticated GET routes were in scope, what they return, and whether those responses could contain profile details, workspace state, embedded anti-forgery material, download links, or other tenant-specific data. You do not need a perfect reconstruction before you start this classification. You need a defensible, route-based map of what the cache may have reused. Railway did not publish that per-customer detail publicly, and most teams will not have a perfect historical replay either. But you can still reason from route design and logs. (Railway Blog)

Then review observable signs of reuse. Edge logs, X-Cache style signals, cache hit ratios on routes that should never hit, customer support reports, and application anomalies like token mismatch spikes are all useful. This is where defenders should remember the browser-cache guidance from OWASP and the session-anomaly guidance from real operational experience: cache incidents rarely announce themselves in one clean alert. They leak through stale views, broken forms, session oddities, and users saying “I saw something that was not mine” before your metrics line up into a neat story. (OWASP Foundation)
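One of those signals, cache hits on routes that should never hit, is simple to extract once edge logs are parsed. The sketch below assumes each log entry has already been reduced to an object with `path` and `cacheStatus` fields; that shape, the function name `findSuspiciousHits`, and the substring match on "hit" are assumptions, since real CDN log formats and status values vary by provider.

```javascript
// Count cache hits on paths under prefixes that should never be served from a shared cache.
function findSuspiciousHits(entries, sensitivePrefixes) {
  const counts = new Map();
  for (const entry of entries) {
    const sensitive = sensitivePrefixes.some(
      (prefix) => entry.path === prefix || entry.path.startsWith(prefix + "/")
    );
    if (sensitive && /hit/i.test(entry.cacheStatus || "")) {
      counts.set(entry.path, (counts.get(entry.path) || 0) + 1);
    }
  }
  // Most frequently hit paths first.
  return [...counts]
    .map(([path, hits]) => ({ path, hits }))
    .sort((a, b) => b.hits - a.hits);
}

// Hypothetical, already-parsed log entries.
const sampleLog = [
  { path: "/assets/app.abc123.js", cacheStatus: "HIT" },
  { path: "/dashboard", cacheStatus: "HIT" },
  { path: "/api/me", cacheStatus: "MISS" },
  { path: "/dashboard", cacheStatus: "HIT" },
];
console.log(findSuspiciousHits(sampleLog, ["/dashboard", "/api/me", "/settings"]));
```

Any non-empty result for the incident window is a starting point for the route-based classification described earlier, not proof of exposure on its own.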

After containment, decide whether short-lived artifacts embedded in pages should be rotated. The answer is not always yes, and it depends on what your responses actually contain. If affected pages could have included one-time CSRF tokens, signed action URLs, expiring report links, or similar state, rotation may be appropriate. If the leaked surface was mostly cosmetic account metadata, the response may focus more on notification and assurance. The key is to let the actual route content drive the decision rather than applying a generic incident playbook blindly. Railway’s public notice was careful about confirmed facts. Teams handling their own incidents should do the same. (Railway Blog)

Finally, turn the fix into a release gate. Railway said it would add more tests and shard rollouts more aggressively over longer periods. That is not just a vendor-specific lesson. Any organization operating proxies, CDNs, or framework-level cache policies should make authenticated-route cache tests part of deployment acceptance, especially when edge behavior changes. The cheapest time to catch a cross-user cache leak is before the config reaches the full fleet. The second-cheapest time is before you declare the incident resolved. (Railway Blog)

The real lesson from the Railway CDN caching incident

The Railway incident is useful precisely because it is smaller and clearer than many high-profile web failures. There is no need to reverse-engineer a baroque exploit chain to understand it. A provider-side configuration update accidentally changed the caching boundary for a subset of domains. For 52 minutes, some GET responses without explicit cache controls became shared-cache objects. That was enough to create a risk that authenticated content could be served to the wrong users. (Railway Blog)

The bigger lesson is not “do not use CDNs.” It is “do not let your privacy boundary depend on unstated cache behavior.” If a response must stay attached to one user, say so explicitly. If a framework bug can emit cacheable headers on a dynamic route, test for that. If your platform or CDN changes how a path is classified, catch it in staging with two accounts and real markers. If Set-Cookie is absent from the cache, do not congratulate yourself until you know where the body went. RFC 9111, MDN, OWASP, the recent Next.js CVEs, and Railway’s own postmortem all point to the same conclusion from different angles: shared caches are powerful, but they are not psychic. They only know what you tell them, and sometimes they only know what the platform accidentally told them. (RFC-Editor)

A lot of application security work is about finding the place where a system silently stopped remembering who something belonged to. Sometimes that is an authorization check. Sometimes it is a session binding. Sometimes it is an object reference. In this incident, it was the edge representation of a page. That is why it deserves serious attention. For authenticated content, the most dangerous cache setting is often not an obviously reckless one. It is the one you assumed did not exist. (RFC-Editor)

Further reading

- Railway’s incident report on authenticated user data being cached. (Railway Blog)

- Railway’s public CDN guide and dynamic route caching example. (Railway Docs)

- RFC 9111, HTTP Caching. (RFC-Editor)

- MDN on Cache-Control, including private, no-store, no-cache, and heuristic caching guidance. (MDN Web Docs)

- MDN on Vary and cache-key behavior. (MDN Web Docs)

- OWASP Web Security Testing Guide on browser cache weakness. (OWASP Foundation)

- PortSwigger Web Cache Deception material. (PortSwigger)

- NVD entries for CVE-2024-46982, CVE-2025-32421, CVE-2025-49005, and CVE-2025-57752. (NVD)

- Claude Code Security and Penligent, From White-Box Findings to Black-Box Proof. (Penligent)

- How to Fix CSRF Token Mismatch, Advanced Debugging and Prevention. (Penligent)