OpenClaw security is easy to misunderstand because the product does not fail like a normal application. A traditional web app has routes, handlers, access control, and a relatively stable execution path. OpenClaw is different. It sits at the junction of language models, messaging channels, local files, connected tools, persistent credentials, browser automation, and host execution. The result is not merely “an AI assistant.” It is a decision-making runtime that can be steered by untrusted content and can act with real authority. OpenClaw’s own documentation says its security guidance assumes a personal assistant trust model with one trusted operator boundary per gateway, and explicitly warns that it is not meant to be a hostile multi-tenant boundary for adversarial users sharing one runtime. Microsoft goes even further and recommends running OpenClaw only in isolated environments with no access to non-dedicated credentials or sensitive data that must not leak. (OpenClaw)

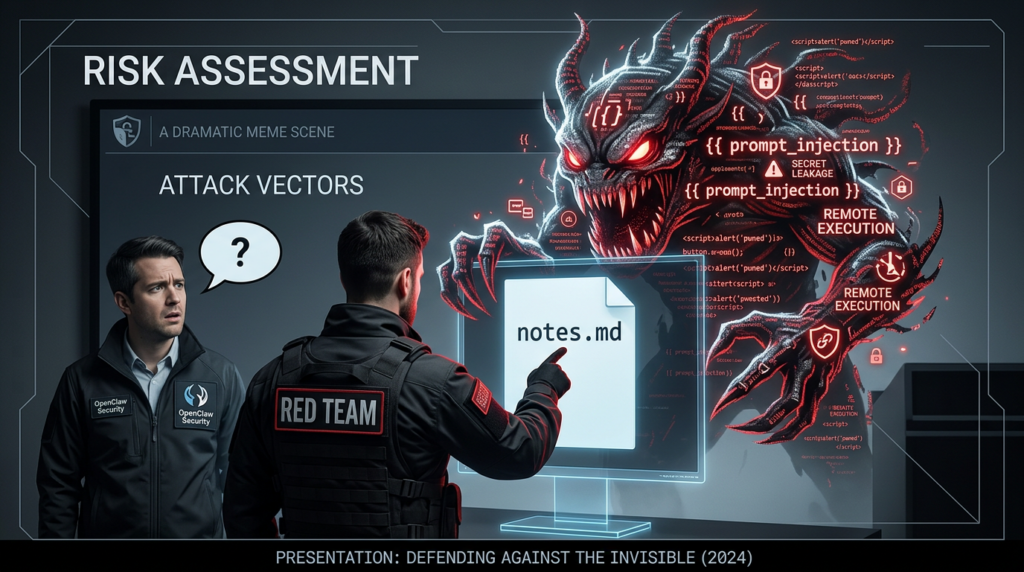

That distinction matters more than any marketing description. Once an agent can read files, call tools, act on messages, hold API tokens, and persist state, the security question changes from “Can the model answer safely?” to “Can untrusted input drive a high-authority execution path?” OWASP’s AI Agent Security guidance frames the problem in similar terms: least privilege, validation of external inputs, human review for high-risk actions, isolation between sessions, anomaly monitoring, and strict avoidance of unrestricted tool access. OWASP’s prompt injection guidance also makes the core issue plain: prompt injection is not simply bad content moderation, but a class of vulnerabilities where attacker-controlled text changes system behavior, bypasses safeguards, leaks data, or triggers unauthorized actions through connected tools. (OWASP Cheat Sheet Series)

The reason OpenClaw has become such a live security topic in 2026 is not just its adoption curve. It is the combination of fast growth, exposed deployments, community-installed skills, expanding browser and execution capabilities, and a stream of security findings that map directly to real-world operator mistakes. SecurityScorecard reported tens of thousands of exposed OpenClaw instances and said 35.4 percent of observed deployments were flagged as vulnerable at the time of writing. OpenClaw’s own GitHub security materials repeatedly emphasize that the web interface is intended for local use, that the recommended default is loopback-only binding, and that it should not be exposed directly to the public internet. In other words, the attack surface is no longer hypothetical. It is observable, measurable, and already broad enough that defenders need a hard stance, not a vague awareness campaign. (SecurityScorecard)

Why OpenClaw security is a runtime problem, not a chatbot problem

OpenClaw inherits many of the known LLM risks, but it also extends them into runtime risks. The LLM layer can still be manipulated through direct prompt injection, indirect prompt injection inside documents or webpages, and instruction confusion across multi-step tasks. What makes OpenClaw more dangerous than a plain chat interface is that the model’s output is connected to tool execution, message delivery, file operations, browser sessions, and long-lived account state. OpenClaw’s documentation warns that if several people can message one tool-enabled agent, they effectively share the delegated tool authority of that agent. It also notes that prompt and content injection from one sender can affect shared state, devices, or outputs, and that any allowed sender can potentially drive exfiltration if the agent holds sensitive credentials or files. That is a much more serious failure mode than a wrong answer in a chat window. (OpenClaw)

The platform’s own trust model is unusually explicit. Installing or enabling a plugin grants it the same trust level as local code running on the gateway host. Plugin behavior such as reading environment variables, reading files, or running host commands is expected inside that trust boundary. OpenClaw’s maintainers even treat “a trusted-installed plugin can execute with host privileges” as documented trust-model behavior rather than a product vulnerability. That tells you exactly how the platform should be evaluated: every new skill, plugin, or execution surface is part of your code execution boundary. If you let the wrong thing in, the platform is doing what it was designed to do. The compromise is yours. (GitHub)

This is why security teams that still evaluate AI assistants using only model safety tests are missing the point. A correct OpenClaw review has to look like a hybrid of application security, endpoint hardening, identity governance, and software supply chain control. Microsoft describes the runtime risk bluntly. If OpenClaw has permission to reach certain apps, files, or accounts, it may be able to retrieve additional information from them, which is why the company recommends isolated environments without non-dedicated credentials. Semgrep’s security write-up reaches the same practical conclusion in simpler words: start with the smallest access that still works, then widen it only if you must. (Microsoft)

The real OpenClaw attack surface

The first attack surface is inbound content. OpenClaw connects to real messaging systems, and its GitHub documentation explicitly says to treat inbound DMs as untrusted input. By default, unknown senders on several channels receive a pairing code and the bot does not process their messages until approved. That is good as a baseline, but it is not enough if operators over-broaden who can reach the agent, or if the same agent is shared across mixed-trust users in Slack, Discord, Teams, or other chat environments. Once an allowed sender reaches a tool-enabled agent, the question is no longer whether the sender is authenticated. The question is whether the sender can steer the agent into actions that exceed the sender’s intended authority. (GitHub)
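The difference between “allowed to reach the agent” and “allowed to trigger a tool” can be made concrete with a minimal Python sketch. It assumes a hypothetical per-sender capability map; `SenderPolicy`, `can_invoke`, and the tool names are illustrative and are not OpenClaw’s configuration schema.

```python
from dataclasses import dataclass, field


@dataclass
class SenderPolicy:
    """Hypothetical policy that tracks pairing and tool authority separately."""
    paired: set[str] = field(default_factory=set)                   # senders allowed to message the agent
    tool_grants: dict[str, set[str]] = field(default_factory=dict)  # sender -> tools they may trigger

    def can_message(self, sender: str) -> bool:
        return sender in self.paired

    def can_invoke(self, sender: str, tool: str) -> bool:
        # Pairing is necessary but not sufficient: the tool must be explicitly granted.
        return self.can_message(sender) and tool in self.tool_grants.get(sender, set())


policy = SenderPolicy(
    paired={"alice", "bob"},
    tool_grants={"alice": {"read_calendar", "exec"}, "bob": {"read_calendar"}},
)

print(policy.can_message("bob"))                      # True: bob is paired
print(policy.can_invoke("bob", "exec"))               # False: pairing does not imply exec authority
print(policy.can_invoke("mallory", "read_calendar"))  # False: unknown sender
```

The sketch has a limit worth stating: if alice and bob share one agent session, content bob sends can still influence a tool call made under alice’s grant. That is why the OpenClaw docs push toward separate trust boundaries rather than finer-grained checks inside one shared agent.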

The second attack surface is the web and gateway control plane. OpenClaw’s security documentation says the control UI and HTTP endpoints are intended for local use only, recommends gateway.bind="loopback" by default, and warns against direct public exposure such as binding to 0.0.0.0 or putting the UI behind a public reverse proxy. If remote access is necessary, the guidance prefers an SSH tunnel or Tailscale-style access while keeping the gateway loopback-bound. This is not defensive paranoia. It reflects the reality that a control interface for an agent runtime is effectively a high-value target with authorization, tool dispatch, and state visibility concentrated in one place. (GitHub)
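A deployment pipeline can enforce the loopback rule before a gateway starts. The Python sketch below validates a literal bind address with the standard `ipaddress` module. How you obtain the effective address is left as an assumption, since OpenClaw’s `loopback` setting is a mode name rather than an IP, and `is_loopback_bind` is a hypothetical helper.

```python
import ipaddress


def is_loopback_bind(address: str) -> bool:
    """Return True only if the bind address is a loopback IP (e.g. 127.0.0.1 or ::1).

    Hostnames such as "localhost" are rejected on purpose: they depend on resolver
    configuration, so this sketch only trusts literal IP addresses.
    """
    try:
        return ipaddress.ip_address(address).is_loopback
    except ValueError:
        return False


for addr in ["127.0.0.1", "::1", "0.0.0.0", "192.168.1.10", "localhost"]:
    status = "ok" if is_loopback_bind(addr) else "EXPOSED or unverifiable"
    print(f"{addr:15} {status}")
```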

The third attack surface is tools and exec paths. OpenClaw supports security audit checks for elevated command profiles, sandbox mismatches, and unsafe execution assumptions. It also has an approvals system where commands can require policy agreement and user approval. The docs warn against treating safeBins as a generic allowlist and specifically caution against casually allowing interpreter binaries such as Python, Node, Ruby, or Bash. Those warnings exist because arbitrary or loosely bounded execution turns natural-language abuse into system compromise. (OpenClaw)
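To see why interpreters are singled out, consider a small Python sketch of an exec allowlist linter. It does not reproduce OpenClaw’s safeBins semantics. `INTERPRETERS`, `lint_allowlist`, and `command_allowed` are hypothetical names, and the interpreter set is an illustrative assumption.

```python
import os
import shlex

# Hypothetical deny set: binaries that will execute arbitrary code passed as arguments.
INTERPRETERS = {"python", "python3", "node", "ruby", "bash", "sh", "zsh", "perl"}


def lint_allowlist(allowlist: list[str]) -> list[str]:
    """Return allowlist entries that name an interpreter and should be removed."""
    return sorted(entry for entry in allowlist if os.path.basename(entry) in INTERPRETERS)


def command_allowed(command: str, allowlist: set[str]) -> bool:
    """Allow a command only if its executable is allowlisted and is not an interpreter."""
    try:
        argv = shlex.split(command)
    except ValueError:  # unbalanced quotes: refuse rather than guess
        return False
    if not argv:
        return False
    exe = os.path.basename(argv[0])
    return exe in allowlist and exe not in INTERPRETERS


allow = {"ls", "git", "python3"}
print(lint_allowlist(sorted(allow)))                     # ['python3']
print(command_allowed("git status", allow))              # True
print(command_allowed("python3 -c 'import os'", allow))  # False
```

Matching on the basename is a simplification. A real policy would pin absolute paths, since `/tmp/git` and `/usr/bin/git` share a basename. It would also catch wrappers such as `env` that launch other programs.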

The fourth attack surface is skills, plugins, and supply chain trust. JFrog’s analysis argues that handing OpenClaw third-party extensions without scrutiny is comparable to giving an agent the keys to your kingdom. The blog cites cases of a trojanized fake extension and malicious skills linked to active campaigns, and advises using OpenClaw’s built-in security audit, minimizing integrations, and installing only trusted reviewed components. OpenClaw’s own security policy backs that worldview by treating trusted-installed plugins as part of the host trust boundary. If your deployment process does not include extension provenance checks, dependency review, and revocation procedures, your “AI security” problem is already a software supply chain problem. (JFrog)

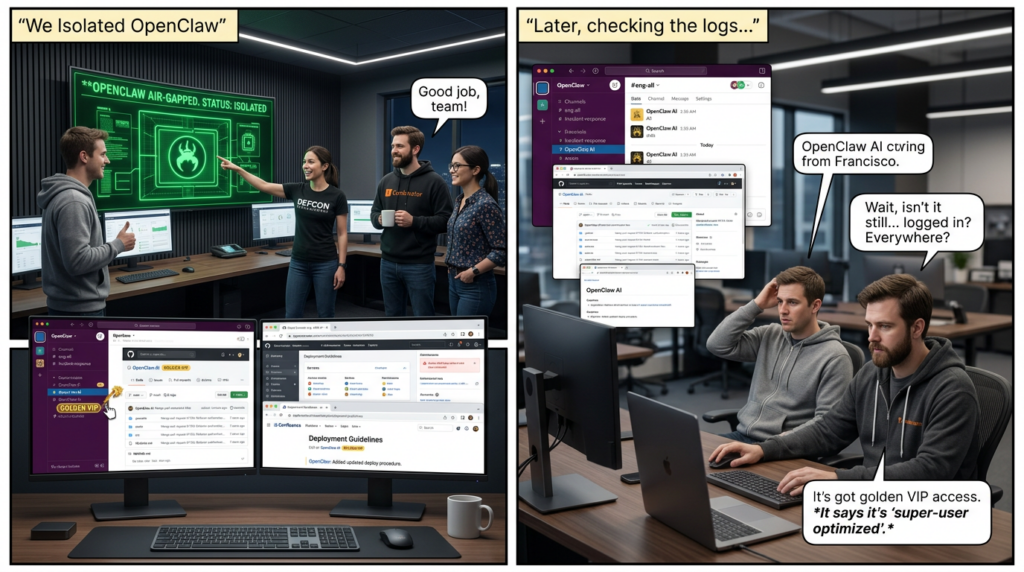

The fifth attack surface is secrets and durable state. OpenClaw’s documentation includes a credential storage map and notes that file-backed secrets, allowlists, auth profiles, and legacy auth artifacts can all persist under ~/.openclaw. It also supports secrets audits to scan for plaintext secret storage, unresolved references, precedence drift, and legacy residues. This matters because agent compromise is often less about instant shell access and more about quietly inheriting the credentials the agent already has. The best attacker does not need to build a new identity if your agent is already logged in everywhere. (OpenClaw)
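OpenClaw’s own `openclaw secrets audit` is the tool to use here. The Python sketch below only illustrates what a plaintext-secret scan looks for in an agent state directory such as `~/.openclaw`. The rule names and regular expressions are illustrative assumptions, far smaller than the tuned rule sets real scanners use.

```python
import re
from pathlib import Path

# Illustrative patterns only; real scanners use far larger, tuned rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "generic_token_assignment": re.compile(
        r"(?i)(api[_-]?key|token|secret|password)['\"]?\s*[:=]\s*['\"]?[A-Za-z0-9_-]{16,}"
    ),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan_for_plaintext_secrets(root: Path) -> list[tuple[str, int, str]]:
    """Walk root and report (path, line_number, rule) for lines that look like secrets."""
    if not root.is_dir():
        return []
    findings = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file: skip instead of aborting the whole scan
        for lineno, line in enumerate(text.splitlines(), start=1):
            for rule, pattern in SECRET_PATTERNS.items():
                if pattern.search(line):
                    findings.append((str(path), lineno, rule))
    return findings


if __name__ == "__main__":
    state_dir = Path.home() / ".openclaw"  # state directory named in the OpenClaw docs
    for path, lineno, rule in scan_for_plaintext_secrets(state_dir):
        print(f"{path}:{lineno}: possible {rule}")
```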

Prompt injection is still the center of gravity

A surprising number of OpenClaw security discussions treat prompt injection as one risk among many, when in practice it is the bridge that connects untrusted content to privileged action. OWASP defines prompt injection as a vulnerability where malicious input manipulates model behavior, potentially causing unauthorized data access, prompt leakage, unauthorized actions through tools and APIs, and even persistent manipulation across sessions. In an agent runtime, that bridge gets stronger, not weaker, because the output of model reasoning is not merely text. It is a plan that can be executed. (OWASP Cheat Sheet Series)

The OpenClaw maintainers implicitly recognize this, but they draw an important product-security boundary. Their public security policy says prompt-injection-only attacks without a policy, auth, or sandbox bypass are out of scope for vulnerability reporting. That is a perfectly understandable triage choice for an open-source project, but operators should not misread it. “Out of scope for a bounty report” does not mean “low risk in production.” It means the platform considers prompt injection an expected deployment problem that must be mitigated through isolation, policy, approvals, and trust-boundary design. (GitHub)
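One generic mitigation pattern is taint tracking. Once a session has ingested untrusted content, high-risk tools require a human decision. The Python sketch below illustrates the idea and does not describe how OpenClaw’s approvals work. `Session`, `requires_approval`, and the tool names in `HIGH_RISK_TOOLS` are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical risk classification; adjust to your own deployment.
HIGH_RISK_TOOLS = {"exec", "send_email", "write_file", "http_post"}


@dataclass
class Session:
    """Tracks whether untrusted content has entered the model's context."""
    tainted: bool = False
    sources: list[str] = field(default_factory=list)

    def ingest(self, source: str, trusted: bool) -> None:
        self.sources.append(source)
        if not trusted:
            self.tainted = True  # once untrusted text is in context, the session stays tainted


def requires_approval(session: Session, tool: str) -> bool:
    """High-risk tools need a human once the session has read untrusted content."""
    return tool in HIGH_RISK_TOOLS and session.tainted


s = Session()
s.ingest("operator prompt", trusted=True)
print(requires_approval(s, "exec"))           # False: only trusted input so far
s.ingest("https://example.com/page", trusted=False)
print(requires_approval(s, "exec"))           # True: a fetched page could carry injected instructions
print(requires_approval(s, "read_calendar"))  # False: low-risk tool
```

The design choice is deliberately coarse. Taint never clears within a session, because there is no reliable way to prove that injected instructions have stopped influencing the model.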

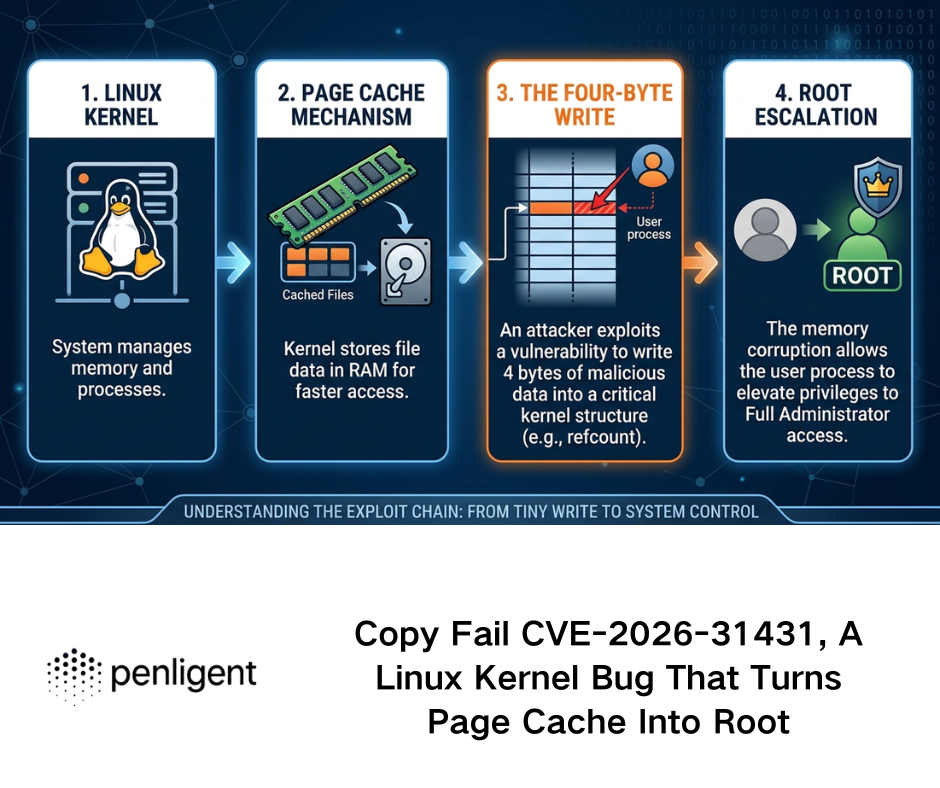

This is also why recent NVD entries and security research deserve attention even when they sound heterogeneous. Some issues are classic product flaws. Others reflect how easily prompt-originated manipulation can combine with architectural assumptions. NVD’s entry for CVE-2026-30741 describes a remote code execution vulnerability in OpenClaw Agent Platform v2026.2.6 via a request-side prompt injection attack. Even if the exact exploit path evolves as the ecosystem matures, the headline alone captures the structural problem: in an agent runtime, prompt injection is no longer merely a content attack. It can become an execution attack. (NVD)

The CVEs that matter most right now

The most widely cited OpenClaw issue in early 2026 was CVE-2026-25253. NVD describes it as a flaw in versions before 2026.1.29 where OpenClaw obtained a gatewayUrl value from a query string and automatically made a WebSocket connection without prompting, sending a token value. NVD scored it with a severe CVSS 3.1 vector showing high confidentiality, integrity, and availability impact. This is not just another token leak. It is a reminder that browser-adjacent trust decisions, implicit connection flows, and user-interface convenience can collapse security boundaries very quickly in agent tooling. (NVD)
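The defensive counterpart to the pattern NVD describes is refusing to connect to, or send a token to, any gateway that arrives through a query string unless it is explicitly trusted. The Python sketch below shows that check. `TRUSTED_GATEWAYS` and `gateway_from_query` are hypothetical, and port 18789 is only an example value, not a claim about your deployment.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Hypothetical allowlist of (scheme, host, port) endpoints the client may send a token to.
# 18789 is an example value; use your gateway's configured port.
TRUSTED_GATEWAYS = {("ws", "127.0.0.1", 18789), ("ws", "localhost", 18789)}


def gateway_from_query(page_url: str) -> Optional[str]:
    """Return the gatewayUrl query parameter only if it names a trusted endpoint."""
    values = parse_qs(urlparse(page_url).query).get("gatewayUrl", [])
    if len(values) != 1:
        return None  # missing or ambiguous (repeated) parameter
    candidate = urlparse(values[0])
    try:
        endpoint = (candidate.scheme, candidate.hostname, candidate.port)
    except ValueError:  # e.g. a non-numeric or out-of-range port
        return None
    return values[0] if endpoint in TRUSTED_GATEWAYS else None


print(gateway_from_query("http://127.0.0.1:5173/?gatewayUrl=ws://127.0.0.1:18789"))    # ws://127.0.0.1:18789
print(gateway_from_query("http://127.0.0.1:5173/?gatewayUrl=wss://attacker.example"))  # None
```

Even for an allowlisted endpoint, a safer client would also prompt before connecting rather than dialing out silently.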

CVE-2026-28472 matters for a different reason. NVD says versions prior to 2026.2.2 allowed skipping device identity checks during the gateway WebSocket connect handshake when auth.token was present but not validated. In plain English, an attacker could potentially connect without providing device identity or pairing under vulnerable conditions, which means operator access could be obtained in deployments that assumed the pairing model was protecting them. That is a control-plane flaw, and control-plane flaws in agent runtimes are especially dangerous because they sit upstream of every subsequent action. (NVD)
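The general lesson is that a connect handshake should fail closed: every check must positively pass, and the mere presence of a field must never short-circuit another check. The Python sketch below is a generic illustration, not OpenClaw’s handshake code. The request shape and names are hypothetical, and real device identity would typically rely on a signed challenge rather than a bare identifier.

```python
import hmac
from dataclasses import dataclass
from typing import Optional


@dataclass
class ConnectRequest:
    """Hypothetical shape of a gateway connect attempt."""
    token: Optional[str]
    device_id: Optional[str]


def authorize_connect(req: ConnectRequest, expected_token: str, paired_devices: set[str]) -> bool:
    """Fail closed: each check must pass on its own; no field substitutes for another."""
    if req.token is None or not hmac.compare_digest(req.token.encode(), expected_token.encode()):
        return False  # token missing or wrong
    if req.device_id is None or req.device_id not in paired_devices:
        return False  # a valid token alone never replaces device identity and pairing
    return True


paired = {"laptop-1"}
print(authorize_connect(ConnectRequest("s3cret", "laptop-1"), "s3cret", paired))  # True
print(authorize_connect(ConnectRequest("s3cret", None), "s3cret", paired))        # False
print(authorize_connect(ConnectRequest("wrong", "laptop-1"), "s3cret", paired))   # False
```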

CVE-2026-32060 is the kind of issue defenders should treat as a hard lesson in sandbox assumptions. NVD says versions prior to 2026.2.14 contained a path traversal vulnerability in apply_patch that allowed writes or deletes outside the configured workspace directory without filesystem sandbox containment. The important part is not only the directory traversal itself. It is the qualifier “without filesystem sandbox containment.” A large share of OpenClaw risk comes from operators assuming a workspace boundary exists when the actual host boundary is still open. The product documentation even warns about runtime expectation drift, such as configuration saying exec should happen in a sandbox while sandbox mode is off. (NVD)
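At the application level, the standard defense against this class of bug is to resolve the requested path first and then check containment against the resolved workspace root. The Python sketch below shows that check. It is not OpenClaw’s patch-tool implementation, and `resolve_in_workspace` is a hypothetical helper. As the NVD wording implies, a check like this is a second line of defense; real filesystem sandboxing remains the primary control.

```python
from pathlib import Path


def resolve_in_workspace(workspace: Path, requested: str) -> Path:
    """Resolve a patch target and refuse anything that escapes the workspace.

    resolve() collapses ".." segments and follows existing symlinks, so the
    containment check runs against the real destination, not the literal string.
    An absolute `requested` path replaces the root entirely and is caught too.
    """
    root = workspace.resolve()
    target = (root / requested).resolve()
    if not target.is_relative_to(root):  # Python 3.9+
        raise PermissionError(f"{requested!r} resolves outside workspace {root}")
    return target
```

Note that a symlink created inside the workspace after this check runs is not covered. That race is one more reason to rely on an actual sandbox rather than path checks alone.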

Then there is CVE-2026-30741, the prompt-injection-to-RCE entry. Because it is new and its public references are thinner than those of the other NVD entries discussed here, it should be handled carefully and validated against vendor-side fixes before being used as the sole basis for a governance decision. Still, its presence in NVD is meaningful because it shows that the industry is already formalizing prompt-to-execution chains as vulnerability records, not merely research anecdotes. That trend matters even if specific exploit details change. (NVD)

OpenClaw’s own release notes reinforce the general pattern. Recent releases mention switching device pairing to short-lived bootstrap tokens rather than shared gateway credentials in chat or QR payloads, and disabling implicit workspace plugin auto-load so cloned repositories cannot execute workspace plugin code without an explicit trust decision. That is exactly the direction a mature security model should move in: fewer ambient credentials, fewer implicit trust jumps, and fewer silent execution paths. (GitHub)
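The idea behind short-lived bootstrap tokens is that a pairing credential should be narrow, expire quickly, and work only once, unlike a shared gateway credential pasted into chat. The Python sketch below illustrates that general technique and is not OpenClaw’s pairing implementation. The token format, `TOKEN_TTL_SECONDS`, the in-memory nonce set, and the function names are all assumptions.

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional

# Hypothetical server-side key; in practice it would live outside agent-readable state.
SERVER_KEY = secrets.token_bytes(32)
TOKEN_TTL_SECONDS = 300  # example lifetime: five minutes
_used_nonces: set[str] = set()  # in-memory for the sketch; a real service would persist this


def issue_bootstrap_token(device_hint: str, now: Optional[float] = None) -> str:
    """Mint a pairing token bound to one device hint and an expiry time."""
    expiry = int((time.time() if now is None else now) + TOKEN_TTL_SECONDS)
    nonce = secrets.token_hex(8)
    payload = f"{device_hint}|{expiry}|{nonce}".encode()
    sig = hmac.new(SERVER_KEY, payload, hashlib.sha256).hexdigest()
    return f"{expiry}.{nonce}.{sig}"


def verify_bootstrap_token(token: str, device_hint: str, now: Optional[float] = None) -> bool:
    """Accept the token once, for the same device hint, and only before it expires."""
    try:
        expiry_str, nonce, sig = token.split(".")
        expiry = int(expiry_str)
    except ValueError:
        return False  # malformed token
    payload = f"{device_hint}|{expiry}|{nonce}".encode()
    expected = hmac.new(SERVER_KEY, payload, hashlib.sha256).hexdigest()
    current = time.time() if now is None else now
    if not hmac.compare_digest(sig, expected) or current >= expiry or nonce in _used_nonces:
        return False
    _used_nonces.add(nonce)  # single use: a replayed token is rejected
    return True


token = issue_bootstrap_token("laptop-1")
print(verify_bootstrap_token(token, "laptop-1"))  # True on first use
print(verify_bootstrap_token(token, "laptop-1"))  # False: already consumed
```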

A practical risk table for security teams

| Risk area | What goes wrong | Why it is dangerous in OpenClaw | What to do first |
|---|---|---|---|
| Prompt injection | Untrusted content changes agent behavior | The agent can turn manipulated reasoning into tool calls, data access, or outbound actions | Treat all external content as hostile, require approvals for risky actions, narrow tool scope |
| Exposed gateway | Control UI or HTTP surface is internet-reachable | Attackers target the control plane and inherit whatever the agent can already do | Keep loopback-only binding, avoid public reverse proxies, use tunnels or private access |
| Plugin and skill trust | Third-party code runs with host-level trust | Skills and plugins sit inside the gateway trust boundary | Review provenance, pin versions, maintain an allowlist, disable implicit autoload |
| Secret persistence | Tokens, auth profiles, or residues remain in agent state | Attackers prefer stealing the agent’s existing identity over building a new one | Use secret refs, run secret audits, rotate non-dedicated credentials, reduce token scope |
| Shared-user deployment | Multiple people drive one powerful agent | Allowed senders effectively share delegated authority | Split trust boundaries, separate gateways, separate OS users or hosts |
| Sandbox drift | Operators think actions are sandboxed when they are not | File writes, patch operations, or exec calls can hit the real host | Audit configuration continuously and fail closed on sandbox mismatch |

The table above is not abstract theory. Every line maps directly to OpenClaw or OWASP guidance, or to observed exposure patterns described by SecurityScorecard and other researchers. (OpenClaw)
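The “fail closed on sandbox mismatch” recommendation in the table’s last row can be expressed as a startup gate. The Python sketch below assumes a hypothetical `RuntimeConfig` that is not OpenClaw’s schema. OpenClaw’s own security audit already reports this kind of mismatch, so treat the sketch as an illustration of the fail-closed idea rather than a replacement.

```python
from dataclasses import dataclass


@dataclass
class RuntimeConfig:
    """Hypothetical view of the settings that must agree; not OpenClaw's schema."""
    exec_target: str       # where config says commands run: "sandbox" or "host"
    sandbox_enabled: bool  # whether a sandbox is actually active at runtime
    fs_contained: bool     # whether file tools are confined to the workspace


def sandbox_drift(cfg: RuntimeConfig) -> list[str]:
    """List mismatches between what the config promises and what the runtime provides."""
    problems = []
    if cfg.exec_target == "sandbox" and not cfg.sandbox_enabled:
        problems.append("exec is configured for a sandbox, but sandbox mode is off")
    if not cfg.fs_contained:
        problems.append("file tools can reach paths outside the workspace")
    return problems


def start_runtime(cfg: RuntimeConfig) -> None:
    """Fail closed: refuse to start rather than silently run on the host."""
    problems = sandbox_drift(cfg)
    if problems:
        raise SystemExit("refusing to start: " + "; ".join(problems))
    print("sandbox expectations verified")
```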

What a sane OpenClaw hardening baseline looks like

A sane baseline starts by accepting that OpenClaw is not a shared assistant for mixed-trust users. OpenClaw’s own security page says the supported posture is one user or trust boundary per gateway, ideally one OS user or host per boundary. If your company wants multiple employees experimenting with agents, do not centralize them into one all-purpose gateway and hope session separation will save you. Session isolation helps privacy. It does not create authorization isolation between adversarial users. (OpenClaw)

Second, keep the gateway local unless there is a compelling reason not to. The recommended default is loopback-only binding. If remote access is unavoidable, use a tunnel or private networking overlay, and layer strong authentication on top. Public internet exposure is directly discouraged by both the official docs and external security research. (GitHub)

Third, use OpenClaw’s built-in audit features aggressively. The CLI reference includes openclaw security audit, openclaw security audit --deep, and openclaw security audit --fix. The security page says to run these regularly, especially after config changes or when exposing any network surface. That is unusually mature guidance for an open-source agent project, and operators should take advantage of it. (OpenClaw)

Fourth, assume the agent’s credentials define your blast radius. Use dedicated accounts, not your primary personal or enterprise accounts. Use short-lived tokens where possible. Rotate them after incidents. Keep secret material out of plaintext config when you can, and use the secret audit workflow to catch residues and precedence drift. Microsoft’s isolation recommendation is effectively a statement about blast-radius control: if compromise happens, the environment should already be sacrificial. (Microsoft)

Fifth, narrow tools before narrowing prompts. Prompts are slippery. Tool policies are deterministic. OWASP recommends least privilege, validation of all external inputs, human-in-the-loop for high-risk actions, and never giving agents unrestricted tool access. OpenClaw’s approvals system can enforce a stricter execution path locally, and its docs explicitly say command requests should be allowed only when policy, allowlist, and optional approval all agree. That is the kind of deterministic control plane you want. (OWASP Cheat Sheet Series)
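The “policy, allowlist, and optional approval all agree” rule is a conjunction: each layer can only narrow what runs, never widen it. The Python sketch below shows that structure under assumed names (`ExecRequest`, `gate`, `workspace_only`, and a `/workspace` tree). It does not reproduce OpenClaw’s approvals configuration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ExecRequest:
    executable: str
    args: tuple[str, ...]
    cwd: str


def gate(req: ExecRequest,
         policy: Callable[[ExecRequest], bool],
         allowlist: frozenset[str],
         approver: Optional[Callable[[ExecRequest], bool]] = None) -> bool:
    """Deterministic gate: every configured layer must say yes; any 'no' denies."""
    if not policy(req):
        return False
    if req.executable not in allowlist:
        return False
    if approver is not None and not approver(req):
        return False  # a human was asked and declined
    return True


def workspace_only(req: ExecRequest) -> bool:
    # Example policy: only run commands from inside a hypothetical /workspace tree.
    return req.cwd == "/workspace" or req.cwd.startswith("/workspace/")


ALLOWED_EXECUTABLES = frozenset({"git", "ls"})
req = ExecRequest("git", ("status",), "/workspace/repo")
print(gate(req, workspace_only, ALLOWED_EXECUTABLES))                            # True
print(gate(req, workspace_only, ALLOWED_EXECUTABLES, approver=lambda r: False))  # False
```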

Sixth, treat skills like code, not content. A good extension policy for OpenClaw looks more like an internal package repository policy than a consumer app-store workflow. Require provenance, review manifests, pin versions, scan dependencies, and maintain a kill switch for revocation. OpenClaw’s releases have already moved to reduce implicit workspace plugin execution, which tells you the project is aware that convenience and trust often collide. (GitHub)
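Provenance, version pinning, and a kill switch can be combined into a single install-time check, the same way an internal package mirror verifies artifacts. The Python sketch below assumes a hypothetical registry that maps (name, version) to a SHA-256 digest recorded at review time, plus a revocation set. Skill names and the function name are illustrative, and OpenClaw’s skill packaging is not modeled.

```python
import hashlib


def verify_skill(name: str, version: str, artifact: bytes,
                 approved: dict[tuple[str, str], str],
                 revoked: set[tuple[str, str]]) -> bool:
    """Install only reviewed, pinned, non-revoked skills whose bytes match the review."""
    key = (name, version)
    if key in revoked:
        return False  # kill switch: revocation wins over any earlier approval
    pinned_digest = approved.get(key)
    if pinned_digest is None:
        return False  # unreviewed skill or unpinned version
    return hashlib.sha256(artifact).hexdigest() == pinned_digest


bundle = b"example skill bundle contents"
approved = {("calendar-sync", "1.4.2"): hashlib.sha256(bundle).hexdigest()}
revoked: set[tuple[str, str]] = set()

print(verify_skill("calendar-sync", "1.4.2", bundle, approved, revoked))         # True
print(verify_skill("calendar-sync", "1.4.2", bundle + b"!", approved, revoked))  # False: tampered
revoked.add(("calendar-sync", "1.4.2"))
print(verify_skill("calendar-sync", "1.4.2", bundle, approved, revoked))         # False: revoked
```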

Example commands and operational checks

A simple starting point for a local review looks like this:

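```bash
# Baseline audit of the local OpenClaw configuration
openclaw security audit
openclaw security audit --deep

# Save a machine-readable report so results can be compared over time
openclaw security audit --json > openclaw-audit.json

# Look for plaintext secrets, unresolved references, and legacy residues
openclaw secrets audit

openclaw doctor --fix
```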

These checks help catch gateway auth exposure, browser control exposure, filesystem permission issues, plaintext secret residues, and some classes of runtime expectation drift documented by the project. (OpenClaw)

For host-level containment, the intent should be equally clear:

```bash
# Keep the gateway local
openclaw gateway run --bind loopback

# Review your Node version because the project expects a modern LTS release
node --version
```

The OpenClaw security documentation recommends loopback binding and notes runtime requirements tied to Node.js versions that include important security patches. (GitHub)

A practical review checklist for security engineers can also be expressed as policy questions rather than commands:

1. Is the gateway reachable only from loopback or a private tunnel?

2. Are credentials dedicated, scoped, and rotated?

3. Are dangerous tools disabled by default?

4. Do exec requests require approval for sensitive paths?

5. Are third-party skills reviewed and version pinned?

6. Is the runtime isolated from production secrets and personal accounts?

7. Can one allowed sender induce actions that affect another user or shared state?

8. Do we have logs that tie human input to agent action to result?
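For question 8 in the checklist, one workable shape is a JSON log line per action that carries a correlation ID shared by everything triggered from one inbound message. The Python sketch below is illustrative. The field names and `audit_record` are assumptions, not OpenClaw’s logging format.

```python
import json
import time
import uuid
from typing import Any


def audit_record(sender: str, message: str, tool: str, args: dict[str, Any],
                 result: str, correlation_id: str) -> str:
    """One JSON line tying the human input, the agent's action, and the outcome together."""
    return json.dumps({
        "ts": time.time(),
        "correlation_id": correlation_id,  # shared by every record from one inbound message
        "sender": sender,
        "input_excerpt": message[:200],    # truncated to limit sensitive content in logs
        "tool": tool,
        "args": args,
        "result": result,
    }, sort_keys=True)


cid = str(uuid.uuid4())
print(audit_record("alice", "summarize my inbox", "read_email", {"limit": 20}, "ok", cid))
```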

Those questions line up closely with OWASP, Microsoft, OpenClaw’s own trust model, and third-party operational guidance. (OWASP Cheat Sheet Series)

The mistakes that cause most real-world damage

The first recurring mistake is treating OpenClaw as a productivity app instead of a privileged runtime. That framing error causes teams to install it on normal laptops, log in with high-value personal or corporate credentials, add too many integrations, and skip isolation. Microsoft’s guidance exists because this pattern is already common. (Microsoft)

The second mistake is assuming authentication equals authorization. Pairing codes, allowlists, and channel approval reduce noise from random senders. They do not solve the deeper problem that an approved sender may still be able to induce dangerous tool use within the agent’s permission envelope. OpenClaw’s own docs say that if multiple people can message one tool-enabled agent, they share the delegated tool authority. That should end any illusion that a shared Slack bot with shell access is “safe enough” merely because only employees can reach it. (GitHub)

The third mistake is exposing the control plane for convenience. SecurityScorecard’s exposed-instance reporting is the public proof that many operators still make this error. The issue is not that agentic AI is mysteriously unpredictable. It is that people are exposing sensitive runtime services the same way they used to expose insecure dashboards, admin interfaces, or test databases. The difference is that the compromised service now comes bundled with delegated authority across files, tokens, and tools. (SecurityScorecard)

The fourth mistake is importing community skills as if they were harmless prompts. They are not prompts. They are operational extensions inside a powerful runtime. JFrog, Semgrep, and OpenClaw’s own security model all point toward the same conclusion: trust in the extension ecosystem is one of the hardest boundaries to defend, and it needs code-level governance. (JFrog)

Why agent validation has to become continuous

Static hardening is necessary, but it is not enough. OpenClaw is a moving system. New model versions change tool-use behavior. New integrations widen authority. New skills alter execution paths. New CVEs emerge as the project expands. Release notes already show the maintainers tightening defaults around pairing tokens and plugin autoload behavior, which is exactly what you would expect from a runtime that is maturing under pressure. Defenders need to assume the same thing will continue: the product will evolve, and so will the failure modes. (GitHub)

That is why AI agent security needs verification, not just opinions. Hardening guidance, policy reviews, and architectural whiteboarding all matter, but the decisive question is whether the runtime can still be steered into unsafe behavior under real inputs, real integrations, and real credentials. Research such as Agents of Chaos shows how quickly autonomous agents in realistic environments can drift into unauthorized compliance, destructive actions, spoofing, leakage, and partial takeover. The lesson is not that every agent is doomed. The lesson is that autonomy plus tools plus persistent state creates a different class of testing requirement. (arXiv)

For teams building around OpenClaw or evaluating similar agentic runtimes, that is where a platform like Penligent fits naturally. The value is not “AI talking about AI security.” The value is being able to continuously test a high-authority runtime the way security teams already test APIs, web apps, and exposed services: with repeatable attack paths, evidence, prioritization, and verification loops. That is especially relevant for runtimes where the real boundary is spread across prompts, policies, tools, memory, browser state, and connected identities. Penligent’s recent OpenClaw-focused research has leaned into that exact gap, looking at hardening, validation, and how stronger models widen the blast radius when a runtime is already trusted too broadly. (Penligent)

The practical takeaway is simple. If OpenClaw is experimental, test it like hostile code. If OpenClaw is in production, test it continuously like business-critical infrastructure. Anything in between is wishful thinking.

Final judgment

OpenClaw security is not impossible, but it is unforgiving. The platform can be run responsibly if you accept its real trust model, keep the gateway local, isolate identities, minimize tools, govern skills like code, audit secrets, and continuously validate the runtime against adversarial inputs. The moment you treat it as just another chatbot, the model stops being your biggest problem. Your architecture becomes the problem. (OpenClaw)

Links

- OpenClaw Security Documentation

- OpenClaw CLI Security Audit Reference

- OpenClaw GitHub Security Policy

- Microsoft Security Blog, Running OpenClaw safely

- OWASP AI Agent Security Cheat Sheet

- OWASP LLM Prompt Injection Prevention Cheat Sheet

- SecurityScorecard, Exposed OpenClaw Deployments

- NVD, CVE-2026-25253

- NVD, CVE-2026-28472

- NVD, CVE-2026-32060

- NVD, CVE-2026-30741

- Agents of Chaos, arXiv

- OpenClaw Security Risks and How to Fix Them

- OpenClaw GPT 5.4 Security, When a Better Agent Becomes a Bigger Target

- OpenClaw Security Audit, Penligent landing page

- Penligent, AI Penetration Testing Platform