A pentest copilot becomes useful the moment it stops pretending to be an autonomous hacker and starts acting like a controlled reasoning layer inside a real testing workflow. That distinction matters more with Claude than with most AI security discussions, because the practical Anthropic product surface is no longer just a chat box. Claude Code can read a codebase, edit files, run commands, connect to external tools, and iterate through a task loop that Anthropic itself describes as gather context, take action, and verify results. That is exactly why it can accelerate offensive security work, and exactly why it becomes dangerous when operators skip permissions, verification, and human review. (Claude)

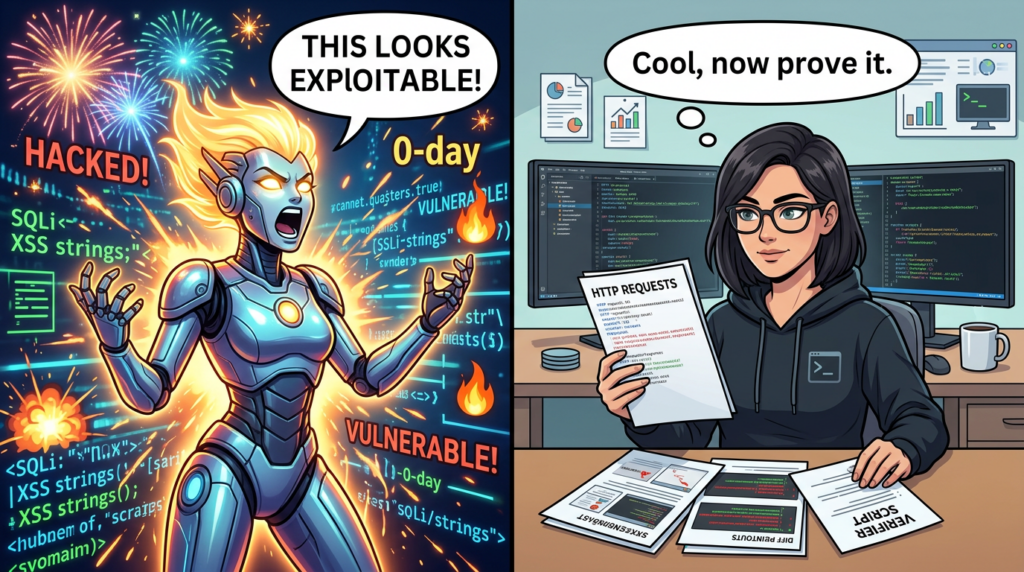

The phrase “Claude AI for pentest copilot” only makes sense if the word copilot is kept narrow. In an authorized engagement, a copilot can help absorb a large repository, map likely trust boundaries, draft retest steps, correlate logs with code paths, and turn messy artifacts into a coherent narrative. It cannot be treated as a source of truth for exploitability, impact, or exposure without an external verifier and a human who understands the target system. Anthropic’s public materials on Claude Code Security point in the same direction. The product is positioned around vulnerability scanning and patch suggestions for code, not as a magic proof engine for live internet-facing targets. (Anthropic)

That distinction is reinforced by Anthropic’s own cyber research. The company says modern Claude models are now genuinely useful for cybersecurity tasks, and its work with Mozilla shows strong performance in bug finding. But the same public write-up is blunt about the limits: the model was much better at finding bugs than exploiting them, and the few successful exploit attempts described in the Firefox research happened only in a testing environment with key browser protections disabled. That is not a reason to dismiss Claude. It is a reason to use it with the right job description. (Anthropic)

For pentesters, the right job description is evidence-first assistance. Claude is strongest when it is asked to reason about possible attack paths, formalize a testing sequence, generate reproducible checks, review diffs, and help translate a finding into something another engineer can independently verify. It gets weaker as soon as the problem shifts from “what is plausible here” to “is this truly reachable, under this authentication state, against this deployed target, with these compensating controls, and can I prove it without ambiguity.” (Claude)

There is another reason to be precise. Anthropic’s policy language draws a clear boundary between legitimate security work and malicious computer misuse. The current policy text carves out space for vulnerability discovery and security research with system owner consent, while tightening rules around unauthorized compromise and destructive abuse. For anyone building a Claude-centered pentest workflow, authorization, scope, and auditability are not paperwork around the work. They are part of the technical design. (Anthropic)

Claude AI for pentest copilot starts with a narrow definition

Most AI security products are sold with language that collapses several different problems into one. Source review, exploit development, browser automation, live validation, report writing, and remediation verification are discussed as if they were all just “finding vulnerabilities faster.” They are not the same task. The practical question is not whether Claude is “good at pentesting.” The practical question is which parts of an engagement benefit from Claude’s reasoning loop, which parts need specialized tooling, and which parts must remain under strict human control. (Claude)

In this context, a pentest copilot is best understood as a collaborator that sits between raw context and operator judgment. It can turn a sprawling codebase into a candidate threat model. It can identify the configuration edge cases a human might overlook during a quick review. It can draft repeatable verification logic. It can summarize evidence after a test has already been run. What it should not do is silently collapse the gap between hypothesis and proof. That gap is where weak security work becomes expensive security theater. (Anthropic)

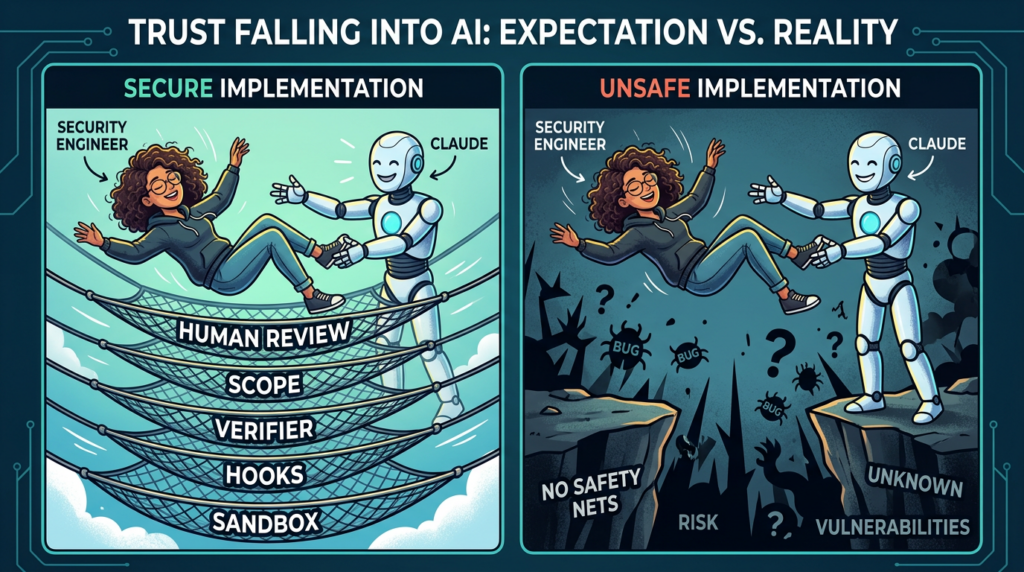

A useful pentest copilot therefore has three characteristics. First, it is instrumented, which means it can read files, run bounded commands, and talk to approved tools. Second, it is constrained, which means every extra capability is governed by permissions, sandboxing, allowlists, and explicit approval paths. Third, it is verifier-backed, which means its claims are checked by code, environment state, browser state, or independent tooling instead of being accepted because the explanation sounds smart. Anthropic’s documentation supports all three ideas. Claude Code is explicitly tool-using and agentic; its security model centers on approvals, sandboxing, and protections against prompt injection; and Anthropic’s own cyber research repeatedly highlights the value of reliable task verifiers. (Claude)

Claude Code is the practical engine behind a Claude pentest copilot

For a real offensive workflow, the relevant Anthropic surface today is Claude Code, not generic chat. Anthropic describes Claude Code as an agentic coding tool that can read code, edit files, run commands, and integrate with development systems. The documented task loop is simple and important: gather context, take action, verify results. That pattern lines up well with security review, retesting, and code-to-runtime reasoning because security work is full of partial hypotheses that only become useful after controlled action and external verification. (Claude)

Claude Code is also no longer confined to a single shell experience. Anthropic documents desktop and browser surfaces, an Agent SDK that exposes the same core agent loop and tool set, a Chrome integration that lets Claude inspect and interact with authenticated web sessions, and a security-focused mode that can scan codebases for vulnerabilities and propose targeted patches for human review. Each one expands the amount of signal a pentester can collect. Each one also expands the amount of trust the operator must actively refuse to give away. (Claude API Docs)

This matters because Claude is not entering an empty field. Security teams already have embedded AI assistants such as Burp AI, which PortSwigger positions as workflow enhancements inside an established testing platform. Burp AI’s own documentation stresses that its features do not run unless the user explicitly activates them. Claude operates at a broader orchestration layer. That gives it more room to reason across code, commands, browser state, and external tools, but it also means the surrounding control plane has to be designed much more carefully than it would for an assistant that lives only inside one tightly scoped product surface. (PortSwigger)

The broader orchestration model is why the term copilot is still justified. A well-designed Claude workflow does not try to replace a proxy, a scanner, a browser testing harness, or a verifier. It coordinates them. It helps the tester maintain state, transform observations into next-step hypotheses, and convert human intuition into repeatable checks. That is a very different claim from “AI can now pentest on its own,” and it is much closer to what the public evidence actually supports. (Claude)

Pentest copilot work Claude does well

Claude is at its best when the challenge is understanding and structuring complexity faster than a human could do alone. That starts with code and configuration review. In a modern application, the interesting security behavior is rarely visible in one controller or one route. It lives in the interaction between middleware, feature flags, policy checks, client-side assumptions, environment variables, storage adapters, deployment templates, and undocumented “temporary” admin flows. Claude Code’s ability to traverse a repository, search deeply, and maintain a working memory of what it has already inspected makes it particularly effective at surfacing these relationships. (Claude)

A second strong use case is test path generation. Security engineers often have enough clues to suspect an issue but not enough time to turn those clues into a disciplined sequence of validations. Claude can help sequence that work. Given a route handler, an auth middleware chain, a set of API clients, and some logs, it can identify which states matter, which assumptions deserve a manual challenge, and what the shortest non-destructive verification sequence might look like. That is not the same thing as discovering a novel exploit chain out of nothing. It is often more valuable in practice because it reduces wasted testing motion. (Claude)

A third strong use case is patch comprehension and regression review. Anthropic exposes a /security-review command that is explicitly aimed at analyzing code changes for risks such as injection, authentication errors, and data exposure. That feature is most useful after a finding is already suspected or after a fix has already landed. Claude can inspect a diff, explain what the patch truly changed, identify any side paths the fix did not touch, and draft regression checks that would catch a partial remediation. This is one of the most mature and least controversial ways to use AI in offensive security because the model is helping the human read code more carefully, not pretending to replace evidence. (Claude)

A fourth strong use case is artifact normalization. Offensive work produces ugly evidence. Terminal output, browser transcripts, screenshots, error messages, proxy captures, commit diffs, and “I changed one header and it broke in a weird way” notes do not naturally become a clean narrative. Claude is very good at turning that pile into a sequence of observations, conditions, proof points, and remediation notes. Done correctly, that reduces the reporting tax without reducing rigor. Done incorrectly, it becomes eloquent fiction. The difference is whether the operator makes Claude cite artifacts instead of letting it improvise causal claims. (Anthropic)

The table below condenses the highest-value places for Claude inside a real engagement, and the control signals each task still needs from outside the model. It is based on Anthropic’s documented agent loop, permissions model, code-security features, and public cyber research rather than on aspirational marketing. (Claude)

| Engagement phase | Where Claude adds value | External verifier needed | Artifact that should come out |
|---|---|---|---|
| Repository intake | Map auth boundaries, risky sinks, hidden admin paths, config dependencies | Human review of scope and trust boundaries | Initial threat map, file list, open questions |
| API test planning | Turn code and logs into concrete test sequences | Proxy traces, staging responses, role-specific accounts | Stepwise retest plan |
| Patch review | Explain what changed, what did not, and what should be regression-tested | Unit tests, integration tests, live retest | Patch analysis, regression checklist |
| Browser-driven validation | Correlate UI flows, console errors, DOM state, auth state | Isolated browser session, screenshots, HTTP evidence | Repro steps, browser artifacts |
| Finding confirmation | Help write exact checks and edge-case probes | Status codes, body diffs, logs, permission-state proofs | Verified result or rejection |
| Reporting | Convert raw evidence into a precise narrative | Human edit against raw artifacts | Finding write-up, remediation notes, retest appendix |

Pentest copilot work Claude should not do alone

The easiest way to misuse Claude is to ask it a question that sounds crisp but is underdetermined by the evidence. “Is this endpoint vulnerable?” “Can this bug be chained to RCE?” “Does this patch fix the issue?” “Is this production system exposed?” These look like yes-or-no questions, but each one hides multiple conditions the model cannot infer safely from partial context. The model can help enumerate those conditions. It should not be allowed to collapse them. (Anthropic)

This is where Anthropic’s public cyber research is unusually useful. In the Mozilla work, the company explicitly says that the model was better at finding bugs than exploiting them, and it emphasizes the importance of task verifiers that can tell whether the problem was actually fixed and whether the intended functionality still works. That is a powerful framing for pentesters. A copilot does not become trustworthy by getting better at sounding certain. It becomes trustworthy when the workflow around it gets better at saying “prove it.” (Anthropic)

Claude should also not be trusted to decide what is in scope, which account is safe to use, which secrets may be touched, or when a test should stop. Those are governance decisions, not reasoning tasks. Anthropic’s documentation reflects this separation. Claude Code has tiered permissions, explicit approval modes, sandboxing, and managed settings because the vendor assumes there will be cases where the model’s next plausible action is still not an acceptable action. Pentesting is full of such cases. (Claude)

Finally, Claude should not be trusted to carry external content into privileged decision-making without filtration. Anthropic’s security documentation explicitly discusses prompt injection, input sanitization, command blocklists, suspicious-command review, and isolated context windows for web fetch. The point is not that Claude is uniquely weak. The point is that any agent allowed to consume untrusted content and then act on local state or credentials is a high-value target for content-layer manipulation. A pentest copilot is no exception. (Claude)

Claude AI for pentest copilot needs a control plane before the first command

A safe copilot workflow starts before the model sees the first file. If you build the prompt first and the controls later, you are already behind. Anthropic’s own product design points toward the right order: persistent project context, permissions, approval modes, sandboxing, hooks, and managed settings come before any serious automation. Those are not convenience features. They are the control plane. (Claude)

The first control is project context. Anthropic documents that Claude Code sessions start fresh and that cross-session knowledge is carried through mechanisms such as CLAUDE.md, auto memory, and managed memory. Project-level CLAUDE.md is shared through version control and is intended to hold build commands, standards, workflows, and other operational context. In an authorized security engagement, it should also hold scope boundaries, forbidden actions, environments, evidence storage rules, approved accounts, and explicit completion criteria. That turns vague “be careful” instructions into machine-visible constraints. (Claude)

The following CLAUDE.md example is a practical security adaptation of Anthropic’s documented project-memory model. It is not vendor text. It is an operator template for a scoped, authorized engagement.

    # Engagement context

    Target: staging.api.example.internal
    Authorization: Written approval on file, testing window 2026-03-20 through 2026-03-27
    Objective: Validate suspected authorization flaws and patch regressions in billing and admin routes

    # Hard boundaries

    - Do not target production
    - Do not modify customer records
    - Do not send traffic to hosts outside *.example.internal
    - Do not use destructive payloads, denial-of-service patterns, or brute force
    - Do not access secrets stores, cloud consoles, or employee mailboxes
    - Do not create new users or elevate privileges outside provided test accounts

    # Test accounts

    - analyst_user with standard billing role
    - manager_user with read-only admin dashboard access
    - security_admin for verifier-only checks, not for discovery

    # Evidence requirements

    - Save every confirmed test result under evidence/YYYYMMDD/
    - For each candidate issue, store request, response, account used, timestamp, and expected vs actual behavior
    - Never mark a finding as confirmed without a reproducer or verifier script

    # Output requirements

    - For each suspected issue, produce:
      1. code path summary
      2. preconditions
      3. minimal verification steps
      4. evidence files
      5. remediation note
      6. regression checks

    # Stop conditions

    - Stop and ask for approval before any state-changing request
    - Stop and ask for approval before any outbound network access
    - Stop and ask for approval before adding a new MCP server or browser permission
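The evidence requirements in that template are easier to hold to when the format is enforced by a small helper rather than by habit. The sketch below is one possible shape, not part of any Anthropic tooling: `save_evidence`, `REQUIRED_FIELDS`, and the file naming scheme are hypothetical names chosen for illustration, and the directory layout simply mirrors the `evidence/YYYYMMDD/` rule above.

```python
import json
from datetime import datetime, timezone
from pathlib import Path

# Hypothetical field list, taken from the "Evidence requirements" section.
REQUIRED_FIELDS = ("request", "response", "account", "expected", "actual")


def save_evidence(root, finding_id, record, now=None):
    """Write one evidence record under <root>/YYYYMMDD/ and return its path.

    Refuses to write a record that omits any required field, so an
    incomplete artifact never lands in the evidence tree.
    """
    missing = [name for name in REQUIRED_FIELDS if not record.get(name)]
    if missing:
        raise ValueError(f"evidence record is missing fields: {missing}")

    now = now or datetime.now(timezone.utc)
    day_dir = Path(root) / now.strftime("%Y%m%d")
    day_dir.mkdir(parents=True, exist_ok=True)

    # The timestamp is stored in the record and also used in the file name.
    payload = dict(record, finding_id=finding_id, timestamp=now.isoformat())
    path = day_dir / f"{finding_id}-{now.strftime('%H%M%S')}.json"
    path.write_text(json.dumps(payload, indent=2, sort_keys=True), encoding="utf-8")
    return path
```

A call such as `save_evidence("evidence", "billing-export", record)` would then produce one JSON file per observation, which gives a later verifier or reviewer something concrete to open.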

The second control is permissions. Anthropic’s permissions documentation is explicit: Claude Code uses a tiered permission model in which simple reads are handled differently from shell access and file modification, and the configuration can be shared across teams and checked into version control. There are also multiple permission modes aimed at different levels of autonomy and interruption. In pentesting, the right default is almost never “wide open and convenient.” Start at read-heavy or approval-heavy settings, then widen only for specific tasks that demonstrably need it. Anthropic also states plainly that permissions and sandboxing are complementary defense layers, not substitutes. (Claude)

The third control is sandboxing. Anthropic describes Claude Code’s sandbox as using OS-level isolation to restrict filesystem and network effects for bash commands while reducing approval fatigue. That matters in offensive testing for a reason beyond convenience. A pentest copilot is frequently asked to process attacker-controlled content, untrusted repositories, reflected browser content, and error messages that might themselves contain manipulative instructions. Sandboxing reduces the blast radius of the inevitable case where a plausible next step is still the wrong step. (Claude)

The fourth control is hooks. Anthropic documents hooks as user-defined shell commands, HTTP endpoints, or LLM prompts that run at specific lifecycle points. Their own examples include blocking edits, injecting context, auditing configuration, and approving or rejecting actions. In a pentest workflow, hooks are the cleanest place to encode “do not stop until the evidence exists,” “do not write a conclusion without a raw artifact,” and “do not touch new domains unless explicitly approved.” That is much stronger than repeating the same warning in natural language every session. (Claude)

The example below sketches a stop-time agent hook adapted from Anthropic’s documented hook model. It is designed to reject a “done” state when the session has not produced auditable evidence. Treat the event name, hook type, and response shape as illustrative and confirm them against the current hooks reference before deploying.

    {
      "hooks": {
        "Stop": [
          {
            "hooks": [
              {
                "type": "agent",
                "prompt": "Before allowing the session to stop, verify that the repository contains an evidence directory for today, that at least one raw request and response pair exists for each confirmed finding, and that the environment name and test account are recorded. Respond with JSON only: {\"ok\": true} to allow the stop, or {\"ok\": false, \"reason\": \"...\"} to keep working."
              }
            ]
          }
        ]
      }
    }
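Some rules are better enforced deterministically than by a model-evaluated hook. The sketch below shows how a command-style hook could block shell commands that name a host outside the engagement boundary. It assumes the hook receives a JSON event with `tool_name` and `tool_input.command` fields and that exit code 2 signals a block; both should be checked against the hooks reference. `ALLOWED_SUFFIX`, `out_of_scope_hosts`, and `decide` are hypothetical names, and the check only sees literal `http(s)://` URLs, so it complements sandbox network rules rather than replacing them.

```python
import re
from urllib.parse import urlsplit

# Assumption: mirrors the "*.example.internal" boundary from the engagement file.
ALLOWED_SUFFIX = ".example.internal"

# Matches literal http(s) URLs only; bare hostnames and IPs are not detected.
URL_PATTERN = re.compile(r"https?://[^\s'\"<>]+")


def out_of_scope_hosts(command, allowed_suffix=ALLOWED_SUFFIX):
    """Return hosts referenced by URLs in `command` that fall outside scope."""
    hosts = []
    for url in URL_PATTERN.findall(command):
        try:
            host = (urlsplit(url).hostname or "").lower()
        except ValueError:
            hosts.append(url)  # unparsable URL: treat as out of scope
            continue
        if host and not host.endswith(allowed_suffix):
            hosts.append(host)
    return hosts


def decide(event):
    """Map one hook event to (exit_code, message); 2 means block."""
    if event.get("tool_name") != "Bash":
        return 0, ""
    command = event.get("tool_input", {}).get("command", "")
    blocked = out_of_scope_hosts(command)
    if blocked:
        return 2, "Out-of-scope host(s): " + ", ".join(blocked)
    return 0, ""


# Wiring sketch for a hook script (not executed here):
#   code, message = decide(json.load(sys.stdin))
#   print(message, file=sys.stderr); sys.exit(code)
```

The suffix comparison includes the leading dot on purpose, so a lookalike such as `evilexample.internal` does not pass as in scope.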

The fifth control is MCP, which is also where many teams quietly reintroduce risk they had just removed. Anthropic promotes MCP as the way Claude Code connects to hundreds of external tools and data sources. The same documentation warns that third-party MCP servers are not verified by Anthropic and that servers which fetch untrusted external content can introduce prompt-injection exposure. The official managed-settings docs expose allowlists and denylists for MCP servers, with the important detail that an undefined allowlist means unrestricted behavior, while an empty allowlist means total lockdown, and deny entries always take precedence. A security team should treat those semantics as policy, not as optional tuning. (Claude)

The following managed-settings example is based on Anthropic’s documented MCP controls, adapted to an engagement in which only a small set of trusted integrations are acceptable.

    {
      "permissions": {
        "allow": ["Read", "Grep", "Glob", "LS"],
        "ask": ["Bash"],
        "deny": ["WebFetch"]
      },
      "allowedMcpServers": [
        { "serverName": "github" },
        { "serverName": "filesystem-readonly" },
        { "serverName": "internal-ticketing-readonly" }
      ],
      "deniedMcpServers": [
        { "serverName": "browser-remote" },
        { "serverName": "arbitrary-http" },
        { "serverName": "community-scraper" }
      ]
    }
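The allowlist and denylist semantics described above are easy to misread, so it can help to write them down as a truth function and test a proposed policy against it. The sketch below models only the three rules stated in the text (undefined allowlist means unrestricted, empty allowlist means lockdown, deny always wins). It is not Anthropic’s implementation, and `is_server_permitted` is a hypothetical name.

```python
from typing import Iterable, Optional


def is_server_permitted(
    name: str,
    allowed: Optional[Iterable[str]],
    denied: Iterable[str] = (),
) -> bool:
    """Model the documented precedence between MCP allow and deny lists.

    allowed=None  -> no allowlist defined, so nothing is restricted by it
    allowed=[]    -> allowlist defined but empty, so nothing is permitted
    denied        -> always takes precedence over the allowlist
    """
    if name in set(denied):
        return False
    if allowed is None:
        return True
    return name in set(allowed)
```

The case worth reviewing with a team is `allowed=None`: forgetting to define the list is not the same as locking it down.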

These controls are what make the word copilot defensible. Without them, the workflow becomes a loosely supervised agent with access to code, credentials, browsers, and tools. That is not a copilot. That is an incident report waiting for a timestamp.

Browser-driven testing changes the signal you can collect

One of the most useful additions to the Claude surface is browser integration. Anthropic’s documentation for Claude in Chrome and Claude Code with Chrome describes capabilities such as reading and navigating websites, viewing console errors and network behavior, debugging web apps, automating form input, extracting data, and interacting with sites where the user is already signed in. For security work, that is a major increase in observable state. It closes part of the gap between static reasoning and authenticated application behavior. (Claude)

That extra signal is valuable in several specific cases. It helps with stateful authorization testing where the interesting behavior is visible only after a multi-step workflow. It helps with client-side policy failures where the server response and the DOM behavior diverge. It helps with fragile reproductions where timing, redirects, or browser storage state matter. It can also surface console errors or blocked requests that explain why a server-side suspicion never manifests in the live app. Those are all excellent uses for a copilot, and none require pretending that the model itself is the verifier. The browser transcript, screenshot, network capture, and account state are the verifier. (Claude)

The risk is obvious. Anthropic’s help materials state that browser use has inherent risks and provide modes such as “Ask before acting.” The extension also has broad browser-related permissions, and site access can be managed in the extension settings. In a pentest environment, the right pattern is isolated test accounts, an isolated browser profile, tightly bounded site permissions, and a rule that authenticated sessions used for discovery are different from sessions used for destructive verification. Mixing the operator’s real daily browser identity with a copilot workflow is indefensible. (Claude Help Center)

There is also a deployment-shape difference worth noting. Anthropic’s “Claude Code on the web” runs in an Anthropic-managed virtual machine in research preview. That model has a different trust boundary than local CLI execution. A team deciding between local execution, desktop execution, Chrome integration, or Anthropic-managed browser execution should map each option to the data it can see, the credentials it can inherit, and the network it can reach. Those are architectural choices, not UI preferences. (Claude)

Task verifiers separate believable findings from real findings

The most important idea in modern AI-assisted security work is not agent autonomy. It is verifier quality. Anthropic’s cyber research with Mozilla says this directly. A useful patching or vulnerability-finding agent needs a reliable way to determine whether a vulnerability has actually been removed and whether the original functionality still works. That principle extends beyond patching into every offensive workflow where the model is allowed to propose or refine test logic. (Anthropic)

A verifier is any independent mechanism that can answer a narrow, factual question about system behavior. Did a standard-role account receive data intended for an admin role? Did the supposedly fixed route stop accepting an unsafe input? Did the browser still render privileged controls after the server response changed? Did the patched service stop performing a dangerous lookup? Did a role boundary survive a direct request replay? These are not “AI questions.” They are engineering questions. Claude is useful because it can help define them precisely and write the checks that answer them. (Anthropic)

In web and API testing, good verifiers are often embarrassingly simple. Compare two status codes across accounts. Diff a response body for fields that should never appear to a lower-privileged principal. Assert that a browser control is not only hidden but also server-enforced. Record whether a patch removed the dangerous sink and whether the intended feature still completes. Those are the checks that keep a report from drifting into “appears vulnerable” language when the evidence is not there. (Anthropic)
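As a small illustration of the body-diff idea, the sketch below walks a JSON response and reports any field names that a lower-privileged principal should never see. The function name `leaked_fields` and the example field names are hypothetical. A non-JSON body raises an error on purpose, so an HTML error page cannot be mistaken for a clean result.

```python
import json


def leaked_fields(body_text, forbidden):
    """Return the sorted forbidden keys found anywhere in a JSON body.

    Raises ValueError (json.JSONDecodeError) if the body is not JSON,
    because "could not parse" must not be read as "nothing leaked".
    """
    data = json.loads(body_text)
    found = set()
    stack = [data]
    while stack:
        node = stack.pop()
        if isinstance(node, dict):
            for key, value in node.items():
                if key in forbidden:
                    found.add(key)
                stack.append(value)  # descend into nested objects and lists
        elif isinstance(node, list):
            stack.extend(node)
    return sorted(found)
```

Matching on structured keys instead of raw substrings avoids false alarms when a forbidden name merely appears inside an error message.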

The following example is intentionally modest. It is a safe verifier pattern for an authorized staging environment. It compares role-scoped access to a billing export route and fails if a lower-privileged account sees either the data or a success status it should not receive.

    # Role-boundary verifier for an authorized staging environment only.
    import requests

    BASE = "https://staging.api.example.internal"
    ROUTES = ["/billing/export", "/billing/export?format=csv"]

    # Supply tokens for the approved test accounts; never commit real values.
    TOKENS = {
        "analyst_user": "REPLACE_ME",
        "manager_user": "REPLACE_ME",
        "security_admin": "REPLACE_ME",
    }


    def check_route(route, token):
        """Request one route with a bearer token and return (status, body)."""
        r = requests.get(
            BASE + route,
            headers={"Authorization": f"Bearer {token}"},
            timeout=10,
            allow_redirects=False,  # a redirect to a login page must not read as success
        )
        return r.status_code, r.text


    for route in ROUTES:
        analyst_status, analyst_body = check_route(route, TOKENS["analyst_user"])
        admin_status, _ = check_route(route, TOKENS["security_admin"])

        print(f"\nRoute: {route}")
        print(f"analyst_user status: {analyst_status}")
        print(f"security_admin status: {admin_status}")

        # Any 2xx for the lower-privileged account is a boundary failure.
        if 200 <= analyst_status < 300:
            raise SystemExit(f"FAIL: analyst_user unexpectedly succeeded on {route}")
        # Check the full body so a leak past the first 500 characters is not missed.
        if "invoice_id" in analyst_body.lower():
            raise SystemExit(f"FAIL: analyst_user response leaked billing data on {route}")
        # The privileged account must keep working, or the fix broke the feature.
        if admin_status != 200:
            raise SystemExit(f"FAIL: security_admin lost expected access on {route}")

    print("\nPASS: access boundary behaved as expected in this verifier run")

Claude is good at drafting this sort of checker after it reads the route logic, the role model, and a patch diff. The verifier itself is what matters. If the script is wrong, the workflow is wrong. If the operator runs it against the wrong environment or with the wrong account, the workflow is wrong. The useful mental model is that Claude shortens the distance between suspicion and a checker, not the distance between suspicion and truth. (Anthropic)

CVE-2021-44228 shows why reasoning is not the same as exposure proof

Log4Shell remains one of the clearest examples of where a copilot can help a lot and still be unable to answer the only question that matters without external evidence. NVD records CVE-2021-44228 as a remote code execution issue in Log4j 2 caused by JNDI features, and Oracle’s advisory notes that it is remotely exploitable without authentication in affected products. CISA’s response materials treated it as an urgent, broad-impact problem because the vulnerable library was embedded everywhere and because exposure depended on both software composition and runtime conditions. (NVD)

That is exactly the kind of situation where Claude can save time. On the white-box side, it can identify direct and transitive Log4j usage, flag logging statements that consume attacker-controlled input, locate environment-dependent mitigations, inspect container and build files for vulnerable versions, and draft a regression matrix for patch validation. If a team has a sprawling monorepo and partial SBOM coverage, those are meaningful productivity gains. (Claude)
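A first-pass inventory of that kind can be sketched in a few lines. The example below flags `log4j-core` jar names whose version falls in the range NVD lists for CVE-2021-44228 (2.0-beta9 through 2.15.0, excluding the 2.3.1, 2.12.2, and 2.12.3 security releases). The names `parse_version`, `in_affected_range`, and `triage` are hypothetical. The code makes simplifying assumptions that are labeled in the comments, and its output is a list of candidates for review, nothing more.

```python
import re

# Matches jar-style names such as "lib/log4j-core-2.14.1.jar".
JAR_PATTERN = re.compile(r"log4j-core-(\d+)\.(\d+)(?:\.(\d+))?")


def parse_version(name):
    """Extract (major, minor, patch) from a jar name, or None if absent."""
    match = JAR_PATTERN.search(name)
    if not match:
        return None
    # Assumption: pre-release tags such as "-beta9" are ignored.
    return tuple(int(part) if part else 0 for part in match.groups())


def in_affected_range(version):
    """True if the version sits in the NVD-listed range for CVE-2021-44228."""
    major, minor, patch = version
    if major != 2 or version > (2, 15, 0):
        return False
    # Assumption: later releases on the 2.3.x and 2.12.x backport lines
    # also carry the fix, not just the releases NVD names explicitly.
    if (minor == 3 and patch >= 1) or (minor == 12 and patch >= 2):
        return False
    return True


def triage(names):
    """Return the names that deserve a closer look, in input order."""
    flagged = []
    for name in names:
        version = parse_version(name)
        if version is not None and in_affected_range(version):
            flagged.append(name)
    return flagged
```

This only reads file names. Shaded jars, renamed artifacts, and dependency coordinates in build files need their own handling.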

But none of that proves live exploitability. Exposure depends on version specifics, configuration, message-lookup behavior, egress conditions, classpath reality, reachable log sinks, and the actual request path through the deployed service. A copilot can tell you where to look. It cannot responsibly claim that the production system is exploitable unless a verifier demonstrates the relevant unsafe behavior in an authorized environment. This is why many bad AI security write-ups sound confident but remain operationally useless. They confuse “I found the risky ingredient” with “I proved the deployed exploit path.” (NVD)

Claude is especially helpful after the first pass. Once a patch lands, it can compare the old and new code paths, identify whether the fix only removed one entry point, and generate negative tests to ensure the dangerous behavior no longer occurs. In other words, it is strong at making the investigation and retest loop sharper. That is different from replacing the runtime evidence loop. (Claude)

CVE-2023-34362 shows why authenticated application context matters

CVE-2023-34362 in MOVEit Transfer is another instructive case. NVD describes it as an SQL injection vulnerability in MOVEit Transfer, and the CVE record notes that it can allow an unauthenticated attacker to gain access to the MOVEit Transfer database. Public reporting and advisories around the incident made clear that the practical risk was not just a generic injection class. It was the combination of a reachable application surface, a business workflow that accepted attacker-controlled input, and downstream impact in the context of a file-transfer product trusted by many organizations. (NVD)

For a pentest copilot, this is the sort of issue where code-centric reasoning and application-context reasoning must meet. Claude can read route handlers, identify suspicious concatenation or parameter handling, map request flow through the product, and help draft focused checks. It can also help compare the pre-fix and post-fix logic to see whether sanitization or query handling changed in the intended place. What it cannot infer safely from code alone is whether a particular deployment path, auth state, tenant configuration, or reverse-proxy behavior still exposes the path you care about. (Claude)

This is also where browser-backed and authenticated testing start to matter. Many high-value findings are not about a single raw request in isolation. They depend on role setup, workflow state, token lifetime, CSRF assumptions, partial feature access, or hidden fields that appear only after a specific sequence. Anthropic’s browser tooling expands the amount of state Claude can observe during these tests. The mistake would be to assume that observing more state means the model itself is the final judge. It simply has better inputs for producing a verifier. (Claude)

When teams use Claude for this part of the workflow, they should insist on four outputs before they accept a finding. First, the narrow preconditions. Second, the smallest successful reproduction path. Third, a clear account of what evidence confirms the result. Fourth, a regression checker that would fail if the bug came back. Without those four, the write-up is still a research note, not a finding. (Anthropic)
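That acceptance rule can be made mechanical. The sketch below is one hypothetical way to represent a draft finding and refuse the “finding” label until all four outputs exist. The class and field names are illustrative, and the check only confirms that each output is present, not that it is correct.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FindingDraft:
    """A candidate finding plus the four outputs required to accept it."""

    title: str
    preconditions: List[str] = field(default_factory=list)
    reproduction_steps: List[str] = field(default_factory=list)
    evidence_files: List[str] = field(default_factory=list)
    regression_check: str = ""  # path or name of a checker that fails if the bug returns

    def missing_outputs(self) -> List[str]:
        """List which of the four required outputs are still empty."""
        checks = {
            "preconditions": self.preconditions,
            "reproduction_steps": self.reproduction_steps,
            "evidence_files": self.evidence_files,
            "regression_check": self.regression_check,
        }
        return [name for name, value in checks.items() if not value]

    def status(self) -> str:
        """A draft is a finding only when nothing is missing."""
        return "finding" if not self.missing_outputs() else "research note"
```

A reporting step can then refuse to export anything whose `status()` is still `"research note"`, leaving the human reviewer to judge quality rather than completeness.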

CVE-2026-2796 shows both frontier capability and hard limits

The most tempting way to oversell Claude in security is to point to Anthropic’s Mozilla collaboration and stop reading halfway through. Anthropic publicly states that Claude helped find more than 500 zero-day vulnerabilities in well-tested open-source software. That is a striking signal about modern model capability and should not be downplayed. It means security engineers should stop pretending that frontier models are only useful for summarization or junior-level code review. (Anthropic)

At the same time, the most useful part of the Mozilla write-up is the limitation language. Anthropic says its model was much better at finding bugs than exploiting them, and that the few successful exploit attempts in the Firefox work occurred after several hundred runs and in a test setup where important browser security features had been disabled. Anthropic’s separate write-up on CVE-2026-2796 also says the exploit was not a full chain and only worked in a test environment lacking some security features. NVD identifies CVE-2026-2796 as a JIT miscompilation issue in Firefox’s JavaScript WebAssembly component. Taken together, those facts paint a much more honest picture than either hype or dismissal. (Anthropic)

For pentesters, the practical lesson is that frontier capability is real but phase-specific. Claude may be able to help find logic errors, variant paths, and even subtle implementation flaws that a rushed reviewer would miss. That does not mean it can autonomously transform a promising bug into a reliable exploit under realistic hardening. A pentest copilot built around Claude should therefore be optimized for discovery support, reasoning quality, and retest discipline, not for theatrical autonomy. (Antrópico)

Anthropic’s broader cyber range research supports the same reading. The company says Claude models can now complete multistage attacks on realistic ranges using standard open-source tools, and it describes Sonnet 4.5 successfully exfiltrating simulated PII in a high-fidelity Equifax-style range in two out of five trials using only bash on a Kali host. That is serious progress. It is also still measured, conditional, and environment-specific. A security engineer should read it as evidence that AI assistance has crossed the threshold from toy to operationally relevant, not as evidence that human-governed offensive workflows are obsolete. (Anthropic Red)

The copilot itself becomes part of the attack surface

A pentest copilot is not only a tool for inspecting targets. It is itself a target. Anthropic’s security documentation addresses prompt injection directly, including permission systems, input sanitization, command blocking, suspicious-command review, trust checks for new codebases and MCP servers, isolated context windows for web fetches, and approval required by default for network requests. Those controls exist because the threat model is real: untrusted content can try to manipulate the model into taking unsafe actions with local tools or credentials. (Claude)

MCP expands this attack surface substantially. The official MCP specification and Anthropic’s MCP security guidance both warn that the protocol enables powerful capabilities and therefore requires user consent, strong authorization, and careful handling of sensitive data. The security best-practices page explicitly discusses the confused deputy problem. In plainer language, a low-trust source can trick a high-privilege integration into doing something the user never intended if the client, server, and authorization model are not carefully designed. That is not an abstract academic concern for pentesters. It is what happens when a model that can read untrusted issue text is also allowed to use a cloud admin MCP server. (Model Context Protocol)
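One way to reason about the confused deputy risk is to track whether untrusted content has entered the session and to gate high-privilege tools on that state. The sketch below is a toy model of the idea, not part of the MCP specification or of any Anthropic client. The tool names, the two privilege tiers, and the `Session` and `authorize` helpers are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical tool names and tiers; a real deployment would load these from reviewed policy.
TOOL_TIERS = {
    "issues.read": "low",
    "repo.read": "low",
    "cloud_admin.update_iam": "high",
    "shell.exec": "high",
}


@dataclass
class Session:
    """Tracks which untrusted sources have entered the model's context."""
    tainted_by: list[str] = field(default_factory=list)

    def ingest(self, source: str, trusted: bool) -> None:
        # Record the source only when it is untrusted; trusted input leaves the session clean.
        if not trusted:
            self.tainted_by.append(source)


def authorize(session: Session, tool: str, human_approved: bool = False) -> bool:
    """Deny unknown tools; gate high-privilege tools once the session is tainted."""
    tier = TOOL_TIERS.get(tool)
    if tier is None:
        return False  # deny by default: unregistered tools never run
    if tier == "low":
        return True
    # High-privilege tool: allowed in a clean session, otherwise only with explicit approval.
    return human_approved or not session.tainted_by


if __name__ == "__main__":
    session = Session()
    session.ingest("text of a public issue", trusted=False)
    print(authorize(session, "cloud_admin.update_iam"))
    print(authorize(session, "cloud_admin.update_iam", human_approved=True))
```

The design choice worth noting is that taint never clears within a session. Once untrusted issue text has been read, the cloud admin tool stays behind a human decision until a fresh session starts.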

Recent research has made the browser and extension story impossible to ignore. Koi Security’s ShadowPrompt research described a browser-scale prompt-injection issue involving Anthropic’s Claude extension, claiming that any website could silently inject prompts that appeared to come from the user because of an overly broad trusted origin condition combined with a DOM XSS flaw in Arkose CAPTCHA hosted on an Anthropic subdomain. Koi advised users to verify they were running extension version 1.0.41 or later. Whether or not a given operator used that extension, the lesson is general: a copilot that can read the web and act on the browser inherits web-origin problems. (Koi)

Research on Anthropic’s desktop extension ecosystem raises a related concern. LayerX publicly described a zero-click chain involving Claude Desktop Extensions and a single Google Calendar event, arguing that DXT components could bridge low-risk connectors and high-risk local execution because extensions ran with broad local privileges. Their post also says Anthropic chose not to remediate the issue at that time. That last point should be read as LayerX’s public characterization, not as an uncontested adjudication. The useful operational lesson is simpler: any extension or connector system that bridges external content and local execution deserves the same suspicion as a browser extension with broad access. (LayerX)

The table below summarizes the most important places a Claude-centered offensive workflow can be attacked from the side rather than from the front. It combines Anthropic’s official controls with public security research on browser and extension risks. (Claude)

| Surface | Real risk | Trigger condition | Practical control |
|---|---|---|---|
| Untrusted repository content | Prompt injection, misleading instructions, secret discovery attempts | Running Claude on unfamiliar code with broad tool access | Start read-only, require approval for write and network, use sandbox |
| Web fetch or browser content | Hidden prompt injection, malicious DOM content, cross-origin tricks | Letting Claude read arbitrary web pages while it can act locally | Isolated browser profile, minimal site access, explicit approval on action |
| Third-party MCP server | Tool poisoning, confused deputy, data exfiltration | Adding community servers or servers that ingest untrusted content | Use allowlists, deny by default, prefer read-only internal servers |
| Hooks | Unsafe automation, false approvals, hidden side effects | Overly permissive shell or HTTP hooks | Keep hook scope narrow, log every hook action, review hook code |
| Desktop extensions and connectors | Untrusted external content bridged into local execution | Installing broad extensions with local privileges | Treat as privileged code, test in isolated environment first |
| Shared operator browser or identity | Accidental use of real corporate auth or production context | Running discovery inside a daily-use browser profile | Separate test identities, separate profiles, separate environments |

Claude AI for pentest copilot and white-box to black-box handoff

One of the most common failure modes in AI-assisted security work is that teams become very good at white-box reasoning and very bad at transitioning to black-box proof. Claude makes that easier to get wrong because the model is unusually good at producing plausible, technically literate explanations. A beautiful explanation of why a route appears bypassable is still not evidence that the deployed service is bypassable. A polished patch review is still not proof that a live instance is safe. This handoff from code-native reasoning to system-native evidence is the center of gravity of the whole workflow. (Anthropic)

Public Penligent material is useful here because it uses almost the same distinction. Its own article on AI pentest copilots argues that “smart suggestions” are not the same thing as verified findings, and another public Penligent piece frames the relationship between Claude Code Security and black-box proof in nearly the same white-box versus deployed-validation terms. That public positioning is interesting not as marketing language, but because it mirrors the operational truth: many teams will use Claude to understand code and shape test logic, then hand off to a separate, operator-controlled validation layer for reachability, reproducible PoCs, and evidence collection against an authorized live target. (Penligent)

The key is to keep the handoff explicit. The white-box phase should end with preconditions, candidate proof paths, and regression ideas. The black-box phase should begin with environment confirmation, account confirmation, verifier execution, and evidence storage. If those phases bleed together inside one long, unstructured conversation with a high-privilege agent, the model will fill the gaps with confidence instead of evidence. (Anthropic)

Reporting and retesting need evidence, not eloquence

Claude’s writing ability is one of its most helpful features and one of its most dangerous ones. A model that can turn half-organized notes into a clean finding can save hours on every engagement. The same model can also turn a weak hypothesis into a report paragraph that sounds more mature than the evidence behind it. The only reliable fix is to make the model anchor every substantive claim to raw artifacts already saved by the workflow. That means requests, responses, screenshots, logs, role information, timestamps, and patch diffs should be treated as first-class inputs rather than as afterthoughts. (Anthropic)
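Anchoring claims to artifacts is easier to enforce when each report sentence carries explicit artifact IDs that can be checked against what the workflow actually saved. The following is a minimal sketch of that check, assuming a hypothetical in-memory artifact store keyed by ID. The `Artifact`, `Claim`, `record_artifact`, and `label_claims` names are illustrative only.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    artifact_id: str
    kind: str    # e.g. "request", "response", "log", "screenshot", "diff"
    sha256: str  # digest taken when the artifact was saved, for later integrity checks


@dataclass
class Claim:
    text: str
    artifact_ids: list[str]  # artifacts the sentence says it is based on


def record_artifact(store: dict[str, Artifact], artifact_id: str, kind: str, raw: bytes) -> Artifact:
    """Hash the raw bytes and register the artifact in the store."""
    artifact = Artifact(artifact_id, kind, hashlib.sha256(raw).hexdigest())
    store[artifact_id] = artifact
    return artifact


def label_claims(claims: list[Claim], store: dict[str, Artifact]) -> list[tuple[str, str]]:
    """Label a claim 'anchored' only if it cites artifacts and every cited one was saved."""
    labelled = []
    for claim in claims:
        anchored = bool(claim.artifact_ids) and all(a in store for a in claim.artifact_ids)
        labelled.append(("anchored" if anchored else "unconfirmed", claim.text))
    return labelled


if __name__ == "__main__":
    store: dict[str, Artifact] = {}
    record_artifact(store, "resp-001", "response", b"HTTP/1.1 200 OK")
    draft = [
        Claim("The export endpoint returned another tenant's data.", ["resp-001"]),
        Claim("The issue affects all API versions.", []),
    ]
    for label, text in label_claims(draft, store):
        print(f"[{label}] {text}")
```

Note that "anchored" here only means the cited artifacts exist. Whether an artifact actually supports the sentence is still a reviewer's judgment, which is why the label is not "confirmed".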

This is another place where the public Penligent material aligns with a strong operating model. Penligent’s public pages emphasize editable reporting, reproducible PoCs, and verified findings rather than unverifiable AI narration. Whether a team uses that specific product or not, the underlying principle is right. The report should be the end of the evidence chain, not the substitute for it. (Penligent)

A good retest section should therefore answer six narrow questions. What changed? What exact condition used to fail? What exact condition now passes or no longer passes? Which account or environment was used? Which artifact proves it? Which check should be rerun after the next release? Claude can assemble these very effectively after the artifacts exist. It should never be allowed to invent one of the six because the operator forgot to capture it. (Anthropic)

How Claude differs from embedded security assistants

It is useful to compare Claude to embedded assistants not to rank them, but to prevent category mistakes. PortSwigger’s public documentation says Burp AI features enhance the workflow and do not run unless explicitly activated by the user. It also positions AI help inside concrete testing contexts such as Repeater, where the assistant can analyze a suspicious request, test a specific vulnerability idea, or suggest next steps within a constrained environment. That is a narrower and often safer posture than a general-purpose reasoning agent that can see files, run shell commands, and connect to external tools. (PortSwigger)

The difference suggests a practical split. If the problem is local and protocol-specific, an embedded assistant inside an established testing platform may be the right place for AI. If the problem spans code, infrastructure, browser state, ticket context, and retest orchestration, Claude’s broader reasoning surface becomes more attractive. The cost of that flexibility is that the operator must build the control plane instead of inheriting it from one mature tool. (PortSwigger)

This is why “Claude versus tool X” is usually the wrong question. A more useful question is “which part of the workflow needs broad reasoning, and which part benefits from staying inside a narrow, instrumented security surface.” In many strong teams, the answer will be both. Claude for cross-context reasoning. Purpose-built security tools for direct interaction and proof. Verifiers tying the two together. (Anthropic)

Common mistakes that make a pentest copilot dangerous

The first mistake is granting broad permissions before the workflow has earned them. Anthropic’s own documentation offers multiple approval modes and a layered permission system for a reason. Starting from the most permissive setting because it feels smoother is the operational equivalent of disabling every guardrail in a CI pipeline because the prompts are annoying. The irritation is the control working. (Claude)

The second mistake is using real production identities or daily-use browser sessions. Anthropic’s browser tooling can interact with sites where the user is already signed in. That is powerful for testing and a terrible excuse to let the model inherit whatever the operator happens to have open. Security testing accounts should be isolated, least-privileged, and specific to the engagement. Browsers should be isolated by profile or environment as well. (Claude)

The third mistake is installing MCP servers because they are convenient. Anthropic warns that third-party MCP servers are not verified and may increase prompt-injection risk, especially when they consume untrusted content. The official settings model gives teams enough structure to do allowlists and denylists. Teams that ignore that and then complain that the model touched the wrong tool are not dealing with an AI failure. They are dealing with a change-management failure. (Claude)

The fourth mistake is writing findings directly from model memory instead of from evidence. This is the fastest route to polished nonsense. If Claude cannot quote the request, quote the response, identify the account, point to the log, or show the patch diff, then it should not be writing the sentence as a confirmed fact. Its job at that point is to help the operator find the missing artifact or mark the claim as unconfirmed. (Anthropic)

The fifth mistake is ignoring the fact that the copilot is attackable. Prompt injection, browser content, connector abuse, tool poisoning, and extension chains are not future risks. Public research has already documented browser-extension prompt injection and extension-ecosystem local-execution concerns in Anthropic-adjacent surfaces. That should push teams toward isolation, explicit trust decisions, and narrow privileges, not toward panic. (Koi)

The sixth mistake is assuming that better reasoning removes the need for specialized methodology. OWASP’s Top 10 for Agentic Applications and the OWASP AI Testing Guide exist because AI-enabled systems introduce their own classes of risk and their own testing requirements. A pentest copilot should be built with that broader model in mind. The goal is not just to have a smarter assistant. The goal is to have a smarter assistant inside a workflow that still respects testing methodology, evidence discipline, and safety boundaries. (OWASP Gen AI Security Project)

A practical operating model for security teams

A practical Claude-centered pentest workflow is simple to describe even if it takes discipline to run well. Start with written authorization, environment separation, and a project-level CLAUDE.md that encodes the engagement rules. Begin in a read-heavy permission mode. Enable shell and write actions only as specific tasks require them. Keep bash inside a sandbox. Treat every new MCP server as privileged software. Use hooks to enforce evidence capture and to reject ambiguous “done” states. Use isolated browser profiles and isolated test accounts for authenticated flows. Then define verifier logic before you ask Claude to help you write a finding. (Claude)
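The "start read-heavy, escalate per task" rule can be modeled as a small policy object that sits in front of whatever actually executes actions. The sketch below is an illustration of that idea only. It is not Claude Code's settings format or permission API, and the capability names, the `EngagementPolicy` class, and the task labels are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical capability vocabulary for the engagement; adjust to the real tool surface.
KNOWN_CAPABILITIES = frozenset({"read", "write", "shell", "network"})
BASELINE = frozenset({"read"})  # every task starts read-only


@dataclass
class EngagementPolicy:
    grants: dict[str, set[str]] = field(default_factory=dict)            # task -> extra capabilities
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)  # (task, capability, reason)

    def grant(self, task: str, capability: str, reason: str) -> None:
        """Escalate one capability for one task, and record why."""
        if capability not in KNOWN_CAPABILITIES:
            raise ValueError(f"unknown capability: {capability}")
        if not reason.strip():
            raise ValueError("every grant needs a written reason")
        self.grants.setdefault(task, set()).add(capability)
        self.audit_log.append((task, capability, reason))

    def allowed(self, task: str, capability: str) -> bool:
        """Baseline capabilities always pass; anything else needs a grant for this task."""
        return capability in BASELINE or capability in self.grants.get(task, set())


if __name__ == "__main__":
    policy = EngagementPolicy()
    policy.grant("retest-login", "shell", "run the saved verifier script")
    print(policy.allowed("retest-login", "shell"))  # granted for this task
    print(policy.allowed("triage", "shell"))        # other tasks stay read-only
```

Scoping grants to a task rather than to the whole session keeps an escalation made for one retest from quietly carrying over into unrelated discovery work, and the audit log gives the report a record of when and why shell access was opened.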

Once that foundation exists, Claude becomes genuinely useful. It can read what your team would otherwise skim. It can notice routes, policy gaps, and edge cases your team might not connect under time pressure. It can convert a patch diff into a retest plan. It can tighten a verifier. It can turn raw artifacts into a clean draft that an experienced tester can approve quickly. The gain is real, but it comes from better use of human attention, not from pretending the model has replaced it. (Claude)

Anthropic’s own public work points toward the same destination. Claude’s usefulness in cyber tasks is no longer hypothetical. Its limitations are no longer hypothetical either. That combination should push serious teams toward a design that is humble in the right places: permissioned, verifier-backed, artifact-driven, and explicit about which questions the model is allowed to answer. (Anthropic)

A pentest copilot built that way does not need to posture as an autonomous red team. It is more valuable than that. It is a disciplined amplifier for the parts of offensive security work that are most often bottlenecked by context load, review fatigue, and reporting drag. It shortens the path from clue to checker and from checker to report. It does not shorten the path from guess to fact. That boundary is where good security work stays honest. (Anthropic)

Further reading and references

- Anthropic, Claude Code overview and how the agent loop works. (Claude)

- Anthropic, Claude Code permissions, approval modes, and sandboxing docs. (Claude)

- Anthropic, Claude Code hooks, memory, and MCP documentation. (Claude)

- Anthropic, Claude in Chrome and Claude Code browser integration docs. (Claude)

- Anthropic, Claude Code Security announcement and cyber defender research. (Anthropic)

- Anthropic and Mozilla, public write-up on bug finding, exploit limits, and task verifiers. (Anthropic)

- Anthropic, public write-up on CVE-2026-2796 and exploit constraints. (Anthropic Red)

- Anthropic, realistic cyber range results with Claude models. (Anthropic Red)

- Model Context Protocol, official specification and security best practices. (Model Context Protocol)

- PortSwigger, official Burp AI documentation. (PortSwigger)

- OWASP Top 10 for Agentic Applications 2026 and OWASP AI Testing Guide. (OWASP Gen AI Security Project)

- NVD and CISA material for CVE-2021-44228, plus Oracle’s advisory context. (NVD)

- NVD and CVE record for CVE-2023-34362. (NVD)

- Koi Security research on the ShadowPrompt browser-extension prompt injection issue. (Koi)

- LayerX research on Claude Desktop Extensions risk chains. (LayerX)

- Penligent, AI Pentest Copilot, From Smart Suggestions to Verified Findings. (Penligent)

- Penligent, Claude Code Security and Penligent, From White-Box Findings to Black-Box Proof. (Penligent)

- Penligent, homepage and public product positioning for operator-controlled agentic workflows, verified findings, reproducible PoCs, and editable reports. (Penligent)