Why “XZ Utils CVE” became a high-intent search overnight

When engineers type “XZ Utils cve” into a search bar, they usually aren’t looking for trivia. They’re trying to answer one operational question under time pressure:

Is my fleet exposed—and can I prove it with evidence?

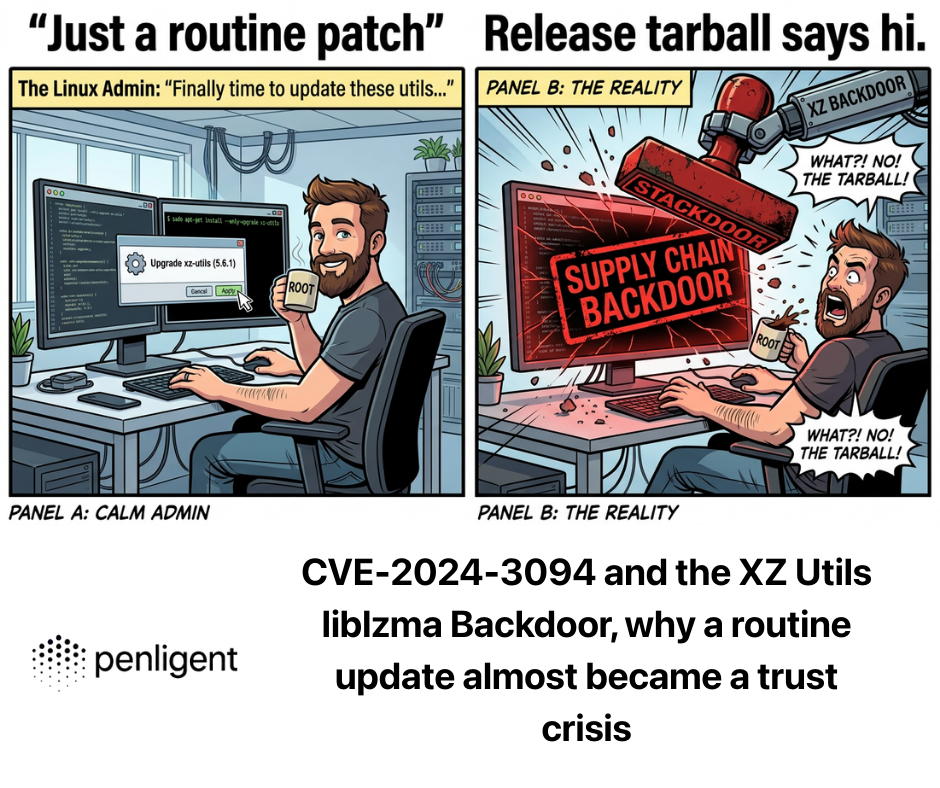

That urgency is justified. CVE-2024-3094 wasn’t a typical memory bug where you patch a function and move on. It was a supply-chain backdoor planted in upstream release artifacts, designed to survive casual review and activate through a build-time injection path into liblzma, a library that ends up on an enormous number of Linux systems. The NVD description is explicit that malicious code was discovered in upstream tarballs starting in 5.6.0, using obfuscated build logic to extract a prebuilt object file and modify liblzma during compilation. (NVD)

Across the articles that consistently rank and get clicked for this topic, the same query cluster appears in headlines and section titles: CVE-2024-3094, xz backdoor, liblzma backdoor, how to check, am I affected, downgrade, and mitigation. You see this pattern in vendor and research write-ups from Akamai, Datadog, Rapid7, Wiz, and Elastic, because those are the words that match what responders actually need in the moment: impact, detection, and remediation. (Akamai)

This guide follows the same principle: tight claims, verifiable sources, and copy-paste actions.

The one-paragraph truth of CVE-2024-3094

CVE-2024-3094 tracks a malicious backdoor inserted into XZ Utils release tarballs (not merely the public repo view) for versions 5.6.0 and 5.6.1. Obfuscated build steps extracted a prebuilt object from disguised files and modified liblzma during compilation, potentially enabling compromise of software that links against the resulting library under certain conditions. (NVD)

That combination of upstream artifact compromise, build-time injection, and dependency/packaging trigger conditions is the core reason the incident is treated as a modern supply-chain “near miss,” not a routine Patch Tuesday item. (Datadog Security Labs)

Timeline, what happened and when it mattered

A minimal timeline helps you reason about exposure windows and “should this have reached production?”

- March 29, 2024: Andres Freund reports the backdoor on the oss-security mailing list (Openwall), describing an upstream xz/liblzma backdoor with SSH server compromise implications. (openwall.com)

- March 29, 2024: CISA publishes an alert on the reported supply chain compromise affecting XZ Utils (CVE-2024-3094), recommending downgrade to uncompromised versions. (CISA)

- NVD publishes/maintains the CVE entry describing the obfuscated build process and tarball-specific malicious content starting with 5.6.0. (NVD)

- In parallel, multiple security research teams publish deep technical analyses and practical detection guidance (Datadog, Rapid7, Wiz, Elastic, Akamai). (Datadog Security Labs)

One reason this incident didn’t become an internet-wide catastrophe is that the compromised versions were primarily present in certain development/rolling branches and specific packaging contexts, not universally deployed across stable enterprise fleets at the time responders caught it. That nuance shows up repeatedly in responsible vendor writeups and distro guidance. (wiz.io)

What made the XZ backdoor different, “artifact vs repo” was the trap

If you only remember one technical lesson, make it this:

The attack exploited a trust gap between what people review (repo) and what production builds consume (release artifacts).

NVD’s wording points to upstream tarballs and extra build-related files/instructions that enabled the injection path. (NVD)

The original disclosure thread and follow-on analyses emphasize that what mattered wasn’t just source code, but the release and build system, where hidden steps could run and produce a compromised library. (openwall.com)

A simplified mental model:

- Release tarball contains extra/modified build inputs (not obvious in the repo view). (NVD)

- Build logic extracts a prebuilt object from disguised “test” content. (NVD)

- The object is used to modify liblzma behavior during compilation. (NVD)

- Under certain packaging/runtime conditions, processes (including sshd paths discussed in early reporting and analyses) can be affected. (openwall.com)

This is why “just diff the repo” is not a sufficient assurance story for critical dependencies.
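The gap described above can be made visible with a small comparison. The sketch below assumes you have already unpacked a release tarball into one directory and checked out the matching tag into another; the function name and both paths are illustrative, not part of any upstream tooling. It lists files that exist only in the tarball.

```shell
# Sketch: list files present in an unpacked release tarball but absent from a
# checkout of the matching tag. Both directories are prepared by the caller.
tarball_only_files() (
    tarball_dir=$1
    repo_dir=$2
    tmp=$(mktemp -d) || exit 1
    (cd "$tarball_dir" && find . -type f | LC_ALL=C sort) > "$tmp/tarball.lst"
    # Ignore git metadata on the repository side.
    (cd "$repo_dir" && find . -type f ! -path './.git/*' | LC_ALL=C sort) > "$tmp/repo.lst"
    # comm -23 keeps lines that appear only in the first sorted list.
    LC_ALL=C comm -23 "$tmp/tarball.lst" "$tmp/repo.lst"
    rm -rf "$tmp"
)
```

Release tarballs for autotools projects legitimately contain generated files (configure scripts, macro directories) that are not in the repository, so the output is a review list rather than a verdict. Its value is that it points reviewers at exactly the inputs a repo-only review never sees.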

Impact, the honest version of “am I exposed”

There are two statements that can both be true:

- XZ is everywhere (as a tool/library).

- The exploitable condition was not universal.

So you should treat exposure as a condition set, not a binary “installed/not installed.”

The exposure condition set

Most practical guidance converges on checking:

- Version: are you on XZ Utils 5.6.0 or 5.6.1 (or distro builds derived from them)? (NVD)

- Packaging context: did your distro branch ship the compromised build (some did in dev/rolling channels; many stable lines did not). (wiz.io)

- Runtime linkage chain: does the affected process load the compromised liblzma in the relevant way in your environment (this is where distro patches, service managers, and linkage details matter). (ssh.com)

A practical risk matrix table

| Risk tier | What you have | What it means | What to do first |
|---|---|---|---|
| High | Compromised xz/liblzma build and the known triggering packaging/runtime conditions | Treat as incident, not routine vuln | Immediate downgrade/rollback, isolate, image rotation |
| Medium | Compromised version present but trigger chain uncertain | You need evidence, not guesses | Validate package provenance, inspect liblzma, confirm process linkage |
| Low | Not on affected versions or already downgraded to known-safe builds | Likely not impacted | Keep guardrails to prevent reintroduction |

This framing matches what the best incident writeups do: they reduce panic, but they don’t hand-wave.
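If you want the table as a decision rule in triage scripts, a minimal sketch could look like the following. The function name and the yes/no/unknown vocabulary are assumptions made for illustration; the mapping itself only restates the table.

```shell
# Sketch: map two observations to a risk tier from the table.
#   $1  compromised build installed?            yes | no | unknown
#   $2  known trigger conditions present?       yes | no | unknown
risk_tier() {
    case "$1:$2" in
        no:*)    echo low ;;     # not on an affected build
        yes:yes) echo high ;;    # affected build plus trigger chain
        *)       echo medium ;;  # anything uncertain still needs evidence
    esac
}
```

Any "unknown" input lands in the medium tier on purpose: the table treats uncertainty as a reason to collect evidence, not as a reason to relax.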

Detection, copy-paste checks you can run today

1) Confirm package versions

Debian/Ubuntu family

dpkg -l | grep -E '^ii\s+(xz-utils|liblzma5)\b'

apt-cache policy xz-utils liblzma5 | sed -n '1,120p'

RHEL/Fedora family

rpm -qa | grep -E '^(xz|xz-libs)-'

dnf info xz xz-libs | sed -n '1,120p'

Arch

pacman -Qi xz | sed -n '1,120p'

You are primarily hunting for 5.6.0 / 5.6.1 lineage and any distro “rebuilt” variants that explicitly reference the compromised versions in advisory notes. (NVD)
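Distro version strings rarely look like a bare "5.6.1", so a naive string comparison misfires. The sketch below is one way to normalize them; the function name and output labels are hypothetical. It assumes the Debian-style "+really" convention, where the text after "+really" is the actual upstream version, and it strips an epoch prefix if one is present.

```shell
# Sketch: classify a package version string by its upstream xz version.
xz_version_status() (
    v=${1#*:}          # drop an epoch prefix such as "1:" if present
    v=${v#*+really}    # "X+reallyY" style: keep Y, the real upstream version
    case "$v" in
        5.6.0|5.6.0[-+~_]*|5.6.1|5.6.1[-+~_]*) echo suspect ;;
        *) echo not-listed ;;
    esac
)
```

On a Debian-family host, for example, you might call it as `xz_version_status "$(dpkg-query -W -f='${Version}' liblzma5)"`. A "suspect" result means the upstream version matches 5.6.0 or 5.6.1 and the distro advisory needs to be consulted, since a distro may ship a cleaned rebuild under the same upstream number; it is not proof of compromise.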

2) Identify which liblzma you’re actually using

The backdoor matters when the system is running a compromised liblzma produced by the tainted build chain.

Find the library and basic metadata:

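# locate liblzma
ldconfig -p | grep -i lzma || true

# the extra globs cover Debian/Ubuntu multiarch directories such as /usr/lib/x86_64-linux-gnu
ls -l /lib*/liblzma.so* /usr/lib*/liblzma.so* /lib/*/liblzma.so* /usr/lib/*/liblzma.so* 2>/dev/null || true

# fingerprint
sha256sum /lib*/liblzma.so* /usr/lib*/liblzma.so* /lib/*/liblzma.so* /usr/lib/*/liblzma.so* 2>/dev/null | head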

If you’re doing incident-grade verification, you’ll want to compare your hashes against your distro’s known-good packages/advisories and confirm the installed build matches the “safe” rollback path recommended by your vendor or CISA’s downgrade guidance. (CISA)
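For the hash comparison, a minimal sketch follows. It assumes you maintain an allowlist file of SHA-256 digests taken from packages you trust (one digest per line, optionally followed by a label); that file, its format, and the function name are assumptions for illustration, not something your distro ships.

```shell
# Sketch: check whether a library's SHA-256 appears in a local allowlist.
# Exit status: 0 = digest listed, 1 = not listed, 2 = file could not be hashed.
lib_hash_is_known_good() (
    lib=$1
    allowlist=$2
    sum=$(sha256sum "$lib" 2>/dev/null | awk '{print $1}')
    [ -n "$sum" ] || exit 2
    # Compare against the first field of every allowlist line.
    awk -v s="$sum" '$1 == s { found = 1 } END { exit !found }' "$allowlist"
)
```

A call such as `lib_hash_is_known_good /usr/lib/x86_64-linux-gnu/liblzma.so.5 known-good.txt` (both paths are examples) gives you a yes/no answer you can log per host. The check is only as trustworthy as the source of the allowlist, so build it from packages obtained through a channel you have verified independently.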

3) Check whether sshd is loading liblzma on your system

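This is a quick reality check. In many environments, sshd does not normally load liblzma, so if you see it loaded, that is a meaningful signal worth understanding. Upstream OpenSSH does not link liblzma directly; it typically arrives indirectly when a distro patches sshd to use libsystemd, which in turn depends on liblzma.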

# find sshd PID(s)

pgrep -x sshd || true

# for each PID, show loaded libs

for p in $(pgrep -x sshd); do
    echo "== sshd pid $p =="
    # reading another process's maps usually requires root
    grep -E 'liblzma|libsystemd' "/proc/$p/maps" 2>/dev/null || true
done

Important: seeing liblzma loaded is not “proof of compromise,” and not seeing it is not “proof of safety.” It’s a directional signal that helps you decide how deep you need to go, consistent with the nuanced guidance in technical recaps and research writeups. (ssh.com)

4) Fast checks for fleet scale, SSH endpoints

If you maintain a fleet, start by narrowing scope:

- Which hosts expose port 22 to untrusted networks

- Which images/AMIs/base layers were built during the exposure window

- Which environments track rolling/dev distro branches

Even very simple inventory logic can reduce your search surface dramatically.
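As an illustration of that inventory logic, the sketch below ranks hosts by how many of the three conditions they meet. The CSV layout (host, channel, ssh_exposed, image_built), the channel labels, and the function name are all hypothetical; adapt them to whatever your asset inventory exports. The exposure window is passed in by the caller rather than hard-coded.

```shell
# Sketch: rank hosts from a CSV inventory by number of exposure conditions met.
#   $1  CSV with a header row, then: host,channel,ssh_exposed,image_built
#       (image_built as YYYY-MM-DD so string comparison orders dates correctly)
#   $2  window start date, $3 window end date
rank_hosts() {
    awk -F, -v from="$2" -v to="$3" '
        NR == 1 { next }                                  # skip header
        {
            score = 0
            if ($3 == "yes") score++                      # port 22 exposed
            if ($2 == "rolling" || $2 == "dev") score++   # tracks rolling/dev branch
            if ($4 >= from && $4 <= to) score++           # image built in window
            if (score > 0) print score "," $1
        }' "$1" | sort -t, -k1,1nr -k2,2
}
```

A call might look like `rank_hosts inventory.csv 2024-02-24 2024-03-29`, where the start date assumes the 5.6.0 release in late February 2024 and should be confirmed against your own sources. Hosts with a score of 3 are the ones to inspect first.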

Remediation, what “good” looks like in the first 60 minutes

Most credible guidance converges on an immediate, low-regret step:

Downgrade/rollback to a known-safe version (earlier than 5.6.0) and prevent reintroduction.

CISA’s alert and multiple vendor writeups explicitly recommend downgrading to an uncompromised version as a primary mitigation. (CISA)

1) Immediate actions checklist

- Roll back xz/liblzma packages to known-safe builds

- Rotate/replace impacted images instead of “patching in place” where feasible

- Block compromised versions in your internal repos and CI dependency gates

- Preserve forensic context if you suspect real exploitation (logs, package metadata, image digests)
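The "block compromised versions" item can be enforced with a small gate in CI. The sketch below reads "package version" pairs on standard input and fails if an xz or liblzma package carries a 5.6.0 or 5.6.1 upstream version. The function name and the package-name list are assumptions; versions containing "+really" are skipped on the assumption that they are Debian-style rollbacks to an older upstream release.

```shell
# Sketch: CI gate that fails when a package manifest lists a suspect xz build.
# stdin: one "package version" pair per line.
deny_compromised_xz() {
    awk '
        $1 ~ /^(xz|xz-utils|xz-libs|liblzma5?)$/ &&
        $2 ~ /^([0-9]+:)?5\.6\.[01]([^0-9]|$)/ &&
        $2 !~ /\+really/ {
            print "DENY " $1 " " $2
            bad = 1
        }
        END { exit (bad ? 1 : 0) }
    '
}
```

On a Debian-family image you could feed it with `dpkg-query -W -f='${Package} ${Version}\n' | deny_compromised_xz`, and on an RPM-based image with `rpm -qa --qf '%{NAME} %{VERSION}-%{RELEASE}\n' | deny_compromised_xz`. Because some distros shipped cleaned rebuilds under the same upstream number, treat a DENY line as a prompt to check the distro advisory before concluding the image is compromised.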

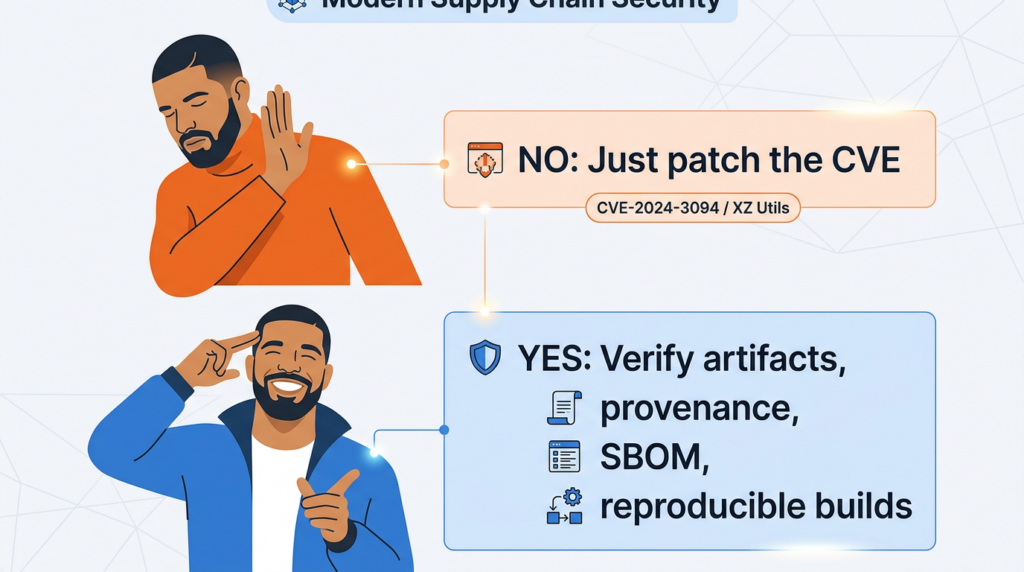

2) Don’t stop at “patch,” close the supply-chain gap

The uncomfortable truth: this incident is as much about release engineering as it is about “vulnerability management.”

Datadog’s analysis frames it as a backdoor incident with lessons across time, while other research emphasizes the sophistication of the build-time manipulation and the broader class of supply-chain backdoors. (Datadog Security Labs)

Practically, long-term reduction comes from:

- SBOM generation and enforcement for production artifacts

- Reproducible builds where possible

- Artifact signing and verification (you want verifiable provenance, not vibes)

- Tier-0 dependency policies: extra scrutiny for “infrastructure primitives” (compressors, crypto libs, SSH, libc, build tools)

A defender’s explanation of “why it almost worked”

Several postmortems emphasize that the attacker didn’t need a single dramatic exploit chain. The campaign benefited from:

- A widely embedded component (liblzma)

- A release pipeline that many consumers implicitly trusted

- A realistic path to reach high-value targets through distro propagation

- Time and patience to embed changes and survive reviews

You see this theme in multiple analyses that describe it as one of the most advanced supply-chain attacks in the open-source ecosystem in recent years. (Checkmarx)

Related high-impact CVEs, why responders bring them up in the same breath

It’s common to see CVE-2024-3094 referenced alongside broader “ecosystem shock” vulnerabilities like Log4Shell, not because the bugs are similar, but because the organizational lesson is: foundational primitives create organizational blast radius.

That conceptual link is why security leaders care even when a specific incident was caught “before mass production infection.”

In an XZ-class incident, teams typically struggle with two things:

- Asset truth: what’s installed, where, and in which images

- Evidence: how to produce a defensible report that proves exposure or non-exposure

If you’re already using an automated security workflow platform, the practical value is in turning the detection commands and version logic above into repeatable checks, attached to hosts, images, and CI artifacts, with output you can hand to engineering leadership.

Penligent’s “AI-assisted pentest and verification workflow” model is a natural fit for the evidence-building side of this—running standardized checks across a target environment, capturing outputs, and generating an auditable report—especially when your incident response needs to answer “show me proof” rather than “tell me a story.” (For background and a CVE-2024-3094 specific writeup, see the Penligent article linked in the references.) (Penligent)

What to document for your post-incident review

Even if you were not affected, you want to update the playbook while it’s fresh:

- Which repos/images could have pulled compromised versions

- How quickly you could answer “am I affected” with evidence

- Whether your CI/CD has “version deny rules” for Tier-0 dependencies

- Whether you can verify artifacts (not just source) and enforce provenance

- Which alerts and intel sources are in your default watchlist (CISA, vendor advisories, distro channels)

This is the difference between “we got lucky” and “we reduced the probability next time.”

References

NVD — CVE-2024-3094 https://nvd.nist.gov/vuln/detail/CVE-2024-3094

CISA Alert — Reported Supply Chain Compromise Affecting XZ Utils Data Compression Library, CVE-2024-3094 https://www.cisa.gov/news-events/alerts/2024/03/29/reported-supply-chain-compromise-affecting-xz-utils-data-compression-library-cve-2024-3094

Openwall oss-security original disclosure thread https://www.openwall.com/lists/oss-security/2024/03/29/4

Red Hat CVE page — CVE-2024-3094 https://access.redhat.com/security/cve/cve-2024-3094

Datadog Security Labs — The XZ Utils backdoor, CVE-2024-3094 https://securitylabs.datadoghq.com/articles/xz-backdoor-cve-2024-3094/

Rapid7 — Backdoored XZ Utils (CVE-2024-3094) https://www.rapid7.com/blog/post/2024/04/01/etr-backdoored-xz-utils-cve-2024-3094/

Akamai — XZ Utils Backdoor, Everything You Need to Know https://www.akamai.com/blog/security-research/critical-linux-backdoor-xz-utils-discovered-what-to-know

Wiz — CVE-2024-3094 Critical RCE Vulnerability Found in XZ Utils https://www.wiz.io/blog/cve-2024-3094-critical-rce-vulnerability-found-in-xz-utils

Elastic Security Labs — 500ms to midnight, XZ aka liblzma backdoor https://www.elastic.co/security-labs/500ms-to-midnight

Palo Alto Networks Unit 42 — Threat Brief, XZ Utils CVE-2024-3094 https://unit42.paloaltonetworks.com/threat-brief-xz-utils-cve-2024-3094/

Penligent — XZ Utils CVE Reality Check, CVE-2024-3094, the liblzma backdoor https://www.penligent.ai/hackinglabs/xz-utils-cve-reality-check-cve-2024-3094-the-liblzma-backdoor-and-why-your-build-pipeline-was-the-real-target/

Penligent — CVE-2024-6387 regreSSHion, why it’s trending again, also references XZ as a “primitives are the battlefield” lesson https://www.penligent.ai/hackinglabs/cve-2024-6387-regresshion-why-its-trending-again-and-what-security-teams-should-do-right-now/

Penligent — Kali Linux + Claude via MCP Is Cool—But It’s the Wrong Default for Real Pentesting Teams, includes security workflow context and related CVE references https://www.penligent.ai/hackinglabs/kali-linux-claude-via-mcp-is-cool-but-its-the-wrong-default-for-real-pentesting-teams/