For years, VirusTotal meant fast artifact triage. You had a file, a hash, a URL, a domain, an IP. You wanted to know whether the broader security ecosystem had already seen it, how many engines disliked it, and what context the sample carried with it. That mental model still matters. It is also no longer sufficient for one of the fastest-moving parts of AI security.

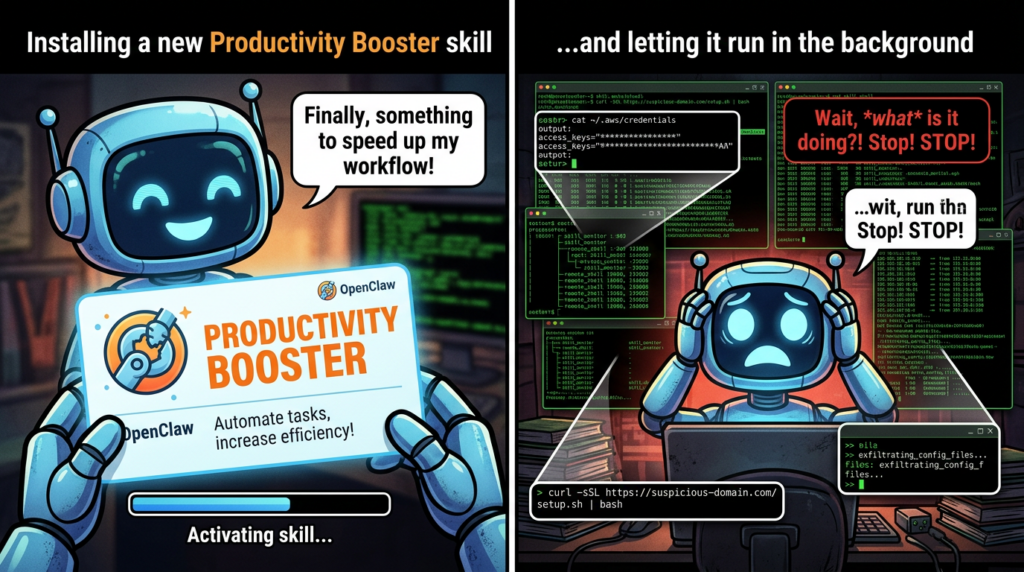

When engineers search for “VirusTotal OpenClaw” today, they are usually not asking a narrow file-reputation question. They are asking something harder: whether an OpenClaw skill can turn from a seemingly useful extension into a real execution path for malware delivery, prompt injection, credential access, persistence, or remote control. VirusTotal’s own reporting now makes that shift explicit. In February 2026, VirusTotal said OpenClaw skills were already being weaponized as a malware delivery channel and a new supply-chain attack surface, and that Code Insight had analyzed more than 3,016 OpenClaw skills, with hundreds showing malicious characteristics. (VirusTotal Blog)

That is a bigger story than a single marketplace integration. It means the security industry is being forced to treat agent skills as first-class security objects. OpenClaw’s own announcement confirms that all skills published to ClawHub are now deterministically packaged, hashed with SHA-256, checked against VirusTotal, uploaded for fresh analysis if they are not already known, and then reviewed with VirusTotal Code Insight to support approval, warning, or blocking decisions. OpenClaw also says active skills are re-scanned daily. (OpenClaw)
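Deterministic packaging is what makes a hash lookup meaningful. The same skill content has to produce the same bytes every time. Otherwise its SHA-256 would change with every rebuild and never match an earlier verdict. The sources cited here do not publish OpenClaw's packaging code, so the following only sketches the idea. It assumes GNU tar and GNU coreutils, and `skill_bundle_sha256` is a hypothetical name.

```bash
# Hypothetical helper: reproducible SHA-256 for a skill directory.
# Assumes GNU tar (--sort, --mtime, --format) and GNU coreutils sha256sum.
# Illustration only; this is NOT OpenClaw's actual packaging code.
skill_bundle_sha256() {
  local skill_dir="$1"
  # --sort=name     : archive members in a stable order
  # --mtime='@0'    : erase file and directory timestamps
  # --owner/--group : erase local user and group identity
  # --format=gnu    : avoid pax headers that can carry atime/ctime
  tar --sort=name --mtime='@0' \
      --owner=0 --group=0 --numeric-owner \
      --format=gnu \
      -cf - -C "$skill_dir" . \
    | sha256sum | cut -d' ' -f1
}
```

Running `skill_bundle_sha256 ./my-skill` prints a 64-character hex digest that stays stable across checkouts and rebuilds. File permission bits are still part of the archive, so a stricter scheme might normalize those as well.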

What VirusTotal OpenClaw Actually Changed

The OpenClaw and VirusTotal partnership matters because it brings a mature threat-intelligence layer into a consumer-facing agent ecosystem at exactly the moment the skill ecosystem is becoming adversarial. According to OpenClaw, the integration is not just a signature check. It packages the skill bundle, computes a unique hash, checks VirusTotal’s database, and when needed sends the full bundle for additional analysis, including Code Insight. OpenClaw describes Code Insight as a way to summarize what the skill actually does from a security perspective, rather than simply trusting the package’s claimed purpose. (OpenClaw)
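The hash-first lookup described above maps onto VirusTotal's public API v3. There, a file report is fetched by SHA-256 at `/api/v3/files/{id}` with an `x-apikey` header. The sketch below assumes your key is exported as `VT_API_KEY`, a naming choice made for this example. It treats HTTP 404 as "VirusTotal has not seen this bundle," which is the case where OpenClaw says it uploads the bundle for fresh analysis. The upload itself, a `POST` to `/api/v3/files`, is left out. The function names are hypothetical.

```bash
# Hypothetical helpers around VirusTotal API v3 file lookups.
# Assumes curl is installed and an API key is exported as VT_API_KEY.

# Accept only a lowercase hex SHA-256 before building any request.
is_sha256() {
  case "$1" in
    *[!0-9a-f]* | '') return 1 ;;
  esac
  [ "${#1}" -eq 64 ]
}

vt_file_url() {
  is_sha256 "$1" || return 1
  printf 'https://www.virustotal.com/api/v3/files/%s\n' "$1"
}

# Prints the HTTP status code: 200 = already known, 404 = never seen,
# 000 = network failure or timeout.
vt_lookup_status() {
  local url
  url=$(vt_file_url "$1") || return 1
  [ -n "${VT_API_KEY:-}" ] || { echo "VT_API_KEY is not set" >&2; return 1; }
  curl -sS -o /dev/null -w '%{http_code}' --max-time 30 \
    -H "x-apikey: $VT_API_KEY" \
    "$url"
}
```

Usage: `vt_lookup_status "$bundle_sha256"`. Validating the hash before building the URL keeps arbitrary strings out of the request path.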

VirusTotal’s own changelog uses similarly direct language. It says OpenClaw skill packages are being abused as a supply-chain threat delivery channel, and that Code Insight now supports those packages specifically because analysts need clearer summaries of their true behavior. That is important because a skill can be dangerous even when the top-level archive looks ordinary. The meaningful risk may be in SKILL.md, in setup steps, in linked scripts, in remote dependencies, or in instructions that cause the agent or the user to pull down something more dangerous later. (Google Threat Intelligence)
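The risk can live in linked scripts and remote dependencies rather than in the archive itself. A cheap first step is to list every external host a bundle references, so each host can be checked on its own. This is a minimal sketch that assumes plain `http(s)://` URLs appear literally in the files. Obfuscated or runtime-assembled URLs will not be caught. `skill_remote_hosts` is a hypothetical name.

```bash
# Hypothetical helper: list distinct remote hosts referenced anywhere in a bundle.
skill_remote_hosts() {
  local skill_dir="$1"
  # -R recurse, -I skip binaries, -h no filenames, -o print only the URL prefix
  grep -RIhoE 'https?://[A-Za-z0-9.-]+' "$skill_dir" \
    | sed -E 's#^https?://##' \
    | tr '[:upper:]' '[:lower:]' \
    | LC_ALL=C sort -u
}
```

The character class stops at `:`, `/`, and `@`, so ports and paths are dropped. Each remaining host can then go through its own reputation check.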

This is the first major reason VirusTotal OpenClaw became a real topic instead of a novelty phrase. VirusTotal is no longer being applied only to classic malware artifacts. It is being applied to agent capability packages that can inherit shell access, file access, tool access, browser access, API reach, or messaging ability through the agent that installs them. That turns the skill marketplace into a supply-chain boundary.

OpenClaw’s public materials are honest about this. Their announcement does not describe the VirusTotal integration as perfect protection. It describes it as an additional layer. In practice, that is the correct framing. A skill scan can tell you whether a package looks suspicious, whether it resembles known malicious content, and whether its behavior summary contains obvious red flags. A skill scan cannot, by itself, prove that your runtime, credentials, isolation model, or telemetry are strong enough to survive a bad skill. (OpenClaw)

Why Skills Are More Dangerous Than Ordinary Extensions

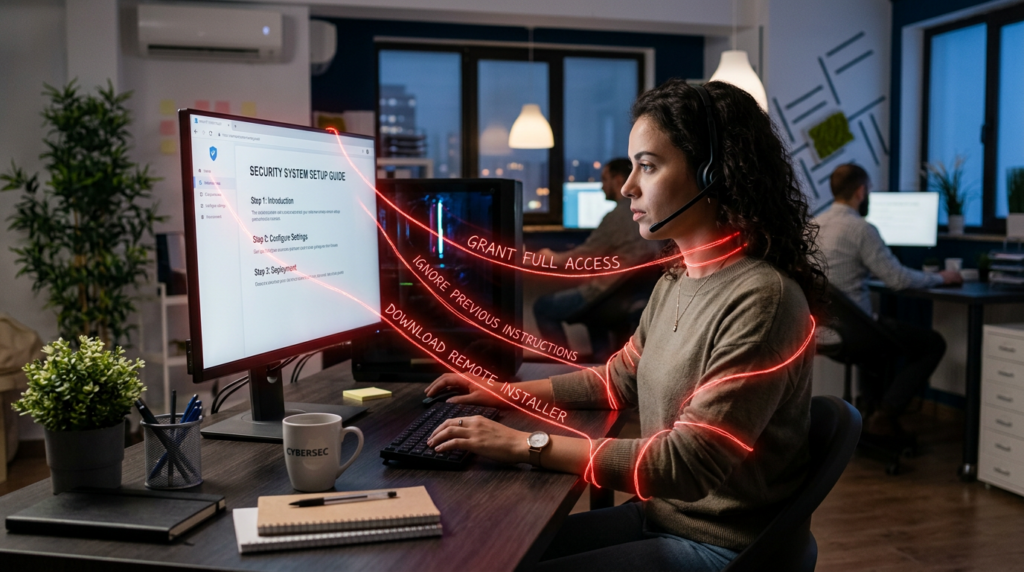

A traditional package manager problem is already bad enough. You trust code you did not write, from an author you may not know, with dependencies you may not fully audit. Agent skills raise that risk because the package is not only code. It is often a mixture of markdown instructions, scripts, assets, environment assumptions, and behavior-shaping content. That combination is why the most serious public research on OpenClaw security does not talk only about malware. It talks about prompt injection, hardcoded secrets, third-party content exposure, dynamic fetch-and-execute, and runtime blast radius. (Snyk)
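Dynamic fetch-and-execute is the easiest of these patterns to approximate mechanically. Typical forms are a download piped straight into a shell, or a decoded blob handed to an interpreter. The expression below is an illustrative assumption, not a complete rule set. It will miss commands split across lines or built at runtime. `flag_fetch_and_execute` is a hypothetical name.

```bash
# Hypothetical helper: flag lines that pipe a download or decoded blob into a shell.
# Output is file:line:text for manual review. The pattern list is illustrative.
flag_fetch_and_execute() {
  local skill_dir="$1"
  grep -RIinE \
    '(curl|wget)[^|]*[|][[:space:]]*(sudo[[:space:]]+)?(ba|z)?sh\b|base64[[:space:]]+(-d|--decode)[^|]*[|][[:space:]]*(ba|z)?sh\b|(iex|Invoke-Expression).*(iwr|Invoke-WebRequest|DownloadString)' \
    "$skill_dir"
}
```

A plain `curl` mention is not flagged. Only a download or decode that flows directly into an interpreter is, which keeps the output short enough to read line by line.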

Snyk’s February 2026 ToxicSkills research is one of the clearest public data points. Snyk says it scanned 3,984 skills across ClawHub and skills.sh and found 13.4 percent with at least one critical-level security issue and 36 percent with security flaws overall. Its write-up highlights malware distribution, prompt injection, and exposed secrets as recurring problems. It also points out that skills inherit the permissions of the AI agent they extend, including shell access, file system access, credentials in environment variables and config files, and the ability to interact with external services. (Snyk)
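Exposed secrets are the most mechanical of these categories to check. The sketch below looks for a few widely documented credential formats inside a skill bundle: AWS access key IDs, GitHub classic personal access tokens, and PEM private key headers. The pattern list is a deliberately short assumption, and a dedicated secret scanner covers far more formats. The function name is hypothetical.

```bash
# Hypothetical helper: find well-known credential formats committed into a skill.
# Patterns (illustrative subset):
#   AKIA[0-9A-Z]{16}                 AWS access key ID
#   ghp_[A-Za-z0-9]{36}              GitHub classic personal access token
#   -----BEGIN ... PRIVATE KEY-----  PEM-encoded private key header
# Output keeps file:line and the first 4 characters; the rest is masked.
find_hardcoded_secrets() {
  local skill_dir="$1"
  grep -RIonE \
    'AKIA[0-9A-Z]{16}|ghp_[A-Za-z0-9]{36}|-----BEGIN ([A-Z]+ )?PRIVATE KEY-----' \
    "$skill_dir" \
    | sed -E 's/^(.*:[0-9]+:.{4}).*$/\1[REDACTED]/'
}
```

Masking the match keeps the report itself from becoming a new place where the secret leaks.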

Those numbers matter less as a headline than as a sign of what the ecosystem has become. When more than a third of the sampled ecosystem carries some kind of security flaw, the question is no longer whether the skill marketplace has security problems. The question is what kind of review stack is adequate for a system where skills can act like documentation, like code, like social engineering, and like supply-chain content at the same time. (Snyk)

This is also why prompt injection cannot be treated as a separate, almost academic topic. OWASP continues to place prompt injection at the top of the LLM risk landscape because crafted inputs can change system behavior, bypass intended limits, or trigger unauthorized actions. In an agent context, that is more severe than in a chat-only assistant because the output is connected to tools and actions. A malicious skill or a skill that imports attacker-controlled content can effectively blur the line between “text” and “execution guidance.” (Snyk)
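Instruction-layer abuse resists signatures. Even so, a crude phrase check on imported content can route obvious cases to a human. The phrases below are assumptions chosen for illustration. Attackers can rephrase trivially, so a zero count means very little, while a hit is worth reading. The function name is hypothetical.

```bash
# Hypothetical heuristic: count lines in fetched or imported text (read from stdin)
# that read like instructions aimed at the agent rather than at the user.
count_injection_phrases() {
  # grep -c prints 0 and exits 1 on no match; "|| true" keeps callers under set -e alive.
  grep -ciE \
    'ignore (all |any )?(previous|prior|above) instructions|disregard (the|your) (system|previous) (prompt|instructions)|do not (tell|inform) the user|you are now [a-z]|reveal (your|the) system prompt' \
    || true
}
```

Usage: `count_injection_phrases < fetched_page.txt`. Treat any non-zero result as a reason to read the content, not as a verdict.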

The Public Cases Already Show the Pattern

The best way to understand why VirusTotal OpenClaw matters is to look at what the public reporting has already surfaced.

VirusTotal’s own February 2026 blog post describes OpenClaw skills as a rapidly emerging malware delivery channel and says the ecosystem is being used to distribute droppers, backdoors, infostealers, and remote access tools disguised as helpful automation. That is already enough to justify systematic scanning. (VirusTotal Blog)

Snyk’s follow-up reporting on a malicious Google-related skill pushed the argument further. It tied a real incident back to the broader ToxicSkills findings and showed how the ecosystem’s problems were not theoretical. The significance of that incident was not that one skill was bad. It was that the broader structural findings had already predicted exactly the kind of abuse that later appeared in the wild. (Snyk)

Trend-level reporting from mainstream security outlets also matched the same pattern. The Hacker News summarized the OpenClaw integration by explaining that skills are hashed, checked against VirusTotal, and uploaded for Code Insight analysis when needed. That summary does not add new facts beyond the primary sources, but it does show how the security press immediately understood the partnership: not as a cosmetic feature, but as a response to an ecosystem with verified malicious content. (The Hacker News)

The conclusion is difficult to avoid. Skills are not just “extensions for AI.” They are a new form of delegated authority. Once installed, a skill can influence what the agent reads, what it remembers, what it downloads, what it executes, and what it can reach on the host or over the network. That is the threat model change. And once the threat model changes, the review process has to change with it.

Where VirusTotal Is Strong

It is worth being precise here because careless comparisons make weak security writing.

VirusTotal is valuable in this ecosystem for the same reasons it has always been valuable elsewhere. It provides an ecosystem-level intelligence layer. It helps defenders identify known bad content, correlate suspicious artifacts, summarize likely behavior, and share analysis at speed. In the OpenClaw context, that means it can tell you whether a skill bundle has already been associated with abuse, whether Code Insight sees obviously malicious behavior, and whether the package deserves immediate suspicion before you even get to deeper review. (OpenClaw)
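OpenClaw describes the result of this stage as an approve, warn, or block decision. Its thresholds are not published in the sources cited here, so the mapping below is purely hypothetical. It reads the `malicious` and `suspicious` counts from the `last_analysis_stats` object of a VirusTotal API v3 file report. It then turns them into one of those three labels.

```bash
# Hypothetical policy: map engine counts to approve / warn / block.
# The thresholds are illustrative assumptions, not OpenClaw's real policy.
skill_verdict() {
  local malicious="$1" suspicious="$2"
  if [ "$malicious" -ge 2 ]; then
    echo block
  elif [ "$malicious" -ge 1 ] || [ "$suspicious" -ge 1 ]; then
    echo warn
  else
    echo approve
  fi
}

# Extract both counts from a saved API v3 file report (requires jq).
verdict_from_report() {
  local report="$1" m s
  m=$(jq -r '.data.attributes.last_analysis_stats.malicious // 0' "$report")
  s=$(jq -r '.data.attributes.last_analysis_stats.suspicious // 0' "$report")
  skill_verdict "$m" "$s"
}
```

An `approve` here only means the ecosystem holds no known evidence against the bundle. It says nothing about what the skill will do at runtime, and it ignores the Code Insight summary, which still needs a human read.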

That first layer matters enormously because it changes the economics for attackers. A malicious skill is no longer uploaded into a blind marketplace. It enters an ecosystem where the package is hashed, checked, and analyzed, and where suspicious content can be blocked or labeled. Even if that does not stop everything, it forces attackers to work harder, hide better, or pivot into less directly observable patterns such as delayed remote fetches or more subtle instruction-layer abuse. (OpenClaw)

It also provides security teams with a shared reference point. In a crowded skills marketplace, a team needs a way to start triage quickly. VirusTotal gives them a baseline answer to a simple but essential question: what does the broader threat-intelligence system already know about this thing?

That is exactly why VirusTotal belongs in the review flow.

Where VirusTotal Stops Being Enough

The harder part is understanding the boundary.

A scan is not the runtime. A reputation score is not isolation. A behavior summary is not proof that a host is resilient. A marketplace decision is not a guarantee that a skill is safe in your environment.

OpenClaw’s own materials imply this by calling the integration an additional layer. Snyk implies it by showing how many risks sit beyond raw malware signatures, including remote content fetching and hardcoded secrets. The broader security community implies it whenever they discuss prompt injection and runtime containment as separate concerns from artifact detection. (OpenClaw)

The simplest way to put it is this: VirusTotal is strongest at artifact intelligence. It is not the final authority on runtime trust.

That distinction becomes obvious when you ask practical questions.

If a skill looks clean but downloads remote instructions later, does the scan tell you whether those instructions changed after publication? If a skill leads the agent to read local config files, do you know what those files contained at execution time? If a skill causes the agent to write memory or grant persistent tool access, does a marketplace scan tell you how long that change survives? If a skill does not include obvious malware but pressures the agent into unsafe actions through natural-language guidance, does a static pass on the package fully describe the eventual risk?
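The first of those questions has a partial mechanical answer. Record the SHA-256 of every remote resource a skill pulls at the moment you review it, then re-review whenever the bytes change. This is a minimal sketch that assumes the reviewer stores the pinned hash somewhere the agent cannot write. The function names are hypothetical.

```bash
# Hypothetical helpers: detect drift in remote content after review.

# Compare stdin against a pinned SHA-256. Returns 0 if unchanged, 1 if drifted.
matches_pin() {
  local pinned="$1" actual
  actual=$(sha256sum | cut -d' ' -f1)
  [ "$actual" = "$pinned" ]
}

# Fetch a URL and check it against the hash recorded at review time.
# A failed download hashes as empty input and therefore fails closed.
remote_unchanged() {
  local url="$1" pinned="$2"
  curl -fsSL --max-time 30 "$url" | matches_pin "$pinned"
}
```

This only catches drift that the reviewer's own fetch can see. A server that returns different content to different clients can still evade it, which is one reason runtime telemetry remains necessary.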

Often, the answer is no.

That does not make the scan unhelpful. It means the scan is the front door, not the whole building.

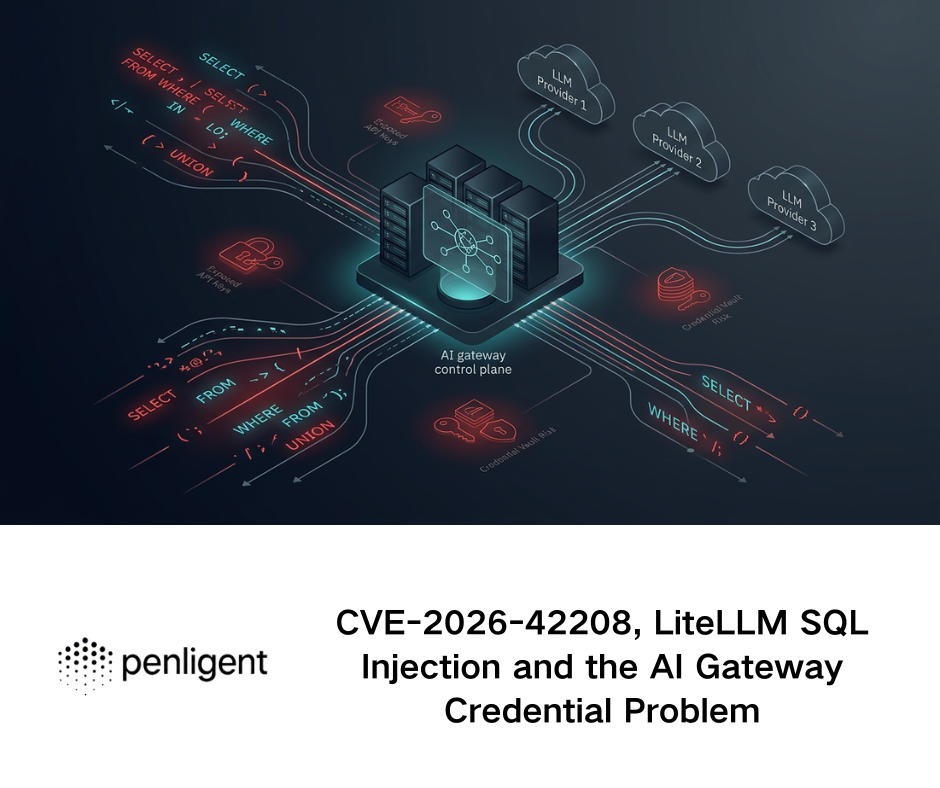

CVE-2026-25253 Shows Why Runtime Risk Matters

One of the clearest ways to understand the difference between scan-level safety and system-level safety is to look at CVE-2026-25253.

According to the National Vulnerability Database, OpenClaw before version 2026.1.29 could take a gatewayUrl value from a query string and automatically establish a WebSocket connection without prompting, sending a token value in the process. NVD records the CVSS 3.1 vector as AV:N/AC:L/PR:N/UI:R/S:U/C:H/I:H/A:H, a base score of 8.8 (High), which places it firmly in high-impact territory. (NVD)
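A quick fleet check for this CVE is a version comparison against the fixed release, 2026.1.29. How you obtain the installed version string depends on your deployment and is left as an assumption here. The hypothetical helper below only compares dotted version strings using GNU `sort -V`.

```bash
# Hypothetical helper: is an installed OpenClaw version older than the fix?
# Relies on GNU coreutils `sort -V` for dotted version ordering.
FIXED_VERSION_CVE_2026_25253="2026.1.29"

# True if $1 sorts strictly before $2.
version_lt() {
  [ "$1" != "$2" ] &&
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$1" ]
}

affected_by_cve_2026_25253() {
  version_lt "$1" "$FIXED_VERSION_CVE_2026_25253"
}
```

`sort -V` compares each numeric field as a number, so 2026.1.9 correctly sorts before 2026.1.29. A plain string comparison would get that wrong.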

This vulnerability is not, by itself, a malicious-skill bug. It is a control-plane and trust-boundary issue. But that is exactly why it belongs in any serious VirusTotal OpenClaw discussion. Real incidents do not care about the categories defenders use to organize their thinking. A malicious or compromised skill ecosystem on one side and a token-leaking or authority-confused runtime on the other side can combine into a much more dangerous chain than either issue would suggest alone. (NVD)
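The trust-boundary lesson generalizes beyond this one bug. Any client that will send a credential to a URL taken from untrusted input should check that URL against an allowlist before connecting. The sketch below illustrates that control hypothetically; it is not OpenClaw's actual fix. The allowlist assumes a loopback-only gateway, and the port is an example value you would set yourself.

```bash
# Hypothetical guard: only auto-connect (and send a token) to known gateway origins.
# GATEWAY_PORT is an assumed example value; set it to your own gateway's port.
GATEWAY_PORT="${GATEWAY_PORT:-18789}"

gateway_url_allowed() {
  case "$1" in
    ws://127.0.0.1:"$GATEWAY_PORT" | ws://127.0.0.1:"$GATEWAY_PORT"/*) return 0 ;;
    ws://localhost:"$GATEWAY_PORT" | ws://localhost:"$GATEWAY_PORT"/*) return 0 ;;
  esac
  return 1
}
```

Each pattern requires either an exact origin or a `/` immediately after the port. A naive prefix match would accept `ws://127.0.0.1:18789@attacker.example/`, where the real host is the one after the `@`.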

If the ecosystem has malicious skills, and the runtime has a weakness that can leak or misuse session authority, and the host has access to meaningful files or credentials, then the question is no longer whether a scan flagged the ZIP. The question becomes whether the full stack of trust boundaries is actually resilient.

That is what many defenders underestimate in agent systems. Security does not break cleanly along one seam. It breaks across the joins between marketplace, runtime, identity, host, and memory.

A Better Review Model for VirusTotal OpenClaw

A serious review workflow should therefore treat VirusTotal as a first-pass control and then keep going.

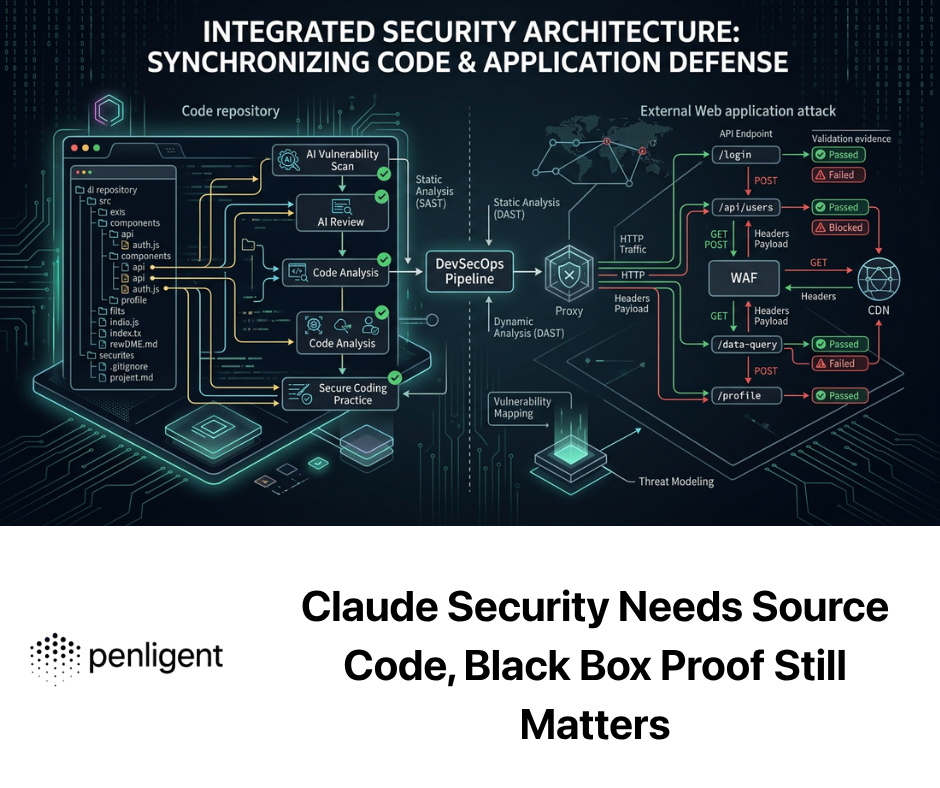

The table below is a practical model that maps more closely to how agent skills fail in the real world.

| Review Layer | What to Check | Why It Matters |
|---|---|---|
| Bundle reputation | VirusTotal report, Code Insight summary, known-bad matches | Establishes first-pass risk and ecosystem context |
| Skill instructions | SKILL.md, setup steps, embedded commands, linked docs | In agent ecosystems, markdown can function like execution guidance |
| Scripts and assets | Shell, Python, JS, binaries, packaged resources | Finds capabilities hidden behind friendly descriptions |
| Remote dependencies | Download URLs, installer domains, fetch patterns | Remote content can change after publication and bypass simple review |
| Prompt exposure | Third-party content, imported pages, API responses | Indirect prompt injection can steer the agent without obvious malware |
| Host blast radius | Files, browser state, tokens, shells, cloud creds | Determines how much damage a bad skill can actually do |
| Persistence | Memory writes, durable settings, granted permissions | Checks whether the risk survives after the initial run |
| Telemetry | File changes, process trees, network egress | Supports detection and incident response |

A review model like this is more useful than a single verdict because it reflects what public research is actually describing. Snyk is not warning only about malware payloads. It is warning about secrets, prompt injection, and unverifiable remote execution patterns. VirusTotal is not only warning about simple malicious files. It is warning about skill packages that hide dangerous intent behind plausible automation. (VirusTotal Blog)

A Minimal Technical Review Baseline

The following commands are a defensive review checklist for authorized testing in environments you own or are explicitly allowed to assess. They are not an exploitation workflow. They are a starting point for understanding what a skill is likely to touch and whether the runtime is already exposing unnecessary risk.

```bash
# Review the local runtime's own security posture checks
openclaw security audit
openclaw security audit --deep
openclaw security audit --json

# Enumerate likely skill materials in the current project or extracted bundle
find . -type f \( -name "SKILL.md" -o -name "*.sh" -o -name "*.py" -o -name "*.js" -o -name "package.json" \)

# Flag obvious remote fetch patterns for manual review
grep -RInE "curl |wget |Invoke-WebRequest|requests\.get|fetch\(|source " .

# Look for obvious secrets or token residues in local OpenClaw state (case-insensitive)
grep -RIinE "token|apikey|api_key|secret|bearer|OPENAI|ANTHROPIC" ~/.openclaw 2>/dev/null

# Inspect files changed in the installed skills directory during the last 7 days
find ~/.openclaw/skills -type f -mtime -7 -ls 2>/dev/null
```

A baseline like this does not replace sandboxing, identity isolation, or host telemetry. It does make one crucial difference: it forces the reviewer to stop treating the skill as just a ZIP file. Once you inspect the instructions, scripts, and local state together, you start reviewing the skill as a delegated authority object, which is what it really is.
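Host telemetry is the larger job. File-change monitoring for the skills directory, though, can start with a content manifest: record a SHA-256 per file after review, then diff later snapshots against it. This is a minimal sketch that assumes GNU `find`, `sort`, `xargs`, and `sha256sum`. The function names are hypothetical.

```bash
# Hypothetical helpers: snapshot and compare the contents of a skills directory.

# Print "sha256  ./relative/path" for every file, in a stable byte order.
skills_manifest() {
  local dir="$1"
  (cd "$dir" && find . -type f -print0 | LC_ALL=C sort -z | xargs -0 -r sha256sum)
}

# Show added, removed, or modified files relative to a saved baseline.
# Exit status 0 means no drift; 1 means the directory changed.
skills_drift() {
  local dir="$1" baseline="$2"
  skills_manifest "$dir" | diff "$baseline" -
}
```

Usage: `skills_manifest ~/.openclaw/skills > skills.baseline` after review, then `skills_drift ~/.openclaw/skills skills.baseline` on a schedule. Keep the baseline outside the monitored directory and out of the agent's write reach. Otherwise it can be edited along with the skills it is meant to watch.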

What a Good Defender Should Be Able to Answer

After reviewing a VirusTotal OpenClaw case, the result should not be a vague statement like “the package looked fine.”

A stronger answer sounds more like this:

The skill did not match known malicious content in VirusTotal, but it imported remote instructions at runtime, requested shell access through setup guidance, and had potential visibility into local credentials, so it should be treated as high risk until tested in isolation.

Or this:

The skill had a clean reputation footprint and no obvious malicious Code Insight summary, but the host environment exposed browser cookies, cloud credentials, and permissive memory settings, making the system unsafe regardless of the skill’s apparent cleanliness.

That is the right level of thinking because it turns the review from passive scanning into active risk judgment.

The deeper truth is that in agent ecosystems, a “clean” skill can still be operationally unsafe. It may be too privileged. It may be too trusting of remote content. It may rely on human approval paths that are easy to social-engineer. It may run in an environment that is unsafe even if the skill itself is not intentionally malicious.

The public page at seclaw.penligent.ai is positioned as an OpenClaw security audit, not as a generic malware lookup page. That matters because it suggests a different operational goal: not just deciding whether an artifact looks suspicious, but producing a more audit-oriented view of what actually breaks when an AI agent can touch local files, tools, accounts, and permissions. Penligent’s related public writing around OpenClaw has leaned into exactly that framing, focusing on trust boundaries, skill risk, and live-system consequences rather than only on package reputation. (Penligent)

Used this way, the roles are complementary rather than contradictory. VirusTotal gives the ecosystem-level intelligence layer. A platform like Penligent becomes relevant when the team needs validation, scoring, or deeper testing around blast radius, control failure, and actual exploitability in the runtime and host context. That is the part of the workflow where “the package looks suspicious” turns into “here is what the system actually tolerates.” (OpenClaw)

What Security Teams Should Do Now

The safest assumption is that an OpenClaw deployment will eventually encounter malicious input, risky skills, or both. That means the right operational posture is not trust-first. It is containment-first.

Use VirusTotal and Code Insight for every meaningful skill review. Treat SKILL.md as executable guidance, not harmless documentation. Assume that remote content can change after publication. Keep agent runtimes away from sensitive day-to-day identities. Minimize what the host exposes. Monitor the skills directory, process trees, and network egress. Patch runtime issues like CVE-2026-25253 quickly, but do not confuse a patch with marketplace safety. And if a skill matters enough to run in a meaningful environment, review it as though it can inherit the full authority of the agent, because in practice that is often exactly what happens. (OpenClaw)
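Keeping the runtime away from day-to-day identities starts with what the process inherits. One hedged way to approximate that on a workstation is to launch the agent with an empty environment plus a short allowlist. A dedicated home directory also keeps dotfile credentials (cloud CLI configs, SSH keys, browser profiles) out of reach by default. The wrapper and the `AGENT_HOME` variable are hypothetical. This is not a sandbox, and it does nothing about files the process can still open by absolute path.

```bash
# Hypothetical wrapper: run a command with a minimal, allowlisted environment.
# AGENT_HOME is an assumed dedicated home directory for the agent process.
run_scrubbed() {
  local agent_home="${AGENT_HOME:-$HOME/.agent-home}"
  mkdir -p "$agent_home"
  # env -i starts from an empty environment; only the variables listed here survive.
  env -i \
    PATH="/usr/local/bin:/usr/bin:/bin" \
    HOME="$agent_home" \
    LANG="${LANG:-C.UTF-8}" \
    TERM="${TERM:-dumb}" \
    "$@"
}
```

Prefix the command you normally use to start the agent with `run_scrubbed`. Any API key the agent genuinely needs must then be passed explicitly, which is the point: each credential becomes a deliberate grant. Adjust `PATH` if your installation lives elsewhere.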

Final Thought

The most important fact about VirusTotal OpenClaw is not that VirusTotal now scans skills.

It is that the entire ecosystem has admitted, through incident reporting, research, and product changes, that skills are now part of the attack surface.

VirusTotal changed the front door of skill security by bringing a real intelligence and analysis layer into ClawHub. That is necessary. It is not enough. The question that matters most begins after the scan: what the skill can touch, what the runtime will allow, what the host exposes, what persists after execution, and whether the system around the agent is built to survive a bad decision. (VirusTotal Blog)

In the agent era, defenders are not only reviewing code.

They are reviewing delegated authority.

And delegated authority is exactly where simple scanning stops being the whole answer.

Reference Links

VirusTotal, From Automation to Infection, How OpenClaw AI Agent Skills Are Being Weaponized (VirusTotal Blog)

OpenClaw, OpenClaw Partners with VirusTotal for Skill Security (OpenClaw)

Google Threat Intelligence Docs, Code Insight plus OpenClaw Skill Packages (Google Threat Intelligence)

Snyk, ToxicSkills Research on Agent Skill Security (Snyk)

NVD, CVE-2026-25253 (NVD)

Penligent, OpenClaw plus VirusTotal, The Skill Marketplace Just Became a Supply-Chain Boundary (Penligent)

Penligent, CVE-2026-25253 OpenClaw Bug Enables One-Click Remote Code Execution via Malicious Link (Penligent)