Pentest GPT has become two different things at once

Search for pentest gpt today and you immediately run into a naming problem. In one sense, the phrase still points to PentestGPT, the academic system presented at USENIX Security 2024 and maintained as an open-source project. In another sense, it has expanded into a broader market label for any system that combines a large language model with scanners, web tooling, terminal execution, exploit logic, state tracking, and reporting. That split is not semantic trivia. It is the difference between discussing a specific research artifact and discussing an entire product category. The public results currently visible for the term reflect exactly that ambiguity: the official project, research links, explainer pieces from vendors, and newer comparisons that treat “pentest gpt” as shorthand for AI-assisted penetration testing more generally. (GitHub)

That ambiguity matters because it creates bad expectations in both directions. Some readers still imagine a one-prompt system that can “hack for you” without constraints, which is not what the original PentestGPT paper claimed. Others dismiss the whole category as marketing because they have seen too many chat wrappers over legacy scanners. Both reactions miss the engineering reality. A serious Pentest GPT system is neither “just ChatGPT with a hacker prompt” nor a magical autonomous operator that makes human security work obsolete. The better way to frame the category is this: an AI system that sits between target data, security tools, human intent, and evidence collection, and tries to move from raw observations to verified findings with less friction than a purely manual workflow. (אייקידו)

That is also why the phrase keeps attracting clicks. Publicly visible headline patterns around the keyword repeatedly lean on the same reader questions: what Pentest GPT actually is, how AI is changing penetration testing, whether it is autonomous or still human-led, how it compares with alternatives, and which systems move beyond command suggestions into real validation. Those are not arbitrary headline choices. They are the questions practitioners actually have when they try to separate a research prototype, a copilot, a browser-integrated assistant, and a productized validation platform. (אייקידו)

Why the original PentestGPT paper still matters

The original PentestGPT work remains the canonical starting point because it did something many later articles blur out: it stated both the promise וה failure mode of LLM-based pentesting in the same breath. The paper found that large language models were often strong at sub-tasks such as using testing tools, interpreting their outputs, and proposing subsequent actions. But it also found that these models struggled to maintain an integrated understanding of the overall testing scenario as the workflow got longer and more stateful. PentestGPT itself was introduced as a response to that exact limitation, with three self-interacting modules designed to reduce context loss across the engagement. The paper reported a 228.6 percent task-completion improvement over GPT-3.5 on its benchmark targets. (arXiv)

That architecture is still surprisingly modern. The PentestGPT website describes the framework as three modules for הנמקה, generation, ו parsing, and explicitly ties them to strategic planning, command execution, and output analysis. In other words, the project was never “a chatbot that knows security.” It was an attempt to decompose pentesting into loops that an LLM system could manage more reliably than a monolithic prompt. The current site also frames the newer release as an agentic v1.0 direction, with autonomous execution, session persistence, and a Docker-first environment. (PentestGPT)

The current GitHub repository shows how much that prototype has evolved in public. As of March 2026, the repo lists about 12.1k stars, 2.1k forks, and a v1.0.0 latest release dated December 24, 2025. The README now emphasizes an “Agentic Upgrade,” session persistence, Docker-first isolation, support for local LLM routing, and a benchmark runner with a reported 86.5 percent success rate on 90 out of 104 XBOW validation benchmarks in the project’s own validation suite. Those GitHub numbers are maintainer-reported rather than independent third-party evaluation, but they do show something important: PentestGPT is not a dead paper. It has remained a live reference point for how people think about AI-driven offensive workflows. (GitHub)

The project also matters historically because it made the category legible. Before PentestGPT, a lot of public discussion about “AI pentesting” was either vague futurism or isolated scripting demos. The paper gave the field a vocabulary: multi-step workflows, context retention, sub-task specialization, and the idea that the model is not the product by itself. That framing still holds. Today’s better systems differ from the 2024 paper in polish, tooling, and operational safeguards, but they are still wrestling with the same core problem: how to help a model survive a long, branching, evidence-heavy security workflow without getting lost. (arXiv)

What pentest gpt is genuinely good at today

The easiest way to misunderstand Pentest GPT is to ask whether it can “do a pentest” in one giant abstract sense. That question is too coarse. The useful question is which parts of a pentest it can already accelerate in a way that a working engineer would actually care about. The strongest current answers are practical rather than theatrical: AI is already good at compressing noisy outputs, extracting signal from tool results, proposing follow-up commands or payload variants, summarizing what changed between attempts, ו turning scattered observations into a plausible attack narrative. The original PentestGPT research explicitly highlighted strengths in tool use, output interpretation, and next-step proposal. Vendor explainers aimed at practitioners, even when marketing-oriented, also converge on the same point: the model’s real value is in orchestrating and interpreting, not in magically replacing the offensive toolchain. (arXiv)

That matches what serious product vendors are actually shipping. PortSwigger’s current Burp AI documentation does not market Burp as a fully autonomous hacker. It positions Burp AI as an assistant inside Repeater, where it helps analyze HTTP messages, validate findings, generate and send payloads, summarize responses, and capture insights while the tester stays in control. PortSwigger’s public language is careful here, and that care is instructive. Burp AI is described as augmenting expertise, not replacing it, and its features are explicitly disabled unless the user turns them on. That is a strong signal about where the market has found real value: less “AI hacks everything by itself,” more “AI removes friction from the parts humans keep repeating.” (PortSwigger)

This is also where the best pentest-gpt-style workflows now outperform naive chat use. A general chatbot can explain SQL injection, decode an error message, or suggest an nmap flag. A better Pentest GPT system can use the output of one tool to steer the next tool, preserve the state of what has already been tried, keep hypotheses separate from verified evidence, and draft a more coherent report at the end. Even when the AI is not discovering novel bugs from scratch, saving hours of analyst time on triage, path reasoning, and evidence packaging is meaningful value. That kind of acceleration does not make headlines as easily as “autonomous hacking,” but it is much closer to what production teams buy. (אייקידו)

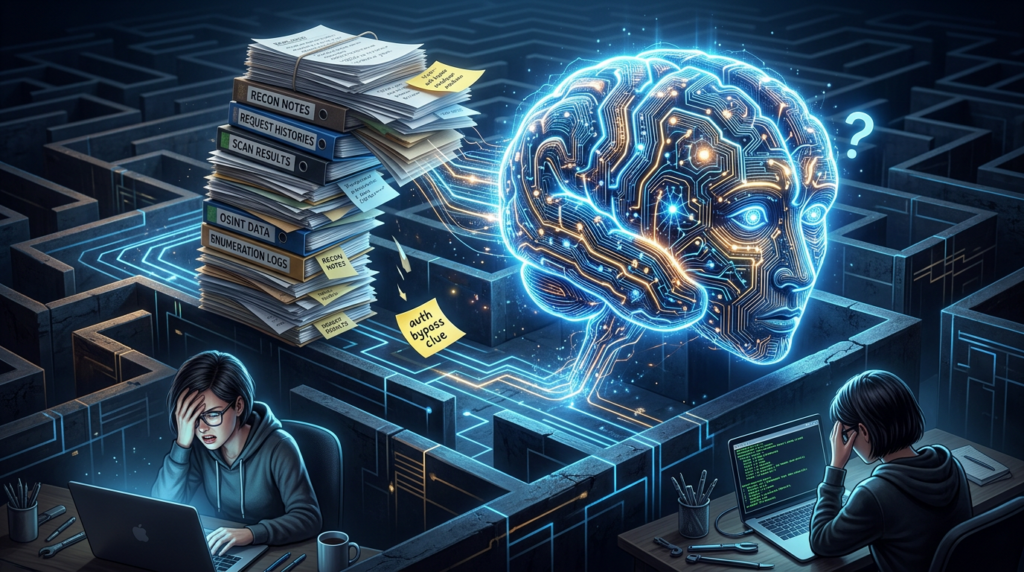

The reason this matters is that real penetration testing is rarely blocked by lack of raw payload creativity alone. It is blocked by state, volume, ו context switching. Engineers lose time moving between scan results, browser traces, notes, terminal output, screenshots, and remediation drafts. Pentest GPT is strongest precisely where it can reduce that switching cost without pretending the hard parts disappeared. The category becomes genuinely useful when it shortens the distance between “I suspect something here” and “I can prove what I saw, explain it, and reproduce it.” (Penligent)

What pentest gpt still gets wrong

The last eighteen months of research have made one point impossible to ignore: fully autonomous end-to-end pentesting remains unstable. PentestEval, a 2025 benchmark that decomposes the workflow into six stages across 346 tasks and 12 realistic vulnerable scenarios, found generally weak performance across stages and reported that end-to-end pipelines reached only a 31 percent success rate. It also noted that existing LLM-powered systems such as PentestGPT, PentestAgent, and VulnBot showed similar limitations, with autonomous agents failing almost entirely in the fully end-to-end setting the benchmark tested. That is not a small caveat. It is the central reality check anyone writing about Pentest GPT in 2026 needs to keep visible. (arXiv)

AutoPenBench reaches a similar conclusion from a different direction. Its published evaluation found that a fully autonomous agent achieved a 21 percent success rate, while a human-assisted architecture reached 64 percent. The lesson is not that the category is fake. The lesson is that the most viable near-term deployment path remains structured human assistance or strong external planning, not unconstrained autonomy. That result lines up with what many practitioners already experience informally: the agent can be surprisingly useful until the workflow becomes long-horizon, branch-heavy, or dependent on subtle environmental cues, at which point the value of a human steering hand rises quickly. (ACL Anthology)

Newer research is getting more specific about why these failures happen. The 2026 paper What Makes a Good LLM Agent for Real-world Penetration Testing? separates failures into two types. Type A failures come from engineering gaps such as missing tools or weak prompting, which can often be fixed. Type B failures persist even with better tooling because they come from planning and state-management limitations. The authors argue that agents lack real-time task difficulty estimation, which causes them to overcommit to low-value branches and burn context before completing the attack chain. Their Excalibur system reports up to 91 percent task completion on CTF benchmarks and compromises 4 of 5 hosts in GOAD versus 2 by prior systems, but even that paper’s contribution is less “we solved it” than “we identified a planning problem that model scaling alone does not fix.” (arXiv)

Another 2025 paper, Automated Penetration Testing with LLM Agents and Classical Planning, makes a similar point from yet another angle. It argues that fully hands-off execution remains a significant challenge and proposes a Planner-Executor-Perceptor pattern, with a CHECKMATE framework that uses external structured planning to offset LLM weaknesses in long-horizon reasoning, tool use, and stability. The paper reports that CHECKMATE improved benchmark success over a strong baseline and cut time and cost materially. Read together, the benchmarks and design papers all point in the same direction: Pentest GPT is real, but its ceiling is far more dependent on workflow structure, tool discipline, and external planning than on raw model cleverness alone. (arXiv)

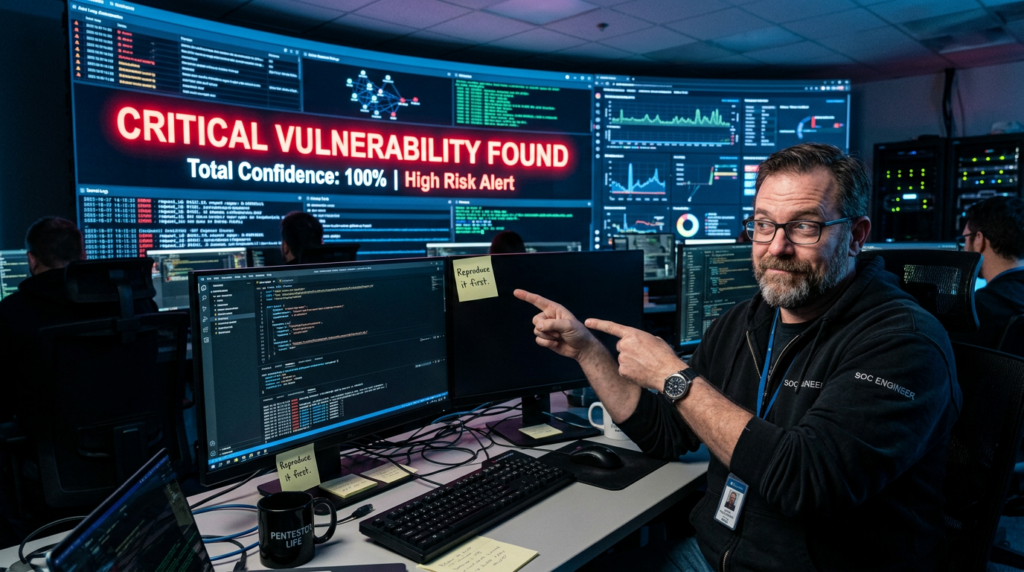

There is also a more mundane failure mode that matters in practice: hallucinated confidence. Security teams do not need an AI that sounds plausible; they need an AI that can distinguish between a hypothesis, a partial signal, a confirmed condition, and a reproduced impact. Vendor educational content aimed at engineers now frequently acknowledges hallucinations, stale knowledge, and context gaps as core constraints, which is telling. The industry has largely moved past pretending these problems do not exist. The serious conversation is now about how to engineer around them. (אייקידו)

The benchmark story is not anti-AI, it is anti-sloppiness

It is tempting to read the weaker benchmark results and conclude that Pentest GPT is overhyped. That is not the strongest reading of the evidence. A better reading is that the category is already useful, but only when it is embedded in a disciplined system that does not ask the model to do what it is still bad at. The original paper showed measurable gains over raw models. AutoPenBench showed hybrid workflows beating autonomous ones by a wide margin. PentestEval showed stage-level weakness and low end-to-end success. Excalibur and CHECKMATE showed that better planning and external structure meaningfully improve results. That is not the story of a fake category. It is the story of a field moving from demos to engineering. (arXiv)

There is also important nuance in the broader research landscape. In early 2024, LLM Agents can Autonomously Hack Websites showed that frontier model agents could carry out meaningful website attacks such as SQL injection and blind schema extraction in specific research conditions, and even find vulnerabilities in the wild. That paper mattered because it proved the offensive capability question could not be dismissed out of hand. But later benchmarking work clarified the other half of the truth: isolated capability demos do not automatically translate into production-stable, repeatable, broad-coverage pentesting systems. Both facts can be true at once, and serious engineering starts when you hold them together instead of picking one. (arXiv)

This is the point many shallow “AI pentest” articles miss. They either oversell the frontier-model case study and imply the rest is solved, or they overreact to benchmark weakness and imply the category has no present value. Neither is accurate. Pentest GPT is already changing workflows, but mostly by making existing workflows faster, more structured, and more evidence-rich. It is not yet a universal substitute for a seasoned tester who can reason about intent, edge cases, business logic, risk trade-offs, and the consequences of taking the wrong action at the wrong time. (arXiv)

A serious pentest gpt stack is a system, not a model

Once you stop treating the model as the product, the architecture of a serious pentest-gpt-style system becomes much clearer. The OpenAI agent-building guidance defines an agent as an AI system with הוראות, guardrails, ו access to tools, and describes modern agentic stacks in terms of models, tools, state or memory, and orchestration. The practical guide adds that reliable agents depend on clear tool definitions, suitable orchestration patterns, and guardrails for safe production behavior. Whether or not you build on OpenAI specifically, that framing maps directly onto what offensive-security systems need: a reasoning layer, explicit tool boundaries, action gating, and persistent state that survives beyond a single chat turn. (OpenAI Developers)

PentestGPT’s own public description now looks very much like an early version of that general pattern. The current site describes reasoning, generation, and parsing modules; the GitHub project emphasizes agentic execution, session persistence, and model routing for different types of work such as reasoning-heavy or long-context tasks. In other words, the project has evolved from “LLM for pentesting” toward “workflow system with specialized paths and persistent state.” That evolution is exactly what the field as a whole has been learning. A pentest model without tools is a clever assistant. A pentest system with tools but no state becomes forgetful. A pentest system with tools and state but no guardrails becomes unsafe. A serious platform needs all three. (PentestGPT)

PortSwigger’s Burp AI shows the same lesson from a different angle. Burp AI is embedded inside an existing professional workflow rather than pretending to be a whole autonomous platform. It is on-demand, tightly scoped, user-controlled, and privacy-bounded. PortSwigger says Burp AI helps analyze, understand, and test HTTP messages, but keeps the tester in control. It also documents that AI-related data is handled within its security framework, that provider-side storage is not retained, and that the feature can be disabled entirely. This is what maturity looks like: not fewer constraints, but better-defined ones. (PortSwigger)

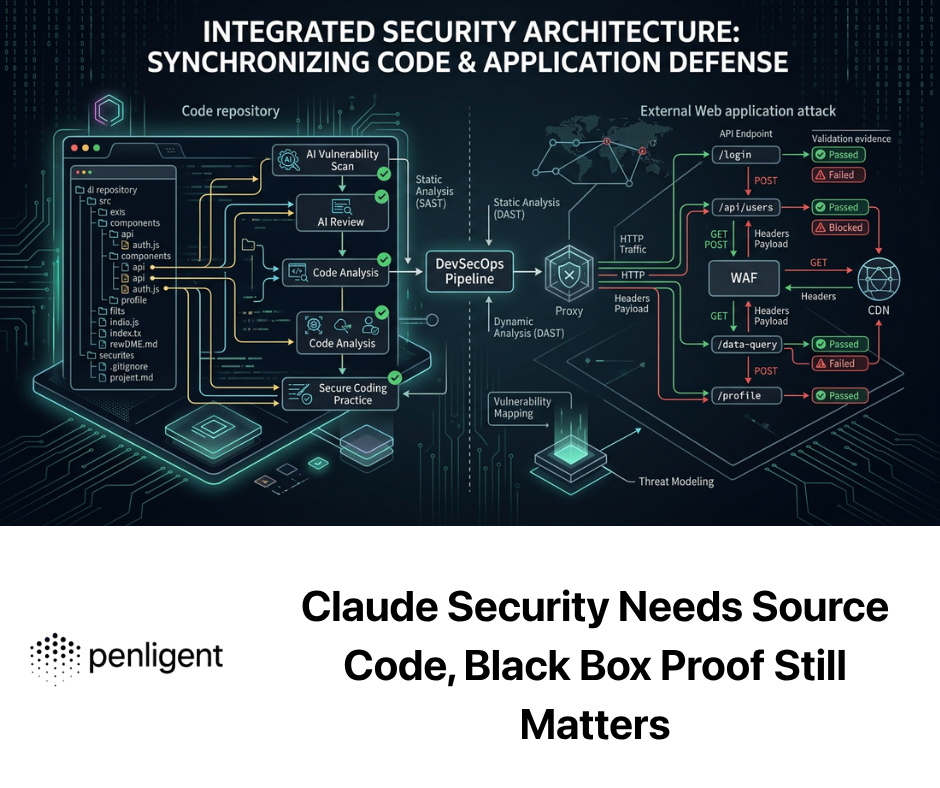

From an engineering perspective, a production-worthy pentest-gpt-style system usually needs at least seven layers. It needs a model layer for reasoning. It needs a tool layer for web, network, and validation actions. It needs a state layer to remember what has already been seen and tried. It needs an orchestration layer to decide what happens next and when to stop. It needs a guardrail layer to restrict destructive or out-of-scope actions. It needs an evidence layer that stores raw outputs and timestamps. And it needs a report layer that transforms evidence into something defenders, auditors, and engineering leads can act on. Remove any one of those, and the system starts drifting back toward either a scanner or a chatbot. (OpenAI)

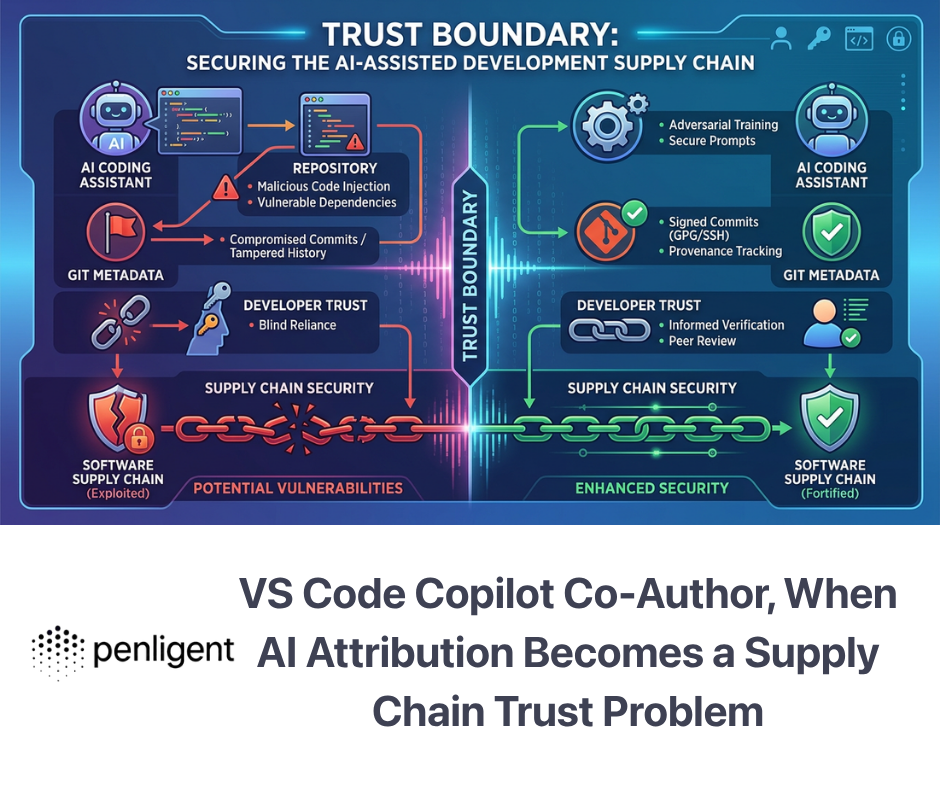

That is also why so many weak market claims collapse under scrutiny. A system that can explain a response body is useful, but that is not the same as maintaining an engagement. A system that can launch a tool is useful, but that is not the same as understanding whether the result changed the hypothesis. A system that can draft a report is useful, but that is not the same as carrying an auditable evidence chain from first observation to final finding. The strongest current distinction in the category is not AI versus non-AI. It is suggestion versus validation. (Penligent)

Three categories are hiding inside the market

A lot of confusion disappears once you separate the current market into three practical classes.

| קטגוריה | What it mainly does | Where it helps most | Where it usually breaks |

|---|---|---|---|

| AI explainer or copilot | Interprets requests, responses, logs, or scan output | Faster analysis, payload drafting, note-taking, triage | Weak long-horizon memory, no independent proof |

| AI-assisted pentest workflow | Chains tools, tracks more state, suggests next steps, helps validate hypotheses | Recon-to-validation acceleration, attack-path reasoning, repeatable analyst workflows | Still brittle on complex multi-step paths without human steering |

| Evidence-driven validation platform | Adds explicit controls, artifact capture, reproducibility, reporting, and often human gating | Real engagements, compliance-sensitive environments, repeat testing, stakeholder reporting | Harder to build, more operational overhead, narrower safety envelope by necessity |

This table is a synthesis, but it mirrors what the public sources are actually describing. PentestGPT and related research established the workflow problem. Burp AI exemplifies the copilot class. The benchmark literature keeps rewarding systems with more structure and more human or external-planning support rather than unconstrained autonomy. That pattern is why the category is becoming more useful even while “fully autonomous pentesting” remains an overstatement in many real-world contexts. (arXiv)

What security engineers should demand before trusting pentest gpt

Before asking whether a Pentest GPT is “good,” it is better to ask what evidence it can leave behind. NIST SP 800-115 still offers the right starting frame: technical security testing needs planning, execution, analysis, and mitigation-oriented reporting. That sounds obvious, but it becomes more important, not less, once AI enters the workflow. The model can accelerate execution and help with analysis, but the rules of engagement, authorization boundaries, and success criteria still have to be set by humans. A pentest agent without those boundaries is not advanced. It is undisciplined. (NIST)

For web targets, OWASP’s Web Security Testing Guide remains the best coverage map because it forces the work back onto recognizable test domains: identity, authentication, authorization, session management, input validation, business logic, client-side behavior, and APIs. One of the easiest ways to spot weak AI pentest marketing is that it talks about “finding vulnerabilities” in a vague blob. Serious systems should be mappable to recognizable testing domains and repeatable workflows. If the tool cannot tell you whether it has meaningfully exercised authorization logic versus just replaying obvious payloads, you do not really know what it has tested. (OWASP)

MITRE ATT&CK still matters here, not because ATT&CK turns a pentest into a better pentest by itself, but because it helps connect offensive activity to defender visibility. MITRE describes ATT&CK as a globally accessible knowledge base of adversary tactics and techniques based on real-world observations. For a modern pentest-gpt-style system, ATT&CK mapping is useful when it turns scattered technical activity into operationally meaningful reporting. A finding should not end at “high severity.” It should help defenders understand which behaviors, tactics, or technique families were exercised and what evidence was collected. (MITRE ATT&CK)

The AI layer itself also needs its own risk model. OWASP’s current material on LLM and agentic application security highlights prompt injection and the broader class of risks that emerge when models can plan, retrieve, and act across tools. That is directly relevant to Pentest GPT systems because the target environment is often adversarial by definition. A mature offensive agent must assume that some of what it reads from targets, logs, HTML, JavaScript, or attached documents may be hostile not just to the target system but to the agent’s reasoning process. The moment a pentest agent can browse, retrieve, execute, or hand off actions, prompt-injection resistance stops being a theoretical appendix and becomes part of operational safety. (פרויקט אבטחת AI של OWASP Gen)

In practice, that means the right buying or building questions are simple and severe. Can the system scope actions to authorized assets. Can it log every meaningful action and raw output. Can it distinguish proposed actions from executed ones. Can it preserve artifacts independently of the model’s summary. Can it stop, pause, or require approval before higher-risk actions. Can it keep target data inside an acceptable trust boundary. Can it re-run or replay the exact validation path later. Those questions are much more predictive of real value than “which frontier model does it use.” (OpenAI)

Pentest gpt works best when it is tied to the engagement lifecycle

The most productive way to use Pentest GPT is still phase-based. During reconnaissance, the model is valuable for turning broad, noisy inputs into a narrower plan: which hosts look most promising, which ports or routes deserve deeper inspection, which API surface appears authenticated versus public, which obvious dead ends can be deprioritized. During vulnerability analysis, it becomes useful as a synthesizer: it can connect weak signals into a plausible chain and suggest what evidence would falsify or confirm that chain. During validation, it helps by drafting clean follow-up requests, replaying variants, comparing responses, and packaging the proof. During reporting, it converts raw evidence into a form another engineer can reproduce. That is a far more grounded lifecycle than the popular image of one-shot autonomous exploitation. (Penligent)

The five-phase view referenced in current Pentest GPT writing is still useful because it exposes where systems really fail. Recon is often manageable. Scanning and initial interpretation are manageable. The hard middle is where many systems stall: maintaining context across pivots, noticing that one partial success changes the relevance of earlier observations, and resisting the urge to keep hammering a dead path because the model remains superficially confident. This is exactly why structured planning papers and stage-level benchmarks matter so much. They are not academic overhead. They are maps of where workflow fragility actually lives. (Penligent)

A practical Pentest GPT implementation should therefore behave more like a disciplined engagement manager than a stunt demo. It should ask, in effect: what do I know, what do I only suspect, what evidence would close the gap, and which tool is the least risky way to get that evidence. That posture sounds conservative, but in real environments it is exactly what makes AI acceleration usable. Speed without state discipline produces noise. Speed with state discipline produces throughput. (OpenAI)

Here is a simple example of the kind of safe, auditable evidence capture pattern a serious system should prefer on authorized targets. The point is not the command itself. The point is that the command, parameters, output, and storage path are all treated as evidence.

# Authorized targets only.

# Create a timestamped evidence folder and capture non-destructive recon output.

export TARGET="example.internal"

export RUN_ID="$(date -u +%Y%m%dT%H%M%SZ)"

mkdir -p "evidence/$RUN_ID"

# Inventory-style service detection

nmap -sV -oN "evidence/$RUN_ID/nmap_services.txt" "$TARGET"

# Basic HTTP header capture for patch and exposure validation

curl -skI "https://$TARGET" > "evidence/$RUN_ID/http_headers.txt"

# Record a minimal operator note

printf "Target=%s\nRunID=%s\nMode=non-destructive\n" "$TARGET" "$RUN_ID" \

> "evidence/$RUN_ID/run_metadata.txt"

A model can draft this pattern, but the real value comes from the surrounding discipline. The raw nmap output is preserved. The headers are preserved. The run metadata is preserved. A later report can cite those artifacts as the source of truth instead of relying on the model’s memory of what happened. This is the difference between “AI summarized a test” and “AI participated in a defensible technical assessment.” (NIST)

The CVEs that matter around pentest gpt, part one, the stack itself

One mistake in this space is to write about Pentest GPT as though only the יעד can be vulnerable. In practice, the Pentest GPT stack itself often includes exactly the kinds of components that keep producing serious vulnerabilities: agent UIs, orchestration layers, self-hosted model frontends, workflow engines, browser-facing admin panels, document retrieval endpoints, and low-code automation runtimes. That means the system doing the testing may itself expand the attack surface if it is deployed carelessly. This is not hypothetical. The 2025 and 2026 advisory stream around adjacent AI and agent tools makes the point very clearly. (GitHub)

Langflow CVE-2025-3248 is a good example. GitHub’s advisory describes versions prior to 1.3.0 as susceptible to code injection in the /api/v1/validate/code endpoint, allowing a remote unauthenticated attacker to execute arbitrary code. The NVD record says the same thing. Whether or not your own Pentest GPT stack uses Langflow specifically, the lesson is broader: if your orchestration layer includes code-validation or dynamic execution features, it must be threat-modeled like any other high-risk service, not treated as a harmless “AI workflow” convenience. (GitHub)

Open WebUI offers another cautionary case. Its December 2025 advisory GHSA-c6xv-rcvw-v685 describes an SSRF issue in /api/v1/retrieval/process/web that allows an authenticated user to force the server to request arbitrary URLs, potentially reaching cloud metadata endpoints, internal networks, and internal services behind firewalls. In November 2025, a separate advisory described a stored DOM XSS path that could be leveraged into account takeover and even server-side RCE chains in certain admin workflows. These are exactly the kinds of flaws that become more dangerous when organizations rapidly assemble model-facing admin surfaces and retrieval pipelines around sensitive environments. (GitHub)

Then there is n8n CVE-2025-68613, which matters well beyond the n8n ecosystem because it sits at the intersection of workflow automation and AI integration. NVD describes a critical remote code execution vulnerability in the workflow expression evaluation system affecting versions before the fixed releases, and GitHub’s later advisory lists a CVSS 9.4 severity for the expression-escape issue. CISA added the issue to its Known Exploited Vulnerabilities workflow in March 2026. The larger lesson is uncomfortable but important: once AI security workflows start depending on general automation engines, the vulnerability history of those automation engines becomes part of your offensive-security risk surface too. (NVD)

A Pentest GPT platform, then, has to be secured twice. First, it must help assess the target. Second, it must defend its own orchestrators, retrieval connectors, browser surfaces, storage layers, and privileged execution paths. That is why prompt injection, SSRF, XSS, credential exposure, and over-permissioned service boundaries all matter so much here. A system that can reach the internet, read artifacts, and propose actions is useful precisely because it is powerful. That same power makes sloppy deployment far more expensive. (פרויקט אבטחת AI של OWASP Gen)

The CVEs that matter around pentest gpt, part two, the current enterprise cases it should help validate

The other CVEs that matter are the ones security teams are actually being forced to prioritize right now. This is where Pentest GPT becomes practically useful again, because the value is not “AI discovered a famous CVE exists.” The value is that AI can help turn a late-breaking advisory into a fast, organized, non-destructive validation workflow on authorized assets. That kind of workflow is especially valuable when the vulnerability is already in or near CISA KEV, when the environment is large, or when the affected products sit in control-plane, backup, or collaboration infrastructure. (CISA)

CVE-2026-20127 is a strong example. Cisco’s description says the issue in Cisco Catalyst SD-WAN Controller and Manager could allow an unauthenticated remote attacker to bypass authentication and obtain administrative privileges on an affected system. CISA also tied the issue to its known exploited vulnerability response and released guidance around ongoing exploitation. For a Pentest GPT workflow, the immediate value is not in automating exploitation details. It is in rapidly fingerprinting exposure, identifying affected control-plane nodes, validating patch level or configuration state, and preserving that evidence cleanly for operations teams. (NVD)

CVE-2026-20963 in Microsoft SharePoint is another case where the workflow matters more than the slogan. NVD describes it as a ביטול סידור נתונים שאינם מהימנים vulnerability that allows an authorized attacker to execute code over a network, and CISA added it to the KEV Catalog in mid-March 2026 based on evidence of active exploitation. In a real environment, the urgent work is usually not “can the AI invent a flashy payload.” It is “which SharePoint instances are exposed, which authentication paths and privilege boundaries are relevant, which patches are applied, and what evidence do we have for remediation sequencing.” That is a perfect fit for AI-assisted triage and evidence packaging. (NVD)

CVE-2026-22719 in VMware Aria Operations belongs in the same conversation. Broadcom’s advisory describes a command injection issue that may allow an unauthenticated actor to execute arbitrary commands during support-assisted migration and lists a maximum CVSS 8.1. The advisory also notes awareness of reports of possible exploitation in the wild, and CISA’s news stream points to the issue’s KEV relevance. For a Pentest GPT workflow, this is a reminder that the most valuable validations often happen around management and observability planes, not just internet-facing web apps. These are the systems where clean evidence, reproducibility, and low-friction triage matter because the blast radius is operationally large. (Support Portal)

A mature Pentest GPT system should therefore be able to move quickly on fresh, high-impact vulnerabilities in a very specific way. It should gather version and topology evidence, align what it sees against vendor and KEV guidance, help generate a bounded validation plan, store the raw artifacts, and make it obvious which claims are confirmed, which are suspected, and which still require manual follow-up. That is not glamorous. It is exactly what security engineering teams need. (NIST)

| CVE | מדוע זה חשוב במקרה זה | What a safe Pentest GPT workflow should focus on |

|---|---|---|

| CVE-2025-3248, Langflow | Agent-orchestration stack risk | Identify exposed Langflow instances, verify version, isolate risky endpoints, preserve evidence |

| CVE-2025-68613, n8n | Workflow engine risk in AI-heavy environments | Confirm version, review expression exposure, document patch state and trust boundaries |

| CVE-2026-20127, Cisco SD-WAN | Control-plane compromise risk | Fingerprint affected nodes, verify patch status, capture management-plane evidence |

| CVE-2026-20963, SharePoint | Active exploitation and enterprise blast radius | Map exposure, privilege requirements, patch status, and remediation priority |

| CVE-2026-22719, VMware Aria Operations | Management-plane RCE path | Identify affected deployments, migration context, fixed versions, and compensating controls |

The point of this matrix is not to turn Pentest GPT into a CVE search engine. It is to show where the category becomes operationally valuable: helping teams validate, document, and prioritize real exposure while keeping the record defensible. (GitHub)

Burp AI shows where augmentation is already winning

Burp AI deserves attention in any serious Pentest GPT article because it captures the most commercially credible pattern in the market today. PortSwigger is not claiming that Burp AI has replaced the tester. It describes Burp AI as an on-demand assistant in Repeater that can validate suspected findings, automate routine steps, explore payload variations, and turn insight into reusable notes while the operator remains in control. That language is more than positioning. It reflects where real trust is forming: not around autonomous theater, but around tools that shorten the time from suspicion to evidence without obscuring the operator’s visibility. (PortSwigger)

The privacy and control posture is equally instructive. PortSwigger documents that Burp AI features are disabled by default for extensions, that AI request data is handled within its security framework, that provider-side storage is not retained, and that organizations can fully disable AI in settings. It also states that current model providers include OpenAI and Anthropic. Whether you use Burp or not, these are the kinds of questions any Pentest GPT system should answer plainly: what data leaves the box, who can retain it, what audit trail exists, and who can turn the feature off. (PortSwigger)

That matters because the most productive long-term shape of Pentest GPT may not be one monolithic autonomous agent. It may be a set of increasingly capable assistants embedded inside offensive workflows that already have trusted interfaces, strong operator models, and mature evidence practices. In that world, “Pentest GPT” becomes less about anthropomorphizing the model and more about compressing the analyst loop while keeping proof and control where they belong. (PortSwigger)

What a good Pentest GPT deliverable should look like

A good Pentest GPT output should read less like an enthusiastic chat transcript and more like a structured technical case file. At minimum, it should separate הקשר, observations, hypothesis, validation steps, raw evidence, השפעה, ו remediation. It should include the commands or requests used, the timestamps of execution, the exact artifacts produced, and the confidence level of each conclusion. Most importantly, the raw evidence should remain accessible independently of the AI’s summary. The summary is for speed. The artifacts are for trust. (NIST)

That output should also map cleanly to engineering and defensive workflows. Where relevant, findings should map to OWASP WSTG areas for web coverage and to MITRE ATT&CK where attacker behavior patterns are useful for blue-team correlation. Remediation text should distinguish between immediate containment, durable fix, and retest criteria. This sounds more mundane than “AI found a critical bug,” but it is much closer to how useful security work survives handoff across teams. (OWASP)

A simple structured finding schema can look like this:

{

"target": "app.example.internal",

"finding_id": "PTGPT-2026-0007",

"title": "Broken access control in order export endpoint",

"status": "validated",

"confidence": "high",

"wstg_area": "Authorization Testing",

"attack_mapping": ["T1190", "T1078"],

"evidence": [

"evidence/20260319T021500Z/http_headers.txt",

"evidence/20260319T021500Z/repeater_order_export_diff.txt"

],

"validation_summary": "Authenticated low-privilege request could access another user's export data after identifier substitution.",

"raw_steps": [

"Captured baseline request and response",

"Replayed request with alternate object identifier on authorized test asset",

"Observed cross-user data exposure and preserved response diff"

],

"impact": "Unauthorized access to another user's order data",

"remediation": "Enforce object-level authorization on export handler and add regression tests for cross-tenant identifier substitution",

"retest_required": true

}

The key point is not the JSON shape itself. The key point is that a Pentest GPT system should make it easy to preserve the chain from input to proof. That is where AI becomes operationally valuable: not by sounding smart about a bug class, but by leaving behind enough structure that another engineer can reproduce, fix, and verify the issue without guessing what the model meant. (OpenAI)

The useful fit is in the gap between LLM reasoning ו evidence-driven validation. Penligent’s recent public writing around Pentest GPT, AI pentest tools, and model selection keeps circling the same core idea: the real dividing line is no longer whether a product uses a model, but whether it can move from signal to reproducible proof in a workflow that preserves context, validates impact, and produces stakeholder-ready output. That is the right problem to focus on. (Penligent)

That framing also avoids one of the category’s biggest traps. It does not require claiming that a single system has solved every class of pentesting problem. It simply asks for something stricter and more useful: can the platform find exposures, test them responsibly, retain the evidence, and make retesting and reporting materially easier. In a market crowded with scanner-plus-chat experiences, that is a more credible and more durable value proposition than pretending the hard parts of security work disappeared. (Penligent)

Pentest GPT is real, but the proof standard has changed

The strongest conclusion in 2026 is not that Pentest GPT has arrived as a perfect autonomous pentester. It has not. The strongest conclusion is that the category is now real enough that its strengths and weaknesses are concrete. The strengths are now obvious: triage, synthesis, path reasoning, validation support, and faster evidence packaging. The weaknesses are now equally obvious: context drift, brittle long-horizon planning, hallucinated confidence, and uneven autonomy under complex conditions. Research papers, benchmark suites, and serious vendor documentation all point to the same practical answer: Pentest GPT works best when it is embedded in a structured workflow with tools, state, guardrails, and evidence capture. (arXiv)

That is also why the phrase remains worth taking seriously. Not because it names a magic capability, but because it names a genuine transition in security engineering. Penetration testing is moving from isolated manual labor and disconnected scanners toward systems that can preserve more context, accelerate more of the repetitive loop, and leave a better record behind. The winning systems will not be the ones that sound the most autonomous. They will be the ones that make verified risk easier to find, easier to prove, and easier to fix. (Penligent)

לקריאה נוספת

Authoritative external sources: the original PentestGPT paper and USENIX presentation remain the canonical references for the category’s research foundation. NIST SP 800-115 still provides the best governance frame for technical assessment work. OWASP WSTG remains the strongest public map for web testing coverage. MITRE ATT&CK remains useful for translating findings into defender-relevant behavior. OWASP’s GenAI and Agentic guidance is increasingly relevant for securing the testing stack itself. Burp AI documentation is one of the clearest public examples of how serious vendors are positioning AI as augmentation inside a trusted workflow. (USENIX)

Recent Penligent reading worth linking internally: Pentest GPT, What It Is, What It Gets Right, and Where AI Pentesting Still Breaks; PentestGPT vs. Penligent AI in Real Engagements From LLM Writes Commands to Verified Findings; Pentest AI Tools in 2026, What Actually Works, What Breaks; and כלי בדיקת חדירות מבוסס בינה מלאכותית: איך תיראה תקיפה אוטומטית אמיתית בשנת 2026. Those pieces sit close to this article’s core theme and are natural internal companions. (Penligent)