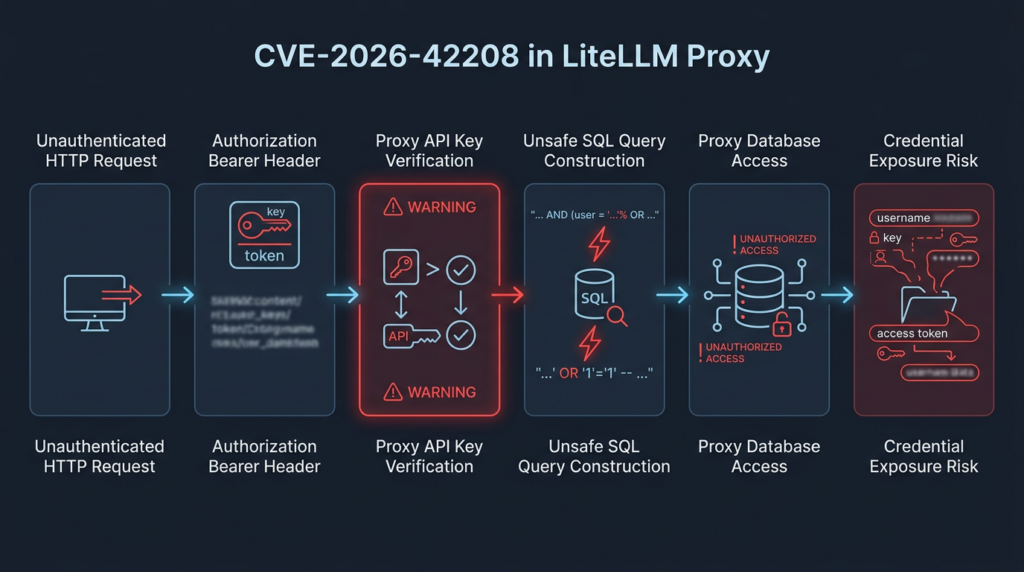

CVE-2026-42208 is not just another SQL injection advisory in a Python package. It is a critical pre-authentication SQL injection in LiteLLM Proxy, the gateway mode many teams use to route requests across LLM providers, manage virtual keys, enforce budgets, and centralize access to upstream model credentials. The official GitHub advisory tracks it as GHSA-r75f-5x8p-qvmc, marks it Critical with a CVSS v4 score of 9.3, lists the weakness as CWE-89, and states that affected versions are >=1.81.16, <1.83.7, with the fix available in 1.83.7 and later. LiteLLM’s own security update recommends upgrading to v1.83.10-stable. (GitHub)

The dangerous part is the location of the bug. The vulnerable code path sits in LiteLLM Proxy’s API key verification flow. In affected versions, a database query used during proxy API key checks mixed the caller-supplied key value into the SQL query text instead of passing that value as a separate parameter. An unauthenticated attacker who could reach an LLM API route, such as POST /chat/completions, could send a crafted Authorization header and reach the vulnerable query through the proxy’s error-handling path. The advisory says that an attacker could read data from the proxy database and may be able to modify it, leading to unauthorized access to the proxy and the credentials it manages. (GitHub)

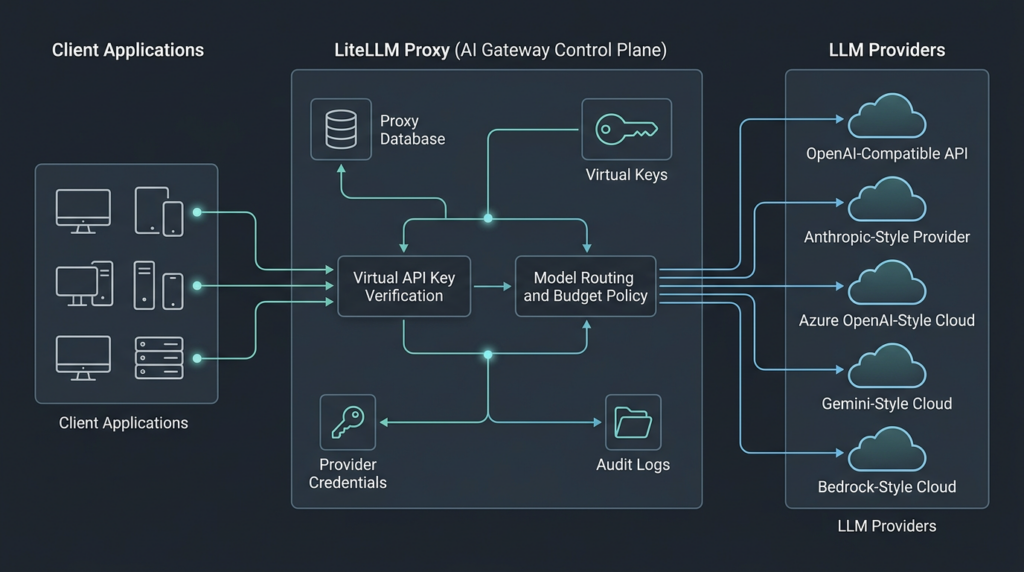

That last phrase is the part defenders should not skip. LiteLLM is commonly deployed as an AI gateway, not as a cosmetic library. Its public repository describes it as an open-source AI Gateway that provides a unified interface to more than 100 LLM providers, including OpenAI, Anthropic, Gemini, Bedrock, Azure, Vertex AI, Cohere, Hugging Face, vLLM, NVIDIA NIM, and others, using OpenAI-compatible or native formats. LiteLLM Proxy is documented as an OpenAI-compatible LLM gateway for model access, spend tracking, virtual keys, budgets, and rate limits. (GitHub)

A SQL injection in this layer can therefore become a credential-plane incident. If the gateway database stores virtual API keys, provider credentials, model routing configuration, environment-derived settings, or tenant access metadata, then database access is not merely an application data breach. It may become access to the systems that decide who can call models, which provider keys are used, what budgets apply, and how traffic moves through the AI stack.

The short version security teams need first

| Question | Answer |
|---|---|
| CVE | CVE-2026-42208 |
| Advisory | GHSA-r75f-5x8p-qvmc |
| Affected component | LiteLLM Proxy API key verification path |
| Weakness | CWE-89, SQL Injection |
| Severity | Critical, CVSS v4 9.3 in GitHub advisory |
| Affected versions | >=1.81.16, <1.83.7 |
| Patched versions | >=1.83.7 |
| Recommended version | LiteLLM recommends v1.83.10-stable |
| Authentication required | No |
| Typical reachable route | Any LLM API route that triggers proxy key verification, such as /chat/completions |
| Main risk | Reading or modifying the proxy database, including data that may enable unauthorized access to the proxy and managed credentials |
| Emergency workaround | Set disable_error_logs: true under general_settings if immediate upgrade is not possible |
| Proper fix | Upgrade, rotate secrets, review database and reverse proxy logs, and reduce network exposure |

The official advisory is precise about the vulnerable pattern: the caller-supplied API key value was mixed into the query text rather than passed separately as a parameter. LiteLLM’s security update says the issue was reported through its bug bounty program, fixed in a stable release before publication of the GitHub Security Advisory, and affects versions v1.81.16 through v1.83.6. (GitHub)

Sysdig’s threat research added the operational context. It reported targeted exploitation attempts shortly after disclosure, including enumeration of production LiteLLM schema objects associated with virtual API keys, stored provider credentials, and environment-variable configuration. Sysdig also emphasized that it did not observe confirmed follow-through such as authenticated calls using exfiltrated keys, virtual-key minting through /key/generate, or chained reuse of provider credentials. That distinction matters: the public record supports targeted exploitation attempts and high-confidence risk, not a blanket claim that every exposed instance was successfully compromised. (Sysdig)

Why LiteLLM changes the SQL injection blast radius

SQL injection has been a known class of vulnerability for decades. MITRE defines CWE-89 as improper neutralization of special elements used in an SQL command. OWASP’s guidance remains consistent: SQL injection is best prevented through prepared statements and parameterized queries, where the SQL statement is parsed separately from the user-supplied values. (CWE)

What makes CVE-2026-42208 different is not the underlying weakness. It is the asset behind the weakness.

A traditional web application SQL injection might expose users, orders, sessions, product records, messages, or administrative data. Those are serious. But an AI gateway SQL injection may expose something more operationally powerful: the keys, routing rules, budgets, provider credentials, and virtual authorization layer that control model access across an organization.

LiteLLM Proxy often sits between applications and upstream model providers. Client services send OpenAI-compatible requests to the proxy. The proxy verifies the incoming key, applies access policy, selects a configured model route, and forwards the request to an upstream provider. In that architecture, the proxy is both a traffic broker and a trust boundary. Its database is not a passive data store. It helps decide whether a request should exist at all.

That is why the phrase “proxy API key checks” should make defenders pay attention. If authentication checks are themselves injectable, then an unauthenticated attacker is interacting with the trust boundary before the system has decided whether the attacker is trusted.

How the vulnerable path works

The public advisory does not require a complex exploit chain to understand the bug. The issue is a classic query construction mistake in a sensitive path.

A safe authentication check should treat the incoming Bearer token as data. The application should build a query with placeholders and bind the token as a parameter. The database engine should never interpret characters inside the token as SQL syntax.

The dangerous pattern looks like this in simplified pseudocode:

```python
# Unsafe pseudocode for explanation only.
# Do not build SQL queries this way.
bearer_value = request.headers.get("Authorization", "").replace("Bearer ", "")

query = (
    "SELECT * FROM LiteLLM_VerificationToken "
    "WHERE token = '" + bearer_value + "'"
)

row = db.execute(query)
```

The safe pattern separates SQL structure from caller-controlled data:

```python
# Safer pseudocode.
# The SQL text and the value are sent separately.
bearer_value = request.headers.get("Authorization", "").replace("Bearer ", "")

query = """
SELECT *
FROM LiteLLM_VerificationToken
WHERE token = $1
"""

row = db.execute(query, [bearer_value])
```

The exact implementation details belong to the project maintainers, but the security principle is the same across languages and database clients. Prepared statements and parameterized queries prevent the database from treating attacker-controlled input as executable SQL. OWASP’s Query Parameterization Cheat Sheet describes this as the primary SQL injection defense pattern. (OWASP Cheat Sheet Series)
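The difference between the two patterns can be reproduced in a few lines. The sketch below uses Python's built-in sqlite3 module and a made-up `verification_token` table; it is not LiteLLM's schema, driver, or code, only a minimal illustration of string concatenation versus parameter binding. The payload is the generic textbook tautology, not anything specific to this CVE.

```python
import sqlite3


def build_demo_db():
    """Create an in-memory database with one stored token (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE verification_token (token TEXT, owner TEXT)")
    conn.execute("INSERT INTO verification_token VALUES ('sk-valid-123', 'team-a')")
    return conn


def lookup_unsafe(conn, bearer_value):
    # Vulnerable: the caller-supplied value becomes part of the SQL text.
    query = "SELECT owner FROM verification_token WHERE token = '" + bearer_value + "'"
    return conn.execute(query).fetchall()


def lookup_safe(conn, bearer_value):
    # Parameterized: the value is bound separately and never parsed as SQL.
    query = "SELECT owner FROM verification_token WHERE token = ?"
    return conn.execute(query, (bearer_value,)).fetchall()


conn = build_demo_db()
payload = "' OR '1'='1"                      # generic tautology, for illustration
unsafe_rows = lookup_unsafe(conn, payload)   # matches a row without a valid token
safe_rows = lookup_safe(conn, payload)       # matches nothing
```

With concatenation, the quote in the payload closes the string literal and the rest is parsed as SQL, so the lookup returns a row even though no valid token was supplied. With binding, the same bytes are compared as a literal token value and nothing matches.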

In CVE-2026-42208, the vulnerable input source is especially sensitive because it is not an ordinary form field. It is the Authorization: Bearer header. That header is supposed to carry proof of authorization. In affected versions, crafted input in that location could reach a vulnerable database query before authentication had completed. GitHub’s advisory says the issue can be reached through the proxy’s error-handling path by sending a specially crafted Authorization header to an LLM API route. (GitHub)

Why pre-auth changes prioritization

Pre-auth vulnerabilities deserve a different response tempo from post-auth bugs. A post-auth SQL injection still matters, especially in multi-tenant systems, but it usually requires an attacker to possess some kind of account, API key, session, or privileged workflow. A pre-auth SQL injection can be attempted by anyone who can reach the vulnerable route.

For LiteLLM Proxy, that means the exposure question is simple and uncomfortable:

Can an untrusted client reach the LiteLLM Proxy API port?

If the answer is yes and the version falls in the affected range, the instance should be treated as urgent. Sysdig wrote that the injection runs in the auth-check itself and that any HTTP client able to reach the proxy port is sufficient. Its observed attack traffic targeted /chat/completions and sometimes /v1/chat/completions, with malicious indicators in the Authorization header. (Sysdig)

The mistake many teams make with internal gateways is assuming that “internal” means “trusted.” In modern environments, internal reachability often means service-to-service reachability, developer VPN reachability, CI runner reachability, temporary test environment reachability, or reachability from an agent host that processes untrusted content. Once an attacker compromises any one of those nearby systems, an internal-only pre-auth gateway bug becomes reachable.

For this reason, the right ranking is not simply “internet-facing versus not internet-facing.” The better ranking is:

| Deployment condition | Risk level | Why it matters |
|---|---|---|
| Internet-facing LiteLLM Proxy on affected version | Critical | The pre-auth path is reachable by untrusted remote clients |
| Internal shared LiteLLM Proxy reachable from broad corporate or cluster networks | High | Any compromised internal workload may become a launch point |
| LiteLLM Proxy reachable only from a narrow service mesh with strict policy | Medium to High | Exploitability depends on network policy and service identity controls |
| Local developer-only Proxy with provider keys in config | Medium | Local compromise or exposed dev tunnels can still expose credentials |
| SDK-only use without running Proxy | Case-dependent | The advisory targets Proxy API key verification, but teams should still check installed versions and deployment paths |

The category that deserves the most attention is the shared AI gateway. A single shared gateway may hold the keys for multiple teams, tenants, model providers, and workflows. That makes it more valuable than an isolated application instance.

What attackers were observed targeting

Sysdig’s public analysis is useful because it moves the discussion from “SQL injection is possible” to “this is what targeted traffic tried to enumerate.” The report says the observed traffic was not generic SQLmap noise. It described deliberate enumeration of the LiteLLM production schema, targeting three high-value areas: virtual API keys, stored provider credentials, and the proxy’s environment-variable configuration. It also stated that the operator appeared to know Prisma-generated PostgreSQL identifier casing and performed a column-count discovery sweep. (Sysdig)

Those targets make sense.

LiteLLM_VerificationToken is interesting because virtual API keys and master-key-related material can allow direct replay against the gateway if stolen and still valid. litellm_credentials is interesting because AI gateways often store upstream provider credentials to route traffic to OpenAI, Anthropic, Azure OpenAI, Bedrock, Gemini, or other providers. litellm_config is interesting because environment-derived configuration can reveal secrets, deployment assumptions, internal URLs, database references, and operational flags. Sysdig specifically named those three target areas in the context of the observed activity. (Sysdig)

This is why the incident response should not stop at “upgrade the package.” If a vulnerable instance was reachable while affected, defenders should answer these questions:

| Asset | Why an attacker wants it | What defenders should do |
|---|---|---|
| LiteLLM virtual keys | Replay access to the gateway | Rotate keys, invalidate old keys, review access logs |
| Master key or administrative key material | Administrative control or key issuance | Rotate immediately, review admin actions |
| Provider API keys | Direct model provider usage and billing abuse | Revoke and recreate keys at providers, review provider logs |
| Environment variables | Cloud, database, or integration secrets | Inventory and rotate any exposed secrets |
| Model routing config | Knowledge of internal model architecture | Review access scope and minimize disclosure |
| Prompt or request logs | Sensitive business context or user data | Review data retention and access controls |
| Budget and rate limit config | Information for abuse planning | Add anomaly detection and hard limits |
| MCP or tool gateway settings | Potential pivot into execution paths | Lock down tool creation and command execution boundaries |

The exact data present depends on deployment choices. Some teams store little in the gateway database. Others store enough to treat the proxy as an AI access control plane. The safer incident response assumption is to start with the maximum plausible exposure and reduce scope only after evidence supports it.

The observed disclosure-to-exploitation window

The speed of exploitation is part of the story. Sysdig reported that the GitHub repository advisory was published on April 20, 2026, the advisory was indexed in the global GitHub Advisory Database on April 24, 2026 at 16:17 UTC, and the first exploitation attempt it observed occurred on April 26, 2026 at 04:24 UTC. It calculated the time from global advisory publication to first observed exploit attempt as 36 hours and seven minutes. (Sysdig)
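The 36 hours and seven minutes figure follows directly from the two timestamps Sysdig reported. As a quick check of that arithmetic:

```python
from datetime import datetime, timezone

# Timestamps as reported by Sysdig (UTC).
indexed = datetime(2026, 4, 24, 16, 17, tzinfo=timezone.utc)
first_attempt = datetime(2026, 4, 26, 4, 24, tzinfo=timezone.utc)

window = first_attempt - indexed
total_minutes = int(window.total_seconds() // 60)
hours, minutes = divmod(total_minutes, 60)

print(f"{hours} hours {minutes} minutes")  # prints "36 hours 7 minutes"
```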

That timeline does not prove widespread compromise. Sysdig explicitly wrote that it did not observe follow-through such as authenticated calls with exfiltrated keys, virtual-key minting, or chained reuse of provider credentials. (Sysdig)

But the timeline does prove something else: AI gateway advisories are now being operationalized quickly. Attackers do not need weeks to understand the value of this layer. A public advisory that names an auth-path SQL injection in a gateway known to centralize credentials is enough to attract targeted probing.

Defenders should treat that as a workflow problem. If a dependency advisory lands on Friday and the gateway is exposed over the weekend, “we will review it next sprint” is no longer a serious response. AI gateway components need the same emergency patch path as VPNs, identity providers, SSO brokers, internet-facing admin panels, CI/CD controllers, and remote management software.

What changed in the fix

The GitHub advisory says the issue was fixed in 1.83.7 and that the caller-supplied value is now always passed to the database as a separate parameter. That is the right class of fix for SQL injection: do not try to sanitize a token into safety after building a dynamic SQL string; instead, make sure the database receives the query structure and user-controlled value separately. (GitHub)

LiteLLM’s official security update says the issue was reviewed, patched, validated, and released as a stable build before the GitHub Security Advisory was published. It lists affected versions as v1.81.16 through v1.83.6, fixed versions as v1.83.7 and later, and the recommended version as v1.83.10-stable. (liteLLM)

The workaround is narrow. GitHub’s advisory says that if upgrading is not immediately possible, setting disable_error_logs: true under general_settings removes the path through which unauthenticated input reaches the vulnerable query. That should be treated as an emergency control, not a remediation endpoint. It reduces a known path, but it does not provide the assurance of running patched code. (GitHub)

A minimal temporary configuration change would look like this:

```yaml
general_settings:
  disable_error_logs: true
```

Use that only while preparing the upgrade. The upgrade remains the fix.

How to check whether you are exposed

Start with version and deployment mode. The advisory affects LiteLLM Proxy versions from 1.81.16 up to, but not including, 1.83.7, so the first question is whether an affected LiteLLM version is installed and whether the Proxy is running.

For Python environments, use:

```shell
python -c "import importlib.metadata as m; print(m.version('litellm'))"
```

For pip-managed environments, enumerate installed packages:

```shell
python -m pip show litellm
python -m pip freeze | grep -i '^litellm=='
```
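Once a version string is in hand, the comparison against the advisory range is easy to get wrong by eye, because 1.83.10 sorts after 1.83.7 numerically but before it as text. A minimal sketch of the range check follows; `parse_version` and `is_affected` are illustrative helper names, not part of LiteLLM, and the parser deliberately ignores suffixes such as `-stable`, so pre-release builds need a manual look.

```python
import re

AFFECTED_MIN = (1, 81, 16)  # first affected version per the advisory
FIRST_FIXED = (1, 83, 7)    # first patched version per the advisory


def parse_version(text):
    """Extract major.minor.patch as integers; suffixes like '-stable' are ignored."""
    match = re.match(r"v?(\d+)\.(\d+)\.(\d+)", text.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part) for part in match.groups())


def is_affected(version_text):
    """Return True if the version falls in >=1.81.16, <1.83.7."""
    return AFFECTED_MIN <= parse_version(version_text) < FIRST_FIXED


for candidate in ["1.81.15", "1.81.16", "1.83.6", "1.83.7", "v1.83.10-stable"]:
    print(candidate, is_affected(candidate))
```

Comparing integer tuples rather than strings is the point of the helper: a plain string comparison would rank "1.83.10" below "1.83.7".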

For container images, check both the image tag and the package inside the running container:

```shell
docker ps --format 'table {{.Names}}\t{{.Image}}\t{{.Ports}}'

docker exec -it <container_name> \
  python -c "import importlib.metadata as m; print(m.version('litellm'))"
```

For Kubernetes, identify workloads that may be running LiteLLM Proxy:

```shell
kubectl get deploy,statefulset,daemonset -A | grep -i litellm
kubectl get pods -A -o wide | grep -i litellm
```

Then check whether the proxy is reachable from untrusted networks. Look for public load balancers, ingress rules, exposed NodePorts, reverse proxy routes, developer tunnels, and permissive service mesh policies.

```shell
kubectl get ingress -A
kubectl get svc -A | grep -E 'LoadBalancer|NodePort'
```

If you find an affected version and a reachable Proxy, assume the instance needs emergency handling.
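Reachability can also be confirmed empirically from a host that is not supposed to have access, such as a CI runner or a workload in an unrelated namespace. The sketch below is a plain TCP connect test and nothing more; it sends no HTTP request and no payload. The host name in the comment is hypothetical, and port 4000 is an assumption that should be replaced with whatever port your proxy actually listens on.

```python
import socket


def can_reach(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False


# Example call, run from a network segment that should NOT have access:
#   can_reach("litellm.internal.example", 4000)
```

A `True` result from a segment that should be blocked means the network policy is not doing what the architecture diagram says it does.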

Immediate remediation plan

The response should happen in phases, but the first phase should be fast.

Upgrade LiteLLM Proxy

Upgrade to v1.83.7 or later. LiteLLM recommends v1.83.10-stable. (liteLLM)

For pip-based deployments:

```shell
python -m pip install --upgrade "litellm>=1.83.10"
python -c "import importlib.metadata as m; print(m.version('litellm'))"
```

If your deployment uses pinned requirements, update the pinned version rather than relying on an unpinned upgrade:

```text
litellm==1.83.10
```

Then rebuild and redeploy through your normal release pipeline.

For container-based deployments, rebuild from a known-good base and verify the package version inside the final image. Avoid assuming the image tag alone proves package state.

```shell
docker build --no-cache -t internal/litellm-proxy:1.83.10 .

docker run --rm internal/litellm-proxy:1.83.10 \
  python -c "import importlib.metadata as m; print(m.version('litellm'))"
```

Restrict network reachability

Do not expose LiteLLM Proxy directly to the public internet unless there is a strong reason and a properly designed security boundary. Even after patching, the gateway remains a high-value control point. Put it behind authenticated ingress, network policy, service identity, private connectivity, or a dedicated API edge.

A Kubernetes NetworkPolicy example might restrict access to a known application namespace:

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: restrict-litellm-proxy
  namespace: ai-gateway
spec:
  podSelector:
    matchLabels:
      app: litellm-proxy
  policyTypes:
    - Ingress
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              name: approved-apps
      ports:
        - protocol: TCP
          port: 4000
```

The exact labels and ports will differ in your environment. The goal is not to copy this YAML blindly. The goal is to stop broad unauthenticated network reachability to the gateway.

Apply the workaround only if patching is delayed

If you cannot upgrade immediately, set disable_error_logs: true under general_settings as described by the advisory. This removes the unauthenticated path identified by the maintainers, but it should be considered a short-term bridge to the patched version. (GitHub)

Rotate credentials

If the affected Proxy was reachable from an untrusted network, rotate secrets as though database contents may have been read. That includes LiteLLM virtual keys, any master or administrative keys, upstream model provider credentials, database credentials, cloud secrets, and integration tokens stored in or exposed to the gateway.

OWASP’s Secrets Management guidance describes rotation as a multi-step process: create a new secret, set it, test it, and finish the transition safely. That matters here because emergency key rotation done without testing can create outages, while delayed rotation can preserve attacker access. (OWASP Cheat Sheet Series)

A practical rotation order is:

| Priority | Secret class | Action |
|---|---|---|
| 1 | LiteLLM master or admin key | Revoke and recreate immediately |
| 2 | LiteLLM virtual API keys | Rotate tenant and service keys, invalidate old keys |
| 3 | Model provider API keys | Revoke and recreate at OpenAI, Anthropic, Azure, Bedrock, Gemini, or other providers |
| 4 | Database credentials | Rotate if stored in gateway config or environment variables |
| 5 | Cloud and CI/CD secrets | Rotate if present in gateway environment or mounted secrets |
| 6 | Webhook and observability tokens | Rotate if exposed through environment variables or config |
| 7 | Long-lived developer keys | Replace with short-lived or scoped credentials where possible |

Rotation should be paired with evidence. Capture which keys were rotated, when old keys were revoked, which systems were restarted, and which upstream provider logs were reviewed.
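One lightweight way to capture that evidence is a structured record per rotated secret, appended to an incident log as JSON. The sketch below is only one possible shape; the `RotationRecord` class, its field names, and the sample values are all hypothetical. Note that it stores a reference to the key, never the secret itself.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class RotationRecord:
    secret_class: str            # e.g. "LiteLLM master key"
    key_reference: str           # label or keyed hash, never the secret value
    old_key_revoked_at: str      # ISO 8601 timestamp in UTC
    restarted_systems: list = field(default_factory=list)
    provider_logs_reviewed: list = field(default_factory=list)


def utc_now():
    """Current UTC time as an ISO 8601 string with second precision."""
    return datetime.now(timezone.utc).isoformat(timespec="seconds")


record = RotationRecord(
    secret_class="LiteLLM master key",
    key_reference="master-key-2026-04",        # hypothetical label
    old_key_revoked_at=utc_now(),
    restarted_systems=["litellm-proxy"],
    provider_logs_reviewed=["OpenAI", "Anthropic"],
)

# One JSON line per rotated secret is easy to append, diff, and audit later.
line = json.dumps(asdict(record), sort_keys=True)
print(line)
```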

Detection logic for reverse proxy and application logs

Detection should focus on the pre-auth route, not only authenticated API calls. Sysdig published indicators such as requests to /chat/completions or /v1/chat/completions, suspicious Authorization: Bearer values beginning with an unexpected quote sequence, SQL keywords in the Authorization header, and a Python/3.12 aiohttp/3.9.1 user-agent in the observed requests. User-agent is weak evidence because attackers can change it, but it is still useful for historical hunts. (Sysdig)

Look for these patterns:

| Signal | Why it matters |
|---|---|
| Authorization header contains a single quote | Common SQL string termination indicator |
| Authorization header contains UNION, SELECT, FROM, --, or SQL comments | Common SQLi probing behavior |
| Requests to /chat/completions with empty or tiny bodies | Sysdig observed small or empty bodies in attack traffic |
| Repeated 4xx or 5xx responses from pre-auth routes | Auth-path probing often causes errors |
| New source IPs calling key-management endpoints | May indicate follow-on probing |
| Provider billing spikes after suspicious gateway traffic | Possible credential replay or abuse |
| Postgres logs showing unusual queries against LiteLLM tables | Possible SQLi impact evidence |

A Sigma-style rule for HTTP access logs could look like this:

```yaml
title: Suspicious LiteLLM Proxy Authorization Header SQLi Probe
# Sigma expects a UUID here; generate a fresh one before deploying this rule.
id: 4d94f9cf-5b40-44fb-a3b5-litellm-sqli-probe
status: experimental
description: Detects suspicious SQL injection probing patterns in Authorization headers targeting LiteLLM-style LLM API routes.
logsource:
  category: webserver
detection:
  selection_path:
    url|contains:
      - "/chat/completions"
      - "/v1/chat/completions"
  selection_auth_quote:
    request_headers.authorization|contains: "'"
  selection_auth_keywords:
    request_headers.authorization|re:
      - "(?i)\\bunion\\b"
      - "(?i)\\bselect\\b"
      - "(?i)\\bfrom\\b"
      - "(?i)--"
      - "(?i)/\\*"
  condition: selection_path and selection_auth_quote and selection_auth_keywords
fields:
  - timestamp
  - src_ip
  - http_method
  - url
  - user_agent
  - status
  - request_headers.authorization
falsepositives:
  - Security testing in an authorized lab
  - Broken clients sending malformed Authorization headers
level: high
```

For Microsoft Sentinel or similar KQL-backed log stores, a query might look like this:

```kusto
let suspicious_sql_terms = dynamic(["union", "select", "from", "--", "/*", "*/"]);
HttpLogs
| where Url has_any ("/chat/completions", "/v1/chat/completions")
| extend AuthHeader = tostring(RequestHeaders["authorization"])
| where AuthHeader contains "'"
| where tolower(AuthHeader) has_any (suspicious_sql_terms)
| project TimeGenerated, SrcIpAddr, HttpMethod, Url, StatusCode, UserAgent, AuthHeader
| order by TimeGenerated desc
```

If you do not store full request headers, use header hashes or edge proxy security logs. Long-term logging of full Authorization headers is risky because valid API keys may be captured in logs. A better design is to log whether the header was present, the key prefix class, a keyed hash of the token, and anomaly flags produced at the edge.
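The log-safe design described above can be sketched with Python's standard hmac module. Everything here is illustrative: `summarize_auth_header`, the field names, the `sk-` prefix convention, and the hard-coded key are assumptions for the example, and the regular expression is a coarse triage flag rather than a SQL injection detector.

```python
import hashlib
import hmac
import re

# Coarse hints only; expect both false positives and misses.
SQLI_HINTS = re.compile(r"'|--|/\*|\b(?:union|select|from)\b", re.IGNORECASE)


def summarize_auth_header(header, log_key):
    """Describe an Authorization header for logging without storing the token."""
    if not header:
        return {"present": False, "prefix_class": None,
                "token_hmac": None, "sqli_hint": False}
    _scheme, _, token = header.partition(" ")
    # Assumption: keys in this deployment start with "sk-"; adjust to your format.
    prefix_class = "sk" if token.startswith("sk-") else "other"
    # Keyed hash: analysts can correlate requests by token without recovering it.
    token_hmac = hmac.new(log_key, token.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"present": True, "prefix_class": prefix_class,
            "token_hmac": token_hmac, "sqli_hint": bool(SQLI_HINTS.search(token))}


LOG_KEY = b"example-only-key"  # hypothetical; load from a secret store in practice
normal = summarize_auth_header("Bearer sk-abc123", LOG_KEY)
probe = summarize_auth_header("Bearer ' UNION SELECT 1--", LOG_KEY)
```

A keyed hash is used instead of a plain SHA-256 so that someone who obtains the logs cannot confirm a guessed token offline without also holding the logging key.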

For Nginx, a temporary filter can block obvious malicious Authorization headers while patching is underway. This is not a complete defense, and it should not replace the upgrade.

```nginx
# The map block must be declared in the http context.
map $http_authorization $litellm_sqli_probe {
    default 0;
    "~*'" 1;
    "~*union[[:space:]]+select" 1;
    "~*from[[:space:]]+" 1;
    "~*--" 1;
    "~*/\*" 1;
}

server {
    location / {
        if ($litellm_sqli_probe) {
            return 403;
        }
        proxy_pass http://litellm_proxy;
    }
}
```

This kind of filtering can break unusual but legitimate clients if implemented too broadly. Test before deploying. The correct security control is still patched application code.

Database-side hunting

LiteLLM’s official security update recommends reviewing Postgres query history if the proxy was reachable from an untrusted network while running an affected version. The official post links to a helper query for that purpose. (liteLLM)

If you already collect Postgres logs, hunt for unusual query shapes around the exposure window. Useful signs include:

| Database signal | Possible interpretation |
|---|---|
| Queries against LiteLLM_VerificationToken with unexpected SQL syntax | Key table enumeration |
| Queries referencing litellm_credentials outside normal admin paths | Provider credential targeting |
| Queries referencing litellm_config with environment-variable filters | Config and secret discovery |
| SQL errors triggered by malformed Bearer tokens | Failed probing |
| Repeated column-count style errors | UNION discovery behavior |
| Queries from the application user at unusual volume | Automated enumeration |
| Statements that include raw Authorization header fragments | Vulnerable string interpolation evidence |

A generic Postgres log hunt might start with:

```sql
-- Adapt table names and log table structure to your environment.
-- This assumes Postgres logs are ingested into a searchable table.
SELECT
    log_time,
    user_name,
    database_name,
    client_addr,
    message
FROM postgres_logs
WHERE log_time >= TIMESTAMP '2026-04-20 00:00:00'
  AND (
    message ILIKE '%LiteLLM_VerificationToken%'
    OR message ILIKE '%litellm_credentials%'
    OR message ILIKE '%litellm_config%'
    OR message ILIKE '%UNION%'
    OR message ILIKE '%authorization%'
  )
ORDER BY log_time DESC;
```

If your Postgres logs do not capture statements, check whether your database, proxy, or cloud provider offers query history. Be careful with retention. The time to discover that your database logs expired yesterday should not be during a credential exposure incident.

Provider billing and model usage review

A successful attacker may not need to persist in your environment if they can steal valid provider credentials. They can replay keys elsewhere, consume your model budget, test prompts, or use the account as infrastructure. That makes upstream provider review part of the incident, not an optional follow-up.

Review:

| Provider-side signal | Why it matters |
|---|---|
| Sudden token usage spikes | Stolen keys may be used for high-volume inference |
| New source geographies or IP ranges | Replay from attacker infrastructure |
| New models used by old keys | Abuse may target expensive or permissive models |
| Requests outside normal hours | Common sign of automated misuse |
| Failed authentication attempts | Old keys may still be tested after rotation |
| New API keys created during exposure window | Possible administrative compromise |
| Changes to billing alerts or quotas | Attacker or misconfiguration may weaken controls |

If provider logs show suspicious use, preserve evidence before revoking old keys. Revocation is urgent, but evidence matters for root cause, insurance, legal review, and customer communication.
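The first signal in the table, a sudden token usage spike, is straightforward to check once daily usage has been exported from a provider dashboard. The sketch below flags any day that exceeds a multiple of the median of the preceding window. The function name, the seven-day window, the 3x factor, and the sample numbers are all illustrative assumptions, not provider guidance.

```python
from statistics import median


def find_usage_spikes(daily_tokens, baseline_days=7, factor=3.0):
    """Return indexes of days whose usage exceeds factor x the prior-window median."""
    spikes = []
    for i in range(baseline_days, len(daily_tokens)):
        baseline = median(daily_tokens[i - baseline_days:i])
        if baseline > 0 and daily_tokens[i] > factor * baseline:
            spikes.append(i)
    return spikes


# Made-up daily token counts; index 8 is the anomalous day.
usage = [100, 110, 95, 105, 98, 102, 101, 99, 900, 104]
print(find_usage_spikes(usage))  # prints [8]
```

A median baseline is used rather than a mean so that a single spike does not inflate the baseline and mask abuse on the following days.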

Why “patched” is not the same as “clean”

Upgrading closes the known vulnerable path. It does not prove that secrets were not accessed before the upgrade.

That is why CVE-2026-42208 should be handled like a potential credential exposure incident when the affected Proxy was reachable from an untrusted network. CCB Belgium’s warning captures the risk bluntly: because LiteLLM centralizes API credentials for providers such as OpenAI and Anthropic, successful exploitation can be equivalent to losing connected AI provider accounts simultaneously. (CCB Belgium)

A clean response should include:

| Step | Purpose |
|---|---|
| Patch | Remove the known vulnerable code path |
| Isolate | Stop additional probing and reduce network blast radius |
| Hunt | Review reverse proxy, application, database, and provider logs |
| Rotate | Invalidate any secret that may have been exposed |
| Verify | Confirm old keys fail and new keys work only where expected |
| Monitor | Watch for continued attempts, billing spikes, and suspicious key use |
| Document | Preserve evidence and decisions for audit and post-incident review |

The key operational mistake is doing these steps out of order. If you rotate credentials before capturing enough evidence, you may lose visibility into which key was abused. If you hunt for days before rotating high-value keys, you may give an attacker time to monetize access. The better approach is to preserve the most important evidence quickly, then rotate aggressively.

Common mistakes during remediation

The first mistake is treating the bug as a normal dependency update. It is a dependency update, but it is also a possible credential exposure event. If your LiteLLM Proxy stored provider keys and was reachable while vulnerable, you need a secret rotation plan.

The second mistake is relying on WAF patterns as the primary fix. Header filtering can reduce obvious probing, but it is brittle. Attackers change casing, spacing, comments, encoding, and request shape. Parameterized queries fix the code path. WAF rules buy time.

The third mistake is assuming no impact because no one saw a successful model call. Sysdig did not observe confirmed follow-through in its captured activity, but that does not prove that every environment was safe. Many organizations do not log enough at the gateway, database, or provider layer to prove non-use. (Sysdig)

The fourth mistake is storing complete Authorization headers in logs forever. It is tempting during an incident, but long-lived logs full of bearer tokens become a second credential store. A better design logs normalized indicators, keyed hashes, and anomaly flags.

The fifth mistake is leaving the gateway broadly reachable after patching. CVE-2026-42208 is a specific bug, but the category risk remains. AI gateways should be protected like identity brokers and CI/CD controllers because they concentrate high-value authorization decisions.
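The brittleness of pattern filters, the second mistake above, is easy to demonstrate. The sketch below runs one naive rule of the kind often written under time pressure against three generic, well-known SQL spellings; the rule and the sample strings are illustrative and are not taken from observed attack traffic.

```python
import re

# A naive filter: "union", some whitespace, then "select".
naive_rule = re.compile(r"union\s+select", re.IGNORECASE)

samples = {
    "plain": "x' UNION SELECT 1",
    "inline_comment": "x' UNION/**/SELECT 1",   # comment replaces the whitespace
    "extra_keyword": "x' UNION ALL SELECT 1",   # keyword sits between the two terms
}

matched = {name: bool(naive_rule.search(value)) for name, value in samples.items()}
print(matched)
# prints {'plain': True, 'inline_comment': False, 'extra_keyword': False}
```

Each miss can be patched with another pattern, but every patch invites another variation. A bound parameter makes all three strings equally harmless, which is why the code fix is the remediation and the filter is only a stopgap.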

Related AI infrastructure issues that explain the pattern

CVE-2026-42208 is not an isolated lesson. It belongs to a broader pattern of AI infrastructure vulnerabilities and incidents where the vulnerable component sits near credentials, tool execution, or model-routing control.

CVE-2026-30623, LiteLLM MCP command injection

LiteLLM disclosed CVE-2026-30623 in April 2026 as an authenticated remote command execution issue in MCP server creation functionality. The security update states that when adding an MCP server with transport: stdio, the command field was passed through to StdioServerParameters and executed as a subprocess on the proxy host. An authenticated user with permission to create MCP servers could run arbitrary commands as the LiteLLM process. (liteLLM)

The relevance to CVE-2026-42208 is architectural. CVE-2026-42208 targets the authentication and credential path. CVE-2026-30623 targets the tool execution boundary. Both show that AI gateways are no longer simple request routers. They are becoming control planes that manage identity, credentials, tools, subprocesses, and model access in one place.

The mitigation lesson is also different. SQL injection requires parameterized queries, patching, and credential response. MCP command execution requires command allowlisting, approved server definitions, permission scoping, sandboxing, and strong review of who can create or modify tool connections.
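One way to picture "approved server definitions" is a catalog in which callers pick a reviewed entry by name and never supply the command themselves. The sketch below is a hypothetical illustration: the catalog, the function name, and the package name `example-mcp-filesystem` are all assumptions, and none of this is LiteLLM's actual API.

```python
# Hypothetical catalog of reviewed stdio server definitions.
# Each entry pins both the command and its full argument list.
APPROVED_SERVERS: dict[str, tuple[str, tuple[str, ...]]] = {
    "filesystem-readonly": ("npx", ("-y", "example-mcp-filesystem", "--read-only")),
}


def resolve_stdio_server(name: str) -> tuple[str, tuple[str, ...]]:
    """Return the pinned (command, args) for an approved server name.

    Raises ValueError for anything outside the catalog, so a user-supplied
    command string never reaches subprocess execution.
    """
    try:
        return APPROVED_SERVERS[name]
    except KeyError:
        raise ValueError(f"MCP server {name!r} is not in the approved catalog") from None
```

Pinning the arguments as well as the command matters here, because a launcher such as `npx` with free-form arguments can still fetch and run arbitrary code.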

CVE-2026-1868, GitLab AI Gateway insecure template expansion

GitLab disclosed CVE-2026-1868 in the Duo Workflow Service component of GitLab AI Gateway. GitLab’s patch release says the issue involved insecure template expansion of user-supplied data via crafted Duo Agent Platform Flow definitions. Authenticated access to the GitLab instance was required, and the vulnerability could cause denial of service or code execution on the Gateway. GitLab fixed it in AI Gateway versions 18.6.2, 18.7.1, and 18.8.1, and assigned a CVSS score of 9.9. (GitLab Docs)

The relevance is not that GitLab and LiteLLM had the same bug. They did not. The relevance is that AI gateway software now sits on execution-sensitive paths. A workflow definition, an MCP server definition, a model route, or an API key verification function can become an attack boundary. Security teams that evaluate these systems only as “AI middleware” will miss the real risk.

LiteLLM 1.82.7 and 1.82.8 PyPI compromise

In March 2026, LiteLLM also disclosed a suspected supply chain incident involving compromised PyPI packages litellm==1.82.7 and litellm==1.82.8. LiteLLM’s update said the packages were live on March 24, 2026 from 10:39 UTC for about 40 minutes before being quarantined by PyPI, and that the compromise was believed to have originated from the Trivy dependency used in CI/CD security scanning. (liteLLM)

Snyk’s analysis described the same affected versions and stated that the packages were published by TeamPCP after maintainer PyPI credentials were obtained through a prior compromise of Trivy used in the LiteLLM CI/CD pipeline. (Snyk)

This incident is separate from CVE-2026-42208. It should not be merged into the same vulnerability narrative. But it reinforces the same defensive conclusion: AI gateway packages are high-value because they often run near model provider keys, cloud credentials, CI/CD tokens, Kubernetes secrets, and developer environment secrets.

A security team investigating CVE-2026-42208 should therefore avoid tunnel vision. Check the SQL injection exposure window, but also confirm whether any environment installed the malicious March versions. The incidents have different mechanics, but both can end in credential exposure.
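As a starting point for that second check, a short script can flag exact pins of the two compromised releases in requirements-style files. This is a sketch under narrow assumptions: it only reads `name==version` lines, and the function name is hypothetical. It does not inspect installed environments, container images, or other lockfile formats, which need their own review.

```python
import re

# Versions named in LiteLLM's March 2026 supply chain disclosure.
COMPROMISED = {"1.82.7", "1.82.8"}

# Matches "litellm==X" and "litellm[extra]==X" at the start of a line.
PIN = re.compile(r"^\s*litellm(?:\[[^\]]*\])?\s*==\s*([0-9][^\s;#]*)", re.IGNORECASE)


def find_compromised_pins(requirements_text: str) -> list[str]:
    """Return the requirement lines that pin a compromised litellm release."""
    hits = []
    for line in requirements_text.splitlines():
        match = PIN.match(line)
        if match and match.group(1) in COMPROMISED:
            hits.append(line.strip())
    return hits
```

An empty result from this check is weak evidence on its own, since unpinned or range-pinned installs during the 40-minute window would not appear in it.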

Long-term hardening for AI gateways

Patch management fixes known bugs. Architecture limits the damage of unknown bugs. CVE-2026-42208 is a good forcing function for redesigning how AI gateways are deployed.

Keep the gateway off the public internet

The safest AI gateway is not directly reachable by the public internet. Put it behind an authenticated API edge, private networking, VPN, service mesh, or identity-aware proxy. If public exposure is unavoidable, apply strict route-level authorization, aggressive rate limits, WAF monitoring, and anomaly detection.

Reduce secrets stored in the gateway database

Provider credentials should not be casually stored in the same relational database used for gateway application state. Use a secrets manager where possible. Store references, not raw secrets. Scope provider keys to the minimum models, projects, and budgets required.

Use short-lived and scoped credentials

Long-lived provider keys create durable attacker value. Prefer short-lived credentials, scoped service identities, and provider-side restrictions where supported. If a gateway key is stolen, its usefulness should expire quickly and be limited by policy.

Separate administrative and inference paths

The same network route should not casually expose chat completions, admin UI, key generation, model management, and tool configuration. Separate inference traffic from administrative functions. Put admin routes behind stronger identity controls and narrower network access.

Add provider-side budget limits

Assume a key may leak. Provider-side rate limits, hard budgets, model allowlists, and alerting can turn a catastrophic key leak into a contained incident. Gateway-side budgets help, but stolen upstream provider keys may bypass the gateway entirely.

Treat prompt logs as sensitive data

AI gateway logs can contain user prompts, internal code, credentials accidentally pasted into prompts, support transcripts, customer data, and business context. Reduce retention. Mask known secret formats. Restrict access. Do not store sensitive request bodies unless there is a clear operational need.

Lock down MCP and tool execution

If the gateway supports MCP, tools, plugins, or subprocess execution, treat that as an execution platform. Require approved tool definitions. Disable arbitrary command fields. Use sandboxing. Isolate runtime users. Restrict egress. Review tool configuration changes as security-sensitive events.

Use database least privilege

The application’s database user should not have broad privileges beyond what the application needs. If an injection lands, database permissions influence what can be read or modified. Separate read/write roles where possible. Restrict access to secrets tables. Audit administrative changes.

Add schema-aware security regression tests

A standard DAST scanner may not understand AI gateway routes, OpenAI-compatible endpoints, or unusual header-driven auth flows. Add regression tests that exercise authentication error paths, malformed Authorization headers, admin route boundaries, and key-management endpoints. The point is not to fuzz randomly forever. The point is to prove that auth-path inputs are treated as data.
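The shape of such a regression test can be sketched with an in-memory SQLite database standing in for the gateway's Postgres instance. The table, the `lookup_owner` function, and the token list are hypothetical and do not reflect LiteLLM's schema or code. A real test would drive the proxy's actual endpoints.

```python
import sqlite3


def build_test_db() -> sqlite3.Connection:
    """Create an in-memory database with one known-good key."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE api_keys (token TEXT PRIMARY KEY, owner TEXT)")
    conn.execute("INSERT INTO api_keys VALUES (?, ?)", ("sk-valid-key", "team-a"))
    conn.commit()
    return conn


def lookup_owner(conn: sqlite3.Connection, token: str):
    """Hypothetical key check: the token is bound as a parameter, never formatted in."""
    row = conn.execute(
        "SELECT owner FROM api_keys WHERE token = ?", (token,)
    ).fetchone()
    return row[0] if row else None


# Malformed inputs aimed at the auth error path; none contains extraction logic.
MALFORMED_TOKENS = [
    "",
    "'",
    "sk-valid-key'--",
    "x' OR '1'='1",
    "x'; DROP TABLE api_keys;--",
    "x' UNION SELECT owner FROM api_keys--",
]


def run_auth_path_regression(conn: sqlite3.Connection) -> None:
    """Assert that malformed tokens neither authenticate nor alter the table."""
    for token in MALFORMED_TOKENS:
        assert lookup_owner(conn, token) is None, f"unexpected match for {token!r}"
    count = conn.execute("SELECT COUNT(*) FROM api_keys").fetchone()[0]
    assert count == 1, "key table was modified by a malformed token"
    assert lookup_owner(conn, "sk-valid-key") == "team-a"
```

Running `run_auth_path_regression(build_test_db())` in CI gives a fixed, repeatable check that fails loudly if a later change reintroduces string-built SQL on this path.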

In authorized environments, an AI-assisted penetration testing workflow can help teams keep this evidence organized: identify the exposed gateway, verify version and route reachability, test safe malformed input handling, collect proxy and database evidence, confirm key rotation, and generate a retest record. Penligent’s public materials describe an AI-powered penetration testing workflow that combines traditional tools such as nmap, Metasploit, Burp Suite, and SQLmap into an AI-driven process, and its docs position the product around installation, automated pentests, and report generation. Used carefully, that category of workflow is most valuable after an incident when the team needs reproducible evidence that the exposure has been removed and controls still hold. (penligent.ai)

A practical hardening checklist

| Control | Minimum action | Better action |
|---|---|---|
| Version management | Upgrade to patched LiteLLM | Enforce dependency policy and emergency patch SLAs |
| Network exposure | Remove public access | Private gateway with identity-aware access |
| API authentication | Rotate virtual keys | Short-lived, scoped, tenant-bound keys |
| Provider credentials | Rotate exposed keys | Secrets manager with scoped provider keys |
| Database security | Review query logs | Least-privilege roles and sensitive table isolation |
| Admin routes | Restrict admin UI | Separate admin plane with MFA and IP allowlisting |
| Logging | Hunt for SQLi indicators | Token-safe structured logging with anomaly fields |
| Billing controls | Review provider usage | Hard provider quotas and alerting |
| MCP and tools | Review enabled features | Approved tool catalog and sandboxed execution |
| Incident response | Patch and rotate | Preserve evidence, retest, and document closure |

Safe validation after patching

Validation should prove that the specific vulnerable behavior is gone without attempting destructive exploitation.

A safe validation plan looks like this:

- Confirm the running LiteLLM version is 1.83.7 or later.

- Confirm the Proxy is not reachable from untrusted networks.

- Send a malformed but non-exfiltrating Authorization header in an authorized test environment.

- Confirm the response does not include SQL errors or database structure.

- Confirm database logs do not show raw caller input changing query structure.

- Confirm old LiteLLM virtual keys no longer work after rotation.

- Confirm old provider API keys are revoked at the upstream provider.

- Confirm provider billing and usage logs show no unexplained spike.

- Confirm WAF or proxy detections are alerting on obvious SQLi indicators.

- Document the test, evidence, timestamp, and final state.
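The version check at the top of that plan can be scripted against the advisory's affected range of >=1.81.16, <1.83.7. The helper names below are hypothetical, and the parser is deliberately simple: it reads only the leading `X.Y.Z` and ignores suffixes such as `-stable`, so pre-release builds would need a stricter parser such as the `packaging` library.

```python
import re


def parse_version(version: str) -> tuple[int, int, int]:
    """Parse the leading 'X.Y.Z' of a version string, ignoring any suffix."""
    match = re.match(r"v?(\d+)\.(\d+)\.(\d+)", version.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return (major, minor, patch)


def is_affected(version: str) -> bool:
    """True if the version falls in the advisory range >=1.81.16, <1.83.7."""
    return (1, 81, 16) <= parse_version(version) < (1, 83, 7)
```

On a host where the package is installed, the input can come from `importlib.metadata.version("litellm")`. For containers, check the version inside the running image rather than the build manifest, because the two can drift.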

A simple negative test in an authorized lab should avoid real extraction logic:

    curl -i https://<authorized-litellm-test-host>/chat/completions \
      -H "Authorization: Bearer malformed-test-token-with-quote-'" \
      -H "Content-Type: application/json" \
      --data '{"model":"test","messages":[{"role":"user","content":"test"}]}'

The expected result is not a successful request. The expected result is a controlled authentication failure without SQL syntax errors, stack traces, schema names, or unusual database behavior. Do not run tests like this against systems you do not own or have explicit permission to test.
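Checking the response to that negative test can be partly automated. The sketch below assumes the tester already has the status code and body as strings. The accepted status codes and the leak patterns are illustrative assumptions, not an exhaustive signature set, and the function name is hypothetical.

```python
import re

# Illustrative indicators that database or interpreter detail reached the client.
LEAK_PATTERNS = [
    re.compile(r"syntax error at or near", re.IGNORECASE),  # PostgreSQL parser error
    re.compile(r"unterminated quoted string", re.IGNORECASE),
    re.compile(r"Traceback \(most recent call last\)"),  # Python stack trace
    re.compile(r"\b(psycopg|asyncpg|prisma)\b", re.IGNORECASE),
    re.compile(r"\bSELECT\b.+\bFROM\b", re.IGNORECASE | re.DOTALL),
]


def check_negative_test(status_code: int, body: str) -> list[str]:
    """Return a list of findings; an empty list means the response looks controlled."""
    findings = []
    # Assumption: a controlled authentication failure is a 400, 401, or 403.
    if status_code not in (400, 401, 403):
        findings.append(f"unexpected status {status_code}")
    for pattern in LEAK_PATTERNS:
        if pattern.search(body):
            findings.append(f"body matches {pattern.pattern!r}")
    return findings
```

A clean result covers only what the client can see. The database-log and key-rotation items in the validation plan still need to be confirmed separately.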

How to communicate the incident internally

Incident response for AI infrastructure often stalls because ownership is unclear. The AI platform team owns the gateway. The application team owns clients. The security team owns response. The cloud team owns provider keys. Finance owns billing anomalies. Legal or privacy teams may own customer notification decisions. That fragmentation slows response.

A clear internal incident note should include:

| Field | Example content |
|---|---|
| Vulnerability | CVE-2026-42208, LiteLLM Proxy pre-auth SQL injection |
| Affected versions | >=1.81.16, <1.83.7 |
| Environment status | Production gateway was or was not reachable from untrusted networks |
| Patch status | Upgraded to version and timestamp |
| Exposure window | First affected deployment time to patch time |
| Logs reviewed | Reverse proxy, LiteLLM app, Postgres, SIEM, provider usage |
| Secrets rotated | LiteLLM keys, provider keys, database credentials, cloud secrets |
| Suspicious activity | Confirmed, not observed, or unknown due to logging limits |
| Business risk | Potential unauthorized model usage, credential exposure, prompt log exposure |
| Follow-up controls | Network isolation, secret manager, provider quotas, regression tests |

Do not overstate certainty. “No evidence of compromise” is not the same as “no compromise.” If logging was incomplete, say that. A precise statement is more useful than a comforting one.

Why old SQL injection lessons still matter

CVE-2026-42208 is modern because it affects AI infrastructure. It is old because the secure coding lesson is familiar. User-controlled input must not be concatenated into SQL. Error paths must be tested. Authentication code must be treated as attack surface. Secrets should not be concentrated without strong controls. Logs must help defenders without becoming secret stores.

OWASP’s injection guidance says prepared statements ensure an attacker cannot change the intent of a query even if SQL commands are inserted into input. MITRE’s CWE-89 entry also warns that SQL injection can arise when externally influenced input is used to construct SQL commands without correct neutralization. (OWASP Cheat Sheet Series)

The AI twist is that security teams are deploying new gateway layers faster than their traditional review processes can absorb. AI gateways are being added to support model routing, fallback, spend management, centralized observability, prompt logging, agent frameworks, MCP servers, and experimentation. Each feature adds operational value. Each feature also adds a new boundary.

The right conclusion is not “do not use AI gateways.” The right conclusion is “secure them like control planes.”

Final response priorities

For an affected organization, the priorities are straightforward.

First, upgrade LiteLLM Proxy to 1.83.7 or later, preferably the currently recommended stable release from LiteLLM. (liteLLM)

Second, remove untrusted network reachability. The Proxy should not be directly reachable from arbitrary clients unless you have a deliberate API edge and monitoring design.

Third, review logs across the reverse proxy, LiteLLM application, Postgres, SIEM, and upstream model providers. Sysdig’s observed indicators are useful starting points, but do not rely on one user-agent or one payload shape. (Sysdig)

Fourth, rotate credentials. If the gateway was reachable while vulnerable, treat provider keys, LiteLLM virtual keys, and environment-derived secrets as potentially exposed until evidence says otherwise.

Fifth, redesign the gateway boundary. Put secrets in a proper secrets manager, narrow database privileges, separate admin and inference paths, apply provider-side quotas, and build regression tests for auth-path input handling.

CVE-2026-42208 matters because it exposes the real shape of AI infrastructure risk. The vulnerability is SQL injection. The asset is an AI gateway. The consequence can be credential exposure across the model access layer. That is why the response should look less like a routine dependency update and more like a control-plane incident.

Further reading and source links

GitHub Security Advisory, SQL injection in Proxy API key verification, GHSA-r75f-5x8p-qvmc. (GitHub)

LiteLLM Security Update, CVE-2026-42208 in LiteLLM Proxy. (liteLLM)

Sysdig Threat Research, targeted SQL injection against LiteLLM authentication path. (Sysdig)

LiteLLM AI Gateway documentation. (liteLLM)

LiteLLM GitHub repository and project description. (GitHub)

Tenable CVE summary for CVE-2026-42208. (Tenable®)

CCB Belgium warning on LiteLLM pre-auth SQL injection. (CCB Belgium)

OWASP Query Parameterization Cheat Sheet. (OWASP Cheat Sheet Series)

OWASP Secrets Management Cheat Sheet. (OWASP Cheat Sheet Series)

MITRE CWE-89, SQL Injection. (CWE)

LiteLLM Security Update, CVE-2026-30623 command injection via MCP SDK. (liteLLM)

GitLab AI Gateway patch release for CVE-2026-1868. (GitLab Docs)

Penligent, LiteLLM on PyPI Was Compromised, What the Attack Changed and What Defenders Should Do Now. (penligent.ai)

Penligent docs, installation and automated pentest workflow. (penligent.ai)

Penligent overview of automated penetration testing workflows. (penligent.ai)