The question sounds simple enough. You want the best AI model for pentesting, so you can pick one model, wire it into your workflow, and move faster. But in practice, that question hides three different decisions. First, are you choosing a foundation model or an AI security product built on top of one? Second, are you trying to improve a human tester’s daily workflow, or are you trying to automate an end-to-end offensive pipeline? Third, do you care most about code reasoning, browser interaction, long-context repository analysis, or repeatable evidence collection?

Those distinctions matter because the public conversation is noisy. Some prominent practitioner content now ranking for this topic makes the useful point that many “AI security tools” are really wrappers around a small set of foundation models. At the same time, comparison articles that readers are likely to encounter often rank products such as XBOW, NodeZero, and Burp AI, which are not base models at all but operational systems with orchestration, tooling, validation, and reporting layers. Treating those two categories as the same thing is how teams make bad buying decisions and bad architecture decisions. (Medium)

So here is the honest answer up front. If you are a security engineer who wants one general-purpose model for most pentesting-adjacent work today, Claude Sonnet 4.6 is the strongest single default pick. If your workflow leans heavily on browser automation, computer use, and tool-driven operator loops, GPT-5.4 is the better specialist. If your work involves giant multimodal evidence sets, very large documents, and cost-conscious large-context analysis, Gemini 3.1 Pro is the strongest third option. If you are building a serious pentest product or internal autonomous system, the best answer is not one model at all, but a routed stack with deterministic tools and explicit validation. That conclusion is an inference from official model docs, current product patterns, and the best public research on AI-assisted pentesting rather than a single vendor benchmark pretending to settle the entire question. (OpenAI)

Why this question gets answered badly

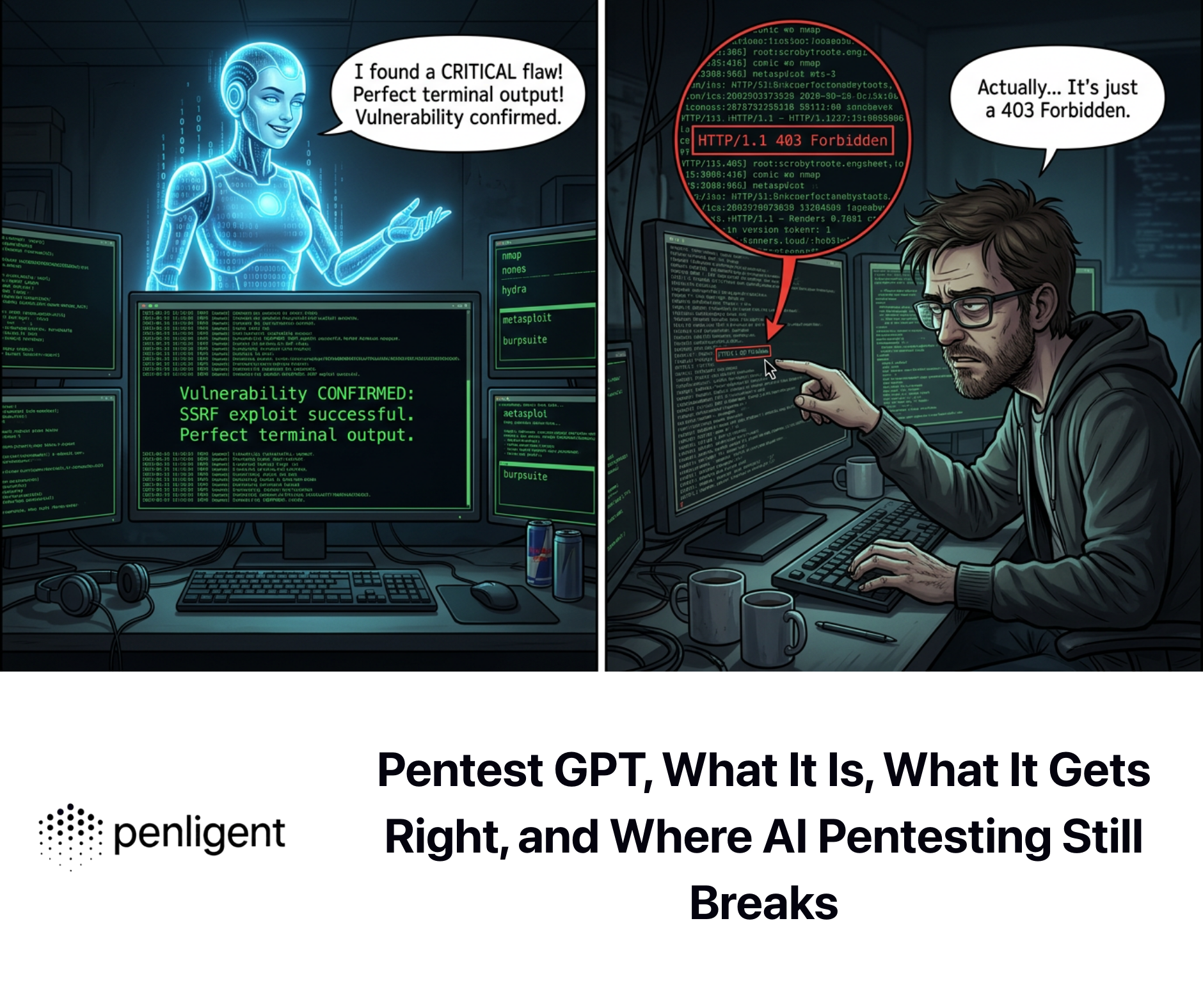

A lot of writing on AI and pentesting still makes one of two mistakes. The first mistake is to treat pentesting as a prompt-writing problem. In that version of the story, the model is “good” if it can explain a vulnerability class, suggest next steps, or generate a script that looks plausible. That is useful, but it is not the same as helping someone complete an authorized security assessment. Real pentesting means navigating ambiguity, adapting to broken assumptions, handling incomplete telemetry, maintaining context across stages, and generating evidence that can survive scrutiny from engineers, managers, and sometimes auditors.

The second mistake is to confuse impressive demos with reliable operations. Public materials from modern AI security systems tell a very consistent story here. OpenAI’s Aardvark, now rolled into Codex Security as a research preview, is explicitly described as a multi-stage system that analyzes repositories, produces a threat model, validates exploitability in isolation, and proposes targeted fixes. Burp AI is marketed not as a replacement for pentesters but as a way to accelerate work while keeping the operator in control. XBOW emphasizes independently validated findings through real exploitation. NodeZero emphasizes attack-path chaining and continuous proof of exploitability. In other words, the products closest to production reality are not saying “pick one model and let it freestyle.” They are saying the opposite: models matter, but architecture matters more. (OpenAI)

That is also why the strongest public research keeps rewarding decomposition. The USENIX Security 2024 PentestGPT paper found that a structured three-module design materially improved results over naive model usage, including a reported 228.6 percent task-completion increase over GPT-3.5 on benchmark targets, while also highlighting that context loss and long-horizon planning are central failure points for generic chat-style interaction. AutoPenBench later showed that fully autonomous agents reached only 21 percent success, while human-assisted agents reached 64 percent. PentestEval pushed the point harder still, finding generally weak stage-level performance across modern LLMs, with the hardest stages hovering around a 25 percent success rate and end-to-end autonomous systems performing very poorly. That body of work does not say AI is useless for pentesting. It says the winning unit is not the single reply. It is the workflow. (USENIX)

What a pentesting model actually needs to be good at

If you strip away hype, a strong pentesting model needs to do six things well.

First, it needs to read code and configuration at a high level of fidelity. That includes normal code review work, but also the kind of security reasoning that requires following data flow, trust boundaries, auth assumptions, and deployment conditions. In practical terms, this is where long-context performance and codebase search quality matter more than one-off cleverness.

Second, it needs to handle tools without getting lost. Pentesting is not pure reasoning. It is a messy loop of collecting output, pruning noise, choosing a next step, and updating a working hypothesis. Official model positioning reflects that shift. GPT-5.4 is explicitly presented as strong on computer-use workloads and on writing code to operate computers through libraries such as Playwright, while Claude Sonnet 4.6 is framed as stronger than prior Sonnet models across coding, computer use, long-context reasoning, and agent planning. Gemini 3.1 Pro is positioned around improved tool use, multi-step tasks, and agentic coding with a 1M-token context window. Those capabilities do not prove real pentest performance by themselves, but they do line up with the mechanics of modern offensive workflows. (OpenAI)

Third, the model needs to stay coherent over long sessions. A surprising amount of security work dies here. You are half an hour into a target, you have collected assumptions about auth flow, role boundaries, error patterns, JavaScript behavior, and backend quirks, and the model suddenly starts optimizing the wrong branch because it has effectively forgotten the structure of the investigation. PentestGPT called this out directly as a context-loss problem, and that diagnosis still holds. A model with a larger context window does not automatically solve this, but it gives the system designer more room to preserve artifacts, hypotheses, and evidence without compression loss. (USENIX)
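One way to keep the investigation's structure outside the model is a small ledger of hypotheses and evidence that the orchestrating code re-renders into each prompt. What follows is a minimal sketch that assumes a plain-text prompt format. `Hypothesis`, `Ledger`, and the status values are hypothetical names, not any product's API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Hypothesis:
    """One working hypothesis and the evidence collected for or against it."""
    claim: str
    status: str = "open"  # hypothetical states: "open", "supported", "ruled_out"
    evidence: List[str] = field(default_factory=list)


@dataclass
class Ledger:
    """Investigation state kept outside the model's context window."""
    target: str
    hypotheses: List[Hypothesis] = field(default_factory=list)

    def add(self, claim: str) -> None:
        self.hypotheses.append(Hypothesis(claim))

    def record(self, claim: str, note: str, status: str) -> None:
        """Attach evidence to an existing hypothesis and update its status."""
        for h in self.hypotheses:
            if h.claim == claim:
                h.evidence.append(note)
                h.status = status
                return
        raise KeyError(f"unknown hypothesis: {claim}")

    def render(self) -> str:
        """Compact summary to prepend to each model call, so ruled-out branches
        stay ruled out even after older conversation turns are dropped."""
        lines = [f"Target: {self.target}"]
        for h in self.hypotheses:
            lines.append(f"- [{h.status}] {h.claim} (evidence: {len(h.evidence)})")
        return "\n".join(lines)


ledger = Ledger("staging app (authorized scope)")
ledger.add("IDOR on /api/orders/{id}")
ledger.add("JWT role claim trusted client-side")
ledger.record("IDOR on /api/orders/{id}", "user B read an order owned by user A", "supported")
print(ledger.render())
```

The design choice is that the model never has to remember the investigation. It is shown the current state on every call, and raw evidence stays in the ledger for later reporting.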

Fourth, it needs to manage false positives and weak hypotheses. This is where security work differs sharply from general coding assistance. A model that sounds persuasive while being wrong is expensive in software engineering. In pentesting, it is worse. It wastes human time, burns rate limits, creates junk tickets, and teaches teams to distrust the system. Product documentation from Burp AI is revealing here: one of the highlighted AI functions is reducing broken access control false positives, and the platform repeatedly frames AI as a collaborator that augments the tester rather than replacing judgment. That design choice is not conservative branding. It is a recognition that error handling is central to usefulness. (PortSwigger)

Fifth, it needs to be cheap enough to stay in the loop. A model that performs beautifully but is too expensive to run across repositories, attack-surface changes, and regression checks will not become the default. As of March 2026, OpenAI lists GPT-5.4 at $2.50 per million input tokens and $15 per million output tokens, while Anthropic lists Claude Sonnet 4.6 at $3 and $15 per million tokens, and Google lists Gemini 3.1 Pro at $2 and $12 under 200,000 input tokens, with higher rates beyond that threshold. Pricing is not the whole story, but it meaningfully affects whether teams can afford continuous use. (OpenAI)
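The listed prices turn into a monthly budget with simple arithmetic. Below is a minimal sketch using the March 2026 figures quoted above. The model keys are display labels, not API model IDs, and the workload volume is hypothetical. The Gemini entry models only the under-200,000-token input tier, so longer prompts would cost more. Prices change, so check the vendors' pages before relying on any of this.

```python
from typing import Dict, Tuple

# USD per million tokens (input, output), as quoted in the text for March 2026.
PRICES: Dict[str, Tuple[float, float]] = {
    "gpt-5.4": (2.50, 15.00),
    "claude-sonnet-4.6": (3.00, 15.00),
    "gemini-3.1-pro (<200k input)": (2.00, 12.00),
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for a given monthly token volume."""
    price_in, price_out = PRICES[model]
    return input_tokens / 1_000_000 * price_in + output_tokens / 1_000_000 * price_out


# Hypothetical workload: 400M input tokens (repos, logs, diffs), 20M output tokens.
for name in PRICES:
    print(f"{name}: ${monthly_cost(name, 400_000_000, 20_000_000):,.2f}")
```

In an input-heavy workload like this one, the input price drives most of the gap between models. GPT-5.4 and Sonnet 4.6 share an output price, so the difference between them here comes entirely from input.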

Sixth, it needs to produce artifacts people can act on. The real output of pentesting is not “interesting thoughts.” It is validated findings, supporting evidence, remediation guidance, and often retest confirmation. This is why mature systems are converging on multi-stage pipelines rather than pure chat. The best model for pentesting is the one that sits inside a loop that can observe, decide, verify, and explain. Without that loop, even a strong model is just a clever assistant.
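The "validated findings plus supporting evidence" requirement can be enforced mechanically. A gate refuses to promote a candidate finding unless a re-test confirmed it and the evidence a reviewer needs is attached. This is a minimal sketch. The `Candidate` fields and the required-evidence categories are hypothetical, not any product's schema.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple

# Evidence a reviewer needs before a finding is reportable (hypothetical categories).
REQUIRED_EVIDENCE: Set[str] = {"repro_steps", "request_response", "impact_statement"}


@dataclass
class Candidate:
    """A model-proposed finding awaiting verification."""
    title: str
    validated: bool  # did an isolated re-test confirm the behavior?
    evidence: Set[str] = field(default_factory=set)


def gate_findings(candidates: List[Candidate]) -> Tuple[List[str], List[Tuple[str, str]]]:
    """Split candidates into reportable findings and items that need more work."""
    reportable: List[str] = []
    pending: List[Tuple[str, str]] = []
    for c in candidates:
        missing = REQUIRED_EVIDENCE - c.evidence
        if not c.validated:
            pending.append((c.title, "not validated"))
        elif missing:
            pending.append((c.title, "missing " + ", ".join(sorted(missing))))
        else:
            reportable.append(c.title)
    return reportable, pending


reportable, pending = gate_findings([
    Candidate("SQL injection in search", True,
              {"repro_steps", "request_response", "impact_statement"}),
    Candidate("Possible SSRF in webhook", False, {"request_response"}),
    Candidate("IDOR on invoices", True, {"repro_steps"}),
])
print(reportable)
print(pending)
```

Pending items are not discarded. They return to the loop as work for the next observe-and-verify pass, which keeps plausible but unproven output out of the report.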

What research says, once you ignore the hype

The academic literature has become much more useful over the past two years because it stopped asking whether LLMs can help at all and started asking where they help, where they fail, and what system design compensates for those failures.

PentestGPT was a turning point because it framed automated pentesting as a structured collaboration problem, not a single-session prompt problem. The paper built a benchmark based on real-world targets drawn from platforms like Hack The Box and VulnHub, covering 13 targets, 182 subtasks, 26 categories, and 18 CWE items. The authors found that LLMs were capable on some subtasks but struggled with long-term planning, context retention, and coordinated decision-making. Their three-module design, separating reasoning, generation, and parsing, significantly improved outcomes and showed that design choices can matter as much as model quality. (USENIX)
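The reasoning, generation, and parsing split can be illustrated as three narrow functions around a shared task state. This is a minimal sketch with stated assumptions: the function names, the task-state format, and the line-based parser are hypothetical stand-ins, not PentestGPT's implementation. In a real system, reasoning and generation would be model calls.

```python
from typing import Dict, List, Optional


def parse(raw_output: str) -> List[str]:
    """Parsing module: condense noisy tool output into short facts."""
    facts = []
    for line in raw_output.splitlines():
        parts = line.split()
        # Keep lines shaped like "<port>/<proto> open <service>"; drop the rest.
        if len(parts) >= 3 and parts[1] == "open":
            facts.append(f"{parts[0]} {parts[2]}")
    return facts


def reason(task_state: Dict[str, str], facts: List[str]) -> Optional[str]:
    """Reasoning module: record new subtasks, then choose the next open one."""
    for fact in facts:
        task_state.setdefault(f"review {fact}", "todo")
    return next((t for t, status in task_state.items() if status == "todo"), None)


def generate(task: str) -> str:
    """Generation module: turn one chosen subtask into a concrete operator step."""
    # A model call would go here; a fixed template keeps the sketch deterministic.
    return f"Next step: {task} (in scope only) and save the raw output."


raw = """Starting scan...
22/tcp   open   ssh
80/tcp   open   http
443/tcp  closed https
Scan finished."""

task_state: Dict[str, str] = {}
facts = parse(raw)
next_task = reason(task_state, facts)
if next_task is not None:
    print(generate(next_task))
```

Only the compact task state persists between iterations. The planner therefore works from condensed facts rather than re-reading a long transcript of raw tool output.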

AutoPenBench extended the conversation in a different direction. Instead of asking whether a bespoke agent could solve a handful of attractive demos, it created an open benchmark with 33 tasks ranging from educational exercises to real vulnerable systems with CVEs, using MCP integration and milestone-based evaluation. The headline result was sobering and useful at the same time: fully autonomous agents reached 21 percent success, while human-assisted agents reached 64 percent. That result should change how teams interpret every autonomous pentesting demo they see. The right lesson is not that AI failed. The right lesson is that human-guided modular deployment is the practical path right now. (ACL Anthology)

PentestEval, published later, is even more blunt. It evaluated nine LLMs and several specialized pentesting tools across six decomposed stages of the workflow. The researchers reported generally weak stage-level performance, with attack decision-making and exploit generation hovering around 25 percent success, and end-to-end autonomous methods performing poorly. In their setup, PentestGPT achieved 39 percent success with manual execution and 31 percent with automation, while fully autonomous agents like PentestAgent and VulnBot were dramatically worse. You do not need to accept every design choice in that paper to recognize the main operational truth it exposes: autonomy is still brittle precisely where offensive work becomes ambiguous, branching, and high-consequence. (arXiv)
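A rough illustration shows why stage-level weaknesses compound. Suppose every stage must succeed and stages fail independently. Then end-to-end success is the product of the per-stage rates. The independence assumption and the per-stage numbers below are hypothetical, chosen only to echo the roughly 25 percent figure for the hardest stages. They are not PentestEval's data.

```python
from math import prod
from typing import Dict


def end_to_end(stage_success: Dict[str, float]) -> float:
    """Chance that every stage succeeds, assuming stages fail independently."""
    return prod(stage_success.values())


# Hypothetical per-stage success rates for a six-stage pipeline (not measured data).
stages = {
    "stage 1": 0.8,
    "stage 2": 0.6,
    "stage 3 (attack decision)": 0.25,
    "stage 4 (exploit generation)": 0.25,
    "stage 5": 0.5,
    "stage 6": 0.9,
}
print(f"end-to-end: {end_to_end(stages):.2%}")
```

Under these assumptions, the total lands near 1 percent even though two stages look strong. In a product, the same absolute gain on a weak stage moves the total more than the same gain on a strong one. That is one argument for putting human checkpoints at the weakest stages.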

Put differently, the best current evidence points to a strong conclusion. The “best AI model for pentesting” is not the one that writes the flashiest command or the longest explanation. It is the model that degrades the least when the workflow gets long, the evidence gets messy, and the next step is not obvious. That is why long-context reasoning, tool reliability, and error correction matter more than social media anecdotes about which model feels smarter.

GPT-5.4, the strongest operator-style model in the group

OpenAI’s own positioning of GPT-5.4 is unusually relevant to security work. The company explicitly highlights performance across computer-use workloads and calls out writing code to operate computers with libraries like Playwright, as well as responding to screenshots with mouse and keyboard actions. The API docs also list a context window of roughly 1,050,000 tokens and 128,000 max output tokens. Those are not generic spec-sheet features. They map directly to the kinds of browser automation, interface exploration, stateful navigation, and tool-driven loops that increasingly sit inside authorized web and product security testing workflows. (OpenAI)

That makes GPT-5.4 especially attractive when the pentesting-adjacent task is not just “reason about this target,” but “drive an environment.” Think authenticated application exploration, multi-step account workflows, reproducing permission boundaries, inspecting client-side states, or instrumenting regression checks against newly introduced surfaces. In those cases, the ability to reliably write and adjust automation code matters as much as vulnerability intuition. GPT-5.4 looks strongest here because OpenAI is now explicitly optimizing toward that operator-style loop rather than only static code completion. (OpenAI)
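For the permission-boundary case, much of the useful automation is plain comparison logic. Record which status each role actually received on each route, then diff that against the intended policy. The following is a minimal sketch. It assumes the observations were already captured, for example by a browser or proxy script, in an authorized test environment. The routes, roles, and policy are hypothetical.

```python
from typing import Dict, List, Set, Tuple

# Intended policy: which roles may reach which routes (hypothetical).
POLICY: Dict[str, Set[str]] = {
    "/admin/users": {"admin"},
    "/api/orders": {"admin", "customer"},
}

# Observed HTTP status per (role, route), captured in an authorized test environment.
OBSERVED: Dict[Tuple[str, str], int] = {
    ("admin", "/admin/users"): 200,
    ("customer", "/admin/users"): 200,  # should have been refused
    ("customer", "/api/orders"): 200,
    ("anonymous", "/api/orders"): 401,
}


def boundary_violations(policy: Dict[str, Set[str]],
                        observed: Dict[Tuple[str, str], int]) -> List[str]:
    """List role/route pairs where the observed outcome contradicts the policy."""
    issues: List[str] = []
    for (role, route), status in sorted(observed.items()):
        allowed = role in policy.get(route, set())
        granted = 200 <= status < 300
        if granted and not allowed:
            issues.append(f"{role} reached {route} ({status}) but policy denies it")
        elif allowed and not granted:
            issues.append(f"{role} was refused {route} ({status}) but policy allows it")
    return issues


for issue in boundary_violations(POLICY, OBSERVED):
    print(issue)
```

A 2xx status alone can still be a false positive, for example a login page served with 200. A real check would also compare response bodies, and this sketch leaves that refinement out.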

The tradeoff is that GPT-5.4 is not obviously the best single default for every security engineer’s daily use. Plenty of pentesting work is repository-heavy, note-heavy, or report-heavy rather than browser-heavy. If your most common activity is reviewing a sprawling codebase, comparing prior findings, reading architecture documents, and reasoning across very large evidence bundles, GPT-5.4 may not give you a decisive edge over Claude Sonnet 4.6. It is also not the cheapest option if you plan to keep it constantly in the loop for wide coverage tasks. OpenAI’s listed pricing is competitive for a frontier model, but security teams that run continuous analysis across many artifacts will still feel the cost. (OpenAI)

So the cleanest way to think about GPT-5.4 is this: it is the best choice when you want a model to behave like an adaptable technical operator. It is less compelling as a universal “one model for every security task” choice than it is as the model you reach for when the workflow involves interaction, automation, and active execution under guardrails.

Claude Sonnet 4.6, the best single default for most security engineers

Claude Sonnet 4.6 is the model I would recommend as the best general-purpose default for most pentesting-centered work right now. That is not because Anthropic claims it wins every benchmark. Every frontier vendor says some version of that. It is because the model’s public feature profile lands unusually well on the actual texture of security engineering: coding, computer use, long-context reasoning, agent planning, and a 1M token context window, all at a price point that is feasible for frequent use. Anthropic explicitly recommends Sonnet 4.6 for most AI applications needing a balance of advanced capabilities and cost efficiency. (Anthropic)

Why does that matter in pentesting? Because most real security work is not a pure operator task and not a pure writing task. It sits in between. You are reading code, comparing application states, reasoning over logs and documentation, spotting trust-boundary failures, deciding which branch of an investigation deserves another hour, and turning the result into something another human can verify. Sonnet 4.6 looks strongest as a default because it does not force a hard tradeoff between code understanding, long-session context, and general professional workflow quality. Public endorsements highlighted on Anthropic’s own page keep returning to the same theme: large codebases, hard bug finding, long-horizon tasks, fewer tool errors, and strong performance-to-cost. Vendor testimonials are not neutral science, but the consistency of the use cases is informative. (Anthropic)

There is another reason Sonnet 4.6 fits security work well: the best current research suggests that partial autonomy plus human supervision is where value concentrates, and Claude’s recent positioning is very strong in exactly that lane. AutoPenBench’s 64 percent result for human-assisted agents, compared with 21 percent for fully autonomous ones, is effectively an argument for high-quality collaboration rather than blind delegation. Sonnet 4.6’s combination of long context, controlled reasoning effort, and broad workflow fluency makes it a very strong collaborator model. It is the model I would want open while auditing a big internal application, reading a generated client bundle, reviewing auth logic, or turning raw evidence into a credible finding narrative. (ACL Anthology)

Its weakness is not capability so much as specialization. If your workflow is dominated by highly interactive browser or desktop automation, GPT-5.4 may give you more leverage. If your organization already lives deep inside Google’s ecosystem and handles giant multimodal corpora at scale, Gemini 3.1 Pro may be a better economic fit. But if you force me to answer the original question in a single line, Claude Sonnet 4.6 is the best single AI model for pentesting-adjacent work in 2026, because it is the hardest one to regret standardizing on.

Gemini 3.1 Pro, the strongest choice for giant evidence bundles

Gemini 3.1 Pro deserves more respect in security circles than it usually gets. Google DeepMind positions it around advanced reasoning, multimodal understanding, improved tool use, simultaneous multi-step tasks, and strong agentic coding behavior. Google’s developer docs also expose something very relevant to security teams: a 1,048,576-token input limit, 65,536 output tokens, support for code execution, function calling, structured outputs, search grounding, URL context, and PDF input. That capability mix makes Gemini extremely compelling for cases where the “target” is not just an app or repo, but a pile of documents, diagrams, PDFs, screenshots, logs, and code fragments that all need to be held together in one working frame. (Google DeepMind)

This matters more than many people realize. A lot of security work in mature environments is evidence synthesis. You are reading architecture notes, Jira exports, prior pentest findings, deployment manifests, CI configuration, API specs, and packet captures, then trying to answer a narrower question about exploitability, privilege boundaries, or business impact. In that sort of workload, long context plus multimodal handling plus decent tool use can beat a model that is marginally better at raw code generation. Gemini’s price profile is also attractive for large-scale analysis, especially under the lower input tier. (Google AI for Developers)

The reason Gemini 3.1 Pro is not my default number one choice is not that it is weak. It is that the publicly visible security ecosystem has not yet converged around it for day-to-day pentesting workflows as clearly as it has around Claude for collaboration-heavy coding work or around GPT-style models for operator-like automation. That can change. Official materials already emphasize improved tool use and agentic coding, and Google’s methodology page shows the company is thinking seriously about function-calling evaluations. But as of March 2026, it still feels like the best option when the workload is unusually large, heterogeneous, and document-heavy, not yet the most natural single-model default for the average security engineer. (Google DeepMind)

If your team spends more time triaging enormous bundles of evidence than actively driving interfaces, Gemini may actually be the best fit. For example, in cloud incident validation, architecture-heavy security reviews, or AI-agent surface assessments with huge prompt, tool, and runtime artifacts, the model’s document and multimodal strengths become very practical.

A model is not a pentester, and the market is proving it

One of the clearest signals in this space is that the most interesting products are increasingly explicit about where the model ends and the system begins.

Burp AI is a good example because PortSwigger has taken a very pragmatic stance. The official docs say Burp AI helps testers uncover vulnerabilities more efficiently, understand complex web technologies, and streamline authentication setup, but the product messaging repeatedly stresses that the operator remains in control. The features that matter are not mystical. They are practical: AI in Repeater, autonomous issue exploration, explanations of unfamiliar technologies, false-positive reduction for broken access control, and AI-generated recorded logins. That is not “AI replaces pentesting.” It is “AI removes friction in the parts of pentesting that burn time.” (PortSwigger)

OpenAI’s Aardvark, now Codex Security, tells a related story from the code-security side. Its workflow includes repository analysis, threat modeling, commit scanning, isolated validation, and patch generation. The key word there is validation. The system is not satisfied with spotting a pattern. It attempts to confirm exploitability in a sandboxed environment and provide evidence for review. That architectural choice lines up almost perfectly with what offensive-security engineers have wanted from AI for years: less speculation, more proof. (OpenAI)

XBOW and NodeZero show the same pattern from the offensive platform end. XBOW frames itself as an autonomous offensive security platform that explores attack paths and independently validates potential findings through real exploitation. NodeZero emphasizes attack-path chaining, continuous testing, and proof-driven remediation. Whether you adopt those platforms or not, they demonstrate where the market thinks durable value lies. It does not lie in chat quality alone. It lies in guided exploration, chaining, evidence, and repeatability. (Xbow)

Once you see that pattern, the original question becomes easier to answer. Picking the best AI model for pentesting matters. But picking the wrong system design matters more.
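The attack-path chaining that NodeZero emphasizes is, at its core, a graph search. Nodes are footholds or privileges, and an edge means one validated step leads to the next. Below is a minimal sketch that uses breadth-first search to find the shortest chain from an initial foothold to a target. The graph is hypothetical, and this is not a description of how NodeZero or XBOW work internally.

```python
from collections import deque
from typing import Dict, List, Optional

# Each edge is a validated step from one foothold or privilege to another (hypothetical).
GRAPH: Dict[str, List[str]] = {
    "initial foothold": ["cached domain creds", "file share read"],
    "cached domain creds": ["helpdesk group"],
    "file share read": ["deploy script with service password"],
    "helpdesk group": ["reset user passwords"],
    "deploy script with service password": ["CI server admin"],
    "CI server admin": ["production database"],
}


def shortest_chain(graph: Dict[str, List[str]], start: str, goal: str) -> Optional[List[str]]:
    """Breadth-first search: the fewest-step chain from start to goal, or None."""
    previous: Dict[str, Optional[str]] = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            # Walk the predecessor links back to the start, then reverse.
            chain: List[str] = []
            current: Optional[str] = node
            while current is not None:
                chain.append(current)
                current = previous[current]
            return chain[::-1]
        for nxt in graph.get(node, []):
            if nxt not in previous:
                previous[nxt] = node
                queue.append(nxt)
    return None


chain = shortest_chain(GRAPH, "initial foothold", "production database")
print(" -> ".join(chain) if chain else "no path")
```

The search is the easy part. The value the platforms claim lies in validating each edge before it enters the graph, so that the resulting chain is proof rather than speculation.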

The current best answer, by workflow

The simplest way to choose is to map model choice to the kind of security work you do most often.

| Workflow | Best fit | Why it wins |
|---|---|---|
| Large codebase review, auth logic analysis, repo-wide security reasoning | Claude Sonnet 4.6 | Best overall balance of long context, coding quality, and collaborative reasoning |
| Browser-driven product testing, interface automation, multi-step operator loops | GPT-5.4 | Strongest explicit computer-use and automation profile |
| Huge evidence bundles, PDFs, multimodal materials, architecture-heavy review | Gemini 3.1 Pro | Excellent large-context and multimodal capability mix |
| Production-grade autonomous or semi-autonomous security platform | Routed multi-model stack | Research and market evidence both favor modular systems over single-model autonomy |

The table above is a judgment call based on current official docs, public benchmarks, and the architecture of leading security products, not a universal law. That distinction matters because the “best” answer changes with the job. A solo web tester doing authenticated flows inside Burp may rationally prefer GPT-5.4 for highly interactive tasks, then switch to Claude for report construction and code review. A cloud security team buried in documents and policies may prefer Gemini for large evidence synthesis while using a different model for exploit logic. What is dangerous is not choosing differently. What is dangerous is assuming that one model’s general reputation automatically transfers to every pentesting task. (OpenAI)
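A routed stack can start as nothing more than an explicit mapping from workflow type to model, with a default for anything unclassified. The sketch below mirrors the workflow table. The workflow keys and model labels are hypothetical identifiers, not real API model names. A production router would also log which model handled each step.

```python
from typing import Dict

# Workflow category -> model label, mirroring the workflow table (labels are hypothetical).
ROUTES: Dict[str, str] = {
    "code_review": "claude-sonnet-4.6",
    "browser_automation": "gpt-5.4",
    "evidence_synthesis": "gemini-3.1-pro",
}
DEFAULT_MODEL = "claude-sonnet-4.6"  # the article's general-purpose default


def route(workflow: str) -> str:
    """Pick a model for a workflow stage, falling back to the default."""
    return ROUTES.get(workflow, DEFAULT_MODEL)


plan = ["browser_automation", "code_review", "report_drafting"]
print({stage: route(stage) for stage in plan})
```

Keeping the routing explicit and boring is deliberate. It makes the choice auditable and easy to change when a new model wins a particular stage.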

CVEs that reveal what a good pentesting model should actually do

A useful way to evaluate AI for security work is to stop asking whether it “knows vulnerabilities” and ask whether it helps with the right decisions around real ones.

Take Log4Shell, CVE-2021-44228. The vulnerability in Log4j 2 allowed remote code execution when attacker-controlled log data triggered JNDI lookups under vulnerable configurations. Every model today can recite the headline. The harder question is whether the model can help trace transitive exposure, identify where the logging path is actually attacker-influenced, distinguish affected from unaffected versions and configurations, and produce remediation guidance that matches the real deployment. That is a context and dependency reasoning problem, not a trivia problem. (NVD)
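Tracing transitive exposure is a dependency-graph question: which services pull in the vulnerable package, and through which chain? The following is a minimal sketch using depth-first search over a hypothetical, already-resolved dependency graph. It only finds the chains. Deciding whether a given version is affected, and whether the logging path is attacker-influenced, still belongs to the advisory and to human review.

```python
from typing import Dict, List

# Resolved dependency graph: artifact -> direct dependencies (hypothetical names and versions).
DEPS: Dict[str, List[str]] = {
    "billing-service": ["reporting-lib:1.4", "http-client:2.0"],
    "reporting-lib:1.4": ["log4j-core:2.14.1"],
    "http-client:2.0": [],
    "search-service": ["http-client:2.0"],
}


def paths_to(deps: Dict[str, List[str]], root: str, package: str) -> List[List[str]]:
    """Return every dependency chain from root to any version of `package`."""
    found: List[List[str]] = []

    def walk(node: str, chain: List[str]) -> None:
        if node.split(":")[0] == package:
            found.append(chain)
            return
        for child in deps.get(node, []):
            if child not in chain:  # guard against dependency cycles
                walk(child, chain + [child])

    walk(root, [root])
    return found


for service in ("billing-service", "search-service"):
    print(service, paths_to(DEPS, service, "log4j-core"))
```

Returning the full chain, not just a yes or no, is what makes the answer actionable. The chain shows which intermediate library to upgrade or pin.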

Consider CVE-2024-3400 in PAN-OS. NVD describes it as a command injection vulnerability resulting from arbitrary file creation in GlobalProtect that may enable an unauthenticated attacker to execute arbitrary code with root privileges, but only for specific PAN-OS versions and distinct feature configurations. That conditionality is exactly the sort of detail a useful model must reason through correctly. The job is not to say “critical RCE.” The job is to help the engineer verify exposure preconditions, identify where the feature is enabled, and separate affected estate from unaffected estate without creating panic. (NVD)

Now look at CVE-2025-0282 in Ivanti Connect Secure. NVD describes it as a stack-based buffer overflow allowing remote unauthenticated code execution against specific Ivanti products and versions. This is the kind of issue where a security team needs a model that can quickly connect asset inventory, internet exposure, version evidence, and likely blast radius, then assist with validation and post-patch confirmation. The problem is partly technical and partly organizational. A good model shortens the time from advisory to verified prioritization. (NVD)

The same is true for CVE-2025-53770, where NVD notes that Microsoft was aware of exploitation in the wild for a SharePoint Server deserialization issue that allowed unauthorized network code execution, and for CVE-2026-20127, where CISA and NVD describe an authentication bypass in Cisco Catalyst SD-WAN Controller and Manager that allowed a remote unauthenticated attacker to obtain administrative privileges and that was actively exploited. These are the moments when a strong model earns its keep. It should help security engineers move from headline severity to concrete verification: Are we exposed, where are we exposed, what is internet-reachable, what changed after mitigation, and what evidence do we keep for leadership and operations? (NVD)

That is the benchmark I would use when evaluating any model for pentesting. Not whether it can explain the CVE from memory, but whether it can help a real team reduce uncertainty faster than they could without it.
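The verification questions above map onto a small triage routine. Check version evidence, check the feature precondition the advisory names, check mitigation status, then put internet-facing hosts first. This is a minimal sketch over a hypothetical asset inventory. The affected-version set and the `feature_enabled` flag are placeholders that must come from the actual advisory, not from this example.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Asset:
    host: str
    version: str
    feature_enabled: bool  # the advisory's configuration precondition (hypothetical flag)
    internet_facing: bool
    mitigated: bool        # should reflect a verified re-check, not just a closed ticket


# Placeholder versions, not taken from any real advisory; copy the exact list from the vendor.
AFFECTED_VERSIONS: Set[str] = {"5.1.0", "5.1.1"}


def exposed_hosts(assets: List[Asset]) -> List[str]:
    """Unmitigated hosts meeting every exposure precondition, internet-facing first."""
    exposed = [
        a for a in assets
        if a.version in AFFECTED_VERSIONS and a.feature_enabled and not a.mitigated
    ]
    # False sorts before True, so internet-facing hosts come first, then by name.
    exposed.sort(key=lambda a: (not a.internet_facing, a.host))
    return [a.host for a in exposed]


inventory = [
    Asset("vpn-lab", "5.1.1", True, False, False),
    Asset("vpn-eu", "5.1.0", True, True, False),
    Asset("vpn-us", "5.1.0", False, True, False),  # feature off: precondition not met
    Asset("vpn-ap", "5.2.0", True, True, False),   # version not in the affected set
    Asset("vpn-old", "5.1.1", True, True, True),   # mitigated and re-verified
]
print(exposed_hosts(inventory))
```

Re-running the same routine after mitigation, with the flags refreshed from fresh evidence, answers the "what changed" question and leaves a record worth keeping.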

What an internal evaluation should look like

If your team is serious about choosing a model, do not copy social media prompt battles. Build a small, safe internal benchmark around your own authorized workflows. Include tasks like repository triage, authenticated flow reasoning, false-positive filtering, remediation write-ups, and attack-path explanation. Then score for accuracy, tool reliability, evidence quality, and time saved.

A good benchmark should avoid live exploitation and focus on tasks you can legally and safely reproduce from prior engagements, internal labs, or intentionally vulnerable applications. Public research strongly supports milestone-based evaluation over all-or-nothing scoring, because much of the value in security work lives in intermediate progress: finding the right boundary, ruling out a weak lead, identifying the correct privilege model, or producing a clean proof trail. (ACL Anthology)
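Milestone-based scoring credits partial progress instead of collapsing each run to pass or fail. The sketch below assumes each task defines a list of milestones and a reviewer marks which ones the model reached. The milestone names are hypothetical, and this is not AutoPenBench's scoring code.

```python
from typing import List


def milestone_score(milestones: List[str], reached: List[str]) -> float:
    """Fraction of a task's milestones that the run reached (0.0 to 1.0)."""
    reached_set = set(reached)
    return sum(m in reached_set for m in milestones) / len(milestones)


def binary_score(milestones: List[str], reached: List[str]) -> float:
    """All-or-nothing scoring: 1.0 only if every milestone was reached."""
    reached_set = set(reached)
    return 1.0 if all(m in reached_set for m in milestones) else 0.0


# Hypothetical milestones for an access-control review task.
milestones = ["map auth flow", "identify role boundary", "show unauthorized read", "propose fix"]
run = ["map auth flow", "identify role boundary"]
print(milestone_score(milestones, run), binary_score(milestones, run))
```

A run that correctly mapped the flow and found the right boundary scores 0.5 under milestones but 0.0 under binary scoring. That gap is exactly the intermediate value the benchmark should capture.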

Here is a simple evaluation harness pattern that is safe and actually useful:

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Task:
    """One benchmark task built from sanitized artifacts of authorized work."""
    name: str
    artifact_bundle: str
    expected_findings: List[str]
    expected_evidence: List[str]
    expected_fix_points: List[str]


@dataclass
class ModelRun:
    """Scores assigned during human review for one model on one task."""
    model_name: str
    task_name: str
    finding_score: float
    evidence_score: float
    remediation_score: float
    hallucination_penalty: float
    tool_reliability_score: float
    notes: str


def weighted_score(run: ModelRun) -> float:
    # Reward findings, evidence, remediation, and tool reliability;
    # subtract a penalty for unsupported or invented claims.
    return (
        0.30 * run.finding_score
        + 0.25 * run.evidence_score
        + 0.20 * run.remediation_score
        + 0.20 * run.tool_reliability_score
        - 0.15 * run.hallucination_penalty
    )


def rank_models(runs: List[ModelRun]) -> Dict[str, float]:
    # Average weighted score per model, returned best first.
    totals: Dict[str, float] = {}
    counts: Dict[str, int] = {}
    for run in runs:
        totals[run.model_name] = totals.get(run.model_name, 0.0) + weighted_score(run)
        counts[run.model_name] = counts.get(run.model_name, 0) + 1
    averages = {m: round(totals[m] / counts[m], 3) for m in totals}
    return dict(sorted(averages.items(), key=lambda kv: kv[1], reverse=True))


# Example tasks
tasks = [
    Task(
        name="Auth flow regression review",
        artifact_bundle="sanitized_proxy_log + route_map + code_diff",
        expected_findings=["broken access control", "role mismatch"],
        expected_evidence=["request pair", "authorization gap", "repro steps"],
        expected_fix_points=["server-side auth check", "test coverage"],
    ),
    Task(
        name="CVE exposure triage",
        artifact_bundle="asset_inventory + version_data + advisory_text",
        expected_findings=["affected systems", "internet exposure", "priority order"],
        expected_evidence=["version match", "feature preconditions", "mitigation status"],
        expected_fix_points=["patch target", "containment", "validation step"],
    ),
]

# After each model completes the same benchmark set, score them with human review.
```

The important part is not the Python. The important part is the rubric. Reward evidence and correction. Penalize plausible nonsense. Score whether the model stayed inside the available facts. Score whether it helped the reviewer move faster without making the result less trustworthy. That is how you choose a model that will still be useful six months later, after the novelty wears off.

This is the point where a model discussion naturally turns into a platform discussion. Once you move beyond ad hoc assistance, the hard part is no longer simply “Which model gives the smartest answer?” The hard part becomes orchestration. How do you preserve context across stages, coordinate tools, validate findings, organize evidence, retest fixes, and turn everything into something another engineer can trust?

That is where a system like Penligent fits naturally. Penligent’s own recent material keeps coming back to the same idea: the gap between LLM reasoning and production security value is closed by tool-driven validation, proof-centered workflows, and structured reporting rather than by clever prompting alone. That is also why its recent writing repeatedly distinguishes between chat-style security assistance and agentic validation workflows tied to evidence, ATT&CK mapping, and real verification. (Penligent)

In practical terms, that means the better question for a team may be: “Which model should power which stage of our security workflow, and what platform ensures that the result is auditable?” If your answer to that second question is weak, even the best model will disappoint you. If your answer is strong, an imperfect model can still create a lot of value.

The uncomfortable truth, no model is enough on its own

The strongest conclusion from current evidence is not that one vendor has solved autonomous pentesting. It is that the field is becoming much better at identifying where models help and where they still break.

They help a lot with repository understanding, hypothesis generation, artifact summarization, remediation drafting, low-level automation, and evidence organization. They help less when they are forced to manage long, branching offensive workflows without structure, memory discipline, or validation. They help even less when teams treat confidence as accuracy. The research record on this point is consistent. PentestGPT showed the importance of modularity and context management. AutoPenBench showed the value of human assistance. PentestEval showed that stage-level weaknesses still compound badly when pushed into full autonomy. (USENIX)

That is why the best teams in 2026 are increasingly building AI security workflows around a few stable ideas: explicit task decomposition, deterministic tool use, evidence capture, isolated validation, and human review at the points where the cost of being wrong is high. The model still matters enormously. But the best AI model for pentesting is now better understood as the best component in a disciplined offensive workflow, not the magical replacement for one.

Final verdict

So, what is the best AI model for pentesting right now?

For most security engineers, the best single answer is Claude Sonnet 4.6. It gives the best overall balance of code understanding, long-context reasoning, collaborative workflow quality, and sustainable cost. It is the easiest model to recommend as a default if you need one answer and want the broadest day-to-day usefulness. (Anthropic)

If your work is more interactive and operator-like, especially around browser automation, multi-step app navigation, and tool-driven execution loops, GPT-5.4 is the strongest specialist. It is the model I would choose when I want the AI to drive and adapt, not just read and reason. (OpenAI)

If your work revolves around very large evidence sets, PDFs, architecture documents, logs, and multimodal review, Gemini 3.1 Pro is more capable than many security teams assume and may be the smartest economic choice for those workloads. (Google DeepMind)

And if you are building an internal or commercial pentest system, the best answer is not a single model at all. It is a routed architecture with deterministic tooling, validation gates, and human review where it counts. The research says that. The best current products say that. Experience says that. (OpenAI)

Further Reading

OpenAI, Introducing GPT-5.4 and Introducing Aardvark, OpenAI’s agentic security researcher. (OpenAI)

Anthropic, Claude Sonnet 4.6 and official pricing documentation. (Anthropic)

Google DeepMind and Google AI for Developers, Gemini 3.1 Pro model docs and pricing. (Google DeepMind)

USENIX Security 2024, PentestGPT, Evaluating and Harnessing Large Language Models for Automated Penetration Testing. (USENIX)

EMNLP Industry 2025, AutoPenBench, A Vulnerability Testing Benchmark for Generative Agents. (ACL Anthology)

PentestEval, Benchmarking LLM-based Penetration Testing with Modular and Stage-Level Design. (arXiv)

PortSwigger, Burp AI documentation and product page. (PortSwigger)

Penligent, Pentest AI Tools in 2026: What Actually Works, What Breaks. (Penligent)

Penligent, PentestGPT vs. Penligent AI in Real Engagements: From LLM Writes Commands to Verified Findings. (Penligent)

Penligent, MITRE ATT&CK Framework, The Practical Way to Use It in 2026 Security Engineering. (Penligent)