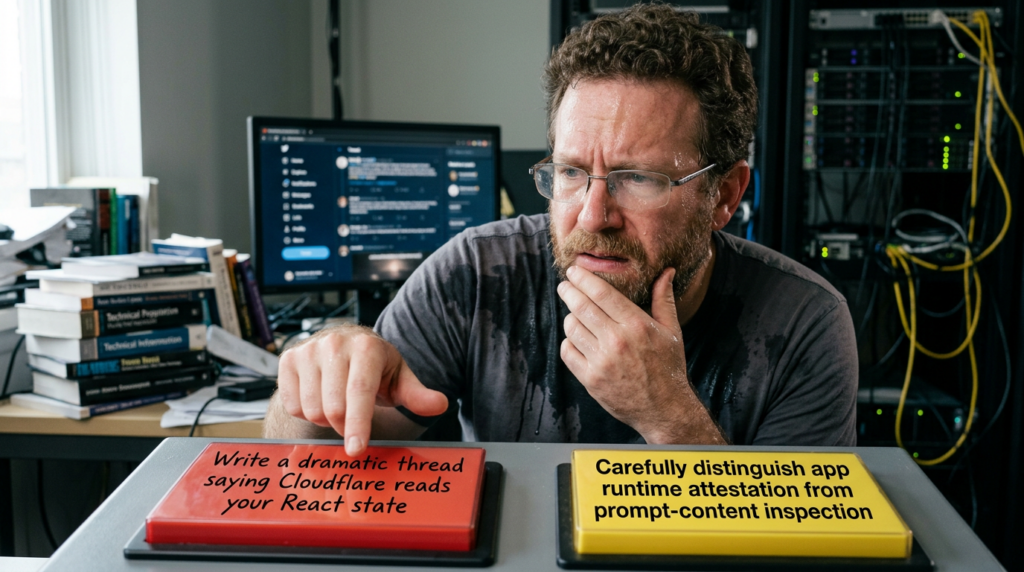

OpenAI openly says ChatGPT uses CAPTCHAs to reduce bot activity and spam. Cloudflare openly says Turnstile runs small JavaScript challenges, gathers client-side signals, and can be embedded into single-page applications with precise control over when verification happens. The March 2026 controversy started because a reverse-engineering write-up argued that, in ChatGPT, the analyzed challenge logic appears to go past generic browser fingerprinting and into the React app’s own boot state. That is a serious claim. It is also a claim that is easy to inflate past what the evidence actually supports. (OpenAI Help Center)

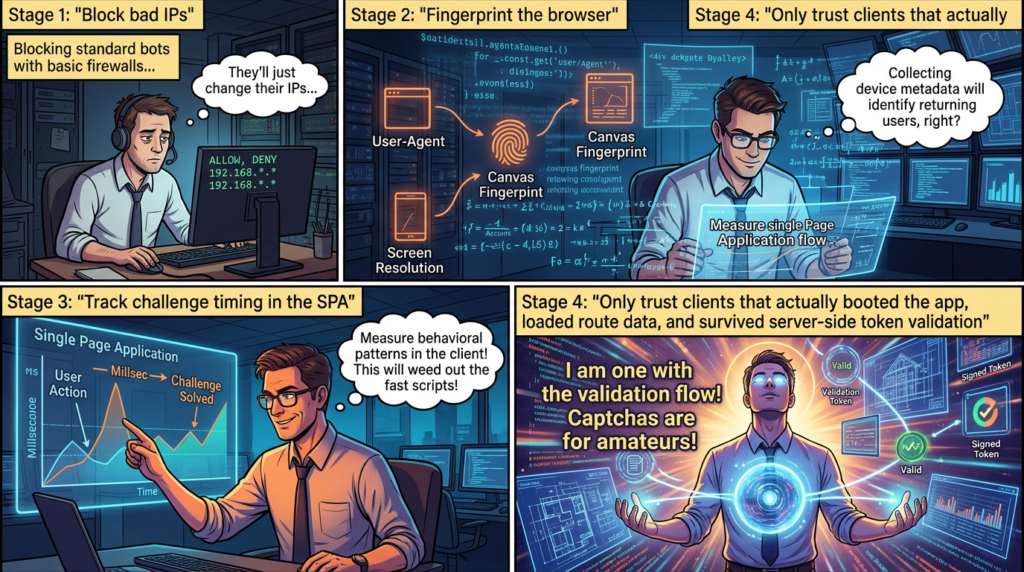

The useful way to read this story is not as a culture-war argument about whether anti-bot controls are “too invasive.” The useful way to read it is as a technical question: what does the available evidence show about ChatGPT and Cloudflare Turnstile, what does it not show, and what should security engineers, red teamers, and defenders learn from it? The answer is more interesting than the headline cycle. It points to a broader shift from browser-aware bot detection toward application-aware bot detection, especially in high-abuse environments where pretending to be Chrome is no longer enough. (Cloudflare Docs)

That shift matters because a modern abuse system does not need to ask only whether a client looks like a browser. It can ask whether the client actually booted the expected app, loaded the expected route data, attached the expected framework runtime, and reached the point in the page lifecycle where a real human user would normally trigger a protected action. In other words, the story here is less about a “mystery CAPTCHA” and more about runtime attestation at the application layer. That framing is not directly stated by OpenAI or Cloudflare in those words, but it is the most defensible interpretation of the official Turnstile design model and the reverse-engineered evidence that was published. (Cloudflare Docs)

What is firmly established about ChatGPT and Cloudflare Turnstile

Before getting into reverse engineering, it helps to separate hard facts from layered inference. OpenAI’s help center says ChatGPT uses CAPTCHAs to minimize bot activity and spam and that users may encounter them on web, iOS, and Android. That is not speculation. It is an explicit product statement. OpenAI also says unusual behavior, VPN use, and certain browser conditions can make CAPTCHAs appear more often. (OpenAI Help Center)

OpenAI’s login troubleshooting material also makes the Cloudflare side concrete. It tells users to resolve Cloudflare verification loops, allow JavaScript and cookies, disable blockers that may interfere with verification flows, and, on managed networks, ask IT teams to allowlist Cloudflare challenges if the loop persists. That means the presence of Cloudflare challenge infrastructure in ChatGPT’s access path is not just a rumor derived from packet captures or DOM inspection. OpenAI’s own support material refers to it directly. (OpenAI Help Center)

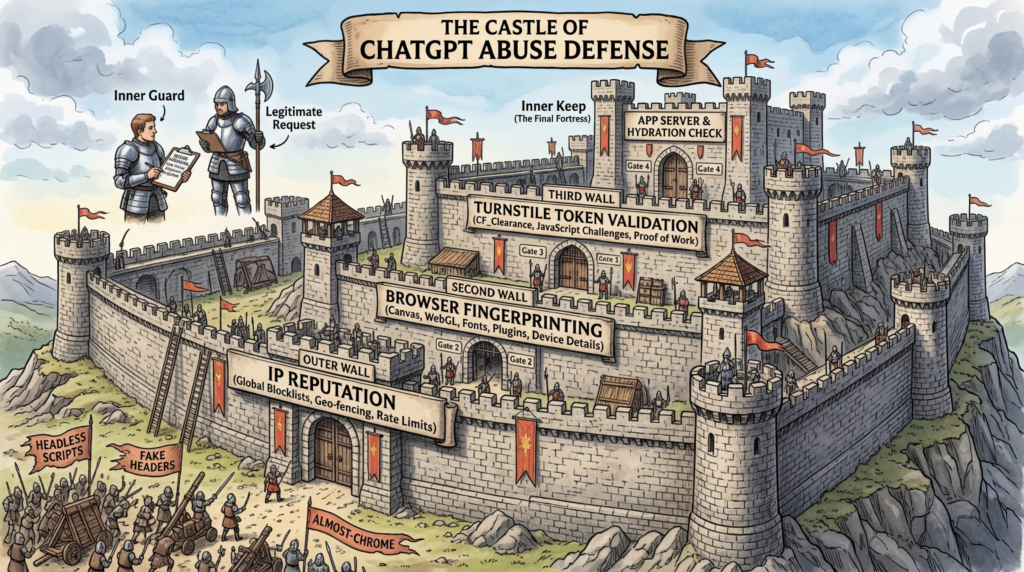

Cloudflare’s Turnstile documentation fills in the second half. Turnstile is part of Cloudflare’s Challenge Platform. Cloudflare says it runs a series of small non-interactive JavaScript challenges to gather signals about the visitor or browser environment. Those challenges can include proof-of-work, proof-of-space, probing for web APIs, and checks related to browser quirks and human behavior. That description matters because it establishes, in Cloudflare’s own words, that Turnstile is not merely a static checkbox or a server-side IP reputation lookup. It is an active client-side telemetry and challenge system. (Cloudflare Docs)

Cloudflare also says Turnstile is suitable for dynamic websites and single-page applications. Its explicit rendering mode is described as best for SPAs when teams need programmatic control over when widgets are created and when verification occurs. Execution can be deferred until a later point with turnstile.execute(), and appearance can be tuned so the widget becomes visible only when interaction is required or when challenge execution begins. That means there is nothing architecturally strange about a Turnstile deployment whose verification timing is tightly bound to an app’s runtime lifecycle. (Cloudflare Docs)

Cloudflare is equally clear about the real security boundary. Tokens must be validated on the server through the Siteverify API. The client-side widget alone does not provide protection. Tokens expire after 300 seconds, are single-use, and cannot be trusted merely because a browser page generated them. This is a critical point because it reframes the whole conversation: the meaningful control is not the visible UI element or even the JavaScript challenge by itself, but the combination of client-side signal gathering and server-side decision logic. (Cloudflare Docs)
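The token properties above (a 300-second lifetime and single use) can be mirrored on the client so that an application never knowingly submits a stale or already-spent token. The sketch below is a minimal illustration under stated assumptions: `createTokenHolder`, `set`, and `take` are hypothetical names, not part of the Turnstile API, and the server-side Siteverify call remains the only authoritative check.

```javascript
// Sketch of a client-side holder for one Turnstile token.
// TOKEN_TTL_MS mirrors the 300-second lifetime Cloudflare documents;
// createTokenHolder and its methods are hypothetical names.
const TOKEN_TTL_MS = 300 * 1000;

function createTokenHolder(now = Date.now, ttlMs = TOKEN_TTL_MS) {
  let token = null;
  let issuedAt = 0;

  return {
    // Store a token as soon as the widget callback delivers it.
    set(newToken) {
      token = newToken;
      issuedAt = now();
    },
    // Return the token at most once, and only while it is still fresh.
    take() {
      const fresh = token !== null && now() - issuedAt < ttlMs;
      const result = fresh ? token : null;
      token = null; // single-use: never hand the same token out twice
      return result;
    },
  };
}
```

A holder like this only saves a wasted round trip. It does not replace server validation, because a client can always be modified to skip it.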

Cloudflare’s privacy addendum gives the policy side of the story. It says Turnstile processes client-side signals such as IP address, TLS fingerprint, user-agent, sitekey, and associated origin. It also says the purpose of collecting those signals is solely to detect and block bots, not to identify, profile, or target individuals. Whether one is satisfied with that policy boundary is a separate question, but technically and legally it matters: the public Cloudflare position is not “we inspect arbitrary application content for profiling,” but “we process signals necessary for bot detection.” (Cloudflare)

The table below is a good starting point for calibrating confidence.

| Claim | Primary evidence | Confidence |
|---|---|---|
| ChatGPT uses CAPTCHAs to limit abuse | OpenAI help center says ChatGPT uses CAPTCHAs to minimize bot activity and spam. (OpenAI Help Center) | High |
| ChatGPT users can hit Cloudflare challenge loops | OpenAI login troubleshooting refers to Cloudflare verification loops and allowlisting Cloudflare challenges. (OpenAI Help Center) | High |
| Turnstile gathers client-side browser and behavior signals | Cloudflare overview says Turnstile runs JavaScript challenges, including proof-of-work, proof-of-space, API probing, browser-quirk and human-behavior checks. (Cloudflare Docs) | High |
| Turnstile can be timed around SPA lifecycle events | Cloudflare widget docs say explicit rendering is best for SPAs and allow deferred execution with turnstile.execute(). (Cloudflare Docs) | High |
| Turnstile tokens must be validated server-side | Cloudflare says Siteverify is mandatory and client-side verification alone is insecure. (Cloudflare Docs) | High |
| The ChatGPT deployment analyzed by Buchodi appears to check React app state | Buchodi reports __reactRouterContext, loaderData, and clientBootstrap in analyzed programs. (Buchodi’s Threat Intel) | Medium to High |
| The available evidence proves Cloudflare reads prompt content | No reviewed official source or published evidence in the reverse-engineering write-up establishes prompt-body inspection. (Buchodi’s Threat Intel) | Low |

What the reverse engineering actually claims

The March 29, 2026 Buchodi write-up is the reason this topic exploded. The article’s central claim is not just that ChatGPT uses Turnstile. It claims the author decrypted 377 challenge programs observed in browser traffic and found 55 properties checked across three layers: browser fingerprinting, Cloudflare-related network fields, and ChatGPT React application state. The key contention is that Turnstile, or more precisely the challenge logic surrounding ChatGPT’s deployment of it, is not merely verifying “real browser” properties. It is verifying “real browser that truly booted this application.” (Buchodi’s Threat Intel)

The article lays out a decryption story in two stages. According to the author, the server sends an encrypted turnstile.dx field in a prepare response. That field is XOR-decoded with a token from the request to reveal an outer layer of bytecode, which then contains an inner encrypted blob representing the actual fingerprinting program. The author says the second-stage XOR key is present in the payload itself as a float literal and reports successfully validating that decryption path across 50 samples. Whether every detail of that interpretation is correct is less important here than the larger point: the author is not presenting a vague intuition. The article presents a specific reverse-engineering methodology, a repeatable decryption chain, and metrics that can be scrutinized. (Buchodi’s Threat Intel)
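For readers less familiar with the primitive involved, the sketch below shows what XOR decoding means in general. It is a toy round trip, not a reproduction of the write-up's method: the repeating-key scheme, the key, and the plaintext are all assumptions chosen for illustration, and nothing here reflects how the real turnstile.dx field is encoded or how its key is derived.

```javascript
// Repeating-key XOR over bytes. Applying it twice with the same key
// returns the original input, so one routine both encodes and decodes.
function xorBytes(data, key) {
  if (key.length === 0) throw new Error("key must not be empty");
  const out = new Uint8Array(data.length);
  for (let i = 0; i < data.length; i++) {
    out[i] = data[i] ^ key[i % key.length];
  }
  return out;
}

const encoder = new TextEncoder();
const decoder = new TextDecoder();

// Toy values only: neither the plaintext nor the key comes from real traffic.
const plaintext = encoder.encode('{"op":"example"}');
const key = encoder.encode("demo-key");

const obfuscated = xorBytes(plaintext, key);
const recovered = decoder.decode(xorBytes(obfuscated, key));
```

The relevant property is that XOR with a key available to the client is obfuscation rather than secrecy, which is consistent with the author's report that the decoding chain could be repeated across samples.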

The first layer described is standard anti-bot territory: WebGL properties, screen geometry, hardware signals, font measurement, DOM probing, and storage-related operations. Anyone who has worked on bot detection or instrumented browser automation will recognize that category immediately. It sits squarely inside what Cloudflare itself publicly describes as client-side signal gathering, browser-quirk evaluation, and environment probing. On its own, that part of the write-up would not be especially surprising. (Buchodi’s Threat Intel)

The second layer in the Buchodi article is described as Cloudflare network data, including fields such as cfIpCity, cfIpLatitude, cfIpLongitude, cfConnectingIp, and userRegion. Those specific field names and their role in this workflow are claims from the researcher, not points documented in Cloudflare’s public Turnstile documentation for this ChatGPT deployment. What matters for interpretation is narrower: the author is arguing that the analyzed logic combined client-side device and browser properties with network- and edge-derived context, which is directionally consistent with how large anti-abuse systems often build risk signals even though the exact field set here is not vendor-confirmed. (Buchodi’s Threat Intel)

The third layer is what changed the story. Buchodi says the analyzed programs referenced __reactRouterContext, loaderData, and clientBootstrap, and argues that these fields exist only when the ChatGPT React application has fully rendered and hydrated. The article then treats that as the decisive finding: a headless or partially simulated client might spoof many browser APIs, but if it never actually finishes loading the SPA runtime, route data, and hydration path expected by the site, it will not look like the intended application environment. That is the strongest and most consequential part of the research. (Buchodi’s Threat Intel)

The article goes one step further and claims the resulting fingerprint material is serialized, XOR-transformed, and ultimately becomes an OpenAI-Sentinel-Turnstile-Token header on conversation requests. It also says other challenge layers exist beyond the analyzed Turnstile program, including a signal orchestrator watching interaction events and a proof-of-work component. Those points are important to mention because they reinforce a broader architectural view: the challenge flow the author observed is presented not as one isolated CAPTCHA widget, but as part of a larger abuse-resistance stack. Again, those claims come from the reverse-engineering write-up, not from official OpenAI or Cloudflare deployment notes. (Buchodi’s Threat Intel)

This distinction is essential. The Buchodi article is technically detailed and specific enough that it deserves serious attention. But it is still a public reverse-engineering artifact, not a vendor white paper. The right reading is neither “ignore it” nor “treat every line as official ground truth.” The right reading is: here is a detailed, technically plausible account of a challenge flow observed in real traffic, and its most credible contribution is that it surfaced application-state checks as a likely part of the anti-bot model. (Buchodi’s Threat Intel)

Why React state would matter to an anti-bot system

To understand why this matters, it helps to think like a defender protecting a high-abuse application. Generic browser fingerprinting asks whether the client looks enough like a real browser to deserve service. Application-aware detection asks something stricter: did this client really load, initialize, and reach the operational state of the application it is pretending to use. The second question is harder for attackers and more useful to defenders. (Cloudflare Docs)

That distinction is not academic. A large class of abusive clients does not behave like real users. Some scrape HTML and replay requests. Some automate only the network layer and mimic headers or cookie flows. Some drive browsers partially but short-circuit expensive runtime paths. Some stub out Web APIs just enough to satisfy naive fingerprint checks. If the defense only verifies common browser properties, a sufficiently careful automation stack may pass. If the defense also checks whether the exact app runtime reached the point where router context, loader data, and hydration state exist, the attacker’s cost goes up sharply. Now the bot must do more than imitate Chrome. It must imitate this app. (Buchodi’s Threat Intel)

This is why the React-state angle is technically meaningful even if one dislikes the rhetoric around it. Anti-bot systems have always moved toward whatever signals are hardest to fake cheaply. IP reputation became insufficient. User-agent checks were never enough. Browser fingerprints became common. Behavior signals followed. For valuable targets, the next logical layer is application runtime evidence. The Buchodi analysis is compelling because it suggests that in the ChatGPT case, the challenge system may be using exactly that kind of evidence. (Cloudflare Docs)

There is also a product reason this makes sense. Cloudflare’s own Turnstile model gives site owners control over when verification occurs in an SPA. If an application only wants to generate a high-confidence token after the route is initialized and the real interaction path is about to start, explicit rendering and deferred execution support that pattern directly. The point is not that Cloudflare publicly says, “we verify React hydration in ChatGPT.” It is that the documented platform capabilities and the observed reverse-engineered behavior fit together cleanly. (Cloudflare Docs)

That also explains why the headline version of the story hit a nerve. “Reads React state” sounds more intimate than “checks browser quirks,” even though in practice a runtime check for application boot status can be much closer to infrastructure hardening than to content surveillance. Security engineers should resist both extremes here. It is not nothing. It is also not automatically a revelation that a provider is reading user-authored message text. (Buchodi’s Threat Intel)

The signal layers are easier to reason about when written this way:

| Signal layer | Example from reviewed materials | What it helps prove | What it does not prove |
|---|---|---|---|
| Browser layer | WebGL, screen, hardware, DOM and storage checks in Buchodi’s sample; browser and client-side challenges in Cloudflare docs. (Buchodi’s Threat Intel) | The client resembles a real browser environment rather than a trivial script. | That the user is legitimate, or that the client truly loaded the intended app. |
| Network and edge layer | Buchodi’s reported Cloudflare-related fields; Cloudflare privacy addendum lists IP, TLS fingerprint, user-agent, sitekey, and origin as signals. (Buchodi’s Threat Intel) | The request context looks consistent with expected network and anti-bot telemetry. | That the client reached the correct in-app state or that user content was inspected. |
| Application layer | Buchodi’s reported __reactRouterContext, loaderData, and clientBootstrap; React Router and hydration docs explain the runtime meaning of route data and hydration. (Buchodi’s Threat Intel) | The actual application runtime appears to have booted and hydrated. | That Cloudflare read prompt bodies, or that long-term profiling occurred. |

What those React markers probably mean

The most important technical clarification in this entire debate is that not all “React state” is equal. The phrase can sound like a single bucket containing everything the app knows, from route metadata to internal component flags to potentially user-authored content. The evidence published so far does not justify that broad reading. The fields cited in the reverse-engineering write-up are much more consistent with boot-state and route-state markers than with general content inspection. (Buchodi’s Threat Intel)

React Router’s documentation describes loaderData as data returned by route loaders and made available to the route component. That means loaderData is not some mysterious hidden vault. It is a normal mechanism for getting route-associated data into a rendered page. In a server-rendered or hybrid app, checking whether route loader data exists can tell you something simple and valuable: the route pipeline ran. The page did not merely dump static HTML and stop. (React Router)

React’s hydrateRoot documentation fills in the next piece. Hydration is the step where React attaches to HTML that was already rendered on the server and takes over managing the DOM. If a page is server-rendered and then hydrated client-side, checking for evidence that hydration completed is an effective way to distinguish “raw HTML arrived” from “the app is actually live.” A client that fetched markup but never executed the real JavaScript bundle will not reach the same operational state. (React)

That is why the Buchodi interpretation is technically plausible. A reported check for router context plus route loader data plus a ChatGPT-specific bootstrap marker points toward runtime completeness. It says, in effect: do not trust a client simply because it renders pixels or exposes certain browser APIs; trust it more if the expected app internals are present at the time the protected action happens. In high-abuse systems, that is a very rational escalation of anti-automation logic. (Buchodi’s Threat Intel)
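A runtime-completeness check of this kind can be sketched in a few lines. The example below is a defensive illustration for inspecting your own application, not a reconstruction of the analyzed challenge program. The helper names are hypothetical, and the marker paths in `EXAMPLE_MARKERS` are assumptions: the write-up names the three fields, but where exactly each one lives on the page is not something the reviewed material establishes.

```javascript
// Look up a dotted path such as "a.b.c" on an object without throwing.
function getPath(root, path) {
  return path.split(".").reduce(
    (node, key) => (node !== null && node !== undefined ? node[key] : undefined),
    root
  );
}

// Report which expected boot markers are present on a global-like object.
function checkBootMarkers(globalLike, markerPaths) {
  const missing = markerPaths.filter(
    (path) => getPath(globalLike, path) === undefined
  );
  return { booted: missing.length === 0, missing };
}

// Hypothetical marker locations, chosen for illustration only.
const EXAMPLE_MARKERS = [
  "__reactRouterContext",
  "__reactRouterContext.state.loaderData",
  "clientBootstrap",
];
```

In a browser console on an application you own, `checkBootMarkers(window, EXAMPLE_MARKERS)` with your own marker list shows whether the expected internals exist at a given moment. Note that a presence check says nothing about reading the contents of those objects.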

There is another subtle point here. The write-up also reports that one storage-related step wrote a fingerprint value into localStorage under a specific key. That is worth mentioning, but it should not be generalized too aggressively. Cloudflare’s separate Ephemeral IDs documentation says Turnstile can provide short-lived device-fingerprinting capabilities without relying on cookies or client-side storage and that those IDs are scoped to an account and expire within a few days. So the existence of a localStorage write in one analyzed sample is not proof that Turnstile universally depends on localStorage in every deployment or mode. It is better read as one observed detail in one reverse-engineered workflow. (Buchodi’s Threat Intel)

This distinction matters because technical debates often go bad when one concrete trace gets inflated into a universal architectural claim. The right conclusion is narrower and stronger: the evidence supports the idea that this ChatGPT challenge flow, as analyzed by Buchodi, used application runtime markers in addition to ordinary client-side signals. That is already a significant result. It does not need exaggeration to be interesting. (Buchodi’s Threat Intel)

What the evidence does not prove

This is the section most missing from the public debate. It is where precision matters more than attitude.

First, the reviewed materials do not establish that Cloudflare reads the content of ChatGPT prompts or conversation text as part of Turnstile. The Buchodi article points to router context, loader data, and a bootstrap marker. React Router’s documentation tells us those are consistent with route and hydration state. None of that is the same as a demonstrated read of prompt bodies. If someone wants to make that stronger claim, they need evidence of actual content fields or payload inspection logic, not just a broad phrase like “React state.” (Buchodi’s Threat Intel)

Second, the reviewed materials do not establish that Cloudflare or OpenAI are using these signals for advertising profiles or unrelated personal targeting. Cloudflare’s public Turnstile privacy addendum explicitly says the signals are collected solely to detect and block bots, not to identify, profile, or target individuals. That is a policy statement, not a cryptographic guarantee, but it is still the correct public reference point. Any claim that the same mechanism is being used for adtech-style profiling would need separate evidence. (Cloudflare)

Third, the headline phrasing “won’t let you type until” is stronger than the evidence reviewed here directly proves. The official docs and the reverse-engineering write-up support a story about challenge timing, token generation, app runtime checks, and request gating. They do not, by themselves, give a complete official account of exact UI lock timing for every typing interaction. It is safer to say the analyzed flow appears to gate protected actions and token issuance on the presence of application-state signals than to say the keyboard was definitively frozen by a single specific rule. (Cloudflare Docs)

Fourth, this is not, on the evidence reviewed, a vulnerability record. There is no public NVD or CISA-style treatment here showing a discrete exploit condition, impact statement, and remediation advisory for “ChatGPT reads React state through Turnstile.” What exists is a reverse-engineered description of an anti-bot design. That can still be valuable to defenders and researchers. It is just a different category of security finding. (Buchodi’s Threat Intel)

Finally, the reverse-engineering evidence does not cleanly separate which logic belongs to Cloudflare Challenge Platform, which belongs to OpenAI’s surrounding sentinel or orchestration layer, and which exists only in the way the two are composed for this product. The write-up itself describes Turnstile as one of three challenges in a broader system. So anyone discussing the result should avoid treating every observed component as if it were a generic Turnstile behavior present on every site that embeds Cloudflare’s widget. (Buchodi’s Threat Intel)

A more accurate way to phrase the controversy is this: a public reverse-engineering study argues that ChatGPT’s deployed abuse-defense flow appears to incorporate Cloudflare challenge logic, browser and network signals, and application boot-state checks from the React SPA. That is technically meaningful, defensible, and useful. It is also more precise than saying “Cloudflare reads your React state” as if that alone explained the entire design. (Buchodi’s Threat Intel)

How Turnstile fits into a single-page application

Once you step away from the headline rhetoric, the architecture becomes easier to understand. Cloudflare documents two rendering modes for Turnstile: implicit and explicit. Implicit rendering scans the page automatically and is a good fit for simpler static setups. Explicit rendering gives developers programmatic control and is explicitly recommended for dynamic websites and SPAs. That matters because a single-page application typically has lifecycle phases that matter for risk: initial HTML arrival, bundle execution, route resolution, hydration, interaction readiness, and final request submission. A flexible challenge system should be able to hook into those phases. Turnstile can. (Cloudflare Docs)

Cloudflare’s execution modes make this even more obvious. In default render mode, the challenge runs after widget render and can work in the background while the page loads. In execute mode, the challenge runs only after the app explicitly calls turnstile.execute(). Cloudflare recommends that pattern for multi-step forms, conditional verification, and situations where the verification should be deferred until the visitor actually attempts to submit data. That is exactly the kind of design surface where a product team might decide, “Only generate a high-confidence token once the app is genuinely ready and the user is at the protected step.” (Cloudflare Docs)

Cloudflare also documents appearance modes that let the widget stay invisible or become visible only when an interactive challenge is actually needed. That matters because many people still imagine anti-bot systems as always-on visible puzzles. Modern deployments are often quieter. The visible widget is only one possible interface. Much of the real logic can happen in the background, around execution timing, signal collection, and server validation. That helps explain why a user can experience a service as “just working” while the application is actually running a fairly sophisticated anti-abuse pipeline behind the scenes. (Cloudflare Docs)

There is another nuance worth keeping straight. Cloudflare says Turnstile can be embedded into any website without sending traffic through Cloudflare’s CDN. In other words, Turnstile use and full CDN routing are distinct product questions. That is helpful because discussions of ChatGPT and Cloudflare sometimes blur these together. In the ChatGPT context, OpenAI’s own support docs do refer to Cloudflare challenge loops, so Cloudflare challenge infrastructure is clearly present in practice. But as a general architectural matter, “a site uses Turnstile” and “all site traffic transits Cloudflare CDN” are not identical statements. (Cloudflare Docs)

Pre-clearance adds yet another layer. Cloudflare documents a mode where a Turnstile widget can issue a cf_clearance cookie for the same zone so that later WAF challenges at that zone can be bypassed according to the configured clearance level. The docs explicitly say JavaScript detections are stored in that clearance cookie. For large applications, that means Turnstile may do more than bless one form submission. It can become part of a broader challenge economy across the same protected surface. That is not necessary to explain the Buchodi write-up, but it shows how challenge systems in modern SPAs can be composed beyond a single button click. (Cloudflare Docs)

Put all of that together and the broad architecture stops looking exotic. A high-value SPA can render or defer Turnstile explicitly, gather browser and behavior signals, validate tokens server-side, and optionally coordinate with broader Cloudflare challenge state. If, inside that process, the app also uses route and hydration markers to avoid trusting half-booted or synthetic clients, that is entirely in line with the direction of modern anti-bot engineering. (Cloudflare Docs)

How to verify an implementation without guessing

If you build or assess a protected SPA, the most useful lesson from this entire debate is methodological. Do not guess from headlines. Verify the runtime.

Start in the browser. Open DevTools and watch for the Turnstile script load from https://challenges.cloudflare.com/turnstile/v0/api.js, either in default or explicit-render form. Then observe whether the widget or challenge logic initializes at page load, after route completion, or only at the moment the protected action is attempted. Cloudflare’s docs make those implementation patterns concrete: the timing can vary intentionally depending on whether the site uses implicit rendering, explicit rendering, or deferred execution. (Cloudflare Docs)
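The first step, finding the Turnstile loader and its render mode, can be scripted instead of eyeballed. The sketch below assumes the loader URL and the `render` and `onload` query parameters as Cloudflare documents them; the function name and the shape of the returned objects are hypothetical.

```javascript
const TURNSTILE_SCRIPT_PREFIX =
  "https://challenges.cloudflare.com/turnstile/v0/api.js";

// Given script URLs from a page, keep the Turnstile loader(s) and report
// whether each one requests explicit rendering via ?render=explicit.
function findTurnstileScripts(scriptUrls) {
  return scriptUrls
    .filter((src) => src.startsWith(TURNSTILE_SCRIPT_PREFIX))
    .map((src) => {
      const params = new URL(src).searchParams;
      return {
        src,
        renderMode: params.get("render") === "explicit" ? "explicit" : "implicit",
        onloadCallback: params.get("onload"), // null when no callback is named
      };
    });
}
```

In a DevTools console, `findTurnstileScripts(Array.from(document.scripts, (s) => s.src))` lists the loaders present on the current page. It only covers script tags in the DOM, so a loader injected later still needs to be caught in the network panel.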

Next, inspect the action boundary. If the app allows a user to press a protected button before any token is generated, what happens next? A sound implementation will either trigger turnstile.execute() at that point or already have a fresh token ready from a background challenge. More important, the server should reject the request if no valid token is presented or if the token has expired or already been used. Cloudflare’s docs are explicit that the widget alone is not protection and that the real decision belongs on the server side through Siteverify. (Cloudflare Docs)

Then test degraded runtime conditions in a controlled and authorized environment. Disable JavaScript. Block third-party cookies. Turn on aggressive privacy tooling. Use an extension profile that interferes with challenge scripts. OpenAI’s login documentation says those conditions can cause Cloudflare verification loops or break login flows, which makes them useful for understanding where your own app’s assumptions are brittle. A healthy test question is not “Can I bypass it?” but “What prerequisite does the protected flow actually depend on, and how clearly does the app fail when that prerequisite is missing?” (OpenAI Help Center)

If your application is a React or React Router SPA, add one more diagnostic lens: compare the moment the route becomes usable with the moment the challenge is allowed to run. Check whether route data is loaded, whether the app finished hydrating, and whether the protected request path is gated on those milestones. React’s hydrateRoot semantics and React Router’s loaderData model are exactly the kind of runtime markers that make this analysis concrete rather than mystical. The Buchodi write-up matters because it suggests those runtime boundaries are becoming first-class anti-bot signals. (React Router)
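One way to make that comparison concrete is to record when each milestone is reached and then assert their order. The sketch below is a minimal instrumentation helper for your own application. The milestone names and the `createMilestoneLog` API are hypothetical, and where you call `mark` (after a route loader resolves, after hydration, inside the Turnstile callback) is a design decision the helper does not make for you.

```javascript
// Record the first time each named milestone is reached.
function createMilestoneLog(now = () => performance.now()) {
  const marks = new Map();
  return {
    // Later calls with the same name are ignored so the first hit wins.
    mark(name) {
      if (!marks.has(name)) marks.set(name, now());
    },
    // True only if both milestones were recorded and `first` came no later
    // than `second`.
    inOrder(first, second) {
      return (
        marks.has(first) &&
        marks.has(second) &&
        marks.get(first) <= marks.get(second)
      );
    },
    entries() {
      return Array.from(marks.entries());
    },
  };
}
```

With marks such as "route-data-loaded", "hydrated", and "challenge-started" in place, `log.inOrder("hydrated", "challenge-started")` becomes a regression check that can be rerun after every front-end change.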

A practical SPA pattern looks like this:

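```html
<script
  src="https://challenges.cloudflare.com/turnstile/v0/api.js?render=explicit"
  defer
></script>

<div id="turnstile-slot"></div>

<script>
  let widgetId = null;
  let appReady = false;
  let turnstileToken = null;
  let pendingPayload = null; // action waiting for a fresh token

  // Call this once the SPA has finished booting (route data loaded, hydration done).
  function onAppBootComplete() {
    appReady = true;
    widgetId = turnstile.render("#turnstile-slot", {
      sitekey: "YOUR_SITE_KEY",
      appearance: "interaction-only",
      execution: "execute", // run the challenge only when turnstile.execute() is called
      callback: function (token) {
        turnstileToken = token;
        // Resume the action that triggered the challenge, if any.
        if (pendingPayload !== null) {
          const payload = pendingPayload;
          pendingPayload = null;
          sendProtectedAction(payload).catch(console.error);
        }
      },
      "expired-callback": function () {
        turnstileToken = null;
      },
      "error-callback": function (errorCode) {
        pendingPayload = null;
        console.error("Turnstile error:", errorCode);
      }
    });
  }

  async function sendProtectedAction(payload) {
    if (!appReady) {
      throw new Error("Application is not fully booted yet");
    }
    if (!turnstileToken) {
      // No token yet: remember the action and start the deferred challenge.
      pendingPayload = payload;
      turnstile.execute("#turnstile-slot");
      return;
    }

    // Tokens are single-use: consume this one and reset the widget so the
    // next action runs a fresh challenge.
    const token = turnstileToken;
    turnstileToken = null;
    turnstile.reset(widgetId);

    const res = await fetch("/api/protected-action", {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ payload, turnstileToken: token })
    });
    if (!res.ok) {
      throw new Error("Protected action rejected");
    }
  }
</script>
```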

This is not a ChatGPT code sample and it is not meant to reproduce OpenAI’s deployment. It is a defensive illustration of the exact engineering principle at stake: verification can be intentionally deferred until the application is genuinely ready and the protected action is actually attempted. That design is directly supported by Cloudflare’s explicit rendering and execution model. (Cloudflare Docs)

In authorized security work, the hard part is often not triggering the control once. The hard part is preserving evidence, comparing behavior across browser profiles and networks, and rerunning the same validation after a UI change, a CSP change, or a backend rollout. In that sort of workflow, a structured testing system can be useful not because it magically “beats” Turnstile, but because it keeps the verification trail coherent. A tool such as Penligent is most naturally relevant here when teams need to document authorized runtime tests, preserve artifacts, and retest the same action boundaries after changes. (Penligent)

Server-side validation is the real control plane

Many anti-bot implementations fail in the same way many authentication flows fail: the UI is treated as the control instead of the server decision. Cloudflare’s documentation could not be clearer that Siteverify is mandatory. It says tokens can be forged, expire after five minutes, are single-use, and that client-side verification alone leaves major security vulnerabilities. If there is one sentence developers should tape to their monitor, that is the one. (Cloudflare Docs)

This matters in the ChatGPT and Cloudflare Turnstile debate because it resets the mental model. Suppose a client-side challenge uses browser signals, behavior signals, and even application boot-state signals. That still does not mean the browser alone determines trust. The browser generates evidence. The server evaluates that evidence by validating the token. Protected functionality should only proceed if the backend accepts the token for that request within its time window. Otherwise, the whole exercise becomes theater. (Cloudflare Docs)

A minimal server-side validation pattern in Node looks like this:

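```javascript
import express from "express";

const app = express();
app.use(express.json());

app.post("/api/protected-action", async (req, res) => {
  // The client sends the Turnstile token alongside the protected request.
  const token = req.body?.turnstileToken;
  if (!token) {
    return res.status(400).json({ error: "Missing Turnstile token" });
  }

  // Siteverify takes the server-side secret and the client-supplied token.
  const params = new URLSearchParams();
  params.append("secret", process.env.TURNSTILE_SECRET_KEY);
  params.append("response", token);

  let verify;
  try {
    const verifyRes = await fetch(
      "https://challenges.cloudflare.com/turnstile/v0/siteverify",
      {
        method: "POST",
        headers: {
          "Content-Type": "application/x-www-form-urlencoded"
        },
        body: params
      }
    );
    verify = await verifyRes.json();
  } catch (err) {
    // Fail closed: if Siteverify cannot be reached, do not run the protected action.
    return res.status(502).json({ error: "Turnstile validation unavailable" });
  }

  if (!verify.success) {
    return res.status(403).json({
      error: "Turnstile validation failed",
      details: verify["error-codes"] || []
    });
  }

  // Only reached when the backend has accepted the token for this request.
  return res.json({ ok: true });
});

app.listen(3000);
```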

That pattern is intentionally simple. A production implementation should also log validation failures carefully, bind the protected action to the validated request path, and avoid accidentally reusing stale tokens. The guiding principle does not change: if the server does not validate the token, the challenge is cosmetic. (Cloudflare Docs)
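The production concerns above can be sketched as a small decision function that runs after Siteverify responds. This is a minimal illustration, not Cloudflare's or OpenAI's logic: the function name `evaluateSiteverify` and the `expected` object (`hostname`, `action`, `maxAgeMs`) are hypothetical, while the response fields it reads (`success`, `hostname`, `action`, `challenge_ts`, `error-codes`) are the ones Cloudflare documents for the Siteverify response.

```javascript
// Hypothetical helper: decides whether a parsed Siteverify response is
// acceptable for one specific protected action on one specific hostname.
function evaluateSiteverify(result, expected, now = Date.now()) {
  if (!result || result.success !== true) {
    const codes = (result && result["error-codes"]) || [];
    return { ok: false, reason: "siteverify-rejected", codes };
  }
  // Bind the token to the site it was supposed to be issued for.
  if (expected.hostname && result.hostname !== expected.hostname) {
    return { ok: false, reason: "hostname-mismatch", codes: [] };
  }
  // Bind the token to the action the widget was configured with.
  if (expected.action && result.action !== expected.action) {
    return { ok: false, reason: "action-mismatch", codes: [] };
  }
  // Reject tokens whose challenge timestamp is missing or too old.
  const issuedAt = Date.parse(result.challenge_ts);
  const maxAgeMs = expected.maxAgeMs ?? 5 * 60 * 1000;
  if (Number.isNaN(issuedAt) || now - issuedAt > maxAgeMs) {
    return { ok: false, reason: "stale-token", codes: [] };
  }
  return { ok: true, reason: "accepted", codes: [] };
}
```

Returning a `reason` string instead of a bare boolean is a deliberate choice in this sketch: it gives the logging path something specific to record without echoing the token itself.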

One practical implication is that a security reviewer should always ask a boring but decisive question: if I send the same protected request directly to the backend without a valid token, what happens? If the answer is “the backend still executes it,” then the integration failed regardless of how sophisticated the front-end widget appears. If the answer is “the backend rejects it, and the app requests a fresh token,” then the control is operating where it should. (Cloudflare Docs)
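In an authorized review, that question can be turned into a repeatable probe. The sketch below is illustrative only: `classifyTokenlessProbe` and `probeWithoutToken` are hypothetical names, the status-code mapping is an assumption about how a typical backend signals rejection, and the URL and body are whatever the in-scope application actually uses.

```javascript
// Hypothetical classifier: interprets the HTTP status returned when the
// protected request is sent WITHOUT a Turnstile token.
function classifyTokenlessProbe(status) {
  if (status === 400 || status === 401 || status === 403) return "enforced";
  if (status >= 200 && status < 300) return "not-enforced";
  // Redirects, rate limits, and server errors need manual review.
  return "inconclusive";
}

// Sends the tokenless request. `fetchImpl` is injectable so the probe can be
// exercised against a stub before it is pointed at a real, in-scope target.
async function probeWithoutToken(url, body, fetchImpl = fetch) {
  const res = await fetchImpl(url, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(body),
  });
  return { status: res.status, verdict: classifyTokenlessProbe(res.status) };
}
```

A "not-enforced" verdict is only a starting point: the reviewer still needs to confirm that the protected action really executed, since some backends return 200 with an error payload.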

CSP, pre-clearance, and testing pitfalls

A surprising amount of operational pain around Turnstile comes from integration mistakes rather than from the challenge logic itself. Content Security Policy is one example. Cloudflare’s CSP guidance says script-src and frame-src must allow https://challenges.cloudflare.com, and if pre-clearance mode is used, connect-src must include 'self' because Turnstile will fetch a special endpoint under /cdn-cgi/ on the protected domain. Teams that lock down CSP aggressively but forget those paths can create verification failures that look mysterious until the network panel tells the real story. (Cloudflare Docs)
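A quick way to catch that class of mistake before it reaches production is to lint the policy string itself. The following is a rough sketch under stated assumptions: `parseCsp` and `missingTurnstileCsp` are hypothetical helpers, matching is exact-string only (no wildcard, nonce, or `child-src` fallback handling), and a directive that is absent falls back to `default-src`, as CSP does for these directives.

```javascript
const TURNSTILE_ORIGIN = "https://challenges.cloudflare.com";

// Parses a Content-Security-Policy header value into { directive: [sources] }.
function parseCsp(header) {
  const policy = {};
  for (const part of header.split(";")) {
    const [name, ...sources] = part.trim().split(/\s+/);
    if (name) policy[name.toLowerCase()] = sources;
  }
  return policy;
}

// Returns the directive/source pairs that Turnstile needs but the policy lacks.
function missingTurnstileCsp(header, { preClearance = false } = {}) {
  const policy = parseCsp(header);
  const effective = (directive) => policy[directive] ?? policy["default-src"];
  const missing = [];

  for (const directive of ["script-src", "frame-src"]) {
    const sources = effective(directive);
    // No directive and no default-src means the policy does not restrict it.
    if (sources && !sources.includes(TURNSTILE_ORIGIN)) {
      missing.push(`${directive} ${TURNSTILE_ORIGIN}`);
    }
  }

  if (preClearance) {
    const sources = effective("connect-src");
    if (sources && !sources.includes("'self'")) {
      missing.push("connect-src 'self'");
    }
  }
  return missing;
}
```

For example, a policy of `default-src 'self'; script-src 'self' https://challenges.cloudflare.com; frame-src 'self'` would be reported as missing only the `frame-src` entry.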

Pre-clearance itself is useful but easy to misunderstand. Cloudflare says Turnstile widgets can issue a clearance cookie for the same zone, and that successful challenge completion can allow visitors to bypass additional challenges of the configured clearance level and below. That means a deployment may not behave as though every single action is challenged independently. Some risk state can persist through the clearance model. If a team is testing challenge behavior and does not account for that cookie, the results can look inconsistent when they are actually stateful. (Cloudflare Docs)
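One low-effort way to keep that state visible during testing is to label each run by whether a clearance cookie was already present. The sketch below assumes the cookie is named `cf_clearance`, as in Cloudflare's clearance documentation; the helper names and the "cold" versus "pre-cleared" labels are hypothetical.

```javascript
// Returns true when a Cookie request header carries a cf_clearance cookie.
function hasClearanceCookie(cookieHeader) {
  return (cookieHeader || "")
    .split(";")
    .map((pair) => pair.trim().split("=")[0])
    .includes("cf_clearance");
}

// Labels a test run so results from stateful and stateless sessions are
// never compared as if they were the same condition.
function labelRun(cookieHeader) {
  return hasClearanceCookie(cookieHeader) ? "pre-cleared" : "cold";
}
```

Recording the label next to each captured request makes it easier to explain why two otherwise identical runs saw different challenge behavior.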

Testing is another common failure point. Cloudflare explicitly warns that automated testing suites such as Selenium, Cypress, and Playwright are detected as bots by Turnstile. That is not a side effect. It is part of the product’s job. So if a team tries to run full end-to-end tests against a production Turnstile flow using ordinary automation and real site keys, it can end up debugging the anti-bot control instead of the application. Cloudflare’s answer is to provide dummy sitekeys and secret keys with deterministic outcomes for development and testing. (Cloudflare Docs)

A clean environment split looks like this:

```
# .env.development
TURNSTILE_SITEKEY=1x00000000000000000000AA
TURNSTILE_SECRET_KEY=1x0000000000000000000000000000000AA

# .env.test
TURNSTILE_SITEKEY=2x00000000000000000000AB
TURNSTILE_SECRET_KEY=2x0000000000000000000000000000000AA

# .env.production
TURNSTILE_SITEKEY=your-real-sitekey
TURNSTILE_SECRET_KEY=your-real-secret-key
```

Cloudflare documents the behavior of these test keys clearly: some always pass, some always fail, and they generate dummy tokens that production secrets will reject. That separation is not just convenient. It is the only sane way to keep automated tests reproducible without teaching your CI system to fight the same anti-bot defenses your production site uses against hostile automation. (Cloudflare Docs)
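That separation is easier to keep if the application refuses to start with the wrong kind of key. The guard below is a sketch, not a Cloudflare API: `checkTurnstileKeys` is a hypothetical function, and the `DUMMY_KEY` pattern is an assumption inferred from the shape of the dummy keys shown above (a leading digit, `x`, a run of zeros, two letters).

```javascript
// Assumed shape of Cloudflare's dummy keys, e.g. 1x00000000000000000000AA.
const DUMMY_KEY = /^[123]x0{10,}[A-Z]{2}$/;

// Throws if production is configured with dummy keys, or if any other
// environment is configured with anything except dummy keys.
function checkTurnstileKeys(envName, env) {
  const keys = [env.TURNSTILE_SITEKEY, env.TURNSTILE_SECRET_KEY];
  const isDummy = (k) => typeof k === "string" && DUMMY_KEY.test(k);

  if (envName === "production") {
    if (keys.some(isDummy)) {
      throw new Error("Dummy Turnstile key configured in production");
    }
    return true;
  }
  if (!keys.every(isDummy)) {
    throw new Error(`Non-dummy Turnstile key configured in ${envName}`);
  }
  return true;
}
```

Calling `checkTurnstileKeys(process.env.NODE_ENV, process.env)` once at startup would be one way to wire it in, assuming `NODE_ENV` is how the deployment names its environments.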

The reason this section matters for the ChatGPT story is simple: a lot of public analysis around anti-bot systems is accidentally distorted by broken test environments. If the reviewer does not know whether JavaScript was blocked, whether a stale clearance cookie existed, whether a challenge was deferred until submit, or whether the test harness itself was being flagged as automation, conclusions become unreliable fast. High-quality analysis starts with instrumenting the app as the docs say it works. (OpenAI Help Center)

Where real implementation risk shows up: plugin wrappers and key exposure

This is where CVEs become genuinely useful in the discussion. The March 2026 React-state controversy is not best understood as a CVE story. But Turnstile deployments do have real vulnerability history, and it teaches an important lesson: the riskiest layer is often not the challenge concept itself. It is the wrapper code, settings surface, or secret handling around it. (NVD)

Take CVE-2023-5135. NVD describes a stored cross-site scripting issue in the Simple Cloudflare Turnstile WordPress plugin, affecting versions up to 1.23.1 because of insufficient sanitization and escaping in a shortcode path. That matters because it reminds defenders not to infer too much from a trusted brand name in a stack. “We use Cloudflare Turnstile” does not mean the surrounding plugin or integration is safe. A site can wrap a legitimate protection service in vulnerable presentation logic and hand attackers a very old-fashioned web bug. (NVD)

Now look at CVE-2025-10732. NVD says the SureForms WordPress plugin exposed sensitive information, including Cloudflare Turnstile keys and security-related form settings, through improper access control on a REST API endpoint. This is exactly the kind of operational mistake that turns a decent challenge deployment into an avoidable security problem. Turnstile’s public sitekey is designed to be exposed in the browser. The secret is not. Once teams start leaking security settings and secret material through configuration APIs, the problem is not “anti-bot systems are creepy.” The problem is ordinary authorization failure. (NVD)
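The sitekey-versus-secret distinction can be enforced mechanically with an allowlist on anything a settings endpoint returns to unauthenticated callers. This is a generic sketch and not how any particular plugin works or was patched: `toPublicSettings` and the field names `turnstileSitekey`, `turnstileSecretKey`, and `widgetTheme` are hypothetical.

```javascript
// Only fields named here may ever leave the server for unauthenticated callers.
const PUBLIC_FIELDS = ["turnstileSitekey", "widgetTheme"];

// Copies allowlisted fields and silently drops everything else, so a newly
// added secret setting is private by default rather than public by accident.
function toPublicSettings(settings) {
  const out = {};
  for (const field of PUBLIC_FIELDS) {
    if (Object.prototype.hasOwnProperty.call(settings, field)) {
      out[field] = settings[field];
    }
  }
  return out;
}
```

An allowlist is used here instead of a denylist because the failure direction is safer: forgetting to list a field hides it, while forgetting to deny a field exposes it.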

CVE-2026-2589 makes the same point from a different angle. NVD says the Greenshift WordPress plugin exposed configured Cloudflare Turnstile API keys through a publicly accessible settings backup file. Here the failure mode is not a REST endpoint with missing authorization but a backup artifact left where it should never have been reachable. The pattern is familiar across security systems: once configuration exports, backup files, or migration artifacts become public, the supposed strength of the upstream service matters much less than the weakness of local operational hygiene. (NVD)

These examples are directly relevant to readers of this topic because they prevent a common analytical mistake. A lot of attention goes to the cleverness of anti-bot telemetry. Less attention goes to the mundane places where defenders actually lose. They lose by trusting client-side validation. They lose by exposing secret keys. They lose by using poorly maintained wrappers. They lose by misconfiguring CSP or by testing the wrong thing in automation. That is where engineering reality lives. (Cloudflare Docs)

A concise risk map looks like this:

| Failure mode | Real consequence | Supporting example |
|---|---|---|
| Client-side verification treated as sufficient | Attackers can submit protected requests without a validated token if the backend does not enforce Siteverify. (Cloudflare Docs) | Cloudflare server-side validation guidance |
| Secret key exposed through settings API | An attacker gains access to security settings and challenge secrets that should remain server-side. (NVD) | CVE-2025-10732 |
| Secret key exposed through public backup artifact | Unauthenticated users can retrieve sensitive configuration data from a publicly reachable file. (NVD) | CVE-2026-2589 |
| Wrapper plugin suffers XSS | The protection layer becomes a delivery point for attacker-controlled script. (NVD) | CVE-2023-5135 |
| CSP omits required Turnstile origins or connect path | Challenge flows fail unpredictably and teams misread the breakage as app logic problems. (Cloudflare Docs) | Cloudflare CSP guidance |
| Automated tests use real keys in bot-detecting environments | CI becomes flaky or misleading because the anti-bot layer is detecting the test harness. (Cloudflare Docs) | Cloudflare testing docs |

If there is a broad lesson here, it is that challenge systems deserve the same maturity we already demand from authentication and payment systems. Treat the browser widget as the edge of the system, not the whole system. Treat the secret as a secret. Treat wrappers and settings surfaces as attack surfaces. And treat challenge timing, clearance state, and app lifecycle as explicit parts of the design rather than accidental side effects. (Cloudflare Docs)

What this means for attackers, researchers, and defenders

For attackers, the message is simple: high-value consumer web apps are moving toward deeper attestation of client behavior and runtime state. It is no longer enough to replay a request with good headers or imitate a few browser APIs. If the service expects evidence that the app really loaded, hydrated, and reached a legitimate interaction path, synthetic clients get more expensive to build and easier to break. That does not make abuse impossible. It does change the economics. (Buchodi’s Threat Intel)

For researchers and bug bounty hunters, the lesson is about precision. “The site checks React state” is not, by itself, a vulnerability. It becomes a vulnerability only if you can turn that discovery into a discrete security impact: bypass, disclosure, integrity failure, broken authorization, or some other concrete violation of a security boundary. Without that second step, what you have is architectural intelligence, which can still be valuable, but belongs in a different category than an exploitable product bug. (Buchodi’s Threat Intel)

For defenders, the lesson is broader. Browser signals, network signals, and app-runtime signals should not be treated as mutually exclusive options. They are layers. The ChatGPT and Cloudflare Turnstile discussion shows why that layering is attractive. Browser fingerprints alone are noisy and easier to fake. Network context alone is weak against distributed abuse. App-state alone is brittle if tokens are not validated server-side. Put together, they create a more expensive problem for attackers. Done badly, they create fragility and implementation bugs. Done well, they raise the floor without forcing every user through a visible puzzle. (Cloudflare Docs)

This is also the point where agentic or structured testing workflows become relevant in a grounded way. When a team wants to compare challenge behavior across environments, preserve request and response artifacts, retest after a frontend bundle change, and fold the results into a real verification trail, the operational problem looks less like “solve a CAPTCHA” and more like “manage an evidence-backed test loop.” That is the kind of narrow, practical context in which a platform such as Penligent can make sense inside an authorized security program. (Penligent)

Privacy, policy, and the difference between telemetry and content inspection

The hardest part of this discussion is that both sides can sound plausible if you flatten the distinction between telemetry and content. The Buchodi write-up makes an important observation about the challenge payload: the “encryption” described there is presented as obfuscation rather than true secrecy, because the decryption material is available within the observed exchange. That is a fair technical point. It means the mechanism is designed to frustrate easy analysis, not to create end-to-end opacity against all parties in the system. (Buchodi’s Threat Intel)

But that still does not answer the content question by itself. A system can gather meaningful runtime telemetry without ingesting the user’s typed prompt body into the challenge layer. A route context check, a hydration marker, or a deferred execute path can all say something useful about whether the browser is truly running the intended application. None of those necessarily imply that prompt text was inspected as part of Turnstile validation. That is why it is so important not to let the phrase “reads React state” do more analytical work than the evidence allows. (Buchodi’s Threat Intel)

Cloudflare’s public privacy language is relevant here because it defines the intended use of collected signals narrowly around bot detection and blocking. One may still want deeper transparency from vendors about what exact signal classes are used in large consumer services. That is a legitimate policy position. But the reviewed public materials do not support jumping from “client-side and runtime signals exist” to “prompt content is being profiled.” Those are different propositions. (Cloudflare)

This is also where the Ephemeral IDs documentation provides a useful caution against simplistic conclusions. Cloudflare says short-lived device-fingerprinting capabilities can exist without cookies or local storage. The Buchodi sample reported a localStorage write. Those two facts are not contradictory. They just show that one observed workflow detail should not be mistaken for a universal rule across all Turnstile features and all deployments. Security analysis gets stronger when it narrows claims instead of stretching them. (Cloudflare Docs)

The bottom line

The strongest takeaway from the ChatGPT and Cloudflare Turnstile debate is not that someone discovered a spooky new CAPTCHA. It is that modern abuse defense for high-value web applications increasingly lives at the boundary between browser identity and application runtime. OpenAI publicly confirms ChatGPT uses CAPTCHAs. Cloudflare publicly confirms Turnstile runs JavaScript challenges, gathers client-side signals, supports SPA-aware timing, and requires server-side validation. Buchodi’s reverse engineering adds a credible, technically important layer to that picture by arguing that the analyzed ChatGPT deployment appears to check application-state markers associated with a real React boot path. (OpenAI Help Center)

That does not prove Cloudflare reads prompt bodies. It does not prove a new vulnerability. It does not prove some universal law about every Turnstile integration. What it does show is more interesting than the overstatements: anti-bot logic is moving deeper into application execution, and defenders should assume that runtime completeness is becoming a first-class signal. If you build, test, or assess SPAs, that is the part worth carrying forward. (Buchodi’s Threat Intel)

The practical lessons are straightforward. Time challenge execution deliberately. Validate every token on the server. Treat wrappers, settings APIs, and backup artifacts as attack surface. Use dummy keys for automation. Inspect the app lifecycle, not just the widget. And when you discuss findings publicly, be precise enough to separate app-boot telemetry from content inspection, architecture observations from vulnerabilities, and policy questions from packet-level facts. (Cloudflare Docs)

References

OpenAI, CAPTCHAs in ChatGPT. (OpenAI Help Center)

OpenAI, Why can’t I log in to ChatGPT, including Cloudflare verification loop guidance. (OpenAI Help Center)

Cloudflare, Turnstile overview, including Challenge Platform, proof-of-work, proof-of-space, API probing, and behavior-related checks. (Cloudflare Docs)

Cloudflare, Widget configurations, including explicit rendering for SPAs, appearance modes, callbacks, and execution timing. (Cloudflare Docs)

Cloudflare, Validate the token, including mandatory Siteverify, token expiry, and single-use semantics. (Cloudflare Docs)

Cloudflare, Turnstile privacy addendum, including the stated signal classes and bot-detection purpose. (Cloudflare)

Cloudflare, Clearance and Content Security Policy guidance for pre-clearance, cf_clearance, and required CSP directives. (Cloudflare Docs)

Cloudflare, Test your Turnstile implementation, including dummy sitekeys and the warning that Selenium, Cypress, and Playwright are detected as bots. (Cloudflare Docs)

React Router, Data Loading, for the meaning of loaderData. (React Router)

React, hydrateRoot, for the meaning of hydration in a server-rendered React app. (React)

Buchodi, ChatGPT Won’t Let You Type Until Cloudflare Reads Your React State. I Decrypted the Program That Does It. (Buchodi’s Threat Intel)

NVD, CVE-2023-5135, CVE-2025-10732, and CVE-2026-2589, for real-world risks in Turnstile wrappers and key exposure. (NVD)

Penligent, Homepage, for an example of an authorized, evidence-oriented AI testing workflow context. (Penligent)

Penligent, ChatGPT and the Security Crossroads, for broader ChatGPT security context. (Penligent)

Penligent, Understanding the OpenAI ChatGPT Atlas Browser Jailbreak, for adjacent browser and ChatGPT security reading. (Penligent)