The market has finally stopped asking the easy question. A year ago, the conversation was mostly about whether companies should use AI in security at all. In 2026, the real question is harsher and much more operational: if your company is building, buying, or deploying AI, what exactly proves that the system is governable, defensible, auditable, and safe enough to trust with real business data and real-world actions? That is why terms like AI SOC, SOC 2, ISO 27001, and ISO 42001 keep colliding in procurement calls, board updates, vendor questionnaires, and engineering roadmaps. They are related, but they are not interchangeable. (UnderDefense)

The confusion is understandable. AI SOC sounds like a compliance term, even though it is really an operating model. SOC 2 sounds like a security program, even though it is an independent report on controls. ISO 27001 sounds like a checklist, even though it is an information security management system. ISO 42001 sounds optional, but for AI-native companies it increasingly looks like the missing governance layer that traditional ISMS programs never fully covered. NIST’s AI RMF adds a voluntary but practical risk language for trustworthy AI, while the EU AI Act adds a legal and risk-based frame that makes governance no longer a purely internal choice. (NIST)

What makes the issue more urgent is that the market is already moving. OpenAI publicly states that it maintains SOC 2 Type 2, ISO 27001, and ISO 42001 coverage for relevant business and AI offerings. AWS positions Bedrock around encryption, logging, governance support, and compliance scope, and Bedrock AgentCore explicitly states alignment with ISO and SOC programs pending third-party audit cycles. In other words, major AI providers are already treating security operations, evidence, and formal assurance as part of the product surface, not as back-office paperwork. (OpenAI)

This article makes a simple argument. Serious AI teams need a stack, not a slogan. They need an AI SOC if they want continuous investigation and response. They need SOC 2 if they sell into buyers who want third-party assurance. They need ISO 27001 if they want a durable, auditable information security management system. And they increasingly need ISO 42001, plus AI-specific risk guidance from NIST and OWASP, if they want to govern model behavior, agentic actions, data use, and lifecycle risk without pretending that classic security controls are enough on their own. (NIST)

The first thing to get right, these terms answer different questions

Most teams get into trouble because they ask one artifact to do the job of another. They want an AI SOC to prove governance maturity. They want ISO 27001 to prove operational detection quality. They want a SOC 2 report to answer whether an agent can safely call tools, handle secrets, or resist prompt injection. None of those expectations are completely fair. Each layer answers a different question, and the fastest way to build a weak program is to ignore that separation. (ISO)

A practical way to think about it is this. AI SOC asks whether the organization can see and respond to malicious or risky activity fast enough. SOC 2 asks whether an auditor can examine your controls and describe them credibly for customers and stakeholders. ISO 27001 asks whether your information security program exists as a managed system with risk treatment, ownership, review, and continual improvement. ISO 42001 asks whether your AI activities are governed as an AI management system with accountability, transparency, and lifecycle discipline. NIST AI RMF and OWASP guidance help you decide what good looks like when the problem is specifically AI, not just generic IT. (NIST)

That distinction matters because AI changes failure modes. A traditional SaaS app can fail through broken authentication, weak access control, data leakage, or logging gaps. An AI system can fail in all of those ways and then add prompt injection, unsafe tool use, model misuse, over-broad delegation, weak action approval, memory poisoning, and unsafe agent autonomy on top. A SOC 2 report can still be valuable. An ISO 27001 certificate can still be valuable. But neither one, by itself, tells you whether an agent can be manipulated into doing something dangerous in production. (OWASP Gen AI Security Project)

The mature posture is layered. Use formal assurance to demonstrate control design and governance. Use AI security guidance to shape controls that match AI-specific risks. Use AI SOC capability to continuously detect, investigate, and contain operational issues. Then use offensive validation to check whether all of that works under adversarial pressure. That is the architecture buyers increasingly expect, even when they do not yet have the vocabulary to describe it cleanly. (UnderDefense)

What an AI SOC actually is, and what it is not

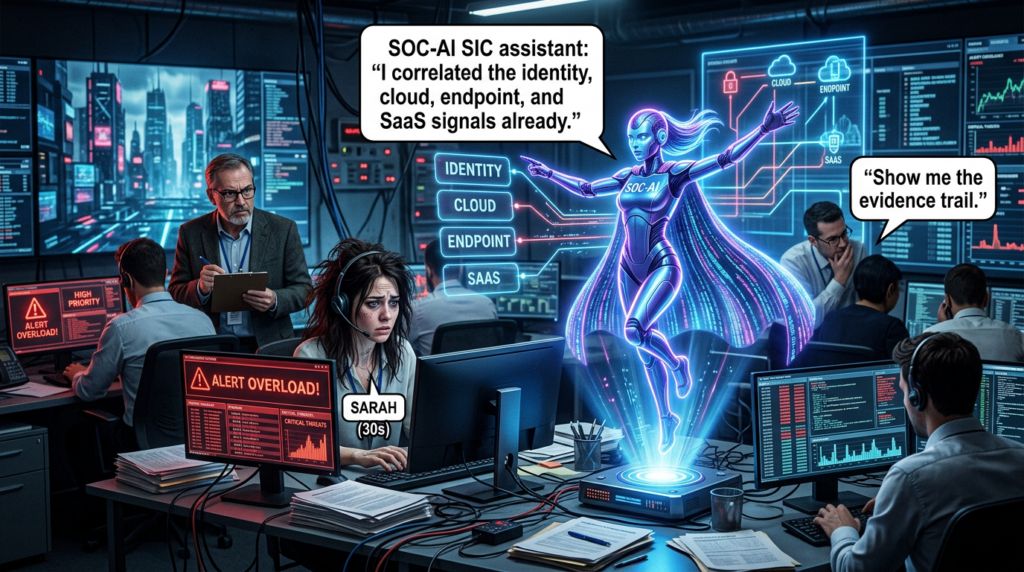

A real AI SOC is not a dashboard with a language model pasted on top. UnderDefense describes an AI SOC as a security operations center in which AI performs investigation, triage, and correlation work that would otherwise sit with Tier 1 and Tier 2 analysts. Trustero describes a similar model, where AI helps prioritize alerts, investigate incidents, run response playbooks, and generate audit-ready evidence. Both descriptions point to the same core shift: AI is no longer just used inside single tools, but as a reasoning layer that works across the environment. (UnderDefense)

That cross-domain reasoning layer is the key difference. In a fragmented environment, identity alerts live in one system, cloud events in another, endpoint telemetry in a third, and email or SaaS findings somewhere else. Human analysts become the correlation engine, which means fatigue, delays, and blind spots are built into the workflow. An AI SOC tries to collapse that fragmentation by enriching, correlating, and prioritizing signals across identity, endpoint, cloud, network, and SaaS telemetry, then presenting a human-readable narrative of what is happening and what should happen next. (UnderDefense)
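To make the correlation step concrete, here is a minimal Python sketch, assuming alerts have already been normalized into a common shape. The `Alert` fields, the 30-minute window, and the two-source threshold are illustrative assumptions, not a description of how any vendor's AI SOC works.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Alert:
    source: str          # hypothetical labels such as "identity", "endpoint", "cloud"
    entity: str          # the user or host the alert is about
    timestamp: datetime
    summary: str


def _emit(entity, cluster, min_sources):
    """Turn a cluster into a case only if enough distinct sources saw it."""
    sources = sorted({a.source for a in cluster})
    if len(sources) < min_sources:
        return []
    return [{"entity": entity, "sources": sources,
             "timeline": [a.summary for a in cluster]}]


def correlate(alerts, window=timedelta(minutes=30), min_sources=2):
    """Group alerts per entity, split on time gaps larger than the window."""
    by_entity = defaultdict(list)
    for alert in alerts:
        by_entity[alert.entity].append(alert)

    cases = []
    for entity, items in by_entity.items():
        items.sort(key=lambda a: a.timestamp)
        cluster = [items[0]]
        for alert in items[1:]:
            if alert.timestamp - cluster[-1].timestamp <= window:
                cluster.append(alert)
            else:
                cases.extend(_emit(entity, cluster, min_sources))
                cluster = [alert]
        cases.extend(_emit(entity, cluster, min_sources))
    return cases


t0 = datetime(2026, 3, 20, 18, 0)
alerts = [
    Alert("identity", "alice", t0, "impossible travel login"),
    Alert("cloud", "alice", t0 + timedelta(minutes=10), "new access key created"),
    Alert("endpoint", "bob", t0, "unsigned binary executed"),
    Alert("identity", "alice", t0 + timedelta(hours=5), "password reset"),
]
cases = correlate(alerts)
```

Only the two alerts about `alice` that arrive close together and from different sources become a case. The narrative and next-step reasoning an AI SOC adds on top are out of scope for this sketch.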

This is also where many AI SOC pitches become weak. Automation alone is not an AI SOC. SOAR playbooks can still be useful, but fixed if-this-then-that logic is not the same thing as a system that can reason through ambiguous activity, decide what additional evidence is needed, query adjacent systems, and route actions with approvals and rollback paths. OWASP’s guidance for agentic applications and AI agents makes the same point from the defensive side: once systems can reason, use tools, maintain memory, and take actions, the security model has to account for autonomous multi-step behavior, not just inference. (UnderDefense)

The best AI SOC programs therefore care about four things at once. They care about speed, because alert triage cannot remain a queue of stale tickets. They care about quality, because noisy summaries do not help anyone. They care about explainability, because auditors, customers, and incident leaders need to know what the system saw and why it acted. And they care about control boundaries, because an AI SOC that can reason without guardrails can also make fast mistakes at scale. Bedrock’s focus on logging, CloudTrail, CloudWatch, and optional request-response storage is one example of the kind of operational evidence substrate this model needs. (UnderDefense)

SOC 2 is not your security program, it is your assurance story about controls

The AICPA defines a SOC 2 examination as a report on controls at a service organization relevant to security, availability, processing integrity, confidentiality, or privacy. That definition matters. A SOC 2 report is not the same thing as building the controls, running the security program, or governing AI behavior. It is an independent examination that helps users of your service understand whether the controls around the system are designed and described in a way that supports trust. (AICPA & CIMA)

In practical terms, SOC 2 is usually about what customers want to review when they ask whether your service is secure enough to handle their data. They are not buying the report itself. They are buying the confidence signal that the report creates. That is why SOC 2 remains commercially important for AI and SaaS vendors even when engineers privately prefer more technical frameworks. It translates your internal control environment into something procurement, vendor risk, legal, and enterprise security reviewers understand quickly. (AICPA & CIMA)

For AI companies, this creates both value and a trap. The value is obvious: if your platform processes customer prompts, files, telemetry, embeddings, or operational actions, a SOC 2 report helps customers understand whether the relevant system and controls have been examined. The trap is believing that SOC 2 settles the AI-specific part of the discussion. It does not. SOC 2 can tell a customer that your controls over security, confidentiality, or privacy exist and are described. It does not automatically tell them how you handle prompt injection, unsafe tool invocation, agent memory poisoning, or model governance. (AICPA & CIMA)

That is why the strongest AI companies no longer present SOC 2 as the whole trust story. OpenAI’s public security and privacy materials pair SOC 2 with ISO 27001-family certifications and ISO 42001. That is a strong signal about how the market is evolving: customers still want familiar assurance artifacts, but they also expect explicit discussion of AI management, audit logs, data controls, safety testing, and product-specific administrative features. SOC 2 is still important. It is just no longer sufficient by itself. (OpenAI)

ISO 27001 is still the backbone, because AI does not remove information security risk

ISO states that ISO 27001 is the best-known standard for information security management systems and that conformity means an organization has put in place a system to manage risks related to the security of data it owns or handles. ISO also emphasizes that the standard promotes a holistic approach across people, policies, and technology. That is exactly why ISO 27001 remains relevant for AI teams. AI may create new risk patterns, but it does not make data classification, access control, supplier management, incident handling, or asset governance less important. It makes them more important. (ISO)

For AI companies in particular, ISO 27001 solves a problem that product teams often underestimate. The most valuable thing in many AI businesses is not just customer data. It is the whole operating system around the model: training data lineage, evaluation sets, system prompts, tool credentials, fine-tuning assets, deployment pipelines, model access pathways, feature flags, logs, and exception-handling logic. Hightable frames ISO 27001 for AI companies as a business enabler because it protects exactly those assets that enterprise buyers worry about most, including proprietary algorithms, training data, and model integrity. (ISO)

ISO 27001 also matters because it forces discipline around continuity and review. AI teams often move fast enough to create shadow systems without realizing it. A demo model endpoint turns into production traffic. A prototype tool connector keeps privileged access longer than intended. An annotation pipeline grows into a regulated-data workflow. An external model provider becomes business-critical before anyone has documented the dependency. A properly run ISMS gives leadership a way to see, review, and improve those conditions instead of discovering them during a breach or a customer escalation. (ISO)

None of this means ISO 27001 alone covers all AI risks. It does not. It means the opposite: if you skip ISO 27001 because you think “AI governance” is a different category, you often end up rebuilding basic information security badly under a new name. The stronger move is to treat ISO 27001 as the base management system for information risk, then extend upward into AI-specific governance rather than pretending the old problems disappeared. (ISO)

ISO 42001 is the missing layer most AI teams eventually discover they need

ISO 42001 is the first AI management system standard. ISO describes it as a standard for establishing, implementing, maintaining, and continually improving an AI management system, designed for entities that provide or use AI-based products and services. ISO also highlights why it matters: AI introduces issues around transparency, ethical considerations, responsible use, and continuous learning that do not fit neatly inside classic security management language alone. (ISO)

This is why the ISO 27001 versus ISO 42001 debate is usually framed badly. It is not really a competition. ISO 27001 answers how your organization manages information security risk. ISO 42001 answers how your organization governs AI systems as AI systems. The overlap is meaningful, but the center of gravity is different. One is about information security management broadly. The other is about responsible development, provision, and use of AI, including questions that security teams often cannot answer in isolation, such as purpose limitation, lifecycle oversight, model change control, human oversight, and AI-specific accountability. (ISO)

The market is already reflecting that distinction. OpenAI publicly states that it maintains an ISO 42001 AI Management System covering consumer and business AI products and models in its role as an AI producer and AI provider. Anthropic’s trust materials likewise surface ISO 42001 artifacts alongside more familiar security documents. You do not need those examples to prove that ISO 42001 matters, but they show that leading AI vendors now see AI management assurance as part of enterprise trust, not as an academic extra. (OpenAI)

For most teams, the practical takeaway is simple. If you sell or deploy AI in a way that materially affects customers, internal decision-making, or automated actions, you should expect buyers and regulators to ask questions that pure ISMS language does not fully answer. ISO 42001 gives you a formal structure for those questions. Used together, ISO 27001 and ISO 42001 are much closer to what a serious AI trust program actually looks like in 2026. (ISO)

NIST AI RMF and CSF 2.0 help turn compliance language into operating language

One reason AI security programs drift into confusion is that certification language and engineering language often do not line up. NIST’s AI Risk Management Framework is useful precisely because it is voluntary and operational. NIST says the AI RMF is intended to improve an organization’s ability to incorporate trustworthiness considerations into the design, development, use, and evaluation of AI products, services, and systems. That makes it an excellent bridge between board-level governance and engineering-level implementation. (NIST)

NIST’s Generative AI Profile is even more relevant for teams deploying modern AI systems. NIST describes it as a cross-sectoral profile and companion resource for generative AI that helps organizations identify unique risks posed by generative AI and propose risk management actions aligned with their goals and priorities. That is exactly the kind of framing most AI companies need after they have achieved the basics of cloud security and access control and start asking harder questions about model misuse, content handling, agent behavior, and lifecycle risk. (NIST)

CSF 2.0 is useful for a parallel reason. NIST says CSF 2.0 organizes cybersecurity outcomes into six functions, including the newly added Govern function. NIST has also emphasized that the Govern function exists to connect cybersecurity to broader enterprise risk management. That is a particularly helpful lens for AI teams, because AI programs fail when they are treated as isolated model projects instead of enterprise systems with policy, accountability, risk appetite, and escalation paths. (NIST)

Used together, these frameworks help solve a recurring executive problem. ISO 27001 and SOC 2 tell leadership that controls and systems exist. NIST AI RMF and CSF 2.0 help leadership ask whether those controls and systems map to the actual risk of the AI use case in front of them. That is a subtle difference, but it is where mature governance begins. (ISO)

A working map of the stack

| Layer | Primary question | Typical owner | Main output |
|---|---|---|---|
| AI SOC | Can we detect, investigate, and respond to AI-era threats and operational abuse fast enough? | Security operations | Cases, detections, playbooks, investigation trails |
| SOC 2 | Can an independent auditor describe and examine our control environment for customers? | GRC, security, platform | SOC 2 report |
| ISO 27001 | Do we run an ISMS that continuously manages information risk? | Leadership, GRC, security | Certification, risk treatment, ISMS records |
| ISO 42001 | Do we run an AI management system for responsible AI lifecycle governance? | Leadership, legal, AI governance, security | Certification, AI governance records |
| NIST AI RMF | Have we identified and managed AI-specific risks in a trustworthy way? | Cross-functional risk owners | Profiles, assessments, treatment plans |
| CSF 2.0 | Are cyber outcomes governed across the lifecycle and tied to enterprise risk? | Security leadership | CSF profile, target outcomes, governance alignment |

The table above compresses the relationship into one view, but the important point is not the labels. The important point is that each layer has a different center of gravity. A company that is excellent at one layer can still be weak at another. A beautiful SOC 2 report does not guarantee strong investigations. A mature AI SOC does not guarantee an auditable management system. An ISO 27001 certificate does not guarantee safe agent tool boundaries. Once you accept that, architecture decisions get much easier. (NIST)

The control domains that break AI teams most often

The first domain is data governance. AI systems create more pathways for data use than many teams realize. Prompts, retrieved context, embeddings, evaluation corpora, memory stores, feedback loops, fine-tuning sets, moderation decisions, and logs can all become sensitive. AWS emphasizes data control, encryption, key management, private connectivity, and monitoring for Bedrock. OpenAI emphasizes data protection, certifications, and business controls such as audit logs and data residency options. These are not marketing extras. They are reminders that AI systems expand data handling surfaces, which means governance has to expand with them. (OpenAI)

The second domain is action governance. Agentic systems can plan, call tools, and modify state. OWASP’s AI Agent Security Cheat Sheet makes clear that this expanded capability introduces risks beyond traditional LLM prompt injection. The problem is not only what the model says. The problem is what the system can do. If a model can read a CRM, create tickets, invoke code, query repositories, or change cloud state, then authorization, purpose limitation, and approval design become as important as inference safety. (OWASP Cheat Sheet Series)

The third domain is logging and explainability. UnderDefense explicitly argues that AI SOC investigations must produce human-readable audit trails, including what data was analyzed, what reasoning was applied, and what action was taken. AWS makes a parallel point from the infrastructure side by foregrounding CloudWatch, CloudTrail, and optional metadata logging for governance and audit purposes. In the AI era, logging is no longer only about post-incident forensics. It is also about proving that automated reasoning and automated actions were bounded, reviewable, and attributable. (UnderDefense)

The fourth domain is supplier and model ecosystem risk. AI teams increasingly depend on external model providers, vector tooling, agent frameworks, orchestration layers, hosted inference, third-party connectors, and plugin ecosystems. CSF 2.0 expanded emphasis on governance and supply chain risk management, and OpenAI’s supplier security measures explicitly require independent audits against recognized standards such as SOC 2 Type 2 or ISO 27001. In practice, that means vendor review is no longer just a procurement checklist. It is part of the security architecture. (NIST)

A simple AI system inventory that auditors and engineers can both use

One of the fastest wins for AI governance is to keep a living inventory of AI systems. Not a vague spreadsheet of “we use AI here and there,” but an asset register that ties each AI-enabled system to an owner, purpose, data classes, model provider, tools, action boundaries, approval rules, and log locations. This is where ISO 27001 discipline and ISO 42001 discipline start to feel complementary rather than theoretical. (ISO)

```yaml
ai_systems:
  - name: support-assistant-prod
    owner: customer-platform
    business_purpose: customer support triage
    model_provider: openai
    model_reference: gpt-4.1
    data_classes:
      - customer_pii
      - support_history
    retrieval_sources:
      - product_kb
      - ticket_archive
    tools_allowed:
      - crm_read
      - ticket_create
    tools_blocked:
      - billing_change
      - account_delete
    human_approval_required_for:
      - policy_exception
      - refund_decision
    log_locations:
      - siem
      - cloudtrail
      - application_audit_index
    retention_days: 365
    fallback_mode: read_only
```

This kind of inventory does three useful things at once. It tells engineering what the system is allowed to do. It tells security where to collect evidence and what failure modes to watch. And it tells auditors and customers that AI use is not happening as undocumented drift. NIST AI RMF, ISO 27001, and ISO 42001 all reward exactly this kind of traceable governance habit, even if they describe it in different language. (NIST)
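An inventory only stays useful if something checks it. The sketch below is one way to lint an entry in Python, assuming the inventory has already been loaded into a dictionary. The set of required fields and the rule that a tool cannot be both allowed and blocked are assumptions chosen for illustration, not requirements taken from any of the standards.

```python
REQUIRED_FIELDS = {
    "name", "owner", "business_purpose", "model_provider", "data_classes",
    "tools_allowed", "tools_blocked", "human_approval_required_for",
    "log_locations", "retention_days",
}


def validate_entry(entry):
    """Return a list of problems; an empty list means the entry passes."""
    problems = [f"missing field: {f}" for f in sorted(REQUIRED_FIELDS - set(entry))]
    overlap = set(entry.get("tools_allowed", [])) & set(entry.get("tools_blocked", []))
    if overlap:
        problems.append(f"tools both allowed and blocked: {sorted(overlap)}")
    if "log_locations" in entry and not entry["log_locations"]:
        problems.append("no log location recorded")
    return problems


entry = {
    "name": "support-assistant-prod",
    "owner": "customer-platform",
    "business_purpose": "customer support triage",
    "model_provider": "openai",
    "data_classes": ["customer_pii", "support_history"],
    "tools_allowed": ["crm_read", "ticket_create"],
    "tools_blocked": ["billing_change", "account_delete"],
    "human_approval_required_for": ["policy_exception", "refund_decision"],
    "log_locations": ["siem", "cloudtrail", "application_audit_index"],
    "retention_days": 365,
}

# Simulate drift: the owner is lost and a blocked tool is quietly allowed.
drifted = dict(entry, tools_allowed=entry["tools_allowed"] + ["billing_change"])
drifted.pop("owner")

print(validate_entry(entry))
print(validate_entry(drifted))
```

Running a check like this in CI is one way to make the register fail loudly when an entry drifts, rather than relying on a periodic manual review.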

Agent identity, tool permissions, and approval boundaries are now first-class security controls

The old software model asked whether a user or service account had the right permissions. The AI model asks that, and then adds another layer: under what conditions should an autonomous or semi-autonomous agent be allowed to exercise those permissions. That is a security question, a product question, and a governance question at the same time. OWASP’s agentic security materials exist because the action plane is where harmless-looking AI features become operational risk. (OWASP Gen AI Security Project)

A common failure pattern is over-broad tool access. Teams grant an internal copilot the ability to read knowledge bases, repositories, tickets, calendars, cloud metadata, and customer records because the demo feels more powerful that way. Then nobody goes back and asks whether those accesses were separated by purpose, environment, sensitivity, or required approvals. If the agent is later manipulated through prompt injection, retrieval poisoning, compromised connectors, or confused-deputy behavior, it can act with more reach than a human employee would have been granted in the same scenario. (OWASP Cheat Sheet Series)

The right pattern is not “never let agents do anything.” The right pattern is explicit scoping. Read-only before read-write. Low-risk tools before high-impact tools. Narrow credentials instead of broad API keys. Human approval before irreversible actions. Separate runtime identities for separate workflows. Strong logging for every delegated step. Those principles are as old as least privilege, but AI makes them visible again because the speed and scale of automated action raises the cost of bad defaults. (ISO)

A useful policy-as-code guardrail can be remarkably small if the ownership model is clear.

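```rego
package ai.guardrails

# rego.v1 enables the `if` and `in` keywords used below.
import rego.v1

# Deny unless one of the allow rules matches.
default allow := false

# Low-risk tools are allowed for agents when the data is not restricted.
allow if {
    input.actor_type == "ai_agent"
    input.tool in {"kb_search", "ticket_create", "crm_read"}
    input.data_classification != "restricted"
}

# High-impact billing changes need recorded human approval and a change ticket.
allow if {
    input.actor_type == "ai_agent"
    input.tool == "billing_change"
    input.human_approval == true
    input.change_ticket != ""
}
```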

That snippet is not the whole program, but it captures the mindset shift. AI governance becomes real when tool use, data sensitivity, and human approval are enforced as executable policy instead of buried inside slide decks. (OWASP Cheat Sheet Series)
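The same deny-by-default logic can be mirrored in application code, which is handy for unit-testing expectations before a policy ships. The following is a Python sketch of the two allow rules in the guardrail above, not a replacement for a policy engine. The request shape is assumed to match the `input` document that policy reads.

```python
LOW_RISK_TOOLS = {"kb_search", "ticket_create", "crm_read"}


def allow(request):
    """Deny by default; return True only when one of the two rules matches."""
    if request.get("actor_type") != "ai_agent":
        return False
    tool = request.get("tool")
    # Rule 1: low-risk tools, and the classification must be present and not restricted.
    if tool in LOW_RISK_TOOLS:
        classification = request.get("data_classification")
        return classification is not None and classification != "restricted"
    # Rule 2: billing changes need explicit approval and a non-empty change ticket.
    if tool == "billing_change":
        return (request.get("human_approval") is True
                and request.get("change_ticket", "") != "")
    return False


checks = [
    {"actor_type": "ai_agent", "tool": "crm_read", "data_classification": "internal"},
    {"actor_type": "ai_agent", "tool": "crm_read", "data_classification": "restricted"},
    {"actor_type": "ai_agent", "tool": "billing_change",
     "human_approval": True, "change_ticket": "CHG-1"},
    {"actor_type": "ai_agent", "tool": "account_delete"},
]
decisions = [allow(c) for c in checks]
```

A missing field is treated as a denial here, which matches how an undefined input value makes a policy rule fail rather than pass.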

Logging and evidence are no longer a back-office function

A surprisingly large share of AI trust work reduces to one question: what evidence can you show after the fact? If an AI SOC investigated a suspected incident, can you reconstruct the chain? If an AI agent took an action, can you attribute the decision path? If a customer asks whether their data was exposed to a model provider or stored in logs, can you answer from records instead of memory? If an auditor asks how a high-risk workflow is approved, can you show the approval artifact? Strong organizations win here because they build evidence collection into the system rather than treating it as a quarterly scramble. (Trustero)

That is why compliance automation platforms keep emphasizing evidence gathering, continuous monitoring, and centralized auditor access. Comp AI, Vanta, Delve, and similar vendors all foreground that pattern. The important lesson is not that one vendor is necessarily better than another. The important lesson is that the market has converged on the same pain point: organizations do not only need controls, they need machine-collected, reviewable evidence that those controls exist and operate over time. (Comp AI)

For AI systems, the evidence model needs one more field than traditional SaaS evidence usually includes. It needs reasoning context. Not full chain-of-thought style internals, but enough provenance to know what inputs, policies, retrieval sources, user requests, tool calls, and approval states shaped a decision. Without that, you can prove that an action happened, but not whether it happened within policy. UnderDefense’s insistence on human-readable investigation trails is therefore not just a SOC convenience. It is an auditability requirement in disguise. (UnderDefense)

A minimal event format for agentic action logging might look like this.

```json
{
  "event_id": "evt_2026_03_20_000412",
  "timestamp": "2026-03-20T18:11:02Z",
  "actor": {
    "type": "ai_agent",
    "id": "soc-investigator-01",
    "owner_team": "security-operations"
  },
  "request": {
    "user_id": "analyst_42",
    "goal": "investigate suspicious token usage",
    "tool_called": "identity_lookup"
  },
  "governance": {
    "policy_version": "2026-02-18",
    "approval_required": false,
    "data_classification": "internal"
  },
  "outcome": {
    "decision": "enriched_case",
    "case_id": "case_11829",
    "confidence": "high"
  }
}
```

The point is not the JSON itself. The point is that event structure becomes governance structure. If your AI system cannot emit attributable action records like this, you are asking customers and auditors to trust a black box that is already touching sensitive workflows. That is no longer a reasonable ask. (Amazon Web Services, Inc.)
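One way to enforce that structure is to refuse to write any action record that is missing its attribution fields. The Python sketch below assumes the event shape shown above. Which fields count as required is an illustrative choice, and the in-memory `log_lines` list stands in for whatever log pipeline is actually used.

```python
import json

# Fields treated as mandatory for attribution (an assumption for this sketch).
REQUIRED = {
    "actor": {"type", "id", "owner_team"},
    "request": {"user_id", "goal", "tool_called"},
    "governance": {"policy_version", "approval_required", "data_classification"},
    "outcome": {"decision"},
}


def missing_fields(event):
    """List dotted paths for required fields that are absent from an event."""
    gaps = [key for key in ("event_id", "timestamp") if key not in event]
    for section, fields in REQUIRED.items():
        present = event.get(section, {})
        gaps += [f"{section}.{name}" for name in sorted(fields) if name not in present]
    return gaps


def emit(event, sink):
    """Append the event as one JSON line, rejecting records that lack attribution."""
    gaps = missing_fields(event)
    if gaps:
        raise ValueError(f"event not attributable, missing: {gaps}")
    sink.append(json.dumps(event, sort_keys=True))


event = {
    "event_id": "evt_2026_03_20_000412",
    "timestamp": "2026-03-20T18:11:02Z",
    "actor": {"type": "ai_agent", "id": "soc-investigator-01",
              "owner_team": "security-operations"},
    "request": {"user_id": "analyst_42",
                "goal": "investigate suspicious token usage",
                "tool_called": "identity_lookup"},
    "governance": {"policy_version": "2026-02-18", "approval_required": False,
                   "data_classification": "internal"},
    "outcome": {"decision": "enriched_case", "case_id": "case_11829",
                "confidence": "high"},
}

log_lines = []
emit(event, log_lines)
```

Rejecting incomplete records at write time is a design choice. A team that prefers never to drop events could instead tag them as incomplete and alert on the tag.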

The newest AI compliance mistake, assuming cloud platform compliance transfers upward automatically

Cloud AI platforms are getting better at security and compliance. AWS states that Bedrock supports encryption, private connectivity, governance-oriented logging, and scope for common compliance standards. Bedrock AgentCore states alignment with multiple AWS compliance programs, including ISO and SOC, subject to audit review cycles. Those are meaningful signals and useful building blocks. But they do not eliminate your own responsibilities. (Amazon Web Services, Inc.)

This is the shared responsibility problem in AI form. A compliant model hosting platform does not make your agent workflow compliant. A platform with excellent encryption does not decide whether your retrieval layer is pulling sensitive content into the wrong prompt. A platform with SOC coverage does not define which internal teams are allowed to enable tool execution, what approval gates exist, or whether your high-risk actions are reversible. The infrastructure may be trustworthy. Your system design may still be reckless. (Amazon Web Services, Inc.)

This distinction becomes especially important in customer conversations. Many teams say “we run on a compliant AI platform” when what the buyer actually wants to know is whether your configuration, logging, access model, vendor review, and operational controls are good enough. Mature buyers now hear the difference immediately. They see platform assurance as necessary, not sufficient. That is one reason trust portals, supplier audit expectations, and framework mappings have become standard reading for security and procurement teams. (OpenAI)

Recent AI-related CVEs tell a blunt story, most AI failures still look like software security

The idea that AI security is mostly about exotic model behavior is comforting, but wrong. OWASP is right to elevate AI-specific issues such as prompt injection and agentic misuse, yet some of the most important recent vulnerabilities in AI and ML infrastructure are very recognizable software flaws: path traversal, authentication bypass, SSRF, stack overflow, and remote code execution. The “AI” label changes the blast radius and context, but not the basic need for patching, hardening, isolation, and least privilege. (OWASP Gen AI Security Project)

| CVE | Affected technology | What happened | Practical lesson |
|---|---|---|---|
| CVE-2025-12420 | ServiceNow AI Platform | Unauthenticated impersonation vulnerability with critical impact | AI workflow platforms still need classic auth, privilege, and tenancy review |
| CVE-2025-62615 | AutoGPT | SSRF in RSSFeedBlock through unfiltered URL access | Agent connectors and fetchers need strict outbound controls |
| CVE-2025-23317 | NVIDIA Triton Inference Server | Crafted HTTP request could start a reverse shell | Model serving infrastructure belongs behind strong network and runtime isolation |
| CVE-2026-2635 | MLflow | Hard-coded default credentials enabled auth bypass and code execution | MLOps stacks need secrets hygiene and secure bootstrap defaults |
| CVE-2025-15031 | MLflow | Path traversal in pyfunc artifact extraction enabled arbitrary file writes | Treat model artifacts and package ingestion as hostile input until proven otherwise |

These are not theoretical edge cases. NVD describes CVE-2025-12420 as enabling unauthenticated impersonation in the ServiceNow AI Platform. It describes CVE-2025-62615 as a critical SSRF issue in AutoGPT. It describes CVE-2025-23317 as an NVIDIA Triton issue where a specially crafted HTTP request could start a reverse shell. It describes CVE-2026-2635 and CVE-2025-15031 as MLflow flaws involving hard-coded default credentials, authentication bypass, code execution, and path traversal during artifact handling. The common thread is not “AI went rogue.” The common thread is that AI systems are built from software, and software is exploitable when control boundaries are weak. (NVD)
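The SSRF case is a good example of how ordinary the fix can be. The sketch below shows one form of outbound control for an agent fetcher in Python: HTTPS only, an explicit host allowlist, and no IP literals. The allowlisted hostnames are hypothetical, and this is not the actual AutoGPT patch. It also does not resolve DNS, so a production control would still need to check the address a hostname resolves to.

```python
import ipaddress
from urllib.parse import urlsplit

# Hypothetical allowlist of hosts the agent's fetcher may contact.
ALLOWED_HOSTS = {"feeds.example.com", "status.example.com"}


def is_fetch_allowed(url):
    """Allow only https URLs whose host is explicitly allowlisted."""
    try:
        parts = urlsplit(url)
    except ValueError:
        return False  # malformed URLs are rejected rather than guessed at
    if parts.scheme != "https" or parts.hostname is None:
        return False
    host = parts.hostname.lower()
    try:
        ipaddress.ip_address(host)
        return False  # IP literals never pass, even if one slips into the allowlist
    except ValueError:
        pass  # not an IP literal, so fall through to the hostname check
    return host in ALLOWED_HOSTS


print(is_fetch_allowed("https://feeds.example.com/rss.xml"))
print(is_fetch_allowed("https://169.254.169.254/latest/meta-data/"))
```

The check reads the host from the parsed URL rather than from string matching, so a URL that hides an internal address behind a userinfo prefix is judged by its real host.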

There is another lesson here for compliance teams. A strong ISO 27001 or SOC 2 posture should help you catch or contain these problems faster through asset ownership, vulnerability management, logging, patch processes, and change control. But recent CVEs show why that still is not enough on its own. If your AI system includes agent frameworks, model registries, artifact handling, connectors, or inference servers, you need security validation that follows the actual attack paths of that stack, not just paperwork that states you have a process. (ISO)
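Artifact handling is one of those attack paths that can be tested directly. As a hedged illustration, the Python sketch below checks whether an archive member would land inside the intended extraction directory before anything is written. The function name and example paths are hypothetical, and this is a generic path traversal guard, not the fix applied in MLflow.

```python
from pathlib import Path


def is_safe_member(base_dir, member_name):
    """Return True only if member_name resolves to a path inside base_dir."""
    base = Path(base_dir).resolve()
    # Joining with an absolute member name discards base, which the check below catches.
    target = (base / member_name).resolve()
    return base in target.parents


print(is_safe_member("/tmp/artifacts", "model/weights.bin"))
print(is_safe_member("/tmp/artifacts", "../../etc/passwd"))
```

The same check can be run against every member name in a model package before extraction, with the whole package rejected if any single member fails.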

The control architecture that tends to work in practice

The most durable architecture usually starts with management systems and ends with runtime evidence. At the foundation sits ISO 27001, because without a functioning ISMS the rest tends to become fragmented and personality-driven. Alongside or soon after that comes AI-specific governance, usually shaped by ISO 42001 and NIST AI RMF, because AI systems need documented purpose, lifecycle ownership, review logic, and treatment of AI-specific risks. On the assurance side sits SOC 2, because customers still need an external artifact they can request, evaluate, and file. On the operations side sits an AI SOC or equivalent capability, because the control environment means little if nobody can investigate what is actually happening. (ISO)

What ties those pieces together is not more policy. It is traceability. You need a traceable inventory of AI systems, a traceable mapping from system purpose to controls, traceable boundaries for data and tool use, traceable logs for automated decisions, traceable vendor reviews, and traceable evidence for how the controls operate. If you cannot trace those relationships, your security program will eventually split into two worlds: the world of documents and the world of production. That split is where expensive surprises are born. (NIST)

The simplest way to test whether your architecture is real is to ask four operational questions. Can you list every AI-enabled system in scope? Can you explain what each one is allowed to do? Can you reconstruct what it actually did? And can you prove that someone reviewed whether it should be allowed to do that in the first place? If the answer to any of those is no, then you do not have an AI governance architecture yet. You have pieces of one. (ISO)
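The four questions can be turned into a mechanical check over an inventory. The following is a minimal sketch under stated assumptions: the `AISystemRecord` fields, the `governance_gaps` function, and the sample systems are all hypothetical names chosen for illustration, not part of any standard or product.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AISystemRecord:
    """One inventory entry for an AI-enabled system (field names are illustrative)."""
    name: str
    allowed_actions: List[str] = field(default_factory=list)  # what it may do
    action_log_location: Optional[str] = None  # where its actual actions are recorded
    reviewed_by: Optional[str] = None  # who approved the allowed actions


def governance_gaps(inventory: List[AISystemRecord]) -> List[str]:
    """Return one message per unanswered question across the inventory."""
    if not inventory:
        # Question 1: can you list every AI-enabled system in scope?
        return ["inventory: no AI-enabled systems listed"]
    gaps: List[str] = []
    for rec in inventory:
        if not rec.allowed_actions:  # Question 2: what is it allowed to do?
            gaps.append(f"{rec.name}: allowed actions not documented")
        if not rec.action_log_location:  # Question 3: can you reconstruct what it did?
            gaps.append(f"{rec.name}: no log source to reconstruct actions")
        if not rec.reviewed_by:  # Question 4: did someone review the permissions?
            gaps.append(f"{rec.name}: no recorded review of permissions")
    return gaps


if __name__ == "__main__":
    # Hypothetical systems for illustration.
    inventory = [
        AISystemRecord("support-copilot", ["read_ticket", "draft_reply"],
                       "siem://ai-actions", "security-lead"),
        AISystemRecord("ops-agent", ["restart_service"]),
    ]
    for gap in governance_gaps(inventory):
        print(gap)
```

An empty result does not prove the controls work; it only shows that each question has a recorded answer someone can be asked to defend.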

Where AI SOC, ISO 27001, and Penligent naturally connect

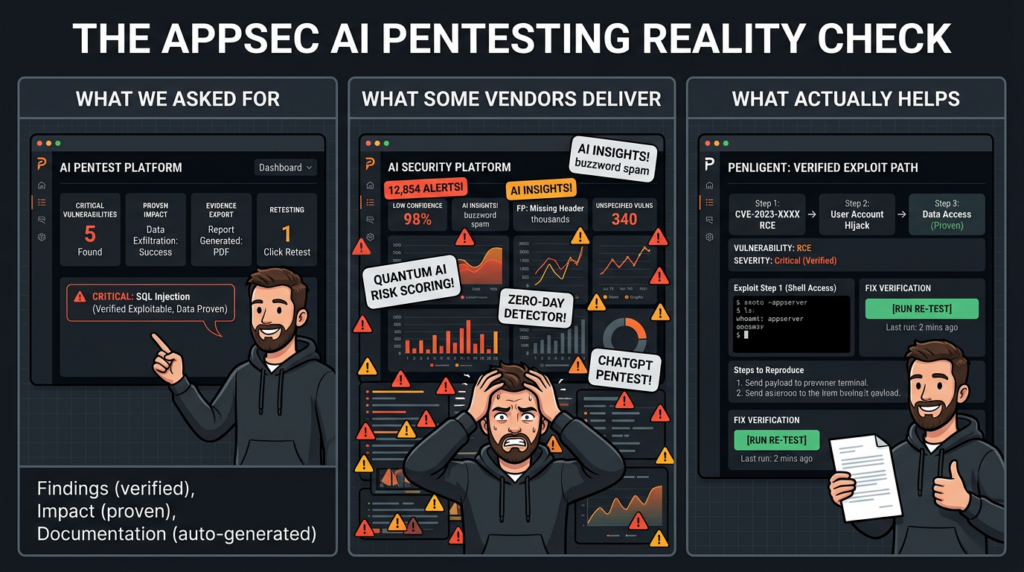

There is a point where governance, assurance, and operations stop being enough on their own. That point is adversarial reality. A company can have solid policies, an attractive trust center, strong logging, and decent procurement answers, and still fail under a real attack path because nobody tested the chain the way an attacker would. This is where offensive validation belongs. Not as a replacement for AI SOC or ISO 27001, but as the evidence that the controls stand up when somebody actively tries to move through them. (ISO)

That is the most natural place to connect a platform like Penligent. Penligent describes its product as an end-to-end AI-powered penetration testing agent that goes from asset discovery through vulnerability scanning, exploit execution, attack-chain simulation, and final reporting, including compliance-ready reporting aligned with standards such as ISO 27001 and NIST-oriented workflows. Its related materials also position the platform around autonomous recon, exploitation, adaptive testing strategy, and local deployment options for privacy-sensitive environments. Used correctly, that makes it complementary to AI SOC and assurance work: it helps validate whether the systems you govern and attest can actually resist the attack chains you think they can. (Penligent)

That distinction matters because security buyers increasingly distrust abstract control claims. They want to know not only whether you have a policy for secrets, model endpoints, connectors, and privileged tools, but whether those controls survive realistic misuse and exploit sequences. An AI-enabled offensive validation workflow can help answer that more continuously than annual consulting-only approaches, especially for teams that are shipping AI features and new integrations every few weeks instead of once or twice a year. (Penligent)

A practical rollout path for three kinds of organizations

| Organization stage | First priority | Second priority | Third priority | What success looks like |
|---|---|---|---|---|
| Early AI startup | Basic ISMS discipline and AI asset inventory | Customer-facing assurance planning | Guardrailed logging and action boundaries | You can answer buyer questionnaires without improvising |
| Growth-stage AI SaaS | ISO 27001 and SOC 2 operating maturity | AI-specific governance with ISO 42001 and AI RMF | AI SOC workflows for alerting, triage, and evidence | Sales, security, and engineering stop contradicting each other |
| Mature enterprise with AI and SOC team | Formal AI governance, runtime action controls, supplier discipline | AI SOC integration across cloud, identity, endpoint, SaaS | Continuous adversarial validation of AI and non-AI paths | Governance, operations, and validation work as one system |

For an early AI startup, the biggest mistake is trying to imitate a mature enterprise trust stack all at once. Start with ownership, inventory, data classification, access rules, and evidence-friendly logging. Those are boring, but they reduce chaos fastest. Add a credible path toward ISO 27001 and customer assurance early, because enterprise buyers will ask before the engineering team feels ready. If the product is AI-native, build lightweight AI governance records now rather than pretending that can wait until scale. (ISO)

For a growth-stage AI SaaS company, the problem is usually coordination rather than awareness. Security, product, ML, platform, and legal all know pieces of the answer, but nobody owns the integrated story. This is the stage where ISO 27001 plus SOC 2 becomes commercially powerful, and where ISO 42001 or NIST AI RMF starts to pay off by forcing consistency around AI lifecycle governance. It is also the stage where AI SOC workflows become useful beyond pure detection, because they can support evidence collection, investigation quality, and response around AI misuse or sensitive operational drift. (NIST)

For larger enterprises with established SOC teams, the challenge shifts again. The core issue is less “do we have controls” and more “can our controls keep pace with agents, model sprawl, third-party tooling, and cross-domain telemetry.” These organizations tend to benefit most from strong runtime governance, richer AI SOC capabilities, and continuous adversarial validation because they already have enough complexity for hidden paths to exist. The cost of fragmented visibility is higher, and the cost of undocumented AI drift is higher too. (UnderDefense)

The mistakes that keep repeating

The first mistake is presenting AI SOC as a synonym for AI governance. It is not. Investigation and response capability are essential, but they do not replace management systems, ownership models, or assurance artifacts. A company can have fast triage and still have weak model governance. (UnderDefense)

The second mistake is presenting SOC 2 as a synonym for product security. It is not. A good SOC 2 report is valuable, but it does not answer every technical question a security engineer will ask about AI agents, tool invocation, prompt injection, or model behavior. It should be treated as evidence of examined controls, not as a magic shield against AI-specific risk. (AICPA & CIMA)

The third mistake is presenting ISO 27001 as too generic for AI teams. That view usually collapses the moment a team has to answer who owns training data, where secrets live, how logs are retained, what suppliers are trusted, and how incidents are escalated. AI does not outrun information security management. It makes it more necessary. (ISO)

The fourth mistake is treating AI-specific frameworks as a future concern. The EU AI Act entered into force in August 2024 as the first comprehensive AI legal framework, with obligations phasing in over time, and it takes a risk-based approach for developers and deployers. Whether or not a given company falls directly into every obligation today, the direction of travel is clear: governance, transparency, accountability, and risk classification are moving from best practice into expectation. (Digital Strategy)

The fifth mistake is skipping validation. Recent CVEs across MLflow, Triton, AutoGPT, and AI platforms show that AI stacks can fail through very traditional vulnerabilities. If your program stops at policy and attestations, you will eventually discover that a well-documented control is not the same thing as an effective one. (NVD)

Further reading

- NIST AI Risk Management Framework

- NIST Generative AI Profile for AI RMF

- NIST Cybersecurity Framework 2.0

- ISO 27001 official overview

- ISO 42001 official overview

- AICPA SOC 2, Trust Services Criteria

- OWASP Top 10 for LLM Applications 2025

- OWASP Top 10 for Agentic Applications for 2026

- OWASP AI Agent Security Cheat Sheet

- EU AI Act, European Commission overview

- Overview of Penligent.ai’s Automated Penetration Testing Tool

- The 2026 Ultimate Guide to AI Penetration Testing, The Era of Agentic Red Teaming

- The Future of AI Agent Security, Openclaw Security Audit

- Comprehensive Digital Security Controls Guide for Security Engineers and Cyber Defenders