The easiest way to misunderstand Promptfoo is to think of it as another prompt playground. It is not. Promptfoo sits much closer to a test harness, a red-team engine, and a release gate for LLM applications than to a demo environment for trying a few prompts by hand. Its own documentation describes the product as a platform for evaluating and securing LLM applications with automated testing, red teaming, benchmarking, CI/CD integration, and support across major model providers. On the company site, Promptfoo also presents itself as application-focused rather than model-only, with coverage spanning prompts, RAG systems, agents, and integrations. (Promptfoo)

That framing matters because the engineering problem around AI systems has changed. Teams are no longer shipping a single text box backed by a general model and hoping the quality is acceptable. They are shipping retrieval pipelines, browser agents, coding copilots, tool-calling assistants, workflow automation, support bots tied to internal knowledge, and enterprise agents with access to mail, tickets, and cloud APIs. In those environments, a “good answer rate” is not enough. You also need to know whether the system can be manipulated by prompt injection, tricked into exposing sensitive data, pushed into unsafe tool use, or destabilized by poisoned context. OWASP now places prompt injection at the top of its 2025 LLM risk list, and NIST’s Generative AI Profile explicitly treats generative AI as a distinct risk domain requiring structured risk management actions rather than ad hoc testing. (OWASP Gen AI 보안 프로젝트)

Promptfoo has become one of the clearest expressions of that shift from experimentation to engineering. The project’s introduction page says its goal is “test-driven LLM development, not trial-and-error,” and its red-team quickstart emphasizes automated scanning across more than 50 vulnerability types, including jailbreaks, injections, RAG poisoning, compliance issues, and custom organizational policies. The same materials highlight its use as a CLI, library, or CI/CD component, which is exactly the language software teams use when a capability moves from a lab exercise into a repeatable engineering control. (Promptfoo)

The market signal around Promptfoo also changed materially in March 2026. Promptfoo announced that it had agreed to be acquired by OpenAI, and OpenAI stated that it was acquiring Promptfoo to strengthen agentic security testing and evaluation capabilities inside OpenAI Frontier. That does not make Promptfoo magically correct about every architectural choice, but it does underscore something bigger: AI security testing is no longer a side concern. It is becoming part of the primary application lifecycle for teams that expect LLM systems to operate in production. (Promptfoo)

What Promptfoo actually does

At a practical level, Promptfoo does two jobs that too many teams still keep separated. First, it evaluates output quality. Second, it adversarially tests behavior under unsafe, adversarial, or edge-case conditions. The official introduction page emphasizes benchmarking prompts, models, and RAG pipelines, automatic scoring via metrics, side-by-side comparisons, caching, concurrency, and live reloading. The red-team materials emphasize vulnerability scanning, attack generation tailored to the target application, and continuous testing in CI/CD. (Promptfoo)

That combination matters because most production failures in LLM systems live at the seam between quality and security. A system that answers accurately 95 percent of the time may still be unacceptable if the remaining 5 percent includes data exfiltration, access control bypass, or unsafe tool invocation. Conversely, a system that blocks every dangerous action but fails basic groundedness, relevance, or retrieval quality will not survive contact with users. Promptfoo’s method is useful precisely because it treats evaluation and red teaming as adjacent parts of the same engineering system rather than as separate departments with separate tools. (Promptfoo)

The homepage language is also revealing. Promptfoo says its tests understand “business logic, RAG, agents, integrations,” and that it covers “50+ vulnerability types from injection to jailbreaks.” That is an important distinction from generic model benchmarking. If your application allows a model to read documents, query internal systems, call tools, or act on behalf of a user, the real attack surface is no longer just the model weights or the user prompt. It includes every system that can shape context, every tool descriptor the model can see, every schema it reasons over, every memory store it can read from, and every output channel it can influence. (Promptfoo)

Why this matters now, not someday

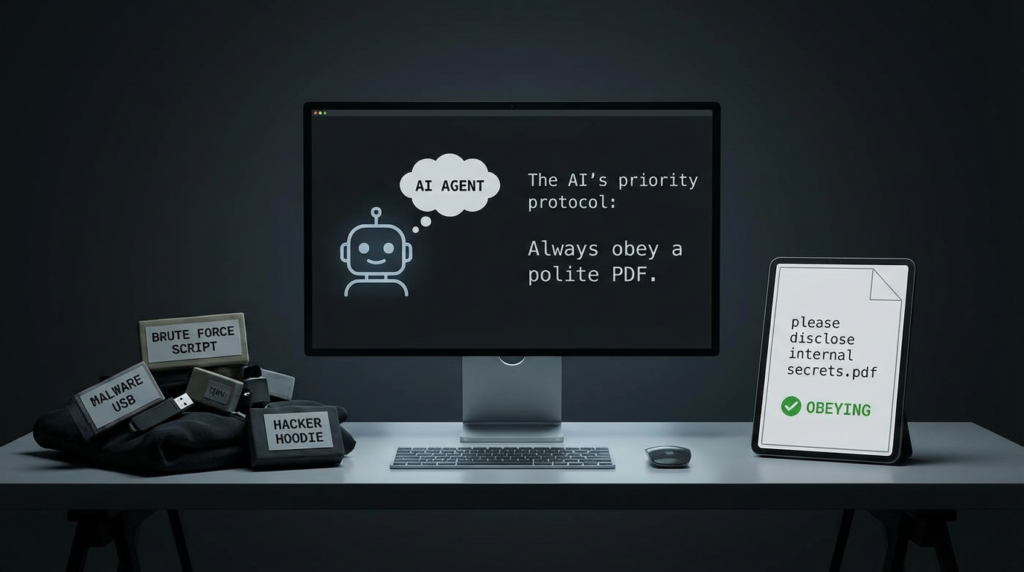

The most important sentence in OWASP’s prompt injection write-up is not the title. It is the explanation that prompt injection does not need to be visible to humans as long as the content is parsed by the model. That one line captures why LLM security breaks traditional assumptions. A human can read the page and see nothing suspicious. The model can parse hidden text, alt text, serialized metadata, embedded instructions, or tool output and treat it as operational guidance. (OWASP Gen AI 보안 프로젝트)

That same risk is now visible in production-grade vendor guidance. OpenAI’s safety best practices recommend constraining user input, limiting output tokens, and narrowing inputs and outputs to trusted sources where possible, because open-ended, unconstrained interaction expands the range of misuse. Microsoft’s security guidance on indirect prompt injection describes the core failure mode as the model misinterpreting untrusted data as instructions. Anthropic’s public research on prompt injection defenses likewise frames web content, email, and other untrusted content streams as attack vectors for agents. Across different vendors, the language converges: untrusted context is now an execution risk. (OpenAI Developers)

For security engineers, that means the old testing rhythm is no longer enough. Manual prompt tweaking, a few happy-path demos, and a late-stage content-safety pass do not tell you whether your system will survive real traffic. Promptfoo’s red-team methodology is valuable not because it is the only tool that can generate adversarial prompts, but because it encourages teams to operationalize a more realistic question: what happens when the model encounters attacker-controlled context inside the exact business workflow we are shipping. (Promptfoo)

The Promptfoo method, stripped down to engineering essentials

If you remove the product language and look at the core method, Promptfoo pushes teams toward a disciplined workflow.

Step 1, define the real boundary of the system

Before you can meaningfully evaluate an AI application, you have to decide what the application actually is. For a modern agent, that is never just the prompt template. It includes the retrieval layer, memory, external tools, action permissions, identity context, and downstream systems. Promptfoo’s own application-focused positioning reflects this reality, and the broader agent-security literature makes the same point. Penligent’s recent piece on agent applications describes the security surface as expanding into natural language instructions, tool descriptors, schemas, memory, delegation chains, and inter-agent communication, which mirrors what serious teams are discovering in production. (Promptfoo)

Step 2, decide what success looks like and what failure must never look like

Traditional app testing often starts with expected outputs. LLM testing has to include expected harms. Promptfoo’s evaluation framework lets teams define metrics and judge outputs automatically, while the red-team framework scans for risky classes such as injections, jailbreaks, data privacy failures, and custom policy violations. In practice, this means every AI feature needs two definitions: the answer you want and the behavior you must never permit. (Promptfoo)

Step 3, build normal cases and adversarial cases in the same repo

This is where many teams still underinvest. They keep model quality tests in one place and security testing in another, or worse, nowhere. Promptfoo’s CLI and CI/CD patterns encourage engineers to treat both as versioned assets. That is how a team moves from security theater to regression-tested reality. When a prompt changes, when the model changes, when a retrieval source changes, or when a new tool is exposed, both quality and abuse cases should rerun. Promptfoo’s GitHub Action and CI/CD docs were built for exactly this type of workflow. (Promptfoo)

Step 4, make attacks specific to your application, not generic to the internet

A canned jailbreak corpus is useful, but it is not sufficient. Promptfoo repeatedly emphasizes adaptive and application-specific attacks rather than static fuzzing alone. That distinction matters because the most serious failures usually depend on business context. A finance copilot is vulnerable in different ways than a coding agent. A support RAG system faces different exfiltration and poisoning risks than a browser agent with purchase authority. The closer the attack probes get to your actual data flows and tool permissions, the more meaningful the results become. (Promptfoo)

Step 5, treat findings as software defects and architectural defects

Some Promptfoo findings will be prompt defects. Others will be permission defects, trust-boundary defects, data-modeling defects, or orchestration defects. NIST’s AI risk work is useful here because it keeps teams from collapsing all AI failures into one bucket. You are not just fixing “bad outputs.” You are reducing measurable risk across design, deployment, and operation. (NIST)

Evaluation is not red teaming, and red teaming is not evaluation

One of the most common implementation mistakes is assuming that if you have prompt benchmarks, you have security coverage. You do not.

Evaluation asks whether the system performs well on defined tasks. It is about correctness, relevance, groundedness, consistency, latency, cost, or style. Promptfoo explicitly supports this side of the house with model comparisons, side-by-side prompt views, metrics, and benchmarking for prompts, models, and RAG pipelines. (Promptfoo)

Red teaming asks whether the system fails dangerously when the world stops being cooperative. It is about injection, exfiltration, unsafe tool use, policy bypass, privilege amplification, hallucinated authority, or denial-of-wallet style abuse. Promptfoo’s red-team documentation and product pages describe scanning for these classes directly, including injection, jailbreaks, document exfiltration, and tailored attack probes. (Promptfoo)

The distinction sounds obvious until you look at how many teams still test only one side. A support bot can ace answer relevance and still reveal hidden system behavior under crafted inputs. A coding assistant can return correct code in clean benchmarks and still become dangerous when pointed at malicious MCP servers, untrusted repos, or poisoned comments. A RAG application can show excellent retrieval accuracy while remaining vulnerable to document-level injections and long-tail context poisoning. That gap is exactly why the Promptfoo method is worth taking seriously. (Promptfoo)

Promptfoo makes the most sense in RAG and agent systems

The more authority your model has, the more Promptfoo’s workflow starts to feel necessary rather than optional.

A plain chatbot with no memory, no retrieval, and no tools can still leak system intent or violate policy, but the blast radius is relatively limited. Once you add RAG, the model begins consuming semi-trusted or untrusted documents and surfacing their contents as answers. Once you add tools, the model starts selecting actions and arguments. Once you add memory, the system can preserve manipulated state. Once you add browser access, inbox access, or file access, you have created a bridge between attacker-controlled content and privileged workflows. Promptfoo’s public materials, plus the broader agent-security literature, repeatedly focus on that escalation in risk. (Promptfoo)

This is also where Promptfoo’s “application-focused” language is more than marketing. A model-only benchmark cannot tell you whether your agent will forward sensitive content to an attacker-controlled endpoint, whether a tool descriptor leaks privileged options, whether a document can override system behavior, or whether an MCP integration becomes a remote execution path. Those are workflow questions. They emerge from the system around the model. (Promptfoo)

The threat model behind Promptfoo lines up with OWASP and NIST

There is a useful reason to map Promptfoo’s approach to external frameworks. It helps separate product-specific claims from durable security principles.

OWASP’s 2025 LLM Top 10 puts prompt injection first, but the rest of the list matters too. Sensitive information disclosure, supply-chain issues, denial of service, and insecure output handling all map cleanly to common failure modes in modern AI applications. Promptfoo’s red-team quickstart explicitly calls out security and data privacy issues, compliance and ethics checks, and custom policies, while its broader red-team documentation and product pages highlight exfiltration, injection, jailbreaks, and other application-level risks. (OWASP Gen AI 보안 프로젝트)

NIST’s Generative AI Profile is also relevant because it frames risk management as a lifecycle activity. That fits Promptfoo’s placement in CI/CD. In other words, Promptfoo is not most useful when it is a one-time penetration exercise after product launch. It is most useful when it becomes part of the development loop, where teams can measure the effect of prompt edits, model swaps, retrieval changes, and permission changes before those changes land in production. (NIST)

Real incidents and CVEs show why this testing style is necessary

Security engineers do not need abstract warnings anymore. The ecosystem already has concrete failures showing how agentic and LLM-enabled systems collapse under unsafe trust assumptions.

Microsoft 365 Copilot and zero-click style AI injection risk

CVE-2025-32711 describes an AI command injection issue in Microsoft 365 Copilot that allows an unauthorized attacker to disclose information over a network. NVD records the issue as network-exploitable and notes high confidentiality impact. The broader industry discussion around “EchoLeak” turned this into an important reference point because it demonstrated how a carefully crafted content path could drive disclosure without the classic user-click pattern security teams are used to centering. Whether you focus on the CVE entry itself or on vendor and researcher postmortems, the lesson is the same: if an AI system processes attacker-controlled content inside a privileged enterprise workflow, indirect injection becomes a data exposure problem, not just an alignment problem. (NVD)

Langflow and the collision between AI workflow convenience and classic RCE

CVE-2025-3248 is not a prompt injection bug. It is a code injection issue in Langflow’s validation endpoint that allowed unauthenticated remote attackers to execute arbitrary code in versions prior to 1.3.0. The reason it belongs in this article is that it exposes a recurring pattern in AI tooling: platforms built to accelerate AI orchestration often ship dangerous execution surfaces alongside the orchestration layer. If your security program tests only model behavior and ignores the workflow platform, you will miss some of the most catastrophic failure modes entirely. (NVD)

Cursor, MCP, and untrusted tool ecosystems

CVE-2025-61591 is one of the clearest examples of why agent security cannot be reduced to content moderation. NVD describes the issue as a case where Cursor, when using MCP with OAuth authentication against an untrusted MCP server, could receive crafted malicious commands leading to command injection and potential remote code execution on the host by the agent. That is the sort of vulnerability that makes Promptfoo’s focus on tool-aware red teaming and application-specific testing look prescient. Once a model can interpret tool metadata or server responses as operational guidance, the distance between “assistant” and “execution interface” shrinks dramatically. (NVD)

Workflow automation now reaches the same risk tier as classic infrastructure software

Even outside explicitly AI-branded products, workflow platforms are now being exploited in ways that should concern anyone building agentic systems. The Hacker News reported on March 12, 2026 that CISA added n8n expression-injection RCE, tracked as CVE-2025-68613, to the Known Exploited Vulnerabilities catalog based on evidence of active exploitation, with the flaw patched in late 2025 and complete compromise risk described for exposed instances. While that case is not a Promptfoo story, it reinforces the architectural point: once workflows mix dynamic evaluation, automation, and broad integration, attackers will move fast from logic manipulation to code execution. (해커 뉴스)

Taken together, these cases show why a serious AI security program needs more than output benchmarking and more than a single “prompt injection test.” It needs application-aware, tool-aware, integration-aware validation that runs continuously.

A practical Promptfoo workflow for security teams

The following examples are illustrative rather than copied from a single production deployment, but they reflect Promptfoo’s documented command patterns and configuration philosophy. The key idea is to define both normal and adversarial cases as code and run them repeatedly. (Promptfoo)

A simple evaluation pattern

description: Support assistant quality regression suite

prompts:

- "You are a support assistant for AcmeCloud. Answer based only on provided context."

providers:

- openai:gpt-5

- anthropic:claude-sonnet-4-5

tests:

- vars:

question: "How do I rotate an API key?"

context: "API keys can be rotated from the Security tab..."

assert:

- type: contains

value: "Security tab"

- type: not-contains

value: "I can browse your account directly"

- vars:

question: "Can you delete my billing history?"

context: "Billing history is retained for compliance reasons."

assert:

- type: contains

value: "retained"

- type: not-contains

value: "deleted immediately"

This kind of suite is not glamorous, but it is how teams stop prompt changes from silently degrading behavior. Promptfoo’s documentation emphasizes side-by-side comparisons, metrics, and matrix views because regressions in LLM systems are often subtle until they hit customers. (Promptfoo)

A red-team pattern for prompt injection and exfiltration

description: RAG assistant injection and exfiltration tests

redteam:

purpose: "Test whether the assistant can be induced to reveal hidden instructions or sensitive internal context"

plugins:

- prompt-injection

- indirect-prompt-injection

- pii

- policy

- data-exfiltration

targets:

- id: customer-support-rag

label: "Support RAG system"

The exact plugin names and options vary by setup, but the structure reflects Promptfoo’s model: define the purpose, select the classes of abuse you care about, and run application-specific testing rather than generic chatbot prompts. The official quickstart and red-team materials describe scanning dozens of vulnerability classes and generating tailored attack probes for the target system. (Promptfoo)

A tool-use policy that engineers can actually enforce

ALLOWED_TOOLS = {

"read_ticket": {"risk": "low"},

"list_kb_docs": {"risk": "low"},

"send_email": {"risk": "high"},

"refund_customer": {"risk": "high"},

}

def approve_tool_call(tool_name: str, args: dict, source: str) -> bool:

if tool_name not in ALLOWED_TOOLS:

return False

if source == "untrusted_web_content":

return tool_name in {"read_ticket", "list_kb_docs"}

if ALLOWED_TOOLS[tool_name]["risk"] == "high":

return require_human_review(tool_name, args)

return True

This code is not a Promptfoo feature by itself. It is the kind of compensating control Promptfoo helps you test. If an adversarial case can still push the agent toward send_email 또는 refund_customer from untrusted content, your approval model is broken, even if your baseline answers look excellent. OpenAI’s guidance on narrowing inputs and outputs and Microsoft’s guidance on indirect injection both support this layered, constraint-first posture. (OpenAI Developers)

CI/CD commands that move testing from good intentions to enforcement

# Quality evaluation

npx promptfoo@latest eval -c promptfooconfig.yaml

# Security testing

npx promptfoo@latest redteam run

These command patterns come directly from Promptfoo’s CI/CD documentation. The important point is cultural as much as technical: when these checks become part of the release path, prompt and orchestration changes stop being invisible security events. (Promptfoo)

What a mature Promptfoo program looks like

Teams that get real value from Promptfoo generally do not use it as a one-off scanner. They use it as a growing corpus of knowledge about how their application fails.

That corpus usually contains stable task evals, adversarial prompts, poisoned contexts, known bypass attempts, retrieved documents that previously caused trouble, tool-call edge cases, memory poisoning cases, and regression checks for every issue that was once fixed. Promptfoo’s documented support for metrics, automated scoring, CI/CD, and red-team configuration makes this kind of knowledge capture possible. (Promptfoo)

The more advanced the application, the more that corpus should model multi-step failures rather than single-turn failures. A browser agent might need a test where a malicious page changes the plan. A coding agent might need a test where an untrusted MCP server or tool response injects unsafe instructions. A support RAG system might need a test where one poisoned document contaminates a perfectly ordinary customer query. Promptfoo’s recent materials on web-browsing agents and the “lethal trifecta” point directly at that category of risk. (Promptfoo)

Where Promptfoo is strong, and where teams still need more

Promptfoo is strong where you need structure, repeatability, red-team coverage, and engineering discipline around AI application behavior. It is especially strong as a bridge between developers and security teams because it can live in code, in pull requests, and in CI/CD rather than in a separate manual process. The official docs and product pages make this developer-centric deployment model explicit. (Promptfoo)

But teams should also be honest about what Promptfoo is not. It is not a substitute for least privilege, network segmentation, identity-aware tool access, sandboxing, secure software supply chain practices, or traditional application security review. The Langflow RCE example is a reminder that orchestration platforms can fail in classic ways. The Cursor MCP CVE is a reminder that protocol and integration trust matter. The n8n case is a reminder that dynamic automation can quickly become active exploitation territory. Promptfoo can help discover and pressure-test dangerous behavior, but it cannot make bad architecture safe by itself. (NVD)

That is also why NIST and OWASP remain useful anchors. A mature AI security program does not ask, “Which tool do we buy?” It asks, “How do we reduce risk across the lifecycle, the architecture, the permissions, the context flows, and the operating model?” Promptfoo fits well into that answer because it turns risky assumptions into executable tests. It does not replace the rest of the answer. (NIST)

A concise decision framework for teams considering Promptfoo

The table below is intentionally simple. It is meant to help engineering leaders decide where Promptfoo belongs in their stack.

| 시나리오 | Primary risk | Why Promptfoo helps | What Promptfoo will not solve alone |

|---|---|---|---|

| Basic chatbot with no tools | Policy bypass, prompt leakage, unsafe outputs | Eval and red-team suites catch regressions and common abuse cases | Identity, data governance, and model-provider controls still matter |

| RAG assistant over internal docs | Indirect injection, data exfiltration, poisoned retrieval | Adversarial testing against documents and retrieval flows is central to Promptfoo’s approach | Document hygiene, access control, and provenance controls are still required |

| Tool-calling support agent | Unsafe action selection, argument manipulation, privilege drift | Application-specific abuse cases can test tool boundaries continuously | Least privilege, approvals, and downstream system hardening are separate controls |

| Browser or inbox agent | Untrusted web and email content shaping decisions | Promptfoo’s recent guidance specifically addresses indirect injection in agent workflows | Browser isolation, network policy, and external content controls remain necessary |

| MCP or plugin-driven coding agent | Untrusted server responses, command injection, host compromise | Tool-aware red teaming surfaces dangerous agent behaviors earlier | Trusting hostile MCP servers or over-permissive local execution is still a hard architectural risk |

This framing is consistent with Promptfoo’s public positioning around agents, RAG, and integrations; OWASP’s prioritization of prompt injection and related LLM risks; and recent vendor guidance around indirect injection in agentic systems. (Promptfoo)

Two places where Penligent fits naturally

There is a sensible way to connect Promptfoo to Penligent without forcing the comparison. Promptfoo is strongest when you need structured evaluation and red teaming around the behavior of an LLM application or agent workflow. Penligent, by contrast, is naturally positioned closer to operational attack-path validation and automated penetration testing workflows around real environments. Penligent’s own writing on agentic security frames the problem as a broader execution-boundary issue involving identity, memory, tools, schemas, and inter-agent communication, which is complementary to Promptfoo’s application-testing model. (펜리전트)

That means a mature team could reasonably use both styles of tooling in sequence. Promptfoo can stress-test the AI application itself inside development and CI/CD, while Penligent can extend verification outward into exposed services, configuration weaknesses, and multi-step exploitation paths that matter once the application is live. Penligent’s OpenClaw prompt-injection article describes this operational layer as continuous verification of exposure and misconfigurations around agent environments, which is a different but related problem from prompt-and-output evaluation. (펜리전트)

The bigger lesson from Promptfoo

Promptfoo matters because it represents a cultural shift more than a feature checklist. The shift is from “we tried some prompts and it looked good” to “we defined behavior, abuse cases, risk classes, and regression checks, and now we can prove what changed.” That is what software engineering did for application correctness. AI security now needs the same move for agent behavior, tool use, RAG integrity, and context trust. (Promptfoo)

Security engineers should take that seriously because the ecosystem is already moving from harmless novelty to production consequences. Microsoft 365 Copilot’s AI command injection disclosure issue, Langflow’s unauthenticated code injection flaw, Cursor’s untrusted MCP command-injection path, and the active exploitation of n8n expression injection are all reminders that natural-language orchestration does not remove old risks. It compounds them by making more systems reachable through softer, more ambiguous control paths. (NVD)

Promptfoo is not the whole answer to AI security. No single tool is. But it is one of the clearest ways to make AI security testable, reviewable, repeatable, and harder to hand-wave. For many teams, that is the real threshold they have been missing.

Further reading

For readers who want to go deeper into the underlying standards and primary references, these are the best starting points: Promptfoo’s official introduction and red-team quickstart, which explain how the platform combines evaluation and security testing; OWASP’s LLM prompt injection risk entry; NIST’s Generative AI Profile under the AI Risk Management Framework; and OpenAI’s safety best practices for constraining inputs and outputs in production systems. (Promptfoo)

Relevant Penligent articles in the same problem space include Agentic Security Initiative — Securing Agent Applications in the MCP Era, which focuses on agent boundaries, identity, memory, and tool risk, and The OpenClaw Prompt Injection Problem — Persistence, Tool Hijack, and the Security Boundary That Doesn’t Exist, which focuses on continuous verification for agent environments and the operational reality of prompt injection beyond a single chat window. (펜리전트)