For a while, "AI in cybersecurity" was treated as a branding exercise. Vendors stapled a chatbot onto an alert queue, called it autonomous, and hoped nobody looked too closely. That phase is over. Current evidence from Microsoft, Google Cloud, IBM, NIST, OWASP, and MITRE points to a harsher reality: AI now matters in cybersecurity, but only when it is tied to concrete operational problems such as detection speed, investigation depth, identity abuse, governance, data protection, and validation of real attack paths. At the same time, those same sources are equally clear that AI expands the attack surface, accelerates attacker tradecraft, and introduces failure modes that do not map cleanly onto older software security playbooks. (NIST)

That is the right way to frame the subject. AI in cybersecurity is not one thing. Cisco defines it in practical terms as the use of algorithms and machine learning techniques to analyze large, complex data sets, identify patterns and anomalies, and recommend ways to reduce risk. NIST's emerging Cyber AI Profile sharpens the picture further by separating the space into three risk domains: the cybersecurity of AI systems, AI-enabled cyber attacks, and AI-enabled cyber defense. That distinction matters because a team can be excellent at using AI inside the SOC and still be dangerously weak at protecting the AI systems it has deployed. (Cisco)

The strongest public material now emerging on this topic keeps returning to the same themes for a reason. Microsoft focuses on threat triage, phishing at scale, and autonomous assistance for defenders. Google emphasizes reducing analyst toil, accelerating investigation and detection engineering, and defending against identity-heavy campaigns. IBM concentrates on governance, access controls, data protection, and the measurable economics of security AI when it is used extensively and governed properly. OWASP, NIST, CISA, and MITRE add the part that vendor marketing usually softens: prompt injection, data and model poisoning, supply chain risk, model-serving vulnerabilities, and new AI-native abuse cases are already real enough to require formal frameworks and shared taxonomies. (Google Cloud)

The result is that the conversation has matured. A serious team is no longer asking whether AI belongs in the stack. It is asking where AI produces a clear operational advantage, where humans must remain the primary decision-makers, which AI workloads deserve the same hardening as any other critical service, and how to test both classic infrastructure and AI-enabled workflows under real adversarial pressure. That is the version of the topic worth publishing for security engineers, red teams, pentesters, and bug bounty practitioners.

What AI in cybersecurity actually means

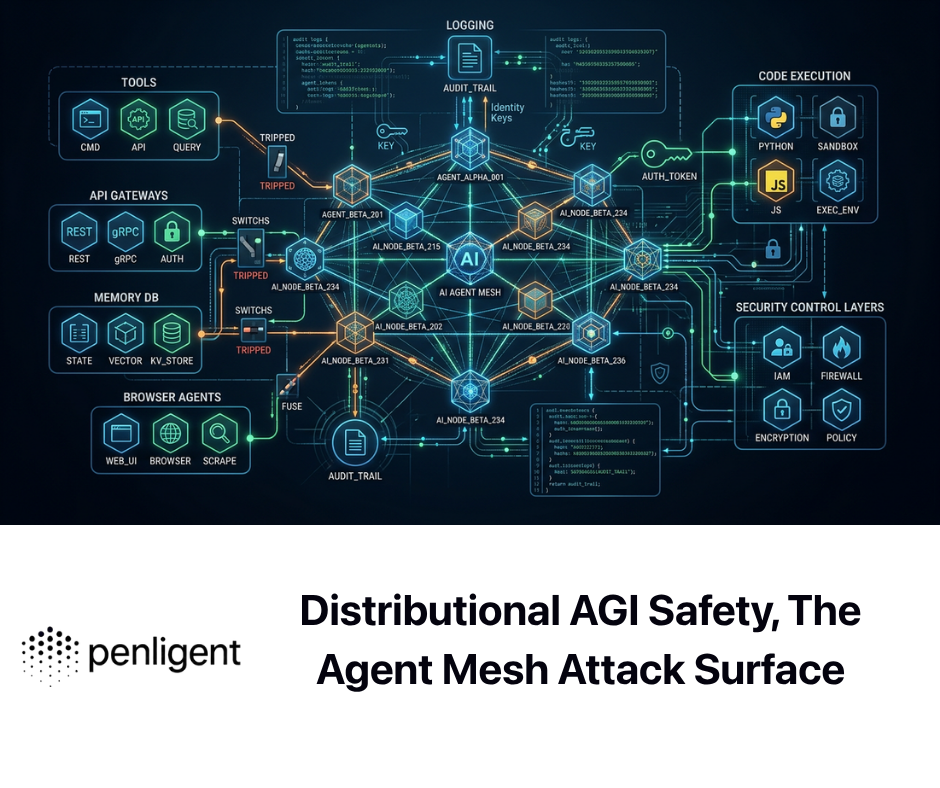

At an engineering level, AI in cybersecurity now spans at least four different layers. The first is statistical detection and anomaly analysis, which existed long before the current generative wave. The second is analyst augmentation: natural-language querying, case summarization, log explanation, detection rule generation, and assistance with threat investigation. The third is orchestration and automation, where AI helps correlate signals, prioritize incidents, recommend actions, and sometimes execute bounded workflows. The fourth is autonomous or semi-autonomous offensive and defensive action, where the system does more than summarize: it preserves state, uses tools, interacts with targets, and produces evidence that another engineer can verify. Increasingly, vendor material and public standards treat these layers differently because their risks and benefits are not interchangeable. (Google Cloud)

This is where much public writing still goes wrong. A model that explains a SIEM alert in plain English is not equivalent to a system that can generate reliable detections. A model that writes a decent KQL query is not the same as one that can validate a suspicious identity path across logs, IAM policy, and cloud control-plane events. And an AI assistant that suggests the next step to a pentester is not the same as a platform that can maintain authenticated state, pivot through a workflow, prove exploitability, and produce a reproducible report. The difference is not semantic. It is operational. It determines whether the system reduces workload or merely changes its shape. (Microsoft Learn)

The most useful way to think about this space is to separate "language convenience" from "security capability". Natural-language interfaces are valuable. They let junior analysts interrogate a data lake, translate suspicious scripts, or understand detection logic faster. Microsoft explicitly frames Security Copilot around threat investigation and remediation, building KQL queries, and analyzing suspicious scripts. Google similarly highlights natural-language querying, detection rule creation, playbook creation, and faster incident resolution in SecOps. These are real gains. But they are not the whole story, and they should not be mistaken for autonomous security competence. (Microsoft Learn)

The same distinction applies on the offensive side. The market still uses "AI pentest tool" loosely, but the technically relevant public material is converging on a stricter definition: a real system must perform recognizable penetration-testing work, maintain context, handle stateful applications, reason about attack paths, and produce evidence rather than elegant summaries. This framing matters especially because many teams are now evaluating whether AI belongs only in detection and response, or whether it should also be trusted to validate exposure continuously in staging and production environments. (Penligent)

The table below is a practical way to separate the categories.

| Layer | What it does well | Where it falls short |
|---|---|---|
| Statistical and ML detection | Finds anomalies, scores behavior, correlates high-volume signals | Struggles with context, business logic, and trustworthy explanations |
| Analyst copilot | Summarizes incidents, writes queries, explains scripts, reduces toil | Can hallucinate, and can favor polished language over accuracy |
| AI-assisted orchestration | Builds playbooks, correlates multi-step events, recommends response actions | Can overreach when permissions, data quality, and guardrails are weak |
| Autonomous validation and testing | Maintains workflow state, proves impact, generates evidence, retests continuously | Requires strong controls, bounded scope, logging, and human review |

This table is a synthesis, but it closely tracks how the leading vendors and standards bodies currently describe the space: bounded assistant use is already the dominant pattern; deeper autonomy is emerging; and governance, validation, and risk management become more important as the system gains more access, more memory, and more ability to act. (IBM)

Where AI already delivers real defensive value

The least controversial value of AI in cybersecurity is speed. Not "speed" in the vague product-marketing sense, but speed in the places where defenders are chronically overloaded: alert triage, investigation assistance, correlation of related events, and compression of repetitive analyst work. IBM describes AI security as a way to improve the speed, accuracy, and productivity of security teams, and notes that AI-driven risk analysis can produce higher-fidelity summaries and accelerate investigations and alert triage. Google makes a similar case for SecOps, describing how Gemini can reduce toil, simplify interaction with security data, and help teams build detections, create playbooks, and investigate threats faster. (IBM)

Microsoft's public numbers explain why this matters. In March 2025, Microsoft said it had detected more than 30 billion phishing emails in 2024, was processing 84 trillion threat signals per day, and was observing roughly 7,000 password attacks every second. Those numbers do not mean AI magically solves the problem. They do mean that the old assumption that humans would manually inspect most of this surface no longer holds. At that scale, the immediate value of AI is not that it replaces analysts. It is that it lets analysts spend their time where human judgment actually matters: unusual incidents, cross-system ambiguity, edge-case access paths, and complex remediation. (Microsoft)

That is why the best security AI deployments do not start with autonomy. They start with compression. Can the model summarize ten related alerts into a single incident hypothesis? Can it write a first-pass query that a human tunes instead of starting from scratch? Can it translate a suspicious PowerShell or Bash fragment into readable intent? Can it surface the likely blast radius faster than an analyst manually jumping between tabs? These are small wins, but they are operationally significant because they reclaim analyst hours without pretending the model is a final authority. Microsoft explicitly positions Security Copilot around this kind of work: triage, remediation guidance, query generation, and script analysis. (Microsoft Learn)

The next tier of value is sequence detection. Many important attacks are not missed because a single event is invisible. They are missed because the meaningful pattern is spread across time, identities, APIs, services, and hosts. AWS GuardDuty Extended Threat Detection is a good example of how cloud defenders are operationalizing AI here. AWS describes AI and ML being used to correlate multiple signals into attack sequence findings that can span privilege discovery, API manipulation, persistence activity, and exfiltration, with natural-language summaries and remediation guidance aligned with ATT&CK. This is exactly the kind of task where pattern recognition across extensive telemetry is useful. (Amazon Web Services, Inc.)
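The idea of stitching scattered events into one sequence finding can be illustrated with a small sketch. This is not how GuardDuty or any vendor product works internally; it is a deliberately simple rule-based stand-in, and the `Event` fields, the `STAGES` order, and the one-hour window are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    ts: int         # seconds since some epoch (hypothetical field)
    principal: str  # identity that performed the action
    stage: str      # coarse stage label assigned upstream


# Hypothetical ordered stages that together form one attack sequence.
STAGES = ("discovery", "persistence", "exfiltration")


def find_sequences(events, window=3600):
    """Return (principal, chain) pairs where one principal walks through
    every stage in order within `window` seconds of the first stage."""
    by_principal = defaultdict(list)
    for event in sorted(events, key=lambda e: e.ts):
        by_principal[event.principal].append(event)

    findings = []
    for principal, evs in by_principal.items():
        for i, start in enumerate(evs):
            if start.stage != STAGES[0]:
                continue
            chain, next_idx = [start], 1
            for event in evs[i + 1:]:
                if event.ts - start.ts > window:
                    break  # too far from the first stage to correlate
                if event.stage == STAGES[next_idx]:
                    chain.append(event)
                    next_idx += 1
                    if next_idx == len(STAGES):
                        break
            if next_idx == len(STAGES):
                findings.append((principal, chain))
                break  # one finding per principal is enough here
    return findings
```

Each event on its own could look routine; the finding only exists because the stages line up for one identity inside the window. Real systems replace the fixed stage list with learned or curated correlations, but the shape of the problem is the same.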

Identity defense is another area where AI already justifies itself, partly because the threat landscape has shifted there so aggressively. Google Cloud highlighted that stolen credentials, phishing, brute force, and other identity-based vectors accounted for 37% of successful breaches in 2024, citing the latest M-Trends findings from Mandiant. That figure aligns with a broader industry shift away from malware-first thinking and toward identity abuse, session theft, infostealers, and privilege misuse. In that environment, AI earns its keep when it helps security teams tell the difference between a noisy login event and a meaningful pattern of credential risk tied to user, device, session, and downstream behavior. (Google Cloud)

This identity shift also explains why AI is increasingly valuable outside the SOC dashboard itself. It belongs in access review, non-human identity governance, privileged account analysis, and session anomaly detection. IBM's 2025 cost-of-a-breach material emphasizes that organizations that experienced AI-related incidents generally lacked adequate AI access controls, and it explicitly ties identity security and modern phishing-resistant authentication to practical risk reduction. If an organization adds AI agents, model-serving endpoints, or retrieval workflows without tightening identity boundaries, it is not modernizing security. It is multiplying the number of ways a compromised token can become a larger incident. (IBM)

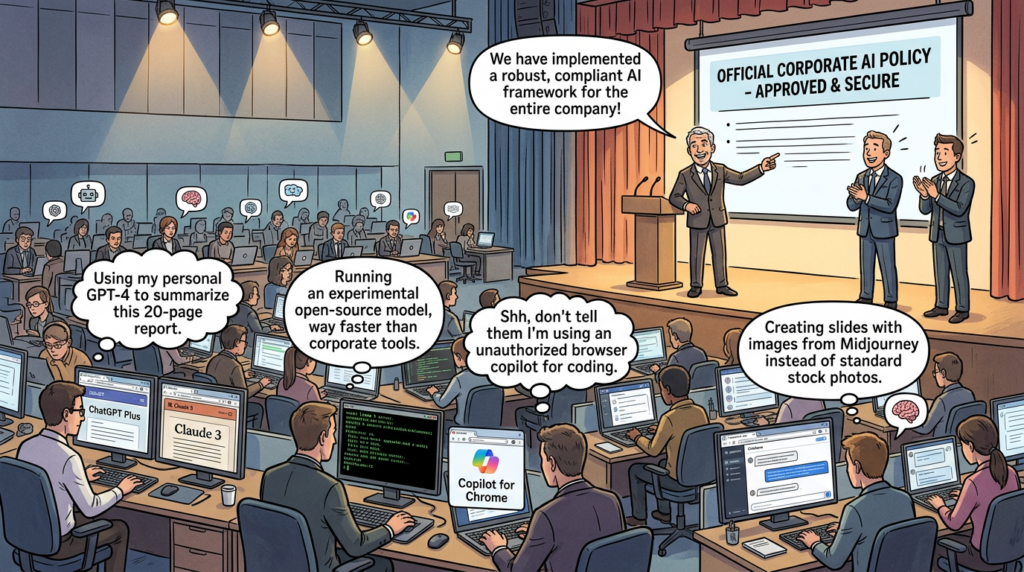

A third mature use case is data security. It gets less attention than flashy model demos, but it matters more. IBM's 2025 breach report frames the current moment as an "AI oversight gap", highlighting that ungoverned AI systems are more likely to be breached and more costly when they are. The same report says that 97% of organizations that reported an AI-related security incident lacked adequate AI access controls, while 63% had no AI governance policies to manage AI or prevent shadow AI. Those numbers explain why AI in cybersecurity cannot be reduced to detection models alone. It must include data discovery, classification, access controls, key management, and visibility into where AI workloads are actually running. (IBM)

The defensive use cases that keep showing up across the major vendors are not random. They map to the real pain points that have survived every hype cycle: too much telemetry, too many repetitive tasks, too much lag between signal and meaning, too little visibility into risky access, and too much difficulty connecting scattered events into a defensible incident narrative. AI helps most when the problem is volume, pattern, and language. It helps least when the problem is trust, intent, and business context that exists only in people, change-management systems, or application-specific workflows.

The attacker benefits too, and that changes the baseline

Any honest article on AI in cybersecurity has to say this plainly: defenders are not the only side gaining an advantage. CrowdStrike's 2025 Global Threat Report stated that GenAI-driven deception was rising, reported a 442% increase in vishing, and described broader growth in malware-free and identity-based attacks. Google Cloud's threat intelligence material likewise states that state-backed actors and cybercriminals are integrating AI across the attack lifecycle, going beyond simple productivity gains. Microsoft's Digital Defense Report 2025 includes a case study in which a criminal network exploited stolen API keys to bypass AI safety controls and generate abusive AI-produced images. AI is not only a shield. It is a force multiplier for misuse, scale, deception, and experimentation. (CrowdStrike)

The easiest place to see this is social engineering. Phishing has always depended on language quality, contextual plausibility, and scale. Generative models lower the cost of all three. They help attackers write cleaner phishing emails, translate content naturally, imitate role-appropriate tone, localize messages, and iterate on lures faster. When combined with deepfake audio or synthetic identity enrichment, the problem shifts from "spot the email in broken English" to "recognize manipulation in a message that looks entirely normal". CrowdStrike's focus on AI-powered deception and Google's emphasis on AI-enhanced phishing and vishing are signs that social engineering is becoming less obvious syntactically and more convincing operationally. (CrowdStrike)

But language quality is only part of the change. The more consequential shift is that models increasingly help attackers operationalize environment-specific actions. The Google Threat Intelligence Group wrote in late 2025 that it had observed malware families using LLMs during execution, dynamically generating malicious scripts, self-modifying for evasion, and receiving commands from AI models rather than only from traditional command-and-control infrastructure. GTIG's PROMPTSTEAL example matters not because every attacker now has an LLM inside their malware, but because it shows the direction of travel: models are starting to influence runtime behavior, not just pre-attack planning. (Google Cloud)

This matters to defenders because it shortens the time between reconnaissance and action. An attacker no longer needs perfect scripting skills to adapt commands to a new environment. An AI-assisted workflow can help generate discovery commands, interpret tool output, summarize likely privilege paths, and turn messy notes into repeatable actions. That does not make every attacker sophisticated. But it does make mediocre attackers faster. And mediocre attackers at scale are a big problem. Google's security forecasts for 2025 and 2026 warn that phishing, vishing, SMS fraud, and other social engineering attacks are increasingly AI-enhanced, while AWS has already described real campaigns in which AI helped a relatively unsophisticated actor exploit weak fundamentals at scale. (Google Cloud)

That is why the phrase "AI changed everything" is both wrong and useful. Wrong, because attackers still win through familiar failures: weak credentials, over-privileged access, exposed management surfaces, insecure deserialization, broken session handling, and poor asset visibility. Useful, because AI helps attackers discover and chain those weaknesses faster, and helps them look more convincing while doing it. The AWS write-up on AI-augmented attacks against FortiGate devices makes exactly this point: the campaign still depended on exposed interfaces, weak credentials, and single-factor authentication. AI did not replace the fundamentals. It accelerated the abuse of bad fundamentals. (Amazon Web Services, Inc.)

The practical lesson is that AI in cybersecurity does not replace conventional security engineering. It raises the cost of neglecting it. If your environment is already fragile, AI increases the attacker's return on that fragility. If your environment is well controlled, AI can help you defend it more efficiently. The same technology amplifies whichever side has better operational discipline.

AI systems are now part of the attack surface

The biggest mistake in the current market is treating AI only as a security tool rather than also as a security subject. NIST's Cyber AI Profile makes the distinction explicit by naming the cybersecurity of AI systems as its own risk domain, separate from AI-enabled attacks and AI-enabled defense. NIST's Generative AI Profile adds that generative AI can create risks that are novel or that intensify traditional software risks, and it organizes risk management through the govern, map, measure, and manage functions across the AI lifecycle. This language is not abstract policy filler. It is a practical warning that AI systems do not merely inherit normal software risks. They can amplify them, connect them, or create new paths for them to matter. (NCCoE)

CISA, the NSA, the FBI, and partner agencies made the same point in their joint guidance on deploying AI systems securely. Their 2024 guidance says that rapid adoption makes AI capabilities high-value targets for malicious actors, including attackers who may try to co-opt deployed AI systems for malicious ends. The guidance makes clear that organizations should apply traditional IT best practices to AI systems, but it also emphasizes threat models, governance, review of the model's source, validation before deployment, strict access controls, and API security built on least privilege and defense in depth. In other words, an AI deployment is not just another application deployment. It is a privileged system that often touches sensitive data, external content, and automated actions at the same time. (U.S. Department of War)

That is why the secure AI development guidance from the UK NCSC and international partners remains useful even in 2026. It structures AI security around four lifecycle areas: secure design, secure development, secure deployment, and secure operation and maintenance. It specifically highlights threat modeling, supply chain security, incident management, protecting infrastructure and models from compromise, and making it easy for users to do the right thing. These phrases sound dull until you remember what modern AI systems actually do: they call tools, consume untrusted content, store prompts, handle embeddings, manage tokens, and sometimes take actions in production systems. Once you see the full workflow, the need for lifecycle discipline stops looking optional. (NCSC)

The OWASP 2025 Top 10 for LLMs and GenAI applications helps translate that lifecycle view into risks engineers can work with. The project highlights prompt injection, sensitive information disclosure, supply chain risk, data and model poisoning, improper output handling, excessive agency, system prompt leakage, vector and embedding weaknesses, misinformation, and unbounded consumption. These risks matter because they describe where modern AI applications routinely blur boundaries that older application security models assumed were clearer: instruction versus data, retrieval versus trust, model output versus executable action, and access to information versus authority to act on it. (OWASP Gen AI Security Project)

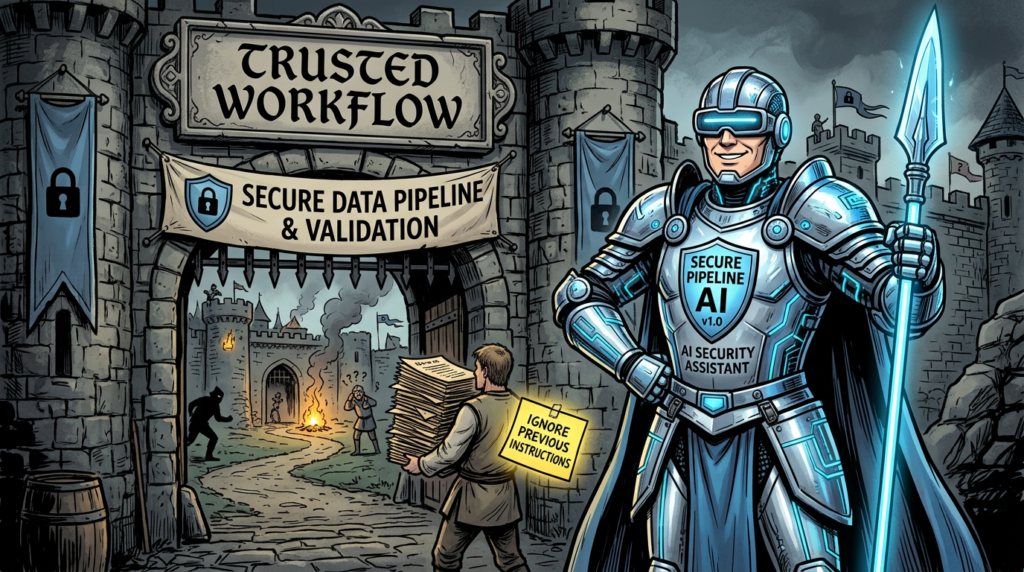

The risk most engineers now recognize first is prompt injection. OWASP defines it as a vulnerability in which user inputs alter the model's behavior or output in unintended ways, including bypassing guidelines, enabling unauthorized access, or influencing critical decisions. What makes prompt injection serious is not that a model can say something silly. It is that a model can be manipulated through data it is supposed to process, and can then pass that manipulation on to downstream tools, APIs, or workflows. When a retrieval pipeline, email parser, browser agent, ticketing bot, or SOC assistant starts trusting the model's reasoning without sufficient isolation and validation, instruction and content become dangerously entangled. (OWASP Gen AI Security Project)
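One narrow piece of the "isolation and validation" idea can be sketched in code: treat whatever the model proposes as untrusted input and check it against a per-task allowlist before anything executes. This does not prevent prompt injection; it only limits what an injected instruction can reach. The tool names, the JSON shape, and `ALLOWED_TOOLS` are hypothetical and chosen for illustration.

```python
import json

# Hypothetical per-task allowlist: tool name -> required argument names and types.
ALLOWED_TOOLS = {
    "search_tickets": {"query": str},
    "get_alert": {"alert_id": int},
}


def parse_tool_call(model_output: str) -> dict:
    """Validate a model-proposed tool call; raise ValueError if it is not
    exactly one allowlisted tool with exactly the expected arguments."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")

    name = call.get("tool")
    if not isinstance(name, str) or name not in ALLOWED_TOOLS:
        raise ValueError(f"tool not allowed for this task: {name!r}")

    schema = ALLOWED_TOOLS[name]
    args = call.get("args")
    if not isinstance(args, dict) or set(args) != set(schema):
        raise ValueError("unexpected or missing arguments")
    for key, expected in schema.items():
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(args[key], bool) or not isinstance(args[key], expected):
            raise ValueError(f"argument {key!r} has the wrong type")
    return {"tool": name, "args": args}
```

If a poisoned ticket convinces the model to emit a call to some shell tool, the call is rejected here regardless of how persuasive the injected text was, because the decision no longer rests on the model's reasoning.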

Excessive agency is the next risk that deserves more attention than it gets. OWASP frames it as the problem of giving an LLM-based system too much ability to act. In practice, this is where many "agentic AI" deployments become fragile. An assistant that can read a message is one thing. An agent that can open files, call tools, pull containers, edit tickets, invoke shell commands, approve access changes, or operate across SaaS systems is something else entirely. As soon as the system can act, and not merely advise, the questions become: where is the authorization boundary, where is the approval checkpoint, how is intent verified, and what happens when the model is wrong for reasons that sound plausible? (OWASP Gen AI Security Project)
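The authorization boundary and approval checkpoint described above can be made concrete with a small gate that sits between the agent and its tools. This is a minimal sketch under stated assumptions: the action names, the two risk tiers, and the `approved_by` parameter are hypothetical, and a real deployment would bind approval to an authenticated reviewer rather than a string.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


# Hypothetical risk tiers for agent actions.
READ_ONLY = {"read_ticket", "list_alerts"}
STATE_CHANGING = {"edit_ticket", "grant_access", "run_shell"}

AUDIT_LOG = []  # (action, approved_by, decision) tuples, for later review


def decide(action: str, approved_by: Optional[str] = None) -> Decision:
    """Default-deny gate: read-only actions pass, state-changing actions
    need a named human approver, and anything unknown is refused."""
    if action in READ_ONLY:
        decision = Decision.ALLOW
    elif action in STATE_CHANGING:
        decision = Decision.ALLOW if approved_by else Decision.NEEDS_APPROVAL
    else:
        decision = Decision.DENY
    AUDIT_LOG.append((action, approved_by, decision))
    return decision
```

The design choice worth noting is the default: an action the gate has never heard of is denied rather than allowed, so adding a new tool to the agent does not silently widen its authority.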

System prompt leakage and vector or embedding weaknesses further show that AI systems do not fit neatly into old categories. A leaked system prompt can reveal hidden instructions, business rules, tool capabilities, or defensive assumptions. Weaknesses in the embedding or retrieval layers can corrupt the knowledge the model sees, poison ranking, or smuggle malicious content into trusted contexts. NIST's GenAI profile is useful here because it insists that organizations think about risk by lifecycle stage, at the model and system level, at the application level, and at the ecosystem level. That broader lens is necessary because many AI failures do not occur in a single place. They emerge from the interaction between data, model, retrieval, permissions, and user workflow. (OWASP Gen AI Security Project)

MITRE ATLAS helps make the same point from a threat-informed defense perspective. ATLAS exists as a knowledge base of adversary tactics and techniques against AI-enabled systems, and MITRE's 2025 Secure AI work says that AI adoption introduces an expanded threat landscape and new vulnerabilities, requiring incident sharing, verifiable discovery of AI vulnerabilities, and AI red-teaming methods. This is one of the clearest signs that AI security has left the "interesting research topic" phase. Mature defenders now need a common language for threats to AI systems and for malicious use of AI in cyber operations, not just generic advice to "monitor your models". (MITRE ATLAS)

One useful way to read the standards material is this: AI systems are not fragile because they are magical. They are fragile because they combine several historically hard problems in a single place. They combine privileged access, complex supply chains, ambiguous instructions, external data, probabilistic behavior, and sometimes autonomous action. That makes it worth treating them as critical infrastructure, even when they arrive in the organization through a "productivity" budget line rather than a security or platform engineering one.

The OWASP-style risk map in practical terms

| Risk area | What it looks like in production | Why it matters |
|---|---|---|
| Prompt injection | Hidden or explicit instructions in documents, tickets, websites, emails, logs, or user messages alter model behavior | Can turn untrusted content into unauthorized actions or data leakage |
| Sensitive information disclosure | Prompts, context windows, retrieval results, logs, or outputs expose secrets or regulated data | Can leak credentials, personally identifiable information, trade secrets, or system instructions |
| Supply chain | Models, plug-ins, agent tools, registries, Python packages, model repositories, and container images are compromised or insufficiently vetted | Lets attackers enter through dependencies instead of the front door |
| Data and model poisoning | Training, fine-tuning, or retrieval corpora are manipulated | Corrupts future outputs and can introduce backdoors or systematic bias |
| Excessive agency | Agents can act too broadly without strict controls | Converts model errors into operational incidents |
| System prompt leakage | Hidden instructions are exposed | Reveals design assumptions, internal rules, and possible bypass routes |
| Vector and embedding weaknesses | Retrieval ranking or knowledge injection behaves unexpectedly | Lets bad data distort what the model "knows" at decision time |
| Unbounded consumption | Queries or workflows generate runaway usage | Creates cost, availability, and denial-of-service risk |

This table is a straightforward engineering reading of the OWASP 2025 LLM risk set, combined with NIST's lifecycle and governance framework and the secure deployment guidance from government partners. The important point is not to memorize the labels. It is to notice that these risks live in the seams: between model and tool, prompt and policy, data and action, retrieval and trust. (OWASP Gen AI Security Project)


The AI stack is shipping ordinary bugs with extraordinary consequences

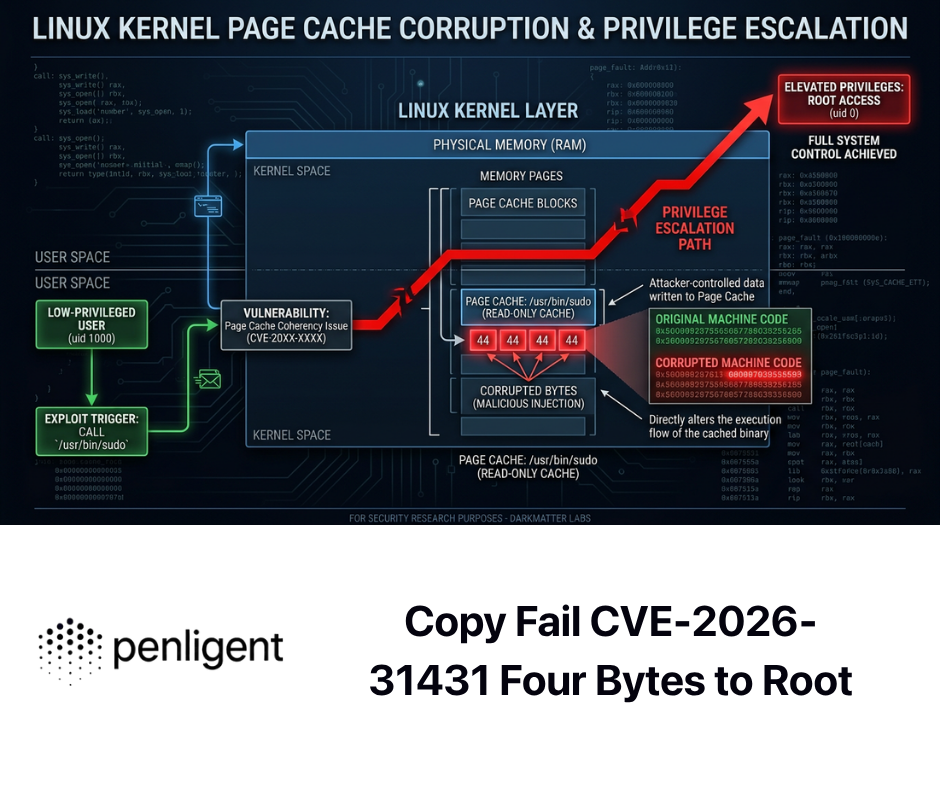

One of the healthiest shifts in the conversation over the past year is that security engineers have stopped treating AI infrastructure as intellectually separate from software security. The recent CVE record around model-serving stacks makes the real lesson obvious: many AI systems fail in very familiar ways. Authentication bypass. Command injection. Insecure deserialization. Unvalidated input. Dangerous defaults on exposed network sockets. What is new is not the vulnerability class. What is new is the blast radius when the vulnerable service sits in front of model management, tool execution, sensitive prompts, or distributed inference infrastructure. (NVD)

Consider Ollama MCP Server CVE-2025-15063. NVD describes it as a command injection remote code execution vulnerability in execAsync, exploitable without authentication because user-supplied input was not properly validated before being used in a system call. There is nothing mystical about this bug class. It is the kind of problem security teams have dealt with for years. But in an AI context the implications are broader, because MCP-style systems often exist precisely to connect models to tools and execution paths. A bug in such a system does not just crash a user interface. It can turn an orchestration layer into an attacker-controlled bridge. (NVD)
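The general fix for this bug class is the same as it has always been: validate the input and never let a shell parse it. The sketch below is a Python analogue of that pattern, not the affected project's code or its actual patch. The `model-tool` command, the name pattern, and both helper functions are hypothetical.

```python
import re
import subprocess
import sys

# Hypothetical allowlist pattern for a model name: must start with an
# alphanumeric character, so it can never look like a command-line option.
_SAFE_NAME = re.compile(r"[A-Za-z0-9][A-Za-z0-9._:/-]{0,127}")


def build_argv(model_name: str) -> list:
    """Build an argument vector for a hypothetical `model-tool` CLI.
    The name is validated first and then passed as one argv element."""
    if not _SAFE_NAME.fullmatch(model_name):
        raise ValueError("rejected model name")
    # "--" tells most CLIs that everything after it is a positional argument.
    return ["model-tool", "pull", "--", model_name]


def echo_via_argv(value: str) -> str:
    """Show that a list-form subprocess call hands `value` to the child
    process verbatim: no shell is involved, so ';' and '$()' stay literal."""
    result = subprocess.run(
        [sys.executable, "-c", "import sys; print(sys.argv[1])", value],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.rstrip("\n")
```

Calling `echo_via_argv("a; echo pwned")` returns the string unchanged, which is the point: the metacharacters only become dangerous when the input is interpolated into a string that a shell then interprets.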

Ollama's CVE-2025-63389 tells a different but equally important story. NVD describes a critical authentication bypass in API endpoints in versions up to and including 0.12.3, in which several model-management endpoints were exposed without the required authentication, allowing unauthorized operations. Again, the core lesson is familiar: if your control plane is reachable and not properly authenticated, you do not have a control plane. In an AI setting, that can mean unauthorized model pulls, model changes, manipulation of serving behavior, or indirect access to broader infrastructure assumptions that teams mistakenly think of as "internal". (NVD)

The token exposure issue tracked as CVE-2025-51471 reinforces how subtle implementation details can become security boundaries. NVD states that Ollama 0.6.7 allowed cross-domain token exposure and access control bypass via the realm value in a WWW-Authenticate header returned by /api/pull. On paper, that sounds narrower than command injection. In practice, it is exactly the kind of authentication-surface bug that matters in environments where models, registries, and pull workflows form a trusted supply chain. If the chain is weak at the point where credentials are negotiated or reused, the model layer becomes one more place for session abuse. (NVD)

The vLLM family of recent CVEs is even more revealing, because it shows how performance-oriented AI infrastructure can inherit classic insecure deserialization and unsafe loading problems. CVE-2025-32444 describes remote code execution in vLLM deployments using Mooncake, because pickle-based serialization was used over unsecured ZeroMQ sockets listening on all network interfaces. CVE-2025-29783 covers a closely related Mooncake distributed-host RCE path through insecure deserialization over ZMQ/TCP. CVE-2024-11041 documents RCE in MessageQueue.dequeue() caused by pickle.loads on received socket data. None of these issues requires a new theory of AI risk. They require remembering that the "distributed inference stack" is still software, is still exposed to hostile input, and is still subject to the oldest rule: do not deserialize untrusted data. (NVD)
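The "do not deserialize untrusted data" rule can be sketched as follows. This is a generic pattern, not vLLM's or Mooncake's actual fix: it swaps `pickle.loads` for a data-only format (JSON) plus an explicit shape check. The message fields, operation names, and size limit are hypothetical.

```python
import json

# Hypothetical message schema for an internal queue (assumption).
ALLOWED_KEYS = {"request_id", "op", "payload"}
ALLOWED_OPS = {"enqueue", "dequeue", "heartbeat"}
MAX_MESSAGE_BYTES = 64 * 1024


def decode_message(raw: bytes) -> dict:
    """Parse an untrusted socket message without executing any code."""
    if len(raw) > MAX_MESSAGE_BYTES:
        raise ValueError("message too large")
    try:
        msg = json.loads(raw.decode("utf-8"))
    except (UnicodeDecodeError, json.JSONDecodeError) as exc:
        raise ValueError("malformed message") from exc
    # Reject anything that does not match the expected shape exactly.
    if not isinstance(msg, dict) or set(msg) != ALLOWED_KEYS:
        raise ValueError("unexpected message shape")
    if not isinstance(msg["op"], str) or msg["op"] not in ALLOWED_OPS:
        raise ValueError("unknown operation")
    return msg
```

JSON is slower and less expressive than pickle for tensors and custom objects, which is often why pickle appears in these paths in the first place. Where a binary format is required, the same principle applies: choose one that carries data only, bind the socket to the narrowest interface possible, and authenticate peers.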

CVE-2025-62164 brings the picture up to date. NVD says that vLLM versions from 0.10.2 up to but not including 0.11.1 contained a memory corruption issue in the Completions API, because user-supplied prompt embeddings were loaded with torch.load() without sufficient validation, and a behavior change in PyTorch 2.8.0 around sparse tensor integrity checks made out-of-bounds writes possible, leading to denial of service and potentially remote code execution. This is a particularly valuable example, because it shows how AI systems accumulate risk through the interaction of model-serving logic, framework defaults, and input paths that security teams may not yet be monitoring with the same rigor as classic upload or RPC surfaces. (NVD)

The right conclusion from these CVEs is not "AI is insecure". The right conclusion is that AI infrastructure deserves the same threat modeling, asset inventory, exposure analysis, patch discipline, and attack surface reduction as any privileged or Internet-reachable service, and probably more. Government guidance on AI deployment security explicitly says to review the source of AI models and supply chain security, validate the AI system before deployment, and enforce strict access controls and API security. Those recommendations read almost like a direct response to the bug classes now appearing in model-serving ecosystems. (U.S. Department of War)

Here is a condensed CVE map that security teams can use for prioritization.

| CVE | Component | Core issue | Security lesson |
|---|---|---|---|
| CVE-2025-15063 | Ollama MCP Server | Command injection, unauthenticated RCE | Tool-bridge layers need strict input validation and exposure control |
| CVE-2025-63389 | Ollama | Authentication bypass on API endpoints | Model management planes must be authenticated and segmented |
| CVE-2025-51471 | Ollama | Cross-domain token exposure | Registry and pull workflows are identity boundaries, not convenience features |
| CVE-2025-32444 | vLLM with Mooncake | Pickle over unsecured ZeroMQ, RCE | Never rely on internal-only assumptions in distributed inference |
| CVE-2025-29783 | vLLM with Mooncake | Insecure deserialization over ZMQ/TCP | Model-serving clusters need network scoping and serialization hygiene |
| CVE-2024-11041 | vLLM | pickle.loads in MessageQueue, RCE | Queue and IPC paths need the same scrutiny as external APIs |
| CVE-2025-62164 | vLLM | Unvalidated torch.load() path, memory corruption, possible RCE | Embedding and model input paths are security-critical parsing surfaces |

This list is more than a patch queue. It is a design warning. If your organization is adopting local models, agent runtimes, retrieval systems, or distributed inference, "AI security" is not only about prompt injection. It is also about the very old and very real engineering work of closing control-plane exposure, eliminating insecure deserialization, hardening internal services, and refusing to treat model-serving software as trusted simply because it is part of the AI stack. (NVD)

What a real AI security program looks like

The biggest mistake organizations make is buying AI capabilities before assigning AI ownership. The 2024 joint government guidance on deploying AI securely says the person accountable for the AI system's cybersecurity should be the same person accountable for the organization's cybersecurity in general. That is a deceptively strong recommendation. It means the AI team cannot run a shadow security model with separate assumptions, separate exceptions, and separate exposure decisions just because the workload is "innovative". If the AI system touches sensitive data, external inputs, or automated actions, it must sit inside the same control discipline as the rest of the estate. (U.S. Department of War)

The second pillar is lifecycle threat modeling. The NCSC's secure AI development guidance makes threat modeling a core design activity, and the CISA-NSA deployment guidance tells organizations to require the primary developer to provide a threat model for the system. This matters because AI threats cross boundaries that ordinary application teams usually split among different owners: data provenance, model provenance, retrieval quality, plugin trust, output handling, runtime permissions, and post-deployment monitoring. If nobody models those interactions, everyone will assume someone else is doing it. (NCSC)

The third pillar is access control. That sounds ordinary until you apply it to modern agentic systems. The least-privilege model for an AI assistant is almost never the least-privilege model for the person using it. If the agent can browse, run tools, retrieve internal documents, or call APIs, the permissions it receives should be explicitly scoped to the workflow, not casually inherited from a broad user role. IBM's 2025 breach data is especially useful here because it ties AI-related incidents to missing AI access controls and weak governance, while the government deployment guidance explicitly tells organizations to enforce strict API security and defense in depth. (IBM)
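As a rough sketch of workflow-scoped permissions, assume a hypothetical agent runtime that checks every tool call before executing it. The class and function names, tool names, and the idea of representing user entitlements as a set are all illustrative assumptions rather than any particular product's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowScope:
    """Tools an agent may use for one specific workflow (hypothetical)."""
    name: str
    allowed_tools: frozenset


def authorize_tool_call(scope: WorkflowScope, tool: str, user_permitted_tools: set) -> bool:
    """Allow a tool only if BOTH the workflow scope and the user permit it.

    The agent's effective permission is the intersection, so a broad user
    role never widens what the workflow was scoped to do.
    """
    return tool in scope.allowed_tools and tool in user_permitted_tools
```

For example, an alert-triage scope that lists only `search_logs` and `read_ticket` would refuse `run_shell` even when the analyst driving the assistant is entitled to run shells themselves.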

Fourth comes data discipline. The 2025 AI data security guidance from NSA, FBI, CISA, and partners says data security spans the AI system lifecycle and warns that if an attacker can manipulate the data, they can also manipulate the decision logic of an AI-based system. That is the right mental model. Training data, fine-tuning data, retrieval corpora, prompt templates, tool outputs, evaluation datasets, and feedback loops are not passive inputs. They are parts of the system's behavior. Security teams that protect model weights and ignore the retrieval store or the prompt cache are protecting the shell and neglecting the nervous system. (U.S. Department of War)

The fifth pillar is validation before and after deployment. Government guidance says AI systems should be validated before deployment; NIST's GenAI profile emphasizes pre-deployment testing and incident disclosure as primary considerations; MITRE's secure AI work highlights the need for verifiable AI vulnerability discovery and AI red teaming. Together, these sources imply something simple: it is not enough to evaluate model quality, user satisfaction, or latency. A real AI security program needs pre-release adversarial testing, post-release incident review, prompt injection and output handling tests, dependency review, exposure scanning, and continuous validation as models, connectors, and workflows change. (U.S. Department of War)

The sixth pillar is logging and observability that actually reflect the AI workflow. Traditional application logs are necessary but not sufficient. Teams need to know which prompt or retrieved content produced a given action, which model version responded, which tool calls were attempted, which identity was used, which policy checks passed or failed, and which external content entered the context. IBM's emphasis on visibility into all AI deployments, including shadow AI, and on better observability of vulnerabilities and anomalies matters here because AI systems often fail through context corruption and permission misuse, not only through process crashes or obvious alerts. (IBM)
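The fields listed above can be captured in a single structured record per agent action. The following is a minimal sketch under stated assumptions: the field names and the `build_audit_record` helper are hypothetical, and storing a SHA-256 of the prompt rather than the raw text is one possible design choice, not a recommendation from any of the cited sources.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_audit_record(*, identity, model_version, prompt,
                       retrieved_sources, tool_calls, policy_checks) -> str:
    """Serialize one AI action into a JSON log line (hypothetical schema)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "identity": identity,                # which identity acted
        "model_version": model_version,      # which model version responded
        # Hash instead of raw prompt, to limit sensitive data in logs.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "retrieved_sources": list(retrieved_sources),  # external content in context
        "tool_calls": list(tool_calls),      # attempted tool calls
        "policy_checks": dict(policy_checks),  # pass/fail per check
    }
    return json.dumps(record, sort_keys=True)
```

Some teams will need the raw prompt and retrieved text for investigations. In that case the hash can serve as a join key into a separate, more tightly controlled store.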

Seventh, organizations need to stop treating offensive validation as something separate from AI governance. If a team is deploying AI agents that can act on business systems, continuous validation is no longer a "red team luxury". It becomes part of change control. Just as cloud teams learned that posture dashboards are not enough without attack-path testing, AI teams are learning that model evaluations are not enough without workflow abuse testing. In that sense, AI security is forcing a merger of application security, identity security, data governance, and offensive validation.

The following operating model is a useful way to organize the work.

| Program area | Minimum expectation | Strong expectation |
|---|---|---|
| Governance | AI inventory, named owner, policy coverage | Central review of agents, tools, connectors, and shadow AI |
| Identity | SSO and MFA for consoles, scoped tokens | Task-scoped permissions, non-human identity controls, passkeys where relevant |
| Data | Classification and access controls | Provenance tracking, retrieval store review, prompt and output retention policy |
| Supply chain | Dependency review and patching | Model registry controls, signed artifacts where possible, connector risk review |
| Validation | Pre-deployment testing and configuration review | Adversarial testing, continuous retesting, workflow abuse validation |
| Observability | Application logs and API logs | Model versioning, prompt-to-action lineage, policy and tool-call audit trails |

This table is a synthesis, but it is a direct extension of the control themes repeated by NIST, the CISA-NSA guidance, IBM's breach research, the OWASP LLM risks, and MITRE's threat-informed AI work. The point is not to invent a new bureaucracy around AI. It is to stop letting AI bypass the disciplines that already exist for high-risk systems. (NIST)

Detection content that security teams can actually use

The general advice above only matters if it is turned into controls and detections. For many teams, the fastest win is to start watching the AI control plane like any other sensitive management surface. That means model pull endpoints, model creation routes, plugin or tool registration changes, outbound calls from model-serving processes, and shell execution spawned by serving runtimes or orchestration layers.

A practical KQL example for detecting suspicious external access to AI management endpoints might look like this:

    // Detect Internet-sourced access to sensitive AI model management routes
    AzureDiagnostics
    | where RequestUri_s has_any ("/api/pull", "/api/create", "/api/delete", "/api/tags")
    | where not(ipv4_is_in_any_range(ClientIP_s, dynamic([
        "10.0.0.0/8",
        "172.16.0.0/12",
        "192.168.0.0/16"
      ])))
    | summarize count(), FirstSeen=min(TimeGenerated), LastSeen=max(TimeGenerated)
        by ClientIP_s, RequestUri_s, UserAgent_s, Resource, Host_s
    | order by count_ desc

This query is intentionally simple. It does not "solve" AI security. It gives defenders a starting point for a class of risk that the recent Ollama-related CVEs make painfully concrete: model management endpoints and the adjacent authentication flows are sensitive. They should not be treated as harmless convenience APIs. The exact route names and logging schema will vary, but the detection pattern is durable: isolate control-plane actions, distinguish internal from external origin, and quickly investigate anything unusual. The reasoning behind this detection is consistent with the recent NVD records for Ollama control-plane issues and with government guidance on strict access controls and API security for AI systems. (NVD)
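Where logs are not in a KQL-capable platform, the same pattern can be approximated in a few lines of Python. This is a sketch under assumptions: the function name is hypothetical, the route list and private ranges are copied from the query above, and substring matching on the URI is only an approximation of KQL's term-based `has_any`.

```python
import ipaddress

PRIVATE_RANGES = [
    ipaddress.ip_network(cidr)
    for cidr in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")
]
SENSITIVE_ROUTES = ("/api/pull", "/api/create", "/api/delete", "/api/tags")


def is_external_management_access(client_ip: str, request_uri: str) -> bool:
    """True when a management route is requested from a non-private source."""
    if not any(route in request_uri for route in SENSITIVE_ROUTES):
        return False
    try:
        addr = ipaddress.ip_address(client_ip)
    except ValueError:
        # Unparseable source address: surface it for review rather than drop it.
        return True
    return not any(addr in net for net in PRIVATE_RANGES)
```

Treating unparseable addresses as suspicious is a deliberate choice for a detection: a missed event costs more than one extra line in a review queue.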

For endpoint or EDR telemetry, a second useful pattern is process-spawn detection around model-serving software. In many environments, a model server should not suddenly start shells, downloaders, or script interpreters.

    title: Suspicious child process spawned by AI serving runtime
    id: 4d89c0f0-f8e2-4b1d-8f41-ai-runtime-spawn
    status: experimental
    logsource:
      category: process_creation
    detection:
      parent_image:
        # Match on the parent process path, not the child's
        ParentImage|endswith:
          - '\ollama.exe'
          - '/ollama'
          - '\python.exe'
          - '/python'
          - '/vllm'
      child_image:
        # Backslash for Windows paths, forward slash for Linux and macOS
        Image|endswith:
          - '\cmd.exe'
          - '\powershell.exe'
          - '/bash'
          - '/sh'
          - '/curl'
          - '/wget'
      condition: parent_image and child_image
    fields:
      - Image
      - ParentImage
      - CommandLine
      - ParentCommandLine
    level: high

This rule is also basic by design. You would tune it for your own runtime, packaging style, and expected behavior. The point is to recognize that once AI runtimes are in the environment, they deserve the same child-process scrutiny you already apply to office applications, web servers, script hosts, and remote management tools. That mindset becomes more important, not less, as models gain orchestration and tool-use capabilities.
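For teams that want to replay process-creation events through the same logic before committing to a rule format, the parent and child matching can be sketched in Python. The function name is hypothetical, and the suffix lists assume backslash separators for Windows binaries and forward slashes for Linux and macOS ones. Matching is lower-cased because Sigma string matching is case-insensitive by default.

```python
# Suffixes are illustrative and should be tuned to how runtimes are packaged locally.
PARENT_SUFFIXES = ("\\ollama.exe", "/ollama", "\\python.exe", "/python", "/vllm")
CHILD_SUFFIXES = ("\\cmd.exe", "\\powershell.exe", "/bash", "/sh", "/curl", "/wget")


def is_suspicious_spawn(parent_image: str, image: str) -> bool:
    """True when an AI serving runtime starts a shell or downloader."""
    return (
        parent_image.lower().endswith(PARENT_SUFFIXES)
        and image.lower().endswith(CHILD_SUFFIXES)
    )
```

Generic interpreters such as `python` will be noisy as parents in most environments, so a production version would probably also inspect the parent command line for the serving entry point.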

Where offensive validation fits, and why it matters more in the AI era

The hardest lesson in modern security is that observability is not proof. A model can look aligned in testing and still be dangerous in a real workflow. A dashboard can show low critical vulnerability counts while a high-value identity path remains exploitable. A GenAI assistant can pass functional QA and still be vulnerable to indirect prompt injection through retrieved content. That is why AI in cybersecurity is now pulling offensive validation closer to everyday engineering. Defenders need ways to verify not only that a model responds safely, but also that the surrounding workflow resists abuse under real conditions. (OWASP Gen AI Security Project)

This is also where a platform like Penligent fits naturally. Penligent's recent public technical writing keeps returning to one idea: the value of AI lies not in summarizing vulnerabilities attractively, but in handling the hardest part of security work, which is preserving context, reasoning about attack paths, validating exploitability, and producing evidence that another engineer can reproduce. Its "AI in Security" and "AI Pentest Tool" articles argue that the future belongs to systems that move from raw signal to defensible proof, not to scanners with a conversational wrapper. That is a fair way to describe the next phase of the market. (Penligent)

There is also a natural bridge between threat intelligence and offensive validation. Penligent's article on threat intelligence knowledge graphs with LLMs argues that LLM-driven graph construction can help connect threats, vulnerabilities, and TTPs faster, but it also says that intelligence alone is not enough and must be combined with penetration testing and continuous validation. That logic is stronger than it may first appear. The more AI helps defenders compress raw information into hypotheses, the more important it becomes to test whether those hypotheses correspond to real, reachable risk in the environment. (Penligent)

The practical implication is simple. If your organization is using AI to prioritize risks, it should also use repeatable validation to prove which risks are reachable. If your organization is deploying AI agents that can act, it should test those workflows adversarially. And if your organization is buying AI for the SOC, it should make sure that system's output connects to changes that can actually be verified, fixed, and retested.

What security leaders should do next

Most organizations do not need a state-of-the-art AI security program next quarter. They need a disciplined one. First, inventory every AI system that is deployed, piloted, or quietly used through shadow workflows. Include chat assistants, copilots, retrieval systems, code assistants, local model servers, inference clusters, browser agents, and anything that can call tools or touch sensitive data. IBM's 2025 breach findings make clear that weak visibility and governance are still basic points of failure. (IBM)

Second, separate "AI used for security" from "AI that must be secured". Put them on different review tracks if necessary, but do not merge their risk assumptions. A SecOps assistant that reads logs has a different threat model from a model-serving cluster exposed to internal developers, and both differ from an autonomous agent with tool access to production systems. NIST's Cyber AI Profile is useful precisely because it forces this three-way distinction between AI-enabled defense, AI-enabled attack, and the security of AI systems themselves. (NCCoE)

Third, immediately raise the bar for access control and network exposure around AI infrastructure. The recent NVD records on Ollama and vLLM show why. Assume that model management APIs, orchestration layers, agent runtimes, and inference backplanes are security-critical services. Review which of them are reachable, how they authenticate, what they can pull, what they can execute, and which identities they inherit. (NVD)

Fourth, test the system that exists, not the model you intended to deploy. Prompt injection, excessive agency, system prompt leakage, and output handling problems do not show up only in model benchmarks. They emerge when the model meets retrieval, tools, secrets, users, and production data. That is why OWASP's 2025 risk list and NIST's GenAI profile remain so useful: they anchor security work in how AI applications behave in context, not just in abstract model capability. (OWASP Gen AI Security Project)

Finally, do not let AI become an excuse to abandon the fundamentals. Attackers are using AI. That is true. But the most damaging AI-assisted attacks still rely on exposed interfaces, poor authentication, credential theft, weak segmentation, insecure software defaults, and incomplete monitoring. AI changes the pace and shape of the fight. It does not repeal security engineering.