The cheapest way to fail a SOC 2 or ISO 27001 project is to let AI write polished policies while your actual evidence stays manual, scattered, and stale. A SOC 2 report is an examination of controls relevant to security, availability, processing integrity, confidentiality, or privacy. ISO/IEC 27001 defines requirements for an information security management system, and ISO’s own guidance makes clear that conformance and certification are about evidence and audits, not attractive prose. (AICPA & CIMA)

That distinction matters because AI can lower the cost of compliance, but only if you point it at the right work. It can compress control mapping, evidence collection, evidence summarization, and technical validation workflows. It cannot replace an external auditor, cannot grant certification, and cannot make a false statement true just because it sounds organized. ISO explicitly states that certification is performed through external certification bodies and that evidence gathering is generally done through audits. (ISO)

A useful way to frame the problem is simple. SOC 2 asks whether your controls can be independently examined and described in a way that gives customers assurance. ISO 27001 asks whether you run a durable information security management system that manages risk through people, policies, and technology. Many teams treat these as separate spend buckets, then wonder why the same engineers keep re-answering the same questions in slightly different formats. The money leak is rarely the standard itself. The money leak is duplicated effort. (AICPA & CIMA)

For AI-native companies, there is another layer. NIST’s AI Risk Management Framework says trustworthy AI requires explicit risk management across the lifecycle, organized around Govern, Map, Measure, and Manage. ISO/IEC 42001 adds a formal AI management system, and OWASP’s current AI guidance focuses on practical risks such as prompt injection, insecure output handling, supply chain weaknesses, and the security of agentic applications. None of that replaces SOC 2 or ISO 27001. It explains why generic control language is often too shallow once models, tools, memory, and autonomous actions enter the system. (NIST AI Resource Center)

SOC 2 and ISO 27001 are not the same job

The easiest way to overspend is to run two programs when one integrated operating model would do. SOC 2 is built around the AICPA Trust Services Criteria for security, availability, processing integrity, confidentiality, and privacy, and the AICPA describes a SOC 2 examination as a report on controls at a service organization relevant to those areas. ISO/IEC 27001, by contrast, is the best-known ISMS standard and defines requirements for establishing, implementing, maintaining, and continually improving an information security management system. (AICPA & CIMA)

In practice, that means SOC 2 is usually the artifact customers ask to review, while ISO 27001 is the management system that keeps the organization from improvising security as it grows. They overlap heavily, but they do not start from the same center of gravity. SOC 2 leans toward assurance for users of your service. ISO 27001 leans toward systematic governance and continuous risk treatment. If you confuse them, you get one of two bad outcomes: a neat audit packet sitting on top of a chaotic program, or a thoughtful internal security program that still cannot answer customer diligence cleanly. (AICPA & CIMA)

The good news is that ISO’s management-system guidance says many management system standards share the same structure and many of the same terms and requirements. That is exactly why an integrated control-and-evidence model pays off. Instead of building one machine for customer assurance and another for internal governance, you build one evidence-bearing control system that can answer both. (ISO)

| Area | What SOC 2 tends to emphasize | What ISO 27001 tends to emphasize | Shared evidence opportunity |
|---|---|---|---|
| Security controls | Whether controls can be examined and described for assurance users | Whether controls are part of a managed risk process | Reuse the same technical evidence and control narratives |
| Risk management | Control objectives and supporting evidence | Formal ISMS risk identification, treatment, and continual improvement | One risk register feeding both programs |
| Access control | Demonstrable control over logical access | Governance over access as a managed security process | Reuse IdP, approval, review, and deprovisioning artifacts |
| Logging and monitoring | Evidence that controls operate and issues are detected | Ongoing operational security within the ISMS | Reuse alerting, retention, and investigation evidence |
| Change management | Evidence changes are authorized and controlled | Change as a managed process within the ISMS | Reuse branch protections, approvals, deployment logs |
| Vendor and supplier oversight | Assurance over third parties affecting the system | Risk treatment and supplier management within the ISMS | Reuse due diligence, contracts, and review records |

The table is a synthesis, but the principle is grounded in the way AICPA frames SOC 2 and the way ISO frames ISO 27001 and management systems more broadly. The cheapest mature program is usually the one that builds one repeatable security operating layer and then renders it differently for different audiences. (AICPA & CIMA)

AI does not make you compliant, it makes evidence operations cheaper

A lot of AI compliance pitches collapse because they attack the wrong problem. They promise policy drafting, questionnaire autofill, or glossy control summaries. Those things can save time, but they are not the expensive part of a maturing security program. The expensive part is repeated evidence labor: collecting it, validating it, naming it, timestamping it, matching it to controls, answering follow-up questions, and proving that what you are showing is not a one-off screenshot from a lucky day.

That is where AI becomes financially useful. It can classify evidence by control family. It can compare a new artifact against a prior month’s artifact and flag drift. It can extract the part of a CloudTrail, GitHub, IdP, ticketing, or scanning output that matters to a specific control owner. It can draft the first-pass answer to an auditor request without claiming that the request is already satisfied. It can cluster findings from offensive validation into a control-centric summary instead of leaving them as disconnected technical notes. None of that is magical. It is just cheaper than having a senior engineer manually do the same pattern-matching every month.

The difference between useful AI and dangerous AI is whether the model starts from raw evidence or starts from empty air. If it starts from raw evidence, you can review, challenge, and correct it. If it starts from empty air, you get hallucinated compliance. The right operating sequence is always the same: gather original artifacts first, let AI summarize and cross-reference second, require a human owner to approve the interpretation third.

ISO’s own guidance reinforces this discipline. To claim conformance, an organization needs evidence that it is meeting the requirements, and that evidence gathering is generally done through audits. Certification is not something ISO itself grants through a chatbot or a report generator. (ISO)

Where compliance costs actually come from

Most founders and even many security leads misprice the work because they count obvious external spend and ignore internal drag. The line item for an auditor or certification body is visible. The line item for engineers spending a hundred tiny interruptions on evidence, screenshots, approvals, backfill, and answer-drafting is usually invisible.

The real cost centers tend to look like this.

First, teams redefine their system boundary too many times. Product says the system is the customer-facing app. Security says it also includes the supporting cloud services. Platform says the CI pipeline belongs in scope. Legal asks whether the third-party AI provider is in or out. Everyone is partly right, and every new answer causes evidence churn.

Second, teams duplicate control writing. They draft a SOC 2 story, then separately draft an ISO 27001 story, then separately draft a customer questionnaire story, as if the underlying technical reality changed between documents. It did not.

Third, evidence gets collected in formats humans hate maintaining. Screenshots with no timestamps. CSVs with unclear origin. Ticket exports with no control mapping. Vulnerability scans with no retest proof. Cloud settings copied into a slide deck. When the auditor asks one follow-up question, the whole chain has to be rebuilt.

Fourth, technical validation stays detached from compliance. Security testing finds things. AppSec retests things. Operations patches things. But the compliance workstream never gets clean artifacts that say what was tested, what failed, what changed, and what passed after remediation.

Finally, AI products introduce a new class of cost because the classic SaaS control language is no longer enough. CSA’s guidance on including AI implementations in penetration testing starts with scoping and specifically calls out questions around provider responsibility, key protection, output handling, logging, monitoring, and even billing exposure. Those are real operational concerns, not abstract AI ethics. (Cloud Security Alliance)

AI can shrink every one of those cost centers, but only if the workflow is organized around evidence reuse.

Build one shared control layer before you automate anything

The biggest mistake in AI-enabled compliance is automating chaos. Do not start by wiring a model into every system you own. Start by defining a shared control layer that covers both frameworks.

For most software and AI companies, that shared layer includes at least these themes: asset inventory, logical access control, authentication and authorization, logging and monitoring, vulnerability management, change management, incident response, backup and recovery, supplier oversight, secure development, and risk management. The naming can vary, and the exact shape depends on your scope, but the overlap is real.

A practical control layer has four components. The first is a canonical control statement written in plain language that describes the operational truth you are trying to maintain. The second is a list of frameworks and customer artifacts that rely on that control. The third is the evidence list. The fourth is the named owner and review frequency.

Here is what that looks like in practice.

| Shared control theme | Examples of evidence | Best AI use | Human decision that still matters |
|---|---|---|---|
| Asset inventory | Cloud account inventory, CMDB export, repo inventory, deployed services list | Normalize formats, detect duplicates, identify scope drift | Final system boundary decision |
| Access control | IdP group mappings, approval tickets, access review records, deprovisioning logs | Match users to systems, flag orphaned access, summarize review exceptions | Access approval, privileged exceptions, risk acceptance |
| Logging and monitoring | SIEM rules, retention settings, alert records, investigation tickets | Summarize coverage, map alerts to controls, identify missing telemetry | Decide alert thresholds, approve monitoring scope |
| Vulnerability management | Scanner results, validation reports, remediation tickets, retest results | Deduplicate findings, group by asset and severity, draft remediation summaries | Prioritize remediation, approve timelines, close risk |
| Change management | Pull requests, approvals, CI logs, production deploy records, rollback events | Extract evidence chain, highlight missing approvals, summarize deployment history | Approve production changes and exceptions |
| Supplier management | Security reviews, DPAs, contracts, vendor questionnaires, risk notes | Extract obligations, compare supplier claims to internal requirements | Supplier approval and compensating controls |
| Incident response | Runbooks, ticket timelines, postmortems, communication logs | Build timelines, cluster artifacts, draft lessons learned | Declare incidents, decide severity, approve post-incident actions |

This is the level where AI starts paying for itself. Once your controls and evidence live in a stable schema, one model pass can do meaningful work across multiple frameworks, audit requests, and customer questionnaires. Before that point, AI is just generating prettier confusion.
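The four-part control record described in this section (statement, dependents, evidence list, owner and review frequency) can be sketched as a small data structure. This is a minimal illustration, not a prescribed schema: the class name `SharedControl`, the field names, and the `AC-01` identifier are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class SharedControl:
    """One entry in a shared control register (hypothetical schema)."""
    control_id: str           # stable identifier; "AC-01" below is illustrative
    statement: str            # canonical plain-language control statement
    relied_on_by: list[str]   # frameworks and customer artifacts that depend on it
    evidence: list[str]       # recurring evidence artifacts that support it
    owner: str                # named human owner
    review_every_days: int    # review frequency

    def problems(self) -> list[str]:
        """Return the reasons this entry is not yet ready to automate against."""
        issues = []
        if not self.statement.strip():
            issues.append("missing control statement")
        if not self.relied_on_by:
            issues.append("no framework or artifact relies on this control")
        if not self.evidence:
            issues.append("no evidence listed")
        if not self.owner.strip():
            issues.append("no named owner")
        if self.review_every_days <= 0:
            issues.append("review frequency not set")
        return issues


access_review = SharedControl(
    control_id="AC-01",
    statement="Access to production is approved, reviewed quarterly, and removed on offboarding.",
    relied_on_by=["SOC 2", "ISO 27001", "customer security questionnaire"],
    evidence=["IdP group mappings", "access review records", "deprovisioning logs"],
    owner="security-lead",
    review_every_days=90,
)
print(access_review.problems())
```

An entry that returns an empty list has all four components filled in. Anything else is a sign that the control is not yet stable enough to point a model at.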

The evidence model that keeps costs down

The minimum unit of compliance is not the control statement. It is the evidence packet.

A good evidence packet contains five things. It identifies the control or controls it supports. It records the source system and collection time. It preserves the raw artifact or a cryptographically anchored copy. It includes a short, human-readable explanation of why the artifact matters. And it records the reviewer who accepted it into the compliance record.

That last point is where many AI workflows collapse. The model can classify and summarize. The model should not be the only entity asserting, “This proves the control worked.” That judgment needs an owner.

A lightweight evidence manifest can solve most of the scaling pain. For each artifact, store a stable identifier, source path or API endpoint, collection timestamp, hash, associated control IDs, system scope, artifact type, sensitivity level, retention rule, and reviewer state. Once that exists, AI can do higher-order work cheaply: compare this month to last month, spot missing fields, cluster related artifacts, build draft narratives, and surface anomalies that deserve human review.
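One way to make that field list enforceable is a simple completeness check run before any artifact is handed to a model. The sketch below assumes each manifest entry is a plain dictionary; the key names are invented for illustration and simply mirror the fields listed above.

```python
# Hypothetical key names mirroring the manifest fields described in the text.
REQUIRED_FIELDS = (
    "artifact_id",
    "source",
    "collected_at",
    "sha256",
    "control_ids",
    "system_scope",
    "artifact_type",
    "sensitivity",
    "retention_rule",
    "reviewer_state",
)


def missing_fields(entry: dict) -> list[str]:
    """List required fields that are absent or empty in one manifest entry."""
    return [name for name in REQUIRED_FIELDS if entry.get(name) in (None, "", [])]


# Illustrative entry; every value here is made up for the example.
entry = {
    "artifact_id": "art-0042",
    "source": "iam-credential-report.csv",
    "collected_at": "2025-01-15T09:30:00+00:00",
    "sha256": "0" * 64,
    "control_ids": ["AC-01"],
    "system_scope": "production",
    "artifact_type": "csv-export",
    "sensitivity": "internal",
    "reviewer_state": "pending",
}
print(missing_fields(entry))
```

Here the entry lacks a retention rule, so the check reports `retention_rule`. Running this before summarization keeps the model from reasoning over records whose provenance is incomplete.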

A strong evidence model also reduces the most common auditor frustration. Auditors rarely complain that teams have too little prose. They complain that teams cannot show provenance, timing, completeness, or consistency. A control narrative that says “we review access quarterly” is weak. A packet that includes the exported review list, the reviewer comments, the resolution evidence, and the change log is strong.

The same model makes customer diligence cheaper. Once the evidence packet exists, the organization can answer a procurement questionnaire, support a SOC 2 review conversation, prepare an ISO surveillance audit response, and feed an internal management review without manually rebuilding the story each time.

A practical evidence pipeline for cloud and engineering environments

The most efficient compliance pipeline is not a giant platform rollout on day one. It is a staged, boring, automatable set of collectors that pull truth from the systems you already run.

Start with your cloud environment. For most teams, that means checking organization trails, log retention, key configuration, public exposure safeguards, and identity configuration. The goal is not to prove everything at once. The goal is to prove the controls that most often generate repeat evidence requests.

A simple AWS-oriented collection pass might look like this:

```bash
# CloudTrail configuration and status
aws cloudtrail describe-trails --include-shadow-trails
aws cloudtrail get-trail-status --name org-trail

# S3 protections for log buckets
aws s3api get-public-access-block --bucket company-prod-logs
aws s3api get-bucket-encryption --bucket company-prod-logs
aws s3api get-bucket-versioning --bucket company-prod-logs

# KMS key metadata for protected logging
aws kms describe-key --key-id alias/org-logs

# IAM credential report for access review support.
# Generation is asynchronous: re-run generate-credential-report until State is COMPLETE.
# get-credential-report returns the CSV base64-encoded inside JSON, so decode it.
aws iam generate-credential-report
aws iam get-credential-report --query Content --output text | base64 --decode > iam-credential-report.csv
```

That command set does not make you compliant. What it does is capture raw operational evidence for logging, retention, storage protection, and access-review workflows. AI becomes useful after collection. It can tell you which outputs belong to which control families, whether the results look materially different from the prior review period, and which artifacts should be escalated for human confirmation.
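As one example of what happens after collection, the decoded IAM credential report can be screened before a human or a model looks at it. The sketch below flags console users without MFA. It relies on the `user`, `password_enabled`, and `mfa_active` columns of the credential report; the sample rows are invented and trimmed to those three columns.

```python
import csv
import io


def users_without_mfa(report_csv: str) -> list[str]:
    """Return users that have a console password enabled but no active MFA device."""
    reader = csv.DictReader(io.StringIO(report_csv))
    return [
        row["user"]
        for row in reader
        if row.get("password_enabled") == "true" and row.get("mfa_active") != "true"
    ]


# Made-up sample with only the columns this check needs; a real report has many more.
SAMPLE_REPORT = "\n".join([
    "user,password_enabled,mfa_active",
    "<root_account>,not_supported,true",
    "alice,true,true",
    "bob,true,false",
    "ci-bot,false,false",
])
print(users_without_mfa(SAMPLE_REPORT))
```

The output is a short exception list for the access-review owner, which is a far better input for AI summarization than the full report.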

Engineering systems are the same. Pull branch protection, code-scanning alerts, dependency-alert status, and deployment history directly from the source system instead of relying on screenshots copied into slides.

```bash
# GitHub branch protection
gh api repos/ORG/REPO/branches/main/protection > branch-protection.json

# Code scanning alerts
gh api repos/ORG/REPO/code-scanning/alerts > code-scanning-alerts.json

# Whether Dependabot vulnerability alerts are enabled
# (-i prints the HTTP status line: 204 means enabled, 404 means disabled)
gh api repos/ORG/REPO/vulnerability-alerts -i > vulnerability-alerts.txt

# Recent pull requests for change-management sampling
# (the endpoint lists only open PRs by default; closed ones include merged PRs)
gh api "repos/ORG/REPO/pulls?state=closed" --paginate > recent-pulls.json
```

Again, the value is not the command itself. The value is that the evidence originates from the system of record, with repeatable collection steps. That makes AI summarization safe enough to be useful.
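A collected `branch-protection.json` can also be checked mechanically before anyone writes a narrative about it. The following sketch reads three fields from the branch protection response (`required_pull_request_reviews`, `enforce_admins`, `allow_force_pushes`). The thresholds it applies are an assumed internal policy, not a SOC 2 or ISO 27001 requirement, and the sample dictionaries are invented.

```python
def branch_protection_gaps(protection: dict) -> list[str]:
    """Compare a branch protection response against an assumed internal policy."""
    gaps = []
    reviews = protection.get("required_pull_request_reviews") or {}
    if reviews.get("required_approving_review_count", 0) < 1:
        gaps.append("no approving review required")
    if not (protection.get("enforce_admins") or {}).get("enabled", False):
        gaps.append("rules not enforced for admins")
    if (protection.get("allow_force_pushes") or {}).get("enabled", False):
        gaps.append("force pushes allowed")
    return gaps


# Invented examples shaped like the relevant parts of the API response.
strong = {
    "required_pull_request_reviews": {"required_approving_review_count": 2},
    "enforce_admins": {"enabled": True},
    "allow_force_pushes": {"enabled": False},
}
weak = {
    "enforce_admins": {"enabled": False},
    "allow_force_pushes": {"enabled": True},
}
print(branch_protection_gaps(strong))
print(branch_protection_gaps(weak))
```

In practice the dictionary would come from `json.load` on the exported file. A non-empty result is a prompt for the control owner to investigate, not a finding in itself.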

Hash your artifacts before you let AI summarize them

One of the easiest upgrades any team can make is to cryptographically anchor evidence files. That turns a pile of exports into a defensible record.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path

EVIDENCE_DIR = Path("evidence")

manifest = {
    "generated_at": datetime.now(timezone.utc).isoformat(),
    "artifacts": [],
}

# Walk the evidence tree in a stable order so manifests are comparable across runs.
for path in sorted(EVIDENCE_DIR.rglob("*")):
    if path.is_file():
        # The SHA-256 of the raw bytes anchors the exact file that was reviewed.
        digest = hashlib.sha256(path.read_bytes()).hexdigest()
        manifest["artifacts"].append({
            "path": str(path),
            "sha256": digest,
            "size_bytes": path.stat().st_size,
        })

# Written outside EVIDENCE_DIR so the manifest does not hash itself on the next run.
Path("evidence-manifest.json").write_text(
    json.dumps(manifest, indent=2),
    encoding="utf-8",
)
```

That tiny manifest gives you three advantages. It lets you prove which files were reviewed. It lets you detect silent drift. And it gives the AI assistant a structured inventory to reason over instead of forcing it to infer your evidence set from filenames and folder chaos.
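Drift detection in particular is only a few lines once two manifests exist. The sketch below assumes both manifests have the shape produced by the script above (an `artifacts` list of entries with `path` and `sha256`); the function name and the sample data are illustrative.

```python
def diff_manifests(previous: dict, current: dict) -> dict:
    """Report artifacts that were added, removed, or changed between two manifests."""
    old = {a["path"]: a["sha256"] for a in previous["artifacts"]}
    new = {a["path"]: a["sha256"] for a in current["artifacts"]}
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(p for p in old.keys() & new.keys() if old[p] != new[p]),
    }


# Invented manifests; the short hashes stand in for real SHA-256 digests.
last_month = {"artifacts": [
    {"path": "evidence/branch-protection.json", "sha256": "aaa"},
    {"path": "evidence/iam-credential-report.csv", "sha256": "bbb"},
]}
this_month = {"artifacts": [
    {"path": "evidence/branch-protection.json", "sha256": "ccc"},
    {"path": "evidence/code-scanning-alerts.json", "sha256": "ddd"},
]}
print(diff_manifests(last_month, this_month))
```

A changed hash is not automatically a problem, since credential reports change every cycle. The point is that a removed or unexpectedly changed artifact becomes visible instead of silent.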

Once you have a manifest, your prompt design gets cleaner too. You are no longer asking the model to invent a compliance story. You are asking it to summarize a known, hash-anchored set of artifacts for a specific control family. That is a radically safer use of AI.

A review prompt can be simple:

```text
You are assisting with audit preparation.
Use only the attached evidence manifest and referenced artifacts.
Do not infer missing evidence.

For each artifact, identify:
1. which control theme it may support,
2. what fact it demonstrates,
3. what remains unproven,
4. whether human follow-up is required.

If a claim is not directly supported by an artifact, state that clearly.
```

That last sentence is doing most of the safety work.

Where AI saves real money in audit preparation

The highest-return uses of AI in audit preparation are usually not glamorous.

One is control mapping. A team with one shared control layer can ask AI to generate the first-pass mapping from a control to internal policy text, technical evidence, and customer-facing assurance language. Humans still validate the mapping, but they no longer draft it from scratch.

Another is evidence gap detection. Give the model a complete inventory of required recurring evidence and the artifacts you actually collected this cycle. It can quickly flag which controls are under-supported, which evidence is stale, which artifacts appear duplicative, and which artifacts are mislabeled, leaving the owner to confirm each flag.

A third is follow-up response drafting. Auditors and customers both ask variants of the same question: “Show me how this works in practice.” AI can draft a response packet that points to the relevant evidence, extracts the relevant fields, and highlights the exact facts already present in your records. That is cheaper than interrupting three senior engineers for a rewrite every time.

The fourth is technical-report normalization. Penetration tests, validation runs, scanner outputs, fix verification notes, and incident postmortems often exist in wildly different formats. AI is very good at turning them into structured case files. That matters because vulnerability management evidence is much cheaper to defend when it follows one shape: what was found, how it was validated, what changed, when it was retested, what the result was.

The fifth is integrated management review support. ISO’s management-system model is built around repeatability, self-evaluation, and improvement. AI can prepare the recurring pack that leadership actually reviews, as long as the source facts come from the underlying system records. (ISO)
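The evidence gap detection described above does not always need a model. A deterministic first pass can do the comparison, leaving AI to explain and prioritize the results. In this sketch the evidence names and maximum ages are hypothetical, and the freshness rule (maximum age in days per evidence type) is an assumed convention.

```python
from datetime import date, timedelta


def evidence_gaps(
    required: dict[str, int],
    collected: dict[str, date],
    today: date,
) -> dict[str, list[str]]:
    """Compare required evidence (name -> max age in days) with what was collected."""
    missing = sorted(name for name in required if name not in collected)
    stale = sorted(
        name
        for name, max_age in required.items()
        if name in collected and today - collected[name] > timedelta(days=max_age)
    )
    unexpected = sorted(name for name in collected if name not in required)
    return {"missing": missing, "stale": stale, "unexpected": unexpected}


# Hypothetical inventory and collection dates for one review cycle.
required = {"access-review": 90, "branch-protection": 30, "cloudtrail-status": 30}
collected = {
    "access-review": date(2025, 1, 2),
    "branch-protection": date(2024, 11, 1),
    "final-access-review-v2-real": date(2025, 1, 3),
}
print(evidence_gaps(required, collected, today=date(2025, 1, 15)))
```

With these inputs, `cloudtrail-status` is missing, `branch-protection` is stale, and the oddly named spreadsheet shows up as unexpected, which is usually a labeling problem worth fixing at the source.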

If you build or ship AI, your compliance story needs an AI risk layer

A classic SaaS compliance program can still be weak, but at least its failure modes are familiar. Identity failures, logging gaps, misconfiguration, exposed data, weak supplier controls. Once AI enters the product or the internal workflow, the risk surface shifts.

NIST’s AI RMF says AI risk management needs explicit lifecycle governance through Govern, Map, Measure, and Manage. ISO/IEC 42001 describes an AI management system for the responsible development, provision, or use of AI systems. OWASP’s current LLM guidance highlights risks including prompt injection, insecure output handling, training data poisoning, model denial of service, and supply chain vulnerabilities, while OWASP’s guide for agentic applications focuses on concrete technical recommendations for systems that plan, act, and integrate tools. (NIST AI Resource Center)

That does not mean every SOC 2 or ISO 27001 program suddenly becomes an AI governance program. It means your control narratives must account for the AI-related realities actually in scope. If a customer-facing workflow uses a third-party LLM, then provider responsibility, output handling, key protection, logging, and abuse monitoring matter. CSA’s AI-specific penetration testing guidance makes exactly that point during scoping. It asks teams to think about who owns which security boundary, how outputs are rendered, whether logging and monitoring are enabled, and how provider usage risk is managed. (Cloud Security Alliance)

A practical implication follows. If your product uses AI, your evidence library should not stop at conventional SaaS artifacts. It should also include model-provider configuration, tool-approval design, output-handling protections, prompt and system-instruction governance, abuse-monitoring evidence, fallback behavior, and records showing how risky actions are constrained or reviewed. You do not need to turn your compliance program into a full ISO 42001 implementation to acknowledge that reality. You do need to avoid pretending that a standard access-control matrix alone fully describes the risk.

Why offensive validation belongs inside a compliance workflow

Policies tell you what the company intends. Technical validation tells you whether the environment behaves that way under pressure.

That distinction is why compliance work that never touches offensive validation often feels expensive and unconvincing at the same time. You spend time writing control descriptions, then still have to answer whether the exposed asset can actually be reached, whether the weak permission can actually be abused, whether the risky configuration can actually be exploited, and whether the fix really changed the attack path.

This is where technical validation creates cost savings instead of extra cost. A validated technical finding can satisfy multiple needs at once. It supports vulnerability management. It supports change-management closure once fixed and retested. It strengthens the customer trust conversation because the team can show a detection-to-remediation loop. And it gives auditors something much better than a statement like “we scan regularly.”

The right way to use offensive validation in a compliance program is not to turn the compliance program into a red team. It is to use targeted validation where controls depend on technical truth. Access boundaries. Public exposure. Configuration drift. Exploitable weakness versus theoretical weakness. Patch verification. Supplier exposure touching your environment. Those are all areas where one good validation artifact can replace a surprising amount of narrative hand-waving.

Three real incidents that show why evidence matters more than policy

The first example is CVE-2024-3400. Palo Alto Networks described it as an arbitrary file creation issue leading to command injection in the GlobalProtect feature of PAN-OS under specific version and configuration conditions, enabling unauthenticated remote code execution with root privileges on affected firewalls. This is a perfect compliance-era lesson because “we have perimeter security policies” is not the same as proving which PAN-OS versions were in scope, whether the relevant feature set was enabled, whether external exposure existed, what patch status was achieved, and whether post-remediation validation confirmed closure. (Palo Alto Networks Security)

The second example is CVE-2024-3094, the XZ Utils backdoor. NVD describes malicious code in upstream tarballs starting with version 5.6.0, with obfuscated build-process behavior that modified liblzma during the build. This matters for compliance because supplier and software-integrity controls are easy to describe and hard to prove. A team can say it has software supply chain discipline, but the evidence standard is higher: inventory, provenance checks, package review, exposure analysis, rollback evidence, and retest artifacts showing the environment no longer carries the compromised path. (NVD)

The third example is CVE-2023-4966, commonly called CitrixBleed. CISA’s guidance addressed active exploitation, and NVD notes that the vulnerability is listed in CISA’s Known Exploited Vulnerabilities catalog. This is operationally important because it exposes the difference between written incident-response policy and incident-response evidence. If a remote access edge is exploited in the wild, a credible record includes patch action, session termination per vendor guidance where applicable, investigation records, and evidence of containment and verification. That is what closes the control loop. (CISA)

None of those incidents are “AI compliance incidents.” That is the point. Compliance programs are judged by whether the organization can manage real security conditions, not by whether it can produce framework-shaped text. AI only saves money when it helps build, verify, and reuse that real evidence.

A lower-cost path from technical validation to audit-ready reporting

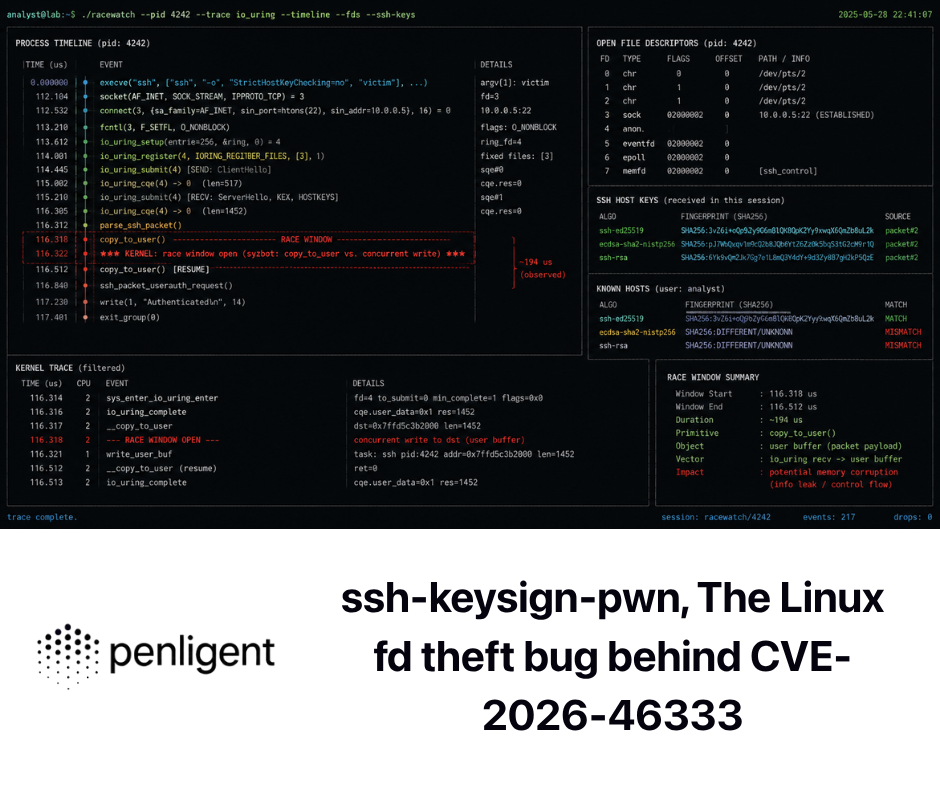

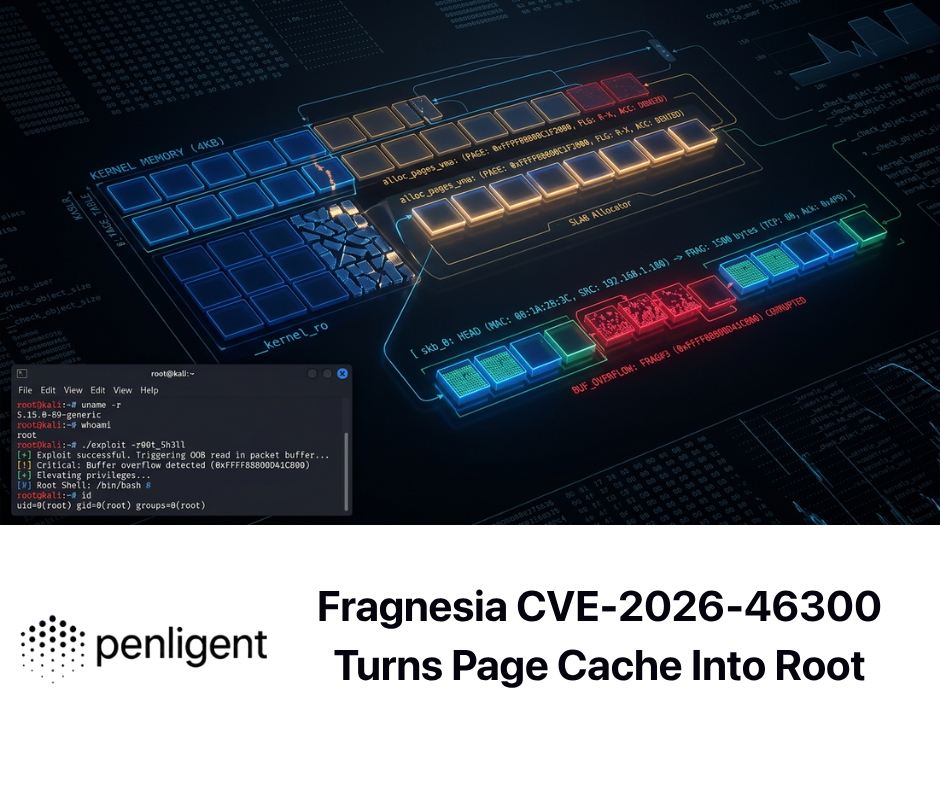

This is the narrow place where a tool like Penligent becomes commercially relevant without turning the whole article into a product pitch. Penligent’s public homepage says it offers one-click reports aligned with SOC 2 and ISO 27001, evidence-first results with traceable proof, and support for more than 200 industry tools. Its pricing page says the free tier includes end-to-end AI pentesting from asset discovery to validation plus PDF or Markdown report export with evidence and reproduction steps. The same public pricing page lists the Pro plan at $39.92 per month billed annually and says it adds one-click exploit reproduction with evidence-chain reporting. (Penligent)

That matters because the economic question is not whether a PDF makes you compliant. It does not. The economic question is whether you can reduce the labor required to turn technical validation into structured, reviewable, reusable evidence. Penligent’s public overview page also describes exports in PDF, HTML, or JSON mapped to CVE/CWE frameworks and standards such as ISO, SOC 2, and NIST, plus CI/CD integrations. Read narrowly and literally, those claims describe a reporting and evidence-conversion workflow, not a magic compliance button. That is the right way to think about a lower-cost compliance tooling story. (Penligent)

For smaller teams, that price point changes the conversation. When a public product page shows an under-$50 monthly entry point for evidence-chain reporting, the question stops being “Can we afford a giant compliance platform this quarter?” and becomes “Can we start producing cleaner technical validation artifacts now?” That is often the more important milestone. (Penligent)

What AI should automate, what humans should still own

A safe and cost-effective compliance program draws a hard line between acceleration and authority.

AI should usually automate first-pass evidence sorting. It should suggest control mappings. It should highlight missing artifacts, stale evidence, inconsistent naming, unclosed retest loops, and contradictory statements between policies and technical records. It should draft responses to diligence questions and summarize validation reports. It should identify the probable owners of a control gap based on system metadata and prior history.

Humans should still own system scoping, risk acceptance, exception approval, control design decisions, final control statements, final claims to auditors and customers, and the determination that a technical artifact is sufficient evidence for a control. Those are governance acts, not text-transformation tasks.

If you want a litmus test, ask whether the action could create liability if wrong. If yes, the final approval needs a human owner.
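That line between acceleration and authority can be enforced in code rather than left to convention. The sketch below is one possible shape: any actor may propose that an artifact supports a control, but only a named human may accept it. The reviewer roster, the class name, and the identifiers are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical roster of people allowed to accept evidence into the record.
HUMAN_REVIEWERS = {"security-lead", "compliance-owner"}


@dataclass
class EvidenceDecision:
    artifact_id: str
    control_id: str
    proposed_by: str                    # may be a person or an AI assistant
    accepted_by: Optional[str] = None   # stays None until a human signs off


def accept(decision: EvidenceDecision, reviewer: str) -> EvidenceDecision:
    """Record acceptance, refusing any reviewer who is not on the human roster."""
    if reviewer not in HUMAN_REVIEWERS:
        raise PermissionError(f"{reviewer!r} may propose but not accept evidence")
    decision.accepted_by = reviewer
    return decision


draft = EvidenceDecision("art-0042", "AC-01", proposed_by="ai-assistant")
accept(draft, "security-lead")
print(draft.accepted_by)
```

The model's output is kept as a proposal with an author, and the compliance record only ever shows a human name in the acceptance field.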

The 90-day rollout that usually works better than buying more software

A useful rollout starts by reducing the highest-friction evidence work, not by proclaiming “continuous compliance” on day one.

Days 1 to 30, define the scope and build the shared control map

In the first month, define the in-scope system once. Name the assets, environments, repositories, cloud accounts, providers, customer data flows, and AI services that truly belong in the system boundary. Decide which control themes are shared between your SOC 2 and ISO 27001 efforts. Create one control register with a stable ID for each control, one owner, one review cadence, and one evidence list.

Do not obsess over perfect wording yet. The highest-return deliverable is the map from control to evidence source. Where does the truth live for access control? Which APIs or exports prove change management? Where are vulnerability records? Which log system is authoritative? Which tickets prove remediation? Which supplier records are canonical?

At the end of this phase, you want one thing more than anything else: a control-to-evidence matrix that is boring, concrete, and reviewable.

Days 31 to 60, automate raw collection and add evidence manifests

In the second month, start pulling recurring evidence directly from source systems. Prioritize controls that generate the most repeat questions: access reviews, logging, production changes, vulnerability tracking, incident handling, and supplier reviews. Store the raw exports. Hash them. Build manifests. Keep the provenance.

Now bring AI in, but only as a reviewer and organizer. Let it label artifacts by control family. Let it compare new artifacts with the prior cycle. Let it draft a monthly evidence summary that tells owners what is missing, stale, or contradictory.

This is also the right phase to standardize naming. A surprising amount of compliance waste comes from teams unable to tell whether final-access-review-v2-real.xlsx is actually the authoritative file.
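A naming convention is easiest to keep when a function generates the names. The pattern below (control ID, source, UTC collection timestamp) is an assumed convention for illustration, not a standard; adjust the parts to whatever your control register uses.

```python
import re
from datetime import datetime, timezone


def evidence_filename(control_id: str, source: str, collected_at: datetime, extension: str) -> str:
    """Build a predictable name such as ac-01__idp-access-review__20250115T093000Z.csv.

    collected_at should be timezone-aware; a naive value is treated as local time.
    """
    slug = re.sub(r"[^a-z0-9]+", "-", source.lower()).strip("-")
    stamp = collected_at.astimezone(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    return f"{control_id.lower()}__{slug}__{stamp}.{extension.lstrip('.')}"


name = evidence_filename(
    "AC-01",
    "IdP access review",
    datetime(2025, 1, 15, 9, 30, tzinfo=timezone.utc),
    "csv",
)
print(name)
```

Because the timestamp sorts lexically and the control ID leads the name, the authoritative file for a period is obvious from a directory listing.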

Days 61 to 90, add technical validation and prepare the review packet

In the third month, connect the technical security workflow. Pull in validation reports, exploit proof, retest artifacts, scanner outputs with human-reviewed triage, and remediation tickets. Normalize them into one evidence shape. For each validated issue, capture the finding, affected asset, validation method, severity context, remediation reference, retest date, and current state.

This is where a product that can export evidence-rich, standards-aligned technical reports at low cost becomes strategically useful. The goal is not to replace your compliance owner. The goal is to make sure the security team’s technical truth arrives in a form that the compliance program can actually use.

At the end of 90 days, run an internal review. Ask the hard questions before any external party does. Which controls still rely on screenshots? Which assertions still lack source-backed evidence? Where is the evidence stale? Which AI-generated summaries still need stronger human sign-off? That self-critique is cheaper than fixing the same issues under audit pressure.

Common ways teams misuse AI in compliance

One common failure mode is letting AI draft control statements before anyone has defined the system boundary. This produces elegant nonsense because the model fills scope gaps with general best practice.

Another is letting AI summarize technical evidence that was never collected in the first place. The result reads like an audit response and dies the moment someone asks for the source artifact.

A third is equating “aligned with SOC 2 and ISO 27001” with “SOC 2 certified” or “ISO certified.” Those are not interchangeable claims. A report can be structured around a framework without any third party having attested to the organization’s conformity or control operation. ISO explicitly distinguishes implementation from certification, and certification itself comes through external certification bodies. (ISO)

A fourth is using AI to flatten nuance in AI-specific risk management. If your product uses models, tools, and agent-like workflows, you need more than generic statements about confidentiality and availability. NIST, ISO 42001, OWASP’s LLM work, and OWASP’s agentic guidance all point in the same direction: governance has to reflect the unique ways AI systems fail. (NIST AI Resource Center)

The last failure mode is financial. Teams buy software before they fix the evidence model. If you do that, AI amplifies mess. If you fix the evidence model first, AI amplifies discipline.

The right mental model for reducing compliance costs

Think less about “AI compliance” and more about “AI-assisted evidence operations.”

That phrase is clunky, but the model is right. A mature program does not ask AI to impersonate the auditor. It asks AI to reduce repetitive evidence work, normalize technical artifacts, compare periods, detect gaps, and prepare humans to make better governance decisions faster.

If you adopt that model, the path to lower cost becomes straightforward.

You define one shared control layer across SOC 2 and ISO 27001 wherever the underlying technical truth is the same.

You gather evidence directly from systems of record instead of recreating it manually.

You anchor the evidence with timestamps, metadata, and hashes.

You use AI to classify, summarize, compare, and draft.

You require human owners to approve interpretations, exceptions, and claims.

You add technical validation so that vulnerability management and control effectiveness are not just policy statements.

You treat AI-specific product risks as an additional governance layer instead of pretending classic SaaS language is always enough.

That is how AI reduces cost without reducing credibility.

The teams that get this right do not necessarily have the biggest compliance budget. They usually have the cleanest evidence habits.

Further reading and references

- AICPA overview of SOC 2 and the Trust Services Criteria. (AICPA & CIMA)

- ISO overview of ISO/IEC 27001 and certification context. (ISO)

- ISO overview of ISO/IEC 42001 for AI management systems. (ISO)

- NIST AI Risk Management Framework and AI RMF Core. (NIST AI Resource Center)

- OWASP Top 10 for LLM Applications 2025. (OWASP Foundation)

- OWASP Securing Agentic Applications Guide 1.0. (OWASP Gen AI Security Project)

- Cloud Security Alliance guidance on including AI in penetration testing. (Cloud Security Alliance)

- Palo Alto advisory for CVE-2024-3400, plus NVD details. (Palo Alto Networks Security)

- NVD details for CVE-2024-3094. (NVD)

- CISA and NVD guidance for CVE-2023-4966. (CISA)

- Penligent homepage and product positioning around evidence-first reporting aligned with SOC 2 and ISO 27001. (Penligent)

- Penligent pricing page, including the publicly listed Pro plan and free-tier report export. (Penligent)

- Penligent overview page covering export formats, mapped reporting, and CI/CD integration. (Penligent)

- Penligent article on AI SOC, ISO 27001, and SOC 2 for AI teams. (Penligent)