The patch window was never a law of nature. It was a timing assumption.

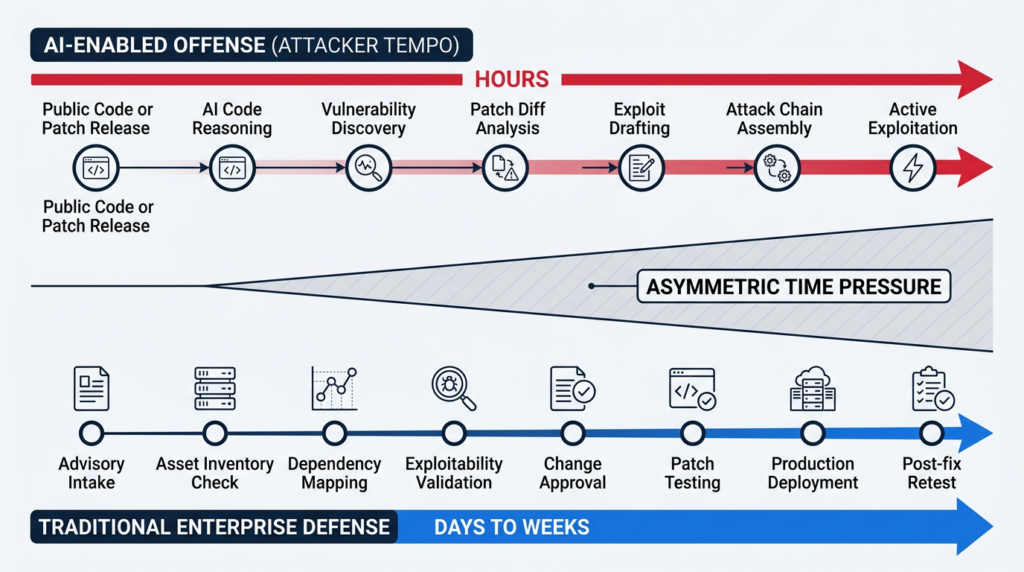

For years, most enterprise security programs operated as if vulnerability discovery, exploit development, public weaponization, internal prioritization, change approval, patch testing, and production rollout would happen on roughly human timescales. That assumption created a workable buffer. It was never perfect, but it was enough to support monthly patch cycles, quarterly penetration tests, backlog-based remediation, and vulnerability prioritization systems that often leaned more on scoring than on immediate exploitability. That buffer is getting weaker. Frontier AI models are now being described by major security organizations as capable of autonomously identifying vulnerabilities, constructing exploit chains, adapting attacks in real time, and compressing the time between disclosure and exploitation from days toward hours. (Unit 42)

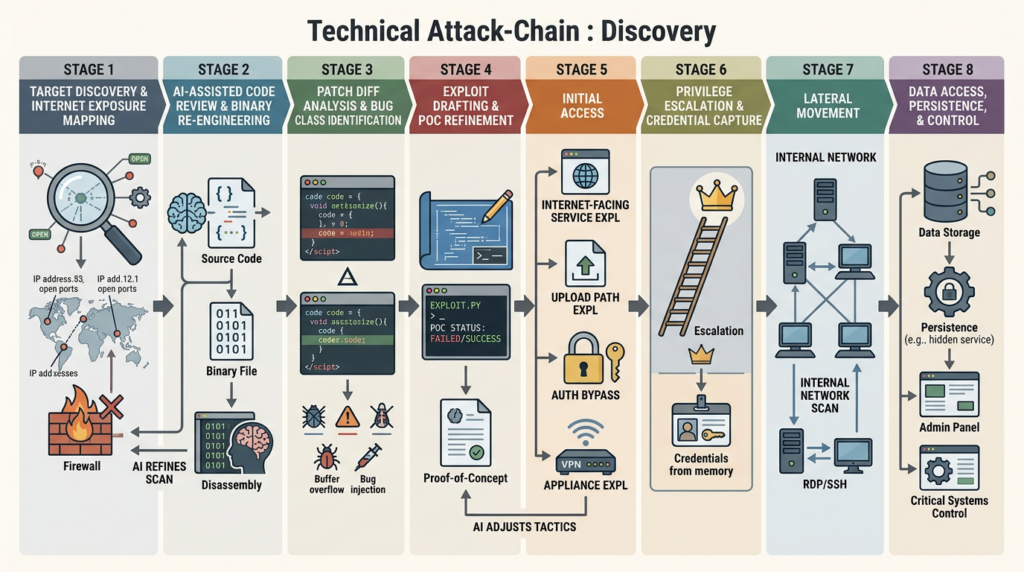

That does not mean every organization is suddenly one model prompt away from automated compromise. Public details remain limited because responsible disclosure prevents vendors and labs from openly documenting unpatched issues. But the broad conclusion is already hard to avoid. Palo Alto Unit 42 says frontier models are bringing autonomous zero-day discovery, shrinking the N-day patching window, and enabling more advanced attack chaining with minimal human expertise, especially against source-available software. Anthropic says the cost, effort, and expertise required to find and exploit vulnerabilities have dropped sharply as models become better at reading and reasoning about code. OpenAI now describes cyber-specialized models that can work autonomously for long stretches and analyze compiled software through binary reverse engineering. These are not fringe claims from one lab trying to make a headline. They are converging signals from multiple primary sources. (Unit 42)

The most important consequence is not merely that “AI makes vulnerability research faster.” It is that AI changes the shape of the contest. Attackers need one reachable path, one missed dependency, one internet-exposed management plane, one weak upload path, one appliance that could not be patched in time. Defenders need current inventory, dependency visibility, exploitability context, safe deployment, monitoring coverage, compensating controls, and proof that the fix actually killed the path. Those are not symmetric workloads. AI reduces marginal cost on the offensive side more easily than it removes operational drag on the defensive side. That is why the real story is asymmetry. (Unit 42)

The Patch Window Was Always a Timing Assumption

A patch window exists only because several things take time. Someone has to notice a bug or a code pattern. Someone has to understand whether the bug is reachable. Someone has to turn that understanding into an exploit or attack chain. If the software is widely deployed, someone has to identify reachable instances at scale. If the bug is inside a high-value product, someone has to decide whether the opportunity is worth operationalizing. On the defensive side, a security or engineering team has to confirm affected assets, map dependencies, evaluate exposure, schedule change windows, test for regressions, and push the fix safely. None of that is instantaneous. The “window” is the gap between those two motion curves. (Unit 42)

Traditional vulnerability management quietly assumes that those curves will not separate too far. CVSS scores, vendor advisories, asset criticality, and quarterly or monthly review cadences all make more sense when exploit development and mass targeting remain somewhat expensive. Even when active exploitation exists, there is often a belief that central feeds, scanner plugins, or vendor guidance will mature fast enough to support a reasonable enterprise response. CISA’s Known Exploited Vulnerabilities catalog is authoritative for vulnerabilities confirmed exploited in the wild, and it is essential input to vulnerability management. But even CISA’s own role here reveals the underlying problem. If the defender waits for centralized enrichment or catalog inclusion before treating an externally exposed issue as urgent, the defender is already spending time the attacker may no longer need. (CISA)

This is especially obvious on internet-facing systems and management planes. Remote access appliances, VPN gateways, firewalls, RMM tools, file transfer systems, software update infrastructure, upload endpoints, and identity-adjacent services have always had a bad risk profile because a successful exploit buys immediate control or a clean path to lateral movement. What changes in the AI era is the speed with which hypothesis generation, patch diffing, exploit drafting, and variant search can move from “expert-intensive” to “much cheaper than before.” That is why Unit 42’s framing matters so much. The issue is not just automated code review. The issue is that the whole chain from bug discovery to operational pressure is shortening. (Unit 42)

The uncomfortable truth is that many organizations were already operating on too little slack even before the latest model wave. In critical infrastructure, healthcare, manufacturing, and other environments with brittle systems or strict uptime constraints, patching often cannot happen immediately, and sometimes patches do not even exist yet. Forescout notes that in many environments remediation is delayed for weeks or months, or is not safely possible on operational timelines, which forces defenders to rely on mitigation, visibility, and enforcement controls rather than clean and immediate patch completion. AI does not create that fragility. It exposes how dependent many defenders were on the hope that attackers would not move too quickly. (Forescout)

AI Vulnerability Discovery Turns That Assumption Into a Liability

The clearest public statement of the problem comes from Palo Alto Unit 42. In April 2026, Unit 42 wrote that frontier AI models are bringing autonomous zero-day discovery, collapsing the patching window for N-days, enabling advanced vulnerability chaining, and supporting real-time adaptation in hardened environments. The same write-up says these models can identify vulnerabilities and attack paths with minimal human expertise, and that open-source software faces greater immediate risk because source code gives models more to work with than compiled binaries. It also warns that the world is moving toward “N-hours instead of N-days.” That phrase matters because it translates an abstract capability story into an operational timeline problem. (Unit 42)

Anthropic’s public material points in the same direction. The Project Glasswing announcement says the cost, effort, and expertise needed to find and exploit vulnerabilities have dropped dramatically as AI models improve at reading and reasoning about code. The program’s stated focus areas include local vulnerability detection, black-box testing of binaries, securing endpoints, and penetration testing of systems. In other words, the concern is not limited to model-assisted secure coding or synthetic benchmark work. The concern is the entire spectrum from source analysis to binary analysis to exploitability in real systems. Anthropic framed the program as a defensive effort specifically because the change is large enough to merit a coordinated response across major technology and security organizations. (anthropic.com)

Anthropic’s Claude Mythos disclosure adds another important layer. The company says the model is capable of identifying and exploiting zero-days in major operating systems and browsers, that many of the bugs it found were ten to twenty years old, and that even non-experts inside Anthropic could sometimes ask it to find a remote code execution path overnight and get a working exploit. Anthropic also says it cannot disclose details for more than 99 percent of the vulnerabilities involved because they remain unpatched, which is exactly why the public debate should not obsess over a single benchmark or one leaked statistic. The safe conclusion is not that every dramatic capability claim is fully documented. The safe conclusion is that the economics of vulnerability research and exploit drafting are changing in ways the primary sources themselves now treat as strategically significant. (red.anthropic.com)

OpenAI, Google, DARPA, and NIST all reinforce that this is a serious transition rather than a speculative narrative. OpenAI says cyber-specialized models can work autonomously for hours or days, help accelerate vulnerability discovery and remediation, and analyze compiled software through binary reverse engineering. Google Project Zero and DeepMind reported that Big Sleep found a real-world vulnerability in SQLite before release and called that a promising path toward finally turning the tables and creating an asymmetric advantage for defenders. DARPA’s AI Cyber Challenge was designed around the fact that code requiring protection is often open source and that finding and patching vulnerabilities through current methods remains slow and expensive. NIST’s guidance on generative AI threat modeling explicitly notes that such systems may augment cyberattacks, including vulnerability discovery, exploit writing, evasion, and privilege escalation. The ecosystem is already treating this as real. (OpenAI)

Why This Is an Asymmetric Fight

It is tempting to say that AI will help both attackers and defenders, therefore the effect should roughly cancel out. That is too neat. It ignores where time is actually spent.

An attacker does not need perfect coverage. The attacker needs an entry point that works. That might be one internet-exposed appliance, one forgotten RMM instance, one unpatched SAP component, one reachable upload surface, one VPN gateway waiting for a maintenance window, one cloud workload carrying a vulnerable library with a realistic call path. Once a working path exists, the attacker can focus on exploitation, staging, and lateral movement. The attack surface is broad, but the success condition is narrow. (Unit 42)

A defender faces the opposite geometry. The defender needs current inventory. The defender needs to know which software is present, including transitive dependencies and software buried inside appliances or images. The defender needs to know whether the vulnerable component is actually reachable in the running environment. The defender needs to prioritize external exposure, privileged boundary crossings, credential material, and blast radius. Then the defender needs to change production systems without breaking them. In environments with uptime constraints or fragile software, even a correct patch may need careful sequencing, validation, and rollback planning. None of those tasks disappears because a model can summarize code faster. (fsisac.com)

That asymmetry gets sharper when patching is not immediately possible. Forescout argues that many defenders must shift from a pure remediation mindset toward mitigation, dynamic controls, segmentation, and network-level enforcement because operational reality often prevents immediate software updates. FS-ISAC advises enterprises to assume active or imminent exploitation by default for exposed vulnerabilities, realign vulnerability prioritization, and support same-day decisioning with automated test harnesses and real-time inventory. Those recommendations are practical precisely because they recognize the asymmetry. The problem is not only how fast a patch can be approved. The problem is how quickly the organization can determine what is exposed, whether exploitation is plausible, and what it can do before patching completes. (Forescout)

The table below is a better way to think about the change than the vague claim that “AI accelerates security.”

| Attack chain stage | Human-era bottleneck | What AI changes | Direct consequence for defenders |
|---|---|---|---|
| Source review | Expert time to understand unfamiliar code paths | Faster code reading, hypothesis generation, pattern matching, variant search | New advisories become actionable faster, especially in source-available software |
| Patch diff analysis | Manual narrowing from a large codebase to the true fix area | Rapid focus on changed auth, parser, upload, serialization, and boundary logic | The time between patch release and exploit hypotheses may shrink |
| Exploit drafting | Skilled exploit development and iterative refinement | Faster creation and adaptation of proof-of-concept logic | N-day pressure can intensify before normal enterprise patch cadence completes |
| Binary reverse engineering | High expertise and time cost on closed-source targets | Better assistance on decompilation, path tracing, and binary reasoning | Closed-source software loses some of its historical analysis friction |
| Attack-chain assembly | Manual stitching of foothold, privilege, and movement steps | Quicker enumeration of plausible multi-step paths | Defenders must think in chains, not individual CVEs |
| Validation at scale | Slow analyst workflows to confirm exposure and reachability | Faster test generation and automated triage on both sides | Proof of exploitability becomes a race, not a reporting step |

This table is a synthesis, but every row is grounded in primary-source claims that frontier models can read and reason about code, perform binary analysis, discover vulnerabilities, draft exploits, and support more advanced chaining. (Unit 42)

Open-Source Code Gets Pressured First, but Not Last

Open source is under special pressure in the near term, and Unit 42 says that directly. Its April 2026 analysis states that frontier models show stronger ability on source-available code than on compiled-only code, which makes open-source software the first place where capability improvements convert most obviously into vulnerability discovery and exploit-path generation. The same analysis notes that nearly all commercial software includes open-source components, so this is not only an “open source problem.” It is a software supply-chain problem that reaches commercial products through dependencies, embedded libraries, images, frameworks, and upstream projects that many downstream users never directly inspect. (Unit 42)

It is important to be precise here. Open source is not unsafe because it is open. Public code has always enabled defensive review, broader inspection, bug bounties, and fast community patching when maintainers are resourced. The issue in this article is different. Source transparency is now more directly machine-readable. A model does not get tired while traversing error handling, parser branches, upload logic, auth edges, and boundary assumptions across a large codebase. If the code is public, the friction required to build and refine vulnerability hypotheses can drop sharply. That changes time-to-insight even if it does not magically produce perfect exploits every time. (Unit 42)

This matters because modern enterprises do not consume software as isolated products. They consume chains of dependencies. A team may not know that a vulnerable parser, compression library, crypto component, or embedded SQLite build is buried inside a vendor appliance, a desktop product, a container image, a CI plugin, or a mobile package. That was already a governance problem in the Log4Shell era. In an AI-tempo environment, it becomes a speed problem. The faster attackers can narrow their hypothesis space across public code and public patches, the less time defenders have to discover that the affected component exists in their environment at all. That is why real-time inventory and dependency mapping show up so often in current guidance. (fsisac.com)
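The "does this component exist in our environment at all" question can be sketched as a transitive lookup over an inventory. This is a minimal illustration, not a real SBOM tool: the asset names, component names, and the two dictionaries below are hypothetical stand-ins for whatever inventory and dependency data an organization actually holds.

```python
from collections import deque

# Hypothetical inventory: components each asset ships directly.
ASSET_COMPONENTS = {
    "vendor-appliance": ["appliance-firmware"],
    "ci-runner-image": ["build-plugin", "openssl"],
    "desktop-client": ["ui-toolkit"],
}

# Hypothetical dependency edges: component -> components it bundles.
COMPONENT_DEPS = {
    "appliance-firmware": ["embedded-db", "compression-lib"],
    "build-plugin": ["archive-parser"],
    "archive-parser": ["compression-lib"],
    "ui-toolkit": [],
}


def transitive_components(roots, deps):
    """Return every component reachable from roots, including the roots."""
    seen = set()
    queue = deque(roots)
    while queue:
        component = queue.popleft()
        if component in seen:
            continue  # already expanded; also guards against cycles
        seen.add(component)
        queue.extend(deps.get(component, []))
    return seen


def assets_containing(component, assets=ASSET_COMPONENTS, deps=COMPONENT_DEPS):
    """List assets that include the component directly or transitively."""
    return sorted(
        asset
        for asset, roots in assets.items()
        if component in transitive_components(roots, deps)
    )


affected = assets_containing("compression-lib")
```

In this toy data, the compression library never appears in any asset's direct component list, yet two assets carry it. The lookup is only as fast as the inventory is current, which is the point the guidance keeps returning to.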

There is also a resourcing issue. Unit 42 notes that open-source software often has limited maintainer bandwidth. DARPA’s AI Cyber Challenge was built around the reality that much critical code is open source and that vulnerability finding and patching remains slow, expensive, and dependent on a limited workforce. AI, in the near term, does not automatically solve that asymmetry for maintainers. It may instead expose latent weaknesses faster than many projects can safely absorb. That is why the same capability that helps defenders find flaws in upstream components can also make defensive lag more dangerous when patching, packaging, and downstream rollout do not happen quickly. (Unit 42)

Closed-Source Software Will Not Stay Insulated

If open source feels the pressure first, closed-source software will feel it next.

Anthropic says Claude Mythos is highly capable at reverse engineering closed-source binaries and has been used to find vulnerabilities in major closed-source browsers and operating systems. OpenAI’s GPT-5.4-Cyber documentation explicitly highlights binary reverse engineering for analyzing compiled software without source access. Those statements matter because defenders sometimes treat closed-source products as if their code secrecy still provides substantial delay against broad analysis. That delay is getting thinner. Source availability still helps the attacker more, but it no longer defines the whole landscape. (red.anthropic.com)

That has real implications for enterprise software. Many of the most operationally painful vulnerabilities do not live in developer-facing libraries. They live in appliances, gateways, VPN platforms, file transfer products, remote support tools, and large business platforms that sit close to identity or sensitive data. The historical reason those products were hard to study at scale was not that they were conceptually impossible to analyze. It was that binary analysis, protocol understanding, and exploit drafting took time and expertise. AI does not eliminate that difficulty, but it lowers the cost of iteration. Once that cost drops, the products that defenders used to hope would stay out of the mass-analysis blast radius become more attractive. They are few in number, widely deployed, highly privileged, and internet-exposed. That is excellent attacker economics. (red.anthropic.com)

This is one reason the public conversation should not be trapped in “Will AI find more zero-days?” That question is too narrow. A more useful question is whether AI is reducing the time and expertise needed to move from code or patch artifact to a working exploit hypothesis against a target class that matters. The answer, based on the primary sources now available, is yes. And the enterprise risk profile is not limited to open-source codebases. It reaches the commercial software stack through binary reasoning, patch diffing, dependency discovery, and exploit-chain assembly. (Unit 42)

The Real Danger Is Not Just Zero-Days

Zero-days get attention because they feel exceptional. In practice, defenders often lose on N-days.

Anthropic’s technical discussion makes this point sharply. The company says turning N-days into exploits is especially dangerous because the patch itself can become a roadmap. Once a fix is public, the attacker’s search space narrows. The question is no longer “What might be wrong in this product?” but “What did the vendor just change, and what class of weakness does that imply?” That is fertile ground for AI-assisted analysis because models are increasingly good at reading code, summarizing semantic differences, tracing likely validation gaps, and proposing exploit paths against familiar bug classes. (red.anthropic.com)

This is why “wait for the patch, then schedule the patch” is becoming a weaker default response on exposed systems. If the patch reveals too much too early, the time between vendor release and attacker weaponization can shrink. FS-ISAC’s guidance to assume active or imminent exploitation by default for exposed vulnerabilities reflects this logic, even when a vulnerability has not yet shown up in a centralized feed. CISA’s KEV catalog remains essential because it tells defenders what is demonstrably being used in the wild, but KEV is not meant to guarantee that everything not yet listed is low urgency. In an AI-tempo environment, externally exposed issues with high-value consequence should increasingly be treated as if time is scarce from the start. (fsisac.com)

That suggests a different prioritization model. CVSS still matters, but it is not enough. Defenders need to prioritize internet reachability, privilege consequence, identity adjacency, authentication bypass potential, upload surfaces, deserialization surfaces, management-plane exposure, and the operational value of successful compromise. A medium-scored issue in a strange parser buried behind four controls may matter less than a patch-diff-visible auth flaw in a remote management product. AI makes the ranking problem more urgent because it helps attackers spend their time where the expected payoff is highest. (Unit 42)
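One way to make that ranking concrete is a small scoring sketch that starts from CVSS and then adjusts for exposure and consequence. The field names and weights below are illustrative assumptions, not values taken from any of the cited sources or from a published standard. The example compares two findings with the same CVSS score.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    cvss: float                       # base score, 0.0 to 10.0
    internet_reachable: bool = False
    management_plane: bool = False
    auth_bypass: bool = False
    identity_adjacent: bool = False
    upload_or_deserialization: bool = False
    controls_in_front: int = 0        # compensating controls on the path


# Assumed weights for illustration only; tune to the environment.
WEIGHTS = {
    "internet_reachable": 4.0,
    "management_plane": 3.0,
    "auth_bypass": 3.0,
    "identity_adjacent": 2.0,
    "upload_or_deserialization": 2.0,
}
CONTROL_DISCOUNT = 1.0   # subtracted per compensating control
MAX_CONTROLS = 4         # cap so controls cannot discount without limit


def priority(finding: Finding) -> float:
    """CVSS baseline plus exposure and consequence, minus controls."""
    score = finding.cvss
    for attribute, weight in WEIGHTS.items():
        if getattr(finding, attribute):
            score += weight
    score -= CONTROL_DISCOUNT * min(finding.controls_in_front, MAX_CONTROLS)
    return score


findings = [
    Finding("buried-parser-bug", cvss=6.5, controls_in_front=4),
    Finding(
        "remote-mgmt-auth-flaw",
        cvss=6.5,
        internet_reachable=True,
        management_plane=True,
        auth_bypass=True,
    ),
]
ranked = sorted(findings, key=priority, reverse=True)
```

Both findings carry the same CVSS score, but the remote management flaw ranks first because the score accounts for reachability and consequence. The exact numbers matter less than the fact that exposure attributes are inputs at all.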

This is also why the public argument should not become “AI means software is doomed.” Forescout makes a useful point here. Faster AI-driven discovery does not automatically mean the software ecosystem is less secure in the long run. It means latent risk is exposed faster. That can be good for defenders if they use the same capabilities to find, verify, and fix weaknesses earlier in the lifecycle. Google’s Big Sleep example and DARPA’s AIxCC project both support that possibility. But the short-term danger is real because deployment friction, change control, and visibility gaps still live on the defender side. Attackers can benefit from speed immediately. Defenders only benefit when speed is wired into operations. (Forescout)

Five CVEs That Show How Little Slack Defenders Already Have

The AI story becomes more concrete when placed next to real vulnerabilities that security teams have already had to manage. These cases do not prove that AI was the cause of exploitation. That would be too strong, and the evidence is often not public. They prove something else, which is enough for our purposes: the modern enterprise patch window was already thin, especially for internet-facing systems, and many of the highest-impact cases involved exactly the sort of products that an AI-assisted attacker would rationally prioritize. (TECHCOMMUNITY.MICROSOFT.COM)

| CVE | Product class | Why it spread risk so quickly | What it teaches in an AI-tempo environment |
|---|---|---|---|
| CVE-2025-31324 | SAP NetWeaver business platform | Internet-exposed enterprise component, unauthenticated upload path, high-value post-exploit access | If the exploit path is simple and the landing zone is stable, time-to-scan and time-to-webshell matter more than advisory maturity |
| CVE-2024-3400 | PAN-OS GlobalProtect firewall | Edge device, unauthenticated RCE, immediate control over a boundary system | High-consequence perimeter bugs cannot wait for routine patch cadence |
| CVE-2024-1709 | ConnectWise ScreenConnect | Remote management product with privileged operational role | Attackers do not need many targets when the target class gives broad downstream control |
| CVE-2023-46805 plus CVE-2024-21887 | Ivanti Connect Secure chain | Authentication bypass chained with command injection, zero-day use, staggered patching | Multi-step chains can be more important than any single CVE viewed in isolation |
| CVE-2023-34362 | MOVEit Transfer | Internet-facing file transfer, direct path to enterprise data, unauthenticated exploitability | High-value data platforms compress decision time even without exotic exploit engineering |

The specific details differ, but the operational lesson is the same. When a vulnerability sits on the edge, inside a management plane, or near data concentration points, defenders do not have much slack even under pre-AI conditions. AI compresses the discovery and weaponization side of that equation further. (TECHCOMMUNITY.MICROSOFT.COM)

SAP NetWeaver and CVE-2025-31324

CVE-2025-31324 affected SAP NetWeaver’s Visual Composer Metadata Uploader and was described in the CVE ecosystem as a missing authorization check that allowed unrestricted file upload. Microsoft said it observed active exploitation and that attackers could deploy web shells and execute arbitrary commands with SAP service permissions. Rapid7 said exploitation in customer environments dated back to at least March 27, 2025, before public disclosure on April 24, and documented JSP web shells dropped into a specific NetWeaver path. CISA later added the issue to KEV. (TECHCOMMUNITY.MICROSOFT.COM)

Why is this a useful example for the AI era? Because it combines several attributes that dramatically reduce attacker uncertainty. The target class is valuable. The vulnerable function sits on a known enterprise platform. The exploit condition is simple enough to support broad scanning. The post-exploit move is obvious: plant web shell, execute commands, pivot if possible. AI does not need to invent a novel intrusion model here. It only needs to shorten the time from advisory understanding to reproducible operational logic. The simpler the path, the more dangerous time compression becomes. (TECHCOMMUNITY.MICROSOFT.COM)

The lesson for defenders is not only “patch SAP quickly.” It is that business-critical enterprise platforms deserve exploit-first validation on day zero. As soon as a severe advisory appears, the team should answer four questions in parallel. Do we run the affected component? Is it internet-reachable or adjacent to exposed systems? Can we identify signs of prior compromise in the exact paths observed by researchers? If patching is delayed, what controls actually reduce exploitability rather than just reduce anxiety? Those questions matter more than waiting for a neat severity summary in a dashboard. (TECHCOMMUNITY.MICROSOFT.COM)

PAN-OS GlobalProtect and CVE-2024-3400

CVE-2024-3400 was an unauthenticated remote code execution vulnerability affecting certain PAN-OS versions and configurations. NVD describes it as allowing an unauthenticated attacker to execute code with root privileges on the firewall under affected conditions. CISA added it to KEV with required action deadlines, and Palo Alto Networks warned that attacks exploiting the issue were increasing and that public proof-of-concept material, including persistence-related post-exploit examples, existed. (NVD)

This is the classic patch-window killer. It sits on a perimeter security product. It is reachable from the internet in common deployments. Successful exploitation lands on an extremely sensitive system. Even without AI, this is urgent. With AI in the picture, perimeter issues like this become even more dangerous because attackers can prioritize them with almost no ambiguity. There is no need to debate whether the product is important, whether the surface matters, or whether the blast radius is meaningful. Those answers are built into the product category. (NVD)

The defensive lesson is equally clear. “Wait for the next maintenance window” is not a serious default for unauthenticated, internet-facing boundary systems when exploit pressure is rising. If the patch is not immediately deployable, temporary mitigation and signature-based controls have to be applied with the assumption that they are buying time, not resolving the underlying condition. In the AI era, that distinction becomes critical. Temporary controls are often the only way to shrink exploitability before the patch window closes. (NVD)

ScreenConnect and CVE-2024-1709

CVE-2024-1709 affected ConnectWise ScreenConnect and was described by NVD as an authentication bypass vulnerability. Security authorities warned that it was actively exploited, and ConnectWise directed on-premises users to upgrade to fixed versions. This was not just another web application flaw. ScreenConnect sits in a class of software that often has direct operator trust, sensitive visibility, and broad remote access capability. A compromise of that platform can be strategically more valuable than a compromise of a single exposed web server. (NVD)

That matters when thinking about AI-assisted prioritization. An attacker does not need a million possible targets. The attacker needs target classes with high expected return. Remote management software, support tooling, jump systems, VPN concentrators, and file transfer platforms rank very high on that list. In many enterprises, one successful exploit against such a system buys far more downstream leverage than a random application bug ever could. AI lowers the cost of evaluating and operationalizing those categories. The result is not universal compromise. It is more rational, more targeted pressure against the systems defenders can least afford to lose. (NVD)

For defenders, the important correction is to stop treating “privileged operational software” as just another asset class. These products deserve their own playbooks, faster validation, stronger exposure controls, and dedicated retest steps after every emergency change. If the software helps administrators reach the rest of the estate, it cannot live on the same patch tempo as low-consequence systems. (fsisac.com)

Ivanti Connect Secure, CVE-2023-46805 and CVE-2024-21887

Ivanti’s 2024 incident cycle remains one of the best examples of why defenders need to think in chains rather than isolated CVEs. Public sources described CVE-2023-46805 as an authentication bypass in the web component and CVE-2024-21887 as a command injection issue. Authorities warned that they were being actively exploited as zero-days, and mitigation guidance included temporary XML-based workarounds before final patches were broadly available. Later recommendations also included isolating or disconnecting compromised appliances and running integrity checks. (NVD)

This is exactly the kind of scenario where AI changes the economics of offense. A chained path is often where the real complexity lives. One weakness gets you around a gate. Another weakness gives execution or control. Humans have always done this, but chain construction and chain testing take effort. A model that can reason across boundary conditions, product behavior, and exploitability hypotheses can reduce that effort. That is why Unit 42’s emphasis on more advanced vulnerability chaining is more important than generic statements about bug finding. Attackers do not win by discovering interesting code smells. They win by discovering viable paths. (Unit 42)

The defensive lesson is to stop measuring urgency one CVE at a time when architecture suggests the risk is combinatorial. If an externally exposed product is already under active scrutiny and an authentication boundary weakness exists anywhere near code execution, the organization should treat the pair as an attack path problem. That means faster proof-of-exploitability testing, faster mitigation, and faster compromise assessment. The vulnerability count is less important than the chain shape. (Cyber Security Agency of Singapore)
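Treating a pair of findings as an attack path can be sketched as a simple grouping step: flag any asset where a gate-bypass weakness and an execution weakness coexist. The primitive labels, asset names, and the placeholder finding ID below are assumptions for illustration. Only the two Ivanti CVE classifications come from the public descriptions cited above.

```python
from collections import defaultdict

# Assumed primitive classes; a real taxonomy would be richer.
GATE_BYPASS = {"auth_bypass"}
EXECUTION = {"command_injection", "file_upload", "deserialization"}

# (asset, finding id, primitive). Asset names are hypothetical.
FINDINGS = [
    ("vpn-gateway-01", "CVE-2023-46805", "auth_bypass"),
    ("vpn-gateway-01", "CVE-2024-21887", "command_injection"),
    ("intranet-wiki", "EXAMPLE-0001", "command_injection"),  # placeholder ID
]


def chain_candidates(findings):
    """Return assets holding both a gate bypass and an execution primitive."""
    by_asset = defaultdict(lambda: {"bypass": [], "execution": []})
    for asset, finding_id, primitive in findings:
        if primitive in GATE_BYPASS:
            by_asset[asset]["bypass"].append(finding_id)
        elif primitive in EXECUTION:
            by_asset[asset]["execution"].append(finding_id)
    return {
        asset: (sorted(groups["bypass"]), sorted(groups["execution"]))
        for asset, groups in by_asset.items()
        if groups["bypass"] and groups["execution"]
    }


chains = chain_candidates(FINDINGS)
```

The intranet wiki has an execution bug but nothing that removes the authentication gate in front of it, so it is not flagged here. The gateway is flagged because the two findings together form a path, regardless of how either one scores alone.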

MOVEit Transfer and CVE-2023-34362

NVD describes CVE-2023-34362 as a SQL injection vulnerability in MOVEit Transfer that allowed an unauthenticated attacker to gain access to the underlying database, and notes exploitation in the wild in May and June 2023 over HTTP or HTTPS. On its own, that description already explains why the case matters. MOVEit is not just another application. It is a data transfer platform, which means it often sits near sensitive data flows and external-facing interfaces. A successful exploit against that kind of product can produce immediate extortion, theft, or follow-on intrusion value. (NVD)

For the purposes of this article, MOVEit matters because it shows how little time defenders often have even without invoking futuristic AI narratives. If a high-value, internet-facing product presents a clean pre-auth path, the advisory-to-impact interval can already be brutal. AI increases the danger not by changing the importance of such systems, but by reducing the attacker’s search and operationalization cost around them. That is why the organizations most likely to struggle are not the ones with the longest vulnerability lists. They are the ones with poor exposure visibility and slow proof windows on the systems that matter most. (NVD)

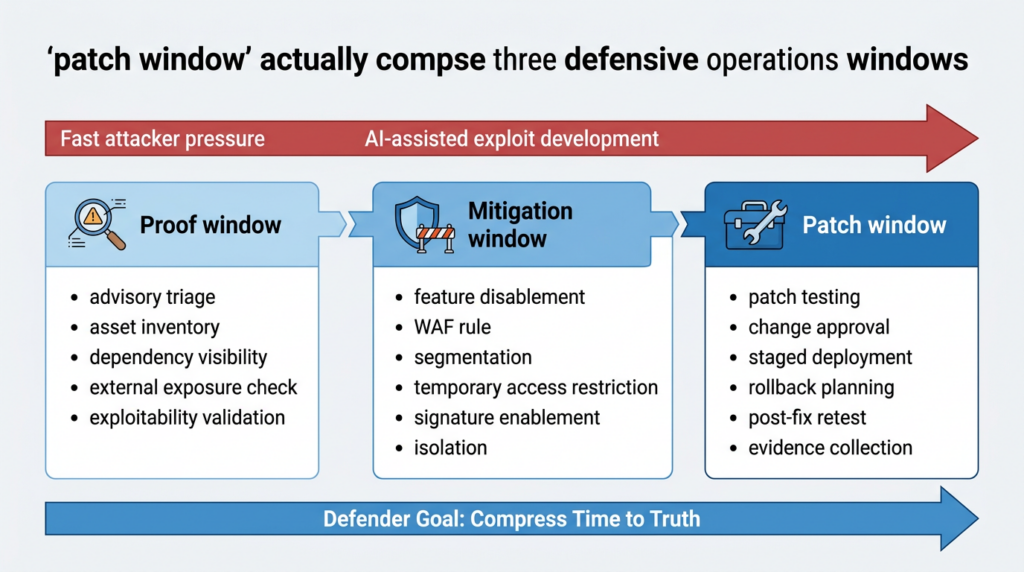

The Patch Window Is Really Three Different Windows

Most organizations talk about the patch window as if it were a single thing. In practice, defenders live inside three different clocks.

The first is the proof window. That is the time between learning of a vulnerability and knowing whether your specific environment is actually exposed in a meaningful way. Do you run the software? Which versions? Which assets? Is the vulnerable path reachable? Is the asset public-facing? Does the weakness cross an identity boundary? Is there a realistic route to code execution, sensitive data, or privileged access? The proof window is where many defenders lose time because they do not have current inventory, dependency visibility, or fast validation workflows. AI makes this more dangerous because attackers may be accelerating while defenders are still figuring out whether the problem is theirs. (fsisac.com)

The second is the mitigation window. This is the time required to lower exploitability before a full patch can be safely deployed. Mitigation can mean disabling a risky feature, applying a vendor workaround, turning on a signature, restricting reachability, adding WAF controls, segmenting the asset, rotating credentials, or isolating a management plane. This window matters because real environments often cannot patch immediately. Forescout’s guidance is valuable here because it explicitly frames many operational environments as mitigation-first rather than patch-first. That is not a sign of failure. It is a sign of reality. (Forescout)

The third is the patch window in the narrow sense. This is the time from approved fix to successful deployment and post-deployment verification. Even this step is not only about rollout. It is also about proving that the vulnerable path is gone, that no unpatched or drifted systems remain, and that the patch did not simply move the attack to an adjacent path or variant. OpenAI’s security material emphasizes an ecosystem that continuously identifies, validates, and fixes issues rather than relying on episodic audits and static bug inventories. That mindset fits the three-window model better than the old assumption that deploying a patch is the same thing as closing risk. (OpenAI)

This model helps explain why so many security teams feel overwhelmed during major advisories. They try to do everything inside the patch window, even when the first urgent need is proof and the second urgent need is mitigation. In an AI-tempo environment, compressing the proof window may be the most important improvement of all, because it tells you where to spend scarce time before the attacker does. Once proof is fast, mitigation becomes targeted. Once mitigation is targeted, patching becomes a controlled operation rather than blind panic. (fsisac.com)
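The three clocks are easier to manage once they are measured separately. The following is a minimal sketch, assuming a team records a handful of timestamps per advisory. The `ResponseTimeline` class and its field names are hypothetical, and starting the mitigation clock at exposure confirmation is one possible choice, not the only defensible one.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, Optional


@dataclass
class ResponseTimeline:
    advisory_seen: datetime
    exposure_confirmed: Optional[datetime] = None   # closes the proof window
    mitigation_applied: Optional[datetime] = None   # closes the mitigation window
    fix_approved: Optional[datetime] = None         # opens the narrow patch window
    fix_verified: Optional[datetime] = None         # closes it, after retest

    def windows(self) -> Dict[str, Optional[timedelta]]:
        """Return each window's duration, or None while it is still open."""
        def span(start, end):
            return end - start if start and end else None

        return {
            "proof": span(self.advisory_seen, self.exposure_confirmed),
            "mitigation": span(self.exposure_confirmed, self.mitigation_applied),
            "patch": span(self.fix_approved, self.fix_verified),
        }


seen = datetime(2025, 1, 6, 9, 0)
timeline = ResponseTimeline(
    advisory_seen=seen,
    exposure_confirmed=seen + timedelta(hours=2),
    mitigation_applied=seen + timedelta(hours=5),
)
for name, duration in timeline.windows().items():
    print(name, duration if duration is not None else "open")
```

Tracking the windows separately shows where time is actually lost. A long proof window points at inventory and validation gaps, while a long patch window points at change control or verification.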

What Fast Defenders Do Differently

A credible response to AI-driven vulnerability discovery does not start with more dashboards. It starts with faster truth.

That means real-time or near-real-time asset inventory, software inventory, and dependency visibility. If a new advisory drops and the team cannot answer within minutes whether it runs the affected component or product, the proof window is already too long. This is especially important for open-source components and embedded dependencies, where exposure often hides inside images, vendor distributions, desktop tools, and transitive packages. The defender does not need perfect omniscience on day one. It does need a workflow that shortens the time from advisory to scoping. (fsisac.com)

A simple SBOM-first triage workflow is one practical way to do that:

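    # Generate a CycloneDX SBOM for the current directory.
    syft dir:. -o cyclonedx-json > sbom.json

    # List components of interest as tab-separated name, version, and purl.
    jq -r '
      .components[]
      | select(.name | test("sqlite|openssl|log4j|zlib|curl"; "i"))
      | [.name, .version, .purl]
      | @tsv
    ' sbom.json

    # Report only known vulnerabilities that already have a fixed version.
    grype sbom:sbom.json --only-fixed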

This does not prove exploitability. It does something more basic but still essential. It answers whether the component is present, where it appears, and whether the environment is carrying versions that deserve immediate attention. In the AI era, even that first scoping step matters because the time lost deciding whether the issue is relevant may be greater than the time the attacker needs to narrow a viable path. (fsisac.com)
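The same scoping question can be asked programmatically when an advisory names a component and a fixed version. This is a rough sketch under stated assumptions: the helper names are hypothetical, the version parser is deliberately naive (dotted numeric versions only, no epochs, pre-release tags, or letter suffixes), and the sample versions are placeholders rather than real advisory data.

```python
import json
import re


def load_sbom(path):
    """Load a CycloneDX JSON SBOM, such as the sbom.json produced by syft."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


def parse_version(text):
    """Naive parse: keep only the numeric runs, so '3.44.2' -> (3, 44, 2)."""
    return tuple(int(part) for part in re.findall(r"\d+", text))


def affected_components(sbom, name, fixed_in):
    """Return (name, version, purl) for components that sort below fixed_in."""
    fixed = parse_version(fixed_in)
    hits = []
    for component in sbom.get("components", []):
        version = component.get("version") or ""
        if component.get("name", "").lower() != name.lower() or not version:
            continue
        if parse_version(version) < fixed:
            hits.append((component["name"], version, component.get("purl", "")))
    return hits


# Inline stand-in for load_sbom("sbom.json"); versions are placeholders.
sbom = {
    "components": [
        {"name": "sqlite", "version": "3.44.2", "purl": "pkg:generic/sqlite@3.44.2"},
        {"name": "sqlite", "version": "3.46.0", "purl": "pkg:generic/sqlite@3.46.0"},
        {"name": "zlib", "version": "1.2.11", "purl": "pkg:generic/zlib@1.2.11"},
    ]
}
for hit in affected_components(sbom, "sqlite", "3.45.0"):
    print("\t".join(hit))
```

A production version would use an ecosystem-aware version comparison and match on purl rather than bare names, since distributions often backport fixes without changing the upstream version number.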

Fast defenders also do patch-diff triage instead of treating vendor updates as opaque. Attackers will inspect patches to infer bug classes and target logic. Defenders should do the same, not to write exploits, but to understand urgency and likely reachability.

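    # Fetch release tags, then diff the fixed release against its predecessor (tags and paths are placeholders).
    git fetch origin --tags
    git diff v1.4.2..v1.4.3 -- src/ auth/ parser/ upload/ > advisory.diff

    # Surface changed lines that touch security-relevant logic.
    rg -n 'auth|token|session|upload|path|exec|deserialize|sql|rowid|bounds|length' advisory.diff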

This kind of triage is not a substitute for deep review. It is a way to compress the proof window. If the patch shows changes in authentication logic, upload validation, parser bounds checks, or command invocation, the internal response should become sharper immediately. The point is to move from “we heard there is a critical issue” to “we have a plausible idea of what class of flaw changed and where to look in our own deployments.” Anthropic’s warning that patches can become roadmaps is exactly why defenders need this habit. (red.anthropic.com)
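To go one step beyond a keyword search, the changed lines can be bucketed per file so the team sees which class of logic moved and where. The sketch below assumes a unified diff like the `advisory.diff` produced above. The category names and their keyword groupings are assumptions that loosely mirror the `rg` pattern, and keyword hits are hints for a reviewer, not findings.

```python
import re
from collections import Counter

# Assumed buckets; adjust the keywords to the codebase under review.
CATEGORIES = {
    "authentication": re.compile(r"auth|token|session", re.I),
    "file_handling": re.compile(r"upload|path", re.I),
    "execution": re.compile(r"exec|deserialize", re.I),
    "data_access": re.compile(r"sql|rowid", re.I),
    "memory_bounds": re.compile(r"bounds|length", re.I),
}


def classify_diff(diff_text):
    """Count added or removed lines per category for each file in a unified diff."""
    per_file = {}
    current = None
    in_hunk = False
    for line in diff_text.splitlines():
        if line.startswith("diff --git "):
            in_hunk = False                      # a new file section begins
        elif not in_hunk and line.startswith("+++ "):
            path = line[4:]
            current = path[2:] if path.startswith("b/") else path
            per_file[current] = Counter()
        elif line.startswith("@@"):
            in_hunk = True                       # hunk header; content follows
        elif in_hunk and current and line.startswith(("+", "-")):
            for label, pattern in CATEGORIES.items():
                if pattern.search(line[1:]):
                    per_file[current][label] += 1
    return per_file


example = "\n".join([
    "diff --git a/auth/login.c b/auth/login.c",
    "--- a/auth/login.c",
    "+++ b/auth/login.c",
    "@@ -10,3 +10,4 @@",
    " int check(void) {",
    "-    return token != NULL;",
    "+    return token != NULL && session_valid(token);",
    " }",
])
for path, counts in classify_diff(example).items():
    print(path, dict(counts))
```

A file whose changes cluster in one bucket, such as authentication, is a reasonable first place to look when deciding which deployments to test.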

Fast defenders also keep exploitability and compromise assessment close together. The SAP NetWeaver case is a good example. Rapid7 documented a specific JSP landing path for observed web shells. That makes immediate filesystem triage useful while patch planning is still underway.

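    # List JSP files in the observed web shell directory with modification times (requires GNU find).
    find /usr/sap \
      -path '*/j2ee/cluster/apps/sap.com/irj/servlet_jsp/irj/root/*.jsp' \
      -type f \
      -printf '%TY-%Tm-%Td %TT %p\n'

    # Flag files in that directory that reference command-execution primitives.
    grep -R --line-number -E 'Runtime\.getRuntime|ProcessBuilder|cmd\.exe|/bin/sh' \
      /usr/sap/*/j2ee/cluster/apps/sap.com/irj/servlet_jsp/irj/root/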

This is not a complete incident response workflow. It is an example of how defenders can overlap vulnerability response with compromise hunting instead of handling them as separate calendar events. That overlap matters when time is short. (Rapid7)

The old model of vulnerability response too often assumed a linear sequence: advisory, ticket, patch meeting, rollout, then maybe a later validation exercise. That sequence is too slow for exposed systems when exploit development costs are dropping. NIST’s long-standing penetration testing guidance is still useful because it defines penetration testing as realistic attack simulation against real systems and data using attacker-like tools. The problem is not that realistic testing was wrong. The problem is that it often happened too infrequently for the attack tempo that exposed systems now face. (NIST Publications)

Continuous Validation Beats Periodic Reassurance

The most practical adaptation is not a new slogan. It is a new validation cadence.

If attack paths can be identified and operationalized faster, then defenders cannot rely entirely on periodic assessments that produce static snapshots. A quarterly penetration test can still be valuable for deep coverage, specialist reasoning, and architecture review. But it cannot be the only place where exploitability gets tested. Attack surfaces change between quarters. Dependencies change. Cloud routing changes. New products go live. Emergency patches introduce regressions. Exposure that did not exist last month may exist now. A world of continuous change needs continuous verification. (fsisac.com)

This is the operating idea behind the recent shift in vendor and research language. OpenAI talks about a security ecosystem that continuously identifies, validates, and fixes issues as software is written. Anthropic’s Glasswing program focuses not only on vulnerability detection but also on penetration testing and binary testing for defenders. Google’s Big Sleep example demonstrates that AI can, at least in some cases, surface real vulnerabilities before attackers exploit them. These are different organizations with different programs, but they point in the same direction. The defender-side use of AI becomes meaningful only when it collapses time inside a real operational loop. (OpenAI)

Some teams are already responding by building shorter loops between advisory intake, exploitability testing, evidence capture, and retest after fixes. In that narrow and practical sense, platforms such as Penligent are useful when they help turn a new vulnerability signal into a scoped validation run, collect evidence, and then rerun the path after remediation to prove it is dead. Penligent’s own published material around Project Glasswing argues for continuous penetration testing precisely because the dangerous gap is often the one between “we believe the issue is fixed” and “we verified the attack path no longer works.” (Penligent)

The core point is not about any single tool. It is about the control loop. If the loop ends when the patch is approved or even deployed, the organization still does not know enough. In the AI era, proof after change matters as much as the change itself. The attack path either still exists or it does not. The only dependable answer is validation. (OpenAI)

Evidence Matters More Than Narrative

The AI security market is filling with summaries, co-pilots, rankings, and claims of automated reasoning. Much of it is useful. Some of it is not. The right question is not whether a system can talk confidently about a vulnerability. The right question is whether it can produce evidence a defender can act on.

Evidence means showing the asset or dependency is present. It means showing the vulnerable path is reachable. It means showing whether the issue is externally reachable, internally reachable after a boundary crossing, or merely theoretical in the current environment. It means preserving request traces, file paths, exploit conditions, and post-fix retest artifacts that can survive audit or incident review. That is a much higher bar than producing a polished explanation of CVE risk. In an AI-tempo environment, the teams that win are the ones that can convert a noisy signal into a small set of defensible facts quickly. (fsisac.com)

That is also why repeatability matters. One useful idea in Penligent’s public material is the emphasis on retest discipline and evidence-backed validation rather than one-off scan output. That distinction is important. An AI-generated narrative might tell you what is likely. A repeatable validation artifact tells you what happened in your environment before and after the change. Only the latter actually closes the proof window. (Penligent)
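A small record type shows what such an artifact might hold. This is a sketch under stated assumptions: `EvidenceRecord`, `ValidationRun`, the `Reachability` values, and the status labels are hypothetical names chosen to mirror the evidence questions above, not a schema from any vendor or standard.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Reachability(Enum):
    EXTERNAL = "external"
    INTERNAL_AFTER_BOUNDARY = "internal_after_boundary"
    THEORETICAL = "theoretical"


@dataclass
class ValidationRun:
    ran_at: str                      # ISO 8601 timestamp, stored as text
    path_exploitable: bool
    artifacts: List[str] = field(default_factory=list)  # traces, file paths


@dataclass
class EvidenceRecord:
    advisory_id: str
    asset: str
    component_present: bool
    reachability: Reachability
    pre_fix: Optional[ValidationRun] = None
    post_fix: Optional[ValidationRun] = None

    def status(self) -> str:
        """Summarize what the recorded evidence supports, and nothing more."""
        if not self.component_present:
            return "not_affected"
        if self.pre_fix is None:
            return "unproven"
        if not self.pre_fix.path_exploitable:
            return "not_exploitable"
        if self.post_fix is None:
            return "exposed_awaiting_retest"
        if self.post_fix.path_exploitable:
            return "still_exploitable"
        return "verified_closed"


record = EvidenceRecord(
    advisory_id="ADVISORY-EXAMPLE",          # placeholder identifier
    asset="transfer-gateway",
    component_present=True,
    reachability=Reachability.EXTERNAL,
    pre_fix=ValidationRun("2025-01-06T11:00:00Z", True, ["trace-001.har"]),
)
print(record.status())
record.post_fix = ValidationRun("2025-01-07T15:30:00Z", False, ["trace-002.har"])
print(record.status())
```

The design choice worth noting is that `verified_closed` is unreachable without both a pre-fix and a post-fix run. A deployed patch on its own never moves the record past `exposed_awaiting_retest`.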

There is a broader lesson here. AI will create a lot of convincing language about risk. Security teams should reward systems that create convincing evidence instead. This is not a philosophical preference. It is an operational necessity when exploit development and attack-chain assembly are accelerating. (red.anthropic.com)

How Defenders Can Still Win Time Back

The situation is serious, but it is not hopeless. In fact, some of the strongest public evidence in this space points to a real defensive upside.

Google Project Zero and DeepMind’s Big Sleep work is important precisely because it is concrete. The system found a real-world vulnerability in SQLite before the flawed code reached an official release, and Google described it as the first real-world vulnerability discovered by the agent. Project Zero went further and called this a promising path toward finally creating an asymmetric advantage for defenders. That language matters. It means the idea of defender asymmetry is no longer theoretical rhetoric. It is an explicit goal of current research. (googleprojectzero.blogspot.com)

DARPA’s AI Cyber Challenge makes the same point at ecosystem scale. The challenge exists because critical code, especially open-source code, needs better vulnerability discovery and patching capacity than the current workforce and methods can provide. DARPA says the AI systems built by competing teams found and patched real-world vulnerabilities, and it is pushing those Cyber Reasoning Systems toward open release. That is a long-term play to change the economics of defense, not just offense. If defenders can discover, triage, patch, and validate at machine speed before attackers operationalize the same weaknesses, then the asymmetry can shift. But it only shifts when those capabilities are deployed inside actual engineering and security workflows. (darpa.mil)

Anthropic’s Glasswing and OpenAI’s cyber programs point in the same direction. Glasswing is designed to give defenders a head start across vulnerability detection, binary testing, endpoint security, and penetration testing. OpenAI says it wants advanced cyber capabilities to reach defenders broadly with safeguards, and it describes a model of security work that is continuous rather than episodic. None of that guarantees success. It does, however, show that the serious players in this space are converging on the same operational insight: the advantage will go to whoever compresses the most useful time loops first. (anthropic.com)

That is why the strategic answer is not “patch faster” in the abstract. It is more specific. Compress proof. Prepare mitigation. Patch with urgency on exposed and high-consequence systems. Retest after change. Use AI to identify and verify risk earlier in the software lifecycle and earlier in post-release response. In other words, use AI where time matters, not where presentation looks impressive. (fsisac.com)

What a Credible Operating Model Looks Like Now

A credible model for the next few years starts with assumptions, not tools.

Assume that public source code, public patches, and high-value exposed systems will be analyzed faster than before. Assume that N-days on internet-facing platforms may attract near-immediate attention even before central feeds fully mature. Assume that a single CVE score is less useful than reachability, privilege consequence, and architectural position. Assume that management planes, remote access tools, file transfer systems, and business-critical platforms deserve shorter response loops than ordinary line-of-business software. These are not paranoid assumptions. They are increasingly normal operating assumptions based on current guidance and incident history. (Unit 42)
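Those assumptions can be turned into a first-pass triage rule. The sketch below is illustrative, not a scoring standard: the `AdvisoryContext` fields, the three tier names, and the tiering logic are all assumptions made for this example. The point it demonstrates is that exposure and architectural position decide the tier, while the CVSS score only orders items within a tier.

```python
from dataclasses import dataclass

# Hypothetical tiers, most urgent first.
TIER_ORDER = {"same-day": 0, "expedited": 1, "scheduled": 2}


@dataclass
class AdvisoryContext:
    asset: str
    cvss: float              # base score from the advisory, 0.0 to 10.0
    internet_facing: bool
    pre_auth: bool           # exploitable without credentials
    control_point: bool      # management plane, remote access, file transfer
    known_exploited: bool    # for example, listed in the CISA KEV catalog


def response_tier(ctx):
    """Assign a response tier from exposure and position, not from score alone."""
    urgent_path = ctx.known_exploited or (ctx.pre_auth and ctx.control_point)
    if ctx.internet_facing and urgent_path:
        return "same-day"
    if ctx.internet_facing or ctx.control_point or ctx.known_exploited:
        return "expedited"
    return "scheduled"


def prioritize(contexts):
    """Sort by tier first, then by descending CVSS within a tier."""
    return sorted(contexts, key=lambda c: (TIER_ORDER[response_tier(c)], -c.cvss))


queue = [
    AdvisoryContext("internal-build-lib", 9.8, False, False, False, False),
    AdvisoryContext("vpn-appliance", 8.1, True, False, True, False),
    AdvisoryContext("file-transfer", 7.5, True, True, True, False),
]
for ctx in prioritize(queue):
    print(response_tier(ctx), ctx.asset, ctx.cvss)
```

In this toy queue the 7.5-scored file transfer system outranks the 9.8-scored internal library, which is the behavior the assumptions above argue for.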

From there, build the workflow around the three windows.

First, shrink the proof window with current asset and dependency visibility, advisory-to-asset mapping, patch-diff triage, and focused exploitability testing. Second, shrink the mitigation window with pre-approved playbooks for feature disablement, signature enablement, reachability restrictions, segmentation, and emergency monitoring changes. Third, shrink the patch window for the systems that matter most, especially internet-facing systems and control points. Not every environment can patch instantly, but every environment can prepare to make better time decisions than it did last year. (fsisac.com)

Also, stop measuring maturity only by ticket closure. Ticket closure without exploitability validation is paperwork. A mature program knows not just that a patch was deployed, but that exposure was confirmed beforehand, that compensating controls existed if needed, that high-risk assets were checked for compromise during the response, and that the post-fix state was retested. This is where AI can meaningfully help defenders. It can accelerate code reasoning, binary inspection, exploit hypothesis generation, and retest automation. But it only helps if the surrounding process demands evidence at each step. (OpenAI)

Finally, treat continuous penetration testing and continuous validation as operational necessities for high-value attack paths, not as luxury add-ons. NIST’s classic framing of penetration testing as realistic attack simulation still holds. What changes is the frequency with which realistic validation needs to happen on systems that concentrate privilege, trust, or data. If AI shortens the attacker’s preparation loop, the defender must shorten the validation loop or accept a widening asymmetry. (NIST Publications)

Conclusion

AI did not create insecure software. It changed the tempo at which insecure software can be understood, weaponized, and pressured.

That difference matters because modern defense programs were built around time assumptions. They assumed advisory analysis would take time. They assumed exploit development would take time. They assumed patch release created breathing room. They assumed quarterly or monthly validation was often enough. Those assumptions are all getting weaker. Unit 42’s “N-hours instead of N-days” framing, Anthropic’s disclosure that the cost and expertise required for vulnerability exploitation are falling, Google’s real-world AI-found SQLite case, DARPA’s AIxCC push, and the practical guidance from FS-ISAC all point to the same conclusion. The patch window is no longer a comfortable operational buffer. It is a contested interval. (Unit 42)

The strategic mistake now would be to keep talking about patching as if the only question is how quickly a package can be deployed. The first question is how quickly you can know whether you are actually exposed. The second is how quickly you can cut exploitability if patching cannot happen immediately. Only then does the deployment clock become useful. In the AI era, the first defender advantage to recover is not a prettier backlog. It is shorter time to truth. (fsisac.com)

Further Reading and Related Resources

Palo Alto Unit 42 on frontier AI models, autonomous vulnerability discovery, and the move toward N-hours instead of N-days. (Unit 42)

Anthropic Project Glasswing and the program’s focus on vulnerability detection, binary testing, endpoint security, and penetration testing for defenders. (anthropic.com)

Anthropic’s Claude Mythos disclosure for the strongest public description of model capability, binary reverse engineering, and the disclosure limits created by unpatched findings. (red.anthropic.com)

Google Project Zero and Big Sleep on the SQLite case and the idea of regaining asymmetric advantage for defenders. (googleprojectzero.blogspot.com)

DARPA AI Cyber Challenge on AI systems finding and patching real-world vulnerabilities in critical codebases. (darpa.mil)

FS-ISAC on enterprise response, compressed patch timelines, and same-day decisioning for exposed systems. (fsisac.com)

CISA Known Exploited Vulnerabilities Catalog as a primary feed for confirmed real-world exploitation. (CISA)

OpenAI on Codex Security, GPT-5.4-Cyber, continuous validation, and binary reverse engineering for defenders. (OpenAI)

Penligent on why Project Glasswing strengthens the case for continuous penetration testing. (Penligent)

Penligent on evidence-backed retesting and continuous pentesting discipline. (Penligent)

Penligent on what actually matters in AI-assisted penetration testing workflows. (Penligent)