The phrase “AWS security agent” used to be easy to interpret. Most engineers meant a workload-side component that lived on a host, helped AWS manage it, or fed telemetry back into a cloud security service. In 2026, that phrase has become overloaded. It still points to classic components such as SSM Agent, Inspector’s agent-based path, and the GuardDuty runtime security agent. But it now also points to AWS Security Agent, a distinct AWS service that applies AI-driven design review, code review, and context-aware penetration testing across the software lifecycle. If you do not separate those two meanings, you end up comparing tools that answer completely different questions. (docs.aws.amazon.com)

That distinction matters because modern AWS security programs are no longer choosing between “agent” and “no agent.” They are choosing how to combine workload management, runtime behavior visibility, software inventory coverage, exploit validation, and now AI-agent governance. A runtime security sensor can tell you which process executed, which file was touched, and whether a suspicious network connection occurred. A software inventory scanner can tell you which package versions are present and whether a known vulnerability might apply. A context-aware pentest system can go after authenticated flows, business logic, and multi-step attack paths that posture tools do not prove exploitable. An AI-agent governance layer decides what a model-driven system is allowed to do after it authenticates successfully. Those are different jobs. Mature teams keep them different on purpose. (docs.aws.amazon.com)

AWS’s own public material reflects that shift. The December 2, 2025 preview announcement presented AWS Security Agent as an AI-powered service for proactive application security across design, code, and deployment validation. On March 31, 2026, AWS separately announced that on-demand penetration testing in AWS Security Agent was generally available in six regions. At the same time, the public product page still carries a preview label, which is a useful reminder to read AWS Security Agent by capability, not by a single marketing state. If you treat every page as describing the exact same rollout stage, you will misread what is broadly available today. (Amazon Web Services, Inc.)

The right way to think about the keyword is simple. There is an older AWS security agent world that is about workloads. There is a newer AWS Security Agent world that is about applications. And there is an emerging AWS AI security world that is about agentic action control after authentication. The interesting technical work begins where those three worlds overlap. (docs.aws.amazon.com)

Why AWS Security Agent Means Two Different Things

If someone says they need an AWS security agent for EC2, the question is usually operational. They want a management channel, a runtime sensor, or a vulnerability scanner. SSM Agent is the clearest example. AWS describes it as software that runs on EC2 instances, edge devices, on-premises servers, and virtual machines so Systems Manager can update, manage, and configure those resources. That is not pentesting. That is control-plane reach into compute. Inspector can build on that SSM-managed state to do agent-based vulnerability scanning. GuardDuty Runtime Monitoring uses its own security agent to watch operating system, process, file, and network events. In that older meaning, “security agent” is close to “privileged operational component attached to a workload.” (docs.aws.amazon.com)

If someone says they need AWS Security Agent for their customer portal, the question is now application-centric. AWS’s preview announcement says the service can evaluate architectural documents and code against organization-defined security requirements and perform context-aware penetration testing during deployment validation. The current features page says on-demand penetration testing uses specialized AI agents to test web applications and APIs against OWASP Top 10 issues and business logic flaws, then produce validated findings, exploit paths, and remediation guidance. In that newer meaning, AWS Security Agent is not a host sensor. It is a development-lifecycle security service that uses AI agents to reason over design, code, and application behavior. (Amazon Web Services, Inc.)

The confusion gets worse because the new service can also point at applications outside AWS. AWS’s FAQ says you still need an AWS account, but the service can target applications running in AWS, on premises, in hybrid environments, and in other cloud providers. That means the name “AWS Security Agent” no longer implies an AWS-only target or a workload-resident sensor. It means an AWS-managed security service that can assess applications wherever they run, provided you configure scope, ownership validation, credentials, and access boundaries correctly. (Amazon Web Services, Inc.)

Another useful distinction is where the system is supposed to live. The resilience documentation says AWS Security Agent is available in six regions and explicitly notes that it is a tool used during application development and should not be deployed as critical or customer-facing infrastructure. That is a very different operational posture from SSM Agent or a runtime security sensor, both of which are part of the workload management and telemetry fabric. One belongs near development and validation. The other belongs near the workloads themselves. (docs.aws.amazon.com)

The simplest practical test is this. Ask what question you are trying to answer. If the question is, “Can AWS manage this instance, patch it, or use it as a scan target?” you are in the workload-agent world. If the question is, “What process ran on this node, what file changed, and which connection was made?” you are in the runtime telemetry world. If the question is, “Which packages are present, and which known CVEs might apply?” you are in the inventory and vulnerability exposure world. If the question is, “Can an authenticated user path, a business logic flaw, or a chained attack actually be exploited?” you are in the AWS Security Agent or AI pentest world. If the question is, “What can this AI system do after it gets a token and tool access?” you are in the AI-agent governance world. The keyword only makes sense once you decide which of those problems you are actually solving. (docs.aws.amazon.com)
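
The question-first test above can be written down as a small lookup. This is only a sketch for making the routing explicit in a runbook or intake form: the enum, the question labels, and `category_for` are hypothetical names invented here, not part of any AWS API.

```python
from enum import Enum


class ControlCategory(Enum):
    """The five problem spaces the paragraph above distinguishes."""
    WORKLOAD_AGENT = "workload agent (SSM Agent and similar)"
    RUNTIME_TELEMETRY = "runtime telemetry (GuardDuty Runtime Monitoring)"
    INVENTORY_EXPOSURE = "inventory and vulnerability exposure (Amazon Inspector)"
    APPLICATION_PENTEST = "application pentest (AWS Security Agent or another AI pentest)"
    AI_AGENT_GOVERNANCE = "AI-agent governance (identity, IAM, guardrails)"


# Hypothetical labels for the question a team is actually asking.
QUESTION_TO_CATEGORY = {
    "can_aws_manage_or_patch_this_node": ControlCategory.WORKLOAD_AGENT,
    "what_ran_at_runtime": ControlCategory.RUNTIME_TELEMETRY,
    "which_packages_and_cves_are_present": ControlCategory.INVENTORY_EXPOSURE,
    "can_this_flow_actually_be_exploited": ControlCategory.APPLICATION_PENTEST,
    "what_can_the_ai_do_after_auth": ControlCategory.AI_AGENT_GOVERNANCE,
}


def category_for(question: str) -> ControlCategory:
    """Return the control category that answers the given question label."""
    try:
        return QUESTION_TO_CATEGORY[question]
    except KeyError:
        # Refuse to guess: an unmapped question should trigger a conversation,
        # not a default tool choice.
        raise ValueError(f"Unrecognized question: {question!r}") from None
```

The design choice worth keeping is the failure mode: an unrecognized question raises instead of falling back to a default category, which mirrors the argument that the keyword only makes sense once the problem is named.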

| Meaning of the term | Where it operates | Primary job | Best answer it gives you | What it does not prove |
|---|---|---|---|---|
| SSM Agent and related workload-side agents | On instances, servers, VMs, edge devices | Management and control-plane execution | Can AWS manage this node and use it as an AWS-managed resource | Whether the app is actually exploitable |
| Inspector agent-based and agentless coverage | On managed instances or from EBS snapshot workflows | Software inventory and CVE exposure discovery | Which packages and libraries may be vulnerable | Whether a finding is exploitable in context |
| GuardDuty Runtime Monitoring security agent | On monitored workloads | Runtime visibility into file, process, command line, and network behavior | What happened at runtime | Whether an application design flaw or business logic issue can be exploited end to end |
| GuardDuty Malware Protection for EC2 | Agentless EBS scan path triggered by findings | Malware scanning on attached volumes | Whether potentially impacted volumes contain malware artifacts | Whether the broader application path is safe |
| AWS Security Agent service | AWS-managed service against application targets | Design review, code review, and application pentesting | Whether a web app or API can be exploited through validated attack paths | Full replacement for human pentesters or runtime detection coverage |

The comparison above synthesizes AWS Systems Manager, Amazon Inspector, Amazon GuardDuty, and AWS Security Agent documentation and announcements. (docs.aws.amazon.com)

The AWS Workload Agents Most Teams Already Run

Start with SSM Agent because it anchors much of the rest of AWS’s workload-side security model. AWS says SSM Agent runs on EC2 instances, edge devices, on-premises servers, and VMs, processes instructions from Systems Manager, and sends execution status back to AWS. That sounds routine, but it is a huge architectural fact. Once a node becomes SSM-managed, it can participate in a large set of AWS security and operations workflows without inbound administrative exposure. In many environments, that makes SSM Agent the quiet prerequisite behind scanning, remote command execution, automation, and patching. When people say “AWS agent,” this is often what they mean, even when they do not say it explicitly. (docs.aws.amazon.com)

Amazon Inspector sits one layer above that. For agent-based EC2 scanning, Inspector requires the instance to be SSM managed. AWS’s documentation states that the instance must have SSM Agent installed and running, and Systems Manager must have permission to manage it. That is why Inspector agent-based coverage often appears to teams as “just turn on scanning,” while the operational reality is that a management channel and IAM permission model are already doing the heavy lifting. This is useful because it reduces extra agent sprawl, but it also means your scanning coverage inherits the quality of your SSM hygiene. (docs.aws.amazon.com)

Inspector’s newer agentless behavior matters just as much. In hybrid scanning mode, Inspector uses EBS snapshots for eligible unmanaged instances and collects software inventory without needing the SSM-managed path on those nodes. AWS says agentless scans apply to eligible instances in hybrid mode, use EBS snapshots, and run every 24 hours for qualifying unmanaged instances, while newly eligible instances can be detected every hour. AWS also notes a subtle but important coverage difference: for Linux package vulnerability discovery, agentless scanning can inspect all available paths, whereas agent-based deep inspection is tied to default paths plus any additional ones you configured. The result is that the same instance can legitimately produce different findings depending on which method is active. That is not a bug in the scanner. It is a consequence of two different data-collection models. (docs.aws.amazon.com)
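
To see why the two collection models can disagree on the same instance, here is a toy model of path coverage. It is a sketch under stated assumptions, not Inspector's implementation: `ASSUMED_DEFAULT_PATHS`, `InstalledPackage`, and `visible_packages` are hypothetical names, and the default paths are illustrative placeholders rather than the authoritative list.

```python
from dataclasses import dataclass

# Illustrative assumption: paths an agent-based deep inspection covers by default.
ASSUMED_DEFAULT_PATHS = ("/usr/lib", "/usr/local/lib")


@dataclass(frozen=True)
class InstalledPackage:
    name: str
    install_path: str


def _is_under(path: str, root: str) -> bool:
    """True if path equals root or sits below it (segment-aware prefix match)."""
    root = root.rstrip("/")
    return path == root or path.startswith(root + "/")


def visible_packages(packages, mode, extra_paths=()):
    """Return the package names each collection model would see in this toy model."""
    if mode == "agentless":
        # Snapshot-based collection can look at every available path.
        return {p.name for p in packages}
    if mode == "agent-based":
        # Agent-based deep inspection sees default paths plus configured extras.
        roots = ASSUMED_DEFAULT_PATHS + tuple(extra_paths)
        return {p.name for p in packages
                if any(_is_under(p.install_path, r) for r in roots)}
    raise ValueError(f"unknown mode: {mode!r}")


fleet_sample = [
    InstalledPackage("libfoo", "/usr/lib/python3/site-packages/libfoo"),
    InstalledPackage("vendored-bar", "/opt/app/vendor/bar"),
]
```

In this sample, the vendored package under `/opt/app` only appears in the agent-based result once `/opt/app` is passed in `extra_paths`, which is the same gap the paragraph describes.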

That distinction changes how you should interpret vulnerability exposure. Agent-based coverage is often better integrated into instance lifecycle management and can feel more continuous when your SSM discipline is strong. Agentless coverage is valuable for unmanaged or stale instances and for accounts where installing or fixing agents lags reality. But neither mode tells you whether the vulnerable component is reachable through the real application path, whether compensating controls block exploitation, or whether a business logic path makes the issue irrelevant or worse. Inspector is answering a package and configuration question. It is not performing exploit validation. (docs.aws.amazon.com)

GuardDuty Runtime Monitoring answers a different class of questions. AWS says Runtime Monitoring analyzes operating-system, networking, and file events, and that its security agent adds visibility into file access, process execution, command-line arguments, and network connections. That is valuable precisely because package-level exposure alone does not tell you what the workload is doing in real time. Runtime Monitoring gives defenders a behavioral lens. It can help separate “vulnerable package is present” from “the workload is now behaving like something dangerous is happening.” For response teams, those are different priorities. One is exposure management. The other is threat detection. (docs.aws.amazon.com)

GuardDuty Malware Protection for EC2 answers yet another question. AWS documents it as an agentless malware scan against EBS volumes attached to potentially impacted resources, typically triggered when GuardDuty raises findings associated with possible malware. The point is not broad continuous exploit validation. The point is to inspect storage artifacts with minimal performance impact on the instance and without asking teams to install another resident component. This is a strong example of why “agentless” does not mean “replaces everything else.” It solves a specific post-signal inspection problem well, but it does not replace runtime telemetry, vulnerability inventory, or application pentesting. (docs.aws.amazon.com)

For cloud defenders, the dangerous mistake is to flatten all of these controls into a single mental model. Teams often say they want an AWS security agent when what they really want is better evidence. Better evidence of what is installed. Better evidence of what is running. Better evidence of what is malicious. Better evidence of what is exploitable. Those are not synonyms. When you map them to the right AWS service category, architecture decisions become much cleaner. (docs.aws.amazon.com)

A useful side note here is metadata hardening. Security Hub’s EC2 control states that an instance passes the IMDSv2 check only if HttpTokens is set to required, and AWS explicitly ties IMDSv2 to protection against SSRF, open reverse proxies, open firewalls, and related paths to metadata access. This matters in a discussion about agents because workload-side agents, SDKs, and applications often rely on instance metadata for temporary credentials. If you treat metadata hardening as separate from your “agent strategy,” you are leaving one of the most important trust boundaries unguarded. (docs.aws.amazon.com)
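
The pass condition described above is simple enough to express as a predicate. The sketch below assumes instance records shaped like the `MetadataOptions` block returned by the EC2 DescribeInstances API; the function names and the sample records are hypothetical, and the code makes no AWS calls.

```python
def passes_imdsv2_check(metadata_options: dict) -> bool:
    """Pass only when HttpTokens is 'required', as the control is described above."""
    return metadata_options.get("HttpTokens") == "required"


def failing_instance_ids(instances: list) -> list:
    """Return the IDs of instances whose metadata options do not enforce IMDSv2."""
    return [
        inst["InstanceId"]
        for inst in instances
        # A missing MetadataOptions block is treated as a failure, not a pass.
        if not passes_imdsv2_check(inst.get("MetadataOptions", {}))
    ]


# Hypothetical sample records for illustration only.
sample_instances = [
    {"InstanceId": "i-0aaa", "MetadataOptions": {"HttpTokens": "required"}},
    {"InstanceId": "i-0bbb", "MetadataOptions": {"HttpTokens": "optional"}},
    {"InstanceId": "i-0ccc"},
]
```

Treating missing data as a failure is a deliberate choice here: for a credential-delivery path, an unknown state is safer to flag than to assume compliant.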

| AWS-native capability | Collection model | What it is best at | Why teams deploy it | What still needs another control |
|---|---|---|---|---|
| SSM Agent | Resident workload agent | Managed-node operations and control-plane execution | Patch, configure, automate, and prepare nodes for other AWS workflows | Real exploit validation and runtime threat intent |
| Inspector agent-based | SSM-managed workload path | CVE exposure and inventory on managed instances | Good coverage when SSM is already part of the fleet standard | Unmanaged instances and exploit context |
| Inspector agentless | EBS snapshot-based | Vulnerability inventory on eligible unmanaged or stale instances | Catch coverage gaps where SSM is missing or stale | Runtime behavior and full exploitability |
| GuardDuty Runtime Monitoring | Resident security agent | Process, file, command line, and network visibility | Threat detection from runtime behavior | Proof that an app flaw is exploitable end to end |
| GuardDuty Malware Protection | Agentless EBS inspection | Malware inspection after GuardDuty signal | Fast artifact inspection with low operational footprint | Application logic validation and code-level remediation |
| IMDSv2 enforcement and Security Hub controls | Metadata hardening and posture check | Protecting credential retrieval path | Reduce SSRF and metadata abuse risk | Post-auth business logic abuse and AI action control |

The table summarizes official AWS documentation on Systems Manager, Amazon Inspector, GuardDuty, and Security Hub controls. (docs.aws.amazon.com)

What the New AWS Security Agent Actually Does

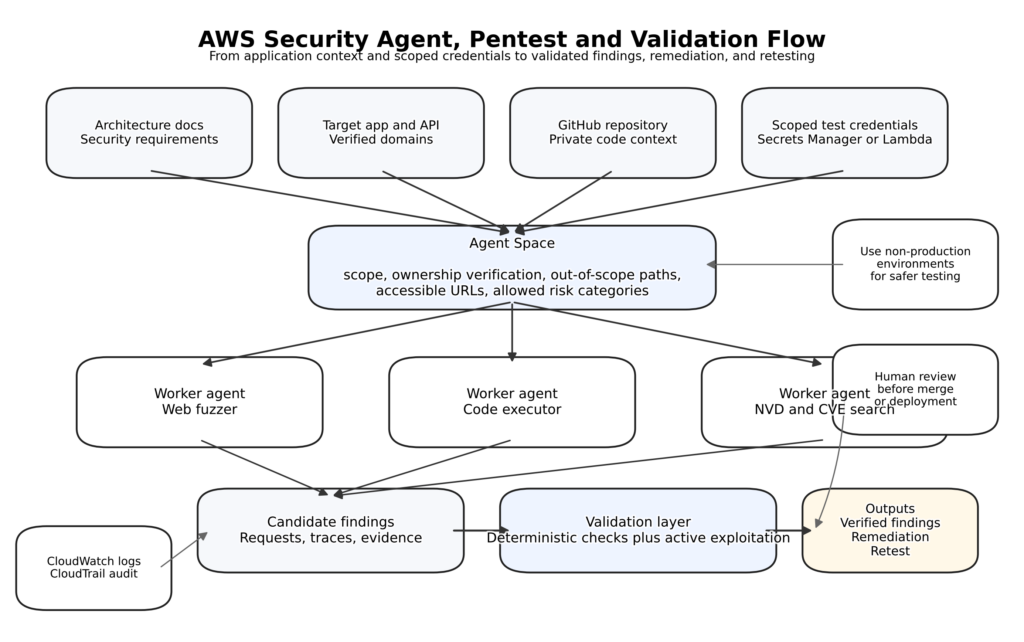

The newer AWS Security Agent service is easiest to understand if you stop thinking like an endpoint security buyer and start thinking like an application security lead. The December 2025 announcement introduced it as an AI-powered service that can evaluate architecture documents and code against organizational requirements, then perform context-aware penetration testing for deployment validation. The March 31, 2026 announcement says on-demand penetration testing is now GA in six regions: N. Virginia, Oregon, Ireland, Frankfurt, Sydney, and Tokyo. The product page still presents AWS Security Agent under a preview banner, so the most accurate reading is that AWS Security Agent is a broader service family, while the pentesting capability inside it has reached GA. That is not uncommon during staged product evolution, but it is important to read precisely. (Amazon Web Services, Inc.)

AWS’s own “how it works” page says AWS Security Agent has three main components: the AWS Management Console, the Security Agent Web Application, and Agent Spaces. Agent Spaces are not a cosmetic concept. They are how teams scope work to an application or project, define security requirements, connect code repositories, configure test boundaries, and manage who sees what. That is a clue to AWS’s intended operating model. This is not “install agent and forget it.” This is “create a scoped security workspace around an application lifecycle.” (docs.aws.amazon.com)

The setup flow reinforces that reading. AWS documents two access patterns: IAM Identity Center SSO and IAM-only access through the AWS Console. If you use SSO, AWS’s setup guide says IAM Identity Center must be enabled in us-east-1, even if the Agent Space is being created elsewhere. That detail matters operationally because many teams assume region locality for all security-control plumbing. In practice, identity and application access patterns can have their own regional prerequisites. Security engineers planning rollout should verify access architecture first, not after they have connected repositories and invited teams. (docs.aws.amazon.com)

The pentest path itself is more constrained than many people expect, and that is good. AWS requires target ownership validation, lets users configure out-of-scope paths, and allows exclusion of specific risk categories if needed. The create-pentest workflow also emphasizes verifying that target domains are correctly verified and accessible, IAM roles are properly permissioned, out-of-scope paths prevent destructive operations, and the operator has authorization to test all target domains. Those are not soft suggestions. They are part of what makes AI-assisted pentesting viable without turning into uncontrolled traffic generation. (docs.aws.amazon.com)
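
Before handing a scope to any automated tester, a team can sanity-check its own configuration with a pre-flight rule like the one below. This is a sketch of the scoping logic, not how AWS Security Agent evaluates scope internally: `is_in_scope`, the `example.com` hosts, and the path prefixes are all hypothetical.

```python
from urllib.parse import urlsplit


def is_in_scope(url: str, verified_domains, out_of_scope_paths) -> bool:
    """Allow a URL only if its host is verified and its path is not excluded."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host not in {d.lower() for d in verified_domains}:
        return False  # never touch hosts whose ownership was not validated
    path = parts.path or "/"
    for prefix in out_of_scope_paths:
        base = prefix.rstrip("/")
        # Segment-aware match: "/admin/delete" excludes "/admin/delete/7"
        # but not "/admin/deleted-items". Paths are not normalized here.
        if path == base or path.startswith(base + "/"):
            return False
    return True


verified = {"staging.example.com"}        # hypothetical verified target
excluded = ["/admin/delete", "/billing"]  # hypothetical destructive paths
```

The order of checks matters: host ownership is tested first, so an excluded-path list can never accidentally widen the scope to an unverified domain.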

Credentials deserve special attention. AWS says penetration testing is the only AWS Security Agent capability that authenticates to a user system at runtime, and that credentials can be provided directly or through AWS Secrets Manager or Lambda-based credential vendors. The documentation recommends creating new credentials with appropriately scoped permissions for pentesting. That single recommendation tells you a lot about how AWS expects mature teams to use the service: not with admin accounts, not with production superuser tokens, and not with “whatever works” copied from a password vault. A context-aware pentest engine with too much authority stops being a validator and starts becoming an unnecessary blast-radius multiplier. (docs.aws.amazon.com)

The same principle applies to network dependencies. AWS Security Agent supports accessible URLs for dependencies such as external authentication providers or CDNs. AWS’s best-practices page makes an important point that many teams will otherwise miss: AWS Security Agent is not instructed to test accessible URLs, but by specifying them you are declaring them trusted dependencies, and test data including credentials can be transmitted to those endpoints. In other words, accessible URLs are not just convenience settings. They are trust-boundary declarations. If your app depends on a third-party IdP or external API, those integrations must be part of your scoping conversation. (docs.aws.amazon.com)

GitHub integration is one of the clearest signs that AWS sees this as a development-lifecycle product, not a point-in-time scanner. AWS says the service supports cloud-hosted GitHub and cloud-hosted GitHub Enterprise, and the integration serves multiple purposes: code review, pentest context, and automated remediation. The remediation page adds a useful nuance: for private repositories, AWS Security Agent can open a pull request with the proposed fix; for public repositories, it provides a downloadable diff file. AWS’s best-practices page further says code security guidance is restricted to private repositories, so security findings do not get publicly exposed before remediation. That is a meaningful operational boundary, not a minor footnote. (docs.aws.amazon.com)

Data handling and logging are also more explicit than many AI security products manage to be. AWS’s FAQ says customer data is encrypted at rest with KMS, logs are stored in CloudWatch in the customer account, and customer data is not used for model training. The preview announcement also said queries and data are never used to train models and that API activity is logged to CloudTrail. The CloudTrail documentation confirms that AWS Security Agent API calls are captured as events, including console-originated and API-originated actions. That means the service is not just producing findings; it can also be incorporated into audit trails around who initiated a test, what was configured, and when. (Amazon Web Services, Inc.)

There is, however, an important documentation nuance around region and data processing. The preview announcement and security-best-practices page both describe launch-time behavior in which AWS Security Agent used Amazon Bedrock geographic cross-region inference while keeping data processing within the United States. The current resilience page, on the other hand, lists six supported AWS regions for the service. The safest interpretation is that rollout state and data-path behavior may differ by capability and by the stage of documentation you are reading. Teams with strict data residency requirements should validate the current state of the exact capability they plan to use rather than assuming that every page reflects the same moment in time. (Amazon Web Services, Inc.)

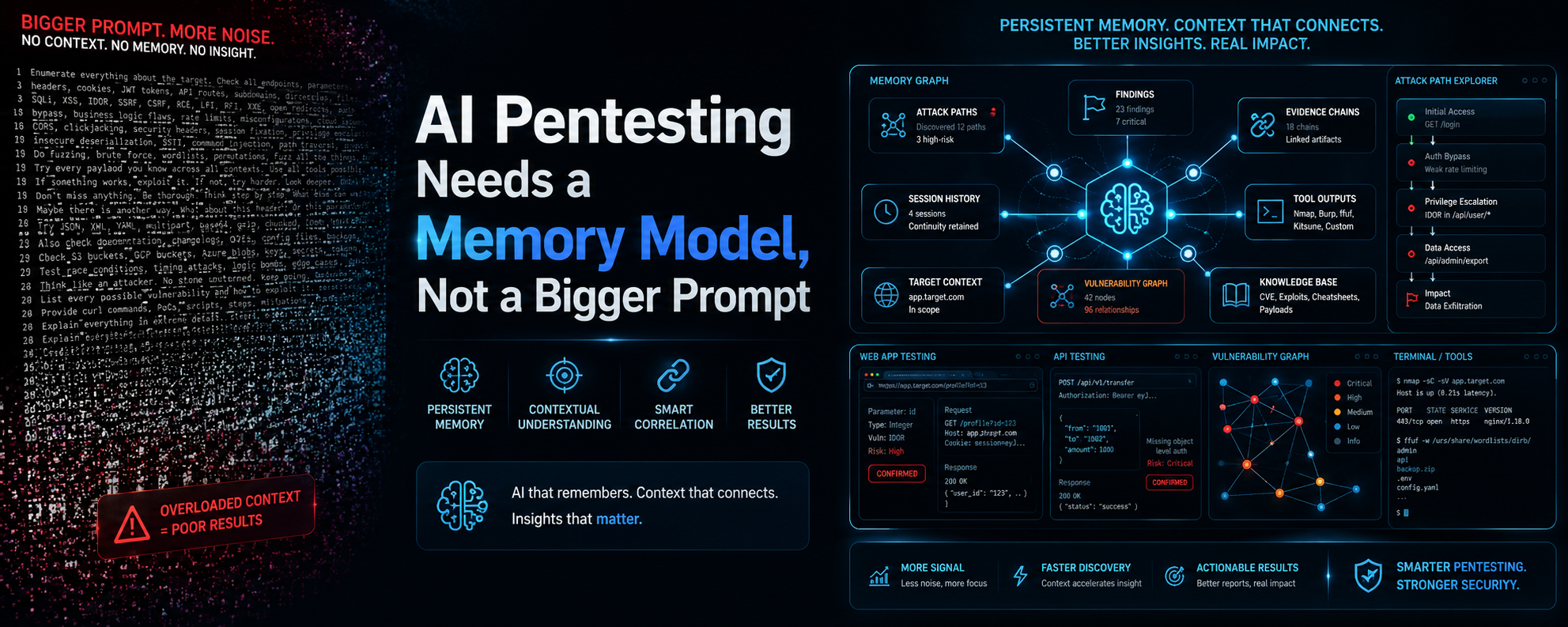

The most technically revealing material is in AWS’s security blog on the service internals. AWS describes a multi-agent architecture where specialized worker agents are equipped with code executors, web fuzzers, NVD-backed CVE search, and vulnerability-specific tools. Candidate findings are not treated as trustworthy just because an LLM proposed them. They go through structured validation using both deterministic validators and LLM-based agents that attempt active exploitation. AWS also openly discusses one of the real hard problems here: balancing exploration and exploitation under a fixed compute budget, and dealing with non-determinism across multiple runs. That is exactly the kind of detail serious security engineers want to see, because it shows the product is not pretending that AI pentesting is magically deterministic or universally complete. (Amazon Web Services, Inc.)

AWS’s features page and GA blog are also very explicit about what the pentesting engine is trying to find. The service is positioned to validate security vulnerabilities through exploitation, produce reproducible exploit paths, and identify complex issues through multi-step attack scenarios, including OWASP Top 10 issues and business logic flaws. That is a critical boundary line. This is no longer just “scan for reflected XSS and open redirects.” The interesting claim is that AWS Security Agent uses application context from documentation, credentials, and code to build deeper attack paths than a classic stateless scanner would. Even if you remain conservative about how complete that coverage is, the operating model is clearly different from commodity DAST. (Amazon Web Services, Inc.)

At the same time, AWS is careful not to oversell the service as a full replacement for professional human testing. The security guidance states that AWS Security Agent is not a professional penetration testing service and should be integrated into a broader security review workflow. The best-practices page adds that findings vary across runs, AI-generated fixes must be reviewed, and penetration tests should be performed in non-production environments because the service uses a suite of tools from Kali Linux that may modify state, data, or configuration. Those caveats are not weaknesses. They are the signs of a realistic product description. The teams that benefit most from AWS Security Agent will be the teams that treat it as a high-frequency validation layer between coding and formal external review, not as the only offensive testing they ever need. (docs.aws.amazon.com)

Why AI Security Tools and AI Pentest Tools Need to Work Together on AWS

The most useful way to frame AI security on AWS is to split it into two categories. The first category is AI security tooling. That includes guardrails, identity controls, authorization boundaries, auditability, prompt-attack filtering, and runtime control of what an AI system is allowed to do. The second category is AI pentest tooling. That includes systems that exercise authenticated flows, attempt exploit chains, validate whether a finding is real, and retest after fixes. They overlap, but they do not substitute for each other. You do not defend an AI-enabled application by pentesting alone, and you do not validate its real attack surface by policy controls alone. (docs.aws.amazon.com)

NIST’s January 2026 call for input on AI agent security gives a strong official basis for that distinction. NIST says AI agent systems can plan and take autonomous actions that affect real-world systems and face distinct risks when AI model outputs are combined with software functionality. The examples NIST gives are exactly the ones cloud defenders should care about: indirect prompt injection, insecure models, and cases where the model takes security-harming actions even without adversarial input. That is already beyond what classic web application controls were designed to manage. It is not just a content-safety problem. It is an action and authority problem. (NIST)

AWS’s Bedrock documentation makes a parallel point in more concrete language. AWS says prompt injection is an application-level security concern, comparable in principle to SQL injection: AWS secures the underlying infrastructure, but customers are responsible for preventing prompt injection in their code. The same document recommends security testing, least privilege, and guardrail use. That is a powerful framing because it rejects a common false hope in AI adoption: the idea that using a managed model endpoint automatically collapses application security responsibilities. It does not. Managed AI infrastructure reduces one class of risk. It does not remove application-layer responsibility for hostile input, unsafe tool use, or authorization mistakes. (docs.aws.amazon.com)

Bedrock Guardrails are important here, but they need to be placed accurately. AWS documents Guardrails as a configurable safeguard layer that can filter harmful content, prompt attacks, denied topics, sensitive information, and hallucination-related grounding problems. The prompt-attack documentation says the filter supports jailbreaks, prompt injection, and prompt leakage. That makes Guardrails a meaningful preventive layer for AI systems on AWS, especially when you need a standardized control plane across multiple models. But Guardrails are not pentesting. They do not prove whether an authenticated application flow can be abused, whether a plugin boundary is unsafe, or whether a tool chain can be driven into privilege misuse through multi-step logic. (docs.aws.amazon.com)

Automated Reasoning is another good example of why naming matters. AWS says Automated Reasoning checks help validate whether a response is consistent with logical rules and policy constraints. That is useful in regulated or high-assurance settings. But AWS is equally explicit about what it does not do: no prompt injection protection, no off-topic detection, and detect-mode feedback rather than direct blocking. AWS recommends using content filters together with Automated Reasoning checks for stronger protection. In practice, that means Automated Reasoning is a policy-verification layer, not a general AI exploit defense layer. If you misread it as a substitute for adversarial testing, you will leave meaningful gaps. (docs.aws.amazon.com)

Identity and delegated access become central as soon as an AI system acts on behalf of a user. AWS’s Security Blog on AgentCore Identity describes it as identity and credential management specifically designed for AI agents and automated workloads, with compatibility for existing identity providers and centralized control over what agents can access. That is the right category of tool when your core question is “how should this agent obtain, hold, and use authority.” It is not the right category of tool when your core question is “can the app behind this identity flow still be exploited through stateful, authenticated, context-sensitive attack paths.” Again, both are needed. Neither answers the other’s question. (Amazon Web Services, Inc.)

AWS’s broader guidance on agentic security sharpens the point further. The Security Blog says deterministic external controls are the starting point for agentic security and that organizations should enforce security through infrastructure-level controls outside the agent’s reasoning loop, not through the model’s own instructions or internal guardrails alone. That is a strong architectural principle. It means your agent can reason, but it should not be trusted to define or enforce its own security boundaries. If you build AI-enabled systems on AWS, that principle is the bridge between identity, IAM, network, tool authorization, and runtime review. (Amazon Web Services, Inc.)

AWS’s work on managed MCP servers gives that principle concrete teeth. The AWS Security Blog explains two new IAM context keys: aws:ViaAWSMCPService, which is true when the request comes through an AWS-managed MCP server, and aws:CalledViaAWSMCP, which identifies the MCP service principal involved. That matters because it lets you differentiate direct human or service calls from AI-mediated calls in IAM. For the first time, the AI path itself becomes a first-class authorization condition in the policy engine, rather than a fact you discover only after reading logs. For security teams evaluating AI security tools on AWS, this is one of the biggest practical changes in 2026. (Amazon Web Services, Inc.)

Here is a minimal example pattern for that style of policy control. It is not a copy-and-paste template for every environment. It is a readable policy pattern that demonstrates the idea: allow reads from a build-artifacts bucket, but deny destructive S3 actions whenever the request arrives through an AWS-managed MCP path.

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Sid": "AllowReadForBuildArtifacts",
          "Effect": "Allow",
          "Action": ["s3:GetObject", "s3:ListBucket"],
          "Resource": [
            "arn:aws:s3:::app-build-artifacts",
            "arn:aws:s3:::app-build-artifacts/*"
          ]
        },
        {
          "Sid": "DenyDestructiveS3ActionsViaMCP",
          "Effect": "Deny",
          "Action": ["s3:DeleteObject", "s3:DeleteBucket"],
          "Resource": "*",
          "Condition": {
            "Bool": {
              "aws:ViaAWSMCPService": "true"
            }
          }
        }
      ]
    }

That pattern is directly aligned with AWS’s documented IAM context keys for AWS-managed MCP servers. The point is not S3 specifically. The point is that AI-mediated execution paths can be given narrower, more inspectable authority than the same principal might have in a direct developer workflow. (Amazon Web Services, Inc.)
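
To make the effect of the policy pattern above concrete, the following sketch walks a few requests through a deliberately simplified evaluator. It is not the IAM policy engine: `evaluate` and its helpers are hypothetical, action matching is exact-string only, and the only condition operator handled is `Bool`. The one rule it does borrow from IAM is that an explicit deny overrides any allow.

```python
from fnmatch import fnmatchcase

POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "AllowReadForBuildArtifacts", "Effect": "Allow",
         "Action": ["s3:GetObject", "s3:ListBucket"],
         "Resource": ["arn:aws:s3:::app-build-artifacts",
                      "arn:aws:s3:::app-build-artifacts/*"]},
        {"Sid": "DenyDestructiveS3ActionsViaMCP", "Effect": "Deny",
         "Action": ["s3:DeleteObject", "s3:DeleteBucket"],
         "Resource": "*",
         "Condition": {"Bool": {"aws:ViaAWSMCPService": "true"}}},
    ],
}


def _as_list(value):
    return value if isinstance(value, list) else [value]


def _matches(stmt, action, resource, context):
    """Simplified match: exact action, '*' wildcards in resources, Bool conditions."""
    if action not in _as_list(stmt["Action"]):
        return False
    if not any(fnmatchcase(resource, pat) for pat in _as_list(stmt["Resource"])):
        return False
    for key, expected in stmt.get("Condition", {}).get("Bool", {}).items():
        # A condition key absent from the request context does not match.
        if key not in context or str(context[key]).lower() != expected.lower():
            return False
    return True


def evaluate(policy, action, resource, context):
    """Explicit deny wins, then allow, otherwise implicit deny."""
    matched = [s for s in policy["Statement"] if _matches(s, action, resource, context)]
    if any(s["Effect"] == "Deny" for s in matched):
        return "Deny"
    if any(s["Effect"] == "Allow" for s in matched):
        return "Allow"
    return "ImplicitDeny"


OBJ = "arn:aws:s3:::app-build-artifacts/build.zip"  # hypothetical object
via_mcp = {"aws:ViaAWSMCPService": "true"}
direct = {"aws:ViaAWSMCPService": "false"}
```

With these inputs, a read through the MCP path is allowed, a delete through the MCP path hits the explicit deny, and a direct delete falls through to an implicit deny in this policy alone, meaning any other policy attached to the principal would decide it.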

Why does this matter for pentesting? Because once your application contains an AI layer, the attack surface is no longer just the HTTP endpoints or the package list. It includes prompt injection, tool descriptions, delegated authorization, external content ingestion, and post-auth behavior that can remain within valid API syntax while still violating business intent. NIST’s large-scale red-teaming write-up underlines the point: across more than 250,000 attacks from over 400 participants, at least one successful attack was found against every target frontier model in the competition. That does not mean every AI application is doomed. It means “we have a guardrail” is not the same statement as “we have validated this system under adversarial conditions.” (NIST)

This is exactly where AI security tools and AI pentest tools meet. AI security tools put boundaries around authority, content, and action. AI pentest tools try to discover where those boundaries fail in the real application. On AWS, the combination matters more than the label. Guardrails can reduce prompt-based abuse. Identity and IAM can constrain delegated actions. Runtime visibility can tell you whether something suspicious actually happened. But only adversarial validation will show whether your real customer workflow, external dependency chain, and post-auth state machine still allow the wrong thing to happen. (docs.aws.amazon.com)

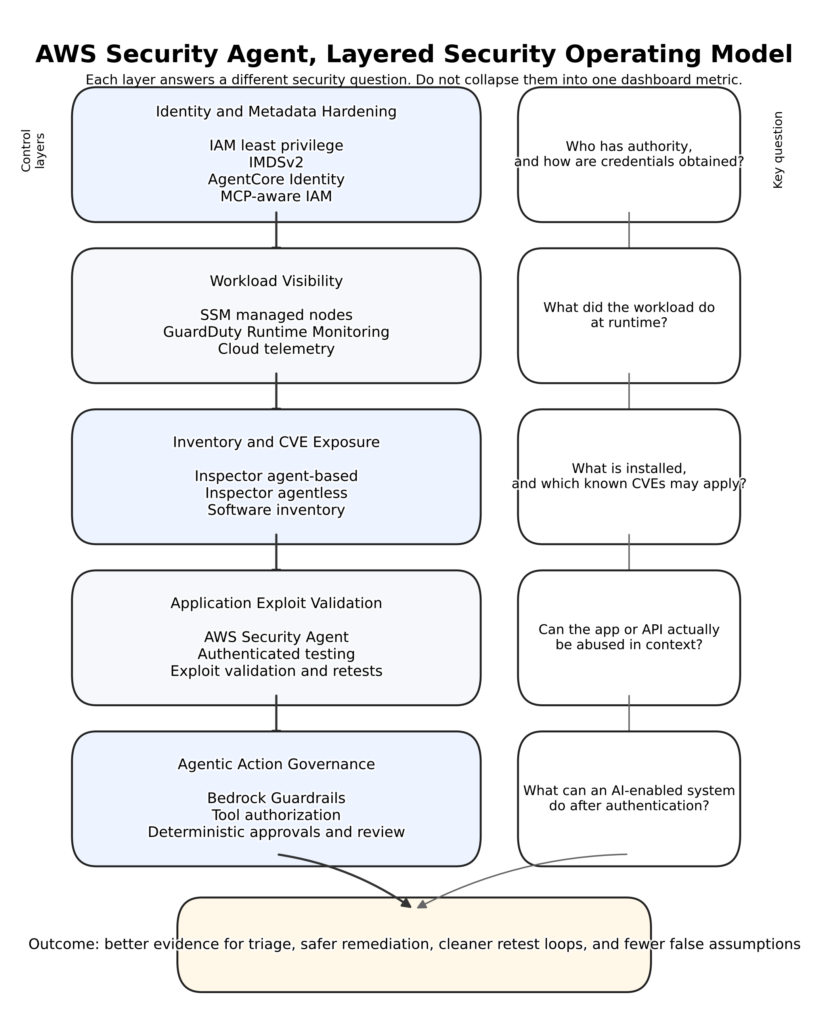

A Layered AWS Security Agent Operating Model

A useful AWS security program in 2026 does not pick one control and call it a day. It organizes controls by the question each layer answers. The first layer is identity and metadata hardening. That includes IAM least privilege, short-lived credentials, metadata protections such as IMDSv2, and explicit separation between human, service, and AI-mediated access paths. Security Hub’s EC2 control around IMDSv2 belongs in this layer because it protects one of the most sensitive credential-delivery paths in the AWS stack. If this layer is weak, everything above it inherits ambiguity about who really had authority. (docs.aws.amazon.com)

The second layer is workload visibility. This is where SSM-managed posture, GuardDuty Runtime Monitoring, and related host-side components operate. The goal is not to prove exploitability. The goal is to know what the workload is, what it is doing, and whether its behavior crosses into suspicious territory. Runtime visibility is what lets defenders tell the difference between a package-level risk and an execution event that needs incident response. It also provides the telemetry needed to investigate whether a pentest or AI workflow caused unexpected impact. (docs.aws.amazon.com)

The third layer is inventory and exposure coverage. Inspector’s agent-based and agentless models fit here. They are great at answering what software exists, which CVEs might apply, and where unmanaged or stale inventory is hiding. For large estates, this is the layer that reduces blind spots and gives triage teams the raw material to prioritize. But it is still an exposure layer. It cannot, on its own, tell you whether the vulnerable package is reachable behind auth, whether the issue is neutralized by deployment specifics, or whether a custom application flaw is more important than any package CVE on the box. (docs.aws.amazon.com)

The fourth layer is application exploit validation. This is where the new AWS Security Agent belongs, along with external AI-assisted offensive workflows. The job of this layer is to test what the earlier layers cannot prove: whether an app, API, or authenticated workflow can be abused through multi-step attack scenarios, business logic flaws, or attack chains that depend on application context. AWS’s own positioning of AWS Security Agent around validated security findings, reproducible exploit paths, and business logic coverage places it squarely here. This layer should also be the place where retests happen after fixes, because “patched” and “no longer exploitable” are not the same statement. (Amazon Web Services, Inc.)

The fifth layer is agentic action governance. This layer exists because AI-enabled applications change the trust model after authentication. Guardrails, AgentCore Identity, IAM context keys for AWS-managed MCP servers, and the principle of deterministic controls external to the agent all fit here. This is the layer that asks not only “who got in” but also “what is this agent allowed to do now, through which tools, under which approvals, and with what evidence trail.” NIST’s framing of AI agent systems as software-plus-model-plus-autonomous action systems makes this layer unavoidable. (docs.aws.amazon.com)

Put differently, a layered model separates discovery from validation and separates authorization from execution. Many teams collapse those steps because they want one dashboard. That is understandable, but it is strategically expensive. The moment you collapse “might be vulnerable,” “behaved suspiciously,” “has authority,” and “was actually exploitable” into the same category, you create noisy workflows and weak ownership boundaries. The AWS security stack works better when each layer is allowed to answer a narrow question with strong evidence. (docs.aws.amazon.com)

Here is a compact planning artifact that many teams should define before they wire up AWS Security Agent or any external AI pentest workflow. This is not AWS product syntax. It is a readable scope contract that keeps the offensive workflow bounded and reviewable.

```yaml
application: customer-portal
environment: pre-production
target_domains:
  - app-preprod.example.com
accessible_urls:
  - login.identity-provider.example
  - static.cdn.example
out_of_scope_paths:
  - /admin/billing/refund
  - /ops/bulk-delete
credential_profile:
  name: pentest-standard-user
  privilege: low
  storage: secrets-manager
forbidden_actions:
  - destructive writes
  - irreversible account changes
  - third-party endpoint testing
success_criteria:
  - verified exploit path
  - reproducible request sequence
  - evidence saved to ticket
retest_required_after_fix: true
```

That sort of contract maps cleanly to AWS’s own controls around verified target domains, out-of-scope paths, accessible URLs, scoped credentials, non-production testing, and evidence review. It is also the operational form of the agentic security principle that boundaries should be explicit, deterministic, and external to the model’s reasoning loop. (docs.aws.amazon.com)
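A contract like this only earns its keep if something deterministic enforces it. The Python sketch below shows one way a test harness could classify each outbound URL against the contract before sending anything. It is an assumption-laden illustration: the `classify_request` function, its return labels, and the dict form of the contract are hypothetical, and the path canonicalization is deliberately simple. A real harness would need to normalize paths the same way the target application server does.

```python
import posixpath
from urllib.parse import unquote, urlsplit

# Hypothetical in-memory form of the scope contract shown above.
CONTRACT = {
    "target_domains": ["app-preprod.example.com"],
    "accessible_urls": ["login.identity-provider.example", "static.cdn.example"],
    "out_of_scope_paths": ["/admin/billing/refund", "/ops/bulk-delete"],
}


def classify_request(url, contract):
    """Return 'target', 'reachable-not-target', or 'blocked' for a URL."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    # Decode percent-escapes and collapse '..' segments so that simple
    # traversal tricks do not slip past the out-of-scope check.
    path = posixpath.normpath(unquote(parts.path) or "/")

    if host in contract["target_domains"]:
        for blocked in contract["out_of_scope_paths"]:
            prefix = blocked.rstrip("/")
            if path == prefix or path.startswith(prefix + "/"):
                return "blocked"
        return "target"

    if host in contract["accessible_urls"]:
        # Reachable during the test, but never attacked directly.
        return "reachable-not-target"

    # Default deny: anything not declared in the contract is out of scope.
    return "blocked"


if __name__ == "__main__":
    for candidate in (
        "https://app-preprod.example.com/orders/42",
        "https://app-preprod.example.com/admin/billing/refund",
        "https://login.identity-provider.example/oauth/authorize",
        "https://unrelated.example/",
    ):
        print(candidate, "->", classify_request(candidate, CONTRACT))
```

The design choice worth noting is the default-deny branch at the end. Hosts and paths are in scope only because the contract names them, which keeps the boundary outside whatever reasoning the testing agent performs.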

For teams with mixed-cloud or external application footprints, this is where vendor-neutral offensive workflows become useful. Penligent’s own cloud-security material makes a point AWS defenders will recognize immediately: posture tooling tells you what might be risky, while automated pentesting tells you what is actually exploitable. Its agent-security material makes a second point that fits the AWS model well: the real risk often starts after authentication, when a valid principal or agent begins acting through tools and inherited context rather than merely presenting a token. That is a sensible lens when deciding whether AWS-native controls are sufficient for your stack or whether you also want an external exploit-validation workflow that spans applications across AWS, other clouds, and externally exposed assets. (Penligent)

| Layer | Primary question | Typical AWS-native controls | Why the layer exists |
|---|---|---|---|
| Identity and metadata hardening | Who has authority and how are credentials retrieved | IAM, IMDSv2, Security Hub controls, AgentCore Identity, MCP-aware IAM | Prevent overbroad or ambiguous authority before execution begins |
| Workload visibility | What did the node do at runtime | SSM-managed workflows, GuardDuty Runtime Monitoring | Turn exposure into behavior and behavior into investigations |
| Inventory and CVE exposure | What software and packages are present | Inspector agent-based and agentless scans | Reduce blind spots and prioritize patching |
| Application exploit validation | Can the app or API actually be abused | AWS Security Agent, external AI pentest workflows | Validate findings in context and after fixes |
| Agentic action governance | What can an AI-enabled system do after auth | Bedrock Guardrails, AgentCore Identity, IAM context keys, execution review | Control post-auth tool use, delegation, and AI-mediated actions |

The model above is a synthesis of AWS documentation, AWS Security Blog guidance, and NIST’s framing of AI agent risk. (docs.aws.amazon.com)

What Posture and Runtime Tools Still Miss

The easiest way to understand the value of AI-assisted pentesting is to look at the blind spots of good defensive tooling. Posture and runtime controls are necessary. They are not sufficient. A runtime security agent can tell you that a process executed and reached a destination. It cannot necessarily tell you whether that process execution was the final step in a business logic exploit that began three HTTP requests earlier under a valid user session. That gap is not a product flaw. It is the result of using the right tool for the wrong question. (docs.aws.amazon.com)

The first blind spot is the authenticated user journey. AWS Security Agent’s pentest workflow explicitly supports application credentials and recommends typical-user access rather than overprivileged accounts. That matters because many of the most consequential web security issues only appear after a low-privileged user logs in and begins moving through stateful features. Authorization flaws, role confusion, and state-machine bugs are often invisible to unauthenticated scanners and only partially visible to runtime telemetry. They require application context and adversarial navigation. (docs.aws.amazon.com)

The second blind spot is the multi-step attack chain. AWS’s own public material on AWS Security Agent repeatedly emphasizes validated findings through tailored multi-step attack scenarios. That is the right design target, because real exploitation often depends on sequence. A leak, then a pivot. A weak identifier check, then a state mutation. An overly trusted integration response, then a privilege jump. Traditional scanners are usually strong at single-request or single-pattern findings. They are weaker at adaptive path-building unless teams layer in additional validation logic. AWS is clearly trying to close that gap. (Amazon Web Services, Inc.)

The third blind spot is business logic. This is one reason the new AWS Security Agent is more interesting than a generic scanner rebrand. AWS’s features page explicitly says the service targets not only OWASP Top 10 issues but also business logic flaws. That is the right place to push offensive automation because logic defects are where cloud teams lose time with false certainty. Package inventories do not model them. WAFs do not reason about them. Runtime telemetry may observe the consequences without understanding the logic that created them. The application itself has to be exercised adversarially. (Amazon Web Services, Inc.)

The fourth blind spot is dependency-mediated trust. Accessible URLs in AWS Security Agent are a good example. Once you declare an external identity provider, CDN, or other endpoint as accessible during a pentest, you are acknowledging that the application’s true behavior depends on systems outside the main target domain. That is realistic. Modern applications live on federated identity, static asset providers, SaaS APIs, and third-party control paths. But it also means your exploit surface is partly shaped by trust declarations and runtime integrations, not just by code on the main domain. Defensive inventory tools rarely model that trust boundary richly enough. (docs.aws.amazon.com)

The fifth blind spot is AI-enabled post-auth behavior. This is where the older “who can log in” model becomes dangerously incomplete. NIST’s current framing of AI agent risk focuses on the distinct threats that appear when model outputs get bound to software actions. Bedrock’s prompt injection documentation says this is an application-level problem, not an infrastructure problem. AWS’s own security principle says deterministic controls must sit outside the agent’s reasoning loop. Put those together and the operational conclusion is obvious: once the model is in the loop, safe authentication is not enough. The model, its tool broker, its prompts, its context sources, and its policy wrappers all become part of the attack surface. (NIST)

For offensive validation, this means you should test the whole action path, not just the model response. Penligent’s pentest-AI material makes the same point in more operational language: the workflow should begin with scope, provenance, and task tracking, because otherwise you get clever output without defensible findings. That observation travels well to AWS environments. Whether you are using AWS Security Agent, an external AI pentest tool, or a human-led workflow augmented by AI, the real value comes from verified paths, evidence, and repeatable retests, not from interesting speculative findings. (Penligent)

The CVEs That Explain Why Privileged Cloud Agents Need Hardening

There is a second mistake hidden inside the keyword AWS security agent. Teams focus so hard on what the agent protects that they forget the agent itself is a privileged software component with its own attack surface. That is not theoretical. AWS-related agents have had meaningful vulnerabilities, and those flaws are a direct reminder that security agents need the same patching, segmentation, least-privilege design, and monitoring as any other high-value daemon. (NVD)

Take CVE-2022-29527 in Amazon’s SSM Agent. NVD describes it as a condition in versions before 3.1.1208.0 where a world-writable sudoers file could be created, allowing local attackers to inject sudo rules and escalate to root under a race condition. That is exactly the kind of flaw defenders need to care about in a management agent. A component whose job is to let a cloud control plane execute privileged operations on a node should automatically be considered part of your high-risk software set. The lesson is not “avoid SSM Agent.” The lesson is “anything with this level of trust belongs in your fast patch and review path.” (NVD)

Now look at CVE-2025-9039 in the Amazon ECS agent. NVD says that under certain conditions an introspection server could be reached off-host by another instance if the instances were in the same security group or if ingress rules allowed the port in question, and that the issue was fixed in ECS agent version 1.97.1. That is a classic cloud lesson. Management or introspection features that feel harmless inside a single host boundary can become lateral-movement surfaces when network assumptions break down. In container-heavy environments, “internal introspection” should never automatically be treated as “safe because it is meant for operators.” Cloud boundaries are fluid. Security groups, peering, workload sprawl, and shared environments make supposedly local surfaces travel farther than teams expect. (NVD)

Then there is CVE-2025-8904 in Amazon EMR Secret Agent. NVD says the agent created a keytab file containing Kerberos credentials in /tmp, and that users with access to that directory and another account could potentially decrypt the keys and escalate privileges. Users were advised to upgrade to EMR 7.5 or newer, with a workaround for older branches. This is not an exotic AI-era flaw. It is a reminder that credential staging, temporary file handling, and agent lifecycle hygiene remain basic cloud security issues even in modern managed data platforms. Whenever an agent handles secrets, temporary artifacts, or delegated trust, treat its storage paths and cleanup behavior as security-relevant design elements, not implementation trivia. (NVD)
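The general failure class here, secret material staged where other local users can read it, is easy to illustrate. The Python sketch below shows one conservative staging pattern on a POSIX host: a private directory created with `tempfile.mkdtemp`, an exclusively created file with owner-only permissions, and explicit cleanup. It is not the EMR fix and says nothing about how the EMR agent is implemented. The function names and file name are hypothetical.

```python
import os
import shutil
import stat
import tempfile


def stage_secret(data: bytes) -> str:
    """Write secret bytes to a file only the current user can access."""
    # mkdtemp creates a directory accessible only by the creating user.
    private_dir = tempfile.mkdtemp(prefix="agent-secret-")
    path = os.path.join(private_dir, "credential.bin")
    # O_EXCL fails if the file already exists, which avoids writing
    # through a path that someone else planted in advance.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    with os.fdopen(fd, "wb") as handle:
        handle.write(data)
    return path


def is_private(path: str) -> bool:
    """True if neither group nor other has any permission bit set."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return mode & 0o077 == 0


def remove_secret(path: str) -> None:
    """Delete the staged file together with its private directory."""
    shutil.rmtree(os.path.dirname(path))


if __name__ == "__main__":
    staged = stage_secret(b"example-secret-bytes")
    print(staged, "private:", is_private(staged))
    remove_secret(staged)
```

The cleanup step matters as much as the permissions. A secret that is written safely but never removed is still a lifecycle problem.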

AWS’s October 2025 IMDS impersonation bulletin is especially relevant to hybrid and AI-automation environments. AWS says that when tools such as the AWS CLI, AWS SDKs, or SSM Agent run on non-EC2 compute nodes, a third-party-controlled IMDS can potentially serve unexpected AWS credentials if the attacker has a privileged network position. AWS recommends following installation guidance outside the AWS data perimeter and monitoring for IMDS endpoints in on-prem environments. This is a bigger deal than it first appears. Many teams now run AWS-aware automation, CI agents, lab infrastructure, and AI tooling outside EC2. If those systems accidentally trust metadata-style endpoints in the wrong place, the trust model you assumed for EC2 does not automatically follow you off-cloud. (Amazon Web Services, Inc.)
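One modest way to act on this is a preflight check on non-EC2 hosts. The Python sketch below inspects two environment variables that the AWS CLI and SDKs recognize, `AWS_EC2_METADATA_DISABLED` and `AWS_EC2_METADATA_SERVICE_ENDPOINT`, and reports settings worth a second look. It is an assumption that this check suits your environment: it covers only the CLI and SDK settings, and whether IMDS lookups should be disabled depends on how the host is supposed to obtain credentials. The `imds_findings` function is hypothetical, and the bulletin itself remains the authority on recommended configuration.

```python
import os


def imds_findings(env):
    """Return human-readable notes about IMDS-related settings in env."""
    findings = []

    disabled = env.get("AWS_EC2_METADATA_DISABLED", "").strip().lower()
    if disabled != "true":
        # On a host that is not an EC2 instance, IMDS credential lookups
        # have no legitimate endpoint to reach.
        findings.append("IMDS credential lookup is not disabled")

    endpoint = env.get("AWS_EC2_METADATA_SERVICE_ENDPOINT")
    if endpoint:
        # A custom endpoint redirects metadata calls; confirm it is intended.
        findings.append(f"custom IMDS endpoint configured: {endpoint}")

    return findings


if __name__ == "__main__":
    for note in imds_findings(dict(os.environ)):
        print("review:", note)
```

A check like this fits naturally into CI runner bootstrap or lab-host provisioning, where the host type is known in advance.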

That bulletin also makes the IMDSv2 conversation more concrete. Security Hub recommends configuring EC2 instances with IMDSv2 by requiring HttpTokens, and AWS explicitly ties that setting to defenses against SSRF, open reverse proxies, and related metadata exposure paths. Combined with the 2025 bulletin, the takeaway is straightforward: metadata access is not just a feature detail for EC2. It is part of the trust root for any AWS-aware agent or automation path that relies on instance credentials. If your organization is adding more agents, more automation, and more AI-driven workflows, metadata discipline becomes more important, not less. (docs.aws.amazon.com)
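Checking this setting across a fleet is straightforward once you have the output of the EC2 `DescribeInstances` API, which reports `MetadataOptions.HttpTokens` per instance. The sketch below works on an already-fetched response dictionary so it stays self-contained. The function name is hypothetical, and the logic is a simplified illustration, not a reimplementation of the Security Hub control.

```python
def instances_without_imdsv2(describe_instances_response):
    """Return IDs of instances where IMDSv2 tokens are not required."""
    flagged = []
    for reservation in describe_instances_response.get("Reservations", []):
        for instance in reservation.get("Instances", []):
            options = instance.get("MetadataOptions", {})
            # "required" enforces IMDSv2; "optional" still permits IMDSv1.
            if options.get("HttpTokens") != "required":
                flagged.append(instance["InstanceId"])
    return flagged


if __name__ == "__main__":
    # Hand-written sample in the shape of a DescribeInstances response.
    sample = {
        "Reservations": [
            {
                "Instances": [
                    {"InstanceId": "i-0aaa", "MetadataOptions": {"HttpTokens": "required"}},
                    {"InstanceId": "i-0bbb", "MetadataOptions": {"HttpTokens": "optional"}},
                ]
            }
        ]
    }
    print(instances_without_imdsv2(sample))
```

In practice the response would come from a paginated `describe_instances` call in boto3, and each flagged instance could then be changed with `modify_instance_metadata_options(InstanceId=..., HttpTokens="required")` after confirming that nothing on the instance still depends on IMDSv1.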

| Identifier | Affected component | Why it matters | Minimum response |
|---|---|---|---|
| CVE-2022-29527 | Amazon SSM Agent | Local privilege escalation through a world-writable sudoers file in vulnerable versions | Patch past the vulnerable version range and treat management agents as high-priority privileged software |
| CVE-2025-9039 | Amazon ECS agent | Off-host access to the introspection server under certain network conditions | Upgrade to the fixed version and prevent unintended access to introspection ports |
| CVE-2025-8904 | Amazon EMR Secret Agent | Kerberos credential material staged in /tmp, enabling potential escalation | Upgrade to EMR 7.5 or newer or apply AWS’s documented workaround path for older versions |
| AWS-2025-021 | AWS tools on non-EC2 compute | Third-party-controlled IMDS can serve unexpected credentials outside the AWS data perimeter | Follow AWS configuration guidance and monitor for IMDS traffic in on-prem or hybrid networks |
| Security Hub IMDSv2 control | EC2 metadata path | Credential path remains exposed if HttpTokens is optional | Require IMDSv2 and monitor metadata access patterns |

The table above is based on NVD entries, AWS’s security bulletin, and AWS Security Hub guidance. (NVD)
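For the two entries with explicit fixed versions, the "fast patch path" can start as a simple version gate. The Python sketch below compares an installed agent version against the fixed versions cited above (3.1.1208.0 for SSM Agent, 1.97.1 for the ECS agent). The component labels, the `needs_patch` function, and the assumption that versions are purely numeric dotted strings are all illustrative. How you collect installed versions from your fleet is left out.

```python
# Fixed versions as cited from the NVD entries discussed above.
# The dictionary keys are hypothetical labels, not official package names.
FIXED_IN = {
    "amazon-ssm-agent": "3.1.1208.0",  # CVE-2022-29527
    "amazon-ecs-agent": "1.97.1",      # CVE-2025-9039
}


def parse_version(text):
    """Turn '1.97.1' or 'v1.97.1' into a tuple of ints for comparison."""
    return tuple(int(part) for part in text.strip().lstrip("v").split("."))


def needs_patch(component, installed):
    """True if the installed version is older than the fixed version."""
    fixed = parse_version(FIXED_IN[component])
    current = parse_version(installed)
    # Pad the shorter tuple with zeros so '1.97' compares like '1.97.0'.
    width = max(len(fixed), len(current))
    fixed += (0,) * (width - len(fixed))
    current += (0,) * (width - len(current))
    return current < fixed


if __name__ == "__main__":
    print(needs_patch("amazon-ssm-agent", "3.1.1188.0"))
    print(needs_patch("amazon-ecs-agent", "1.100.0"))
```

Comparing integer tuples instead of raw strings avoids the classic mistake where "1.100.0" sorts before "1.97.1" lexicographically.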

Common Mistakes When Teams Buy or Deploy an AWS Security Agent

The most common mistake is treating the phrase as though it refers to one category of product. It does not. If you buy or enable AWS Security Agent expecting endpoint-style telemetry, you will be disappointed. If you deploy workload-side agents expecting business-logic exploit validation, you will also be disappointed. The phrase only becomes useful when you attach it to the question you need answered. (docs.aws.amazon.com)

The second mistake is assuming agentless coverage replaces runtime instrumentation. Inspector’s agentless mode is valuable, but AWS documents it as an EBS snapshot-based inventory and vulnerability mechanism for eligible instances. GuardDuty Runtime Monitoring, by contrast, is about process, file, command-line, and network behavior. If you disable the runtime layer because your vulnerability dashboard looks healthy, you are removing the behavioral context that tells you whether exposure has turned into action. (docs.aws.amazon.com)

The third mistake is feeding offensive automation overly broad credentials. AWS’s own guidance around AWS Security Agent says pentest credentials should be scoped appropriately and that customers should create new credentials for the purpose. That is the right pattern because exploit validation should test realistic attack paths, not grant the pentest engine more privilege than the target population would ever have. When teams use admin credentials because setup is faster, they create misleading findings and unnecessary risk. (docs.aws.amazon.com)

The fourth mistake is running application pentests in production by default. AWS explicitly recommends non-production environments for AWS Security Agent because the underlying toolset can modify state, data, and configuration. That recommendation should be taken literally. A useful pentest environment mirrors production closely enough to preserve app behavior while remaining isolated enough to tolerate aggressive validation traffic, state changes, and repeated retests. The impulse to “just test prod so we know it is real” is understandable, but it often ignores change control, customer data exposure, and operational safety. (docs.aws.amazon.com)

The fifth mistake is forgetting that accessible URLs are trust decisions. The AWS documentation is very clear that these URLs are not test targets but can still receive pentest data and credentials. Teams often configure external identity providers or support services in a hurry, then later realize they expanded the data path without treating it as part of the test design. In modern applications, especially federated and SaaS-heavy ones, that is not a minor omission. It is part of the threat model. (docs.aws.amazon.com)

The sixth mistake is trusting AI findings or AI-generated fixes without review. AWS says multiple pentest runs can vary, validation scripts should be reviewed and executed, and generated remediation code should be tested before deployment. That is the correct operating assumption for any AI-assisted security workflow today. Good AI systems compress review time and increase testing frequency. They do not eliminate the need for security judgment, code review, and controlled retesting. (docs.aws.amazon.com)
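Because results can vary between runs, one lightweight review aid is to separate findings that appear in every run from those that appear only sometimes. The Python sketch below does that for lists of finding identifiers. The identifiers and the `finding_stability` function are hypothetical, and the output is a prompt for human review, not a verdict on which findings are real.

```python
from collections import Counter


def finding_stability(runs):
    """Split finding IDs into (seen in every run, seen in only some runs)."""
    counts = Counter()
    for run in runs:
        # Use a set so a finding repeated within one run counts once.
        counts.update(set(run))
    total = len(runs)
    stable = sorted(f for f, n in counts.items() if n == total)
    intermittent = sorted(f for f, n in counts.items() if n < total)
    return stable, intermittent


if __name__ == "__main__":
    # Hypothetical finding identifiers from three pentest runs.
    runs = [
        ["idor-orders", "weak-session-timeout"],
        ["idor-orders", "stored-xss-profile"],
        ["idor-orders", "weak-session-timeout"],
    ]
    stable, intermittent = finding_stability(runs)
    print("stable:", stable)
    print("intermittent:", intermittent)
```

Intermittent findings are not automatically false positives. They are the ones most worth reproducing by hand with the validation script before anyone closes or escalates them.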

The seventh mistake is assuming IAM or Guardrails alone solve AI agent security. AWS’s Bedrock documentation says prompt injection is an application-level concern, and that Automated Reasoning does not itself provide prompt injection protection. AWS’s security principle says deterministic controls must sit outside the agent’s reasoning loop. NIST says the core risk comes from the union of model outputs and software actions. Put together, those statements rule out a one-control fantasy. If the application is AI-enabled, you need both preventative governance and adversarial validation. (docs.aws.amazon.com)

Where Human Testers and External Workflows Still Matter

AWS’s own documentation provides the most honest baseline here: AWS Security Agent is not a professional penetration testing service and should be integrated into a larger security review workflow. That does not diminish its value. It clarifies it. High-frequency, context-aware validation during development is enormously useful, especially for web applications and APIs that change faster than quarterly external tests can keep up. But human testers still matter for novel objectives, unusual trust chains, production risk management, cloud-control-plane edge cases, and the kind of cross-domain reasoning that depends as much on business context as on exploit mechanics. (docs.aws.amazon.com)

External workflows also matter when the environment is not cleanly AWS-native. AWS Security Agent can target apps in other clouds and on premises, but some teams still want a separate exploit-validation path that is not anchored to one cloud vendor’s control model. That is especially true for organizations whose exposed surface spans AWS, SaaS integrations, third-party APIs, and externally reachable custom apps. In those environments, the question is not “AWS-native or external” in a tribal sense. It is whether you have enough validated evidence across the environments attackers can actually reach. (Amazon Web Services, Inc.)

That is why evidence-driven automation is the more useful category than any single vendor label. Penligent’s cloud-security and AI-agent-security material is helpful here not because it argues for a brand, but because it draws the same distinction the AWS stack now makes in different language: one layer identifies what may be risky; another layer proves what is actually exploitable; and the hardest failures in AI systems often occur after authentication, during tool use and delegated execution rather than at the login screen. That is a sound way to reason about cloud security programs regardless of which offensive automation product you use. (Penligent)

The right endpoint for most mature teams is a blended model. Use AWS-native workload controls where AWS has strong telemetry and control-plane leverage. Use AWS Security Agent where frequent application validation helps compress feedback loops. Use Bedrock guardrails, identity, and IAM-based controls where AI systems are granted action authority. Bring in human testers and, when useful, external AI-assisted workflows when the objective is broader than any one vendor boundary or when the consequence of getting it wrong is too large to leave to a single automated path. That is not redundancy for its own sake. It is defense aligned to how modern systems actually fail. (docs.aws.amazon.com)

AWS Security Agent Is a Layer, Not a Silver Bullet

The most accurate answer to the keyword AWS security agent in 2026 is that it now names a layered problem, not a single category. Part of that problem is still the old one: how to manage, observe, and inventory cloud workloads through agents and agentless scanners. Part of it is now new: how to use AWS Security Agent for design review, code review, and AI-assisted exploit validation against real web applications and APIs. And part of it is newer still: how to govern AI-mediated actions after authentication with guardrails, identity controls, and IAM policies that understand when an AWS-managed MCP path is involved. (docs.aws.amazon.com)

If your team keeps those layers separate, the AWS stack becomes easier to reason about. Workload agents and agentless scans tell you what exists and how it behaves. AWS Security Agent tells you whether real application paths can be abused. AI security controls tell you how an authenticated, tool-using model is constrained. Human testing and external validation tell you where automation still needs help. That division of labor is the technical angle that makes the keyword worth pursuing. It also happens to be the difference between a cloud security program that produces dashboards and one that produces evidence. (docs.aws.amazon.com)

Further Reading and References

AWS Security Agent and related AWS-native controls

AWS Security Agent product page and FAQ. (Amazon Web Services, Inc.)

AWS Security Agent preview announcement, December 2, 2025. (Amazon Web Services, Inc.)

AWS Security Agent on-demand penetration testing GA announcement, March 31, 2026. (Amazon Web Services, Inc.)

How AWS Security Agent works, setup, pentest configuration, GitHub integration, remediation, CloudTrail logging, and best practices. (docs.aws.amazon.com)

Inside AWS Security Agent’s multi-agent pentest architecture. (Amazon Web Services, Inc.)

SSM Agent, Amazon Inspector EC2 scanning, GuardDuty Runtime Monitoring, and GuardDuty Malware Protection for EC2. (docs.aws.amazon.com)

Security Hub IMDSv2 guidance and AWS IMDS impersonation bulletin. (docs.aws.amazon.com)

AWS material on AI agent security

Amazon Bedrock prompt injection security and Guardrails. (docs.aws.amazon.com)

Automated Reasoning checks in Amazon Bedrock Guardrails. (docs.aws.amazon.com)

Amazon Bedrock AgentCore Identity. (Amazon Web Services, Inc.)

AWS security principles for agentic AI systems and IAM controls for AWS-managed MCP servers. (Amazon Web Services, Inc.)

NIST on distinct risks in AI agent systems. (NIST)

NIST on large-scale red-teaming against frontier models. (NIST)

NVD entries for CVE-2022-29527, CVE-2025-9039, and CVE-2025-8904. (NVD)

Cloud Security: A Practical, Engineer-Verified Guide for Modern Infrastructure. (Penligent)

AI Agent Security Beyond IAM, Why the Real Risk Starts After Authentication. (Penligent)

Pentest AI, What Actually Matters in 2026. (Penligent)