The easy part is over. By 2026, the security world has already seen enough AI demos that can explain a payload, draft a proof of concept, summarize a Burp request, or solve a neatly framed CTF challenge. The harder question is whether any of that survives contact with a real authorized engagement, where scope matters, state drifts, evidence has to be preserved, tools can do damage, and every claim eventually lands on an engineer’s desk. That is where the recent attention around Cybersecurity AI, and especially CAI, starts to matter. CAI is not interesting because it proves that an LLM can “hack.” It is interesting because it packages model capability, tool access, human interruption, external integrations, and benchmark-driven claims into something much closer to an operating model for security work than a chat window or single-purpose assistant. (GitHub)

That does not mean the field is solved. In fact, the opposite is true. The most credible recent research cuts against the loudest marketing story. Cybersecurity benchmarks are getting harder, not easier. Jeopardy-style CTF success does not transfer cleanly into multi-step offensive work. Attack and Defense formats expose weaknesses that simple challenge sets hide. Large-scale real-world vulnerability reproduction remains difficult. And when AI agents are tested against human professionals in live environments, the result is not “humans are obsolete.” The result is a more interesting split: agents are increasingly strong at systematic enumeration, parallelization, and cost efficiency, while still weak in places where real pentesting gets messy, ambiguous, visual, or context-sensitive. (arXiv)

That is the right frame for CAI. Not as a mascot for autonomous offense, and not as a shortcut around pentest discipline, but as one of the clearest examples of where Cybersecurity AI is heading: away from isolated “AI does security” tricks and toward bounded, evidence-bearing, tool-connected workflows. (GitHub)

Cybersecurity AI Is Not Just a Smarter Scanner

A lot of confusion comes from collapsing very different products into the same label. A scanner with an LLM summary layer is not the same thing as a coding agent. A coding agent is not the same thing as a CTF scaffold. A CTF scaffold is not the same thing as a real pentest workflow. All of them may involve language models, but they solve different problems, fail in different ways, and deserve to be judged by different evidence. Research across the last two years makes that mismatch obvious. PentestGPT showed early on that model scaffolding could outperform raw prompting on pentest-style tasks, but later work such as CAIBench, CyberGym, multi-step cyber range studies, and live enterprise evaluations all point to the same deeper lesson: the important variable is no longer only the base model. It is the system wrapped around the model. (USENIX)

A useful way to think about Cybersecurity AI is to ask what kind of truth the system can actually produce. Can it produce a clean explanation of a vulnerability? That is valuable, but it is still language. Can it produce a plausible exploit chain? Better, but still a hypothesis. Can it execute a bounded tool action against an authorized target, preserve the raw output, recover from failure, and make the result replayable by another human? That is much closer to penetration testing. Most products in the market do not fail because they lack intelligence in the abstract. They fail because they collapse the difference between suggestion and proof. (arXiv)

The distinction matters even more once tools enter the picture. The moment a model can touch a shell, a browser-connected workflow, a proxy, a code executor, or a remote target, the system is no longer being judged only as an AI application. It is being judged as an operational security component. That changes the standard completely. Now the relevant questions are not “Does it know about SSRF?” or “Can it write Python?” but “Can it stay in scope?”, “Can I interrupt it?”, “Does it keep evidence?”, “Does it separate a suspected issue from a verified issue?”, and “What happens when hostile content lands in the prompt stream?” Those are workflow questions, not benchmark trivia. (GitHub)

The table below is a good sanity check.

| System type | What it usually does well | What it does not prove | Real value to pentesting teams |
|---|---|---|---|
| Scanner with AI summary | Clustering findings, summarizing output, drafting remediation text | That the issue is exploitable, in scope, reproducible, or high priority | Speeds triage, improves readability |
| Coding agent | Repo-aware reasoning, local testing, quick script generation, patch suggestions | That a target-facing issue is validated against a live system | Strong for white-box work, code review, local reproduction |
| CTF agent | Structured tool use, challenge solving, exploit drafting under constrained rules | That the agent handles real scope, messy state, or enterprise complexity | Good for capability exploration and research |
| Workflow-native pentest system | Multi-step validation, target-facing actions, evidence capture, retest support | That it is safe by default or mature enough without governance | Useful when it preserves proof and control |
| Research benchmark agent scaffold | Controlled comparison of models and scaffolds across tasks | That its results transfer cleanly into your environment | Valuable for measurement, less so as direct deployment guidance |

That is why Cybersecurity AI has become such a strong search and discussion cluster. Practitioners are not really looking for “AI that knows security facts.” They are looking for systems that can carry context across tools, preserve enough state to keep moving, and leave behind enough evidence to justify action. (arXiv)

CAI, The Part That Matters Is the Workflow

Alias Robotics describes CAI as a lightweight, open-source framework for offensive and defensive security automation. In its public repository and product material, the project emphasizes several structural choices that are more important than the branding itself: an agent-based design, support for many underlying models through LiteLLM, built-in tools, tracing, MCP integration, and an explicit human-in-the-loop design principle. The public disclaimer goes further and frames CAI as a cybersecurity evaluation tool operating under a “human-on-the-loop” principle, which is exactly the kind of language that matters once models stop being passive assistants and start touching systems. (GitHub)

Those details are not cosmetic. A workable Cybersecurity AI system needs at least six layers. The first is a model layer, where reasoning, planning, code generation, and synthesis live. The second is an orchestration layer that decides how tasks are broken down, how state is preserved, and when the model gets to call tools. The third is the tool layer itself, where shell, web, proxy, or custom functions become available. The fourth is a memory and state layer that keeps the agent from forgetting what already happened. The fifth is an evidence layer, where output becomes traceable rather than conversational. The sixth is the human control layer, where scope, approval, interruption, and retest discipline live. Remove any one of those and the system may still look impressive, but it stops looking like reliable pentesting. (GitHub)

Seen through that lens, CAI is best understood as a framework that tries to solve the middle of the job. Not the raw intelligence problem alone, and not the final report formatting problem alone, but the difficult space in between, where an operator needs a model to maintain direction while crossing multiple tools, multiple turns, and multiple small decisions. That is also why CAI’s public materials lean so heavily on CLI usage, MCP connectivity, and workflow features rather than a single “AI assistant” interface. A command line is not glamorous, but it is stateful, composable, interruptible, and familiar to serious security operators. (news.aliasrobotics.com)

The current CAI repository also shows why the term Cybersecurity AI is more useful than CAI alone. “CAI” by itself is noisy in the broader information ecosystem, where the same acronym is used for Commercially Available Information in U.S. intelligence policy. “Cybersecurity AI,” by contrast, is much more specific to this emerging category of model-plus-workflow security tooling. That is one reason the stronger search and publishing terms around the topic are not just “CAI,” but phrases such as Cybersecurity AI, AI security agent, AI pentesting CLI, and agentic security workflows. (Intelligence)

A more useful mental model is to treat CAI as an example of a broader category. You may or may not choose CAI itself. You may prefer another scaffold, another product, or an internal build. The real question is whether the system you use behaves like a workflow, or behaves like a demo. Workflow means bounded execution, evidence retention, replayability, interruption, and separation between hypothesis and proof. Demo means clever text plus optimistic assumptions. (GitHub)

Why Cybersecurity AI Benchmarks Are Moving Beyond Jeopardy CTFs

The benchmark story around Cybersecurity AI has matured faster than most people realize. Early excitement came from systems that could solve challenge-like tasks better than raw model prompting. PentestGPT was a major moment because it showed scaffolding mattered: the paper reported a 228.6 percent task-completion increase over GPT-3.5 on its benchmark targets and demonstrated useful performance on real-world targets and CTFs. That was not the end state of the field, but it was an important proof that pentesting-adjacent work was not just about model IQ. It was about how the operator and scaffold managed the work. (USENIX)

The next step was realizing that many common benchmarks were still too narrow. CAIBench is one of the clearest formalizations of that problem. Its authors argue that labor-relevant measurement in cybersecurity cannot be reduced to a single challenge type or to static security Q&A. The benchmark integrates more than 10,000 instances across five categories: Jeopardy-style CTFs, Attack and Defense CTFs, cyber range exercises, knowledge benchmarks, and privacy assessments. The paper’s results are telling: knowledge performance begins to saturate, but performance degrades sharply in multi-step adversarial settings, and the scaffold-model pairing can swing results substantially. (arXiv)

That is exactly why the “CTFs are solved” narrative needs more nuance than headline writers usually give it. CAI’s own CTF paper claims first-place or top-tier outcomes across several 2025 circuits and argues that Jeopardy-style CTFs have become a solved game for well-engineered agents. That may be directionally true for a subset of challenge formats, especially those that reward rapid localized reasoning. But the same authors also helped publish work showing that Attack and Defense settings behave differently. In one controlled A&D study, defensive agents achieved higher unconstrained patching success than offensive initial access, yet that advantage disappeared once real operational constraints such as service availability and complete intrusion prevention were imposed. That is a far more realistic result than “AI offense wins” or “AI defense wins.” It says the environment and the metric matter. (arXiv)

The field then moved again. CyberGym shifted the focus from challenge solving to reproducing real-world vulnerabilities at scale. In its latest arXiv version, the authors describe 1,507 real-world vulnerabilities across 188 software projects and report that even top-performing combinations only reach around a 20 percent success rate. At the same time, the benchmark led to 34 zero-day discoveries and 18 historically incomplete patches. That combination is important. It shows why current Cybersecurity AI is simultaneously more capable and less “finished” than simplistic coverage suggests. The agents can produce real impact, but only on a minority of cases in a realistic large-scale setting. (arXiv)

The most sobering work may be the direct comparison between agents and professionals in a live enterprise environment. In that study, researchers evaluated ten cybersecurity professionals and several agents, including ARTEMIS, on a real university network with roughly 8,000 hosts across 12 subnets. ARTEMIS placed second overall, found nine valid vulnerabilities, achieved an 82 percent valid submission rate, and outperformed nine of ten human participants. But the paper did not say “humans are done.” It also found that off-the-shelf scaffolds lagged, that agents still produced more false positives, and that GUI-heavy tasks remained difficult. The right takeaway is not hype. It is that a carefully engineered agent system can be very competitive on some classes of work while still being structurally unreliable in others. (arXiv)

The benchmark stack now looks something like this.

| Benchmark or study | What it measures | What it gets closer to in real work | What it still misses |
|---|---|---|---|
| PentestGPT | Scaffolded pentest task completion, challenge-style workflows | The importance of orchestration and step management | Large-scale production complexity and strong evidence requirements |
| CAI CTF results | High-speed challenge performance across major CTF circuits | Fast exploit reasoning under competition rules | Enterprise scope control, messy multi-system state, reporting discipline |
| A&D CTF evaluation | Simultaneous attacking and defending under constraints | Adaptive reasoning under adversarial pressure | Full business logic, live customer risk, organizational process |
| CAIBench | Multi-category cybersecurity evaluation across more than 10,000 instances | Better measurement of labor-relevant variety | It is still a benchmark, not your environment |
| CyberGym | Real vulnerability reproduction and PoC generation across 188 projects | Stronger realism for software security workflows | Mostly code-centric, less target-facing web and human factors |
| Live enterprise human comparison | Agents versus professionals on a real network | Closer to real pentesting economics and quality | One environment, limited sample size, still an experimental setting |

What matters for buyers and builders is that Cybersecurity AI is no longer a question of whether models can help. They can. The question is where the benchmark evidence actually transfers. A Jeopardy CTF win may mean a lot for tooling speed and local reasoning. It does not automatically mean a system can preserve scope, handle authentication state, avoid destructive actions, or produce a finding that survives retest in a business application. (arXiv)

Real Pentesting Still Breaks Weak Cybersecurity AI Systems

Once you leave benchmark abstraction, pentesting gets strange very quickly. Authentication flows are fragile. Session state is inconsistent. Business logic spans multiple roles and time windows. Targets change mid-engagement. Findings that look obvious from code evaporate when a deployment flag is different in production. Multi-step exploit chains fail for boring reasons: a header was normalized, a queue was delayed, a WAF rate-limited the path, or the “vulnerable” user role did not exist on the target tenant. These are not edge cases. They are the normal texture of real testing.

This is where the recent live-enterprise research is useful. The human-versus-agent comparison found strong agent performance in systematic enumeration and parallel exploitation, but also higher false-positive rates and weaker performance on GUI-based tasks. That maps closely to what many operators already feel intuitively. Agents are strongest when the truth is mostly textual, mostly enumerable, mostly serializable, and mostly reachable through a clean tool surface. They get weaker when the work requires subtle visual inspection, delicate interaction timing, ambiguous product behavior, or careful social reading of what a business flow is supposed to do. (arXiv)

The same pattern shows up in cyber range work. A recent multi-step cyber-attack study evaluated seven frontier models on a 32-step corporate network range and a 7-step ICS range. The authors observed that performance improves with both newer models and larger inference-time budgets, and that the best run completed 22 of 32 steps on the corporate network scenario. That is serious capability. It is also not full autonomy. The industrial control scenario remained much weaker, and the study explicitly frames these environments as still simpler than fully defended real-world operations. That gap is exactly where many product narratives become misleading. (arXiv)

So the honest statement is narrower and more valuable: Cybersecurity AI is becoming good at structured offensive labor, not at magically replacing the whole pentest profession. It is getting better at triage, enumeration, exploit branch planning, repetition, and evidence formatting. It is not yet uniformly trustworthy at judgment under uncertainty. That is why the systems worth paying attention to are not the ones with the wildest autonomy claims. They are the ones that expose more control, more auditability, and more replay. (arXiv)

A practical version of that split looks like this.

| Task shape | Agents are often strong at | Agents often remain weak at | Human review still matters most |
|---|---|---|---|
| Reconnaissance | Asset clustering, header and response pattern review, route mapping, parameter discovery | Distinguishing noise from business-critical signal | Deciding what is worth pursuing |
| White-box validation | Code navigation, grep-like reasoning, local harness drafting, patch direction | Proving exploitability against a live environment | Separating likely from verified |
| Web testing | Replaying requests, generating permutations, multi-parameter fuzz branches | Business logic abuse, strange auth edge cases, GUI-only workflows | Impact confirmation and chain realism |
| Reporting | Normalizing findings, draft remediation, formatting evidence narratives | Ground truth, severity judgment, environmental nuance | Final wording and prioritization |
| Long engagements | Maintaining broad task lists, reusing patterns, parallel exploration | State drift, context poisoning, overconfidence after partial wins | Steering, interruption, and retest discipline |

CAI’s public updates make sense against that backdrop. The v1.0 release emphasizes usability, long-session consistency, automated agent selection, and stronger compatibility with MCP and Burp Suite. Those are the kinds of improvements that matter in real operations because they target the brittle middle of the workflow rather than only raw intelligence claims. A pentest assistant that forgets what already happened is much less useful than one that reasons slightly worse but preserves coherence across hours of work. (news.aliasrobotics.com)

What a Usable Cybersecurity AI Stack Looks Like

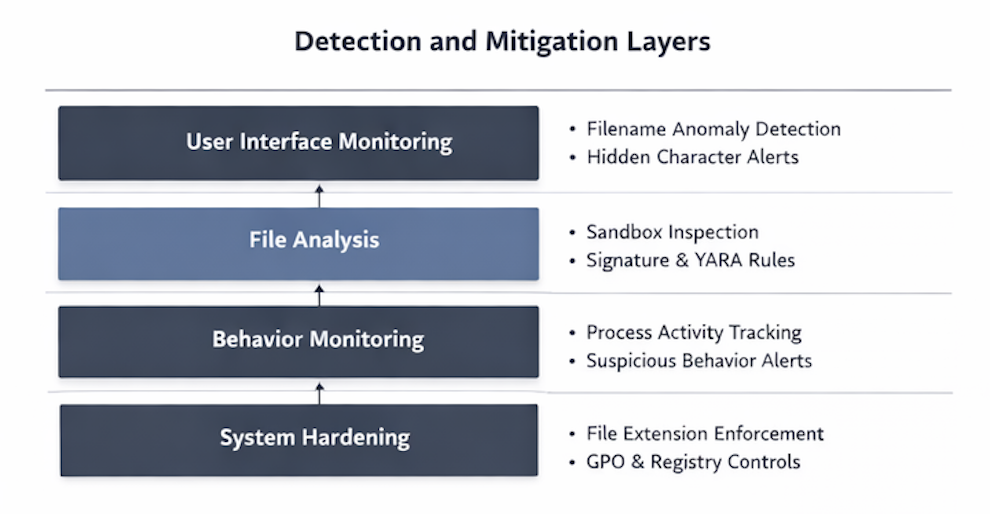

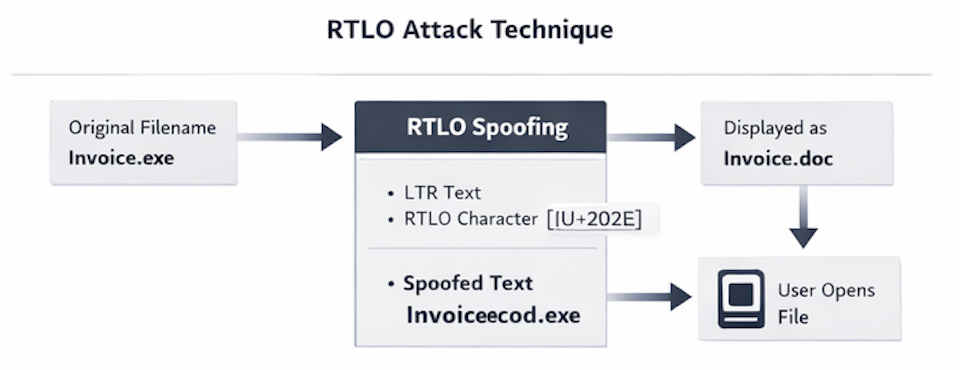

A usable stack begins with authorization, not intelligence. Before the first prompt, somebody has to define the target, the scope, the allowed techniques, the time window, the approval rules, and the evidence standard. That is not bureaucracy. It is the frame that turns an AI system from general-purpose attack capability into legitimate security work. The moment that frame is weak, every model improvement becomes a liability multiplier rather than a productivity gain. NIST’s Cyber AI Profile exists for exactly this kind of problem: helping organizations apply a common risk-management structure to the overlap between AI and cybersecurity, including issues such as prompt injection, data poisoning, adversarial input, recovery, and incident learning. (nvlpubs.nist.gov)

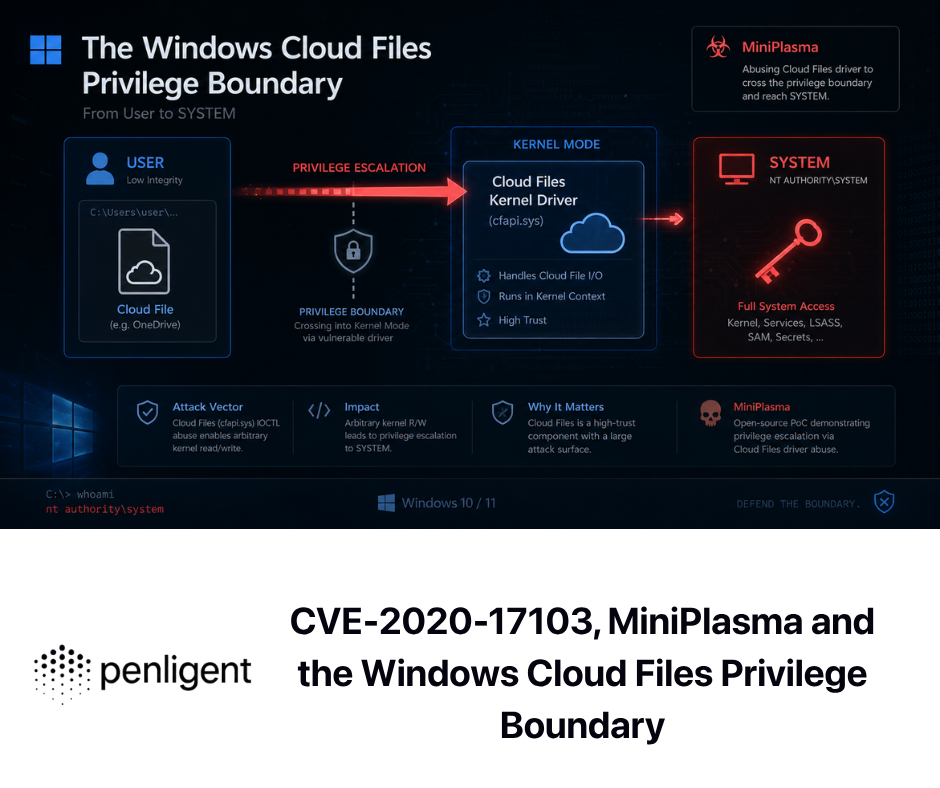

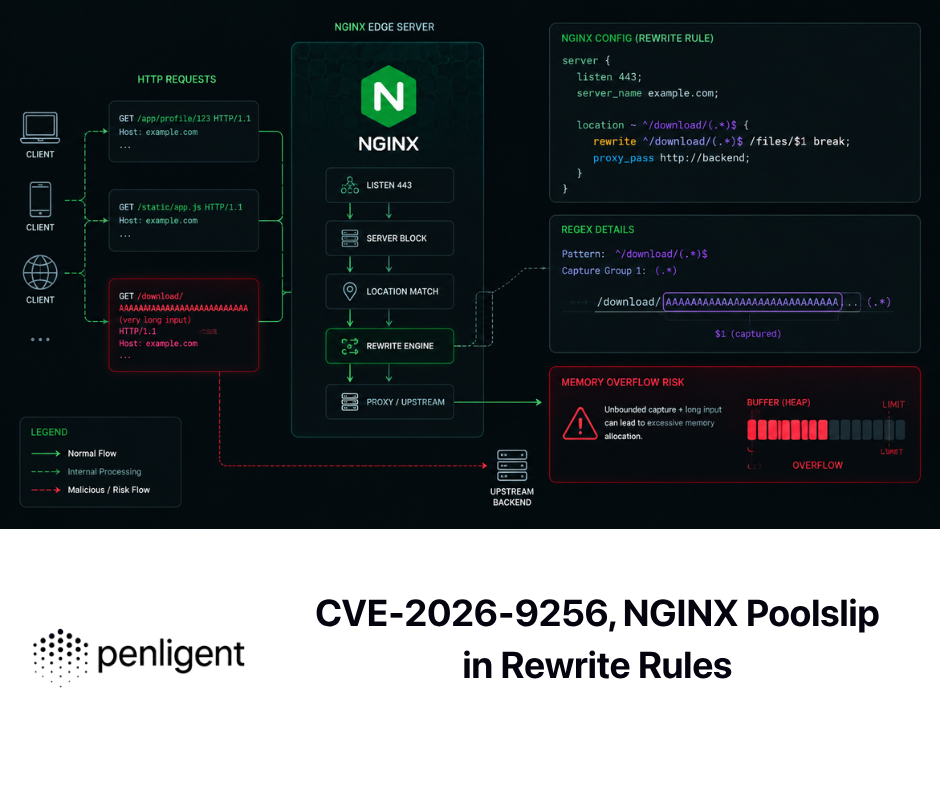

After authorization comes recon, and this is where many AI systems should spend more time than they do. Public materials from Penligent make a useful point here without much hype: agentic offense becomes more trustworthy when it is recon-first, policy-aware, and evidence-first rather than exploit-first. That sounds conservative, but it is actually what makes the workflow more powerful. A planning worker that maps routes, identities, data flows, and scope boundaries before active testing begins is less likely to waste time, break things, or mislabel noise as impact. Penligent’s own public writing frames the problem similarly: the workflow matters because the report is only useful if the evidence survives retest. (penligent.ai)

Then comes bounded execution. The agent needs enough tool access to validate something real, but not so much freedom that every hostile input becomes a path to the host or the wrong target. That is one reason the move toward tool abstraction layers such as MCP is important. When handled correctly, MCP standardizes how models connect to external tools and data. The official specification defines it as an open protocol for integrating LLM applications with external data sources and tools, and its authorization material makes clear that security and trust boundaries are first-class concerns, not optional afterthoughts. Standardization is useful. It is not the same thing as safety. (modelcontextprotocol.io)

Next comes evidence preservation. This is the difference between a smart conversation and a credible engagement artifact. Penligent’s recent writing on AI pentest reports gets this exactly right: a report is only useful if another human can verify the scope, reproduce the behavior, and act on the result. That standard should be applied much earlier than reporting. It should shape the entire workflow. A finding should only move from hypothesis to report candidate once the raw traces, commands, requests, responses, and environmental conditions are preserved well enough for replay. (penligent.ai)

A simple evidence structure is often better than an elaborate one.

```bash
TARGET="app.example.com"
RUN_ID="$(date +%F-%H%M%S)"
ROOT="evidence/${TARGET}/${RUN_ID}"

# script(1) starts a new shell, so export ROOT to keep it visible there
export ROOT

mkdir -p "${ROOT}/http" "${ROOT}/notes" "${ROOT}/screens" "${ROOT}/cmd"

# Save raw commands as they happen (type `exit` to stop recording)
script -q "${ROOT}/cmd/session.log"

# Save copied HTTP requests and responses
# Store each candidate path in its own file pair
# Example filenames:
#   ${ROOT}/http/001-login-request.txt
#   ${ROOT}/http/001-login-response.txt

# Keep one note file per finding candidate
touch "${ROOT}/notes/candidate-001.md"
```

Nothing in that example is glamorous. That is the point. Evidence discipline should be boring. If a workflow cannot keep something this simple and replayable, it is not ready to generate authoritative claims about security posture.
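One way to keep that directory replayable is to record a hash of every saved file, so a later reviewer can tell whether anything changed between capture and retest. The following is a minimal Python sketch, not a feature of CAI or of any tool named here. The function names and the `manifest.json` filename are assumptions chosen for illustration.

```python
import hashlib
import json
from pathlib import Path

MANIFEST_NAME = "manifest.json"  # hypothetical filename, not a standard


def build_manifest(root: Path) -> dict[str, str]:
    """Map each evidence file (relative POSIX path) to its SHA-256 hex digest."""
    manifest = {}
    for path in sorted(root.rglob("*")):
        # Skip directories and the manifest itself.
        if path.is_file() and path != root / MANIFEST_NAME:
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            manifest[path.relative_to(root).as_posix()] = digest
    return manifest


def write_manifest(root: Path) -> Path:
    """Write the manifest next to the evidence and return its path."""
    out = root / MANIFEST_NAME
    out.write_text(json.dumps(build_manifest(root), indent=2, sort_keys=True))
    return out


def verify_manifest(root: Path) -> list[str]:
    """Return paths that were changed, removed, or added since the manifest was written."""
    recorded = json.loads((root / MANIFEST_NAME).read_text())
    current = build_manifest(root)
    return sorted(
        p for p in recorded.keys() | current.keys()
        if recorded.get(p) != current.get(p)
    )
```

Calling `write_manifest(Path(ROOT))` at the end of a run and `verify_manifest(Path(ROOT))` before a retest gives a cheap integrity check. It does not prove the evidence is correct, only that it is the same evidence.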

Finally comes retest and handoff. This is where many AI systems reveal whether they were built for demos or for operations. Can the system re-run only the proof path? Can it tell you what changed between the failed and successful attempt? Can it separate “potentially vulnerable parameter” from “verified exploit condition”? Can it produce a record that engineering or compliance teams can consume without reading the whole session history? These are workflow design questions, not model questions. The stronger products and frameworks in this space increasingly recognize that. (penligent.ai)
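The "what changed between attempts" question can often be approximated with a plain text diff of two saved raw responses. The sketch below uses Python's standard `difflib`. The function name `response_delta` is hypothetical, and it assumes responses were saved as text files in the style of the evidence layout described earlier.

```python
import difflib


def response_delta(failed: str, succeeded: str, context: int = 1) -> list[str]:
    """Return a unified diff of two saved raw responses, failed attempt first."""
    return list(
        difflib.unified_diff(
            failed.splitlines(),
            succeeded.splitlines(),
            fromfile="failed",
            tofile="succeeded",
            n=context,      # lines of unchanged context around each change
            lineterm="",    # inputs have no trailing newlines, so add none
        )
    )


if __name__ == "__main__":
    before = "HTTP/1.1 403 Forbidden\nContent-Type: application/json\n\n{\"error\": \"forbidden\"}"
    after = "HTTP/1.1 200 OK\nContent-Type: application/json\n\n{\"id\": 42}"
    print("\n".join(response_delta(before, after)))
```

A text diff will not explain why the second attempt worked, but storing it next to the finding gives the reviewer a concrete starting point instead of a narrative summary.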

Using CAI With MCP and Burp, A Practical Control Surface

This is one of the most interesting parts of CAI’s public documentation because it shows how Cybersecurity AI starts connecting to the tools that pentesters already trust. CAI’s README documents support for the Model Context Protocol with both SSE and STDIO transports, and it provides an example of loading an MCP server and attaching the resulting tools to an agent. The example uses Burp, which is not incidental. Burp is useful because it sits directly in the place where many web findings become real: intercepted requests, parameter manipulation, repeated submissions, issue verification, and request sequencing. (GitHub)

The installation path is straightforward in the public docs.

```bash
python3.12 -m venv cai_env
source cai_env/bin/activate
pip install cai-framework

cat > .env <<'EOF'
OPENAI_API_KEY="sk-1234"
ANTHROPIC_API_KEY=""
OLLAMA=""
PROMPT_TOOLKIT_NO_CPR=1
CAI_STREAM=false
EOF

cai
```

That public example is useful for one reason above all: it shows that the system is not pretending to be a black box. It is a framework that expects explicit configuration, explicit model backing, and explicit operator startup. (GitHub)

The more operationally important example is the MCP connection pattern.

```
CAI>/mcp load http://localhost:9876/sse burp
CAI>/mcp add burp redteam_agent
```

In the repository example, CAI then exposes Burp-connected tools such as send_http_request, create_repeater_tab, send_to_intruder, and related proxy-history functions to the selected agent. That matters because PortSwigger’s own documentation defines Repeater as the tool for modifying and resending interesting requests and for manually verifying reported issues, while Intruder is designed for configurable repeated attacks with payload insertion. Those are exactly the kinds of controlled, high-signal actions that make AI assistance useful in web testing when the operator still needs a strong verification surface. (GitHub)

What makes this powerful is not that the agent can “use Burp.” Humans already can. The power is in a more disciplined split of labor. The model can decide what to inspect next, cluster branches, draft candidate parameter mutations, and keep the local narrative coherent. Burp remains the concrete test surface where the operator can see, replay, constrain, and verify. That is a much better design than asking a model to freehand web exploitation from memory and luck. (portswigger.net)

The trap, however, is obvious. Every time a model gets tool access, the tool boundary becomes part of your threat model. The MCP specification itself treats security and trust as a first-class concern, and its authorization draft notes that authorization is optional for MCP implementations, with different expectations for HTTP-based and STDIO transports. That is useful flexibility for developers, but it also means teams cannot assume the protocol itself will save them from overexposure. A weak server, a sloppy client policy, or an over-permissive environment can still turn a “helpful integration” into a privilege bridge. (modelcontextprotocol.io)

A minimal policy model for agent-linked tooling should look more like this than like a blanket allow-all.

```yaml
scope:
  allowed_hosts:
    - app.example.com
    - api.example.com
  blocked_hosts:
    - "*.corp.internal"
    - metadata.google.internal

tools:
  allow:
    - burp_repeater
    - burp_proxy_history_read
    - web_fetch
  require_human_approval:
    - burp_intruder
    - shell_exec
    - ssh_tunnel

evidence:
  save_raw_http: true
  save_tool_output: true
  require_finding_id_before_export: true

execution:
  destructive_actions: deny
  max_parallel_requests: 5
```

That is not a vendor-specific format. It is a policy shape. The point is that good Cybersecurity AI is not just about what the agent can do. It is about what the system refuses to let the agent do without explicit human consent.
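To make the policy shape concrete, here is a minimal Python sketch of how a wrapper might turn such a policy into a decision for each proposed tool call. It is an illustration under stated assumptions, not CAI's or any vendor's enforcement mechanism: the `POLICY` dictionary, the `decide` function, and the three return values are hypothetical, and host patterns are matched with simple shell-style globbing.

```python
from fnmatch import fnmatch

# Hypothetical in-memory policy; hosts and tool names are illustrative only.
POLICY = {
    "allowed_hosts": ["app.example.com", "api.example.com"],
    "blocked_hosts": ["*.corp.internal", "metadata.google.internal"],
    "allow": {"burp_repeater", "burp_proxy_history_read", "web_fetch"},
    "require_human_approval": {"burp_intruder", "shell_exec", "ssh_tunnel"},
}


def decide(tool: str, host: str, policy: dict = POLICY) -> str:
    """Return 'allow', 'needs_approval', or 'deny' for a proposed tool call."""
    host = host.lower().rstrip(".")  # normalize case and a trailing root dot
    # Block list wins over everything else.
    if any(fnmatch(host, pattern) for pattern in policy["blocked_hosts"]):
        return "deny"
    # Default deny: a host that is not explicitly in scope is out of scope.
    if host not in policy["allowed_hosts"]:
        return "deny"
    if tool in policy["require_human_approval"]:
        return "needs_approval"
    if tool in policy["allow"]:
        return "allow"
    # Unknown tools are refused rather than trusted.
    return "deny"


if __name__ == "__main__":
    print(decide("burp_repeater", "app.example.com"))
    print(decide("burp_intruder", "api.example.com"))
    print(decide("web_fetch", "db.corp.internal"))
```

The design choice worth noticing is the default: anything not named in the policy is denied, so adding a new tool or target requires an explicit human edit rather than an agent decision.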

Evidence First, The Difference Between an AI Security Demo and a Real Engagement

The strongest public writing from practical AI-pentest vendors is converging on the same idea. The artifact pipeline matters as much as the model router. Penligent’s public homepage emphasizes operator-controlled workflows and human-in-the-loop control, while its technical blog repeatedly argues that an AI pentest report is only useful if it survives retest and that a workflow should preserve enough raw material to replay a finding later. Those are not small details. They are the dividing line between a system that generates confidence and a system that generates theater. (penligent.ai)

That same principle is the right way to evaluate CAI and similar frameworks. Suppose an agent says it found an IDOR. What should exist before that statement becomes a deliverable? At minimum, the target identity context, the exact request, the mutation performed, the response delta, the preconditions, the privilege model, the impact description, and a replay path that another tester can follow. Most AI systems are very good at turning weak evidence into polished text. Very few are naturally disciplined about refusing to escalate a claim until the evidence clears a threshold. That discipline must come from the workflow. (penligent.ai)
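The evidence threshold described above can be expressed as a simple gate. The sketch below is a hypothetical Python model, not taken from CAI or Penligent: the `FindingCandidate` class, its field names, and the two status labels are assumptions that mirror the list of items in the preceding paragraph.

```python
from dataclasses import dataclass, fields


@dataclass
class FindingCandidate:
    """One field per piece of evidence a claim needs before it is reportable."""
    identity_context: str = ""
    request: str = ""
    mutation: str = ""
    response_delta: str = ""
    preconditions: str = ""
    privilege_model: str = ""
    impact: str = ""
    replay_path: str = ""


def missing_evidence(candidate: FindingCandidate) -> list[str]:
    """Names of evidence fields that are still empty or whitespace-only."""
    return [f.name for f in fields(candidate) if not getattr(candidate, f.name).strip()]


def status(candidate: FindingCandidate) -> str:
    """A claim stays a hypothesis until every evidence field is filled in."""
    return "hypothesis" if missing_evidence(candidate) else "report_candidate"


if __name__ == "__main__":
    idor = FindingCandidate(
        request="GET /api/orders/1002",
        mutation="order id changed from 1001 to 1002",
    )
    print(status(idor), missing_evidence(idor))
```

A non-empty field is obviously not the same as good evidence. The gate only guarantees that the workflow cannot promote a claim while a category of evidence is entirely absent; judging quality remains a reviewer's job.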

This is also where the “AI agent versus pentest platform” distinction becomes useful. Public Penligent material, for example, makes a sharp distinction between raw agent capability and finished pentest workflow, arguing that the hard part is not producing the next command but preserving direction, evidence, and control. That is the right distinction to apply across the entire category. A coding agent may be excellent at hypothesis generation. A workflow-native system may be less flexible but much better at turning signal into verified findings and verified findings into reportable artifacts. Mature teams will increasingly use both, but only if they keep those roles separate. (penligent.ai)

A useful operational rule is simple: every important sentence should be traceable either to raw evidence, to an explicitly documented reviewer judgment, or to a reproducible external reference. If a workflow cannot tell you which of those three supports a given claim, it is not ready for high-trust security output. That rule sounds basic. In practice, it is where most AI-assisted security systems still fall apart. (penligent.ai)

Why Cybersecurity AI Fails, Prompt Injection, Tool Abuse, and Classical AppSec Bugs

The most dangerous mistake in this market is thinking that Cybersecurity AI fails in exotic new ways only. It does fail in new ways, but many of the ugliest failures are old. Prompt injection is the best-known AI-native example. OWASP’s 2025 LLM Top 10 places prompt injection at LLM01, and NIST’s Cyber AI Profile repeatedly treats prompt injection, data poisoning, adversarial input, and related issues as concrete organizational risks rather than abstract research curiosities. NIST even notes that techniques such as jailbreaking and prompt injection may emerge from AI publications and should be incorporated into threat intelligence and training. (OWASP Gen AI Security Project)

But the really important step is what happens next. Prompt injection becomes a much more serious problem when it crosses the boundary from language into action. OWASP’s definition is useful here because it centers the concatenation of untrusted input with higher-trust instructions. Once that higher-trust instruction stack is attached to a tool layer, hostile content does not just distort text output. It may influence what the system searches, which file it opens, which command it runs, or which target it touches. That is not an “AI hallucination” problem. That is a control-plane problem. (csrc.nist.gov)

NIST’s January 2025 work on agent hijacking says the quiet part out loud: many AI agents are vulnerable to hijacking through indirect prompt injection, where malicious instructions are hidden in ingested content and the agent is pushed into unintended actions. In a security workflow, that risk compounds because the agent may already have privileged tools, network reach, filesystem access, or the ability to call custom functions. The problem is no longer just “the model believed something silly.” The problem is that the system trusted the model at the wrong boundary. (NIST)
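One way to move that trust boundary is to track whether untrusted content has entered the agent's context, and to require a human decision before any privileged tool runs afterwards. The Python sketch below illustrates that idea only. It is not a mitigation described by NIST or implemented by CAI, the `Session` class and `PRIVILEGED_TOOLS` set are hypothetical, and it would sit on top of a static tool policy rather than replace one.

```python
from dataclasses import dataclass, field

# Hypothetical set of tools that can change state or reach new systems.
PRIVILEGED_TOOLS = {"shell_exec", "ssh_tunnel", "burp_intruder"}


@dataclass
class Session:
    """Tracks which untrusted sources have been read into the model context."""
    tainted_by: list[str] = field(default_factory=list)

    def ingest(self, source: str, trusted: bool) -> None:
        """Record that content from `source` entered the context."""
        if not trusted:
            self.tainted_by.append(source)

    def requires_approval(self, tool: str) -> bool:
        """True when a privileged tool is requested after untrusted input was read."""
        return tool in PRIVILEGED_TOOLS and bool(self.tainted_by)


if __name__ == "__main__":
    session = Session()
    session.ingest("operator instructions", trusted=True)
    print(session.requires_approval("shell_exec"))
    session.ingest("https://app.example.com/profile (target response)", trusted=False)
    print(session.requires_approval("shell_exec"), session.tainted_by)
```

This is deliberately coarse. It cannot tell whether the ingested content actually contained an injection, so it trades some autonomy for a predictable rule: once target-controlled text is in context, the model's request alone is not enough to run a dangerous tool.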

CAI’s own public advisory history is a useful reminder that Cybersecurity AI frameworks also inherit ordinary application-security failure modes. In January 2026, a GitHub-reviewed advisory disclosed command injection through argument injection in CAI’s find_file() agent tool. The summary states that user-controlled input was passed directly to shell commands via subprocess.Popen() with shell=True, allowing arbitrary command execution on the host system. That is not some novel model pathology. That is classical injection in a tool wrapper, made more dangerous because the wrapper sits behind an agent layer that may consume untrusted content. (GitHub)
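The general defense against this class of bug is well known: pass arguments as a list, never through a shell, and validate anything that could be parsed as an option. The Python sketch below illustrates that pattern for a file-search wrapper. It is not CAI's actual code or its actual patch; `build_find_argv` and this `find_file` are hypothetical names, and the validation rules are assumptions that a real tool would need to adapt.

```python
import subprocess
from pathlib import Path


def build_find_argv(root: str, name_pattern: str) -> list[str]:
    """Build an argv list for find(1) that treats caller input strictly as data."""
    # Reject values that could be read as options or that escape the filename role.
    if name_pattern.startswith("-") or "/" in name_pattern or "\x00" in name_pattern:
        raise ValueError(f"rejected name pattern: {name_pattern!r}")
    # A resolved path is absolute, so it cannot begin with "-" and look like an option.
    root_path = Path(root).resolve()
    return ["find", str(root_path), "-type", "f", "-name", name_pattern]


def find_file(root: str, name_pattern: str) -> list[str]:
    """Run find without a shell; metacharacters such as ';' are never interpreted."""
    result = subprocess.run(
        build_find_argv(root, name_pattern),
        capture_output=True,
        text=True,
        check=False,
        timeout=30,
    )
    return result.stdout.splitlines()


if __name__ == "__main__":
    # The ';' stays inside a single argv element instead of starting a new command.
    print(build_find_argv(".", "*.conf; id"))
```

Because no shell is involved, a pattern such as `*.conf; id` is handed to `find` as one literal argument. The leading-dash check is defense in depth for wrappers where user input could land in a position that `find` parses as an expression.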

The Langflow CVEs make the same point from another angle. CVE-2025-3248 affected the /api/v1/validate/code endpoint in Langflow, where a remote unauthenticated attacker could execute arbitrary code. That vulnerability was important not just because it was severe, but because it showed how an agent-workflow platform can become a direct remote execution target. CISA later added it to the Known Exploited Vulnerabilities catalog, which means the vulnerability crossed the line from theoretical risk into active exploitation relevance. (nvd.nist.gov)

CVE-2026-27966 is even more on-theme for Cybersecurity AI workflows. NVD describes it as a Langflow issue in which the CSV Agent node hardcoded allow_dangerous_code=True, exposing LangChain’s Python REPL tool and allowing arbitrary Python and OS command execution on the server via prompt injection. That is one of the clearest public examples of why “prompt injection” cannot be treated as just a weird text-generation bug. Once dangerous execution is wired into the workflow, prompt injection becomes a path to full host compromise. The fix was to move to version 1.8.0. The design lesson is larger than the patch: dangerous tools should never be casually or silently bridged into untrusted prompt channels. (nvd.nist.gov)

CVE-2026-33017 extends the same lesson. The public Langflow advisory describes an unauthenticated RCE path in a “public flow” build endpoint where attacker-controlled flow data containing arbitrary Python code could be executed server-side without sandboxing. CISA’s KEV catalog now includes the vulnerability as well. Again, the relevance to Cybersecurity AI is direct. Convenience features in agent platforms often create the most dangerous surfaces because they normalize the idea that structured user input is safe to interpret deeply. If your workflow system handles code, tools, or node definitions, every “helpful” public endpoint becomes part of your RCE story. (GitHub)

The quickest practical audit for this class of problems is still boring static review.

```bash
# Look for shell execution shortcuts
grep -R "shell=True" -n .

# Look for agent tools that pass user-controlled arguments into commands
grep -R "subprocess.Popen" -n .
grep -R "os.system" -n .

# Look for dangerous execution flags in agent or workflow nodes
grep -R "allow_dangerous_code=True" -n .
grep -R "exec(" -n .
grep -R "eval(" -n .
```

This is not enough for a real audit. It is enough to expose whether a project treats tool-execution risk as a first-class engineering concern or as an afterthought.

The CVEs That Matter for Cybersecurity AI Teams

It helps to slow down and ask why these particular vulnerabilities are more instructive than a random list of severe bugs.

CVE-2025-3248 matters because it reminds teams that AI workflow platforms are still software platforms. If they expose code-validation or execution features through web endpoints without proper authentication and isolation, attackers do not need to “beat the model.” They can attack the application directly. For defenders, the mitigation path is conventional: patch to a fixed version, remove unnecessary internet exposure, front the service with proper authentication, and monitor for abuse of the vulnerable endpoint. The bigger organizational lesson is that agent platforms belong in the same asset inventory, hardening, and exposure review process as any other sensitive management plane. (nvd.nist.gov)

CVE-2026-27966 matters because it shows the shortest bridge from AI-specific risk language to ordinary system compromise. “Prompt injection” sounds abstract until it reaches a Python REPL or shell-capable tool. Then it becomes a command-execution issue with a very familiar blast radius. The mitigation here is also practical and boring: upgrade, disable dangerous execution features unless explicitly needed, run the workflow in a constrained environment, and design the system so that untrusted content cannot silently approve its own tool use. (nvd.nist.gov)
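The last mitigation, that untrusted content must not approve its own tool use, can be sketched as a small dispatcher. Everything here is hypothetical: the tool names, the `tainted` flag (assumed to be set by the surrounding framework whenever the model has ingested untrusted content in the current turn), and the `approve` callback, which stands in for an out-of-band human decision that model output never reaches.

```python
from dataclasses import dataclass
from typing import Any, Callable

# Assumed names for tools that can execute code or modify the host.
HIGH_RISK_TOOLS = {"run_shell", "python_repl", "write_file"}


@dataclass(frozen=True)
class ToolRequest:
    tool: str
    arguments: dict
    # True when the call was proposed after the model read untrusted content
    # (web pages, files, tool output) in the current turn.
    tainted: bool


class ApprovalRequired(Exception):
    """Raised when a gated call is not approved by the operator."""


def dispatch(
    request: ToolRequest,
    tools: dict[str, Callable[..., Any]],
    approve: Callable[[ToolRequest], bool],
) -> Any:
    """Run a tool call, routing risky or tainted calls through the operator."""
    if request.tool not in tools:
        raise KeyError(f"unknown tool: {request.tool}")
    needs_human = request.tool in HIGH_RISK_TOOLS or request.tainted
    # `approve` is answered by a person, not by anything in the prompt.
    if needs_human and not approve(request):
        raise ApprovalRequired(f"{request.tool} denied by operator")
    return tools[request.tool](**request.arguments)
```

The design choice worth noticing is that the gate keys on two independent signals. A low-risk tool still needs approval when the request is tainted, and a high-risk tool needs approval even when the context looks clean.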

CVE-2026-33017 matters because it highlights a recurring design temptation in AI tooling: public build surfaces, “temporary” endpoints, or convenience APIs that are assumed to be harmless because they are meant for configuration rather than production operations. In agentic systems, configuration often is execution. A node definition, a flow graph, or a tool description may be one parse step away from code. So the classic assumptions about public versus private interfaces become much more dangerous. Patch management still matters, but so does architecture: avoid putting agent-workflow control planes directly on the internet, enforce authentication consistently, and sandbox every execution boundary even when the input looks “structured.” (GitHub)

The CAI advisory matters because it cuts through the myth that security-focused AI software is naturally less likely to repeat old mistakes. A cybersecurity AI framework can still concatenate user input into shell strings. A pentest agent can still become a command-injection gadget. A “red team assistant” can still be a weakly hardened local RCE path if its tool wrappers are sloppy. Teams evaluating Cybersecurity AI should treat these projects exactly as seriously as they treat any other high-privilege software: threat-model the tool wrappers, inspect how parameters are passed, review sandbox boundaries, and assume hostile input will eventually hit the system. (GitHub)

The combined lesson is blunt. The danger is not only that AI systems are becoming more capable at offensive security. The danger is also that AI security tooling is itself becoming a rich attack surface. Strong teams will have to do both kinds of work at once: use AI to test systems, and test the AI-enabled systems they adopt.

How Defenders Should Evaluate Cybersecurity AI Platforms

The fastest way to waste money in this category is to ask the wrong question. “Which platform looks the most autonomous?” is usually the wrong question. “Which platform turns an observation into a verified finding with the least noise and the strongest control?” is much better. Public Penligent writing phrases the choice in almost exactly those terms when discussing AI pentest copilots: the useful buying question is about evidence, scope, validation, and operational noise, not about the most impressive autonomy story. That framing works far beyond one vendor. It should be the default procurement lens for the whole category. (penligent.ai)

A security team evaluating CAI, a workflow-native pentest product, or an internal agent build should press on at least ten questions.

| Evaluation question | Why it matters | Bad answer pattern |
|---|---|---|
| Can I interrupt or approve risky tool use? | Prevents scope drift and destructive actions | “The agent usually behaves well” |
| Can I replay every high-severity finding? | Separates proof from polished prose | “We keep a chat transcript” |
| Does the system preserve raw requests, commands, and responses? | Enables retest and engineering handoff | “We summarize the important parts” |
| How is target scope enforced? | Stops accidental out-of-scope execution | “The operator just tells it the scope” |
| What happens when it reads hostile input? | Prompt injection is a primary risk | “Our system prompt is very strong” |
| Which tools are available by default? | Reduces hidden execution exposure | “Everything is enabled for convenience” |
| Is there a permission model for external integrations? | MCP and similar bridges widen trust boundaries | “Integrations inherit full access” |
| Are benchmark claims based on knowledge, action, or real environments? | Avoids buying leaderboard optics | “We scored highly on security benchmarks” |
| Can I swap models or pin versions? | Model behavior changes over time | “The platform manages that for you” |
| Does the product distinguish hypothesis from verified proof? | Reduces false positives and trust erosion | “The agent tells you if it’s confident” |

That table is not vendor-specific, but recent public research supports every line of it. Benchmark results show that scaffolding matters, that context management matters, and that measured performance changes significantly with environment and task shape. Live comparisons show that false positives and GUI-heavy tasks remain weak spots. Standards bodies and security frameworks warn that prompt injection and related AI-specific risks must be integrated into ordinary cyber risk management. Public CVEs show that workflow platforms and agent tools themselves can become the weak point. (arXiv)
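The scope-enforcement row is the easiest one to make concrete. A good answer looks less like "the operator tells it the scope" and more like a check that runs in code before any tool touches a target. The sketch below is one assumed shape for such a check, using only the standard library; `ScopePolicy` is a hypothetical name, and the CIDR range and hostname in the usage note are placeholders.

```python
import ipaddress
from urllib.parse import urlsplit


class ScopePolicy:
    """Allowlist of networks and exact hostnames; everything else is denied."""

    def __init__(self, cidrs: list[str], hostnames: list[str]) -> None:
        self.networks = [ipaddress.ip_network(c) for c in cidrs]
        self.hostnames = {h.lower().rstrip(".") for h in hostnames}

    def allows(self, target: str) -> bool:
        # Accept either a bare host/IP or a full URL.
        host = urlsplit(target).hostname if "://" in target else target
        if not host:
            return False
        host = host.lower().rstrip(".")
        try:
            address = ipaddress.ip_address(host)
        except ValueError:
            # Not an IP literal: require an exact hostname match.
            # No wildcard or suffix matching, so lookalike domains fail.
            return host in self.hostnames
        return any(address in network for network in self.networks)
```

A policy built as `ScopePolicy(["10.0.0.0/24"], ["app.example.test"])` would be consulted by the tool layer on every outbound action. The sketch deliberately fails closed on anything it cannot parse. It does not resolve DNS, so a hostname that is in scope but resolves to an out-of-scope address would need a separate check.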

For buyers, this means the field is now mature enough to demand real proofs. Ask for a replay of a verified finding. Ask what raw evidence is preserved. Ask how approval gates work. Ask what the product does when the model reads a malicious HTML comment, a hostile PDF, or a poisoned knowledge entry. Ask whether Burp or proxy workflows are integrated in a way that preserves manual verification. Ask whether the platform is strong at the middle of the job, not just the beginning and the end. Those are the questions that separate a useful system from a charismatic one. (portswigger.net)
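The evidence and replay questions can also be expressed as a data model. The following sketch assumes a deliberately simple notion of proof: a finding stays a hypothesis until replaying its preserved raw request yields a response containing an expected indicator string. The names `Evidence`, `Finding`, and `retest`, and the `send` callback that performs the replay, are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from enum import Enum
from typing import Callable


class Status(str, Enum):
    HYPOTHESIS = "hypothesis"
    VERIFIED = "verified"


@dataclass(frozen=True)
class Evidence:
    raw_request: str
    raw_response: str

    def digest(self) -> str:
        """SHA-256 over the raw pair, so later edits are detectable."""
        blob = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(blob).hexdigest()


@dataclass
class Finding:
    title: str
    indicator: str  # string that must appear in a response to prove the issue
    evidence: list[Evidence] = field(default_factory=list)
    status: Status = Status.HYPOTHESIS


def retest(finding: Finding, send: Callable[[str], str]) -> Status:
    """Replay the first preserved request and update the finding's status."""
    if not finding.evidence:
        # Nothing raw was preserved, so nothing can be replayed.
        return finding.status
    original = finding.evidence[0]
    response = send(original.raw_request)
    # Keep the replayed exchange as additional raw evidence.
    finding.evidence.append(Evidence(original.raw_request, response))
    finding.status = (
        Status.VERIFIED if finding.indicator in response else Status.HYPOTHESIS
    )
    return finding.status
```

Real verification logic is usually richer than a substring match. The structural point is that status is derived from a replay against preserved raw material, never from the model's stated confidence.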

What CAI Gets Right, and What It Still Does Not Solve

The strongest thing CAI gets right is the category definition. It treats Cybersecurity AI as a framework problem, not just a model problem. The public materials emphasize model diversity, built-in tools, tracing, MCP connectivity, CLI-based operation, and human-in-the-loop interruption. That is a stronger architecture than simply wrapping a frontier model around a scanner or a terminal. It aligns with what the better research now says matters: scaffold quality, adaptive reasoning under constraints, and labor-relevant measurement rather than shallow knowledge tests. (GitHub)

The second thing CAI gets right is its benchmark stance, at least in one important respect. Even if many of the strongest public claims still come from project-affiliated papers, the broader research direction is correct: Jeopardy-style CTF performance is no longer enough. CAI’s own papers and related benchmark work push toward Attack and Defense formats, cyber ranges, and richer evaluation categories. That is exactly where the field needs to go if it wants to measure something closer to real work. (arXiv)

The third thing it gets right is practical integration. Public documentation showing MCP support, Burp-oriented examples, and many supported underlying models points toward a serious operator workflow rather than a sealed demo path. Security teams rarely want yet another isolated assistant. They want systems that can inhabit the places where testing already happens. In that sense, CAI is aligned with the same broader trend visible in MCP itself and in modern agentic tooling more generally: context and tool interoperability are becoming the new center of gravity. (GitHub)

What CAI does not solve is equally important. It does not erase the need for human judgment. It does not turn CTF wins into automatic proof of enterprise safety. It does not protect teams from prompt injection or tool abuse by default. Its own advisory history shows why that would be a fantasy. Like every other serious system in this space, it still lives inside a tension that the whole field has not resolved: the same properties that make Cybersecurity AI useful for defenders also make it dangerous when governance, tool boundaries, or software quality are weak. (GitHub)

That is why the right conclusion is not that CAI is overhyped or that Cybersecurity AI is hype. The right conclusion is that the category is real, the capabilities are moving fast, the benchmark story is getting more honest, and the operational bottleneck is shifting from raw reasoning to trustworthy control. Teams that understand that shift will get real leverage from these systems. Teams that do not will buy polished confusion. (arXiv)

Cybersecurity AI Needs More Control, Not Bigger Demos

The next phase of this market will not be won by whoever sounds most autonomous. It will be won by whoever makes autonomous-seeming systems easier to constrain, inspect, verify, and replay. That is the real significance of CAI and the surrounding research. It is not that Cybersecurity AI has magically become “the hacker.” It is that security work is finally being decomposed into machine-amenable pieces with enough state, enough tooling, and enough measurement to become operationally meaningful.

That also means the field should stop asking weak questions. Stop asking whether a model can solve a CTF. Ask whether the workflow can survive a long session without losing the plot. Stop asking whether it can draft an exploit. Ask whether it knows the difference between a plausible exploit and a verified one. Stop asking whether it integrates with Burp. Ask whether Burp remains a human legibility layer rather than becoming a blind actuator. Stop asking whether the report looks polished. Ask whether the finding survives retest. (news.aliasrobotics.com)

That is the standard worth building toward. Not free-roaming autonomous offense. Not a chatbot with shell access. Not a benchmark leaderboard as a purchasing shortcut. Cybersecurity AI becomes truly useful when it behaves like disciplined security engineering under constraints. CAI is one of the clearest signals that the industry has started moving in that direction. The rest of the market will now have to prove that it can do the same without sacrificing control on the way. (GitHub)

Further Reading and References

CAI official framework page. (aliasrobotics.com)

CAI GitHub repository and public documentation. (GitHub)

CAI foundational paper, CAI: An Open, Bug Bounty-Ready Cybersecurity AI. (arXiv)

Cybersecurity AI Benchmark, CAIBench: A Meta-Benchmark for Evaluating Cybersecurity AI Agents. (arXiv)

Cybersecurity AI: Evaluating Agentic Cybersecurity in Attack and Defense CTFs. (arXiv)

Cybersecurity AI: The World’s Top AI Agent for Security Capture-the-Flag. (arXiv)

CyberGym: Evaluating AI Agents’ Real-World Cybersecurity Capabilities at Scale. (arXiv)

Comparing AI Agents to Cybersecurity Professionals in Real-World Penetration Testing. (arXiv)

NIST Cybersecurity Framework Profile for Artificial Intelligence, preliminary draft. (nvlpubs.nist.gov)

OWASP LLM01 Prompt Injection and the OWASP Top 10 for LLM Applications. (OWASP Gen AI Security Project)

Model Context Protocol specification and authorization material. (modelcontextprotocol.io)

Anthropic’s introduction to MCP. (Anthropic)

PortSwigger documentation for Burp Repeater and Burp Intruder. (portswigger.net)

NVD and advisory material for CVE-2025-3248, CVE-2026-27966, and CVE-2026-33017. (nvd.nist.gov)

CAI command-injection advisory in GitHub’s reviewed database. (GitHub)

Penligent homepage. (penligent.ai)

Penligent, How to Get an AI Pentest Report. (penligent.ai)

Penligent, Agentic Cyberattacks Need Verified AI Testing. (penligent.ai)

Penligent, Pentest AI, What Actually Matters in 2026. (penligent.ai)