Cybersecurity jobs in 2026 are not shrinking into irrelevance. They are getting sharper edges. In the United States, the Bureau of Labor Statistics projects 29 percent employment growth for information security analysts from 2024 to 2034, with about 16,000 openings per year on average. BLS also lists a 2024 median pay of $124,910 for the role. That does not describe every security job on earth, but it is a strong signal that the labor market still values people who can reduce real risk, not just talk about it. (Bureau of Labor Statistics)

The broader market tells the same story with more nuance. CyberSeek reports 514,359 U.S. cybersecurity job listings in its latest reporting period, a global cybersecurity workforce midpoint estimate of 4.97 million, and a striking new detail for 2025 hiring: about 10 percent of cybersecurity job listings specifically reference AI skills. World Economic Forum data says networks and cybersecurity are among the top three fastest-growing skills through 2030, while Security Management Specialists rank among the top five fastest-growing roles and Information Security Analysts land in the top fifteen. At the same time, the Forum says 39 percent of workers’ key skills are expected to change by 2030. The message is not that security work is going away. It is that the center of gravity is moving. (cyberseek.org)

That shift is tied directly to AI. In the World Economic Forum’s Global Cybersecurity Outlook 2026, 87 percent of respondents identified AI-related vulnerabilities as the fastest-growing cyber risk over the course of 2025. IBM’s 2026 cyberthreat analysis warns that AI chatbot and agent platforms are becoming a credential-rich attack surface. Gartner, looking at employee behavior, says more than 57 percent of surveyed workers use personal GenAI accounts for work and 33 percent admit entering sensitive information into unapproved tools. Those are not abstract trend lines. They are new reasons to hire people who can test, constrain, monitor, and validate systems that now mix classic software risk with model-driven behavior. (World Economic Forum)

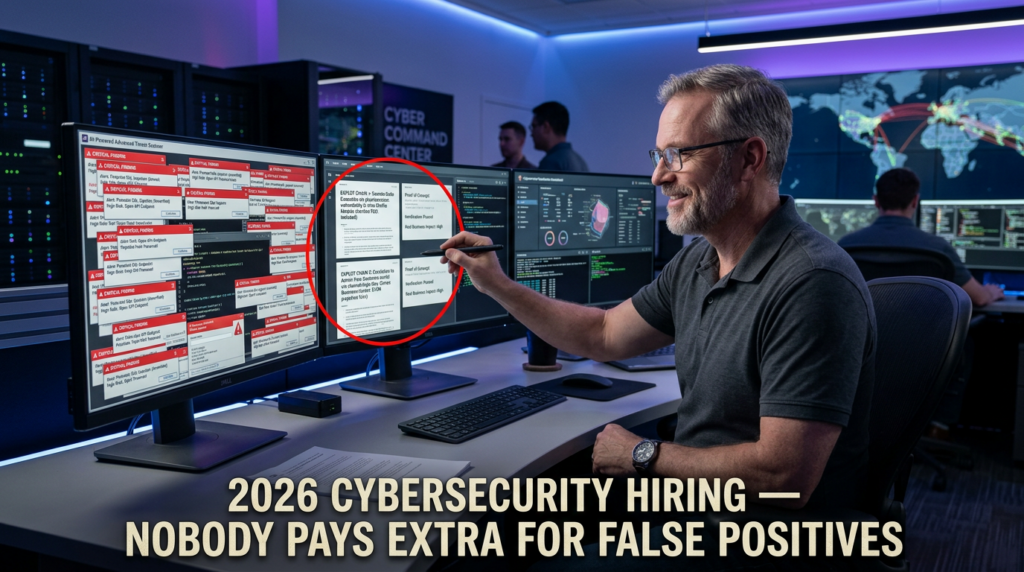

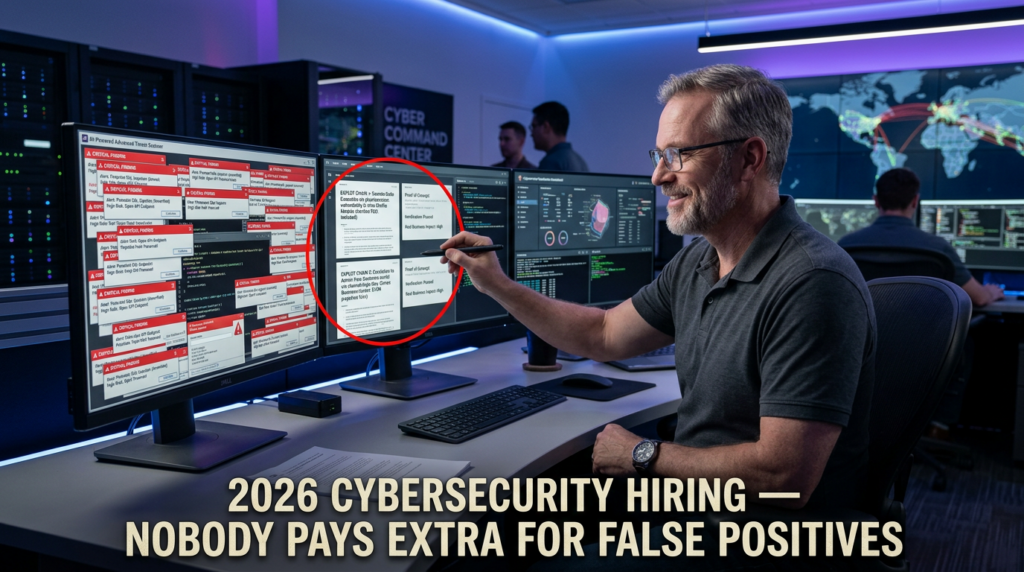

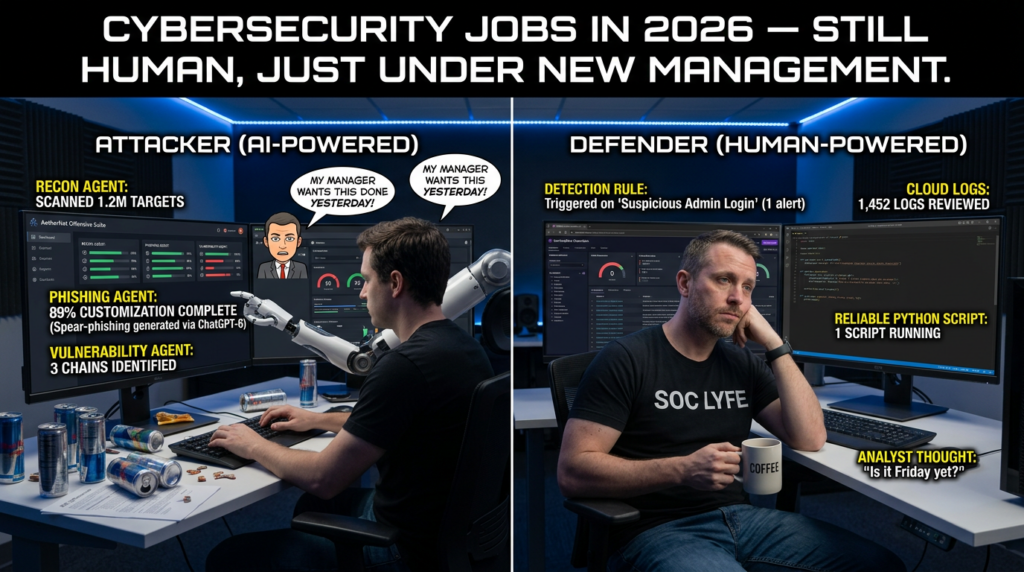

The lazy version of the 2026 story says AI will replace junior analysts, junior pentesters, and anyone whose work can be turned into a prompt. The more accurate version is harsher and more useful. AI compresses low-value repetition. It raises the value of people who can frame scope, understand architecture, validate exploitability, separate signal from noise, and turn technical findings into evidence another engineer can trust. For offensive security, that is exactly why AI pentesting matters: it changes the speed and shape of the workflow, but it does not remove the need for judgment, constraints, or proof. (csrc.nist.gov)

The market for cybersecurity jobs in 2026 is still expanding

It helps to separate three claims that often get mixed together. The first claim is whether employers still need security workers. They do. BLS projects strong growth for information security analysts, and CyberSeek’s job posting data remains elevated. The second claim is whether the industry still has a staffing problem. It does. ISC2’s 2024 workforce study said there were 5.5 million people active in cyber worldwide but a gap of 4.8 million, meaning roughly 47 percent of global need was not being addressed. The third claim is whether budgets have become tighter and more selective. They have. ISC2’s 2025 workforce study says the hiring freezes, layoffs, budget cuts, and promotions reported in 2024 were stabilizing rather than disappearing, leaving existing teams under continued pressure. That combination matters because it means employers are still hiring, but they are looking harder for people who can produce leverage. (isc2.org)
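
The 47 percent figure is easier to trust once the arithmetic is visible. The sketch below assumes that "global need" means the active workforce plus the reported gap; that reading is an assumption on our part, and the helper name `gap_share` is ours rather than anything ISC2 publishes.

```shell
#!/usr/bin/env bash
# Share of estimated global need that is unfilled, using the ISC2 2024
# figures quoted above (in millions).
# Assumption: total need = active workforce + reported gap.
gap_share() {
  local active="$1" gap="$2"
  LC_ALL=C awk -v a="$active" -v g="$gap" \
    'BEGIN { printf "%.1f\n", g / (a + g) * 100 }'
}

gap_share 5.5 4.8   # prints 46.6
```

The result rounds to the "roughly 47 percent" quoted in the study summary.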

A clean way to read the 2026 market is to stop asking whether cybersecurity is “hot” and start asking which kinds of cyber work are becoming non-optional. The World Economic Forum’s Future of Jobs Report 2025 says technology-related skills are rising faster than any others, with AI and big data first, then networks and cybersecurity, then technological literacy. In the same report, Security Management Specialists appear among the top five fastest-growing roles and Information Security Analysts among the top fifteen. That is not a forecast of a narrow niche. It is a forecast that security skills are becoming foundational inside more jobs, while dedicated security roles still grow in absolute importance. (World Economic Forum)

CyberSeek adds another useful layer. Its updated dataset shows that employers are not hiring only for incident response and SOC work. Job postings mapped heavily into Oversight and Governance, Implementation and Operation, Design and Development, and Protection and Defense. That pattern lines up with what many practitioners already feel on the ground: cybersecurity jobs in 2026 span policy, engineering, operations, product design, software development, cloud hardening, detection, validation, and investigation. It is a wider field than the old “blue team versus red team” picture suggests. (comptia.org)

The table below captures the current market signal more clearly than a thousand career-influencer posts.

| Signal | Latest data point | What it means in practice |
|---|---|---|
| BLS information security analyst outlook | 29 percent projected growth from 2024 to 2034, about 16,000 openings per year, 2024 median pay of $124,910 | Dedicated security roles remain durable and well-compensated |
| CyberSeek U.S. hiring signal | 514,359 cybersecurity job listings in the reporting period | Hiring remains broad even in a more selective budget environment |
| CyberSeek AI crossover | 10 percent of cybersecurity job listings explicitly mention AI skills | AI fluency is moving from nice-to-have toward differentiated advantage |
| WEF skills outlook | Networks and cybersecurity among top three fastest-growing skills through 2030 | Security capability is spreading into more technical jobs, not fewer |
| WEF role outlook | Security Management Specialists in the top five fastest-growing roles, Information Security Analysts in the top fifteen | Governance, leadership, and technical analysis all remain valuable |
| ISC2 workforce pressure | 2024 study reported 5.5 million active professionals and a 4.8 million gap; 2025 study says economic pressure continues | Demand persists, but employers want stronger proof of capability |
| WEF cyber risk outlook | 87 percent of respondents identified AI-related vulnerabilities as the fastest-growing cyber risk during 2025 | AI security and AI-assisted testing are not side topics anymore |

Table sources: BLS, CyberSeek, WEF, and ISC2. (Bureau of Labor Statistics)

There is one more reason the market still has room for serious practitioners. Work is fragmenting. A company that used to need a network security person, a cloud engineer, and a part-time appsec reviewer now often needs someone who understands identity, APIs, cloud permissions, CI pipelines, logging, and at least the basics of model-connected systems. That does not always create more headcount. Sometimes it creates a harder job description. But harder job descriptions still create opportunity for people who build compound skills rather than treating “cybersecurity” as a single bucket. That interpretation is consistent with NICE’s work-role model and the CyberSeek mapping to those roles. (NIST)

Why cybersecurity jobs now map to work roles, not static titles

One of the most useful things NIST has done for the cyber labor market is make a simple point that job boards still ignore: occupations, jobs, and work roles are not the same thing. NIST says the NICE Framework currently comprises 52 work roles in seven categories, and it defines a work role as a grouping of work for which an individual or team is responsible or accountable. A job, by contrast, is a specific instance of employment created by an employer. That distinction matters because security hiring in 2026 often combines multiple work roles into one title. A “security engineer” might be doing cloud security review, IAM policy design, API authorization testing, and vulnerability validation in the same week. (NIST)

The seven NICE categories are not a perfect map of every modern team, but they are still a better mental model than guessing from titles. Oversight and Governance covers policy, planning, awareness, and risk direction. Design and Development covers secure architecture and secure software work. Implementation and Operation covers the systems that keep environments functioning. Protection and Defense covers monitoring, analysis, and active defense. Investigation, Collection and Operation, and Analysis add the intelligence, evidence, and mission-oriented dimensions. Once you think in work-role combinations rather than job-title mythology, a lot of the 2026 market suddenly makes sense. (NIST)

This is also why so many people get confused about “entry-level cybersecurity jobs.” An entry-level job is rarely entry-level across every work role it touches. BLS says the typical entry-level education for information security analysts is a bachelor’s degree, and CyberSeek’s latest language emphasizes that employers should provide realistic entry-level opportunities. But realistic does not mean responsibility-free. It usually means the employer expects some narrow set of demonstrated capabilities: log analysis, cloud basics, Linux competence, API testing, scripting, or documentation discipline. In 2026, employers are not looking for zero skill. They are looking for lower-risk on-ramps into one part of the work. (Bureau of Labor Statistics)

That change is actually good news for candidates who come from adjacent backgrounds. NIST and CyberSeek both emphasize pathways, not a single funnel. CyberSeek explicitly frames careers through pathway dashboards and many on-ramps into cyber careers. NICE likewise treats work roles as modular building blocks. A developer can move toward appsec and product security. An SRE can move toward cloud security and detection engineering. A QA engineer can move toward security validation and abuse-case testing. A bug bounty hunter can move toward offensive validation or application security review. The people who struggle most are often not the people with the least experience. They are the people trying to enter the field with no idea which work roles they are actually optimizing for. (cyberseek.org)

AI pentesting changes the work faster than it changes the headcount

To understand what AI pentesting does to cybersecurity jobs, it helps to start with what penetration testing still is. NIST’s glossary describes penetration testing as security testing in which evaluators mimic real-world attacks to identify ways to circumvent the security features of an application, system, or network, often using real attacks on real systems and data under constraints. NIST SP 800-115 frames technical testing around planning, execution, analysis, and mitigation strategies. That definition matters because it separates real offensive validation from a scanner that found a weak signal and wrote a confident paragraph about it. (csrc.nist.gov)

AI does not erase that definition. It changes which parts of the workflow can be accelerated. Recon review can be summarized faster. Raw HTTP traces can be clustered faster. Hypotheses about privilege boundaries can be generated faster. Report drafting can be structured faster. But the parts that decide whether the work is trustworthy still look familiar: scope control, environment understanding, state management, exploit verification, evidence capture, and communication. That is why the best AI-assisted offensive workflows are becoming more evidence-driven, not less. (csrc.nist.gov)

The AI side of red teaming has matured in parallel. OWASP’s GenAI Red Teaming Guide describes a four-part approach that includes model evaluation, implementation testing, infrastructure assessment, and runtime behavior analysis. OpenAI’s paper on external red teaming says red teaming is a structured effort to find flaws and vulnerabilities in AI systems and explains it as one element in a broader model and system evaluation process. NIST’s emerging Cybersecurity Framework Profile for Artificial Intelligence reflects the same shift by discussing both the secure use of AI in defense and the need to thwart AI-enabled cyber attacks. In other words, “AI security jobs” are not just about policy. They increasingly include hands-on testing and validation work. (OWASP Gen AI Security Project)

The reason this changes pentesting roles so deeply is that AI has expanded both sides of the attack surface. Security teams are now using AI to accelerate existing testing against normal web apps, APIs, cloud environments, and exposed infrastructure. At the same time, they also have to test AI systems themselves: agents, RAG pipelines, browser use, tool calls, memory layers, hidden instructions, indirect prompt injection paths, and model-to-system boundary failures. Google says prompt injection becomes far more serious when agents move from answering questions to handling multi-step business workflows with sensitive data and critical actions. Anthropic says every webpage an agent visits can become a vector for prompt injection when the agent browses the internet on a user’s behalf. That is a major labor-market implication, not just a product design footnote. (Google Cloud)

NIST’s draft Cyber AI Profile goes even further. It says AI-enabled attacks can support adversaries across the cyber kill chain, including discovering exploitable weaknesses, advancing attack paths on shorter timelines, exfiltrating and tampering with data, and lifting the capabilities of previously unsophisticated actors. It also notes that AI agents are increasingly capable of autonomously orchestrating phases of cyberattack from reconnaissance and attack surface mapping to vulnerability exploitation, credential harvesting, lateral movement, and data collection. When the standards community starts writing about that as a planning reality, security hiring changes with it. Employers begin to care not only whether you can defend against ordinary attacks, but whether you understand the new tempo of semi-autonomous attack workflows. (nvlpubs.nist.gov)

This is where AI pentesting becomes a career question instead of just a tooling question. The people who benefit most from AI in 2026 are not the ones who can ask the prettiest prompt for a vulnerability explanation. They are the ones who can decide where to trust automation, where to distrust it, and how to force a system to produce reproducible evidence under bounded conditions. That applies to defenders, appsec engineers, offensive testers, and AI red teamers alike. (csrc.nist.gov)

The four lanes shaping cybersecurity jobs in 2026

The NICE Framework remains the best formal taxonomy, but for practical career planning in 2026, most cybersecurity jobs can be grouped into four high-value lanes. This is a synthesis, not a replacement for NICE. It is meant to help real candidates, hiring managers, and team leads understand how the market is clustering: defenders and detection engineers, product and application security engineers, offensive validation and pentesting practitioners, and AI security and AI red team specialists. That synthesis is grounded in NICE work roles, CyberSeek demand patterns, and current AI security guidance from NIST, OWASP, OpenAI, Google, and Anthropic. (NIST)

| Career lane | Core problem it solves | Typical proof of work | How AI changes it | Common entry points |
|---|---|---|---|---|
| Defender and detection engineering | Detect, contain, and recover from live risk | Queries, playbooks, investigations, detections, hardening changes | AI speeds triage and query drafting, but raises the bar for judgment | IT ops, SOC, cloud ops, incident support |
| Product security and appsec | Prevent design and implementation flaws from shipping | Threat models, secure reviews, auth tests, CI checks, fix guidance | AI-generated features and faster release cycles increase product risk and review volume | Software engineering, QA, SRE, platform engineering |
| Offensive validation and pentesting | Prove exploitability and prioritize real exposure | Reproducible findings, PoCs, attack paths, retest evidence | AI compresses recon, testing loops, and reporting, but proof still matters most | Bug bounty, QA automation, appsec, consulting labs |
| AI security and AI red teaming | Test model-connected systems, agent boundaries, and misuse paths | Prompt injection tests, RAG leak cases, tool abuse findings, runtime safeguards | AI is the target as well as the accelerator | ML engineering, appsec, red teaming, platform security |

Table concept is synthesized from NICE, CyberSeek, NIST, OWASP, OpenAI, Google, and Anthropic materials. (NIST)

The defender and detection engineering lane

The defender lane remains one of the most durable routes into cybersecurity jobs in 2026 because it sits closest to business continuity. Companies can delay a red team engagement. They can reduce conference travel. They can even postpone a mature application security program. They cannot ignore detections, incident handling, identity hygiene, logging pipelines, and cloud exposure for long without paying for it in outages, fraud, or breach impact. WEF’s skills data and CyberSeek’s role mapping both support the idea that operational and governance-oriented security work remains foundational across the market. (World Economic Forum)

AI changes this lane in an uneven but useful way. It is already good at compressing repetitive tasks such as writing first-pass detections, summarizing alerts, enriching cases, extracting indicators, and suggesting hypotheses. It is far less reliable at understanding what makes your environment weird. It does not inherently know that a service account is misused in one business unit but normal in another, or that a permissions change is expected during a migration window. In practice, the defender who becomes more valuable in 2026 is not the one who resists AI, but the one who uses it for speed while retaining ownership of the investigation narrative and the control boundaries. That interpretation lines up with NIST’s framing of AI-enhanced defense and the continuing importance of practical, experience-driven proficiency. (nvlpubs.nist.gov)

This lane is especially good for people with IT operations, cloud administration, or support engineering backgrounds because the adjacency is real. You already understand systems, tickets, change windows, and the difference between a lab artifact and a production outage. The upgrade path is to learn how to think adversarially about those systems: identity misuse, log gaps, suspicious process chains, cloud role abuse, persistence, and evidence handling. CyberSeek’s pathway emphasis exists precisely because employers do not need every candidate to start from zero. (cyberseek.org)

The product security and application security lane

If there is one lane that looks unusually resilient in 2026, it is product security. Modern software is increasingly API-driven, cloud-native, permission-heavy, and now often AI-connected. OWASP’s stable Web Security Testing Guide still emphasizes authorization testing, privilege escalation, insecure direct object references, session management, and other application-layer issues that scanners routinely miss. OWASP’s 2025 Top 10 keeps Broken Access Control at number one, and the OWASP API Security Project still places Broken Object Level Authorization at the top of API risk in the 2023 edition. That is a direct warning that the hard part of security work remains contextual, not merely syntactic. (owasp.org)

This is also the lane most obviously expanded by AI-generated product velocity. When teams ship faster, security review volume goes up. When teams generate code with assistants, review quality becomes more important, not less. When teams add a chat interface, a browser agent, or retrieval pipeline to a normal application, the application security engineer suddenly has to reason about traditional auth flaws and model-connected misuse paths in the same system. NIST’s AI RMF and the draft Cyber AI Profile both reflect that AI risk management now has to connect to ordinary cybersecurity controls rather than sit in a separate policy silo. (NIST)

Product security is a strong target for developers, QA engineers, SREs, and platform engineers because the best appsec practitioners rarely think like checklist auditors. They think like engineers who know where assumptions fail. They understand schemas, state transitions, object ownership, role boundaries, deployment pipelines, secrets exposure, caching behavior, and the ugly ways business logic gets encoded into normal-looking HTTP requests. In 2026, that engineering realism is worth more than memorizing another list of tool names. (owasp.org)

The offensive validation and pentesting lane

Offensive roles are not dead in the AI era. They are being forced to mature. The old market could tolerate a certain amount of “scanner plus screenshots plus severity adjectives.” Modern teams are less patient. NIST’s definition of penetration testing still points toward active attempts to circumvent security features under constraints. OWASP’s testing material still points toward broad, stateful testing of authorization, authentication, sessions, and application behavior. Employers now increasingly care whether the tester can prove exploitability and hand off something engineers can act on. (csrc.nist.gov)

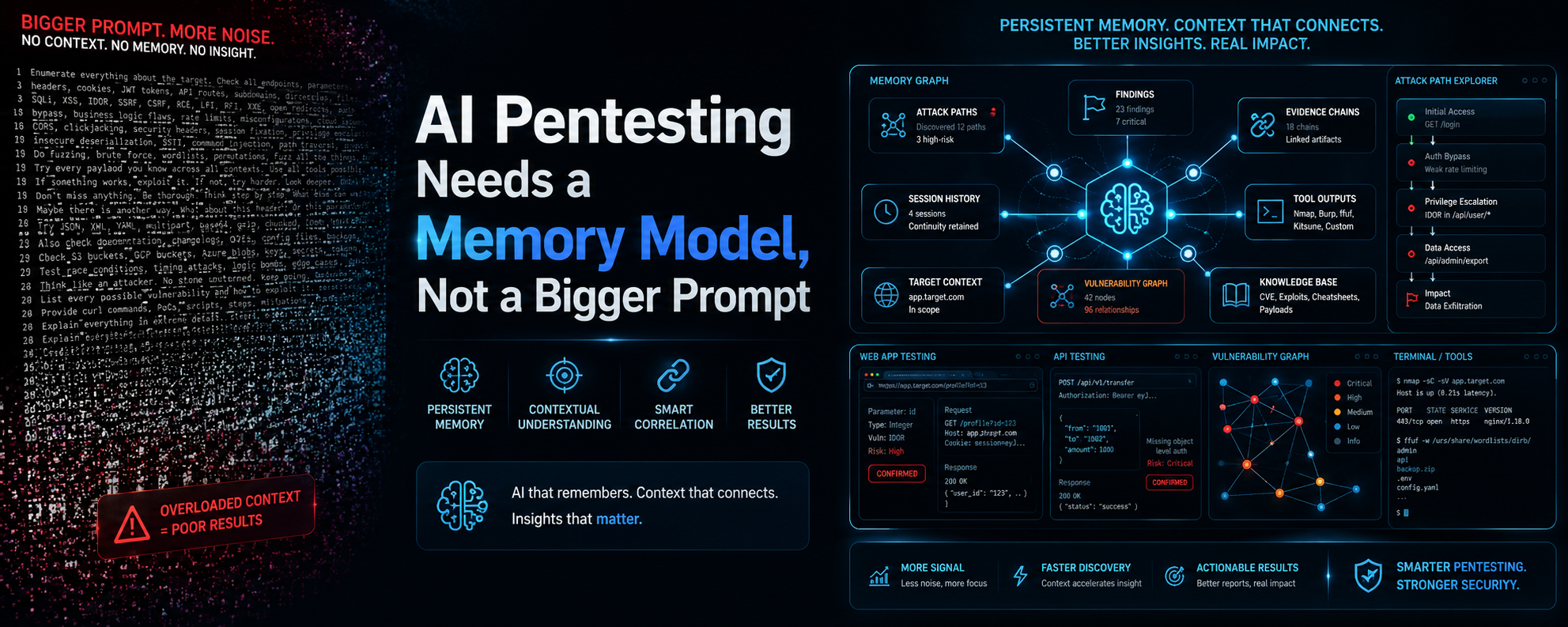

This is where AI-assisted pentesting helps the most. It can compress reconnaissance review, organize attack hypotheses, assist with state handling, and speed up report drafting. But it also creates a new trap: plausible findings with weak proof. That is why offensive validation roles are drifting toward evidence-first workflows. A strong tester in 2026 knows how to turn an LLM-generated suggestion into a bounded experiment, how to confirm that an auth flaw is real across roles, how to preserve the artifacts needed for retest, and how to explain impact without inflating it. Public materials from the AI pentesting market increasingly frame the problem in those terms. Recent Penligent material, for example, emphasizes verification, one-click PoC generation, editable reporting, and operator-controlled workflows rather than only conversational summaries. Whether a buyer chooses that platform or another, that product shape reflects what the labor market now rewards: trustworthy validation over noisy alert volume. (csrc.nist.gov)

This lane remains one of the best fits for bug bounty practitioners, red team enthusiasts, security researchers, and QA engineers who enjoy stateful testing. But it now rewards breadth differently. Five years ago, it was easy to build identity around a bag of tools. In 2026, the stronger identity is a workflow: map the surface, preserve auth state, test object and function authorization, verify exploitability, collect evidence, retest after fixes, and connect findings back to operational reality. That workflow travels better across employers than a favorite scanner does. (csrc.nist.gov)

The AI security and AI red teaming lane

The AI security lane is the newest, but it is no longer speculative. OWASP’s GenAI Red Teaming Guide is explicit that AI red teaming should include not only model evaluation but also implementation testing, infrastructure assessment, and runtime behavior. OpenAI’s external red teaming paper treats structured red teaming as part of a broader evaluation and deployment process. Google’s AI red team notes that prompt injection becomes more dangerous as agents gain the ability to handle sensitive context and perform multi-step actions. Anthropic says browser agents face one of their biggest challenges when they browse untrusted content that may contain hidden instructions. That is a full-time problem space, not a side quest for policy teams. (OWASP Gen AI Security Project)

This lane is not the same as classic appsec and not the same as pure ML engineering. It sits in the boundary layer. The practitioner has to think about prompt injection, indirect injection, retrieval poisoning, tool misuse, unsafe delegation, credential spill, memory contamination, output handling, identity propagation, and action authorization. The most useful people here can usually speak more than one language: application security, system security, and enough model behavior to understand failure modes without pretending the LLM is magic. (nvlpubs.nist.gov)

For candidates, this lane is attractive because it is still fluid. There is less title stability, but also more room to define a profile. A product security engineer who learns agent risk can grow into AI security. An offensive tester who learns RAG and prompt injection can move into AI red teaming. An ML platform engineer who learns threat modeling and abuse testing can become the bridge their team currently lacks. The demand signal here is supported by both the risk data and the hiring crossover data: AI-related vulnerabilities are rising quickly, and employers are already writing AI skills into security job listings. (World Economic Forum)

What AI changes inside the pentest workflow

The workflow shift is easiest to see when you compare old offensive bottlenecks with new ones. The old bottlenecks were finding endpoints, juggling too many tabs, hand-transcribing requests, and writing repetitive findings. Those still exist, but AI trims them. The new bottlenecks are harder: understanding authenticated state, proving authorization failures, preserving causal chains, deciding whether the target is an ordinary app or an AI-mediated one, and keeping the whole operation bounded and auditable. That is a more demanding job, not a smaller one. (csrc.nist.gov)

This matters because many hiring discussions still ask the wrong question. They ask whether a candidate knows Burp, Nuclei, ffuf, sqlmap, or Promptfoo. Those tools matter, but the more useful question in 2026 is whether the candidate knows what the tool is for in a larger workflow. Are they using a scanner to discover a signal, a proxy to maintain state, a red-team harness to test prompt injection, a script to verify authorization drift, and a report template to preserve reproducibility? Or are they throwing tools at a target until one says something interesting? The standards and guidance now point strongly toward the first model. (csrc.nist.gov)

A lot of modern pentesting value comes from understanding authorization, not just input validation. OWASP’s latest testing material still highlights authorization testing and privilege escalation. OWASP’s API Security Project still puts Broken Object Level Authorization at API1 for the 2023 edition and explicitly says APIs expose object identifiers that create a wide attack surface for object-level access control issues. That is why many of the best offensive testers in 2026 behave less like “payload artists” and more like careful systems analysts. They compare role behavior, object ownership, workflow steps, and state transitions. They do not just fuzz blindly. (owasp.org)

A minimal example looks like this:

```shell
# Compare the same object under two different roles
curl -s -H "Authorization: Bearer $USER_TOKEN" \
  https://target.example/api/v1/invoices/1001 | jq '{id, owner, total}'

curl -s -H "Authorization: Bearer $ADMIN_TOKEN" \
  https://target.example/api/v1/invoices/1001 | jq '{id, owner, total}'

# Now test whether object ownership is enforced
curl -i -s -H "Authorization: Bearer $USER_TOKEN" \
  https://target.example/api/v1/invoices/1002
```

This is not flashy, but it is the kind of work that actually finds broken access control. The point is to observe whether the system enforces ownership and role boundaries at the object level, not merely whether the endpoint exists. That lines up directly with OWASP’s emphasis on authorization and API object-level access control. (owasp.org)
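
The ownership check is easier to repeat across many objects if the verdict is written down as a rule instead of judged by eye each time. The helper below is a rough sketch, not a standard tool: the name `classify_authz` and the three verdict labels are our own, and the status-code mapping is an assumption about how a well-behaved API signals denial.

```shell
#!/usr/bin/env bash
# classify_authz STATUS
# Interpret the HTTP status returned when a low-privilege user requests
# an object that belongs to someone else. Labels are illustrative only.
classify_authz() {
  case "$1" in
    401|403|404) echo "enforced" ;;        # request was denied or hidden
    2??)         echo "possible-bola" ;;   # success: inspect the body next
    *)           echo "inconclusive" ;;    # redirects, 5xx, rate limits
  esac
}

# Hypothetical usage against an in-scope target (not executed here):
#   status=$(curl -s -o /dev/null -w '%{http_code}' \
#     -H "Authorization: Bearer $USER_TOKEN" \
#     https://target.example/api/v1/invoices/1002)
#   classify_authz "$status"

classify_authz 403   # prints enforced
classify_authz 200   # prints possible-bola
```

A 2xx status is only a lead. The finding becomes real when the response body actually contains another user's data, which is why the label says "possible" and not "confirmed".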

The AI side of the workflow needs the same clarity. Promptfoo’s current docs describe red teaming as a configurable process with targets, plugins, strategies, and a statement of the application’s purpose. That is not accidental. AI red teaming fails when it becomes detached from the real purpose, user boundaries, and allowed actions of the application under test. A generic jailbreak battery is not the same thing as testing whether a support agent can leak another customer’s ticket data or whether a browser-capable agent can be tricked into performing an unsafe action. (promptfoo.dev)

A useful minimal configuration concept for an AI red team harness looks like this:

```yaml
targets:
  - id: https
    label: support-agent
    config:
      url: https://target.example/agent/respond
      method: POST

purpose: >
  The system helps authenticated users review their own support tickets.
  It must not reveal other users' tickets, internal notes, secrets, or take
  actions outside the authenticated user's permissions.

redteam:
  plugins:
    - prompt-injection
    - pii
    - bola
  strategies:
    - basic
    - crescendo
```

The exact implementation will vary by target and provider, but the operational idea is stable. Describe the application honestly. Define the target. Select adversarial plugins. Use multi-turn strategies when the system preserves state. That is much closer to real AI security testing than asking a frontier model to “hack my app.” (promptfoo.dev)
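
One way to make the "can the support agent leak another customer's ticket" question concrete is a canary check: plant a unique marker in a ticket the test user does not own, then search every agent response for it. The sketch below is independent of any harness and is not a Promptfoo feature. The function name `leaks_canary`, the marker value, and both sample replies are hypothetical.

```shell
#!/usr/bin/env bash
# leaks_canary RESPONSE CANARY
# Exit 0 if the agent's response text contains the canary string that was
# planted in another user's ticket, non-zero otherwise.
leaks_canary() {
  printf '%s' "$1" | grep -qF -- "$2"
}

# Hypothetical marker seeded in a ticket the test user does not own.
CANARY="CANARY-7f3a91"

# Hypothetical agent replies captured during a test run.
safe_reply="I can only show tickets that belong to your account."
leaky_reply="Ticket 8841 notes: customer reported CANARY-7f3a91 during onboarding."

leaks_canary "$safe_reply" "$CANARY"  && echo "leak" || echo "clean"   # prints clean
leaks_canary "$leaky_reply" "$CANARY" && echo "leak" || echo "clean"   # prints leak
```

A fixed-string match keeps the check deterministic, which matters when the system under test is not.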

The last workflow shift is evidence handling. NIST SP 800-115 is old, but one reason it remains useful is that it still frames testing as planning, execution, analysis, and mitigation. The underrated skill in 2026 is not just exploitation. It is packaging. Can you collect the request, response, role context, preconditions, impact boundaries, fix advice, and retest outcome cleanly enough that engineering accepts it and management can prioritize it? This is where a lot of AI-assisted platforms are converging. Public material from Penligent, for instance, talks about verification, editable reports, and PoC generation in ways that are much closer to deliverable quality than the old scanner-report paradigm. Again, the brand is less important than the market signal it reflects. Evidence survives. Vibes do not. (csrc.nist.gov)

A simple evidence discipline can look like this:

```shell
mkdir -p evidence/authz-bug-001
cp requests/user_1002.txt evidence/authz-bug-001/
cp requests/admin_1002.txt evidence/authz-bug-001/
cp responses/user_1002.json evidence/authz-bug-001/
cp responses/admin_1002.json evidence/authz-bug-001/

cat > evidence/authz-bug-001/notes.md <<'EOF'
Finding: Broken object level authorization
Precondition: Authenticated low-privilege user
Object tested: invoice 1002
Expected result: 403 or object not found
Observed result: 200 with another user's invoice details
Retest status: pending
EOF
```

That discipline feels basic because it is basic. But it is also what makes your work useful after the adrenaline fades. The teams hiring pentesters, appsec engineers, and AI red teamers in 2026 increasingly want people who can leave behind clean technical truth. (csrc.nist.gov)
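
A small extension of the same habit is to record a digest for each artifact, so that anyone picking up the retest can confirm the evidence has not changed since capture. This is a sketch under stated assumptions: it relies on GNU coreutils `sha256sum`, it builds a throwaway folder with placeholder file contents so it can run on its own, and the manifest name `MANIFEST.sha256` is our choice.

```shell
#!/usr/bin/env bash
# Build a throwaway evidence folder so the sketch is self-contained.
# File contents here are placeholders, not real captures.
workdir=$(mktemp -d)
evidence="$workdir/evidence/authz-bug-001"
mkdir -p "$evidence"
printf 'GET /api/v1/invoices/1002\n' > "$evidence/user_1002.txt"
printf '{"id":1002,"owner":"someone-else"}\n' > "$evidence/user_1002.json"

# Record a SHA-256 digest for every artifact (assumes GNU sha256sum).
(cd "$evidence" && sha256sum user_1002.txt user_1002.json > MANIFEST.sha256)

# Later, before retest or handoff, confirm nothing has changed.
(cd "$evidence" && sha256sum -c --quiet MANIFEST.sha256) && echo "evidence intact"
```

If any file is edited after capture, the verification step fails and names the file, which is exactly the conversation you want to have before a finding is disputed.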

The vulnerabilities future teams still have to break and defend

Career advice gets better when it is tied to real incidents. The easiest way to see what employers still value is to look at the kinds of failures that keep forcing patching, hunting, validation, and postmortems.

The first example is CVE-2024-3400 in Palo Alto PAN-OS. Palo Alto’s advisory says it was a command injection vulnerability resulting from arbitrary file creation in the GlobalProtect feature and that it could allow an unauthenticated attacker to execute arbitrary code with root privileges on the firewall. The advisory also says the issue applied only to specific versions and configurations involving GlobalProtect gateway or portal, and that exploitation was increasing in the wild. This is a perfect example of why edge validation remains a serious job category. Someone has to know which assets are exposed, which configurations are actually vulnerable, whether exploitation is feasible in the environment, what compensating controls exist, and how to retest after upgrades. That is offensive validation, product knowledge, infrastructure hygiene, and incident readiness in one case. (security.paloaltonetworks.com)

The second example is CVE-2025-0282 affecting Ivanti Connect Secure, Policy Secure, and Neurons for ZTA gateways. NVD describes it as a stack-based buffer overflow that allows remote unauthenticated code execution in affected versions. NVD also notes that it is in CISA’s Known Exploited Vulnerabilities Catalog, with required action to apply mitigations, conduct hunt activities, take remediation actions as needed, and apply updates before returning the device to service. Ivanti said at disclosure that a limited number of Connect Secure appliances had already been exploited. This is another reminder that “security job” boundaries are blurry. A real response to this kind of edge-device issue touches vulnerability management, threat hunting, incident response, logging, asset inventory, and retest discipline. (nvd.nist.gov)

The third example is CVE-2024-3094, the XZ Utils backdoor. NVD says malicious code was discovered in upstream tarballs of xz beginning with version 5.6.0 and that the build process extracted a prebuilt object file from a disguised test file, modifying liblzma during build. Microsoft’s guidance described it as a software supply chain compromise affecting versions 5.6.0 and 5.6.1 and noted CISA’s recommendation to downgrade to a previous uncompromised version. This case matters for the job market because it expands the importance of roles that sit between development and operations: DevSecOps, platform security, software supply chain review, dependency governance, and secure build pipeline ownership. If your security worldview still stops at external scanning, you will miss why these roles keep growing. (techcommunity.microsoft.com)
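A first-pass check for this case can be very small. The sketch below flags only the two versions named above (5.6.0 and 5.6.1); the function names are illustrative, and the parsing assumes the first line of `xz --version` ends with the version number.

```shell
# Return 0 if the given xz version is one of the releases named in the
# guidance quoted above (5.6.0 and 5.6.1), 1 otherwise.
is_backdoored_xz_version() {
  case "$1" in
    5.6.0|5.6.1) return 0 ;;
    *) return 1 ;;
  esac
}

# Report on the locally installed xz, if there is one.
check_local_xz() {
  if ! command -v xz >/dev/null 2>&1; then
    echo "xz not installed"
    return 0
  fi
  # The first line looks like "xz (XZ Utils) 5.4.5"; the version is the last field.
  ver=$(xz --version | head -n 1 | awk '{print $NF}')
  if is_backdoored_xz_version "$ver"; then
    echo "xz $ver: listed as compromised, follow your distribution's guidance"
  else
    echo "xz $ver: not one of the listed versions"
  fi
}
```

A version match is a starting point rather than a verdict. Distributions shipped their own packaging and rollbacks, so the distribution advisory is the authority on whether a given package was affected.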

The fourth example is CVE-2023-34362 in MOVEit Transfer. NVD says it was a SQL injection vulnerability that could allow an unauthenticated attacker to gain access to the MOVEit Transfer database, infer database structure and contents, and execute SQL statements that alter or delete database elements, with exploitation observed in the wild in May and June 2023. This one keeps showing up in career conversations because it is a reminder that boring, exposed business systems remain enormously important targets. Secure file transfer is not glamorous, but it is exactly the kind of external, trusted, high-value workflow that product security, vulnerability management, incident response, and offensive validation teams are paid to understand. (nvd.nist.gov)

These incidents map neatly to skills employers still prize.

| CVE | What failed | Why it matters for careers | Skills it rewards |
|---|---|---|---|
| CVE-2024-3400 | Externally exposed edge component with exploitable configuration | Edge exposure and retest work are still core security labor | Asset inventory, exposure validation, patch prioritization, IR coordination |
| CVE-2025-0282 | Internet-facing access gateway with active exploitation | Security teams need people who can hunt, remediate, and verify | Threat hunting, appliance hardening, incident response, recovery testing |
| CVE-2024-3094 | Software supply chain compromise inside the build path | Appsec now includes dependency trust and build integrity | SBOM awareness, build security, dependency review, platform engineering |
| CVE-2023-34362 | High-value business application with pre-auth SQLi | Product and application security remain business-critical | Web testing, data-layer reasoning, exploit verification, remediation guidance |

Table derived from Palo Alto, NVD, Microsoft, Ivanti, and CISA-linked NVD references. (security.paloaltonetworks.com)

The deeper lesson is that AI has not made ordinary security failures less relevant. It has made the labor around them more layered. A team may use AI to accelerate recon, ticket triage, or code review. But the work still lands on humans who understand exposure boundaries, exploit preconditions, and business impact. That is why AI raises the value of compound operators. The person who can read an advisory, validate actual exposure, script a test, preserve evidence, and hand a clean decision to leadership is more valuable in 2026 than the person who simply knows where the CVE page lives. (csrc.nist.gov)

What a portfolio looks like in 2026

Many people trying to break into cybersecurity still ask the wrong portfolio question. They ask whether they need certifications, a blog, a GitHub, a few CTF wins, or a home lab. The answer is that none of those are sufficient on their own. A useful 2026 portfolio proves that you can do bounded technical work that resembles a real job. The shape of that work depends on the lane you want, but the underlying standard is consistent: can another practitioner review your output and trust that you understood the system, the risk, and the limits of your claim? That expectation is strongly aligned with NICE’s work-role framing and NIST’s testing emphasis on planning, analysis, and mitigation, not just activity. (NIST)

For defenders, a portfolio should show that you can reason from telemetry to hypothesis and from hypothesis to action. That may include a documented detection rule, an investigation note showing how you separated false positives from abuse, a cloud hardening change with before-and-after evidence, or a small incident reconstruction. The key is not glamour. It is judgment. If you can show a disciplined investigative write-up, you are already ahead of many candidates whose resumes only list tools. (NIST)
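As one illustration of a "documented detection rule", here is a minimal sketch that counts failed logins per source address and reports sources at or above a threshold. The log format (`timestamp result user source_ip`, space-separated) is hypothetical, and the threshold is something you would tune and justify in the write-up.

```shell
# Print "<source_ip> <failed_count>" for every source at or above a threshold.
# Assumes a hypothetical space-separated log: timestamp result user source_ip
failed_login_sources() {
  log="$1"
  threshold="$2"
  awk -v t="$threshold" '
    $2 == "FAIL" { count[$4]++ }                 # tally failures per source
    END { for (ip in count) if (count[ip] >= t) print ip, count[ip] }
  ' "$log" | sort
}

# Example usage:
#   failed_login_sources auth.log 3
```

The rule itself is the easy part. The portfolio value comes from the note that goes with it: why this threshold, which benign sources tripped it, and what you would do next with a hit.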

For product security and appsec candidates, the best portfolio items usually come from real code or realistic demos. That can be a threat model for a small SaaS feature, a write-up showing a broken object level authorization path in a lab app, a secure design review of a file upload flow, or a CI-integrated test that catches a class of auth drift. OWASP’s testing material gives you plenty of structure here. You do not need to invent exotic zero-days. You need to show that you can analyze how normal systems break. (owasp.org)

For offensive candidates, three portfolio pieces go farther than twenty vague screenshots. The first is a clean write-up of a real finding or well-reconstructed lab case with exact reproduction steps and bounded impact. The second is an authorization or business-logic test that shows you understand state, roles, and object ownership. The third is a retest case showing how you confirmed the fix. That last item is underrated. Retesting proves you can close the loop rather than only opening it. NIST’s emphasis on mitigation strategies is a good reminder that testing only becomes valuable when it informs action. (csrc.nist.gov)
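A retest case can be made concrete with a small helper that turns the observed status code into a verdict and appends it to the finding's notes. This is a sketch under assumptions: it reuses the expectation from the evidence notes earlier in the article (403 or not found expected, 200 observed), and the function names, token variable, and URL in the usage comment are hypothetical.

```shell
# Map the HTTP status of a cross-user request to a retest verdict.
# 403/404 match the expected result in the evidence notes; 200 means the
# other user's object is still being returned. Anything else needs a human look.
retest_verdict() {
  case "$1" in
    403|404) echo "fixed" ;;
    200)     echo "still-vulnerable" ;;
    *)       echo "inconclusive" ;;
  esac
}

# Append a dated retest line to the notes file for a finding.
record_retest() {
  notes="$1"
  status="$2"
  printf 'Retest %s: HTTP %s -> %s\n' \
    "$(date -u +%Y-%m-%d)" "$status" "$(retest_verdict "$status")" >> "$notes"
}

# Example usage (LOW_PRIV_TOKEN and BASE_URL are placeholders for an
# authorized test environment):
#   status=$(curl -s -o /dev/null -w '%{http_code}' \
#     -H "Authorization: Bearer $LOW_PRIV_TOKEN" "$BASE_URL/invoices/1002")
#   record_retest evidence/authz-bug-001/notes.md "$status"
```

Status codes alone can mislead, since an application may return 200 with an error body. Treat the verdict as a prompt to inspect the saved response, not as the final word.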

For AI security candidates, the fastest portfolio win is usually not a frontier research paper. It is a practical red-team artifact. Build a small RAG assistant or tool-using agent. Define its purpose and user boundaries. Run a prompt injection test. Document what happened. Explain whether the failure was in the model, the system prompt, the retrieval layer, the tool policy, the output handling, or the authorization boundary. OWASP, OpenAI, Google, and Anthropic all point toward structured evaluation and boundary-aware testing. If you can demonstrate that in a small but honest project, you already look more credible than many people who merely repeat “prompt injection is dangerous.” (OWASP Gen AI Security Project)
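One simple way to score a prompt injection test is a canary: plant a unique marker only inside the untrusted document, instruct the model (via the injected text) to emit it, and then check the captured output. The sketch below covers only the scoring step and assumes you have already saved the assistant's response to a file; the function name and verdict labels are illustrative.

```shell
# Decide whether an injected instruction took effect by looking for a canary
# string in the assistant's captured output. The canary is planted only inside
# the untrusted document, so a well-behaved response should never contain it.
injection_verdict() {
  transcript="$1"   # file holding the assistant's output for one test case
  canary="$2"       # unique marker the injected text asks the model to emit
  if grep -qF -- "$canary" "$transcript"; then
    echo "followed-injection"
  else
    echo "no-canary-seen"
  fi
}

# Example usage:
#   injection_verdict runs/case-01/output.txt "CANARY-7f3a91"
```

A missing canary does not prove the system is safe. It only shows that this one payload did not surface in this one output, which is why the write-up should also say which layer you believe stopped it.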

A concise 2026 portfolio blueprint looks like this:

| Target lane | Best first project | What it proves |
|---|---|---|
| Defender | Detection and investigation write-up | You can turn telemetry into action |
| Product security | API authorization test or design review | You understand app and business-logic risk |
| Offensive validation | Reproducible pentest finding plus retest | You can validate and communicate real exposure |
| AI security | Small red-team exercise against an agent or RAG app | You can test AI systems as systems, not just models |

This table is a practical synthesis of the frameworks and guidance already discussed. (NIST)

How to move into cybersecurity jobs in 2026 without guessing

The best career transitions into cybersecurity are not random. They start by exploiting a nearby strength and then adding adversarial perspective.

If you are coming from IT operations, infrastructure, or cloud administration, your advantage is environmental realism. You know hosts, services, tickets, outages, IAM drift, and the pain of changing production. Move toward detection engineering, cloud security, or incident response by learning log analysis, identity abuse patterns, cloud permission boundaries, and the mechanics of triage. Do not throw away your operational habits. Security teams need them. (cyberseek.org)

If you are coming from software engineering, your advantage is code and system behavior. Move toward product security, appsec, or AI security by learning threat modeling, auth testing, API risk, secure design review, and the security implications of model-connected features. In 2026, this is one of the strongest transitions because so much high-value security work lives inside software delivery rather than outside it. OWASP’s continued focus on authorization and API risk makes this path especially durable. (owasp.org)

If you are coming from QA or test automation, your advantage is reproducibility. That is a hidden superpower in cybersecurity. Many security teams struggle not because they cannot find signals, but because they cannot consistently reproduce and validate them. QA engineers who learn security testing methodology, role-based access testing, abuse cases, and evidence capture can become strong appsec or offensive validation hires. The workflow mindset translates very well. (csrc.nist.gov)

If you are coming from bug bounty or CTFs, your advantage is adversarial curiosity and persistence. Your risk is overfitting to challenge-style work. The upgrade path is to make your work more enterprise-legible: bound your claims, preserve evidence, explain remediation, understand identity and business context, and demonstrate retest ability. A great bug bounty hunter is already halfway to being a valuable validation engineer. The missing half is often communication and constraint handling, not raw exploitation skill. (csrc.nist.gov)

If you are coming from governance, audit, compliance, or risk, your advantage is context. You understand policy, control intent, evidence standards, and organizational decision-making. The missing piece is technical depth. That gap can be closed selectively. You do not need to become a kernel exploit researcher to be useful. But if you add enough system understanding to evaluate cloud misconfigurations, AI data risk, or application control failures with precision, you become much more powerful than a compliance specialist who cannot assess how controls fail in practice. NIST’s AI RMF and the draft Cyber AI Profile both reinforce the idea that governance and technical testing need to converge rather than remain separate silos. (NIST)

What entry-level means in cybersecurity jobs in 2026

Entry-level does not mean harmless. It means bounded.

That sounds obvious, but it is one of the most important labor-market truths in cybersecurity jobs in 2026. CyberSeek’s language about realistic entry-level opportunities matters because employers do need on-ramps. But they need on-ramps into narrow slices of the work: alert review, cloud hygiene, identity administration, API testing, security QA, log enrichment, secure coding review, or structured AI red-team support. BLS’s “typical entry-level education” figure for information security analysts does not mean every entry-level hire needs to arrive as a finished analyst. It means most employers still expect a base layer of maturity. (comptia.org)

Candidates hurt themselves when they interpret entry-level as permission to stay vague. The market no longer rewards vague enthusiasm very much. It rewards narrow, legible skill. If you can show one clean detection, one decent authorization test, one small AI red-team case, and one clear technical write-up, you are more employable than someone who says they are “passionate about cybersecurity” and knows fifty tool names badly. The reason is simple: employers can bound your risk. They can see where you fit. (NIST)

The flip side is that teams should stop writing entry-level roles as if they want a five-year veteran who will accept junior pay. CyberSeek’s own update explicitly emphasized realistic entry-level opportunities. In a market where skills are changing quickly and AI is redistributing work, the employers who win will be those who can hire on proof of potential plus a bounded technical foundation, then develop deeper capability over time. That is not charity. It is workforce strategy. (comptia.org)

What hiring managers should screen for now

The AI era creates a temptation to screen candidates in shallow ways. Some managers now ask whether the candidate “uses AI,” which is about as useful as asking whether a surgeon “uses metal.” The better question is how they use it.

For defenders, screen for judgment under uncertainty. Can the candidate explain how they would validate an alert, handle incomplete context, distinguish normal from abnormal behavior, and write a defensible escalation? For product security, ask them to reason through an authorization problem or an API ownership model. For offensive roles, ask for a bounded validation workflow from discovery to retest. For AI security, ask how they would test a tool-using or browser-capable agent without confusing model behavior with system behavior. The public guidance from OWASP, OpenAI, Google, Anthropic, and NIST all points toward this more structured, systems-focused form of evaluation. (OWASP Gen AI Security Project)

Tool familiarity should be screened, but only in context. A candidate who knows Burp but cannot explain role separation is weaker than a candidate who uses fewer tools but can walk you through object-level authorization logic. A candidate who can run Promptfoo but cannot define the target system’s purpose and allowed actions will struggle in real AI red teaming. A candidate who asks an LLM to summarize findings but cannot preserve the underlying artifacts is a reporting risk. In 2026, the strongest signal is still disciplined thinking tied to real systems. (promptfoo.dev)

One practical interview improvement is to ask for evidence design, not just exploit trivia. Give the candidate a small scenario. Ask what evidence they would collect, how they would test role boundaries, how they would decide whether a flaw is real, how they would write the fix guidance, and how they would retest. That single exercise reveals far more about readiness than asking them to recite the OWASP Top 10. (csrc.nist.gov)

The most durable skill stack for cybersecurity jobs in 2026

Every cycle of cyber hiring creates a new rush toward fashionable labels. In 2026, the labels are AI security, agent security, AI red teaming, offensive automation, and model risk. Some of that excitement is justified. Some of it is branding. The durable part is the underlying skill stack.

The first durable layer is security fundamentals. You still need access control, networking, operating systems, HTTP, identity, logging, cryptographic hygiene, and threat modeling. Those do not become obsolete because a model can write Python. They become more important because a model can now generate fragile code and accelerate fragile deployment. BLS, NICE, OWASP, and NIST all implicitly reinforce this: the field still rests on systems, not slogans. (Bureau of Labor Statistics)

The second durable layer is cloud and identity. Many serious modern incidents are less about memory corruption wizardry and more about exposure, secrets, tokens, trust relationships, and permission edges. Even AI systems inherit those realities. A browser agent with bad tool boundaries, a support agent with excessive retrieval access, or a model-connected workflow with broad OAuth scopes is still an identity and authorization problem at heart. Google’s and Anthropic’s prompt injection discussions make this point indirectly but clearly: once the model can act, action boundaries matter. (Google Cloud)

The third durable layer is application and API behavior. OWASP’s continuing emphasis on broken access control, authorization testing, and object-level access flaws is a reminder that many of the most important bugs live in normal logic. They are not hidden because they are technically esoteric. They are hidden because they require system understanding. That is good news for serious practitioners because system understanding is much harder to commoditize than commodity scanning. (owasp.org)

The fourth durable layer is automation and scripting. AI can write scripts, but the person who understands what should be automated still wins. A Bash loop, a Python notebook, a small evidence normalizer, a detection test harness, or an API role-differencing tool still creates real leverage. The gap between “can generate code” and “can design trustworthy automation” remains wide. That gap is where many good jobs live. (csrc.nist.gov)
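As an example of the "API role-differencing tool" idea, the sketch below compares a list of admin-only paths with the responses observed while replaying requests as a low-privilege user. Both input formats are assumptions made for illustration: one path per line in the first file, and `path status` pairs in the second.

```shell
# List admin-only paths that a low-privilege session could still reach.
# $1: file of admin-only paths, one per line (from the design or route table)
# $2: file of "path status" lines observed while replaying as the low-privilege user
role_diff() {
  admin_only="$1"
  observed="$2"
  # With no restricted paths there is nothing to compare.
  [ -s "$admin_only" ] || return 0
  awk '
    NR == FNR { restricted[$1] = 1; next }                   # first file: load restricted paths
    ($1 in restricted) && $2 ~ /^2[0-9][0-9]$/ { print $1 }  # second file: 2xx on a restricted path
  ' "$admin_only" "$observed" | sort -u
}

# Example usage:
#   role_diff admin_only_paths.txt lowpriv_observed.txt
```

The design choice worth noting is that the list of restricted paths comes from a human who understands the application. The script only does the comparison, which is the split between deciding what to automate and generating the code for it.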

The fifth durable layer is evidence and communication. This is the one too many people ignore. The analyst who can make a clean case, the appsec engineer who can explain a fix path, the pentester who can preserve artifacts, and the AI red teamer who can show exactly where a boundary failed all create organizational value beyond the raw technical event. In a budget-conscious market, communication is not fluff. It is how technical work becomes decision-ready. (csrc.nist.gov)

The sixth durable layer is AI-specific system risk. That does not mean becoming a model researcher overnight. It means understanding the practical failure modes already documented by the leading labs and standards work: prompt injection, indirect prompt injection, unsafe tool use, sensitive data exposure, retrieval abuse, data supply chain issues, and the way agents turn untrusted content into control-plane input. That layer is already mandatory for some roles, and it is becoming hard for anyone to treat as optional. (U.S. Department of War)

A good 2026 skill stack looks like this:

| Skill layer | Why it remains durable |
|---|---|
| Security fundamentals | Everything else rests on it |
| Cloud and identity | Modern attack paths and AI systems both depend on trust boundaries |
| App and API behavior | Broken access control and business logic flaws remain central |
| Automation and scripting | Leverage comes from trustworthy workflows, not manual repetition |
| Evidence and communication | Technical work only matters when others can act on it |
| AI system risk | Agents and model-connected systems are now part of mainstream security work |

This is a synthesis of the frameworks, reports, and advisories discussed throughout the article. (NIST)

The job is changing, not disappearing

Cybersecurity jobs in 2026 are not defined by whether AI exists. They are defined by where human accountability still lives after AI enters the workflow.

That accountability still lives in scope. It still lives in architecture understanding. It still lives in deciding whether a flaw is real, whether a boundary is meaningful, whether a report is honest, and whether a fix actually closed the hole. AI pentesting makes offensive work faster and, in the hands of disciplined people, better. AI security makes the field broader because now the systems under test increasingly include agents, model-connected applications, and tool-using workflows. None of that reduces the need for practitioners who can reason clearly under constraints. It increases it. (csrc.nist.gov)

The practical takeaway is simple. Pick a lane. Build three pieces of evidence that prove you belong in it. Learn to use AI as an accelerator without letting it turn your work into guesswork. Get good at one thing that organizations truly need: finding truth in a messy system and expressing that truth in a way another team can act on. That is still what strong cybersecurity work looks like. It may be the clearest definition of the profession in 2026. (cyberseek.org)

Further reading

- U.S. Bureau of Labor Statistics, Information Security Analysts outlook and pay data. (Bureau of Labor Statistics)

- World Economic Forum, Future of Jobs Report 2025 and jobs outlook. (World Economic Forum)

- World Economic Forum, Global Cybersecurity Outlook 2026. (World Economic Forum)

- CyberSeek workforce data and career pathways. (cyberseek.org)

- ISC2 workforce studies and labor pressure analysis. (isc2.org)

- NIST NICE Framework resources on work roles. (NIST)

- NIST SP 800-115 and NIST penetration testing glossary. (csrc.nist.gov)

- NIST AI RMF and Cybersecurity Framework Profile for AI. (NIST)

- OWASP Web Security Testing Guide and OWASP API Security Project. (owasp.org)

- OWASP GenAI Red Teaming Guide. (OWASP Gen AI Security Project)

- OpenAI, external red teaming for AI models and systems. (cdn.openai.com)

- Google on AI red teaming and indirect prompt injection risk. (Google Cloud)

- Anthropic on browser-agent prompt injection defenses. (anthropic.com)

- Penligent homepage and product positioning. (penligent.ai)

- Penligent on AI penetration testing in 2026. (penligent.ai)

- Penligent on pentest AI tools in 2026. (penligent.ai)

- Penligent on what an AI red team assistant should actually do. (penligent.ai)