Why “xz cve” became a global fire drill

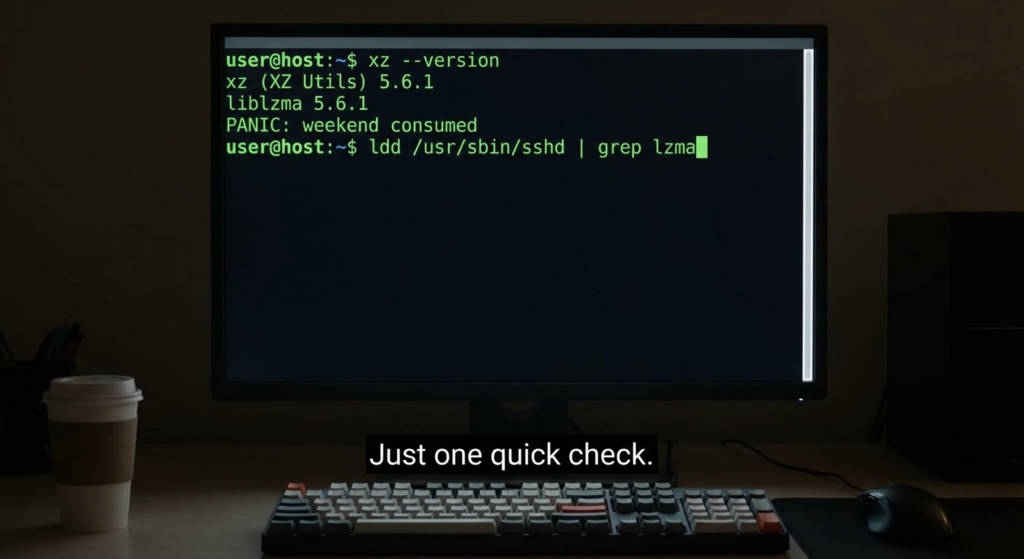

When security engineers search “xz cve”, they are almost always trying to resolve one urgent operational question: Is my fleet exposed to the XZ backdoor, and how do I prove it either way? That’s because CVE-2024-3094 was not a conventional vulnerability where you patch a buggy function and move on. It was a high-intent supply-chain compromise: malicious logic was placed in the upstream release artifacts for XZ Utils 5.6.0 and 5.6.1, engineered to survive casual review and to activate through a build-time injection path into liblzma. (NVD)

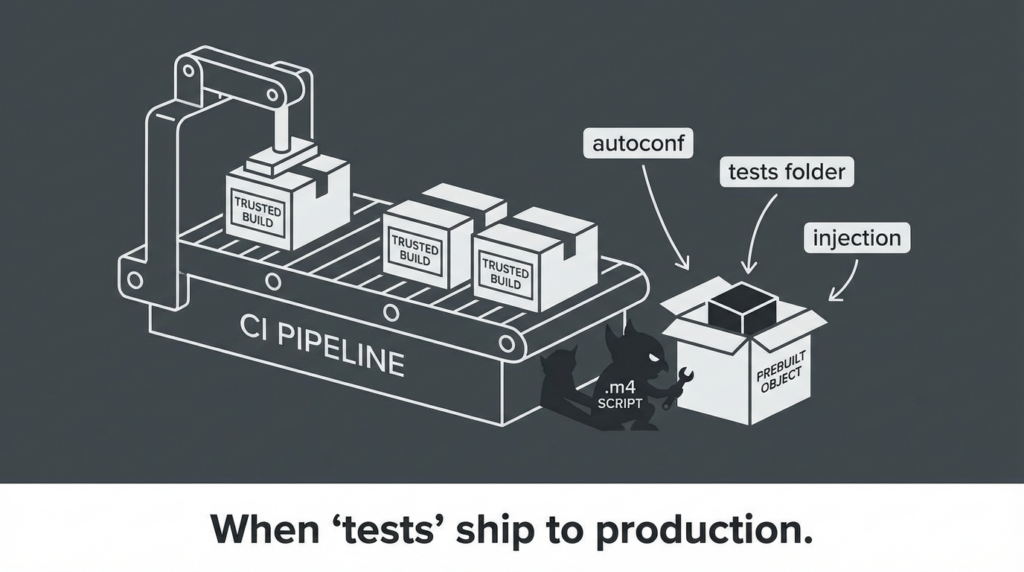

The reason this still matters long after the initial disclosure is simple: many organizations learned the wrong lesson from the first 48 hours. They treated it like “just update xz” rather than “we lost a trust boundary between source, release artifacts, and downstream distribution.” NVD’s description is blunt about where the malicious code lived and how it was delivered: in the upstream tarballs, with extra build instructions and obfuscation that extracted and injected a prebuilt object during the liblzma build. (NVD)

The one-paragraph truth of CVE-2024-3094

CVE-2024-3094 refers to malicious code discovered in XZ Utils upstream release tarballs starting with 5.6.0, where obfuscated build logic extracted a prebuilt object from disguised test files and modified liblzma during compilation, resulting in a compromised library that could affect software linked against it. (NVD)

CISA described it as a reported supply chain compromise affecting XZ Utils 5.6.0 and 5.6.1, and urged organizations to take immediate mitigation actions. (CISA)

If you want a mental model: this was less “buffer overflow” and more “someone swapped your factory’s calibration tool so every unit coming off the line contains a hidden defect that only shows up in the field.”

Affected versions, likely exposure, and why the answer depends on your distro

At the center is a narrow version window. The highest-confidence affected upstream versions were XZ Utils 5.6.0 and 5.6.1 release tarballs. (tukaani.org)

But operational risk depends on whether those versions actually shipped into your environment through your Linux distribution packages, images, CI base containers, or internal mirrors.

Quick reference table — what to check first

| What you are checking | Why it matters | How to check fast |
|---|---|---|
| xz / liblzma package version | Known-bad window starts here | xz --version and package manager queries |
| Whether sshd can reach the compromised code path | The reported impact path involved SSH authentication flow under specific conditions | Inspect dynamic dependencies, packaging patches, and systemd linkage on your distro |
| Whether the installed liblzma came from compromised artifact chain | The “repo vs tarball” split is the entire point | Validate package provenance, signatures, and distro advisories; don’t rely on “GitHub source looks clean” |

The upstream maintainer of xz published a fact page emphasizing that the backdoor was in release tarballs and that tarballs created and signed by the malicious actor were distinct from safe ones. (tukaani.org)

That single detail is the headline for defenders: your review process might be staring at the wrong artifact.

How the compromise worked — the part most teams need to internalize

The core trick — your source repo can look fine while your release artifacts are poisoned

One of the defining properties of this incident was the artifact/repository mismatch: malicious build logic existed in the release tarballs in a way that would not necessarily appear in the same form inside the public source repository you might audit. (tukaani.org)

NVD explicitly notes the tarballs included extra build instructions — including extra .m4 files — that drove an obfuscated extraction/injection process during build. (NVD)

The injection concept — prebuilt objects hidden in “tests” become production code

Multiple write-ups describe test assets being used as a delivery vehicle for the injected payload and scripts. CrowdStrike’s analysis, for example, discusses obfuscated commands used in the build process and identifies test files involved in the backdoor chain. (CrowdStrike)

LWN’s technical breakdown became a widely referenced explanation of “how it worked” at a systems level, including the discovery story and mechanism-level discussion that helped the community converge on what was happening. (LWN.net)

Why SSH showed up in the story even though OpenSSH doesn’t “use xz” in a normal mental model

A lot of engineers’ initial reaction was “OpenSSH doesn’t link liblzma, so why do I care?” Upstream OpenSSH indeed does not, but real systems are not clean diagrams. Distros patch, services load other components, and dependency graphs contain surprising edges. On several distributions, sshd is patched to link against libsystemd for service notification, and libsystemd in turn depends on liblzma, which places the library inside the sshd process on those builds. Several incident recaps discuss the relationship between liblzma, system integration choices, and the potential for OpenSSH server compromise under certain conditions. (SSH)

The defensive lesson is not “remember this one weird graph,” but “assume your prod graph contains edges you didn’t design, then build verification to discover them.”

Practical verification — prove you are not exposed, don’t just assume you’re patched

Step 1 — identify xz and liblzma versions across a host

Use multiple views: binary version, package manager version, and library file version.

# 1) xz binary version

xz --version

# 2) Debian/Ubuntu

dpkg -l | grep -E '^ii[[:space:]]+(xz-utils|liblzma)'

# 3) RHEL/Fedora

rpm -qa | grep -E '^(xz|xz-libs|liblzma)'

# 4) Alpine (-v prints name plus version)

apk info -v | grep -E '^(xz|xz-libs)-'

If you see 5.6.0 or 5.6.1 in environments that plausibly shipped them, treat it as a stop-the-line event until proven otherwise. This aligns with the public advisory framing around the affected versions. (CISA)
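When you are collecting version strings from many hosts, it can help to normalize them before comparing. The sketch below is a minimal, hypothetical helper (the name `classify_xz_version` and its output labels are illustrative, not part of any tool). It assumes Debian-style epochs (`1:`) and the Debian `+really` convention, where a string such as `5.6.1+really5.4.5-1` actually ships 5.4.5 code. A result outside the known-bad window is a triage signal only, not proof of a clean package.

```shell
#!/usr/bin/env bash
# Hypothetical helper: classify a package or upstream xz version string.
# Prints one of: known-bad | not-in-known-bad-window | unparsed
classify_xz_version() {
  # Drop a Debian-style epoch prefix such as "1:" if present.
  local raw="${1#*:}"
  # Debian's "+really" convention: the real upstream version follows the marker.
  case "$raw" in
    *+really*) raw="${raw#*+really}" ;;
  esac
  # Keep only the leading MAJOR.MINOR.PATCH part; ignore distro suffixes.
  local upstream
  upstream="$(printf '%s\n' "$raw" | grep -oE '^[0-9]+\.[0-9]+\.[0-9]+' || true)"
  case "$upstream" in
    5.6.0|5.6.1) echo "known-bad" ;;
    "")          echo "unparsed" ;;
    *)           echo "not-in-known-bad-window" ;;
  esac
}
```

One possible use is `classify_xz_version "$(xz --version | awk 'NR==1 {print $NF}')"`, which feeds the last field of the first line of `xz --version` into the helper. Anything reported as `unparsed` deserves a manual look rather than a pass.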

Step 2 — check whether sshd or its reachable dependency chain loads liblzma

This is not about “is it supposed to,” it’s about “does it, here, now.”

# Does sshd depend on liblzma directly?

ldd "$(command -v sshd)" | grep -i lzma || true

# What does sshd load at runtime?

sudo lsof -p "$(pgrep -o sshd)" 2>/dev/null | grep -i lzma || true

# If systemd is in play, inspect the chain

ldd "$(command -v sshd)" | grep -i systemd || true

Different distros and packaging choices can affect linkage and loaded modules; treat this as a measurement exercise, not a debate exercise. (SSH)
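To turn that measurement into something you can aggregate across hosts, one option is to reduce `ldd` output to a single summary line. The helper below is a sketch under stated assumptions: the function name and the `key=value` output format are hypothetical, and it only inspects whatever text it is given on stdin. Because `ldd` lists transitive dependencies, a `liblzma=yes` result may reflect an indirect edge (for example through libsystemd) rather than a direct link.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `ldd` output on stdin and summarize whether the
# dependency shape relevant to this incident is present.
summarize_sshd_linkage() {
  local deps has_lzma=no has_systemd=no
  deps="$(cat)"
  # Match the library name at the start of a field or after a path separator.
  if printf '%s\n' "$deps" | grep -qE '(^|[[:space:]/])liblzma\.so'; then
    has_lzma=yes
  fi
  if printf '%s\n' "$deps" | grep -qE '(^|[[:space:]/])libsystemd\.so'; then
    has_systemd=yes
  fi
  echo "liblzma=${has_lzma} libsystemd=${has_systemd}"
}
```

On a host, this could be run as `ldd "$(command -v sshd)" | summarize_sshd_linkage`. A `no` result describes link-time resolution only; it says nothing about libraries loaded later at runtime, which is why the `lsof` check above remains useful.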

Step 3 — confirm your installed liblzma came from a trusted, fixed package stream

CISA’s alert is explicit that this was a supply chain compromise and that organizations should follow vendor/distribution guidance and mitigations. (CISA)

So your proof should include at least one of the following, depending on your environment:

- package signature verification and repository trust settings

- SBOM or provenance record showing the package source

- base image digest pinning to a known-good build, plus rebuild/redeploy evidence

For example, on Debian-like systems:

# Show which repository a package came from

apt-cache policy xz-utils liblzma5

# Verify package integrity metadata where applicable

debsums -s xz-utils liblzma5 2>/dev/null || true

And on RPM-based systems:

rpm -q --qf '%{NAME} %{VERSION}-%{RELEASE} %{SIGPGP:pgpsig}\n' xz xz-libs 2>/dev/null || true

Do not oversell simple file hashing as a universal guarantee, because the point of this incident was that compromise can occur upstream of where your usual checks begin. Use signature/provenance plus distro advisories as your anchor. (tukaani.org)

Detection ideas that actually survive contact with enterprise reality

Fleet-level detection signals

You want signals that scale:

- presence of known-bad package versions in inventories

- unexpected liblzma load events in sshd runtime contexts

- base image lineage drift where CI pulls “latest” instead of pinned digests

- anomalous CPU usage or added latency around SSH authentication on dev/edge hosts, the same kind of signal that multiple recaps credit with the original discovery (LWN.net)

A simple Linux audit script you can adapt

#!/usr/bin/env bash

set -euo pipefail

echo "== Host: $(hostname) =="

echo "-- xz version --"

xz --version 2>/dev/null | head -n 1 || echo "xz not found"

echo "-- package versions --"

if command -v dpkg >/dev/null 2>&1; then

dpkg -l | grep -E '^ii[[:space:]]+(xz-utils|liblzma)' || true

elif command -v rpm >/dev/null 2>&1; then

rpm -qa | grep -E '^(xz|xz-libs|liblzma)' || true

elif command -v apk >/dev/null 2>&1; then

apk info -v | grep -E '^(xz|xz-libs)-' || true

else

echo "No supported package manager detected"

fi

echo "-- sshd linkage --"

if command -v sshd >/dev/null 2>&1; then

ldd "$(command -v sshd)" | grep -Ei '(lzma|systemd)' || echo "No lzma/systemd deps shown by ldd"

else

echo "sshd not found"

fi

This doesn’t “detect the backdoor” by itself. It gives you a fast triage map: who has the versions, and who has the dependency shape that makes the incident relevant.

The hard lesson — this was a build and release boundary failure

Why code review alone is structurally insufficient

If your control strategy is “we review code changes,” you are defending the wrong surface area. NVD’s description highlights that the malicious build behavior extracted a prebuilt object and modified liblzma during build. (NVD)

That is the nightmare scenario for review-centric programs:

- malicious behavior can be encoded in generated artifacts, build scripts, or opaque blobs

- a clean-looking repo does not guarantee a clean release

- release managers, CI pipelines, and distribution channels become first-class targets

Controls that meaningfully reduce xz-class risk

1 Reproducible builds and artifact equivalence checks

Your goal is to make “repo build” and “released artifact” converge. If you cannot reproduce vendor artifacts, you cannot strongly assert integrity.
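As a first approximation of an artifact equivalence check, you can diff an unpacked release tarball against a checkout of the matching tag. The sketch below assumes you have already extracted both into directories; the function name and output labels are hypothetical. Expect noise: Autotools-based release tarballs legitimately contain generated files (such as `configure`) that are not in the repository, so the value is in making every tarball-only file something a human has to explain.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compare an unpacked release tarball with a checkout of
# the tagged source. Prints files that exist only in the tarball or whose
# contents differ, i.e. the files a repo-only review never saw.
# Usage: tarball_only_or_changed <tarball_dir> <repo_dir>
tarball_only_or_changed() {
  local tarball_dir="$1" repo_dir="$2"
  # Enumerate tarball files with stable ordering, then check each in the repo.
  ( cd "$tarball_dir" && find . -type f | LC_ALL=C sort ) |
    while IFS= read -r rel; do
      if [ ! -f "$repo_dir/$rel" ]; then
        echo "only-in-tarball ${rel#./}"
      elif ! cmp -s "$tarball_dir/$rel" "$repo_dir/$rel"; then
        echo "differs ${rel#./}"
      fi
    done
}
```

This is deliberately one-directional: it answers "what did the release add or change relative to the repository", which is the direction that mattered in this incident. It is not a substitute for a real reproducible-build comparison of the resulting binaries.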

2 Signed provenance, not just signed commits

Signed tags help, but what you needed here was an enforceable chain: source → build steps → artifact digest → distribution package.

3 Dependency intake policy that treats maintainership changes as risk events

Supply chain defense is also social engineering defense. Some public discussions after the incident emphasize the broader risk of maintainer takeovers and pressure campaigns in open source ecosystems. (Wikipedia)

4 Base image governance and digest pinning

A surprising amount of exposure comes from “we rebuilt from latest upstream image last night.”
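A lightweight way to start enforcing this is a CI check that flags base images referenced by mutable tag. The following is a minimal sketch, not a full Dockerfile parser: the function name is hypothetical, it assumes one `FROM` instruction per line, and it will also flag multi-stage references to an earlier stage name (for example `FROM builder`), which you would need to allow-list.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print FROM lines in a Dockerfile that are not pinned to
# an immutable digest ("@sha256:<64 hex chars>").
# Returns 0 if every FROM line is pinned (or is "scratch"), 1 otherwise.
find_unpinned_base_images() {
  local dockerfile="$1"
  local unpinned
  unpinned="$(grep -iE '^[[:space:]]*FROM[[:space:]]' "$dockerfile" \
    | grep -vE '@sha256:[0-9a-f]{64}' \
    | grep -viE '^[[:space:]]*FROM[[:space:]]+scratch([[:space:]]|$)' || true)"
  if [ -n "$unpinned" ]; then
    printf '%s\n' "$unpinned"
    return 1
  fi
  return 0
}
```

In a pipeline, `find_unpinned_base_images Dockerfile || exit 1` would fail the build and print the offending lines. Pinning only fixes which image you pull; you still need evidence that the pinned digest itself was built from a known-good package set.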

5 Runtime containment

Even if a library is compromised, least privilege, service hardening, and segmentation should prevent “one compromised dependency becomes full fleet compromise.”

What to do if you find exposure — a response sequence that doesn’t waste your weekend

- Isolate and stop new deployments of affected images or packages

- Roll back to known-good versions per distro guidance and rebuild from pinned dependencies

- Reimage or redeploy, do not “surgically edit” prod nodes in place if you can avoid it

- Rotate credentials and keys relevant to SSH access and CI/build pipelines, because an attacker with access to the SSH authentication path would plausibly aim for lateral movement and persistence

- Capture evidence: package metadata, image digests, build logs, and host telemetry for later root-cause and compliance narratives

- Write the post-incident control delta: the controls you will enforce next time, not the ones you wish existed
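For the evidence-capture step in the sequence above, a small per-host bundle is often enough to start with. The sketch below is illustrative only: the function name, file names, and directory layout are assumptions, not a standard, and it reuses the same commands shown earlier in this article. It assumes `sha256sum` from coreutils is available.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a small evidence bundle for this host into a
# directory. File names and layout are illustrative, not a standard.
# Usage: capture_xz_evidence <output_dir>
capture_xz_evidence() {
  local out_dir="$1"
  mkdir -p "$out_dir"
  {
    echo "host: $(uname -n)"
    echo "captured_at_utc: $(date -u +%Y-%m-%dT%H:%M:%SZ)"
  } > "$out_dir/meta.txt"

  # Each step is best-effort: a missing tool is recorded rather than fatal.
  { xz --version 2>&1 || echo "xz not available"; } > "$out_dir/xz-version.txt"
  {
    if command -v dpkg >/dev/null 2>&1; then
      dpkg -l 2>&1 | grep -E 'xz-utils|liblzma' || echo "no matching dpkg packages"
    elif command -v rpm >/dev/null 2>&1; then
      rpm -qa 2>&1 | grep -E '^(xz|xz-libs|liblzma)' || echo "no matching rpm packages"
    else
      echo "no supported package manager detected"
    fi
  } > "$out_dir/packages.txt"
  {
    if command -v sshd >/dev/null 2>&1; then
      ldd "$(command -v sshd)" 2>&1 || echo "ldd failed"
    else
      echo "sshd not found"
    fi
  } > "$out_dir/sshd-ldd.txt"

  # Hash the captured files so later truncation or edits are detectable.
  ( cd "$out_dir" &&
    sha256sum meta.txt xz-version.txt packages.txt sshd-ldd.txt > SHA256SUMS )
}
```

Running `capture_xz_evidence "/tmp/xz-evidence-$(uname -n)"` before and after remediation gives you two comparable snapshots. Image digests and build logs live outside the host and would need to be collected separately.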

CISA’s alert framing is useful for operational tone: treat it as a supply chain compromise event and follow official guidance and mitigations rather than improvising. (CISA)

When teams handled CVE-2024-3094 well, they did two things at once: they verified internal exposure and they validated external attack surface impact. The second part is often where organizations slow down, because it spans inventories, cloud assets, edge services, and messy ownership boundaries.

Penligent can be used as an execution layer to turn your verification checklist into a repeatable workflow: you can scope targets, run evidence-based recon, validate SSH exposure posture, and generate an auditable report that ties “what we checked” to “what we observed.” This is especially relevant when you need to prove that a rollback or redeploy actually removed the risky dependency shape, not just that someone changed a version string.

Separately, xz taught an uncomfortable lesson that also shows up in AI tool ecosystems: the artifact you consume is not always the code you reviewed. Penligent’s own security research content has used CVE-2024-3094 as an anchor example for artifact-versus-repository attacks and for why verification needs to be evidence-based rather than assumption-based. (Penligent)

Key takeaways you can reuse for the next supply-chain incident

CVE-2024-3094 mattered because it compressed three truths into one incident.

First, upstream compromise can be staged over time and delivered through release engineering rather than through obvious commits. (NVD)

Second, “it’s only two versions” is not reassurance if those versions can enter your build graph through images, mirrors, and CI defaults. (CISA)

Third, the only credible posture is provable posture: commands, artifacts, provenance, and runtime observations — not comfort.

References

- National Vulnerability Database entry for CVE-2024-3094 (NVD)

- CISA alert on the reported supply chain compromise affecting XZ Utils (CISA)

- Upstream xz maintainer fact page on the backdoor and affected tarballs (tukaani.org)

- Datadog Security Labs analysis of the xz backdoor (Datadog Security Labs)

- Akamai’s technical overview and mitigation discussion (Akamai)

- Wiz analysis summary for CVE-2024-3094 (wiz.io)

- Rapid7 incident response perspective (Rapid7)

- LWN deep technical explanation of how the backdoor works (LWN.net)

- ClawHub malicious skills and artifact trust lessons (Penligent)

- When SKILL.md becomes an installer, supply-chain execution playbook (Penligent)

- GPT-5.3-Codex bug reports and an xz-class analogy for operational failures (Penligent)

- Anthropic cybersecurity tool in 2026, includes CVE-2024-3094 as a supply-chain anchor (Penligent)