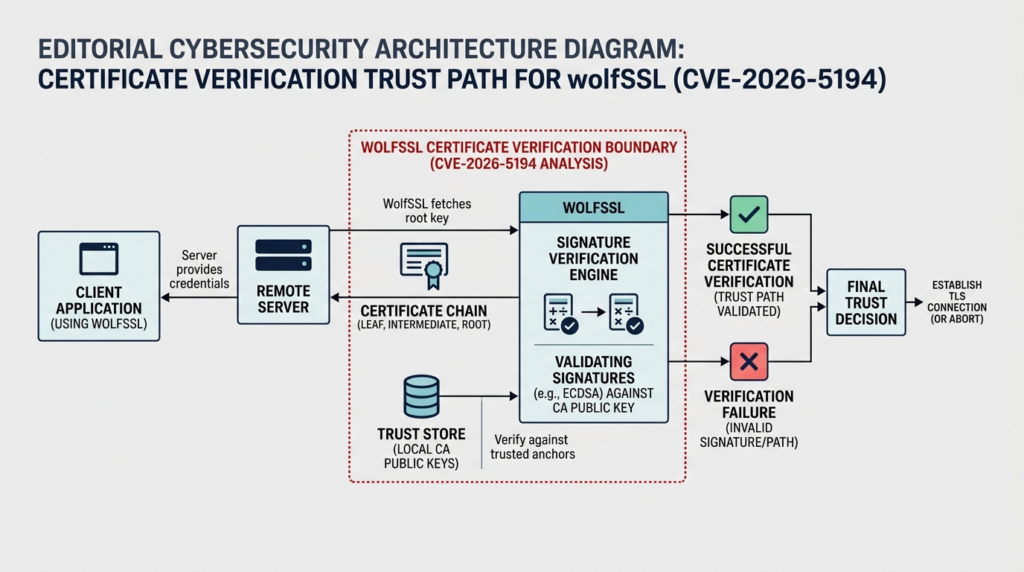

CVE-2026-5194 is a certificate-verification flaw in wolfSSL, not a memory-corruption bug, and that distinction matters. The issue sits in signature validation: missing hash or digest size checks and Object Identifier checks can let signature verification functions accept digests that are smaller than allowed or smaller than appropriate for the key type. NVD lists wolfSSL versions from 3.12.0 up to, but not including, 5.9.1 as affected. wolfSSL shipped the fix in version 5.9.1, and the core patch work landed through PR 10131. NVD currently shows a 9.1 CVSS v3.1 score, while the CNA record on the same page shows a 9.3 CVSS v4.0 score. (nvd.nist.gov)

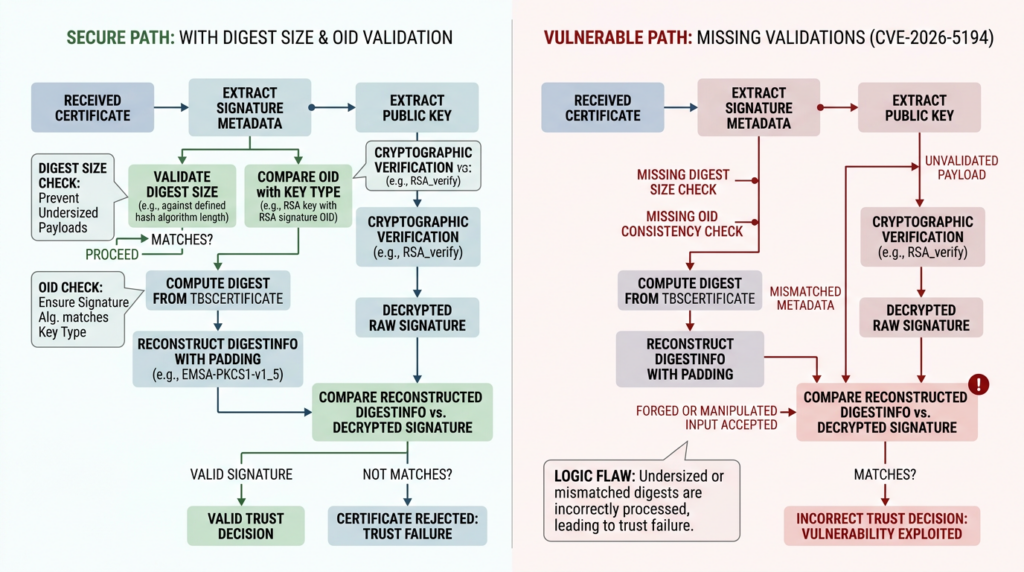

The official wording is precise enough to matter. NVD and the GitHub advisory describe the flaw as a condition where missing digest-size and OID checks weaken ECDSA certificate-based authentication and note that the affected path is ECDSA or ECC verification when EdDSA or ML-DSA is also enabled. wolfSSL’s own 5.9.1 release notes broaden the implementation view by saying the missing digest-size and OID checks touched multiple algorithms, including ECDSA or ECC, DSA, ML-DSA, Ed25519, and Ed448, and then make the practical recommendation that builds performing certificate verification with both ECC and EdDSA or ML-DSA enabled should be updated. Read together, those sources describe a narrow but serious trust failure: not every wolfSSL deployment is equally exposed, but the ones that depend on certificate verification in the affected paths should treat this as a real authentication problem, not as cosmetic hardening. (nvd.nist.gov)

The timeline is short and useful. The upstream patch set was merged on April 6, 2026. wolfSSL released 5.9.1 on April 8, 2026. NVD published the CVE on April 9 and later modified the entry on April 16. wolfSSL’s release notes credit Nicholas Carlini of Anthropic for reporting the issue. The same 5.9.1 release also addressed a broader batch of security issues, so upgrading only “for one CVE” understates the security delta between 5.9.0 and 5.9.1. (GitHub)

CVE-2026-5194 in one page

| Item | Confirmed detail |
|---|---|
| Vulnerability | CVE-2026-5194 |
| Product | wolfSSL |
| Vulnerable range in NVD | 3.12.0 up to, but not including, 5.9.1 |
| Core problem | Missing digest-size and OID checks in signature verification |
| Main impact statement | Reduced security of ECDSA certificate-based authentication |
| Publicly named condition in NVD and GHSA | ECDSA or ECC verification when EdDSA or ML-DSA is also enabled |
| Upstream fixed version | 5.9.1 |
| Upstream patch reference | PR 10131 |
| CWE | CWE-295, Improper Certificate Validation |
| Severity views | NVD CVSS v3.1 9.1, CNA CVSS v4.0 9.3 |
| Distro nuance | Debian package notes narrow risk in some builds, Ubuntu still shows medium priority and several releases under evaluation |

Each item in the table above comes from the official CVE and NVD records, wolfSSL’s 5.9.1 release materials, the upstream patch, and current distro tracking pages. (nvd.nist.gov)

That last row is more than paperwork. One of the fastest ways to mishandle CVE-2026-5194 is to stop after the headline score. NVD calls it critical at 9.1 in CVSS v3.1, and the CNA score shown on NVD is 9.3 in CVSS v4.0. Ubuntu currently labels it medium and still shows supported releases as needing evaluation, while Debian’s tracker distinguishes package states and adds notes that narrow the problem in some build configurations. That does not mean the flaw is harmless. It means crypto-validation bugs often require a more exact reading of version, build options, trust path, and packaging than a generic library bug. (nvd.nist.gov)
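
Version is the first of those readings, and the NVD range is a half-open interval that plain string comparison gets wrong (as a string, a version such as 5.10.0 would sort before 5.9.1). The sketch below is a minimal POSIX shell check, assuming a plain dotted upstream version string with numeric fields only. The function names `ver_cmp` and `in_affected_range` are illustrative, and a `not-in-range` result says nothing about distro backports, build options, or trust path.

```shell
# Compare two dotted versions field by field; prints -1, 0, or 1.
# Assumes numeric fields only (no "-stable" or distro suffixes).
ver_cmp() {
  a=$1 b=$2
  while [ -n "$a" ] || [ -n "$b" ]; do
    x=${a%%.*}; y=${b%%.*}
    [ "$a" = "$x" ] && a="" || a=${a#*.}
    [ "$b" = "$y" ] && b="" || b=${b#*.}
    x=${x:-0}; y=${y:-0}          # missing fields count as 0
    if [ "$x" -lt "$y" ]; then echo -1; return; fi
    if [ "$x" -gt "$y" ]; then echo 1; return; fi
  done
  echo 0
}

# NVD range: 3.12.0 inclusive up to, but not including, 5.9.1.
in_affected_range() {
  v=$1
  if [ "$(ver_cmp "$v" 3.12.0)" -ge 0 ] && [ "$(ver_cmp "$v" 5.9.1)" -lt 0 ]; then
    echo affected
  else
    echo not-in-range
  fi
}
```

A call such as `in_affected_range "$(pkg-config --modversion wolfssl)"` gives a first answer for a system package, which then has to be checked against the distro tracker before anyone relies on it.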

CVE-2026-5194 and what actually failed inside signature verification

The easiest way to misunderstand this bug is to treat it as a vague “certificate validation issue” and move on. Certificates do not become trusted because they exist. They become trusted because a verifier checks a chain of signatures and associated metadata under very specific rules. In an ECDSA-signed certificate path, the verifier is supposed to evaluate whether the signature structure, the claimed algorithm information, the digest properties, and the key material all line up with what the signing and verification procedure expects. If the verifier accepts a digest that is too small, or accepts an OID relationship that should have been rejected, then the trust decision stops matching the intended security model of the signature scheme. (nvd.nist.gov)

NIST’s digital-signature guidance gives the right frame for why this matters. FIPS 186-5 states that for RSA, ECDSA, and HashEdDSA, the verifier generates the message digest using the same hash function used during signature generation, and it ties valid verification to additional assurances around the public key and associated parameters. FIPS 186-5 also says that for ECDSA, the approved hash or XOF should provide security strength that is the same as or greater than that of the key pair, and warns that using a weaker hash reduces the signature process’s security strength. FIPS 186-4 makes the same security point in older language. The standards are not saying “a small digest is a stylistic preference.” They are saying that the choice and handling of the digest are part of the security boundary. (nvlpubs.nist.gov)
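
To make the strength rule concrete, here is a small sketch that compares a curve size against a digest size. It assumes the comparable-strength ranges for ECC keys from NIST SP 800-57 Part 1 and treats a hash's strength for signatures as half its output length. The function names are illustrative, and this is a reading aid for the FIPS language, not wolfSSL code.

```shell
# Security strength in bits for an ECC key of the given size,
# using the SP 800-57 Part 1 comparable-strength ranges.
ecc_strength() {
  bits=$1
  if   [ "$bits" -ge 512 ]; then echo 256
  elif [ "$bits" -ge 384 ]; then echo 192
  elif [ "$bits" -ge 256 ]; then echo 128
  elif [ "$bits" -ge 224 ]; then echo 112
  else echo 0                      # below the ranges this sketch models
  fi
}

# Succeeds only if the digest offers at least the key's strength.
# Collision resistance is taken as half the digest length in bits.
digest_is_strong_enough() {
  curve_bits=$1 digest_bits=$2
  need=$(ecc_strength "$curve_bits")
  have=$((digest_bits / 2))
  [ "$need" -gt 0 ] && [ "$have" -ge "$need" ]
}
```

Under these assumptions, `digest_is_strong_enough 256 256` succeeds (P-256 with SHA-256), while `digest_is_strong_enough 384 256` fails because a 256-bit digest offers 128 bits of strength against a 192-bit key.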

That is exactly why the upstream patch is so informative. PR 10131 adds support for minimum and maximum digest-size handling, introduces SigOidMatchesKeyOid(), and adds additional size and OID agreement checks around signature generation and verification operations. The downstream code changes show new rejection logic for bad digest sizes and explicit expectation changes from a generic parse error to a more specific signature OID error in tests. In plain English, wolfSSL did not just shuffle code around. It added concrete checks that reject inputs which previously had too much room to pass. (GitHub)

The OID side of the bug matters as much as the digest side. In X.509 and related cryptographic formats, OIDs tell the verifier what algorithm and parameter family the signature is supposed to belong to. Loose OID handling is dangerous because the verifier may stop making the same algorithm assumptions the signer and the surrounding certificate structure require. The patch’s introduction of a dedicated OID-matching helper is a strong signal that the fix is not merely about a single boundary check. It is about re-establishing consistency between the signature metadata and the key or algorithm context used during verification. That is an implementation detail, but it is also the difference between “the certificate is cryptographically bound to the right algorithm story” and “the verifier is being too forgiving.” (GitHub)
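
The idea of OID agreement can be sketched in a few lines. The example below is not the upstream `SigOidMatchesKeyOid()` implementation, whose internals are not reproduced here. It is a hypothetical shell function that maps a handful of standard signature-algorithm OIDs (in dotted form) to the public-key OID they are allowed to pair with, and rejects everything else.

```shell
# Succeeds only if the signature-algorithm OID is consistent with the
# public-key algorithm OID. Covers a small, well-known subset of OIDs.
sig_oid_matches_key_oid() {
  sig=$1 key=$2
  case "$sig" in
    1.2.840.10045.4.3.[1-4])
      want=1.2.840.10045.2.1 ;;        # ecdsa-with-SHA2 family -> id-ecPublicKey
    1.2.840.113549.1.1.11|1.2.840.113549.1.1.12|1.2.840.113549.1.1.13)
      want=1.2.840.113549.1.1.1 ;;     # shaNNNWithRSAEncryption -> rsaEncryption
    1.3.101.112|1.3.101.113)
      want=$sig ;;                     # Ed25519 / Ed448 use one OID for key and signature
    *)
      return 1 ;;                      # unknown signature OID: fail closed
  esac
  [ "$key" = "$want" ]
}
```

The design choice worth noticing is the default branch. A verifier that does not recognize the pairing refuses it, instead of letting an unexpected combination fall through to a more forgiving code path.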

This is also why the official impact statement sounds slightly abstract if read too quickly. NVD says the flaw could lead to reduced security of ECDSA certificate-based authentication if the public CA key used is also known. That phrasing is not saying there is some unexpected leak of the CA’s public key. Public verification keys are supposed to be public. The point is subtler and more important: certificate-based authentication relies on the fact that a public verification key can safely validate signatures only because the math and the validation rules are enforced correctly. Once the verifier starts accepting signature inputs that the scheme should reject, the trust you place in a certificate chain is no longer backed by the intended verification discipline. (nvd.nist.gov)

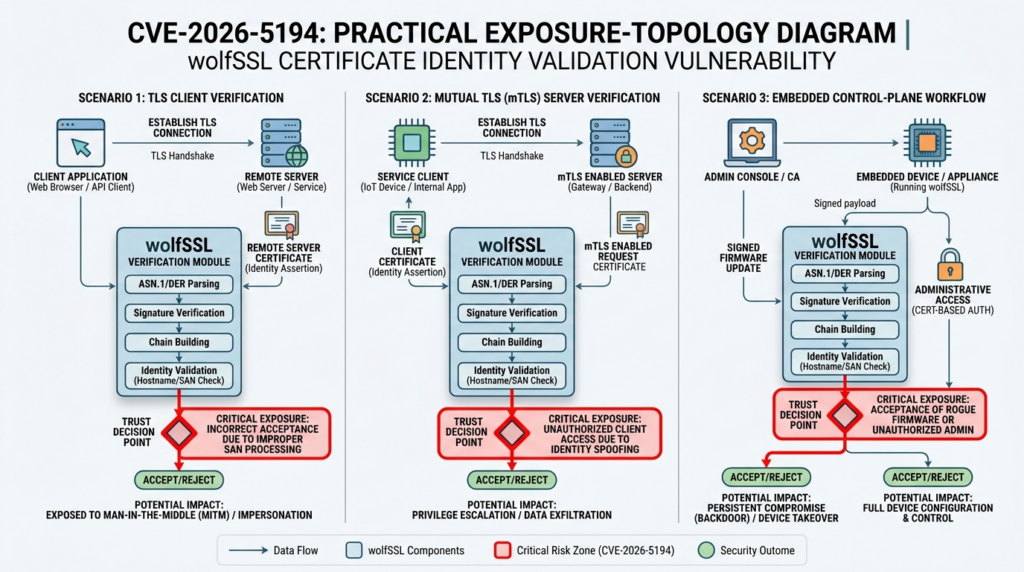

That distinction makes CVE-2026-5194 a real authentication flaw rather than a nuisance. There is no need to invent a dramatic exploit chain to justify urgency. A certificate-verification defect is already a high-value problem whenever certificate acceptance gates a session, a device identity, an API trust boundary, a control-plane login, or a mutual-authentication path. In those cases, the dangerous event is not a crash. The dangerous event is a trust decision that should have failed but did not. (nvd.nist.gov)

CVE-2026-5194 and the upstream fix, line by line in practical terms

The upstream materials give enough patch evidence to move beyond hand-waving. PR 10131 says it adds new FIPS constants, support for custom minimum and maximum digest sizes, SigOidMatchesKeyOid(), and WC_MIN_DIGEST_SIZE, along with additional size and OID agreement checks for signature generation and verification operations. It also updates ECC test vectors with FIPS 186-5 vectors. That last change matters because cryptographic fixes are much stronger when the test suite is updated to match the security rules the implementation is supposed to honor. (GitHub)

The code diff makes the enforcement visible. In the ECDSA verification path, the patched code checks whether the digest length is greater than the maximum or smaller than the minimum and rejects it if either bound is violated. The surrounding tests also move toward expecting explicit OID mismatch behavior. That is a strong indicator that the bug was not limited to a malformed-input edge case in parsing. The fix enforces semantic constraints that the verification logic should have enforced all along. (GitHub)
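
The shape of that bounds check is simple enough to sketch. The limits below are hypothetical values chosen for illustration (the SHA-224 and SHA-512 output sizes in bytes); they are not the values wolfSSL assigns to `WC_MIN_DIGEST_SIZE` or its maximum, which should be read from the patched source.

```shell
MIN_DIGEST_BYTES=28   # hypothetical floor for this sketch (SHA-224 output size)
MAX_DIGEST_BYTES=64   # hypothetical ceiling for this sketch (SHA-512 output size)

# Reject any digest length outside the configured bounds before the
# signature math is attempted at all.
check_digest_len() {
  len=$1
  if [ "$len" -lt "$MIN_DIGEST_BYTES" ] || [ "$len" -gt "$MAX_DIGEST_BYTES" ]; then
    echo reject
    return 1
  fi
  echo accept
}
```

With these limits, `check_digest_len 32` accepts a SHA-256-sized digest and `check_digest_len 4` rejects a truncated one, which is the class of input the missing check previously left room for.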

The 5.9.1 release notes add two practical clues. First, they say the issue affected multiple algorithm families, which suggests the relevant validation logic had broader reach than one might guess from the headline description alone. Second, they narrow the upgrade recommendation to builds that both perform certificate verification and have ECC plus EdDSA or ML-DSA enabled. That practical recommendation is more useful to operators than the raw CVSS score because it points directly at the feature combinations that should move to the front of the remediation queue. (GitHub)

The wolfSSL 5.9.1 release blog adds another useful layer. It calls out CVE-2026-5194 as one of the high-severity cases in the release and recommends all users update, while also noting broader security changes in the same version such as ML-KEM being enabled by default and additional key-validation checks in related algorithm families. In other words, 5.9.1 is not just a point release with one line item. It is the current upstream security baseline. That matters when teams are tempted to cherry-pick severity scores instead of treating the release as a package of security corrections. (wolfSSL)

CVE-2026-5194 and which wolfSSL deployments actually need urgent attention

Not every instance of wolfSSL in an environment carries the same practical risk. The official record narrows the exposed path to certificate verification and calls out build conditions involving ECC with EdDSA or ML-DSA enabled. That means the remediation conversation should begin with one question: where is wolfSSL being used to decide whether a peer, a device, or a service should be trusted on the basis of a certificate? (nvd.nist.gov)

wolfSSL’s own manual helps answer that question for client-side TLS. The documentation states that SSL_VERIFY_PEER in client mode verifies the server certificate during the handshake and notes that this behavior is on by default in wolfSSL. The same documentation also explains that loading CA certificates into a WOLFSSL_CTX creates the trusted roots used to verify the peer certificate during the SSL handshake. Those details mean a standard client deployment that connects outward and trusts public or private CAs is not some fringe edge case. Certificate verification is part of the normal TLS path. (wolfSSL)

That creates a clear prioritization pattern. Internet-facing clients, agents, and embedded devices that connect to remote services over TLS deserve fast review because they may be verifying a server certificate on every outbound connection. Systems that use mutual TLS deserve equal or greater scrutiny because client-certificate verification often gates privileged access. PKI-backed control planes, device-management systems, and internal APIs that treat certificate acceptance as an identity decision should also rank near the top. A local appliance using wolfSSL for unrelated cryptographic functions but not for the affected certificate-verification path still deserves inventory, but its remediation urgency is lower until the path is confirmed. (wolfSSL)

Build configuration is the next filter. wolfSSL documentation exposes build-time support for Ed25519, and its install materials document optional post-quantum support. The official 5.9.1 notes specifically call out ECC together with EdDSA or ML-DSA as the condition worth treating urgently when certificate verification is in play. That does not mean teams should assume they are safe unless they can recite exact macro names from memory. It means they need to verify what their build actually enables instead of reasoning from product marketing or package names alone. (wolfSSL)

Distro packaging makes the picture even more concrete. Debian’s tracker shows multiple release branches carrying vulnerable package versions and notes that the fix maps to the upstream stable merge in 5.9.1. At the same time, Debian adds notes that narrow the issue in some packaged builds. Ubuntu’s tracker currently marks the issue medium and lists several supported releases as needing evaluation. Those pages are not contradictions. They are reminders that package maintainers and platform vendors often apply their own exposure analysis based on the exact shipped build and packaging choices. A mature response to CVE-2026-5194 uses those sources as a second lens, not as a reason to ignore the upstream fix. (security-tracker.debian.org)

Here is a more useful exposure matrix than a generic “critical means patch everything first” line:

| Deployment profile | Why CVE-2026-5194 matters | Priority |
|---|---|---|
| TLS clients that verify remote server certificates | Certificate trust is part of every handshake, and wolfSSL client verification is enabled by default | Highest |
| Services that verify client certificates in mTLS | Certificate acceptance can become an authorization decision for privileged access | Highest |
| PKI-backed device identity or gateway products | A wrong certificate decision can alter device, service, or control-plane trust | High |
| Systems that link wolfSSL but do not use the affected certificate-verification path | Presence alone is not proof of exposure | Medium until verified |
| Builds outside the affected algorithm and feature combination | Still inventory them, but prioritize based on actual configuration and trust path | Lower until confirmed |

The logic behind that table comes from the official flaw description, wolfSSL’s verification model, and distro tracking that shows how exposure changes with packaging and build conditions. (nvd.nist.gov)
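
One possible way to apply the matrix consistently across a large asset list is to reduce it to two questions per asset. The function below is a hypothetical reading of the table, not an official scoring scheme: it takes whether the asset verifies certificates through wolfSSL and whether the ECC plus EdDSA or ML-DSA combination is enabled, each as `yes`, `no`, or `unknown`.

```shell
# Args: verifies_certs (yes|no|unknown), combo_enabled (yes|no|unknown)
# Prints a triage label loosely following the exposure matrix.
triage_priority() {
  verifies=$1 combo=$2
  case "$verifies:$combo" in
    yes:yes)     echo highest ;;                 # affected path and feature combination both confirmed
    yes:unknown) echo high ;;                    # verification confirmed, build still unproven
    yes:no)      echo lower-until-confirmed ;;   # outside the named feature combination
    no:*)        echo medium-until-verified ;;   # linked, but path reportedly unused
    *)           echo needs-inventory ;;         # not enough evidence to rank
  esac
}
```

The point of the `unknown` branches is to keep unproven assets visible in the queue instead of silently dropping them into the lowest bucket.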

CVE-2026-5194 and what a real attacker would need

It is easy to produce two kinds of bad writing about crypto-validation bugs. One kind says the flaw is “just theoretical” because there is no crash or glossy public exploit chain. The other says every wolfSSL deployment on the internet is now one handshake away from impersonation. Neither view is disciplined. The right question is simpler: what must be true for an attacker to turn this implementation failure into a wrong trust decision in a real environment? (nvd.nist.gov)

First, the target has to use the affected verification path. That means the system must be doing certificate verification with wolfSSL in a context that actually matters to authentication or trust. A library present in the filesystem is not enough. An application that never verifies certificates through the affected code path is not the same risk as a device or service that uses wolfSSL to decide whether to trust a remote peer during a handshake. (wolfSSL)

Second, the relevant feature combination has to be live. The official records do not describe this as a universal failure of all certificate validation in wolfSSL. They point to ECDSA or ECC verification when EdDSA or ML-DSA is also enabled, and the vendor release notes repeat that recommendation in operational form. That means build flags, shipped algorithm support, and sometimes package variants determine whether the system actually reaches the risky logic. (nvd.nist.gov)

Third, the attacker has to control or influence a certificate-verification event. On a TLS client, that may mean standing up or steering traffic toward a server that presents a crafted certificate path. In mTLS, it may mean presenting a client certificate to a server that makes authorization decisions from certificate acceptance. In custom PKI workflows, it may mean supplying a signed object or certificate chain to a process that delegates verification to the vulnerable functions. The problem is not that “all traffic is exposed.” The problem is that wherever the vulnerable verifier is responsible for a yes-or-no trust decision, a crafted input might gain acceptance under conditions that should have produced rejection. (nvd.nist.gov)

That is enough to justify action. Authentication flaws do not need loud exploit theater to be dangerous. The business impact comes from what the trust decision controls. If certificate acceptance gates an operational API, a management plane, a mutual-authentication session, a device enrollment workflow, or an identity-bearing connection between services, then a trust failure can be more valuable than many noisy memory-safety bugs because it goes straight to identity and authorization. (nvd.nist.gov)

CVE-2026-5194 is not five other things people will call it

CVE-2026-5194 is not remote code execution. None of the official records describe code execution, memory corruption, or generic parser compromise. The public materials point instead to weakened certificate verification and reduced security of certificate-based authentication. Teams that lump it into “another critical library RCE” will miss what must be tested and what evidence must be preserved after patching. (nvd.nist.gov)

It is also not proof that every TLS deployment using wolfSSL is equally compromised. The official condition is narrower. The exposure is tied to certificate verification, and the released guidance points to builds that combine ECC with EdDSA or ML-DSA. A server using wolfSSL for one set of features and a device using it for outbound certificate verification are not the same operational problem. (nvd.nist.gov)

It is not a bug you can accurately prioritize from CVSS alone. NVD’s score is high. The CNA’s score is also high. Ubuntu’s priority is lower at present. Debian’s tracker narrows some package states. The correct response is not to average those views into a single magic number. The correct response is to ask which trust boundary is using the affected code path, whether the feature combination exists in your shipped build, and whether the component is dynamically or statically linked into something you actually deploy. (nvd.nist.gov)

It is not the kind of issue where the absence of a crash, an IOC-rich exploit URL, or a screaming SIEM alert should calm anyone down. Certificate-validation bugs often fail quietly. Success can be the indicator. If a system accepts a certificate it should have rejected, the application may look healthy while the trust boundary is already broken. (nvd.nist.gov)

How to find CVE-2026-5194 exposure in real environments

Inventory comes first because teams regularly lose days on the wrong asset set. Start by determining where wolfSSL is present, then separate dynamic packages, statically linked binaries, container images, and embedded firmware. A Linux package query is useful, but it should never be the last step, because many operationally important products bundle the library into an appliance image, a device firmware build, or an application-specific binary rather than exposing it as a clean system package. The official distro trackers are still worth checking because they help distinguish upstream version strings from backported package states. (security-tracker.debian.org)

A practical first pass on Linux systems can start with commands like these:

pkg-config --modversion wolfssl 2>/dev/null || true

dpkg-query -W '*wolfssl*' 2>/dev/null || true

rpm -qa | grep -i wolfssl || true

apk info -a 2>/dev/null | grep -i wolfssl || true

find /usr /lib /opt -type f 2>/dev/null | grep -E 'libwolfssl|wolfssl' || true

strings /usr/lib*/libwolfssl.so* 2>/dev/null | grep -i 'wolfSSL' || true

Those commands answer only the presence and visible-version question. They do not tell you whether the application actually uses certificate verification in the affected path, and they do not tell you whether the binary was rebuilt with the right patch if your platform vendor backported the fix without bumping to the upstream version string. That is where package changelogs, vendor advisories, and rebuild evidence matter. Debian and Ubuntu tracking pages are especially useful here because they expose package-level status separately from upstream release numbering. (security-tracker.debian.org)

The next step is to determine whether the vulnerable feature combination is plausible in your build. That usually means checking build scripts, CI flags, generated configuration headers, or source manifests for the relevant algorithm families rather than guessing. A crude but useful source-tree sweep looks like this:

grep -R -i -E 'ed25519|ed448|ml-dsa|dilithium|ecc|certificate verify|SSL_VERIFY_PEER' . 2>/dev/null | head -n 200

That is intentionally broad. The point is not to prove the presence of one exact macro name across every release. The point is to surface evidence that the build enables the algorithms and verification paths the official advisory calls out. wolfSSL’s documentation confirms that Ed25519 support is build-selectable and that certificate verification is part of the default client flow, which is enough to justify this style of review. (wolfSSL)
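
Once the broad sweep has located a generated configuration header, a narrower check can ask the specific question the advisory raises. The sketch below assumes the build exposes its feature macros as `#define` lines in a header such as `wolfssl/options.h` or a `user_settings.h`, and it assumes the macro names `HAVE_ECC`, `HAVE_ED25519`, `HAVE_ED448`, and `HAVE_DILITHIUM`. Those names should be verified against the release you actually ship, because macro naming can differ between versions and build systems.

```shell
# True if the header contains "#define NAME" as a whole macro name.
has_define() {
  grep -E -q "^[[:space:]]*#[[:space:]]*define[[:space:]]+$2([[:space:]]|\$)" "$1"
}

# Reports whether ECC is enabled together with an EdDSA or ML-DSA macro.
# Macro names are assumptions; confirm them for your wolfSSL release.
risky_combo() {
  hdr=$1
  has_define "$hdr" HAVE_ECC || { echo no-ecc; return 1; }
  for m in HAVE_ED25519 HAVE_ED448 HAVE_DILITHIUM; do
    if has_define "$hdr" "$m"; then
      echo "ecc+$m"
      return 0
    fi
  done
  echo ecc-only
  return 1
}
```

Running `risky_combo /usr/include/wolfssl/options.h` on a host with development headers installed is one way to use it. A result of `ecc-only` or `no-ecc` is evidence about that header only, not about a statically linked copy built elsewhere.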

For container images, inventory should combine package-level and binary-level checks because slim images and vendor appliances often hide what they contain. Tools such as SBOM generators are especially helpful when the application vendor statically links wolfSSL or when the base image provides no obvious package metadata. A workflow like the following is crude but effective:

syft <image-or-directory> -o table | grep -i wolfssl || true

docker run --rm <image> sh -lc 'find / -type f 2>/dev/null | grep -E "wolfssl|libwolfssl"'

docker run --rm <image> sh -lc 'strings /usr/lib*/libwolfssl.so* 2>/dev/null | grep -i wolfSSL'

For firmware and embedded products, assume static linking until proven otherwise. That means extracting the image and searching both metadata and strings, because the vulnerable code may be buried inside a proprietary daemon rather than a separate shared library. A simple first pass might look like this:

binwalk -e firmware.bin

find _firmware.bin.extracted -maxdepth 5 -type f 2>/dev/null | while read -r f; do

strings "$f" 2>/dev/null | grep -q -i wolfssl && echo "$f"

done

syft dir:_firmware.bin.extracted -o table | grep -i wolfssl || true

The operational reason to do this is straightforward. If the product statically linked a vulnerable wolfSSL build, updating the host OS package will not fix the shipped binary. The vendor has to rebuild and redistribute the product or firmware. That is obvious to anyone who works close to build systems, but incident response routinely wastes time rediscovering it during library advisories. (GitHub)

A good inventory record for CVE-2026-5194 should preserve more than a version number. At minimum, keep the component name, the package or binary path, the observed version or package state, whether the library is dynamically or statically linked, evidence of certificate-verification use, evidence of relevant algorithm support, the product owner, and the planned patch or rebuild action. Without that context, teams end up arguing over CVSS while the actual trust boundary remains invisible.

| Evidence to capture | Why it matters |
|---|---|
| Exact package or binary path | Proves which component actually ships wolfSSL |
| Observed version or package status | Distinguishes upstream versioning from distro backports |
| Dynamic vs static linkage | Determines whether OS-level package updates are sufficient |
| Proof of certificate-verification usage | Presence alone is not exposure |
| Evidence of relevant algorithm support | Official exposure is tied to feature combinations |
| Product owner and deployment context | Determines remediation path and business priority |
| Retest status after patch | Confirms the trust boundary was revalidated |

The evidence model above follows directly from the upstream description, distro status pages, and the fact that certificate verification and algorithm enablement are the central exposure conditions. (nvd.nist.gov)
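
A record like that is easier to keep honest if it is written in a fixed shape from the start. The helper below is a minimal sketch that prints one tab-separated row per asset. The field order (path, version or status, linkage, verification evidence, algorithm evidence, owner, retest status) is a hypothetical layout mirroring the table, and any field not supplied is recorded as `unknown` instead of being left blank.

```shell
# Print one tab-separated inventory row with seven fields:
# path, version/status, linkage, cert-verify evidence,
# algorithm evidence, owner, retest status.
inventory_row() {
  printf '%s\t%s\t%s\t%s\t%s\t%s\t%s\n' \
    "${1:-unknown}" "${2:-unknown}" "${3:-unknown}" "${4:-unknown}" \
    "${5:-unknown}" "${6:-unknown}" "${7:-unknown}"
}
```

For example, `inventory_row /opt/agent/bin/agentd 5.8.4 static >> wolfssl-inventory.tsv` (with a made-up path and version) appends a row whose last four fields read `unknown`, which makes the remaining evidence gaps explicit.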

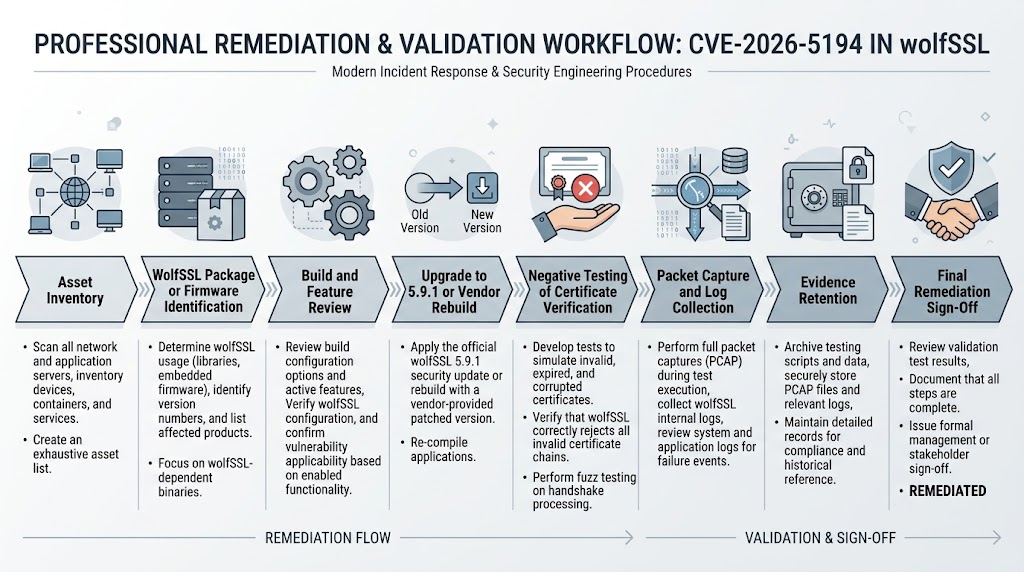

How to patch CVE-2026-5194 without fooling yourself

The clean upstream answer is simple: move to wolfSSL 5.9.1 or later. The hard part is proving that your running asset actually did. Upstream shipped the fix in 5.9.1, and the release notes tie CVE-2026-5194 directly to that version. If you build from source or vendor wolfSSL directly in your software, PR 10131 is the patch lineage that matters. If you consume distro packages, you need to verify the package maintainer’s status page and changelog rather than assuming that every fix comes with an upstream-looking version string. (GitHub)

This is where many teams make their first operational mistake. They patch the shared library package on the OS and declare victory, even though the exposed service is a vendor appliance with a statically linked copy inside its own runtime. Or they see a version string that is lower than 5.9.1 and panic, even though the distro has already backported the relevant fix. Both mistakes come from treating “library update” as a single pattern when there are really at least three: upstream source consumption, distro package consumption, and bundled or statically linked vendor builds. The official distro trackers make that distinction visible enough that no team should skip the check. (security-tracker.debian.org)

For systems you own end to end, the remediation sequence should be mechanical. Pin or upgrade to 5.9.1 or newer, rebuild the binary, redeploy the artifact, and preserve evidence of the rebuilt dependency and the negative test outcome. For distro-managed systems, query the package state and changelog. For vendor products, ask for an explicit statement of which wolfSSL code lineage they shipped and whether their build includes the PR 10131 fix set or an equivalent backport. “We updated our crypto stack recently” is not a useful answer for a certificate-validation bug. (GitHub)

A simple package-side validation sequence can look like this:

pkg-config --modversion wolfssl 2>/dev/null || true

apt-cache policy libwolfssl* 2>/dev/null || true

apt changelog libwolfssl* 2>/dev/null | head -n 80 || true

rpm -q wolfssl --changelog 2>/dev/null | head -n 80 || true

For vendor binaries and appliances, keep a separate validation checklist. Ask whether the product statically links wolfSSL. Ask whether certificate verification occurs in any outbound client, mutual-TLS, or custom PKI path. Ask whether EdDSA, Ed25519, Ed448, or ML-DSA support is enabled in the shipped build. Ask for the exact fixed build number. Ask for any negative-test evidence the vendor ran after integrating the patch. The more the vendor answers in slogans instead of artifacts, the more your own retest burden rises.

Temporary mitigations are possible but should be described honestly. If the vulnerable component does not need to perform the affected certificate-verification path, reducing or disabling the relevant feature combination may lower risk while a full upgrade is prepared. If TLS or certificate validation can be terminated in a separate, trusted boundary that is already patched and properly verified, that may shrink the blast radius for some topologies. But neither of those is a substitute for fixing the vulnerable verifier itself. A trust-boundary flaw remains a trust-boundary flaw until the code path making the decision is corrected. (nvd.nist.gov)

Another practical reason to prefer the full 5.9.1 upgrade is that wolfSSL’s release blog says the release addressed 22 CVEs. Even if CVE-2026-5194 is the issue currently driving your incident queue, the release moved the upstream security baseline in a broader way. Teams that try to talk themselves into “we only care about this one item” are usually creating a second cycle of avoidable remediation work. (wolfSSL)

How to verify the CVE-2026-5194 fix after patching

The strongest post-patch evidence for a trust bug is not a screenshot of a version string. It is a failed trust decision where the vulnerable build would have been at risk of accepting input it should reject. That shifts validation away from the usual “does the service still come up” smoke test and toward controlled negative testing. (GitHub)

A practical validation flow has five steps. First, prove the patched dependency or backported package is present in the running artifact. Second, record whether the application actually performs certificate verification in the path you care about. Third, prepare test material that exercises the certificate-validation boundary. Fourth, compare behavior before and after patching if a lab copy of the old build is available. Fifth, preserve enough evidence that another engineer can repeat the test and reach the same conclusion. That last step matters because certificate-validation issues often create disagreement between security, engineering, and product teams unless the evidence chain is clean. (nvd.nist.gov)

In practice, that means your retest artifact set should include the target identity, the exact build identifier, the certificate chain used during the test, the handshake or verification logs, the expected outcome, the observed outcome, and the command or request that triggered the path. For mTLS or custom PKI flows, keep both sides of the conversation if possible. A packet capture, a server-side log, and a client-side failure record together are far more persuasive than a single text note that says “fixed and verified.” (penligent.ai)
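
One way to make that artifact set tamper-evident and easy to hand over is to hash everything in the evidence directory. The sketch below assumes either `sha256sum` or `shasum` is available on the host; the function name `write_manifest` and the manifest filename `MANIFEST.sha256` are illustrative choices.

```shell
# Write SHA-256 hashes of every file under the evidence directory
# into MANIFEST.sha256 inside that directory.
write_manifest() {
  dir=$1
  (
    cd "$dir" || exit 1
    find . -type f ! -name MANIFEST.sha256 | sort | while read -r f; do
      if command -v sha256sum >/dev/null 2>&1; then
        sha256sum "$f"
      else
        shasum -a 256 "$f"        # fallback where sha256sum is missing
      fi
    done > MANIFEST.sha256
  )
}
```

Running `write_manifest retest-evidence` after the capture, logs, and certificate chain are copied in gives another engineer a way to confirm they are looking at the same files the original tester used.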

That evidence-first mindset is exactly where AI-assisted offensive and validation tooling can either help or hurt. A generic AI summary that says a certificate issue is “resolved” adds almost no value. What matters is whether the workflow preserves the artifacts another human can inspect and replay. Penligent’s public reporting and product materials get that part right: they frame testing and reporting as a chain of scope control, validation, proof, and reproducible evidence rather than as a polished paragraph generator. For a flaw like CVE-2026-5194, that model is more useful than any amount of fluent prose because the decisive question is whether the trust boundary fails closed after the fix. (penligent.ai)

A concise negative-test checklist can look like this:

# 1. Confirm the running artifact or package state

pkg-config --modversion wolfssl 2>/dev/null || true

# 2. Record cert-verification settings and trust roots

grep -R -i -E 'SSL_VERIFY_PEER|CAfile|CApath|trust store|client cert|mTLS' /etc /opt /app 2>/dev/null

# 3. Exercise the certificate-verification path in a lab or staging copy

# Preserve packet capture and application logs while presenting the chosen cert material

tcpdump -i any -w cve-2026-5194-retest.pcap host <target-ip> and port <tls-port>

# 4. Save logs and the exact certificate chain used

mkdir -p retest-evidence

cp cve-2026-5194-retest.pcap retest-evidence/

cp <app-log-path> retest-evidence/ 2>/dev/null || true

Those commands are intentionally ordinary. The important part is not the elegance of the shell. It is the discipline of preserving the exact evidence that shows the trust decision now fails where it should. (wolfSSL)

Another operational detail is worth saying plainly. For a certificate-validation flaw, the absence of a successful connection can be the success condition. Security teams are used to looking for positive exploit proof. Here, the strongest proof after patching may be a controlled failure with clean logs and a preserved capture showing that the connection or verification step no longer proceeds under the bad input. That is not anticlimactic. That is how trust-boundary bugs are properly closed. (nvd.nist.gov)
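A negative test of that kind can be expressed as a wrapper that passes only when the wrapped command fails. This is a minimal sketch under stated assumptions: `expect_reject` is a hypothetical helper name, and the command passed to it stands in for whatever client or harness drives the handshake in your lab.

```shell
# Sketch: treat a failed handshake as the passing result of a negative test.
# "$@" is a placeholder for the lab command that presents the bad certificate.
expect_reject() {
  if "$@" >/dev/null 2>&1; then
    echo "FAIL: verification succeeded but rejection was expected"
    return 1
  fi
  echo "PASS: verification was rejected as expected"
  return 0
}
```

The wrapper cannot tell a verification failure from an unrelated one such as an unreachable host, which is why the logs and the capture still have to show that the rejection happened at the certificate-verification step.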

Why CVE-2026-5194 is hard to detect from logs alone

Many vulnerability classes produce obvious telemetry. A web exploit might leave a path, a payload, or an exception. A memory-corruption bug might crash. A brute-force attempt might spike auth failures. CVE-2026-5194 does not naturally behave like that. The vulnerable event is a cryptographic trust decision happening inside a verifier. If the flaw is exercised successfully, the external signal may be little more than a connection or certificate validation succeeding when it should have failed. (nvd.nist.gov)

That pattern is familiar from related certificate-trust bugs. Apache Tomcat’s CVE-2026-24734 involved incomplete OCSP verification and freshness checks that could allow revocation to be bypassed. Apache Log4j’s CVE-2025-68161 involved missing TLS hostname verification in the Socket Appender path, and Apache later documented that even the initial fix was incomplete in one configuration route. In both cases, the real security problem was not a dramatic exploit artifact. It was a trust-control failure under conditions where operators might wrongly assume that “TLS is already on” means the boundary is secure. (tomcat.apache.org)

The detection lesson is simple. For CVE-2026-5194, control validation is more reliable than passive log hunting. That means tracking where certificate acceptance should fail, testing those boundaries directly, and preserving the evidence. It also means looking for suspiciously successful certificate-based interactions in high-value paths such as mTLS entry points, device-authentication channels, and outbound service connections from security-sensitive agents or appliances. Those may not give you a clean CVE-shaped indicator, but they will give you the operational truth that matters. (nvd.nist.gov)

Where logging does help, it helps as supporting evidence. Keep server-side handshake logs if the application exposes them. Keep packet captures for lab retests. Record the peer certificate chain. Record the trust store in use at test time. If the system supports certificate-validation callbacks or debug output, preserve the return paths. Those artifacts will not magically say “CVE-2026-5194 happened,” but they can prove that the certificate-verification control behaved correctly or incorrectly under a known test case. That is the level of evidence worth collecting. (wolfSSL)
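Supporting evidence is easier to trust later if it is hashed at collection time. The sketch below writes a SHA-256 manifest for a directory such as `retest-evidence`. The helper name `write_evidence_manifest` and the manifest file name are assumptions for illustration, and the code assumes `sha256sum` from GNU coreutils is available (`shasum -a 256` is the usual substitute on macOS).

```shell
# Sketch: hash every file in an evidence directory so artifacts can be re-checked later.
# Assumes sha256sum is installed; MANIFEST.sha256 is an illustrative file name.
write_evidence_manifest() {
  dir="$1"
  (
    cd "$dir" &&
      find . -type f ! -name MANIFEST.sha256 -exec sha256sum {} + |
      sort -k 2 > MANIFEST.sha256
  )
}
```

After a handoff, running `sha256sum -c MANIFEST.sha256` inside the directory shows whether the capture, logs, chain, and trust-store copy are still the files that were collected during the test.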

Related trust-boundary CVEs that make CVE-2026-5194 easier to understand

One reason CVE-2026-5194 deserves careful reading is that it belongs to a family of bugs security teams repeatedly underweight: implementation failures in trust evaluation. These bugs often look less cinematic than RCE, yet they strike at the point where software decides who or what is allowed to be believed.

The first two comparison points come from wolfSSL’s own 5.9.1 release notes. CVE-2026-5263 is described there as a case where URI name constraints in certificate chain validation were not enforced. CVE-2026-5501 is described as an issue in the OpenSSL compatibility layer where, in a corner case, a certificate chain could be accepted without checking the leaf signature, although wolfSSL specifically notes that the native TLS handshake is not susceptible to that case. Those are not clones of CVE-2026-5194, but they rhyme. All three are about the difference between “the system is using certificates” and “the system is enforcing the trust rules that make certificates meaningful.” (GitHub)

Apache Tomcat’s CVE-2026-24734 is another useful parallel. Apache says the flaw involved incomplete OCSP verification and freshness checks, which could allow certificate revocation to be bypassed. That is a different control from digest-size or OID validation, but the operational lesson is identical: certificate trust is not one check. It is a stack of checks. If one of those checks is implemented loosely, software may accept an identity that policy says should have been rejected. (tomcat.apache.org)

Apache Log4j’s CVE-2025-68161 belongs in the same conversation because it shows how often teams mistake encrypted transport for verified identity. Apache documented the issue as missing TLS hostname verification in the Socket Appender path, and later clarified that the initial fix was incomplete in one configuration path. Again, the exact mechanics differ from wolfSSL’s signature-verification problem, but the trust lesson is the same: if the verifier does not actually enforce the expected relationship between the peer, the certificate, and the configured trust rule, then “TLS enabled” can become a dangerously misleading comfort signal. (logging.apache.org)

A useful way to compare these bugs is to stop sorting them by vendor and start sorting them by failed trust decision:

| CVE | Product | Trust boundary that failed | Practical consequence |
|---|---|---|---|
| CVE-2026-5194 | wolfSSL | Signature validation in certificate verification | Certificate-based authentication can lose the intended cryptographic assurance |
| CVE-2026-5263 | wolfSSL | URI name constraints in chain validation | Certificates may be accepted outside intended constraints |
| CVE-2026-5501 | wolfSSL OpenSSL compatibility layer | Leaf-signature check in a corner chain-acceptance case | A chain can be accepted incorrectly in that compatibility path |
| CVE-2026-24734 | Apache Tomcat | OCSP verification and freshness checks | Revoked certificates may still be accepted |
| CVE-2025-68161 | Apache Log4j Core | TLS hostname verification | Connections may trust the wrong peer identity |

The value of that table is not taxonomy for its own sake. It is that it pushes remediation and validation toward the right engineering question: what exact trust rule failed, and how do you prove the software now enforces it? (GitHub)

What CVE-2026-5194 should change for red teams, pentesters, and security buyers

For red teams and pentesters, CVE-2026-5194 is a reminder that certificate and PKI weaknesses are rarely captured by shallow scanning alone. You can fingerprint a library version with scanners and still miss the operational truth if you do not verify whether the target actually uses the affected path and whether the trust decision can be exercised in a realistic handshake or certificate-validation flow. The hard middle of the work is contextual validation, not CVE enumeration. (nvd.nist.gov)

For defenders, the main lesson is to stop treating “TLS is enabled” as a finished sentence. Trust on a modern system depends on several independent checks: signature validation, algorithm consistency, name validation, revocation handling, hostname enforcement, certificate constraints, and the way the application uses the result. Recent issues across wolfSSL, Tomcat, and Log4j show how often the break happens in exactly that layer of implementation detail. (GitHub)

For technical buyers evaluating AI-assisted offensive tooling or automated validation platforms, this CVE points to a useful buying criterion. The product that matters most is not the one that produces the most polished summary of the vulnerability. It is the one that can help you inventory the exposure, preserve the trust-chain evidence, retest the patched path, and produce artifacts another engineer can independently verify. Penligent’s public material repeatedly frames offensive testing and reporting around that proof-first model, including scope control, validation, and reproducible reporting. That is materially more relevant to flaws like CVE-2026-5194 than generic “AI for security” messaging because trust-boundary bugs live or die on evidence, not tone. (penligent.ai)

The deeper point is uncomfortable but useful. As offensive automation gets more capable, certificate-trust bugs become easier to miss at scale unless validation workflows improve too. The sensible answer is not panic about agents. It is stronger control around what gets tested, how negative cases are preserved, and how evidence survives handoff between security, engineering, and product owners. That is a workflow problem as much as a cryptography problem. (penligent.ai)

CVE-2026-5194 FAQ

Is every wolfSSL deployment affected by CVE-2026-5194?

No. The official records narrow exposure to certificate-verification behavior and call out ECDSA or ECC verification when EdDSA or ML-DSA is also enabled. Presence of the library alone is not enough to prove operational exposure. That is why inventory must include both version and actual use of the relevant verification path. (nvd.nist.gov)
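The version half of that inventory can be checked mechanically against the range NVD lists, 3.12.0 up to but not including 5.9.1. The sketch below is a minimal example: the helper names are hypothetical, and it assumes a `sort` that supports `-V` version ordering, as GNU coreutils does.

```shell
# Sketch: check a version string against the affected range [3.12.0, 5.9.1).
# Assumes `sort -V` is available; helper names are illustrative.
version_lt() {
  # Succeeds when $1 orders strictly before $2.
  [ "$1" != "$2" ] &&
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$1" ]
}

in_affected_range() {
  # Below the lower bound: not in the listed range.
  if version_lt "$1" "3.12.0"; then return 1; fi
  # In range only if strictly below the fixed release.
  version_lt "$1" "5.9.1"
}
```

On a package-managed host this could be called as `in_affected_range "$(pkg-config --modversion wolfssl)"`. A match only says the version is in range. Whether the affected verification path is actually used still has to be established separately.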

Should only clients care about CVE-2026-5194?

No. wolfSSL documentation makes client-side peer verification especially easy to reason about because it is enabled by default in client mode, but the flaw is fundamentally about certificate verification functions. Any deployment where certificate acceptance makes a trust or authorization decision deserves review, including mutual-TLS and custom PKI workflows. (wolfSSL)

Does the lack of a public exploit lower the urgency of CVE-2026-5194?

Not in a useful way. The official CVE, NVD, and upstream release materials focus on the flaw, the affected path, and the fix. They do not need to publish a dramatic exploit chain for the risk to be real. Authentication flaws should be prioritized from trust impact and actual exposure, not from whether a public exploit post is making the rounds. (nvd.nist.gov)

Is upgrading the OS package always enough to fix CVE-2026-5194?

No. It is enough only when the exposed application actually uses that patched package at runtime. If the product statically links wolfSSL or ships its own bundled copy, the vendor or your build pipeline must rebuild the affected binary. Debian and Ubuntu package status pages are useful for package-managed systems, but they do not automatically prove the state of every appliance or vendor product running in your environment. (security-tracker.debian.org)
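A quick first check is whether the application binary references a shared libwolfssl at all. The sketch below reads `ldd` output on standard input and classifies it. The helper name `classify_wolfssl_linkage` and its two output labels are assumptions for illustration.

```shell
# Sketch: read `ldd` output on stdin and report whether a shared libwolfssl is referenced.
# Helper name and output labels are illustrative.
classify_wolfssl_linkage() {
  if grep -q 'libwolfssl\.so'; then
    # The OS package can reach this binary; confirm which path the library resolves to.
    echo "dynamic"
  else
    # No shared reference: a static or bundled copy is still possible.
    echo "no-shared-reference"
  fi
}
```

Typical use would be `ldd <app-binary-path> | classify_wolfssl_linkage`, run only against binaries you trust, since `ldd` may execute code from the file it inspects. A `no-shared-reference` result does not prove wolfSSL is absent. It only means an OS package upgrade will not change what that binary runs, so the vendor or build pipeline has to supply the fix.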

Do reverse proxies or TLS terminators solve CVE-2026-5194?

Sometimes they reduce exposure, but they do not erase it by magic. If the vulnerable component no longer performs the relevant certificate-verification decision because trust is terminated and re-established safely elsewhere, risk may drop for that path. But if the component still performs mTLS, client-certificate checks, device-identity checks, or any internal certificate verification of its own, the trust boundary remains in play. The right answer comes from architecture review, not from the presence of a proxy alone. (wolfSSL)

Can disabling algorithm combinations help temporarily with CVE-2026-5194?

It may help in narrowly controlled environments if you can prove the affected feature combination is no longer reachable, but it is not a substitute for the upstream fix. The official guidance from wolfSSL is to update the relevant builds, and the patch itself shows that the defect sits in verification logic that needed correction. Temporary configuration changes should be treated as stopgaps, not end states. (GitHub)
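Proving that the feature combination is unreachable starts with knowing what the build enabled. For autoconf-based builds, wolfSSL records enabled features as `#define` lines in its generated `options.h`. The sketch below reads that file on standard input and flags ECC together with EdDSA or ML-DSA. The helper names are hypothetical, and the macro names (`HAVE_ECC`, `HAVE_ED25519`, `HAVE_ED448`, `HAVE_DILITHIUM`) are assumptions that should be confirmed against the wolfSSL version in use, particularly the ML-DSA macro.

```shell
# Sketch: read a wolfSSL options.h on stdin and flag ECC + EdDSA/ML-DSA builds.
# Macro names are assumptions to confirm against your wolfSSL version.
has_define() {
  # $1 = header text, $2 = macro name; matches "#define NAME" but not "#undef NAME".
  printf '%s\n' "$1" |
    grep -Eq "^[[:space:]]*#[[:space:]]*define[[:space:]]+$2([[:space:]]|\$)"
}

flag_feature_combo() {
  opts="$(cat)"
  has_define "$opts" HAVE_ECC || { echo "ecc-not-enabled"; return 0; }
  for m in HAVE_ED25519 HAVE_ED448 HAVE_DILITHIUM; do
    if has_define "$opts" "$m"; then
      echo "combo-present: HAVE_ECC + $m"
      return 0
    fi
  done
  echo "ecc-only"
}
```

Builds configured through a `user_settings.h` file keep their defines there instead, so the same check would need to be pointed at that file. Either way the result describes build configuration only, and it does not replace the upgrade to 5.9.1.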

Does CVE-2026-5194 automatically require certificate rotation?

Not automatically. The official materials describe a verification flaw and a fix; they do not prescribe universal certificate rotation. Rotation may be justified if you have reason to believe a high-value trust boundary was exposed and potentially abused, but patching and controlled retesting come first. Without evidence of misuse, rotating everything by reflex may consume time that should be spent finding the actual exposed validation paths. (nvd.nist.gov)

Further reading and references

- NVD entry for CVE-2026-5194, including affected versions, severity, and CWE mapping. (nvd.nist.gov)

- CVE and GitHub advisory record for CVE-2026-5194. (GitHub)

- wolfSSL 5.9.1 release notes, including the vendor description and upgrade recommendation. (GitHub)

- wolfSSL 5.9.1 release blog, including broader security context for the release. (wolfSSL)

- PR 10131 and the associated code changes that added digest-size and OID checks. (GitHub)

- Debian tracker and Ubuntu tracker entries for package and distro-specific status. (security-tracker.debian.org)

- NIST FIPS 186-5 and FIPS 186-4 material on signature verification and hash-strength requirements. (nvlpubs.nist.gov)

- Apache Tomcat security material for CVE-2026-24734 and Apache Logging security material for CVE-2025-68161. (tomcat.apache.org)

- Penligent, How to Get an AI Pentest Report. Useful for evidence retention and retest discipline after trust-boundary fixes. (penligent.ai)

- Penligent, Agentic Cyberattacks Need Verified AI Testing. Useful for the broader case for proof-driven validation workflows. (penligent.ai)

- Penligent, AI Pentest Tool, What a Real Automated Attack Looks Like in 2026. Useful for evaluating whether a tool can move from finding lists to reproducible validation. (penligent.ai)

- Penligent, CVE-2026-24734, Apache Tomcat OCSP Revocation Bypass. Useful as a parallel case where certificate trust failed through incomplete validation logic. (penligent.ai)

- Penligent homepage and docs for current product and authorized-use context. (penligent.ai)