CVE-2026-42945 is not a vague “NGINX is vulnerable” headline. It is a specific heap buffer overflow in ngx_http_rewrite_module, reachable only when an affected NGINX build processes a particular rewrite configuration pattern. The risk is serious because NGINX often sits at the public edge, but the right response is not blind panic. The right response is to patch, restart, audit rewrite rules, and verify whether the vulnerable code path exists in your own configuration.

The official NVD record describes the flaw as a vulnerability in NGINX Plus and NGINX Open Source that arises when a rewrite directive is followed by another rewrite, if, or set directive, uses an unnamed PCRE capture such as $1 or $2, and includes a question mark in the replacement string. NVD also records the impact as a heap buffer overflow in the NGINX worker process, leading to a restart, with code execution possible on systems where ASLR is disabled. The CNA score is CVSS 4.0 9.2 Critical and CVSS 3.1 8.1 High. (NVD)

NGINX’s own security advisory lists the affected Open Source range as 0.6.27 through 1.30.0 and marks 1.30.1 and 1.31.0 as not vulnerable. The same advisory page places CVE-2026-42945 beside several other 2026 NGINX fixes, including HTTP/2 request injection, buffer overread issues, HTTP/3 address spoofing, and an OCSP resolver use-after-free. That context matters because many teams will patch NGINX once and assume the job is finished, while the real work is to prove that every running binary, container image, ingress controller, and config template has moved past the vulnerable boundary. (Nginx)

The short version for operators

The fastest useful risk sentence is this: if you run an affected NGINX or F5 NGINX-derived product and your config uses the vulnerable rewrite pattern, an unauthenticated remote attacker may be able to crash worker processes and, under harder conditions, may reach code execution. That is urgent enough to patch quickly. It is not accurate to say every default NGINX install is trivially exploitable.

| Question | Practical answer |
|---|---|
| Vulnerability | Heap buffer overflow in ngx_http_rewrite_module |
| Main CVE | CVE-2026-42945 |
| Common name | NGINX Rift |
| Most important affected range | NGINX Open Source 0.6.27 through 1.30.0 |
| Patched Open Source versions | 1.30.1 and 1.31.0 |
| Required config pattern | Unnamed PCRE capture such as $1, replacement string with ?, followed by rewrite, if, or set in the same context |
| Attacker position | Remote, unauthenticated HTTP access to the vulnerable route |
| Most realistic public-edge effect | Worker crash and denial of service |
| Worst discussed effect | Remote code execution, especially when ASLR is disabled or bypassed |
| First action | Upgrade and restart NGINX workers |
| Temporary mitigation | Replace unnamed captures with named captures or remove one trigger condition |
| Unsafe response | Running public exploit code against production without explicit authorization and a rollback plan |

The most common mistake is treating version and exploitability as the same thing. Version tells you whether the vulnerable code exists. Configuration tells you whether the vulnerable path is reachable. Runtime state tells you whether patched code is actually serving traffic.

Why this bug is bigger than a single config typo

NGINX is widely deployed as a web server and reverse proxy. W3Techs reported on May 15, 2026 that NGINX was used by 32.4 percent of websites whose web server it knew, which explains why even configuration-dependent flaws in NGINX deserve fast triage. (W3Techs)

The rewrite module is not an obscure corner used only by unusual deployments. It appears in API migrations, legacy routing, PHP front controller patterns, language or region redirects, canonical URL handling, multi-tenant routing, reverse proxy normalization, and Kubernetes ingress-generated configurations. A lot of teams do not remember writing their rewrite rules. They inherited them from an old CMS, a Helm chart, a platform template, an ingress annotation, a CDN migration, or a “temporary” compatibility block that became permanent.

That history is why CVE-2026-42945 has a messy operational profile. A team can be exposed because of a tiny line inside a config file that nobody has touched in years. Another team running the same NGINX version may not be exposed to this exact code path because the trigger pattern does not exist. A scanner that only checks the NGINX version can therefore overstate risk for some hosts and understate risk for environments where old templates are copied into many edge nodes.

The responsible path is to answer four questions in order:

- Are we running an affected NGINX or NGINX-derived product?

- Are affected builds reachable from untrusted clients?

- Does the running configuration contain the vulnerable rewrite pattern?

- Have we upgraded and restarted the actual worker processes that serve traffic?

You need all four answers. Missing any one of them leads to false confidence.

What actually triggers CVE-2026-42945

The vulnerable condition is narrow enough to describe precisely. You should look for a rewrite directive that uses an unnamed regex capture in the replacement, includes a ? in that replacement, and is followed in the same context by another rewrite, if, or set directive.

A simplified risky pattern looks like this:

location ~ ^/api/(.*)$ {

rewrite ^/api/(.*)$ /internal?migrated=true;

set $original_path $1;

}

That example is not an exploit. It is a configuration shape. The important parts are:

| Trigger element | Why it matters |
|---|---|
| rewrite uses a regex capture | It creates captured URI data that can later be copied |
| $1 or $2 is used | Unnamed captures are the risky form |
| Replacement contains ? | NGINX enters argument handling behavior |
| Later set, if, or rewrite uses captured data | The later operation can evaluate captures under the wrong escaping state |
| Attacker controls matching URI bytes | The request path supplies bytes that may expand during escaping |

The clean mitigation pattern is to use named captures and named variables:

location ~ ^/api/(?<path>.*)$ {

rewrite ^/api/(?<new_path>.*)$ /internal?migrated=true;

set $original_path $path;

}

Named captures are not magic security dust. They help here because the known vulnerable path involves unnamed capture handling. The best mitigation is still to upgrade. Rewriting configuration is a temporary way to remove the known trigger while you schedule a safe patch rollout.

DepthFirst’s public write-up describes the trigger as a common configuration pattern: a rewrite directive with an unnamed regex capture and a replacement string containing a question mark, followed by another rewrite, if, or set directive. The same write-up states that NGINX computes the destination buffer using one set of escaping assumptions and later writes using another, causing the write to run past the allocated buffer. (DepthFirst)

The root cause, state mismatch in the script engine

NGINX compiles rewrite-related directives into an internal script representation. At runtime, the script engine effectively does two important things: it calculates how much memory it needs, then it copies the resulting data into the allocated buffer. That design is efficient, but it is unforgiving. If the length calculation and copy phase disagree about escaping, the buffer size becomes wrong.

CVE-2026-42945 comes from that disagreement.

The relevant state is is_args. When a rewrite replacement contains a question mark, NGINX treats what follows as query-string related data. DepthFirst’s technical analysis says ngx_http_script_start_args_code sets e->is_args = 1, and later capture evaluation may calculate length using a fresh sub-engine where is_args is zero, while the actual copy runs on the main engine where is_args remains set. The result is that length is calculated as raw capture length but copy output may be escaped as argument data. (DepthFirst)

That matters because some URI characters expand during escaping. A single byte such as +, %, or & can become a three-byte escaped sequence. If the buffer was allocated for raw length but the copy writes escaped length, each escapable byte can contribute two extra bytes beyond the allocation.

| Data phase | Engine assumption | Result |
|---|---|---|
| Length calculation | Capture is treated as raw bytes | Buffer is allocated too small |
| Copy phase | Capture is treated as argument data requiring escaping | More bytes are written |
| Overflow condition | Escaped output exceeds allocated length | Heap memory past the buffer is overwritten |
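
The size mismatch in the table can be sketched as simple arithmetic. The Python below is an illustrative model only, not NGINX code: it assumes that just the three characters named above are escaped, each into a three-byte sequence, and `escaped_length` and `overflow_bytes` are hypothetical helper names.

```python
# Illustrative model only; this is not NGINX code.
# Assumption: only "+", "%" and "&" are escaped, each into a 3-byte %XX sequence.
ESCAPABLE = frozenset(b"+%&")


def escaped_length(capture: bytes) -> int:
    """Bytes written if every escapable byte becomes a 3-byte sequence."""
    extra = sum(2 for byte in capture if byte in ESCAPABLE)
    return len(capture) + extra


def overflow_bytes(capture: bytes) -> int:
    """Bytes written past a buffer that was sized for the raw capture."""
    allocated = len(capture)            # length pass: raw bytes
    written = escaped_length(capture)   # copy pass: escaped bytes
    return max(0, written - allocated)
```

Under these assumptions, a capture of four `+` bytes is allocated 4 bytes but written as 12, so 8 bytes land past the allocation. A capture with no escapable bytes overflows nothing, which is consistent with most requests never triggering the bug.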

The upstream NGINX commit is small but revealing. The patch titled “Rewrite: fixed escaping and possible buffer overrun” states that if a rewrite replacement contained arguments, is_args was set and incorrectly never cleared, causing escaping to be applied to captures evaluated later in set or if. The commit adds e->is_args = 0 near the end of regex handling. (GitHub)

Small patches often fix large bugs. That is the lesson here. The vulnerability is not “rewrite is dangerous.” The bug is that a state flag outlived the context where it was valid, and a later operation trusted a buffer length computed under a different state.
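
The lifetime problem can be modeled in a few lines. The sketch below is a toy, not the NGINX script engine: the `ToyEngine` class, its methods, and the single-character escaping rule are hypothetical stand-ins chosen to mirror the behavior the commit message describes.

```python
# Toy model of a flag that outlives its context; not the real NGINX engine.
# Every name here is hypothetical.
class ToyEngine:
    def __init__(self) -> None:
        self.is_args = False

    def run_rewrite(self, replacement: str, clear_flag: bool) -> None:
        # A "?" in the replacement switches the engine into argument mode.
        if "?" in replacement:
            self.is_args = True
        # The fix described above clears the flag once regex handling ends.
        if clear_flag:
            self.is_args = False

    def copy_capture(self, capture: str) -> str:
        # Assumed escaping rule: "+" becomes "%2B" only in argument mode.
        return capture.replace("+", "%2B") if self.is_args else capture


def bytes_past_buffer(capture: str, clear_flag: bool) -> int:
    engine = ToyEngine()
    engine.run_rewrite("/internal?migrated=true", clear_flag)
    allocated = len(capture)                      # length pass: fresh state, raw length
    written = len(engine.copy_capture(capture))   # copy pass: main engine state
    return written - allocated
```

With `clear_flag=False` the capture `a+b+c` writes 4 bytes more than were allocated in this model; with `clear_flag=True` the two passes agree and the difference is zero.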

Why a one-line patch can protect an internet edge

The patch teaches defenders something useful. A production NGINX deployment can have a very small code-level mistake hidden under years of safe-looking behavior. Most requests will not trigger it. Most rewrite rules will not trigger it. Even many vulnerable versions will not crash until the right config and the right request meet.

That is why configuration-aware triage is mandatory. The vulnerable code can be present for years, yet the exploit path may be dormant until a new route, new ingress annotation, or new rewrite template creates the missing precondition. Security teams that only ask “what version is installed” miss this class of issue. Teams that only ask “do we have public exploit code” also miss it. The mature question is “is the vulnerable state machine reachable from untrusted input in our deployment.”

For CVE-2026-42945, the answer depends on version, configuration, and traffic path.

Affected products and fix boundaries

The public affected list is broader than plain NGINX Open Source. DepthFirst’s summary lists NGINX Open Source 0.6.27 through 1.30.0, NGINX Plus R32 through R36, NGINX Instance Manager 2.16.0 through 2.21.1, F5 WAF for NGINX 5.9.0 through 5.12.1, NGINX App Protect WAF 4.9.0 through 4.16.0 and 5.1.0 through 5.8.0, F5 DoS for NGINX 4.8.0, NGINX App Protect DoS 4.3.0 through 4.7.0, NGINX Gateway Fabric 1.3.0 through 1.6.2 and 2.0.0 through 2.5.1, and NGINX Ingress Controller 3.5.0 through 3.7.2, 4.0.0 through 4.0.1, and 5.0.0 through 5.4.1. The same summary says fixed Open Source versions are 1.30.1 and 1.31.0, with NGINX Plus fixes in R36 P4 and R32 P6. (DepthFirst)

The official NGINX GitHub release notes for 1.30.1 and 1.31.0 list CVE-2026-42945 among the security fixes included in those releases. The 1.30.1 stable release notes identify the ngx_http_rewrite_module buffer overflow fix, and the 1.31.0 mainline release notes include the same security item alongside the other May 2026 NGINX fixes. (GitHub)

For Ubuntu, Canonical’s CVE page marks CVE-2026-42945 as High priority, describes it as a possible remote code execution issue, and lists fixed package versions for several supported releases, including 26.04 LTS, 25.10, 24.04 LTS, and 22.04 LTS. (Ubuntu)

Debian’s security tracker, at the time captured, showed several releases as vulnerable and unstable sid fixed at 1.30.0-3. That is a reminder that distro backports do not always look like upstream version numbers. A Debian or Ubuntu package can contain the security fix even if the displayed upstream NGINX version is older than 1.30.1. You must check the distribution’s security tracker or package changelog, not only nginx -v. (security-tracker.debian.org)
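
A version comparison against the upstream range is easy to script, as long as its result is read as a prompt rather than a verdict. The sketch below uses the Open Source range quoted from the NGINX advisory; the function names are hypothetical, and a positive result on a distro package only means the distribution's tracker still has to be checked.

```python
from __future__ import annotations

import re

# Upstream Open Source range quoted above: 0.6.27 through 1.30.0 inclusive.
FIRST_AFFECTED = (0, 6, 27)
LAST_AFFECTED = (1, 30, 0)


def parse_upstream_version(text: str) -> tuple[int, int, int]:
    """Extract the first X.Y.Z found in strings such as 'nginx/1.24.0 (Ubuntu)'."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no X.Y.Z version found in {text!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return (major, minor, patch)


def upstream_range_says_affected(version_text: str) -> bool:
    """True if the upstream number falls inside the affected range.

    On a distro package this is not proof of exposure, because a
    backported fix keeps the older upstream number.
    """
    return FIRST_AFFECTED <= parse_upstream_version(version_text) <= LAST_AFFECTED
```

Tuple comparison keeps the range check readable and avoids string comparison mistakes such as treating 1.9.0 as newer than 1.30.0.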

AlmaLinux published a detailed update stating that patched NGINX packages were rolling out to production repositories for supported releases and module streams, and it recommended sudo dnf clean metadata && sudo dnf upgrade nginx followed by sudo systemctl restart nginx. AlmaLinux also emphasized that a patched package must be loaded by restarted workers, not merely installed. (AlmaLinux OS)

Version checks that do not lie to you

Start with the running binary:

nginx -v

nginx -V

On package-managed Linux hosts, also check the package build:

# Debian and Ubuntu

dpkg -l | grep -E '^ii\s+nginx'

apt-cache policy nginx

apt changelog nginx | head -80

# RHEL-like systems, AlmaLinux, Rocky Linux, Oracle Linux

rpm -q nginx

dnf info nginx

dnf updateinfo list nginx

On systemd hosts, check whether old workers are still serving traffic:

systemctl status nginx --no-pager

ps -o pid,ppid,lstart,cmd -C nginx

sudo ls -l /proc/$(pgrep -n nginx)/exe

If the package was upgraded but worker processes started before the upgrade, you have not finished remediation. A reload may be enough for many configuration changes, but a security fix in the binary should be treated as requiring a service restart unless your packaging and operational model clearly prove otherwise.

sudo nginx -t

sudo systemctl restart nginx

ps -o pid,ppid,lstart,cmd -C nginx
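
Comparing process start times against the package install time can be automated. The following Python is a minimal sketch under stated assumptions: it parses the `ps -o pid,ppid,lstart,cmd -C nginx` output shown above, expects `lstart` in the default C-locale layout (for example `Fri May 15 02:20:11 2026`), and assumes the install time you pass in is in the same local time zone. The function name is hypothetical.

```python
from __future__ import annotations

from datetime import datetime


def stale_nginx_pids(ps_output: str, installed_at: datetime) -> list[int]:
    """Return PIDs of nginx processes that started before the package install.

    Expects the output of: ps -o pid,ppid,lstart,cmd -C nginx
    """
    stale = []
    for line in ps_output.splitlines():
        fields = line.split()
        if len(fields) < 7 or not fields[0].isdigit():
            continue  # header line or unexpected content
        # lstart occupies five whitespace-separated tokens after pid and ppid.
        started = datetime.strptime(" ".join(fields[2:7]), "%a %b %d %H:%M:%S %Y")
        if started < installed_at:
            stale.append(int(fields[0]))
    return stale
```

Any PID this returns is a process that predates the patched package on disk and is therefore still running the old binary.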

For containers, check the image and the binary inside the running container:

docker ps --format 'table {{.ID}}\t{{.Image}}\t{{.Names}}'

docker exec <container_id> nginx -v

docker inspect <container_id> --format '{{.Image}} {{.Config.Image}}'

For Kubernetes, do not stop at the deployment manifest. Inspect the running pod image and binary:

kubectl get pods -A -o wide | grep -Ei 'nginx|ingress'

kubectl get deploy -A -o jsonpath='{range .items[*]}{.metadata.namespace}{" "}{.metadata.name}{" "}{.spec.template.spec.containers[*].image}{"\n"}{end}' | grep -Ei 'nginx|ingress'

kubectl exec -n <namespace> <pod> -- nginx -v

If you run an ingress controller, also inventory Helm chart versions, controller images, config maps, annotations that generate rewrites, and any custom templates. CVE-2026-42945 is a runtime rewrite bug, so generated NGINX config matters.
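
For larger clusters, the image inventory can be taken from JSON rather than grep output. This is a hedged sketch that assumes you have saved the output of `kubectl get pods -A -o json`; the function name is hypothetical, and matching on the substrings `nginx` and `ingress` is a heuristic that will miss images with unrelated names that still embed NGINX.

```python
from __future__ import annotations

import json
import re


def nginx_like_images(pods_json: str) -> list[tuple[str, str, str]]:
    """Return (namespace, pod, image) for images that mention nginx or ingress.

    Expects the output of: kubectl get pods -A -o json
    """
    found = []
    for pod in json.loads(pods_json).get("items", []):
        meta = pod.get("metadata", {})
        spec = pod.get("spec", {})
        # Init containers are included because they can ship their own binary.
        containers = spec.get("containers", []) + spec.get("initContainers", [])
        for container in containers:
            image = container.get("image", "")
            if re.search(r"nginx|ingress", image, re.IGNORECASE):
                found.append((meta.get("namespace", ""), meta.get("name", ""), image))
    return found
```

The resulting list is a starting point for the `kubectl exec ... nginx -v` check on each pod, not a replacement for it.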

Configuration triage, the part many scanners miss

Once you know affected code may exist, inspect the running configuration. Use nginx -T, not only files under /etc/nginx, because includes, generated files, and packaged defaults can hide the relevant rule.

sudo nginx -T > /tmp/nginx-full-config.txt 2>&1

A fast first-pass grep:

grep -nE 'rewrite[[:space:]].*(\$[0-9].*\?|\?.*\$[0-9])' /tmp/nginx-full-config.txt

That command is intentionally broad. It finds rewrite lines that contain both an unnamed capture reference and a question mark. It does not prove exploitability because it does not confirm the next directive in the same scope, and it will not flag a rewrite whose numeric capture is referenced only by a later set, if, or rewrite directive. It is a triage filter, not a verdict.

You can also look for nearby set, if, or additional rewrite directives:

grep -nE 'rewrite|set[[:space:]]+\$|if[[:space:]]*\(' /tmp/nginx-full-config.txt

A safer manual process looks like this:

- Find rewrite rules with $1, $2, or other numeric captures.

- Confirm whether the replacement string contains ?.

- Inspect the same server, location, or nested context for a later rewrite, if, or set.

- Check whether untrusted users can reach the matching route.

- Patch first where internet reachability and trigger confidence are highest.

Do not assume a lack of public crashes means a lack of exposure. A vulnerable rewrite path may sit behind a rarely used legacy route. Conversely, do not assume every $1 in a config is vulnerable. The trigger is a combination.

A configuration scanner for safe local review

The following Python script scans an nginx -T output file for suspicious rewrite lines and nearby follow-on directives. It does not send network requests. It does not test exploitability. It helps reduce a large configuration dump into review candidates.

#!/usr/bin/env python3
"""
nginx_rewrite_rift_triage.py

Purpose:
    Identify NGINX rewrite rules that may deserve manual review for
    CVE-2026-42945 style trigger conditions.

Input:
    Output from: nginx -T > nginx-full-config.txt 2>&1

Limitations:
    - This is a heuristic scanner, not a parser for every valid NGINX grammar edge case.
    - It does not prove exploitability.
    - It does not understand every include-generation workflow.
    - It is designed for defensive configuration review.
"""

from __future__ import annotations

import re
import sys
from dataclasses import dataclass
from pathlib import Path

# Matches a rewrite directive that contains both a numeric capture ($1, $2, ...)
# and a question mark, in either order, before the terminating semicolon.
REWRITE_WITH_NUMERIC_CAPTURE_AND_QMARK = re.compile(
    r"\brewrite\s+(?=[^;]*\$[0-9])(?=[^;]*\?)[^;]*;",
    re.IGNORECASE,
)

# Directives that raise review priority when they follow a candidate rewrite.
FOLLOW_ON_DIRECTIVE = re.compile(
    r"^\s*(rewrite\b|set\s+\$|if\s*\()",
    re.IGNORECASE,
)

CONTEXT_OPEN = re.compile(r"\{")
CONTEXT_CLOSE = re.compile(r"\}")


@dataclass
class Finding:
    line_number: int
    line: str
    context_depth: int
    follow_on_line_number: int | None
    follow_on_line: str | None


def strip_comment(line: str) -> str:
    # Naive: also truncates a "#" that appears inside a quoted string or regex.
    if "#" in line:
        return line.split("#", 1)[0]
    return line


def update_depth(line: str, current_depth: int) -> int:
    return current_depth + len(CONTEXT_OPEN.findall(line)) - len(CONTEXT_CLOSE.findall(line))


def scan(lines: list[str]) -> list[Finding]:
    findings: list[Finding] = []

    # First pass: record the brace depth in effect at the start of each line.
    depth_by_line: list[int] = []
    depth = 0
    for raw in lines:
        clean = strip_comment(raw)
        depth_by_line.append(depth)
        depth = update_depth(clean, depth)

    # Second pass: flag candidate rewrites and look ahead in the same context.
    for index, raw in enumerate(lines):
        clean = strip_comment(raw).strip()
        if not REWRITE_WITH_NUMERIC_CAPTURE_AND_QMARK.search(clean):
            continue

        current_depth = depth_by_line[index]
        follow_line_no = None
        follow_line = None

        for lookahead in range(index + 1, min(index + 40, len(lines))):
            candidate = strip_comment(lines[lookahead]).strip()
            if not candidate:
                continue
            if depth_by_line[lookahead] < current_depth:
                break  # left the enclosing context
            if depth_by_line[lookahead] == current_depth and FOLLOW_ON_DIRECTIVE.search(candidate):
                follow_line_no = lookahead + 1
                follow_line = candidate
                break

        findings.append(
            Finding(
                line_number=index + 1,
                line=clean,
                context_depth=current_depth,
                follow_on_line_number=follow_line_no,
                follow_on_line=follow_line,
            )
        )

    return findings


def main() -> int:
    if len(sys.argv) != 2:
        print("Usage: nginx_rewrite_rift_triage.py <nginx-full-config.txt>", file=sys.stderr)
        return 2

    path = Path(sys.argv[1])
    if not path.is_file():
        print(f"File not found: {path}", file=sys.stderr)
        return 2

    lines = path.read_text(errors="replace").splitlines()
    findings = scan(lines)

    if not findings:
        print("No suspicious rewrite pattern found by this heuristic.")
        return 0

    for item in findings:
        print("=" * 80)
        print(f"Candidate rewrite at line {item.line_number}:")
        print(f"  {item.line}")
        if item.follow_on_line:
            print(f"Nearby follow-on directive at line {item.follow_on_line_number}:")
            print(f"  {item.follow_on_line}")
            print("Review priority: high")
        else:
            print("No nearby follow-on rewrite, if, or set directive found in the lookahead window.")
            print("Review priority: medium")

    # A non-zero exit code lets CI jobs detect that candidates exist.
    return 1


if __name__ == "__main__":
    raise SystemExit(main())

Run it like this:

sudo nginx -T > nginx-full-config.txt 2>&1

python3 nginx_rewrite_rift_triage.py nginx-full-config.txt

Treat every hit as a review candidate. The script may miss exotic formatting, template-generated runtime files, or context relationships beyond the lookahead window. It may also flag rules that are not exploitable. That is acceptable for triage. The goal is to find the lines a human should read first.

Temporary mitigation, rewrite the rewrites

If you cannot upgrade immediately, remove one of the known trigger conditions. The cleanest short-term change is to replace unnamed captures with named captures.

Risky:

location ~ ^/users/([0-9]+)/profile/(.*)$ {

rewrite ^/users/([0-9]+)/profile/(.*)$ /profile.php?id=$1&tab=$2 last;

set $route_key $1;

}

Safer:

location ~ ^/users/(?<user_id>[0-9]+)/profile/(?<section>.*)$ {

rewrite ^/users/(?<rewrite_user_id>[0-9]+)/profile/(?<rewrite_section>.*)$ /profile.php?id=$rewrite_user_id&tab=$rewrite_section last;

set $route_key $user_id;

}

After editing:

sudo nginx -t

sudo systemctl reload nginx

If you are only changing configuration, reload is normally the relevant operation. If you are installing a patched NGINX binary, restart the service so workers are replaced.

AlmaLinux’s temporary mitigation guidance is similar: it says the exploitable condition requires a rewrite using an unnamed PCRE capture, a replacement containing ?, and a following rewrite, if, or set in the same context. It also states that removing any one of those conditions is enough as a stopgap, while the right fix remains installing the patched package and restarting NGINX. (AlmaLinux OS)

Why RCE claims need precision

DepthFirst demonstrated a proof of concept for unauthenticated RCE with ASLR off and described a heap manipulation path involving request pools, adjacent pool corruption, cleanup handlers, and sprayed fake cleanup structures. That is a serious research result. It does not mean every production NGINX instance with an affected version is automatically one request away from shell access. (DepthFirst)

BleepingComputer reported the same distinction: the flaw can be exploited for denial of service and, under certain conditions, remote code execution. Its coverage notes that RCE was achieved on a system with ASLR disabled and also reports pushback from researchers who argued that real-world exploitability depends on a vulnerable configuration, discovering the affected endpoint, and environmental conditions. (BleepingComputer)

That distinction is important for communication inside a company. The wrong message is “stable unauthenticated RCE everywhere.” The equally wrong message is “only theoretical, ignore it.” The accurate message is:

- The worker-crash path is realistic enough to treat as urgent.

- RCE has been demonstrated in controlled conditions.

- Production RCE reliability depends on hardening, memory layout, ASLR, config shape, and target-specific details.

- Edge exposure raises priority even when exploitation is configuration-dependent.

- Patch and restart are still the correct default response.

Security communication should reduce confusion, not trade one exaggeration for another.

Denial of service still matters

Some teams underreact when a vulnerability is not a guaranteed RCE. That is a mistake for edge infrastructure. A repeatable worker crash can still take down customer-facing applications, trigger autoscaling churn, exhaust connection pools, break health checks, and create cascading failures behind load balancers.

NGINX’s master-worker design can restart crashed workers, but “worker restarts” are not free. During repeated crashes, active requests fail, upstream retry behavior changes, logs fill, latency climbs, and monitoring noise rises. If an attacker can continuously trigger a crash on a hot route, the business impact can be real even without code execution.

AlmaLinux said it independently reproduced the issue and that the denial-of-service path was trivial on all supported AlmaLinux releases, while reliable code execution with ASLR enabled was a different and harder problem. That is a good operational framing: the DoS path alone is enough to justify urgency. (AlmaLinux OS)

Safe validation in production-adjacent environments

Do not run public exploit code against production NGINX. For CVE-2026-42945, safe validation should focus on evidence that does not crash workers:

- Version evidence

- Package evidence

- Container image evidence

- Running process start time

- Full config dump

- Presence or absence of trigger patterns

- Proof that patched workers are serving traffic

- Confirmation that temporary rewrite mitigations were applied

A safe validation record might look like this:

Asset:

edge-nginx-prod-03

Exposure:

Internet-facing reverse proxy for app.example.com

Binary:

nginx version: nginx/1.24.0-2ubuntu7.8

Source: Ubuntu package

Patch evidence:

Ubuntu security page lists this build as fixed for 24.04 LTS.

Package installed at 2026-05-15 02:14 UTC.

Runtime evidence:

NGINX master and workers restarted after package update.

Worker process start time is after package install time.

Config evidence:

nginx -T captured and stored.

grep for rewrite + numeric capture + question mark returned two candidates.

Both candidates were converted to named captures.

nginx -t passed.

reload completed.

Residual risk:

No destructive exploit test run in production.

Recommend isolated lab reproduction only if needed for internal assurance.

That is the kind of record a security lead can attach to a remediation ticket. It proves work was done without turning a memory corruption bug into a production outage.

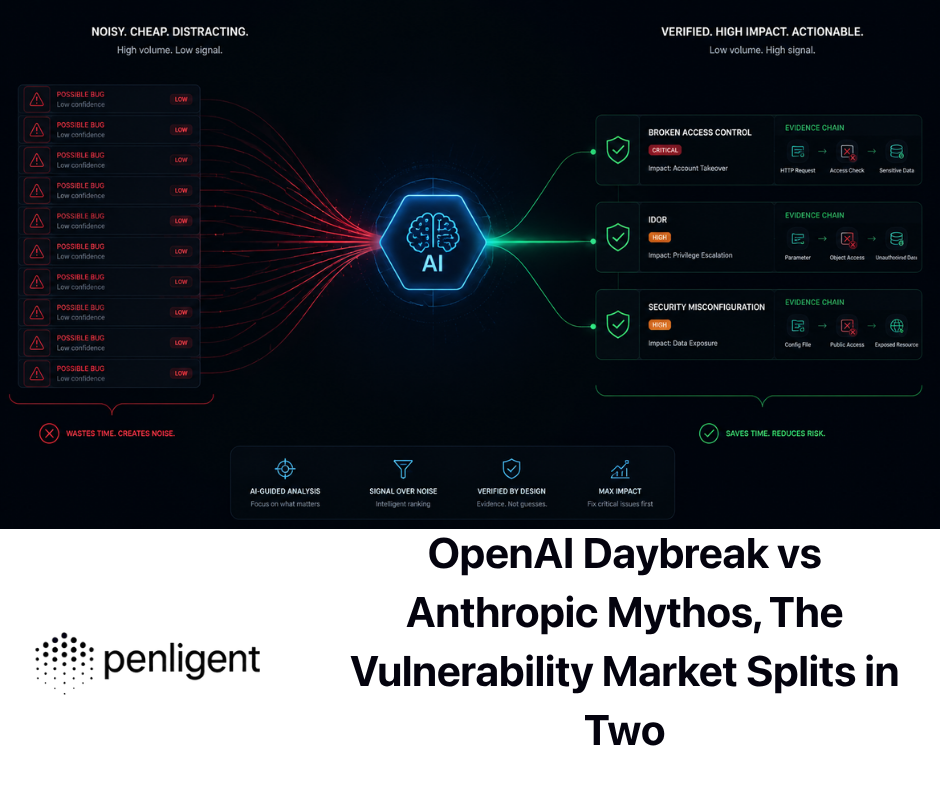

Teams that use Penligent for authorized security testing can model CVE-2026-42945 as an evidence workflow rather than a blind exploit run: inventory edge assets, capture versions and configs, identify risky rewrite patterns, preserve command output, and generate a replayable remediation report. That is especially useful when multiple teams own NGINX configs across Linux packages, containers, and Kubernetes ingress deployments. Penligent’s public homepage describes CVE exploit generation, guided execution, evidence-first results, and operator-controlled agentic workflows, but for this specific bug the safer production value is controlled verification and reporting, not destructive live exploitation. (penligent.ai)

A practical triage workflow

Use a simple priority model. Do not try to boil the ocean.

| Priority | Condition | Action |
|---|---|---|
| Critical | Internet-facing affected NGINX, trigger pattern present | Patch or mitigate immediately, restart workers, monitor crashes |
| High | Internet-facing affected NGINX, rewrite rules present but trigger not confirmed | Patch quickly, inspect config, run heuristic scanner |
| Medium | Internal affected NGINX, trigger pattern present | Patch in normal emergency cycle, restrict reachability |
| Medium | Container image includes affected NGINX but route not exposed | Rebuild image, verify deployment rollout |
| Low | Patched package installed and workers restarted | Keep evidence, schedule regression checks |
| Unknown | Version or config cannot be confirmed | Treat as high until inventory is complete |
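
The priority table can be encoded as a small function so that inventory tooling assigns the same label every time. This is one possible reading of the table, not a standard: the function name and parameters are hypothetical, `None` stands for "cannot be confirmed", and the table does not cover every combination, so unaffected hosts are assumed to be Low and internal affected hosts without a confirmed trigger are assumed to be Medium.

```python
from __future__ import annotations


def triage_priority(
    affected: bool | None,
    internet_facing: bool,
    trigger_present: bool | None,
    patched_and_restarted: bool,
) -> str:
    """Map inventory facts to a priority label from the table above."""
    if patched_and_restarted:
        return "Low"
    if affected is None or trigger_present is None:
        return "Unknown"  # the table says to treat this as High until confirmed
    if not affected:
        return "Low"  # assumption: the table has no row for unaffected hosts
    if internet_facing and trigger_present:
        return "Critical"
    if internet_facing:
        return "High"
    return "Medium"  # internal or unexposed affected NGINX
```

Checking `patched_and_restarted` first reflects the table's Low row: a patched binary that is actually serving traffic outranks every other fact about the host.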

A mature remediation sequence:

# 1. Capture before-state evidence

date -u

nginx -v

sudo nginx -T > nginx-before-cve-2026-42945.txt 2>&1

# 2. Find candidate rewrite patterns

grep -nE 'rewrite[[:space:]].*(\$[0-9].*\?|\?.*\$[0-9])' nginx-before-cve-2026-42945.txt

# 3. Install patched package through your approved channel

sudo apt update && sudo apt install --only-upgrade nginx

# or for dnf-based systems

sudo dnf clean metadata

sudo dnf upgrade nginx

# 4. Test config and restart

sudo nginx -t

sudo systemctl restart nginx

# 5. Capture after-state evidence

date -u

nginx -v

ps -o pid,ppid,lstart,cmd -C nginx

sudo nginx -T > nginx-after-cve-2026-42945.txt 2>&1

For a blue-green environment, do not patch only the currently active pool. Rebuild the base image, roll it through staging, deploy to the inactive pool, switch traffic, then drain and rebuild the old pool. CVE-2026-42945 is exactly the kind of bug that reappears when a forgotten autoscaling group or rollback image still carries the affected binary.

Kubernetes and ingress controllers

Kubernetes adds two traps. First, the NGINX process may live inside a controller image, not on the node package manager. Second, user-facing rewrite behavior may be generated from annotations, config maps, or templates rather than a hand-written nginx.conf.

Inventory controllers:

kubectl get pods -A -o jsonpath='{range .items[*]}{.metadata.namespace}{" "}{.metadata.name}{" "}{.spec.containers[*].image}{"\n"}{end}' \

| grep -Ei 'nginx|ingress'

Check the running NGINX version:

kubectl exec -n <namespace> <pod-name> -- nginx -v

Dump generated NGINX configuration if your controller supports it:

kubectl exec -n <namespace> <pod-name> -- nginx -T > ingress-nginx-full-config.txt 2>&1

Then scan for candidate rules:

grep -nE 'rewrite[[:space:]].*(\$[0-9].*\?|\?.*\$[0-9])' ingress-nginx-full-config.txt

For ingress-nginx or NGINX Ingress Controller deployments, do not assume the Kubernetes object version tells the whole story. You need the controller image, embedded NGINX version, generated config, custom snippets, rewrite annotations, and any platform-specific patches. DepthFirst’s affected list includes NGINX Ingress Controller ranges, so controller owners need their own upgrade path rather than relying on host-level NGINX patching. (DepthFirst)

Detection signals after disclosure

There is no universal “CVE-2026-42945 exploited” log line. Detection is about correlating weak signals around worker crashes, suspicious URI patterns, and request bursts against routes using risky rewrites.

Start with NGINX error logs:

sudo grep -Ei 'worker process|segmentation fault|signal 11|exited on signal|core dumped|malloc|buffer' /var/log/nginx/error.log*

Check system logs:

journalctl -u nginx --since "2026-05-13" --no-pager \

| grep -Ei 'worker|signal|segfault|core|restart|failed'
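
Grep output becomes more useful once crashes are bucketed over time. The sketch below counts worker exits per minute from error log text. It assumes the common NGINX error log layout, in which an abnormal worker exit is logged as `worker process <pid> exited on signal <n>` after a `YYYY/MM/DD HH:MM:SS [level] pid#tid:` prefix; the function name is hypothetical, and a customized log pipeline may need a different pattern.

```python
import re
from collections import Counter

# Assumed layout: "2026/05/15 02:00:01 [alert] 1001#1001: worker process 1002 exited on signal 11"
CRASH_LINE = re.compile(
    r"^(?P<minute>\d{4}/\d{2}/\d{2} \d{2}:\d{2}):\d{2} \[\w+\] \d+#\d+: "
    r"worker process (?P<pid>\d+) exited on signal (?P<signal>\d+)"
)


def crashes_per_minute(error_log_text: str) -> Counter:
    """Count abnormal worker exits, keyed by 'YYYY/MM/DD HH:MM'."""
    counts: Counter = Counter()
    for line in error_log_text.splitlines():
        match = CRASH_LINE.match(line)
        if match:
            counts[match.group("minute")] += 1
    return counts
```

A minute with several crashes and no matching deploy activity is the kind of weak signal worth correlating with access logs for the same window.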

Look for response anomalies:

awk '{print $9}' /var/log/nginx/access.log | sort | uniq -c | sort -nr | head

grep ' 502 ' /var/log/nginx/access.log | tail -50

grep ' 499 ' /var/log/nginx/access.log | tail -50

In Kubernetes:

kubectl logs -n <namespace> <pod-name> --since=24h \

| grep -Ei 'worker|signal|segfault|core|restart|panic|fatal'

kubectl get events -A --sort-by=.lastTimestamp | grep -Ei 'nginx|ingress|restart|crash'

For traffic hunting, prioritize:

- Repeated requests to legacy rewrite-heavy routes

- URI paths containing many escapable characters

- Bursts of 500, 502, 504, or upstream reset errors

- Worker restarts without deploy activity

- Error spikes after public disclosure dates

- Requests targeting old API migration paths, PHP front-controller paths, or route compatibility shims
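
The second hunting item, paths with many escapable characters, can be approximated with a short script. This is a rough sketch, not a detection rule: it assumes the combined access log format, counts only the three characters this article names (`+`, `%`, `&`) in the path portion of the URI, and uses an arbitrary threshold of 20 that you would need to tune. Both function names are hypothetical.

```python
from __future__ import annotations

import re

# Pulls the request target out of a quoted request line such as "GET /x HTTP/1.1".
REQUEST_LINE = re.compile(r'"[A-Z]+ (?P<uri>\S+) HTTP/[0-9.]+"')
ESCAPABLE = "+%&"


def escapable_count(uri: str) -> int:
    """Count escapable characters in the path, ignoring any query string."""
    path = uri.split("?", 1)[0]
    return sum(path.count(ch) for ch in ESCAPABLE)


def noisy_paths(access_log_text: str, threshold: int = 20) -> list[tuple[int, str]]:
    """Return (line_number, uri) for requests at or above the threshold."""
    hits = []
    for number, line in enumerate(access_log_text.splitlines(), start=1):
        match = REQUEST_LINE.search(line)
        if match and escapable_count(match.group("uri")) >= threshold:
            hits.append((number, match.group("uri")))
    return hits
```

Legitimate traffic can also carry heavily encoded paths, so hits are leads to correlate with worker restarts rather than proof of an attempt.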

Detection has limits. A crash may leave little application-level evidence. If logs are short-lived or container restarts wipe local files, you may only see indirect metrics. That is why prevention and configuration audit are more reliable than waiting for a clean intrusion signal.

Hardening that reduces blast radius

Patch first. Then harden.

Check ASLR:

cat /proc/sys/kernel/randomize_va_space

A value of 2 is the normal full randomization setting on Linux. Do not disable ASLR for performance convenience. If you find ASLR disabled, treat that as a separate security finding.
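
For fleet-wide checks, the same value can be read and labeled programmatically. A minimal sketch, assuming a Linux host that exposes the sysctl at the path shown above; the function names and the label strings are hypothetical.

```python
# Meaning of kernel.randomize_va_space on Linux.
ASLR_LEVELS = {
    "0": "disabled",
    "1": "partial randomization",
    "2": "full randomization",
}


def describe_aslr(raw_value: str) -> str:
    """Translate the sysctl value into a label for an evidence record."""
    return ASLR_LEVELS.get(raw_value.strip(), "unrecognized value")


def read_aslr(path: str = "/proc/sys/kernel/randomize_va_space") -> str:
    """Read the live setting; only works on Linux hosts that expose the file."""
    with open(path, encoding="ascii") as handle:
        return describe_aslr(handle.read())
```

Anything other than "full randomization" is worth recording as its own finding alongside the NGINX remediation ticket.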

Confirm NGINX workers are not running as root:

ps -o user,pid,ppid,cmd -C nginx

grep -R "^[[:space:]]*user[[:space:]]" /etc/nginx/nginx.conf /etc/nginx/conf.d 2>/dev/null
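
The `ps` output above can be checked mechanically. The sketch below flags worker processes owned by root. It assumes the `ps -o user,pid,ppid,cmd -C nginx` layout and relies on NGINX's usual process titles, where workers appear as `nginx: worker process`; the function name is hypothetical. The master process normally runs as root and is not flagged.

```python
from __future__ import annotations


def root_worker_pids(ps_output: str) -> list[int]:
    """Return PIDs of nginx worker processes that run as root.

    Expects the output of: ps -o user,pid,ppid,cmd -C nginx
    """
    pids = []
    for line in ps_output.splitlines():
        fields = line.split(None, 3)  # user, pid, ppid, rest of command line
        if len(fields) < 4 or not fields[1].isdigit():
            continue  # header line or unexpected content
        user, pid, _ppid, cmd = fields
        if user == "root" and "worker process" in cmd:
            pids.append(int(pid))
    return pids
```

An empty result is the expected state on a host where the `user` directive points at an unprivileged account.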

Use systemd hardening where compatible with your workload:

# /etc/systemd/system/nginx.service.d/hardening.conf

[Service]

NoNewPrivileges=true

PrivateTmp=true

ProtectSystem=full

ProtectHome=true

RestrictSUIDSGID=true

Then:

sudo systemctl daemon-reload

sudo systemctl restart nginx

sudo systemctl status nginx --no-pager

Do not apply hardening blindly to production. Some deployments need access to specific paths, certificates, cache directories, temporary upload paths, or dynamic modules. Test in staging first.

For containers, rebuild from a patched base image and prevent drift:

docker build --pull -t registry.example.com/edge/nginx:patched-20260515 .

docker push registry.example.com/edge/nginx:patched-20260515

Then ensure the deployment actually uses the new immutable tag or digest:

kubectl set image deployment/edge-nginx nginx=registry.example.com/edge/nginx@sha256:<digest>

kubectl rollout status deployment/edge-nginx
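
A rollout check can also assert that the image reference is pinned by digest rather than by a mutable tag. This is a small sketch with a hypothetical function name; it only validates the shape of a `name@sha256:<64 hex characters>` reference and does not contact a registry.

```python
import re

# A digest-pinned reference ends in @sha256: followed by 64 lowercase hex characters.
DIGEST_REF = re.compile(r"^[^@\s]+@sha256:[0-9a-f]{64}$")


def is_digest_pinned(image_ref: str) -> bool:
    """True if the reference is pinned to a sha256 digest."""
    return DIGEST_REF.match(image_ref) is not None
```

Running this over the image list of every deployment is a cheap way to catch a rollback or autoscaling template that still points at an older tag.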

The point of hardening is not to make CVE-2026-42945 harmless. The point is to reduce the damage if an edge memory corruption bug reaches a worker process.

Why config ownership is the hard part

The hardest CVE-2026-42945 remediation conversation may not be technical. It may be organizational.

Security owns the alert. Platform owns ingress. Application teams own routes. DevOps owns Helm charts. A legacy migration team wrote the rewrite rules. A vendor appliance embeds NGINX. A base image team owns the Dockerfile. Nobody owns the old server-snippet annotation that still ships to production.

That is why a good remediation ticket should not say only “patch NGINX.” It should list evidence owners:

| Evidence needed | Likely owner |
|---|---|
| Running NGINX binary version | Platform or SRE |
| Package fixed version | Linux engineering |
| Container image digest | Build or DevOps team |
| Ingress controller version | Kubernetes platform team |
| Generated NGINX config | Platform team |
| Application rewrite intent | Application team |
| Public reachability | Network or edge team |
| Worker restart proof | SRE |
| Post-fix monitoring | Detection engineering |

CVE-2026-42945 is a good example of why CVE validation is an orchestration problem. Advisory text, patch notes, config dumps, runtime checks, and evidence packaging all need to come together. Penligent’s article AI Vulnerability Discovery Is an Orchestration Problem makes the same broader point for modern CVE handling: defenders need to know whether they run the affected product, which versions and builds are present, whether the vulnerable code path is reachable, what preconditions exist, how to validate safely, and how to prove the fix worked. (penligent.ai)

Related NGINX CVEs worth triaging at the same time

CVE-2026-42945 was not the only NGINX security issue fixed in the same release window. The NGINX 1.30.1 and 1.31.0 release notes list multiple fixes, including CVE-2026-42926, CVE-2026-42946, CVE-2026-42934, CVE-2026-40460, and CVE-2026-40701. (GitHub)

| CVE | Component | Why it belongs in the same triage wave | Practical defender action |
|---|---|---|---|
| CVE-2026-42945 | ngx_http_rewrite_module | Memory corruption in rewrite processing, public-edge reachable under config conditions | Patch, restart, audit rewrite rules |
| CVE-2026-42926 | ngx_http_proxy_module HTTP/2 request injection | Edge proxy request handling can cross trust boundaries | Patch, review HTTP/2 proxy exposure |
| CVE-2026-42946 | SCGI and uWSGI modules | Buffer overread or excessive allocation behavior can affect worker stability | Patch, check SCGI and uWSGI usage |
| CVE-2026-42934 | ngx_http_charset_module | Character-set transformation bugs can create memory safety issues in response processing | Patch, check charset module usage |
| CVE-2026-40460 | HTTP/3 | Address spoofing in a newer protocol surface | Patch, review HTTP/3 exposure |
| CVE-2026-40701 | OCSP resolver handling | Use-after-free around async resolver behavior | Patch, review TLS and OCSP configuration |

DepthFirst’s technical write-up states that its system identified several NGINX memory corruption issues, and that four findings were confirmed by NGINX: CVE-2026-42945, CVE-2026-42946, CVE-2026-40701, and CVE-2026-42934. That cluster matters because it points to an operational lesson: edge servers are not only “config risk.” They are also low-level C code processing attacker-controlled bytes at scale. (DepthFirst)

Historical NGINX advisories show the same pattern. NGINX has previously addressed memory corruption, resolver, HTTP/2, HTTP/3, range filter, and module-specific vulnerabilities across many years. The lesson is not that NGINX is unusually unsafe; the lesson is that any high-performance edge proxy written in low-level code deserves fast patching and careful configuration review when a memory bug lands. (Nginx)

What not to do

Do not run an exploit from GitHub against production to “see if it works.” Public proof-of-concept code may crash workers, violate authorization boundaries, generate ambiguous evidence, or create an incident while you are trying to close one.

Do not disable ASLR to reproduce RCE on a live system. That changes the security posture and can make a previously harder exploit path easier.

Do not rely on nginx -v alone for distro packages. Backports mean a package may be fixed without showing upstream 1.30.1. The inverse is also possible in custom builds: a version string can be misleading if the binary came from an unofficial source.

Do not patch and restart only one node in a pool and call the fleet patched. Load balancers, autoscaling groups, canary pools, old container digests, and disaster recovery images all need review.

Do not delete the evidence. Keep before and after config dumps, package versions, process start times, and rollout records. When a high-profile edge CVE lands, the remediation record matters.
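On the version-string point above, a rough upstream range check is still useful as a first triage pass, as long as nobody treats it as the final answer. The sketch below uses the affected range quoted in this article (0.6.27 through 1.30.0) and GNU sort -V for ordering. Both function names are illustrative. A hit on a distro package means "go check the package changelog", not "vulnerable".

```shell
# Extract the upstream version from `nginx -v` style text on stdin.
# nginx prints this banner to stderr, hence the 2>&1 in the example.
nginx_upstream_version() {
    sed -n 's|^nginx version: [^/]*/\([0-9][0-9.]*\).*|\1|p' | head -n 1
}

# Succeed if version $1 is inside the upstream affected range
# 0.6.27 through 1.30.0. Backported distro fixes are NOT detected here.
in_affected_range() {
    local v="$1" low="0.6.27" high="1.30.0"
    [ "$(printf '%s\n' "$low" "$v" | sort -V | head -n 1)" = "$low" ] &&
    [ "$(printf '%s\n' "$v" "$high" | sort -V | tail -n 1)" = "$high" ]
}

# Example:
#   v="$(nginx -v 2>&1 | nginx_upstream_version)"
#   in_affected_range "$v" && echo "upstream string $v is in range: verify the package"
```

Pair the result with a package query such as dpkg-query -W nginx or rpm -q nginx, and compare that package version against your vendor's advisory.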

A safer lab reproduction boundary

Some security teams will want a controlled reproduction in a lab. That can be useful, but the goal should be to understand exposure and validate mitigations, not to build a reusable weapon.

A safe lab design:

- Use an isolated VM or container network with no production routes.

- Use a deliberately vulnerable NGINX version only in the lab.

- Use a minimal config that demonstrates the vulnerable pattern.

- Avoid payloads that execute commands or target real services.

- Stop at observing a controlled crash or sanitizer report if your policy allows it.

- Compare behavior before and after patching or rewriting captures.

- Document the control case and fixed case.

For production, a configuration proof is usually enough. You do not need to crash the edge to prove that a risky rewrite pattern exists. You do not need to trigger memory corruption to prove that an affected binary is running. You need evidence that leads to remediation.

Patch verification checklist

Use this checklist after the emergency work is done:

| Check | Command or evidence | Pass condition |
|---|---|---|
| Running version captured | nginx -v or package query | Matches fixed distro package or upstream fixed version |
| Worker restart confirmed | ps -o pid,lstart,cmd -C nginx | Workers started after package install |
| Config syntax valid | nginx -t | Test passes |
| Full config archived | nginx -T output | Stored with ticket |
| Trigger pattern reviewed | grep or scanner output | No unmitigated risky pattern remains |
| Containers rebuilt | image digest and rollout record | New digest deployed |
| Kubernetes controller checked | pod image and embedded nginx -v | Controller contains fix or vendor-fixed build |
| ASLR enabled | /proc/sys/kernel/randomize_va_space | Value is 2 |
| Monitoring reviewed | error logs and restart metrics | No unexplained worker crash pattern |
| Rollback image updated | deployment records | No old affected image remains as default rollback |

This checklist is intentionally boring. Boring is good. The best CVE response is one that can be repeated by another engineer and audited later.

Further reading for defenders

NVD’s CVE-2026-42945 record is the best compact source for the official description, CVSS vectors, CWE mapping, and advisory references. Start there when you need a neutral vulnerability record for tickets or risk registers. (NVD)

The NGINX security advisories page is the cleanest source for upstream affected and fixed Open Source version ranges. Use it to confirm the 1.30.1 and 1.31.0 boundaries and adjacent NGINX CVEs fixed in the same release window. (Nginx)

DepthFirst’s NGINX Rift technical write-up is the deepest public explanation of the root cause and exploitation research. Read it for the script-engine state mismatch and exploitability discussion, not as a license to test production systems destructively. (DepthFirst)

The NGINX commit 524977e is useful because it shows how small the fix is and why the is_args flag mattered. It is also a good artifact for engineers who want to understand what their vendor backported. (GitHub)

FAQ

Does CVE-2026-42945 affect every NGINX server?

- No. Affected code exists in NGINX Open Source 0.6.27 through 1.30.0, but exploitability also depends on configuration.

- The known trigger requires an unnamed PCRE capture such as $1, a rewrite replacement containing a question mark (?), and a following rewrite, if, or set directive in the same context.

- A server with an affected version but no matching rewrite pattern is not exposed through the known path.

- Patch anyway, because version-only safety assumptions often fail across templates, containers, and ingress-generated configs.

Is CVE-2026-42945 a real RCE or only a denial-of-service bug?

- It is a heap buffer overflow with reliable worker-crash risk under the right configuration.

- Public research demonstrated remote code execution in a controlled setup with ASLR disabled.

- Reliable production RCE is more environment-dependent than the headline suggests.

- Treat the issue as urgent because public-edge DoS is already serious and memory corruption bugs can evolve.

How do I quickly check whether my config has the risky rewrite pattern?

- Dump the running config with sudo nginx -T > nginx-full-config.txt 2>&1.

- Search for rewrite rules using numeric captures and question marks with grep -nE 'rewrite[[:space:]].*\$[0-9].*\?' nginx-full-config.txt.

- Manually inspect each hit for later rewrite, if, or set directives in the same context.

- Treat automated results as triage candidates, not proof of exploitability.
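The manual inspection step can be narrowed with a second heuristic pass. The sketch below is not a parser. It flags a rewrite line that contains both a numeric capture and a question mark, then reports it only if another rewrite, if, or set directive appears before the next closing brace. Unlike the grep, it does not require the capture to appear before the question mark, so it may return more hits. The function name rewrite_candidates is illustrative.

```shell
# Heuristic triage over an `nginx -T` dump (files as arguments, or stdin).
# Output format: "<candidate line no>:<follower line no>: <rewrite text>".
rewrite_candidates() {
    awk '
        # A closing brace ends the current block, so drop any candidate.
        /^[ \t]*[}]/ { pending = 0 }
        # A later rewrite, if, or set in the same block confirms the hit.
        pending && /^[ \t]*(rewrite|if|set)[ \t(]/ {
            printf "%d:%d: %s\n", cand_nr, NR, cand_text
            pending = 0
        }
        # Remember rewrite lines with both a numeric capture and a "?".
        /^[ \t]*rewrite[ \t]/ && /\$[0-9]/ && /\?/ {
            pending = 1; cand_nr = NR; cand_text = $0
            sub(/^[ \t]+/, "", cand_text)
        }
    ' "$@"
}

# Example:
#   rewrite_candidates nginx-full-config.txt
```

Because it tracks only closing braces, comments, include boundaries, and unusual formatting can produce both false hits and misses. Every result still needs a human read.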

What should I do if I cannot upgrade NGINX immediately?

- Remove one of the trigger conditions from the configuration.

- The preferred temporary mitigation is replacing unnamed captures such as $1 with named captures such as $user_id.

- Validate the config with nginx -t and reload after configuration-only changes.

- Continue planning the binary patch, because config mitigation can miss generated or forgotten rules.

Is Kubernetes ingress affected by CVE-2026-42945?

- It can be, depending on the controller, embedded NGINX version, generated config, and custom rewrite behavior.

- Check the controller image, the NGINX version inside the running pod, and the generated NGINX config.

- Do not assume host package updates patch controller containers.

- Upgrade the ingress controller or vendor package according to the controller vendor’s fixed release path.

Is systemctl reload nginx enough after patching?

- Usually no. Reload is for configuration changes.

- A binary security fix should be followed by a service restart so old workers are replaced.

- Confirm with process start times after the restart.

- In container or Kubernetes environments, confirm rollout to new images or pods rather than only reloading config.
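Alongside process start times, a common Linux heuristic for spotting processes that still run a replaced binary is to read /proc/<pid>/exe, which resolves to a path ending in " (deleted)" once the original file has been unlinked by an upgrade. The sketch below applies that to a list of PIDs. stale_pids is a hypothetical helper, and the proc root is a parameter only so the function can be run against a fixture directory. It applies to in-place package upgrades on a host, not to container rollouts.

```shell
# Read PIDs on stdin and print "<pid> <exe target>" for each process whose
# executable has been replaced on disk since it started.
# $1 (optional) is the procfs root; defaults to /proc.
# Returns non-zero if any stale process is found.
stale_pids() {
    local proc_root="${1:-/proc}" pid target rc=0
    while read -r pid; do
        # Skip PIDs that have exited or that we are not allowed to inspect.
        target="$(readlink "$proc_root/$pid/exe" 2>/dev/null)" || continue
        case "$target" in
            *" (deleted)") echo "$pid $target"; rc=1 ;;
        esac
    done
    return "$rc"
}

# Example (run as root so every nginx process is readable):
#   pgrep -x nginx | stale_pids
```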

Should I run the public PoC to prove whether I am vulnerable?

- Not on production.

- For production, prove exposure through version, config, reachability, and runtime evidence.

- If lab reproduction is required, isolate it from production and stop at the smallest safe proof allowed by your policy.

- Do not use RCE payloads or worker-crashing tests against systems you do not own and have explicit permission to test.

What should go into a remediation ticket for CVE-2026-42945?

- Affected asset name and exposure level.

- NGINX or controller version before and after remediation.

- Package or image evidence proving the fix.

- Worker restart or pod rollout evidence.

- Config audit output for risky rewrite patterns.

- Temporary mitigation details if patching was delayed.

- Monitoring review for worker crashes or error spikes.

Closing judgment

CVE-2026-42945 deserves fast action because it sits in the request-handling path of a widely deployed edge server and can produce real worker crashes under a known configuration pattern. It also deserves precise language. The bug is not “every NGINX is instantly owned.” It is a memory corruption flaw where version, rewrite configuration, reachability, ASLR, and runtime deployment details decide the real exposure.

The practical answer is straightforward: upgrade to a fixed build or vendor-patched package, restart workers, audit rewrite rules for the trigger pattern, replace risky unnamed captures where needed, preserve evidence, and monitor for crash signals. The teams that handle this well will not be the ones with the loudest alert. They will be the ones that can prove, host by host and config by config, that the vulnerable path is gone.