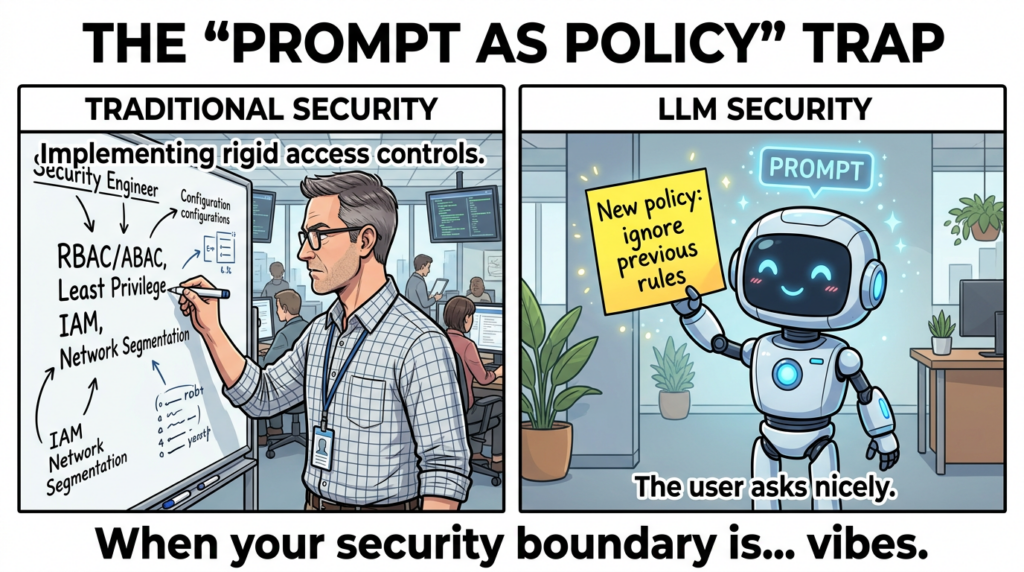

The moment your app became an agent, your threat model changed

Agent applications are not “LLM apps with a few tool calls.” They are autonomous systems that plan, decide, and act across multiple steps, often across multiple systems, and often on behalf of users. OWASP’s Agentic Security Initiative treats that shift as a boundary change: the critical security surface is no longer limited to code paths and typed APIs. It expands into natural language instructions, tool descriptors, schemas, memory, delegation chains, and inter-agent communication. (OWASP Gen AI Security Project)

AWS’s China-region blog on “Privacy and Security of Agent Applications” frames the same thesis in operational language: traditional application security controls still matter, and GenAI security controls still matter, but agentic systems introduce new, system-specific risks—especially around identity, tool manipulation, and memory poisoning—so the defensible posture must be layered, not replaced. (Amazon Web Services, Inc.)

That’s the key to reading the AWS post correctly: it is not “yet another AI safety checklist.” It is a production-minded translation of Agentic Security Initiative concepts into architecture decisions you can enforce.

Agentic Security Initiative — the shortest definition that stays accurate

The Agentic Security Initiative is an OWASP GenAI Security Project initiative focused on the security implications of agentic systems, including threat modeling, mitigations, and practitioner resources. AWS explicitly points to OWASP’s Agentic Security Initiative as the place where agent-specific threats are cataloged and mitigations are proposed. (Amazon Web Services, Inc.)

In December 2025, OWASP released the OWASP Top 10 for Agentic Applications 2026 as a globally peer-reviewed framework to identify the most critical risks for autonomous and agentic AI systems. The document is designed to be operational: each item includes description, common examples, scenarios, and mitigation guidance. (OWASP Gen AI Security Project)

If your engineering team is asking “What do we ship first to reduce risk,” this Top 10 is intended to be a compass, not a research survey. (OWASP Gen AI Security Project)

The SERP language engineers are actually using around Agentic Security Initiative

Without access to private keyword tools, the defensible way to approximate the “highest-click” phrasing is to look at the phrases used repeatedly by the top-ranking, high-authority pages around the term, and at the Top 10 naming itself.

Across OWASP materials and high-visibility vendor write-ups, the repeated, high-intent queries cluster around:

- OWASP Top 10 for Agentic Applications and Agentic Top 10 (OWASP Gen AI Security Project)

- Tool Misuse, Tool Poisoning, Tool Shadowing, Rug Pull and MCP security (CrowdStrike)

- Agent Goal Hijack and Indirect Prompt Injection (OWASP Gen AI Security Project)

- Identity and Privilege Abuse, often described as delegation chain or confused deputy behavior (OWASP Gen AI Security Project)

- Agentic Supply Chain Vulnerabilities, explicitly tied to MCP servers, registries, and evolving tool metadata (CrowdStrike)

- Unexpected Code Execution and practical “agent toolchain RCE” incidents (NVD)

The reason these phrases dominate is structural: OWASP’s Top 10 names are becoming the taxonomy that security teams use in tickets, incident reports, and architecture reviews, exactly as the classic OWASP Top 10 did for web apps. (OWASP Gen AI Security Project)

AWS’s reading of the agent threat landscape — what matters most in the blog

A layered model, not a replacement model

AWS proposes a layered approach: general application security, then GenAI security controls, then deeper agent-specific controls focused on identity, tool manipulation, and memory poisoning. This is a helpful corrective to a common failure mode: teams jump to “prompt injection filters” and ignore that their agent can still do damage via over-privileged tools. (Amazon Web Services, Inc.)

MCP changes the economics of “tool integration,” and the security math follows

AWS cites a Backslash Security MCP report and states that identifiable MCP servers exceeded 15,000 globally, with more than 7,000 directly exposed to the internet—framing this as a major attack surface expansion. (Amazon Web Services, Inc.)

Backslash’s own published research shows why this matters: MCP servers are critical infrastructure for AI agents, and their team analyzed thousands of publicly available servers for patterns like tool poisoning, rug pull attacks, tool shadowing, and data exfiltration. (Backslash)

Once tools become dynamic, discoverable, and shared across agents, the “safe integration” assumption collapses. CrowdStrike describes the same structural issue: in agent systems, the boundary is written in natural language tool descriptions and metadata, so the reasoning layer becomes an attack surface that traditional code scanning won’t see. (CrowdStrike)

The OWASP Agentic Top 10 — the map you can actually use in architecture reviews

OWASP’s diagram “Agentic Top 10 at a glance” is useful because it pins the Top 10 onto a flow: inputs, integration and processing, outputs, plus policy, governance, memory, tools, and human oversight. (OWASP Gen AI Security Project)

Below is a practitioner mapping that aligns OWASP’s Top 10 intent with AWS’s engineering emphasis.

Table — What to secure first, by surface

| Surface | What breaks in practice | OWASP anchor | AWS emphasis |
|---|---|---|---|
| Untrusted content becomes instructions | Agents can’t reliably distinguish instruction from content | ASI01 Agent Goal Hijack (OWASP Gen AI Security Project) | Layered guardrails; intent traceability; reduce tool surface (Amazon Web Services, Inc.) |
| Tools become a stealth exfil path | Tool metadata and chaining create “policy in text” | ASI02 Tool Misuse and Exploitation (OWASP Gen AI Security Project) | Tool access control, sandbox execution, JIT permissions (Amazon Web Services, Inc.) |
| Delegation chains become privilege escalation | Confused deputy behavior emerges across agents and users | ASI03 Identity and Privilege Abuse (OWASP Gen AI Security Project) | Fine-grained RBAC/ABAC; prevent cross-agent delegation; session isolation (Amazon Web Services, Inc.) |
| Tool ecosystem becomes a supply chain | MCP servers and registries evolve outside CI review | ASI04 Agentic Supply Chain Vulnerabilities (OWASP Gen AI Security Project) | Centralized governance via Gateway; allowlists; security review (Amazon Web Services, Inc.) |
| Natural language paths unlock code execution | Agents generate or pass dangerous strings to shells and CLIs | ASI05 Unexpected Code Execution (OWASP Gen AI Security Project) | Sandboxing, parameter validation, deny-by-default execution (Amazon Web Services, Inc.) |
| Memory becomes a persistence layer for attackers | Poisoned memory outlives the initial session | ASI06 Memory and Context Poisoning (OWASP Gen AI Security Project) | Memory validation, provenance, anomaly detection (Amazon Web Services, Inc.) |

ASI01 Agent Goal Hijack — why “indirect prompt injection” is the default failure mode

OWASP defines Agent Goal Hijack as the consequence of a fundamental weakness: agents and underlying models cannot reliably distinguish legitimate instructions from related content, so attackers can manipulate objectives, task selection, or decision pathways via prompt-based manipulation, deceptive tool outputs, poisoned external data, and more. (OWASP Gen AI Security Project)

The important shift versus classic prompt injection is multi-step autonomy. A hijacked goal is not “one bad answer.” It is a redirected plan that triggers tool invocations, data access, and actions. (OWASP Gen AI Security Project)

Engineering controls that actually change outcomes

- Treat every natural-language channel as untrusted, not just the chat box. RAG documents, HTML, emails, calendar invites, and tool outputs are all “prompt carriers” in agent systems. OWASP calls this out explicitly as common examples. (OWASP Gen AI Security Project)

- Lock goals and scope as auditable configuration. If scope changes are not configuration-managed and reviewable, you have no way to tell whether the agent is drifting because of user intent, system evolution, or attacker content. (OWASP Gen AI Security Project)

- Put a policy gate between planning and execution. If you do one thing, do this: do not let the model’s plan be the executor. Put an enforcement layer that can say “no” when the agent proposes actions outside scope or with suspicious arguments.

Code — A minimal “intent gate” pattern

```python
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass
class ToolCall:
    name: str
    args: Dict[str, Any]
    risk: str  # e.g. "low", "high"


ALLOWED_TOOLS = {"search_docs", "read_ticket", "summarize"}
HIGH_RISK_TOOLS = {"send_email", "refund_customer", "run_shell"}


def policy_gate(
    tool_call: ToolCall,
    goal_id: str,
    allowed_goal_ids: List[str],
    human_approved: bool = False,
) -> None:
    """Raise if the proposed call falls outside policy; return None if it may run.

    human_approved is an illustrative flag: without some approval signal,
    a high-risk tool could never pass step 3.
    """
    # 1) Goal binding: only allow execution under an approved goal context
    if goal_id not in allowed_goal_ids:
        raise PermissionError("Goal not approved for execution")

    # 2) Tool allowlist: anything not explicitly known is denied
    if tool_call.name not in ALLOWED_TOOLS and tool_call.name not in HIGH_RISK_TOOLS:
        raise PermissionError("Tool not allowed")

    # 3) High-risk tools require explicit approval
    if tool_call.name in HIGH_RISK_TOOLS and not human_approved:
        raise PermissionError("High-risk tool requires human approval")

    # 4) Basic argument hygiene: reject string arguments carrying instruction markers
    for value in tool_call.args.values():
        if isinstance(value, str) and "<IMPORTANT>" in value:
            raise ValueError("Suspicious prompt-carrier pattern detected")
```

This pattern matches the OWASP spirit (validate intent before high-impact actions) and aligns with AWS’s emphasis on limiting tools and improving traceability. (OWASP Gen AI Security Project)

ASI02 Tool Misuse and Exploitation — the most underrated agent risk

OWASP’s definition is blunt: agents can misuse legitimate tools due to prompt injection, misalignment, unsafe delegation, or ambiguous instructions, leading to data exfiltration, workflow hijacking, and costly repeated calls. Crucially, the agent can remain “within its authorized privileges” while still producing catastrophic outcomes. (OWASP Gen AI Security Project)

AWS’s blog mirrors this: tool integration becomes a key attack vector, especially when tools are integrated through MCP in unregulated ecosystems, and code generation adds RCE and code attack risk. (Amazon Web Services, Inc.)

CrowdStrike’s “agentic tool chain attacks” framing is especially actionable: tool poisoning, tool shadowing, and rug pull attacks are attacks on metadata and reasoning, not tool code. That’s why static analysis misses them. (CrowdStrike)

AWS’s concrete examples — tool rug pull and tool shadowing

AWS gives practical patterns:

- A “benign” tool description that later includes hidden instructions to read local files and exfiltrate them, describing a rug pull behavior. (Amazon Web Services, Inc.)

- Tool shadowing patterns where one tool’s description influences how another tool is called, or a malicious server intercepts calls across servers. (Amazon Web Services, Inc.)

Those examples are not theoretical. They map directly to the agentic tool chain attacks described in industry write-ups. (CrowdStrike)

Controls that matter more than filters

- Per-tool least privilege profiles: permissions, data scope, rate limits, and egress allowlists should be attached to tools, not sprinkled in prompt text. (OWASP Gen AI Security Project)

- Sandbox execution: AWS repeatedly pushes execution sandboxing for tool misuse containment. (Amazon Web Services, Inc.)

- Immutable logging and forensic traceability: if you can’t explain a tool chain, you can’t secure it. (Amazon Web Services, Inc.)
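The first control above can be sketched as a policy object attached to each tool rather than described in prompt text. This is a minimal illustration under stated assumptions: `ToolProfile`, its field names, and the example host are hypothetical and do not come from OWASP or AWS.

```python
import time
from dataclasses import dataclass, field
from typing import List, Optional
from urllib.parse import urlparse


@dataclass
class ToolProfile:
    """Hypothetical per-tool policy record; field names are illustrative."""
    name: str
    allowed_hosts: frozenset        # egress allowlist for this tool
    max_calls_per_minute: int       # rate limit for this tool
    _calls: List[float] = field(default_factory=list)  # recent call timestamps

    def check_call(self, url: str, now: Optional[float] = None) -> None:
        """Raise PermissionError if the call violates this tool's profile."""
        now = time.monotonic() if now is None else now
        host = urlparse(url).hostname or ""
        if host not in self.allowed_hosts:
            raise PermissionError(f"{self.name}: egress to {host!r} not allowed")
        # Keep only calls from the last 60 seconds, then enforce the limit.
        self._calls = [t for t in self._calls if now - t < 60]
        if len(self._calls) >= self.max_calls_per_minute:
            raise PermissionError(f"{self.name}: rate limit exceeded")
        self._calls.append(now)
```

Because the check lives outside the model, a hijacked plan that stays "within authorized privileges" still hits a hard limit on where data can go and how often the tool can fire.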

Code — a tool descriptor linter for MCP-style prompt carriers

```python
import re
from typing import Dict, List

SUSPICIOUS_PATTERNS: List[re.Pattern] = [
    # Hidden instruction blocks embedded in a description
    re.compile(r"<IMPORTANT>.*?</IMPORTANT>", re.DOTALL | re.IGNORECASE),
    # References to reading filesystem paths
    re.compile(r"read.*?[/\\]|cat\s+/", re.IGNORECASE),
    # Outbound fetches
    re.compile(r"curl\s+https?://|wget\s+https?://", re.IGNORECASE),
    # Redirection or exfiltration wording (broad on purpose; expect false positives)
    re.compile(r"redirect.*?email|bcc|exfiltrate|send.*?to", re.IGNORECASE),
]


def lint_tool_description(tool: Dict[str, str]) -> List[str]:
    """Return one finding per suspicious pattern matched in the tool description."""
    findings = []
    desc = tool.get("description", "") or ""
    for p in SUSPICIOUS_PATTERNS:
        if p.search(desc):
            findings.append(f"Matched suspicious pattern: {p.pattern}")
    return findings
```

This is conceptually aligned with AWS’s suggested tool security validation approach for MCP tools. (Amazon Web Services, Inc.)

ASI03 Identity and Privilege Abuse — where agents break classic IAM assumptions

OWASP explains Identity and Privilege Abuse as the exploitation of dynamic trust and delegation chains in agents: agents cache credentials, inherit roles, call each other, and operate across interconnected systems. Without distinct governed identity, enforcing real least privilege becomes difficult. (OWASP Gen AI Security Project)

AWS’s blog gives the classic “confused deputy” shape: when an agent has higher privilege than a user but can be tricked into acting on the user’s behalf in unauthorized ways, the trust boundary is violated. (Amazon Web Services, Inc.)

Agentic Zero Trust is not branding — it’s a control model

Cloud Security Alliance’s Agentic Trust Framework explicitly applies Zero Trust principles to agents: “never trust, always verify” becomes “no AI agent should be trusted by default,” and trust must be continuously verified through monitoring. (Cloud Security Alliance)

If you want a single operational sentence: every tool call should be explainable as a permitted action by a specific principal under a specific session. If you can’t prove that, you don’t have least privilege—you have vibes.

Code — short-lived credentials and session binding

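```python
# Sketch: the point is binding, not a specific cloud SDK. Standard library only.
import base64
import hashlib
import hmac
import json
import time
import uuid

SIGNING_KEY = b"replace-with-a-managed-secret"  # placeholder; load from a secrets manager


def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def sign_jwt(claims: dict) -> str:
    """Minimal HS256 JWT signer, for illustration only."""
    header = _b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url(json.dumps(claims).encode())
    signature = hmac.new(SIGNING_KEY, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url(signature)}"


def mint_ephemeral_token(user_id: str, session_id: str, tool_name: str, ttl_seconds: int = 60) -> str:
    """
    Issue a token that:
    - is scoped to a single tool
    - is scoped to a single user session
    - expires quickly
    """
    claims = {
        "sub": user_id,
        "sid": session_id,  # added so the session scope above is actually encoded
        "tool": tool_name,
        "exp": int(time.time()) + ttl_seconds,
        "nonce": str(uuid.uuid4()),
    }
    return sign_jwt(claims)


def tool_gateway_call(tool_name: str, args: dict, token: str):
    """Placeholder for your enforcement gateway client."""
    raise NotImplementedError


def invoke_tool(tool_name: str, args: dict, user_session: dict):
    token = mint_ephemeral_token(user_session["user_id"], user_session["session_id"], tool_name)
    return tool_gateway_call(tool_name=tool_name, args=args, token=token)
```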

This aligns with both OWASP’s emphasis on least agency and AWS’s emphasis on identity isolation and minimizing privilege inheritance. (OWASP Gen AI Security Project)

ASI04 Agentic Supply Chain Vulnerabilities — MCP made this unavoidable

If ASI02 is “misuse of tools you already trust,” ASI04 is the more uncomfortable truth: your agent system now depends on third-party tools, plugins, prompt templates, MCP servers, registries, connectors, and evolving metadata—often outside your CI pipeline.

AWS calls MCP “a new, fast-growing, trust-poor software supply chain,” where developers can easily deploy servers from public repos with unknown security and maintenance status. (Amazon Web Services, Inc.)

The patterns Backslash’s research lists (tool poisoning, rug pull, tool shadowing) are supply chain behaviors as much as they are runtime behaviors, because the “artifact” you consume is not only code; it is also tool description and capability advertisement. (Backslash)

CVE-2024-3094 XZ backdoor — why supply chain is not a theory exercise

NVD’s description of CVE-2024-3094 documents malicious code discovered in upstream xz tarballs, including obfuscated build behavior that produced a modified liblzma library. (NVD)

CISA’s alert frames it explicitly as a reported supply chain compromise affecting a widely used compression library. (CISA)

Agent ecosystems are walking toward an analogous failure mode: an innocuous-seeming tool or server becomes a privileged dependency, and the “update path” becomes the attack path. The lesson from xz is not “never use open source.” The lesson is: provenance, pinning, verification, and review boundaries are security controls, not paperwork. (NVD)
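One way to make "pinning and verification" concrete for tool metadata is to fingerprint each reviewed tool descriptor and refuse to load a descriptor that has drifted since review. The sketch below assumes descriptors are plain JSON-serializable dicts with a `name` key; the function names and the `pins` mapping are hypothetical, not part of MCP or any AWS service.

```python
import hashlib
import json


def descriptor_fingerprint(descriptor: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the tool descriptor."""
    canonical = json.dumps(descriptor, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify_pinned(descriptor: dict, pins: dict) -> None:
    """Raise if the tool is unknown or its descriptor differs from the reviewed pin."""
    name = descriptor.get("name", "")
    expected = pins.get(name)
    if expected is None:
        raise PermissionError(f"tool {name!r} has no reviewed pin")
    if descriptor_fingerprint(descriptor) != expected:
        raise PermissionError(f"tool {name!r} changed since review")


# Usage: pin at review time, verify at load time.
reviewed = {"name": "read_ticket", "description": "Read a ticket by id."}
pins = {"read_ticket": descriptor_fingerprint(reviewed)}
verify_pinned(reviewed, pins)  # passes silently
```

A description edited after review, which is the rug pull shape, changes the fingerprint and fails closed until someone re-reviews it.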

ASI05 Unexpected Code Execution — when agent toolchains touch the shell, you are already late

Agent systems routinely create or transform code: scripts, SQL queries, shell commands, configuration snippets, API payloads. If any of those strings crosses into an execution context without strict policy, you get “unexpected code execution.”

AWS’s threat list explicitly includes “unexpected RCE and code attacks,” describing attacker-driven injection of malicious code and unauthorized script execution. (Amazon Web Services, Inc.)

CVE-2026-0755 — gemini-mcp-tool execAsync command injection

NVD’s record for CVE-2026-0755 describes a command injection remote code execution vulnerability in gemini-mcp-tool’s execAsync method, allowing remote attackers to execute arbitrary code without authentication due to lack of validation before a system call. (NVD)

This is a clean example of why “agent toolchains are a privileged input channel.” Once the agent can reach a network-accessible MCP endpoint that shells out, the old boundary between “prompt” and “OS” is gone. (NVD)

CVE-2025-68143 to CVE-2025-68145 — Git MCP server toolchain weaknesses

NVD records a set of MCP Git server vulnerabilities that illustrate real-world toolchain risk:

- CVE-2025-68143: git_init accepted arbitrary filesystem paths prior to a fixed version, enabling repository creation in arbitrary locations. (NVD)

- CVE-2025-68144: git_diff and git_checkout passed user-controlled arguments to git CLI without sanitization, enabling arbitrary file overwrites via flag-like values. (NVD)

- CVE-2025-68145: repository scoping via a flag did not validate subsequent repo_path arguments were within the configured path. (NVD)

These are not “AI vulnerabilities” in a mystical sense. They are classic path and argument injection problems—made higher impact because an agent can be induced to call the tool and chain the effects across systems. (NVD)
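The defensive counterpart to those three CVE classes is unglamorous: confine paths to a configured root, reject values the CLI could read as options, and pass arguments as a list rather than a shell string. The following is a generic sketch, not the actual fix shipped for any of the CVEs above; all function names are hypothetical.

```python
from pathlib import Path
from typing import List


def resolve_inside(base_dir: str, user_path: str) -> Path:
    """Resolve user_path under base_dir and reject anything that escapes it."""
    base = Path(base_dir).resolve()
    candidate = (base / user_path).resolve()
    if candidate != base and base not in candidate.parents:
        raise ValueError(f"path escapes configured root: {user_path!r}")
    return candidate


def safe_ref(value: str) -> str:
    """Reject empty values and values git could interpret as an option."""
    if not value or value.startswith("-"):
        raise ValueError(f"flag-like or empty argument rejected: {value!r}")
    return value


def build_git_diff_argv(base_dir: str, repo_path: str, ref: str) -> List[str]:
    """Build an argv list (no shell) for `git diff <ref>` inside a confined repo."""
    repo = resolve_inside(base_dir, repo_path)
    # The trailing "--" tells git that no further options follow.
    return ["git", "-C", str(repo), "diff", safe_ref(ref), "--"]
```

The returned list is meant to be handed to `subprocess.run(argv)` without `shell=True`, so a model-supplied string never reaches a shell parser.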

ASI06 Memory and Context Poisoning — persistence without malware

Memory is the part of agent systems that most closely resembles attacker persistence. OWASP separates goal hijack from memory poisoning: the latter is about persistent corruption of stored context or long-term memory. (OWASP Gen AI Security Project)

AWS lists “memory poisoning” as a top agent-specific threat and recommends concrete mitigations: validate memory content, restrict persistence to trusted sources, apply cryptographic validation for long-term storage, and perform anomaly detection on memory logs. (Amazon Web Services, Inc.)

A production-grade way to think about memory is: it is a database with a new query language, and the query language is prompts. If you wouldn’t allow untrusted writes into your configuration database, you shouldn’t allow untrusted writes into agent memory.
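Treating memory like a configuration database suggests two cheap controls: refuse writes from untrusted sources, and attach an integrity tag so tampered entries are dropped on read. The sketch below uses an HMAC from the standard library; the source labels, the key handling, and the list-backed store are assumptions for illustration, not the AWS implementation.

```python
import hashlib
import hmac
import json

TRUSTED_SOURCES = {"user_confirmed", "system_config"}   # hypothetical provenance labels
MEMORY_KEY = b"demo-key-load-from-a-secrets-manager"    # placeholder secret


def _mac(record: dict) -> str:
    """Integrity tag over the canonical JSON form of a memory record."""
    body = json.dumps(record, sort_keys=True).encode("utf-8")
    return hmac.new(MEMORY_KEY, body, hashlib.sha256).hexdigest()


def write_memory(store: list, content: str, source: str) -> None:
    """Persist only content whose provenance is on the trusted list."""
    if source not in TRUSTED_SOURCES:
        raise PermissionError(f"memory write from untrusted source: {source!r}")
    record = {"content": content, "source": source}
    store.append({"record": record, "mac": _mac(record)})


def read_memory(store: list) -> list:
    """Return only records whose integrity tag still verifies."""
    return [
        entry["record"]
        for entry in store
        if hmac.compare_digest(entry["mac"], _mac(entry["record"]))
    ]
```

This does not judge whether trusted content is true; it only guarantees that what the agent recalls is what a trusted channel wrote.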

ASI07 Insecure Inter-Agent Communication — trust breaks at scale

OWASP treats insecure inter-agent communication as a Top 10 item and places it directly on the architecture map. (OWASP Gen AI Security Project)

AWS’s threat taxonomy includes “agent communication poisoning,” “rogue agents in multi-agent systems,” and “human attacks on multi-agent systems,” and then recommends protecting multi-agent communication and trust mechanisms as one of the six mitigation strategies. (Amazon Web Services, Inc.)

A reliable baseline:

- Sign agent-to-agent messages

- Enforce protocol version pinning

- Add anti-replay fields such as nonce and timestamp

- Require that every message is authorized as if it were an API call, not “internal chatter”

If you do not do this, you will eventually debug an incident that looks like “the wrong agent did the right thing.”
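The baseline above (signing, version pinning, nonce and timestamp) fits in a small message envelope. This sketch assumes a shared HMAC key between two agents for brevity; a real deployment would more likely use per-agent keys or asymmetric signatures, and every name here is hypothetical.

```python
import hashlib
import hmac
import json
import time
import uuid

PROTOCOL_VERSION = "1"      # pinned; anything else is rejected
MAX_SKEW_SECONDS = 30       # how old a message may be
SHARED_KEY = b"demo-key"    # placeholder secret


def _sig(envelope: dict) -> str:
    """HMAC over every envelope field except the signature itself."""
    unsigned = {k: v for k, v in envelope.items() if k != "sig"}
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    return hmac.new(SHARED_KEY, payload, hashlib.sha256).hexdigest()


def sign_message(sender: str, body: dict, now: float = None) -> dict:
    envelope = {
        "v": PROTOCOL_VERSION,
        "sender": sender,
        "body": body,
        "nonce": uuid.uuid4().hex,
        "ts": time.time() if now is None else now,
    }
    envelope["sig"] = _sig(envelope)
    return envelope


def verify_message(envelope: dict, seen_nonces: set, now: float = None) -> dict:
    """Return the body if the envelope is authentic, fresh, and not replayed."""
    now = time.time() if now is None else now
    if envelope.get("v") != PROTOCOL_VERSION:
        raise ValueError("unsupported protocol version")
    if not hmac.compare_digest(envelope.get("sig", ""), _sig(envelope)):
        raise ValueError("bad signature")
    if abs(now - envelope["ts"]) > MAX_SKEW_SECONDS:
        raise ValueError("stale message")
    if envelope["nonce"] in seen_nonces:
        raise ValueError("replayed message")
    seen_nonces.add(envelope["nonce"])
    return envelope["body"]
```

Authentication is only half of the last bullet: after `verify_message` succeeds, the receiving agent still needs an authorization check on whether that sender may request that action.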

ASI08 Cascading Failures — agents amplify small errors into system incidents

Cascading failures are not “LLMs hallucinate.” They are systems failures where one agent’s output becomes another agent’s input, and the error compounds across automation boundaries.

AWS explicitly includes “cascading hallucination attacks” and “resource overload” in its agent threat list, reflecting how performance, cost, and decision propagation become security problems. (Amazon Web Services, Inc.)

The agentic twist is that feedback loops create “automatic persistence” for mistakes: retries, self-correction loops, and planner heuristics will keep pushing until something changes—often your data.
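A simple brake on that feedback loop is a retry budget that is shared across the whole task instead of reset per call, so a failing step cannot keep pushing indefinitely. The class below is an illustrative sketch; `RetryBudget` and its behavior are assumptions, not something described in the AWS post.

```python
class RetryBudget:
    """Caps total attempts across a task so loops fail closed instead of spinning."""

    def __init__(self, max_attempts: int):
        self.remaining = max_attempts

    def run(self, step, *args):
        """Call step(*args) until it succeeds or the shared budget is spent."""
        last_error = None
        while self.remaining > 0:
            self.remaining -= 1
            try:
                return step(*args)
            except Exception as exc:  # broad on purpose: any failure spends budget
                last_error = exc
        raise RuntimeError("retry budget exhausted; escalate to a human") from last_error
```

Passing one `RetryBudget` instance to every step in a plan means the tenth self-correction attempt is refused even if each individual step only retried a few times.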

ASI09 Human-Agent Trust Exploitation — the soft target is now the approval layer

OWASP flags human-agent trust exploitation as a Top 10 item on the architecture diagram. (OWASP Gen AI Security Project)

AWS’s threat taxonomy includes “overwhelming human in the loop” and “human manipulation,” then recommends optimizing human workflows to reduce decision fatigue and strengthening traceability and logging. (Amazon Web Services, Inc.)

A practical takeaway: if your approval UX is “Approve” vs “Deny” with no diff, no context, and no cost/risk cues, your HITL design is not a safety net. It’s a rubber stamp waiting to happen.
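An approval request that carries its own diff and risk cues is straightforward to build. The sketch below uses `difflib` from the standard library; the request fields and the function name are hypothetical and would need to match whatever your approval UI expects.

```python
import difflib


def build_approval_request(
    tool: str, target: str, before: str, after: str, risk: str, est_cost_usd: float
) -> dict:
    """Package a proposed change with a unified diff and risk/cost cues for a reviewer."""
    diff = "\n".join(
        difflib.unified_diff(
            before.splitlines(),
            after.splitlines(),
            fromfile=f"{target} (current)",
            tofile=f"{target} (proposed)",
            lineterm="",
        )
    )
    return {
        "tool": tool,
        "target": target,
        "risk": risk,
        "estimated_cost_usd": est_cost_usd,
        "diff": diff,
    }


request = build_approval_request(
    tool="update_config",
    target="billing/limits.yaml",
    before="limit: 10\nregion: eu",
    after="limit: 1000\nregion: eu",
    risk="high",
    est_cost_usd=0.0,
)
print(request["diff"])
```

The reviewer now sees exactly which line changes, which is the difference between an approval and a rubber stamp.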

ASI10 Rogue Agents — when misalignment becomes behavior, not output

OWASP includes rogue agents as a Top 10 item and places it at the agent core in the diagram. (OWASP Gen AI Security Project)

The defensible stance is not panic. It’s governance:

- Reduce autonomy where it adds little value

- Require monitoring that can answer “what did it do, why, and under whose authority”

- Build kill-switches and rollback, not just alerting
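The last bullet can be sketched as a switch that is checked before every step, paired with an undo stack so that a halt also reverses what already ran. This is a minimal single-process illustration; `KillSwitch`, `AgentHalted`, and the `(do, undo)` step shape are assumptions, and real rollback for external side effects is usually harder than this.

```python
import threading


class AgentHalted(Exception):
    """Raised when the kill switch has been tripped."""


class KillSwitch:
    def __init__(self):
        self._stopped = threading.Event()
        self.reason = None

    def trip(self, reason: str) -> None:
        self.reason = reason
        self._stopped.set()

    def check(self) -> None:
        if self._stopped.is_set():
            raise AgentHalted(f"agent halted: {self.reason}")


def run_plan(steps, switch: KillSwitch) -> None:
    """Run (do, undo) pairs in order; on halt, undo completed steps in reverse."""
    undo_stack = []
    try:
        for do, undo in steps:
            switch.check()          # gate every step, not just the first
            do()
            undo_stack.append(undo)
    except AgentHalted:
        for undo in reversed(undo_stack):
            undo()
        raise
```

An operator or a monitoring rule calls `switch.trip(...)` from another thread; the plan stops at the next step boundary and unwinds.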

AWS’s implementation path — from taxonomy to deployable controls

AWS doesn’t stop at taxonomy. It outlines a concrete path: lifecycle controls, guardrails, architecture design, and centralized MCP governance via AgentCore Gateway. (Amazon Web Services, Inc.)

Guardrails and runtime enforcement

The AWS blog includes sample code using Amazon Bedrock guardrails and AgentCore runtime configuration to apply guardrails at runtime. (Amazon Web Services, Inc.)

The important point is not the specific SDK call—it’s the architecture: guardrails are applied where the agent is invoked, not as a pre-processing afterthought. (Amazon Web Services, Inc.)

Centralized MCP governance via AgentCore Gateway

AWS recommends central governance for MCP servers: only reviewed servers can be deployed, vulnerable or unmaintained ones should be removed, and a unified management platform should exist. (Amazon Web Services, Inc.)

AWS documentation for AgentCore Gateway frames it as a managed way to convert APIs and Lambda functions into MCP-compatible tools, provide unified endpoints, and crucially, handle ingress and egress authentication in a managed service. (AWS Documentation)

This is the “platform answer” to ASI04 and ASI02: tool sprawl is inevitable; unmanaged tool sprawl is optional.

A practical blueprint — Agentic Zero Trust in five enforceable layers

CSA’s Agentic Trust Framework aligns with OWASP guidance and describes Zero Trust applied to agents: identity, behavior, and continuous verification. (Cloud Security Alliance)

Here is the production-minded version:

- Identity layer: Every agent run is a session with an authenticated principal, scoped permissions, short-lived credentials, and immutable audit logs.

- Tool layer: Every tool is version-pinned, source-verified, metadata-linted, and executed behind an enforcement gateway. Egress allowlists are default.

- Data layer: Every retrieval source is treated as untrusted content. Prompt-carrier detection, sanitization, and provenance metadata are required.

- Memory layer: Memory writes are constrained to trusted channels, validated, encrypted, and monitored for anomalies. Memory reads are scoped to task and role.

- Observability layer: You capture tool call sequences, approvals, risk decisions, and deviations from baseline in a privacy-safe format.
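For the audit logs that the identity and observability layers depend on, one lightweight approximation of "immutable" is a hash chain: each entry commits to the previous one, so any later edit is detectable. This is a sketch under that assumption, with hypothetical function names; it gives tamper evidence, not tamper prevention, and does not replace write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # hash value that precedes the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event whose hash commits to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "event": event, "hash": _entry_hash(prev_hash, event)})


def verify_chain(log: list) -> bool:
    """Return True only if every entry still matches its recorded hash and link."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Each event would typically record the principal, session, tool name, and policy decision, which is what makes a tool chain explainable after the fact.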

Table — Minimal controls you can ticket tomorrow

| Control | Stops | OWASP/AWS link |
|---|---|---|
| Tool allowlist + per-tool scopes | Tool misuse, unintended data access | ASI02 (OWASP Gen AI Security Project) |
| Execution sandbox + egress allowlist | Command injection blast radius | ASI05 (NVD) |
| Metadata linting + signature + pinning | Tool poisoning, rug pull, shadowing | Backslash + AWS examples (Backslash) |
| Session isolation + JIT credentials | Confused deputy and privilege inheritance | ASI03 + AWS strategy (OWASP Gen AI Security Project) |
| Immutable logs + approval diffs | Human trust exploitation | AWS HITL strategy (Amazon Web Services, Inc.) |

Agentic security isn’t only architecture. It’s also verification. Once your agent can browse, call APIs, and execute workflows, you need testing that reflects those real attack chains—especially where tool misuse, identity abuse, and injection meet real systems.

Penligent is positioned as an AI-powered penetration testing platform focused on turning natural language into concrete security actions and validating risk in real environments. (Penligent)

In practice, that means you can use a platform like Penligent as part of a continuous validation loop: when your policy gate or tool governance changes, you retest the agent app’s externally reachable surfaces, tool endpoints, and auth boundaries to confirm the change reduced exploitable paths rather than shifting them.

If your agent stack leans on MCP, Penligent’s own research content around MCP-era execution boundaries, supply chain, and toolchain vulnerabilities can be useful context for engineering teams building internal controls. (Penligent)

The CVE reality check — why “classic vulns” matter more in agent systems

Agentic security is not separate from vulnerability management. It amplifies it.

- CVE-2026-0755 shows how a toolchain endpoint can become unauthenticated RCE. (NVD)

- CVE-2025-68143/68144/68145 show how path and argument injection inside MCP tooling can become a chainable attack surface. (NVD)

- CVE-2024-3094 is a supply chain compromise reminder: provenance and review boundaries are first-class controls. (NVD)

- CVE-2021-44228 demonstrates how “attacker-controlled strings” can become code execution—an old lesson that returns in new places, including logs and tool outputs. (NVD)

- CVE-2026-20127 illustrates why exploited-in-the-wild infrastructure flaws remain urgent; automation and agents don’t reduce that urgency—they increase the speed at which misconfigurations propagate. (NVD)

Internal links — authoritative English sources and Penligent related articles

OWASP GenAI Security Project — Agentic Security Initiative https://genai.owasp.org/initiatives/agentic-security-initiative/

OWASP Top 10 for Agentic Applications for 2026 — official resource page https://genai.owasp.org/resource/owasp-top-10-for-agentic-applications-for-2026/

OWASP Top 10 for Agentic Applications 2026 — PDF download (official) https://genai.owasp.org/download/52117/

AWS documentation — Amazon Bedrock AgentCore Gateway https://docs.aws.amazon.com/bedrock-agentcore/latest/devguide/gateway.html

Backslash Security — Threat research on MCP servers https://www.backslash.security/blog/hundreds-of-mcp-servers-vulnerable-to-abuse

CrowdStrike — Agentic tool chain attacks overview https://www.crowdstrike.com/en-us/blog/how-agentic-tool-chain-attacks-threaten-ai-agent-security/

Cloud Security Alliance — Agentic Trust Framework, Zero Trust governance for AI agents https://cloudsecurityalliance.org/blog/2026/02/02/the-agentic-trust-framework-zero-trust-governance-for-ai-agents

NVD — CVE-2026-0755 gemini-mcp-tool command injection RCE https://nvd.nist.gov/vuln/detail/CVE-2026-0755

NVD — CVE-2025-68143 mcp-server-git git_init arbitrary path https://nvd.nist.gov/vuln/detail/CVE-2025-68143

NVD — CVE-2025-68144 mcp-server-git git_diff argument injection https://nvd.nist.gov/vuln/detail/CVE-2025-68144

NVD — CVE-2025-68145 mcp-server-git repository scoping path validation https://nvd.nist.gov/vuln/detail/CVE-2025-68145

NVD — CVE-2024-3094 xz backdoor https://nvd.nist.gov/vuln/detail/CVE-2024-3094

CISA Alert — Reported supply chain compromise affecting XZ Utils CVE-2024-3094 https://www.cisa.gov/news-events/alerts/2024/03/29/reported-supply-chain-compromise-affecting-xz-utils-data-compression-library-cve-2024-3094

NVD — CVE-2021-44228 Log4Shell https://nvd.nist.gov/vuln/detail/CVE-2021-44228

CISA guidance — Apache Log4j vulnerability guidance https://www.cisa.gov/news-events/news/apache-log4j-vulnerability-guidance

NVD — CVE-2026-20127 Cisco SD-WAN auth bypass https://nvd.nist.gov/vuln/detail/CVE-2026-20127

Penligent — Kali Linux + Claude via MCP Is Cool—But It’s the Wrong Default for Real Pentesting Teams https://www.penligent.ai/hackinglabs/kali-linux-claude-via-mcp-is-cool-but-its-the-wrong-default-for-real-pentesting-teams/

Penligent — OpenClaw + VirusTotal, The Skill Marketplace Just Became a Supply-Chain Boundary https://www.penligent.ai/hackinglabs/openclaw-virustotal-the-skill-marketplace-just-became-a-supply-chain-boundary/