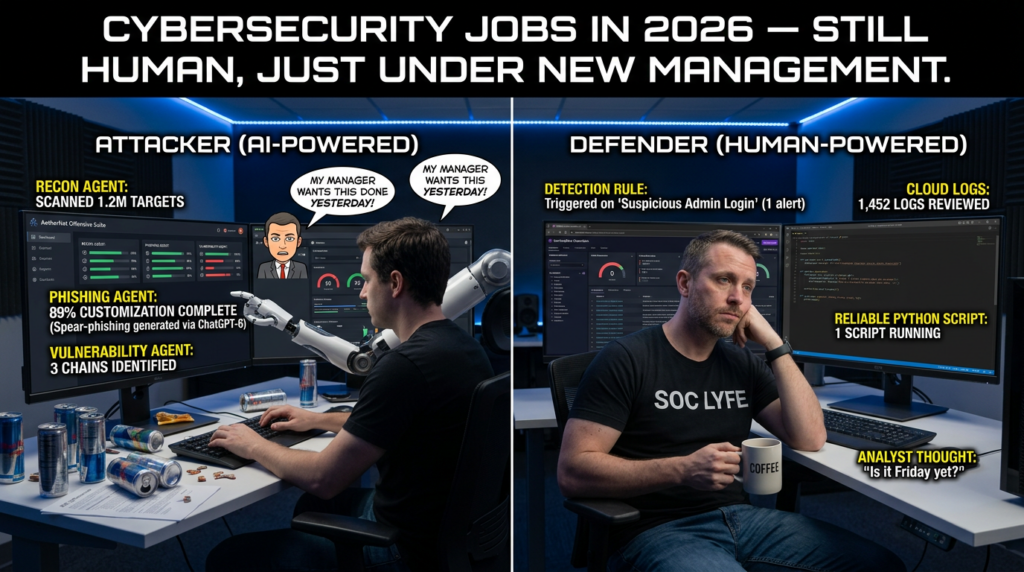

AI is no longer just helping security researchers write cleaner one-liners or summarize a stack trace. Public evidence from late 2025 through early 2026 shows a sharper shift: the best models are getting materially better at bounded offensive-security tasks, external researchers are using AI across day-to-day workflows, and AI-native products are now formal bug bounty targets with their own reward criteria. The UK AI Security Institute says cyber task capability is improving quickly, with the length of cyber tasks that frontier systems can complete unassisted doubling roughly every eight months. Lyptus Research’s offensive-cyber study reports a 9.8-month doubling time across 2019 to 2026 models, steepening to 5.7 months when restricted to the 2024-and-later frontier. (AI Security Institute)

That does not mean AI can replace a strong hunter. It does mean the old mental model is broken. If you still think AI is mainly a faster autocomplete layer for ffuf, regexes, and boilerplate PoCs, you are underestimating the change. Lyptus measured recent frontier models around the point where they succeed half the time on offensive-security tasks that take human experts just over three hours, and it reported substantially higher performance when token budgets were expanded, while explicitly warning that its results are lower bounds and limited to bounded, verifiable subtasks rather than full real-world operations. METR’s broader “time horizon” framing matters here too: the metric is about the human difficulty of a task, not about an agent being left alone for that exact duration. (lyptusresearch.org)

Recent public incidents make the point more concrete. Anthropic said in November 2025 that it disrupted what it described as the first documented large-scale cyberattack executed without substantial human intervention, with attackers using Claude Code across roughly thirty targets. In March 2026, FreeBSD published an advisory for CVE-2026-4747, a remote code execution issue in RPCSEC_GSS, crediting “Nicholas Carlini using Claude, Anthropic.” On April 3, 2026, NVD published CVE-2026-31402 for a Linux kernel NFS replay-cache heap overflow that public reporting linked to Claude-assisted discovery. (anthropic.com)

Bug bounty has changed with that backdrop. HackerOne says its latest report is built on more than 580,000 validated vulnerabilities and shows 210 percent growth in valid AI vulnerability reports, led by prompt injection. Bugcrowd’s 2026 Inside the Mind of a Hacker report says it analyzed over 2,000 survey responses, that about 82 percent of hackers are already using AI in their workflow, and that common uses include automating tasks, analyzing data, speeding up recon, triage, and code analysis. (HackerOne)

The right conclusion is not “let the model hack for you.” The right conclusion is that bug bounty now rewards hunters who know exactly where AI belongs in the workflow, where it does not, and how to keep it boxed inside program rules, test safety, and evidence discipline.

AI bug bounty starts with authorization, not prompts

Every good workflow starts with boring documents. That is not a moral lecture. It is what keeps your speed from turning into liability.

The U.S. Department of Justice’s 2022 CFAA charging guidance says good-faith security research should not be charged and defines it as access carried out solely for good-faith testing, investigation, or correction of a security flaw, in a manner designed to avoid harm, with the results used primarily to promote security or safety. That matters, but it is not a universal shield. It does not replace the need to stay inside a program’s authorization, scope, and disclosure rules. (justice.gov)

Modern bug bounty and disclosure programs increasingly formalize those boundaries. CISA’s vulnerability disclosure policy template exists to help organizations define covered systems, how research should be reported, and what conduct is authorized. disclose.io standardizes safe-harbor language around authorization, anti-circumvention claims, limited Terms of Service waivers, and organizational support when research follows the policy. HackerOne expanded that logic in January 2026 with a Good Faith AI Research Safe Harbor, explicitly aimed at reducing legal ambiguity around responsible testing of AI systems. (cisa.gov)

This matters more when AI enters the loop, because AI makes it easy to move faster than your judgment. The model will not protect you from fuzzing the wrong host, touching an out-of-scope mobile backend, replaying state-changing requests on production assets, or misreading a ban on destructive testing. It will often speak confidently about scope even when it has no durable internal representation of the program brief. That is why the mature order of operations is simple: read the brief yourself, mark the boundaries yourself, then use AI to compress and organize what you already know.

A practical way to do that is to build a one-page scope sheet before you do any serious testing. The model can help create it, but only after you feed it the program brief and force it into a constrained extraction task. The sheet should answer five questions: what assets are explicitly in scope, what asset classes are explicitly out of scope, what forms of automated testing are prohibited or rate-limited, what disclosure restrictions apply, and what kinds of proof the program historically expects. That is the first place AI saves time without increasing risk.
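
One way to make the scope sheet usable during testing is to keep it as a small data structure and check every host against it before you touch it. The sketch below is a minimal illustration, not a standard format: the `ScopeSheet` class, its field names, and the `example.com` hosts are all hypothetical, and the wildcard matching assumes the brief expresses scope as simple hostname patterns.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch
from typing import List


@dataclass
class ScopeSheet:
    """One-page summary of a program brief. Fill it in from your own reading."""
    in_scope: List[str] = field(default_factory=list)           # explicit assets, wildcards allowed
    out_of_scope: List[str] = field(default_factory=list)       # explicit exclusions
    automation_limits: List[str] = field(default_factory=list)  # prohibited or rate-limited testing
    disclosure_rules: List[str] = field(default_factory=list)   # disclosure restrictions
    expected_proof: List[str] = field(default_factory=list)     # proof the program expects

    def allows(self, host: str) -> bool:
        """True only if host matches an in-scope pattern and no out-of-scope pattern."""
        host = host.lower()
        if any(fnmatch(host, p.lower()) for p in self.out_of_scope):
            return False  # exclusions always win over inclusions
        return any(fnmatch(host, p.lower()) for p in self.in_scope)


# Hypothetical program: every value here is made up for illustration.
sheet = ScopeSheet(
    in_scope=["*.example.com"],
    out_of_scope=["mobile-api.example.com"],
    automation_limits=["no automated scanning above 5 requests/second"],
    disclosure_rules=["no public disclosure without written approval"],
    expected_proof=["raw request/response pair", "two test accounts"],
)

for candidate in ("app.example.com", "mobile-api.example.com", "example.org"):
    print(candidate, "->", "in scope" if sheet.allows(candidate) else "do not touch")
```

The deny-first ordering is deliberate: an asset that matches both lists is treated as out of scope, which is the safer reading when a brief is ambiguous.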

The right mental model for AI in bug bounty

The best way to think about AI in bug bounty is not as an autonomous attacker, but as a bounded analyst attached to a disciplined operator. If you use it that way, it becomes extremely valuable.

AI is strong at turning noise into structure. It can ingest long HTTP transcripts, JavaScript bundles, CLI output, GraphQL introspection results, mobile API traces, changelogs, and patch diffs, then produce an index of likely attack surfaces much faster than a tired human can. It can cluster similar endpoints, group parameters by likely semantics, extract candidate object identifiers, compare role-based responses, summarize code you do not want to read line by line, and turn rough notes into a report skeleton. Bugcrowd’s 2026 research reflects exactly that pattern: hackers describe using AI to automate menial tasks, analyze ugly code and large codebases, triage scanner noise, remove duplicates, and spend more time on the creative part of the work. (Bugcrowd)

AI is weak when asked to infer certainty from weak evidence. It loves producing plausible theories of impact before you have proven a boundary was crossed. It often overreads indirect signals like timing differences, ambiguous HTTP codes, reflected values, or frontend-only state changes. It will also happily generate test plans that violate a program’s rules if you phrase the prompt carelessly or fail to supply the right constraints. This is why experienced testers increasingly want AI embedded into stateful tools rather than floating in isolated chat tabs. PortSwigger’s own framing of Burp AI is telling: it emphasizes validation, exploration, repetitive-task relief, and keeping the tester in control. (portswigger.net)

That “keep the tester in control” line is not marketing fluff. It is the central design principle of any AI stack that belongs anywhere near real bug bounty work. If the model cannot see the exact request you are mutating, the exact response you got back, the exact role or session state attached to that response, and the exact boundaries of the program, then it is mostly guessing. Fluent guessing is the raw material of low-quality submissions.

The good use of AI is narrower and more powerful: reduce reading time, preserve state, propose deltas, organize evidence, and surface promising comparisons. Human judgment still decides whether the claim is real.

Where AI actually helps bug bounty hunters

Most bug bounty time is not spent on the moment of discovery. It is spent in the hard middle. That is where AI helps.

The hard middle includes sorting recon output, figuring out which hosts deserve attention, reading minified or half-minified JavaScript, understanding how two users’ responses differ, mapping object IDs across workflows, comparing tenants or roles, turning one suspicious response into a repeatable test, and turning a working note into a report a triager can validate. OWASP’s WSTG still matters because it gives you the test map; AI helps you move through that map faster. OWASP’s API guidance still matters because BOLA and related authorization failures remain central in real targets. (owasp.org)

A helpful way to frame AI assistance is by job type rather than tool type.

| Job in the workflow | Why it is expensive manually | What AI can do well | What still needs you |
|---|---|---|---|
| Scope parsing | Program briefs are messy and nuanced | Extract constraints, prohibited actions, disclosure notes | Final interpretation |
| Recon triage | Too many hosts, endpoints, headers, and docs | Cluster assets, summarize likely tech, highlight anomalies | Choose what to touch |
| API auth testing | Roles, IDs, fields, and states multiply fast | Build role/object matrices, diff responses, suggest variants | Prove unauthorized access or write impact |
| JS and SPA analysis | Large bundles hide routes and logic | Summarize modules, extract endpoints, flag auth-sensitive flows | Verify backend behavior |
| Source-assisted research | Large codebases are slow to read | Summarize sinks, wrappers, patches, data flow | Confirm exploitability |
| Reporting | Triagers hate ambiguity and filler | Reformat notes into clean structure | Guarantee every claim is true |

That table is the practical core of AI bug bounty. The model is most valuable where the task is repetitive, state-heavy, text-heavy, or comparison-heavy. It is least trustworthy where the task requires harm judgment, program nuance, or final exploitability calls.

Build an AI-augmented bug bounty workflow

A productive 2026 workflow looks less like “ask one giant prompt” and more like a loop: capture, compress, hypothesize, validate, preserve, report.

Start with real traffic. Browse the application, sign up, log in with at least two accounts when the rules allow it, exercise core functionality, and capture everything in a proxy. Burp Repeater remains the perfect anchor for this because bug bounty is often won by changing one variable at a time and watching the delta. Burp’s own documentation still teaches the right instinct: resend interesting requests repeatedly to study how the application reacts to different input. Add AI on top of that, not instead of it. (portswigger.net)

Next, compress the noise. Feed the model captured requests and responses from one feature at a time. Ask it to identify objects, roles, state transitions, file flows, long-lived identifiers, hidden actions, and which parameters look user-controlled versus server-generated. Ask it what comparisons would falsify a hypothesis, not just what payload it would try. That single change in prompting matters. It pushes the model toward being a scientific assistant instead of a fiction engine.

Then validate with minimal movement. Do not ask AI to “fully exploit” a production target. Ask it how to prove or disprove a narrow boundary claim with the smallest safe delta. For example: compare two authenticated users’ access to the same invoice endpoint; test whether a workflow-relevant flag is server-enforced or frontend-only; check whether an upload pipeline can be redirected into another user’s namespace without causing damage. The best bug bounty testing is often one step short of damage and still enough for acceptance.

After that, preserve the evidence before you write prose. Save raw requests and responses, screenshots, role labels, timestamps, and the exact artifacts that show the boundary crossed. This is where an operator-controlled agentic environment is more useful than a generic chat tab. Penligent’s public materials emphasize scope locking, operator control, and evidence-oriented workflows, and its bug-bounty-oriented pages frame AI as a way to preserve state, validate promising signals, and organize artifacts a triager can accept. That is a healthier model than “one magic prompt finds the bug.” (Penligent)
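
Evidence preservation can be as simple as writing the raw bytes to disk and logging a hash next to them. The following is a minimal sketch under stated assumptions: the `preserve` function, the `manifest.jsonl` file name, and the directory layout are hypothetical choices, and `label` is assumed to be safe to use in a file name.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def preserve(evidence_dir: Path, label: str, role: str,
             raw_request: bytes, raw_response: bytes) -> dict:
    """Write one request/response pair to disk and append a manifest entry."""
    evidence_dir.mkdir(parents=True, exist_ok=True)
    # UTC timestamp with microseconds keeps file names unique and sortable.
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%S%fZ")
    entry = {"label": label, "role": role, "captured_at": stamp, "files": {}}
    for kind, blob in (("request", raw_request), ("response", raw_response)):
        path = evidence_dir / f"{stamp}_{label}_{kind}.txt"
        path.write_bytes(blob)  # raw bytes, no re-encoding or prettifying
        entry["files"][kind] = {
            "name": path.name,
            "sha256": hashlib.sha256(blob).hexdigest(),
        }
    # Append-only manifest: one JSON object per line, never rewritten.
    with (evidence_dir / "manifest.jsonl").open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry
```

Hashing at capture time lets you show later that the artifact attached to the report is the same one you recorded during testing.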

Finally, draft only after the claim is stable. HackerOne says a quality report should be clear, detailed, and actionable, with a concise title and detailed reproduction steps. Bugcrowd says you need to explain where the bug was found, who it affects, how to reproduce it, what parameters it affects, and include supporting proof-of-concept information. It also explicitly notes that you cannot edit a submission after it is reported. (HackerOne Help Center)

That last point changes how you should use AI. Use it while the report is still a draft. Never use it to “finish” a report whose proof is still unstable.

AI for API bug bounty, the highest-ROI workflow today

If I had to pick one area where AI already pays for itself in bug bounty, it would be API authorization and object-level testing.

OWASP continues to place Broken Object Level Authorization at the top of API risk because APIs expose object identifiers everywhere, and attackers can often exploit those identifiers by manipulating values in paths, queries, headers, or bodies. That is exactly the kind of pattern AI is good at finding across a large traffic set. (owasp.org)

In a normal API bounty session, you might capture a hundred requests that all look slightly different: /orders/123, /orders/124, /tenant/abc/users/9f…, {"invoiceId":"..."}, X-Org-ID: ..., cursor=..., project_id=.... AI is useful because it can normalize those into a smaller set of hypotheses: object reads, object writes, property overexposure, role confusion, tenant routing, bulk endpoints, export endpoints, async job polling, and admin-only actions exposed through common schemas.
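
Part of that normalization can be done mechanically before a model sees anything. The sketch below collapses identifier-looking path segments into placeholders so that a hundred captured paths reduce to a short list of route shapes. The placeholder names and the "16 or more hex characters" threshold are assumptions chosen for illustration, and the helper names are hypothetical.

```python
import re
from collections import Counter
from typing import Iterable

UUID_RE = re.compile(
    r"^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$", re.I
)
HEX_RE = re.compile(r"^[0-9a-f]{16,}$", re.I)  # long hex tokens; threshold is a guess


def normalize_path(path: str) -> str:
    """Replace identifier-like segments with placeholders; drop the query string."""
    path = path.split("?", 1)[0]
    parts = []
    for seg in path.split("/"):
        if seg.isdigit():
            parts.append("{int}")
        elif UUID_RE.match(seg):
            parts.append("{uuid}")
        elif HEX_RE.match(seg):
            parts.append("{hex}")
        else:
            parts.append(seg)  # literal segment such as "orders" or a short slug
    return "/".join(parts)


def route_counts(paths: Iterable[str]) -> Counter:
    """Count how many captured paths share each normalized route shape."""
    return Counter(normalize_path(p) for p in paths)


captured = ["/orders/123", "/orders/124?expand=items", "/orders/export"]
for route, count in route_counts(captured).most_common():
    print(count, route)
```

Short slugs such as `abc` in a tenant path are left alone on purpose, because guessing which literals are identifiers is exactly the judgment call you want to make yourself or hand to the model with context.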

The best pattern is to give the model paired data. One role and another role. One user and another user. One object the user owns and one they do not. One successful request and one denied request. One response before a role change and one after. Then ask the model for structured comparisons: which object identifiers appear controllable, which fields appear sensitive, which actions lack obvious server-side enforcement, and which minimal mutations would be most diagnostic.
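
The paired data described above can be kept as a simple role-by-object matrix. This sketch assumes you have already recorded one HTTP status per (role, object) pair and that you know which role owns each object; the names `suspicious_cells`, `observed`, and `owners`, and the sample identifiers, are hypothetical.

```python
from typing import Dict, List, Tuple

# (role, object_id) -> HTTP status code you observed for that request
Observations = Dict[Tuple[str, str], int]


def suspicious_cells(observed: Observations,
                     owners: Dict[str, str]) -> List[Tuple[str, str, int]]:
    """Return (role, object_id, status) for every 2xx a non-owner received."""
    flagged = []
    for (role, obj), status in sorted(observed.items()):
        is_owner = owners.get(obj) == role
        if not is_owner and 200 <= status < 300:
            flagged.append((role, obj, status))
    return flagged


# Made-up observations from two test accounts and two invoices.
observed = {
    ("user_a", "inv_1"): 200,
    ("user_b", "inv_1"): 200,  # user_b does not own inv_1
    ("user_a", "inv_2"): 403,
    ("user_b", "inv_2"): 200,
}
owners = {"inv_1": "user_a", "inv_2": "user_b"}

for role, obj, status in suspicious_cells(observed, owners):
    print(f"lead: {role} got {status} on {obj}")
```

A flagged cell is a lead, not a finding: a 2xx status can still carry an empty or generic body, so the response content has to be compared before anything is claimed.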

Here is a small Python helper that flattens two JSON responses so you can compare them before handing the result to an LLM:

```python
import json
from typing import Any, Dict


def flatten(obj: Any, prefix: str = "") -> Dict[str, Any]:
    """Flatten nested JSON into {"a.b[0].c": value} pairs."""
    out: Dict[str, Any] = {}
    # Non-empty containers are walked; empty ones fall through and are kept
    # as leaf values so that "field is []" differs from "field is absent".
    if isinstance(obj, dict) and obj:
        for k, v in obj.items():
            new_prefix = f"{prefix}.{k}" if prefix else k
            out.update(flatten(v, new_prefix))
    elif isinstance(obj, list) and obj:
        for i, v in enumerate(obj):
            new_prefix = f"{prefix}[{i}]"
            out.update(flatten(v, new_prefix))
    else:
        out[prefix] = obj
    return out


def compare_json(a: Any, b: Any) -> None:
    """Print every flattened key whose value differs between the two responses."""
    fa = flatten(a)
    fb = flatten(b)
    all_keys = sorted(set(fa.keys()) | set(fb.keys()))
    for key in all_keys:
        va = fa.get(key, "<missing>")
        vb = fb.get(key, "<missing>")
        if va != vb:
            print(f"{key}\n user_a: {va}\n user_b: {vb}\n")


if __name__ == "__main__":
    # user_a.json and user_b.json hold the two saved response bodies.
    with open("user_a.json", "r", encoding="utf-8") as f:
        a = json.load(f)
    with open("user_b.json", "r", encoding="utf-8") as f:
        b = json.load(f)
    compare_json(a, b)
```

This script does not find a vulnerability by itself. What it does is make the interesting differences obvious. Once you have that output, the model can help you answer narrower questions: which changed fields look identity-bound, which look permission-bound, which should never be visible cross-user, and which deserve manual verification.

A safe and useful prompt for that stage looks like this:

```text
You are helping with an authorized bug bounty test.

I will give you:
1. The program scope notes
2. A baseline request/response pair for user A
3. A baseline request/response pair for user B
4. A flattened field diff

Your task:
- Identify fields that may indicate object-level or property-level authorization issues
- Separate "interesting but weak" from "high-priority to validate"
- Suggest the smallest non-destructive request mutations to confirm or disprove each hypothesis
- Do not assume a vulnerability exists unless the evidence supports it
- Do not suggest any action that changes or deletes production data
```

That framing keeps the model useful. It limits the blast radius, demands falsifiable tests, and stops the conversation from turning into “invent a critical.”

AI for JavaScript-heavy and SPA targets

Single-page apps and JS-heavy products are where a lot of hunters quietly waste days. The frontend ships more state and business logic than ever, route structure is fragmented, and authentication and authorization clues are scattered across bundles, GraphQL operations, feature flags, and client stores.

AI is good here for one reason: it reads ugly text faster than you do.

The goal is not to treat the model as an oracle that reverses an entire bundle for you. The goal is to reduce the amount of code you need to read with your own eyes. Feed it a single bundle or module at a time. Ask for routes, API calls, action names, role checks, upload handlers, AI-related tool invocations, document-processing paths, feature gates, and places where a user-controlled field appears to influence another subsystem. Ask it to return a test plan, not a prose summary. Then verify the endpoints in captured traffic or by controlled navigation.

A lightweight first pass for candidate endpoint extraction can be scripted before AI ever gets involved:

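```python
import re
from pathlib import Path

# Path to a locally saved copy of the bundle you want to scan.
JS_PATH = Path("app.bundle.js")
text = JS_PATH.read_text(encoding="utf-8", errors="ignore")

# Quoted string literals that look like API paths or absolute HTTPS URLs.
patterns = [
    r'"/api/[^"]+"',
    r"'\/api\/[^']+'",
    r'"\/v[0-9]+\/[^"]+"',
    r"'\/v[0-9]+\/[^']+'",
    r'"https:\/\/[^"]+"',
    r"'https:\/\/[^']+'",
]

hits = set()
for pattern in patterns:
    for match in re.findall(pattern, text):
        # Drop the surrounding quotes and deduplicate via the set.
        hits.add(match.strip("\"'"))

for item in sorted(hits):
    print(item)
```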

This kind of script is intentionally primitive. That is fine. Its job is recall, not precision. Once you have the raw list, AI can cluster it into “likely authenticated data endpoints,” “admin surfaces,” “export/download paths,” “upload pipelines,” “tenant settings,” or “AI assistant/tooling features.” That second pass saves huge amounts of attention.
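
If you want a rough pre-sort before the model does the real clustering, a keyword pass is enough to split the raw list into piles. This is a deliberately crude sketch: the bucket names mirror the categories mentioned above, but the keyword lists are assumptions for illustration, and substring matching will produce false positives.

```python
from collections import defaultdict
from typing import Dict, Iterable, List

# Bucket name -> substrings that hint at it. All keywords are illustrative guesses.
BUCKETS = {
    "admin": ("admin", "internal", "manage"),
    "export_download": ("export", "download", "report"),
    "upload": ("upload", "import", "attachment"),
    "tenant_settings": ("tenant", "org", "settings"),
    "ai_features": ("assistant", "chat", "completion", "agent"),
}


def bucket_endpoints(endpoints: Iterable[str]) -> Dict[str, List[str]]:
    """Group endpoints by keyword; an endpoint may land in several buckets."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for ep in endpoints:
        lowered = ep.lower()
        labels = [
            name for name, words in BUCKETS.items()
            if any(word in lowered for word in words)
        ]
        # Anything that matches no keyword is kept, not dropped.
        for label in labels or ["unclassified"]:
            grouped[label].append(ep)
    return dict(grouped)


sample = ["/api/admin/users", "/api/v1/export/csv", "/api/profile"]
for name, items in bucket_endpoints(sample).items():
    print(name, items)
```

The `unclassified` pile is usually the most interesting input for the model, since it holds everything the obvious keywords failed to explain.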

This is also the point where AI helps you separate frontend theater from backend reality. A lot of bounty work gets misled by client-side role checks, disabled buttons, hidden routes, or optimistic UI flows. The model can flag where the frontend appears to make security decisions, but the only truth is what happens when you replay the underlying HTTP request in a controlled way. Burp Repeater plus AI-assisted analysis is a very strong combination here because you keep the execution state grounded. (portswigger.net)

If you want one workspace that can combine browsing, tool execution, captured artifacts, and structured follow-up testing, that is the design space where operator-controlled agentic tooling starts making sense. Penligent’s public material and related articles repeatedly emphasize stateful validation, note-keeping, exploit packaging, and evidence rather than vague “AI does pentesting” promises. For bounty-adjacent work, that is the right product instinct: fewer guesses, more preserved context. (Penligent)

AI for source-assisted and open source bug bounty work

Open source bounty and source-assisted research are a different game from proxy-heavy web testing, but AI is helping there too.

The big shift is not that AI suddenly made static analysis obsolete. It is that static analysis and LLMs now fit together much better than people expected. CodeQL remains one of the best examples of that pairing. GitHub describes CodeQL as a semantic code analysis engine that lets you query code as though it were data, and specifically calls out finding all variants of a vulnerability. In practice, that means you can use CodeQL or another structural analysis tool to generate candidate flows, then use AI to explain wrapper functions, framework conventions, sink behavior, or historical patch deltas. (codeql.github.com)

That workflow matters because plain LLM code review still fails in predictable ways. It misreads custom framework semantics. It overstates reachability. It confuses user-controlled and server-controlled data when a call chain is large. It also tends to miss the importance of negative checks, parser edge cases, concurrency, lifetime, and ownership semantics unless you show it exactly where to look. Static analysis gives you structure. AI gives you speed in reading the structure.

The recent public cases are useful here not because they are bounty targets in the ordinary web sense, but because they show where the frontier has moved. FreeBSD’s March 26, 2026 advisory for CVE-2026-4747 credits “Nicholas Carlini using Claude, Anthropic” for a remote code execution issue in RPCSEC_GSS. Public reporting on April 3 said Claude-assisted work had also uncovered multiple Linux kernel vulnerabilities, including a bug hidden for 23 years, and NVD published CVE-2026-31402 for the NFS replay-cache heap overflow on April 3. (The FreeBSD Project)

The lesson for bounty hunters is not “go build kernel exploits with a chatbot.” The lesson is narrower and more practical. If AI can materially help researchers spot protocol-sensitive boundary errors and code-path assumptions in mature, low-level systems, then it can absolutely help you review a large SaaS codebase, open-source dependency, or self-hosted enterprise app faster than you used to. Especially in programs where source is available, patch diffs are public, or the target is an open-source project with a formal rewards program, the productivity gain is real.

A useful pattern is to ask AI to explain a patch, then ask what sibling sites or sibling handlers may still implement the same unsafe assumption. That is a much better use of a model than “find me bugs.”
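
The sibling-site question is easier to ask when you already have a list of candidate locations. The sketch below is a plain text search for other call sites of a function that a patch touched. It is not semantic analysis and is no substitute for a tool like CodeQL; the function name `find_call_sites` and the default file suffixes are assumptions.

```python
import re
from pathlib import Path
from typing import List, Sequence, Tuple


def find_call_sites(root: Path, func_name: str,
                    suffixes: Sequence[str] = (".c", ".h")) -> List[Tuple[str, int, str]]:
    """Return (relative_path, line_number, line) wherever func_name( appears."""
    pattern = re.compile(rf"\b{re.escape(func_name)}\s*\(")
    hits = []
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for lineno, line in enumerate(text.splitlines(), start=1):
            if pattern.search(line):
                hits.append((str(path.relative_to(root)), lineno, line.strip()))
    return hits
```

A call such as `find_call_sites(Path("src"), "memcpy")` gives you a short list of locations to paste into the model alongside the patch, so the question becomes "which of these share the assumption the patch fixed" rather than an open-ended hunt.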

AI-native targets are now real bug bounty surface

A lot of older “AI for bug bounty” content still assumes the target is a conventional web app and AI is just your assistant. That is no longer enough. The target itself may now be an AI product, an AI feature inside a conventional product, or an agent/tool ecosystem glued together with connectors, memory, retrieval, and stateful actions.

Google’s published reward criteria for bugs in AI products are one of the best public maps of what this looks like. Google explicitly says its scope aims to support testing for both traditional security vulnerabilities and risks specific to AI systems. The in-scope examples include invisible prompt injections that change the state of a victim’s account or assets, prompt injections into tools where the response drives decisions affecting victims, extraction of sensitive non-public training data, security-relevant misclassifications, and precise extraction of confidential model architecture or weights. Out of scope are things like ordinary jailbreaks in your own session, hallucinations, non-sensitive reconstruction, and harmful use cases already possible through other tooling. (Google Online Security Blog)

That distinction is extremely important. In AI bug bounty, the bug is not “the model said something bad.” The bug is that an adversary can drive security-relevant state change, extract sensitive information, bypass intended trust boundaries, or subvert a control in a way that harms another user, the platform, or a protected asset. Google’s April 2026 security blog on indirect prompt injection makes the same point operationally: it treats indirect prompt injection as an evolving threat, uses human and automated red teaming, relies on external researchers through the AI VRP, and runs discovered issues through reproduction, categorization, synthetic-data expansion, and end-to-end defense evaluation. (Google Online Security Blog)

That means an AI-native bounty workflow looks different from a classic XSS or IDOR workflow. You care about content ingestion, tool invocation, memory, action chains, policy engines, retrieval connectors, cross-user state leakage, and whether the model’s output can cause a privileged subsystem to do something it should not. You also care about whether there is a real victim path, not just a funny demo in your own account.

The practical implication is that AI can help you do bug bounty in two directions at once. It can help you hunt traditional products faster, and it can help you hunt the new class of AI-enabled products that now have public reward criteria and rapidly evolving attack surfaces.

Recent cases and CVEs that matter

The best articles on this topic do not stop at “AI is changing things.” They show where that change is landing.

CVE-2026-4747, FreeBSD RPCSEC_GSS remote code execution

FreeBSD-SA-26:08 says CVE-2026-4747 is a remote code execution issue in RPCSEC_GSS packet validation. The advisory explains that packet validation copied part of a packet into a stack buffer without ensuring the buffer was large enough, allowing a malicious client to trigger a stack overflow. The project credited “Nicholas Carlini using Claude, Anthropic,” said the issue affected all supported FreeBSD versions, and recommended upgrading to corrected branches because no workaround was available aside from not having the vulnerable module loaded. (The FreeBSD Project)

Why is that relevant to bug bounty? Not because most hunters are writing kernel RCE chains. It is relevant because it demonstrates that AI is crossing from syntax help into serious vulnerability reasoning. Even if you never touch a kernel target, the broader lesson is that advisory-to-understanding-to-repro workflows are accelerating.

CVE-2026-31402, Linux kernel NFS replay-cache heap overflow

NVD describes CVE-2026-31402 as a Linux kernel vulnerability in which the NFSv4.0 replay cache used a fixed 112-byte buffer that did not account for LOCK denied responses containing a conflicting lock owner up to 1024 bytes, enabling a slab out-of-bounds write. The NVD entry says it can be triggered remotely with two cooperating NFSv4.0 clients and summarizes the fix as bounds-checking the encoded response length before copying it into the replay buffer. (nvd.nist.gov)

The connection to bug bounty is the same one as above, but with a more immediate takeaway for source-assisted hunting: old protocol code and replay/serialization logic are exactly the kind of areas where AI’s reading speed can expose rare edge assumptions faster than a human doing everything manually.
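
The fix NVD summarizes, checking the encoded length before copying into a fixed-size buffer, is a pattern worth recognizing in any language. The following is a Python model of that pattern only. It is not the kernel patch, the function name is hypothetical, and what the real code does when a reply does not fit is not described here, so the sketch simply declines to cache it.

```python
REPLAY_BUF_SIZE = 112  # fixed buffer size cited in the NVD description


def cache_reply(replay_buf: bytearray, encoded: bytes) -> bool:
    """Copy an encoded reply into the replay buffer only if it fits.

    Returns True if the reply was cached and False if it was too large.
    """
    if len(encoded) > len(replay_buf):
        return False  # the length check the vulnerable path lacked
    replay_buf[:len(encoded)] = encoded
    return True


buf = bytearray(REPLAY_BUF_SIZE)
print(cache_reply(buf, b"x" * 100))   # fits within 112 bytes
print(cache_reply(buf, b"y" * 1024))  # an owner-sized payload is rejected
```

When reviewing source, the useful question for a model is where else a fixed-size buffer receives data whose maximum length is defined by a different part of the protocol.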

CVE-2026-27825, MCP Atlassian arbitrary file write

The CVE record for CVE-2026-27825 says the MCP Atlassian server accepted a download_path parameter without directory-boundary enforcement in the confluence_download_attachment tool, allowing arbitrary content to be written anywhere the server process could write if an attacker could call the tool and supply or access malicious attachment content. (cve.org)

This matters because the Model Context Protocol and adjacent agent ecosystems are becoming real security targets. For bug bounty hunters, that opens a new class of findings around tool argument validation, filesystem confinement, connector trust, and action routing. The bug is not “AI is unsafe” in the abstract. The bug is that a concrete tool interface crosses a boundary it should not cross.
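
Directory-boundary enforcement for a tool argument like `download_path` usually comes down to resolving the path and checking that it stays under an allowed base. This is a generic Python sketch of that check, not the actual MCP Atlassian fix; the function name `resolve_inside` and the idea of a single allowed base directory are assumptions.

```python
from pathlib import Path


def resolve_inside(base_dir: Path, user_path: str) -> Path:
    """Resolve user_path under base_dir, refusing anything that escapes it."""
    base = base_dir.resolve()
    # Joining with an absolute user_path discards base; resolve() also
    # collapses ".." segments and follows symlinks, so both escapes are caught
    # by the containment check below.
    candidate = (base / user_path).resolve()
    if candidate != base and base not in candidate.parents:
        raise ValueError(f"path escapes allowed directory: {user_path!r}")
    return candidate
```

When testing a tool like this, the minimal probes are a relative traversal (`../x`), an absolute path, and a symlink inside the allowed directory that points outside it, each aimed at a harmless location you control.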

CVE-2026-33946, MCP Ruby SDK SSE hijacking

NVD says CVE-2026-33946 in the MCP Ruby SDK allowed complete hijacking of a victim’s Server-Sent Events stream by an attacker who obtained a valid session ID, and that version 0.9.2 fixed it. (nvd.nist.gov)

That is classic session-binding failure in a modern AI/agent ecosystem. It is relevant because a lot of 2026 bug bounty surface is moving from “web app route does not authorize this object” to “agent transport, connector, or stream layer does not bind authority and state tightly enough.”
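
Session binding means a session ID alone is never enough to attach to a stream; the server also checks who is presenting it. The sketch below models that idea in Python. It does not reproduce the Ruby SDK's code or its fix, and `StreamRegistry`, `open_session`, and `may_attach` are hypothetical names.

```python
import secrets
from typing import Dict


class StreamRegistry:
    """Tracks which authenticated principal opened each stream session."""

    def __init__(self) -> None:
        self._owners: Dict[str, str] = {}

    def open_session(self, principal: str) -> str:
        """Create an unguessable session ID bound to the caller's identity."""
        session_id = secrets.token_urlsafe(32)
        self._owners[session_id] = principal
        return session_id

    def may_attach(self, session_id: str, principal: str) -> bool:
        """Allow attaching only if the session exists and belongs to principal."""
        owner = self._owners.get(session_id)
        return owner is not None and owner == principal


registry = StreamRegistry()
sid = registry.open_session("alice")
print(registry.may_attach(sid, "alice"))    # owner may attach
print(registry.may_attach(sid, "mallory"))  # a leaked ID alone is not enough
```

The corresponding test in a bounty context is narrow: open a stream as one of your own accounts, present that session ID from your second account, and record whether the server attaches the second account to the first account's stream.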

CVE-2026-32916, OpenClaw plugin subagent authorization bypass

NVD says OpenClaw versions before 2026.3.11 contained an authorization bypass in which plugin subagent routes executed gateway methods through a synthetic operator client with broad administrative scopes, allowing remote unauthenticated requests to plugin-owned routes to perform privileged gateway actions. (nvd.nist.gov)

This is a good example of where bug bounty is headed in AI ecosystems: plugin boundaries, synthetic identities, tool routing, approval logic, and runtime authorization assumptions are all fertile ground. If you know how to use AI to map those trust boundaries, you will have an edge.

A practical AI prompt discipline for bug bounty

The prompt is not the product. Still, prompt quality matters because it determines whether the model helps you think or helps you fantasize.

The first rule is to always frame the work as authorized research on a known scope. The second is to ask for classifications and comparisons before you ask for tests. The third is to demand uncertainty labels. The fourth is to ban destructive or state-changing suggestions unless the program explicitly allows them and you intentionally choose them.

Here is a prompt pattern that works well across recon, traffic analysis, and report drafting:

```text
You are assisting with an authorized bug bounty assessment.

Constraints:
- Stay inside the supplied scope
- Prefer non-destructive validation
- Do not claim impact unless the evidence supports it
- Separate confirmed facts, strong hypotheses, and weak hypotheses
- Suggest the minimum additional test needed to confirm or disprove each strong hypothesis

Input:
- Program scope notes
- Captured requests/responses
- Role labels
- Notes on observed behavior

Output:
1. Confirmed facts
2. Strong hypotheses worth testing
3. Weak hypotheses to ignore for now
4. Minimal next tests
5. Evidence I should preserve if a hypothesis is confirmed
```

That prompt shape works because it enforces discipline. It makes the model show its work. It also reduces one of the worst failure modes in AI-assisted hunting: the model jumping straight from “something looks odd” to “critical auth bypass.”

When you move from discovery to writing, change the goal. Do not ask the model whether the bug is high severity. Ask it to reformat your confirmed notes into a report structure and explicitly preserve all uncertainty. That distinction keeps your submission clean.

Reporting with AI without sending slop

The more AI enters bug bounty, the more report quality becomes a real differentiator.

HackerOne says a quality report is clear, detailed, and actionable, and specifically calls for a concise title and detailed reproduction steps. Bugcrowd says the report should explain where the bug was found, who it affects, how to reproduce it, and what proof-of-concept evidence supports the claim. Bugcrowd also strongly recommends illustrative evidence and notes that you cannot edit a submission after reporting it. (HackerOne Help Center)

That is why your raw notes should become a draft before they become a submission. The draft is where AI can be extremely helpful. It can rewrite awkward sequences, remove repetition, tighten the title, convert screenshots into caption placeholders, and make impact language proportional. The final submission is where humans must take responsibility.

A minimal report template that works well in 2026 looks like this:

```markdown
## Summary
A concise description of the issue and the boundary crossed.

## Target
Exact host, route, feature, or component tested.

## Environment
Account role, tenant context, feature flags, and any prerequisites.

## Steps to Reproduce
1. Start from this state
2. Send this request or perform this action
3. Change this specific parameter / identifier / role
4. Observe this exact response or effect

## Observed Result
What happened, with exact evidence.

## Expected Result
What should have happened if authorization or validation were correct.

## Security Impact
Who is affected, what data or action is exposed, and what the realistic attack path is.

## Artifacts
Attached requests/responses, screenshots, logs, PoC video, or minimal script.

## Suggested Fix Direction
Short, technical, and proportional to the issue.
```

The AI-assisted version of that template is not “make this more impressive.” It is “make this easier to validate.” Triagers reward clarity. They do not reward adjectives.
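One way to keep the "easier to validate" goal honest is to treat an empty section as a hard stop before a draft goes anywhere. The following is a minimal sketch, not a platform requirement: the section titles are copied from the template above, while the function names `missing_sections` and `render_report` and the dict-of-notes input shape are assumptions made for illustration.

```python
# Section titles copied from the report template, in order.
SECTIONS = [
    "Summary",
    "Target",
    "Environment",
    "Steps to Reproduce",
    "Observed Result",
    "Expected Result",
    "Security Impact",
    "Artifacts",
    "Suggested Fix Direction",
]


def missing_sections(notes: dict[str, str]) -> list[str]:
    """Return template sections that are absent or blank in the notes."""
    return [s for s in SECTIONS if not notes.get(s, "").strip()]


def render_report(notes: dict[str, str]) -> str:
    """Render confirmed notes into the template; refuse if a section is blank."""
    missing = missing_sections(notes)
    if missing:
        raise ValueError(f"unfilled sections: {', '.join(missing)}")
    parts = [f"## {s}\n\n{notes[s].strip()}" for s in SECTIONS]
    return "\n\n".join(parts) + "\n"
```

The design choice is that the script only assembles text you already confirmed. It adds no wording of its own, so anything a model rewrites afterwards can be diffed against this baseline.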

A good habit is to make the model explicitly label each sentence of your report as one of three things: observed fact, inference from evidence, or contextual explanation. That one step removes a lot of accidental overclaiming.
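The three labels can also be checked mechanically once the model (or you) has assigned them. This is a sketch under stated assumptions: the `Sentence` structure, the `evidence` field, and the rule that every observed fact or inference must point at an artifact are illustrative choices, not something any platform mandates.

```python
from enum import Enum
from typing import NamedTuple


class Label(Enum):
    OBSERVED = "observed fact"
    INFERENCE = "inference from evidence"
    CONTEXT = "contextual explanation"


class Sentence(NamedTuple):
    text: str
    label: Label
    evidence: str = ""  # hypothetical pointer to an artifact, e.g. a file name


def unsupported(sentences: list[Sentence]) -> list[Sentence]:
    """Return observed-fact or inference sentences that cite no artifact."""
    needs_evidence = {Label.OBSERVED, Label.INFERENCE}
    return [
        s for s in sentences
        if s.label in needs_evidence and not s.evidence.strip()
    ]
```

Anything this returns is a sentence to either back with an artifact or soften before submission. Contextual explanation is exempt because it makes no claim about the target's behavior.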

Common ways hunters misuse AI

I see the same six mistakes again and again in AI-assisted bounty work.

The first is using AI as a scope interpreter. This is how people drift into out-of-scope mobile APIs, third-party SaaS integrations, or destructive behavior the program explicitly banned.

The second is using AI as an exploitability oracle. A response difference is not impact. A reflected value is not code execution. A hidden admin button is not broken access control. A timeout is not SSRF. The model will tell a story if you let it.

The third is using AI to generate too many leads too early. Good hunters do not drown in hypotheses. They ruthlessly compress them.

The fourth is pasting scanner output into a model and submitting whatever came back. Bugcrowd’s own researchers describe AI as a way to clean scanner noise, remove duplicates, and suggest verification steps. That is the right posture. AI should reduce slop, not manufacture it. (Bugcrowd)

The fifth is letting long chat threads become the notebook. That is a bad idea because state becomes muddy fast. Keep your source of truth outside the model: raw requests, screenshots, PoC files, and a short written timeline.

The sixth is writing impact in the tone of a marketing deck. Security teams want an exact boundary, exact blast radius, and exact reproduction path. They do not want “catastrophic compromise of the platform ecosystem” unless you can prove it.
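For the fifth mistake in particular, the source of truth can be as simple as an append-only timeline that hashes each artifact as you save it. The sketch below assumes a JSON Lines file and hypothetical function names (`record_artifact`, `load_timeline`); it uses only the Python standard library.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def record_artifact(log_path: Path, artifact: Path, note: str) -> dict:
    """Append one timeline entry, with the artifact's SHA-256, to a JSON Lines log."""
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "artifact": artifact.name,
        "sha256": hashlib.sha256(artifact.read_bytes()).hexdigest(),
        "note": note,
    }
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry


def load_timeline(log_path: Path) -> list[dict]:
    """Read the log back in the order entries were written."""
    with log_path.open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]
```

The hash lets you show later that the request or screenshot you attach is the one you captured at that point in the timeline, regardless of what any chat thread says.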

What this means for program owners and triagers

The other side of AI bug bounty is that program owners need to expect more volume, faster reports, and a wider gap between strong and weak submissions.

HackerOne’s reported 210 percent growth in valid AI vulnerability reports is a sign of that broader shift, not just a data point about one platform. As AI makes research faster, researchers will reach more edges of the product surface. They will also arrive with AI-native bug classes that many security teams are still learning how to triage. (HackerOne)

That means good programs need clearer briefs, tighter scope definitions, more explicit prohibited actions, better examples of acceptable evidence, and better handling for AI-related findings. Google’s public criteria are a good example because they distinguish actual security and abuse issues from ordinary model weirdness. That single distinction prevents a lot of wasted time. (Google Online Security Blog)

It also means internal teams should think carefully about the tooling they use for validation. If you are introducing AI into your own retest or exposure-validation workflows, the key questions are not “does it sound smart” or “does it have agentic branding.” The key questions are whether it preserves state, constrains actions, leaves an audit trail, and outputs evidence that a human can verify. That is one reason the better operator-controlled platforms are emphasizing scope controls, human review, and artifact capture rather than theatrical autonomy. Penligent’s public product and workflow pages point in that direction, which is the right direction for organizations that care about real validation rather than demos. (Penligent)
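To make "constrains actions" and "leaves an audit trail" concrete, here is a minimal sketch of an allow-list gate that an AI-driven tool would have to pass before sending any request. It does not describe any vendor's implementation. The class name `ScopeGate`, its fields, and the glob-pattern host format are assumptions for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from fnmatch import fnmatchcase
from urllib.parse import urlsplit


@dataclass
class ScopeGate:
    """Allow-list check that records every decision, allowed or not."""

    allowed_hosts: list[str]      # lowercase glob patterns, e.g. "*.example.com"
    allowed_methods: set[str]     # uppercase HTTP methods the program permits
    audit: list[dict] = field(default_factory=list)

    def check(self, method: str, url: str) -> bool:
        # URLs without a scheme have no parsed hostname and are denied.
        host = (urlsplit(url).hostname or "").lower()
        method = method.upper()
        allowed = method in self.allowed_methods and any(
            fnmatchcase(host, pattern) for pattern in self.allowed_hosts
        )
        self.audit.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "method": method,
            "url": url,
            "allowed": allowed,
        })
        return allowed
```

Denied attempts are logged as well as permitted ones, because a reviewer usually learns more from what the tool tried to do than from what it was allowed to do.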

What the next year probably looks like

The trendline is clear enough to say a few things with confidence and a few things with caution.

AI will keep getting better at the bounded, ugly parts of bug bounty: traffic triage, code reading, patch understanding, state comparison, and evidence packaging. That is already visible in the AISI and Lyptus capability work, and in how researchers describe their own use of AI in practice. (AI Security Institute)

API authorization testing will remain one of the highest-ROI areas for AI augmentation because it combines repeated structure with high manual comparison cost. AI-native targets will grow as a separate bounty category because programs now explicitly reward issues like prompt injection with victim impact, training-data leakage, model exfiltration, and tool-chain abuse rather than just conventional web flaws wrapped around a chatbot. Google’s public criteria and AI VRP activity make that direction unmistakable. (owasp.org)
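The "repeated structure with high manual comparison cost" in authorization testing is easy to see in a small sketch. It works on responses you have already captured and sends no traffic. The `Capture` structure, the role names, and the "same 2xx status and identical body" heuristic are all assumptions; a match is a hypothesis to verify by hand, not a finding.

```python
from typing import NamedTuple


class Capture(NamedTuple):
    role: str       # hypothetical role names, e.g. "owner" or "other_user"
    endpoint: str   # e.g. "GET /api/orders/1002"
    status: int
    body: str


def authz_candidates(captures: list[Capture], owner: str, other: str) -> list[str]:
    """List endpoints where the non-owner got the owner's successful response."""
    by_key = {(c.role, c.endpoint): c for c in captures}
    hits = []
    for (role, endpoint), own in by_key.items():
        if role != owner:
            continue
        cross = by_key.get((other, endpoint))
        if (
            cross is not None
            and 200 <= own.status < 300
            and cross.status == own.status
            and cross.body == own.body
        ):
            hits.append(endpoint)
    return sorted(hits)
```

A model is useful on either side of this step: proposing which endpoint and identifier pairs to capture, and then explaining why a flagged pair might be legitimately shared data rather than a broken boundary.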

What probably will not change is the value of method. The hunters who win in this environment will not be the ones with the longest prompt libraries. They will be the ones who can build a stateful workflow, preserve evidence, separate facts from hypotheses, and ask the model the narrowest useful question at the right moment.

That is the durable skill.

Final take

AI is already part of bug bounty. The question is no longer whether to use it. The question is whether you will use it with enough discipline to become faster without becoming sloppy.

Used badly, AI makes you test the wrong things faster, overclaim impact faster, and submit worse reports faster. Used well, it compresses the hard middle of the work. It helps you read more code, sort more noise, compare more states, and write cleaner reports while you keep responsibility for scope, proof, and judgment.

That is how to use AI for bug bounty in 2026. Not as a replacement for research skill, but as a force multiplier for a hunter who already knows what a real claim looks like.

Further reading

For the best public data on frontier model capability growth in cyber tasks, start with the UK AI Security Institute’s Frontier AI Trends Report and Lyptus Research’s Offensive Cybersecurity Time Horizons. They are the clearest recent sources on what has actually improved and where the limits of current benchmarking still are. (AI Security Institute)

For concrete real-world signals that AI-assisted offensive work has crossed into public incident reporting, read Anthropic’s disclosure on the AI-orchestrated cyber espionage campaign, the FreeBSD advisory for CVE-2026-4747, and the NVD entry for CVE-2026-31402. (anthropic.com)

For AI-native bug bounty surface, Google’s reward criteria for AI products and its April 2026 post on indirect prompt injection are required reading. They show what a mature program treats as a real AI security issue. (Google Online Security Blog)

For report quality and disclosure discipline, HackerOne’s guidance on quality reports, Bugcrowd’s reporting documentation, and HackerOne’s AI-era safe harbor materials are worth bookmarking. (HackerOne Help Center)

For tool-assisted workflow design from the Penligent side, the most relevant pages are its article on using AI pentest tools for OpenAI bug bounty work, its bug bounty hunter software article, its AI pentest tool workflow article, and the main product page. (Penligent)