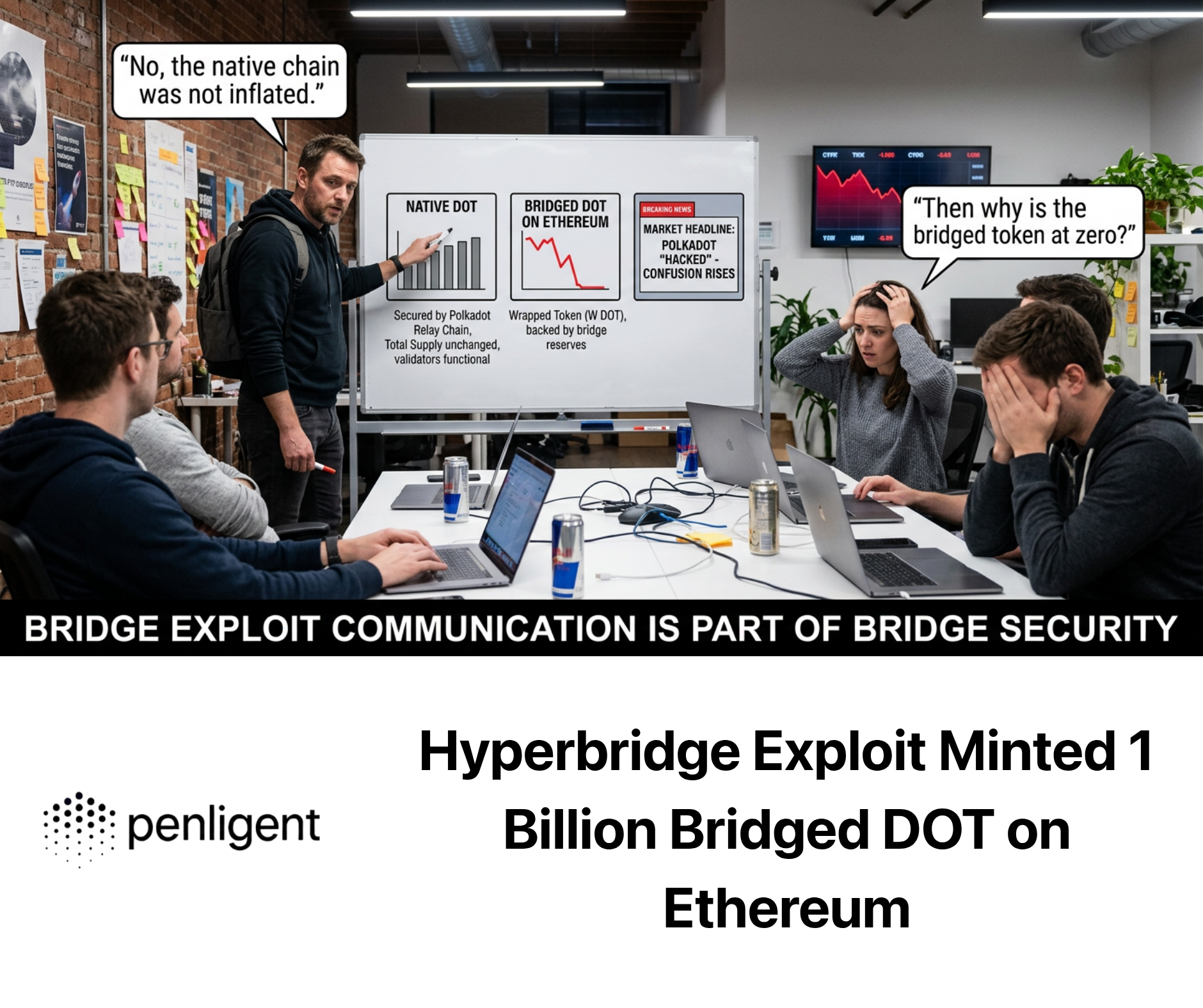

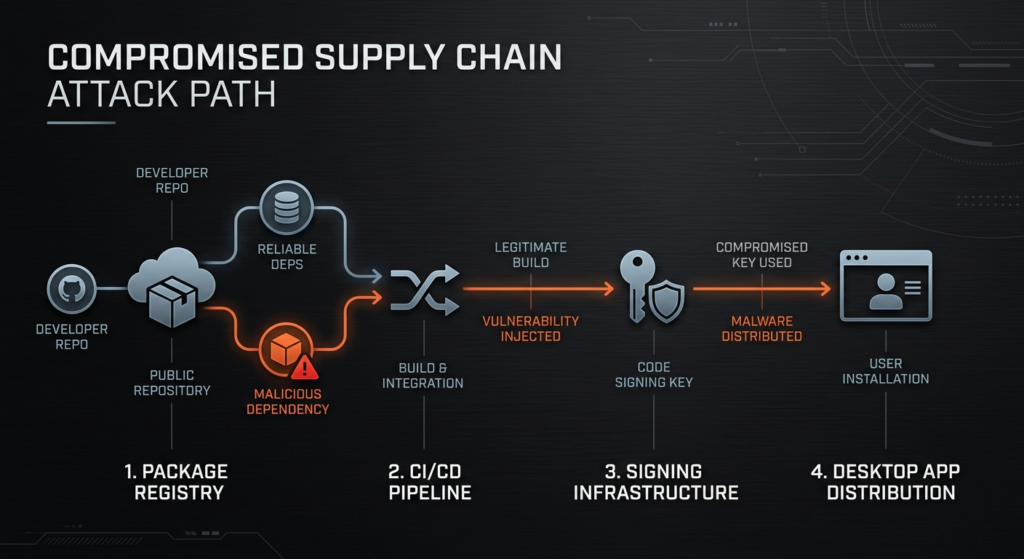

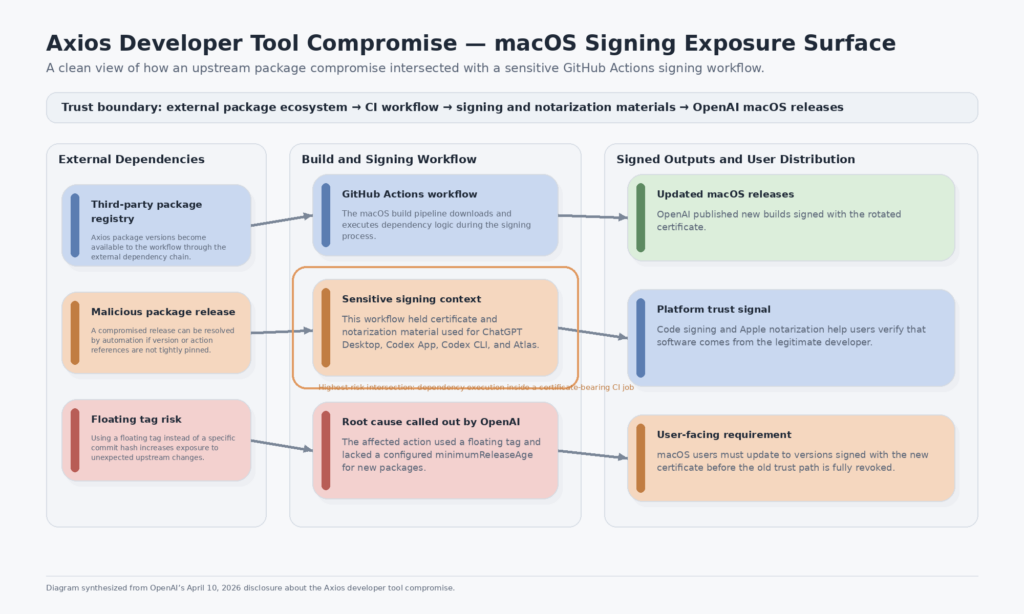

OpenAI’s Axios developer tool compromise notice was easy to misread if you only skimmed the headline. The company did not say attackers had compromised OpenAI user data, taken over published applications, or stolen API keys. It said something more specific and, for software supply chain defenders, more interesting: a malicious Axios version ran inside a GitHub Actions workflow tied to the macOS app-signing process, and that workflow had access to certificate and notarization material used to sign OpenAI’s macOS applications. OpenAI found no evidence that its software had been altered or that the signing certificate had actually been exfiltrated, but it still revoked and rotated that certificate, published new builds, and told macOS users to update. (openai.com)

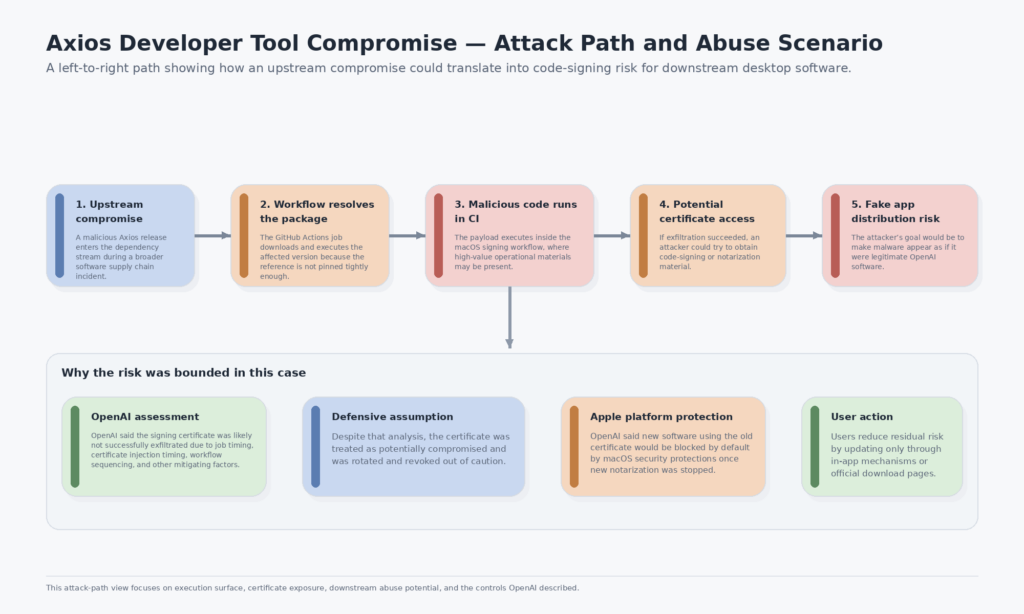

That distinction matters. A normal dependency compromise is already serious because it can expose developer workstations, CI runners, cloud credentials, or long-lived tokens. A dependency compromise that reaches a code-signing workflow is worse because it touches software trust itself. Once malicious code runs in the wrong build stage, the attacker’s ambition no longer stops at stealing secrets. The next objective can become legitimacy: shipping malware that looks like it came from a trusted developer. Apple’s Developer ID and notarization systems exist precisely because users need a way to distinguish legitimate Mac software from tampered or fake installers distributed outside the App Store. (openai.com)

That is why this incident deserves more than a quick news summary. OpenAI’s notice is useful, but it is intentionally scoped. It tells users what happened, what did not happen, and what they should do next. It does not attempt to be a full engineering essay on why a poisoned npm dependency in a signing workflow changes the threat model, how Apple’s trust chain shapes the remediation, or what CI and release teams should change after reading it. Those are the gaps that matter to security engineers, red teamers, appsec leads, release engineers, and anyone who ships desktop software or highly trusted binaries. (openai.com)

The broader incident gives the OpenAI disclosure its real technical meaning. Google Threat Intelligence Group said that between 00:21 and 03:20 UTC on March 31, 2026, an attacker introduced a malicious dependency named plain-crypto-js into Axios versions 1.14.1 and 0.30.4. Google described the dependency as an obfuscated dropper that deployed the WAVESHAPER.V2 backdoor across Windows, macOS, and Linux. Microsoft independently reported that the malicious Axios versions were 1.14.1 and 0.30.4, that they connected to attacker-controlled infrastructure, and that the payload installed a second-stage RAT on affected systems. Google attributed the activity to UNC1069, while Microsoft attributed the infrastructure and compromise to Sapphire Sleet. That difference in naming is normal in threat intelligence; vendors often track the same cluster under different labels. The important part is the shared technical picture: malicious package versions entered the registry, installed code executed on victim systems, and downstream environments were exposed. (Google Cloud)

OpenAI’s disclosure places its own impact inside that wider timeline. The company said that on March 31, 2026 UTC, a GitHub Actions workflow used in the macOS app-signing process downloaded and executed Axios 1.14.1. That workflow had access to the certificate and notarization material used for signing ChatGPT Desktop, Codex App, Codex CLI, and Atlas on macOS. OpenAI also said its internal analysis concluded the signing certificate was likely not successfully exfiltrated, based on the timing of payload execution, the timing of certificate injection into the job, sequencing of the job itself, and other mitigating factors. Even so, it treated the certificate as compromised and rotated it. That combination of statements is exactly what mature incident response looks like when the thing potentially exposed is a trust anchor. (openai.com)

Axios Developer Tool Compromise, What OpenAI Confirmed and What It Did Not

Before digging into supply chain lessons, it is worth pinning down the facts OpenAI actually confirmed. The company said it found no evidence that OpenAI user data had been accessed, that its systems or intellectual property had been compromised, or that its software had been altered. It also said passwords and OpenAI API keys were not affected, that the incident only affected OpenAI macOS apps, and that it did not affect the web versions of OpenAI software or other client platforms such as iOS, Android, Linux, or Windows. That scope is narrower than many readers assumed when the news first spread. (openai.com)

OpenAI also said it had found no evidence that malware had been signed as OpenAI software, and no evidence that the potentially exposed notarization and code-signing material had been misused. It reviewed notarization events tied to the impacted material and said those events were expected. That point is important because defenders should avoid sliding from “a signing workflow was exposed” to “malicious signed binaries are already circulating.” OpenAI did not claim that had happened. It explicitly said the opposite, while still making users move to a new trust path. (openai.com)

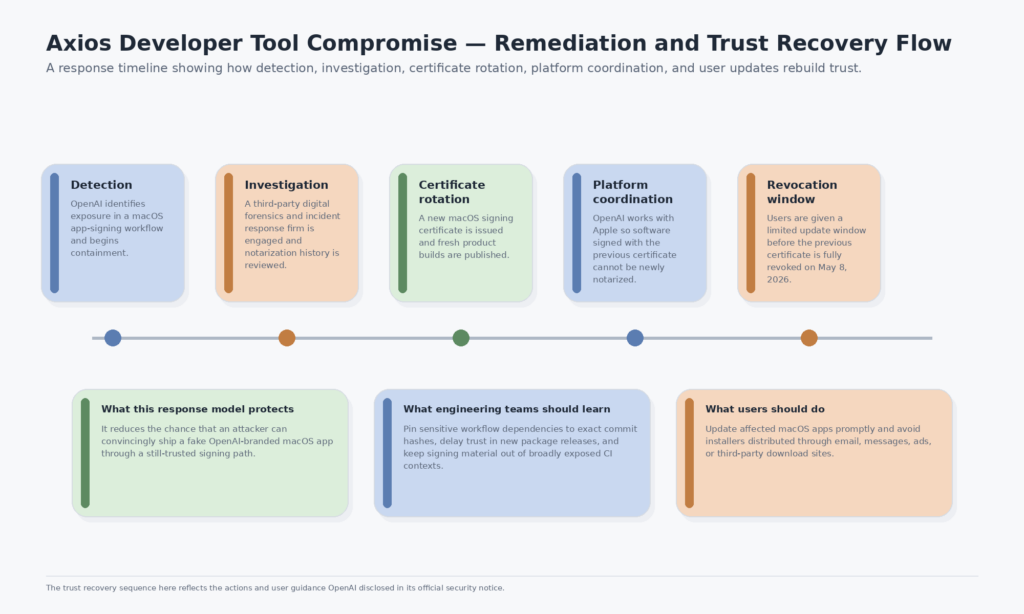

The update requirement was not symbolic. OpenAI listed the earliest macOS releases signed with the updated certificate: ChatGPT Desktop 1.2026.051, Codex App 26.406.40811, Codex CLI 0.119.0, and Atlas 1.2026.84.2. It also said that effective May 8, 2026, older versions of those macOS desktop apps would no longer receive updates or support and might not be functional. That is a classic post-rotation cleanup move: shift users off the old certificate path, narrow the residual attack surface, and align support policy with the new trust boundary. (openai.com)

What OpenAI did not say is equally important. It did not publish forensic details about the exact execution environment, the full workflow YAML, the lifetime of the certificate material, or the precise control that prevented likely exfiltration. That omission is reasonable in a public incident notice. But it means the engineering community has to infer the more general lesson from the combination of OpenAI’s statement, Apple’s trust model, GitHub’s guidance on Actions security, and the broader Axios supply chain reporting. (openai.com)

Axios Supply Chain Attack, What Happened in npm

The OpenAI incident only makes sense when you zoom back out to the npm event. Google Threat Intelligence Group said the attacker compromised the maintainer account associated with Axios, changed the email on the account to an attacker-controlled address, and introduced plain-crypto-js into Axios 1.14.1 and 0.30.4 during a short but dangerous release window on March 31, 2026. Google also said Axios was then one of the most widely used JavaScript libraries for HTTP requests, with the affected lines receiving more than 100 million and 83 million weekly downloads respectively. That scale is why package ecosystem compromises are so attractive: one successful upstream breach can produce enormous downstream reach. (Google Cloud)

Microsoft’s writeup filled in the install-time risk. It said the malicious package versions connected to attacker-controlled C2 to retrieve a second-stage remote access trojan, and that any projects resolving Axios versions higher than ^1.14.0 or ^0.30.0 could connect to that infrastructure during installation and download second-stage malware. Microsoft urged users who had installed Axios 1.14.1 or 0.30.4 to rotate secrets and credentials immediately and downgrade to safe versions. That language is much stronger than a routine dependency advisory because it treats affected hosts as potentially compromised systems, not merely mis-versioned build environments. (microsoft.com)
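The reason a caret range matters can be shown with a small sketch. The helper below is a simplified illustration of npm's caret semantics, not npm's own resolver: it ignores prerelease tags and build metadata, and the function name `caret_allows` is hypothetical. It checks whether a concrete version would be accepted by `^BASE`.

```bash
# Simplified caret-range check (assumption: plain MAJOR.MINOR.PATCH only,
# no prerelease or build metadata). Returns 0 if VERSION satisfies ^BASE.
caret_allows() {
  local base=$1 ver=$2 rest bM bm bp vM vm vp
  bM=${base%%.*}; rest=${base#*.}; bm=${rest%%.*}; bp=${rest#*.}
  vM=${ver%%.*};  rest=${ver#*.};  vm=${rest%%.*}; vp=${rest#*.}

  # Lower bound: VERSION must be >= BASE.
  if [ "$vM" -lt "$bM" ]; then return 1; fi
  if [ "$vM" -eq "$bM" ] && [ "$vm" -lt "$bm" ]; then return 1; fi
  if [ "$vM" -eq "$bM" ] && [ "$vm" -eq "$bm" ] && [ "$vp" -lt "$bp" ]; then return 1; fi

  # Upper bound: the left-most non-zero component of BASE must stay fixed.
  if [ "$bM" -gt 0 ]; then
    [ "$vM" -eq "$bM" ]
  elif [ "$bm" -gt 0 ]; then
    [ "$vM" -eq 0 ] && [ "$vm" -eq "$bm" ]
  else
    [ "$vM" -eq 0 ] && [ "$vm" -eq 0 ] && [ "$vp" -eq "$bp" ]
  fi
}
```

Under these assumptions, `caret_allows 1.14.0 1.14.1` and `caret_allows 0.30.0 0.30.4` both succeed, which is why a fresh install without a lockfile could pick up the malicious releases even though nobody edited `package.json`.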

Google’s IOC and malware detail make the danger concrete. It documented the sfrclak[.]com domain, the associated IP 142.11.206.73, multiple WAVESHAPER.V2 file hashes, and YARA rules aimed at developer workstations, CI or build systems, and other impacted hosts. It described OS-specific behavior, including AppleScript execution and ad-hoc signing on macOS. That is a reminder that install-time package compromise is not an abstract supply chain category. It is executable malware delivery against the environments that fetch and build software. (Google Cloud)

This is where many shallow summaries fail. They stop at “Axios was compromised” and move on. But the more important story is how the compromise lands. In one environment, the malicious package might only reach a single developer laptop with limited secrets. In another, it might run inside a CI job with repository secrets, cloud deploy tokens, package publishing credentials, or signing keys. The package version is the same. The blast radius is not. That difference is exactly why OpenAI’s notice is more than just another downstream victim note. It reveals that a high-trust release stage was in scope. (openai.com)

Google also placed the Axios compromise in a wider pattern. It said UNC6780, also known as TeamPCP, had recently poisoned GitHub Actions and PyPI packages tied to projects such as Trivy, Checkmarx, and LiteLLM, and warned that the growing number of stolen secrets from these campaigns could drive further supply chain attacks, SaaS compromises, ransomware and extortion events, and cryptocurrency theft. That broader context matters because it shows defenders should not treat the Axios event as a weird one-off affecting only JavaScript packages. It is part of an active period in which CI/CD trust, registry trust, and release trust are all being targeted. (Google Cloud)

Why a Signing Workflow Changes the Risk

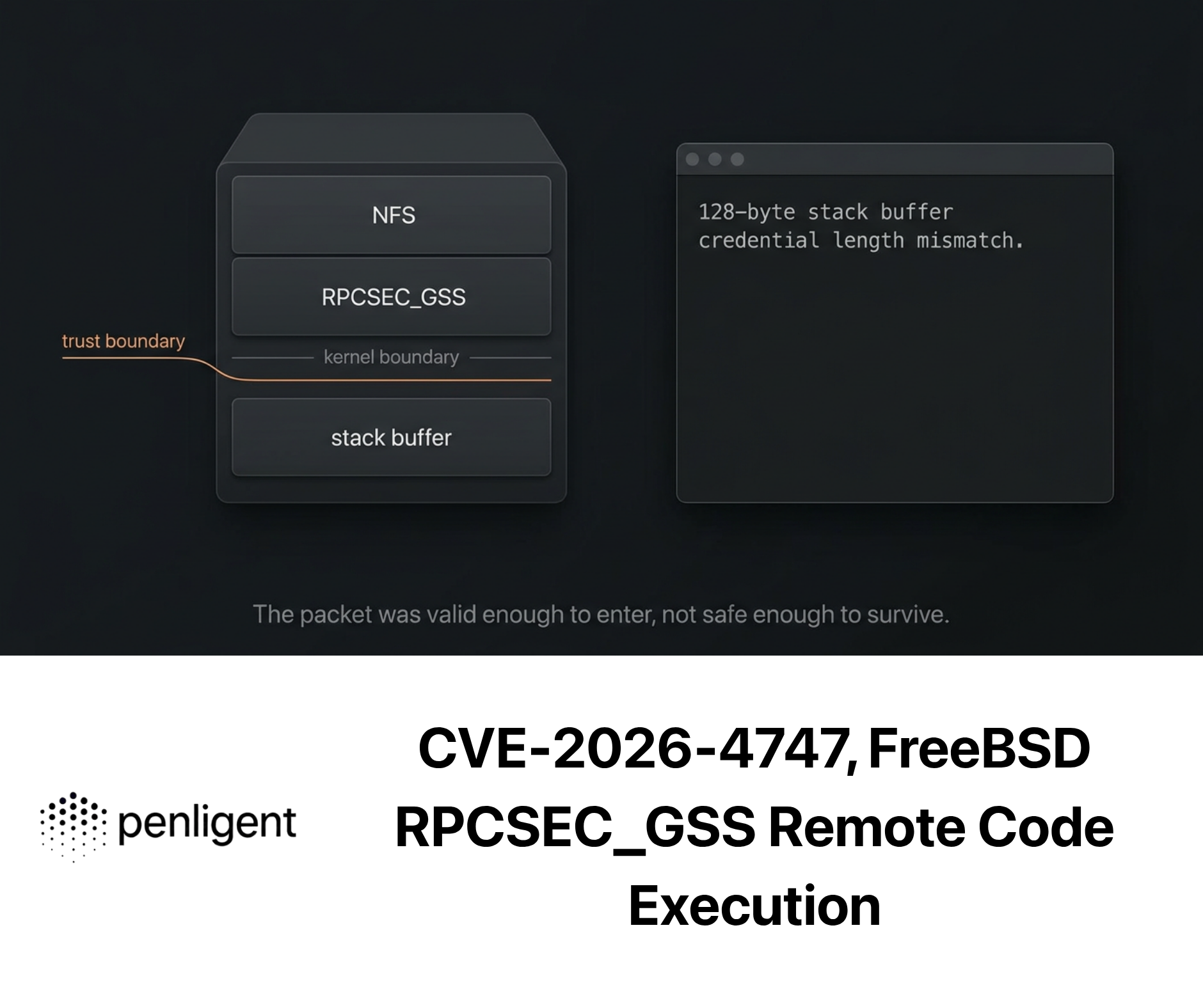

OpenAI’s announcement becomes easier to understand once you separate ordinary build compromise from signing workflow compromise. If malicious package code runs in a low-privilege test job, the attacker may steal whatever secrets that job has or tamper with ephemeral outputs. That is bad, but the damage is bounded by the permissions and secrets of that stage. If the same malicious code runs in a job that signs software, the attacker may be able to steal the material that tells end-user operating systems the software is legitimate. That is not just a CI problem. It is a distribution trust problem. (openai.com)

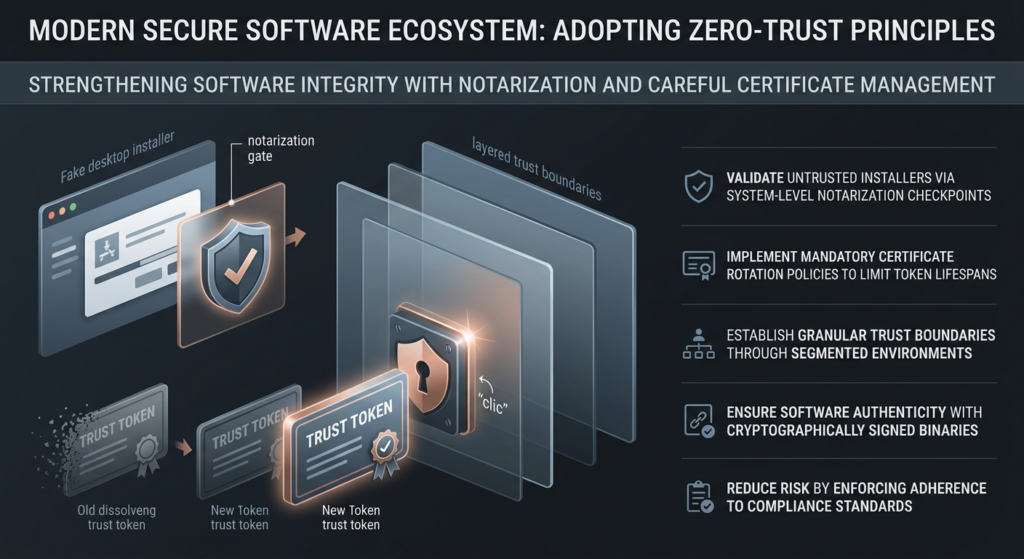

Apple’s Developer ID and notarization model explains the escalation. Apple says that for software distributed outside the Mac App Store, developers use a Developer ID certificate and submit software for notarization. Digitally signing software with a unique Developer ID and including a notarization ticket allows Gatekeeper to verify that the software is not known malware and has not been tampered with. Apple also says Gatekeeper helps protect users from downloading and installing malicious software by checking for a Developer ID certificate from apps distributed outside the Mac App Store. That means the signing path is a security boundary in the user’s trust experience, not just an internal release checklist item. (Apple Developer)

Once malicious code reaches a certificate-bearing workflow, the attacker’s target can shift from immediate host compromise to downstream impersonation. The nightmare outcome is no longer only “the build runner was infected.” It becomes “the attacker can produce malware that looks like a legitimate vendor build.” OpenAI’s own language makes this explicit when it says the update is required to prevent the risk, however unlikely, of someone attempting to distribute a fake app that appears to be from OpenAI. The key word there is appears. Trust is the asset at risk. (openai.com)

That is also why OpenAI’s statement that exfiltration was “likely” unsuccessful does not remove the need for rotation. A leaked cloud token can sometimes be invalidated with narrow blast radius. A potentially exposed signing certificate or notarization path implicates user trust in a vendor’s software distribution. If you are wrong and the certificate did leak, the cost of inaction can be far higher than the cost of a controlled rotation. In software trust incidents, “low probability but high consequence” is often enough to justify aggressive remediation. (openai.com)

This is the same reason defenders should think differently about privileged CI jobs. Signing jobs, package publishing jobs, artifact promotion jobs, release tagging jobs, and deployment jobs are not just another part of the pipeline. They are where trust gets amplified. A harmless misconfiguration in a unit test workflow can stay local. The same misconfiguration in a signing or publishing workflow can become a supply chain event for every downstream user or customer. GitHub’s own security guidance reflects that view when it warns that compromise of a single action in a workflow can be very significant because the action may have access to all repository secrets and may be able to use GITHUB_TOKEN to write to the repository. (GitHub Docs)

Apple Gatekeeper, Developer ID, and Notarization, Why OpenAI Had to Treat This as a Trust Incident

To understand why OpenAI worked with Apple and why the remediation was not limited to internal cleanup, it helps to unpack Apple’s model in plain language. A Developer ID certificate tells macOS that a piece of software distributed outside the App Store came from an identified developer. Notarization adds another layer: the software is submitted to Apple, scanned, and issued a ticket if it passes the process. Apple’s documentation says notarization gives people more confidence that Developer ID-signed software has been checked by Apple for malicious content. Together, Developer ID and notarization create the trust signals many users see as “this looks like real Mac software.” (Apple Developer)

That does not mean signed or notarized software is magically safe forever. It means macOS has a stronger basis for deciding whether the software is from the claimed publisher and whether it passed Apple’s checks at the time of notarization. If the underlying certificate or notarization path is exposed, the defender’s problem becomes preventing any future misuse of that trust material while preserving legitimate user updates long enough to migrate them to a new certificate. OpenAI’s FAQ makes that logic unusually clear. It said it worked to block any further notarization of macOS apps with the impacted material, so that a fraudulent app using the impacted certificate would lack notarization and therefore be blocked by default by macOS security protections unless a user explicitly bypassed them. (openai.com)
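For readers who want to see these trust signals on their own Mac, the following sketch wraps the standard macOS command-line tools `codesign`, `spctl`, and `xcrun stapler`. The function name `verify_mac_app` and the example path in the usage note are assumptions for illustration; this is a local sanity check, not a replacement for installing updates through official channels.

```bash
# Inspect the signature, Gatekeeper verdict, and stapled notarization ticket
# of an app bundle. Only works on macOS (hypothetical helper name).
verify_mac_app() {
  local app=${1:?usage: verify_mac_app /path/to/App.app}

  if ! command -v codesign >/dev/null 2>&1; then
    echo "codesign not found: this check only works on macOS" >&2
    return 2
  fi

  # 1. Is the signature intact, and does the bundle still match it?
  codesign --verify --deep --strict --verbose=2 "$app" || return 1

  # 2. Who signed it? codesign prints details to stderr, hence 2>&1.
  codesign -dv --verbose=4 "$app" 2>&1 | grep -E '^(Authority|TeamIdentifier)='

  # 3. Would Gatekeeper allow it to run?
  spctl --assess --type execute -vv "$app" || return 1

  # 4. Is a notarization ticket stapled to the bundle? A missing staple does
  #    not by itself prove the app was never notarized.
  xcrun stapler validate "$app"
}
```

A call might look like `verify_mac_app /Applications/ChatGPT.app`, assuming the app is installed at that path. The `Authority` and `TeamIdentifier` lines show which Developer ID the bundle was signed with, which is the part of the trust chain a certificate rotation changes.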

That explains two decisions some readers found odd. The first is why OpenAI coordinated with Apple rather than merely rolling new binaries. The answer is that the platform trust system is part of the remediation. The second is why OpenAI did not immediately hard-fail every older Mac build on the same day. The FAQ says immediate revocation could cause legitimate older apps to be blocked during download or first launch, so OpenAI used a time window while monitoring for abuse. In other words, the response balanced platform trust, end-user continuity, and abuse prevention. That is an operational decision shaped by Apple’s distribution model, not by general incident response slogans. (openai.com)

Apple’s certificate guidance also helps explain why rotation matters even when expiration is not the issue. Apple notes that if a Developer ID certificate was valid when an app was compiled, users can still download and run the app after the certificate’s expiration date, but developers need a new certificate to sign updates and new applications. That distinction is not identical to a compromise scenario, but it shows how existing distributed software and future signed updates occupy different trust states. OpenAI’s rotation strategy follows that split: preserve enough continuity to move users, but shift all future trust to new material. (Apple Developer)

A useful way to think about the trust chain is to separate the layers:

| Layer | What it does | Why it mattered here |
|---|---|---|
| Developer ID certificate | Identifies the developer for software distributed outside the App Store | Potential exposure could enable fake software to appear publisher-legitimate |
| Notarization ticket | Signals Apple-scanned software for Gatekeeper | Blocking new notarization with impacted material reduces abuse potential |
| Gatekeeper | Enforces trust checks when users open downloaded Mac software | Limits unsigned or improperly trusted installer execution by default |
| Certificate rotation | Moves future releases to fresh trust material | Prevents future updates from relying on potentially exposed material |
| User update window | Migrates real users to newly signed builds | Reduces disruption while shutting down the old trust path |

The definitions in that table follow Apple’s Developer ID and notarization documentation plus OpenAI’s description of the remediation path. (openai.com)

Why OpenAI Rotated the Certificate Even Without Evidence of Exfiltration

One of the most instructive parts of the OpenAI notice is its risk logic. The company said the signing certificate was likely not successfully exfiltrated because of timing and sequencing factors. Many organizations would stop there, breathe a sigh of relief, and quietly tighten internal controls. OpenAI did the opposite. It made the more conservative assumption and rotated anyway. That is not overreaction. It is the right bias when the potentially exposed asset is a trust anchor whose misuse would affect end users and brand trust at the software distribution layer. (openai.com)

In incident response, the right question is not “What do we hope happened?” but “What do we need to assume to prevent the highest-consequence outcome?” If an attacker might have seen a low-value secret, you can often wait for stronger proof. If an attacker might have seen certificate or notarization material used to assert product legitimacy, waiting is a much riskier gamble. The trust system itself is the thing under uncertainty. That changes the calculus. (openai.com)

OpenAI’s published actions make sense in that frame. It engaged a third-party digital forensics and incident response firm, rotated the macOS code-signing certificate, published new builds of the relevant products, worked with Apple so software signed with the previous certificate could not be newly notarized, and reviewed notarization records tied to the previous certificate. Each action maps to a specific uncertainty: what happened, what may have been exposed, what future software could still be trusted, what already published software needs to be replaced, and whether abuse has already occurred. This is a cleaner response than “we rotated secrets,” because it is layered across investigation, platform coordination, trust migration, and verification. (openai.com)

The timeline below helps show how the event unfolded from registry compromise to trust migration:

| Date | Event | Why it matters |
|---|---|---|
| March 31, 2026 | Malicious Axios versions 1.14.1 and 0.30.4 released into npm with plain-crypto-js | Upstream package compromise begins |
| March 31, 2026 | OpenAI says its macOS signing workflow downloaded and executed Axios 1.14.1 | Downstream release pipeline enters the blast radius |
| After internal analysis | OpenAI concludes exfiltration was likely unsuccessful but treats certificate as compromised anyway | High-consequence asset triggers conservative response |
| April 10, 2026 | OpenAI publishes public notice and update guidance | End-user trust migration begins |
| May 8, 2026 | Old Mac app versions stop receiving updates or support and may stop functioning | Previous trust path is retired |

The dates and product milestones in that table come from OpenAI’s notice, the Google incident report, and Microsoft’s writeup of the malicious Axios releases. (openai.com)

OpenAI Users and Axios Users Are Not the Same Incident Response Audience

A major source of confusion after the disclosure was the tendency to treat every reader as if they had the same exposure. They do not. There are at least two materially different audiences.

The first audience is the ordinary OpenAI macOS user. For that person, the correct reading of the notice is simple: update to the current app through in-app update or official download links, avoid third-party installers, and do not assume your account credentials were compromised just because a security notice mentioned a code-signing workflow. OpenAI explicitly says passwords and API keys were not affected, that the issue only affected macOS apps, and that users should avoid installers from email, messages, ads, file-sharing links, or third-party download sites. (openai.com)

The second audience is the development or security team that may have installed or built against the malicious Axios versions in its own environment. That team must respond much more aggressively. Microsoft’s guidance is to rotate secrets and credentials immediately and downgrade to safe versions. Google’s guidance goes further by treating affected hosts as potentially compromised, recommending dependency tree audits, CI/CD deployment pauses for affected packages, host isolation, secret rotation, and IOC hunting against the attacker domain, IPs, hashes, and YARA patterns. That is very different from the OpenAI end-user path. (microsoft.com)

A simple matrix makes the split clearer:

| Audience | What is confirmed | Immediate action | What not to assume |
|---|---|---|---|
| OpenAI macOS app users | Exposure occurred in OpenAI’s signing workflow, but OpenAI found no evidence of user-data compromise or signed malware misuse | Update to the newly signed versions using official channels | Do not assume your OpenAI password or API key was stolen |
| Teams that installed Axios 1.14.1 or 0.30.4 | Malicious packages fetched second-stage malware on affected hosts | Treat hosts as suspect, rotate secrets, downgrade, hunt IOCs, verify CI history | Do not treat this as a routine semver bugfix mistake |
| Release and CI engineers | High-value workflows amplify blast radius if untrusted code executes inside them | Re-examine signing, publishing, and deployment jobs as trust boundaries | Do not assume “CI compromise” and “customer trust compromise” are separate categories |

The table above is drawn from OpenAI’s FAQ and the public Google and Microsoft incident guidance. (openai.com)

How to Audit Your Environment for the Axios Supply Chain Attack

If your organization uses JavaScript or Node build systems, the first priority is evidence gathering, not arguments about how likely it is that your exact project path was hit. You want to answer four questions fast. Did any lockfile or dependency tree resolve Axios 1.14.1 or 0.30.4? Did any environment contain plain-crypto-js? Did any build or workstation communicate with the known attacker infrastructure? And did any high-value CI job run during the relevant time window with secrets, signing material, or publish credentials in scope? Those questions map directly to the public remediation guidance from Google and Microsoft. (microsoft.com)

Start with repository and lockfile searches. This is not enough to clear a host, but it is a fast way to identify projects that need deeper review.

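    # Matches exact pins in package.json plus lockfile entries and tarball URLs
    # (for example axios@1.14.1 or axios-1.14.1.tgz) in text lockfiles.
    rg -n -g '!node_modules' \
      -e 'axios(@npm:|@|-)(1\.14\.1|0\.30\.4)\b' \
      -e '"axios"\s*:\s*"(1\.14\.1|0\.30\.4)"' \
      -e 'plain-crypto-js' .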

That search is aimed at the versions and malicious dependency publicly identified by Google and Microsoft. If it hits, the next question is not merely “Can we update?” but “Where was this resolved and executed?” because the same version string has very different consequences on a disposable laptop sandbox versus a privileged build job. (microsoft.com)

Then inspect the resolved dependency tree in environments that are still available.

    npm ls axios plain-crypto-js || true
    pnpm why axios || true
    bun why axios || true

These commands help establish whether Axios was present directly or transitively. They do not prove historical installation state if the environment has already changed, and they will not save you if the relevant runner or workstation was ephemeral and has already been destroyed. But they are still useful for triage and scoping. Bun’s dependency tooling and pnpm’s lockfile-centric model are also a reminder that package manager choice affects how easy it is to reason about past installs after an incident. (bun.com)

For lockfiles, jq can be helpful on package-lock.json when you want structured output instead of regex matches.

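    # Requires a lockfile with a top-level "packages" map (lockfileVersion 2 or 3)
    jq -r '
      .packages // {} | to_entries[]
      | select(.key | test("(^|/)node_modules/axios$"))
      | select(.value.version == "1.14.1" or .value.version == "0.30.4")
      | "\(.key)\t\(.value.version)"
    ' package-lock.json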

This is not universal across every lockfile format, and it says nothing about the presence of plain-crypto-js if the package was later garbage-collected from your environment. But for repositories that keep lockfiles under version control, it can quickly tell you whether a project pinned or resolved one of the malicious versions. Google specifically recommended auditing dependency trees and inspecting for plain-crypto-js. (Google Cloud)

After package-level scoping, move to network and execution evidence. Google published the domain sfrclak[.]com, the C2 IP 142.11.206.73, and multiple file indicators. If you have EDR, DNS logs, proxy logs, network telemetry from build runners, or shell history from developer systems, search for those indicators immediately.

    # Example shell-history triage on Linux or macOS
    grep -nE 'sfrclak\.com|142\.11\.206\.73' ~/.zsh_history ~/.bash_history 2>/dev/null

    # Example local artifact scan
    find /tmp /var/tmp /Library/Caches -type f 2>/dev/null | grep -E 'com\.apple\.act\.mond|plain-crypto-js'

Those examples are intentionally simple. Real enterprises should prefer EDR, centralized logs, and forensic collection over ad hoc shell greps. The important point is that Google explicitly provided IOC and YARA material, which means teams do not need to invent a detection strategy from scratch. (Google Cloud)
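Where logs have been exported to disk (proxy logs, DNS logs, archived CI job output), the same indicators can be swept across a whole directory. The sketch below is a minimal example under that assumption; the function name `scan_for_iocs` is hypothetical, and the pattern contains only the domain, IP, and package name mentioned above, not the full IOC set Google published.

```bash
# Indicators taken from the public reporting cited in this article.
IOC_PATTERN='sfrclak\.com|142\.11\.206\.73|plain-crypto-js'

# List every text file under DIR that mentions at least one indicator.
# -r recurse, -I skip binary files, -l print file names only, -E extended regex.
scan_for_iocs() {
  local dir=${1:?usage: scan_for_iocs DIR}
  grep -rIlE -- "$IOC_PATTERN" "$dir" 2>/dev/null | sort
}
```

Running `scan_for_iocs /path/to/exported-logs` prints one file path per hit. An empty result only means these three strings were not found in the files scanned; it does not clear a host.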

If any build runner, developer host, or signing machine resolved the malicious versions, the safest default is to treat that host as suspect and rotate the secrets that were present there. Microsoft explicitly advised immediate secret and credential rotation for users who installed the malicious Axios versions. Google similarly advised that if plain-crypto-js is detected, defenders should assume the environment is compromised, revert to a known-good state, and rotate all credentials or secrets present on that machine. That is the right posture because the public reporting describes a second-stage RAT, not a harmless telemetry beacon. (microsoft.com)

If you are responsible for CI, add one more layer of review: reconstruct the exact high-value workflows that ran during the exposure window. Which jobs could publish packages, sign binaries, create releases, upload artifacts, or deploy to production? Which of those jobs pulled fresh dependencies instead of consuming locked artifacts? Which of them used long-lived cloud credentials, certificate materials, or human-scoped tokens? The OpenAI case shows why this matters. A poisoned package in a generic test workflow and the same poisoned package in a signing workflow are different incidents even when the registry compromise is identical. (openai.com)
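A rough first pass over those questions can be automated. The sketch below flags workflow files that both reference repository secrets and run a package install, which is a crude proxy for "secrets and fresh third-party code in the same place". It is a file-level heuristic under stated assumptions: the function name `find_risky_workflows` is hypothetical, it does not understand job boundaries, and it will miss installs hidden inside scripts or third-party actions.

```bash
# Print workflow files that reference ${{ secrets.* }} AND run a package install.
find_risky_workflows() {
  local dir=${1:-.github/workflows} f
  for f in "$dir"/*.yml "$dir"/*.yaml; do
    [ -f "$f" ] || continue
    if grep -qE '\$\{\{[[:space:]]*secrets\.' "$f" \
       && grep -qE '(npm (ci|install)|pnpm install|yarn install|bun install)' "$f"; then
      printf '%s\n' "$f"
    fi
  done
}
```

Called with no arguments from a repository root, it scans `.github/workflows`. Each file it prints is a candidate for manual review against the exposure window, not a confirmed finding.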

Hardening GitHub Actions After the Axios Developer Tool Compromise

GitHub’s own Actions security guidance is unusually direct on the first control that matters here: pin actions to a full-length commit SHA. GitHub says this is currently the only way to use an action as an immutable release, and that pinning helps mitigate the risk of a bad actor adding a backdoor to the action’s repository. It also warns that compromise of a single action inside a workflow can be very significant because the action may gain access to repository secrets and GITHUB_TOKEN. OpenAI’s public root-cause summary lines up with that guidance, because it says the affected action used a floating tag instead of a specific commit hash. (GitHub Docs)

That point is easy to repeat and easy to under-implement. Many teams pin only the most obvious third-party actions and ignore reusable workflows, setup helpers, release helpers, or internal wrappers that transitively fetch external code. GitHub’s docs make the broader model clear: if you reference reusable workflows by a commit SHA, reuse becomes stable and reviewable; if you reference a tag or branch, you are trusting that mutable reference over time. In security terms, that means tags and branches are not just versioning conveniences. They are supply chain boundaries. (GitHub Docs)
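Finding the references that are still mutable is a mechanical task. The following sketch lists every `uses:` line that is not pinned to a 40-character commit SHA, skipping local (`./`) and `docker://` references. The function name `find_unpinned_actions` is hypothetical, and the check is purely textual: it cannot tell whether a pinned SHA is the commit you intended, and it does not follow what a pinned action fetches at runtime.

```bash
# List "uses:" references that are not pinned to a full-length commit SHA.
# Output format is grep's file:line:content. Exit status is non-zero when
# nothing is flagged.
find_unpinned_actions() {
  local dir=${1:-.github/workflows}
  grep -rnE '^[[:space:]]*(-[[:space:]]*)?uses:[[:space:]]*[^[:space:]#]+' "$dir" 2>/dev/null \
    | grep -vE 'uses:[[:space:]]*(\./|docker://)' \
    | grep -vE '@[0-9a-f]{40}([^0-9a-f]|$)'
}
```

A line such as `uses: actions/checkout@v4` is reported, while the same action pinned to a 40-character hash is not. Running this in CI as a failing check is one way to keep floating tags from creeping back into release workflows.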

A hardened signing or release workflow should look more like this:

    name: macos-release

    on:
      workflow_dispatch:
      push:
        tags:
          - "v*"

    # Default for every job: read-only token, no OIDC
    permissions:
      contents: read

    jobs:
      build:
        runs-on: macos-latest
        steps:
          - uses: actions/checkout@8ade135a41bc03ea155e62e844d188df1ea18608
          - uses: actions/setup-node@60edb5dd545a775178f52524783378180af0d1f8
            with:
              node-version: "22"
          - run: npm ci

      sign-and-release:
        needs: build
        runs-on: macos-latest
        environment: production-release
        # Only the signing job may request an OIDC token
        permissions:
          contents: read
          id-token: write
        steps:
          - uses: actions/checkout@8ade135a41bc03ea155e62e844d188df1ea18608
          - name: Authenticate to cloud KMS with OIDC
            run: ./scripts/oidc-login.sh
          - name: Sign artifact
            run: ./scripts/sign-macos.sh
          - name: Publish release
            run: ./scripts/release.sh

This example is illustrative, not a drop-in production workflow. The design choices are the point: full-length commit SHAs, reduced token permissions, a separate release environment, and OIDC instead of long-lived cloud credentials where possible. GitHub explicitly recommends SHA pinning and also recommends OIDC so workflows can stop storing long-lived cloud secrets. (GitHub Docs)

OIDC matters because every long-lived secret present in a privileged CI job increases the value of that job to an attacker. GitHub’s documentation says OIDC allows workflows to access cloud resources without having to store long-lived cloud secrets in GitHub, and says this provides security benefits. That does not make a compromised workflow harmless. A short-lived token minted for the job can still be abused during its lifetime. But it does reduce the value of stealing secrets from the environment compared with harvesting broad, durable credentials that remain valid long after the workflow ends. (GitHub Docs)

Runner choice matters too. GitHub’s secure-use guidance says GitHub-hosted runners execute in ephemeral, clean, isolated virtual machines, while self-hosted runners do not have those guarantees and can be persistently compromised by untrusted code in a workflow. That warning becomes much more important once you start discussing package compromises and poisoned actions. A malicious dependency running on a self-hosted runner may inherit residue from earlier jobs, local caches, attached infrastructure, or internal network reach that simply would not exist on a fresh hosted runner. (GitHub Docs)

The higher the privilege of the job, the more valuable it is to split roles and shrink exposure windows. Signing material should not sit around in broad workflow contexts that also fetch fresh third-party code. Release jobs should be separated from general build jobs when possible. Environment approvals and protected environments should be used for workflows that can deploy or sign. GITHUB_TOKEN permissions should be set explicitly rather than left broad by default. None of these controls would make malicious Axios packages disappear, but together they reduce what a successful compromise can actually touch. GitHub’s security model is built around exactly that principle: assume an action can be compromised, then reduce the permissions and secrets available to it. (GitHub Docs)

Minimum Release Age, Why OpenAI’s Root-Cause Note Matters

One small phrase in OpenAI’s notice deserves more attention than it got. The company said the affected action lacked a configured minimumReleaseAge for new packages. That sounds like a minor package-manager tuning issue until you understand the security idea behind it.

The basic logic is simple. Many malicious package releases are caught quickly after publication. If your installation logic refuses to consume packages that were published only minutes or hours ago, you give the ecosystem time to detect, report, and remove obvious malicious uploads before they enter your environment. pnpm’s supply chain security guidance says delaying updates by 24 hours will most likely prevent you from installing a bad version, and defines minimumReleaseAge as the number of minutes that must pass after a version is published before pnpm will install it. Bun uses nearly identical language, saying you can configure a minimum age requirement so recently published package versions are filtered out during installation. (pnpm.io)

This control is not magic. It is a time buffer, not a provenance guarantee. A patient attacker can wait out the window. A sophisticated supply chain attack can target a dependency update cadence or maintain malicious logic long enough to survive the cooldown. And if you rely on emergency hotfixes from certain vendors, a blanket age gate may be too blunt. But for the exact class of event represented by malicious rapid-fire package publication, it is a meaningful friction layer. OpenAI’s mention of it suggests the company sees release timing as part of the defense surface, not just version pinning. (openai.com)

Here is what that looks like in practice with pnpm:

    # pnpm-workspace.yaml
    minimumReleaseAge: 1440
    minimumReleaseAgeExclude:
      - typescript
      - webpack

pnpm says a minimumReleaseAge of 1440 waits one day before installing a newly published version, and its settings documentation also supports exclusions for packages that need faster uptake. That makes the control usable in real environments rather than purely academic. (pnpm.io)

And here is the same idea with Bun:

    # bunfig.toml
    [install]
    minimumReleaseAge = 259200
    minimumReleaseAgeExcludes = ["@types/node", "typescript"]

Bun’s documentation says the value is in seconds, that all direct and transitive dependencies are filtered during new resolution, and that recently published packages can be excluded selectively. That is a concrete example of a package manager absorbing supply chain lessons into install-time behavior. (bun.com)

The bigger point is that “don’t use latest” is not enough. Plenty of teams already pin semver ranges but still allow lockfile refreshes or CI installs to pull the newest matching release on a routine cadence. Minimum release age acts as a second question after version matching: even if a version is syntactically acceptable, is it old enough to trust yet? That is a subtle but important shift in package hygiene. (pnpm.io)
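The "second question after version matching" can be sketched in a few lines of Python. This is an illustration of the gating idea only, not how pnpm or Bun implement it; the function names, the `(version, published_at)` tuple shape, and the sample package data are all assumptions made for the example.

```python
from datetime import datetime, timedelta, timezone


def old_enough(published_at: datetime, now: datetime, min_age_minutes: int) -> bool:
    """Return True when a version has been public for at least min_age_minutes."""
    return now - published_at >= timedelta(minutes=min_age_minutes)


def select_version(candidates, now, min_age_minutes, exclude=frozenset(), package=""):
    """Pick the newest candidate that passes the age gate, or None.

    candidates: list of (version, published_at) tuples that are assumed to
    already satisfy the semver range. Packages named in `exclude` skip the gate.
    """
    eligible = [
        (version, published_at)
        for version, published_at in candidates
        if package in exclude or old_enough(published_at, now, min_age_minutes)
    ]
    if not eligible:
        return None
    # Newest by publish time among the versions that survived the gate.
    return max(eligible, key=lambda item: item[1])[0]


# Hypothetical data: one settled release and one published 30 minutes ago.
now = datetime(2026, 1, 10, 12, 0, tzinfo=timezone.utc)
candidates = [
    ("1.0.0", now - timedelta(days=10)),
    ("1.0.1", now - timedelta(minutes=30)),
]
print(select_version(candidates, now, min_age_minutes=1440))  # prints 1.0.0
```

With a one-day gate, the fresh `1.0.1` is skipped even though it matches the range. Listing the package in `exclude` restores the usual "newest match wins" behavior, which is the trade-off the exclusion lists in pnpm and Bun expose.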

Immutable Releases and Artifact Attestations, Publisher-Side Controls That Matter More Than Most Teams Think

Consumer-side controls like full-SHA pinning and release-age gating are only half of the picture. Publisher-side controls matter too, especially in ecosystems where tags, release assets, or build outputs are a normal trust surface. GitHub’s immutable releases feature says that once a release is marked immutable, its assets cannot be added, modified, or deleted, and tags for new immutable releases are protected and cannot be moved. GitHub explicitly says this protects distributed artifacts from supply chain attacks. (The GitHub Blog)

That matters because mutable tags have repeatedly shown up in real incidents. If consumers trust v1, v2, or other moving tags, and a maintainer account or release process is compromised, those references can become an attack delivery mechanism. Immutable releases attack that problem from the publisher’s side by reducing the ability to rewrite release history after publication. They do not solve every compromise scenario, but they change the attacker’s operating room. (The GitHub Blog)

Artifact attestations address a different but related gap. GitHub says artifact attestations create a verifiable way to link software artifacts back to source code and build instructions. In practice, that means downstream consumers and internal release teams can ask not only “Did I download the same bytes?” but also “Can I verify where these bytes came from and how they were built?” GitHub’s release attestation support and verification commands make this increasingly practical rather than theoretical. (The GitHub Blog)

For teams shipping desktop clients, CLIs, GitHub Actions, or other highly trusted artifacts, these controls are not just open source hygiene theater. They are the next layer after pinning. Pinning says “consume an immutable reference.” Immutable releases say “publish immutable references.” Attestations say “prove that the artifact came from the declared build.” When you combine those controls with Apple’s code-signing and notarization model, you start to get a much stronger end-to-end trust story. (The GitHub Blog)

Here is a minimal example of the verification side using GitHub’s release verification tooling:

    gh release verify v1.2.3
    gh release verify-asset v1.2.3 myapp-macos-arm64.zip

GitHub’s release documentation says immutable releases receive signed attestations and can be verified with GitHub CLI. That does not replace your own signing pipeline, but it adds another check between build output and downstream trust. (The GitHub Blog)

The CVEs That Explain the Bigger Pattern

The Axios incident was not filed as a neat product CVE in the same way defenders are used to seeing ordinary vulnerabilities. But if you want to understand its security shape, two recent CVEs are highly relevant.

The first is CVE-2025-30066, the tj-actions/changed-files GitHub Actions supply chain compromise. NVD says the issue allowed remote attackers to discover secrets by reading action logs, and explicitly notes that tags v1 through v45.0.7 were affected because they were modified to point to a malicious commit. This is directly relevant to the Axios and OpenAI story because it demonstrates the security cost of trusting mutable references in CI. The mechanism is different, but the lesson is the same: if your workflow consumes third-party automation through references that can move, your CI is trusting mutable code. (nvd.nist.gov)

The exploitation condition in CVE-2025-30066 also maps cleanly to CI hardening. The dangerous part was not a traditional memory corruption bug or remotely reachable application flaw. It was the ability to redirect trusted workflow references to malicious code that could expose secrets in logs. That is why full-SHA pinning shows up so often in GitHub’s guidance and why it should be treated as baseline rather than best-effort. If a tag can move, the trust relationship is softer than many teams realize. (nvd.nist.gov)
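To make "baseline rather than best-effort" concrete, here is a minimal sketch of a check that flags `uses:` references not pinned to a full 40-character commit SHA. The function name, the regular expressions, and the sample workflow (including `example-org/example-action` and its SHA) are hypothetical. It scans text line by line instead of parsing YAML, so it is a starting point for a lint rule, not a complete one.

```python
import re

# Matches `uses: owner/repo[/path]@ref`, with an optional leading "- ".
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^\s'\"#]+)", re.MULTILINE)
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return `uses:` references that are not pinned to a full commit SHA."""
    findings = []
    for ref in USES_RE.findall(workflow_text):
        # Local actions and docker:// images follow different rules; skip them here.
        if ref.startswith("./") or ref.startswith("docker://"):
            continue
        _, _, version = ref.partition("@")
        if not FULL_SHA_RE.match(version):
            findings.append(ref)
    return findings


sample = """
jobs:
  build:
    steps:
      - uses: actions/checkout@v4
      - uses: example-org/example-action@0123456789abcdef0123456789abcdef01234567  # v1.2.3
      - name: local helper
        uses: ./.github/actions/local
"""
print(unpinned_actions(sample))  # prints ['actions/checkout@v4']
```

The tag-pinned reference is reported, while the SHA-pinned and local references pass. Keeping the human-readable version in a trailing comment, as in the sample, preserves readability without making the tag part of the trust decision.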

The second is CVE-2024-3094, the XZ Utils backdoor. NVD says malicious code was discovered in upstream tarballs beginning with version 5.6.0, and that through a complex build-time process the backdoor extracted a prebuilt object file from disguised test data and modified library behavior during build. The lesson was not merely “xz had a bug.” The lesson was that the artifact supply chain and build path had been compromised. (nvd.nist.gov)

That is why CVE-2024-3094 belongs in the same conversation as the Axios developer tool compromise. In both cases, the attacker abused trust in software distribution and build material rather than attacking the final application from the outside. In XZ, the malicious logic rode in release material and activated during build. In Axios, the malicious logic rode in package registry distribution and activated during installation. In OpenAI’s downstream case, that install-time execution reached a signing workflow. Different mechanics, same strategic truth: trusted build and distribution paths are privileged attack surfaces. (nvd.nist.gov)

A comparison table is useful here:

| Incident | Attack position | Why it matters to this story | Key mitigation theme |
|---|---|---|---|
| CVE-2025-30066 | GitHub Actions reference trust and log exposure | Shows how mutable action references can turn CI into a secret leakage path | Full-SHA pinning, token minimization, workflow review |
| CVE-2024-3094 | Upstream artifact and build-path compromise | Shows that trusted release material can be malicious even when the source repo story looks normal | Provenance checks, release verification, build trust segregation |
| Axios 2026 compromise | Registry package compromise with install-time malware | Shows how poisoned packages can reach developer hosts, CI, and signing workflows | Safe version pinning, release-age gating, host rotation, high-value workflow isolation |

The comparison is based on NVD’s CVE descriptions, GitHub’s Actions security guidance, and the public reporting on the Axios incident. (nvd.nist.gov)

What these incidents share is more important than what makes them different. They all exploit the fact that modern software trust is not one control. It is a chain of assumptions across registries, maintainers, package resolution, reusable workflows, release references, build systems, signing systems, and endpoint validation. If one link is both trusted and weakly controlled, attackers do not need a classic software exploit. They can ride the normal development path. (nvd.nist.gov)

The Practical Hardening Model After an Incident Like This

After a supply chain event, teams often focus first on eradication and secret rotation, which is necessary. But the deeper engineering win comes from changing how trust is distributed in the pipeline.

The first design principle is to treat high-value workflows differently from ordinary build jobs. If a job signs code, notarizes software, publishes packages, uploads release assets, or deploys production services, it should not also be the place where the environment casually resolves fresh third-party code under broad permissions. That principle follows directly from the OpenAI incident and from GitHub’s own warning about the significance of a compromised action inside a secret-bearing workflow. (openai.com)

The second principle is to assume that mutable references are not security boundaries. Tags are useful for humans. They are not enough for privileged automation. GitHub says full-length commit SHA pinning is the only immutable release model for actions today, and GitHub’s own release immutability work is essentially an admission that the ecosystem needs stronger defaults on the publishing side as well. If your release security still depends on “nobody will ever move this tag,” you are trusting a policy story more than a technical control. (GitHub Docs)

The third principle is to reduce secret value inside CI. GitHub’s OIDC documentation is important not because it solves supply chain compromise, but because it reduces the long-tail damage if compromise happens. Replacing long-lived cloud secrets with short-lived federated tokens does not make a stolen credential safe. It makes it less durable, less reusable, and easier to scope tightly to the workflow context. In high-trust jobs, that is a major improvement. (GitHub Docs)

The fourth principle is to shorten trust in newly published packages. Minimum release age is one concrete example. Internal registries with approval or quarantine are another. Safe lockfile workflows are another. The point is not to create bureaucracy for its own sake. The point is that a surprising number of real package compromises rely on the victim trusting a new release immediately. Time can be a security control if you use it intentionally. (pnpm.io)

The fifth principle is to verify distributed artifacts, not just source code. GitHub’s artifact attestation and immutable release work, Apple’s notarization process, and standard package-lock and provenance controls are all responses to the same structural problem: source repositories, release assets, package registry versions, and final binaries do not always tell the same story unless you force them to. That is the heart of modern supply chain defense. (The GitHub Blog)

The Post-Incident Verification Problem

A lot of incident writeups end once the package is downgraded and the secrets are rotated. That is understandable, but incomplete. Security teams do not just need remediation. They need evidence that the dangerous path is gone.

In the OpenAI case, the post-incident questions were obvious. Are all active macOS apps now signed with the new certificate? Can the old certificate still be used for new notarization? Have expected notarization events been reviewed? Are users being pushed only through legitimate update paths? Are there fake installers exploiting the confusion window? OpenAI’s public notice answers some of those questions at a high level, but they are exactly the kinds of questions every affected organization should be asking about its own trust surfaces after a pipeline compromise. (openai.com)

That is why post-incident validation is a different discipline from static review. After a supply chain event, teams often need to verify not just that a vulnerable version is gone from source control, but that the unsafe execution path is gone in practice, that old release routes cannot be abused, that external download and update surfaces are behaving as expected, and that the evidence is strong enough to satisfy internal stakeholders, auditors, and customers. That work usually involves adversarial retesting rather than passive inventory alone. (GitHub Docs)

This is one of the few places where a small mention of Penligent is natural rather than forced. Penligent’s public homepage frames its platform around verified attack chains, evidence-first results, reproducible findings, and exportable reporting for security engineers and red teams. Its own supply chain writing also focuses on the same practical controls exposed by these incidents, including immutable references, overpowered CI tokens, and the difference between a normal finding and a verified impact path. In other words, the value in this context is not “AI summarizing a CVE.” It is using automation to retest risky external or workflow-adjacent paths and produce evidence that the dangerous route no longer reproduces. (penligent.ai)

That is the larger lesson from this event. A supply chain incident is not fully closed when the bad package disappears. It is closed when the organization can show that the trust boundary that failed has been rebuilt, constrained, and retested. For desktop software, that means signing and update channels. For CI/CD, it means workflow references, publish credentials, and artifact provenance. For external attackers, it often means trying to reach the system the wrong way and confirming it no longer accepts the same path. (openai.com)

A Practical Checklist for Teams That Ship Trusted Software

The checklist below is intentionally ordered by urgency instead of by team boundary.

| Priority | Action | Why it matters |
|---|---|---|
| Today | Audit for Axios 1.14.1, 0.30.4, and plain-crypto-js in code, lockfiles, CI, and hosts | Establish whether you are in the blast radius |
| Today | Rotate secrets on any host that installed the malicious versions | Google and Microsoft both treat affected hosts as potentially compromised |
| Today | Stop consuming mutable third-party Actions references in privileged workflows | GitHub says full SHA is the only immutable action reference model |
| This week | Move privileged CI cloud access to OIDC where possible | Reduces long-lived secret exposure in workflows |
| This week | Separate signing, publishing, and deployment workflows from broader build contexts | Shrinks what a compromised dependency can touch |
| This week | Review self-hosted runner exposure for sensitive workflows | Persistent runners expand blast radius |
| This quarter | Enable immutable releases and artifact attestations for critical outputs | Improves artifact integrity and provenance verification |
| This quarter | Introduce release-age gating or equivalent quarantine for risky package updates | Gives the ecosystem time to catch malicious uploads |
| Ongoing | Retest update and distribution paths after fixes | Confirms the bad path is gone, not just patched on paper |

Every line in that table is grounded in either OpenAI’s published remediation, public Axios incident guidance from Google and Microsoft, or GitHub’s official Actions security documentation. (openai.com)
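The first "Today" item can be partly automated. The sketch below scans a parsed `package-lock.json` for the indicators named in the checklist (Axios 1.14.1, 0.30.4, and plain-crypto-js). It assumes the `packages` map layout used by lockfileVersion 2 and 3; older lockfiles, other package managers, and already-installed `node_modules` trees need their own checks. The function name and the sample lockfile, including the placeholder `0.0.0` version, are hypothetical.

```python
import json

# Indicators taken from the checklist above.
BAD_AXIOS_VERSIONS = {"1.14.1", "0.30.4"}
BAD_PACKAGE_NAMES = {"plain-crypto-js"}


def audit_lockfile(lock: dict) -> list[str]:
    """Return findings for a parsed package-lock.json (`packages` map layout)."""
    findings = []
    for path, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/axios" or "node_modules/a/node_modules/axios".
        name = path.rpartition("node_modules/")[2]
        version = meta.get("version", "")
        if name == "axios" and version in BAD_AXIOS_VERSIONS:
            findings.append(f"{path}: axios {version}")
        elif name in BAD_PACKAGE_NAMES:
            findings.append(f"{path}: {name} {version}")
    return findings


# Hypothetical lockfile content; in practice, load the real file with json.load().
sample = json.loads("""
{
  "lockfileVersion": 3,
  "packages": {
    "": {"name": "example-app"},
    "node_modules/axios": {"version": "1.14.1"},
    "node_modules/plain-crypto-js": {"version": "0.0.0"}
  }
}
""")
for finding in audit_lockfile(sample):
    print(finding)
```

A clean result here only says the lockfile is clean. Hosts and CI runners that installed a bad version earlier still fall under the rotation item in the table.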

The Real Lesson of the Axios Developer Tool Compromise

The easiest way to misunderstand the Axios developer tool compromise is to treat it as a niche OpenAI story or a transient npm scare. It is neither. It is a clear example of how one upstream package compromise can cross multiple trust layers: registry trust, install-time execution, CI trust, signing trust, platform trust, and user trust. OpenAI’s public response makes that visible because the company had to deal not just with a poisoned dependency, but with the possibility that the software legitimacy path for its Mac apps had been touched. (openai.com)

The broader engineering lesson is uncomfortable but useful. Modern software security is not defined only by whether your application has exploitable bugs. It is also defined by where you fetch code, how fast you trust it, which workflows can execute it, what secrets those workflows can reach, how you publish what they produce, and how endpoint platforms decide whether users should trust the final artifact. When those layers are cleanly separated, a malicious package may stay local. When they collapse into one broad, convenient, overly privileged pipeline, a dependency issue can become a distribution trust incident. (GitHub Docs)

That is why OpenAI rotated the certificate. Not because the worst case had been proven, but because the trust anchor had entered the uncertainty set. In a mature security program, that is enough. (openai.com)

Further Reading

- OpenAI, Our response to the Axios developer tool compromise (openai.com)

- Microsoft Security, Mitigating the Axios npm supply chain compromise (microsoft.com)

- Google Threat Intelligence Group, North Korea-Nexus Threat Actor Compromises Widely Used Axios NPM Package in Supply Chain Attack (Google Cloud)

- Apple Developer, Signing your apps for Gatekeeper (Apple Developer)

- Apple Developer, Developer ID Support (Apple Developer)

- GitHub Docs, Secure use reference for GitHub Actions (GitHub Docs)

- GitHub Docs, OpenID Connect security hardening (GitHub Docs)

- GitHub Changelog, Immutable releases are now generally available (The GitHub Blog)

- GitHub Changelog, Releases now support immutability in public preview (The GitHub Blog)

- GitHub Blog, Introducing Artifact Attestations (The GitHub Blog)

- NVD, CVE-2025-30066 (nvd.nist.gov)

- NVD, CVE-2024-3094 (nvd.nist.gov)

- Penligent homepage (penligent.ai)

- Penligent Hacking Labs, AI Supply Chain Security After Mercor (penligent.ai)

- Penligent Hacking Labs, LiteLLM on PyPI Was Compromised, What the Attack Changed and What Defenders Should Do Now (penligent.ai)