OpenClaw 2026.2.23 Is a Useful Release, but Security Teams Should Read It as a Signal, Not a Finish Line

OpenClaw 2026.2.23 is being framed as a release that combines security updates with new AI capabilities, and that framing is basically correct. Public reporting highlights security improvements plus support for newer model/provider workflows, while the official release notes show a broad set of changes across gateway behavior, provider integrations, session maintenance, tooling, and reasoning-leakage-related fixes. (Cyber Security News)

But if you’re a security engineer, the more important takeaway is not “OpenClaw got better.” It’s that OpenClaw is now operating in a threat model where every release should be interpreted as part of a moving control boundary: local execution, AI tool invocation, web search, provider routing, browser/network access, and extension ecosystems all overlap in ways that classic appsec checklists don’t fully cover. That broader risk picture is visible not only in the 2026.2.23 release notes, but also in the recent stream of OpenClaw-related incidents and advisories across the ecosystem, including marketplace abuse and exposed deployments. (GitHub)

This article breaks down what changed in 2026.2.23, extracts the most relevant SEO/GEO search terms from the source article and broader coverage, maps those changes to real attack surfaces, and gives defenders a practical hardening plan that goes beyond “just update.”

The keywords that matter most around this release

Based on the source article plus recurring wording in recent coverage, the strongest high-intent terms around this topic are not just “OpenClaw release” or “AI features.” Security audiences are clicking on combinations of versioned release terms, risk terms, and exploit context. In practice, the highest-value keyword cluster is usually:

- OpenClaw 2026.2.23

- OpenClaw security updates

- OpenClaw new AI features

- OpenClaw vulnerabilities

- OpenClaw CVE

- OpenClaw ClawHub malicious skills

- OpenClaw exposed instances

- OpenClaw SSRF

- OpenClaw prompt injection

- OpenClaw hardening guide

That pattern is consistent with how the release is described in the Cyber Security News article (security updates + new AI features), how the official GitHub release is structured (changes, breaking change, fixes), and how parallel reporting emphasizes exploitation, malicious skill ecosystems, and deployment exposure. (Cyber Security News)

A practical SEO point (without turning this into editorial process talk): the phrase “OpenClaw 2026.2.23 security updates” is usually a stronger entry keyword than a generic “OpenClaw release notes” phrase for a security audience, because it aligns with what defenders actually want to know—what changed, what risk was reduced, and what is still exploitable.

What actually changed in OpenClaw 2026.2.23

The official release notes for v2026.2.23 are much richer than the summary most people will see on news sites. Yes, there are new AI features, but there are also several changes that directly affect security posture, operational behavior, and trust boundaries. (GitHub)

Security-relevant additions and fixes in the release notes

A few changes are especially important for defenders:

- Optional HTTP security headers (HSTS) at the gateway

  - The release adds optional gateway.http.securityHeaders.strictTransportSecurity support to emit Strict-Transport-Security for direct HTTPS deployments, including validation/tests/docs. (GitHub)

  - The source article also calls this out as a key security highlight. (Cyber Security News)

- Security/config redaction improvements

  - The release notes mention redaction of sensitive-looking dynamic catchall keys in config.get snapshots (examples include env.* and skills.entries.*.env.*) while preserving round-trip restore behavior. This matters because configuration snapshots are often reused for debugging, automation, and AI-assisted troubleshooting, which is exactly where accidental secret exposure becomes likely. (GitHub)

- Reasoning leakage protections

  - The release includes fixes preventing delivery of suppressed reasoning segments and blocking raw fallback resend of Reasoning: / <think> text in certain legacy session paths, plus additional logic around model-default thinking behavior. In plain terms: this is part security fix, part privacy/control-plane fix. (GitHub)

- Breaking change in browser SSRF policy defaults

  - The release notes explicitly state a breaking change where browser SSRF policy defaults to trusted-network mode and config key migration occurs via openclaw doctor --fix. This is security-critical because “browser/network reachability” in agent systems is not a simple UI feature; it can become an internal-network access path if not tightly controlled. (GitHub)

New AI/provider features that also change security assumptions

The same release adds or expands support for:

- Kilo Gateway provider support and default model routing (including Claude Opus 4.6 references)

- Vercel AI Gateway model shorthand normalization

- Moonshot/Kimi support for web_search and video provider flows

- Per-agent parameter overrides and cache behavior tuning

- Session cleanup and disk-budget controls

These are genuinely useful features. They also expand the configuration surface and the number of integration points where credentials, routing rules, logs, and tool outputs need to be governed. In agent systems, “feature surface” and “attack surface” often grow together. (GitHub)

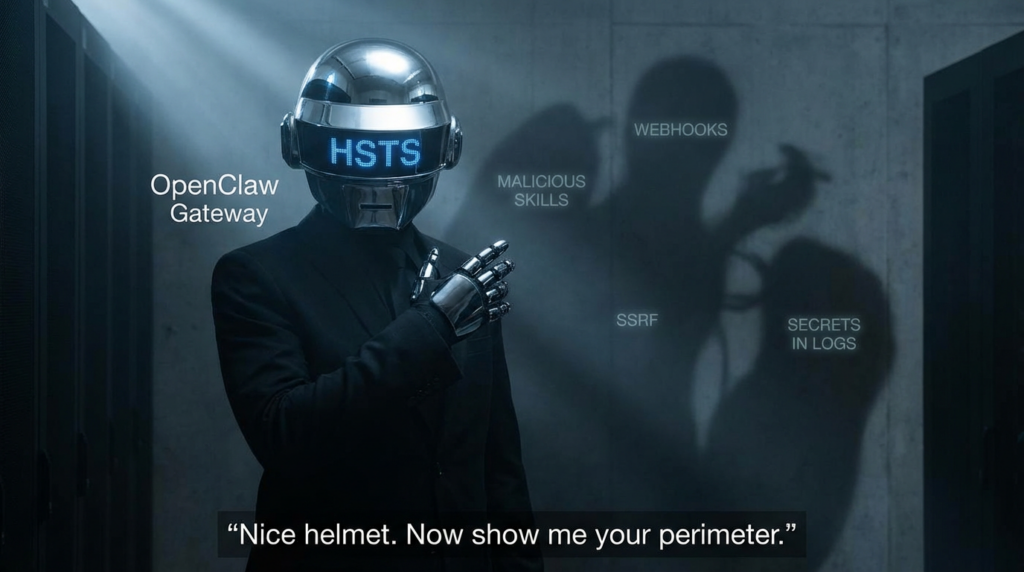

Why the HSTS change is good, but not enough by itself

The addition of optional HSTS support is a welcome hardening step. The Strict-Transport-Security header tells browsers to use HTTPS for future requests to a host, reducing downgrade/MITM exposure after the policy is learned. MDN’s reference is very clear about this behavior. (MDN Web Docs)

That said, security teams should not overread this as “transport risk solved.”

What HSTS does help with

- Reduces protocol downgrade risk for browser clients after policy is cached

- Helps enforce HTTPS-only behavior on subsequent requests

- Aligns with secure defaults for direct HTTPS gateway deployments

What HSTS does not solve

- It does not sanitize unsafe tool outputs

- It does not fix weak auth on plugins/webhooks

- It does not prevent SSRF or internal-network misuse

- It does not mitigate prompt injection in skill content or web content

- It does not stop secret leakage via logs/config snapshots/telemetry

This is the recurring problem in AI agent security: a valid and useful web security control can be implemented correctly, while the highest-risk pathways sit somewhere else entirely (tool invocation, plugin auth, skill ecosystems, memory/log reuse, or agent-driven network actions).

The CVEs security engineers should still care about when evaluating this release

Even if 2026.2.23 is not itself a “CVE bundle release,” defenders should interpret it in the context of recent OpenClaw vulnerabilities and the broader hardening trajectory that accelerated in February 2026.

Below are the most relevant recent CVEs publicly documented in NVD and widely discussed in the OpenClaw security conversation.

CVE-2026-26322 and the Gateway gatewayUrl trust problem

NVD describes CVE-2026-26322 as an issue (prior to OpenClaw 2026.2.14) where the Gateway tool accepted a tool-supplied gatewayUrl without sufficient restrictions, allowing the OpenClaw host to attempt outbound WebSocket connections to user-specified targets under certain tool invocation conditions. (NVD)

This is not just “another SSRF bug” in the traditional webapp sense. In an agent platform, a WebSocket-capable outbound connection path can become an execution-adjacent control plane primitive, depending on what downstream services trust and how the agent/runtime uses the returned data.
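To make the trust problem concrete, here is a minimal sketch of the kind of destination check a tool-supplied WebSocket URL could pass through before any connection is attempted. It is not OpenClaw's actual fix; the allowlist contents and the function name is_allowed_gateway_url are assumptions for illustration only.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: replace with the gateways your deployment really uses.
ALLOWED_SCHEMES = {"wss"}
ALLOWED_HOSTS = {"gateway.internal.example"}


def is_allowed_gateway_url(url: str) -> bool:
    """Return True only for URLs whose scheme and exact host are allowlisted."""
    try:
        parts = urlsplit(url)
        _ = parts.port  # raises ValueError for malformed or out-of-range ports
    except ValueError:
        return False
    if parts.scheme not in ALLOWED_SCHEMES:
        return False
    # Reject embedded credentials, a common trick to disguise the real host.
    if parts.username or parts.password:
        return False
    host = (parts.hostname or "").rstrip(".")
    return host in ALLOWED_HOSTS
```

Exact-match host comparison is deliberate here: suffix or substring matching is what lets gateway.internal.example.evil.example slip through.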

Why this matters more in AI agents than in ordinary web apps

In a standard web app, SSRF usually threatens:

- metadata endpoints

- internal APIs

- admin consoles

- service discovery endpoints

In an AI agent runtime, SSRF-like behavior can also affect:

- tool backends the agent relies on

- provider/gateway routing paths

- data sources later summarized by the agent

- internal services whose responses become instructions or context

OWASP still gives the right foundational SSRF guidance (allowlists, network controls, validation, segmentation), but the AI-specific twist is that the semantic meaning of retrieved content matters too, not just the network destination. (OWASP Cheat Sheet Series)

CVE-2026-26319 and why optional plugin authentication is never “optional” in production

NVD describes CVE-2026-26319 as missing webhook authentication in the optional @openclaw/voice-call Telnyx webhook handler when telnyx.publicKey is not configured, enabling forged Telnyx events in affected versions (2026.2.13 and below). (NVD)

This kind of issue is common in fast-moving ecosystems: a plugin supports secure verification, but deployment reality drifts into “temporary test mode” and then stays there.

What security teams should learn from this CVE

If a feature depends on a verification key for authenticity (here, Telnyx Ed25519 signatures), then production systems should enforce a fail-closed posture:

- if verification config is missing, reject requests

- if endpoint is exposed, assume unsolicited traffic will arrive

- if webhook events can trigger workflows, treat them as privileged input

In an agent environment, forged events are especially dangerous because they may not just “create bad records”—they can trigger workflows, tool calls, or follow-on actions.
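The fail-closed posture can be sketched in a few lines. This is an illustrative handler, not the voice-call plugin's code: the header names, the Verifier callable shape, and the status codes are assumptions, and a real deployment would plug in an Ed25519 check from a maintained crypto library.

```python
import json
import time
from typing import Callable, Mapping, Optional, Tuple

# Hypothetical verifier shape: (raw_body, timestamp, signature) -> True if authentic.
Verifier = Callable[[bytes, str, str], bool]


def handle_webhook(
    headers: Mapping[str, str],
    body: bytes,
    verifier: Optional[Verifier],
    now: Optional[float] = None,
    max_skew_seconds: int = 300,
) -> Tuple[int, dict]:
    # Fail closed: missing verification config means no event is accepted.
    if verifier is None:
        return 503, {"error": "webhook verification is not configured"}

    # Assumes header names were lowercased by the caller.
    signature = headers.get("x-signature-ed25519")
    timestamp = headers.get("x-timestamp")
    if not signature or not timestamp:
        return 401, {"error": "missing signature headers"}

    # Authenticate the raw bytes before parsing them.
    if not verifier(body, timestamp, signature):
        return 401, {"error": "invalid signature"}

    # Reject stale deliveries to limit replay of captured requests.
    try:
        sent_at = int(timestamp)
    except ValueError:
        return 401, {"error": "invalid timestamp"}
    current = time.time() if now is None else now
    if abs(current - sent_at) > max_skew_seconds:
        return 409, {"error": "stale or replayed event"}

    try:
        event = json.loads(body)
    except ValueError:
        return 400, {"error": "malformed JSON"}
    return 202, {"status": "accepted", "event": event}
```

The important branch is the first one: when the key is absent the handler refuses everything, rather than silently skipping verification.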

CVE-2026-26323 shows the supply chain is not only dependencies, but maintainer tooling and CI paths

NVD describes CVE-2026-26323 as a command injection issue in the maintainer/dev script scripts/update-clawtributors.ts affecting versions 2026.1.8 through 2026.2.13, with risk to contributors/maintainers/CI running the script on a checkout containing malicious commit metadata. (NVD)

This is a good reminder that modern security reviews should include:

- contributor tooling

- release scripts

- CI helper scripts

- “internal-only” developer automation

Why? Because internal scripts can become production-adjacent if they alter release artifacts, publish packages, or write metadata consumed by users.
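The general defense against this class of bug is to keep untrusted metadata out of shell strings entirely. The sketch below is not the upstream fix (the affected script is TypeScript); it only demonstrates the pattern in Python, with a hypothetical helper name, by passing attacker-shaped text as a single argv element to a child process that never sees a shell.

```python
import subprocess
import sys


def print_via_child(untrusted: str) -> str:
    """Pass untrusted text to a child process as one argv element, with no shell."""
    completed = subprocess.run(
        # A list of arguments (and the default shell=False) means characters
        # such as ; | $( ) are delivered literally, never interpreted.
        [sys.executable, "-c", "import sys; sys.stdout.write(sys.argv[1])", untrusted],
        capture_output=True,
        text=True,
        check=True,
    )
    return completed.stdout
```

A commit author such as alice$(touch pwned) comes back byte for byte. When the child is a real tool like git, untrusted values can still be mistaken for options, so placing them after a -- separator (where the tool supports it) is a sensible extra step.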

This matters even more in projects with large communities and rapid release cadence. The GitHub release page shows OpenClaw moving quickly through versions in late February 2026 (including 2026.2.22, 2026.2.23, and 2026.2.24 in close succession). Fast cadence is good for shipping fixes, but it also increases the importance of hardened release engineering. (GitHub)

CVE-2026-26326 and the agent-era version of “debug output leaked secrets”

NVD describes CVE-2026-26326 as an information disclosure issue where skills.status could expose raw resolved config values in requirement checks to operator.read clients before version 2026.2.14, including paths tied to skill config requirements. The fix stops returning raw resolved values and narrows a Discord-related requirement scope. (NVD)

This is exactly the kind of bug that gets underestimated in agent systems.

In traditional systems, a “status endpoint leak” is already serious. In AI agent systems, it can be worse because:

- secrets may unlock multiple SaaS systems (mail, chat, cloud, model providers)

- leaked values can be copied into logs, tickets, or AI conversations

- “read-only” roles may still be enough for exfiltration and pivoting

The 2026.2.23 redaction improvements in config.get snapshots should be read in this same context: the ecosystem is learning (correctly) that introspection and debugging features need stronger secret hygiene. (GitHub)
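As a rough illustration of what snapshot redaction means in practice, the sketch below walks a nested config and masks sensitive-looking values under any env map, which covers both the env.* and skills.entries.*.env.* shapes mentioned in the release notes. The name pattern, the placeholder string, and the function name are assumptions; this is not OpenClaw's implementation and it makes no attempt at the round-trip restore behavior the release describes.

```python
import re
from typing import Any

# Assumed heuristic for "sensitive-looking" key names.
SENSITIVE_NAME = re.compile(r"(key|token|secret|password|credential)", re.IGNORECASE)
REDACTED = "[REDACTED]"


def redact_snapshot(node: Any, under_env: bool = False) -> Any:
    """Return a copy of a config snapshot with secret-like env values masked."""
    if isinstance(node, dict):
        cleaned = {}
        for name, value in node.items():
            if under_env and isinstance(value, str) and SENSITIVE_NAME.search(str(name)):
                cleaned[name] = REDACTED
            else:
                # Everything below a key named "env" is treated as an env map.
                cleaned[name] = redact_snapshot(value, under_env or name == "env")
        return cleaned
    if isinstance(node, list):
        return [redact_snapshot(item, under_env) for item in node]
    return node
```

Because the function builds a new structure instead of mutating its input, the live configuration keeps its real values while anything handed to a debugger, ticket, or AI assistant gets the masked copy.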

The bigger threat picture: the release is happening in the middle of an ecosystem security stress test

The reason this release matters is not just what changed in the codebase. It’s that OpenClaw is now operating under heavy real-world adversarial pressure across multiple layers.

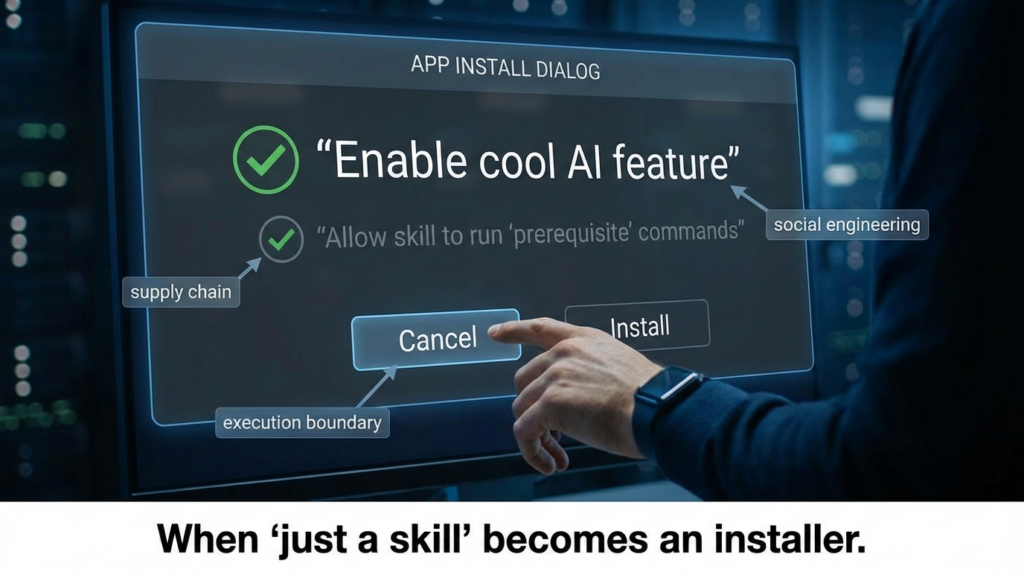

Malicious skills and marketplace abuse are now a mainstream risk

The Hacker News reported findings of 341 malicious ClawHub skills out of a larger audited set, describing campaigns that used fake prerequisites and social engineering to push malware/stealer delivery, including macOS AMOS-related behavior and credential theft themes. The article also notes OpenClaw’s open marketplace model and the later addition of a reporting option / auto-hiding thresholds. (The Hacker News)

This matters because many users still mentally model “skills” as something closer to templates or prompts, when in practice they can function as execution-adjacent artifacts that influence users and agents into running commands.

Exposure at internet scale changes patch urgency

Recent reporting also highlighted tens of thousands of exposed OpenClaw instances (coverage cites SecurityScorecard findings of 40,000+ exposed deployments). Even if exact counts vary by scan window and fingerprinting method, the operational conclusion is the same: once a platform is internet-exposed at that scale, configuration weaknesses and known CVEs become mass-exploitation opportunities. (Infosecurity Magazine)

Prompt injection remains the background condition

The recent Verge coverage around OpenClaw/Cline-related prompt injection incidents is another signal that AI-agent security cannot be reduced to package CVEs. Prompt injection and instruction/data boundary failures remain a live operational risk in any workflow where agents consume external content and can execute actions. (The Verge)

What security teams should do after upgrading to 2026.2.23

The right response to this release is not “upgrade and move on.” It is “upgrade, then verify the new boundary conditions.”

Here’s a practical checklist that maps to the release and the current threat environment.

Upgrade, then explicitly verify version and release assumptions

Use a release validation process that records:

- installed OpenClaw version

- enabled skills/plugins

- exposed interfaces (UI, API, gateway, webhook endpoints)

- provider credentials configured

- proxy/tunnel/public exposure paths

- reverse proxy/header configuration

Do not assume a version bump automatically fixes your deployment if:

- you run forks

- you keep old config keys

- you expose optional plugins

- you route through custom proxies

- you pin older containers/images
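A small version check helps make the "explicitly verify" step repeatable. The sketch below compares an installed version against the first unaffected release for the CVEs discussed earlier in this article. The FIRST_FIXED table is inferred from the NVD wording quoted there ("prior to 2026.2.14", "2026.2.13 and below") and should be treated as an assumption; it also ignores lower bounds such as the 2026.1.8 starting point for CVE-2026-26323.

```python
from typing import Dict, List, Tuple

# Assumed first unaffected versions, inferred from the NVD summaries.
FIRST_FIXED: Dict[str, str] = {
    "CVE-2026-26319": "2026.2.14",
    "CVE-2026-26322": "2026.2.14",
    "CVE-2026-26323": "2026.2.14",
    "CVE-2026-26326": "2026.2.14",
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '2026.2.23' or 'v2026.2.23' into (2026, 2, 23) for numeric comparison."""
    return tuple(int(part) for part in text.strip().lstrip("v").split("."))


def unpatched_cves(installed: str) -> List[str]:
    """List the CVEs whose first fixed version is newer than the installed one."""
    current = parse_version(installed)
    return sorted(
        cve for cve, fixed in FIRST_FIXED.items() if current < parse_version(fixed)
    )
```

Comparing tuples rather than strings matters with this versioning scheme: as plain text, "2026.2.9" sorts after "2026.2.14", which would wrongly mark an older build as patched.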

Treat HSTS as a transport hardening step, not a security program

If you are deploying direct HTTPS to end users, enabling Strict-Transport-Security is a good move. But validate your deployment path:

- HTTPS terminates where you think it does

- no mixed-content regressions break your UI

- staging/test domains are not accidentally HSTS-preloaded in ways that hurt ops

- your reverse proxy and OpenClaw gateway aren’t duplicating/conflicting headers
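Parts of that validation can be scripted. The sketch below inspects a list of response headers and reports common HSTS problems, including the duplicate-header case that appears when both a reverse proxy and the gateway emit the header. The function name and the one-year default threshold are assumptions, not anything defined by OpenClaw.

```python
from typing import Iterable, List, Tuple


def hsts_issues(
    headers: Iterable[Tuple[str, str]], min_max_age: int = 31536000
) -> List[str]:
    """Return human-readable problems found in a response's HSTS headers."""
    values = [v for k, v in headers if k.lower() == "strict-transport-security"]
    if not values:
        return ["missing Strict-Transport-Security header"]

    issues: List[str] = []
    if len(values) > 1:
        issues.append("duplicate Strict-Transport-Security headers")

    # Browsers only honor the first header, so only that one is parsed.
    directives = [d.strip().lower() for d in values[0].split(";") if d.strip()]
    max_age = None
    for directive in directives:
        name, _, raw = directive.partition("=")
        raw = raw.strip().strip('"')
        if name.strip() == "max-age" and raw.isdigit():
            max_age = int(raw)

    if max_age is None:
        issues.append("max-age missing or not a number")
    elif max_age < min_max_age:
        issues.append(f"max-age {max_age} is below expected minimum {min_max_age}")
    if "preload" in directives:
        issues.append("preload is set; confirm this host is meant to be preloaded")
    return issues
```

The input shape matches what http.client's HTTPResponse.getheaders() returns, so the function can be fed directly from a request against staging and production hosts.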

A minimal Nginx example (adapt to your environment):

    server {
        listen 443 ssl http2;
        server_name openclaw.example.com;

        ssl_certificate     /etc/letsencrypt/live/openclaw.example.com/fullchain.pem;
        ssl_certificate_key /etc/letsencrypt/live/openclaw.example.com/privkey.pem;

        # HSTS: use cautiously in rollout; start lower if needed
        add_header Strict-Transport-Security "max-age=31536000; includeSubDomains" always;

        location / {
            # Forward to the local OpenClaw gateway (port is deployment-specific)
            proxy_pass http://127.0.0.1:3000;
            proxy_set_header Host $host;
            proxy_set_header X-Forwarded-Proto https;
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        }
    }

Why the caution? Because HSTS improves browser transport behavior, but it doesn’t compensate for application-layer trust issues. MDN’s description makes the scope clear: it influences future browser requests to the host. (MDN Web Docs)

Re-audit any plugin/webhook feature that can run in “convenience mode”

CVE-2026-26319 is the perfect reminder here. If your deployment includes webhooks, voice integrations, bot connectors, or event-driven actions:

- require signature verification

- reject unsigned/invalid events

- log auth failures with rate limits

- isolate webhook listeners from privileged internal networks

- review whether the endpoint must be public at all

A generic webhook verification pattern (pseudocode) looks like this:

    def handle_webhook(request):
        signature = request.headers.get("X-Signature-Ed25519")
        timestamp = request.headers.get("X-Timestamp")

        if not signature or not timestamp:
            return {"error": "missing signature headers"}, 401

        if not verify_signature(public_key=TELNYX_PUBLIC_KEY, request=request):
            return {"error": "invalid signature"}, 401

        event = parse_json(request.body)

        # Optional: replay protection
        if is_replay(event, timestamp):
            return {"error": "replay detected"}, 409

        # Enqueue minimal event for downstream processing
        enqueue_event(event)
        return {"status": "ok"}, 202

The main point is architectural: authenticate before parse/use/trigger.

Lock down SSRF and agent-driven network reachability with layered controls

OpenClaw’s release notes explicitly mention a browser SSRF policy breaking change/default shift in 2026.2.23. That should prompt a policy review, not just a config migration. (GitHub)

OWASP’s SSRF cheat sheet remains a strong baseline for destination validation and network segmentation. In agent deployments, you should add AI-specific controls on top. (OWASP Cheat Sheet Series)

Recommended control layers

| Layer | Goal | Example Controls |
|---|---|---|
| Input/config validation | Prevent unsafe destinations at config/tool boundary | Allowlist schemes/hosts, reject raw IP literals, canonicalize URLs |
| Runtime policy | Restrict agent/browser/tool network actions | Deny private CIDRs by default, explicit exceptions, egress ACLs |
| Network segmentation | Reduce blast radius if validation fails | Isolate OpenClaw host from admin planes/metadata/internal DBs |
| Authentication | Prevent trust-by-reachability | Require auth on internal services, mTLS where possible |
| Observability | Detect abuse and policy drift | Log destination attempts, blocked CIDRs, unexpected WebSocket egress |

A practical allow/deny policy sketch

    network_policy:
      default_outbound: deny
      allow_dns: true
      allow_https:
        - api.anthropic.com
        - "*.openrouter.ai"  # quoted: a bare leading * is read as a YAML alias
        - api.openai.com
        - your-approved-saas.example
      deny_cidrs:
        - 127.0.0.0/8
        - 10.0.0.0/8
        - 172.16.0.0/12
        - 192.168.0.0/16
        - 169.254.0.0/16  # metadata/link-local
      websocket:
        allowlist_only: true

Even if your exact OpenClaw config surface differs, this is the right mental model.
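To show how the deny list in that mental model might be enforced at runtime, here is a short sketch using Python's standard ipaddress module. The CIDR list mirrors the policy sketch; the function name and the IPv6 handling are assumptions added for illustration.

```python
import ipaddress

# Mirrors the deny_cidrs entries in the policy sketch.
DENY_CIDRS = [
    ipaddress.ip_network(cidr)
    for cidr in (
        "127.0.0.0/8",
        "10.0.0.0/8",
        "172.16.0.0/12",
        "192.168.0.0/16",
        "169.254.0.0/16",
    )
]


def is_denied_address(address: str) -> bool:
    """Return True if a resolved IP address falls inside a denied range."""
    ip = ipaddress.ip_address(address)
    # Unwrap IPv4-mapped IPv6 (for example ::ffff:127.0.0.1) before checking.
    if isinstance(ip, ipaddress.IPv6Address) and ip.ipv4_mapped is not None:
        ip = ip.ipv4_mapped
    if ip.version == 6:
        # Assumed IPv6 stance: block loopback, link-local, and private ranges.
        return ip.is_loopback or ip.is_link_local or ip.is_private
    return any(ip in network for network in DENY_CIDRS)
```

The check only helps if it runs on the address the connection will really use. Validating a hostname, then letting the HTTP or WebSocket client resolve it again, leaves room for DNS rebinding.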

Treat “skills” like code, not content

The ClawHub incidents are a warning for every team that lets users install third-party OpenClaw skills. The THN reporting describes malicious skills masquerading as useful tools while using fake prerequisites and social engineering to get users to run commands that fetch malware. (The Hacker News)

That means your review process must cover both:

- what the skill does

- what the skill tells the user to do

Minimum skill governance policy for serious deployments

- disable public marketplace installs by default on managed deployments

- maintain an internal allowlist of reviewed skills

- require code review and provenance checks

- ban skills that ask users to manually run shell commands during setup

- sandbox or containerize risky tool execution paths

- separate test agents from production agents

- monitor outbound connections after new skill installs

- rotate credentials after suspicious skill activity

If your security model still treats SKILL.md as “just documentation,” you are behind the threat model.
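One way to act on that is to scan skill documentation for setup steps that push users toward running downloaded code. The heuristic below is deliberately small and will miss plenty; the patterns, labels, and function name are assumptions, and a match should trigger human review, not an automatic verdict.

```python
import re
from typing import List

# Assumed heuristics for "fake prerequisite" style instructions.
RISKY_PATTERNS = {
    "pipe-to-shell download": re.compile(
        r"\b(curl|wget)\b[^\n|]*\|\s*(sudo\s+)?(ba|z)?sh\b", re.IGNORECASE
    ),
    "base64 decode piped to shell": re.compile(
        r"base64\s+(-d|--decode)\b[^\n|]*\|\s*(ba|z)?sh\b", re.IGNORECASE
    ),
    "PowerShell download-and-run": re.compile(
        r"\b(iwr|invoke-webrequest)\b[^\n]*\|\s*(iex|invoke-expression)\b",
        re.IGNORECASE,
    ),
}


def risky_setup_instructions(skill_markdown: str) -> List[str]:
    """Return labels of risky install patterns found in a skill's markdown."""
    return [
        label
        for label, pattern in RISKY_PATTERNS.items()
        if pattern.search(skill_markdown)
    ]
```

Running this in the review pipeline for every new or updated skill gives reviewers a cheap first signal before anyone reads the full package.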

Prevent secrets from leaking into logs, status views, and telemetry

OpenClaw’s recent CVEs and 2026.2.23 redaction improvements both point in the same direction: introspection and observability are powerful, but dangerous without strict data minimization and redaction. (NVD)

OpenTelemetry’s official guidance is useful here: it explicitly says implementers are responsible for understanding what is sensitive in their context and protecting it, and recommends data minimization plus processors that can remove, filter, redact, or transform sensitive fields. (OpenTelemetry)

What to redact by default in an OpenClaw environment

- API keys (model providers, automation platforms, SaaS)

- webhook secrets and signature verification keys

- OAuth access/refresh tokens

- session tokens and cookies

- chat/mail connector credentials

- SSH keys and cloud credentials

- user identifiers not needed for observability

- prompt content that may include private business data

Example OpenTelemetry Collector redaction approach (illustrative)

    processors:
      attributes/redact_sensitive:
        actions:
          - key: api.key
            action: delete
          - key: authorization
            action: delete
          - key: user.email
            action: hash
          - key: session.token
            action: delete

      transform/scrub_patterns:
        error_mode: ignore
        log_statements:
          - context: log
            statements:
              # $$1 keeps the capture-group reference from being read as an env variable
              - 'replace_pattern(body, "(?i)bearer\\s+[A-Za-z0-9._~+/-]+=*", "Bearer [REDACTED]")'
              - 'replace_pattern(body, "(?i)(api[_-]?key\\s*[:=]\\s*)[^\\s,;]+", "$$1[REDACTED]")'

    service:
      pipelines:
        logs:
          processors: [attributes/redact_sensitive, transform/scrub_patterns]

No single redaction pattern is sufficient, but “collect everything and clean it later” is not a safe default for agent systems.
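Scrubbing at the source, before records ever reach a collector, follows the same minimization idea. The sketch below applies bearer-token and API-key patterns inside a standard logging.Filter; the class and function names are assumptions, and the patterns are examples, not a complete secret detector.

```python
import logging
import re

BEARER = re.compile(r"(?i)bearer\s+[A-Za-z0-9._~+/-]+=*")
API_KEY = re.compile(r"(?i)(api[_-]?key\s*[:=]\s*)[^\s,;]+")


def scrub(text: str) -> str:
    """Mask bearer tokens and api-key style assignments in a log line."""
    text = BEARER.sub("Bearer [REDACTED]", text)
    return API_KEY.sub(r"\1[REDACTED]", text)


class RedactingFilter(logging.Filter):
    """Logging filter that scrubs the fully formatted message of each record."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Format first so secrets passed as %-style arguments are caught too.
        record.msg = scrub(record.getMessage())
        record.args = None
        return True
```

Attaching the filter to a handler with handler.addFilter(RedactingFilter()) covers every logger that writes through it.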

Revisit UI and admin surfaces for XSS and unsafe rendering assumptions

Even if the current release is not headline-driven by XSS, OpenClaw deployments often include admin panels, logs, skill metadata views, and message histories that can end up rendering attacker-controlled content.

OWASP’s XSS Prevention Cheat Sheet remains the right reference point: use framework protections correctly, avoid unsafe rendering APIs, and apply output encoding/sanitization based on context. The cheat sheet explicitly notes common framework escape hatches (including React’s dangerouslySetInnerHTML) and why no single technique solves XSS alone. (OWASP Cheat Sheet Series)

This is especially important in AI tooling because:

- logs and transcripts may contain adversarial strings

- skill descriptions are user-supplied

- web search results may be displayed and summarized

- admins often trust “internal dashboards” too much
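For server-rendered views, context-aware output encoding can be as small as the sketch below, which escapes a user-supplied skill description before placing it in an HTML body. The function name and markup are hypothetical, and html.escape only covers HTML body and quoted-attribute contexts, so it is not a fit for JavaScript, CSS, or URL contexts.

```python
import html


def render_skill_description(description: str) -> str:
    """Wrap an untrusted skill description in a paragraph, escaped for HTML."""
    # quote=True also escapes " and ' so the text is safe in quoted attributes.
    return '<p class="skill-description">' + html.escape(description, quote=True) + "</p>"
```

The same treatment applies to transcripts, log lines, and search-result snippets shown in an admin dashboard: escape at render time, even when the data came from an "internal" source.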

The real lesson of 2026.2.23: OpenClaw security is now a systems problem

If you only look at 2026.2.23 as a product update, you’ll miss the bigger shift.

This release shows a project that is:

- shipping meaningful hardening improvements,

- expanding model/provider capabilities quickly,

- and responding to a rapidly maturing attacker ecosystem.

That combination is exactly why defenders need a systems view.

The security boundary has moved

The old boundary was roughly:

- app server

- database

- browser

The new OpenClaw boundary is closer to:

- local runtime + OS permissions

- tool invocation framework

- provider/gateway routing

- plugins/webhooks/connectors

- skills marketplace content + social engineering

- logs/memory/transcripts reused as agent context

- browser/network egress from agent-controlled workflows

A release like 2026.2.23 improves parts of that boundary. It does not collapse it into a single patchable object.

Common mistakes teams will make after reading the release announcement

A few predictable mistakes are worth calling out because they create false confidence.

Mistake 1: “Security headers were added, so the gateway is secure now”

HSTS is useful. It is not a substitute for auth, SSRF controls, plugin hardening, or secret hygiene. (GitHub)

Mistake 2: “We’re on a newer version, so those February CVEs don’t matter anymore”

They still matter because they reveal design and operational failure patterns you may reproduce elsewhere (webhooks, status views, tool routing, maintainer automation). (NVD)

Mistake 3: “We don’t install shady skills, so ClawHub risk doesn’t apply to us”

If users can install from a public marketplace, or if teams copy/paste setup commands from third-party docs, you are still exposed to the same class of risk. THN’s coverage shows the attack pattern relied heavily on social engineering through “prerequisites,” not only pure code execution inside the skill package. (The Hacker News)

Mistake 4: “Telemetry helps incident response, so collect everything”

Collecting everything without redaction and minimization creates another breach surface. OpenTelemetry’s guidance explicitly places responsibility on implementers to classify and protect sensitive data. (OpenTelemetry)

Final take

OpenClaw 2026.2.23 is a meaningful release. It improves security posture in several real ways, including transport hardening options, config redaction behavior, and reasoning-leakage-related fixes, while adding useful provider and AI workflow capabilities. (GitHub)

But the release should be read in context:

- recent OpenClaw CVEs show recurring issues in gateway trust, webhook auth, maintainer tooling, and secret disclosure paths, (NVD)

- recent reporting shows the ecosystem is already under active abuse pressure through malicious skills and exposed deployments, (The Hacker News)

- and the core challenge of AI-agent security remains architectural: the boundary between content, instruction, configuration, and action is thinner than most teams are used to.

If you run OpenClaw in production or semi-production, the right next step is not just patching. It’s verifying the post-upgrade behavior of your specific deployment—network reachability, authentication, redaction, skills governance, and operator workflows included.

That is the difference between “updated” and “hardened.”

Links

- OpenClaw Sovereign AI Security Manifest: A Comprehensive Post-Mortem and Architectural Hardening Guide for OpenClaw AI (2026) (Penligent)

- People giving OpenClaw root access to their entire life (Penligent)

- Multiple Hacking Groups Exploit OpenClaw Instances to Steal API Keys and Deploy Malware (Penligent)

- OpenClaw AI Vulnerability: A Step-by-Step Guide to Zero-Click RCE and Indirect Injection (Penligent)

- When SKILL.md Becomes an Installer: The OpenClaw ClawHub Poisoning Playbook (Penligent)

- OpenClaw “Log Poisoning” Vulnerability: Indirect Prompt Injection via WebSocket Headers (fixed in 2026.2.13) (Penligent)

- OpenClaw GitHub release v2026.2.23 (official release notes) (GitHub)

- OpenClaw GitHub releases page (release cadence and version timeline) (GitHub)

- NVD: CVE-2026-26322 (Gateway gatewayUrl / outbound WebSocket target issue) (NVD)

- NVD: CVE-2026-26319 (Telnyx webhook signature verification issue) (NVD)

- NVD: CVE-2026-26323 (maintainer/dev script command injection) (NVD)

- NVD: CVE-2026-26326 (skills.status secret disclosure) (NVD)

- OWASP SSRF Prevention Cheat Sheet (OWASP Cheat Sheet Series)

- OWASP XSS Prevention Cheat Sheet (OWASP Cheat Sheet Series)

- MDN Strict-Transport-Security header reference (MDN Web Docs)

- OpenTelemetry: Handling sensitive data (official guidance) (OpenTelemetry)