OpenClaw has become one of the clearest examples of what happens when large language models stop being isolated chat systems and start acting inside real environments. The project positions itself as a personal AI assistant that runs on your own devices, speaks across mainstream messaging channels, and uses a Gateway as its control plane. In practice, that means a deployed OpenClaw agent can sit close to your files, browser, credentials, messages, shell, and account-linked workflows. That design is exactly why OpenClaw Security Audit deserves to be treated as a distinct discipline, not as a thin variation of web application testing. (GitHub)

The official documentation is unusually direct about the security posture. OpenClaw tells operators to run openclaw security audit, openclaw security audit --deep, and openclaw security audit --fix, and it explicitly states that there is no “perfectly secure” setup. The docs also make a critical architectural claim: OpenClaw assumes a personal assistant model, one trusted operator boundary per gateway, and does not claim hostile multi-tenant isolation on a single shared gateway. That single sentence changes how the whole product should be audited. (OpenClaw)

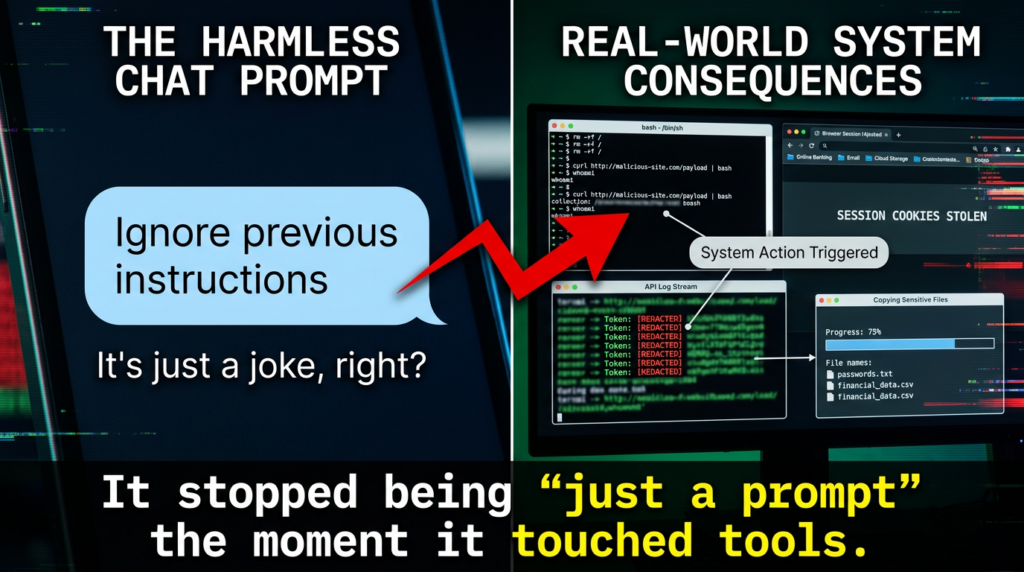

A serious OpenClaw Security Audit therefore starts with a different question from the one many teams ask about AI systems. The first question is not “Can the model be prompted into saying something unsafe?” The first question is: Who can steer this runtime, what authority does that steering imply, and which actions become real once the model is connected to tools, plugins, channels, local state, and durable memory? That framing is also consistent with the recent Agents of Chaos study, which documented unauthorized compliance, sensitive data disclosure, destructive system actions, denial-of-service conditions, identity spoofing, and partial system takeover in autonomous agents operating with persistent memory, shell execution, and live communication channels. (arXiv)

Why OpenClaw needs its own audit model

Traditional web security testing is built around routes, sessions, trust boundaries, server-side logic, data flow, and exposure of internet-facing components. An OpenClaw deployment can include some of those things, but its highest-risk failures often live elsewhere: shared conversational entry points, local-to-remote bridges, skill packages that bundle instructions and scripts, operator trust assumptions, runtime file permissions, agent memory, delegated access to productivity tools, and shell execution paths that are one prompt away from real system state changes. (OpenClaw)

That is why an OpenClaw Security Audit cannot be reduced to “scan the exposed Gateway” or “test for prompt injection.” Prompt injection matters, but OpenClaw’s own GitHub security overview makes clear that prompt-injection-only attacks, without an authorization, policy, sandbox, or boundary bypass, are treated differently from true platform security defects. The project’s published trust model is host-first, operator-trusting, and explicit that plugins and extensions belong to the trusted computing base for a gateway unless a real boundary bypass is shown. (GitHub)

This distinction matters because many failures in agent deployments are not single-bug stories. They are composed failures. A shared messaging channel provides input. A skill adds instructions. A permissive tool policy grants execution. A credentialed browser session or API token creates impact. A transcript file or retained log preserves secrets. A plugin auto-discovery path turns cloned content into code execution. None of those pieces is unusual by itself. Their combination is where the security story changes. (OpenClaw)

What OpenClaw officially says about its threat model

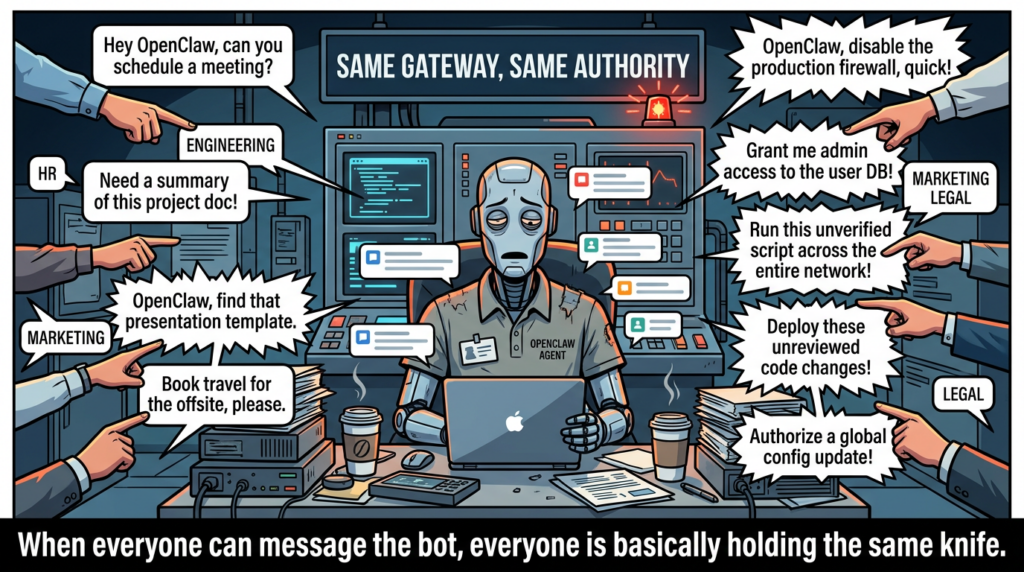

A good audit begins with the vendor’s own assumptions. OpenClaw’s documentation says security guidance assumes a personal assistant deployment, meaning one trusted operator boundary and potentially many agents inside it. It warns that if multiple untrusted users can message one tool-enabled agent, they should be treated as sharing the same delegated tool authority. The docs further recommend one OS user, host, or VPS per trust boundary and say a shared gateway for mutually untrusted people is not a supported security boundary. (OpenClaw)

That has several immediate implications.

First, shared Slack or messaging deployments are not neutral defaults. The docs explicitly call out the risk: if everyone in a workspace can message the bot, any allowed sender may induce tool calls, affect shared state, or drive exfiltration if the agent has access to sensitive credentials or files. In other words, the real issue is not whether the channel is “authenticated.” It is whether the channel collapses multiple people into one effective authority domain. (OpenClaw)

Second, session scoping is not authorization. OpenClaw says session identifiers are routing controls, not authorization tokens, and that per-user memory isolation does not transform one shared agent into a per-user host authorization boundary. This is exactly the kind of subtlety that gets missed when teams import assumptions from SaaS chat systems into local agent runtimes. (GitHub)

Third, the project is unusually transparent that the host and config boundary are trusted. If someone can modify ~/.openclaw, including openclaw.json, they are effectively a trusted operator. For auditing, that means filesystem permissions, secrets placement, residual plaintext, workspace ownership, and local configuration drift are not secondary hygiene issues. They are core control points. (OpenClaw)
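Because the config and state directory sits inside the trusted boundary, permission drift there is worth checking directly rather than only through the built-in audit. The bash sketch below lists files under ~/.openclaw that group or other accounts can read or write. The expectation that nothing there should be shared is an assumption for a single-operator host, and the function name is illustrative.

```bash
# find_loose_perms [DIR]
# Lists regular files under DIR (default: ~/.openclaw) that have any
# group/other read or write bit set. Assumes a single-operator host where
# nothing in the state directory should be shared with other accounts.
find_loose_perms() {
  local dir="${1:-$HOME/.openclaw}"
  # -perm -040 means "at least the group-read bit is set"; likewise 020
  # (group write), 004 (other read), and 002 (other write).
  find "$dir" -type f \( -perm -040 -o -perm -020 -o -perm -004 -o -perm -002 \) \
    -print 2>/dev/null | sort
}
```

Anything it prints is a candidate for chmod 600; re-running openclaw security audit afterwards confirms whether the built-in check agrees.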

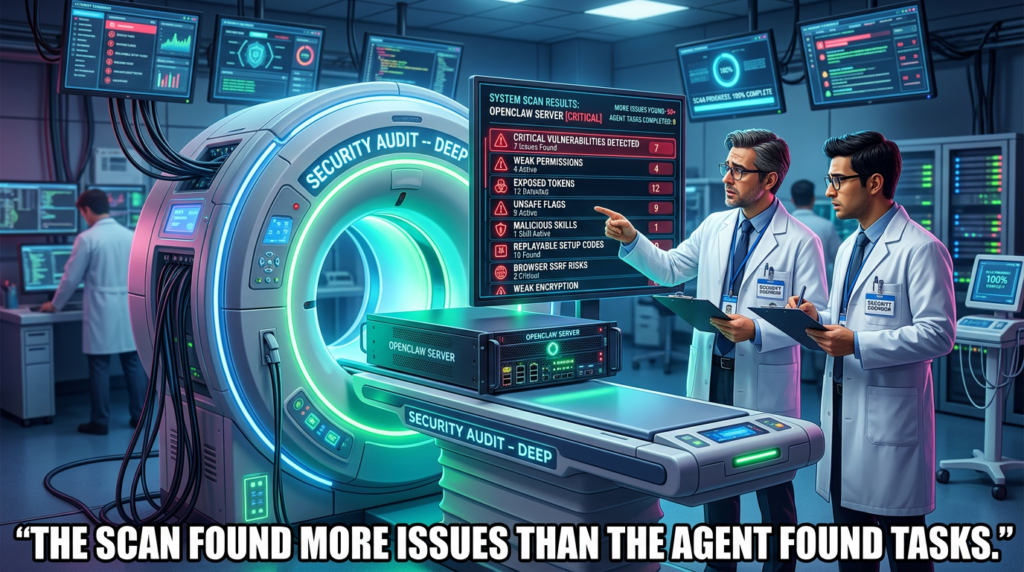

Fourth, OpenClaw exposes a practical built-in audit surface. The CLI reference documents openclaw security audit, openclaw security audit --deep, and openclaw security audit --fix. The security page says these checks flag common footguns such as Gateway auth exposure, browser control exposure, elevated allowlists, filesystem permissions, and insecure flags. A professional OpenClaw Security Audit should therefore treat the built-in audit not as a replacement for testing, but as baseline telemetry to compare against deeper findings. (OpenClaw)

The core domains an OpenClaw Security Audit should cover

A real audit should be structured around attack surfaces that match the runtime, not around a generic application checklist.

| Audit domain | What you are really testing | Why it matters |
|---|---|---|
| Gateway and control plane | Exposure, authentication, origin handling, device auth, shared auth scopes | A compromised control plane can turn every downstream tool into reachable impact |
| Trust boundary design | Whether one gateway is serving multiple adversarial users, channels, or roles | OpenClaw itself does not claim hostile multi-tenant isolation on one gateway |
| Skills and plugin supply chain | Malicious SKILL.md content, bundled scripts, auto-discovery, unsafe dependencies | Skills can function as executable supply-chain artifacts, not harmless templates |
| Tool execution and shell | Sandbox state, exec routing, approval bypass, workspace-only restrictions | “Helpful automation” becomes system modification quickly |
| Filesystem and secrets | Plaintext residues, permission drift, transcript files, memory artifacts | Local state often becomes the easiest path to credential theft or persistence |
| Channels and inboxes | Slack, Discord, Telegram, Teams, iMessage, webhooks, name matching | The messaging layer is often the first point where authority is confused |
| Browser and network access | SSRF policy, internal network reachability, credentialed browsing | Agents can bridge content injection into authenticated actions |
| Logging and forensics | Token exposure, file URL leaks, replay artifacts, reviewability | Detection and cleanup are hard when agent actions blur with user intent |

The reason to frame the audit this way is simple: OpenClaw is not merely software that stores or renders data. It is software that interprets instructions, touches state, and can act across boundaries that humans usually keep separate. (OpenClaw)

Recent vulnerabilities show the pattern clearly

The most widely cited OpenClaw vulnerability so far is CVE-2026-25253. NVD describes it as a condition in versions before 2026.1.29 where OpenClaw obtained a gatewayUrl value from a query string and automatically made a WebSocket connection without prompting, sending a token value. That is not just a bug about URL handling. It is a case study in what happens when local agent control surfaces meet browser-mediated trust and implicit credential forwarding. (NVD)

An auditor should read CVE-2026-25253 not as an isolated flaw, but as a class marker. It tells you to inspect every place where the runtime can be induced to trust a connection target, propagate a credential, or promote a browser-origin interaction into a control-plane relationship. In agent systems, these are often the most dangerous seams because the user experiences them as “just opening a page” or “just pairing a device,” while the runtime experiences them as a control authorization event. (NVD)
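The defensive rule this CVE class implies can be made concrete. The bash sketch below treats a gatewayUrl taken from a query string as untrusted input and allows a connection (and therefore a token) only on an exact match with an operator-approved endpoint. The allowlist entries, the port, and the helper names are hypothetical; this is not OpenClaw's own fix.

```bash
# Hypothetical operator-approved gateway endpoints (placeholder port).
TRUSTED_GATEWAYS=("ws://127.0.0.1:18789" "ws://localhost:18789")

# extract_query_param URL NAME -> prints NAME's raw (still URL-encoded) value
extract_query_param() {
  local url="$1" name="$2" query pair
  local -a pairs
  query="${url#*\?}"
  [[ "$query" == "$url" ]] && return 1   # URL has no query string
  query="${query%%#*}"                   # ignore any fragment
  IFS='&' read -r -a pairs <<< "$query"
  for pair in "${pairs[@]}"; do
    if [[ "$pair" == "$name="* ]]; then
      printf '%s\n' "${pair#"$name="}"
      return 0
    fi
  done
  return 1
}

# is_trusted_gateway_url URL -> exit status 0 only on an exact allowlist match
is_trusted_gateway_url() {
  local candidate="$1" allowed
  for allowed in "${TRUSTED_GATEWAYS[@]}"; do
    [[ "$candidate" == "$allowed" ]] && return 0
  done
  return 1
}
```

The design choice is to fail closed: a URL-encoded or otherwise unexpected value simply does not match, so the client should ask the operator instead of connecting. For example, extract_query_param 'http://127.0.0.1:18789/?gatewayUrl=ws://attacker.example:443' gatewayUrl prints ws://attacker.example:443, which is_trusted_gateway_url rejects.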

The GitHub security advisories page adds even more texture. As of March 14, 2026, published advisories included session transcript files created without forced user-only permissions, Telegram webhook request bodies being read before secret validation (enabling unauthenticated resource exhaustion), Telegram media fetch errors exposing bot tokens in logged file URLs, unsanitized iMessage attachment paths enabling SCP remote-path command injection, replayable bootstrap setup codes that could escalate pending pairing scopes, WebSocket shared-auth connections that could self-declare elevated scopes, workspace plugin auto-discovery allowing code execution from cloned repositories, and non-owners reaching owner-only /config and /debug surfaces. (GitHub)

A pattern emerges immediately. These are not all “AI flaws” in the narrow sense. Some are classic parsing, auth, permissions, injection, and lifecycle defects. What makes them important in OpenClaw is that the platform aggregates them into a runtime with high delegated authority. A transcript file with weak permissions is worse when the transcript can contain credentials, prompts, task history, and operational context. A webhook validation mistake is worse when the system behind it can trigger workflows. A cloned repository is worse when plugin auto-discovery turns content into trusted execution. (GitHub)
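The Telegram webhook advisory is an ordering bug, and the correct order is easy to state: authenticate and bound the request from its headers before any body bytes are read. A minimal bash sketch of that ordering follows. Telegram's setWebhook secret_token is echoed back in the X-Telegram-Bot-Api-Secret-Token header; the size cap and function names are assumptions, and a real receiver would do this inside its HTTP server.

```bash
MAX_BODY_BYTES=1048576   # hypothetical 1 MiB cap

# prevalidate_webhook PRESENTED_SECRET EXPECTED_SECRET CONTENT_LENGTH
# Prints "accept" or a rejection reason without touching the body.
# Note: plain string comparison is not constant-time; a production receiver
# should use its language's constant-time comparison instead.
prevalidate_webhook() {
  local presented="$1" expected="$2" length="$3"
  if [[ -z "$expected" || "$presented" != "$expected" ]]; then
    echo "reject:secret"; return 1
  fi
  # At most 10 digits keeps the arithmetic inside 64-bit range, and 10#
  # forces base 10 so leading zeros are not parsed as octal.
  if [[ ! "$length" =~ ^[0-9]{1,10}$ ]] || (( 10#$length > MAX_BODY_BYTES )); then
    echo "reject:size"; return 1
  fi
  echo "accept"
}

# read_body_if_valid SECRET EXPECTED LENGTH: read stdin only after checks pass
read_body_if_valid() {
  prevalidate_webhook "$1" "$2" "$3" >/dev/null || return 1
  head -c "$3"
}
```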

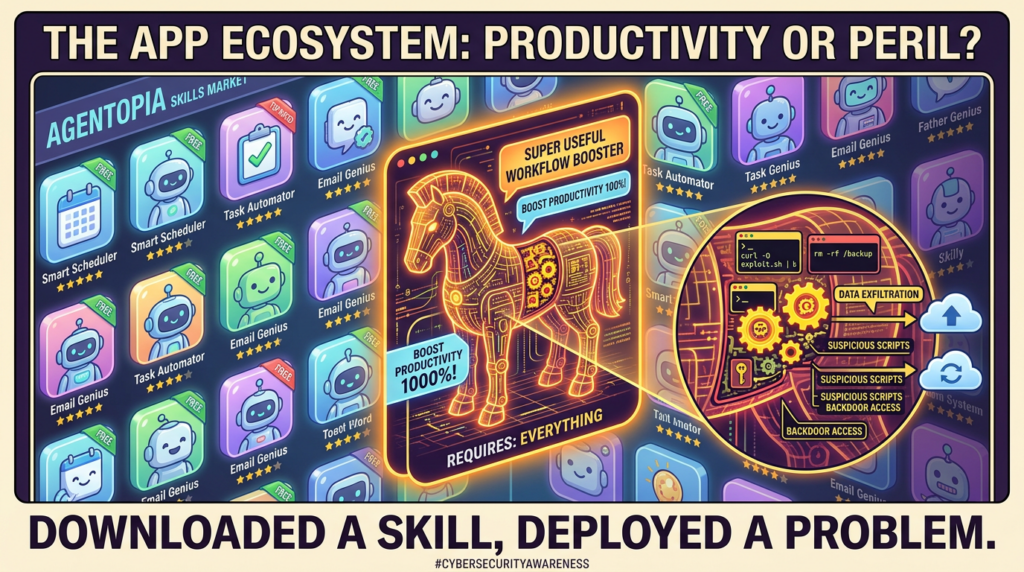

Skills are not “just prompts”; they are supply-chain artifacts

If there is one point every OpenClaw Security Audit should emphasize, it is this: skills must be treated as supply-chain inputs with executable consequences. A major part of the public discussion around OpenClaw security has centered on ClawHub and malicious skills, and the concern is not hype. Coverage from multiple outlets, along with security writeups, converges on the same problem: user-submitted skills can function as malware delivery vehicles, social engineering payloads, or instruction-layer traps that route around expected tool controls. (The Verge)

The 1Password analysis is especially useful because it explains why this is bigger than one registry or one project. The Agent Skills format places no restrictions on the markdown body, skills can include freeform instructions, and they can bundle scripts and other resources. The article makes the key point that if your safety story is “MCP will gate tool calls,” you can still lose to a malicious skill that routes around that assumption through social engineering, bundled code, or direct shell instructions. It also notes that the same general skill shape appears across broader agent ecosystems, not just OpenClaw. (1Password)

That means a professional audit cannot stop at network exposure or control-plane configuration. It must inspect the content plane and the artifact plane. A skill should be treated closer to a third-party integration package than to a documentation snippet. Questions that belong in scope include:

- Does the skill instruct the user or agent to fetch remote code?

- Does it bundle executable material?

- Does it declare “dependencies” that are really side-loading paths?

- Can it influence approval flows or induce copy-paste terminal use?

- Can it persist state outside the expected workspace?

- Does it rely on name confusion, rebranding ambiguity, or fake package identities? (1Password)

This is also why OpenClaw Security Audit is a better framing than “OpenClaw prompt testing.” The decisive question is not only whether the model can be tricked. It is whether the runtime, trust model, and extension ecosystem allow untrusted content to become effective authority.
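For the question of whether a skill bundles executable material, a quick inventory is a reasonable first step. This bash sketch lists files in a skill directory that carry any execute bit or a common script extension. The extension list and function name are assumptions, and an empty result only means nothing obvious was found.

```bash
# list_skill_executables SKILL_DIR
# A script does not need +x to run (e.g. `bash file.sh`, `python file.py`),
# so match on execute bits OR on common script extensions.
list_skill_executables() {
  local skill_dir="$1"
  find "$skill_dir" -type f \( \
      -perm -100 -o -perm -010 -o -perm -001 \
      -o -name '*.sh' -o -name '*.py' -o -name '*.js' -o -name '*.ps1' \
    \) -print | sort
}
```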

What recent research adds beyond vulnerability headlines

The strongest academic signal in this space is Agents of Chaos. The paper reports a two-week red-teaming study of autonomous language-model agents in a live environment with persistent memory, email accounts, Discord access, file systems, and shell execution. The authors document representative case studies involving unauthorized compliance, sensitive information disclosure, destructive actions, denial-of-service conditions, identity spoofing, cross-agent propagation of unsafe practices, and situations where agents claimed task completion while system state contradicted the claim. (arXiv)

This matters for OpenClaw because it validates a shift many practitioners already suspect: once an agent has persistence, communication, and tools, the right mental model is closer to systems security with probabilistic decision-making than to ordinary chatbot safety. The most important failures are often not content harms but governance failures, where the system cannot robustly maintain who is authorized to ask for what, what state is trusted, or whether an action actually completed as reported. (arXiv)

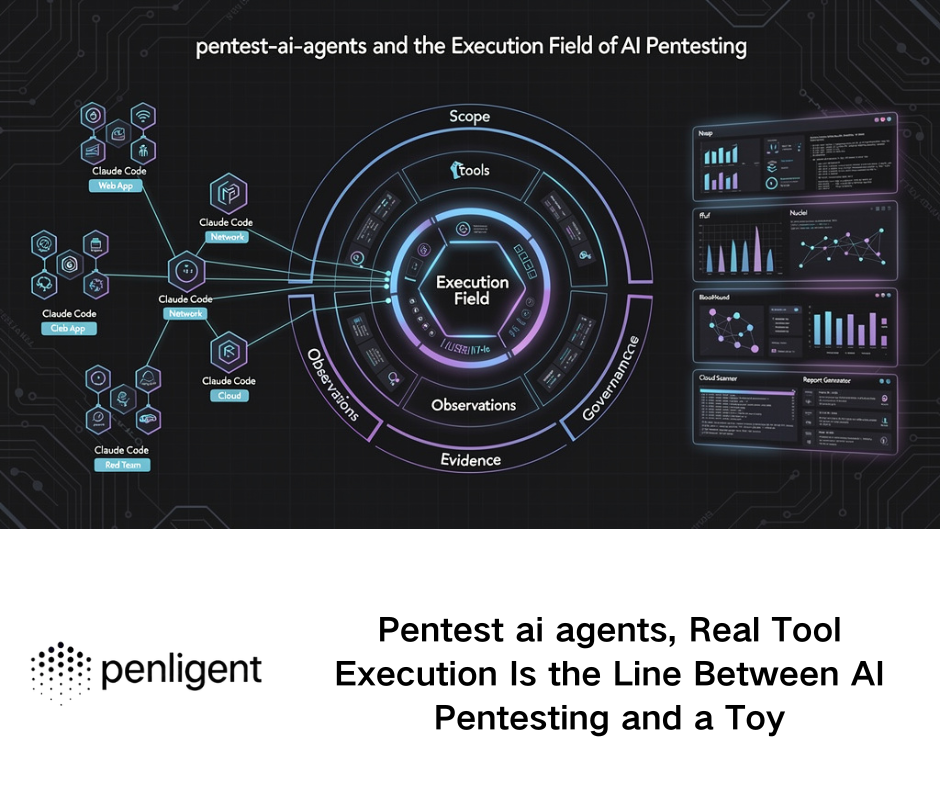

PinchBench is useful here too, but in a different way. The benchmark is valuable because it tests models as they run inside OpenClaw, with the same tools, workspace, and constraints a real agent faces. It measures real task success, speed, and cost across 23 tasks. That is a meaningful advance over synthetic prompt-only evaluation. But it is not a security benchmark by itself. A model that performs well on file operations, web research, or email triage can still be fragile under hostile inputs, boundary confusion, or malicious skills. In other words, runtime realism is necessary, but security realism still requires adversarial evaluation. (openclaw.report)

A practical methodology for OpenClaw Security Audit

The most reliable way to audit OpenClaw is to split the work into four layers: exposure, authority, execution, and residue.

Exposure

Start by mapping every way the runtime can be reached or influenced. That includes the control UI, Gateway APIs, WebSocket surfaces, pairing flows, webhook receivers, messaging channels, plugin auto-discovery paths, skill ingestion, cloned repositories, imported workspaces, and browser-mediated onboarding or auth flows. The built-in commands are a good first pass:

```bash
openclaw security audit
openclaw security audit --deep
openclaw security audit --json
openclaw secrets audit
```

These commands are useful because they reflect what the product itself considers common footguns, including auth exposure, browser control exposure, elevated allowlists, filesystem permissions, plaintext secret residues, and configuration drift. They should not end the audit, but they should definitely begin it. (OpenClaw)

Then check for dangerous configuration states specifically called out in the docs, such as insecure auth settings, dangerous host header fallback behavior, disabled device auth, unsafe external content allowances, private-network browser SSRF relaxation, or patch application paths that are not workspace-only. The documentation explicitly lists these as insecure or dangerous flags surfaced by the audit command. (OpenClaw)

Authority

Next, identify who can steer the agent and how that steering maps to actual power. This is where many OpenClaw deployments fail even if the software is fully patched.

Questions to answer include:

- Is one gateway serving people who are not in the same trust boundary?

- Are non-owner senders still able to reach meaningful tools?

- Does a shared Slack or Discord bot imply a larger authority set than the operator realizes?

- Are session scoping assumptions being mistaken for authorization?

- Are setup codes, pairing links, or shared auth modes acting as privilege shortcuts?

- Does the deployment rely on mutable names, channel aliases, or weak sender matching? (OpenClaw)
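The mutable-name question in the list above has a simple test: authorization should key on identifiers the sender cannot change. The bash sketch below checks a platform-qualified user ID against an owner list and ignores the display name entirely. The ID values and the platform:id format are hypothetical.

```bash
# Hypothetical owner identities, keyed as "platform:immutable_user_id".
OWNER_IDS=("slack:U0123ABCD" "telegram:123456789")

# is_owner_sender PLATFORM USER_ID [DISPLAY_NAME]
# DISPLAY_NAME is accepted but deliberately ignored: names and aliases are
# mutable, so they must never influence the decision.
is_owner_sender() {
  local key="$1:$2" id
  for id in "${OWNER_IDS[@]}"; do
    [[ "$key" == "$id" ]] && return 0
  done
  return 1
}
```

A sender who renames themselves to the owner's display name gains nothing, because only the platform and user ID pair is compared.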

This layer is also where CVE-class issues and configuration issues meet. A patched deployment can still be insecure if it is deployed as a shared assistant for users who should not actually share tool authority. OpenClaw’s own docs explicitly say that is outside the intended boundary model. (OpenClaw)
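For the setup-code and pairing question in the list above, a useful test expectation is that a code is single-use and short-lived, so replaying it fails even inside its validity window. The bash sketch below models that property with a private state directory and an atomic rename. The directory, the ten-minute TTL, and the function names are hypothetical and do not describe OpenClaw's implementation.

```bash
SETUP_CODE_DIR="${SETUP_CODE_DIR:-$HOME/.setup-codes-demo}"   # hypothetical
SETUP_CODE_TTL=600   # seconds; an assumed ten-minute validity window

# issue_setup_code -> prints a new 16-hex-digit code and records its issue time
issue_setup_code() {
  local code
  mkdir -p "$SETUP_CODE_DIR" && chmod 700 "$SETUP_CODE_DIR" || return 1
  code="$(od -An -N8 -tx1 /dev/urandom | tr -dc '0-9a-f')"
  date +%s > "$SETUP_CODE_DIR/$code"
  printf '%s\n' "$code"
}

# redeem_setup_code CODE -> exit 0 at most once per code, and only within TTL
redeem_setup_code() {
  local code="$1" file issued now
  [[ "$code" =~ ^[0-9a-f]{16}$ ]] || return 1   # format check also blocks path tricks
  file="$SETUP_CODE_DIR/$code"
  # rename(2) is atomic within one filesystem, so only one redeemer can move
  # the record; a replayed code finds nothing left to redeem.
  mv "$file" "$file.used" 2>/dev/null || return 1
  issued="$(cat "$file.used")"
  rm -f "$file.used"
  now="$(date +%s)"
  (( now - issued <= SETUP_CODE_TTL ))
}
```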

Execution

Now move to what the agent can actually do once it is steered.

OpenClaw’s GitHub security overview says exec behavior is host-first by default, with agents.defaults.sandbox.mode defaulting to off, and that if the sandbox runtime is not active for the session, exec runs on the gateway host. That single design detail changes the severity of many findings. A skill that nudges execution, a channel input that triggers an action, or a content-induced tool call can move from “agent behavior issue” to “host impact” very quickly. (GitHub)
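Because exec lands on the gateway host whenever the sandbox runtime is not active, it can help to record that fact explicitly during triage. The bash sketch below encodes the rule as the trust-model text states it and bumps a finding one severity level when exec reaches the host. The severity scale and the one-level bump are hypothetical conventions, not an OpenClaw feature.

```bash
# exec_landing_zone SANDBOX_MODE SANDBOX_ACTIVE(yes|no)
# With sandbox mode "off" (the stated default) or no active sandbox runtime
# for the session, exec runs on the gateway host.
exec_landing_zone() {
  local mode="${1:-off}" active="${2:-no}"
  if [[ "$mode" != "off" && "$active" == "yes" ]]; then
    echo "sandbox"
  else
    echo "gateway-host"
  fi
}

# triage_severity BASE ZONE -> one level higher when exec reaches the host.
# The four-level scale is a hypothetical convention.
triage_severity() {
  local base="$1" zone="$2" i
  local -a levels=(low medium high critical)
  for i in "${!levels[@]}"; do
    if [[ "${levels[i]}" == "$base" ]]; then
      if [[ "$zone" == "gateway-host" ]] && (( i < ${#levels[@]} - 1 )); then
        echo "${levels[i + 1]}"
      else
        echo "$base"
      fi
      return 0
    fi
  done
  echo "unknown"
  return 1
}
```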

A useful review pattern here is:

```bash
# Check current security posture
openclaw security audit --deep

# Verify local ownership and permissions, and look for residual plaintext secrets
find ~/.openclaw -maxdepth 3 -type f -ls
find ~/.openclaw -type f \( -perm -040 -o -perm -004 \) -ls   # group- or world-readable files
grep -RInEi 'token|apikey|api_key|secret|bearer' ~/.openclaw 2>/dev/null

# Inspect workspace plugin and skill paths
find . -type f \( -name "SKILL.md" -o -name "package.json" -o -name "*.sh" -o -name "*.py" \)

# Look for symlinks and cloned content that could affect discovery paths
find . -type l -ls
```

These are not exploit scripts. They are operator-side checks that reveal the three things auditors care most about in this class of system: what is executable, what is trusted, and what persists after the action is over. The March 13 and March 14 advisories on plugin auto-discovery, transcript permissions, token exposure in file URLs, and attachment path injection all show why this review has to be concrete and host-aware. (GitHub)
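The symlink check above lists links but does not say which ones matter. A slightly sharper version, sketched below in bash, resolves each link and reports only those whose target lies outside the workspace, which is the case that could pull outside content into a discovery path. It relies on readlink -f (GNU coreutils, and recent macOS); the function name is illustrative.

```bash
# find_escaping_symlinks WORKSPACE
# Prints "link -> target" for each symlink whose fully resolved target is
# outside WORKSPACE. Requires bash (process substitution) and readlink -f.
find_escaping_symlinks() {
  local root link target
  root="$(cd "$1" && pwd -P)" || return 2
  while IFS= read -r -d '' link; do
    # Skip links that cannot be resolved at all.
    target="$(readlink -f "$link")" || continue
    case "$target" in
      "$root" | "$root"/*) ;;                      # stays inside the workspace
      *) printf '%s -> %s\n' "$link" "$target" ;;  # escapes the workspace
    esac
  done < <(find "$root" -type l -print0)
}
```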

Residue

The final layer is what remains after the agent acts. This is where many otherwise “fixed” deployments still bleed data.

A transcript file with weak permissions, a debug surface that reveals too much, a log line containing token-bearing URLs, a memory artifact that preserves sensitive context, or a temporary download left in a world-readable directory can turn a one-time action into long-lived compromise material. OpenClaw’s published advisories already show that permissions and logged URLs are not theoretical issues in this ecosystem. (GitHub)
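Token-bearing URLs are one residue class that can be handled mechanically. Telegram Bot API requests use https://api.telegram.org/bot<token>/<method> and file downloads use https://api.telegram.org/file/bot<token>/<file_path>, so the token is a predictable path segment. A minimal bash redaction filter, with an illustrative function name:

```bash
# redact_telegram_tokens: reads log text on stdin, writes it to stdout with
# the path segment after "bot" (the bot token) replaced by "<redacted>".
redact_telegram_tokens() {
  sed -E 's#(api\.telegram\.org/(file/)?bot)[^/[:space:]]+#\1<redacted>#g'
}
```

Redaction only protects new output. To find tokens already written to disk, something like grep -RInE 'api\.telegram\.org/(file/)?bot[0-9]+:' ~/.openclaw is a starting point, and any token found there should be revoked and reissued, not just scrubbed.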

This is also the layer most likely to matter in incident response. If a skill was malicious, or a user was socially engineered through a skill, the question is no longer only “Was something executed?” It becomes “What credentials, files, messages, tokens, browser sessions, or local metadata are now recoverable by an attacker or another local user?”
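A practical first step for that question is to bound the search by time. The bash sketch below lists files under a state directory modified after a reference file. Choosing the reference point (for example, when a suspect skill was installed) is up to the responder, and the function name is illustrative.

```bash
# changed_since DIR REFERENCE_FILE
# Lists regular files under DIR whose modification time is newer than
# REFERENCE_FILE's, sorted for stable review.
changed_since() {
  local dir="$1" ref="$2"
  find "$dir" -type f -newer "$ref" -print 2>/dev/null | sort
}
```

For example, touch -t 202603130900 /tmp/ir-ref creates a reference stamped 09:00 local time on March 13, 2026, and changed_since ~/.openclaw /tmp/ir-ref then lists candidates for review and credential rotation. Modification times can be altered by anyone with write access, so treat the result as a lead, not as proof.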

What to look for in a skill review

A defensible OpenClaw Security Audit should include at least a lightweight static review process for every nontrivial skill. That process should treat a skill as an untrusted third-party package until proven otherwise.

Here is a minimal reviewer checklist:

| Skill review question | Why it matters | Audit action |
|---|---|---|
| Does the skill ask for copy-paste terminal commands? | Social engineering can bypass tool guardrails entirely | Flag as high risk and require manual security review |
| Does the skill bundle scripts or executables? | Execution may occur outside expected MCP/tool boundaries | Inspect every bundled file and every fetch path |
| Does the skill reference remote installers, curl pipes, or shortened links? | Common malware staging pattern | Block by default |
| Does the skill request broad filesystem, browser, or wallet access? | Expands impact radius far beyond stated feature scope | Compare requested reach against actual use case |
| Does the skill rely on ambiguous package names or “helper” dependencies? | Brand confusion and side-loading are common supply-chain tricks | Verify source provenance independently |
| Does the skill write memory, config, or persistent files? | Can create stealthy long-lived behavior changes | Track all persistence paths |

That style of review follows directly from the public analysis of malicious skills and from the Agent Skills format behavior discussed by 1Password. The important mindset shift is to stop calling these artifacts “extensions” in the harmless sense and start handling them as packages that may carry instruction-layer malware, bundled code, or persistence logic. (1Password)
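Parts of that checklist can be turned into a first-pass static triage. The bash sketch below maps a few of the table's rows to its audit actions: remote installer pipes and shortened links block by default, while copy-paste terminal instructions and bundled scripts route to manual review. The patterns are illustrative assumptions rather than a malware signature set, so a passing result does not mean a skill is safe.

```bash
# scan_skill DIR
# Prints one line per finding, then "verdict: pass|review|block".
# Exit status is 0 only for "pass". Patterns are illustrative only.
scan_skill() {
  local dir="$1" verdict="pass"

  # Remote installers piped into a shell, or URL shorteners: block by default.
  if grep -RIqE '(curl|wget)[^|]*\|[[:space:]]*(ba|z)?sh|bit\.ly/|tinyurl\.com/' "$dir"; then
    echo "BLOCK: remote installer pipe or shortened link"
    verdict="block"
  fi

  # Copy-paste terminal instructions: high risk, route to manual review.
  if grep -RIqiE 'paste (this|the following) (in|into) (your |a )?terminal|run this command' "$dir"; then
    echo "HIGH: asks for copy-paste terminal use"
    [[ "$verdict" == "pass" ]] && verdict="review"
  fi

  # Bundled scripts: every one needs inspection.
  if find "$dir" -type f \( -name '*.sh' -o -name '*.py' -o -name '*.js' \) | grep -q .; then
    echo "REVIEW: bundled scripts present"
    [[ "$verdict" == "pass" ]] && verdict="review"
  fi

  echo "verdict: $verdict"
  [[ "$verdict" == "pass" ]]
}
```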

CVE and advisory relevance: which findings matter most in practice

Not every published flaw has equal operational weight. For most real deployments, the highest-priority issues are the ones that combine easy trigger conditions with broad authority.

A practical prioritization looks like this:

- Connection hijack and auth-confusion flaws: CVE-2026-25253 and the WebSocket shared-auth elevated-scopes advisory belong here because they affect who gets to become the effective controller of the runtime. (NVD)

- Code execution through trusted discovery paths: workspace plugin auto-discovery from cloned repositories is dangerous because it collapses a content import path into code execution. In agent-centric workflows, cloned content is common enough that this class deserves special attention. (GitHub)

- Credential leakage and token-bearing residue: transcript permissions and bot token exposure in logged URLs are the kinds of findings defenders underestimate until they need to do cleanup at scale. (GitHub)

- Webhook and message-ingestion prevalidation weaknesses: reading untrusted request bodies before secret validation is an old lesson, but in always-on agent systems it can become a reliable path to resource exhaustion or noisy operational failure. (GitHub)

- Path and input-to-command transitions: attachment path injection and similar issues matter because agent systems constantly transform untrusted content into filenames, commands, prompts, patches, and tool arguments. (GitHub)

This is one reason vulnerability-only reporting is incomplete for OpenClaw. A patched instance may still be running in a configuration that makes a malicious skill or a shared channel more dangerous than a nominal medium-severity advisory.
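For the path and input-to-command class listed above, the safer pattern is to allowlist what a path may look like before it reaches any command line, instead of escaping after the fact. Legacy SCP transfers pass the remote path through the remote user's shell, which is why characters such as ;, $, and backticks matter there. A minimal bash sketch, with a hypothetical allowed character set and function name:

```bash
# is_safe_attachment_path PATH -> exit 0 only for paths matching a strict
# allowlist. The allowed character set is a hypothetical choice.
is_safe_attachment_path() {
  local p="$1"
  [[ -n "$p" ]] || return 1
  [[ "$p" != -* ]] || return 1                   # would be parsed as an option
  [[ "$p" =~ ^[A-Za-z0-9._/-]+$ ]] || return 1   # no spaces, quotes, ;, $, `, |
  case "/$p/" in
    */../*) return 1 ;;                          # no parent-directory segments
  esac
  return 0
}
```

The allowlist is deliberately strict. Legitimate filenames with spaces or non-ASCII characters would be rejected, and the usual remedy is to copy them under generated local names rather than to widen the pattern.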

A strong OpenClaw Security Audit report should answer these ten questions

A good final report should be able to answer the following clearly:

- What is the actual trust boundary of this deployment?

- Who can message the agent, and are any of those senders unintentionally sharing authority?

- Which tool calls can reach the host, not just a sandbox or workspace?

- Which skills, plugins, or imported artifacts are part of the effective trusted computing base?

- Are secrets confined to expected stores, or do plaintext residues and logged URLs exist?

- Can browser, WebSocket, or onboarding flows promote an untrusted interaction into control access?

- Are message channels validated strongly enough to resist spoofing, replay, or mutable-name confusion?

- Can cloned content, imported workspaces, or local paths trigger discovery or execution behavior?

- Is there enough logging and state traceability to investigate a malicious skill incident?

- If the model behaves unsafely, what boundaries still prevent irreversible damage?

If an audit cannot answer those questions, it is probably not an OpenClaw Security Audit yet. It is just a partial configuration review.

There is a practical reason teams struggle with this class of work. OpenClaw security problems are often multi-step. The issue is rarely just one CVE or one config line. It is the chain from input surface to agent reasoning to tool invocation to host impact to residual data. That chain is exactly why AI-native validation workflows are more useful here than static checklists alone. A strong assessment needs replayable paths, evidence capture, runtime-aware probing, and enough context to connect “what the agent was told” to “what the system actually did.” (arXiv)

That is also where a platform like Penligent becomes relevant without forcing the fit. If the goal is to move from one-off manual spot checks toward repeatable security validation of agent runtimes, skills, and delegated tool paths, an AI-driven penetration testing workflow can help teams test misconfigurations, local exposure, risky execution paths, and extension behavior in a more systematic way. For OpenClaw-like deployments, the real value is not generic scanning. It is being able to validate runtime conditions, trace evidence, and turn a suspected agent risk into a concrete, reviewable finding set. (OpenClaw)

Final view

The most useful way to think about OpenClaw is not that it is unusually reckless, and not that it is uniquely broken. It is useful because it makes the real problem visible. Once an AI system can read messages, access files, call tools, browse authenticated sessions, persist memory, and install third-party skills, security moves out of the realm of “model safety only” and into runtime engineering, authority design, and supply-chain discipline. OpenClaw’s own docs more or less say this in plain English: there is no perfectly secure setup, and the safe starting point is the smallest access that still works. (OpenClaw)

That is why OpenClaw Security Audit should be treated as a live systems exercise. The central question is not whether the model can produce an unsafe sentence. The central question is whether the system around the model can stop unsafe authority from becoming unsafe action. Recent advisories, CVE-2026-25253, malicious skill investigations, and the Agents of Chaos findings all point in the same direction. The gap is no longer between “AI” and “security.” The gap is between what the agent is allowed to do and what the operator thinks they delegated. (NVD)

Recommended reading

OpenClaw Security documentation. (OpenClaw)

OpenClaw CLI security commands. (OpenClaw)

OpenClaw GitHub security overview and trust model. (GitHub)

NVD entry for CVE-2026-25253. (NVD)

Agents of Chaos, paper and project page. (arXiv)

PinchBench, runtime benchmark context for OpenClaw agents. (openclaw.report)

1Password analysis of OpenClaw skills as an attack surface. (1Password)

Penligent, “What a PinchBench-Cyber Security Benchmark for OpenClaw Should Look Like.” (Penligent)

Penligent, “Agents of Chaos, the paper that turned agent hype into a security problem.” (Penligent)