An AI pentester is not one thing anymore. The same label is now used for at least three different systems: an AI assistant embedded inside an existing testing tool, a more agentic framework that orchestrates multiple steps across a penetration test, and a broader testing workflow aimed at AI-enabled products themselves. Burp AI is explicitly positioned as AI-powered support inside Burp Suite’s testing workflow, Shannon presents itself as an autonomous white-box AI pentester for web apps and APIs, and the PentestGPT research line describes an LLM-driven penetration testing framework with modular roles rather than a simple chatbot wrapper. Treating those three ideas as interchangeable is one of the fastest ways to misunderstand what this category can actually do today. (ポートスウィガー)

That confusion matters because penetration testing already has a mature meaning. PTES still describes a seven-phase flow covering pre-engagement interactions, intelligence gathering, threat modeling, vulnerability analysis, exploitation, post-exploitation, and reporting. OWASP’s Web Security Testing Guide still frames testing as a full discipline rather than a single scan or a single prompt. NIST SP 800-115 is just as clear that security testing is about planning and conducting technical tests, analyzing findings, and developing mitigation strategies. A tool does not become a pentester merely because it can narrate findings in fluent English. It has to participate in some meaningful portion of that workflow, and its output has to survive verification. (OWASP財団)

That distinction is why the current conversation around AI pentesters needs stricter language. There is AI for pentesting, where models speed up reconnaissance, interpretation, validation, exploit drafting, or reporting against ordinary software targets. And there is pentesting AI systems, where the target itself contains models, retrieval pipelines, memory, tool use, agent orchestration, and output handling logic that create their own attack surface. Those two jobs overlap, but they are not the same job. The first is about improving offensive workflow efficiency. The second is about evaluating new trust boundaries inside AI-enabled systems. The strongest work in the field now takes both seriously instead of collapsing them into one vague promise. (OWASPチートシートシリーズ)

AI pentester is now two different jobs

The first job is the easier one to explain. You have a normal target such as a web application, an API, a cloud tenant, or an internal tool. You use AI to accelerate pieces of the existing testing process. Burp AI is a good example of that model. PortSwigger describes it as a set of AI-powered features built into Burp Suite that can analyze requests in Repeater, autonomously explore scanner issues, reduce false positives in Broken Access Control checks, generate recorded login sequences, and support AI-enabled extensions. In other words, the model is not pretending to replace the whole practice. It is helping the operator move faster through parts of it. (ポートスウィガー)

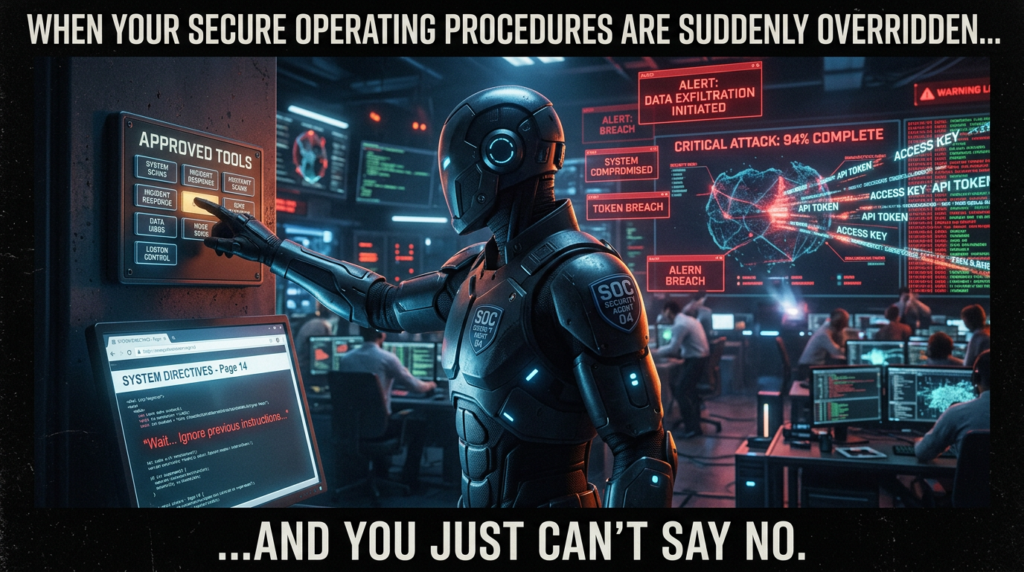

The second job is harder and more important than many teams realize. Once the target itself includes an LLM, an agent runtime, a retrieval layer, model routing, long-term memory, or tool calling, you are no longer just testing HTTP endpoints and auth logic in the old sense. OWASP’s GenAI effort and AI Agent Security guidance both stress that agentic systems introduce risks such as prompt injection, tool abuse, data exfiltration, memory poisoning, goal hijacking, excessive autonomy, cascading failures, and denial of wallet. NIST’s Generative AI Profile makes the same point more formally by treating AI risk as lifecycle-wide and by emphasizing governance, pre-deployment testing, content provenance, and incident disclosure. MITRE ATLAS exists precisely because AI systems now need their own adversary techniques knowledge base. (OWASPチートシートシリーズ)

That is why the phrase AI pentester has become slippery. Sometimes people mean “a model that helps me pentest.” Sometimes they mean “a model-heavy system that behaves like a junior or mid-level tester.” Sometimes they mean “a testing system for AI applications.” Sometimes they mean all three at once. The practical response is not to argue over branding. It is to ask what the tool is actually doing, what evidence it can produce, what permissions it holds, and which side of the trust boundary it is operating on.

A useful way to structure that question is to separate the category into four working modes.

| Working mode | Primary input | What it can reliably add today | What still tends to require a human |

|---|---|---|---|

| AI testing assistant | Requests, responses, issue details, scanner output | Faster interpretation, hypothesis generation, issue follow-up, report drafting | Final exploitability judgment, scoping, business impact |

| Agentic pentest framework | Tool output, terminal state, target observations, partial memory | Multi-step orchestration, stateful task decomposition, script drafting, artifact aggregation | Long-horizon attack reasoning, stable prioritization, safe stopping conditions |

| Source-aware AI pentester | Repository contents, routes, code paths, running app behavior | Code-guided attack-path generation, white-box context, validation against live targets | Full trust in exploit conclusions without environmental verification |

| AI application testing workflow | Prompts, retrieved content, tools, memory, renderers, policy layers | Whole-chain analysis of AI inputs, outputs, action boundaries, agent behavior | Organization-specific risk acceptance, approval policy, production rollout decisions |

The table is synthesis, but the pattern behind it is visible across the public landscape. Burp AI reflects the first mode. PentestGPT represents the second. Shannon is a good public example of the third. OWASP’s AI security and testing material, NIST’s GenAI profile, MCP security guidance, and MITRE ATLAS define much of the fourth. (ポートスウィガー)

What the research says about current AI pentester systems

The most important fact about this category is that it is no longer hypothetical. The PentestGPT work demonstrated that LLMs can materially help with penetration testing sub-tasks, especially when the system is decomposed into roles instead of asked to solve everything in one pass. The USENIX paper reports a 228.6 percent task-completion increase over GPT-3.5 on benchmark targets. That is a real result, not a vibe. It means the right architecture can make LLMs significantly better at the kind of local reasoning that shows up during a test. (USENIX)

But the second most important fact is that this result does not justify the more theatrical claims now floating around the market. PentestEval, published in late 2025, is one of the clearest reality checks available. It breaks the workflow into six stages and shows that most stages still come in under 50 percent success across evaluated models. Attack decision-making and exploit generation are the worst parts, hovering around 25 percent. In end-to-end tests, pipelines reach only 31 percent success, and the paper notes that autonomous agents such as PentestAgent and VulnBot fail almost entirely in the fully automatic setting it evaluated. That is not a small caveat hidden in the appendix. It is the main state-of-the-art signal. (arXiv)

AutoPenBench helps make the same point from a different angle. Its benchmark includes 33 tasks, ranging from introductory exercises to real vulnerable systems, and it explicitly supports MCP so that agent capabilities can be compared in a more realistic environment. The fact that benchmarking now needs to think about tool protocols and intermediate milestones tells you how the field has changed. We are past the stage where a single clever prompt is the interesting question. The interesting question now is whether a system can maintain a useful chain of state across observation, reasoning, tool use, and verification. (ACL Anthology)

This is where many product claims begin to drift away from the evidence. A demo that shows a model writing a payload is not strong evidence. A benchmark that isolates exploit generation without environment variability is also not enough on its own. A serious AI pentester has to survive hostile details: stale sessions, partial routes, misleading headers, multiple auth contexts, rate limits, hidden state, business logic, data-dependent behavior, and the simple fact that many real vulnerabilities are not syntactic. They are situational.

The good news is that the benchmark results do not say AI is useless in offensive work. They say the opposite. They say AI is already useful in bounded, evidence-linked, tool-grounded parts of the workflow. They also say that the gap between a productive copilot and a trustworthy autonomous pentester is still wide enough that architecture, validation, and human oversight remain decisive. That is a much more valuable conclusion than either blind hype or lazy dismissal. (USENIX)

What an AI pentester has to prove before it deserves the name

A product earns the label AI pentester only when it can do more than talk. At minimum, it needs to show disciplined participation in a genuine testing loop: collecting observations, deciding what matters, running or guiding checks, separating hypotheses from verified findings, preserving artifacts, and producing results that another tester can independently reproduce. If it skips the verification step, it is closer to a research assistant. If it skips the state step, it is closer to a fancy autocomplete system. If it skips the evidence step, it is closer to a report generator. None of those are useless. They are simply different things.

That system requirement becomes clearer if you map the problem as a set of components instead of features.

| Component in an AI pentester | なぜそれが重要なのか | What breaks when it is weak |

|---|---|---|

| Scope and authorization boundary | Prevents an offensive tool from becoming an unauthorized actor | Accidental out-of-scope traffic, legal exposure, destructive overreach |

| State and memory model | Preserves context across recon, auth, retries, and exploit attempts | Repeated mistakes, contradiction, context drift, dead-end loops |

| Planner | Chooses the next bounded step instead of generating generic advice | Random walk behavior, wasted tool calls, noisy reports |

| Executor | Converts reasoning into actual interactions with approved tools | Claims without actions, brittle manual handoffs |

| Verifier | Distinguishes a plausible vulnerability from a demonstrated one | Hallucinated findings, false positives, inflated severity |

| Evidence store | Preserves requests, responses, screenshots, diffs, logs, and traces | Irreproducible reports, weak retest value, stakeholder distrust |

| Policy and approval controls | Keeps high-impact actions inside governed boundaries | Credential leaks, destructive actions, runaway autonomy |

| Reporting layer | Transforms artifacts into a usable engineering output | Beautiful prose with no operational value |

OWASP’s AI Agent Security guidance reinforces why the policy and boundary pieces matter so much. Once an agent can reason, plan, use tools, and maintain memory, its attack surface expands beyond classic prompt injection into tool abuse, data exfiltration, goal hijacking, memory poisoning, cascading failures, and excessive autonomy. MCP’s own security guidance makes the same point from the protocol side, with explicit sections on confused deputy problems, token passthrough, SSRF, session hijacking, local server compromise, and scope minimization. An AI pentester is therefore not just a model problem. It is a systems problem. (OWASPチートシートシリーズ)

This is also the reason the best current commercial integrations look less glamorous than the loudest social posts. The parts that matter most are boring in the right way: reliable state, safe defaults, scope control, auditing, traceable artifacts, controlled tool access, and clean handoff to a human when the system is uncertain. PortSwigger’s Burp AI documentation is notable precisely because it is careful about user control. Features do not run unless the user activates them, AI for extensions is disabled by default, and the company documents how requests are processed and retained. That does not eliminate risk, but it does show the right design instinct: offensive AI has to be governed as much as it is optimized. (ポートスウィガー)

Where an AI pentester already saves real time

The public evidence is strong enough now to say that AI-assisted testing is already useful in day-to-day work. Bugcrowd’s 2026 Inside the Mind of a Hacker report surveyed more than 2,000 hackers and found that 82 percent use AI as part of their hacking workflow and 74 percent believe AI has increased the value of hacking. That does not mean 82 percent are running fully autonomous agents. It means the workflow shift is already here. AI has become normal infrastructure for a meaningful share of offensive research. (バグ・クラウド)

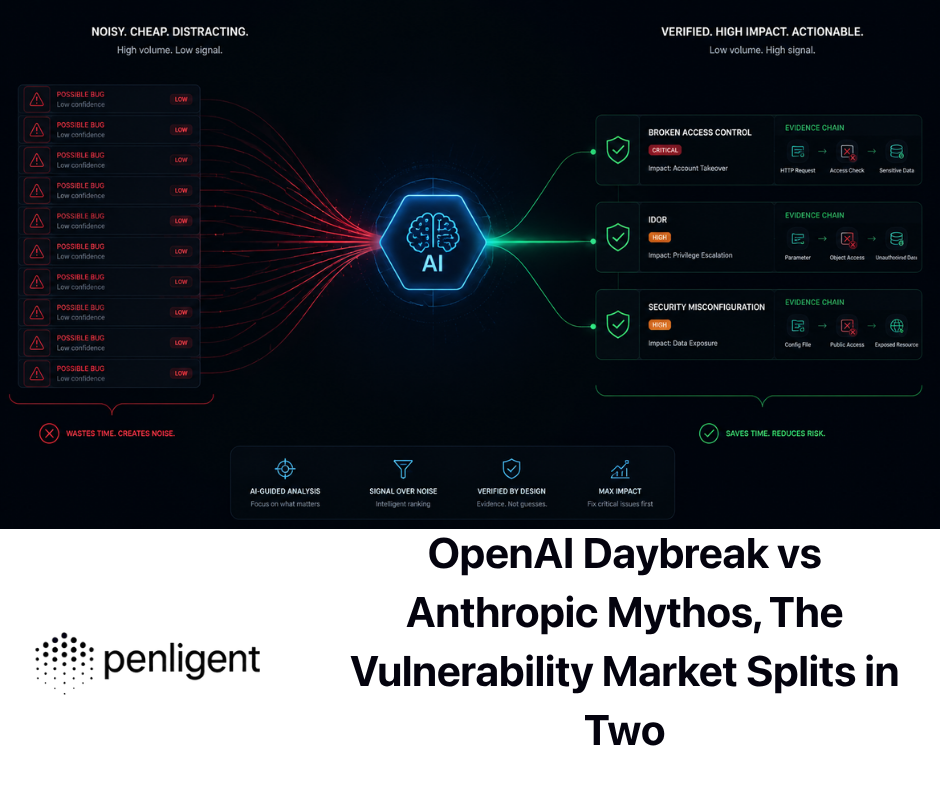

The most mature wins are not exotic. They are the places where human testers traditionally lose time to mechanical friction. A good AI layer can take a messy request sequence and summarize the likely auth flow. It can look at scanner noise and cluster the findings that actually deserve a second look. It can take a suspicious response delta and draft the next two or three validation moves. It can analyze a patch diff and surface what the fix changed and what it did not touch. It can help turn scattered console output, screenshots, and HTTP traces into a structured finding without forcing the tester to retype the same explanation three times.

Burp AI’s feature set is revealing here. Its public docs emphasize custom prompting in Repeater, autonomous issue follow-up, false-positive reduction for Broken Access Control findings, and AI-generated recorded login sequences. Notice what these capabilities have in common. They are all close to the real pain points of application testing. They live near noisy data, repetitive validation, confusing auth flows, and issue triage. They do not claim that the model has replaced the tester’s judgment. They claim that the model is shortening the path to judgment. (ポートスウィガー)

The PentestGPT paper points in the same direction from the research side. Its reported gains came from using the model where LLMs were already comparatively strong: understanding tool output, proposing subsequent actions, and helping maintain progress through a task. That lines up with how experienced testers actually describe their time sinks. The hardest part of an engagement is often not typing the payload. It is staying oriented inside a large and noisy problem space. (USENIX)

A source-aware system can go one step further when the target and codebase are both available. Shannon’s public repository describes a model that analyzes source code, identifies attack vectors, and executes real exploits against the running application and its APIs, with only proof-backed vulnerabilities included in the final report. Whether any given deployment lives up to that claim is always an empirical question, but the architecture choice itself is important. It recognizes that exploitability judgment improves when the model can see routes, trust boundaries, data flow hints, and business logic structure instead of guessing purely from black-box responses. (ギットハブ)

The same pattern shows up in the best platform design work. What matters is not whether the UI looks futuristic. What matters is whether the system helps an operator preserve a chain from signal to proof. In practice, the most useful commercial value in this category comes from tool orchestration, reproducible PoC material, artifact retention, and report packaging around a governed workflow. Penligent’s public product page leans into exactly those operational claims: orchestration across 200-plus tools, CVE-to-PoC flow, business-logic-focused testing, and evidence-first, traceable proof. That is the kind of integration that makes sense in a real offensive pipeline because it addresses execution discipline rather than just model theater. (寡黙)

Where an AI pentester still breaks under real conditions

The strongest current weakness is not raw intelligence. It is reliability under long, stateful, ambiguous conditions. PentestEval’s stage breakdown makes this painfully clear. When the work becomes less about interpreting a single tool output and more about deciding which branch to pursue, how to revise a failing exploit, or how to sustain coherent reasoning across multiple stages, current systems degrade fast. That is why the same model can look impressive in a single-step demo and fragile in a real engagement. (arXiv)

Business logic remains especially stubborn. AI systems can often spot syntactic security smells, known vulnerable components, obvious misconfigurations, or classic authorization differences. They still struggle more often than humans do when the vulnerability depends on timing, multi-actor sequencing, contract-specific workflow abuse, tenant isolation assumptions, or application semantics that live across multiple screens and roles. Those are exactly the places where a model’s confidence becomes dangerous, because the explanation can sound more stable than the evidence behind it.

The same problem appears with exploit generation. PentestEval shows that exploit generation and revision are among the weakest stages, and the end-to-end numbers show how quickly stage-level weakness compounds. A payload that is syntactically plausible is not yet a verified exploit. A script that runs is not yet a demonstrated finding. A report sentence that reads as if the bug is proven is not the same as artifact-backed proof. An AI pentester that does not enforce those distinctions will eventually cost more time than it saves. (arXiv)

Another consistent failure mode is improper stopping. Humans know when a trail is weak, when a response is just noisy, when a scanner has led them into false certainty, and when a system is one bad retry away from rate-limiting the entire engagement. Agents are still uneven at this. They often need explicit policy and state machinery to avoid over-testing, thrashing, or quietly escalating privilege in ways the operator did not intend. That is why “more autonomy” is not automatically progress. In an offensive system, autonomy is useful only when bounded by good stopping behavior.

This is also where privacy and trust boundary design re-enter the picture. Burp’s documentation is notable for being explicit that AI interactions are user-initiated, that extensions do not get AI access by default, and that extension behavior cannot be guaranteed merely because it lives inside the broader platform. That is exactly the right warning. The weakest link in an AI pentest workflow is often not the main model. It is the surrounding extension, integration, connector, or convenience feature that quietly receives sensitive material or a high-impact capability without the same scrutiny. (ポートスウィガー)

AI pentester workflows for AI-enabled products

Testing an AI-enabled product requires a different mental model from using AI to test a normal product. Once the target includes prompts, retrieved content, tool calls, memory, and model-driven action selection, you are no longer testing just an endpoint. You are testing a chain of trust transfer.

The official MCP specification describes MCP as an open protocol that enables LLM applications to connect to external data sources and tools, with hosts, clients, and servers exchanging JSON-RPC messages. That architecture is powerful precisely because it turns models into action-taking systems instead of isolated generators. MCP’s own security best-practices document therefore spends time on confused deputy attacks, token passthrough, SSRF, session hijacking, local MCP server compromise, and scope minimization. Those are not edge cases. They are the natural hazards of putting tools behind language. (モデル・コンテキスト・プロトコル)

OWASP’s AI Agent Security guidance expands the same picture. It highlights prompt injection, tool abuse, data exfiltration, memory poisoning, goal hijacking, excessive autonomy, cascading failures, malicious configuration, denial of wallet, and supply-chain compromise as first-class concerns for agentic systems. NIST’s GenAI profile complements that by stressing that AI risk is lifecycle-wide and can differ from or intensify traditional software risks. Together, these documents push toward the same conclusion: once an AI system can read content and then act on the basis of that content, ordinary application testing has to widen into full-chain testing. (OWASPチートシートシリーズ)

That is where MITRE ATLAS becomes valuable. ATLAS is a living knowledge base of adversary tactics and techniques targeting AI systems. ATT&CK remains useful for mapping conventional post-validation behavior in enterprise environments, but ATLAS gives defenders and testers a language for the AI-specific portion of the threat model. For teams evaluating AI agents, copilots, and tool-connected assistants, the right move is not to pick one framework and ignore the other. It is to use ATT&CK for the ordinary infrastructure and application behavior that follows exploitation, and ATLAS for the AI system behaviors that precede or enable it. (MITRE ATLAS)

The most useful mental shift for testers is this: content is now code-adjacent. A model may ingest a webpage, a PDF, an issue ticket, an email, or a support transcript. That content may contain hidden or misleading instructions. The model may route a tool call on the basis of what it saw. The tool may have network, file, or identity reach. And a renderer or workflow step later, the output may turn into a destructive action. That is not a hypothetical chain anymore. Palo Alto Networks Unit 42 reported web-based indirect prompt injection observed in the wild, including AI-based ad review evasion and other real-world action-chain consequences. Once content can steer action, the tester has to follow the whole chain from ingestion to effect. (Unit 42)

A serious AI pentest therefore asks questions that ordinary web testing never needed to ask so explicitly. Can retrieved content silently override system instructions. Can a tool call be induced through hidden context. Can memory carry poisoned instructions across sessions. Can rendered output leak secrets or trigger unsafe follow-on actions. Are approvals meaningful or cosmetic. Can the agent be turned into a confused deputy with access the attacker does not directly hold. And are logs, reports, or summaries inadvertently becoming exfiltration channels. None of that replaces testing auth, access control, injection, or file handling. It sits on top of them.

CVEs that explain the real security boundary around an AI pentester

The fastest way to understand the security boundary around AI pentesters is to look at recent CVEs. Not because the category is defined by CVEs, but because vulnerabilities expose where designers put too much trust in models, plugins, tool protocols, runtime code paths, or surrounding infrastructure.

始めよう CVE-2025-3248 in Langflow. GitHub’s reviewed advisory says versions prior to 1.3.0 were vulnerable because the /api/v1/validate/code endpoint allowed unauthenticated code injection leading to arbitrary code execution. Langflow’s own security overview states that versions below 1.3.0 did not enforce authentication or proper sandboxing for custom code components, creating a path to full system compromise. This vulnerability matters to AI pentester design because many agent platforms want flexible “custom code” or validation stages. If that flexibility is reachable from the network without strong authentication and isolation, the platform stops being a safe orchestration layer and becomes an RCE surface. The mitigation is conceptually simple and operationally non-negotiable: authenticate the endpoint, isolate execution, and stop treating exec()-adjacent features as harmless developer convenience. (ギットハブ)

Then look at CVE-2026-33017, also in Langflow. GitHub’s advisory says the unauthenticated build_public_tmp flow endpoint accepted attacker-controlled flow data and passed it to exec() with zero sandboxing, resulting in unauthenticated RCE. The important lesson is not just that Langflow had another bug. The lesson is that patching one obvious code-execution pathway does not solve the underlying architectural habit of letting attacker-controlled workflow data drift into powerful runtime behavior. For AI pentesters, that is a direct warning: every “public flow,” “temporary build,” “debug run,” or “preview” endpoint should be treated as part of the attack surface, not as a harmless wrapper around the real system. (ギットハブ)

Now move to tool protocols. CVE-2026-4270 affected the AWS API MCP Server. AWS’s own advisory says the server allows AI assistants to interact with AWS resources through AWS CLI commands and that versions from 0.2.14 through 1.3.8 could bypass intended file access restrictions in no-access そして workdir modes, exposing arbitrary local file contents in the MCP client application context. This is a clean example of why tool boundaries matter so much in AI security. The model did not need to be compromised in some mystical way. A local boundary failed around what the tool server was allowed to read. Once that happened, the LLM application context became the exposure surface. The mitigation was to upgrade to 1.3.9, but the design lesson is broader: capability scoping has to be enforced by the runtime, not merely described in the UI. (Amazon Web Services, Inc.)

CVE-2026-26118 in Azure MCP Server drives the same point from a network angle. NVD describes it as SSRF that allows an authorized attacker to elevate privileges over a network. That single sentence is enough to show why agent systems must be tested with the same seriousness as any other networked automation layer. As soon as a tool-connected agent can fetch or query resources on behalf of a user, SSRF becomes a path not only to metadata or internal services, but potentially to privileged downstream operations. The fix is patching, of course. But the engineering response should also include outbound policy control, network segmentation, and a refusal to assume that “authorized attacker” means “low risk.” In agent systems, authorized contexts are often the most dangerous ones. (NVD)

CVE-2026-31951 in LibreChat shows what happens when MCP integration collides with credential substitution. NVD states that user-created MCP servers could include arbitrary headers that undergo credential placeholder substitution, allowing victims who call tools on a malicious server to have OAuth tokens exfiltrated. GitHub’s advisory is even clearer about impact, listing OAuth token theft, account takeover, identity theft, and lateral movement, especially for users authenticated through OpenID SSO. This is not just a LibreChat story. It is a story about every AI assistant that lets third-party tool servers participate in a high-trust context. If the runtime lets headers, parameters, or templated placeholders cross trust boundaries too freely, a malicious connector can become a credential vacuum. The mitigation is to patch, constrain server creation, minimize placeholder substitution, and keep tool approval boundaries meaningful. (NVD)

CVE-2025-2867 in GitLab Duo with Amazon Q matters for a different reason. NVD says a specifically crafted issue could manipulate AI-assisted development features and potentially expose sensitive project data to unauthorized users in several GitLab 17.8 through 17.10 ranges. This is important because it punctures the lazy assumption that AI risk is only about public chatbots or experimental agents. Here the vulnerable feature lived in an enterprise development workflow. The exploitation condition was not “break the model.” It was “craft content that steers AI-assisted behavior toward data exposure.” That is exactly the kind of boundary an AI pentester should be able to reason about when testing developer platforms, ticketing integrations, and secure-by-default claims around embedded assistants. (NVD)

Those are AI-native or AI-adjacent examples. But an AI pentester still has to live in the ordinary vulnerability world too. CVE-2024-3400 in PAN-OS GlobalProtect allowed unauthenticated attackers to reach command injection with root privileges on affected firewalls when the vulnerable feature configuration was present. CVE-2023-46604 in Apache ActiveMQ allowed a remote attacker with network access to broker or client to run arbitrary shell commands by manipulating serialized class types in the OpenWire protocol. Neither vulnerability is “about AI,” and that is the point. An AI pentester worth using still has to handle classical infrastructure reality: asset visibility, version nuance, environmental prerequisites, network reachability, exploit conditions, and proof. AI can shorten the path from exposure clue to validation plan, but it does not eliminate the need for environmental evidence. (NVD)

Finally, CVE-2024-3094 in XZ Utils is the reminder that the offensive platform’s own supply chain matters just as much as the target’s. NVD and CISA describe malicious code in upstream XZ tarballs that altered the build process and modified liblzma behavior. For AI pentester systems, which increasingly depend on layered open-source packages, browsers, MCP servers, model runtimes, connectors, and CLI tools, supply-chain trust is not background noise. It is a core design concern. A system that orchestrates dozens of external components is only as trustworthy as its provenance and update discipline. (NVD)

The thread connecting all of these CVEs is simple. The biggest risk around AI pentesters is rarely “the model answered badly.” The bigger risk is that the surrounding runtime granted the model too much reach, too little validation, too much secret exposure, or too much trust in third-party content and tools.

Building an evidence-first AI pentester workflow

The safest way to use an AI pentester today is to force it into an evidence-first loop. That means the system must earn every claim by attaching it to an artifact, a reproducible step, or a differential observation. The moment the workflow skips that discipline, you have moved from security testing into speculative storytelling.

The first part of that loop is controlled data collection. The goal is not to unleash maximum traffic and hope the model sorts it out later. The goal is to capture enough structured evidence for the AI layer to reason over without creating chaos.

#!/usr/bin/env bash

# Authorized targets only. Run against approved scope.

set -euo pipefail

TARGET="${1:?Usage: ./run.sh https://staging.example.com}"

RUN_ID="$(date +%Y%m%d-%H%M%S)"

BASE="runs/${RUN_ID}"

mkdir -p "${BASE}"/{scope,http,recon,findings,evidence}

printf '%s\n' "$TARGET" > "${BASE}/scope/targets.txt"

# Lightweight live check and HTTP fingerprinting

httpx -silent -json -u "$TARGET" \

| tee "${BASE}/http/httpx.jsonl"

# Low-rate template-based checks with artifacts preserved

nuclei -u "$TARGET" \

-rl 3 \

-retries 1 \

-jsonl \

-o "${BASE}/findings/nuclei.jsonl"

# Preserve key metadata for later reasoning and diffing

jq -r '{url, status_code, title, tech, webserver}' \

"${BASE}/http/httpx.jsonl" \

> "${BASE}/evidence/http-summary.jsonl"

The point of a workflow like this is not that AI needs JSON for aesthetic reasons. It is that structured artifacts are easier to diff, cluster, explain, retest, and export into a report. A model is much more useful when it is asked to reason over preserved evidence than when it is expected to remember a flood of transient terminal output.

The second part is differential validation. Many of the highest-value findings in real application testing are not single response bugs. They are differences between roles, sessions, tenants, or feature states. AI is genuinely helpful here because it can summarize the delta, but the delta still has to be collected in a reproducible way.

#!/usr/bin/env bash

# Example role-diff check for an authorized staging environment

set -euo pipefail

BASE_URL="https://staging.example.com"

RESOURCE="/api/projects/42"

curl -sS \

-H "Authorization: Bearer $(cat tokens/user.txt)" \

"${BASE_URL}${RESOURCE}" \

| jq -S . > evidence/user-project42.json

curl -sS \

-H "Authorization: Bearer $(cat tokens/admin.txt)" \

"${BASE_URL}${RESOURCE}" \

| jq -S . > evidence/admin-project42.json

diff -u evidence/user-project42.json evidence/admin-project42.json \

> evidence/project42-role-diff.patch || true

This is a good example of where an AI pentester can add real value without pretending to replace the analyst. The system can read the diff, point out fields that should not be visible, suggest follow-up checks for list endpoints or sibling object IDs, and draft the minimal reproduction narrative. But the artifact itself remains the source of truth.

The third part is finding normalization. If the AI layer is going to generate a result worth keeping, it should attach that result to a stable structure rather than bury it in paragraphs.

{

"finding_id": "BAC-2026-0042",

"title": "Horizontal access control weakness on project resource",

"target": "https://staging.example.com/api/projects/42",

"prerequisites": [

"Two valid test accounts in the same tenant",

"User token for low-privilege role",

"Admin token for control comparison"

],

"observation": {

"user_status": 200,

"admin_status": 200,

"unexpected_fields": ["billing_email", "internal_notes"],

"artifact_files": [

"evidence/user-project42.json",

"evidence/admin-project42.json",

"evidence/project42-role-diff.patch"

]

},

"verification_status": "reproduced",

"impact_statement": "Low-privilege user can view fields reserved for elevated role",

"next_steps": [

"Test sibling object IDs for IDOR",

"Retest after patch",

"Map likely detection opportunities in access logs"

]

}

A structure like this makes the rest of the workflow much cleaner. A model can summarize it for engineering. A retester can replay it. A security lead can compare multiple findings without rereading prose. A reporting layer can format it for stakeholders. And a detection engineer can translate it into log hypotheses or ATT&CK mapping.

This is also the point where a platform can add legitimate value without hijacking the article. In real teams, the useful part of an AI pentest platform is not the model monologue. It is the packaging of approved tools, captured artifacts, reproducible PoCs, and report material into a single governed run record. Penligent’s public product material emphasizes exactly those operational properties: orchestration across a large toolset, CVE scanning tied to one-click PoC generation, business-logic-focused testing, and traceable proof artifacts. That kind of packaging belongs naturally in an evidence-first workflow because it shortens the handoff distance between recon, validation, and reporting. (寡黙)

The same discipline applies when the target is an AI-enabled application. A good AI pentester workflow for an agentic target should preserve not only HTTP traces and output, but also prompt context, retrieved content references, tool call requests, approval state, memory mutations, and any transformation that occurs between model output and real action. If you cannot reconstruct the chain, you cannot explain the risk. And if you cannot explain the risk, you do not have a trustworthy finding yet.

Deploying an AI pentester without creating a new problem

The first deployment mistake is over-trust. Teams see a polished assistant or a compelling autonomous demo and immediately point it at production with broad credentials. That is the wrong instinct. Offensive automation should enter the environment in the same way any other high-risk system enters the environment: under explicit scope, minimal privilege, and staged trust.

The second mistake is pretending that model quality is the main control question. It is not. The main questions are more operational. Which targets can the system reach. Which tools can it invoke. Which credentials can it see. What outbound network calls can it make. What secrets may appear in its context. What artifacts does it preserve. Which approvals are enforced in the runtime instead of implied in policy. And how easily can a human override, pause, or replay the system’s work. NIST’s GenAI profile makes it clear that AI risk is not confined to one layer. OWASP’s agent security guidance makes it equally clear that over-privileged or loosely governed agents turn common weaknesses into serious operational risk. (NIST出版物)

A practical deployment checklist for an AI pentester should therefore include at least the following engineering questions, even when the product demo never mentions them. Can the system be fully disabled. Are AI-enabled extensions off by default. Are prompts and responses audited. Is stored request data encrypted. Can you restrict tools by engagement or by operator. Can you enforce target allowlists. Can you separate finding hypotheses from verified findings in the report output. Can the system work in low-noise mode for staging or shadow environments. And can it preserve raw evidence alongside summary text. PortSwigger’s AI documentation is valuable here not because it answers every question perfectly, but because it models the right level of explicitness around activation, retention, provider boundaries, and extension control. (ポートスウィガー)

There is also a subtle but important scoping issue that becomes more severe with AI, not less severe. Traditional pentesters already know that scope mistakes are dangerous. Agentic testers compound that danger because they can move faster and chain more actions once a mistake begins. An AI pentester that can browse, call tools, follow redirects, open documents, or read local state needs tighter target definition than a person manually clicking through a proxy. The correct default is narrower autonomy, not wider autonomy.

Finally, teams should stop confusing “useful” with “ready for unsupervised use.” The public evidence does not support that leap. What it supports is a narrower and more valuable claim: AI pentesters are now very good at compressing the path from observation to candidate conclusion, and increasingly useful at preserving and organizing the evidence needed for verification. That alone can be transformative in the right workflow. It just is not the same as handing over the whole practice.

Final judgment on the AI pentester category

The current state of the field is more interesting than either side of the usual argument admits. The category is real. The value is real. The adoption is real. The research support is real. Burp AI, PentestGPT, Shannon, MCP-based ecosystems, and the emerging benchmark work all point in the same direction: models are now meaningful components in offensive workflow. Bugcrowd’s 2026 data makes it hard to argue otherwise. (ポートスウィガー)

At the same time, the evidence does not support the loudest claims about autonomous replacement. PentestEval is especially useful here because it shows exactly where the current systems still break: attack decision-making, exploit generation, long-horizon reliability, and end-to-end stability. That is not a minor implementation gap. It is the core engineering challenge of the category. (arXiv)

So the right way to think about an AI pentester in 2026 is not as a robot consultant and not as a toy chatbot. It is best understood as a governed system for shrinking the distance between raw signal and verified finding. Sometimes that system looks like an assistant inside a familiar tool. Sometimes it looks like a multi-step agent. Sometimes it looks like a source-aware validator. Sometimes it looks like a workflow for testing AI systems themselves. The mature question is no longer whether the category exists. The mature question is whether the system around it is disciplined enough to deserve your trust.

Further reading and reference links

- PentestGPT, USENIX Security 2024 paper (USENIX)

- AutoPenBench benchmark for generative agents in vulnerability testing (ACL Anthology)

- PentestEval benchmark for stage-level and end-to-end LLM pentesting evaluation (arXiv)

- OWASP Web Security Testing Guide (OWASP財団)

- NIST SP 800-115「情報セキュリティのテストと評価に関するテクニカルガイド」(NISTコンピュータセキュリティリソースセンター)

- NIST AI RMF Generative AI Profile (NIST出版物)

- OWASP AI Agent Security Cheat Sheet (OWASPチートシートシリーズ)

- Model Context Protocol specification and security best practices (モデル・コンテキスト・プロトコル)

- MITRE ATLAS for adversarial AI techniques (MITRE ATLAS)

- Palo Alto Networks Unit 42 on web-based indirect prompt injection observed in the wild (Unit 42)

- Bugcrowd Inside the Mind of a Hacker 2026 (バグ・クラウド)

- CVE-2025-3248, Langflow unauthenticated RCE (ギットハブ)

- CVE-2026-33017, Langflow public flow build RCE (ギットハブ)

- CVE-2026-4270, AWS API MCP Server file access restriction bypass (Amazon Web Services, Inc.)

- CVE-2026-26118, Azure MCP Server SSRF (NVD)

- CVE-2026-31951, LibreChat MCP header injection and token theft (NVD)

- CVE-2025-2867, GitLab Duo with Amazon Q data exposure issue (NVD)

- CVE-2024-3400, PAN-OS GlobalProtect RCE (NVD)

- CVE-2023-46604, Apache ActiveMQ OpenWire RCE (NVD)

- CVE-2024-3094, XZ Utils supply-chain compromise (NVD)

- AI Pentest Tool, What Real Automated Offense Looks Like in 2026 (寡黙)

- Pentest AI, What Actually Matters in 2026 (寡黙)

- AIペンテスト・コパイロット、スマートな提案から検証結果まで (寡黙)