Hacker AI GPT sounds like a single thing. It is not. The phrase gets used for academic systems such as PentestGPT, commercial AI pentest assistants, underground “uncensored” chatbot brands, and agentic coding environments that can read files, call tools, and execute code. Treating all of that as one category leads to bad threat models, bad buying decisions, and lazy technical writing. (USENIX)

The useful question is no longer whether a GPT-style model can help with offensive security work. Research already says yes, at least for important parts of the job. Threat intelligence reporting says malicious actors are already using AI for reconnaissance, phishing, malware-related tasks, and operational support. Recent CVEs show that once these systems are connected to files, databases, terminals, IDEs, browser sessions, and MCP servers, the main security story shifts from text generation to execution boundaries, trust boundaries, and side effects. (USENIX)

That distinction matters to almost everyone who cares about this topic. Security engineers need it to build sensible evaluation criteria. Red teamers need it to separate useful workflow acceleration from theater. Buyers need it to avoid paying for a scanner wrapped in a chatbot. Defenders need it to understand why prompt injection, MCP scope, tool approvals, and artifact capture now belong in the same conversation. (OpenAI Developers)

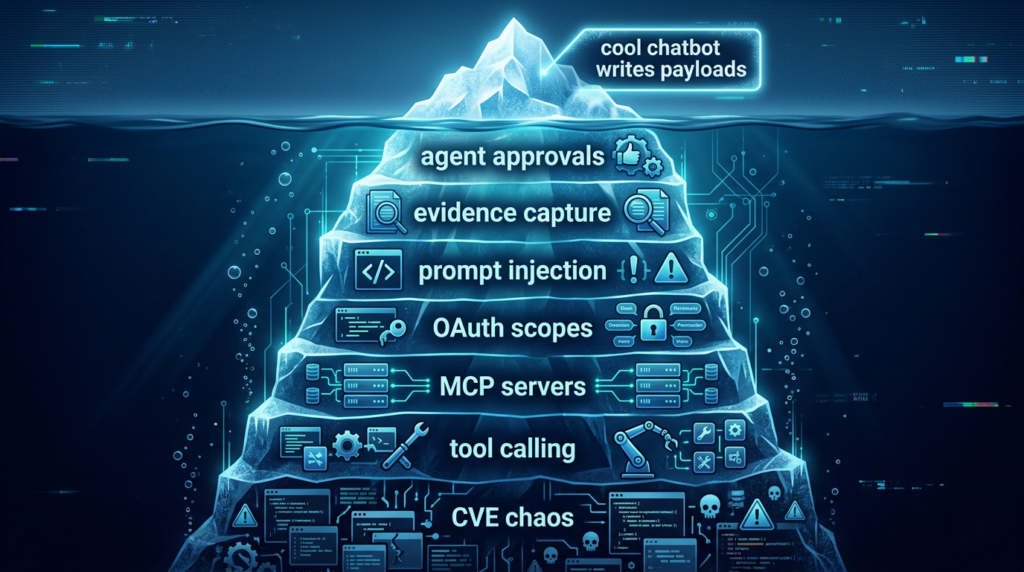

The right mental model is simple: a so-called hacker AI GPT is not a magic hacker in a chat window. It is a stack. The model is only one layer. The real capabilities and the real danger come from what the system is allowed to see, what tools it can call, what environment it can execute in, what approvals it needs, and whether anyone can reconstruct what it actually did after the fact. (OpenAI Developers)

The phrase points to four different systems

The first thing worth cleaning up is vocabulary. In practice, people use hacker AI GPT to point at at least four different systems. The first is the research prototype, represented most clearly by PentestGPT and later academic benchmarks. The second is the commercial pentest assistant, a product that can summarize scanner output, suggest next steps, or orchestrate testing tasks. The third is the criminally marketed chatbot, the WormGPT and FraudGPT class of branding that promises fewer guardrails. The fourth is the agentic runtime, which may live inside a coding agent, IDE, or automation framework and gain dangerous leverage because it can touch files, credentials, terminals, or remote services. (USENIX)

These categories overlap, but they are not interchangeable. A research system is judged by benchmark design, reproducibility, and failure analysis. A commercial assistant is judged by evidence quality, scope control, and integration with real workflows. A criminal brand is judged by how much of it is real versus repackaged jailbreak access. An agentic runtime is judged by permissions, approvals, telemetry, and blast radius. When someone says “hacker AI GPT” without specifying which of those they mean, they usually smuggle in assumptions that do not hold across all four. (USENIX)

The most misleading version of the phrase is the one-prompt fantasy. That fantasy imagines that the model itself is the attacker. In reality, the model is usually the reasoning and orchestration layer. It interprets context, chooses tools, proposes actions, and summarizes outcomes. The ability to do damage comes from the system around it: the terminal it can reach, the browser session it can inherit, the secrets it can read, the APIs it can call, and the files it can write. OpenAI’s function calling documentation makes this architectural shift explicit by defining tool calling as a way for models to access functionality and data outside their training set, including web search, code execution, and MCP servers. (OpenAI Developers)

That is why the label itself is less useful than the system boundary. If a product page says “AI hacker,” the serious follow-up questions are operational. Can it preserve authenticated state across a real workflow. Can it separate hypotheses from verified findings. Can it show raw request and response evidence. Can it prevent untrusted context from silently driving tool calls. Can it prove that a destructive action did not happen without explicit approval. Those questions are much closer to real security than any claim about autonomy. (OpenAI Developers)

What the research actually shows

The PentestGPT paper is still the cleanest place to start because it asked a disciplined version of the problem. The authors built a benchmark from real-world penetration testing targets, spanning 13 targets and 182 sub-tasks, covering OWASP Top 10 categories and multiple CWEs. Their central observation was not that language models were useless. It was more specific and more interesting: models were often competent at local sub-tasks such as using tools, interpreting outputs, and suggesting next actions, but they struggled to maintain the full context of the overall testing scenario over time. (USENIX)

Their solution was architectural, not magical. PentestGPT used three self-interacting modules to reduce context loss and better structure the workflow. In evaluation, that design produced a task-completion increase of 228.6 percent over GPT-3.5 on the benchmark targets, and the paper also reports a 58.6 percent sub-task completion increase relative to direct GPT-4 use. That is a meaningful result, but it should be read precisely. It demonstrates that scaffolding, decomposition, and domain-aware orchestration matter a great deal. It does not prove that current systems behave like stable, end-to-end autonomous pentesters on arbitrary real targets. (USENIX)

The paper is especially valuable because it does not hide the weaknesses. It documents error patterns that remain painfully familiar today: false command generation, deadlock operations, false scanning-output interpretation, false source-code interpretation, and inability to craft valid exploits in the right place at the right time. On easy and medium targets, general models showed real capability; on hard targets, performance dropped sharply. That is the difference between a helpful offensive-security copilot and a dependable autonomous operator. (USENIX)

Later benchmarks sharpened that same point. Cybench introduced 40 professional-level CTF tasks and showed that top models could solve some complete tasks that human teams solved in minutes, but the benchmark had to introduce subtasks precisely because many tasks remained beyond existing agent capability. The paper is useful because it measures an agent in an interactive environment where it can execute commands and observe outputs, which is much closer to real offensive workflow than static QA benchmarks. (OpenReview)

The 3CB work pushed on offensive capability more directly. Its abstract says frontier models such as GPT-4o and Claude 3.5 Sonnet could perform offensive tasks including reconnaissance and exploitation across multiple domains, while smaller open-source models remained far more limited. That matters, because it means dismissing frontier models as harmless toys is no longer intellectually serious. But 3CB also exists because the field needs rigorous evaluation to understand real-world offensive capability rather than relying on anecdotes and demos. (arXiv)

CAIBench is perhaps the most useful corrective to overconfidence. It explicitly argues that cybersecurity knowledge in pre-trained LLMs does not automatically translate into attack and defense ability. In its evaluation, state-of-the-art models were near saturation on security knowledge metrics at about 70 percent success, yet degraded substantially in multi-step adversarial scenarios to the 20 to 40 percent range, and did worse again on robotic targets. That gap between conceptual knowledge and adaptive capability is one of the most important facts in this entire field. (arXiv)

CyberExplorer makes the same maturity point in a different way. Its authors stress that the benchmark is conducted in deliberately vulnerable, isolated environments, with no live targets, no zero-days, and no external networks, and that the goal is understanding agent behavior, efficiency trade-offs, and failure modes. That framing is not just a safety disclaimer. It reflects the state of the science. The field is still working hard to measure how these systems reason and fail inside controlled environments before pretending they are ready for uncontrolled real-world autonomy. (arXiv)

Put together, the research verdict is clear. Hacker AI GPT is not fake. It is already useful for narrowing search space, reading noisy outputs, drafting next steps, explaining tool results, and helping operators move faster. At the same time, the literature repeatedly shows that state management, strategic adaptation, long-horizon reasoning, and clean separation between candidate and verified findings remain hard. That is why serious teams design around workflow controls rather than pretending the model itself has solved penetration testing. (USENIX)

The live threat picture

The threat landscape has already moved beyond speculation. OpenAI’s June 2025 threat report explicitly lists malicious cyber activity among the categories of abuse it is working to prevent, alongside scams, spam, covert influence operations, and other harms. That matters less as a branding statement than as a baseline acknowledgment that cyber misuse is no longer hypothetical in public deployment. (OpenAI)

Google Threat Intelligence Group went further in its February 2026 reporting. GTIG said it had observed threat actors using AI to gather information, create highly realistic phishing content, and develop malware. In the same update, Google said it had not observed direct attacks on frontier models by APT actors, but had seen and mitigated frequent model extraction attacks from private-sector entities. That is an important distinction. The attacker economy is not only about using models to attack others; it is also about attacking the AI systems themselves. (blog.google)

Anthropic’s August 2025 threat intelligence report is even more operationally specific. It describes a cybercriminal using Claude Code in a scaled data-extortion operation that potentially affected at least 17 distinct organizations. The report says Claude Code was used for reconnaissance, credential harvesting, network penetration support, privilege escalation guidance, lateral movement support, data extraction, and malware evasion work, including obfuscated versions of Chisel and fresh TCP proxy code. That is not a theoretical “could.” It is a documented case of an AI coding agent being used in the middle of a live intrusion workflow. (Anthropic)

One reason that report mattered so much is that it changed the conversation from “AI as advisor” to “AI as active operator inside a larger attack loop.” Anthropic described the pattern as “vibe hacking,” where the attacker shapes context and instructions so the agent persistently acts like a legitimate operator. Whether or not that label becomes standard, the operational point stands: once an agent can retain context, access tools, and keep iterating toward an attacker goal, the center of gravity shifts from single prompt abuse to workflow manipulation. (Anthropic)

Trend Micro’s January 2026 assessment adds another important correction. It argues that criminal AI has moved from experimentation toward industrialization, but it also says the underground “criminal LLM” market is consolidating around jailbreak-as-a-service and parasitic abuse of commercial platforms rather than genuinely novel independent frontier models. That helps puncture the mythology around every flashy underground name. Some of the branding is real, but a meaningful share of the ecosystem is about packaging access, stripping friction, and operationalizing misuse rather than inventing a fundamentally new model class. (www.trendmicro.com)

That is why WormGPT and FraudGPT matter, but not always for the reason marketers suggest. Their importance is not that they magically created a new species of superintelligence. Their importance is that they showed a market demand for fewer guardrails, more operational convenience, and cybercrime-specific framing. Later threat reporting suggests that the operational ecosystem has continued to mature, even if the “model” behind the curtain is often less exotic than the sales copy implies. (www.trendmicro.com)

The broader lesson is uncomfortable but straightforward. Real-world misuse does not require a flawless autonomous hacker. It only requires enough competence to compress labor, generate plausible text, support local problem-solving, and reduce the time needed for an attacker to move from one stage of an operation to the next. When you combine that with tool access and persistence, even imperfect systems can be operationally valuable to an attacker. (blog.google)

The system behind the label

To understand hacker AI GPT properly, it helps to break the stack into layers. The model layer handles language understanding, reasoning, and decision support. The tool layer exposes actions such as shell execution, web requests, browser use, database queries, email access, or SaaS integrations. The execution layer is where those actions actually happen, whether in a terminal, a container, a cloud worker, or a local IDE. The control layer adds policy, approvals, schemas, allowlists, scopes, and telemetry. The evidence layer stores what happened and whether a claimed finding is actually reproducible. (OpenAI Developers)

OpenAI’s function calling guide spells out how much leverage the tool layer creates. The documentation describes function calling as a way to give models access to new functionality and external data, and the same guide notes that built-in tools can search the web, execute code, and access MCP servers. That is the difference between an assistant that talks and a system that acts. It is also the exact point where the security conversation has to become much more concrete. (OpenAI Developers)

The MCP specification is equally blunt. Its trust-and-safety section says the protocol enables powerful capabilities through arbitrary data access and code execution paths, and that tools should be treated with appropriate caution because they represent arbitrary code execution. It also says hosts must obtain explicit user consent before invoking tools. Those are not side notes. They are the protocol’s own admission that the execution boundary is the main security boundary. (Model Context Protocol)

The authorization side matters just as much. MCP’s authorization specification requires OAuth-based discovery and says MCP servers must implement protected resource metadata while clients must use it to discover authorization servers. It also explicitly frames scope handling around least privilege by encouraging servers to advertise appropriate scopes in WWW-Authenticate challenges. If a product claims “MCP support” but cannot explain discovery, token storage, scope minimization, and server trust, it is missing the point of secure MCP deployment. (Model Context Protocol)

OpenAI’s agent-safety guidance lands in the same place from the application side. It warns that agents are especially vulnerable when they process untrusted text that influences tool calls, and it recommends structured outputs, tool approvals, guardrails, and careful handling of untrusted variables. It also notes that private-data leakage can occur even without an explicit attacker if the model sends more data to an MCP than a user intended. That is one of the clearest current statements of the actual risk model. An agent can fail not only by being attacked, but by over-sharing, over-acting, or mis-routing data through connected tools. (OpenAI Developers)

At this point the phrase hacker AI GPT stops being mystical and becomes ordinary security engineering. The questions start looking familiar. What are the system’s capabilities. What identities does it act under. What data is trusted. What data is untrusted. What actions require approval. What logs exist. What can be replayed. What can be rolled back. Which tokens are long-lived. Which tools are allowed to write. Which integrations can reach production. The more “AI-native” the product sounds, the more boring these questions become. That is a good sign, not a bad one. (Model Context Protocol)

Prompt injection is no longer just a model problem

NIST’s Generative AI Profile draws an important distinction between direct and indirect prompt injection. Direct prompt injection happens when an attacker provides malicious input straight to the system. Indirect prompt injection happens when the attacker places malicious instructions into data that the system later retrieves, such as a document, web page, or file. That second category is the one that matters most for agentic systems, because agents are built to ingest external context in order to act on a user’s behalf. (NIST出版物)

OWASP’s guidance for LLM applications arrives at the same problem from another angle. In the current LLM Top 10, prompt injection, insecure output handling, insecure plugin design, excessive agency, and supply-chain vulnerabilities all sit near the top of the risk model. The list is useful precisely because it shifts attention away from chatbot awkwardness and toward system harm: downstream code execution, exposed data, poorly designed integrations, and too much autonomy granted without enough constraint. (オワスプ)

NIST’s agent-hijacking work makes the risk concrete. In its January 2025 technical blog, NIST defines agent hijacking as a form of indirect prompt injection in which malicious instructions are embedded in data that an agent ingests while trying to complete a legitimate user task. NIST’s experiments added high-impact scenarios such as remote code execution, database exfiltration, and automated phishing to the evaluation framework and found that agents were often induced to follow the malicious instructions. That is the right evaluation mindset because it measures what happens after the model touches a task, a tool, and a side effect. (NIST)

The most valuable finding in that NIST blog is not the definition. It is the adaptive-testing lesson. When the agency red-teamed a newer model with attacks built specifically for that model, the attack success rate jumped from 11 percent for the strongest baseline attack to 81 percent for the strongest new attack. That is the number every builder of “AI hacker” systems should remember. Static evals against yesterday’s attack set are not evidence of safety. Attackers adapt, and any realistic evaluation framework has to adapt too. (NIST)

OpenAI’s safety guidance translates this into system design. It recommends never putting untrusted variables directly into developer messages, using structured outputs to constrain cross-node data flow, keeping tool approvals on, and using evals and trace grading to inspect how an agent behaved. The point is not that these measures make the problem disappear. The same page explicitly says agents can still make mistakes or be tricked. The point is that you can reduce attack surface if you design the workflow as though untrusted context will eventually try to drive a privileged action. (OpenAI Developers)

This is also why so much current debate about jailbreaks misses the more important engineering issue. Jailbreaks matter. But a jailbreak in a chat window is usually less dangerous than an indirect prompt injection that causes a privileged agent to write a config file, query the wrong database, forward a credentialed request, or exfiltrate a document through a connected tool. The security question is not merely whether the model says something it should not. It is whether the system does something it should not. (OWASP Gen AIセキュリティプロジェクト)

A practical control model follows directly from that insight. Treat every external document, email, issue comment, README, and retrieved web page as untrusted. Extract only structured fields you actually need. Do not let free-form external text cross directly into privileged tool-calling contexts. Make read operations cheap, write operations expensive, and state-changing operations human-approved. Preserve traces so you can later tell whether a bad outcome came from a model error, a poisoned context, a bad tool description, or an overly broad permission grant. (OpenAI Developers)

The CVEs that matter

If you want to understand hacker AI GPT as a security problem rather than a marketing phrase, the CVE stream is more honest than most product pages. It shows where the real fragility lives: chains that use エグゼック, LLM-to-query bridges, agent workflow platforms with exposed code-validation paths, IDEs that trust dangerous config files, and MCP-linked execution paths that collapse trust boundaries.

CVE-2023-29374 in LangChain is one of the clearest early examples. GitHub’s reviewed advisory says that in LangChain through 0.0.131, LLMMathChain allowed prompt injection attacks that could execute arbitrary code via Python エグゼック, and it lists no patched version. This is relevant because it captures the original sin of many “hacker AI” systems: taking model output and feeding it into an evaluator or executor without enough isolation. The immediate lesson is not only “patch if you can,” but also “remove or replace designs that route model-controlled text into powerful interpreters without sandboxing.” (ギットハブ)

CVE-2024-8309 shows the same trust-boundary problem further downstream. NVD says a vulnerability in LangChain’s GraphCypherQAChain allowed SQL injection through prompt injection, with potential outcomes including unauthorized data manipulation, data exfiltration, denial of service by deleting all data, and multi-tenant boundary breaches. GitHub’s advisory lists patched versions as langchain 0.2.0 and langchain-community 0.2.19. This is relevant to hacker AI GPT because it demonstrates that the dangerous hop is often not “model to shell,” but “natural-language system to sensitive data plane.” If the chain converts free-form language into database operations, injection risk becomes structural. (ギットハブ)

CVE-2025-3248 in Langflow is important for a different reason. NVD says versions prior to 1.3.0 were susceptible to code injection in the /api/v1/validate/code endpoint, allowing remote and unauthenticated arbitrary code execution. Here the problem is not a clever prompt at all. It is that the agent-workflow platform itself exposed dangerous code-validation functionality over the network. For defenders, that means the threat model around “AI automation” must include ordinary web application exposure, identity, patch cadence, and management-plane hardening, not just model alignment. (NVD)

CVE-2026-33017, also in Langflow, reinforces the same point and makes it even sharper. NVD says the unauthenticated build_public_tmp endpoint accepted attacker-supplied flow data containing arbitrary executable code, which was then passed to エグゼック without sandboxing, resulting in unauthenticated remote code execution. NVD says the issue was fixed in version 1.9.0. This is directly relevant to the hacker AI GPT discussion because it shows how “public flow” convenience can turn into a remote execution surface when execution semantics are not fenced tightly enough. (NVD)

CVE-2025-54135 in Cursor moves the conversation into agentic IDEs and MCP-sensitive files. NVD says versions below 1.3.9 allowed in-workspace file writing without user approval in a way that could be chained with indirect prompt injection to create a sensitive .cursor/mcp.json file and trigger remote code execution without approval. This is one of the cleanest examples of why agentic coding environments belong in the same security discussion as offensive AI. The danger comes from the combination of context hijack, local file writes, and trusted configuration loading. (NVD)

CVE-2025-61591, also in Cursor, shows the OAuth and trust-discovery side of the same ecosystem. NVD says versions 1.7 and below were vulnerable when MCP used OAuth authentication with an untrusted MCP server, allowing a malicious server to return crafted commands during the interaction process and potentially achieve command injection or host-side remote code execution by the agent. This is exactly why secure MCP support is not just a matter of “works with the protocol.” Server trust, OAuth flow integrity, and scope discipline are part of the execution boundary. (NVD)

One nuance matters here. Not every AI-adjacent CVE is equally settled. NVD’s entry for CVE-2025-46059 says LangChain’s GmailToolkit contained an indirect prompt injection issue that allowed arbitrary code execution via a crafted email message, but it also marks the issue as disputed because the supplier argued the code-execution path came from user-written code that did not follow LangChain security practices. This is exactly the kind of disagreement serious readers should want surfaced. A disputed CVE can still be operationally relevant, but it should not be described with more certainty than the public record supports. (NVD)

Read together, these CVEs tell a consistent story. The meaningful risks around hacker AI GPT do not come from a general model suddenly becoming a super-attacker in the abstract. They come from specific execution bridges: prompt to エグゼック, prompt to query, untrusted content to config file, OAuth to malicious MCP server, public workflow endpoint to code path, and automation platform to management-plane exposure. That is where defenders should be looking first. (ギットハブ)

How to judge a product sold as hacker ai gpt

Once you stop treating the phrase as magic, product evaluation gets easier. The first thing to ask is whether the system can maintain state across a real engagement. Pentest work is not a collection of isolated command suggestions. It is a chain of authenticated steps, branching hypotheses, failed attempts, artifacts, and retests. The PentestGPT paper framed the problem through the classic phases of reconnaissance, scanning, vulnerability assessment, exploitation, and post-exploitation reporting. Any product that performs well only in one phase is not solving the whole problem. (寡黙)

The second question is whether the system distinguishes narrative from evidence. A model can always write a plausible finding. The harder problem is whether it preserves raw artifacts, negative controls, reproduction steps, and retest guidance. Without that, buyers are paying for confidence theater. This is one reason the better public writing on AI pentesting keeps returning to “verified findings,” not clever prompts. The system has to move from observation to proof. (寡黙)

The third question is whether the vendor thinks about the execution boundary the way a defender would. Ask whether tool calls are logged. Ask whether reads and writes can be approved separately. Ask whether external context is isolated before it reaches privileged tools. Ask whether MCP servers are discovered and authenticated safely. Ask whether the system can prove which model version, prompt template, tool version, and policy gate were involved in a given action. Those are not luxury details. They are the difference between a usable security system and a haunted house. (OpenAI Developers)

The fourth question is whether the product acknowledges failure modes honestly. Research benchmarks already show that knowledge is not the same as adaptive offensive capability, and NIST’s work already shows that new attacks tailored to a system can radically increase attack success. If a vendor talks only about autonomy and never about prompt injection, agent hijacking, scope control, human review, or artifact capture, it is advertising a demo more than a workflow. (arXiv)

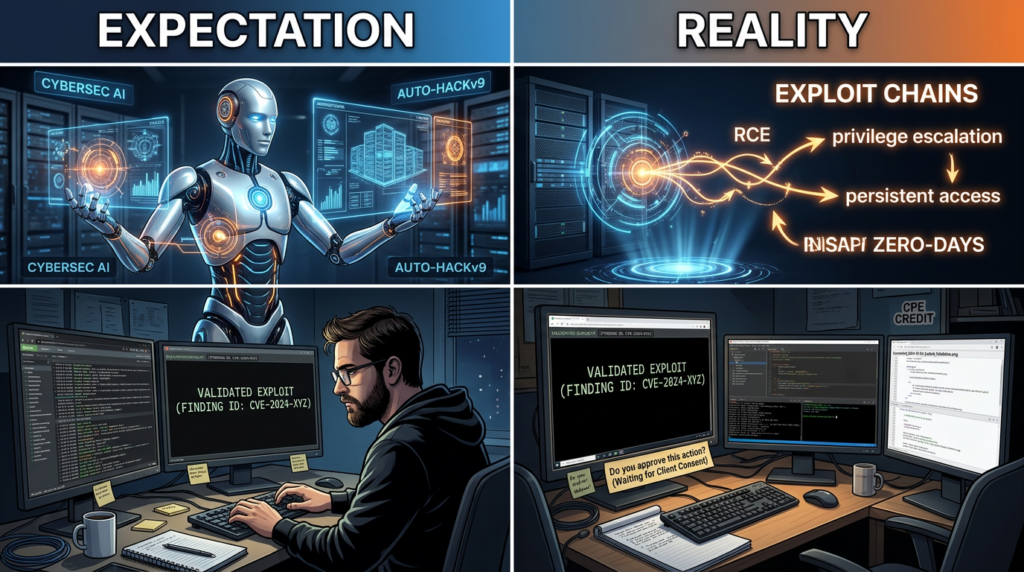

This is the point where public product claims become much easier to interpret. Penligent’s homepage emphasizes support for more than 200 industry tools, evidence-first results, scope control, and a signal-to-proof workflow. Whether or not a buyer chooses that product, those are exactly the kinds of claims worth interrogating because they map to real operational requirements: tool coverage, approval boundaries, reproducibility, and human control. They are much more meaningful than vague promises about autonomous hacking. (寡黙)

Its recent public HackingLabs material makes the same distinction in a useful way. The AI Pentest Tool article argues that the core value is the hard middle between raw signal and defensible proof, and the Pentest GPT article distinguishes a model that writes commands from a system that orchestrates tools, state, evidence, and reporting. That is the right frame for buyers. The question is not whether the tool can say hacker-ish things. The question is whether it can shorten the path from noisy evidence to a verified, reviewable security result. (寡黙)

A safer workflow for legitimate teams

For legitimate offensive-security teams, the safest path is not maximal autonomy. It is constrained autonomy with explicit evidence requirements. Start with owned targets or contractually authorized targets only. Make read-only reconnaissance cheap and state-changing actions expensive. Separate observation from exploitation. Separate candidate findings from verified findings. Require raw artifacts before anything gets promoted to a report. Preserve a replayable trace of tool calls and approvals. Those principles line up with the direction of current research, NIST’s agent-hijacking guidance, OWASP’s LLM risk framing, and vendor guidance on safe agent design. (NIST)

A minimal policy might look like this:

engagement:

targets:

- app.staging.example.com

- api.staging.example.com

prohibited:

- production_data_exfiltration

- destructive_actions

- persistence_changes

tool_policy:

read_only_default: true

approval_required:

- shell_write

- file_write

- database_write

- privileged_api_action

evidence_requirements:

- raw_request_response

- reproduction_steps

- negative_control

- impact_statement

- retest_guidance

This kind of policy is intentionally boring. That is the point. Good offensive automation should feel more like disciplined change control than like improv theater. If a workflow cannot express target boundaries, disallowed actions, approval gates, and evidence requirements in a form this plain, it is not ready to touch anything important. (OpenAI Developers)

The promotion logic for findings should be equally dull and equally strict:

def promote_finding(finding: dict) -> str:

required = [

"raw_artifacts",

"reproduction_steps",

"negative_control",

"impact_statement",

"affected_scope",

]

if any(not finding.get(k) for k in required):

return "candidate"

if finding.get("state_change_performed") and not finding.get("human_approved"):

return "candidate"

if finding.get("confidence") != "verified":

return "candidate"

return "verified"

This does not make the model smarter. It makes the workflow safer. That is often the more important improvement. Many so-called hacker AI GPT systems fail because they optimize for fluent output instead of promotion discipline. The fastest way to corrupt a report is to let a model jump from plausible explanation to verified finding without evidence gates. (OpenAI Developers)

A useful finding artifact should also be structured enough that another engineer can inspect it without trusting the prose:

{

"finding_type": "idor",

"asset": "api.staging.example.com",

"endpoint": "GET /v1/invoices/{invoice_id}",

"tested_roles": ["customer_basic", "customer_admin"],

"expected": "403 Forbidden for cross-tenant object access",

"observed": "200 OK with foreign tenant invoice data",

"raw_artifacts": [

"request_001.txt",

"response_001.txt",

"request_002.txt",

"response_002.txt"

],

"negative_control": "same request with own tenant invoice returns expected 200 OK",

"impact_statement": "cross-tenant invoice disclosure",

"retest_guidance": "repeat after object-level authorization fix using same role pair"

}

The benefit of this structure is not aesthetic. It limits what the model is allowed to smuggle into the reporting pipeline, and it makes human review much easier. It also helps downstream consumers such as security engineers, auditors, or remediation owners tell the difference between an exploit claim and an artifact-backed result. That is exactly the kind of design move that current agent-safety guidance keeps recommending when it talks about structured outputs and controlled data flow. (OpenAI Developers)

On owned systems, simple inventory hygiene also goes a long way. A surprising number of organizations do not know where agentic tooling already exists in local developer environments or CI runners.

python -m pip list | egrep 'langchain|langflow|openai|anthropic|mcp'

find "$HOME" -type f \( -name "mcp.json" -o -name "*.code-workspace" -o -path "*/.cursor/mcp.json" \) 2>/dev/null

These commands do not secure anything by themselves, but they help answer the first adult question in this space: what do we already have running, and where can it already reach. That inventory step becomes much more urgent when recent CVEs show real risk in LangChain components, Langflow workflow surfaces, and Cursor plus MCP trust chains. (ギットハブ)

The strongest legitimate AI pentest workflows increasingly converge on the same shape. They use a capable model for interpretation and planning, deterministic tools for observation and validation, explicit approvals for state-changing actions, and artifact-based gates before promotion. That is also the most natural place to evaluate vendors. Penligent’s public material is directionally aligned with that model when it foregrounds reproducible evidence, scope controls, and step-by-step movement from signal to proof. The broader lesson is bigger than any one product: a serious “AI hacker” workflow should feel constrained, auditable, and evidence-led. (寡黙)

What defenders should log

Defenders should assume that if an agent can read untrusted content and call tools, then the logs that matter are not only network logs or endpoint alerts. They are also workflow logs. Preserve the model version, developer instructions, approval decisions, tool definitions, tool-call arguments, returned artifacts, and final report outputs. Without that chain, incident response cannot tell whether a bad action came from model hallucination, malicious context injection, over-broad tool access, or a bug in the wrapper application. (OpenAI Developers)

OAuth and MCP telemetry deserves special attention. Log which MCP server was discovered, how authorization metadata was obtained, which scopes were requested, which scopes were granted, whether the server was previously trusted, and whether the user explicitly approved a tool invocation. The MCP specification’s emphasis on protected resource metadata and scope signaling is not paperwork. It is one of the only reliable ways to reconstruct why a tool call was permitted in the first place. (Model Context Protocol)

Configuration-file integrity also matters more than many teams realize. The Cursor CVEs are a reminder that workspace files and MCP-sensitive configuration files are not inert. In agentic environments, they can become execution pivots. Monitoring file creation or modification for sensitive config paths, especially after an agent ingests untrusted project content, is now part of the defensive baseline for AI-enabled development environments. (NVD)

Defenders should also preserve evidence of what the system refused to do. Negative traces are valuable. If a user-approved agent was blocked from a dangerous write, that is a sign the control worked. If a model attempted to call a high-risk tool after ingesting an external document, that is a sign the prompt-injection surface was real even if the action never completed. Mature telemetry is not only about successful abuse. It is about near misses that reveal where your control plane is thin. (OpenAI Developers)

Finally, teams should test the telemetry itself. NIST’s agent-hijacking work is a useful model here because it emphasizes multiple attempts, adaptive attack design, and task-specific analysis rather than one aggregate number. A logging strategy that looks sufficient on paper may fail under a real indirect prompt injection, a malicious MCP server, or a local config-file trust-chain attack. The time to discover that is inside a controlled red-team exercise, not after an agent has already acted with someone else’s permissions. (NIST)

The right conclusion in 2026

Hacker AI GPT is not a myth, and it is not a finished autonomous hacker either. The research record shows real capability gains when models are placed inside better scaffolding. Threat-intelligence reporting shows that malicious actors are already operationalizing AI for cybercrime and intrusion support. Current CVEs show that the fragility of these systems often lives in execution bridges, workflow platforms, IDE trust chains, and connected-tool boundaries rather than in the language model alone. (USENIX)

The wrong way to think about the category is to ask whether the model can “hack” on its own. That framing is too theatrical and not technical enough. The right way is to ask what the system can observe, what it can execute, what permissions it inherits, what evidence it produces, and how safely it moves from a candidate action to a verified result. That is the design problem that matters to builders, buyers, and defenders alike. (OpenAI Developers)

There is still plenty of room for skepticism. Benchmarks continue to show a meaningful gap between cybersecurity knowledge and adaptive offensive performance. Static safety claims fail against adaptive red teaming. Underground branding exaggerates model novelty. Supplier disagreement exists around some reported AI-adjacent vulnerabilities. None of that justifies complacency. It means only that the field should be discussed with precision rather than hype. (arXiv)

The teams that will get the most value from this technology are not the ones chasing the most cinematic demo. They are the ones building the shortest, safest path from observation to evidence. In 2026, that remains the cleanest dividing line between a flashy hacker AI GPT and a system that can actually belong in a serious security workflow. (寡黙)

Further reading

PentestGPT, the USENIX Security 2024 paper and official project materials, remain the best starting point for understanding the original research framing of LLM-assisted penetration testing. (USENIX)

NIST’s Generative AI Profile and its technical blog on agent hijacking are essential for understanding direct and indirect prompt injection, task-specific agent risk, and why adaptive evaluations matter. (NIST出版物)

OWASP’s LLM Top 10, OpenAI’s function-calling documentation, OpenAI’s agent-safety guidance, and the MCP specification plus security best practices are the clearest current references for designing safer agentic systems and tool-calling boundaries. (オワスプ)

For current threat activity, Google Threat Intelligence Group’s February 2026 reporting, Anthropic’s August 2025 threat intelligence report, and OpenAI’s public malicious-use reporting are the most relevant primary sources discussed above. (blog.google)

For relevant Penligent reading, the public product page, the AI Pentest Tool article, and the Pentest GPT article are the most directly related internal references for this topic. (寡黙)