The most important fact in this story is also the one easiest to blur in headlines. Polkadot’s native supply was not suddenly rewritten on its own chain. What was compromised was the Ethereum-side representation of DOT managed through Hyperbridge’s cross-chain messaging and token gateway path. On-chain evidence shows that 1,000,000,000 bridged DOT were minted on Ethereum in a single transaction on April 13, 2026, then dumped through Uniswap V4 and Odos for 108.206143512481490001 ETH. The exploit transaction is public, the token contract is public, the Hyperbridge mainnet contract addresses are public, and those three pieces together already tell most of the story. (Ethereum (ETH) Blockchain Explorer)

That distinction matters because “1 billion DOT minted” sounds like a terminal failure of the Polkadot monetary base. It was not. In a bridge architecture, the destination-chain token is a claim, a representation, or at least a settlement-side abstraction tied to source-chain state and bridge logic. When the destination representation is corrupted, parity can collapse, price can collapse, redemptions can freeze, and users can get hurt, all without the source chain’s native issuance logic being touched. Reports from FXStreet and other outlets were careful on this point: the asset hit hardest was bridged DOT on Ethereum, while native DOT sold off under market stress but was not shown to have been directly inflated on Polkadot itself. (FXStreet)

The second fact that deserves equal attention is the gap between the eye-catching mint amount and the attacker’s realized proceeds. Etherscan’s transaction page shows an enormous notional value attached to the minted tokens at execution time, but the exploiter only extracted a little over 108 ETH because the affected Ethereum-side DOT representation did not have deep enough liquidity to convert anywhere near the printed face value. That is not a sign that the bug was minor. It is a sign that bridge exploits can destroy the integrity of a wrapped asset even when the immediate cashout path is shallow. In other words, the biggest loss can be trust and market structure, not just the cash the attacker got away with in the first block. (Ethereum (ETH) Blockchain Explorer)

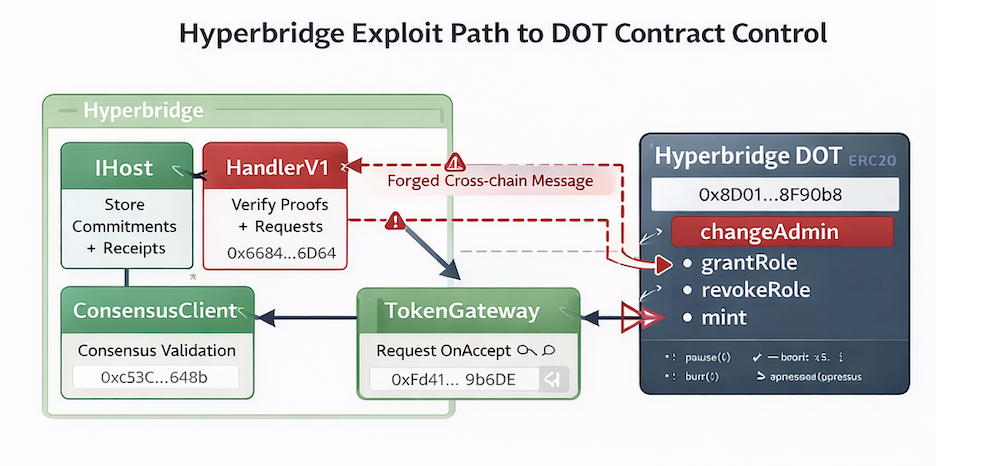

That is also why this incident is worth reading as an engineering case study, not just a market shock. Hyperbridge describes itself as a verifiable, permissionless interoperability protocol powered by interoperability proofs. Its EVM documentation says the IHandler contract is the stateless entry point for all ISMP datagrams and is responsible for consensus and state proof verification, while the IHost stores commitments and receipts. The mainnet documentation publishes the Ethereum addresses for HandlerV1, IsmpHost, ConsensusClient, and TokenGateway. When a protocol with that design loses integrity at the asset-admin level, the right question is not “how did a bridge get hacked again.” The right question is “which exact assumptions failed between proof, request, execution, and role management.” (docs.hyperbridge.network)

What is confirmed about the Hyperbridge exploit

The hardest facts are the ones on-chain. The exploit transaction on Ethereum is 0x240aeb9a8b2aabf64ed8e1e480d3e7be140cf530dc1e5606cb16671029401109, timestamped Apr 13, 2026 at 03:55:23 UTC on Etherscan. The transaction created two contracts, minted 1,000,000,000 DOT from the zero address, routed those tokens into an intermediary flow ending at Uniswap V4, and returned 108.206143512481490001 ETH to the address Etherscan labels as “Bridged DOT Exploiter.” The exploit did not require a long laundering path to be visible. The entire mint-to-dump path is compact and readable in one public transaction page. (Ethereum (ETH) Blockchain Explorer)

A second hard fact is the contract topology. Hyperbridge’s official mainnet documentation lists Ethereum HandlerV1 at 0x6C84eDd2A018b1fe2Fc93a56066B5C60dA4E6D64 and TokenGateway at 0xFd413e3AFe560182C4471F4d143A96d3e259B6dE. The Ethereum-side DOT token affected in this incident is the verified contract at 0x8d010bf9c26881788b4e6bf5fd1bdc358c8f90b8. Etherscan shows that this DOT token contract includes administrative and minting capabilities such as changeAdmin, mint, grantRole, and revokeRole. That means an attacker did not need to “invent” a weird new mint path once admin control was available. Administrative control over this representation was already enough to cause catastrophic supply corruption. (docs.hyperbridge.network)

A third hard fact is that external monitoring firms and exchanges reacted as if this were a real bridge-side security incident, not a rumor. CertiK Pulse summarized the event as a Hyperbridge gateway vulnerability that let attackers forge messages, manipulate the administrator of a Polkadot token contract on Ethereum, and sell the fraudulently minted tokens for roughly $237,000. The same CertiK Pulse feed also summarized an Upbit precautionary suspension for Polkadot and AssetHub Polkadot deposits and withdrawals. Bithumb published its own official warning notice, referenced the exploit transaction directly, and stated that DOT deposits and withdrawals were suspended at the time of the notice. (skynet.certik.com)

A fourth hard fact is what was not yet clearly available when this article was prepared. Hyperbridge’s public blog homepage still surfaced product announcements and earlier protocol updates, but not a clearly indexed April 13 incident postmortem or final root-cause analysis for this exploit. That absence matters because it changes how carefully one must write about root cause. The exploit itself is confirmed. The precise internal failure mode remains partly reconstructed from external analysis rather than from an official vendor RCA. (Hyperbridge)

The confirmed facts are easier to absorb in one place.

| Item | Confirmed detail |
|---|---|
| Exploit date and time | Apr 13, 2026, 03:55:23 UTC |
| Exploit transaction | 0x240aeb9a8b2aabf64ed8e1e480d3e7be140cf530dc1e5606cb16671029401109 |
| Ethereum-side DOT token | 0x8d010bf9c26881788b4e6bf5fd1bdc358c8f90b8 |
| Hyperbridge Ethereum HandlerV1 | 0x6C84eDd2A018b1fe2Fc93a56066B5C60dA4E6D64 |
| Hyperbridge Ethereum TokenGateway | 0xFd413e3AFe560182C4471F4d143A96d3e259B6dE |
| Tokens minted | 1,000,000,000 bridged DOT |
| Realized proceeds | 108.206143512481490001 ETH |
| Native DOT directly inflated | No evidence of that |
| Exchange reaction | Bithumb official caution and suspension, Upbit suspension reported by CertiK Pulse |

The table above is entirely built from public contract documentation, Etherscan, and exchange or security monitoring sources. (Ethereum (ETH) Blockchain Explorer)

How Hyperbridge moves DOT between Polkadot and Ethereum

To understand why this exploit looked dramatic but did not rewrite native DOT issuance, it helps to separate canonical assets from destination representations. In a typical bridge flow, the source-side asset is locked, escrowed, reserved, or otherwise accounted for on the origin side, while the destination side issues a representation that is only supposed to exist under valid cross-chain state transitions. The destination token is therefore not “the same thing” in the monetary sense. It is a representation whose integrity depends on the bridge’s verification path. Once that path fails, the destination-side token can be printed, frozen, or desynced even while the canonical source asset continues to obey its native issuance rules. That is the category this incident falls into. (docs.hyperbridge.network)
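The separation between canonical asset and destination representation can be sketched as a simple backing invariant. This is a minimal illustration, not Hyperbridge's accounting: the `BridgeLedger` type, the function names, and the 350,000 bridged volume are all hypothetical, and only the 1,000,000,000 mint size comes from the incident.

```typescript
// Hypothetical model of lock-and-mint accounting; not Hyperbridge's actual code.
interface BridgeLedger {
  lockedOnSource: number;      // canonical tokens escrowed on the source chain
  mintedOnDestination: number; // representation supply on the destination chain
}

// The representation is backed only while its supply does not exceed escrow.
function isBacked(l: BridgeLedger): boolean {
  return l.mintedOnDestination <= l.lockedOnSource;
}

// A legitimate transfer moves both sides of the ledger together.
function bridgeOut(l: BridgeLedger, amount: number): BridgeLedger {
  return {
    lockedOnSource: l.lockedOnSource + amount,
    mintedOnDestination: l.mintedOnDestination + amount,
  };
}

// A destination-only mint, as enabled by an admin takeover, touches one side.
function rogueMint(l: BridgeLedger, amount: number): BridgeLedger {
  return {
    lockedOnSource: l.lockedOnSource,
    mintedOnDestination: l.mintedOnDestination + amount,
  };
}

// 350_000 is a made-up bridged volume; 1_000_000_000 is the mint size from the incident.
const healthy = bridgeOut({ lockedOnSource: 0, mintedOnDestination: 0 }, 350_000);
const corrupted = rogueMint(healthy, 1_000_000_000);

console.log("healthy backed:", isBacked(healthy));
console.log("corrupted backed:", isBacked(corrupted));
console.log("source escrow unchanged:", corrupted.lockedOnSource === healthy.lockedOnSource);
```

The point of the sketch is that the rogue mint breaks the invariant without ever touching the source-side field, which mirrors why native issuance can stay intact while the representation fails.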

Hyperbridge’s own documentation is explicit about the moving parts. The IHost is the storage core holding consensus states, state commitments, request commitments and receipts, and response commitments and receipts. The IHandler is the stateless entry point for ISMP messages and is responsible for consensus and state proof verification. The IConsensus interface verifies Hyperbridge consensus on EVM chains and, according to the docs, validates authority signatures or zero-knowledge proofs, validates MMR proofs for state commitments, checks authority set transitions, and extracts finalized intermediate states. That architecture is supposed to let an EVM-side application accept verified cross-chain messages without inheriting a multisig trust model. (docs.hyperbridge.network)

Hyperbridge also published the operational framing that made this exploit especially significant for DOT users. In April 2025, Hyperbridge announced that the Polkadot DAO had selected it as Polkadot’s native bridge for the DeFi Singularity initiative, with the stated goal of improving DOT liquidity and accessibility across external ecosystems. In November 2025, Hyperbridge announced that DOT bridging had fully resumed following Polkadot’s Asset Hub migration. Those posts matter because they tie this exploit to an officially promoted DOT bridging path rather than to some obscure third-party wrapper with no ecosystem relevance. (Hyperbridge)

The affected Ethereum-side DOT contract also tells its own story. Etherscan identifies the token contract as ERC6160Ext20, and its verified ABI exposes the kinds of functions one would expect in an asset gateway or bridge-issued token: mint, burn, changeAdmin, role checks, and role grants. That means the attacker did not merely find a price oracle edge case or exploit a trading venue. The attack path reached the token administration plane itself. Once that plane is compromised, minting and distribution are no longer downstream effects. They are the direct consequence. (Ethereum (ETH) Blockchain Explorer)

This is why language discipline matters when writing about bridge incidents. Saying “Polkadot was hacked” hides the trust boundary that actually failed. Saying “Hyperbridge’s Ethereum-side DOT representation was compromised through the cross-chain verification and asset-admin path” is longer, but it points engineers toward the right systems: proof verification, request binding, gateway authorization, and representation-side privilege controls. In bridge security, naming the right trust boundary is half the analysis. (docs.hyperbridge.network)

Reconstructing the exploit path on-chain

The public transaction record is unusually clean. Etherscan shows that the exploit call created two new contracts during execution. Those contracts then participated in the mint-and-dump sequence. The transaction details show 1,000,000,000 DOT moving from the null address into an attacker-controlled contract, then to another intermediary, and finally into Uniswap V4’s Pool Manager. The internal transfers show ETH traveling back from the pool manager through Odos Router V3 and intermediary contracts until 108.206143512481490001 ETH lands back at the exploiter address. This is not one of those incidents where you need days of graph work to see the primary extraction path. The first pass is visible immediately. (Ethereum (ETH) Blockchain Explorer)

That first pass already establishes a lot. It establishes that the mint was not merely a reporting bug on an analytics dashboard. It establishes that the dumped asset was accepted by at least some on-chain liquidity route. It establishes that the attacker did not need to withdraw to a centralized exchange to monetize the exploit. And it establishes that the exploit was not a failed attempt that only changed a role without monetization. The mint, transfer, swap, and proceeds path are all part of the same successful transaction flow. (Ethereum (ETH) Blockchain Explorer)

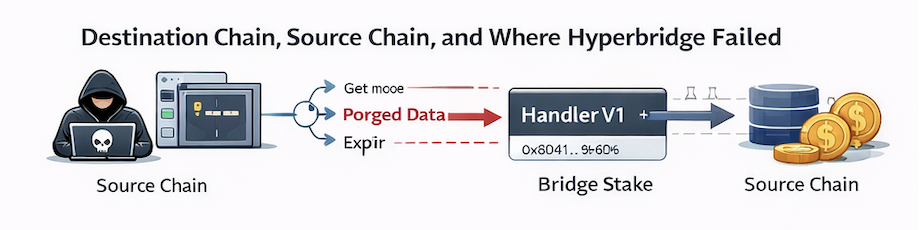

External analysis fills in the message-level interpretation. Crypto Times, citing CertiK, described the sequence as an exploit on the Hyperbridge gateway contract on Ethereum in which the attacker forged an incoming cross-chain message, used forged state proofs against HandlerV1, and triggered a malicious ChangeAssetAdmin action via TokenGateway.onAccept(). That description matches what the public contracts make plausible: Hyperbridge’s docs place message verification in IHandler, the mainnet address page maps the relevant contracts, the TokenGateway ABI exposes onAccept, and the DOT token ABI shows that admin change and mint are enough to reach catastrophic supply abuse. (The Crypto Times)

At this point the analysis must slow down and separate certainty from inference. The on-chain facts prove that admin-like power over the DOT representation was obtained and immediately monetized. The external reconstructions strongly suggest that this power was acquired through a forged or improperly verified inbound message path. But the exact internal validation mistake is still best framed as a high-confidence external reconstruction, not a final official statement. That distinction matters because bridge bugs often live in subtle combinations of proof validity, stale data acceptance, replay handling, nonce semantics, and request-body binding. One missing predicate can look like five different failure modes from the outside. (Ethereum (ETH) Blockchain Explorer)

One practical way to analyze incidents like this is to read the chain before reading anyone’s thread. The smallest useful script is one that compares token supply before and after the exploit block and then fetches mint logs from the token contract.

```typescript
// Assumes ethers v6 and an ES module context (top-level await).
import { JsonRpcProvider, Contract, id, ZeroAddress } from "ethers";

// Historical blockTag queries generally need an archive-capable RPC endpoint.
const provider = new JsonRpcProvider(process.env.ETH_RPC_URL);

// Ethereum-side bridged DOT token contract.
const DOT = "0x8d010bf9c26881788b4e6bf5fd1bdc358c8f90b8";
const ABI = [
  "function totalSupply() view returns (uint256)",
  "event Transfer(address indexed from, address indexed to, uint256 value)"
];
const token = new Contract(DOT, ABI, provider);

// Block containing the exploit transaction.
const exploitBlock = 24868295;

// Compare total supply immediately before and at the exploit block.
const before = await token.totalSupply({ blockTag: exploitBlock - 1 });
const after = await token.totalSupply({ blockTag: exploitBlock });
console.log("Supply before:", before.toString());
console.log("Supply after :", after.toString());
console.log("Delta :", (after - before).toString());

// A mint is a Transfer whose indexed `from` is the zero address,
// left-padded to a 32-byte topic.
const transferTopic = id("Transfer(address,address,uint256)");
const zeroTopic = "0x" + "0".repeat(24) + ZeroAddress.slice(2);

const mintLogs = await provider.getLogs({
  address: DOT,
  fromBlock: exploitBlock,
  toBlock: exploitBlock,
  topics: [transferTopic, zeroTopic]
});

for (const log of mintLogs) {
  console.log(log);
}
```

That snippet is not fancy, but it answers the two questions that matter first: did supply jump at the exploit block, and did the contract emit a standard ERC-20 mint pattern from the zero address. From there, the next step is to decode the transaction receipt and join the token transfer logs with internal ETH transfers. (Ethereum (ETH) Blockchain Explorer)

Another useful perspective is to inspect the gateway contract for evidence of privileged asset administration hooks. On Etherscan, Hyperbridge’s TokenGateway ABI exposes onAccept, onPostResponse, and an AssetAdminChanged event. That event is especially important because it compresses the whole exploit thesis into a single monitoring primitive. In a healthy bridge environment, asset-admin changes should be rare, intentional, and loudly reviewed. If AssetAdminChanged fires unexpectedly, that alone should already trigger a severe incident workflow before anyone has time to tweet that the price is crashing. (Ethereum (ETH) Blockchain Explorer)
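As a rough sketch of that monitoring primitive, the classifier below treats any `AssetAdminChanged` event on a watched asset as critical unless the new admin is on an approved list. The decoded field names (`asset`, `newAdmin`), the policy that the TokenGateway is the only approved admin, and the placeholder attacker address are assumptions made for illustration. The real event layout should be read from the verified ABI.

```typescript
// Decoded log shape is an assumption; the real AssetAdminChanged fields may differ.
interface DecodedEvent {
  name: string;
  asset: string;    // token contract whose admin changed (assumed field)
  newAdmin: string; // address receiving admin rights (assumed field)
}

type Severity = "ignore" | "review" | "critical";

function classify(
  ev: DecodedEvent,
  watchedAssets: Set<string>,
  approvedAdmins: Set<string>
): Severity {
  if (ev.name !== "AssetAdminChanged") return "ignore";
  // Unwatched assets still deserve a human look, just not a page.
  if (!watchedAssets.has(ev.asset.toLowerCase())) return "review";
  return approvedAdmins.has(ev.newAdmin.toLowerCase()) ? "review" : "critical";
}

// Addresses taken from the article; the approval policy itself is hypothetical.
const DOT_TOKEN = "0x8d010bf9c26881788b4e6bf5fd1bdc358c8f90b8";
const TOKEN_GATEWAY = "0xFd413e3AFe560182C4471F4d143A96d3e259B6dE";
const watched = new Set([DOT_TOKEN.toLowerCase()]);
const approved = new Set([TOKEN_GATEWAY.toLowerCase()]);

const suspicious: DecodedEvent = {
  name: "AssetAdminChanged",
  asset: DOT_TOKEN,
  newAdmin: "0x000000000000000000000000000000000000dEaD", // placeholder address
};

console.log(classify(suspicious, watched, approved));
```

Lowercasing both sides keeps the comparison independent of address checksum casing, which is a common source of silent mismatches in allowlist checks.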

What the likely root cause looks like so far

The broad root-cause description is relatively stable across sources. CertiK’s characterization, repeated by several outlets, is that attackers exploited a Hyperbridge gateway or handler-side verification issue to forge messages and manipulate the administrator of the DOT token contract on Ethereum. FXStreet’s summary similarly described the attack as a forged cross-chain message sent through Hyperbridge’s ISMP flow to execute a ChangeAssetAdmin request. Even without a formal vendor RCA, the public narrative is consistent on the key point: the exploit was not a conventional DEX bug and not a native-chain issuance bug. It was a cross-chain verification and authorization failure that cascaded into asset administration. (The Crypto Times)

The narrower explanation is less settled and should be written that way. Several newsflash-style outlets citing BlockSec Phalcon described the issue as an MMR proof replay vulnerability in HandlerV1, where proofs were not bound tightly enough to the request they were meant to authorize. Under that description, an attacker could replay a historically valid proof alongside a newly forged request body, then use the resulting authorization gap to perform sensitive operations such as changing asset admin and minting new tokens. That explanation is plausible, technically coherent, and consistent with the kind of architecture Hyperbridge documents for consensus and state proof verification. But because those reports are still second-hand and not yet replaced by a clearly indexed official incident report, it should be treated as a preliminary but serious hypothesis rather than as settled vendor fact. (KuCoin)
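To make the replay hypothesis concrete, here is a toy model of a verifier that checks a proof against a trusted root without tying it to the request being executed, next to one that does. It is a sketch of the reported bug class under stated assumptions, not a reconstruction of HandlerV1: the `Request` and `Proof` shapes, the one-leaf "tree", and the message bodies are hypothetical, and plain strings stand in for hashes.

```typescript
interface Request { nonce: number; to: string; body: string }
interface Proof { leaf: string; root: string } // hypothetical one-leaf "tree"

// Strings stand in for hashes so the sketch stays deterministic and dependency-free.
const commit = (r: Request): string => `commit(${r.nonce}|${r.to}|${r.body})`;
const rootOf = (leaf: string): string => `root(${leaf})`;

// Flawed: proves that SOME leaf sits under a trusted root, and that the
// request is well-formed, but never ties the leaf to this request.
function verifyUnbound(req: Request, p: Proof, trustedRoots: Set<string>): boolean {
  const wellFormed = req.to.length > 0 && req.body.length > 0;
  return wellFormed && trustedRoots.has(p.root) && rootOf(p.leaf) === p.root;
}

// Bound: the proven leaf must equal the commitment of the request being executed.
function verifyBound(req: Request, p: Proof, trustedRoots: Set<string>): boolean {
  return verifyUnbound(req, p, trustedRoots) && p.leaf === commit(req);
}

// A historically valid request and its proof (illustrative values).
const historical: Request = { nonce: 7, to: "TokenGateway", body: "Teleport(100)" };
const oldProof: Proof = { leaf: commit(historical), root: rootOf(commit(historical)) };
const trustedRoots = new Set([oldProof.root]);

// A forged request replaying the old proof.
const forged: Request = { nonce: 8, to: "TokenGateway", body: "ChangeAssetAdmin(attacker)" };

console.log("unbound accepts forged:", verifyUnbound(forged, oldProof, trustedRoots));
console.log("bound accepts forged:", verifyBound(forged, oldProof, trustedRoots));
console.log("bound accepts historical:", verifyBound(historical, oldProof, trustedRoots));
```

The only difference between the two verifiers is one equality check, which is the sense in which a single missing predicate can turn a valid proof into a reusable authorization.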

The difference between those two framings is not academic. “Forged message” tells defenders where the trust boundary failed at a high level. “MMR proof replay because proofs were not bound to requests” tells engineers exactly what class of invariant they may have forgotten to encode. Those are related but not identical statements. One describes what the attacker achieved. The other describes the missing cryptographic or protocol constraint that may have enabled it. A good incident analysis keeps both layers visible and does not confuse them. (The Crypto Times)

The lack of a clearly indexed official incident writeup is part of the security story too. Hyperbridge’s public blog continued to surface regular product and integration posts, and the site’s most visible exploit-adjacent headline near the top was still the April 1 prank post that said no hack had occurred. That does not mean the team was inactive behind the scenes. It means outside engineers, integrators, exchanges, and users did not yet have a crisp public RCA to anchor on. In bridge incidents, that gap matters because market structure reacts in minutes while technical certainty usually takes longer. (Hyperbridge)

Why native DOT was not directly inflated

A bridge exploit often produces two very different truths at once. The first is that a token called DOT on Ethereum really was minted in absurd volume and really was dumped. The second is that Polkadot’s native issuance rules were not thereby rewritten. Both are true because destination-chain tokens live under bridge security assumptions, not under the source chain’s monetary policy. A wrapped or bridged asset can become worthless, overissued, or nonredeemable without the source asset having the same defect. That is exactly the distinction traders, exchange operators, and security teams must maintain when the first headlines hit. (FXStreet)

Hyperbridge’s own DOT-bridging announcements provide the right context. The protocol explicitly promoted restored DOT bridging after Polkadot’s Asset Hub migration and described itself as a native bridge within Polkadot’s multichain ecosystem. In other words, the affected Ethereum asset was not pretending to be independent. Its value proposition depended on being accepted as a valid external representation of DOT under the bridge’s trust model. Once that trust model failed, the representation could be inflated locally even though native DOT on Polkadot remained subject to native chain rules. (Hyperbridge)

That also explains why market language can become dangerously sloppy. A trader may say “DOT got hacked” because the ticker on the screen is DOT. An engineer should say “the Ethereum-side bridged DOT representation lost integrity.” A protocol risk manager should say “the redemption and parity assumptions for the destination representation are now unreliable.” Those statements are not semantics games. They determine whether exchanges suspend the right network routes, whether users understand which chain is safe to withdraw on, and whether postmortem work focuses on the right code and proof systems. (Bithumb)

There is another practical consequence. If the source-side canonical asset remains intact but the destination-side representation is corrupt, then incident response is not just about pausing a contract. It is about preserving clear chain-specific language everywhere: wallet UIs, exchange notices, market data feeds, asset metadata, and risk engines. If one part of the ecosystem says “DOT is unsafe” while another says “only bridged DOT on Ethereum is unsafe,” users can still do the wrong thing. Bridge failures propagate through naming ambiguity almost as efficiently as through code. (Bithumb)

Why 1 billion minted did not mean 1 billion stolen

The transaction minted a billion tokens, but the attacker came away with roughly 108 ETH. The reason is not that the exploit somehow half-worked. The reason is market microstructure. A freshly printed bridge representation is only monetizable to the extent that someone, somewhere, will still take it. On Ethereum, that usually means DEX pools, aggregators, or centralized venues that have not yet halted the asset. If the destination-side representation has shallow liquidity, its price can implode long before its notional minted amount turns into equivalent extractable value. That is what happened here. (Ethereum (ETH) Blockchain Explorer)

Etherscan shows the attacker routing the dump through Uniswap V4 and Odos. That already tells you a lot about the environment. This was not a case where the attacker bridged the fake DOT back into a deep, trusted redemption venue and cashed out against full collateral. It was a case where the exploiter sold into whatever on-chain path would still execute. The internal ETH transfers show that the gross amount reaching Odos was slightly above the final amount returned to the exploiter, which is consistent with routing and execution overhead in a stressed liquidation path. The destination representation became massively overissued in one shot, but the market could only absorb a small cash equivalent before the price floor disappeared. (Ethereum (ETH) Blockchain Explorer)

Confusing printed value with realized profit is a recurring mistake in public discussion of bridge exploits. When a bridge-side synthetic asset can be printed, its total “market cap” becomes meaningless almost instantly because the quoted price assumes scarcity that the exploit has already destroyed. The exploiter’s real ceiling is the combination of available pool reserves, routing depth, slippage tolerance, and whether faster defenders can freeze adjacent off-ramps. That is why a billion-token exploit can still be a six-figure theft. It is also why the security impact should not be reduced to the cashout number alone. (Ethereum (ETH) Blockchain Explorer)
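The ceiling imposed by pool reserves can be shown with the simplest AMM model, a fee-free constant-product pool where selling `dx` tokens returns `Y * dx / (X + dx)`. The reserves below are invented for illustration and are not the actual pool state. The real route went through Uniswap V4 and Odos, whose pools and routing do not necessarily follow this curve.

```typescript
// Constant-product swap output with no fee: out = Y * dx / (X + dx).
function ethOut(tokenReserve: number, ethReserve: number, tokensSold: number): number {
  return (ethReserve * tokensSold) / (tokenReserve + tokensSold);
}

// HYPOTHETICAL reserves chosen for illustration; not the real pool state.
const tokenReserve = 50_000; // bridged DOT in the pool
const ethReserve = 110;      // ETH in the pool
const minted = 1_000_000_000; // mint size from the incident

const spotPrice = ethReserve / tokenReserve; // ETH per token before the dump
const notional = minted * spotPrice;         // what a price feed would multiply out
const realized = ethOut(tokenReserve, ethReserve, minted);

console.log("notional ETH:", notional.toFixed(0));
console.log("realized ETH:", realized.toFixed(4));
console.log("fraction of pool ETH drained:", (realized / ethReserve).toFixed(6));
```

However large `tokensSold` grows, the output approaches but never reaches the pool's ETH reserve, so under this model the notional figure and the realized figure can differ by several orders of magnitude.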

Seen from a defender’s side, this is where bridge risk and market risk meet. The exploit created protocol-side integrity failure first, but AMM mechanics determined the realized financial loss in the first minutes. That means DEX liquidity design, bridge design, and exchange response are not separable topics in wrapped-asset incidents. A protocol that ignores its downstream market structure may overestimate how safe it is because “the pool is small.” A protocol with large pools may underestimate how fast a representation-side compromise turns into system-wide contagion. Both mistakes are common. (Ethereum (ETH) Blockchain Explorer)

The numbers are easiest to read side by side.

| Measure | What it means in this exploit |
|---|---|
| 1,000,000,000 DOT minted | Bridge-side representation was catastrophically overissued |
| Etherscan notional token value | A spot-like estimate at execution time, not extractable economic reality |
| 108.206143512481490001 ETH realized | What the attacker actually got out on-chain |
| Price collapse toward zero | The market immediately repriced the bridged representation after the mint |
| Native DOT issuance | Not shown to have changed |

The lesson is simple: in bridge incidents, supply integrity fails first, pricing integrity fails second, and extracted value is only the fraction the market can still absorb in between. (Ethereum (ETH) Blockchain Explorer)

How the Hyperbridge exploit fits the bridge failure pattern from Wormhole, Nomad, and Ronin

Bridge history is full of incidents that look different at the headline level but rhyme at the trust-boundary level. Ronin was fundamentally a validator and control-plane compromise. Sky Mavis said the attacker gained control of five of nine validator keys, using that power to forge fake withdrawals and drain 173,600 ETH and 25.5 million USDC from the bridge. The key lesson from Ronin was that decentralization theater does not help if operational control is still concentrated enough for an attacker to cross the approval threshold. (Ronin)

Wormhole was closer to a message verification bypass that resulted in synthetic minting. CertiK’s incident analysis described how the attacker bypassed the verification process on Solana by injecting a fake sysvar account, generated a malicious message, and invoked complete_wrapped to mint 120,000 wrapped ETH. The structural resemblance to the Hyperbridge event is stronger here than with Ronin. In both cases, the attacker did not need to compromise the native source asset directly. The attacker only needed to corrupt the destination-side authorization that said a wrapped representation was valid to mint. (certik.com)

Nomad is the other strong comparison point. Mandiant described the Nomad bridge exploit as the result of a smart-contract update that left the system in a state where specially crafted transactions would be processed without proper validation. The chaos of that exploit came from how little technical sophistication was needed once the validation failure became public. The Hyperbridge incident does not look socially chaotic in the same way, but the family resemblance is still there: when a protocol accepts a message or proof under looser conditions than intended, the economic damage arrives downstream through what the application layer is permitted to do once “verification” says yes. (Google Cloud)

Those three incidents map three major bridge failure classes. Ronin represents control-plane compromise at the signer or validator threshold. Wormhole represents verification bypass followed by forged wrapped-asset minting. Nomad represents improper validation defaults that convert authorization into a copy-and-paste exploit surface. Hyperbridge, based on public evidence so far, sits much closer to the Wormhole-Nomad side of that triangle than to the Ronin side. That is a more useful mental model than simply calling everything a “bridge hack.” (Google Cloud)

The comparison becomes even more useful when you line up the broken assumption in each case.

| Incident | Broken trust assumption | Immediate exploit outcome | Why it matters for Hyperbridge |
|---|---|---|---|
| Ronin | Enough validators remained independent and uncompromised | Fake withdrawals approved | Shows the risk of concentrated control planes |
| Wormhole | Verification step correctly authenticated wrapped-asset mint authority | 120,000 wETH minted | Closest prior example of verification failure turning into synthetic mint |
| Nomad | Message validation state could not silently accept bad requests | Mass copycat draining | Shows how one bad validation condition can flatten the whole security model |
| Hyperbridge | Proof and message path correctly bound authorization to a real cross-chain action | 1 billion bridged DOT minted and dumped | Reinforces that proof-based bridges still fail if proof context is wrong |

The point is not to force every exploit into the same template. The point is to notice how often the bridge loses because it authorizes execution under a weaker condition than the asset model assumes. (Google Cloud)

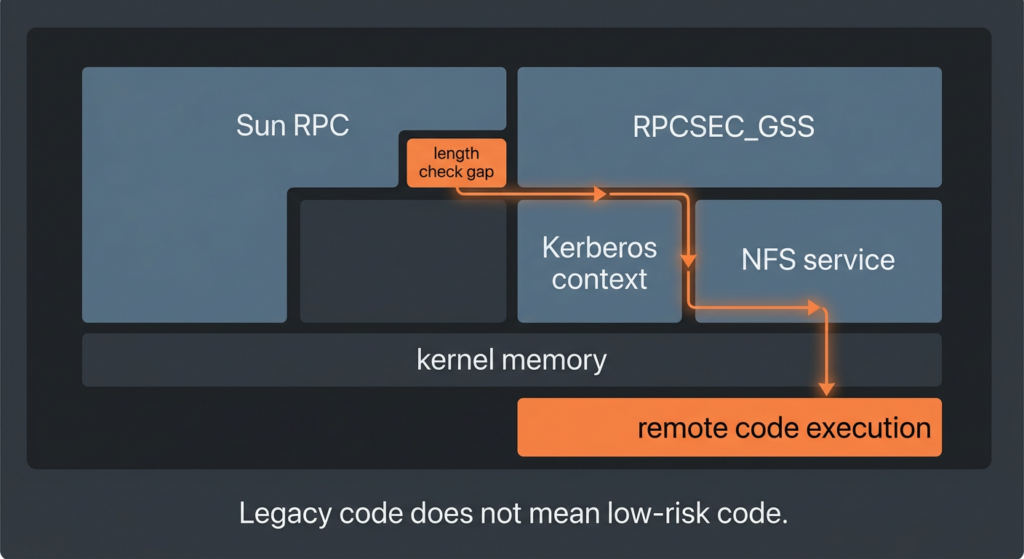

This is also where the industry’s broader threat trend matters. TRM Labs reported that illicit actors stole USD 2.87 billion across nearly 150 hacks in 2025 and argued that attackers had been moving up the stack toward keys, wallets, and control planes rather than relying only on traditional smart contract mistakes. Hyperbridge is an awkward but valuable reminder that proof systems, gateway logic, and token administration are all part of that control plane. A protocol can avoid a multisig bridge and still lose at the moment a verified message is allowed to become administrative power over a destination asset. (trmlabs.com)

Relevant CVEs that help explain this incident class

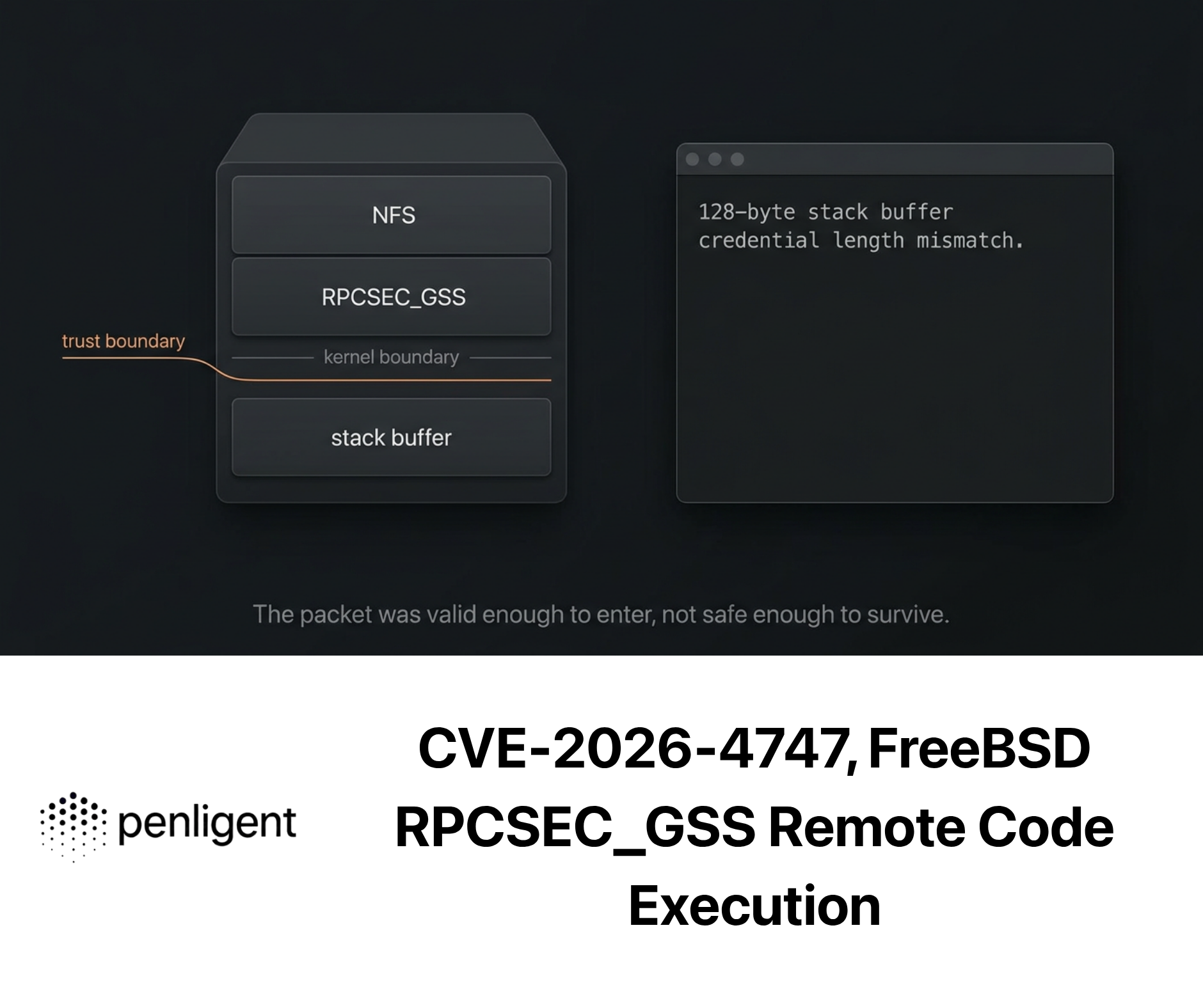

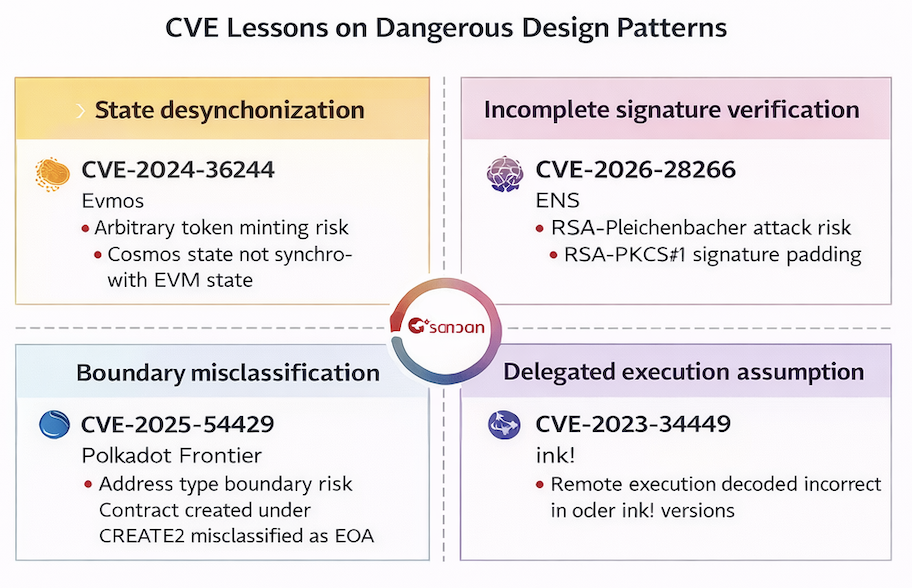

No CVE in this section is “the same bug” as the Hyperbridge exploit. That is not the purpose. The purpose is to show that the design mistakes bridge engineers fear most already recur across adjacent blockchain systems: state desynchronization, boundary misclassification, broken signature verification, and bad assumptions around delegated execution. Those are exactly the classes of failure that make cross-chain infrastructure brittle.

CVE-2024-32644 and the danger of cross-system state desynchronization

NVD describes CVE-2024-32644 in Evmos as an arbitrary-token-mint issue caused by two different states, the Cosmos SDK state and the EVM state, getting out of sync during transaction execution. The critical detail is not just “arbitrary mint.” It is the mechanism: state sync relied on stateDB.Commit(), and a contract storage pattern could exploit a case where state changed during the transaction but ended in a value equal to the origin state, making the transaction effectively non-atomic in a dangerous way. The vulnerability was patched in Evmos 17.0.0 and later. (NVD)

That has obvious relevance here even though Hyperbridge is a bridge and Evmos is an EVM-compatible chain. In both cases, security depends on consistent interpretation across system boundaries. In Evmos, the boundary was between Cosmos SDK and EVM state. In Hyperbridge, the boundary appears to have been between proof validation, request semantics, and destination-side authorization. Different architecture, same lesson: once two state machines disagree about what is authorized, arbitrary minting becomes a realistic outcome instead of a theoretical one. (NVD)

The remediation logic travels well. Evmos fixed the issue by patching the vulnerable synchronization behavior. For bridge builders, the analogue is to treat proof verification and authorization derivation as one atomic trust decision. A request must never become executable because one layer believes the proof is valid while another layer has not yet bound that proof to the exact action, asset, and nonce being requested. That is the bridge version of state atomicity. (NVD)

CVE-2025-54429 and the cost of getting address-type boundaries wrong

NVD describes CVE-2025-54429 in Polkadot Frontier, the Ethereum and EVM compatibility layer for Polkadot and Substrate, as a bug in the implementation of CallableByContract that incorrectly considered a contract address running under CREATE or CREATE2 to be AddressType::EOA rather than AddressType::Contract. GitHub’s CNA score marked it medium severity, and the issue was fixed in version 0822030. The official note also said it was not directly exploitable in predefined Frontier precompiles, but it remained relevant for users of custom precompile implementations that relied on those distinctions. (NVD)

Why does that matter for a bridge-side asset exploit? Because bridge security is full of hidden type assumptions. A proof is assumed to correspond to a given request. A request is assumed to come from a given module. A destination call is assumed to represent an authenticated remote intent rather than merely well-formed calldata. A destination asset admin change is assumed to be the result of an authorized governance action rather than a replayed verification artifact. Once the system misclassifies one of those boundaries, later checks become weaker than their authors think. Frontier’s address-type bug is a sharp illustration of how boundary classification bugs can quietly undermine higher-level policy. (NVD)

The practical lesson for bridge engineers is to distrust “impossible” contexts. If a role change must only come from an authenticated remote governance message, then the receiving path should explicitly encode that origin, not infer it from some intermediate execution environment that could be misclassified. Typed authorization should be made explicit, not emergent. That sounds obvious until a bridge grows enough abstraction layers for “obvious” to disappear. (NVD)
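One way to picture explicit, typed authorization is a message origin that is carried as data and checked directly. The sketch below is illustrative only: the `MessageOrigin` variants, the `GOVERNANCE_CHAIN` identifier, and `authorizeAdminChange` are hypothetical names, not taken from Hyperbridge or Frontier.

```typescript
// Hypothetical origins for an inbound message. These names are assumptions
// made for illustration and do not come from any real bridge codebase.
type MessageOrigin =
  | { kind: "remoteGovernance"; sourceChain: string; proposalId: string }
  | { kind: "remoteApplication"; sourceChain: string; module: string }
  | { kind: "localCall"; caller: string };

interface AdminChangeRequest {
  asset: string;
  newAdmin: string;
  origin: MessageOrigin;
}

// Assumed policy: only governance on this one source chain may change an asset admin.
const GOVERNANCE_CHAIN = "POLKADOT-0";

function authorizeAdminChange(req: AdminChangeRequest): boolean {
  const origin = req.origin;
  // Anything that is not an authenticated governance message is rejected,
  // no matter how well-formed its payload looks.
  if (origin.kind !== "remoteGovernance") return false;
  // The origin is stated by the message itself rather than inferred from
  // the execution context in which the call happens to arrive.
  return origin.sourceChain === GOVERNANCE_CHAIN;
}
```

The design point is that the rejection path is the default: a new origin variant added later fails closed until someone writes a rule that admits it.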

CVE-2026-22866 and why incomplete signature verification still means forgery

NVD describes CVE-2026-22866 in ENS as a failure in the RSASHA256Algorithm and RSASHA1Algorithm contracts to validate the PKCS#1 v1.5 padding structure when verifying RSA signatures. The contracts only checked whether the last bytes of the decrypted signature matched the expected hash. That allowed Bleichenbacher-style signature forgery for affected DNSSEC-backed claims under supported TLDs using low-exponent keys. The fix involved patched contracts and updated algorithm references. (NVD)

This is not a bridge bug, but it is deeply relevant to bridge thinking. It destroys the comforting idea that “we are safe because we verify signatures or proofs on-chain.” No, you are safe only if you verify the full thing you need to trust. Partial checks are often worse than no checks because they create a false sense of authorization. If Hyperbridge’s public root-cause story ultimately lands on something like proof replay or request-unbound verification, that would be the bridge equivalent of a signature verifier that only checked the easy part and skipped the context that makes the proof meaningful. (NVD)

The shared lesson is structural. Proof acceptance is not enough. The verifier must prove the right statement about the right subject at the right time for the right destination action. In cryptographic systems, missing context is not a documentation gap. It is often the vulnerability itself. (NVD)
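The difference between a partial check and a full one is easy to show on the decrypted signature block itself. The sketch below is not the ENS code; it contrasts a suffix-only comparison with a strict comparison against the full EMSA-PKCS1-v1_5 layout from RFC 8017 for SHA-256. The function names are made up for this example.

```typescript
// DER-encoded DigestInfo prefix for SHA-256, as listed in RFC 8017 section 9.2.
const SHA256_DIGEST_INFO = Uint8Array.from([
  0x30, 0x31, 0x30, 0x0d, 0x06, 0x09, 0x60, 0x86, 0x48, 0x01,
  0x65, 0x03, 0x04, 0x02, 0x01, 0x05, 0x00, 0x04, 0x20,
]);

// Flawed pattern: only the trailing bytes of the decrypted block are compared.
function suffixOnlyCheck(em: Uint8Array, hash: Uint8Array): boolean {
  if (em.length < hash.length) return false;
  const tail = em.subarray(em.length - hash.length);
  return tail.every((b, i) => b === hash[i]);
}

// Strict pattern: rebuild the whole expected block and compare every byte.
// Layout: 0x00 0x01 | 0xff padding (at least 8 bytes) | 0x00 | DigestInfo | hash
function strictPkcs1v15Check(em: Uint8Array, hash: Uint8Array): boolean {
  if (hash.length !== 32) return false;
  const padLen = em.length - 3 - SHA256_DIGEST_INFO.length - hash.length;
  if (padLen < 8) return false;
  const expected = new Uint8Array(em.length); // zero-filled by default
  expected[1] = 0x01;
  expected.fill(0xff, 2, 2 + padLen);
  expected.set(SHA256_DIGEST_INFO, 3 + padLen);
  expected.set(hash, 3 + padLen + SHA256_DIGEST_INFO.length);
  return expected.every((b, i) => b === em[i]);
}
```

A block whose middle bytes are attacker-chosen but whose tail equals the hash passes the first function and fails the second. The bridge analogue is a proof whose "tail" is valid state while the request it is supposed to authorize is left unchecked.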

CVE-2023-34449 and why delegated execution assumptions deserve paranoia

NVD describes CVE-2023-34449 in ink! as an incorrect decoding of return values when using delegate call mechanics in versions from 4.0.0 up to, but not including, 4.2.1. The fix was to upgrade to 4.2.1. This is not directly a bridge proof issue either, but it is highly relevant to the execution side of cross-chain design. The moment a remote message or governance action arrives, some local application logic must interpret it. If the execution environment or delegated-call assumptions are wrong, then a perfectly valid upstream authorization can still become a broken local effect. (NVD)

That matters because bridge incidents are often narrated as if there is one magical “bridge layer” and everything below it is boring. In reality, destination-side asset contracts, token gateways, and delegated application modules are part of the same blast radius. A bridge can verify a state commitment correctly and still lose if the receiving contract interprets the resulting call too broadly or too ambiguously. ink!’s delegate-call bug is a reminder that execution correctness is not a second-tier concern. In bridge systems, it is part of the trust boundary. (NVD)

The CVEs are easier to compare directly.

| CVE | System | Failure class | Why it helps explain Hyperbridge |
|---|---|---|---|
| CVE-2024-32644 | Evmos | State desynchronization causing arbitrary mint | Shows how multi-layer state inconsistency can turn into issuance failure |
| CVE-2025-54429 | Polkadot Frontier | Incorrect boundary classification for address type | Shows how category mistakes weaken policy assumptions |
| CVE-2026-22866 | ENS | Incomplete signature verification allowing forgery | Shows why partial cryptographic validation is not real validation |
| CVE-2023-34449 | ink! | Delegate call result handling error | Shows that destination-side execution assumptions are part of the trust boundary |

The point is not that bridges and all blockchain software are the same. The point is that authorization failures in multi-layer systems keep repeating in recognizable forms. (NVD)

How defenders should detect this class of failure

Most protocols discover these incidents too late because they watch price before they watch privilege. Price is a lagging signal. In the Hyperbridge case, the market crash followed a chain of far more meaningful events: a sensitive cross-chain message path executed, asset administration changed, mint authority was abused, destination supply exploded, and only then did liquidity venues express the damage. A strong defender pipeline flips that order. It treats admin events, role changes, abnormal mint emissions, and supply drift as first-class severity-one signals. (Ethereum (ETH) Blockchain Explorer)

The minimum telemetry set for a destination-side bridge representation should include the token contract, the token gateway, the message handler, and the host state store. From the token contract, you care about Transfer from the zero address, any role-granting events, and any admin changes. From the gateway, you care about AssetAdminChanged, new asset registration, and request-acceptance flows. From the handler and host, you care about unusual message-verification invocations, replay-like patterns, or surges in calls that are rare under normal operation. If those streams are not already correlated into one timeline, you are relying on human memory during the worst possible minute. (Ethereum (ETH) Blockchain Explorer)
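Correlating those streams does not require heavy tooling to get started. The sketch below assumes each watcher already emits a small normalized record; the `ChainEvent` shape, the `MintFromZero` label for a Transfer from the zero address, and both function names are hypothetical. It merges the streams into block order and pairs every admin change with any mint that follows it within a chosen number of blocks.

```typescript
interface ChainEvent {
  source: "token" | "gateway" | "handler" | "host";
  name: string; // e.g. "AssetAdminChanged" or "MintFromZero" (assumed labels)
  blockNumber: number;
  logIndex: number;
  txHash: string;
}

// Merge any number of per-contract streams into one chronologically ordered list.
function buildTimeline(...streams: ChainEvent[][]): ChainEvent[] {
  return ([] as ChainEvent[])
    .concat(...streams)
    .sort((a, b) => a.blockNumber - b.blockNumber || a.logIndex - b.logIndex);
}

// Pair each admin change with every zero-address mint that follows it
// within `windowBlocks` blocks. Expects the output of buildTimeline.
function adminChangeThenMint(
  timeline: ChainEvent[],
  windowBlocks: number,
): Array<[ChainEvent, ChainEvent]> {
  const pairs: Array<[ChainEvent, ChainEvent]> = [];
  timeline.forEach((ev, i) => {
    if (ev.name !== "AssetAdminChanged") return;
    for (const later of timeline.slice(i + 1)) {
      if (later.blockNumber - ev.blockNumber > windowBlocks) break;
      if (later.name === "MintFromZero") pairs.push([ev, later]);
    }
  });
  return pairs;
}
```

Any non-empty result from `adminChangeThenMint` is the kind of sequence that deserves a page rather than a dashboard entry.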

There is also a bridge-specific invariant that too many teams still do not operationalize: destination representation supply should be continuously reconcilable against source-side collateral, reserves, or governance-approved issuance state. That does not mean the reconciliation must happen synchronously on-chain for every user-facing read. It means the protocol must have a machine-verifiable way to answer, at short intervals, whether bridged supply is still within authorized bounds. If a single exploit can print one billion units before any alarm sees the supply delta, the monitoring model is not fit for cross-chain assets. (Ethereum (ETH) Blockchain Explorer)
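A reconciliation check of this kind can be very small once the two numbers are available. The sketch below assumes an off-chain job has already fetched the destination `totalSupply` and a source-side locked amount, both in the same decimals; the `SupplySnapshot` fields, the tolerance parameter, and the three verdicts are illustrative choices, not a described Hyperbridge mechanism.

```typescript
interface SupplySnapshot {
  bridgedSupply: bigint;  // totalSupply() of the destination-side token
  sourceLocked: bigint;   // amount locked or accounted for on the source side
  approvedBuffer: bigint; // governance-approved headroom, zero if none
}

type Verdict = "ok" | "drift" | "breach";

// Classify a snapshot. `driftToleranceBps` is the excess over the authorized
// amount, in basis points, that is attributed to cross-chain timing skew.
function reconcile(s: SupplySnapshot, driftToleranceBps: bigint): Verdict {
  const authorized = s.sourceLocked + s.approvedBuffer;
  if (s.bridgedSupply <= authorized) return "ok";
  const excess = s.bridgedSupply - authorized;
  // Integer comparison of excess / authorized <= tolerance / 10_000.
  if (excess * 10_000n <= authorized * driftToleranceBps) return "drift";
  return "breach";
}
```

Run at short intervals, a check like this turns "supply exploded" from something read off a price chart into an alert that fires on the supply delta itself.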

The first monitoring script worth writing is one that treats administrative drift as a catastrophic signal.

```typescript
import { JsonRpcProvider, Contract, ZeroAddress } from "ethers";

// Assumes ethers v6 and an ETH_RPC_URL environment variable pointing at a mainnet node.
const provider = new JsonRpcProvider(process.env.ETH_RPC_URL);

const GATEWAY = "0xFd413e3AFe560182C4471F4d143A96d3e259B6dE";
const DOT = "0x8d010bf9c26881788b4e6bf5fd1bdc358c8f90b8";

// Alert threshold for a single mint. Assumes the bridged token uses 18 decimals.
const LARGE_MINT = 10_000n * 10n ** 18n;

const gatewayAbi = [
  "event AssetAdminChanged(address asset, address newAdmin)"
];
const dotAbi = [
  "event Transfer(address indexed from, address indexed to, uint256 value)"
];

const gateway = new Contract(GATEWAY, gatewayAbi, provider);
const dot = new Contract(DOT, dotAbi, provider);

// Any admin change on the gateway is treated as a severity-one signal.
gateway.on("AssetAdminChanged", (asset, newAdmin, event) => {
  console.error("SEV-1 Asset admin change detected");
  console.error({ asset, newAdmin, txHash: event.log.transactionHash });
});

// A Transfer from the zero address is a mint; alert when it exceeds the threshold.
dot.on("Transfer", (from, to, value, event) => {
  if (from.toLowerCase() === ZeroAddress.toLowerCase() && value > LARGE_MINT) {
    console.error("SEV-1 Large mint detected");
    console.error({
      to,
      value: value.toString(),
      txHash: event.log.transactionHash
    });
  }
});
```

This script is intentionally simple. In production you would add rate limits, severity thresholds, historical baselining, and cross-checks against approved governance windows. But even this small monitor would have recognized the shape of the Hyperbridge exploit earlier than a price-only workflow. (Ethereum (ETH) Blockchain Explorer)

There is a second layer that should exist before the first exploit, not after it: property-based or invariant testing of the bridge asset model. The invariant can be stated in plain English before it is written in code. No inbound cross-chain message should ever cause the destination representation supply to exceed the authorized source-side locked or accounted amount, except under explicitly approved administrative procedures that are separately observable and rate-limited. If that invariant is not encoded somewhere, teams are trusting architecture diagrams more than executable security guarantees. (docs.hyperbridge.network)

A minimal invariant sketch looks like this.

```solidity
/// Pseudocode for bridge-side supply integrity

function invariant_supplyBoundedByAuthorizedState() public view {
    uint256 bridgedSupply = dot.totalSupply();
    uint256 authorizedSupply = sourceState.lockedAmount(assetId) + approvedEmergencyBuffer(assetId);
    assert(bridgedSupply <= authorizedSupply);
}

function invariant_adminChangesAreGoverned() public view {
    // No admin change has been recorded yet, so there is nothing to check.
    if (lastAdminChange.assetId == bytes32(0)) return;
    assert(lastAdminChange.executedThroughGovernanceWindow);
    assert(lastAdminChange.delayElapsed);
    assert(lastAdminChange.sourceCommitmentBoundToRequestHash);
}
```

The exact implementation will vary with architecture, but the security logic should not. A bridge asset should have a machine-readable statement of how much it is allowed to exist, and an admin change should have a machine-readable statement of who is allowed to cause it and through what path. (docs.hyperbridge.network)

For teams that need to turn those checks into repeatable verification workflows rather than one-off manual reviews, the relevant operational problem is not “find a cooler dashboard.” It is building a pipeline that can connect static logic review, event monitoring, proof-path inspection, and evidence capture. That is one reason Penligent’s blockchain-focused material is relevant here. Its blockchain smart contract work on race conditions emphasizes evidence bundles, transaction traces, and non-destructive verification, while its longer blockchain red-teaming piece explicitly calls out cross-chain inconsistencies as a modern attack surface. Those are the right instincts for bridge security even when the exact exploit class is different. (Penligent)

Engineering changes that would have reduced blast radius

The first engineering requirement is strict binding between proof and request. If the preliminary replay explanation is correct, then a valid proof was accepted in a context broader than the protocol intended. The cure is not “more cryptography” in the abstract. The cure is narrower acceptance conditions. A verified proof should commit not only to finalized state but to the exact request hash, destination module, action type, nonce, asset identifier, and execution domain expected by the receiving contract. The receiving path should not be able to swap in a new body while reusing an old proof. (KuCoin)
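A minimal sketch of that binding follows, assuming a hypothetical `BridgeRequest` shape and a proof object that exposes the request commitment it attests to. SHA-256 stands in for the hash only because it ships with Node; an EVM deployment would more likely use keccak256, and none of these names describe Hyperbridge's actual encoding.

```typescript
import { createHash } from "node:crypto";
import { Buffer } from "node:buffer";

// Hypothetical request shape. The fields mirror the list in the paragraph above.
interface BridgeRequest {
  sourceChain: string;
  destChain: string;   // execution domain
  destModule: string;
  action: string;
  assetId: string;
  nonce: bigint;
  body: string;        // hex-encoded payload
}

// Commit to every field. Each field is length-prefixed so that shifting bytes
// between neighbouring fields cannot produce the same serialization.
function requestCommitment(req: BridgeRequest): string {
  const h = createHash("sha256");
  const fields = [
    req.sourceChain, req.destChain, req.destModule,
    req.action, req.assetId, req.nonce.toString(), req.body,
  ];
  for (const field of fields) {
    const bytes = Buffer.from(field, "utf8");
    const len = Buffer.alloc(4);
    len.writeUInt32BE(bytes.length);
    h.update(len);
    h.update(bytes);
  }
  return h.digest("hex");
}

// Assumed output of the proof verifier: the commitment that the proven state contains.
interface VerifiedProof {
  committedRequest: string;
}

// A request is executable only if it is exactly the one the proof committed to.
function acceptRequest(proof: VerifiedProof, req: BridgeRequest): boolean {
  return proof.committedRequest === requestCommitment(req);
}
```

With this shape, reusing an old proof with a new body fails at `acceptRequest`, because the recomputed commitment no longer matches what the proof attests.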

The second engineering requirement is one-time consumption. Replays are not only about signature reuse. They are about any artifact that remains valid longer than intended or valid in more contexts than intended. Message receipts, request commitments, processed proof identifiers, and action-specific nonces should all participate in replay resistance. If the protocol lets a historically valid proof remain combinable with a semantically different request, then the bug is already in the system even before an attacker finds the profitable asset to abuse. (docs.hyperbridge.network)
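One-time consumption can be sketched with two small pieces of state: a set of already-consumed commitments and a per-channel expected nonce. Both classes below are hypothetical illustrations, and the channel key format is an assumption. On-chain, the same idea would typically live in contract storage mappings.

```typescript
// Refuses any commitment that has been consumed before.
class ReplayGuard {
  private readonly consumed = new Set<string>();

  // Returns true exactly once per commitment. Later attempts return false.
  consume(commitment: string): boolean {
    if (this.consumed.has(commitment)) return false;
    this.consumed.add(commitment);
    return true;
  }
}

// Enforces strictly sequential nonces per channel, starting at zero.
class NonceTracker {
  private readonly next = new Map<string, bigint>();

  accept(channel: string, nonce: bigint): boolean {
    const expected = this.next.get(channel) ?? 0n;
    if (nonce !== expected) return false; // replayed or out-of-order
    this.next.set(channel, expected + 1n);
    return true;
  }
}
```

Neither check helps on its own if the consumed identifier does not cover the full request. The identifier has to be derived from everything that gives the message its meaning.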

The third requirement is privilege isolation on the destination asset. The Ethereum-side DOT contract exposed high-impact capabilities like changeAdmin, mint, and role grants. That does not automatically mean the design was unsound. Many bridged assets need administrative logic. The problem is when bridge message acceptance and destination asset administration sit too close together. A remote message path that can eventually trigger an asset-admin change is a control plane. Treating it like an ordinary application callback is an architectural mistake. The more valuable the bridged asset, the more those powers should be split, rate-limited, delayed, and supervised. (Ethereum (ETH) Blockchain Explorer)

One practical pattern is to make asset-admin changes go through a separately governed window that cannot execute in the same transaction as proof acceptance. Another is to restrict bridge messages so they can only request narrow, typed state transitions, never arbitrary “admin changed to X” semantics. A third is to make mint authority depend on a vault-side bounded accounting rule rather than on an unstructured role that can issue unlimited supply once compromised. None of these patterns is novel. Their value is cumulative. Bridges fail when teams use one and skip the other two. (docs.hyperbridge.network)
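The third pattern, mint authority bounded by accounting rather than by an open-ended role, can be modelled in a few lines. The `BoundedMinter` class below is a hypothetical off-chain model with made-up parameter names. It combines a hard bound (never mint more than is recorded as locked) with a per-window rate limit.

```typescript
class BoundedMinter {
  private lockedOnSource: bigint;
  private readonly maxPerWindow: bigint;
  private readonly windowSeconds: number;
  private minted = 0n;
  private mintedInWindow = 0n;
  private windowStart = 0;

  constructor(lockedOnSource: bigint, maxPerWindow: bigint, windowSeconds: number) {
    this.lockedOnSource = lockedOnSource;
    this.maxPerWindow = maxPerWindow;
    this.windowSeconds = windowSeconds;
  }

  // Called when the source side reports additional locked collateral.
  recordLock(amount: bigint): void {
    this.lockedOnSource += amount;
  }

  // Returns true and updates the accounting only if both limits hold.
  mint(amount: bigint, nowSeconds: number): boolean {
    if (amount <= 0n) return false;
    if (nowSeconds - this.windowStart >= this.windowSeconds) {
      this.windowStart = nowSeconds; // start a fresh rate-limit window
      this.mintedInWindow = 0n;
    }
    if (this.minted + amount > this.lockedOnSource) return false;       // accounting bound
    if (this.mintedInWindow + amount > this.maxPerWindow) return false; // rate limit
    this.minted += amount;
    this.mintedInWindow += amount;
    return true;
  }
}
```

Under a rule like this, a compromised minter is capped by the locked amount and slowed by the window, which changes the worst case from "unlimited supply" to "bounded and noisy".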

The fourth requirement is a real circuit breaker for destination representations. Bithumb’s official caution notice and CertiK Pulse’s Upbit summary show the market side improvising a response after the exploit surfaced. Protocols should not leave that burden entirely to exchanges. If a bridged asset supply deviates violently from expected bounds, the destination-side token or gateway should have a predefined emergency lane to stop further privileged actions and clearly mark the representation as unsafe for routing. That does not mean every system should become centrally pausable by one multisig. It means the protocol should recognize that integrity failure of a synthetic asset is not the same kind of event as ordinary user transfers. (Bithumb)
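As a rough model of such an emergency lane, the sketch below trips automatically when supply jumps by more than a configured fraction between two observations, then refuses privileged actions while still letting ordinary transfers through. The class name, the action labels, and the choice to leave transfers open are all assumptions made for illustration, not a description of any deployed contract.

```typescript
type TokenAction = "transfer" | "mint" | "changeAdmin" | "grantRole";

class SupplyCircuitBreaker {
  private tripped = false;
  private lastSupply: bigint;
  private readonly maxJumpBps: bigint;

  constructor(initialSupply: bigint, maxJumpBps: bigint) {
    this.lastSupply = initialSupply;
    this.maxJumpBps = maxJumpBps;
  }

  // Feed in each new supply reading. An increase larger than maxJumpBps
  // (basis points of the previous reading) latches the breaker.
  observeSupply(current: bigint): void {
    const increase = current > this.lastSupply ? current - this.lastSupply : 0n;
    if (increase * 10_000n > this.lastSupply * this.maxJumpBps) {
      this.tripped = true; // stays tripped; there is deliberately no reset here
    }
    this.lastSupply = current;
  }

  // Once tripped, only ordinary transfers remain allowed.
  isAllowed(action: TokenAction): boolean {
    return !this.tripped || action === "transfer";
  }

  // Routers and integrators can poll this flag before quoting the asset.
  isUnsafeForRouting(): boolean {
    return this.tripped;
  }
}
```

The trigger here is a rule rather than a key holder, which is one way to get an emergency stop without handing a single multisig a general pause switch. How the breaker is reset is left open on purpose, since that is a governance decision.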

The fifth requirement is to treat the bridge as both a protocol and a market infrastructure component. Hyperbridge’s own posts emphasized DOT liquidity, accessibility, and cross-ecosystem usability. Once a bridge takes on that role, security design must include how quickly DEX pools, exchange notices, token metadata, and routing systems will recognize an integrity failure. A protocol that thinks only in terms of proofs and contracts may still lose socially because the market keeps believing the representation for too long. (Hyperbridge)

What exchanges, market makers, and token issuers should do in the first hour

The first job is chain-specific language. Bithumb’s notice is a useful example because it referenced the transaction and framed the event as a Polkadot-related security incident driving volatility, while also explicitly stating that DOT deposits and withdrawals were suspended. In a bridge-side exploit, every exchange and wallet should identify the exact network path affected rather than freezing communication into a generic “asset risk” warning. Users need to know whether Ethereum bridged DOT is unsafe, whether native DOT is still transferable on its own rails, and whether redemption assumptions are suspended. (Bithumb)

The second job is to isolate the market path even before the final RCA is published. CertiK Pulse’s summary that Upbit suspended Polkadot deposits and withdrawals is consistent with that principle. Market operators do not need full exploit anatomy before they decide that a bridge representation with broken integrity should not flow freely into their systems. Waiting for the perfect incident report is an operational luxury that fast-moving bridge markets do not offer. (skynet.certik.com)

The third job is evidence preservation. Every protocol and exchange touched by the asset should snapshot transaction receipts, event logs, current admin state, current role state, pool reserves, routing paths, and any proof data or request bodies still recoverable from application logs. Bridge incidents tend to create enormous hindsight confidence once a thread goes viral. The only cure is early evidence preservation before hot takes rewrite the timeline. (Ethereum (ETH) Blockchain Explorer)

The fourth job is scope expansion. Crypto Times reported that this may have been the second exploit of the same system on the same day, with an earlier incident involving MANTA and CERE tokens. That claim is plausible enough to matter operationally but still not official enough to treat as final fact. In practice, that means defenders should immediately audit whether any other assets share the same gateway, handler, or proof-processing path. A bridge exploit is rarely an “only this token” event until proven otherwise. (The Crypto Times)

What still needs confirmation from Hyperbridge and Polkadot

The exploit itself does not need confirmation. The chain confirms it. The open questions now are the ones only the protocol team can answer cleanly. Was the root cause truly an MMR proof replay issue, or some adjacent request-binding bug in the same family? Did the vulnerability affect only DOT or a wider class of assets under the same gateway path? Were any mitigations deployed on-chain already? Were bridge operations paused selectively or globally? And what exact invariants will be changed so the same verified proof cannot authorize a semantically different request in the future? (Ethereum (ETH) Blockchain Explorer)

There is also a communication question that matters for integrators. Hyperbridge previously positioned itself as a proof-driven alternative to legacy trust-heavy bridges and described its architecture as secure, on-chain verification of cross-chain messages via advanced consensus proofs such as BEEFY and zk-light clients. If the protocol is now dealing with a verification-path failure, then the eventual public writeup needs to do more than say “funds are safe now.” It needs to explain which security claim failed, which still holds, and how external integrators should re-evaluate trust in the destination-side asset model. (Hyperbridge)

That matters beyond this one exploit. Bridge incidents are rarely only about the stolen amount. They are about what the ecosystem learns about the protocol’s real trust boundary. If a bridge says it replaced multisig trust with proof trust, then the postmortem must tell engineers whether the failure lived in proof validity, proof consumption, proof binding, request construction, or local execution semantics. Without that precision, the next integration is being asked to trust the same black box with slightly different marketing language. (docs.hyperbridge.network)

Closing judgment

The Hyperbridge exploit is a useful corrective to two bad habits in crypto security writing. One is the habit of collapsing every cross-chain incident into “bridge hacked, funds gone.” The other is the habit of assuming that a protocol built around cryptographic proofs is automatically safer than one built around ugly but obvious trust assumptions. Neither simplification survives contact with this event.

What happened here was more specific and more instructive. A destination-side DOT representation on Ethereum lost integrity because the authorization path that was supposed to connect cross-chain state to destination execution appears to have accepted a forged or improperly bound message. That let the attacker cross the most dangerous boundary in the design: the boundary between message acceptance and asset administration. From there, minting one billion bridged DOT was not magic. It was the expected effect of having the keys to the wrong room. (The Crypto Times)

The core lesson is not “never use bridges.” It is narrower and more useful. If a cross-chain protocol allows remote state to influence destination-side asset control, then proof validity, request binding, replay resistance, role isolation, and supply invariants are one security problem, not five separate ones. Miss one, and the whole architecture can still reduce to “attacker prints synthetic value and races the market to cash it out.” Hyperbridge is just the latest reminder. Wormhole, Nomad, Ronin, and multiple recent blockchain CVEs already taught the same thing in different dialects. (Google Cloud)

Further reading and references

For the primary record of what happened on-chain, start with the exploit transaction on Etherscan and the Ethereum-side DOT token contract page, then match those to Hyperbridge’s own EVM architecture and mainnet contract address documentation. (Ethereum (ETH) Blockchain Explorer)

For current incident tracking and early market response, the most useful public references are CertiK Pulse, Bithumb’s official warning notice, and reporting that clearly distinguishes bridged DOT on Ethereum from native DOT on Polkadot. (skynet.certik.com)

For bridge history and failure-pattern comparison, the strongest references are Mandiant’s analysis of Nomad, CertiK’s Wormhole incident analysis, and Ronin’s official postmortem. (Google Cloud)

For closely related vulnerability classes in blockchain systems, the most relevant NVD records are CVE-2024-32644, CVE-2025-54429, CVE-2026-22866, and CVE-2023-34449. (NVD)

For related Penligent reading that is genuinely relevant to blockchain attack surfaces rather than forced brand placement, see Penligent’s piece on automated blockchain smart contract vulnerability detection and its longer essay on cross-chain inconsistencies and autonomous red teaming in blockchain applications. (Penligent)