Jackson is not “just a JSON library” in most shops. It is an infrastructure dependency: it sits on API boundaries, service-to-service payloads, streaming pipelines, gateways, ingestion jobs, and message consumers. That’s why GHSA-72hv-8253-57qq matters even though its headline is “only DoS.” The issue is not a theoretical micro-optimization flaw. It’s a consistency break in constraint enforcement: Jackson’s synchronous parser enforces numeric length limits, while the non-blocking async parser path silently bypasses them, enabling unbounded resource consumption in a class of applications that often exist precisely to handle large volumes of data efficiently. (GitHub)

This write-up is built from primary sources: the GitHub-reviewed advisory, the upstream Jackson security advisory and patch, the release notes that reference the fix, and the relevant API docs for the constraint system. Where it helps with real-world operations, it also pulls in ecosystem signals from downstream projects and scanners that surfaced GHSA-72hv-8253-57qq in practice. (GitHub)

What GHSA-72hv-8253-57qq is, in one paragraph

GHSA-72hv-8253-57qq is a high-severity vulnerability in com.fasterxml.jackson.core:jackson-core in which the non-blocking async JSON parser bypasses StreamReadConstraints.maxNumberLength (default 1000). An attacker who can send JSON to an application that uses this async parser path can include an arbitrarily long number token. That forces unbounded memory allocation in buffering and potentially severe CPU exhaustion during numeric conversions, resulting in a denial of service. (GitHub)

That “uses the async parser path” clause is the operational pivot. Not every Jackson deployment is equally exposed; the difference between synchronous parsing and non-blocking parsing matters here. (GitHub)

Key facts you can paste into a security advisory without hand-waving

Affected and fixed versions, jackson-core 2.x and 3.x

The GitHub-reviewed advisory lists the affected and patched ranges as follows:

- Affected: >= 2.0.0, <= 2.18.5; >= 2.19.0, < 2.21.1; >= 3.0.0, < 3.1.0

- Patched: 2.18.6, 2.21.1, 3.1.0 (GitHub)

GitLab’s advisory database mirrors the fixed versions and expresses the affected ranges as “from 2.0.0 before 2.18.6, from 2.19.0 before 2.21.1, from 3.0.0 before 3.1.0.” (GitLab Advisory Database)

| Component | Vulnerable ranges | Fixed versions |
|---|---|---|
| jackson-core 2.x | 2.0.0 → 2.18.5, 2.19.0 → 2.21.0 | 2.18.6, 2.21.1 |
| jackson-core 3.x | 3.0.0 → 3.0.x (before 3.1.0) | 3.1.0 |

The upstream security advisory for Jackson-core also frames the issue as an async parser number-length constraint bypass and emphasizes the same constraint default (1000) and DoS impact. (GitHub)

Severity and scoring nuance

- GitHub marks it High and shows a CVSS v4 base score of 8.7, with Availability impact rated High. (GitHub)

- GitLab lists a CVSS 3.1 vector and a 7.5 High score. (GitLab Advisory Database)

This difference isn’t “disagreement” about risk; it’s primarily different scoring versions and publication contexts. If your org standardizes on CVSS v3.1, use the GitLab vector as a compatible reference; if you’re already moving to CVSS v4, the GitHub advisory is immediately usable. (GitHub)

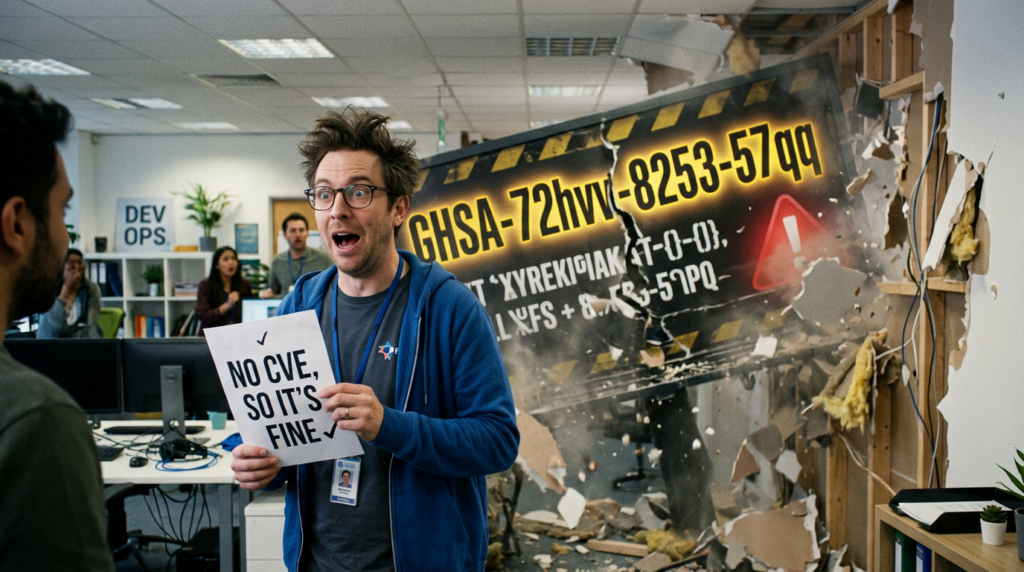

Does it have a CVE

According to the GitHub-reviewed advisory page, there is no known CVE ID for GHSA-72hv-8253-57qq. (GitHub)

That matters for tooling and workflows, because many enterprise pipelines still key off CVE IDs. You should treat this as a first-class vulnerability anyway: GHSA identifiers are stable, machine-consumable, and recognized across modern SCA tooling—just not always in older “CVE-only” gates. (GitHub)

Why this vulnerability is different from the usual Jackson risk stories

Most engineers associate “Jackson vulnerabilities” with deserialization gadget chains in jackson-databind. That history is real and still relevant, but GHSA-72hv-8253-57qq belongs to a different category: it is a streaming constraint bypass in jackson-core, not an object-mapper gadget exploit.

That distinction changes:

- Where the risk lives: This sits at the parsing layer and can affect any code or framework that uses the non-blocking parser path, including reactive stacks.

- What “exploitability” looks like: No classpath gadget hunting is needed. The attacker’s input is “just JSON,” but crafted to stress numeric parsing and buffering.

- What defenses work: Classic “disable default typing” style mitigations are irrelevant. Constraint enforcement, request limits, and upgrades are the right levers.

The advisory explicitly frames the attack as sending a JSON document with an arbitrarily long number to an application using the async parser, with Spring WebFlux called out as a representative environment. (GitHub)

The constraint that is supposed to protect you, StreamReadConstraints and maxNumberLength

Jackson introduced a stronger concept of read-time guardrails in StreamReadConstraints: limits intended to prevent resource blowups during parsing, especially with adversarial or unexpected payloads.

The key setting here is maxNumberLength, which is the maximum length of a single numeric value in the stream. The official Javadoc for the builder method states that the default is 1000. (JavaDoc)

In other words, a JSON document such as:

```
{"v": 1111111111...}
```

should be rejected once the number token length crosses the configured limit. The synchronous parser enforces that. The async parser path, in vulnerable versions, does not. (GitHub)
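The following minimal sketch shows where that limit lives in the 2.x API. It applies to 2.15 or later, which is when StreamReadConstraints was added. The code builds a JsonFactory with a tighter maxNumberLength. The class and method names are illustrative assumptions, and in 3.x the equivalent types live under tools.jackson.core. On vulnerable versions, the async parser ignores this setting just as it ignores the default, so tightening it does not mitigate anything until you upgrade.

```java
import com.fasterxml.jackson.core.JsonFactory;
import com.fasterxml.jackson.core.JsonFactoryBuilder;
import com.fasterxml.jackson.core.StreamReadConstraints;

// Sketch for jackson-core 2.15+ (2.x packages). Class and method names are illustrative.
public final class NumberLimitConfig {

    /** Builds a JsonFactory whose parsers should reject number tokens longer than maxLength. */
    public static JsonFactory factoryWithMaxNumberLength(int maxLength) {
        StreamReadConstraints constraints = StreamReadConstraints.builder()
                .maxNumberLength(maxLength) // library default is 1000
                .build();
        return new JsonFactoryBuilder()
                .streamReadConstraints(constraints)
                .build();
    }

    public static void main(String[] args) {
        System.out.println("default limit: "
                + new JsonFactory().streamReadConstraints().getMaxNumberLength());
        System.out.println("tightened limit: "
                + factoryWithMaxNumberLength(100).streamReadConstraints().getMaxNumberLength());
    }
}
```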

Root cause, what actually breaks in the async path

The GitHub advisory’s “Details” section is unusually specific and useful for engineers. The async parsing path in NonBlockingUtf8JsonParserBase accumulates digits into TextBuffer but does not call the methods where numeric length validation occurs. The synchronous parsers go through resetInt() or resetFloat() in ParserBase, which call validateIntegerLength() and validateFPLength(). The async path finalizes via _valueComplete() without triggering that validation, so the length constraint is never enforced. (GitHub)

This is the important mental model:

- The guardrail exists.

- One code path uses it.

- The other code path bypasses it.

That inconsistency is exactly the kind of vulnerability that survives for a while in mature libraries: the feature is present, tests cover one path, and production deployments drift into the other path due to framework defaults or performance refactors.
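The pattern can be modeled in a few lines. This is a deliberately simplified, hypothetical model, not Jackson's code, and the class and method names are invented. It has one shared guardrail and a synchronous-style finalizer that calls it. It also has an async-style finalizer that, like vulnerable versions, skips the guardrail, plus a patched variant that calls it.

```java
// Hypothetical model of the bug class, NOT Jackson source code. NumberTokenModel and its
// finish* methods are invented names; a StringBuilder stands in for Jackson's TextBuffer.
public final class NumberTokenModel {
    private final int maxNumberLength;
    private final StringBuilder digits = new StringBuilder();

    public NumberTokenModel(int maxNumberLength) {
        this.maxNumberLength = maxNumberLength;
    }

    /** Both paths buffer every digit they receive. */
    public void append(char digit) {
        digits.append(digit);
    }

    /** The shared guardrail, playing the role of validateIntegerLength(). */
    private void validateLength() {
        if (digits.length() > maxNumberLength) {
            throw new IllegalStateException("Number length (" + digits.length()
                    + ") exceeds the maximum length (" + maxNumberLength + ")");
        }
    }

    /** Synchronous-style completion: routes through the guardrail, like resetInt(). */
    public String finishSync() {
        validateLength();
        return digits.toString();
    }

    /** Async completion as in vulnerable versions: finishes the token, never validates. */
    public String finishAsyncVulnerable() {
        return digits.toString();
    }

    /** Async completion after the fix: calls the same guardrail as the sync path. */
    public String finishAsyncPatched() {
        validateLength();
        return digits.toString();
    }
}
```

With a limit of 3 and five appended digits, finishSync() and finishAsyncPatched() throw, while finishAsyncVulnerable() returns "12345".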

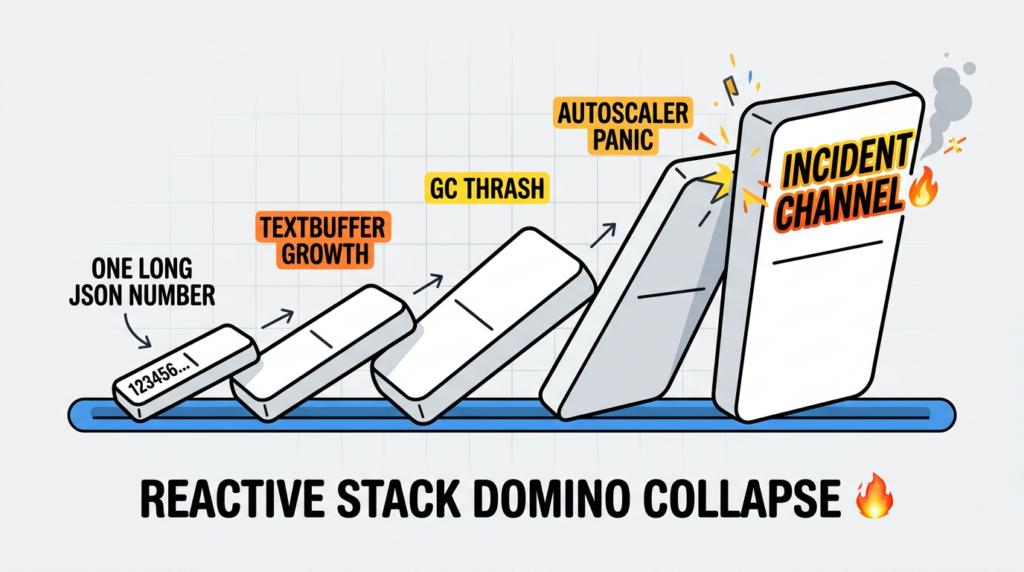

Impact in the real world, memory first, CPU second

The advisory describes two distinct DoS dimensions:

- Memory exhaustion from unbounded growth of the buffer storing the numeric token’s digits. (GitHub)

- CPU exhaustion if the application later converts the token into BigInteger or BigDecimal, potentially tying up CPU in expensive parsing behavior. (GitHub)

Those are not mutually exclusive. In practice, the worst incidents often follow a pattern:

- A burst of requests arrives with a small number of “poison” payloads.

- A subset of instances hit allocation pressure and begin GC thrashing.

- The instances that survive memory pressure still spend disproportionate CPU on parsing or downstream validation.

- Your autoscaler spins, your tail latencies explode, and your rate limits become the last line of defense.

The fact that this is a network-reachable input vector is what makes it operationally serious in API-heavy environments, and aligns with the CVSS metrics that treat it as network, low complexity, no privileges. (GitHub)
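To get a feel for the CPU side on your own hardware, try the rough experiment below. It uses plain Java 11+ and does not involve Jackson. It times java.math.BigInteger construction from increasingly long digit strings, standing in for an application that converts an oversized number token. It is not a benchmark: there is no warm-up, and absolute timings will vary by JVM and machine.

```java
import java.math.BigInteger;

// Rough local experiment, not a benchmark: times new BigInteger(String) for
// increasingly long digit strings, mimicking an oversized JSON number token
// that the application later converts. No JIT warm-up, so numbers are noisy.
public final class BigNumberConversionCost {

    /** Returns a string of n '7' digits (String.repeat needs Java 11+). */
    public static String digits(int n) {
        return "7".repeat(n);
    }

    /** Converts the digits to a BigInteger and returns the elapsed wall-clock nanoseconds. */
    public static long timeConversionNanos(String s) {
        long start = System.nanoTime();
        BigInteger value = new BigInteger(s);
        long elapsed = System.nanoTime() - start;
        if (value.signum() <= 0) {
            throw new IllegalStateException("unexpected value"); // also keeps the result in use
        }
        return elapsed;
    }

    public static void main(String[] args) {
        for (int n : new int[] {1_000, 10_000, 100_000}) {
            long micros = timeConversionNanos(digits(n)) / 1_000;
            System.out.printf("%,8d digits -> %,10d us%n", n, micros);
        }
    }
}
```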

Where you are most likely exposed, async parsing shows up more than you think

Reactive stacks and streaming decoders

You do not need to instantiate NonBlockingByteArrayJsonParser directly to be exposed. Frameworks can choose non-blocking parsing internally for performance or to match a reactive IO model.

The GHSA text explicitly calls out “Spring WebFlux or other reactive application” as a representative async parser usage context. (GitHub)

Spring’s WebFlux documentation states that the spring-web module provides Jackson encoders and decoders as part of the reactive stack. (Spring Framework reference)
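This path suits reactive stacks because it never blocks on input. The sketch below drives the non-blocking jackson-core 2.x API directly. Chunks are pushed into the parser through a ByteArrayFeeder, and nextToken() returns JsonToken.NOT_AVAILABLE instead of blocking when it needs more bytes. Reactive decoders follow broadly this feed-and-drain pattern, but the class name AsyncFeedDemo is hypothetical and this is not any framework's actual code.

```java
import com.fasterxml.jackson.core.JsonFactory;
import com.fasterxml.jackson.core.JsonParser;
import com.fasterxml.jackson.core.JsonToken;
import com.fasterxml.jackson.core.async.ByteArrayFeeder;

import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

// Sketch of the jackson-core 2.x non-blocking API: bytes arrive in chunks and are pushed
// into the parser; nextToken() returns NOT_AVAILABLE when it needs more input.
public final class AsyncFeedDemo {

    /** Parses JSON delivered as a sequence of byte chunks and returns the tokens seen. */
    public static List<JsonToken> parseChunks(List<byte[]> chunks) {
        List<JsonToken> tokens = new ArrayList<>();
        try (JsonParser parser = new JsonFactory().createNonBlockingByteArrayParser()) {
            ByteArrayFeeder feeder = (ByteArrayFeeder) parser.getNonBlockingInputFeeder();
            for (byte[] chunk : chunks) {
                // Safe to feed: the loop below drained all previously fed input.
                feeder.feedInput(chunk, 0, chunk.length); // (buffer, start offset, end offset)
                JsonToken t;
                while ((t = parser.nextToken()) != JsonToken.NOT_AVAILABLE && t != null) {
                    tokens.add(t);
                }
            }
            feeder.endOfInput(); // no more bytes: lets the parser complete the last token
            JsonToken t;
            while ((t = parser.nextToken()) != null) {
                tokens.add(t);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return tokens;
    }

    public static void main(String[] args) {
        // The number 12 is split across two chunks, as it might be across network reads.
        List<byte[]> chunks = List.of(
                "{\"a\":1".getBytes(StandardCharsets.UTF_8),
                "2}".getBytes(StandardCharsets.UTF_8));
        System.out.println(parseChunks(chunks));
    }
}
```

In this model the parser keeps buffering the digits of a number token across chunks until the token completes. That accumulation step is where the missing length check matters.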

Gateways, message consumers, and ingestion jobs

Reactive gateways and service meshes are obvious, but there are other recurring patterns:

- Event ingestion services that process JSON records and aim to avoid blocking calls

- Kafka consumers that deserialize JSON payloads at high throughput

- “Import” endpoints for logs, telemetry, or user uploads

Downstream projects noticed the impact quickly. Apache Kafka tracked GHSA-72hv-8253-57qq as a jackson-core vulnerability affecting Kafka versions and shipped fixes in their own release train, illustrating how widely embedded this dependency is. (Apache JIRA)

The practical threat model, when this becomes a real incident

A useful way to reason about GHSA-72hv-8253-57qq is to treat it as a parsing-layer amplification primitive:

- The attacker does not need to exceed your request body limit.

- The attacker does not need to send many fields.

- The attacker only needs a numeric token long enough to blow past the intended limit.

- Your service does the rest, allocating memory and burning CPU.

This becomes especially attractive to attackers when:

- Your endpoint is unauthenticated or cheaply authenticated: public APIs, signup endpoints, webhook receivers, telemetry endpoints.

- You operate a high-concurrency reactive server: more concurrent parsing means the blast radius scales faster with less attacker traffic.

- You convert numeric tokens into big-number types: for example, you map inputs into BigInteger or BigDecimal, or you run schema validation that triggers conversions.

- Your error handling leaks compute: retries, circuit-breaker storms, noisy logs, expensive exception formatting.

The advisory’s own impact narrative explicitly ties to memory exhaustion and CPU exhaustion paths. (GitHub)

Fast triage, am I vulnerable

You want two answers:

- Do I run a vulnerable version of jackson-core?

- Do I ever hit the vulnerable async parsing path?

Step 1, check your versions

If you have Maven:

```shell
mvn dependency:tree | grep "jackson-core"
```

Avoid adding -q here. dependency:tree logs the tree at INFO level, so quiet mode suppresses the output you need.

If you have Gradle:

```shell
./gradlew -q dependencies --configuration runtimeClasspath | grep "jackson-core"
```

Then compare the resolved version to the fixed versions 2.18.6, 2.21.1, 3.1.0. (GitHub)
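You may want this comparison in code, for example in a startup check or an internal audit tool. The sketch below encodes the advisory's ranges. It is a hypothetical helper, not part of Jackson. It assumes that 2.x minor lines after 2.21 and any 3.x release from 3.1.0 onward carry the fix.

```java
// Hypothetical helper (not part of Jackson): is a jackson-core version outside the
// GHSA-72hv-8253-57qq affected ranges (>= 2.0.0 <= 2.18.5, >= 2.19.0 < 2.21.1, >= 3.0.0 < 3.1.0)?
public final class JacksonCoreFixCheck {

    public static boolean isPatched(int major, int minor, int patch) {
        if (major == 2) {
            if (minor < 18) return false;       // 2.0 .. 2.17: affected
            if (minor == 18) return patch >= 6; // fixed in 2.18.6
            if (minor <= 20) return false;      // 2.19.x and 2.20.x: affected
            if (minor == 21) return patch >= 1; // fixed in 2.21.1
            return true;                        // assumption: later 2.x lines include the fix
        }
        if (major == 3) {
            return minor >= 1;                  // fixed in 3.1.0
        }
        return major > 3;                       // assumption: future majors include the fix
    }

    /** Accepts strings such as "2.18.6" or "2.21.1-SNAPSHOT". */
    public static boolean isPatched(String version) {
        String[] parts = version.split("[^0-9]+");
        if (parts.length < 3) {
            throw new IllegalArgumentException("Expected major.minor.patch, got: " + version);
        }
        return isPatched(Integer.parseInt(parts[0]), Integer.parseInt(parts[1]),
                Integer.parseInt(parts[2]));
    }
}
```

To check the jar that is actually loaded, rather than what the build declares, pass in new JsonFactory().version().toString(). Alternatively, call getMajorVersion(), getMinorVersion() and getPatchLevel() on that com.fasterxml.jackson.core.Version and use the int overload.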

If you run a vulnerable version but you believe “we don’t parse JSON asynchronously,” do not stop here. Frameworks may choose async parsing behind the scenes.

Step 2, look for async parser usage signals

Search your codebase for Jackson non-blocking parser classes:

```shell
grep -R --line-number -E "createNonBlocking|NonBlocking|Jackson2Tokenizer|AbstractJackson2Decoder" .
```

The goal is not to prove exactly which parser class is used in every path; it is to identify whether your application or frameworks are wired to non-blocking decoding. Spring’s long-standing issues and APIs around non-blocking Jackson decoders show that this is a real implementation detail in WebFlux-style stacks. (GitHub)

Step 3, map exposure to actual inputs

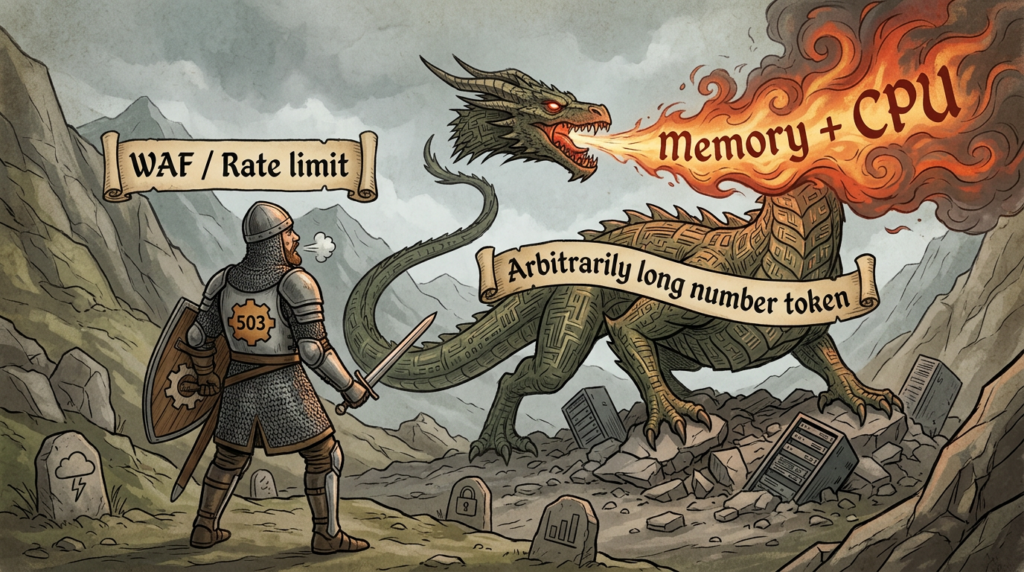

Even if async decoding exists, you may have compensating controls:

- Strict schema validation at the edge

- Body size limits in the gateway

- Rate limits

- Authentication requirements

Those controls reduce risk but do not eliminate it. A small payload can still contain a very long number token, and rate limiting is not a substitute for patching when a single request can trigger disproportionate cost.

Safe verification in a test environment, confirm the bug and confirm the fix

You do not need an HTTP “attack script” to validate this. A unit test that parses a JSON payload containing a number longer than the configured limit is enough to:

- reproduce the vulnerability in a controlled way

- verify that upgrading to a patched version fixes behavior

Below is a minimal JUnit 5 test sketch that avoids copying the upstream PoC verbatim while preserving the logic: the synchronous parser should reject, and the async parser should reject only after patching. It targets the jackson-core 2.x API (2.15 or later, where StreamConstraintsException exists).

```java
import com.fasterxml.jackson.core.JsonFactory;
import com.fasterxml.jackson.core.JsonParser;
import com.fasterxml.jackson.core.async.ByteArrayFeeder;
import com.fasterxml.jackson.core.exc.StreamConstraintsException;
import org.junit.jupiter.api.Test;

import java.nio.charset.StandardCharsets;

import static org.junit.jupiter.api.Assertions.assertThrows;

class JacksonAsyncNumberLengthRegressionTest {

    // Builds {"v":777...7} with the requested number of digits.
    private static byte[] jsonWithLongNumber(int digits) {
        StringBuilder sb = new StringBuilder(digits + 16);
        sb.append("{\"v\":");
        for (int i = 0; i < digits; i++) sb.append('7');
        sb.append("}");
        return sb.toString().getBytes(StandardCharsets.UTF_8);
    }

    @Test
    void syncParserShouldEnforceMaxNumberLength() {
        JsonFactory f = new JsonFactory();
        byte[] payload = jsonWithLongNumber(1500); // exceeds default 1000

        assertThrows(StreamConstraintsException.class, () -> {
            try (JsonParser p = f.createParser(payload)) {
                while (p.nextToken() != null) { /* drain */ }
            }
        });
    }

    @Test
    void asyncParserShouldAlsoEnforceMaxNumberLengthAfterUpgrade() throws Exception {
        JsonFactory f = new JsonFactory();
        byte[] payload = jsonWithLongNumber(1500);

        try (JsonParser p = f.createNonBlockingByteArrayParser()) {
            // The async parser is fed through its input feeder instead of a stream.
            ByteArrayFeeder feeder = (ByteArrayFeeder) p.getNonBlockingInputFeeder();
            feeder.feedInput(payload, 0, payload.length);
            feeder.endOfInput();

            // On vulnerable versions this may complete without throwing.
            // On fixed versions it should throw StreamConstraintsException.
            assertThrows(StreamConstraintsException.class, () -> {
                while (p.nextToken() != null) { /* drain */ }
            });
        }
    }
}
```

The upstream advisory includes a PoC test and explains the mismatch between sync and async enforcement as the core evidence of the bug. (GitHub)

For a stronger safeguard, make this test part of your CI gate so future dependency changes cannot silently reintroduce unsafe parsing behavior.

The real fix, upgrade to patched jackson-core versions

If you can upgrade, do it. This is the only durable remediation because the constraint bypass is inside the library.

The patched versions are:

- 2.18.6

- 2.21.1

- 3.1.0 (GitHub)

The upstream fix is tied to Jackson PR #1555, and the commit that references it adds release notes indicating enforcement of StreamReadConstraints.maxNumberLength for the non-blocking parser. (GitHub)

Maven, enforce a safe version even when it is transitive

In multi-module services or platforms that inherit Jackson via Spring Boot, Kafka, or other dependencies, you often need to override transitive versions explicitly.

A pragmatic approach is to pin jackson-core with dependencyManagement:

```xml
<dependencyManagement>
  <dependencies>
    <dependency>
      <groupId>com.fasterxml.jackson.core</groupId>
      <artifactId>jackson-core</artifactId>
      <version>2.21.1</version>
    </dependency>
  </dependencies>
</dependencyManagement>
```

Choose the fixed version for your release line: 2.18.6 if you are on 2.18.x, 2.21.1 for later 2.x lines, and 3.1.0 for 3.x. The goal is not “latest at all costs,” but “not in a vulnerable range.” (GitHub)

Gradle, use constraints to force fixed versions

```kotlin
dependencies {
    constraints {
        implementation("com.fasterxml.jackson.core:jackson-core:2.21.1") {
            because("Fixes GHSA-72hv-8253-57qq async parser maxNumberLength bypass")
        }
    }
}
```

Tie this to your internal vulnerability ticket so future maintainers understand why the constraint exists.

When you cannot upgrade immediately, mitigations that actually help

Mitigations do not replace patching, but they can buy you time and reduce incident likelihood.

Put hard limits at the edge, size and rate

Even though the attack can be “small but expensive,” payload size limits still matter because they cap the worst-case token length. For HTTP gateways, set request size limits in the gateway and in upstream proxies.

For reactive stacks, memory buffering limits are also a practical guardrail. Spring WebFlux commonly throws DataBufferLimitException when buffering exceeds a configured maximum, and guidance around spring.codec.max-in-memory-size is widely referenced for controlling that behavior. (Baeldung on Kotlin)

This does not specifically enforce numeric token limits, but it prevents unlimited buffering in many ingestion paths.
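If you are not on Spring Boot, or prefer code over properties, the programmatic counterpart in a Spring WebFlux server looks roughly like the configuration sketch below. It uses the Spring Framework 5.2 or later API. The class name is illustrative, and the 256 KB cap is an arbitrary example value, not a recommendation. Size the limit from your real payloads.

```java
import org.springframework.context.annotation.Configuration;
import org.springframework.http.codec.ServerCodecConfigurer;
import org.springframework.web.reactive.config.WebFluxConfigurer;

// Configuration sketch: caps how many bytes the default codecs (including the Jackson
// JSON decoder) may buffer in memory while decoding. 256 KB is an example value only.
@Configuration
public class CodecLimitsConfig implements WebFluxConfigurer {

    private static final int MAX_IN_MEMORY_BYTES = 256 * 1024;

    @Override
    public void configureHttpMessageCodecs(ServerCodecConfigurer configurer) {
        configurer.defaultCodecs().maxInMemorySize(MAX_IN_MEMORY_BYTES);
    }
}
```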

Avoid returning detailed parsing exceptions to clients

Resource exhaustion bugs and exception-message leakage bugs often stack. Jackson-core has had a separate information disclosure issue where exception messages could include unintended bytes from buffers in certain versions, recorded as CVE-2025-49128. (NVD)

In practice, you should treat “parsing exceptions” as internal signals:

- return generic client errors

- log structured error details internally

- avoid echoing raw exception strings in HTTP responses

This reduces the chance that a parsing failure becomes both a DoS vector and a data exposure vector when other bugs exist.
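Here is a minimal sketch of that separation in plain Java 16+ (needed for the record type). The client gets a fixed message and a correlation ID, while the exception and its message go only to the server log. ParseErrorResponses and ClientError are hypothetical names. In a real service you would hook this into your framework's exception-handling mechanism.

```java
import java.util.UUID;
import java.util.logging.Level;
import java.util.logging.Logger;

// Hypothetical helper: turns any body-parsing failure into a generic client error plus an
// opaque correlation ID; the full exception is written only to the internal log.
public final class ParseErrorResponses {
    private static final Logger LOG = Logger.getLogger(ParseErrorResponses.class.getName());

    /** Minimal response shape; a real service would map this to an HTTP 400 response. */
    public record ClientError(int status, String message, String correlationId) {}

    public static ClientError fromParseFailure(Throwable failure) {
        String correlationId = UUID.randomUUID().toString();
        // Full details (message and stack trace) stay server-side, keyed by the correlation ID.
        LOG.log(Level.WARNING, "Request body parse failure, correlationId=" + correlationId, failure);
        // Never echo failure.getMessage(): it may quote fragments of the input buffer.
        return new ClientError(400, "Malformed request body", correlationId);
    }
}
```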

Add field-level validation where it is cheap and high value

If you have known numeric fields that should never exceed certain digits, validate them early:

- request DTO validation

- schema validation

- streaming checks before conversion

Be careful: validation must be cheaper than the thing you are preventing. Don’t turn mitigation into another CPU-heavy step on attacker-controlled input.
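One cheap way to run a "streaming check before conversion" is a single linear pass over the raw bytes before they reach Jackson. The pass rejects any number-like run longer than a chosen limit. The sketch below is hypothetical and not a Jackson API. It allocates nothing and skips JSON string contents, so long digit strings inside quotes are not flagged. Treat it as a coarse filter that complements the upgrade rather than replacing it. As written it needs the whole body as a byte array. A chunked variant would have to carry inString, escaped and run across chunks.

```java
// Hypothetical pre-parse guard (not a Jackson API): one linear pass over UTF-8 bytes that
// reports any run of number characters, outside JSON strings, longer than maxNumberChars.
public final class LongNumberPrecheck {

    public static boolean hasOverlongNumber(byte[] json, int maxNumberChars) {
        boolean inString = false;
        boolean escaped = false;
        int run = 0; // length of the current run of number characters outside strings
        for (byte b : json) {
            if (inString) {
                // Inside a string: only track escapes and the closing quote.
                if (escaped) {
                    escaped = false;
                } else if (b == '\\') {
                    escaped = true;
                } else if (b == '"') {
                    inString = false;
                }
                continue;
            }
            if (b == '"') {
                inString = true;
                run = 0;
            } else if ((b >= '0' && b <= '9') || b == '-' || b == '+'
                    || b == '.' || b == 'e' || b == 'E') {
                if (++run > maxNumberChars) {
                    return true; // stop early; no need to scan the rest
                }
            } else {
                run = 0; // any other byte ends the current number-like run
            }
        }
        return false;
    }
}
```

UTF-8 multi-byte sequences use bytes at or above 0x80, so they can never be mistaken for the quote, backslash, or digit characters the scan looks for.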

Detection and governance, make sure your tooling catches GHSA IDs

Why GHSA-72hv-8253-57qq can slip through CVE-only processes

The advisory explicitly lists no known CVE. (GitHub)

Some scanners and reporting pipelines still assume “vulnerability = CVE,” which can lead to false negatives in dashboards, SBOM exports, and compliance reports.

A practical example: a Trivy discussion referenced GHSA-72hv-8253-57qq metadata in GitHub’s advisory database JSON and noted scoring differences when exporting CycloneDX. This is the kind of integration gap that can make a vulnerability invisible in one format but visible in another. (GitHub)

Make your SCA policy accept GHSA as first-class

If you rely on GitHub Advanced Security, Dependabot alerts, or GitHub Advisory Database feeds, you already have a natural path to ingest GHSA identifiers. (GitHub)

If you rely on third-party advisories, GitLab and Snyk both carry GHSA-72hv-8253-57qq entries that mirror the technical summary and fixed versions. (GitLab Advisory Database)

Jackson ecosystem context, related high-impact CVEs you should understand

GHSA-72hv-8253-57qq is best understood as part of a broader class: “untrusted JSON can become unbounded work.” Jackson has been actively adding constraints like max depth and max string length to avoid these failure modes.

Here are related, high-impact entries that frequently appear in production Jackson inventories:

| Identifier | Component | Class of issue | Why it matters |
|---|---|---|---|
| CVE-2025-52999 | jackson-core | Deep nesting DoS via StackOverflowError, fixed with default max depth 1000 | Same theme: constraints prevent attacker-controlled parser blowups (NVD) |
| CVE-2025-49128 | jackson-core | Exception message may include unintended bytes from buffers | Risk can be amplified by frameworks that surface parse errors to clients (NVD) |
| CVE-2020-36518 | jackson-databind | Deeply nested objects can trigger StackOverflow and DoS | Databind layer still inherits core parsing constraints but has its own pitfalls (NVD) |
| CVE-2022-42003 | jackson-databind | Resource exhaustion via deep wrapper array nesting when certain features are enabled | Highlights how “valid JSON” can still be adversarial for deserializers (NVD) |
| CVE-2019-12384 | jackson-databind | Polymorphic deserialization gadget risk, potential RCE depending on classpath | Still shows up in legacy stacks and in third-party products (NVD) |

The operational takeaway is not “Jackson is unsafe.” It’s that JSON parsing is an attack surface, and the library is continuing to add guardrails—your job is to keep those guardrails present and correctly enforced across all parsing paths.

GHSA-72hv-8253-57qq fits squarely into this pattern: a guardrail existed, but one high-performance parsing path bypassed it. (GitHub)

What to do next, a crisp checklist you can operationalize

- Inventory where jackson-core is used and resolve the actual runtime version.

- Upgrade to 2.18.6, 2.21.1, or 3.1.0, depending on your release line. (GitHub)

- Add a regression test that asserts async parsing rejects numeric tokens longer than the configured limit. (GitHub)

- Harden the edge with body size limits, rate limiting, and sane buffering limits in reactive decoders. (Baeldung on Kotlin)

- Update your SCA gate to accept GHSA IDs, not only CVEs, because this advisory currently has no known CVE. (GitHub)

- Document why the constraint exists so future refactors don’t reintroduce unsafe parsing via “performance improvements.”

Further reading

GitHub Advisory Database, GHSA-72hv-8253-57qq https://github.com/advisories/GHSA-72hv-8253-57qq

Upstream Jackson security advisory, GHSA-72hv-8253-57qq https://github.com/FasterXML/jackson-core/security/advisories/GHSA-72hv-8253-57qq

Patch PR in jackson-core, Enforce StreamReadConstraints.maxNumberLength for async parser https://github.com/FasterXML/jackson-core/pull/1555

Patch commit b0c428e, release notes mention #1555 https://github.com/FasterXML/jackson-core/commit/b0c428e6f993e1b5ece5c1c3cb2523e887cd52cf

StreamReadConstraints maxNumberLength Javadoc, default 1000 https://javadoc.io/doc/com.fasterxml.jackson.core/jackson-core/latest/com/fasterxml/jackson/core/StreamReadConstraints.html

MITRE CWE-770, Allocation of Resources Without Limits or Throttling https://cwe.mitre.org/data/definitions/770.html

GitLab Advisory Database mirror, GHSA-72hv-8253-57qq https://advisories.gitlab.com/pkg/maven/com.fasterxml.jackson.core/jackson-core/GHSA-72hv-8253-57qq/

Apache Kafka tracking issue referencing GHSA-72hv-8253-57qq https://issues.apache.org/jira/browse/KAFKA-20241

Spring Framework reference, WebFlux and codecs https://docs.spring.io/spring-framework/reference/web/webflux/reactive-spring.html

Spring WebClient codec configuration reference, maxInMemorySize patterns https://docs.spring.io/spring-framework/reference/web/webflux-webclient/client-builder.html

NVD, CVE-2025-52999 jackson-core deep nesting DoS context https://nvd.nist.gov/vuln/detail/CVE-2025-52999

NVD, CVE-2025-49128 jackson-core exception source disclosure https://nvd.nist.gov/vuln/detail/CVE-2025-49128

Penligent, product homepage https://penligent.ai/

Penligent, OpenClaw + VirusTotal supply-chain boundary analysis https://www.penligent.ai/hackinglabs/openclaw-virustotal-the-skill-marketplace-just-became-a-supply-chain-boundary/

Penligent, MCP security and the execution boundary in production https://www.penligent.ai/hackinglabs/agentic-ai-security-in-production-mcp-security-memory-poisoning-tool-misuse-and-the-new-execution-boundary/

Penligent, Vulnerability management tools guide, operational lifecycle framing https://www.penligent.ai/hackinglabs/vulnerability-management-tools-a-complete-2026-guide/