What changed, agents are not chatbots anymore

Agent applications don’t merely answer questions. They plan, retrieve, remember, and execute actions through tools. Once your system can call an MCP server that can touch files, repos, tickets, cloud APIs, or internal data, you’ve built an automation platform with a probabilistic planner at the center.

That shift is exactly why the OWASP Agentic Security Initiative exists: to force a threat-model-first view of multi-step workflows, not just model outputs. (OWASP Gen AI Security Project)

AWS frames the same reality from a deployment perspective: agentic systems create new security challenges that require scoping, controls across dimensions, and explicit risk management. (Amazon Web Services, Inc.)

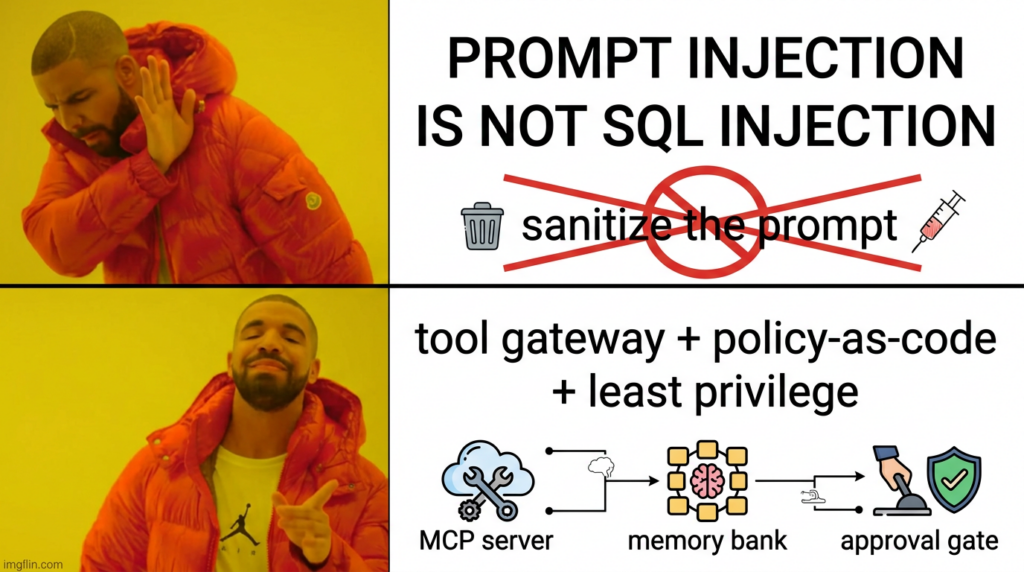

And the UK NCSC’s warning is the most honest starting point: current LLMs do not reliably enforce a boundary between instructions and data, which is why prompt injection can’t be “patched” the way classic injection classes were. The mitigation has to happen at the system design layer. (NCSC)

The five terms engineers search when the incidents start

This article is built around five keywords that capture today’s highest-leverage failure modes:

- Agentic AI Security

- Agentic Security Initiative

- MCP Security

- Memory Poisoning

- Tool Misuse

If you skim the highest-ranking technical writeups and security advisories around these topics, you’ll notice the same query phrases show up again and again—“MCP server security best practices,” “misconfigured MCP servers,” “long-term memory poisoning,” “prompt injection is not SQL injection,” “argument injection in MCP git server.” Those phrases matter because they map to real operational failures and real CVEs. (Model Context Protocol)

Agentic Security Initiative, the threat model spine

OWASP’s Agentic Security Initiative material is valuable because it’s not a vibe check. It’s a structured threat model reference aimed at autonomous, multi-step workflows. (OWASP Gen AI Security Project)

A practical mental model is to treat an agent system as four planes:

- Input and context plane — user input, retrieved web content, documents, tool outputs, tickets, code, logs.

- Memory plane — short-term scratchpad and long-term persistent memory, plus any RAG store used as “knowledge.”

- Tool execution plane — tools, MCP servers, plugins, code runners, CI, database clients, cloud SDKs.

- Identity and authorization plane — who the agent acts as, token scopes, approval gates, session lifecycle.

These planes are why AWS and OWASP both emphasize scope, governance, and controls across dimensions rather than “just guardrails in prompts.” (Amazon Web Services, Inc.)

AWS threat language that maps directly to production failures

AWS’s agent security writeup explicitly names Memory Poisoning and Tool Misuse as distinct threats, along with privilege compromise and resource overload. That’s useful because it translates “agent risk” into things you can actually build controls for. (Amazon Web Services, Inc.)

You’ll see the same threat families echoed in OWASP material and in real-world research demos. (OWASP Gen AI Security Project)

MCP Security, interoperability became a supply chain boundary

Model Context Protocol turns “LLM talks to tools” into a standardized interface. That’s great for ecosystem growth. It’s also a perfect way to multiply blast radius if you treat MCP servers like casual dev plugins.

MCP is a protocol with an actual security spec, not an idea

The MCP specification defines authoritative protocol requirements, and the 2025-06-18 revision includes a concrete authorization model. (Model Context Protocol)

One detail that matters operationally: MCP servers must advertise authorization server locations via OAuth protected resource metadata, which pushes you toward a more enterprise-aligned pattern—MCP servers as protected resources, not as ad hoc auth issuers. (Model Context Protocol)

That’s not theoretical. When teams skip this and ship “quick MCP,” they often end up with identity fragmentation, inconsistent token handling, and brittle sessions. This is why so many modern MCP security guides focus on OAuth, token binding, session expiry, and replay resistance. (WorkOS)
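To make the protected-resource pattern concrete, here is a minimal sketch of what a client or scanner might check. It assumes the RFC 9728 convention that the metadata document lives at `/.well-known/oauth-protected-resource`, inserted between the host and the resource path, and that it carries `resource` and `authorization_servers` fields. The hostnames are placeholders. `metadata_url` and `check_protected_resource_metadata` are hypothetical helpers, not part of any MCP SDK, and this is not a full conformance test.

```python
from urllib.parse import urlsplit, urlunsplit

WELL_KNOWN = "/.well-known/oauth-protected-resource"


def metadata_url(resource: str) -> str:
    """Insert the RFC 9728 well-known suffix between the host and the resource path."""
    parts = urlsplit(resource)
    path = parts.path.rstrip("/")
    return urlunsplit((parts.scheme, parts.netloc, WELL_KNOWN + path, "", ""))


def check_protected_resource_metadata(doc: dict, expected_resource: str) -> list[str]:
    """Return problems found in a fetched metadata document (an empty list means OK)."""
    problems = []
    # The document must describe the MCP server you actually connected to
    if doc.get("resource") != expected_resource:
        problems.append("resource does not match the MCP server URL")
    # The MCP server must point at a separate authorization server, not issue tokens ad hoc
    servers = doc.get("authorization_servers")
    if not isinstance(servers, list) or not servers:
        problems.append("authorization_servers missing or empty")
    elif not all(isinstance(s, str) and s.startswith("https://") for s in servers):
        problems.append("authorization server URL is not https")
    return problems
```

For a server at `https://mcp.example.com/mcp`, the sketch would fetch `https://mcp.example.com/.well-known/oauth-protected-resource/mcp` and then run the check on the parsed JSON.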

MCP security best practices that should be non-negotiable

The MCP security best practices docs call out a basic but critical control: bind session IDs to user-specific info and rotate or expire sessions. That’s a direct defense against session replay and hijacking when MCP calls get queued, logged, or forwarded. (Model Context Protocol)

In production terms:

- Session IDs should be short-lived

- Session context should be user-bound

- Background queues should not store bearer-like session identifiers without binding and expiry
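The three rules above fit in a small amount of code. This minimal in-memory sketch assumes the caller’s `user_id` comes from an already-validated access token. The `SessionStore` class and the 15-minute default TTL are illustrative choices. A real deployment would back this with a shared store that supports native expiry.

```python
import secrets
import time


class SessionStore:
    """Hypothetical in-memory store keyed by '<user_id>:<session_id>' with a fixed TTL."""

    def __init__(self, ttl_s: int = 900):
        self.ttl_s = ttl_s
        self._expiry: dict[str, float] = {}

    def create(self, user_id: str) -> str:
        session_id = secrets.token_urlsafe(32)  # unguessable, non-deterministic
        self._expiry[f"{user_id}:{session_id}"] = time.monotonic() + self.ttl_s
        return session_id

    def is_valid(self, user_id: str, session_id: str) -> bool:
        # user_id must come from the validated access token, never from the request itself,
        # so a replayed session ID presented under another identity misses the lookup.
        key = f"{user_id}:{session_id}"
        expires_at = self._expiry.get(key)
        if expires_at is None:
            return False
        if time.monotonic() >= expires_at:
            del self._expiry[key]  # expired sessions are dropped on first sight
            return False
        return True

    def rotate(self, user_id: str, old_session_id: str) -> str:
        # Only a currently valid session can be exchanged for a fresh one
        if not self.is_valid(user_id, old_session_id):
            raise PermissionError("unknown or expired session")
        del self._expiry[f"{user_id}:{old_session_id}"]
        return self.create(user_id)
```

Because the lookup key includes the authenticated user, a session ID leaked from a queue or log is useless to anyone who cannot also present that user’s token.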

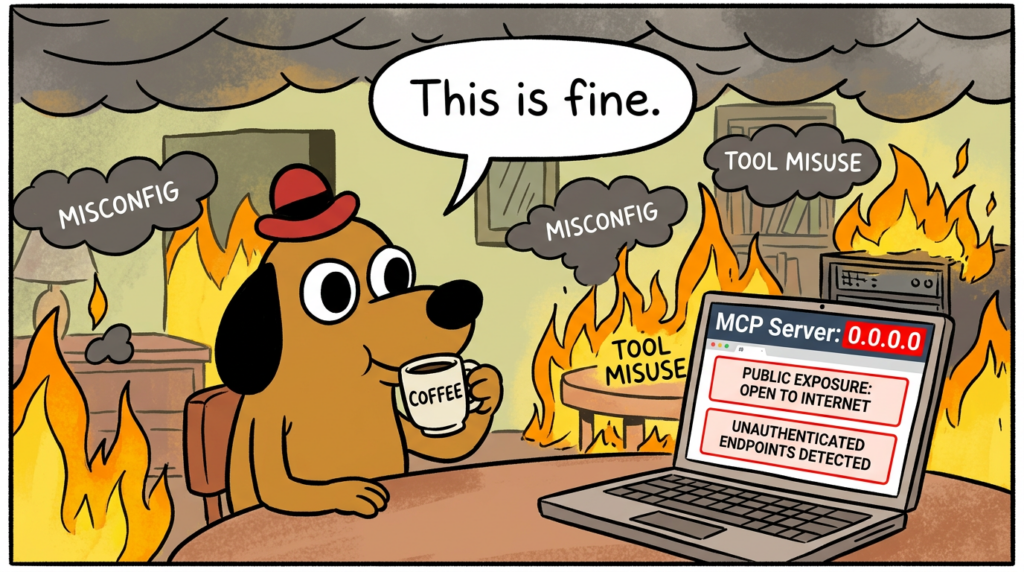

Misconfigured MCP servers are not a corner case

Research and reporting throughout 2025 highlighted the prevalence of vulnerable or misconfigured MCP servers and tool ecosystems, including uncontrolled input handling, unnecessary permissions, and exposure that could enable RCE or data leakage. (Backslash)

Don’t get stuck arguing about exact counts. The operational signal is simpler: MCP servers are being deployed widely, and a meaningful slice of them are deployed insecurely.

CVEs made MCP risk tangible

The moment you have CVEs in MCP server implementations, you no longer get to treat MCP as “just an interface.”

NVD describes CVE-2025-68144 in mcp-server-git as argument injection in git_diff and git_checkout, where flag-like values can be interpreted as CLI options, enabling arbitrary file overwrites. The fix rejects `-`-prefixed args and verifies refs via rev_parse. (NVD)

And real-world reporting on the Anthropic Git MCP server vulnerability chain explains why composition matters: Git + Filesystem MCP servers can become “toxic combinations” that escalate into file tampering or code execution under prompt injection conditions. (The Register)

This is the point most teams miss: even if each tool looks safe in isolation, chaining tools inside an agent workflow creates a new exploit surface, because the model is the orchestrator and can be steered.

MCP Security checklist you can run this week

| Control | Why it matters | Fast validation |
|---|---|---|
| OAuth resource-server model, dedicated authorization server | Avoids ad hoc token issuance and weak auth patterns | Confirm protected resource metadata and auth server discovery (Model Context Protocol) |
| Session binding and expiry | Prevents replay/hijack in queued or logged workflows | Rotate session IDs, bind `<user_id>:<session_id>` (Model Context Protocol) |
| Tool allowlists and least privilege | Limits blast radius of tool misuse | Per-tool scopes, deny-by-default |
| Strict argument validation | Stops CLI-style argument injection classes | Reject flag-like values, schema-validate (NVD) |
| Network exposure discipline | Prevents “MCP server on the internet” accidents | Bind to localhost by default, explicit ingress policies |
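The last row is the easiest to automate as a startup guard. In this minimal sketch, `check_bind_address` and its `allow_non_loopback` flag are hypothetical names. The sketch assumes the server is configured with an IP literal or `localhost`. Other hostnames are rejected because DNS answers can change after the check.

```python
import ipaddress


def check_bind_address(host: str, allow_non_loopback: bool = False) -> None:
    """Refuse to start an MCP server on a non-loopback address unless explicitly approved."""
    if host == "localhost":
        return
    addr = ipaddress.ip_address(host)  # raises ValueError for any other hostname
    if addr.is_loopback:
        return
    if not allow_non_loopback:
        # 0.0.0.0 / :: means "every interface", the classic accidental internet exposure
        kind = "all interfaces" if addr.is_unspecified else "a non-loopback address"
        raise PermissionError(f"Refusing to bind to {kind} ({host}) without an explicit ingress policy")
```

Setting `allow_non_loopback=True` should be a reviewed configuration change that comes with an ingress policy. An agent should never be able to flip it at runtime.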

Memory Poisoning, prompt injection that persists and comes back later

Prompt injection is already painful. Memory poisoning is worse because it turns a one-time interaction into a durable control mechanism.

AWS describes memory poisoning as injecting malicious or false data into short- or long-term memory systems that can alter decisions and trigger unauthorized actions. (Amazon Web Services, Inc.)

Unit 42 demonstrates a particularly practical version: indirect prompt injection can silently poison long-term memory, using Amazon Bedrock Agents as the demonstration environment, and the attack can be planted via a malicious web page or document accessed through social engineering. (Unit 42)

MINJA, a concrete memory injection attack model

MINJA is useful to security engineers because it frames memory injection as an interaction-only attack: the attacker doesn’t need direct write access to the memory bank; they can guide the agent into writing malicious records by interacting with it. (arXiv)

The security lesson is not “MINJA exists therefore panic.” The lesson is: if your agent writes memory based on untrusted context, memory becomes an attack surface and you need memory write controls the same way you need input validation and authz controls.

AgentPoison, poisoning RAG and long-term memory for backdoor behavior

AgentPoison proposes a backdoor-style red-teaming approach against agents by poisoning long-term memory or a RAG knowledge base, emphasizing that reliance on unverified knowledge bases creates safety and trust concerns. (arXiv)

You don’t need to adopt every defense from research papers to get value from the findings. You need two engineering principles:

- Memory is not truth. It needs provenance and trust scoring.

- Retrieval must be constrained by task, not “whatever is similar.”

Memory Poisoning is an incident response problem, not a prompt problem

When memory is persistent, remediation changes:

- You might need to purge or quarantine memory

- You need to know who wrote each record and why

- You need to prevent cross-task contamination, where an injected instruction from “summarize this web page” affects “deploy that config”

If your system can’t answer “where did this memory come from,” you can’t clean it safely.

A memory record format that supports forensics

```json
{
  "id": "mem_2026_03_04_001",
  "content": "Staging SSO uses Okta tenant A.",
  "source_type": "user_message | tool_output | retrieved_doc",
  "source_ref": "ticket:INC-18421 | url_hash:9f2c... | convo:msg:88421",
  "created_at": "2026-03-04T21:05:12Z",
  "writer_identity": "agent_runtime:svc-agent-staging",
  "trust_score": 0.74,
  "tags": ["identity", "staging"],
  "expiry_days": 30,
  "review_state": "auto | needs_review | quarantined"
}
```
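Because every record carries `source_ref` and `writer_identity`, the incident-response steps above become a query instead of guesswork. This is a minimal sketch that assumes records are loaded into memory as a list. `MemoryRecord` mirrors the JSON fields, and `quarantine_by_source` is a hypothetical helper. Quarantining rather than deleting keeps the evidence available for forensics.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryRecord:
    id: str
    content: str
    source_type: str
    source_ref: str
    created_at: str
    writer_identity: str
    trust_score: float
    tags: list[str] = field(default_factory=list)
    expiry_days: int = 30
    review_state: str = "auto"  # "auto" | "needs_review" | "quarantined"


def quarantine_by_source(records: list[MemoryRecord], source_ref: str) -> list[str]:
    """Mark every record derived from a suspect source as quarantined; return affected ids."""
    affected = []
    for rec in records:
        if rec.source_ref == source_ref and rec.review_state != "quarantined":
            rec.review_state = "quarantined"
            affected.append(rec.id)
    return affected
```

The same filter on `writer_identity` answers the other incident question: what else did a compromised runtime write?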

Tool Misuse, when “allowed” actions violate intent

AWS defines tool misuse as manipulating an AI agent within its authorized permissions using deceptive prompts or commands, potentially leading to agent hijacking and unintended tool interactions. (Amazon Web Services, Inc.)

The NCSC offers the deeper framing: the problem isn’t a single exploit primitive. The problem is that LLMs don’t inherently separate instruction from data, so you must assume they can be steered—then design your system so “being steered” doesn’t equal “catastrophic action.” (NCSC)

Tool misuse patterns you will see in production

- Intent laundering: attackers wrap harmful actions inside legitimate tasks (“Export the report,” “Fix the config,” “Summarize the doc”). The model then selects tools that exfiltrate or modify data while believing it’s helping.

- Indirect control via context: the attacker places instructions in a web page, README, issue comment, log line, or document that the agent ingests as context, and the agent then executes tool calls as a result.

- Privilege pivot by workflow composition: one tool reveals a token, another tool uses it. One tool writes a file, another tool executes it. Chaining is where the damage happens, which is why MCP server combinations matter. (The Hacker News)
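One way to blunt composition attacks is to track taint per workflow. Once untrusted content has entered the context, side-effecting tools require explicit approval. This is a sketch of that idea, not something the cited sources prescribe. The tool names, source labels, and `WorkflowState` class are hypothetical, and the check is meant to run in addition to the gateway allowlist, not instead of it.

```python
from dataclasses import dataclass, field

# Hypothetical classification; adjust to your own tool inventory and context sources.
SIDE_EFFECT_TOOLS = {"write_file", "run_shell", "git_checkout", "create_cloud_credential"}
UNTRUSTED_CONTEXT = {"url", "retrieved_doc", "tool_output", "issue_comment"}


@dataclass
class WorkflowState:
    context_sources: set[str] = field(default_factory=set)
    approved_tools: set[str] = field(default_factory=set)

    def ingest(self, source_type: str) -> None:
        self.context_sources.add(source_type)

    @property
    def tainted(self) -> bool:
        return bool(self.context_sources & UNTRUSTED_CONTEXT)


def allow_tool(state: WorkflowState, tool: str) -> bool:
    """Once untrusted content is in context, side-effecting tools need explicit approval."""
    if tool in SIDE_EFFECT_TOOLS and state.tainted:
        return tool in state.approved_tools
    return True  # read-only tools still go through the normal gateway allowlist
```

The rule is deliberately coarse. It does not try to decide whether the ingested content was malicious, only that the agent can no longer be assumed unsteered.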

CVE lessons that belong in every agent security review

MCP server implementation CVEs, treat them like production dependencies

CVE-2025-68144 demonstrates why “tool arguments” are a serious input surface: unsanitized, user-controlled args can be interpreted as flags and enable arbitrary file overwrites. (NVD)

If you’re running any MCP servers that wrap CLI tools, you should assume you are one missing validation rule away from similar failures.
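For a CLI wrapper, rejecting a leading `-` is the minimum. An allowlist on the value’s shape is stronger. In this sketch, `validate_ref` and its character set are assumptions: it accepts a conservative subset of git’s ref-name rules (see `git check-ref-format`), enough for branch names, tags, and hex commit IDs. It deliberately rejects forms like `HEAD~1` that a real tool might want to support.

```python
import re

# Conservative allowlist for branch/tag names and hex commit ids. A strict subset of git's
# ref-name rules chosen for this sketch, not a reimplementation of them.
_SAFE_REF = re.compile(r"[A-Za-z0-9][A-Za-z0-9._/-]{0,127}")


def validate_ref(ref: str) -> str:
    if ref.startswith("-"):
        raise PermissionError("flag-like ref rejected")
    if not _SAFE_REF.fullmatch(ref):
        raise ValueError("ref contains characters outside the allowlist")
    # A few of git's structural rules: no "..", empty components, hidden components, or .lock
    if ".." in ref or "//" in ref or "/." in ref or ref.endswith((".", "/", ".lock")):
        raise ValueError("ref violates git ref-name rules")
    return ref
```

Even with this in place, the server should pass the ref as its own argv element (never through a shell) and resolve it before use. Per NVD, the upstream fix for CVE-2025-68144 verifies refs via rev_parse.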

XZ backdoor, why agent execution magnifies supply chain risk

CVE-2024-3094 is a supply chain compromise where malicious code was embedded in upstream xz tarballs (5.6.0 and 5.6.1), with obfuscations that altered liblzma build outputs. NVD’s description is explicit about the mechanics and why downstream consumers were at risk. (NVD)

CISA’s alert underscores the impact: malicious code embedded in those versions prompted coordinated response and mitigation guidance. (CISA)

The agent-specific lesson: if your agent can fetch dependencies, run builds, or “helpfully install tools,” you’ve effectively granted a decision loop access to the same trust surface that made XZ dangerous. You need deterministic controls around what can be installed, executed, and where secrets can flow.
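A deterministic install control can be as simple as “pinned and reviewed, or not installable.” In this sketch, `may_install`, the pin table, and the `example-tool` entry are hypothetical. The denylist entries are the two xz versions NVD names for CVE-2024-3094. The package name `xz-utils` is an assumption, since distributions name it differently. For Python dependencies, pip’s `--require-hashes` mode is the real-world counterpart of the hash check.

```python
import hashlib

# Versions carrying the CVE-2024-3094 backdoor per NVD (package naming is an assumption).
DENYLIST = {("xz-utils", "5.6.0"), ("xz-utils", "5.6.1")}

# Hypothetical human-reviewed pin table: (name, version) -> expected SHA-256 of the artifact.
PINNED = {
    ("example-tool", "1.2.3"): hashlib.sha256(b"example artifact bytes").hexdigest(),
}


def may_install(name: str, version: str, artifact: bytes) -> bool:
    """Install check that runs outside the model; the agent cannot argue with it."""
    if (name, version) in DENYLIST:
        return False
    expected = PINNED.get((name, version))
    if expected is None:  # never reviewed, so not installable, however helpful it sounds
        return False
    return hashlib.sha256(artifact).hexdigest() == expected
```

The check runs in the tool runner, not in the prompt. If the model asks to install something new, the answer is a review request, not an install.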

Architecture that actually holds up, design for residual prompt injection risk

This section is not “best practices” wallpaper. It’s a blueprint you can implement.

1 Tool Gateway, a hard boundary between model and tools

Never let the model call tools directly. Your model should emit a structured request that is validated and authorized by a gateway service.

Key properties:

- Allowlist tools per workflow

- JSON schema validation

- Deny flag-like argument patterns for CLI wrappers

- Path and URL policies

- Rate limits and cost ceilings

- JIT approval for high-risk actions

- Full audit logs with allow/deny reasons

Python skeleton, schema validate and policy enforce

```python
import hashlib
import json

from jsonschema import ValidationError, validate

# Per-tool JSON schemas. A tool without a schema is not allowlisted.
TOOL_SCHEMAS = {
    "http_fetch": {
        "type": "object",
        "properties": {
            "url": {"type": "string", "pattern": r"^https://"},
            "timeout_s": {"type": "integer", "minimum": 1, "maximum": 15},
            "max_bytes": {"type": "integer", "minimum": 1024, "maximum": 2_000_000},
        },
        "required": ["url"],
        "additionalProperties": False,
    },
    "git_diff": {
        "type": "object",
        "properties": {
            "repo_id": {"type": "string"},
            "ref": {"type": "string", "minLength": 1, "maxLength": 128},
        },
        "required": ["repo_id", "ref"],
        "additionalProperties": False,
    },
}


def reject_flag_like(value: str) -> None:
    # Defense aligned with CVE-2025-68144-style failures: a value such as
    # "--output=..." must never reach a CLI where it could be parsed as an option.
    if value.strip().startswith("-"):
        raise PermissionError("Flag-like argument rejected")


def tool_gateway(tool_name: str, raw_args: str, user_id: str, workflow: str) -> dict:
    if tool_name not in TOOL_SCHEMAS:
        raise PermissionError("Tool not allowlisted")

    try:
        args = json.loads(raw_args)
        validate(instance=args, schema=TOOL_SCHEMAS[tool_name])
    except (json.JSONDecodeError, ValidationError) as e:
        raise ValueError(f"Invalid tool args: {e}") from e

    # Example: apply tool-specific safety rules
    if tool_name == "git_diff":
        reject_flag_like(args["ref"])

    # Enforce workflow allowlist
    # Example: only allow git tools in the "code_review" workflow
    if workflow != "code_review" and tool_name.startswith("git_"):
        raise PermissionError("Tool not allowed for this workflow")

    # Emit audit record. Use a stable digest: the built-in hash() of a str is
    # salted per process, so its values cannot be correlated across runs or hosts.
    audit = {
        "event": "tool_call_allowed",
        "user_id": user_id,
        "workflow": workflow,
        "tool": tool_name,
        "args_hash": hashlib.sha256(raw_args.encode("utf-8")).hexdigest(),
    }
    return {"ok": True, "audit": audit}
```

This mirrors the fix NVD describes for that class: sanitize arguments and reject `-`-prefixed values. Real implementations should also verify that refs resolve (the upstream fix uses rev_parse) before executing anything. (NVD)

2 Policy as code, deterministic guardrails

Use OPA, Cedar, or similar to enforce tool policies independent of model behavior.

Rego example, deny high-risk tools without approval

```rego
package agent.tools

import rego.v1

default allow := false

allowed_tools := {"http_fetch", "read_repo_file", "search_issue_tracker", "git_diff"}

high_risk_tools := {"run_shell", "write_file", "create_cloud_credential"}

# Low-risk allowlisted tools need no approval
allow if {
	input.tool in allowed_tools
	not input.tool in high_risk_tools
}

# High-risk tools need an approval token scoped to that exact tool
allow if {
	input.tool in high_risk_tools
	input.approval_token != ""
	input.approval_scope == input.tool
}
```

3 Identity and authorization, treat agents like service principals

The MCP spec’s authorization model and OAuth resource server patterns exist for a reason: identity sprawl is how tool ecosystems get compromised. (Model Context Protocol)

Practical rules:

- Every tool call is executed under a distinct runtime identity

- Tokens are scoped per tool and per environment

- No long-lived “agent god token”

- Rotate, expire, and bind sessions to user identity (Model Context Protocol)
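The same rules can be expressed as a per-call check. This minimal sketch assumes the token’s signature and issuer have already been verified by your authorization-server library. `ToolToken` and its claim names are hypothetical, not a standard token format. The sketch only enforces scope and lifetime.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolToken:
    # Hypothetical claims for a per-tool, per-environment, short-lived token
    subject: str       # runtime identity, e.g. "svc-agent-staging"
    on_behalf_of: str  # the user the agent is acting for
    tool: str
    environment: str
    expires_at: float  # epoch seconds


def authorize_call(token: ToolToken, tool: str, environment: str, now: Optional[float] = None) -> None:
    """Raise unless the token is unexpired and scoped to exactly this tool and environment."""
    now = time.time() if now is None else now
    if now >= token.expires_at:
        raise PermissionError("token expired")
    if token.tool != tool:
        raise PermissionError(f"token scoped to {token.tool}, not {tool}")
    if token.environment != environment:
        raise PermissionError(f"token scoped to {token.environment}, not {environment}")
```

A staging `http_fetch` token is then useless for `write_file` or for production. That is the opposite of an “agent god token.”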

4 Sandboxing and egress control, assume someone will steer your agent

When Git + Filesystem MCP chaining can lead to file tampering or worse, your execution environment must be designed to contain damage. (TechRadar)

Minimal containment checklist:

- Run tool runners in containers or microVMs

- Default read-only filesystem mounts

- Secrets mounted only when needed, not by default

- Network egress allowlist, block arbitrary outbound by default

- Separate “read” tools from “write/execute” tools via distinct workers
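With containers, most of that checklist maps onto `docker run` flags. This sketch only builds the argument list. The image, paths, UID, and resource limits are assumptions, and `sandbox_argv` is a hypothetical helper. `--network none` is stricter than an egress allowlist. Allowing specific destinations would need a dedicated network with an egress proxy, which this sketch does not cover.

```python
def sandbox_argv(image: str, command: list[str], workdir_src: str, writable: bool = False) -> list[str]:
    """Build a locked-down `docker run` invocation for a single tool execution."""
    mount_mode = "rw" if writable else "ro"  # read-only unless this is a write/execute worker
    return [
        "docker", "run", "--rm",
        "--read-only",                                 # immutable root filesystem
        "--tmpfs", "/tmp:rw,noexec,nosuid,size=64m",   # scratch space that cannot run binaries
        "--network", "none",                           # no egress at all by default
        "--cap-drop", "ALL",
        "--security-opt", "no-new-privileges",
        "--pids-limit", "128",
        "--memory", "512m",
        "--user", "65534:65534",                       # unprivileged nobody:nogroup
        "-v", f"{workdir_src}:/work:{mount_mode}",     # no secrets mounted unless added explicitly
        "-w", "/work",
        image, *command,
    ]
```

Pass the list straight to `subprocess.run(argv, check=True)` without `shell=True`. Run `writable=True` only from the separate write/execute worker.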

5 Memory hygiene, write gate, provenance, trust scoring, decay

Unit 42’s demonstration of long-term memory poisoning shows the real hazard: a poisoned instruction can persist and trigger later, without attacker presence. (Unit 42)

So implement memory controls as if memory were a database you must protect:

- Memory writes require a `should_store_memory` decision that is validated by policy

- Only store facts, never store raw imperative instructions

- Every memory record has provenance and a trust score

- Retrieval is task-scoped and time-decayed
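The last rule can be applied as a filter on top of whatever the similarity search returns. In this minimal sketch, the `Memory` shape, the tag-overlap notion of “task scope,” the 14-day half-life, and the score floor are all assumptions to tune. Trust is decayed exponentially with age, and anything not in the `auto` review state is skipped.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    content: str
    tags: set[str]
    trust_score: float
    age_days: float
    review_state: str = "auto"


def retrieve(candidates: list[Memory], task_tags: set[str], half_life_days: float = 14.0,
             min_score: float = 0.3, limit: int = 5) -> list[Memory]:
    """Filter and re-rank similarity-search candidates by task scope, trust, and age."""
    scored = []
    for m in candidates:
        if m.review_state != "auto":  # skip needs_review and quarantined records
            continue
        if not (m.tags & task_tags):  # task scope: must share at least one tag with the task
            continue
        score = m.trust_score * 0.5 ** (m.age_days / half_life_days)  # halve trust every half-life
        if score >= min_score:
            scored.append((score, m))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in scored[:limit]]
```

Requiring a shared tag is what keeps a record written while summarizing a web page out of a “deploy that config” task, even if the text happens to be semantically similar.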

Memory write gate pseudocode

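```python
# Assumed source_type labels; align them with the values used in your memory records.
UNTRUSTED_SOURCES = {"retrieved_web", "untrusted_doc"}

# Crude phrase denylist: a tripwire for obvious cases, not a security boundary on its own.
BANNED_PHRASES = ["ignore previous", "always do", "system prompt", "exfiltrate", "send to"]


def should_store_memory(content: str, source_type: str, trust: float) -> bool:
    if trust < 0.7:
        return False

    # Reject instruction-like patterns
    lowered = content.lower()
    if any(phrase in lowered for phrase in BANNED_PHRASES):
        return False

    if source_type in UNTRUSTED_SOURCES:
        # Never auto-write from untrusted sources; route these to a review queue instead
        return False

    return True
```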

Research like MINJA and AgentPoison exists to prove this is not hypothetical: memory can be poisoned through interactions or poisoned knowledge bases, and retrieval can be manipulated to trigger undesirable actions. (arXiv)

6 Observability, log the decision chain, not just the final answer

If you only log the model output, you miss the failure.

Log these minimum fields:

- tool name, args hash, allow/deny reason

- source provenance for retrieved context chunks

- memory writes, trust score, writer identity

- approvals, approver, scope, expiration

- resource usage spikes and rate-limit events, aligned with AWS resource overload threat framing (Amazon Web Services, Inc.)

Audit event example

```json
{
  "ts": "2026-03-04T22:01:12Z",
  "event": "tool_call_denied",
  "user_id": "u_18421",
  "agent_runtime": "svc-agent-prod",
  "workflow": "doc_summarize",
  "tool": "write_file",
  "deny_reason": "high_risk_tool_requires_approval",
  "context_sources": [
    {"type": "url", "hash": "9f2c..."},
    {"type": "tool_output", "tool": "http_fetch"}
  ]
}
```

How to test Agentic AI Security without turning it into exploit content

You’re testing system controls, not writing jailbreak prompts.

Test 1 Indirect injection via retrieved content

- Host a benign-looking doc page with embedded instruction-like text

- Verify the model sees it

- Verify tool gateway denies any attempt to convert it into tool calls

- Confirm logs show provenance and deny reason

This aligns with the NCSC’s “confusable deputy” framing and Unit 42’s indirect injection story. (NCSC)
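The last two checks in Test 1 can be automated against the audit log. This sketch assumes events shaped like the audit event example above (`event`, `tool`, `deny_reason`, and `context_sources` entries with a `hash` for URL sources), and that the test page’s URL hash is known as a canary. `check_injection_run` is a hypothetical helper.

```python
def check_injection_run(events: list[dict], canary_hash: str) -> list[str]:
    """Return failures for one test run; an empty list means the controls held."""
    failures = []
    for ev in events:
        if not ev.get("event", "").startswith("tool_call"):
            continue
        tool = ev.get("tool", "?")
        sources = ev.get("context_sources", [])
        influenced = any(s.get("hash") == canary_hash for s in sources)
        if not sources:
            failures.append(f"{tool}: tool call logged without provenance")
        elif influenced and ev["event"] != "tool_call_denied":
            failures.append(f"{tool}: call made with the canary page in context was not denied")
        elif influenced and not ev.get("deny_reason"):
            failures.append(f"{tool}: denial logged without a reason")
    return failures
```

Running this after every change to tools, scopes, or memory makes Test 1 a regression check instead of a one-time demo.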

Test 2 Memory poisoning persistence

- Attempt to cause memory write from untrusted source

- Confirm memory write gate blocks or quarantines

- Confirm later tasks do not retrieve that poisoned record

MINJA exists to show interaction-only memory injection is feasible when memory writes are permissive. (arXiv)

Test 3 Tool misuse within permissions

- Try to steer an agent toward “allowed but unintended” data access

- Confirm policy-as-code blocks intent-violating tool calls

- Confirm approvals are required for any high-risk write or execute tool

AWS’s definition of tool misuse is exactly this scenario. (Amazon Web Services, Inc.)
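Test 3 is easiest to keep honest as a table of cases that runs in CI against whatever makes the decision. This sketch assumes the decision point can be called as a function taking the same input document as the Rego policy above. `local_decide` is a hypothetical stand-in that mirrors that policy so the example runs without OPA, and the case list is illustrative.

```python
from typing import Callable

# Each case: (tool, approval_token, approval_scope, expected_allow)
CASES = [
    ("http_fetch", "", "", True),
    ("write_file", "", "", False),                      # high-risk without approval
    ("write_file", "appr-123", "run_shell", False),     # approval scoped to a different tool
    ("write_file", "appr-123", "write_file", True),
    ("delete_repo", "appr-123", "delete_repo", False),  # unknown tool: deny by default
]


def run_policy_cases(decide: Callable[[dict], bool]) -> list[str]:
    failures = []
    for tool, token, scope, expected in CASES:
        got = decide({"tool": tool, "approval_token": token, "approval_scope": scope})
        if got != expected:
            failures.append(f"{tool} token={token!r} scope={scope!r}: expected {expected}, got {got}")
    return failures


# Stand-in for the real decision point (for example an OPA query), mirroring the Rego rules.
ALLOWED = {"http_fetch", "read_repo_file", "search_issue_tracker", "git_diff"}
HIGH_RISK = {"run_shell", "write_file", "create_cloud_credential"}


def local_decide(inp: dict) -> bool:
    if inp["tool"] in ALLOWED:
        return True
    return inp["tool"] in HIGH_RISK and inp["approval_token"] != "" and inp["approval_scope"] == inp["tool"]
```

When a new MCP server or tool scope lands, add its cases to the table first. A policy change that silently widens access then fails the build.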

If you treat your agent application as a production system, you need continuous validation—especially after you add a new MCP server, widen a tool scope, or change how memory is stored. This is where an AI-driven pentesting workflow can be useful: you can run asset discovery against your agent endpoints, test exposed MCP services, fuzz tool gateway parameters, and continuously verify that dangerous actions remain blocked by policy and environment controls.

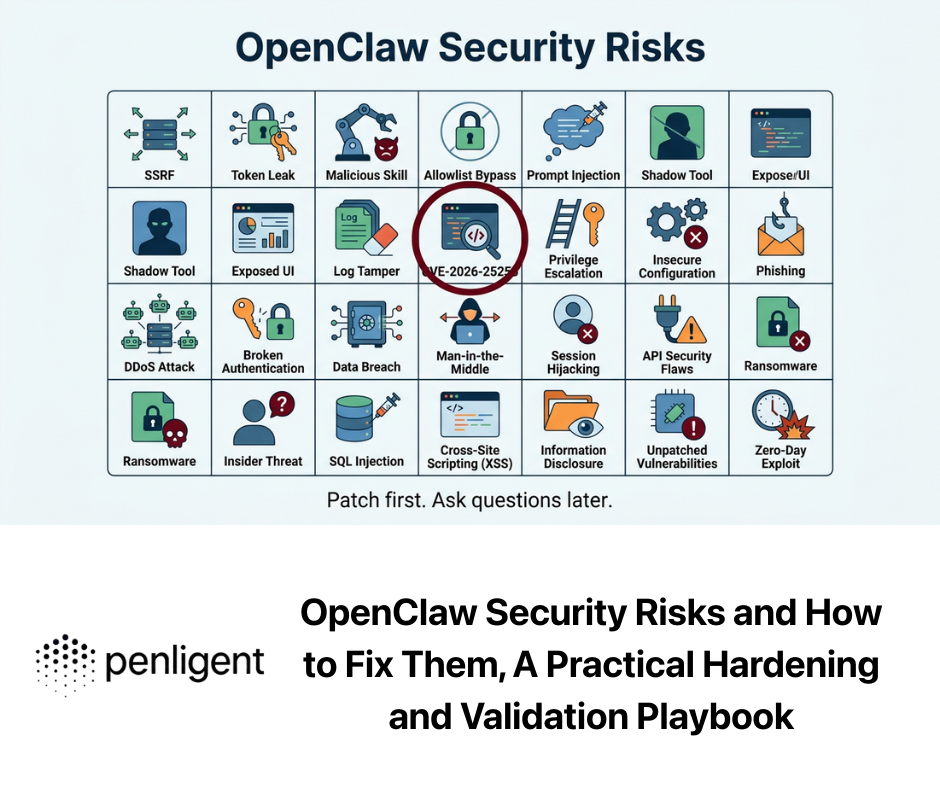

Penligent’s Hacking Labs content also tracks the same boundary shift: MCP-driven tooling, agent execution chains, and supply-chain style risks in the agent ecosystem. For engineers who want implementation-oriented security discussions, those internal references can serve as a “living” set of examples and checklists for modern agent deployments. (Penligent)

Further reading

Authoritative external references

- OWASP Agentic Security Initiative: https://genai.owasp.org/initiatives/agentic-security-initiative/
- OWASP Agentic AI threats and mitigations: https://genai.owasp.org/resource/agentic-ai-threats-and-mitigations/
- AWS Agentic AI Security Scoping Matrix post: https://aws.amazon.com/blogs/security/the-agentic-ai-security-scoping-matrix-a-framework-for-securing-autonomous-ai-systems/
- AWS China blog, privacy and security of agent applications: https://aws.amazon.com/cn/blogs/china/privacy-and-security-of-agent-applications/
- Model Context Protocol spec 2025-06-18: https://modelcontextprotocol.io/specification/2025-06-18
- MCP authorization section: https://modelcontextprotocol.io/specification/2025-06-18/basic/authorization
- MCP security best practices: https://modelcontextprotocol.io/docs/tutorials/security/security_best_practices
- UK NCSC, prompt injection is not SQL injection
- AI Agents Hacking in 2026: Defending the New Execution Boundary: https://www.penligent.ai/hackinglabs/ai-agents-hacking-in-2026-defending-the-new-execution-boundary/
- Kali Linux + Claude via MCP Is Cool—But It’s the Wrong Default for Real Pentesting Teams: https://www.penligent.ai/hackinglabs/kali-linux-claude-via-mcp-is-cool-but-its-the-wrong-default-for-real-pentesting-teams/
- Claude Code Remote Control Security Risks—When a “Local Session” Becomes a Remote Execution Interface: https://www.penligent.ai/hackinglabs/claude-code-remote-control-security-risks-when-a-local-session-becomes-a-remote-execution-interface/
- OpenClaw + VirusTotal, the skill marketplace became a supply-chain boundary: https://www.penligent.ai/hackinglabs/tr/openclaw-virustotal-the-skill-marketplace-just-became-a-supply-chain-boundary/
- CVE-2024-3094 and the XZ Utils liblzma backdoor, why a routine update almost became a trust crisis: https://www.penligent.ai/hackinglabs/cve-2024-3094-and-the-xz-utils-liblzma-backdoor-why-a-routine-update-almost-became-a-trust-crisis/