The fast answer you came for, in plain terms

CVE-2024-3094 is not a typical memory bug or a single vulnerable function you patch and move on from. It is a supply-chain compromise: malicious code was discovered in the upstream release tarballs of XZ Utils versions 5.6.0 and 5.6.1, where obfuscated build logic extracted a prebuilt object file from a disguised test archive and modified functions during the liblzma build process. The result was a modified liblzma library that could affect any software linked against it. (NVD)

That distinction is the difference between “run your scanner” and “rethink what you trust.”

Most security people searched this story using a few high-click phrases that show up repeatedly across major incident write-ups: xz backdoor, XZ Utils backdoor, liblzma backdoor, and, because of the likely practical impact path, sshd backdoor. The convergence of those terms across vendor advisories is not a branding accident; it reflects what responders needed most: scope, exposure, evidence, and a safe remediation path. (Akamai)

This guide is written for practitioners who want a publishable, technically accurate account with repeatable verification steps and a defensible mitigation plan.

Why this incident matters more than a “Linux package compromise” headline

It targeted the trust gap between repository code and shipped artifacts

One of the most important facts in the official record is not “someone committed bad code.” The NVD description explicitly notes that the upstream tarballs included extra build instructions that did not exist in the repository, and that the build process extracted a prebuilt object from a disguised test file to modify liblzma at build time. (NVD)

That is a fundamentally different threat class:

- Code review in the repo is necessary, but it is not sufficient.

- Your risk hinges on whether you ingest the same bits you think you are ingesting.

- Your security posture becomes entangled with release engineering, packaging, and artifact provenance.

The upstream project’s own backdoor page makes the core scope painfully concrete: XZ Utils 5.6.0 and 5.6.1 release tarballs contain a backdoor, and it even discusses who signed which tarballs, reinforcing the theme that provenance and signing are part of the story, not an afterthought. (Tukaani)
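The gap between repository contents and release tarballs can be made visible with a plain listing comparison. The sketch below is a minimal illustration rather than a detection for this specific backdoor: the function name `tarball_only_files` and the listing file names are hypothetical, and legitimately generated build files (for example `configure`) will usually appear in the output as well, so treat the result as a review list, not a verdict.

```shell
# tarball_only_files REPO_LIST TARBALL_LIST
# Print every path found in the tarball listing but absent from the
# repository listing. Both inputs are plain text, one path per line.
# (Function name and file names are illustrative assumptions.)
tarball_only_files() {
    awk '
        FILENAME == ARGV[1] { in_repo[$0] = 1; next }   # first file: repository paths
        !($0 in in_repo)                                # second file: print unknown paths
    ' "$1" "$2"
}

# One way to produce the two listings (paths are placeholders):
#   git -C ./xz ls-files > repo.txt
#   tar -tf xz-release.tar.gz | sed 's|^[^/]*/||' > tarball.txt
#   tarball_only_files repo.txt tarball.txt
```

Every path it prints is something that shipped to builders without passing through repository review, which is the class of file this incident abused.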

It was a near-miss, and near-misses are the ones you learn from

Reuters’ reporting described the event as a near-miss that could have compromised a massive number of systems, and emphasized how the discovery came from careful observation of anomalous behavior rather than a routine automated process. (Reuters)

CISA’s alert underscores the urgency and the immediate operational ask: versions 5.6.0 and 5.6.1 were implicated, and organizations were advised to take action such as downgrading to a non-compromised version. (CISA)

Red Hat’s postmortem-style write-up is also worth reading because it highlights coordination realities in open source incident response, and it reflects a point many defenders feel but rarely state: security incidents at this layer are as much about ecosystem coordination as they are about code. (Red Hat)

What happened, a high-confidence timeline you can cite

This is a condensed timeline anchored in primary and high-quality secondary sources.

Late March 2024, discovery and disclosure

A public disclosure was posted to the oss-security mailing list describing odd symptoms (notably SSH performance anomalies and related debugging signals) that led to a deeper investigation and the conclusion that the upstream xz repository and the xz tarballs had been backdoored. (openwall.com)

Rapid7’s incident response coverage summarizes the same disclosure event and explicitly references the affected versions 5.6.0 and 5.6.1, tracking it as CVE-2024-3094. (Rapid7)

March 29, 2024 onward, coordinated response

NVD published the vulnerability record describing the malicious build-time behavior, emphasizing obfuscation, extra .m4 files, and extraction of a prebuilt object from a test archive to modify liblzma during compilation. (NVD)

CISA released an alert about the reported supply-chain compromise, advising organizations to act. (CISA)

Linux distributions and major vendors issued advisories and rollback guidance; Debian’s advisory is a useful example of concrete distribution-side response. (NVD)

Early April 2024, detection and defense guidance expands

Elastic Security Labs published analysis with practical detection ideas including YARA, osquery, and SIEM searches, helping teams move beyond “what version” into “what signals can I look for.” (Elastic)

Microsoft published FAQ and guidance for defenders, reinforcing affected versions and the supply-chain nature of the issue. (TECHCOMMUNITY.MICROSOFT.COM)

CVE-2024-3094 explained, the threat model that actually matches reality

What the CVE record says, and why the wording matters

The NVD record describes a build-time modification of liblzma driven by obfuscated build logic present in upstream tarballs, including extra build scripts not present in the repository. (NVD)

That means the “vulnerable surface” is not a single API. It is:

- release artifacts

- build system behavior

- packaging pipelines

- the set of downstream consumers who ingest those artifacts

Why everyone kept saying “sshd backdoor”

Multiple security write-ups described the compromise as enabling RCE or unauthorized access via SSH in certain scenarios, which is why “sshd backdoor” became a sticky phrase in headlines. Akamai’s overview notes the initial reporting framing and the evolving understanding of potential impact. (Akamai)

However, a careful operational interpretation is:

- the compromised component is liblzma

- exploitability depends on specific build and linking/loading conditions

- exposure is not automatically “any system with xz installed”

This is the core responder mistake to avoid: do not confuse ubiquity with exploitability, and do not confuse exploitability with “no action required.” The right stance is evidence-driven: inventory, verify, mitigate.
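One way to replace assumption with evidence is to check whether the sshd binary on a given host resolves liblzma at all. The following is a minimal sketch, assuming `ldd` is available; the helper name `liblzma_paths` is ours, not a standard tool, and the sshd path is a placeholder that may differ on your systems.

```shell
# liblzma_paths: read `ldd` output on stdin and print the resolved path of
# any liblzma shared object it lists. Prints nothing when liblzma is absent.
# (Helper name is an illustrative assumption.)
liblzma_paths() {
    awk '$1 ~ /^liblzma\.so/ && $2 == "=>" { print $3 }'
}

# Typical use on a host you control (binary path is a placeholder):
#   ldd /usr/sbin/sshd | liblzma_paths
```

An empty result only reflects the dependencies `ldd` reports for that binary. It does not cover libraries loaded later at runtime, and a non-empty result still needs the version check before it means anything.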

Who was affected, and how not to turn scoping into mythology

High-confidence affected versions

The upstream project and CISA both point to XZ Utils 5.6.0 and 5.6.1 as the key compromised releases. (Tukaani)

The NVD record also anchors compromise starting with 5.6.0 tarballs. (NVD)

Why distro exposure skewed toward development branches

Many major advisories emphasize that stable distro releases were often not affected, while development/testing tracks and certain build pipelines were the realistic exposure points. Tenable’s FAQ is a practical roundup that tracks “affected” and “not affected” distributions and was updated as the incident evolved. (Tenable®)

This aligns with the lived experience of responders: the riskiest places are often CI, staging, pre-release, and packaging environments, not necessarily the long-lived stable production fleet.

The scoping mistake to avoid

A lot of teams asked: “Do we have xz installed anywhere?”

That’s the wrong first question because it produces noise. Almost everyone has xz somewhere.

A better question sequence is:

- Do we have xz/liblzma 5.6.0 or 5.6.1 installed anywhere?

- If yes, where did those packages come from, and which track were they sourced from?

- Are those hosts internet-facing SSH endpoints, build systems, bastions, or high-value infrastructure?

- Can we collect evidence that all affected builds are removed or rolled back?

Verification, safe and repeatable checks for fleets

The goal here is not to “reverse engineer the backdoor.” The goal is to establish a defensible answer to:

- Are we affected?

- If affected, are we still affected after remediation?

- What evidence supports those claims?

Quick fleet inventory commands

Debian and Ubuntu family

# Show installed packages that commonly carry xz and liblzma

dpkg -l | grep -E 'xz-utils|liblzma' || true

# Show candidate versions and repository sources

apt-cache policy xz-utils liblzma5 2>/dev/null | sed -n '1,120p'

# Quick local version

xz --version 2>/dev/null || true

RHEL, Fedora, CentOS Stream family

rpm -qa | grep -E '(^xz|xz-libs|liblzma)' || true

dnf info xz xz-libs 2>/dev/null | sed -n '1,160p'

xz --version 2>/dev/null || true

Arch family

pacman -Qi xz 2>/dev/null | sed -n '1,140p'

xz --version 2>/dev/null || true

If you see 5.6.0 or 5.6.1, treat it as a priority and tie your response to your distro’s advisory and update channel.
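Matching on the string "5.6.1" alone can mislead. Debian's rollback package, for example, was versioned `5.6.1+really5.4.5-1`, which contains the affected number but ships 5.4.5 code. The sketch below is a minimal classifier that accounts for that case; the function name is hypothetical, and your distro's advisory remains the authority on which package builds are safe.

```shell
# classify_xz_version VERSION
# Print "affected" for package versions built from upstream 5.6.0 or 5.6.1,
# and "not-affected" otherwise. (Function name is an illustrative assumption.)
classify_xz_version() (
    v="$1"
    v="${v#*:}"                           # drop an epoch prefix such as "1:"
    case "$v" in
        *+really*) v="${v#*+really}" ;;   # "5.6.1+really5.4.5-1" is really 5.4.5
    esac
    case "$v" in
        5.6.0|5.6.1|5.6.0[!0-9]*|5.6.1[!0-9]*) echo affected ;;
        *) echo not-affected ;;
    esac
)

# Example on a Debian-family host:
#   classify_xz_version "$(dpkg-query -W -f='${Version}' liblzma5)"
```

Keeping the classification in one function means the same rule is applied during scoping and again when you collect post-remediation evidence.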

Verify what sshd is actually loading, without assumptions

One pragmatic approach in many detection write-ups is library-loading visibility. Elastic and others published investigation and detection ideas to help teams build confidence. (Elastic)

Here is a safe baseline check:

# If sshd is running, list mapped libraries and look for liblzma or related components

pidof sshd >/dev/null && \
sudo cat /proc/"$(pidof sshd | awk '{print $1}')"/maps 2>/dev/null | grep -E 'liblzma|libsystemd' || true

# Alternatively, lsof can show opened shared objects

pidof sshd >/dev/null && \
sudo lsof -p "$(pidof sshd | awk '{print $1}')" 2>/dev/null | grep -E 'liblzma|libsystemd|sshd' || true

This does not prove exploitability. It helps you understand runtime reality, which is essential when internet narratives flatten nuance.

A simple scoping table you can drop into an incident ticket

| Scoping question | What to collect | What it tells you | Why it matters |
|---|---|---|---|
| Do we have affected versions | package inventory showing xz/liblzma versions | narrows scope | 5.6.0 and 5.6.1 are the primary focus (Tukaani) |
| Did they come from risky channels | repo mirror snapshots, apt/dnf metadata, build pipeline logs | provenance | CVE centers on upstream tarballs and downstream packaging |
| Are high-value endpoints involved | list of bastions, SSH exposed systems, CI runners | priority | reduces risk quickly by focusing on the most likely impact paths |
| Did remediation remove all affected builds | post-change inventory, hash evidence if needed | closure | produces defensible evidence for auditors and incident reviews |

Detection ideas that work even when the next backdoor looks different

Version-based hunting is necessary but brittle. The moment attackers change the packaging path, your “check version X” playbook has diminishing returns.

Good defenders use a layered approach:

- package and SBOM visibility

- artifact provenance

- runtime behavior baselines

- anomaly detection in key processes

Behavior signal, unusual sshd child processes

SigmaHQ maintains an emerging-threat rule relating to suspicious sshd child process creation tied to this incident class. Use it as inspiration for your own EDR or audit rules, tuned for your baseline. (Elastic)

The reliable concept is simple:

- sshd is usually a low-variance process

- sshd spawning unusual children is often a high-signal event

- if the alert is too noisy, tune the detection rather than abandoning it
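The concept above can be sketched as a small filter over a process listing. This is an illustration only, not the SigmaHQ rule: the function name and the default allowlist are assumptions that must be tuned to your own baseline. Parents are matched by a name starting with `sshd` so that the per-session `sshd-session` binary used by newer OpenSSH releases is covered too.

```shell
# unusual_sshd_children [ALLOWLIST]
# Read "PID PPID COMMAND" rows on stdin (for example from
# `ps -eo pid,ppid,comm`) and print "PID COMMAND" for every direct child of
# an sshd process whose command name is not in the allowlist.
# The default allowlist is an illustrative assumption, not a vetted baseline.
unusual_sshd_children() (
    allow="${1:-sshd sshd-session bash sh zsh sftp-server}"
    awk -v allow="$allow" '
        BEGIN { n = split(allow, a, " "); for (i = 1; i <= n; i++) ok[a[i]] = 1 }
        $1 == "PID" { next }                      # skip the ps header row
        { rows++; pid[rows] = $1; ppid[rows] = $2; comm[rows] = $3; name[$1] = $3 }
        END {
            for (i = 1; i <= rows; i++)
                if (name[ppid[i]] ~ /^sshd/ && !(comm[i] in ok))
                    print pid[i], comm[i]
        }'
)

# Typical use:
#   ps -eo pid,ppid,comm | unusual_sshd_children
```

A point-in-time `ps` snapshot will miss short-lived children, so in practice the same allowlist logic belongs in EDR or audit rules that see process creation events.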

osquery and SIEM searches, a practical starting point

Elastic Security Labs published detection content including osquery and SIEM queries, which many defenders used as a practical on-ramp for triage. (Elastic)

A generic osquery idea you can implement safely without copying proprietary rules is:

- inventory installed packages for xz/liblzma

- query running processes and loaded libraries

- correlate sshd runtime with unexpected shared objects

Example osquery snippets:

-- Packages, Debian

SELECT name, version FROM deb_packages

WHERE name IN ('xz-utils','liblzma5');

-- Packages, RPM

SELECT name, version, release FROM rpm_packages

WHERE name IN ('xz','xz-libs','liblzma');

-- Running sshd processes

SELECT pid, name, path, cmdline FROM processes

WHERE name = 'sshd';

File integrity, treat tarballs as security objects

This incident strongly suggests adding policy gates that verify:

- the origin of release tarballs

- signatures and signing identity expectations

- reproducibility or independent rebuild checks for critical components

Upstream’s backdoor page explicitly calls out tarball creation and signing details, reinforcing why signature trust must be paired with identity trust and reproducibility. (Tukaani)
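A basic intake gate is a pinned digest check: record the SHA-256 of an artifact when it is first reviewed, then refuse anything that does not match. The sketch below assumes GNU `sha256sum` is available, and the function name is hypothetical. It answers "are these the same bits we reviewed", not "are these bits benign", so it complements rather than replaces the origin and reproducibility checks listed above.

```shell
# verify_sha256 EXPECTED_HEX FILE
# Succeed only when FILE's SHA-256 digest equals EXPECTED_HEX.
# (Function name is an illustrative assumption; requires GNU sha256sum.)
verify_sha256() (
    actual="$(sha256sum "$2" | awk '{ print $1 }')"
    [ -n "$actual" ] && [ "$actual" = "$1" ]
)

# Typical use ("$PINNED_DIGEST" and the file name are placeholders):
#   verify_sha256 "$PINNED_DIGEST" release.tar.gz || echo "digest mismatch" >&2
```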

Remediation, what to do now, what to do next, what to change forever

Immediate containment playbook, the first hour

1 Identify and prioritize

- Find systems with xz/liblzma 5.6.0 or 5.6.1

- Prioritize:

  - internet-facing SSH endpoints

  - bastion hosts

  - CI runners and build systems

  - packaging and repository mirror infrastructure

This aligns with the practical focus of CISA’s alert and vendor response content. (CISA)

2 Roll back or upgrade per your distro’s guidance

CISA recommended downgrading to a non-compromised version. (CISA)

Do not “hunt a random package” on the internet. Use your distro’s official channels and signed repos, then document:

- before and after package versions

- repo snapshot timestamps

- the change request and approval trail

3 Collect evidence, because you will be asked

When incidents hit foundational tooling, leadership and auditors ask for proof. Build a lightweight evidence packet:

- list of hosts with affected versions pre-remediation

- list of those hosts post-remediation showing safe versions

- note of the mitigation actions and dates
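The evidence packet can be produced mechanically from two inventory snapshots. This is a minimal sketch, assuming each snapshot is a text file of `host version` lines collected however your fleet tooling allows; the function name, file names, and status labels are hypothetical.

```shell
# remediation_report BEFORE_FILE AFTER_FILE
# Each file holds "host version" lines. For every host that was affected
# before remediation, print: host, old version, new version, status.
# Hosts missing from AFTER_FILE are not reported and need a manual check.
# (Function name and status labels are illustrative assumptions.)
remediation_report() {
    awk '
        function affected(v) {
            sub(/^[0-9]+:/, "", v)                            # drop epoch
            if (v ~ /\+really/) sub(/^.*\+really/, "", v)     # Debian rollback naming
            return (v ~ /^5\.6\.[01]([^0-9]|$)/)
        }
        FILENAME == ARGV[1] { before[$1] = $2; next }
        ($1 in before) && affected(before[$1]) {
            print $1, before[$1], $2, (affected($2) ? "STILL-AFFECTED" : "remediated")
        }
    ' "$1" "$2"
}

# Typical use (file names are placeholders):
#   remediation_report inventory-before.txt inventory-after.txt
```

Keeping the raw snapshots alongside the report lets a reviewer re-derive the result instead of taking the summary on trust.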

Medium-term hardening, the next one to two weeks

This is where most teams stop too early.

Lock down the places where trust is minted

CVE-2024-3094 is a reminder that build systems, CI runners, and packaging pipelines are not “internal dev convenience.” They are production-grade trust factories.

Red Hat’s response write-up highlights the coordination and response side, but the deeper implication is that distro and enterprise security has to treat these layers as part of the security boundary. (Red Hat)

Do the basics that are usually postponed:

- isolate CI runners from open internet where feasible

- restrict who can modify build scripts and dependency sources

- enforce immutable build images for critical releases

- monitor unusual outbound connections from build pipelines

Add safety rails for dev and pre-release channels

Many exposure narratives centered on development tracks and early adoption channels. Tenable’s FAQ is a useful reference for “what was affected” as the picture evolved. (Tenable®)

A practical policy is:

- dev and pre-release repos can exist

- but they must not be promoted to production without explicit approval checks

- build provenance, dependency diffs, and SBOM diffs should be part of that approval
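A dependency diff for a promotion gate does not need heavy tooling to get started. The sketch below compares two package listings of `name version` lines and prints what was added or changed; the function name and input format are assumptions, and a real gate would likely consume SBOM output instead.

```shell
# package_diff OLD_LIST NEW_LIST
# Inputs hold "name version" lines. Print packages that are new in NEW_LIST
# or whose version changed. Removed packages are not reported.
# (Function name and input format are illustrative assumptions.)
package_diff() {
    awk '
        FILENAME == ARGV[1] { old[$1] = $2; next }
        !($1 in old)        { print "added", $1, $2; next }
        old[$1] != $2       { print "changed", $1, old[$1], "->", $2 }
    ' "$1" "$2"
}

# One way to produce a listing on a Debian-family image:
#   dpkg-query -W -f='${Package} ${Version}\n' > packages.txt
```

Reviewing only the delta keeps the approval step small enough that people actually perform it.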

Long-term changes, the trust model upgrades you should budget for

This is the part leadership often hears as “process overhead.” It is not. It is business continuity.

1 Reproducible builds and independent rebuild verification

When artifacts can diverge from repository code, reproducibility becomes a security control.

You do not need 100 percent reproducibility tomorrow. Start with the critical path:

- compression libraries

- crypto libraries

- auth and PAM related components

- ssh and remote management stack

- build tooling itself

2 Provenance frameworks, sign what you ship, verify what you install

The incident’s emphasis on tarballs and signing identity makes it hard to ignore artifact-level controls. (Tukaani)

A modern baseline includes:

- signature verification with explicit trust roots

- provenance attestations for builds

- SBOM generation and intake gates

- controlled mirror infrastructure
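"Explicit trust roots" can be made concrete by pinning the signing key fingerprint instead of accepting any valid signature in the keyring. The sketch below parses GnuPG's machine-readable status output for a `VALIDSIG` line; the function name and the pinned fingerprint variable are hypothetical. As the upstream discussion of who signed which tarballs suggests, a pinned key narrows who can ship to you, but it does not by itself show that what they shipped is trustworthy.

```shell
# signed_by_expected_key FINGERPRINT
# Read GnuPG status lines on stdin and succeed only if a VALIDSIG line names
# FINGERPRINT as the signing key or as its primary key (the last field).
# (Function name is an illustrative assumption.)
signed_by_expected_key() (
    awk -v want="$1" '
        $1 == "[GNUPG:]" && $2 == "VALIDSIG" && ($3 == want || $NF == want) { found = 1 }
        END { exit (found ? 0 : 1) }'
)

# Typical use (file names and "$PINNED_FPR" are placeholders):
#   gpg --status-fd 1 --verify release.tar.gz.sig release.tar.gz 2>/dev/null \
#       | signed_by_expected_key "$PINNED_FPR"
```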

3 Fund and support upstream, because the economics are part of your risk

Reuters framed the incident as a wake-up call about the fragility of widely used open source maintained by small teams under stress. (Reuters)

This is not an abstract moral. It is a practical risk statement:

- foundational projects are critical infrastructure

- under-resourced maintenance increases systemic risk

- long-term adversaries exploit those gaps with patience

Lessons for offensive security teams, why attackers love this layer

If you build pentest plans or red team playbooks, CVE-2024-3094 is a reminder that modern initial access is not only “find a web bug.” Supply chain compromise is attractive because it can:

- bypass perimeter controls

- land inside trusted update paths

- scale quietly

- survive naive patching logic if artifacts are compromised upstream

This is the key difference between “a vulnerability” and “a trust boundary collapse.”

If you are running internal adversary simulation, you do not need to replicate this specific incident to learn from it. You can test your organization’s readiness by running tabletop exercises around questions like:

- what happens if a core dependency is found compromised?

- how quickly can we inventory it?

- how quickly can we roll back fleet-wide?

- can we prove remediation, not just claim it?

Comparing CVE-2024-3094 to other high-impact CVEs, how to prioritize correctly

Security teams often need analogies to justify budget and urgency. Here are two comparisons that help.

Log4Shell, ecosystem blast radius versus trust chain compromise

Log4Shell was a code-level vulnerability in a ubiquitous dependency with devastating exploitability. CVE-2024-3094 is a different beast: it’s a compromise of the supply chain and artifacts, which raises the cost of trust across your organization.

The shared lesson is not “libraries are dangerous.” The lesson is:

- a small set of dependencies forms the backbone of modern infrastructure

- when those dependencies break, response cost spikes across every team

regreSSHion, the primitive layer keeps coming back

regreSSHion (CVE-2024-6387) is a separate OpenSSH issue, but it points at the same meta truth: when foundational primitives are called into question, the blast radius is organizational, not just technical.

If your org treats “patch it” as the full story, CVE-2024-3094 is a perfect counterexample. The threat is not only patch latency; it is trust integrity.

A practical checklist you can paste into your incident workflow

Scoping

- Inventory hosts for xz/liblzma versions, focusing on 5.6.0 and 5.6.1 (Tukaani)

- Identify internet-facing SSH endpoints and prioritize them

- Identify CI runners, build boxes, package mirrors, staging environments

Verification

- Collect “before” package versions and sources

- Roll back or upgrade per distro guidance and CISA advisory (CISA)

- Collect “after” evidence showing safe versions across fleet

- Record dates, repository snapshot IDs, and change approvals

Detection

- Alert on suspicious sshd child process behavior, tuned to baseline

- Add runtime library load monitoring for key daemons where feasible

- Run periodic SBOM diff checks on promoted builds

Hardening

- Reduce SSH exposure surface area, enforce strong auth policies

- Gate production promotions on dependency provenance checks

- Introduce reproducibility goals for critical packages over time

Incidents like CVE-2024-3094 keep exposing a reality that security teams already feel: vulnerability management is no longer limited to “patch the app.” Your dependency intake, build chain, and runtime behavior are part of the attack surface—especially when tools and agents run inside sensitive networks. The workflows that hold up under pressure are the ones that translate ambiguity into concrete actions: enumerate exposure, validate fixes, and continuously re-check drift with evidence.

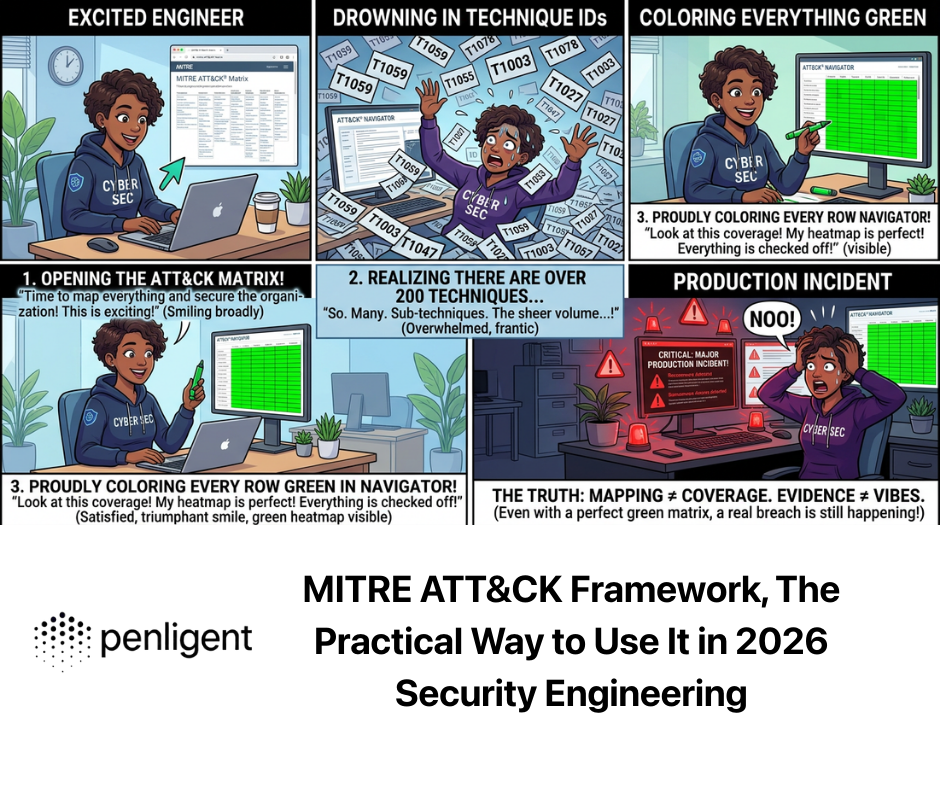

If you are building or operating automated offensive security programs, an AI-driven platform like Penligent can support the unglamorous but essential operational pieces: continuously inventory externally exposed services, track risky drift around SSH-facing assets, and help standardize verification steps so that response is repeatable and auditable rather than tribal knowledge. When supply-chain events happen, speed matters, but so does proof.

Penligent has also published a focused write-up on this incident from an engineering perspective, emphasizing why build pipelines and artifact trust were the real target, which you can use as an internal explainer for non-specialists who still need to understand the operational implications. (Penligent)

References

https://nvd.nist.gov/vuln/detail/cve-2024-3094 (NVD)

https://www.cisa.gov/news-events/alerts/2024/03/29/reported-supply-chain-compromise-affecting-xz-utils-data-compression-library-cve-2024-3094 (CISA)

https://www.openwall.com/lists/oss-security/2024/03/29/4 (openwall.com)

https://tukaani.org/xz-backdoor/ (Tukaani)

https://tukaani.org/xz/ (Tukaani)

https://www.rapid7.com/blog/post/2024/04/01/etr-backdoored-xz-utils-cve-2024-3094/ (Rapid7)

https://securitylabs.datadoghq.com/articles/xz-backdoor-cve-2024-3094/ (Datadog Security Labs)

https://www.akamai.com/blog/security-research/critical-linux-backdoor-xz-utils-discovered-what-to-know (Akamai)

https://www.redhat.com/en/blog/understanding-red-hats-response-xz-security-incident (Red Hat)

https://www.elastic.co/security-labs/500ms-to-midnight (Elastic)

https://techcommunity.microsoft.com/blog/vulnerability-management/microsoft-faq-and-guidance-for-xz-utils-backdoor/4101961 (TECHCOMMUNITY.MICROSOFT.COM)

https://www.tenable.com/blog/frequently-asked-questions-cve-2024-3094-supply-chain-backdoor-in-xz-utils (Tenable®)

https://jfrog.com/blog/xz-backdoor-attack-cve-2024-3094-all-you-need-to-know/ (JFrog)

https://www.penligent.ai/hackinglabs/xz-utils-cve-reality-check-cve-2024-3094-the-liblzma-backdoor-and-why-your-build-pipeline-was-the-real-target/ (Penligent)

https://www.penligent.ai/hackinglabs/kali-llm-claude-desktop-and-the-new-execution-boundary-in-offensive-security/ (Penligent)

https://www.penligent.ai/hackinglabs/openclaw-virustotal-the-skill-marketplace-just-became-a-supply-chain-boundary/ (Penligent)

https://www.penligent.ai/hackinglabs/ko/anthropic-cybersecurity-tool-in-2026/ (Penligent)