The fastest way to get worse at CTFs with AI is to treat the model like a vending machine. Paste in the prompt, wait for a payload, copy it into a terminal, and hope a flag falls out. That works just often enough on easy challenges to build bad habits. It fails the moment the problem gets stateful, noisy, or just slightly weird.

A better way to use AI in CTFs is to think of it as a search space compressor. It can sort clues faster than you can, explain unfamiliar syntax, generate rough solver skeletons, summarize packet captures, translate decompiler output into plain English, and help you compare one hypothesis against another. What it still does poorly is exactly what strong CTF players are paid for in the real world: deciding what matters, knowing when a “plausible” answer is false, preserving state across a long chain of experiments, and proving impact instead of narrating it. Current public research points in the same direction. The NYU work on offensive-security CTFs found that LLMs can beat the average human participant in some competition settings, but their fully automated results remained uneven and highly category-dependent. Their later benchmark and framework work, Cybench’s professional CTF task set, Anthropic’s public Cybench reporting, and more recent planning-based pentest research all show the same pattern: models are getting better, but autonomy still breaks down around long-horizon planning, complex reasoning, and specialized tool use. (arXiv)

That gap is the whole game. If you use AI as a teammate that helps you think faster, not as a replacement for thought, it becomes useful immediately. If you use it as an oracle, it turns a winnable challenge into a hallucination generator. The right mindset is not “Can the model solve this for me?” It is “Which parts of this problem are repetitive, low-leverage, or format-heavy enough that a model can accelerate them without becoming the decision-maker?” That framing aligns better with how real security testing is described in NIST SP 800-115 and the OWASP Web Security Testing Guide, both of which emphasize planning, technical testing, analysis of findings, and mitigation rather than random tool firing. (NIST 컴퓨터 보안 리소스 센터)

AI in CTFs Starts With the Right Practice Environment

Before workflow, prompts, or tools, you need a legal place to practice. For web-heavy AI experimentation, PortSwigger’s Web Security Academy is one of the cleanest options because it is free, explicitly framed as safe and legal, and organized around concrete vulnerability classes rather than vague challenge descriptions. picoCTF is equally useful because it pairs practice and competition with structured learning material across cryptography, web exploitation, forensics, binary exploitation, and reversing. Hack The Box runs an official CTF platform for live CTF play as well. If you want to test how your AI workflow behaves under pressure, these environments give you fast feedback without crossing the line into unauthorized testing. (포트스위거)

That distinction matters more with AI than it did with ordinary note-taking or solver scripts. A human can usually tell when they are drifting from a challenge instance into a real asset. A model cannot. If you hand an agent a URL and a vague instruction like “enumerate everything,” it has no internal sense of what is contest infrastructure, what is production, what is rate-limited for a reason, and what you are actually allowed to touch. Good CTF hygiene has always required scope awareness. Good AI CTF hygiene requires it twice.

One practical rule is to start every challenge by writing down four things before the model gets involved: the platform, the exact target instance, the challenge category you think it might belong to, and the set of files or artifacts you have locally. That sounds trivial, but it stops a surprising amount of nonsense. It prevents the model from inventing adjacent endpoints, mixing two categories together, or suggesting tools that do not fit the artifact set you actually have. It also makes your later debugging easier because you can tell whether failure came from the challenge, the model, or your own bad assumptions.

How to Use AI in CTFs Without Losing Control of the Workflow

The strongest AI CTF workflows are boring in the best sense. They have structure. They record what happened. They make it easy to rerun the same step later. NIST SP 800-115 describes technical security testing as a process that includes planning, executing tests, analyzing findings, and developing mitigation strategies. OWASP’s testing guidance likewise treats testing as a phased discipline, not a sequence of disconnected tricks. That model fits CTF work better than most people realize. A flag is only the last line of a much larger loop: intake, classification, experiment design, execution, verification, and record-keeping. (NIST 컴퓨터 보안 리소스 센터)

The table below is the simplest way to think about AI’s role in that loop.

| CTF phase | High-value AI work | Human still owns | Common failure mode |

|---|---|---|---|

| Intake and classification | Summarize challenge text, label likely category, extract filenames, endpoints, protocols, encodings | Decide whether the category guess is credible | Wrong first classification sends the whole workflow sideways |

| Recon and artifact parsing | Generate parsers, cluster clues, propose quick triage commands, normalize outputs | Choose what is actually worth testing | Over-enumeration and noisy dead ends |

| Hypothesis generation | Suggest likely bug classes, solver directions, or exploit families | Rank hypotheses and pick the next minimal experiment | Confident but unsupported claims |

| Exploit or solver scaffolding | Draft scripts, build payload templates, translate tool output, create harnesses | Validate every key assumption and adjust to state | Brittle code and hallucinated steps |

| Verification and write-up | Reformat traces, summarize differences, clean up scripts, draft notes | Confirm the proof and reject false positives | Turning narrative into “evidence” without proof |

That table is just a synthesis of what the benchmark literature and official tool docs already imply. LLMs are comfortable when the task is structured, local, and text-heavy. They are far less comfortable when they must maintain a long plan, use several specialized tools correctly, and adapt to partial failure without drifting. The NYU CTF work showed category-specific performance gaps, while later benchmark and planning research has kept pointing to tool use and long-range coherence as pressure points. Tool documentation tells the same story from the opposite direction: pwntools, angr, Burp, TShark, Ghidra, and Volatility are all strong because they expose precise, inspectable primitives. AI helps most when it sits on top of those primitives, not instead of them. (arXiv)

A simple five-loop workflow works well across almost every category. First, collect the challenge facts without interpretation: description text, attachments, URLs, binaries, packet captures, hashes, screenshots, service banners, and any initial responses. Second, ask the model to classify the problem and list unknowns, not solutions. Third, design the smallest experiment that could kill one hypothesis. Fourth, run that experiment and capture the exact output. Fifth, ask the model to explain the delta between expectation and reality. If you repeat those five steps, you get better results than trying to have a single long conversation where the model free-associates toward the flag.

A Prompt Pattern That Actually Works in CTFs

Most bad AI CTF sessions start with a bad prompt. The worst version is one line long, overloaded with hope, and missing all structure: “Solve this web challenge for me.” That tells the model nothing about what you know, what artifacts exist, which actions are allowed, or what level of certainty you need before you execute something. Since the model has to fill in those blanks somehow, it usually fills them with fiction.

A better prompt has five blocks. The first block is facts. Put only observable data there: challenge text, filenames, output snippets, headers, assembly fragments, PCAP summaries, or the exact error message you saw. The second block is unknowns. State what you do not know yet. The third block is allowed actions. If you want only a hypothesis, say so. If you want a Python helper script, say so. If you do not want brute force, say so. The fourth block is required output shape. Ask for ranked hypotheses, confidence levels, minimal validation steps, or a short solver scaffold. The fifth block is 중지 조건. Tell the model to stop once it reaches a point where real execution or human validation is required.

Here is a base template that works well across categories:

You are helping with an authorized CTF challenge.

Facts:

- Platform: picoCTF

- Category guess: web

- Target: challenge instance URL only

- Observed behavior:

- GET / returns 200

- /robots.txt exists

- POST /api/check-stock accepts XML

- Replaying the same stock request changes nothing

- Artifacts:

- One Burp request and response pair

- One ffuf result file

- One screenshot of the admin page

Unknowns:

- Whether the bug is XXE, SSRF, or both

- Whether any internal hostnames are reachable

- Whether response differences are meaningful or noise

Allowed actions:

- Explain likely bug classes

- Suggest the next 3 smallest validation steps

- Draft one minimal Python request script

- Do not assume success

- Do not claim a flag is reachable unless the evidence supports it

Required output:

1. Ranked hypotheses with confidence scores

2. What evidence supports each hypothesis

3. One minimal next experiment per hypothesis

4. A short Python script only if needed

5. A list of assumptions that still need verification

This kind of prompt does two things well. It constrains the model, and it makes your later review faster. Instead of reading a wall of guesses, you get a work queue. You also reduce the risk that the model will treat challenge content as instructions. OWASP’s prompt injection guidance is directly relevant here. The organization defines prompt injection as a vulnerability caused when user or external inputs alter the model’s intended behavior, and it specifically recommends clearly separating untrusted content, adversarial testing, and boundary-aware handling of external inputs. In a CTF context, challenge text, downloaded files, web pages, writeups, and even hidden strings inside artifacts all count as untrusted content. (OWASP 치트 시트 시리즈)

That last point is easy to underestimate. When you use AI in CTFs, the challenge is not only the thing you are attacking. It is also part of the input attacking your model.

How to Use AI in Web CTFs

Web CTFs are where AI feels the most productive, and that is exactly why people get careless. The model is good at recognizing familiar web bug patterns. PortSwigger’s academy covers the same classes that dominate many web CTFs, including SQL injection, XXE, SSRF, path traversal, and server-side template injection. OWASP’s current Top 10 still places injection in the standard awareness set for web applications. None of that means the model can spot the bug reliably from one screenshot. It means there is a rich pattern library available once you have enough evidence to narrow the search space. (포트스위거)

The first mistake in web CTFs is to ask the model for a vulnerability class before you establish a baseline. Start with boring facts. What routes exist. Which ones redirect. Which parameters are reflected. Which content types are accepted. Whether the application behaves differently for GET versus POST, JSON versus form data, XML versus JSON, or one cookie versus another. If there is network exposure, Nmap is still the right primitive for host and service discovery. Its docs are refreshingly clear that port scanning is fundamentally about finding reachable services and that ports have more than two states. That matters because a filtered port and a closed port imply very different next steps. When version detection works, Nmap can also tell you which service families are likely behind the open ports. (Nmap)

For content discovery, ffuf remains one of the easiest ways to give an AI workflow real leverage because the tool is simple, fast, and structured. The official project describes it as a fast web fuzzer and documents common uses such as content discovery, vhost discovery, parameter fuzzing, and POST data fuzzing. That makes it a good fit for model-assisted generation of targeted wordlists and match rules, especially after you already have some hints from the challenge or the application framework. (GitHub)

A minimal authorized content-discovery run might look like this:

ffuf -u https://challenge-instance/FUZZ \

-w ./wordlists/common-web.txt \

-mc 200,204,301,302,307,401,403 \

-fc 404 \

-of json \

-o ffuf-results.json

AI is useful before and after that command, not instead of it. Before the run, it can generate a small themed wordlist from clues in the challenge title, JavaScript variable names, or route names you already saw. After the run, it can cluster the results into likely auth endpoints, likely admin paths, static assets, API prefixes, and blind alleys. What it should not do is tell you that /debug-old is “probably vulnerable” just because the name sounds interesting.

Once you find interesting traffic, Burp becomes the center of gravity. Burp Repeater exists for one reason: modify and resend an interesting HTTP or WebSocket message until you learn something. Decoder exists to transform and identify encodings. Comparer exists to diff similar pieces of data, including near-identical HTTP messages. Those three tools map almost perfectly to the way AI should be used in web CTFs: Repeater for controlled experiments, Decoder for transformation and normalization, Comparer for disciplined before-and-after reasoning. (포트스위거)

If you want a model to help, give it paired observations. Show it the normal request and the abnormal request. Show it the unchanged response and the changed one. Show it the request that returned a 403 and the request that returned a 200 after only one parameter changed. The quality of the model’s reasoning improves dramatically when you provide deltas instead of isolated artifacts.

This is where a small Python harness can save time. picoCTF’s own primer teaches HTTP requests in Python for challenge work, and that basic habit scales surprisingly well. For a web CTF, your goal is not to write a giant client. It is to encode a repeatable micro-experiment so you can change one variable at a time and store the output. (CTF Primer)

import http.client

import json

HOST = "challenge-instance"

PATH = "/api/check-stock"

xml_payloads = [

"""<?xml version="1.0"?>

<stockCheck><productId>1</productId><storeId>1</storeId></stockCheck>""",

"""<?xml version="1.0"?>

<!DOCTYPE foo [ <!ENTITY xxe SYSTEM "file:///etc/passwd"> ]>

<stockCheck><productId>&xxe;</productId><storeId>1</storeId></stockCheck>"""

]

results = []

for payload in xml_payloads:

conn = http.client.HTTPSConnection(HOST)

conn.request(

"POST",

PATH,

body=payload,

headers={

"Content-Type": "application/xml",

"User-Agent": "ctf-lab-test"

}

)

resp = conn.getresponse()

body = resp.read().decode(errors="replace")

results.append({

"status": resp.status,

"reason": resp.reason,

"body_prefix": body[:400]

})

conn.close()

print(json.dumps(results, indent=2))

That code is not exciting, and that is why it is good. It gives you structured output the model can reason over. Instead of asking “Is this XXE?”, you can ask “Why does payload two produce a body-length increase and a different parsing error from payload one?” That is much closer to how strong web players actually think.

When AI is used well in web CTFs, it speeds up category triage. SQLi is a classic example. PortSwigger defines SQL injection as interference with the queries an application makes to its database, which can expose or modify data. That is a precise description, but it does not tell you whether the challenge is using a string context, a numeric context, a blind boolean channel, an error channel, or an out-of-band path. The model can help enumerate possibilities, but it still needs you to pin down the context experimentally. (포트스위거)

The same is true for XXE and SSRF. PortSwigger’s definitions matter because they tell you what to look for. XXE often starts with XML processing and may expose server-side files or internal systems. SSRF starts when the server makes a request on your behalf and can sometimes be pushed toward localhost or internal endpoints. In PortSwigger’s labs, those classes are cleanly separated for teaching, but CTFs often blur them together. An XML parser with external entity support can become an SSRF path if the entity points at an internal metadata or admin endpoint. AI is genuinely helpful in those situations because it can propose the shortest chain from “XML sink exists” to “internal fetch might be possible,” but you still need the server response to prove it. (포트스위거)

Path traversal and SSTI are another good pair. Path traversal is about reading files outside the intended directory boundary. SSTI is about getting user-controlled input interpreted by a template engine in a way that can lead to code execution. Both reward careful observation. Path traversal challenges often hinge on normalization, filtering, or unexpected file paths. SSTI challenges often hinge on engine identification, syntax quirks, and sandbox assumptions. AI is good at generating candidate syntax or bypass patterns once you know what engine family you are in. It is bad at deciding the engine family from one vague rendering glitch. (포트스위거)

The hardest web CTF trap for AI users is business logic. Models love named vulnerabilities because they can retrieve familiar patterns. They struggle more when the challenge is a workflow bug, a privilege edge, a session mix-up, or a state transition that only becomes visible after several ordinary requests. In those cases, use AI for bookkeeping. Ask it to make a state table. Ask it to summarize which cookies appear after which actions. Ask it to group routes by authentication state. Ask it to compare response bodies and header sets. Do not ask it for a root cause until the state machine is already on paper.

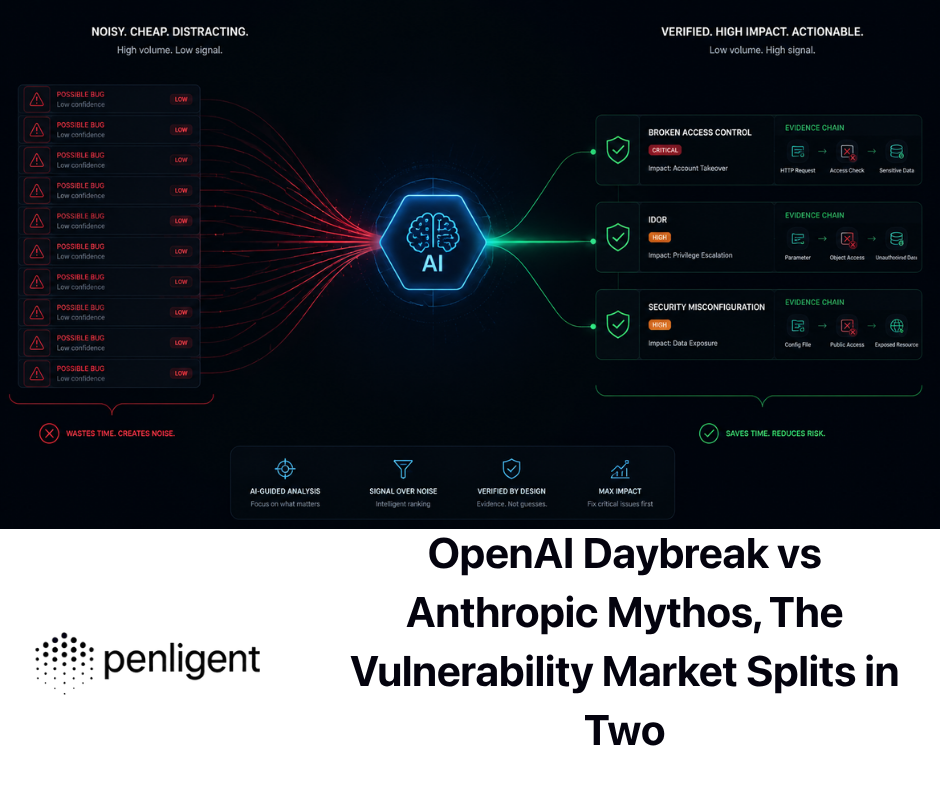

If you use a dedicated AI pentest workspace instead of a loose chat tab, the same principles still apply. Penligent’s public docs describe operator-created tasks, configurable Python and Bash runtimes, the ability to invoke tools already installed in Kali such as nmap 그리고 하이드라, and one-click import of common tool configurations. Its product page also emphasizes editable prompts, scope control, support for many industry tools, and evidence-first results. In a web CTF lab, those features matter less because they are flashy and more because they reduce context sprawl. You can keep generated scripts, tool outputs, and your own manual verification in one workflow instead of scattering them across terminal history and chat windows. (펜리전트)

How to Use AI in Binary Exploitation CTFs

Binary exploitation is where AI looks smartest from a distance and weakest up close. From a distance, it seems perfect for an LLM. You have code, decompiler output, assembly, memory bugs, and the need for Python scripts. Up close, the hard part is not text generation. It is precise interaction with a target process and ruthless attention to details the model often smooths over.

The official pwntools docs tell you exactly why the library became standard: it exposes a uniform way to talk to processes, sockets, and remote services. The corefile docs make another important point. Core dumps are useful not just for manual debugging, but for automation. You can use a crashing address and a cyclic pattern to compute offsets quickly without doing the arithmetic by hand. That is an ideal handoff point between human judgment and AI acceleration. (Pwntools Documentation)

A practical pattern for AI in pwn is to divide the work into four layers. First, the model explains the binary at a high level: protections, likely input path, suspicious functions, and possible memory corruption class. Second, you run short experiments to confirm the class. Third, the model drafts a pwntools scaffold. Fourth, you debug the scaffold like a normal exploit developer. The important detail is that the exploit is never “owned” by the model. It is only drafted by the model.

A minimal pwntools scaffold for an authorized challenge might look like this:

from pwn import *

context.binary = ELF("./chall")

context.log_level = "info"

HOST = "challenge-instance"

PORT = 31337

def start():

if args.REMOTE:

return remote(HOST, PORT)

return process(context.binary.path)

io = start()

# Example interaction, replace with challenge-specific logic

io.recvuntil(b"> ")

io.sendline(b"A" * 128)

# Keep output visible for triage

print(io.recvall(timeout=1).decode(errors="replace"))

That example is intentionally plain. It is not an exploit. It is a harness. In many pwn challenges, the first thing you need is not a ROP chain. It is a reliable way to drive the program, watch where it crashes, and compare output under small input variations.

When the issue is a simple overflow, corefiles help you turn a crash into an offset. pwntools’ docs explicitly show this workflow: generate a cyclic pattern, crash the process once, load the core dump, and check where the pattern ended up. (Pwntools Documentation)

from pwn import *

payload = cyclic(256)

p = process(["./chall", payload])

p.wait()

core = Coredump("./core")

offset = cyclic_find(core.eip if context.bits == 32 else core.rip)

print(f"Offset: {offset}")

AI is good at stitching these steps together, especially when you feed it the crash offset, the security properties, and the decompiler output for the vulnerable function. It is good at saying, “You probably need to control RIP after 72 bytes, here is a cleaner harness, here is how to build a payload with flat, and here is a checklist for ret2win versus ret2libc.” It is less good at knowing whether the crash happened before a stack canary check, whether an off-by-one changes alignment in a way that breaks your assumptions, or whether the remote service behaves differently from your local libc.

This is why angr can be useful, but only at specific moments. The angr docs define symbolic execution as exploring multiple execution paths using symbolic variables and constraint solving rather than fixed concrete inputs. For CTFs, that is powerful when the binary is really a constraint system in disguise: a maze of checks on input bytes, a buried success branch, or a validation routine with many linear or bitwise conditions. If the challenge is better understood as “find the input that makes the program take path B,” symbolic execution is a strong fit. If the challenge is actually heap grooming, race behavior, or tight interaction with process state, symbolic execution may only waste time. (Angr Documentation)

A very small angr example looks like this:

import angr

proj = angr.Project("./chall", auto_load_libs=False)

state = proj.factory.entry_state()

simgr = proj.factory.simgr(state)

simgr.explore(find=lambda s: b"Correct" in s.posix.dumps(1))

if simgr.found:

found = simgr.found[0]

candidate = found.posix.dumps(0)

print(candidate)

That script is not a universal solver. It is a reminder of the right question. If the binary’s success condition is textually visible and path-based, AI can help you get to an angr experiment faster. If the binary is really about memory corruption or subtle runtime state, AI should help you instrument, not fantasize.

Ghidra fits the same pattern. NSA’s project page describes it as a full software reverse engineering framework with disassembly, decompilation, graphing, and scripting. Those features matter because they let you cut a big binary into manageable slices. The model should rarely see the whole binary at once. It should see one function, one call chain, one branch cluster, or one suspicious string neighborhood. Good reversing with AI is local. Bad reversing with AI is “I pasted 7,000 lines of pseudo-C and now the model says the bug is probably in 메인.” (GitHub)

The public benchmark work supports that caution. In the 2024 NYU study, models could solve some pwn and reversing tasks, but performance varied sharply across categories, and the authors’ failure analysis included empty outputs, wrong command execution, broken code, and missing challenge context. More recent agent research still says strong systems struggle with long-horizon plans, specialized tools, and experience-driven cues. In other words, current models can help with the middle of pwn work, but they still need a human to keep the exploit honest. (arXiv)

How to Use AI in Reversing CTFs

Reversing rewards patience more than cleverness, and AI tends to simulate cleverness. That is dangerous. The right way to use AI in reversing is not to ask it to reverse the challenge. It is to ask it to reduce local confusion.

Start with the same triage you would do without AI. Run strings. Identify imported libraries. Look for obvious encodings, error messages, file paths, or format markers. Open the binary in Ghidra. Find the entry point and the functions that actually touch user-controlled data. Then decide what kind of help you want from the model.

Some of the best uses are almost editorial. Ask the model to rename variables in a single decompiled function based on how they are used. Ask it to summarize the control flow in plain English. Ask it to guess which branches correspond to validation versus anti-analysis. Ask it to turn three disjoint functions into one linear narrative. Ask it to spot whether a bytewise loop looks more like a checksum, a substitution table, or a staged decode routine. Those are all local questions. They work because the model is matching patterns against a small, well-bounded artifact.

What does not work well is global synthesis too early. The moment you paste five unrelated functions and ask “What is going on?”, the model starts filling semantic gaps with story. In reversing, story is cheap and wrong more often than people think. A better pattern is to make the model earn its global summary by succeeding on several small local summaries first.

One practical approach is this. Create a folder for the challenge with a short text note. Every time you inspect a function manually, write down the function name, what data it touches, and what you think its job is. Then ask the model to process only one unit at a time. That gives you two benefits. First, you preserve your own reasoning instead of letting the model overwrite it. Second, you can compare the model’s interpretation against your notes and spot when it starts overfitting.

The same principle applies to obfuscation. If a reversing challenge is hiding logic behind encoded strings, packed tables, or suspicious build artifacts, the model can help decode and classify patterns, but it should not become your source of truth. This becomes even more obvious when you look at a real-world case like CVE-2024-3094 in xz. NVD’s description explains that malicious code was introduced into upstream tarballs and extracted a prebuilt object from disguised test files during the build process, modifying specific functions in the resulting library. That is a perfect lesson for reversing players: the interesting behavior may live outside the obvious source path, and build-time artifacts can matter as much as runtime logic. (NVD)

Reversing with AI gets dramatically better when you remember that the model is stronger at translation than at discovery. Let it translate assembly to narrative, transform weird tables into structured data, explain compiler artifacts, and draft tiny emulators for suspicious routines. Do not let it decide what the whole binary “means” until you already have a map.

How to Use AI in Crypto CTFs

Crypto CTFs expose the limits of language models faster than almost any other category. The 2024 NYU results are a useful warning here. In their selected dataset, crypto performance was especially weak compared with categories like reversing and some pwn tasks. That should not surprise anyone who has spent time on CTF crypto. Many crypto challenges turn on exact structure, not vague semantic pattern matching. If the model does not have the right algebraic grip on the problem, all the Python in the world will not save it. (arXiv)

That does not make AI useless in crypto. It just changes the job description. The model is strongest when the challenge still needs classification. Is this likely substitution, transposition, block formatting, XOR abuse, base-encoding layering, or a constraint problem? Are the important clues in alphabet size, repeated blocks, byte frequency, fixed prefix, or key reuse? What should you extract before you even try a solve? Those are good AI questions because they help you decide whether to open CyberChef, write a parser, or move to an SMT solver.

CyberChef’s own site calls it a “Cyber Swiss Army Knife,” and that description is accurate for crypto CTF work. It is excellent for the part of the workflow where you are not yet proving a theorem, only testing whether the data behaves like compression, hex, base64, XOR, JSON, URL encoding, or some combination. AI pairs well with CyberChef because the model can propose a small number of likely transformation chains based on visible structure in the data, and you can confirm or reject them immediately. (GCHQ)

Z3 is the opposite. Microsoft’s guide describes it as a theorem prover and SMT solver that checks satisfiability over supported theories. That matters when your crypto challenge stops being about guesswork and becomes about constraints. If the puzzle really says, “Find bytes that satisfy these arithmetic and bitvector conditions,” then the model should stop improvising and help you formalize the system. (Microsoft GitHub)

A tiny example looks like this:

from z3 import BitVec, Solver

a = BitVec("a", 8)

b = BitVec("b", 8)

c = BitVec("c", 8)

s = Solver()

s.add(a ^ b == 0x12)

s.add(b ^ c == 0x34)

s.add(a + c == 150)

if s.check().r == 1:

m = s.model()

print(m[a], m[b], m[c])

The point of that snippet is not the toy math. It is the workflow shift. Once the challenge becomes a formal system, AI’s best job is to help you encode the system correctly and explain the solver output, not to guess the answer in prose.

Another place AI works well in crypto is parser generation. Many medium-difficulty crypto challenges are not actually about advanced cryptanalysis. They are about extracting the right bytes from a messy format, splitting blocks correctly, interpreting endianness, or converting between textual and binary views without making dumb mistakes. Models are often very good at turning those chores into small scripts. That is real value. Just do not confuse it with cryptographic insight.

One reliable way to keep the model honest is to ask for elimination, not identification. Instead of asking “What cipher is this?”, ask “Based on this ciphertext length, alphabet, and repetition pattern, which common families become less likely?” That encourages the model to reason from properties instead of reaching for the most familiar name. It also keeps you from wasting an hour because the model called something “Vigenere-like” when the real problem was just XOR with a reused key and a weird framing layer.

The model is also useful after you have already solved the challenge. Ask it to explain why the solve worked. Ask it to rewrite your scratch script into a cleaner, documented version. Ask it to summarize which clues actually mattered. Those post-solve tasks reinforce pattern recognition for future crypto challenges in a way that “one-shot solve me” prompting never does.

How to Use AI in Forensics and PCAP CTFs

Forensics CTFs often feel overwhelming because the artifacts are large and the flag is small. That is exactly the sort of asymmetry where AI can help, provided you avoid the temptation to dump everything into context at once.

Start by separating artifact types. Packet capture is one workflow. Filesystem evidence is another. Memory analysis is another. The model should not have to infer which one it is looking at. Your first task is to reduce each artifact to a manageable index: interesting protocols, interesting files, interesting processes, interesting timestamps.

For packet work, TShark is ideal because it exposes the same display-filter logic as Wireshark in a scriptable form. The man page emphasizes that display filters are powerful and are specified with -Y, while capture filters are different and specified with -f. That distinction matters in CTFs because you often do not want to recapture anything. You want to slice an existing PCAP repeatedly, each time asking a narrower question. (Wireshark)

A simple first-pass triage on an authorized challenge PCAP might look like this:

tshark -r challenge.pcap \

-Y 'http.request || dns || tcp.flags.syn==1' \

-T fields \

-e frame.time \

-e ip.src \

-e ip.dst \

-e _ws.col.Protocol \

-e _ws.col.Info

That output is small enough for a model to summarize sensibly. Once you have that, AI can help group traffic by phase, cluster suspicious destinations, identify bursts, or point out that an HTTP POST is followed by a DNS lookup and then a second connection that might matter. What it should not do is infer exfiltration just because a domain name looks strange. Time order and content still matter.

Memory forensics is similar. Volatility 3’s docs explain that the framework organizes analysis through memory layers, templates and objects, symbol tables, and a context holding the relevant structures. That is a reminder that memory analysis is structural. Good AI use in memory forensics means feeding the model structured plugin outputs, not raw dumps. Give it pslist, netscan, command lines, loaded modules, or suspicious handles. Ask it to correlate them. Ask it to build a timeline. Ask it what additional plugin would best distinguish two competing explanations. (Volatility 3)

A very basic pattern might be:

python3 vol.py -f memory.raw windows.pslist

python3 vol.py -f memory.raw windows.cmdline

python3 vol.py -f memory.raw windows.netscan

Once you have those outputs, AI can be genuinely helpful. It can spot that a process has an unusual parent, that a command line contains an encoded PowerShell fragment, or that a listening socket appeared shortly after a suspicious child process. Those are the kinds of relationships humans miss when they are tired. But again, the proof comes from the artifacts, not from the summary.

Filesystem forensics follows the same rule. Use AI to catalog, compare, and rank. Do not ask it to divine meaning from a tarball. If you recover strings from an image, ask the model to cluster them into credentials, URLs, timestamps, or file markers. If you carve several files, ask the model to compare headers and infer likely types. If you extract browser history or shell history, ask it to propose a timeline. These are all “reduce entropy” tasks. They are where AI earns its keep.

The common mistake in forensics CTFs is to skip narrative discipline. A process name looks weird, so people jump to malware. A DNS query looks odd, so people jump to exfiltration. A JPEG has extra bytes, so people jump to stego. AI makes that worse unless you force it into evidence mode. Make it tell you what artifact supports each claim. Make it separate direct evidence from inference. Make it list alternative explanations. That single habit improves both human and AI performance.

How to Use AI in CTFs Without Letting the Model Attack You

The most interesting risk in AI-assisted CTF play is that the challenge can attack your assistant. OWASP’s prompt injection guidance is unusually relevant here. The project distinguishes direct and indirect prompt injection and notes that external sources such as websites or files can contain content that alters model behavior when interpreted by the model. The guidance also recommends segregating and clearly identifying external content, testing trust boundaries, and treating the model as a possible attack path rather than a neutral helper. (OWASP Gen AI 보안 프로젝트)

In a CTF workflow, that means challenge text, HTML, README files, source code comments, PDFs, hidden strings, screenshots, base64 blobs, and even image metadata should be treated as untrusted input. A malicious or intentionally tricky challenge could include instructions aimed at a model, not at you. The content does not even have to be human-obvious. OWASP notes that prompt injections do not need to be human-visible as long as the model parses them. In practical terms, that means a perfectly ordinary-looking document could still poison your assistant’s next step. (OWASP Gen AI 보안 프로젝트)

The first defense is architectural. Do not mix system instructions, your own task rules, and raw challenge content in one undifferentiated blob. Label sections. Use explicit markers for untrusted text. Keep the model’s job narrow. “Summarize the following untrusted HTML and extract only observed routes and forms” is a much safer instruction than “Read this page and tell me what to do next.”

The second defense is operational. Never auto-execute model output in a shell or downstream system. OWASP’s insecure output handling material says the risk appears when model outputs are passed into shells, browsers, or other components without validation or sanitization. It explicitly lists outcomes such as XSS, CSRF, SSRF, privilege escalation, and remote code execution when LLM output is handled insecurely. In a CTF, that is not theoretical. If your workflow lets a model turn a decoded hint into a shell command and then run it, you have just given challenge data a path into execution. (OWASP Gen AI 보안 프로젝트)

The third defense is social, not technical. Make yourself slow down before trust. If the model suggests a payload, ask what observation it is based on. If it suggests a command, ask what would falsify the command’s underlying hypothesis. If it suggests a complete exploit, ask which single precondition is most likely to fail. Those questions are good CTF practice even without AI. With AI, they are mandatory.

The AI Mistakes That Lose the Most CTF Points

The first major mistake is category lock-in. The model sees XML and decides the problem is XXE. You then spend thirty minutes pushing XXE payloads into a stock-check feature that actually only wants you to notice an SSRF pattern or a credential leak in a second endpoint. The cure is to force ranked hypotheses instead of one label. Make the model argue for second and third choices.

The second mistake is evidence starvation. People feed a model a single symptom and expect it to reason like a human who has been staring at the challenge for an hour. It cannot. If you want good help, give it a baseline request, a mutated request, and the precise difference between the responses. Give it the disassembly for one function and the crash offset. Give it the PCAP slice and the DNS burst timing. Every extra grounded artifact narrows the model’s degrees of freedom.

The third mistake is context bloat. Dumping a full binary, a full PCAP, three screenshots, and an entire chat history into one prompt rarely makes the model smarter. It usually makes it sloppier. Small context windows are not the only problem. Large windows encourage diffuse reasoning. The better move is to pre-triage artifacts with real tools, then give the model only the slice that matters.

The fourth mistake is script worship. AI-generated code looks satisfying. In CTFs, that can become addictive. But a script that runs is not the same as a script that proves anything. Benchmark work has repeatedly found failure modes around empty outputs, broken commands, and wrong code even when the surrounding reasoning sounded plausible. Treat AI code like a junior teammate’s first draft. You inspect it, minimize it, and test it against one tiny condition before you trust it with the whole challenge. (arXiv)

The fifth mistake is not keeping notes because “the model remembers.” It does not remember the way a disciplined player does. It remembers token context until it does not. Notes beat vibes. Save requests. Save script revisions. Save hashes. Save offsets. Save which hypotheses were killed and why. That habit also makes your post-CTF writeups better, which in turn makes your future AI prompting better because you have cleaner examples of your own reasoning to learn from.

Real CVEs That Make You Better at CTFs

CTFs are better when they sharpen your intuition for real systems. The easiest way to make AI-assisted CTF practice more useful is to anchor challenge patterns to real vulnerabilities. Not because every CTF mirrors production, but because the best CTF lessons are about structure: how input reaches an interpreter, how normalization fails, how privilege edges appear, how hidden artifacts change trust.

CVE-2021-41773 and Why Path Traversal Is Never Just About Dots and Slashes

NVD describes CVE-2021-41773 as a flaw in Apache HTTP Server 2.4.49 caused by a path normalization change. An attacker could use path traversal to map URLs to files outside directories configured by Alias-like directives. If those files were not protected by the usual require all denied default, requests could succeed. If CGI was enabled for the affected aliased paths, the issue could become remote code execution. NVD also notes that the issue was known to be exploited in the wild, and CISA later highlighted that the related Apache HTTP Server issues under exploitation affected 2.4.49 and 2.4.50. (NVD)

Why is that useful for CTF players. Because it teaches the real shape of path traversal. The bug is not “someone forgot to block ../.” The bug is about normalization, path mapping, deployment configuration, and what the server is allowed to expose outside the intended boundary. In CTF terms, that is the difference between a toy file-read challenge and a meaningful traversal chain. AI can help you generate traversal variants, but the interesting reasoning is environmental. Which directories are reachable. Which normalization step is broken. Whether file disclosure is the endpoint or only the bridge to something bigger.

The mitigation lesson matters too. The fix is not one magic regex. It is version correction, correct path handling, and defensive configuration that prevents files outside intended directories from being served or executed. That is the same defensive thinking good web CTF players eventually internalize: the vulnerability lives in trust boundaries, not in syntax alone. (NVD)

CVE-2021-44228 and the CTF Habit of Following Data Into an Interpreter

CVE-2021-44228, Log4Shell, remains one of the cleanest examples of why “user-controlled input reaches an interpreter” is a core offensive pattern. NVD states that affected Log4j2 versions allowed attacker-controlled log messages or parameters to trigger JNDI lookups to attacker-controlled endpoints, making remote code execution possible when message lookup substitution was enabled. The same record notes that 2.15.0 disabled the risky behavior by default and that 2.16.0 removed the functionality completely. CISA’s Log4j guidance likewise frames Log4Shell as a critical remote code execution issue and points to JNDI disablement in later fixes. (NVD)

Why is this relevant to CTFs. Because it trains the exact kind of reasoning AI often needs help with. The challenge is not merely “spot injection.” It is “follow the data flow from source to sink and then notice the external resolution step that turns data into control.” Many web and misc CTFs are simplified versions of that same cognitive move. A header, parameter, or loggable string does not matter because it exists. It matters because some downstream component interprets it.

When you use AI on challenges with that structure, do not ask only for payloads. Ask for path analysis. Which component consumes the input. Whether any intermediate transformation exists. Whether external resolution, templating, database interpretation, shell interpretation, or deserialization is part of the chain. That kind of prompting makes the model much more useful than “Give me a Log4Shell string.” It also mirrors real mitigation work: patch the component, reduce dangerous features, and constrain interpreter behavior. (NVD)

CVE-2021-3156 and the Pwn Lesson Hidden in Everyday Argument Parsing

NVD describes CVE-2021-3156 as an off-by-one error in sudoedit -s before sudo 1.9.5p2 that can produce a heap-based buffer overflow and local privilege escalation to root. This is a beautiful teaching case for pwn players because it comes from normal-looking argument handling in a widely deployed program, not from a cartoonishly broken toy binary. (NVD)

The CTF lesson is that memory corruption often hides in parsing logic that feels “administrative” rather than exotic. Argument processing, escaping, quoting, boundary conditions, length fields, and mode-specific behavior are all prime places for bugs. AI can be useful here when you feed it a small patch diff, decompiled parser logic, or crash behavior and ask it to explain what state transition makes the overflow reachable. It is far less useful if you ask it to generate a final exploit from the CVE description alone.

The mitigation lesson is just as important. Updating to a fixed version is the answer operationally, but for challenge reasoning the real takeaway is deeper: local privilege bugs are often about overlooked transitions inside trusted code paths. That makes them ideal material for AI-assisted explanation and terrible material for AI-only exploitation. The model can help you understand the boundary mistake. You still need a human to drive the exploit reasoning with precision. (NVD)

CVE-2024-3094 and Why Reversing Players Should Care About Build Pipelines

CVE-2024-3094 in xz is relevant to far more CTF categories than people first assume. NVD says malicious code was discovered in upstream xz tarballs starting with version 5.6.0 and that the build process extracted a prebuilt object from a disguised test file, modifying specific functions in the resulting library. CISA’s alert on the incident said the malicious code might allow unauthorized access to affected SSHD instances. (NVD)

This is a gift to reversing and forensics players. It teaches that the thing you trust by habit may not be the thing you should trust. The source repository may not match the release artifact. The interesting file may be labeled as test data. The exploit path may be introduced during build, not during obvious runtime logic. AI is genuinely helpful in this territory for artifact comparison, build-script summarization, and anomaly clustering. It is not trustworthy if you let it wave away ugly details with a smooth explanation.

The mitigation lesson again maps cleanly back to CTF instinct. Provenance matters. Release artifacts matter. Reproducibility matters. If a challenge or real incident contains multiple representations of the “same” program, the mismatch itself may be the clue. That habit of comparing source, build, and behavior is valuable far beyond one supply-chain case. (NVD)

Turning AI CTF Practice Into Better Pentesting

The biggest long-term value of AI in CTFs is not speed. It is discipline. CTFs compress offensive reasoning into a short cycle: observe, hypothesize, test, verify. If AI makes you lazier at any of those steps, it hurts you. If AI makes you more systematic, it helps you not only in contests but in real pentest work.

That is why the most useful AI habits in CTFs are almost boringly operational. Save the request pair that proved the bug. Save the script revision that finally worked. Save the crash offset and the binary hash. Save the exact artifact that changed your mind. Those are not just writeup habits. They are the beginning of reproducible security work, which is exactly the standard NIST and OWASP try to push people toward in real testing programs. (NIST 컴퓨터 보안 리소스 센터)

One reason a tool like Penligent can sit naturally inside that workflow is that its public material is not framed only around chat. The homepage emphasizes operator-controlled agentic workflows, prompt editing, scope controls, broad tool support, and evidence-first results, while the docs describe invoking installed Kali tools and configuring Python and Bash runtimes for generated scripts. In a CTF setting, that does not magically solve challenges. What it does do is make it easier to keep the parts of your AI workflow that matter in real work: explicit boundaries, rerunnable scripts, and artifacts that do not disappear when a chat window gets messy. (펜리전트)

Penligent’s own CTF AI writeup also makes a useful architectural point: separate intent, planning, execution, and evidence handling. Even if you never use that exact stack, the separation is solid advice. One long AI conversation that contains challenge text, solver drafts, terminal outputs, payload experiments, and conclusions all mixed together becomes un-auditable fast. Breaking the workflow into explicit stages keeps both the model and the operator more honest. (펜리전트)

The core rule is simple. Use AI to make the loop tighter, not blurrier.

The Best AI CTF Players Still Verify Everything

If you want one sentence to remember, it is this: AI is best in CTFs when it helps you run more good experiments, not when it gives you more answers.

Current public evidence supports a balanced view. Benchmarks show real progress. Models can help on structured offensive tasks, and some can outperform average participants in specific settings. At the same time, performance remains highly sensitive to orchestration, tooling, category, and evaluation setup. Professional-level benchmark work, vendor research on cyber evaluations, and planning-based pentest papers all still show limits around long-range coherence, specialized tools, and stateful reasoning. (arXiv)

That is not a disappointment. It is a roadmap. In web CTFs, let AI classify and compare. In pwn, let it scaffold and explain. In reversing, let it summarize locally. In crypto, let it formalize and eliminate. In forensics, let it cluster and narrate from evidence. In every category, make it earn trust one artifact at a time.

The players who get the most out of AI are not the ones who ask for magic. They are the ones who know exactly where magic ends and measurement begins.

추가 읽기

For safe, legal web practice with strong vulnerability-class coverage, PortSwigger’s Web Security Academy remains one of the best starting points. (포트스위거)

For broad CTF learning material across web exploitation, cryptography, forensics, binary exploitation, and reversing, picoCTF’s learning guides and primer are worth keeping open while you work. (picoCTF)

For pwn work, keep the official pwntools docs nearby, especially the sections on process and remote interaction and the corefile workflow. (Pwntools Documentation)

For symbolic execution and binary-path solving, angr’s official docs are the right reference. (Angr Documentation)

For reversing, Ghidra’s official project page is the authoritative starting point. (GitHub)

For packet and memory work, TShark and Volatility 3 official docs are the most useful references to pair with AI-assisted analysis. (Wireshark)

For grounding your expectations about what AI can and cannot do in offensive-security CTFs, NYU CTF Bench, Cybench, and the related public benchmark papers are more informative than social-media demos. (GitHub)

For workflow discipline in real testing, NIST SP 800-115 and the OWASP Web Security Testing Guide still hold up. (NIST 컴퓨터 보안 리소스 센터)

For Penligent-specific material that is genuinely relevant to this topic, the most useful pages are its homepage, the docs, and its CTF AI workflow article on evidence-backed chains you can rerun. (펜리전트)